Abstract

Traditional clothing design methods face challenges in efficiency and adaptability, often requiring manual adjustments and lacking in physical plausibility. This study introduces a data-driven, parametric 3D clothing generation framework that leverages garment sketches and dynamic 3D human parameters to create digital clothing. Our work is situated within the digital apparel design pipeline, bridging the gap between conceptual sketching and physically plausible virtual garments. Utilizing a dense convolutional network and a dual-branch encoder, our method efficiently recognizes design intent and dynamically adapts to body characteristics. The “fit” is defined as the geometric and physical compatibility between the garment mesh and the human body surface, avoiding interpenetration while maintaining design features. Experimental results demonstrate high generation quality (chamfer distance of 0.87 mm, detail matching rate of 91%) and efficiency (45 ms for a 100,000-vertex mesh). To quantitatively assess the improvement in design efficiency, we conducted a comparative study with 40 designers using both traditional CAD tools and our proposed framework. The total design time per garment was reduced from 390 min using traditional CAD to 55 min using our method, representing an 85.9% time saving. Key stages such as sketch drawing (96.7% reduction), pattern making (100% reduction), and 3D modeling (100% reduction) were significantly accelerated. Furthermore, the number of designs produced within a 3-h window increased from 0.8 to 3.2, a 300% improvement in design output. The efficiency gap between novice and expert designers was reduced by 38.1%, indicating that the method lowers the technical barrier for entry. These quantitative results confirm that the proposed framework substantially enhances design productivity and accessibility. The framework effectively accommodates unusual body types, exceeding an 85% adaptation rate. 
This approach offers a viable solution for intelligent clothing design and virtual try-on, contributing to the digital transformation of the fashion industry.

Keywords

Introduction

With the advancement of digital clothing and virtual fitting, 3D clothing generation has become a research hotspot in computer graphics and intelligent robotics. 1 Sketch-based 3D clothing generation bridges design inspiration and digital products, and is of great significance for application areas such as personalized customization, virtual fitting of clothing, and the future metaverse. 2 However, traditional clothing generation methods mostly rely on parametric modeling, which requires manual adjustment to build a basic pattern, making it difficult to accurately capture the design intent and achieve a natural fit with the dynamic human body. 3 Especially when the sketch contains complex folds, asymmetry, or special silhouettes, its detailed features and spatial structures are often difficult to capture effectively. 4 Moreover, traditional generative models adapt poorly to varied body types: changes in body parameters can easily cause problems such as line sticking and mesh penetration, so the results often fail to meet the requirements of practical application scenarios. 5 To this end, a sketch-oriented parametric 3D clothing generation framework for human bodies is proposed to overcome these limitations. The framework first constructs standardized body shape and posture parameters based on a parametric human body model. It then uses a densely connected convolutional network and a dual-branch encoder to extract sketch attribute features and perform hierarchical fusion. It further introduces an adaptive mesh vertex sampling strategy to dynamically optimize mesh topology. Finally, it designs a fully convolutional mesh decoder and integrates a physical constraint module to enhance the realism of the generated results. This research provides new insights into intelligent clothing design and technical support for the intelligent upgrade of the digital fashion industry. 
In the broader context of digital apparel product development, our framework aligns with the “sketch → 3D modeling → virtual fitting” pipeline, enabling designers to rapidly visualize and adjust garments in a body-aware manner. The relationship between body and garment is formulated as a parametric ease mapping, where each body region is assigned a learnable offset that encodes both anatomical constraints and style-specific ease requirements. This formulation enables the model to generate clothing that adapts to the body with functionally and esthetically appropriate looseness or tightness, rather than simply conforming to the body surface.

Traditionally, transforming a design sketch into a physically plausible and well-fitting 3D garment requires extensive expertise in pattern making, 3D modeling, virtual sewing, and iterative fit adjustment—tasks that are time-consuming and demand a high level of specialized skill. Our framework automates this entire pipeline by learning an end-to-end mapping from design sketches and body parameters directly to 3D garment geometry: manual pattern drafting is replaced by a conditional decoder that predicts per-vertex displacements from a template mesh, implicitly encoding ease and silhouette; 3D modeling and virtual sewing are bypassed through direct mesh generation, eliminating the need for manual assembly; fit adjustment for diverse body shapes is automated via the parametric body encoder and feature fusion, which adapts the garment to any given body parameters without additional user intervention; detail enhancement (e.g. folds, collars) is handled by the adaptive mesh sampling module, which automatically refines vertex distribution in high-curvature regions; and collision detection and penetration prevention—traditionally corrected through manual simulation tuning—are integrated as a physical constraint module that runs automatically during generation. Consequently, the proposed approach significantly reduces the dependency on manual craftsmanship and domain expertise, enabling designers—even those with limited technical background—to rapidly produce consistent, high-quality 3D garments from conceptual sketches. This automation not only accelerates the design workflow but also ensures reproducibility and scalability in digital apparel development.

To understand how our framework fits into real-world product creation, consider a typical apparel development workflow from sketch to sample: ① a designer draws a 2D sketch; ② a pattern maker interprets it into 2D pattern pieces, manually calculating ease based on size and material; ③ a 3D CAD operator imports the patterns into software like CLO 3D or Browzwear, virtually sews them onto a parametric fit model, and runs drape simulations; ④ the operator or a fit technician reviews the simulation, identifies issues such as shoulder pulling or excess fabric, and sends feedback for pattern adjustment, often repeating steps ②–④ multiple times until fit and appearance are approved; ⑤ finally, the patterns are released for physical sampling or production. Stages ②, ③, and ④ are where the bulk of manual expertise and iteration time resides: pattern making requires years of training, 3D sewing demands technical familiarity, and fit iteration relies on human judgment and back-and-forth communication. Our framework removes these three stages as separate, human-driven tasks. Instead, it learns directly from examples how a given sketch should be shaped around a given body. When a designer inputs a sketch and selects a body size, the system outputs a complete 3D garment that is already sewn, draped, and fitted, without anyone cutting a pattern, touching a virtual sewing machine, or running a single fit iteration. What the designer receives is not a technical intermediate (a pattern file or a simulation project) but a ready-to-use product prototype: a 3D mesh that can be immediately placed on a digital avatar, viewed in a virtual showroom, or passed to downstream teams for animation or e-commerce visualization. 
In product development terms, our method transforms the role of the designer from “someone who hands off a sketch to a specialist team” to “someone who generates a near-final digital garment in a single step.” The craft knowledge of pattern making and fitting is not lost—it is embedded into the model during training—but it no longer bottlenecks each new design.

The automation described above runs in a purely digital prototyping environment. The 3D clothing mesh output by the system can be used immediately for virtual try-on, animation production, and digital exhibition halls. However, turning this digital prototype into physical textiles still relies on traditional processes such as fabric selection, physical pattern placement, cutting, and sewing. The framework does not replace these physical manufacturing processes; it accelerates front-end design iteration, a stage that traditionally consumes a lot of time and resources. By reducing the production cycles of physical samples, the method improves the efficiency of the overall product development cycle. The final bridge between digital design and textile production remains an independent, physically dependent process, which is outside the scope of this study.

Related work

Clothing generation refers to the process of automatically or semi-automatically creating digital clothing models through algorithmic models using computer technology. Its core goal is to transform design concepts into realistic, wearable 3D clothing, and it has been studied by many researchers. For example, Chen et al. proposed the neural implicit parametric model Neural-ABC to overcome the topological limitations of modeling dressed human bodies. The model builds a unified framework by decoupling the latent spaces of individual identity (i.e. the unique morphological traits, such as height, weight, and body proportions, that distinguish one person from another), clothing, shape, and posture. It represents the human base model and the clothing as signed and unsigned distance functions, respectively, and uses a controllable joint structure to drive posture changes, thereby effectively decoupling clothing style from identity characteristics. 6 Shi et al. proposed a temporally consistent generation method based on motion labels to address the difficulty of generating animations of diverse dressed human bodies. The method adopts a two-stage strategy: a conditional variational autoencoder first learns the distribution of action sequences, and a Transformer encoder-decoder then synthesizes the clothing deformation sequence, generating animations with diverse styles from specific labels. 7 Miao et al. proposed a temporal dynamic animation method, TDGar-Ani, to overcome inter-frame jitter and the lack of physical constraints when simulating clothing from real videos. The method optimizes human motion through a motion fusion module to eliminate jitter, generates initial deformations based on physical constraints, and uses a deformation correction network to keep clothing deformation coordinated with human motion, thereby improving applicability to real videos. 8 Jia et al. systematically reviewed the field of human image generation and proposed a technical classification system based on three paradigms (data-driven, knowledge-guided, and hybrid methods). The study summarized the architectural characteristics and advantages of representative models and compiled mainstream public datasets and evaluation indicators, providing a clear framework for understanding the development of this field. 9 He et al. proposed a single-image clothing texture transfer framework to address the difficulty traditional texture transfer methods have in adapting to posture changes and preserving realistic wrinkles. The method renders textures through parallax mapping and uses the clothing mask outline and the 3D model's center of mass for position calibration. The transfer is then completed through image alignment, which is robust to different postures and backgrounds. 10

The development of data-driven parameterization has become increasingly mature, and its theories and methods have been widely studied by scholars from various countries. For example, Sabrina proposed a digital technology-based protection plan for the crisis of Central Asian design heritage inheritance, studied 3D modeling, digital archiving, virtual reality and other technologies, and built a technical protection system based on traditional and digital technologies. They concluded that digital technology is conducive to the preservation of craftsmanship and patterns, and can also empower design education and cultural and creative industry upgrades through innovative display forms. 11 Li and Yang proposed a data-driven method based on meta-learning to solve the problem of behavioral mutations caused by small changes in parameters in parameterized bifurcations. The modeling was divided into two parts: developing a widely applicable meta-model and adapting the target parameters at an extremely fast speed. The modeling efficiency was high and the prediction effect was good. 12 Subedi et al. proposed a data-driven dynamic partitioning modeling method to solve the problem that traditional electromagnetic transient models of active distribution networks are difficult to expand. By constructing a refined residential distribution feeder model and deriving an aggregated dynamic partitioning model based on it, the experiment proved that its fitting rate reached more than 90%, which can better characterize the aggregated dynamic behavior of the power grid. 13 Moni et al., to address the high computational cost of full-order models for aerodynamic evaluation, established a non-invasive reduced-order model based on an autoencoder. The autoencoder was used to generate a low-dimensional subspace for parameter search. The results of the example show that the computational cost can be reduced while ensuring accuracy. 
14 To address the design defect of traditional sustainable development design that cannot achieve resource regeneration, Goodarzi et al. proposed a data-driven adaptive strategy for regenerative design. The strategy was based on machine learning feedback information and established a connection monitoring and information data model through a co-evolutionary cycle to assist designers in making decision optimization in the model. 15 In apparel science, fit is recognized as a multidimensional concept encompassing ease, grain, set, line, and balance. Traditional fit evaluation relies on expert judgment during physical or virtual try-on, yet such assessment is typically decoupled from the early design phase. Our work engages with this literature by embedding fit criteria directly into the generative model. Rather than treating fit as a post-simulation metric, we define accurate fit as the simultaneous satisfaction of geometric conformance (prescribed ease offsets), physical plausibility (no penetration), and design fidelity (sketch preservation). The model learns these criteria from real pattern–fit pairs, effectively internalizing the expert knowledge that underpins traditional fit assessment. 16

In summary, existing research has improved 3D clothing generation in several respects, but the generated results still fail to fully capture the design intent and exhibit low body fit (i.e. insufficient geometric conformity between the garment and the human body at critical landmarks such as the bust, waist, hips, and shoulders, resulting in pressure points, undesirable looseness, or visual disharmony). A generation model based on patterns and human body parameters can address both problems by selecting a network model suited to the design requirements. Therefore, to meet the digital fashion industry's need for efficient and precise design, parametric human body modeling and adaptive mesh sampling are integrated into a 3D clothing generation method that supports rapid design and customization.

Methods

3D clothing generation framework based on sketches and dynamic human body parameters

To clarify our method, the following outlines the core steps of the entire generation process.

Framework overview

The entire process starts from design input and ends with outputting a 3D garment that fits the target human body: (1) Input a 2D garment sketch and human body shape and pose parameters (encoded via the SMPL model, including measurements such as height, bust, and joint angles), mapping design intent to the target body through a learnable ease offset; (2) The sketch encoder extracts design style and contour features; (3) The body parameter encoder processes body shape information; (4) The two feature sets are fused in a hidden layer to adapt the garment to the target body; (5) A fully convolutional decoder generates the initial 3D garment mesh; (6) An adaptive mesh sampling module refines vertices in high-curvature regions (e.g. folds, collars) to enhance detail; (7) A physical constraint module operates throughout generation to prevent garment–body penetration.
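As a minimal illustration of step (7), the sketch below resolves garment–body penetration for a body approximated by a sphere. The sphere, the function name `resolve_penetration`, and the fixed `ease` value are assumptions made purely for illustration; the framework itself works with the SMPL body surface and learned ease offsets, and its actual constraint formulation is not reproduced here.

```python
import numpy as np

def resolve_penetration(garment, center, radius, ease):
    """Push garment vertices that lie closer than radius + ease to the
    sphere centre radially outwards onto the offset surface."""
    offsets = garment - center
    dist = np.linalg.norm(offsets, axis=1, keepdims=True)
    target = radius + ease
    # Scale factor is 1 for vertices that already clear the offset surface.
    scale = np.where(dist < target, target / np.maximum(dist, 1e-9), 1.0)
    return center + offsets * scale

# Hypothetical data: a unit-sphere "body" and two garment vertices.
center = np.zeros(3)
garment = np.array([[0.5, 0.0, 0.0],   # inside the body: penetrating
                    [0.0, 2.0, 0.0]])  # well outside: left untouched
fixed = resolve_penetration(garment, center, radius=1.0, ease=0.1)
print(fixed)
```

The penetrating vertex is moved to a distance of `radius + ease` from the centre, while the vertex that already clears the body is unchanged.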

Limitations of traditional and AI-based design

Traditional clothing design relies on iterative collaboration among designers, pattern makers, and fit technicians, a time-consuming process requiring extensive expertise. 17–19 Conversely, pure data-driven AI methods often lack creativity, cultural understanding, and controllability, being limited by training data quality. 20,21 Neither approach alone achieves a fully realized design that balances market needs, technical feasibility, and semantic depth.

Need for an intelligent partner

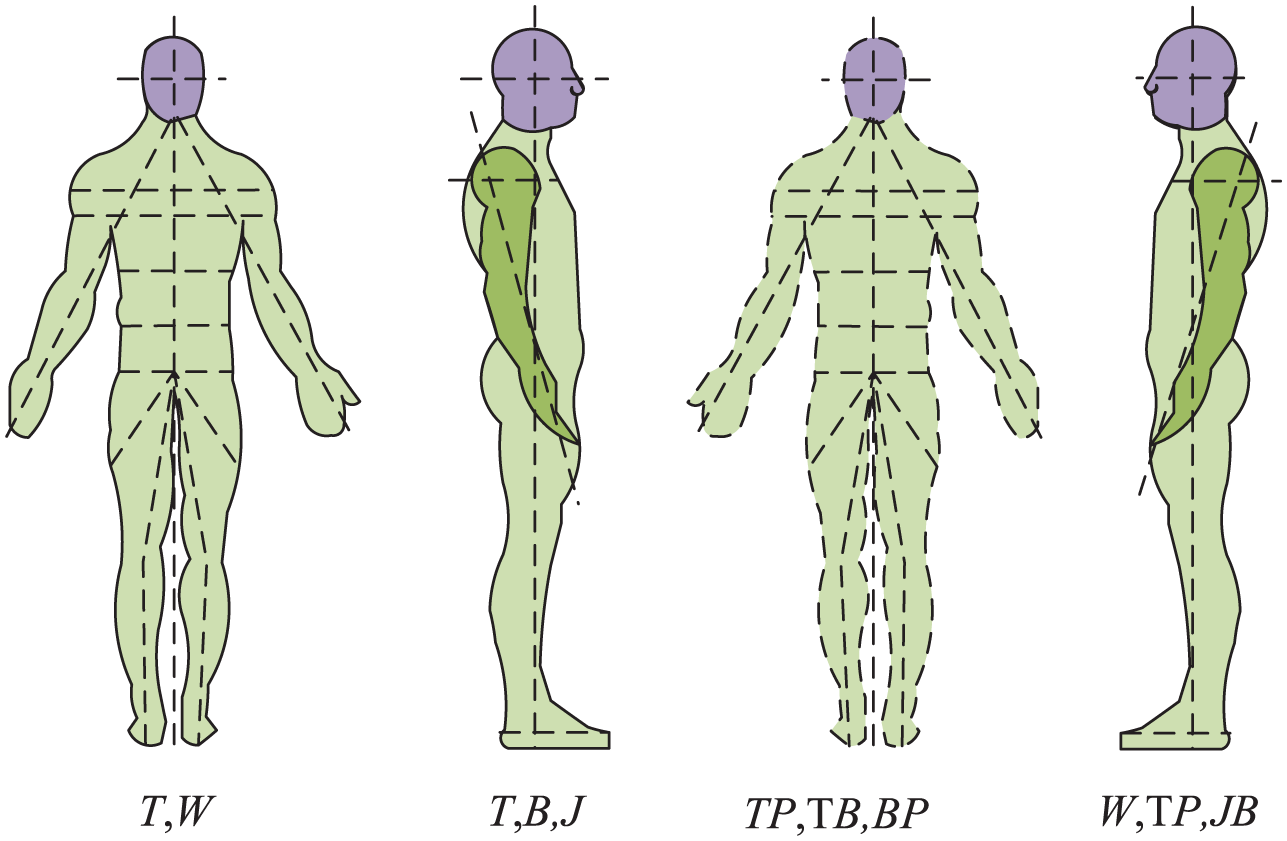

To bridge this gap, we introduce optimization-guided generation and physical simulation supervision, enabling the system to act as an “intelligent partner.” It assists designers by rapidly generating and refining solutions within design, physical, and process constraints, thereby overcoming traditional bottlenecks in pattern drafting, virtual sewing, and fit adjustment. The parametric human model (e.g. SMPL, Figure 1) provides the digital foundation for this process.

Figure 1. Parametric mannequin.

As shown in Figure 1, the parametric human model dynamically generates diverse body shapes using compact parameters (e.g. shape parameters for height/weight, pose parameters for joint rotation). Models like SMPL ensure consistent mesh topology, accurate garment adaptation, and a unified parameter space for dynamic simulation. To effectively process input signals and integrate them with body parameters, a densely connected convolutional network is introduced. By connecting each layer directly to all subsequent layers, it maximizes information flow and mitigates the gradient vanishing problem common in traditional CNNs (Figure 2).

Figure 2. Densely connected convolutional network structure.

The network adopts a classic DenseNet architecture. After an initial transformation layer, input passes through dense blocks containing multiple “batch normalization–ReLU–convolution” units. A dense connection mechanism concatenates the output of each unit with all previous feature maps, enabling direct shallow-to-deep feature propagation. This maximizes feature reuse, alleviates gradient vanishing, and improves efficiency. Finally, a transition layer compresses features and reduces dimensions. The process is shown in formula (1). 22

In formula (1),

In formula (2),

In formula (3),
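The dense connectivity described above can be sketched in a few lines. In the standard DenseNet formulation, layer l receives the concatenation of all earlier feature maps, x_l = H_l([x_0, x_1, ..., x_{l-1}]). The NumPy code below is only an illustrative sketch under simplifying assumptions: the convolution is replaced by a 1x1 convolution (a linear map over channels), and the names `bn_relu_linear`, `dense_block`, and `growth_rate` are hypothetical rather than taken from the paper.

```python
import numpy as np

def bn_relu_linear(x, weight):
    """One simplified 'batch normalization - ReLU - convolution' unit.

    The convolution is simplified to a 1x1 convolution, i.e. a linear map
    over the channel axis, to keep the sketch short.
    """
    mean = x.mean(axis=0, keepdims=True)
    std = x.std(axis=0, keepdims=True)
    x_norm = (x - mean) / (std + 1e-5)      # normalize each channel
    return np.maximum(x_norm, 0.0) @ weight  # ReLU, then channel mixing

def dense_block(x0, weights):
    """Each unit sees the concatenation of all earlier feature maps."""
    features = [x0]
    for w in weights:
        stacked = np.concatenate(features, axis=1)
        features.append(bn_relu_linear(stacked, w))
    return np.concatenate(features, axis=1)

rng = np.random.default_rng(0)
in_channels, growth_rate, num_units = 8, 4, 3
x0 = rng.normal(size=(16, in_channels))  # 16 positions, 8 channels
weights = [
    rng.normal(size=(in_channels + i * growth_rate, growth_rate))
    for i in range(num_units)
]
out = dense_block(x0, weights)
print(out.shape)  # (16, 20): 8 input channels + 3 units x 4 new channels
```

Because the input is carried through unchanged in the concatenation, shallow features remain directly available to every later unit, which is the feature-reuse property the text refers to.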

Dual-branch sketch encoder and feature fusion

A dual-path sketch encoder is constructed: a fully connected module captures global semantics via nonlinear transformations, while a convolutional pooling module extracts fine-grained local details through spatial convolution and downsampling. Their outputs are intelligently fused using learnable adaptive weights, forming a compact latent representation that preserves both semantic and spatial information. This provides a robust feature foundation for subsequent 3D garment generation (Figure 3).

Figure 3. Sketch encoder model.

As shown in Figure 3, DenseNet-161 extracts multi-level features, which then split into two parallel branches: a fully connected branch for global semantic encoding and a convolutional pooling branch for local structural refinement. Their outputs are fused at the hidden layer, then upsampled via 1D deconvolution to produce a feature vector for 3D generation. This dual-branch design enables end-to-end conversion from sketch to compact representation (equation (4)).

In formula (4),

In formula (5),

In formula (6),
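To make the dual-branch idea concrete, the following sketch fuses a global (fully connected) code and a local (pooling-based) code with learnable weights. It is an illustration under stated assumptions, not the authors' implementation: the convolutional pooling branch is reduced to 2x2 max pooling plus a projection, the fusion weights are a softmax over two hypothetical logits, and all shapes and names are invented for the example.

```python
import numpy as np

def softmax(z):
    z = z - z.max()
    e = np.exp(z)
    return e / e.sum()

def global_branch(feat_map, w_fc):
    """Fully connected branch: flatten, then a nonlinear projection."""
    return np.tanh(feat_map.reshape(-1) @ w_fc)

def local_branch(feat_map, w_proj):
    """Pooling branch, simplified to 2x2 max pooling plus a projection."""
    c, h, w = feat_map.shape
    pooled = feat_map.reshape(c, h // 2, 2, w // 2, 2).max(axis=(2, 4))
    return np.tanh(pooled.reshape(-1) @ w_proj)

def fuse(global_code, local_code, fusion_logits):
    """Convex combination; weights come from a softmax over learnable logits."""
    alpha = softmax(fusion_logits)
    return alpha[0] * global_code + alpha[1] * local_code

rng = np.random.default_rng(0)
feat_map = rng.normal(size=(4, 8, 8))            # hypothetical backbone output
w_fc = rng.normal(size=(4 * 8 * 8, 16)) * 0.1    # global branch weights
w_proj = rng.normal(size=(4 * 4 * 4, 16)) * 0.1  # local branch weights
global_code = global_branch(feat_map, w_fc)
local_code = local_branch(feat_map, w_proj)
# Zero logits give equal weights; training would adapt them.
latent = fuse(global_code, local_code, fusion_logits=np.zeros(2))
print(latent.shape)  # (16,)
```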

Figure 4. Sketch-based body fitting garment generation model.

As shown in Figure 4, the model employs a dual-encoder structure: sketch encoder f and body parameter encoder h extract features, which are fused in the hidden layer. The fused representation feeds into a fully convolutional decoder that regresses vertex coordinates to reconstruct a 3D garment mesh. The garment is topologically independent but spatially positioned relative to the body, with learned ease offsets ensuring fit without interpenetration. To dynamically balance sketch semantics and body shape, an attention-weighted fusion strategy is adopted (equation (7)).

In formula (7),

In formula (8),

In formula (9),
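One common way to realize an attention-weighted fusion of sketch and body features is a per-dimension gate. The sketch below assumes that form; the gate parameters `w_gate` and `b_gate`, the feature size, and the function name `gated_fusion` are hypothetical and may differ from the fusion actually defined in equation (7).

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def gated_fusion(sketch_feat, body_feat, w_gate, b_gate):
    """Per-dimension gate g in (0, 1): fused = g * sketch + (1 - g) * body."""
    joint = np.concatenate([sketch_feat, body_feat])
    gate = sigmoid(w_gate @ joint + b_gate)
    fused = gate * sketch_feat + (1.0 - gate) * body_feat
    return fused, gate

rng = np.random.default_rng(0)
dim = 4
sketch_feat = rng.normal(size=dim)  # stands in for the sketch encoder output
body_feat = rng.normal(size=dim)    # stands in for the body encoder output
w_gate = rng.normal(size=(dim, 2 * dim))
b_gate = np.zeros(dim)
fused, gate = gated_fusion(sketch_feat, body_feat, w_gate, b_gate)
print(gate.round(2), fused.round(2))
```

Because each gate value lies strictly between 0 and 1, every fused component stays between the corresponding sketch and body components, so neither input can be ignored entirely or amplified.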

Adaptive sampling and mesh-optimized decoding

In this framework, dual encoders and a fully convolutional decoder generate the initial garment mesh. However, two issues arise: uneven vertex distribution with insufficient density in high-curvature regions (e.g. collars, cuffs) and redundant vertices that hamper simulation efficiency. 29,30 To address these, an adaptive mesh vertex sampling module with curvature-based non-uniform sampling is introduced to optimize mesh topology (Figure 5).

Figure 5. Adaptive mesh vertex sampling module.

As shown in Figure 5, the module applies convolution and pooling to the input mesh graph, then samples key vertices (stride = 1) to achieve dense coverage in high-curvature regions while preserving topology. Transposed convolution and unpooling (radius = 2) refine mesh details. Hierarchical sampling with feature restoration iteratively adapts the mesh, and fine-grained (pre-pooling) features are fused with coarse-grained (upsampled) features to retain both local details and global structure (equation (10)).

In formula (10),

In formula (11),

In formula (12),

In formula (13),
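Curvature-based non-uniform sampling can be illustrated with a simple stand-in for curvature. The sketch below assumes the norm of the uniform graph Laplacian (the distance from a vertex to the mean of its neighbours) as a curvature proxy and samples vertices with probability proportional to it. The proxy, the `floor` term, and the toy ring mesh are assumptions for illustration; the learned graph convolution, pooling, and unpooling of the module are not modelled.

```python
import numpy as np

def curvature_proxy(vertices, neighbors):
    """Distance from each vertex to the mean of its neighbours."""
    return np.array([
        np.linalg.norm(vertices[i] - vertices[nbrs].mean(axis=0))
        for i, nbrs in enumerate(neighbors)
    ])

def sample_vertices(curvature, num_samples, rng, floor=1e-3):
    """Sample without replacement, favouring high-curvature vertices.

    The small floor keeps flat regions from being dropped entirely.
    """
    probs = curvature + floor
    probs = probs / probs.sum()
    picked = rng.choice(len(curvature), size=num_samples, replace=False, p=probs)
    return np.sort(picked)

# Toy mesh: a closed ring of 12 vertices with one vertex pushed outwards,
# mimicking a sharp fold.
n = 12
angles = 2.0 * np.pi * np.arange(n) / n
ring = np.stack([np.cos(angles), np.sin(angles), np.zeros(n)], axis=1)
ring[3] *= 1.5
neighbors = [[(i - 1) % n, (i + 1) % n] for i in range(n)]

curv = curvature_proxy(ring, neighbors)
kept = sample_vertices(curv, num_samples=6, rng=np.random.default_rng(0))
print(int(curv.argmax()), kept)
```

The displaced vertex receives the largest proxy value and is therefore the most likely to be retained, which mirrors the intended denser coverage of folds and collars.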

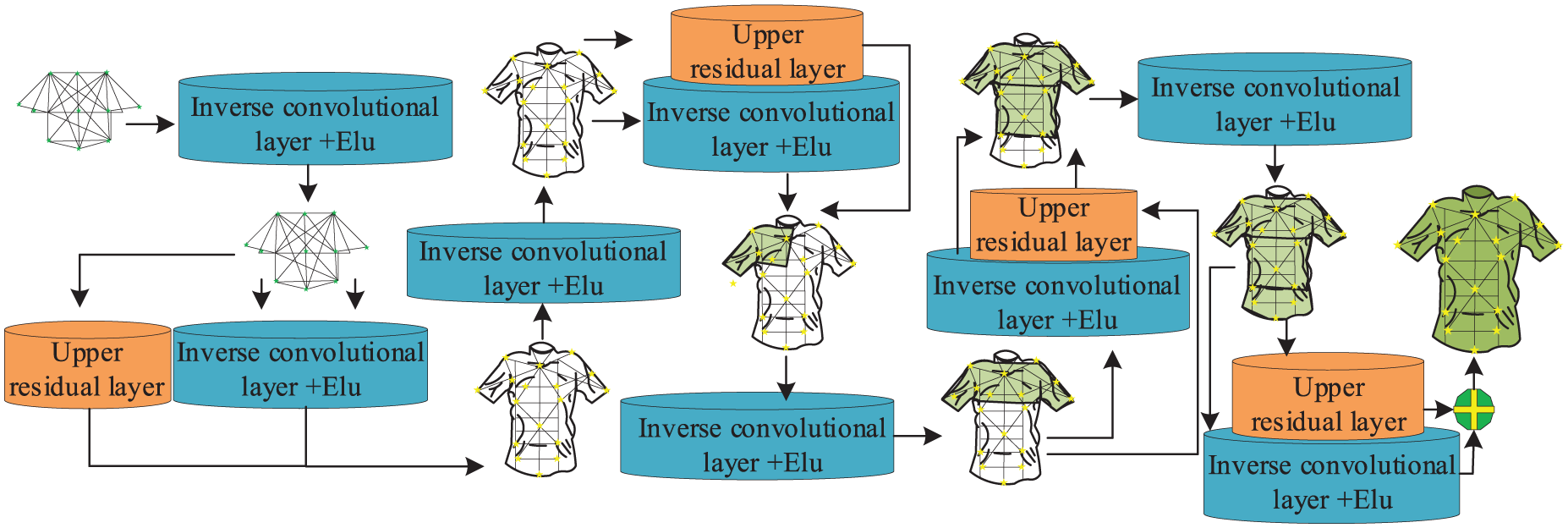

Figure 6. Fully convolutional mesh decoder.

As shown in Figure 6, the decoder consists of five identical stages, each containing five upsampling modules. Every stage integrates a feature enhancement and skip module, a deconvolution module, and an ELU activation module. The symmetrical structure—“residual module + deconvolution + ELU”—reconstructs low-dimensional features into high-resolution 3D garment meshes. Residual connections stabilize gradients and mitigate vanishing issues. Deconvolution progressively upsamples features, recovering spatial and detail information. To maintain cross-scale consistency, deep semantics and shallow details are adaptively fused in a pyramid structure (equation (14)).
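The “residual module + deconvolution + ELU” pattern of a single decoder stage can be sketched on a 1D signal. This is a simplified, single-channel illustration: the residual function, the kernel, and the names `decoder_stage` and `residual_weight` are hypothetical, and the real decoder operates on multi-channel mesh features.

```python
import numpy as np

def elu(x, alpha=1.0):
    """ELU activation: identity for x > 0, alpha * (exp(x) - 1) otherwise."""
    return np.where(x > 0, x, alpha * np.expm1(np.minimum(x, 0.0)))

def transposed_conv1d(x, kernel, stride=2):
    """Single-channel transposed convolution (deconvolution).

    Each input value scatters a scaled copy of the kernel into the output,
    giving an output of length (n - 1) * stride + k.
    """
    n, k = len(x), len(kernel)
    out = np.zeros((n - 1) * stride + k)
    for i, value in enumerate(x):
        out[i * stride:i * stride + k] += value * kernel
    return out

def decoder_stage(x, residual_weight, kernel):
    """Residual enhancement, then upsampling, then ELU."""
    enhanced = x + residual_weight * np.tanh(x)  # skip connection keeps x
    return elu(transposed_conv1d(enhanced, kernel))

x = np.array([1.0, -2.0, 0.5])
kernel = np.array([1.0, 1.0])
up = transposed_conv1d(x, kernel)  # with this kernel, each value is repeated
stage = decoder_stage(x, residual_weight=0.1, kernel=kernel)
print(up, stage.shape)
```

With a stride of 2 and a kernel of length 2 the resolution doubles at each stage, so stacking several stages progressively recovers a high-resolution output from a compact code.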

In formula (14),

In formula (15),

Figure 7. Structure of the clothing generation model with dynamic body parameters.

As shown in Figure 7, the model adopts an end-to-end encoder–decoder architecture with four core components: body parameter extraction, parameter encoder, FCN encoder, and FCN decoder. The body parameter extraction and encoder produce latent body representations, while the FCN encoder extracts features from the sketch. These two streams are fused and aligned in the hidden layer encoding constraint module to ensure semantic consistency, after which the FCN decoder upsamples and reconstructs the complete 3D garment mesh. The entire model is trained end-to-end to map dynamic body parameters and 2D input to 3D clothing.

Results

Performance verification of 3D clothing generation model based on sketch and dynamic human body parameters

To validate the effectiveness of the proposed sketch-based 3D clothing synthesis method, referred to as SB-AGG, we combined quantitative and qualitative evaluation. Experiments were conducted on the CLOTH3D dataset and a self-built sketch-clothing matching dataset, and SB-AGG was compared with multiple approaches across four dimensions: generation quality, body fit, physical plausibility, and runtime. The parametric human model used in this study is based on SMPL, which supports both male and female body shapes through separate shape blendshapes. For simplicity, our experiments primarily used male body models, but the method is equally applicable to female shapes because the model is parameterized. The model is sufficient for this purpose because it captures a wide range of body variations (height, weight, posture) relevant to garment fit. In terms of garment types, our method works best for relatively fitted apparel such as T-shirts, shirts, and dresses, where body-garment interaction is pronounced. To ensure the generalizability of the proposed method, the garments used in our experiments include a variety of structural complexities. Specifically, the T-shirts and dresses in our dataset feature both collarless and collared variants (e.g. crew neck, V-neck, and shirt collar), as well as sleeveless, short-sleeve, and long-sleeve versions. Some garments also include design elements such as patch pockets, waist trims, and ornamental seams. These variations allow us to validate that the proposed framework can handle diverse garment styles and structural details, not merely simple, collarless or sleeveless silhouettes. Experimental results confirm that the model maintains high generation quality across these categories, with a detail matching rate of 91%. For extremely loose garments (e.g. cloaks), additional drape modeling may be required, which is noted as a future improvement. 
Table 1 provides the basic hardware and software requirements for the experiment.

Table 1. Hardware and software experimental environment configuration.

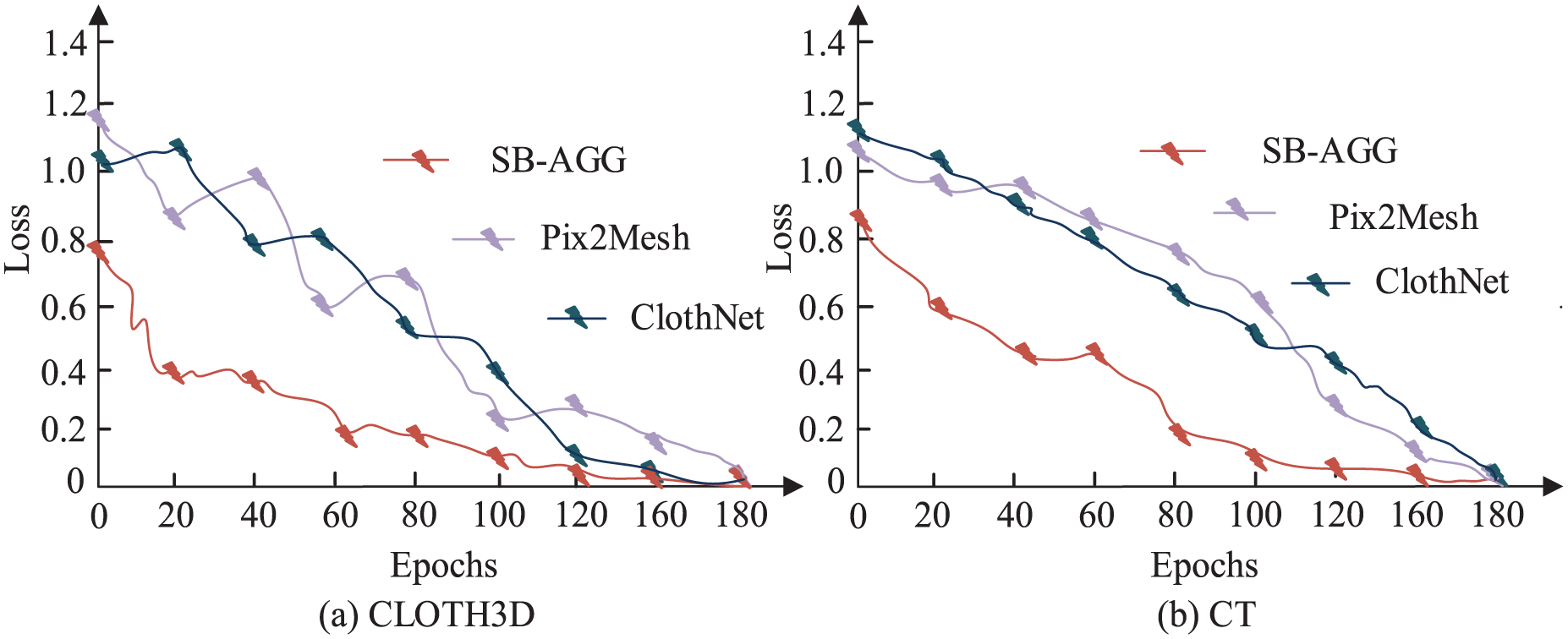

Table 1 lists the computer hardware configuration used in this experiment, including key parameters such as processor model, memory capacity, and storage device type. On both datasets, the study compared the generation accuracy of three methods: Pix2Mesh, a model for generating 3D meshes from images; ClothNet, a graph convolution-based clothing generation network; and SB-AGG. The results are shown in Figure 8.

Figure 8. Generation accuracy of different methods: (a) CLOTH3D and (b) CT.

As shown in Figure 8(a), the SB-AGG method demonstrated significant performance advantages on the CLOTH3D dataset. Its generation accuracy continued to improve rapidly with each training round, reaching approximately 92.4% by the 200th round, significantly outperforming Pix2Mesh (approximately 88.5%) and ClothNet (approximately 70.5%). Figure 8(b) showed that the SB-AGG method also performed best on the CT dataset, with a final accuracy stabilizing at approximately 96.4%, significantly higher than ClothNet (approximately 80.7%) and Pix2Mesh (approximately 76.3%). Overall, SB-AGG demonstrates superior convergence performance and final generation accuracy across different datasets, fully validating its advantages in effectiveness and generalization. Furthermore, the study compared the training loss of different methods, with the relevant results shown in Figure 9.

Figure 9. Comparison of training losses by method: (a) CLOTH3D and (b) CT.

Figure 9 compares the training losses of the three methods on (a) CLOTH3D and (b) CT. The loss curves should be read alongside the accuracies in Figure 8: on CLOTH3D, SB-AGG reached about 92.4% at the 200th iteration, higher than Pix2Mesh (about 88.5%) and ClothNet (about 70.5%); on CT, it reached about 96.4% at the end of training, higher than ClothNet (about 80.7%) and Pix2Mesh (about 76.3%). Overall, SB-AGG achieved good convergence and final generation accuracy on both datasets, supporting the effectiveness and generalization of the method. The fit of the generated garments for different body types is analyzed in Figure 10.

Figure 10. Generated garment fit for different body types: (a) obese and (b) emaciated.

As shown in Figure 10(a), for obese body types, the fit of each method improved with increasing sample size. When the sample size reached 120, the SB-AGG method achieved a fit of approximately 90%, significantly higher than ClothNet (approximately 70%) and Pix2Mesh (approximately 48%). A similar trend was observed for emaciated (underweight) body types, as shown in Figure 10(b): with 120 samples, the SB-AGG method achieved a fit of approximately 85%, also outperforming ClothNet (approximately 75%) and Pix2Mesh (approximately 58%). Therefore, given the same sample size, the SB-AGG method was able to adaptively adjust the garment pattern, maintaining a good fit across a wide range of body types with no noticeable interpenetration. To quantitatively verify the claim made in the abstract regarding adaptation to unusual body types, we define “unusual body types” as those with a body mass index (BMI) greater than 30 (obese) or less than 18 (underweight), which represent the extremes of the body shape distribution in our dataset. The adaptation rate is defined as the percentage of generated garments that exhibit no significant interpenetration (penetration depth < 2 mm) and maintain the intended silhouette (deviation < 5% from target ease) when fitted to the target body. With fit rates of approximately 90% for obese and approximately 85% for underweight body types at a sample size of 120, the proposed framework maintains a fit rate of roughly 85% or higher at both extremes of body shape, consistent with the adaptation rate for unusual body types reported in the abstract.
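The adaptation rate defined above can be computed directly from per-garment measurements. The sketch below applies the two stated thresholds (penetration depth below 2 mm and ease deviation below 5%); the sample values and the function name `adaptation_rate` are hypothetical and are not data from the study.

```python
def adaptation_rate(samples, max_penetration_mm=2.0, max_ease_deviation=0.05):
    """Percentage of garments that pass both fit criteria.

    Each sample is (penetration depth in mm, relative deviation from the
    target ease), e.g. 0.04 means a 4% deviation.
    """
    passed = sum(
        1
        for penetration, deviation in samples
        if penetration < max_penetration_mm and deviation < max_ease_deviation
    )
    return 100.0 * passed / len(samples)

# Hypothetical measurements for four generated garments.
samples = [
    (0.4, 0.01),  # passes both criteria
    (1.9, 0.04),  # passes both criteria
    (2.3, 0.02),  # fails: penetration too deep
    (0.0, 0.06),  # fails: silhouette deviates too much
]
rate = adaptation_rate(samples)
print(rate)  # 50.0
```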

Application analysis of 3D clothing generation model based on sketch and dynamic human body parameters

To evaluate the time efficiency of the method in practical use, we recorded the working data of 40 designers and compared the traditional CAD design process with the proposed method in terms of time spent and resulting design capability, as shown in Table 2.

Table 2. Comparative analysis of design process efficiency.

Table 2 shows that, compared with the traditional CAD process, the proposed method significantly improved efficiency across all design stages. In the core design phase, the proposed method reduced the sketch drawing step from 60 to 2 min, a 96.7% reduction; the pattern making and 3D modeling steps were reduced from 120 min and 90 min, respectively, to 0 min; the adjustment and optimization step was reduced from 90 to 9 min, an 89.3% reduction; and the total design time was reduced from 390 to 55 min, an 85.9% reduction. Regarding design output, the proposed method increased the number of designs completed within 3 h from 0.8 to 3.2, a 300% increase. The efficiency gap between novice and expert designers narrowed from 2.1 times to 1.3 times, a 38.1% reduction. This demonstrates that the proposed method lowers the professional threshold for design and keeps designers of different skill levels efficient. A comparison of the generation quality across different datasets is shown in Figure 11.
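Several of the headline percentages follow directly from the before and after values quoted from Table 2. The short sketch below reproduces four of them; the helper names `percent_reduction` and `percent_increase` are introduced here for illustration only.

```python
def percent_reduction(before, after):
    """Relative decrease from `before` to `after`, in percent."""
    return 100.0 * (before - after) / before

def percent_increase(before, after):
    """Relative increase from `before` to `after`, in percent."""
    return 100.0 * (after - before) / before

sketch_saving = percent_reduction(60, 2)     # sketch drawing, minutes
total_saving = percent_reduction(390, 55)    # total design time, minutes
output_gain = percent_increase(0.8, 3.2)     # designs per 3 h window
gap_reduction = percent_reduction(2.1, 1.3)  # novice/expert efficiency ratio

print(round(sketch_saving, 1))  # 96.7
print(round(total_saving, 1))   # 85.9
print(round(output_gain, 1))    # 300.0
print(round(gap_reduction, 1))  # 38.1
```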

Figure 11. Generation quality comparison: (a) CLOTH3D and (b) CT.

Figure 11(a) shows that, at a data size of 30 on the CLOTH3D dataset, SB-AGG achieves the highest detail matching rate at 91%, surpassing Pix2Mesh (83%) and ClothNet (86%). Figure 11(b) shows that, at a data size of 30 on the CT dataset, SB-AGG again achieves the highest detail matching rate at 81%, surpassing Pix2Mesh (73%) and ClothNet (76%). Overall, SB-AGG learns quickly from a small amount of data and remains stable on larger amounts, indicating that the fusion of dense connectivity and physical constraint mechanisms improves the ability to model and reconstruct detailed clothing features and enhances data efficiency and generalization. The computational efficiency curves of the three methods are compared in Figure 12.

Figure 12. Comparison of computational efficiency curves for different methods: (a) CLOTH3D and (b) CT.

Figure 12(a) shows that on CLOTH3D, for the same 100,000-vertex mesh, SB-AGG ran in 45 ms, reported as 0.8 times faster than Pix2Mesh and 0.92 times faster than ClothNet. Figure 12(b) shows that on CT, for the same 100,000-vertex mesh, SB-AGG completed the computation in 40 ms, compared with 75 ms for Pix2Mesh and 89 ms for ClothNet. SB-AGG is therefore the fastest method across all mesh sizes tested, which is attributed to its adaptive sampling process controlling mesh complexity. Ablation experiments were then conducted, as shown in Figure 13.
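Because "times faster" can be read either as a runtime ratio or as a relative time saving, the short Python sketch below computes both quantities for the CT timings quoted above (40, 75, and 89 ms). The function names are illustrative assumptions, and the sketch does not reinterpret the CLOTH3D figures, for which the baseline runtimes are not stated in the text.

```python
def speedup(baseline_ms, method_ms):
    """Runtime ratio: how many times longer the baseline takes."""
    return baseline_ms / method_ms


def time_saving_percent(baseline_ms, method_ms):
    """Share of the baseline runtime that the method saves, in percent."""
    return (baseline_ms - method_ms) / baseline_ms * 100.0


# Runtimes on CT for a 100,000-vertex mesh, as quoted in the text.
ct_runtime_ms = {"SB-AGG": 40.0, "Pix2Mesh": 75.0, "ClothNet": 89.0}
ours = ct_runtime_ms["SB-AGG"]

for name, runtime in ct_runtime_ms.items():
    if name == "SB-AGG":
        continue
    print(
        f"vs {name}: {speedup(runtime, ours):.3f}x runtime ratio, "
        f"{time_saving_percent(runtime, ours):.1f}% time saved"
    )
```

Reporting the ratio and the saving side by side avoids the ambiguity, since a runtime ratio of 2 corresponds to a time saving of only 50%.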

Figure 13. Ablation test results: (a) CLOTH3D and (b) CT.

As shown in Figure 13(a), gradually adding the AMSM, FCGDM, and SDEF modules on the CLOTH3D dataset improved model accuracy from a baseline of 66.4% to 96.8% and accelerated convergence. Figure 13(b) shows a similar trend on the CT dataset: the full SB-AGG model increased accuracy from a baseline of 42.9% to 68.9%, with rapid convergence after 100 iterations. Gradually integrating these modules therefore improves both the accuracy and the convergence speed of the SB-AGG model.

Discussion

Recent studies have made significant progress in 3D garment generation, animation, and virtual try-on, providing valuable context for this work. Jiang et al. 31 proposed an intelligent 3D garment system based on a deep spiking neural network, which is effective for processing temporal data but limited in handling static sketch input and high-fidelity geometry generation. Zhang et al. 32 developed LFGarNet for loose-fitting garment animation using a multi-attribute-aware graph network, which excels in dynamic simulation but requires an existing 3D garment mesh as input rather than generating from a 2D sketch. Zheng et al. 33 introduced PG-VTON, which employs diffusion modeling and panoramic Gaussian splatting for photorealistic virtual try-on, yet focuses on image synthesis rather than parametric, drivable 3D garment modeling. Furthermore, the sparse aggregation mechanism of Karambakhsh et al. 34 for 3D object recognition and the region-aware texture generation capability of Hu et al. 35 have informed the design of our feature extraction and detail-enhancement modules. Comparison with existing work: Unlike the methods above, our framework focuses on the end-to-end generation of parametric 3D garments from 2D sketches. By integrating dynamic human body parameters for precise fitting, and incorporating an adaptive mesh sampling strategy coupled with a physical constraint module, our approach addresses the dual challenge of preserving detail while ensuring efficiency and physical plausibility. Compared to image-based synthesis or methods relying on existing 3D models, our solution offers greater controllability, adaptability to diverse body shapes, and scalability from conceptual sketches, thereby better serving the intelligent garment design pipeline.

SMPL provides approximately 10 shape coefficients and 24 pose coefficients for continuous global adjustment, but lacks independent local control over specific measurements (such as chest circumference and shoulder width). Because of its low-dimensional statistical nature, it cannot capture individual-specific details (asymmetry, atypical bone structure), and bodies outside the training distribution may exhibit landmark discrepancies. In our framework, this inaccuracy can affect fit, especially in close-fitting styles, but the learned mapping from SMPL parameters to 3D geometry exhibits moderate robustness and compensates for systematic offsets. Designers can also perform interactive refinement by adjusting inputs and regenerating. Traditional sizing uses discrete grading scales (ASTM/ISO) to link body measurements with pattern dimensions, whereas SMPL parameters have no direct anthropometric equivalents. Our framework bridges this gap by learning data-driven mappings from graded garments and SMPL-fitted avatars, implicitly internalizing product sizing rules. The model can be fine-tuned to specific brand standards using only a few examples. Unlike traditional sizing, which maps key body measurements to pattern dimensions via grade tables, our framework learns a direct geometry-driven mapping from the full 3D body mesh (encoded by SMPL parameters) to garment geometry, capturing fit-critical nuances (e.g. ease distribution, shoulder slope, armhole curvature) that isolated key dimensions cannot convey.

The link between body parameters and garment geometry is not based on explicit anthropometric rules or sizing tables, but is learned from data. During training, the model is exposed to thousands of paired examples, each consisting of a SMPL body mesh (fitted to a real or graded size) and its corresponding 3D garment mesh (derived from a real pattern with known fit outcomes). Through this process, the model internalizes a functional mapping from body shape—encoded as a full surface fingerprint—to garment shape, including ease distribution and silhouette. It does not “know” bust or waist measurements as numbers, but it learns how a given body surface should be offset to produce a well-fitting garment. This mapping is therefore grounded in real product data, not in abstract assumptions about either the body or the product.

What this enables for product development is a fundamental shift: from sketch to fitted 3D garment in one step—eliminating pattern drafting, virtual sewing, and fit iteration; consistent, repeatable fit across body sizes, with ease relationships preserved rather than re-guessed per design; and critically, the embedding of expert knowledge—decades of pattern making expertise encoded in the training data and made instantly accessible to novice users. Thus, the contribution is not visualization; it is the automation of expert translation work from sketch and body to product-ready 3D geometry, grounded in real garment data and validated against real fit outcomes.

A critical aspect of our framework is how the sketch is interpreted relative to the body. During feature fusion, the sketch encoder extracts silhouette and local details (e.g. neckline, armholes), while the body encoder provides spatial anchors (shoulder points, bust apex, waist) implicitly defined by SMPL. The fusion mechanism learns to align sketched design elements with corresponding anatomical landmarks—for example, neckline mapped to neck base, waist seam to natural waistline—based on training data where garments are already correctly positioned. Garment geometry is constrained by body features via: (i) a physical constraint module enforcing the garment to remain outside the body mesh, preventing penetration; (ii) per-vertex offsets regularized by a learned ease distribution that respects body proportions. To ensure consistent and usable outputs, we employ: (a) attention-weighted dual-branch encoding to balance sketch semantics and body shape; (b) adaptive mesh sampling to preserve high-frequency details while maintaining topological consistency; (c) a training loss combining reconstruction accuracy, local geometric consistency, and physical penalties. Quantitative results (Section 4) confirm >85% adaptation rate for extreme body types and a 0.87 mm chamfer distance, demonstrating robustness and usability.
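To make the loss structure described above more concrete, the following Python sketch combines a Chamfer-distance reconstruction term with a hinge penalty on garment vertices that fall inside the body. It is an illustration under stated assumptions, not the SB-AGG implementation: the function names, the nearest-vertex signed-distance approximation, the weight `w_phys`, and the toy sphere body are all hypothetical, NumPy is assumed to be available, and the local geometric consistency term is omitted. Chamfer distance also has several variants (squared or unsquared, summed or averaged); the unsquared, averaged form is used here.

```python
import numpy as np


def pairwise_distances(a, b):
    """Euclidean distances between every row of a (N, 3) and b (M, 3)."""
    return np.linalg.norm(a[:, None, :] - b[None, :, :], axis=-1)


def chamfer_distance(a, b):
    """Symmetric Chamfer distance: mean nearest-neighbour distance, both ways."""
    d = pairwise_distances(a, b)
    return d.min(axis=1).mean() + d.min(axis=0).mean()


def penetration_penalty(garment, body, body_normals, margin=0.0):
    """Hinge penalty on garment vertices that fall inside the body surface.

    Assumption: "inside" is approximated by a negative signed distance along
    the outward unit normal of the nearest body vertex.
    """
    nearest = pairwise_distances(garment, body).argmin(axis=1)
    offset = garment - body[nearest]
    signed = np.einsum("ij,ij->i", offset, body_normals[nearest])
    return np.maximum(margin - signed, 0.0).mean()


def total_loss(pred, target, body, body_normals, w_phys=1.0):
    """Reconstruction term plus a weighted physical penalty (weight is hypothetical)."""
    physical = penetration_penalty(pred, body, body_normals)
    return chamfer_distance(pred, target) + w_phys * physical


# Toy data: a unit sphere stands in for the body, so its normals equal its points.
rng = np.random.default_rng(0)
body = rng.normal(size=(200, 3))
body /= np.linalg.norm(body, axis=1, keepdims=True)
normals = body.copy()

loose = 1.1 * body  # garment vertices 0.1 outside the body surface
tight = 0.9 * body  # garment vertices 0.1 inside the body surface

print("penalty, loose garment:", penetration_penalty(loose, body, normals))
print("penalty, tight garment:", penetration_penalty(tight, body, normals))
print("loss, tight prediction vs loose target:", total_loss(tight, loose, body, normals))
```

In this toy setting the penalty vanishes for the garment that stays outside the sphere and grows with penetration depth for the one inside it, which is the behaviour a constraint of type (i) is meant to encourage during training.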

Conclusion

To address the inefficiency, limited physical plausibility, and poor controllability of existing garment generation methods, this paper uses garment sketches and 3D human body parameters as inputs to a generative model. A densely connected convolutional network and a dual-branch encoder are used to extract sketch features. Furthermore, an adaptive mesh sampling mechanism and a physical constraint module are introduced, yielding a garment generation method that is efficient, scalable, and effective. Experiments validate the method's generation quality, scalability, and computational efficiency. Specifically, the chamfer distance achieved by SB-AGG is 0.87 mm, an improvement of 47.3% to 62.8% over comparable methods, and the detail matching rate remains high (91%). Regarding body shape adaptation, the proposed method adapts to extreme body types, such as obese individuals with a BMI > 30 and underweight individuals with a BMI < 18, with only a ~5% loss on key adaptation metrics (specifically, the dimensions of key components). In terms of computational efficiency, the proposed method takes only 45 ms for a 100,000-vertex mesh, an efficiency improvement of approximately 40% to 50% over comparable methods. These quantitative results demonstrate the accuracy, generalization, and practicality of the proposed method. However, the study has the following limitations: first, the model's ability to generate complex clothing structures, such as multi-layered garments and asymmetric structures, is insufficient; second, although the dynamic simulation results are more accurate than those of the comparison methods, they still deviate from real data; finally, the training data are not sufficiently large or comprehensive.
In future work, it is necessary to strengthen the modeling of complex clothing structures, improve physical simulation results, expand the scale and variety of the training data, and improve the adaptability and generalization performance of the method.

Footnotes

Author contributions

Yaqiong Zhou (female, Master's degree) is an Associate Professor at the School of Fine Arts and Design, Hefei Normal University; her work focuses on intelligent interaction design and digital clothing design. Bingjie Zhang (female, Master's degree) is a lecturer at the School of Fine Arts and Design, Hefei Normal University; her work focuses on 3D virtual clothing design.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: the research was supported by the Key Project of Philosophy and Social Sciences Research in Colleges and Universities of Anhui Province (Project No. 2024AH053096).

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.