Abstract

As evaluation methodologies evolve, there is increasing emphasis on understanding not only whether a programme works but also how and why its outcomes are achieved. Yet existing literature offers limited guidance on integrating multiple qualitative and quantitative data sets. This article introduces the Adapted Extended Pillar Integration Process (Adapted ePIP) as a structured approach for integrating complex mixed methods data. Using an evaluation of inquiry-based learning in speech-language pathology education, the article illustrates how the Adapted ePIP supports systematic integration across more than three data sources, thereby overcoming a key limitation of the original ePIP. This contribution advances mixed methods research by demonstrating a replicable process for developing meta-themes and meta-inferences that strengthen legitimation and enhance the interpretive depth of programme evaluations.

Introduction

The integration of qualitative and quantitative approaches is central to mixed methods research. It allows researchers to develop more comprehensive understandings of complex phenomena that cannot be fully captured by a single methodological approach (Plano Clark, 2017). Integration may occur at various stages of a study, such as during the development of theoretical frameworks, during data collection and analysis, or at the level of interpretation and reporting. Researchers must select an integration strategy that aligns with their study’s philosophical assumptions, research question, methodological design, and types of data collected (Plano Clark, 2017). Despite its importance, achieving meaningful integration remains a persistent challenge in mixed methods research. A common pitfall is the tendency to present qualitative and quantitative findings side by side without fully synthesising them or ensuring legitimation.

This challenge is especially evident in complex programme evaluations, which require the synthesis of multiple data types collected across stakeholder groups, time points, and intervention levels. Programme evaluations aim to capture both processes and outcomes, often requiring integration strategies that can manage large and diverse data sets while maintaining coherence (Donaldson, 2022; Skivington et al., 2021). However, most existing integration techniques, including those using joint displays, were developed for studies that include only two or three data sources (Gauly et al., 2024; Hall & Mansfield, 2023; Johnson et al., 2019). As a result, they do not offer the flexibility or capacity needed for complex programme evaluations.

Crucially, integration also plays a key role in ensuring the legitimation of mixed methods results. Legitimation refers to the extent to which qualitative and quantitative data sets are meaningfully combined and interpreted in ways that uphold the integrity and credibility of the research (Onwuegbuzie et al., 2011). Rather than occurring at a single point, legitimation is a dynamic process that must be considered throughout all aspects of a study (Onwuegbuzie et al., 2011). As such, integration is not only a technical activity but also a methodological and epistemological imperative. Perez et al. (2023) further emphasise that legitimation provides a comprehensive framework for assessing quality across all phases of a mixed methods study. Their findings suggest that legitimation helps researchers navigate integration challenges by addressing potential threats to validity, encouraging reflexivity, and ensuring that meta-inferences are both defensible and grounded in the study’s design. This expanded view highlights the centrality of legitimation in producing high-quality, integrated findings in mixed methods research.

One key mechanism through which legitimation is achieved is the development of meta-themes and meta-inferences during integration. According to Creswell and Plano Clark (2018), researchers should aim to produce meta-themes and meta-inferences when integrating data sets. Meta-themes are overarching patterns that emerge across qualitative and quantitative data sets, while meta-inferences represent higher-level interpretations that unify findings and offer deeper insights into the research question (Riazi & Farsani, 2024). To support this synthesis, the literature describes several strategies to aid researchers in integrating their findings in a transparent and systematic manner.

To address the lack of integration strategies suited to more than three data sets, this paper presents the Adapted ePIP framework using a worked example drawn from a programme evaluation. In Barber’s (2025) doctoral research, which explored the impact of inquiry-based learning on the development of critical thinking skills in speech-language pathology (SLP) students, integration was essential for understanding both process and outcome data, but it also presented a methodological challenge: navigating pillar building across a much larger number of data sets.

Established Strategies for Integration

Three main strategies are described in the literature to support integration during interpretation and reporting. The first is integration through narrative. This approach involves presenting qualitative and quantitative findings separately while conceptually linking them to illustrate areas of convergence and divergence (Fetters et al., 2013). The second strategy is integration through data transformation. This method requires converting one form of data into another, such as by quantifying qualitative codes or using qualitative interpretation to explain statistical findings (Fetters et al., 2013; Bazeley, 2024). Although this approach facilitates comparison, it may compromise the richness of the original data. The third strategy is the use of joint displays. Joint displays are visual representations, such as matrices or tables, that align qualitative and quantitative findings. This strategy is particularly effective for comparing findings, identifying contradictions, and developing integrated interpretations (Fetters & Tajima, 2022; Johnson et al., 2019).

Of the three approaches, joint displays have gained increasing recognition for their ability to support transparent and structured integration. They visually highlight convergence and divergence in the data, enabling the development of meta-inferences and revealing insights that might remain unnoticed if the data were analysed in isolation (Fetters et al., 2013; Gauly et al., 2024; Johnson et al., 2019). The application of joint displays extends beyond reporting to include data planning and analysis. By organising qualitative and quantitative findings in a matrix or table, researchers can observe patterns, contrasts, and relationships in real time, facilitating comprehensive interpretation (Peters & Fàbregues, 2024). As such, joint displays are now considered integral to mixed methods research, offering a visual tool that enhances the integration and interpretation of complex data sets. Two structured approaches that utilise joint displays in a systematic manner are the Pillar Integration Process (PIP) and the extended Pillar Integration Process (ePIP), both of which provide procedures for aligning and synthesising data across sources.

The Pillar Integration Process (PIP)

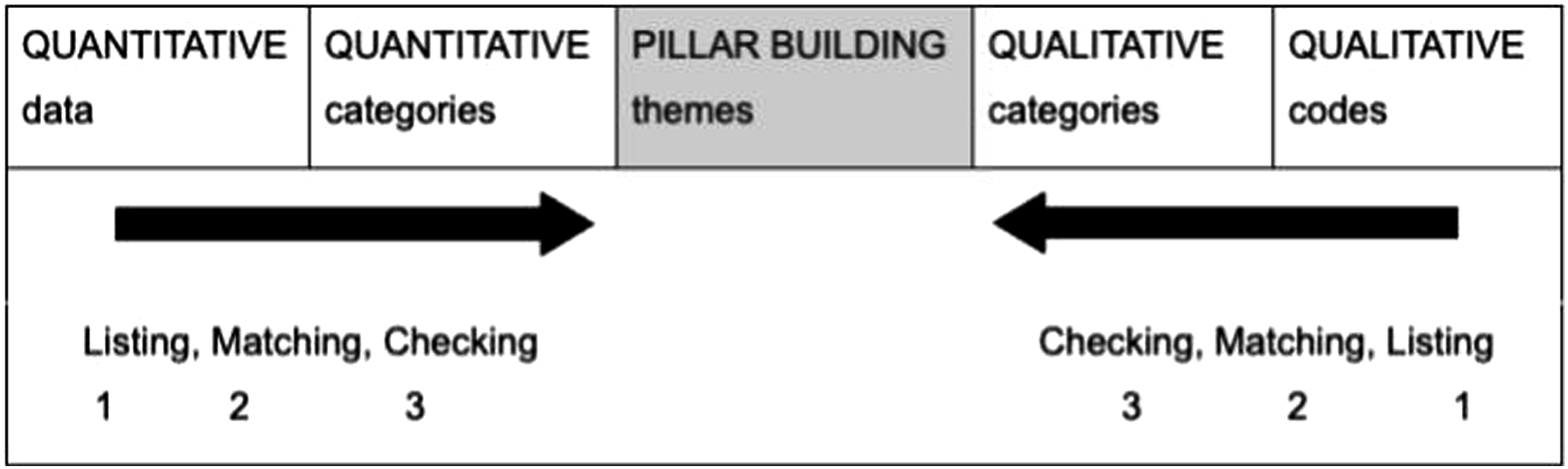

To promote the use of joint displays in mixed methods studies, Johnson et al. (2019) developed the Pillar Integration Process, commonly referred to as PIP. PIP is a systematic approach for integrating one qualitative and one quantitative data set. It consists of four stages.

The first stage is listing. Researchers begin by listing raw or analysed findings from each data set in separate columns of a joint display. The second stage is matching. Findings that address similar ideas or constructs are aligned horizontally across the display. Where there is no corresponding finding, the researcher notes ‘no matching data’. The third stage is checking. During this stage, the joint display is reviewed for accuracy, completeness, and coherence. Researchers refine the data and reflect on emerging patterns. The final stage is pillar building. In this step, the central column of the joint display is used to develop integrated themes, known as pillars, that synthesise the findings from the matched data. Figure 1 illustrates the PIP framework.

Figure 1. The pillar integration process (based on Johnson et al., 2019)
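As a rough illustration only, the four stages can be mimicked with a small data structure. The sketch below is not part of Johnson et al.'s (2019) method: the findings, the construct labels used to align them, and the function names are all hypothetical, and in practice matching and pillar building are interpretive judgements made by the researcher rather than automated steps.

```python
from dataclasses import dataclass
from typing import Dict, List

NO_MATCH = "no matching data"


@dataclass
class Row:
    construct: str     # hypothetical label used only to align findings
    quantitative: str  # finding from the quantitative data set
    pillar: str        # central column, filled in during pillar building
    qualitative: str   # finding from the qualitative data set


def list_and_match(quant: Dict[str, str], qual: Dict[str, str]) -> List[Row]:
    """Stages 1-2: list findings and align those sharing a construct."""
    constructs = list(quant) + [c for c in qual if c not in quant]
    return [
        Row(c, quant.get(c, NO_MATCH), "", qual.get(c, NO_MATCH))
        for c in constructs
    ]


def check(rows: List[Row], quant: Dict[str, str], qual: Dict[str, str]) -> bool:
    """Stage 3: confirm every listed finding appears once in the display."""
    shown_quant = sorted(r.quantitative for r in rows if r.quantitative != NO_MATCH)
    shown_qual = sorted(r.qualitative for r in rows if r.qualitative != NO_MATCH)
    return shown_quant == sorted(quant.values()) and shown_qual == sorted(qual.values())


def build_pillars(rows: List[Row], pillars: Dict[str, str]) -> None:
    """Stage 4: record the researcher's integrated theme for each row."""
    for row in rows:
        row.pillar = pillars.get(row.construct, "")


# Hypothetical findings, invented purely for illustration.
quant_findings = {
    "confidence": "Confidence scores increased post-intervention",
    "attendance": "Most sessions were attended",
}
qual_findings = {
    "confidence": "Participants described feeling more confident",
    "workload": "Participants found the workload demanding",
}

display = list_and_match(quant_findings, qual_findings)
assert check(display, quant_findings, qual_findings)
build_pillars(display, {"confidence": "Growth in confidence"})

for row in display:
    print(f"{row.quantitative} | {row.pillar} | {row.qualitative}")
```

Keeping the pillar as the middle field of each row mirrors the central column of the PIP joint display, and the explicit ‘no matching data’ marker makes gaps visible rather than silently dropping unmatched findings.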

PIP has been utilised in various applied research contexts, including programme evaluation, theory development, and service improvement (Drury et al., 2023; Hall & Mansfield, 2023; Johnson et al., 2019). It is valued for its transparency and potential to reduce observer bias.

Despite its structured approach, PIP is limited in that it can integrate only two data sources. This constraint reduces its usefulness in complex mixed methods studies that involve multiple data sets across different levels or time points. Johnson et al. (2019) highlighted this limitation and noted that the method had limited historical application in mixed methods research. They called for further validation across diverse contexts and recommended the development of more case studies to demonstrate PIP’s potential for enhancing methodological transparency and reducing observer bias. However, since this recommendation, there has been limited empirical follow-up to rigorously evaluate the effectiveness or scalability of PIP.

One of the few studies to respond to this call was conducted by Hall and Mansfield (2023), who tested the applicability of PIP in two case studies evaluating a workplace intervention through a pilot randomised controlled trial and a process evaluation. Their study demonstrated the benefits of using PIP to achieve a more comprehensive understanding of intervention efficacy and to inform future intervention design. Importantly, they advanced the method by considering the philosophical assumptions that underpin mixed methods integration and illustrating how integration can occur both within and across traditionally defined ‘qualitative’ and ‘quantitative’ findings. While their findings supported PIP’s value in fostering interpretive synthesis, they also reinforced the need for further methodological development to enable integration across more complex designs and larger data sets.

Drury et al. (2023) applied an adapted version of PIP for theory development in the context of cancer survivorship. While their work illustrated the potential of the method to support conceptual modelling, the deductive approach they used introduced analytical complexities that may not be suitable for all mixed methods designs. They recommended further refinement of PIP to support diverse theoretical frameworks and emphasised the need for additional guidance on using the method for theory development in health research.

Other applications of PIP, such as that by Amir et al. (2024), have used the method in general practice research without evaluating its effectiveness or reflecting on its methodological contribution. This trend, where PIP is applied descriptively rather than critically, highlights a broader issue in the field: the under-theorisation and under-evaluation of integration strategies in mixed methods research. Peters and Fàbregues (2024) echoed similar concerns in educational technology research, observing that although mixed methods studies are increasingly used to address complex educational problems, explicit and rigorous integration remains rare. In particular, few studies apply recommended strategies such as joint displays, which can result in missed opportunities for generating deeper insights. Their methodological contribution provided practical guidance for integrating data through visual joint displays at multiple stages of the research process, including theoretical framing, analysis, interpretation, and reporting.

Together, these studies demonstrate both the promise and the persistent limitations of PIP. Although it continues to be applied across disciplines, its capacity to integrate only two data sets and the lack of critical evaluation in many studies limit its broader methodological utility.

The Extended Pillar Integration Process (ePIP)

To address PIP’s restriction to two data sets, Gauly et al. (2024) introduced the Extended Pillar Integration Process (ePIP). This method expands on PIP by enabling the integration of three data sets, whether qualitative or quantitative. It also introduces additional stages to accommodate the increased complexity of handling multiple data sources.

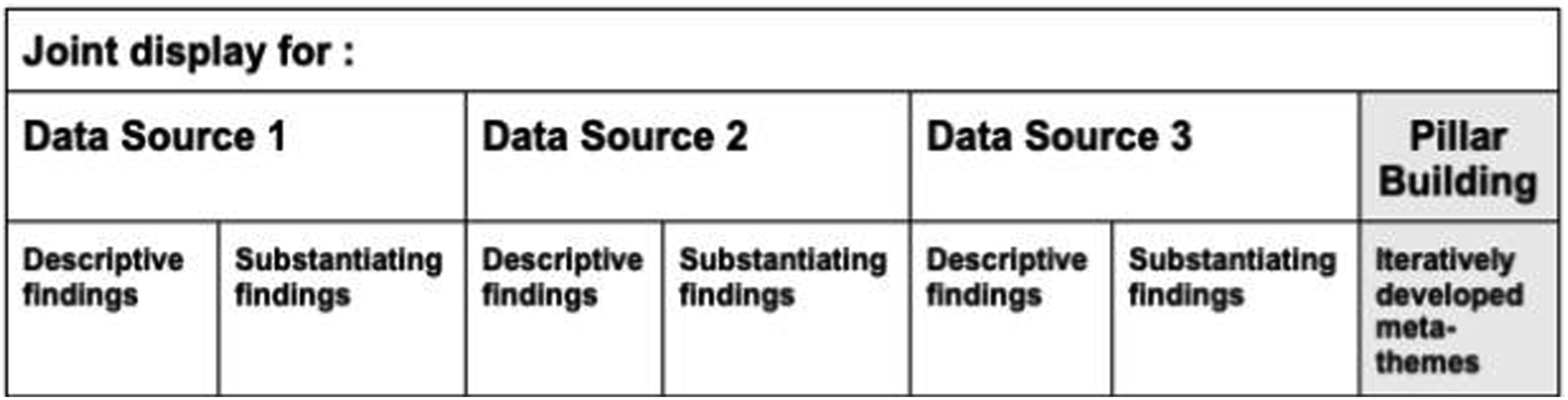

The ePIP consists of five stages. The first is listing, where descriptive findings from the first data set are recorded. Alongside each finding, the researcher begins to develop preliminary meta-themes. The second stage is matching. Findings from the second and third data sets are compared and aligned with those from the first data set. When related findings are identified, they are grouped in the same row of the joint display. Unrelated findings are added to new rows. The third stage is checking, which involves reviewing the joint display for completeness and accuracy. This process is supported by peer debriefing to enhance validity and reduce bias. The fourth stage is pillar building, which involves developing overarching meta-themes. At this point, meta-themes may be grouped into broader categories to support a more organised synthesis. The final stage is the write-up. This includes producing a summary table of integrated findings, noting their sources and any gaps, and presenting the finalised meta-themes. Figure 2 illustrates the ePIP.

Figure 2. The joint display used for the extended pillar integration process (based on Gauly et al., 2024)
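The row logic of the matching stage and the summary table of the write-up stage can be sketched in a few lines. This is an illustrative sketch under stated assumptions, not Gauly et al.'s (2024) implementation: the source labels, findings, and function names are hypothetical, and the decision that two findings are related is supplied by the researcher through the `related_row` argument rather than inferred by the code.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional

# Hypothetical labels; each set may be qualitative or quantitative.
SOURCES = ("data_set_1", "data_set_2", "data_set_3")


@dataclass
class Row:
    meta_theme: str = ""  # preliminary meta-theme, refined as matching proceeds
    findings: Dict[str, List[str]] = field(
        default_factory=lambda: {s: [] for s in SOURCES}
    )


def add_finding(
    display: List[Row],
    source: str,
    finding: str,
    related_row: Optional[int] = None,
    meta_theme: str = "",
) -> None:
    """Place a finding in a related row, or open a new row if none is related."""
    if related_row is None:
        display.append(Row(meta_theme=meta_theme))
        related_row = len(display) - 1
    display[related_row].findings[source].append(finding)


def summary_table(display: List[Row]) -> List[dict]:
    """Write-up: report each meta-theme with its sources and any gaps."""
    return [
        {
            "meta_theme": row.meta_theme,
            "sources": [s for s in SOURCES if row.findings[s]],
            "gaps": [s for s in SOURCES if not row.findings[s]],
        }
        for row in display
    ]


display: List[Row] = []
# Listing: findings from the first data set, each with a preliminary meta-theme.
add_finding(display, "data_set_1", "hypothetical finding A", meta_theme="preliminary theme A")
add_finding(display, "data_set_1", "hypothetical finding B", meta_theme="preliminary theme B")
# Matching: related findings join an existing row; unrelated ones open a new row.
add_finding(display, "data_set_2", "hypothetical finding C", related_row=0)
add_finding(display, "data_set_3", "hypothetical finding D", related_row=0)
add_finding(display, "data_set_3", "hypothetical finding E")

summary = summary_table(display)
for entry in summary:
    print(entry)
```

Recording gaps alongside sources keeps rows that draw on a single data set visible in the write-up, which is where the checking stage and peer debriefing would focus.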

The ePIP method retains the visual nature of the original PIP but offers more flexibility for moderately complex studies. Gauly et al. (2024) demonstrated the application of ePIP in the fields of health sciences and automotive human factors, underscoring its potential adaptability across disciplines.

However, several limitations remain. The ePIP is currently designed for the integration of up to three data sets, and its use in more complex designs involving a greater number of data sources has not yet been formally evaluated. According to the ePIP developers, while the tool could potentially be extended to accommodate additional data sources by adding extra columns as described, this approach requires confirmation through future research. Although developing and refining meta-themes throughout the integration process can enhance analytical depth, it may introduce procedural complexity for researchers who are less familiar with advanced integration techniques. Moreover, Gauly et al. (2024) highlight that the use of peer debriefing may vary depending on the expertise and availability of peers, which is a helpful consideration when planning quality assurance processes. These challenges highlight the need for further refinement of the ePIP and the development of more flexible integration strategies to support complex, mixed methods designs.

Limitations of Current Integration Frameworks for Complex Programme Evaluations

Despite recent methodological advances in integration for mixed methods studies, researchers conducting longitudinal or multilevel mixed methods studies continue to face significant challenges in integrating more than three data sets (Gauly et al., 2024). These challenges are particularly evident in complex programme evaluations. PIP and ePIP were not originally designed for studies with multiple phases or stakeholders, and they offer little guidance on how to synthesise findings across numerous sources while maintaining methodological coherence.

Programme evaluation refers to a structured theoretical approach used to initiate, document, and analyse change within organisations or communities (Donaldson, 2022; Skivington et al., 2021). It extends beyond assessing whether an intervention is effective by examining how it works, why it produces certain outcomes, and which contextual factors influence its implementation. In this context, there is an emphasis on evaluating both the process and outcomes of a programme. Process evaluation explores how a programme is implemented in practice, with attention to the contextual factors that shape participants’ experiences (Donaldson, 2022; Skivington et al., 2021). Outcome evaluation, in contrast, focuses on the effects or changes resulting from the programme and how these outcomes manifest in real-world conditions. Importantly, these two dimensions are not confined to isolated stages of the research. Instead, they occur concurrently and are examined throughout the entire evaluation to capture the dynamic and evolving nature of complex interventions.

Given this complexity, programme evaluations often require the use of multiple data sets collected across different stakeholder groups, time points, and educational or clinical settings. These data are inherently interconnected and must be synthesised in a way that reflects the multifaceted nature of the intervention. Traditional integration approaches, such as narrative integration and data transformation, are often insufficient in this regard. Narrative integration can become unwieldy when applied to large volumes of data, making it difficult for researchers to identify overarching patterns – thus obscuring rather than clarifying the findings (Fetters et al., 2013). Data transformation, which involves converting one form of data into another (e.g. quantifying qualitative codes or narrativising numerical results), may oversimplify the data and diminish the richness and nuance that qualitative findings contribute to the evaluation (Fetters et al., 2013).

These limitations underscore the need for more robust and systematic approaches to integration in programme evaluation. Joint displays offer a promising alternative by visually aligning qualitative and quantitative data in a way that reveals patterns, convergence, divergence, and contextual relationships. This approach supports interpretive synthesis across diverse data types and stakeholder perspectives while preserving the depth and complexity of the original data (Fetters & Tajima, 2022; Johnson et al., 2019; Peters & Fàbregues, 2024). In evaluations involving multiple data sets, joint displays provide a practical means for researchers to draw meaningful meta-inferences without sacrificing methodological rigour or analytic depth.

Our Methodological Contribution: the Adapted ePIP Framework

In response to the challenge of integrating data from different stages of research, multiple data sets, and stakeholders, we developed an Adapted ePIP framework for analysing multiple data sets in programme evaluation studies. This framework presents a novel methodological contribution that extends the utility of joint display integration for more complex mixed methods designs. The Adapted ePIP provides a structured and transparent approach for synthesising large, heterogeneous data sets and supports the development of meta-themes and meta-inferences. This approach retains the core strengths of PIP and ePIP, such as their visual logic and systematic integration process, while addressing prior concerns regarding limited scalability, over-reliance on researcher judgment, and a lack of clarity in managing more than three data sets. To address these issues, the Adapted ePIP introduces expandable joint displays and additional integration stages, which support the development and refinement of meta-themes across a larger number of data sources while maintaining coherence and transparency. By outlining both the enhancements and the conditions under which the Adapted ePIP is most suitable, this paper contributes to refining the joint display methodology in complex mixed methods research.

We begin by providing an overview of the SLP education programme evaluation. Next, we outline the conceptual foundations of the Adapted ePIP framework and describe its development. Finally, we explain how the framework was applied to integrate our data and discuss its utility and potential for broader application in similarly complex mixed methods studies.

The Adapted ePIP Framework in the Context of an SLP Education Programme Evaluation

Background to the Research Project

This article utilises Barber’s (2025) doctoral research as the application example through which the Adapted ePIP framework was developed and implemented. The research employed programme evaluation to explore the efficacy and potential impact of an inquiry-based learning approach in the clinical education of SLP students. Building on this methodological foundation, social constructivism played a crucial role in shaping the integration of mixed methods research throughout the study. This epistemological stance, which emphasises that knowledge is co-constructed through social, cultural, and contextual factors, required us to adopt a mixed methods approach to capture the complex, multidimensional nature of the research question. The integration of qualitative and quantitative data was essential because each approach offered complementary insights into the research problem. Quantitative data provided objective measures of students’ critical thinking skills and self-reflection, while qualitative data provided the subjective experiences of both SLP students and clinical educators. This mixed methods approach reflected our epistemological commitment to understanding diverse, context-specific experiences and the interplay of these factors within the programme.

Integrating mixed methods in this study was particularly valuable for addressing the inherent complexity of programme evaluation. As Creswell and Plano Clark (2018) and Bamberger (2012) suggest, a mixed methods design systematically combines multiple evaluation frameworks and tools to produce a more comprehensive understanding of the phenomenon. For example, integrating qualitative insights with quantitative data allowed us to explore how clinical educators implemented pedagogy and how students experienced its impact, while also considering the larger contextual and relational dynamics at play.

Ultimately, our understanding of complexity, informed by both social constructivism and complexity theory, reinforced the need to integrate mixed methods throughout the study. By embracing complexity, we recognised that the interactions between individual components of the programme, such as student experiences, clinical educator practices, and the programme context, were not linear and could lead to both intended and unintended outcomes. This perspective aligned with the idea that the whole is greater than the sum of its parts (Grant et al., 2023; Plsek & Greenhalgh, 2001; Thompson et al., 2016). Integrating multiple data sets in each stage of the programme evaluation enabled us to gain a holistic understanding of the process, outcomes, and factors influencing the SLP education programme. This integration, rooted in our philosophical and theoretical framework, allowed us to fully capture the complexity of the programme and its impact on students, educators, and the clinical environment.

Application of Mixed Methods Research in Our Current Study

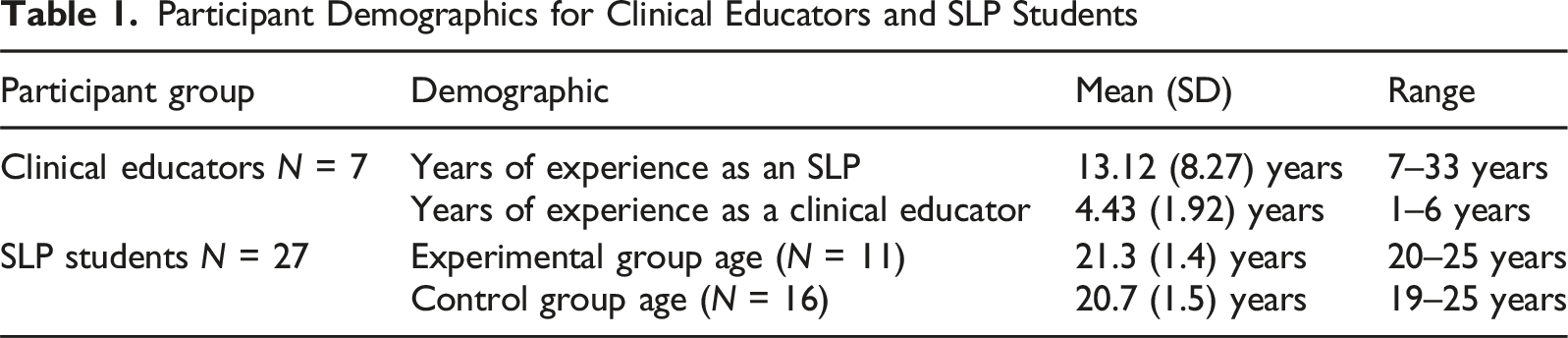

Participant Demographics for Clinical Educators and SLP Students

We collected qualitative and quantitative data from both participant groups before, during, and after the implementation of inquiry-based learning. Although data were collected sequentially at multiple stages, integration was conducted only at the end of the programme evaluation.

In our study, a serendipitous division occurred among student participants due to varying levels of participation by clinical educators. Students whose clinical educators chose to participate in the research formed the experimental group, while those whose educators did not participate formed the control group. Initially, we had not planned for such groupings due to practical and ethical challenges.

The Nine Data Sets Collected Over the Year from Both Groups of Participants that Needed to be Integrated

Note. CE refers to clinical educator; Stu refers to students.

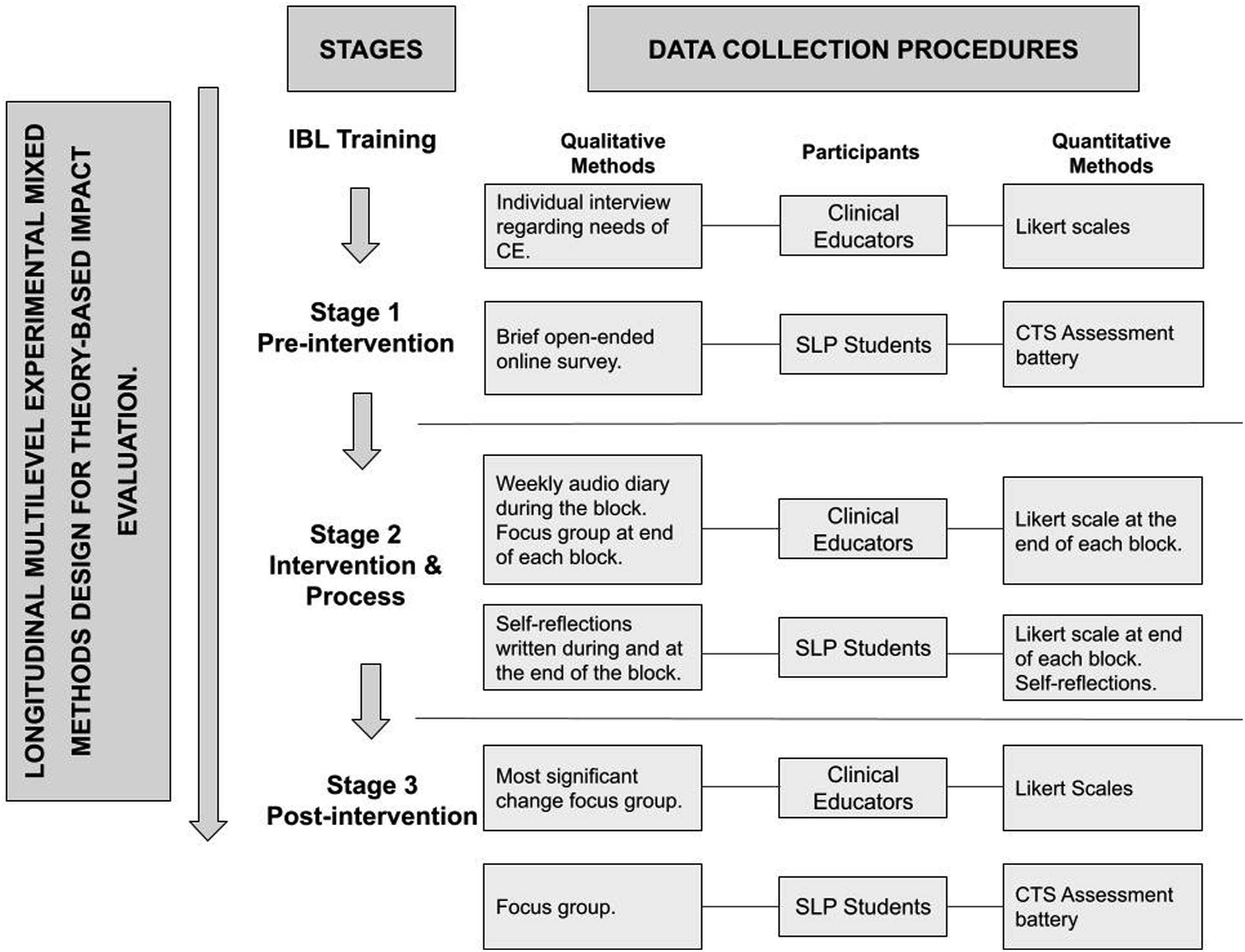

Figure 3 represents the longitudinal multilevel experimental mixed methods design for data collection and analysis in this study. We collected qualitative and quantitative data from both participant groups before, during, and after the implementation of inquiry-based learning. Although data collection was conducted sequentially, the findings from earlier stages were not used to inform subsequent stages. Instead, integration of all data sets took place only at the end of the programme evaluation. Our approach to data integration was shaped by our constructivist ontology, which recognises the co-construction of meaning through participants’ experiences and the researchers’ interpretations. This ontological stance, together with our overarching research question and the design of the study, informed our decision to integrate qualitative and quantitative data at the end of the evaluation. The sequential nature of data collection and the diversity of data types were best suited to a post hoc integration process, which allowed for a holistic synthesis of the findings using joint displays. This approach enabled us to preserve the complexity of the real-world intervention while identifying meta-themes and meta-inferences from multiple data sets.

Figure 3. Longitudinal multilevel experimental mixed methods data collection and analysis in Barber (2025)

Adaptation of the ePIP and the Two Levels of Integration within Our Programme Evaluation

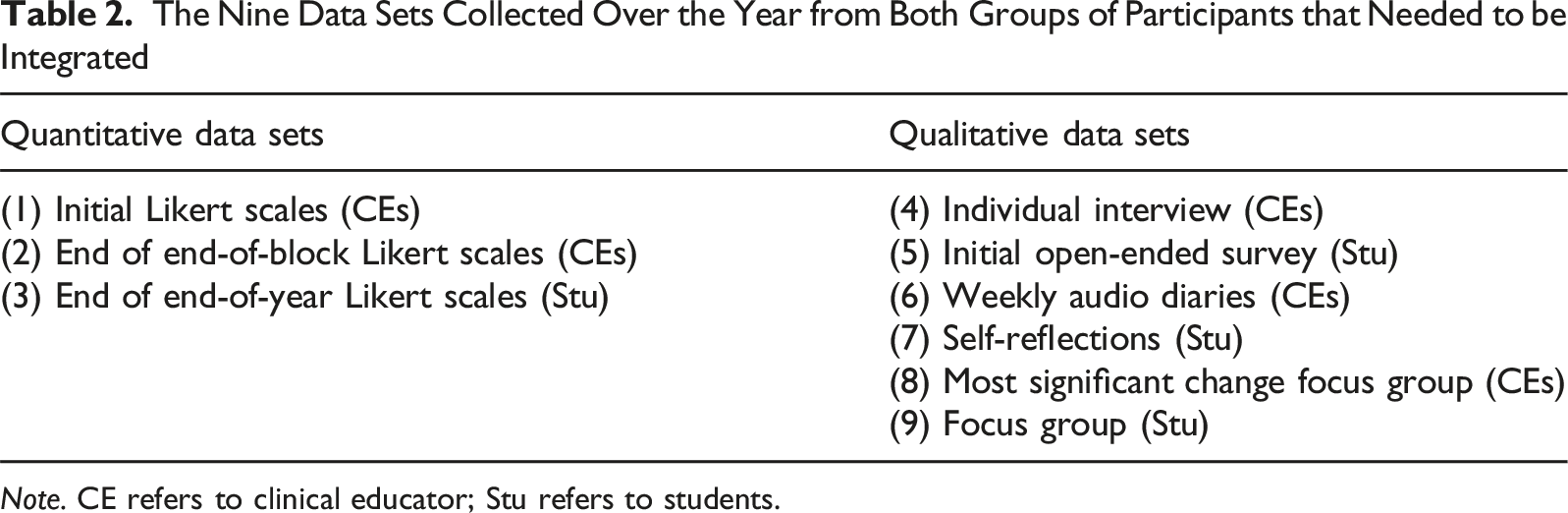

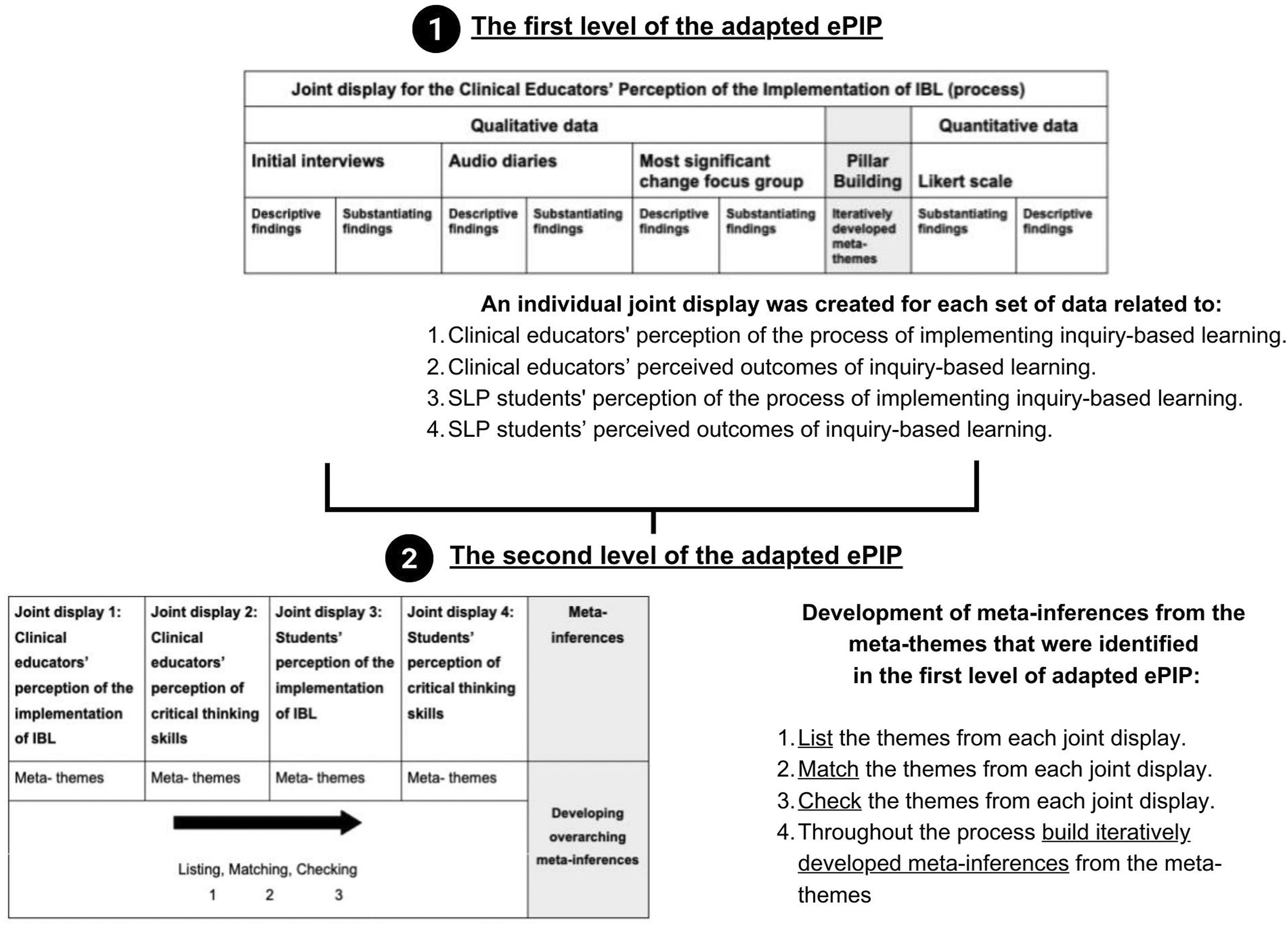

Integrating the results from a longitudinal multilevel experimental mixed methods design strategy is complex and messy. The integration of these results, therefore, needed to be considered systematically. There is improved legitimation in mixed methods if a systematic, well-defined method of integrating the data is used (Creswell & Plano Clark, 2018). Thus, to systematically and transparently integrate more than three sources of data collected from the different participant groups and during different stages of the research to answer a central research question, we adapted the ePIP and named it the Adapted ePIP. The Adapted ePIP is a method of integrating multiple qualitative and quantitative data sets. To integrate the multiple data sets, the Adapted ePIP consists of two levels of integration. Figure 4 provides a visual illustration of the Adapted ePIP and the two levels of integration using our programme evaluation as an example.

Figure 4. Adapted ePIP, with its two levels of integration

Given the large volume of data we collected, it was impractical to develop meta-themes and meta-inferences within a single joint display, as one would do with PIP and ePIP. Instead, we conducted a first level of integration to separately analyse the process and outcomes of inquiry-based learning before progressing to Level 2 analysis. This facilitated the development of meta-inferences by linking processes with outcomes.

Level 1 of the Adapted ePIP: Initial Integration of Data Sources

In Level 1, we created four joint displays, each integrating qualitative and quantitative data according to a particular participant group and whether the focus was on the process or outcomes of implementing inquiry-based learning: (1) Clinical educators’ perceptions of the process of implementing inquiry-based learning. (2) Clinical educators’ perceived outcomes of inquiry-based learning. (3) SLP students’ perceptions of the process of implementing inquiry-based learning. (4) SLP students’ perceived outcomes of inquiry-based learning.

Each joint display followed the structure illustrated in Figure 4. To facilitate integration, we modified the original ePIP format by introducing additional columns to accommodate multiple data sources.

We departed from the original PIP visualisation, which places pillar building in the middle of the joint display. Instead, we organised the data according to whether they were qualitative or quantitative, which made it easier to visualise patterns, make inferences regarding the data, and then derive meta-inferences in Level 2. The number of columns varied depending on the number of data sets being integrated. To manage the data complexity, we prioritised matching qualitative or quantitative data first, depending on the dominant data type in each joint display.
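One way to picture the expandable layout is as a function that derives the column order from whichever data sets feed a given joint display. The sketch below is illustrative and rests on assumptions that the text does not specify: ‘dominant’ is taken to mean the data type contributing more data sets (ties resolved in favour of qualitative data), the meta-theme column is placed after the data columns, and the data set names are hypothetical placeholders.

```python
from collections import Counter
from typing import List, Tuple


def column_order(data_sets: List[Tuple[str, str]]) -> List[str]:
    """Group columns by data type, placing the dominant type first.

    Assumption: 'dominant' means the type contributing more data sets,
    with ties resolved in favour of qualitative data.
    """
    counts = Counter(kind for _, kind in data_sets)
    dominant = "qual" if counts["qual"] >= counts["quant"] else "quant"
    first = [name for name, kind in data_sets if kind == dominant]
    second = [name for name, kind in data_sets if kind != dominant]
    # Assumption: the meta-theme (pillar) column sits after the data columns.
    return first + second + ["meta_theme"]


# Hypothetical data set names; the display widens as data sets are added.
two_sets = column_order([("quant_A", "quant"), ("qual_A", "qual")])
three_sets = column_order(
    [("quant_A", "quant"), ("qual_A", "qual"), ("quant_B", "quant")]
)
print(two_sets)
print(three_sets)
```

Deriving the layout from the inputs, rather than fixing it in advance, is what allows the same procedure to serve joint displays with different numbers of data sets.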

We retained the term ‘pillar building’ from Johnson et al. (2019). However, we adapted our approach by incorporating the Extended Pillar Integration Process (ePIP) developed by Gauly et al. (2024) to enhance the process of matching and checking. The original Pillar Integration Process (PIP) focuses on sequentially listing, matching, checking, and then constructing meta-themes in the final stage. However, Gauly et al. (2024) introduced refinements that allowed us to develop and refine meta-themes during the integration process rather than at the end of the integration process or at the end of the research. Specifically, we followed their approach by: (1) Developing and refining meta-themes rather than waiting until the pillar-building stage, ensuring that themes were identified organically throughout the integration process. (2) Adjusting the joint display structure to facilitate clearer visualisation of connections between qualitative and quantitative findings, which helped maintain coherence across multiple data sources. (3) Conducting systematic peer debriefing during the checking stage to enhance the validity of our integration and refine emerging meta-themes. (4) Ensuring transparency in data synthesis by refining how findings were listed and matched.

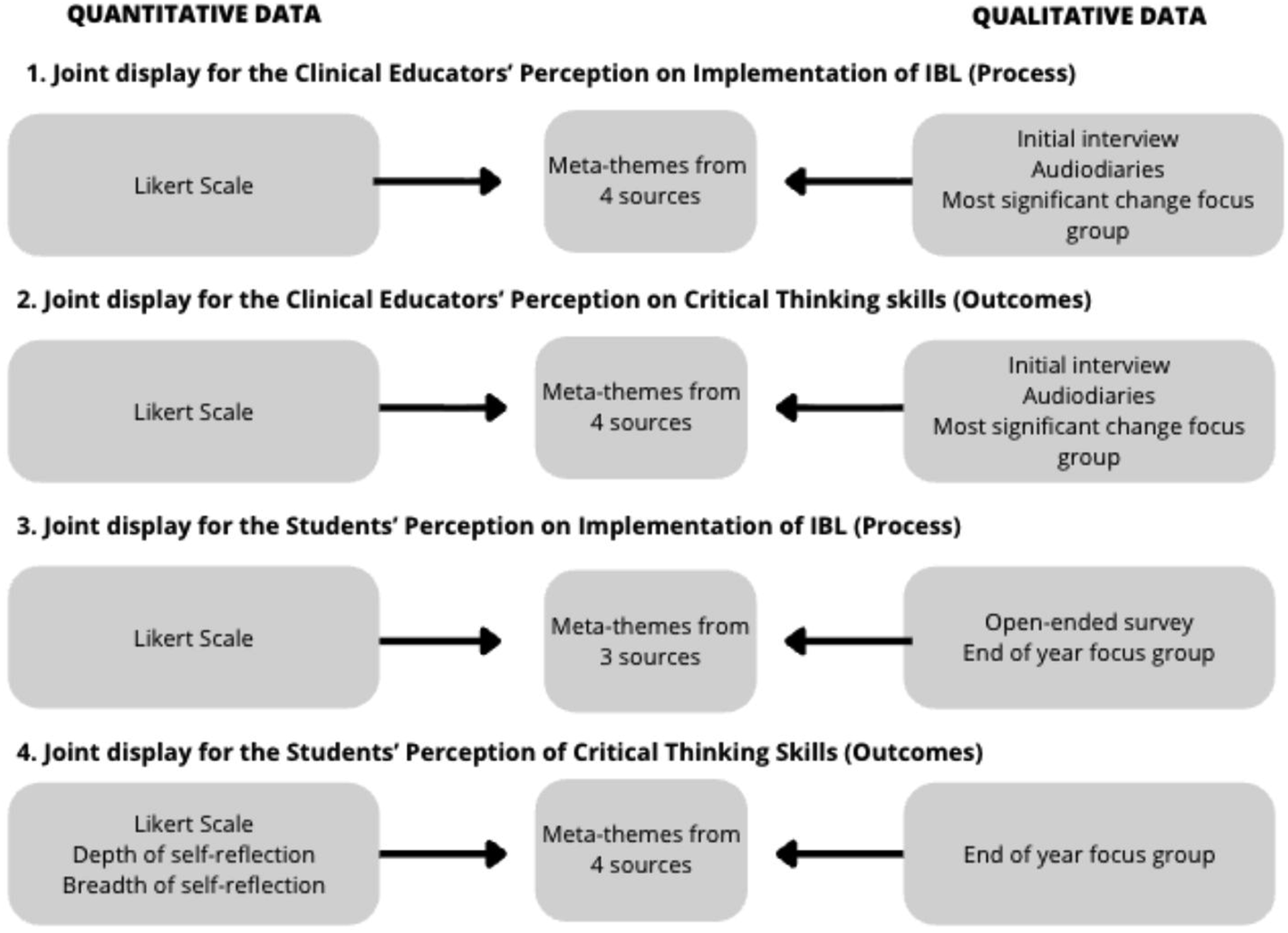

Through this initial integration process, we observed that separating themes by participant group did not provide a cohesive understanding of the programme evaluation. The joint displays from Level 1 resulted in overlapping and repetitive patterns, highlighting the need for a second level of integration to answer the central research question holistically. Figure 5 shows the qualitative and quantitative data sets that we integrated according to the participant group and whether we were investigating the process or outcomes of the programme. Although this separation helped to manage the large volume of data, it did not yet establish clear connections between processes and outcomes.

Figure 5. Integrated data sources to create a joint display at each level of the programme evaluation in Barber (2025)

Level 2 of the Adapted ePIP: Development of Meta-Inferences

Recognising the need for a holistic understanding, we developed Level 2 to integrate the meta-themes identified in Level 1 into meta-inferences. This second level of integration allowed us to merge themes across participant groups and stages of the research. To achieve this, we: (1) Created a new joint display that listed and matched meta-themes from the four Level 1 joint displays. (2) Conducted the listing, matching, and checking process to develop and refine the meta-inferences. (3) Discussed emerging meta-inferences with the other authors to refine integration.

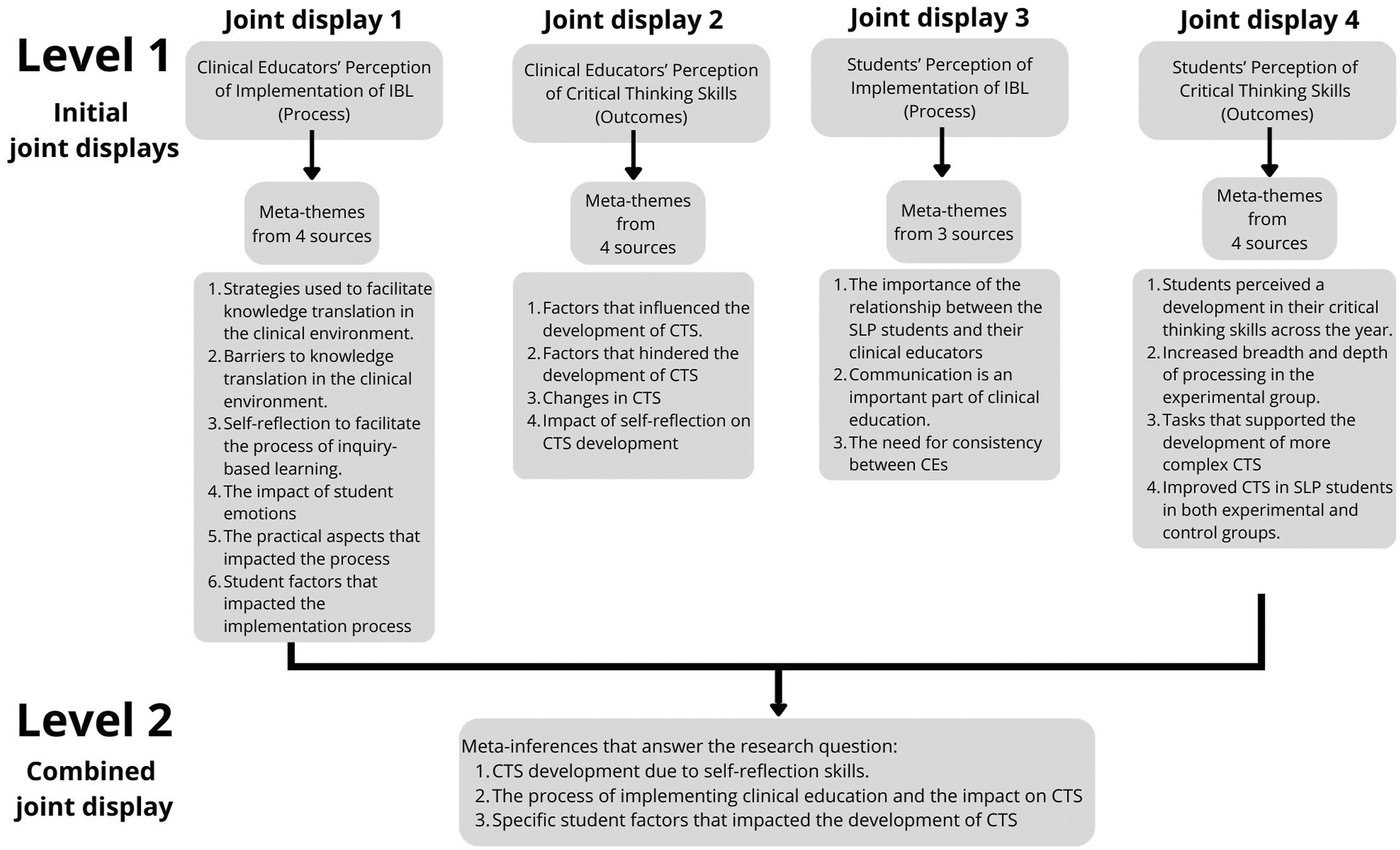

This second level of integration involved a new joint display, which is presented in Figure 6. This display systematically mapped the process-related meta-themes (from Level 1) to corresponding outcome-related meta-themes. Through refinement, we merged overlapping themes and identified causal relationships, ultimately leading to the development of meta-inferences that holistically addressed the research question.

Figure 6. Adapted ePIP with its two levels of integration for the programme evaluation

For example, the meta-themes of ‘self-reflection to facilitate the process of inquiry-based learning’ from joint display 1, ‘the impact of self-reflection on critical thinking skills development’ and ‘changes in critical thinking skills’ from joint display 2, and ‘increased breadth and depth of processing in the experimental group’ from joint display 4 led to the development of the meta-inference of ‘critical thinking skills development was due to self-reflection skills’.

Similarly, the meta-themes of ‘strategies used to facilitate knowledge’ from joint display 1, ‘factors that influenced the development of critical thinking skills’ and ‘factors that hindered the development of critical thinking skills’ from joint display 2, ‘communication is an important part of clinical education’ and ‘the need for consistency between CEs’ from joint display 3, and ‘tasks that supported the development of more complex CTS’ from joint display 4 led to the development of the meta-inference ‘the process of implementing clinical education and the impact on CTS’. We developed and refined these meta-inferences throughout the matching phase, rather than only at its end.
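For readers who keep their joint displays in digital form, the listing and matching described above can be recorded as a simple data structure. The following is a minimal illustrative sketch in Python, not the procedure used in the study: the names `MetaTheme`, `LEVEL_2`, `displays_covered`, and `check` are hypothetical, and the `check` function is only a bookkeeping aid that shows which Level 1 joint displays contribute to each meta-inference. The interpretive work of matching and checking remains with the research team.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MetaTheme:
    joint_display: int  # Level 1 joint display (1-4) that produced the meta-theme
    label: str


# Listing and matching: each Level 2 meta-inference is paired with the
# Level 1 meta-themes reported in the text as contributing to it.
LEVEL_2 = {
    "critical thinking skills development was due to self-reflection skills": [
        MetaTheme(1, "self-reflection to facilitate the process of inquiry-based learning"),
        MetaTheme(2, "the impact of self-reflection on critical thinking skills development"),
        MetaTheme(2, "changes in critical thinking skills"),
        MetaTheme(4, "increased breadth and depth of processing in the experimental group"),
    ],
    "the process of implementing clinical education and the impact on CTS": [
        MetaTheme(1, "strategies used to facilitate knowledge"),
        MetaTheme(2, "factors that influenced the development of critical thinking skills"),
        MetaTheme(2, "factors that hindered the development of critical thinking skills"),
        MetaTheme(3, "communication is an important part of clinical education"),
        MetaTheme(3, "the need for consistency between CEs"),
        MetaTheme(4, "tasks that supported the development of more complex CTS"),
    ],
}


def displays_covered(meta_themes):
    """Return the sorted Level 1 joint displays represented among the meta-themes."""
    return sorted({mt.joint_display for mt in meta_themes})


def check(level_2, all_displays=(1, 2, 3, 4)):
    """Hypothetical checking aid: list the joint displays absent from each meta-inference."""
    return {
        inference: [d for d in all_displays if d not in displays_covered(themes)]
        for inference, themes in level_2.items()
    }


if __name__ == "__main__":
    for inference, missing in check(LEVEL_2).items():
        print(inference, "| joint displays not represented:", missing)
```

A record of this kind makes it easy to see, for instance, that the first meta-inference draws on joint displays 1, 2, and 4 but not 3, which can prompt a return to the original data sets to confirm that the absence is substantive rather than an oversight.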

Rationale for the Two-Level Approach

The two-level integration approach was essential for handling the complexity of the data while ensuring clarity in linking processes with outcomes. Separating the process and outcomes in Level 1 allowed for a detailed investigation of each component before synthesising findings in Level 2. This approach facilitated the systematic integration of multiple data sets, ensuring a clear and structured process for analysing diverse sources of data. It provided clarity in theme development by allowing for structured matching and checking, ensuring that patterns and relationships were identified consistently. Additionally, it enabled a holistic understanding of the research findings by linking meta-themes to form meta-inferences, offering deeper insights into both the processes and outcomes of the programme evaluation.

Ultimately, Level 2 integration allowed us to determine whether the programme was beneficial and to understand the underlying reasons why or why not, thereby answering our central research question. Figure 6 provides a visual illustration of the Adapted ePIP with the meta-themes that we integrated to form the meta-inferences. Due to the large amount of data we collected, it was simpler to navigate and integrate the meta-themes from Level 1 to form meta-inferences in Level 2. However, we consistently returned to the original data sets during analysis and reporting to ensure that the contextual richness and nuance of the data were preserved. This approach safeguarded against the loss of depth often associated with data transformation methods, which risk oversimplifying complex qualitative insights.

Discussion

The Adapted ePIP framework provides a structured approach to integrating multiple qualitative and quantitative data sources in programme evaluation. This research aimed to assess the impact of inquiry-based learning on the critical thinking skills of SLP students while accounting for the complexity of real-world implementation. To achieve this, we required a method that could systematically and transparently integrate a large number of data sets to answer the research aim. The Adapted ePIP facilitated this integration by allowing us to build pillars that connect different data sources while ensuring that both the processes and outcomes of the programme evaluation were captured. This discussion explores how the framework was applied, the challenges of integrating multiple data sets, and the ways in which we developed meta-themes and meta-inferences to enhance the legitimacy and comprehensiveness of the findings.

The Potential of the Adapted ePIP Framework for Integrating More than Three Data Sources to Determine the Processes and Outcomes of Programme Evaluation

Ensuring the legitimation of results is essential for research integrity in mixed methods research, which requires a systematic and transparent approach to integrating qualitative and quantitative data (Creswell & Plano Clark, 2018). In this research study, which investigated the impact of inquiry-based learning on the critical thinking skills of SLP students, we required a method to integrate multiple qualitative and quantitative data sets to answer the main research aim. This paper presents a real-world, complex example of a study involving multiple data sets. Using the Adapted ePIP assists the researcher in integrating the multiple data sets whilst incorporating real-world complexity.

When analysing the data collected for each participant group and stage for either the process or the outcomes associated with implementing inquiry-based learning, we built numerous meta-themes. We initially decided to build the meta-themes according to the participant groups and the stage of the research, as there was a large amount of data that we needed to integrate. There were three or four data sets per group, as highlighted previously in Figure 5.

We found that developing the Adapted ePIP with a second level of integration of the initial meta-themes allowed us to identify and consolidate the meta-themes into meta-inferences that were essential to understanding the process and outcomes of the programme evaluation. The researcher needs to understand both the nuances and the data as a whole to draw conclusions about the impact of the programme they are implementing. As much as researchers want to understand the process and outcomes of programme evaluation, it is also important to look at the two aspects holistically and integrate them to understand how the process and the outcomes occur (Gertler et al., 2016). In addition, researchers need to be able to identify how the programme is working, why it is working, and what additional factors (intended and unintended) are affecting the process of implementation and the outcomes (Haji et al., 2013; Skivington et al., 2021).

To support quality assurance during this process, we used structured peer debriefing. Throughout the analysis, the first author met regularly with three experienced qualitative researchers who independently reviewed the evolving meta-themes, integration tables, and analytic decisions. These discussions helped interrogate assumptions, refine interpretations, and ensure that the integration process aligned with methodological principles of transparency and trustworthiness. Peer debriefing therefore strengthened the credibility of the Adapted ePIP by providing external oversight and confirming that meta-themes and meta-inferences were grounded in the data rather than researcher bias.

Legitimation of the Results from the Joint Displays Created for the SLP Education Programme Evaluation

The Adapted ePIP framework supports legitimation in mixed methods research by ensuring the validity of integration and addressing potential biases when combining qualitative and quantitative data. Legitimation in mixed methods refers to the extent to which findings from different methodological approaches are meaningfully combined and interpreted while maintaining research integrity (Onwuegbuzie et al., 2011). Onwuegbuzie et al. (2011) conceptualise legitimation as a continuous and dynamic process rather than as a discrete step occurring at a single stage of research. To align with this perspective, we adapted the ePIP into two levels of integration, ensuring that the research process met the standards of multiple legitimation types as outlined in their framework.

Addressing Legitimation through the Adapted ePIP

The two-level approach of the Adapted ePIP strengthened weakness minimisation legitimation, which refers to the extent to which the limitations of one method (qualitative or quantitative) are compensated for by the strengths of the other (Onwuegbuzie et al., 2011). In Level 1, we structured integration around participant groups and stages of the research to ensure that we carefully analysed the process- and outcome-related data before integration. This systematic structuring helped mitigate potential biases that might arise from overemphasising one methodological approach over another.

Inside-outside legitimation refers to the extent to which both insider (emic) and outsider (etic) perspectives are appropriately represented in the research (Onwuegbuzie et al., 2011). We addressed this by integrating researcher-driven interpretations and participant perspectives into the pillar-building process. By structuring the integration of multiple qualitative and quantitative data sources in Level 2, we ensured that meta-themes reflected both the experiences of participants and broader theoretical interpretations, enhancing the depth and applicability of our findings.

Paradigmatic mixing legitimation refers to the extent to which qualitative and quantitative paradigms are coherently combined in a mixed methods study while maintaining their underlying philosophical and methodological integrity (Onwuegbuzie et al., 2011). This type of legitimation ensures that the integration of data from different methodological traditions does not compromise their respective epistemological foundations. Instead, it facilitates a meaningful synthesis that aligns with the study’s overall research objectives. The Adapted ePIP upheld paradigmatic mixing legitimation, ensuring philosophical coherence in how we integrated qualitative and quantitative data. We achieved this legitimation by adapting the joint display format to distinguish qualitative and quantitative findings while allowing for their systematic comparison and integration. Through the development of meta-inferences, we established a coherent and structured way of integrating different methodological perspectives without compromising the integrity of either paradigm.

Ensuring Valid Meta-Inferences

The Adapted ePIP facilitated commensurability legitimation, ensuring that the integration process reflected a mixed worldview rather than simply juxtaposing qualitative and quantitative findings. By listing, matching, and checking at each level, we engaged in Gestalt switching, as described by Onwuegbuzie et al. (2011). This Gestalt switching allowed us to transition between different epistemological perspectives to develop a holistic understanding of the research problem.

Finally, the Adapted ePIP framework provided a clear pathway for achieving multiple validities legitimation, ensuring that qualitative, quantitative, and mixed methods standards of rigour were upheld throughout the research process. The structured integration in Level 2 allowed us to link processes with outcomes, facilitating the development of meta-inferences that holistically addressed the central research question.

Contribution to the Field of Mixed Methods

The Adapted ePIP offers a valuable contribution to mixed methods research by providing a structured approach to developing meta-themes and meta-inferences from multiple qualitative and quantitative data sources. This method is particularly beneficial in longitudinal multilevel experimental mixed methods designs, where researchers must synthesise large and complex data sets across participant groups and research stages.

A key contribution of the Adapted ePIP is its ability to integrate more than three data sources, addressing a limitation of the original ePIP developed by Gauly et al. (2024). The introduction of two levels of integration ensures that meta-themes are not just identified within isolated data sets but are further synthesised into meta-inferences that provide a holistic understanding of the research question. This approach enhances legitimation in mixed methods research by ensuring weakness minimisation, inside-outside legitimation, and paradigmatic mixing (Onwuegbuzie et al., 2011). The refinement of meta-themes across different participant groups and research stages facilitates commensurability legitimation, ensuring that the integration process captures both participant perspectives and broader theoretical interpretations.

In this paper, we have provided a practical example of how the Adapted ePIP supports the development of meta-themes and meta-inferences in a healthcare education programme evaluation. Unlike the PIP or ePIP, which primarily identify themes at an individual data set level, the Adapted ePIP framework allows researchers to link meta-themes across multiple data sets systematically. This structured approach ensures that findings are not just methodologically rigorous but also applicable to real-world programme improvements. Appendix A provides a template for researchers to use when implementing the Adapted ePIP. Furthermore, this research contributes to the broader mixed methods literature by demonstrating how meta-inferences can be systematically developed from diverse data sources. The Adapted ePIP provides a structured framework that ensures meta-themes evolve from meaningful patterns rather than being arbitrarily derived. By aligning with legitimation principles in mixed methods research, this approach upholds methodological coherence and epistemological transparency, making it replicable for researchers conducting complex programme evaluations.

This study highlights the Adapted ePIP as a valuable tool for mixed methods researchers seeking to synthesise complex data into meta-inferences. By demonstrating how meta-themes from different data sets can be meaningfully integrated, this research provides possible support for the Adapted ePIP as a data integration strategy in mixed methods research.

Limitations of the Adapted ePIP Framework

The Adapted ePIP framework has only been used for this SLP research study, which is based on complexity theory and pragmatism framed within theory-based impact programme evaluation. These are specific theoretical underpinnings of research that influence how the system is perceived, as well as its outcomes and processes (Long et al., 2018). Therefore, researchers need to be careful when considering whether to use the Adapted ePIP framework to integrate their mixed methods data sets and whether it aligns with their theoretical assumptions. We also used the framework specifically for programme evaluation, which other studies may not be conducting.

The Adapted ePIP was also useful in our programme evaluation as it answered a central question regarding the process and outcomes of implementing inquiry-based learning. However, researchers may only be able to use this integration technique if they have a central research question and multiple qualitative and quantitative data sets that need integration.

Conclusion

A programme evaluation in response to a central research question with multiple data sets and real-world complexity requires a transparent and systematic method to integrate information. Expanding upon the ePIP framework to provide an Adapted ePIP framework proves advantageous when researchers encounter diverse data sets, leading to the identification of numerous themes that interconnect and offer nuanced insights into programme evaluation. Additionally, it facilitates the integration of meta-themes to ascertain the meta-inferences across the programme evaluation. This approach can assist researchers with the holistic integration of mixed methods results for healthcare education programme evaluation with multiple data sets and incorporate real-world complexity.

This paper demonstrates how the Adapted ePIP framework can be used to integrate information so that researchers can evaluate both the processes and outcomes of a programme. The focus of programme evaluation extends beyond determining whether a programme works, aiming to understand why and how it works, as well as what else occurs incidentally due to its implementation (Haji et al., 2013; Skivington et al., 2021). Despite its significance, programme evaluation does not always provide specific instructions on how to determine why or how a programme functions.

Building on our ontological and epistemological foundations, the integration of mixed methods through the Adapted ePIP framework aligns with the study’s commitment to understanding complexity. Social constructivism emphasises that knowledge is co-constructed through social, cultural, and contextual factors, necessitating the use of mixed methods to capture the multidimensional nature of programme evaluation. By systematically integrating qualitative and quantitative data, the Adapted ePIP framework facilitates a comprehensive exploration of both the intended and unintended consequences of the programme. Using this framework aligns with complexity theory, which underscores that interactions among programme components, such as student experiences, clinical educator practices, and the broader programme context, are dynamic and non-linear (Grant et al., 2023; Plsek & Greenhalgh, 2001; Thompson et al., 2016).

The Adapted ePIP framework offers a valuable integration method for identifying the outcomes and processes of programme evaluation. Determining these aspects involves understanding how the programme is functioning, why it is effective, and what additional impacts emerge. Applying this approach to multiple sources of qualitative and quantitative data reinforces its potential in healthcare education research. Further studies should explore its use in different types of studies to assess its broader applicability and validity.

Supplemental Material

Supplemental Material for Unlocking the Potential of an Adapted Extended Pillar Integration Process: Investigating Its Role in Programme Evaluation of Clinical Education in Speech-Language Pathology by Nancy Barber, Jennifer Watermeyer, Joanne Neille, and Kim Coutts in Journal of Mixed Methods Research.

Footnotes

Acknowledgements

The authors thank the students and clinical educators who participated in the programme evaluation study and generously contributed their time, reflections, and insights.

Ethical Considerations

Ethical approval for this study was granted by the University of the Witwatersrand Human Research Ethics Committee (Non-Medical) H21/11/06.

Consent to Participate

All participants were informed about the purpose and procedures of the study and provided written informed consent. Participation was voluntary, and all data were anonymised to protect confidentiality.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data Availability Statement

The data that support the findings of this study are not publicly available due to ethical and confidentiality restrictions. De-identified excerpts may be made available by the corresponding author upon reasonable request and contingent on institutional ethical approval.

Supplemental Material

Supplemental material for this article is available online.