Abstract

Mixed methods research in developing countries has been increasing since the turn of the century. Given this, there is a need to consolidate insights for future researchers. This article contributes to the methodological literature by exploring how cultural factors and logistical challenges in developing contexts interplay with mixed methods research design and implementation. Insights are based on the author’s experience of using mixed methods in six projects across three African and three Caribbean countries. Three lessons are provided to aid researchers using mixed methods in developing countries. First, cultural factors call for more reflexivity. Second, adopting a pragmatic research paradigm is necessary. Third, the research process should be iterative and adaptive.

International development research has conventionally been underpinned by a positivist approach, often focusing on quantitative measurement of concepts, large surveys, and statistical techniques to establish relationships (Krishna, 2004; Luong, 2015). Recently, there has been a trend toward using mixed methods (Ngulube & Ngulube, 2015), with notable examples like the Q-Squared initiative (Shaffer, 2013).

Despite the increasing methodological popularity, mixed methods research still occupies a small share of work published on some developing regions (Alatinga & Williams, 2019). Moreover, few have written on the operationalization of mixed methods in developing countries (Alatinga & Williams, 2019; Bamberger et al., 2010; K. Jones, 2017; Luong, 2015; Taghipoorreyneh & de Run, 2020; Teye, 2012).

The objective of this article is to expand this area of knowledge by drawing on insights from research experience in six developing countries. These are three Caribbean countries (Barbados, Grenada, and Trinidad and Tobago, all small-island developing states) and three African countries: Ethiopia, a large low-income East African country, and Liberia and Sierra Leone, which are both small, low-income, post-conflict West African nations (United Nations, 2020).

This article contributes to the methodological literature by exploring how cultural factors and logistical challenges in developing contexts interplay with mixed methods research design and its operationalization. Understanding the role of cultural factors in mixed methods research is particularly important to social scientists, as social interactions shape and are shaped by the research process (May & Perry, 2010). The collection, analysis, and interpretation of data are thus often difficult to disentangle from the researchers themselves (Coghlan & Brydon-Miller, 2014). From the author’s research experience, examples of mixed methods across a range of research projects and contexts are discussed. In so doing, the value of adopting an integrative approach is demonstrated, and recommendations are provided to enhance the practice of mixed methods research in developing countries. The article is a useful resource for academics, doctoral students, international development agencies, and others engaged in policy research.

The term developing country is routinely used in the article. The classification has been contested, with some questioning its precision and general usefulness (Khokhar & Serajuddin, 2015). Nevertheless, it is still widely used in international development practice, as most African, Asian, Pacific, Middle Eastern, Latin American, and Caribbean countries are collectively grouped as “developing.” For example, both Barbados and Trinidad and Tobago are “high-income countries” according to the World Bank’s (2020) classification, but are categorized as “developing countries” by the United Nations (2020) and as “emerging markets and developing countries” by the International Monetary Fund (2020). In practice, most of these countries benefit from Official Development Assistance (ODA), are primary targets of the Sustainable Development Goals, and have special borrowing facilities like the International Bank for Reconstruction and Development (IBRD). Though contentious, “developing country” is used here as it is the most practical term to collectively group the six countries being discussed, which are otherwise very different in economic and political structure, size, and geographical location.

The article proceeds as follows. The next section discusses mixed methods as a methodological approach, and its application in developing countries. The third section outlines the case studies and describes how mixed methods have been applied by the author. This is followed by a discussion on how mixed methods can enhance the research process drawing on lessons from the case studies. Next, lessons related to logistical challenges and cultural factors are presented. Thereafter, three key contributions to the field of mixed methods are put forward, followed by conclusions and areas for further enquiry.

Mixed Methods Research and the Developing Country Context

Developing countries provide very different research contexts to those of developed countries. In many developing countries, the research infrastructure is less advanced, and projects are often led by foreign researchers who need to adapt to local systems (Luong, 2015). There are also questions around informed consent, and the ethical complexities involved in conducting research in these contexts (Benatar, 2002; Ford et al., 2009). Others have written on challenges related to sampling, language, access, and safety (Mathee et al., 2010). Though informative, these articles have often been based on single method studies and/or used a single case study, and as such, do not generate sufficient lessons specific to the conduct of mixed methods in a variety of developing contexts. This matters as mixed methods research can be seen as a “third major research approach or research paradigm,” distinct from single-method projects which use either quantitative or qualitative methods (Johnson et al., 2007, p. 112). It is sufficiently distinct in its philosophy and operationalization (Tashakkori & Teddlie, 2010).

Under a mixed methods design, quantitative and qualitative methods can be combined either simultaneously (QUAL + QUAN) or sequentially (QUAL→QUAN or QUAN→QUAL), oftentimes with either the QUAN or QUAL aspect occupying a relatively dominant role as dictated by the research question (Morse, 2010; Morse & Niehaus, 2009). A more deductive research question which explores relations and/or causation lends itself to a more dominant quantitative component, while a more inductive, descriptive, and/or interpretative research question may have a more dominant qualitative part (Morse, 2010). The choice of a more quantitative or qualitative strategy is closely related to the epistemological approach adopted, for example, positivist (largely quantitative) versus constructivist (largely qualitative) (Morse, 2010). Such contrasting philosophical approaches have different assumptions and practices. Combining them in mixed methods leads to what Mahoney and Goertz (2006) described as the amalgamation of two cultures or traditions. The coming together of these two paradigms has been an area of discussion in the mixed methods literature (Howes, 2017; Johnson & Gray, 2010; Maxwell & Mittapalli, 2010; Morgan, 2007). Some have posited that realism as a stance facilitates effective collaboration between qualitative and quantitative researchers (Maxwell & Mittapalli, 2010), while others have argued for pragmatism as a research paradigm (Biesta, 2010; Feilzer, 2010). More recent contributions have called for expanding philosophical perspectives to include non-North American and European views like the Chinese Yinyang philosophy (Fetters & Molina-Azorin, 2019) and the indigenous Māori approach (Martel et al., 2021).

It follows that the integration of quantitative and qualitative methods not only poses unique challenges but also new possibilities (Almalki, 2016; Teye, 2012; Zhou & Wu, 2020). It is precisely these challenges and opportunities which generate an interest in the study of mixed methods (Fetters & Molina-Azorin, 2017).

Contributions by Fetters and Molina-Azorin (2019), Martel et al. (2021), and Reviere (2001) indicated that research conducted in non-European/North American contexts should force us to consider the importance of non-European/North American research philosophies. Arguably, different cultural norms and social organizations in developing countries influence the types of research questions asked, how data can be meaningfully collected, and the interpretation of the data conditional on the researcher’s positionality. As such, an assessment of mixed methods research in developing countries warrants its own area of enquiry.

Previous methodological contributions have advanced our understanding. Teye (2012) provided evidence of the importance of positionality in mixed methods social science research in developing countries as it relates to power relations, and showed how researchers need to quickly adapt to changing power relations. Alatinga and Williams (2019) showed how an exploratory sequential mixed methods research design can usefully influence health policy, while Jones (2017) explored the role spatial representation can play in mixed methods analyses. Taghipoorreyneh and de Run (2020) documented how mixed methods can enhance the reliability and validity of instruments which measure cultural values. In more operational pieces, Bamberger et al. (2010) discussed contemporaneous debates on using mixed methods in monitoring and evaluation projects in developing countries, and Luong (2015) provided insights on managing the research process.

There remains scope to contribute to the study of mixed methods research in developing countries. Several articles focus on one country only (Alatinga & Williams, 2019; Luong, 2015; Taghipoorreyneh & de Run, 2020; Teye, 2012) or are limited to West Africa (Jones, 2017). Bamberger et al.’s (2010) emphasis on evaluation studies excludes a large share of other social science research in international development. This article contributes to the discourse by drawing on a range of case studies, thus broadening understanding of the practical operationalization and benefits of mixed methods in various developing contexts. It also deepens our understanding of the importance of cultural and logistical factors in shaping mixed methods design. It has been argued that the local context should be considered in the epistemological approach (Fetters & Molina-Azorin, 2019; Martel et al., 2021). This article puts forward evidence that culture shapes the design and implementation of mixed methods research, and offers suggestions on how this can be accounted for.

Research Case Studies

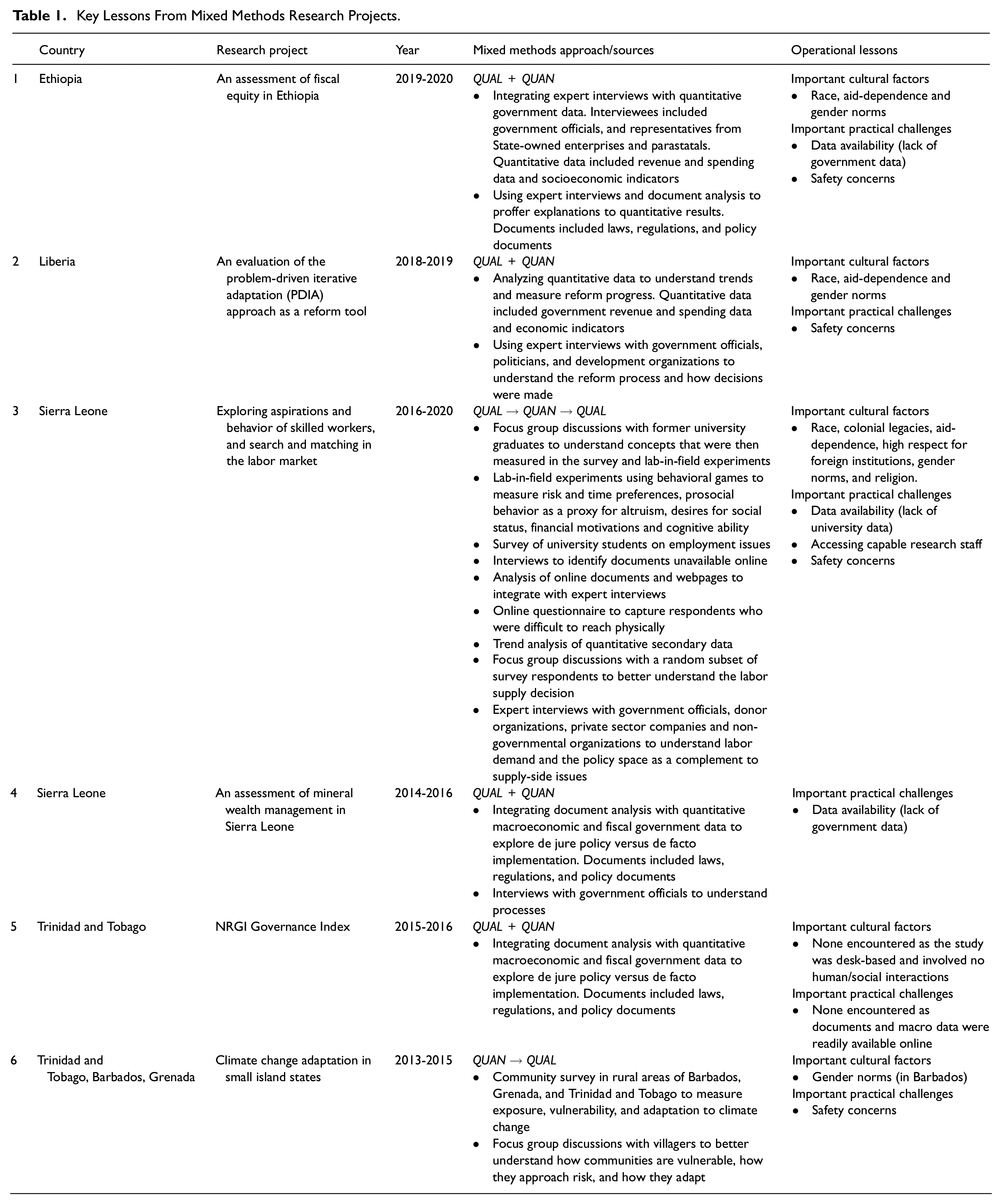

The discussion draws on six mixed methods research projects across six countries that the author was involved in between 2015 and 2020. Research projects range from expert interviews combined with secondary quantitative analysis to community-based research. They include different “types of mixed method research” based on Johnson et al.’s (2007, pp. 123-124) taxonomy, and different mixing sequences as discussed in Morse (2010). Details of each project are summarized in Table 1. Each project has been assigned a number in the first column of Table 1, which is used as a reference in the succeeding discussion.

Key Lessons From Mixed Methods Research Projects.

The first project in Ethiopia assessed fiscal equity of government spending. Here, a QUAL + QUAN approach was used. Elite interviews and document analysis were the primary methods and were complemented by analysis of government revenue and spending data. Project 2 in Liberia involved an evaluation of the problem-driven iterative adaptation approach, which aims to develop state capabilities. A QUAL + QUAN approach was also used. Again, elite interviews were integrated with quantitative macro data. The third project was conducted in Sierra Leone and explored aspirations and behaviors of skilled workers. It was the most complex project and relied on an equal share of quantitative and qualitative methods, with a sequential mix that saw qualitative data collection on either side of quantitative methods (QUAL→QUAN→QUAL). On the quantitative side, lab-in-field experiments, a field survey, and secondary data from the university were collected and analyzed. This was integrated with interviews, focus group discussions, and document analysis.

The fourth was a project on mineral wealth management in Sierra Leone, and employed a complementary QUAL + QUAN approach to explore de jure policy versus de facto implementation. The fifth project similarly utilized a complementary QUAL + QUAN approach to compare de jure policy versus de facto implementation, this time looking at resource governance in Trinidad and Tobago. The sixth explored climate vulnerability and was multi-sited (including Barbados, Grenada, and Trinidad and Tobago). A sequential mix was applied (QUAN → QUAL), using surveys followed by focus group discussions to better understand results.

Using Mixed Methods to Improve Data Quality and Results

Previous authors have noted that mixed methods in developing countries can help overcome budget and time constraints (Bamberger et al., 2010); explore complexities, contradictions, and messiness of human behavior (Teye, 2012); and improve reliability and validity of instruments that measure cultural values (Taghipoorreyneh & de Run, 2020). Lessons from the six projects highlight four benefits. These include (a) improving construct validity, (b) understanding processes, (c) explaining regression results, and (d) addressing different parts of the same research question. The first two provide new insights, while the last two corroborate existing evidence.

Improving Construct Validity

Project 3 (Table 1) aimed to understand how university graduates sort themselves across different occupations based on risk preferences, time preferences, prosocial behavior, desires for social status, financial motivations, and cognitive ability. These constructs are all difficult to measure, as they have multiple features and a universally agreed definition is lacking. This creates issues related to construct validity or “the degree to which a construct under investigation is accurately measured and interpreted” (Sullivan, 2009, p. 107).

In Project 3, latent traits were elicited using lab-in-field experiments which used multiple price lists (MPLs). MPLs are common tools for measuring risk preferences or attitudes toward risk in economics. They comprise a lottery of options that respondents choose between (Falk et al., 2016). Based on the choice, the researcher can ascertain the extent to which respondents are willing to trade off risks against rewards. A common belief in quantitative labor economics is that more risk-tolerant job seekers self-select into self-employment, as this is often associated with income uncertainty and the absence of a formal employment contract (Falco, 2014; Skriabikova et al., 2014).
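To make the logic of an MPL concrete, the following is a minimal sketch of how a respondent's choices might be translated into a broad risk attitude. It is not the instrument used in Project 3: the sure amounts, the 50/50 lottery, the function names, and the three-way classification are all assumptions made for illustration.

```python
import math
from typing import List, Optional, Tuple

# Hypothetical price list: each row offers a sure amount against the same
# 50/50 lottery paying 100 or 0. These values are illustrative only.
SAFE_AMOUNTS = [10, 20, 30, 40, 50, 60, 70]
LOTTERY_EXPECTED_VALUE = 0.5 * 100 + 0.5 * 0


def certainty_equivalent_bounds(
    choices: List[str], safe_amounts: List[float]
) -> Optional[Tuple[float, float]]:
    """Bracket the sure amount at which a respondent switches to the safe option.

    `choices` has one entry per row ("lottery" or "safe"), ordered by rising
    sure amount. Returns None when the pattern is not a single switch from
    lottery to safe, which is treated here as an inconsistent response.
    """
    if len(choices) != len(safe_amounts):
        raise ValueError("one choice is needed per row of the price list")
    n_lottery = choices.count("lottery")
    single_switch = ["lottery"] * n_lottery + ["safe"] * (len(choices) - n_lottery)
    if choices != single_switch:
        return None
    lower = safe_amounts[n_lottery - 1] if n_lottery > 0 else 0.0
    upper = safe_amounts[n_lottery] if n_lottery < len(choices) else math.inf
    return lower, upper


def classify_risk_attitude(choices: List[str]) -> str:
    """Compare the bracketed certainty equivalent with the lottery's expected value."""
    bounds = certainty_equivalent_bounds(choices, SAFE_AMOUNTS)
    if bounds is None:
        return "inconsistent"
    lower, upper = bounds
    if upper < LOTTERY_EXPECTED_VALUE:
        return "risk-averse"
    if lower >= LOTTERY_EXPECTED_VALUE:
        return "risk-seeking"
    return "approximately risk-neutral"
```

A respondent who accepts a sure amount well below the lottery's expected value is classed as risk-averse under these assumptions. Note that the sketch captures only the trade-off built into the list; it cannot reveal context-specific dimensions of risk that respondents themselves consider relevant.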

Findings by the author from Project 3 in Sierra Leone challenge this belief, as respondents rated self-employment as the most stable option. Focus group discussions after the survey proved useful for elucidating the paradoxical result. According to one graduate: “The private sector company is run by someone else. They can die, then you’re out of a job. If new ownership takes over, you have to reapply. Even though private salaries are higher, it’s less stable. For the government, you need to be connected to get a job. When the government changes, they sack you. I would go for self-employment.”

Understanding of employment risks among Sierra Leonean graduates is therefore not limited to the presence (formal employment) or absence (informal employment) of a contract, as understood by economists like Falco (2014) and Skriabikova et al. (2014), but is also concerned with the degree of personal control over job security. The private sector in Sierra Leone collapsed during the decade-long civil war between 1991 and 2002, and contracted during the Ebola outbreak of 2014 to 2016. This has led several graduates to question the stability of private sector employment. Moreover, a culture of state corruption in the country modifies the probability of securing public sector employment and introduces a new type of risk (Harris, 2020). Taken together, context and culture matter for fully tapping into difficult-to-measure constructs.

As such, although the survey used established tools, was piloted before the full roll-out, and included three hypothetical “attitudes to risk” questions to test for reliability (all recommended practices in quantitative methods; Wolf et al., 2016), a key dimension of risk preferences in the Sierra Leonean labor market was missing. This was only uncovered because of the mixed methods approach.

The pilot and qualitative inputs into the design of the questionnaire and lab-in-field experiments were useful in confirming some aspects of the risk preferences construct and highlighting new ones which were then measured in the quantitative stage. However, there was still a need for follow-up qualitative research to expose other dimensions of risk preferences, and better explain the results. This example provides justification for a QUAL→QUAN→QUAL approach. The second round of QUAL does not feed back into measuring the construct in the same study but is useful for providing more nuanced interpretations of findings and can feed into improved measurement in future studies.

In sum, constructs may be multifaceted and context-dependent, implying that measurement tools developed in Western contexts may not have high construct validity in some developing countries. This relates to Reviere’s (2001) call for a more “Afrocentric research methodology.” Analogous to Taghipoorreyneh and de Run’s (2020) claim that mixed methods can improve validity of cultural measures, evidence here suggests similar benefits for latent traits.

Understanding Processes

Both the Ethiopian and Liberian projects investigated government spending and public financial management reforms. With such projects, quantitative data are useful for understanding trends over time but are silent on how and why decisions are made. The latter is fundamental to government policies and reform processes. Expert interviews are thus a critical source of understanding processes. From a policy standpoint, measuring if a government is spending more (or less) on health or education, for example, is only useful if budget allocation decisions are explored and understood as this drives the reform process.

In more developed regions, allocation decisions and budgetary processes are often documented and publicly available. In contrast, in developing countries where reform needs are arguably greater, from the author’s experience, expert interviews are the most consistent source of these data, as physical records and management information systems are often underdeveloped (Avgerou, 2008; Heeks, 2002).

Furthermore, governance research assesses what countries have legislated compared with what is implemented. The distinction is important as there is often a disconnect between legislation being enacted versus being accepted and enforced locally (Grindle, 2004). These types of research questions therefore require different methods to address two sides of the same coin. In the fourth and fifth projects, document analysis was used to understand governance de jure by reviewing laws, regulations, and policy documents. This was then compared with government fiscal and macroeconomic data to understand governance de facto. For example, if legislation states that extractive wealth from oil and gas should be saved based on a prescribed fiscal rule, this should be compared with quantitative data from the government’s fiscal accounts to determine if the fiscal rule and associated processes are being adhered to.
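The de jure versus de facto comparison can be illustrated with a short sketch. The rule below (save at least a fixed share of resource revenue each year), the data structure, and all figures are hypothetical; they stand in for whatever rule the legislation under study prescribes and are not drawn from Projects 4 or 5.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class FiscalYear:
    year: int
    resource_revenue: float  # de facto: revenue recorded in the fiscal accounts
    amount_saved: float      # de facto: amount actually transferred to savings


def check_savings_rule(records: List[FiscalYear], required_share: float) -> Dict[int, bool]:
    """Map each year to True when savings met the legislated share of revenue.

    `required_share` encodes the de jure rule identified through document
    analysis (assumed here to be a simple fixed share).
    """
    return {
        r.year: r.amount_saved >= required_share * r.resource_revenue
        for r in records
    }


# Illustrative figures only, not data from any of the projects.
accounts = [
    FiscalYear(2016, resource_revenue=100.0, amount_saved=35.0),
    FiscalYear(2017, resource_revenue=200.0, amount_saved=40.0),
]
compliance = check_savings_rule(accounts, required_share=0.30)
```

Years flagged as non-compliant are natural starting points for follow-up interviews, since the quantitative check shows that a rule was not followed but not why.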

In the examples above, not only is a mixed methods approach advantageous but also pragmatic with respect to the research paradigm. As Feilzer (2010, p. 8) noted, the process may be less aligned with the constructivist versus positivist split, but “relates more closely to an ‘existential reality.’” In other words, the practical realities concerning availability and relevance of data sources necessary for answering the research question should guide the research approach.

Explaining Regression Results

Using qualitative methods to better explain regression results is a documented benefit of mixed methods (Almalki, 2016; Johnson et al., 2007; Shaffer, 2013). Evidence here adds further strength to this claim.

In Project 3, focus group discussions were used to better understand survey results on labor supply decisions. The survey data usefully partitioned respondents by their preferred employment sector. The rationale for these choices was further explored using focus group discussions. Similarly, in Project 6, survey data were used to measure community vulnerability. Thereafter, focus group discussions were conducted to better understand how communities are vulnerable, how they approach risks, and how they adapt to climate change.

In both cases, narrative information was used to complement the regression results, explain unexpected findings, and identify ways in which the regression model could be re-specified in future research. Most substantively, in Project 3, the qualitative data from focus group discussions gave rise to a new theory of labor market frictions that could not have been extracted from the survey results.

Addressing Different Parts of the Same Research Question

As mentioned, Project 3 was concerned with the labor supply decision of university graduates. Though this is a supply-side question, labor supply decisions are often linked to opportunities available in the labor market. To complement the survey and focus group discussions which addressed labor supply, interviews were conducted with various employers to understand the dynamics of labor demand, the government to understand the institutional and regulatory setting, and donor organizations to understand the international development policy agenda. Collectively quantitative and qualitative methods were used to paint a more complete picture of the labor market. Similar gains from an integrative approach have been highlighted by Luong (2015), Shaffer (2013), and Teye (2012).

Similarly, de jure and de facto questions are conceptually different (Projects 4 and 5), and thus require different methods not only to understand reform processes as discussed above but also to address two fundamentally different questions: what has been legislated (de jure) versus what has been implemented (de facto).

Cultural Factors and Mixed Methods Research

Previous studies have noted the importance of specific cultural contexts and non-North American/European perspectives in broadening the view of mixed methods (Fetters & Molina-Azorin, 2019; Martel et al., 2021) and research in developing countries generally (Reviere, 2001). The discussion here emphasizes how culture can influence both the design and operationalization of mixed methods in developing countries. Cultural factors addressed relate to race, colonial legacies and aid-dependence, gender norms, and religion.

According to Staudt (1991, p. 35): “Understanding culture is a starting point for learning the meaning of development, the values that guide people’s actions, and the behavior of administrators.” It is also the starting point of effectively conducting research in foreign countries as the cultural context affects positionality. As used here, positionality refers to “the stance or positioning of the researcher in relation to the social and political contexts of the study—the community, the organization or the participant group” (Coghlan & Brydon-Miller, 2014, p. 2).

Race, Colonial Legacies, and Aid-Dependence

When White researchers study minority groups or race relations in developed countries, race and positionality are important for access and data quality (Bourke, 2014). In developing contexts, race and foreigner status often merge, and further interact with colonial legacies. Foreigners often face issues with access and consequently rely on local research staff and/or partner organizations (Mathee et al., 2010). Such partner organizations are often non-governmental organizations and have existing relationships with research participants. By affiliation, foreign researchers inherit the existing power dynamics (often one of aid-giver vs. aid-recipient), and the perceptions that may be associated with the partner organization. Access must therefore be considered alongside positionality.

Sierra Leone is a former British colony, having gained independence in 1961. Given this former-colony status and interactions with primarily White donors, being “white” and “foreign” matters for data collection. For example, given the power dynamics between the former colonizer and former colonized, having a “white foreigner” present during data collection was shown to affect behavior in Sierra Leone, as respondents behaved less prosocially and appeared more needy (Cilliers et al., 2015). In the same context, the author’s positionality introduced a new power dynamic. First, being of African descent and a citizen of a former colony, the author was often perceived as someone “willing to tell their side of the story,” according to one Sierra Leonean respondent. This unique position allowed high levels of access to top officials and rich data on sensitive topics like corruption and patronage, in addition to collaboration with institutions for quantitative data. There were seldom issues with access as described by other researchers (Mathee et al., 2010). Second, access was further enriched by being affiliated with a top foreign university, as with Teye (2012). The experience was similar in Liberia and Ethiopia, which are also aid-receiving countries.

The author’s unique position as “foreign sympathizer” from a former colony, affiliated with a reputable foreign institution, afforded privileged access to both quantitative and qualitative data sources. However, this did not extend to local research assistants. In Ethiopia (Project 1), local university-educated research staff were unable to secure meetings with government officials until the author was present. In Sierra Leone (Project 3), the author needed to accompany enumerators for the first day of the survey to add legitimacy to the process. Access to data can therefore be contingent on the principal investigator being physically present, which in turn has logistical implications.

The culture of aid-dependence also affects data collection when considering respondents’ expectations. For instance, in Sierra Leone and Liberia, government officials and citizens look to donors and other development partners for financial and in-kind aid. It was therefore extremely important to manage expectations of research participants in relation to what the research results could achieve, as well as expectations related to reciprocity. For Project 3 in Sierra Leone, it was vital to communicate that participation in the research would not necessarily lead to a change in government or donor policy, nor would it secure the respondent employment. In Liberia, it was equally important to communicate that a favorable assessment by the research team would not necessarily translate into aid flows to the government. Managing these expectations and relationships was crucial not only to the ongoing project but also to future engagement with respondents and the country more generally.

Gender Norms

The role of positionality in mixed methods research as it relates to power dynamics when interacting with state officials has been highlighted (Teye, 2012). Similar arguments can be made here concerning gender based on research conducted in Ethiopia, Liberia, and Sierra Leone, which are all largely patriarchal societies. In all three cases, elite interviews were conducted (Projects 1-3 in Table 1), where the author (as a young woman) interviewed management-level staff from the government, private companies, non-governmental organizations, and international donor organizations. Respondents were often male, older, and well established in their roles. Many were surprised to meet a young female researcher after preliminary e-mail correspondence. During semistructured interviews, extra effort was needed to respectfully lead the discussion.

Gender and positionality have primarily been discussed with respect to qualitative research (Bourke, 2014; Coghlan & Brydon-Miller, 2014; Teye, 2012). Based on the author’s experience, there are also implications for quantitative methods, specifically for sample selection and representativeness. In the third project in Sierra Leone, data were collected using SurveyCTO, which allows for real-time uploading and analysis of data. Enumerators approached groups of students at the university campus and randomly selected respondents. After the first week of data collection, it was evident that female students were underrepresented in the survey. This was likely because the four enumerators were all male and unconsciously sampled male students that they could relate to, and/or because female students were less likely to speak to male enumerators. To correct for this, a conscious effort was made to recruit female respondents in the second week of the survey, which affected the random sampling approach.
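The kind of check that real-time data upload makes possible can be sketched as follows. This is an illustration only: it does not use any SurveyCTO interface, and the record layout, the expected share of female respondents, and the tolerance are assumptions rather than values from Project 3.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple


def female_share_by_enumerator(
    submissions: Iterable[Tuple[str, str]]
) -> Dict[str, float]:
    """Share of female respondents per enumerator.

    Each submission is a hypothetical (enumerator_id, respondent_gender) pair
    taken from the uploaded survey records.
    """
    totals: Dict[str, int] = defaultdict(int)
    females: Dict[str, int] = defaultdict(int)
    for enumerator, gender in submissions:
        totals[enumerator] += 1
        if gender == "female":
            females[enumerator] += 1
    return {e: females[e] / totals[e] for e in totals}


def flag_imbalance(
    shares: Dict[str, float], expected_share: float, tolerance: float
) -> List[str]:
    """List enumerators whose female share falls below the expected share minus a tolerance."""
    return sorted(e for e, s in shares.items() if s < expected_share - tolerance)
```

Running such a check daily rather than weekly would surface an imbalance earlier, although it identifies only that the sample is skewed, not whether enumerator behavior or respondent reluctance is the cause.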

The author attempted to avoid an all-male team, but this was affected by the pool of candidates applying for the enumerator position. From applications received, 10 were short-listed, of which only two were female. The selection process comprised an oral interview, written test, and mock survey administration exercise. The top four candidates emerging were all male. This was likely driven by local gender dynamics which affect the selection pool. In Sierra Leone, fewer females progress to university education, women are marginally less likely to participate in the labor force, and those who do participate are on average lower skilled than their male counterparts (Statistics Sierra Leone, 2015, pp. 5-9). Structural imbalances between groups (gender or other) that exist in the country influence access to local research staff, which in turn affects access to respondents and data collection.

Barbados (Project 6) provides another example of the importance of gender when sampling. Here, a systematic sampling approach was used, with a set interval between households sampled on a given street. There was a burglary in the community during the week preceding the survey. As a result, households were less willing to open the door to male enumerators. In both Sierra Leone and Barbados, the gender of the enumerator in part determined the survey sample, and consequently the data collected. This in turn had implications for follow-up focus group discussions, which utilized a subset of the survey sample as part of a sequential mixed methods design.
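Systematic sampling with a set interval can be sketched in a few lines. The household labels, the interval, and the use of a random starting point are assumptions for illustration; the source does not state the interval or how the first household was chosen in Project 6.

```python
import random
from typing import List, Optional, Sequence


def systematic_sample(
    households: Sequence[str],
    interval: int,
    rng: Optional[random.Random] = None,
) -> List[str]:
    """Select every `interval`-th household along a street.

    The first household is drawn at random from the first `interval`
    positions, after which the walk proceeds at a fixed step.
    """
    if interval < 1:
        raise ValueError("interval must be a positive integer")
    rng = rng or random.Random()
    start = rng.randrange(interval)  # random start within the first interval
    return list(households[start::interval])


# Hypothetical street of 20 households, sampling every fifth one.
street = [f"house_{i}" for i in range(1, 21)]
sample = systematic_sample(street, interval=5, rng=random.Random(0))
```

The design is only as good as the response it obtains: if households at the selected positions decline to open the door, as happened with male enumerators, the realized sample departs from the planned one even though the selection rule was followed.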

Religion

A third important cultural factor is religion. Five incentivized lab-in-field experiments were used in Sierra Leone (Project 3) to measure risk preferences, time preferences, prosocial behavior as a proxy for altruism (two games), and cognitive ability. For example, to measure prosocial behavior, instead of asking how charitable respondents think they are or how much they would donate hypothetically, respondents were given a monetary endowment and asked to make a donation. These types of methods are common in behavioral economics (Viceisza, 2016). To manage the overall budget and simultaneously incentivize truthful behavior, all five games were played but payment was given for one randomly selected game. This was determined by tossing a fair die. Though useful for randomness and adhering to budget constraints, introducing the die was perceived by a very small number of Islamic students as gambling. These students declined to participate in the survey. Their voices were then absent from focus group discussions, which drew on a subset of the survey sample.
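The payment rule (play all games, pay for one chosen at random) can be sketched as below. How the six faces of the die were mapped to the five games is not described, so the re-roll on a six is an assumption, as are the game labels and function name.

```python
import random
from typing import Optional, Sequence

# Labels are illustrative shorthand for the five games described above.
GAMES = ["risk", "time", "prosocial_1", "prosocial_2", "cognitive"]


def select_paid_game(
    games: Sequence[str], rng: Optional[random.Random] = None
) -> str:
    """Pick the one game that is paid out by simulating a fair six-sided die.

    Faces beyond the number of games are re-rolled (an assumed convention),
    which keeps every game equally likely to be selected.
    """
    if not 1 <= len(games) <= 6:
        raise ValueError("a single die can select among at most six games")
    rng = rng or random.Random()
    while True:
        face = rng.randint(1, 6)  # inclusive bounds: faces 1 through 6
        if face <= len(games):
            return games[face - 1]
```

Because respondents do not know in advance which game will be paid, each game carries a real incentive while only one payment is made. The same uniform selection could in principle be produced by a device that respondents do not associate with gambling, although whether that would have changed participation is not something the evidence here can establish.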

Practical Challenges in Developing Countries

This section examines some practical challenges with operationalizing mixed methods in developing countries. Previous studies have highlighted challenges related to sampling, language, access, and safety (Luong, 2015; Mathee et al., 2010). Four challenges are discussed here, along with the solutions adopted. These include data availability, accessing capable research staff, and safety and ethics.

Data Availability

As previously noted, data management systems are often lacking in many developing countries (Avgerou, 2008; Heeks, 2002). In extreme cases like Sierra Leone, the decade-long civil war saw several documents and records being destroyed (Gberie, 2005). To understand resource wealth management in Project 4 (in Sierra Leone), policy documents and quantitative data were needed to track policy and fiscal changes over time. In the absence of these data, the research question had to be modified to explore the evolution of processes based on interviews with government officials. Similarly, in Ethiopia, the original aim was to track sector-level spending data. Given that the data were unavailable, the question was modified to focus on understanding allocation decisions of state-owned enterprises, as these data could be collected using interviews. In both cases, a pragmatic approach was taken.

Such data limitations were not unique to government studies. For the university survey in Project 3, the first step of the sampling process was to acquire a sampling frame of university finalists at Fourah Bay College, University of Sierra Leone. However, such a list was unavailable from the university. Lack of centralized information systems at higher education institutions in Sierra Leone was highlighted in 2013 (World Bank, 2013, p. 25), and continues to hold true. The university possessed only partial registration lists, as many students do not officially register because of costly registration fees. Students attend lectures and write examinations all the same. After final examinations, students settle outstanding fees to access their transcripts and degree certificates. Taking the list of officially registered students would have underestimated the population and biased the sample toward students who were financially better off or on fully-funded scholarships. Again, in adopting a pragmatic research paradigm, qualitative techniques were employed.

Course representatives, who act as liaisons between staff and students, were referred by departmental heads and were interviewed. Interview data produced an estimate of students enrolled on each degree program. After the first week of the survey, another estimate was taken from three randomly selected students from each degree program. This innovation of using interviews to approximate the true population was the best option available at the time, and implied updating the sequencing mix from the initially planned QUAN→QUAL to a QUAL→QUAN→QUAL for this component of the project. A year after the survey, estimates were compared with official university graduation records and post-sampling weights applied.
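One common way to construct such weights is to divide each degree program's share of the population by its share of the sample. The Python sketch below illustrates this under stated assumptions: the program names and counts are hypothetical, and the exact weighting scheme used in Project 3 is not specified in the text.

```python
def post_sampling_weights(population_counts, sample_counts):
    """Return one weight per program: population share / sample share.

    Programs with no sampled respondents are skipped, since no weight
    can be applied to them.
    """
    total_population = sum(population_counts.values())
    total_sample = sum(sample_counts.values())
    weights = {}
    for program, n_sampled in sample_counts.items():
        if n_sampled == 0:
            continue
        population_share = population_counts[program] / total_population
        sample_share = n_sampled / total_sample
        weights[program] = population_share / sample_share
    return weights


# Hypothetical counts: official graduation records versus completed surveys.
graduates = {"economics": 200, "law": 100, "engineering": 100}
respondents = {"economics": 40, "law": 40, "engineering": 20}
weights = post_sampling_weights(graduates, respondents)
```

In this example, law students are over-represented among respondents and receive a weight below one, while the other two programs receive weights above one. The weighted respondent counts still sum to the original sample size.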

Accessing Capable Research Staff

Capable research staff (such as research assistants and translators) and reliable logistical staff (fixers and drivers) are critical to developing country research. The first group can often be sourced from local research companies (if the project budget allows). For cheaper options, the main local university is a useful source. If university students are used, from experience in Sierra Leone, Barbados, Grenada, and Trinidad and Tobago, it is best to recruit students enrolled in a field closely linked to the research and to screen candidates. This increases staff motivation and quality of data collection.

Oftentimes, a fixer may be required to negotiate access to institutions, set up interviews and/or facilitate meetings before the roll-out of a large survey. The ideal candidate is well connected, speaks the local language and that of the lead researcher, and can easily navigate local bureaucracies. In highly networked societies, a fixer can facilitate large amounts of access to some groups, but simultaneously hurt access to others. They may also affect the data collected on account of associated positionality, similar to working through local organizations (Mathee et al., 2010). For example, the third project was in Sierra Leone where political allegiances matter to wider social and economic circumstances. A well-connected fixer, capable of setting up very-difficult-to-obtain meetings, was recommended to the author. However, the fixer was known to be associated with the then-opposition party. Though the project did not have an explicit political focus, this mattered for two reasons. First, the fixer would be less capable of organizing meetings with informants that sympathized with the then-governing party. Second, although the fixer would not be present at interviews, being associated with the fixer would likely affect how respondents perceived the researcher and answered questions. This was particularly important to a mixed methods design as some initial interviews informed the subsequent survey. The trade-off between access and data quality should thus be considered when engaging local research staff.

Safety and Ethics

Ethically, the researcher should ensure that no harm comes to participants or to the research and logistical staff under their supervision (Benatar, 2002; Ford et al., 2009). Conducting research in a foreign country is helped by a strong local team, though this should not create a false sense of security in contexts where crime and unrest may be a factor. For example, in Project 6, female enumerators often worked in pairs due to safety concerns. This undoubtedly increases the cost per completed questionnaire but supports the safety of enumerators. In Barbados, the increased survey costs were balanced by higher access to some households, as female respondents were more averse to opening the door to male enumerators, and more inclined to do so if a female enumerator was also present.

Contribution to the Field of Mixed Methods

Lessons from the six studies highlight the importance of cultural and practical/logistical factors to the design and operationalization of mixed methods research in developing countries. As noted, the use of mixed methods in development research has been increasing (Ngulube & Ngulube, 2015; Shaffer, 2013). Three lessons are presented here to help further advance the use of mixed methods in developing countries.

The first key lesson is that culture not only matters to the research philosophy (Fetters & Molina-Azorin, 2019; Martel et al., 2021; Reviere, 2001) but also to the types of questions that can be asked, how data can be collected, and the quality of data based on the researcher’s position within the cultural context. This calls for more reflexivity in mixed methods research—something which is commonplace in qualitative-only studies (Berger, 2015; May & Perry, 2010). Given the cultural context, researchers should therefore reflect on the following questions: (a) does my positionality and that of my research staff impact on accessing necessary data? (b) does my positionality and that of my research staff impact on data quality? (c) should the methodological approach be updated due to cultural factors? Depending on the answers to these questions, the research question, research design, and/or operational plans may need to be updated. Positionality, given the cultural context, should also factor into the analysis stage as “the worldview and background of the researcher affects the way in which he or she constructs the world” (Berger, 2015, p. 220), and thus how findings are interpreted.

The second lesson is that mixed methods is often a pragmatic approach to development research given practical constraints like data availability and also the nature of some development-oriented questions (for instance, de jure vs. de facto questions). This supports previous calls for pragmatism as a research paradigm (Biesta, 2010; Feilzer, 2010). Traditionally, the choice of a more quantitative or qualitative strategy is closely related to the epistemological approach: positivist versus constructivist, respectively (Morse, 2010; Morse & Niehaus, 2009). However, when conducting research in developing countries, researchers should look beyond this traditional dichotomy. There is a need to adopt a pragmatic philosophy, and to quickly update the research questions and research design given the context.

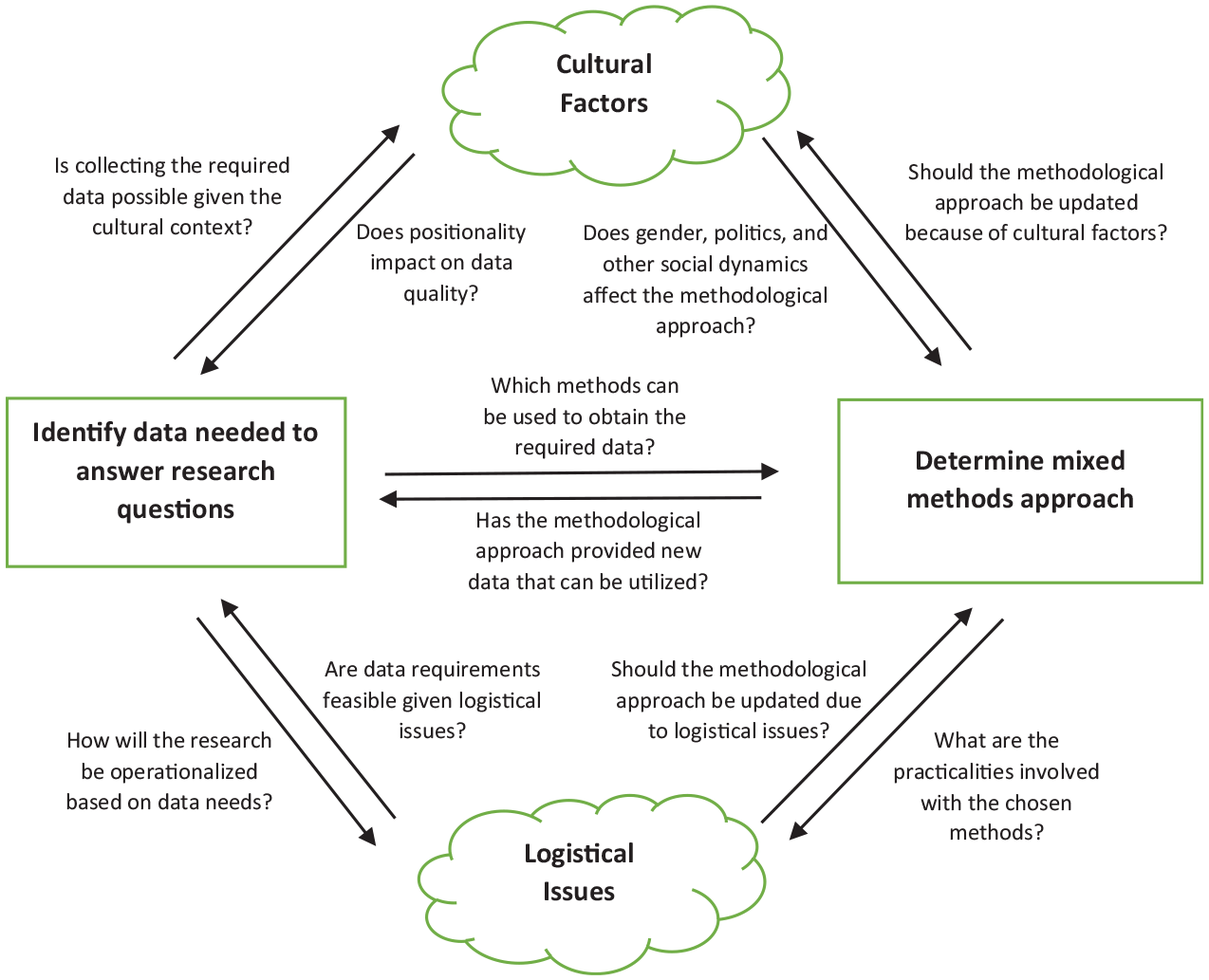

The third lesson draws on the first two and calls for an iterative research process, where the research question and data requirements, the mixed methods approach, and cultural and practical factors all shape, and are shaped by, each other. The type and quality of data that can be collected are affected by how methods can be practically operationalized and by the local culture. At the same time, the type of data needed to answer the research question has bearings on the practical implementation and financial aspects of fieldwork. It also determines how researchers, translators, and fixers approach the research context. And finally, how data can be practically collected is largely determined by social norms, culture, and the institutional setting. Such an iterative approach complements the reflexivity discussed above and requires several feedback loops, as shown in Figure 1.

Figure 1. An iterative process for researchers using mixed methods in developing countries.

Conclusions

Mixed methods in development research can help us better understand different elements of poverty, well-being, livelihoods, migration, unemployment, marginalization, gender-based violence, refugees’ experiences, vulnerability to disasters, and conflict. These are all pertinent issues currently being tackled by development scholars, policy makers, and practitioners.

As more researchers exploit the advantages of mixed methods to study these topics, they should be mindful of cultural factors and practical challenges that are unique to developing contexts. It remains that, ex ante, the choice and combinations of methods should be guided by the research question. However, researchers should be reflexive and iterative in their approach, incorporating feedback loops, which may ultimately lead to a change in the research questions, qualitative versus quantitative mix, and/or methodological sequencing.

These cultural and practical factors should be considered within the broader research cycle which starts with developing the research question, all the way through to reporting findings. This article has discussed how mixed methods can be used, the benefits of mixing, and cultural and practical factors that emerge as important. It is, however, silent on how mixed methods questions can be developed as in Plano Clark and Badiee (2010), and how findings should be combined for a diverse audience as in Bazeley (2009, 2015). Generating knowledge on how development-specific research questions can be defined, and how analytical findings from cross-disciplinary studies can be written for consumption by academics, development practitioners, and policy makers in both developed countries (which fund research) and developing countries (where research is policy relevant) remains an area for future enquiry.

Footnotes

Acknowledgements

I am grateful to all my research participants, research assistants, enumerators, and drivers who made these research projects a success. I also wish to thank my colleagues from the University of the West Indies, and those involved in the NRGI-led Sierra Leone Benchmarking Project and 2017 Resource Governance Index. A special thank you to my colleagues at Fiscus Ltd for providing interesting opportunities, which bring research and policy together. And to Alex Jones for commenting on various versions of this piece.

Declaration of Conflicting Interests

The author declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author received no financial support for the research, authorship, and/or publication of this article.