Abstract

With the increasing popularity of AI, it is critical to understand how academic research ethics are affected. Unfortunately, in Canada, the Tri-Council Policy Statement: Ethical Conduct for Research Involving Humans (TCPS2) lags far behind current technology when it comes to ethical research related to AI. We suspected that research ethics boards (REBs) at Canadian public universities are struggling to determine how the TCPS2 can or should be interpreted for AI research. To gauge these challenges, we surveyed REB Chairs/Vice-Chairs and research ethics office (REO) managers/supervisors. The survey results confirmed our suspicions. They also suggest that the lack of guidance from the Tri-Council and the Panel on Research Ethics is likely making this struggle worse. Through an analysis of the TCPS2, proposed Canadian legislation, and the survey results, we developed a series of recommendations for both the Tri-Council and REBs that may help overcome these challenges and ease the confusion.

Introduction

While academic research with or about artificial intelligence (AI) is not new, the rapid advancements in AI technology in the last few years have raised many ethical and legal concerns (Ada Lovelace Institute [ALI], 2022; Naik et al., 2022; Pazzanese, 2020; Schiff et al., 2021). Using large amounts of data scraped from the web to train popular, general-use AI models is now the norm, even though the legality of such data collection methods is ambiguous at best (Brittain, 2025; Reisner, 2025; Sellars, 2018). These legal issues are so new that few related court cases or legal proceedings have concluded and set a precedent, though several cases are currently underway (Wallace & Akinremi, 2025; Williams & Csathy, 2025).

It is not unusual for researchers to use web scraping to gather large amounts of data from the internet for their research, even if that research is not related to AI (Kempny & Brzoska, 2023; Lam et al., 2026; Supriyono et al., 2025). Simple web scraping scripts and site-specific APIs make this data gathering nearly effortless. In fact, researchers can use AI tools to write web scraping code, giving less tech-savvy researchers easy access to large amounts of internet data (Cohen & Hage-Youssef, 2025; Semeler et al., 2024). Researchers and research ethics boards (REBs) have generally considered web scraping for academic research permissible if the data does not contain personally identifiable information or if there was no reasonable expectation of privacy (Panel on Research Ethics [PRE], 2023a). However, some researchers believe these research ethics policies should be stricter (Fiesler, 2019).

In addition, research ethics policies do not provide enough detail for researchers to determine whether the policies that govern their own web scraping also apply when they use popular AI tools (such as ChatGPT or Copilot). These tools were trained on web-scraped data that likely included personally identifiable information, some of it collected where privacy was reasonably expected. The question is especially confusing because research has shown that popular AI tools can reproduce personal information in their output (Carlini, 2021; Chen et al., 2026; Naser, 2026), raising significant privacy concerns.

Legislation and regulations (in Canada and beyond) for the development and use of AI are sparse. The European Union’s AI Act (European Parliament, 2024) has passed, but will not take full effect for existing AI tools until 2027 (Future of Life Institute [FLI], 2025). The AI Bill of Rights that the Biden Administration had posted to the White House's website has since been removed (Office of Science and Technology Policy [OSTP], 2024), though some US states have passed their own AI legislation (e.g., Colorado passed the Consumer Protections for Artificial Intelligence Act in 2024 and California passed the Generative Artificial Intelligence Accountability Act in 2024).

Bill C-27 in Canada included the new Artificial Intelligence and Data Act (AIDA), but the bill died on the Order Paper when Parliament was prorogued in January 2025, ahead of the spring 2025 federal election (Canada, 2025). After his election win in April 2025, Prime Minister Mark Carney created a new cabinet position specifically related to AI, appointing Evan Solomon as the new Minister of Artificial Intelligence and Digital Innovation (Amaoui, 2025). In September 2025, Minister Solomon announced that a new AI task force was being assembled to develop an updated AI strategy for Canada, which he said would be tabled by the end of 2025 (it was not) (Karadeglija, 2025). In December 2025, Minister Solomon then announced that the updated AI strategy would not be released until sometime in 2026, along with new privacy legislation designed to protect data and children from AI (Boynton, 2025). Notably, at the same time, the Canadian federal government has earmarked almost $1 billion (over five years) for “large-scale sovereign public AI infrastructure” (Boynton, 2025), effectively building AI infrastructure before an AI strategy or legislation is in place.

While there is a lack of AI legislation and regulation, many sets of AI ethical guiding principles exist from a variety of sources (Corrêa et al., 2023; Khan et al., 2022; Schiff et al., 2021), including the Governments of Canada and Ontario, the Privacy Commissioner of Canada, the Organisation for Economic Co-operation and Development (OECD), and the National Institute of Standards and Technology (NIST). Some of these principles were written only for the use of AI by specific governments (e.g., Canada, 2024b; Ontario, 2023), while others were written by organizations as recommendations to private companies (e.g., National Institute of Standards and Technology [NIST], 2023; Organisation for Economic Co-operation and Development [OECD], n.d.; Office of the Privacy Commissioner of Canada [OPC], 2023). These principles “provide useful guidance for the development and use of AI…without including specific guidance concerning the development and use of AI in scientific research” (Resnik & Hosseini, 2025). Resnik and Hosseini note that while “the use of AI does

Resnik and Hosseini's (2025) article addresses the issue of AI and general research ethics; however, its focus is on guidance for researchers rather than guidance for REBs. Unfortunately, “REBs are not equipped…to adequately evaluate AI research ethics and require…guidelines to help them do so” (Bouhouita-Guermech et al., 2023). Ideally, to ensure consistency and transparency, these guidelines should come from an authoritative body such as the Panel on Research Ethics, rather than being developed independently by individual REBs across dozens of universities.

Overall, the use of web scraping and private-sector AI tools in academic research raises a variety of important and disparate research ethics challenges. For this study, we focused on challenges related to (information and data) privacy. To help identify and address these challenges, we conducted a study to gather the views of Canadian public university REBs regarding the use of web scraping and AI in research.

To gather these views, we conducted a survey in the fall of 2023, distributed to Canadian public universities in English and French. Through the survey, we were interested to learn:

- an estimate of the number of researchers currently conducting research using web scraping and AI, compared with the number of ethics applications the REBs received for such research;
- the implications Bill C-27 may have for the methods and policies the REBs use to evaluate ethics applications related to web scraping and AI;
- how researchers using web scraping and AI could be encouraged or educated to submit required ethics applications; and
- what type of support or guidance REBs require to better evaluate ethics applications for research that uses web scraping and AI.

Background

Incomplete Legislation in Canada

In Canada, privacy is legislated at both the federal and provincial/territorial levels. At the federal level, the Privacy Act governs how federal government institutions handle personal information, while the Personal Information Protection and Electronic Documents Act (PIPEDA), 2000, governs how private-sector organizations handle personal information in the course of commercial activities (OPC, 2014). Each province and territory in Canada also has privacy legislation for its provincial/territorial public-sector organizations (OPC, 2014). Provinces and territories can also enact privacy legislation for provincially regulated private-sector organizations, provided that legislation is substantially similar to PIPEDA (OPC, 2017). To date, only three provinces (Alberta, BC, and Quebec) have such private-sector privacy laws.

On June 16, 2022, the Digital Charter Implementation Act, 2022, or Bill C-27, was introduced in the House of Commons (Canada, 2024a). The bill comprised three acts. The Consumer Privacy Protection Act was intended to replace Part 1 of PIPEDA, while the Personal Information and Data Protection Tribunal Act and the Artificial Intelligence and Data Act (AIDA) were new acts addressing matters not covered by existing legislation. On April 24, 2023, the bill was referred to the Standing Committee on Industry and Technology, where it was debated at over 30 committee meetings, the last of which was held on September 26, 2024. Parliament was prorogued on January 6, 2025, which ended all parliamentary committee work and terminated in-progress bills, including Bill C-27.

AIDA was intended “to regulate international and interprovincial trade and commerce in [AI] systems by establishing common requirements, applicable across Canada, for the design, development and use of those systems” and “to prohibit certain conduct in relation to [AI] systems that may result in serious harm to individuals or harm to their interests” (Canada, 2022). Harm refers to “physical or psychological harm…damage to an individual's property [and/or]…economic loss to an individual” (Canada, 2022). This type of harm is not dissimilar to one of the Core Principles outlined in the Tri-Council Policy Statement: Ethical Conduct for Research Involving Humans (TCPS2)—Concern for Welfare—which states the “welfare of a person is the quality of that person's experience of life in all its aspects” including their “physical, mental, and spiritual health, as well as their physical, economic, and social circumstances” (PRE, 2023b).

AIDA would have been the first piece of legislation in Canada to specifically address the harms caused by AI, helping ensure that those developing and working with AI reduced or eliminated those harms. It also could have given the Panel on Research Ethics (PRE) and REBs a basis for developing guidelines for the ethical assessment of AI-related research projects.

Gaps in the Tri-Council Policy Statement (TCPS2)

Overview of the TCPS2 and the Panel on Research Ethics

The Terms of Reference for the PRE state that the Panel will “advise the Agencies about the ongoing development and evolution of the TCPS2” (PRE, 2016a). They also refer to a “commitment of the Agencies to keep the TCPS current and responsive to the ethical issues that arise in the course of research” (PRE, 2016a). A news release dated January 11, 2023, announcing the release of the 2022 version of the TCPS2, stated that the TCPS2 “is a living document” (PRE, 2023c).

These statements imply that the Tri-Council and the PRE understand how important it is to keep the TCPS2 up-to-date. They also imply that while the TCPS2 has been ‘officially’ updated every four years, it can (and should) be updated more often. However, updating an ‘official’ document every four years does not keep that document “current and responsive,” especially when it comes to AI.

The PRE also provides an interpretation service to “support the needs of participants, researchers, and REBs in the effective use and understanding of the TCPS” (PRE, 2016b). The most recent set of interpretations, from May 2024, does not include anything related to AI.

Researcher Knowledge of the TCPS2

It is incumbent upon REBs to share their knowledge of the TCPS2 with members of their institution. Researchers also have a responsibility to understand and abide by the TCPS2. By accepting Tri-Council funding, they attest that they will follow the TCPS2, obtain appropriate ethics clearance, and abide by research integrity policies. In addition, every institution has at least one research integrity policy that all affiliated researchers must follow, even if they do not receive Tri-Council funding.

Unfortunately, many researchers consider the research ethics process to be cumbersome, confusing, and, in some cases, a waste of time (Masso et al., 2025; Silberman & Kahn, 2011; Snooks et al., 2023). Like writing grant applications, the process can be time-consuming and frustrating. Unlike grant applications, there is no obvious benefit or positive outcome (i.e., funding) associated with a successful ethics application.

Reasonable Expectation of Privacy

Popular AI models are trained using massive amounts of data. This data largely comes from the internet, captured electronically using a variety of methods and stored on servers from which those AIs are trained. The extent of what this data includes and where it came from is not typically shared with users. While developers like OpenAI claim to have obtained all this data legally and ethically, that data is not available for review, making it impossible to know if their statement is true and whether it meets the ethical standards for obtaining human research data as outlined in the TCPS2.

Article 2.2 of the TCPS2 states that “research does not require REB review when it relies exclusively on information that is…in the public domain and the individuals to whom the information refers have no reasonable expectation of privacy” (PRE, 2023b).

Some research situations involving a reasonable expectation of privacy are clear-cut, while others involve considerable grey area (Fiesler, 2019). Researchers who want to use data that falls into this grey area need to do their due diligence to confirm there was no reasonable expectation of privacy. If they are not sure, ethics approval would be required.

It is important to note that Article 2.2 of the TCPS2 does not specify what type of data it refers to, only that REB review is not required if there is no reasonable expectation of privacy. Therefore, if there is a reasonable expectation of privacy, any data obtained, whether personally identifiable or not, requires REB review. By contrast, OpenAI states that “[d]ata that is publicly accessible and does not contain personally identifiable information or sensitive information generally does not raise significant privacy concerns” (OpenAI, 2024). Because OpenAI's criterion turns on identifiability and sensitivity rather than on the reasonable expectation of privacy, this implies that OpenAI's standards do not align with the TCPS2.
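To make the divergence concrete, the two screening rules can be written as simple predicates. This is a minimal sketch under stated assumptions: each rule is reduced to three hypothetical boolean flags (`in_public_domain`, `reasonable_expectation_of_privacy`, `contains_identifiable_info`), "publicly accessible" is treated as equivalent to "in the public domain", and the forum example is hypothetical. Real determinations require REB judgment and cannot be reduced to flags; the sketch only illustrates the reading of Article 2.2 given above.

```python
# Simplified encoding of the two screening rules discussed above.
# ASSUMPTION: each rule is reduced to hypothetical boolean flags; this
# illustrates the paper's reading, not an actual REB decision procedure.
from dataclasses import dataclass


@dataclass
class DataSource:
    in_public_domain: bool
    reasonable_expectation_of_privacy: bool
    contains_identifiable_info: bool  # also stands in for "sensitive" info


def exempt_under_article_2_2(src: DataSource) -> bool:
    """Public-domain branch of Article 2.2, as read here: exemption needs
    public-domain data AND no reasonable expectation of privacy.
    Identifiability is not part of the test."""
    return src.in_public_domain and not src.reasonable_expectation_of_privacy


def low_concern_per_openai_statement(src: DataSource) -> bool:
    """OpenAI's quoted criterion: publicly accessible data without
    identifiable or sensitive information raises little privacy concern."""
    return src.in_public_domain and not src.contains_identifiable_info


# Hypothetical case: de-identified posts from a publicly readable support
# forum whose members nonetheless expect a degree of privacy.
forum_posts = DataSource(
    in_public_domain=True,
    reasonable_expectation_of_privacy=True,
    contains_identifiable_info=False,
)

print(exempt_under_article_2_2(forum_posts))          # False
print(low_concern_per_openai_statement(forum_posts))  # True
```

In this hypothetical case the two rules disagree: under the reading above, REB review would still be required, while OpenAI's criterion would treat the same data as low concern.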

Secondary Use of Data

Chapter 5, Section D of the TCPS2 states that secondary use of data is “the use in research of information originally collected for a purpose other than the current research purpose” (PRE, 2023b).

As already mentioned, popular AI models like ChatGPT use training data from unknown and unverifiable sources, some of which includes identifiable information. When a researcher uses a popular AI tool for a research project, most of the output (i.e., the research data) will not contain identifiable information. However, the report Large Language Models and the Disappearing Private Sphere demonstrated that it is possible to identify individuals through privacy leakages when using popular AI platforms (Cappello et al., 2024). From a TCPS2 perspective, this would be considered secondary use of data requiring ethics approval that satisfies the conditions noted above.

However, this ethics process assumes that the researcher knows they will be using identifiable information in advance. Privacy leakages in popular AI tools are unknown until they appear (Ye et al., 2024). Does a researcher then need to apply for ethics approval retroactively? Or should they only continue the project with data that is not identifiable?

Article 5.7 of the TCPS2 regarding data linkage refers to connecting data from multiple sources that could potentially identify an individual (PRE, 2023b). In these situations, ethics approval is required, and researchers are required to prove that such linkages are necessary and that the resulting information will be protected. But what if the researcher is not the one linking the data, but is simply using the output of that linked data? Does research of this type still fall under Article 5.7?

These gaps present researchers and REBs with immense challenges when it comes to research that includes popular AIs. Not knowing how these gaps may be filled by the PRE or future legislation has created confusion.

Methods

Survey Development

A survey questionnaire was developed consisting of 20 questions in five sections: Demographics, AI Research at the University, Web Scraping, Bill C-27, and AI and LLM Use at the Institutional Level. The questions included a variety of multiple choice and short answer responses. Please refer to Appendix A for the full survey questionnaire (in English) presented to participants.

Survey Recruitment

A list of all 97 Canadian universities by province was obtained from the website

A search of these 74 institutional websites was conducted to find the names and email addresses of REB chairs and research ethics office (REO) managers/supervisors. If an institutional REB had a generic email address (e.g., ethics@school.ca), that email address was also included. At least one email address was obtained for each of the 74 universities.

Survey Communication

Between October 16 and 18, 2023, 143 English emails were sent inviting potential participants to complete the survey by October 27. On October 27, 2023, 143 English follow-up emails were sent reminding potential participants of the survey and extending the deadline until November 3. On November 22, 2023, 28 French emails were sent inviting potential participants to complete the survey by December 6. On December 7, 2023, 28 French follow-up emails were sent reminding potential participants of the survey and extending the deadline until December 13.

Results

Response Rate

The surveys received a total of 50 responses. Of these, 40 were usable (with two not providing consent and eight consenting but leaving the survey blank). A total of 171 invitation emails were sent, meaning the survey experienced a response rate of 29% (50 responses) or 23% (40 responses).

Of the 40 usable responses, 22 (or 55%) were from REB Chairs or Vice Chairs, 17 (or 43%) were from REO managers or supervisors, and one (or 3%) was from an unknown position. The one ‘unknown’ respondent's answers were added to the manager/supervisor category, which preserved the distribution of responses between the two position types (i.e., a distribution of 55%/45% versus the original 56%/44% distribution).
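The response-rate and position-share figures above can be checked with a few lines of arithmetic. This Python sketch uses only counts stated in the text. The round-half-up rule is an assumption inferred from the reported percentages (for example, 17 of 40 is 42.5% and is reported as 43%), and `pct` is a hypothetical helper name.

```python
# Response-rate and position-share arithmetic from the Results section.
# ASSUMPTION: percentages are rounded half up, which matches the reported
# figures; Python's built-in round() would give 42 for 42.5 instead.
from decimal import Decimal, ROUND_HALF_UP


def pct(part: int, whole: int) -> int:
    """Percentage of part in whole, rounded half up to a whole number."""
    value = Decimal(part) * 100 / Decimal(whole)
    return int(value.quantize(Decimal("1"), rounding=ROUND_HALF_UP))


invitations = 143 + 28            # English + French invitation emails
total_responses = 50
usable = total_responses - 2 - 8  # minus no consent, minus blank surveys

print(pct(total_responses, invitations), pct(usable, invitations))  # 29 23

# Position split among the 39 known positions, then after folding the one
# "unknown" respondent into the manager/supervisor category.
chairs, managers = 22, 17
print(pct(chairs, chairs + managers), pct(managers, chairs + managers))  # 56 44
print(pct(chairs, usable), pct(managers + 1, usable))                    # 55 45
```

`Decimal` is used rather than floating point so that exact halves such as 42.5 round predictably.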

Demographics

Provinces

Of the 40 usable responses, 10 (or 25%) were from Ontario, nine (or 23%) from British Columbia (BC), eight (or 20%) from Alberta, six (or 15%) from Quebec, three (or 8%) from Nova Scotia, and one (or 3%) each from Manitoba, New Brunswick, Newfoundland, and Saskatchewan.

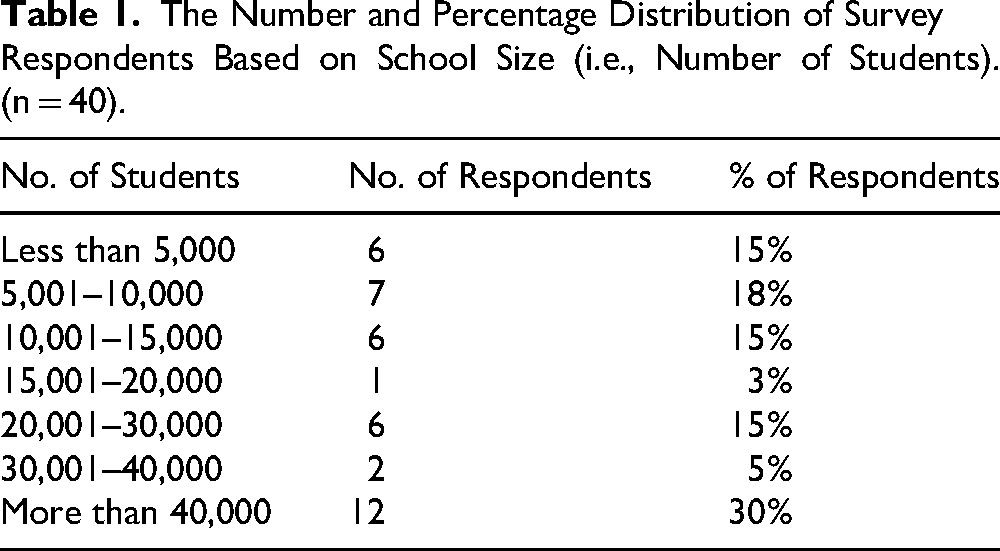

University Size and Research Intensity

We asked respondents to tell us approximately how many students (full and part time, undergraduate and graduate) are enrolled at their universities (Appendix A, Q3). The resulting breakdown is in Table 1.

Table 1. Number and Percentage Distribution of Survey Respondents by School Size (i.e., Number of Students) (n = 40).

We also asked respondents if their university is considered research intensive, though we did not provide a specific definition (Appendix A, Q4). Of the 40 usable responses, 30 (or 75%) indicated their universities are considered research intensive.

Demographic Aggregation

Most universities only have a single general research ethics board (GREB) and a single REO. This means that it may be possible to identify respondents if their positions, province, and/or school size were known. For example, there is only one public Canadian university in Newfoundland. To prevent the identification of respondents, results were aggregated into groups with at least five responses.

For province, five of the nine provinces represented had fewer than five responses (Manitoba, New Brunswick, Newfoundland, Nova Scotia, and Saskatchewan). Therefore, results used the following groupings: BC (n = 9 or 23%); Prairies, including Alberta, Saskatchewan, and Manitoba (n = 10 or 25%); Ontario (n = 10 or 25%); Quebec (n = 6 or 15%); and the East Coast, including New Brunswick, Newfoundland, and Nova Scotia (n = 5 or 13%).

For school size, two of the seven categories had fewer than five responses (15,001–20,000 and 30,001–40,000). Therefore, results used the following groupings: less than 5,000 (n = 5 or 15%); 5,001–15,000 (n = 13 or 33%); 15,001–30,000 (n = 7 or 18%); and more than 30,000 (n = 14 or 35%).
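The minimum-cell-size rule can be expressed as a small check: sum province counts into the regional groups and confirm that no group falls below five. This Python sketch uses the province counts from the Demographics section and the regional groupings described above. The function name `aggregate` and the `MIN_CELL` constant are hypothetical names for illustration.

```python
# Minimum-cell-size aggregation of responses by province.
# Counts and regional groupings are taken from the text; MIN_CELL = 5
# reflects the study's rule of at least five responses per group.
MIN_CELL = 5

province_counts = {
    "Ontario": 10, "British Columbia": 9, "Alberta": 8, "Quebec": 6,
    "Nova Scotia": 3, "Manitoba": 1, "New Brunswick": 1,
    "Newfoundland": 1, "Saskatchewan": 1,
}

regions = {
    "BC": ["British Columbia"],
    "Prairies": ["Alberta", "Saskatchewan", "Manitoba"],
    "Ontario": ["Ontario"],
    "Quebec": ["Quebec"],
    "East Coast": ["New Brunswick", "Newfoundland", "Nova Scotia"],
}


def aggregate(counts, groups, min_cell):
    """Sum counts into groups and list any group still below min_cell."""
    # Every province must be assigned to exactly one group.
    assigned = [p for members in groups.values() for p in members]
    if sorted(assigned) != sorted(counts):
        raise ValueError("a province is missing or assigned twice")
    totals = {name: sum(counts[p] for p in members)
              for name, members in groups.items()}
    too_small = [name for name, n in totals.items() if n < min_cell]
    return totals, too_small


totals, too_small = aggregate(province_counts, regions, MIN_CELL)
print(totals)     # {'BC': 9, 'Prairies': 10, 'Ontario': 10, 'Quebec': 6, 'East Coast': 5}
print(too_small)  # []
```

The East Coast group sits exactly at the threshold, which is why New Brunswick, Newfoundland, and Nova Scotia had to be combined rather than reported separately.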

Number of AI Researchers or Labs

As part of the AI Research at the University section, we asked respondents to estimate how many labs or researchers were doing AI-related research at their institution and whether they expected that number to increase in the next 1–5 years (Appendix A, Q5 and Q6). The purpose of these questions was to gauge how large respondents perceived the challenge to be at their institution, without pre-defining these terms for respondents.

Ten respondents (or 25%) estimated their institutions had 0–4 labs or researchers doing AI-related research, and another 10 respondents (or 25%) estimated their institutions had 5–9 labs or researchers. Three respondents (or 8%) estimated 10–14, two (or 5%) estimated 15–19, and eight (or 20%) estimated over 20 labs or researchers. Seven respondents (or 18%) did not want to provide an estimate.

Of all the respondents, 32 (or 80%) expect that the number of researchers or labs doing AI-related research will increase in the next 1–5 years. None of the respondents thought they would see a decrease in this number and eight (or 20%) indicated they did not know.

AI Research Receiving Ethics Approval

The next three questions in the AI Research at the University section asked respondents about AI research and the ethics approval process at their institution. The first question asked respondents to estimate the percentage of AI research at their institutions that went through the ethics approval process (Appendix A, Q7). Fifteen respondents (or 38%) estimated that less than 20% of AI research gets ethics approval; seven (or 18%) estimated 21–40%; four (or 10%) estimated 41–60%; five (or 13%) estimated 61–80%; and only one (or 3%) estimated 81–100% of AI research goes through the ethics approval process. Eight (or 20%) did not know.

These results seem to imply that, in general, respondents do not believe many labs/researchers conduct AI-related research, but that many of those who do, do not submit an associated ethics application. While the lack of ethics applications may seem like a problem, the small number of labs/researchers involved may lead REBs to view it as a low-priority issue.

We then asked respondents to rank (from a set of pre-written responses) why they thought AI-related research was not going through the ethics approval process (Appendix A, Q8). Thirty-six respondents (or 90%) provided an answer. Based on an analysis of the responses, the following overall ranking was calculated:

1. Researchers are unaware of ethics requirements.
2. Ethics approval is not required.
3. Guidelines for ethics approval are unclear for AI research.
4. Approval process is too slow and/or difficult.
5. Other reasons.
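The text does not state how the overall ranking for Q8 was derived. One common approach is mean rank: average each option's rank across respondents and order options from lowest to highest mean. The Python sketch below illustrates that technique only. It assumes every respondent ranks every option, and the three responses in `hypothetical_responses` are invented for illustration; they are not the survey data.

```python
# Mean-rank aggregation for a ranking question such as Q8.
# ASSUMPTION: the paper does not say how its overall ranking was computed;
# mean rank is one common choice. The responses below are hypothetical
# and are NOT the survey data.
from statistics import mean

REASONS = [
    "Researchers are unaware of ethics requirements",
    "Ethics approval is not required",
    "Guidelines for ethics approval are unclear for AI research",
    "Approval process is too slow and/or difficult",
    "Other reasons",
]

# Each hypothetical response maps a reason to its rank (1 = most likely).
hypothetical_responses = [
    dict(zip(REASONS, [1, 2, 3, 4, 5])),
    dict(zip(REASONS, [2, 1, 3, 5, 4])),
    dict(zip(REASONS, [1, 3, 2, 4, 5])),
]


def overall_ranking(responses):
    """Order items by mean rank across responses (lowest mean first)."""
    items = responses[0].keys()
    means = {item: mean(r[item] for r in responses) for item in items}
    return sorted(means, key=means.get)


for position, reason in enumerate(overall_ranking(hypothetical_responses), 1):
    print(position, reason)
```

Mean rank is sensitive to how partial rankings are handled; if some respondents rank only a subset of options, a rule for unranked items (for example, averaging only over respondents who ranked that item) would need to be chosen explicitly.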

The next question asked respondents to speculate on what would need to change to ensure more AI research goes through the ethics approval process (Appendix A, Q9). Respondents were provided with six possible responses plus the opportunity to provide their own response. They were able to select as many responses as they wished. The following ranking is based on popularity:

1. Clearer guidelines around AI research in the TCPS2. (n = 34 or 85%)
2. Clearer understanding by researchers around the data used in AI research. (n = 24 or 60%)
3. Increased understanding of ethics requirements by researchers. (n = 23 or 58%)
4. Clearer understanding by ethics boards around the data used in AI research. (n = 22 or 55%)
5. Increased understanding of ethics requirements by university admin and/or research office. (n = 15 or 38%)
6. Clearer guidelines around AI research ethics in federal and provincial legislation. (n = 14 or 35%)
7. Other changes. (n = 1 or 3%)
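Because Q9 allowed multiple selections, each percentage is a share of all respondents rather than of all selections, so the percentages sum to well over 100%. This Python sketch reproduces the reported figures from the counts above, assuming (as the reported percentages indicate) a denominator of all 40 usable respondents and round-half-up rounding. The option labels are shortened, and `pct` is a hypothetical helper name.

```python
# Percentages for a select-all-that-apply question (Q9).
# ASSUMPTION: the denominator is all 40 usable respondents and values are
# rounded half up; both match the reported percentages.
from decimal import Decimal, ROUND_HALF_UP

N_RESPONDENTS = 40

# Selection counts per option, as reported above (labels shortened).
q9_counts = {
    "Clearer AI guidelines in the TCPS2": 34,
    "Researchers understand AI data better": 24,
    "Researchers understand ethics requirements better": 23,
    "Ethics boards understand AI data better": 22,
    "Admin/research office understand requirements better": 15,
    "Clearer AI guidance in legislation": 14,
    "Other changes": 1,
}


def pct(part: int, whole: int) -> int:
    """Percentage of part in whole, rounded half up to a whole number."""
    value = Decimal(part) * 100 / Decimal(whole)
    return int(value.quantize(Decimal("1"), rounding=ROUND_HALF_UP))


# Rank options by popularity; each share is out of all respondents.
ranked = sorted(q9_counts.items(), key=lambda kv: kv[1], reverse=True)
for option, count in ranked:
    print(f"{option}: n = {count} ({pct(count, N_RESPONDENTS)}%)")

total_selections = sum(q9_counts.values())
print(total_selections)  # 133, i.e., about 3.3 options per respondent
```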

The rankings of the responses to Q8 and Q9 seem to align at a high level, but there are some interesting differences. For example, in Q8, respondents ranked the lack of ethics guidelines for AI-related research as the third most likely reason they do not receive more ethics applications, and researchers' lack of awareness of TCPS2 ethics requirements as the most likely reason. Yet in Q9, these two items are reversed: clearer guidelines about AI-related research was the top solution, whereas researchers gaining a better understanding of TCPS2 ethics requirements was the third. While the two issues and solutions are related, they are not identical. General ethics requirements apply to all researchers and help them understand what type of research (e.g., research that includes human participants) requires ethics approval. Ethics guidelines specific to AI-related research, by contrast, would tell researchers what specific information is required in ethics applications for research that includes AI. If the main reason ethics applications are not being received is that researchers are unaware of what research does and does not require ethics clearance, the intuitive solution would be to ensure all researchers are better aware of ethics requirements.

In addition, and probably not surprisingly, respondents did not view the approval process itself as much of an issue (ranked 4th), and they viewed increasing their own knowledge of the data used in AI research as a less important solution (also ranked 4th). Respondents felt that a better understanding of the data used in AI research by researchers was a better solution (ranked 2nd) than increasing their own knowledge of the same topic.

These responses point to a possible area for future study: understanding why respondents answered in this way.

Guidance on AI Research Ethics

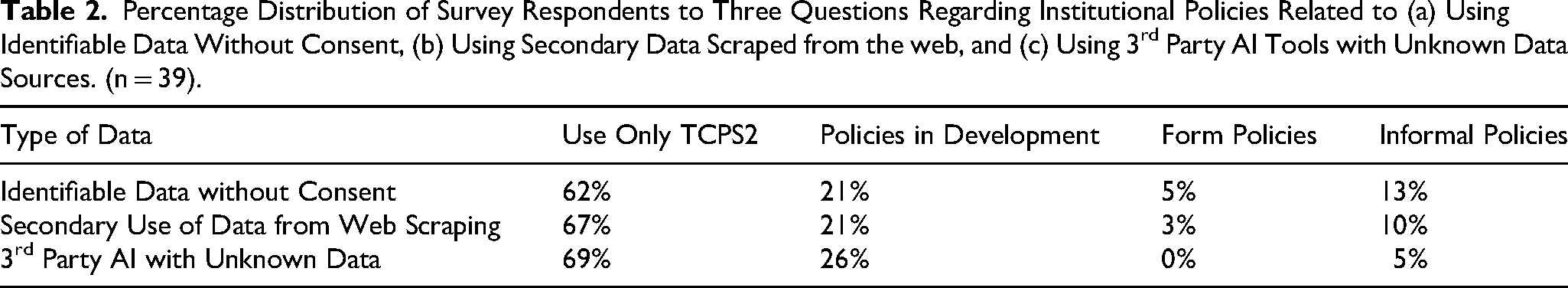

In the last part of the survey, respondents were asked seven policy-related questions. The first three questions asked if the REB or REO had formal or informal, completed or in progress policies, procedures, or guidelines associated with the use of data in three different situations (Appendix A, Q10 to Q12).

Based on the responses outlined in Table 2, about two-thirds of respondents are

Table 2. Percentage Distribution of Survey Respondents to Three Questions Regarding Institutional Policies Related to (a) Using Identifiable Data Without Consent, (b) Using Secondary Data Scraped from the Web, and (c) Using Third-Party AI Tools with Unknown Data Sources (n = 39).

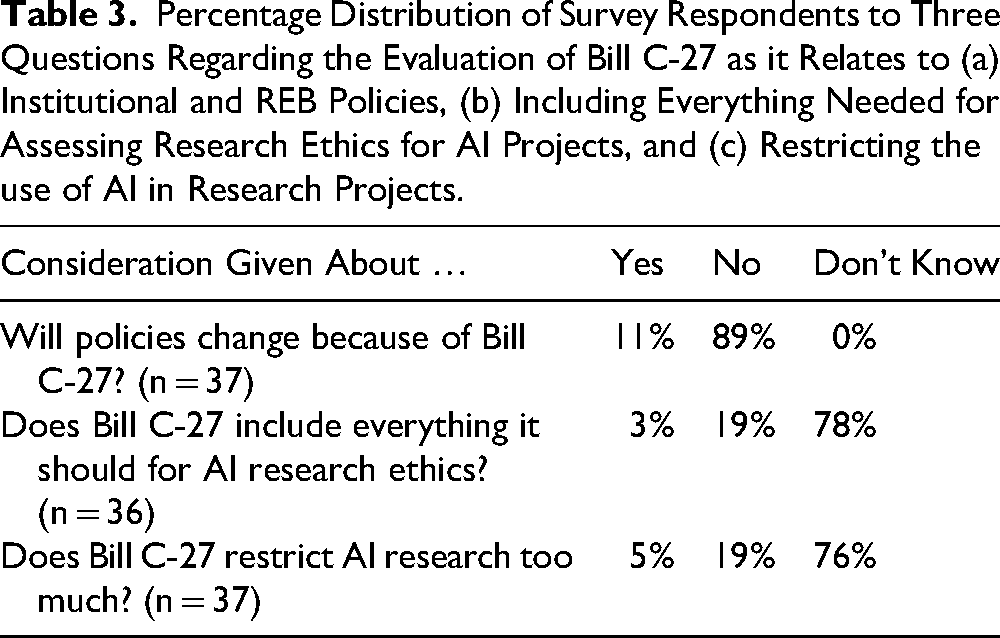

The second set of three questions, in Table 3, asked respondents about Bill C-27, whether they have given any thought to how it might impact their work, and their opinion on the usefulness of the bill with regards to AI research (Appendix A, Q13, Q15, and Q17).

Table 3. Percentage Distribution of Survey Respondents to Three Questions Regarding the Evaluation of Bill C-27 as It Relates to (a) Institutional and REB Policies, (b) Including Everything Needed for Assessing Research Ethics for AI Projects, and (c) Restricting the Use of AI in Research Projects.

Most respondents (89%) had not yet had time to consider how Bill C-27 would affect their work or policies. Most (76–78%) also did not know Bill C-27 well enough to offer an opinion on whether its AI provisions would be effective. Only around a quarter of respondents (22–24%) had reviewed Bill C-27 closely enough to feel comfortable forming an opinion about it.

The final policy-related question asked respondents whether their institutions had released any kind of policy or set of guidelines related to AI (Appendix A, Q18). These could relate to any area of the university, including teaching and learning, research or academic integrity, and scholarly publishing, and could have been released internally or externally. Of the 36 respondents who answered, 15 (or 42%) indicated that their institutions had not yet released any AI-related policies; 18 (or 50%) indicated their institutions had released something internally; and only three (or 8%) indicated their institutions had released something externally. It is important to note that this survey was conducted between October and December 2023. In the months since then, most universities have released AI-related policies externally.

Discussion

AI Research Underusing Ethics Approval

Based on responses to Q7, only one respondent (or 3%) believes that more than 80% of the AI research conducted at their institution is going through the ethics approval process. Thirty-one respondents (or 78%) believe that 80% or less of this research is going through the ethics approval process, and 15 (or 38%) believe it is less than 20%. Therefore, the majority of respondents believe they see no more than about four-fifths of AI-related research via ethics applications, and many believe they see far less. As noted in both the Introduction and Background sections of this paper, it is very difficult to know the specific criteria for AI-related research projects that would require ethics approval, given the lack of guidance from the PRE. However, even with this confusion, the REBs and REOs are the people best placed at each institution to determine whether a project requires ethics approval. Yet most respondents do not believe they are being given the opportunity to review and evaluate all AI-related research to determine which projects may require approval and which fall outside the TCPS2.

In their answers to Q8, respondents indicate that lack of awareness and unclear guidelines are among the main reasons why ethics applications for AI research are not received. In Q9, they identify clearer guidelines and an increased understanding of requirements as the ideal solutions to these problems. However, it is important to recall that the PRE and the REBs share the responsibility of providing clear guidelines for ethics processes and educating researchers about ethics requirements.

Under the Authority and Application of Interpretation section of the TCPS2 2022 Interpretations website, the PRE notes that they consider REBs “as the primary source of guidance for research ethics questions in their community” and that interpretations of the TCPS2 by REBs can take into account the specific “research under review as well as applicable policies, laws, and regulations” (PRE, 2016b). While the PRE absolutely needs to provide better guidance and advice around AI research and web scraped data use, the REBs have it within their authority to interpret the current version of the TCPS2 for such research.

If the reason why AI labs and researchers are not submitting ethics applications is because they are unclear about the general ethics requirements, providing informational and educational resources about these requirements would help those researchers. Such informational and educational resources can be provided to researchers by the REBs and REOs without oversight of the PRE.

Respondents ranked their own need for a clearer understanding of the data used in AI research 4th, but ranked researchers' need for the same understanding higher, at 2nd. It is possible that AI researchers already know a great deal about the data they use in AI tools and research, but do not understand whether using that data requires ethics approval (the corresponding solution, increased understanding of ethics requirements by researchers, was ranked 3rd).

Much AI research is likely conducted by researchers in disciplines such as computer science and engineering. Much of the research in these disciplines does not require ethics approval because it does not involve (direct) human participants or their identifiable data. Researchers in these areas may therefore be less familiar with the ethics approval process (Munteanu et al., 2015; Wright, 2007) and the TCPS2. Sharing informational and educational resources with all disciplines and departments within each institution may increase researchers’ understanding of ethics requirements, which may in turn increase the number of ethics applications submitted.

It is clear, however, that the most popular change, and one that must occur, is to provide clearer guidelines on AI research (Ferretti et al., 2021) directly in the TCPS2. Without such guidelines, it is difficult for REBs and researchers to know whether a specific AI research project needs ethics approval, because the current version of the TCPS2 does not clearly address the types of data used or how they are used. This solution lies solely with the PRE and the Tri-Council.

Lack of Guidance on AI Research Ethics

Even if something like Bill C-27 were passed, it alone may not provide sufficient information to assist the PRE or REBs. Like most bills, Bill C-27 could be interpreted in several ways and would require subsequent regulations to be written and implemented before the public could effectively abide by the legislation. The gap between the passage of the EU AI Act in 2024 and its full implementation in 2027 clearly illustrates the need to develop regulations once legislation is passed. Without regulations providing specific guidance, REBs, REOs, and the PRE may find it difficult, though not impossible, to interpret legislation for the purpose of updating policies and guidelines. For example, Resseguier and Ufert (2024) show how the ethical obligations outlined in the EU AI Act could be used for research ethics review. In addition, as noted previously, a multitude of AI ethics guiding principles have been produced that could serve as a roadmap for TCPS2 updates and guidance.

Even without legislation or guiding principles, the PRE and the Panel on the Responsible Conduct of Research (PRCR) could provide guidance on AI-related research and its various data sources based solely on their own requirements. For example, Knight et al. (2025) note that it is not necessary to reinvent the wheel and that “existing REC models can effectively support consideration of ethical issues in AI research.” The PRE and PRCR do not appear to have considered this route, possibly because of the lengthy consultation process that government organizations normally undertake. AI research, however, is a special case: the potential harms of AI are both vast and varied. At a minimum, high-level guidance on the ethics of AI research, even in draft form, needs to be developed quickly.

Best Practices

Based on the various gaps in the TCPS2 and the results of our survey, the following recommendations have been developed.

We recognize the limitations of both the Tri-Council and REBs with regard to staffing levels, funding, and other resources. However, given the pace at which AI is currently moving, we do not have the luxury of taking our time. Solutions do not have to be perfect or final. Opening the lines of communication, for example, would go a long way toward assuaging concerns among all groups involved. If the ethical impacts of AI research are not considered soon, the technology may advance past the point of no return. Rather than ethics guiding AI research, we could be faced with AI research dictating how ethics will (and will not) work.

Tri-Council Recommendations

REB Recommendations

Research Agenda

Since this survey was conducted in 2023, many Canadian public universities have created and released policies and procedures related to AI. However, most of those policies and procedures have focused on students and academic integrity, not research ethics or integrity (Gillmore, 2024; Marcel & Kang, 2024; Usher & Desforges, 2023). Also since this survey was conducted, Ontario passed Bill 194, the Strengthening Cyber Security and Building Trust in the Public Sector Act, in November 2024. This Act includes AI-related provisions that may affect Ontario public universities, but the associated regulations have yet to be developed. Other provinces are also developing AI-related legislation and regulations that may affect public universities. Future research could therefore investigate, province by province, how legislation and university-specific policies may affect the research ethics process at public universities.

Future research could also survey AI researchers to investigate how they view the research ethics process and what, from their perspective, could be improved or changed. Comparing the results of such a survey with these results would contrast the perspectives of REBs with those of AI researchers and could yield additional recommendations and best practices that benefit both groups.

Educational Implications

Based on both the results of this study and the experiences of the authors, several audiences would benefit from education related to the recommendations presented. First, the Tri-Council and the PRE must begin educating both REBs and researchers on how to handle AI-related research projects, even before any official TCPS2 updates. This education should be based on current expert feedback and be continuously updated as new information is obtained, technology advances, and legislation and regulations are implemented.

Second, REBs must take accountability for their processes and better educate AI researchers about them. They need to streamline the application process to remove unnecessary complexities that likely deter some researchers from applying for ethics approval. Third, REBs must take it upon themselves to learn more about the AI research being conducted at their institutions, specifically how data is used in different contexts and how the current TCPS2 applies to that data.

Acknowledgements

The authors wish to thank the Office of the Privacy Commissioner of Canada for grant funding that allowed this survey and the larger project to be conducted. The authors also wish to thank the members of the Ethics & Technology Lab at Queen's University who worked on the larger project and assisted with the development of the final report and website. Last, but certainly not least, the authors would like to sincerely thank all peer reviewers and editors for their valuable feedback on this manuscript.

Ethical Considerations

This study was approved by the Queen's University General Research Ethics Board (GREB) (#6039533) on September 28, 2023.

Consent to Participate

Survey participants were provided with a detailed Letter of Information about the study via the online survey platform Qualtrics. Participants were asked to consent to participate in the study by clicking a button labeled “Yes, I wish to continue with the survey,” which also took them to the first question of the survey. No personal information was collected, in order to keep responses anonymous. However, a combination of demographics (province, university size, and ethics position) could potentially allow respondents to be identified by a process of elimination. To remove this possibility from the study results, responses for these three demographics were combined or grouped into categories containing five (5) or more responses. For example, the three (3) responses from Nova Scotia and the one (1) response each from New Brunswick and Newfoundland and Labrador were grouped into a general “East Coast” category.
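The grouping rule above can be expressed as a short procedure. The following Python sketch is illustrative only and is not the authors' actual processing script: the function name `group_small_categories`, the `region_of` mapping, and the `"Other"` fallback label are hypothetical. Only the five-response minimum and the East Coast example are taken from the study.

```python
from collections import Counter

# Minimum number of responses per reported category (the study's "five (5) or more" rule).
MIN_CELL_SIZE = 5


def group_small_categories(responses, region_of, min_size=MIN_CELL_SIZE):
    """Replace any category with fewer than `min_size` responses by its broader region.

    Hypothetical helper for illustration only.
    responses: one category label (e.g. a province) per respondent.
    region_of: assumed mapping from category label to a broader region label.
    """
    counts = Counter(responses)
    # Keep large categories as-is; relabel small ones with their region (or "Other").
    grouped = [
        label if counts[label] >= min_size else region_of.get(label, "Other")
        for label in responses
    ]
    # A merged region can itself still be too small; fail loudly rather than report it.
    still_small = {g: n for g, n in Counter(grouped).items() if n < min_size}
    if still_small:
        raise ValueError(f"categories below {min_size} responses: {still_small}")
    return grouped


# Usage with the East Coast figures reported above (3 + 1 + 1 = 5 responses).
east_coast = {
    "Nova Scotia": "East Coast",
    "New Brunswick": "East Coast",
    "Newfoundland and Labrador": "East Coast",
}
responses = ["Nova Scotia"] * 3 + ["New Brunswick", "Newfoundland and Labrador"]
print(Counter(group_small_categories(responses, east_coast)))
# Counter({'East Coast': 5})
```

The sketch handles one demographic variable at a time. Preventing identification through combinations of province, university size, and ethics position would also require checking the cross-tabulated counts, which this sketch does not do.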

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Office of the Privacy Commissioner of Canada [Officium: 777-6-560655].

Declaration of Conflicting Interest

The authors declare no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data Availability

The datasets generated and analyzed during this study are available from the corresponding author on reasonable request.

Author Biographies

Appendix A

Letter of Information and Survey Questionnaire

SECTION: Letter of Information & Consent

This study has been reviewed for ethical compliance by the Queen's University General Research Ethics Board.