Abstract

Prospective research participants do not always retain information provided during consent procedures. This may be relatively common in online research and is considered particularly problematic when the research carries risks. Clinical psychology studies using the trauma film paradigm, which aims to elicit an emotional response, provide an example. In the two studies presented here, 112–126 participants were informed they would be taking part in an online study using a variant of this paradigm. The information was provided across five digital pages using either a standard or an interactive format. In both studies, compared to the control condition, participants in the interactive condition showed more retention of information. However, this was only found for information about which they had been previously asked via the interactive format. Therefore, the impact of adding interactivity to digital study information was limited. True informed consent for an online study may require additional measures.

Introduction

Prospective research participants do not always fully read the information provided during consent procedures and may therefore not be aware of the risks associated with a study. Several online studies have manipulated digital study information with the aim of improving reading and, ultimately, comprehension of the information. The present experiments replicate and extend this line of research by applying one promising manipulation, namely increased interactivity, using a clinical psychology paradigm known to carry ethical risks.

Past Experiments on Improving Consent Procedures

Geier et al. (2021) examined the impact of how digital study information is presented on how much information is remembered. University students read one of six versions of the information: a control version, based on a template provided by the local institutional review board, and five experimental versions involving (1) more spaces and bolding (see also Perrault & Keating, 2018), (2) a brief text followed by an optional longer text (Perrault & McCullock, 2019), (3) a low reading level, (4) providing initials at the end of the text, or (5) splitting the text across multiple pages and adding questions to each page. Subsequently, participants were asked what they remembered from the text using the same open-ended questions as in the fifth experimental condition. Answers were scored as correct or incorrect. The only experimental version to yield more correct answers than the control version was the one asking page-by-page questions. Geier et al. (2021) argued that by interacting with the text, more attention was drawn to the information provided. They concluded that online research should include an interactive consent procedure.

One field in which online research has become more prevalent is psychology. Within the field, it has been acknowledged that obtaining informed consent is more difficult online than in the traditional laboratory setting (Kraut et al., 2003). Nonetheless, the direct relevance of the aforementioned studies aiming to improve digital consent procedures (e.g., Geier et al., 2021; Perrault & Keating, 2018; Perrault & McCullock, 2019) for psychological research is limited given their phenomenological rather than translational nature (Gosling & Mason, 2015). That is, these studies focused on the problem of the online environment itself (i.e., the difficulty of obtaining informed consent when study information is presented digitally) rather than on how to ensure that a study traditionally conducted offline, e.g., in a psychological laboratory, is well-implemented in this online environment. Although such a translation might be seen as a mere change in the way study measures are delivered, the increase in ethical risks can be substantial.

One relevant example of how traditional psychological studies have been translated to the online environment comes from clinical psychology studies employing the trauma film paradigm (TFP; Holmes & Bourne, 2008). This procedure uses aversive video material to examine predictors of trauma-related psychopathology as well as how this may be prevented or treated. As the video material is meant to briefly cause distress, participants should be briefed carefully about the nature of the material and about their right to stop at any time during the study, before providing consent (James et al., 2016). TFP studies have traditionally been conducted in psychological laboratories, but their closing during the COVID-19 pandemic has contributed to a shift to online implementations (e.g., Bücken et al., 2022; Jones & McNally, 2022). Moreover, in recent years TFP researchers have been attracted by online participant platforms to obtain the large sample sizes required for high statistical power (e.g., Taylor et al., 2022). To the best of our knowledge, these online studies did not adapt their consent procedure to the new environment. More insight into how well participants in online TFP studies read, retain, and comprehend the digital information, and how this may be improved, is imperative to ensure true informed consent. This is particularly important given that TFP studies have ethical risks (James et al., 2016).

The Present Research

Participants in the two studies presented here were led to believe they would be taking part in online research involving procedures resembling the TFP. Following Geier et al. (2021), participants in both studies were digitally informed using either five pages of text derived from a previous TFP study (control version) or five pages of text accompanied by page-by-page questions (interactive version). However, our study differed from Geier et al. (2021) in that (a) the text informed about a study with known ethical risks, and (b) after participants provided consent, we asked them not only the same “old” questions previously asked to the participants in the interactive condition, but also “new” questions covering other parts of the information. This was our retention test.

We expected participants in the interactive condition to answer more questions correctly during this test than participants in the control condition (hypothesis 1). We also expected this effect to be moderated by question novelty; the effect size for the “old” questions would be larger than that for the “new” questions (hypothesis 2). If so, this would indicate that participants in the interactive condition selectively remembered information they were asked about before, rather than the study information more generally. This is important to know from an informed consent perspective.

As in Geier et al. (2021), participants were students taking part in the study in exchange for partial course credit. At our university, students participate in multiple studies during their first year. Their risk of habituation to consent procedures increases as the year progresses. Therefore, while Study 2 mainly served to replicate the results of Study 1, it was purposely conducted earlier in the year. Also given the risk of non-replication associated with psychological research (Shrout & Rodgers, 2018), with our replication we aimed to explore the robustness of the findings. Indeed, results were comparable across the two studies. We present them in parallel.

Methods

Statement of Transparency

Both studies were positively evaluated by our departmental ethics committee. Both studies were preregistered on the Open Science Framework (OSF) before data collection; see https://osf.io/xqb6y (Study 1) and https://osf.io/uqt8h (Study 2). We present the data from our retention test as well as from self-report measures administered in both studies. Measures only administered in one study were collected to support student work and are not reported here (but available on the OSF).

There were multiple deviations from the preregistrations. (1) Students could only sign up for Study 1 if they had not previously taken part in an actual online TFP study that was also recruiting first-year students at the time. This selection criterion was not mentioned in the preregistration for Study 1. It was also not mentioned in the preregistration for Study 2 and it was erroneously not applied there, even though an actual online TFP study was also ongoing then. (2) The number of unique participants included in the analyses was lower than the numbers planned after our power analyses (Study 1: 20%; Study 2: 8%). This was due to some students providing multiple entries, which we only fully realised when preparing this paper. (3) Due to researcher error in Study 1, the number of “old” vs. “new” questions on the retention test was imbalanced, see Measures for details. This was purposely not corrected in Study 2. (4) In Study 1, our actual study information provided after debriefing erroneously included a download option for the debriefing text rather than for the information text. This was corrected in Study 2. (5) While for hypothesis 2 our preregistered analysis plans (Study 1: https://osf.io/xfku4; Study 2: https://osf.io/b8yu3) predicted a significant interaction between condition and question novelty, follow-up analyses were not specified. We compared the two conditions within each level of question novelty (see Analysis below).

Participants

Participants were first-year students in the Dutch or English Bachelor of Psychology programs at the University of Groningen. We did not obtain demographic data for privacy reasons. However, based on previous studies, we assume an average age of 19–20 years, a female-to-male ratio greater than 2, a Dutch-to-international ratio of around 1, and a comparable English-language proficiency across the two programs.

For both studies we aimed to recruit 70 participants per condition. This is comparable to Geier et al. (2021). We conducted a sensitivity analysis in G*Power (Faul et al., 2007) for a one-sided independent-samples t-test using a power of 80% and an alpha of 0.05. This analysis indicated that 140 participants would suffice to detect a standardized effect size of Cohen's d = 0.48.

There were 187 unique individuals who started Study 1 and 192 who started Study 2. However, 75 and 66 of them, respectively, did not actively consent to the use of their data collected on our main outcome measure, mostly because they stopped the study early on (and if they restarted, these entries were not counted, see Analysis). This left 112 participants in Study 1 and 126 participants in Study 2.

Measures

https://osf.io/hq6t8 (Study 1) and https://osf.io/srxvh (Study 2) contain the complete Qualtrics surveys. The main outcome measure was our retention test which consisted of 20 three-choice questions, 9 “old” questions (previously presented in the interactive condition during the first consent procedure, see Procedures) and 11 “new” questions (not previously presented). See the Qualtrics surveys for an overview of the questions and the answer options; Qualtrics randomized their order for each participant. We calculated an overall retention score using the total number of correct answers (range 0–20). We also calculated scores from the numbers of correct answers on the old vs. new questions; these scores were adjusted to account for the imbalanced number of questions per score.
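The exact adjustment applied to the old and new scores is not spelled out in the text. One plausible way to make a 9-item score and an 11-item score comparable is to rescale each to the same range; the following is a minimal sketch under that assumption. The function name `rescaled_score` and the common 0–10 scale are hypothetical and not taken from the study materials.

```python
def rescaled_score(n_correct, n_questions, scale=10.0):
    """Rescale a raw count of correct answers to a common 0..scale range.

    Assumption: comparability is achieved by converting each raw score
    to a proportion correct and multiplying by a shared scale.
    """
    if n_questions <= 0:
        raise ValueError("n_questions must be positive")
    if not 0 <= n_correct <= n_questions:
        raise ValueError("n_correct must lie between 0 and n_questions")
    return scale * n_correct / n_questions


# Hypothetical participant: 7 of 9 old and 7 of 11 new questions correct.
old_score = rescaled_score(7, 9)    # proportion 7/9 on the 0-10 scale
new_score = rescaled_score(7, 11)   # proportion 7/11 on the 0-10 scale
print(old_score > new_score)        # same raw count, different rescaled score
```

With this kind of rescaling, a perfect score maps to the same maximum on both question sets, so condition differences can be compared across the two levels of question novelty.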

The retention test was administered before the debriefing (see Procedures). There were two more pre-debriefing measures. Firstly, 12 items derived from the Reactions to Research Participation Questionnaire (RRPQ; Kassam-Adams & Newman, 2002) assessed expected responses to participation in the fake study. A 5-point Likert scale ranging from “Strongly disagree” to “Strongly agree” replaced the original 3-point scale. Secondly, 2 items using the same 5-point Likert scale assessed self-reported reading and understanding of the fake information. For details, again see the Qualtrics surveys.

Post-debriefing, participants completed the same RRPQ items in relation to their experiences in previous SONA studies. They also estimated how many previous online and in-person SONA studies they took part in. General experiences with consent procedures during these past SONA studies were then assessed using six questions with the same 5-point Likert scale.

Procedures

Participants were recruited via SONA (www.sona-systems.com). First-year Psychology students at the University of Groningen use this online platform to find studies to take part in and obtain partial course credit. Study 1 participants were recruited during the fourth quarter (April 5–May 1) and Study 2 participants during the second quarter a year later (December 23–February 10, excluding the holidays). We advertised for an online three-part study titled “How do you react to seeing emotional pictures.” See https://osf.io/5ca8h and https://osf.io/f3uqk for the text.

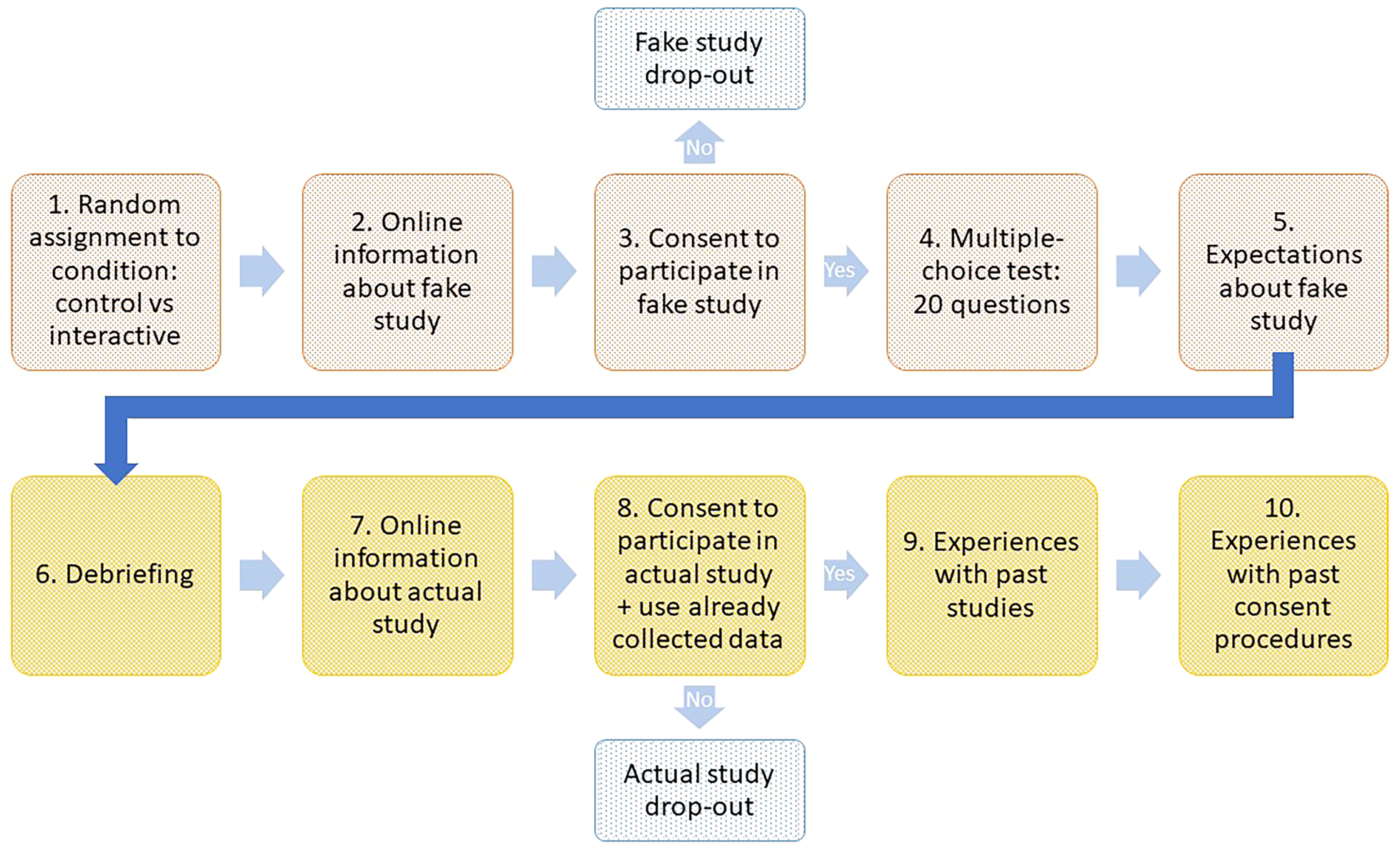

Advertisements included a link to Qualtrics (www.qualtrics.com). The study was programmed in this online platform and started by announcing that students would read detailed information before being asked to consent to participate and that, if they consented, they would subsequently complete part 1 of the study while parts 2 and 3 would be offered at a later point in time. Upon proceeding to the next online page, students were randomly assigned by Qualtrics to one of the two experimental conditions (Figure 1, step 1) and subsequently proceeded to the study information (step 2). In the control condition, students were given standard information divided across five digital pages (see below). In the interactive condition, the bottom of each page also included two multiple-choice questions about the text provided on that page. Students were required to answer both questions correctly before being able to proceed.

Figure 1. Graphic representation of study flow. Note. Participants who dropped out during the consent procedure for the fake study were not compensated. Participants who dropped out during the consent procedure for the actual study were compensated.

The information for Study 1 was derived from information used in a recent TFP study that was also preregistered, see https://osf.io/w7384. Study 2 used the same text as Study 1; see https://osf.io/hq6t8 (Study 1) and https://osf.io/srxvh (Study 2). The original text from the TFP study was changed as follows: (1) There were minimal textual adjustments that did not meaningfully alter the information. (2) The study was said to involve “seeing emotional pictures” rather than “watching stressful film scenes” to not overlap with an actual TFP study that was also recruiting SONA students at the time. (3) The study information was divided across five pages. Page 1 listed the researchers involved, provided contact information for the principal investigator, included a general introduction, and stated the purpose of the research. Page 2 provided detailed procedures for parts 1–3, gave the duration of and compensation for each part, and stated the benefits. Page 3 provided detailed information about risks. Page 4 described privacy measures taken to ensure confidentiality and explained how the data would be handled during and after the study. Page 5 included a statement on the voluntariness of participation and provided information on whom to contact for questions about the study and about participant rights.

Page 6 asked for consent to participate in the (fake) study (see Figure 1, step 3). Students who withheld consent were redirected to SONA and received no credits. Students who consented were asked to answer, before starting part 1 of the study, some questions to assess what they remembered from the study information (Figure 1, step 4). They were instructed to answer to the best of their ability and told that after answering the questions there would be the option to read the information again. After answering the questions, they were asked about their expected responses to participating in the study (step 5), and how well they thought they read and understood the study information. See Measures for details.

Subsequently, students were debriefed (Figure 1, step 6). They were informed that they had been deceived and that the actual study was about how much they remembered from the fake study information. They were then informed about the purpose of the deception and about the purpose of the actual study. The actual information followed, in a similar format as the fake information except it was not divided across multiple pages (step 7). Consent to participate in the actual study, and to use the data collected before the debriefing, was asked on a subsequent page (step 8). Students who withheld consent were redirected to SONA but received credits for their time. Students who consented proceeded with the rest of the study (steps 9–10) and received credits afterwards. Figure 1 summarizes the procedures.

Analysis

All analyses were conducted in SPSS version 26 (IBM Corporation, Armonk, NY, USA). Preparation of the Qualtrics data involved several steps. Firstly, SONA ID numbers were replaced with random numbers. Secondly, rows showing a response type other than “IP address” (e.g., “Spam”) were deleted (Study 1 vs. Study 2: 1 vs. 0). Thirdly, rows were checked for multiple entries. In case of multiple entries (61 vs. 80), only the first entry was kept (187 vs. 192). Fourthly, entries lacking an affirmative response to the false consent question were deleted (Study 1: 35 missing and 2 individuals did not consent, Study 2: 32 missing and 1 individual did not consent). Fifthly, we checked how many remaining individuals did not respond affirmatively to the true consent question (none were found).
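The preparation steps were carried out in SPSS; the sketch below only illustrates the logic of three of them (dropping non-genuine responses, keeping the first entry per participant, and keeping affirmative consent) in Python. The field names `participant_id`, `response_type`, and `fake_consent`, the value `"yes"`, and the toy rows are all hypothetical, and the rows are assumed to be in chronological order.

```python
def prepare_rows(rows):
    """Apply three preparation steps to rows in chronological order.

    Field names are hypothetical placeholders, not the Qualtrics export names.
    """
    # Keep only rows whose response type is "IP address" (drops e.g. "Spam").
    valid = [r for r in rows if r["response_type"] == "IP address"]

    # Keep only the first entry of each participant; later entries are dropped.
    seen = set()
    first_entries = []
    for r in valid:
        if r["participant_id"] not in seen:
            seen.add(r["participant_id"])
            first_entries.append(r)

    # Keep only entries with an affirmative answer to the first consent question.
    return [r for r in first_entries if r.get("fake_consent") == "yes"]


# Toy data, invented for illustration only.
rows = [
    {"participant_id": 101, "response_type": "IP address", "fake_consent": None},
    {"participant_id": 101, "response_type": "IP address", "fake_consent": "yes"},
    {"participant_id": 102, "response_type": "IP address", "fake_consent": "yes"},
    {"participant_id": 103, "response_type": "Spam", "fake_consent": "yes"},
    {"participant_id": 104, "response_type": "IP address", "fake_consent": "no"},
]
print([r["participant_id"] for r in prepare_rows(rows)])  # [102]
```

Because duplicates are removed before the consent filter, a participant who stopped early and later restarted (101 in the toy data) is excluded even if the second entry contains consent, which matches the rule that restarted entries were not counted.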

Hypothesis 1, that participants in the interactive condition would answer more questions on our retention test correctly than participants in the control condition, was tested using an independent samples t-test. In case of a significant Levene's test we report the unequal variances option with adjusted degrees of freedom. Hypothesis 2, that the effect of condition would be moderated by question novelty (a within-subjects factor with two levels: “old” versus “new”, see Measures), was tested with a mixed-design analysis of variance with a specific focus on the interaction between condition and novelty. A significant interaction was followed by post-hoc t-tests for differences between conditions within each level of question novelty.
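The two t-test variants mentioned above (equal variances assumed versus not assumed) can be illustrated from summary statistics. This is a minimal sketch using the standard pooled-variance and Welch–Satterthwaite formulas, not a reproduction of the SPSS procedure; the function names and the example values are hypothetical, and Levene's test itself is not implemented because it requires the raw data.

```python
import math


def student_t(m1, s1, n1, m2, s2, n2):
    """Pooled-variance t statistic and degrees of freedom (equal variances)."""
    df = n1 + n2 - 2
    pooled_var = ((n1 - 1) * s1 ** 2 + (n2 - 1) * s2 ** 2) / df
    se = math.sqrt(pooled_var * (1.0 / n1 + 1.0 / n2))
    return (m1 - m2) / se, df


def welch_t(m1, s1, n1, m2, s2, n2):
    """Welch t statistic with Welch-Satterthwaite adjusted degrees of freedom."""
    v1 = s1 ** 2 / n1
    v2 = s2 ** 2 / n2
    se = math.sqrt(v1 + v2)
    df = (v1 + v2) ** 2 / (v1 ** 2 / (n1 - 1) + v2 ** 2 / (n2 - 1))
    return (m1 - m2) / se, df


# Hypothetical summary statistics (mean, SD, n per group), for illustration.
t_pooled, df_pooled = student_t(10.0, 2.0, 20, 8.0, 2.0, 20)
t_welch, df_welch = welch_t(10.0, 2.0, 20, 8.0, 3.5, 12)
print(df_pooled, df_welch < 20 + 12 - 2)
```

When group variances and sizes are equal the two variants coincide; with unequal variances the Welch degrees of freedom drop below n1 + n2 − 2, which is why some tests in the Results report non-integer degrees of freedom. Entering the rounded means and standard deviations from the Results may not reproduce the reported t-values exactly.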

Given concerns about a non-normal data distribution, we planned additional Mann-Whitney U-tests for both hypothesis 1 (one test) and hypothesis 2 (one test per level of question novelty). As their outcomes led to comparable results, these are only presented on the OSF.

We derived effect sizes (Cohen's d) from t-test outcomes using the formula $d^2/(d^2 + 4) = t^2/(t^2 + df)$ (Rosnow & Rosenthal, 1996). As SPSS does not provide t-values for post-hoc t-tests, we calculated these from means and standard errors using an online calculator (www.graphpad.com).
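Solving the effect size formula for d gives a closed form: cross-multiplying yields d²·df = 4t², so d = 2t/√df. The sketch below applies this conversion to the t-values and degrees of freedom reported for Hypothesis 1; the function name `cohens_d_from_t` is illustrative and not part of the original SPSS analysis.

```python
import math


def cohens_d_from_t(t, df):
    """Convert a t statistic to Cohen's d.

    Solves d^2 / (d^2 + 4) = t^2 / (t^2 + df) for d, giving d = 2t / sqrt(df).
    """
    if df <= 0:
        raise ValueError("df must be positive")
    return 2.0 * t / math.sqrt(df)


# Values reported for Hypothesis 1 in the Results section.
print(round(cohens_d_from_t(5.84, 110), 2))  # 1.11 (Study 1)
print(round(cohens_d_from_t(7.20, 124), 2))  # 1.29 (Study 2)
```

The same conversion works with the non-integer degrees of freedom reported for the unequal-variances tests.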

Results

On average, Study 1 participants had previously participated in 17 online and 4 in-person SONA studies (range 4–44 and 0–12, respectively); these numbers do not include 3 values that were either missing or unrealistically high, defined as reporting over 60 studies. There were 47 participants in the control condition and 65 participants in the interactive condition.

Participants in Study 2, which took place earlier in the academic year than Study 1, had previously participated in a mean of 13 online and 3 in-person SONA studies (range 4–50 and 0–12, respectively); these numbers do not include 3 unrealistically high values. There were 60 participants in the control condition and 66 participants in the interactive condition.

Hypothesis Testing on the Main Outcome Measure

Hypothesis 1: In Study 1, the difference between conditions was significant, t(110) = 5.84, p < .0001, d = 1.11. Participants in the interactive condition obtained a higher overall retention score, M = 16.2, SD = 2.1, than participants in the control condition, M = 13.7, SD = 2.3. Study 2 showed comparable results, M = 16.4, SD = 1.9 vs. M = 13.5, SD = 2.6, respectively, t(124) = 7.20, p < .0001, d = 1.29.

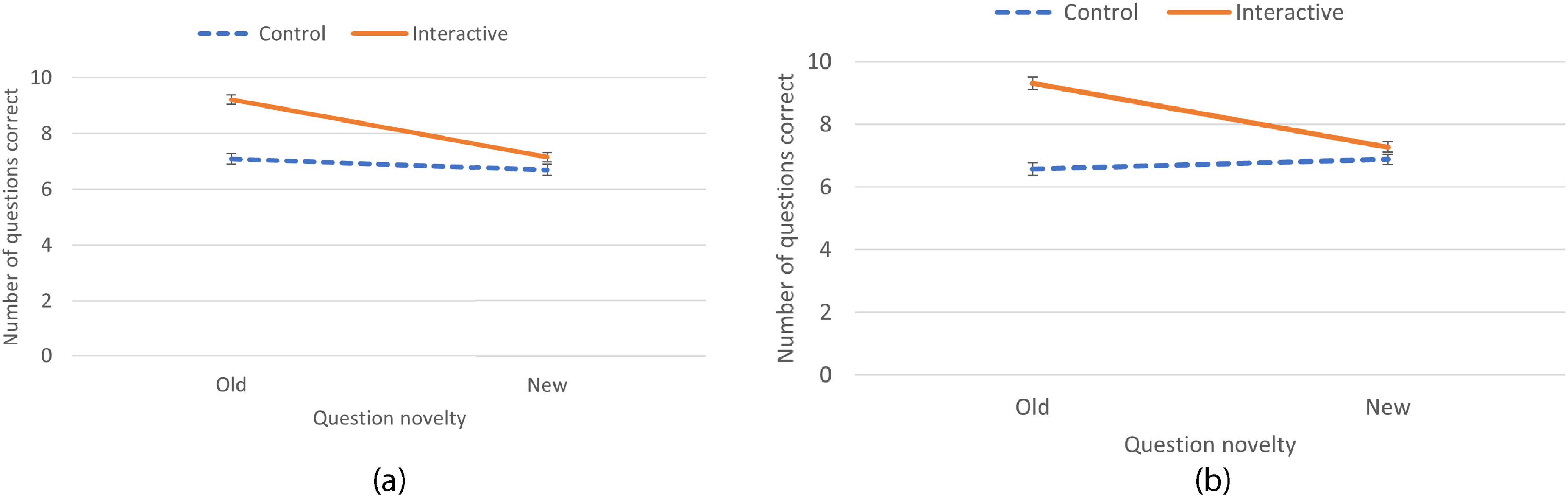

Hypothesis 2: In Study 1, the condition by novelty interaction term was significant, F(1,110) = 26.74, p < .0001, see Figure 2a. Post-hoc tests revealed a significant effect for condition with the old questions, t(56.7) = 8.00, p < .0001, d = 2.12, but not with the new questions, t(56.7) = 1.76, p = .08, d = 0.47. Again, Study 2 obtained comparable results. Figure 2b visualizes the significant interaction term, F(1,124) = 53.96, p < .0001. There was a significant effect for condition with the old questions, t(124) = 9.72, p < .0001, d = 1.75, but not with the new questions, t(124) = 1.69, p = .09, d = 0.31.

Figure 2. Mean scores on the multiple-choice retention test as a function of condition (control, interactive) and question novelty (old, new) in (a) Study 1, and (b) Study 2. Note. Error bars represent standard errors around the mean. As we erroneously included an unbalanced number of old vs. new questions in the retention test, scores were corrected such that they are comparable across the two levels of question novelty.

Analyses on Additional Pre-Debriefing Measures

Participants’ expectations about the fake study (measured using an adapted RRPQ, see Measures) did not statistically differ between the two conditions in either study, see Supplementary Table 1.

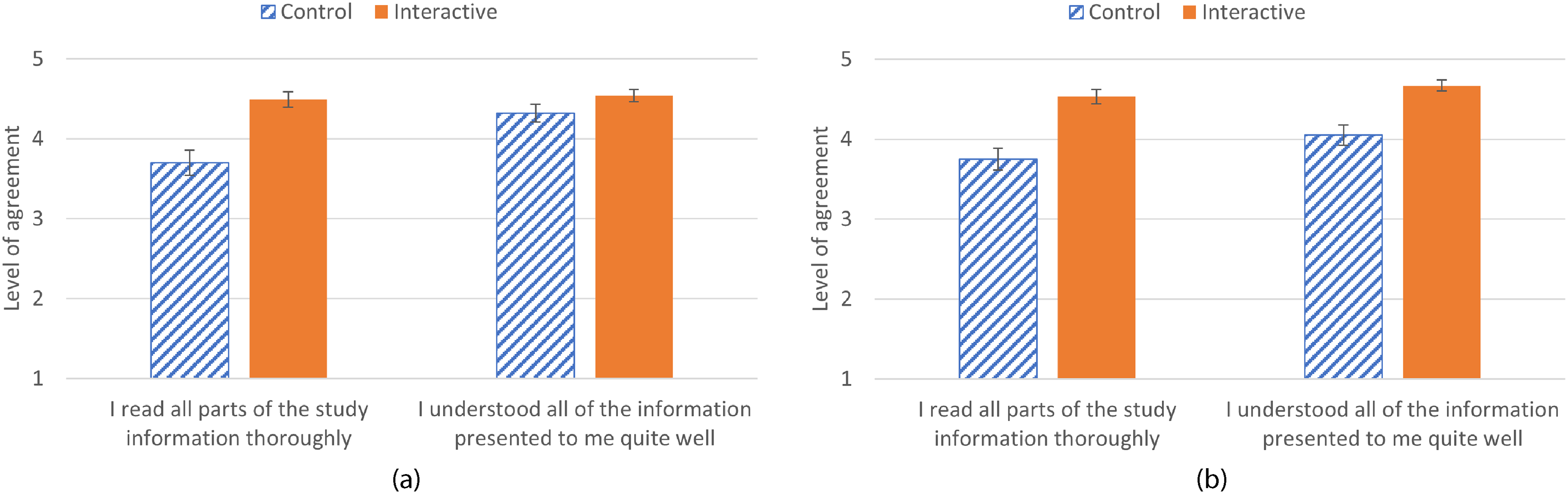

In both studies, participants in the interactive condition reported reading the study information better than participants in the control condition (Study 1: t(110) = 4.51, p < .0001, d = 0.86; Study 2: t(102.75) = 4.75, p < .0001, d = 0.94). Study 2 participants also reported having understood the information better when they were in the interactive condition rather than in the control condition, t(91.20) = 4.21, p < .0001, d = 0.88. However, Study 1 participants in both conditions did not differ significantly in their self-reported understanding of the study information, t(110) = 1.69, p = .09, d = 0.32. See Figures 3a and 3b for a visual representation of these results.

Figure 3. Mean scores on two questions on Reading and Comprehension as a function of condition (control, interactive) in (a) Study 1, and (b) Study 2. Note. Error bars represent standard errors around the mean.

Analyses on Post-Debriefing Measures

There were no significant differences between the two conditions in experiences with past SONA studies nor with past consent procedures, see Supplementary Tables 2 and 3, respectively.

Discussion

This research built on Geier et al. (2021), who found that participants who answered questions on each page of digitally presented study information (interactive condition) remembered the information better than participants who reviewed standard information (control condition). Likewise, our interactive condition showed a higher overall retention score than our control condition (Hypothesis 1). The interactive and control conditions in our research were comparable to the conditions in Geier et al. (2021).

However, when Geier et al. (2021) asked questions about the information to assess how well participants remembered it, they used the same questions that had been used in the interactive condition during the reading phase. In comparison, we asked questions that were old (i.e., previously presented during the consent procedure) to participants in the interactive condition as well as questions that were new (i.e., not previously presented) to all participants. Unlike Geier et al. (2021), we examined question novelty as a moderator of the effect of condition on the number of correct answers (Hypothesis 2). Importantly, we found that the manipulation of adding interactivity only benefited the number of correct answers to the previously presented questions. Thus, it seems the interactive procedure increased retention of study information, but only if participants had previously answered questions about it. The impact of interactive elements in an online consent procedure may therefore be more limited than Geier et al. (2021) suggested. Such elements may improve the retention of some aspects of the study information, but not of all information relevant for true informed consent.

Our research also differed from Geier et al. (2021) in that we used study information derived from a previous study employing the TFP, which carries risks that should be carefully mitigated, including by ensuring informed consent (James et al., 2016). Not retaining the information provided during a consent procedure for an online TFP study is considered particularly problematic. The ethical risks associated with TFP studies may be larger online, where TFP studies are increasingly often being conducted, than in the laboratory. In particular, although this can be avoided by means of a real-time video connection, no researcher is usually present to monitor participant distress and guarantee debriefing (Nosek et al., 2002). Moreover, the privacy risks may be more complex (Bashir et al., 2015). In light of this, it is relevant to mention that our retention test included questions about both the ethical risks and the privacy risks.

The test also included arguably less vital questions, such as those about the researchers involved, the general purpose of the research, and details on data handling. Nonetheless, providing information on the nature of the material and about participants’ right to stop at any time is particularly important for TFP studies (James et al., 2016). Thus, future online studies may benefit even more from adding interactive elements to their digitally presented study information when the questions asked during the consent procedure specifically cover these aspects.

Limitations

While Geier et al. (2021) used open-ended questions to assess how much of the study information was remembered, we used multiple-choice questions. Open-ended questions assess recall of the previously presented information; multiple-choice questions merely assess recognition. Nonetheless, as remembering can involve both recognition and recall, we believe our test assessed retention.

Yet we are less certain than Geier et al. (2021) that it assessed comprehension. This idea is supported by our finding that participants in the interactive condition provided more correct answers only to previously asked questions. Being able to retain information (i.e., having factual knowledge) is not the same as fully appreciating it (i.e., understanding the knowledge). Study 2 participants in the interactive condition reported a higher level of both reading and understanding the study information, which is reassuring. However, in Study 1, participants in the interactive condition reported a higher level of reading the information but not that they understood it better. Similarly, Geier et al. (2021) did not find higher self-reported readability of the study information in the interactive condition.

As a final note, actual online TFP studies were recruiting from the same SONA pool at the time of both Study 1 and Study 2. For Study 1 we restricted participation to students who had not taken part in the ongoing TFP study. This way their previous reading of a highly similar text would not affect their reading of the fake information. As this selection criterion was not used in Study 2, it is possible that some Study 2 participants took part in the ongoing TFP study before signing up for our study. However, it appears this did not have a major impact, as Studies 1 and 2 yielded comparable results.

Conclusion

The impact of interactive elements in an online consent procedure may be more limited than previously thought (Geier et al., 2021). Our results indicated that answering questions while reading study information increases retention of the particular information that was probed. Yet, this advantage did not seem to generalise to unprobed information.

Best Practices

Consent procedures may benefit from adding interactivity in the form of mandatory questions on study information, in particular if the questions address the most important parts of the information. Studies adding such interactive questions should make sure to cover both ethical and privacy aspects.

Research Agenda

Future research on this topic may help answer the following questions. Firstly, how to ensure that the interactivity leads to participants providing consent with full knowledge and understanding of the risks. Secondly, how to improve actual comprehension of the study information.

Educational Implications

University students participating in research in exchange for course credit often take part in multiple studies within a year. Over time they may become less motivated to read information about a study. Increased awareness of how they may miss information about the associated risks seems pertinent.

Supplemental Material

Supplemental material, sj-docx-1-jre-10.1177_15562646241280208 for How Making Consent Procedures More Interactive can Improve Informed Consent: An Experimental Study and Replication by Marije aan het Rot and Ineke Wessel in Journal of Empirical Research on Human Research Ethics

Footnotes

Acknowledgements

We are grateful for the input of A.C. McGrane and A.C. Waldeck, who assisted in Study 1 as part of their Bachelor Honours Research Internship I, and to I. Perricci and F.E. Reichwein, who assisted in Study 2 as part of their Master Thesis.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Materials and Data Availability Statement

All materials are publicly available on https://osf.io/hq6t8 (Study 1) and https://osf.io/srxvh (Study 2).

The raw datasets generated by Qualtrics during Study 1 and Study 2 are not publicly available as they were not anonymous. The fully anonymised datasets (generated in step 1 of the data preparation phase) are also not available as not all participants consented to the use of their data after debriefing. The analysed datasets (generated in step 2 of the data preparation phase) are available, see https://osf.io/gmsfu for Study 1 (N = 112) and https://osf.io/28sv3 for Study 2 (N = 126). On the OSF we also provide SPSS syntax used to (a) create the fully anonymised datasets, (b) prepare these datasets for analyses, and (c) analyse these prepared datasets. Also, we provide the SPSS output resulting from (b) and (c) combined, which includes all analysis outcomes. See https://osf.io/y5crz for Study 1 syntax and output; the Study 2 syntax and output are likewise available on the OSF (uploaded in PDF format).

Supplemental Material

Supplemental material for this article is available online.
