Abstract

As digital technologies increasingly permeate everyday life, concerns about privacy, surveillance, and algorithmic control have become central to contemporary society. Fictional narratives, particularly in science fiction games, reflect and simulate these concerns, depicting privacy as both a vulnerable right and a commodified asset. This paper examines how video games incorporate surveillance not only through narrative and aesthetics but also through artificial intelligence (AI)-driven systems that track, model, and adapt to player behavior as part of the act of play. Drawing on Foucault’s disciplinary power, Deleuze’s control societies, and Zuboff’s surveillance capitalism, we analyze how games such as Little Nightmares, The Sims, and Cobra Club stage surveillance as atmospheric discipline, everyday normalization, and networked consent. We extend Bogost’s notion of procedural rhetoric with Galloway’s concept of the allegorithm to show how games make ethical arguments through code as well as story. We argue that such games function as laboratories for AI ethics, foregrounding issues of consent, transparency, and autonomy, and propose a procedural ethics of transparency for understanding how play both rehearses and resists algorithmic governance.

Introduction

In an era where digital technologies shape everyday interactions, concerns about privacy and surveillance have become urgent ethical questions, deeply embedded in infrastructural and cultural domains. From predictive artificial intelligence (AI) algorithms to biometric profiling, the boundary between private agency and algorithmic governance is increasingly porous. Fictional narratives across literature, film, and video games have long served as speculative spaces for exploring the ethical tensions of technology, using both plausible and hypothetical consequences as central narrative devices. While science fiction provides particularly vivid metaphors for surveillance and control, similar dynamics also emerge in other genres, from everyday simulation and horror to social play, where algorithmic visibility is staged in more mundane or intimate forms. These narratives reflect anxieties surrounding surveillance and autonomy, projecting them into dystopian futures and offering cautionary insights into trajectories rooted in current political and cultural landscapes.

Novels and short stories like 1984 (Orwell, 1949) (state surveillance and totalitarian control) and Minority Report (Dick, 2002; Spielberg, 2002) (predictive policing and pre-crime), films like 2001: A Space Odyssey (Clarke, 1968; Kubrick, 1968) (technological autonomy and algorithmic governance) and The Dark Knight (Nolan, 2008) (mass surveillance as moral dilemma), and video games like Cyberpunk 2077 (CD Projekt Red, 2020) (corporate-dominated control society), Watch Dogs (Ubisoft, 2014) (explicitly themed around digital surveillance), and Deus Ex (Ion Storm, 2000) (augmentation and surveillance infrastructures), depict worlds in which omnipresent surveillance enables the commodification of privacy and its exploitation for various purposes (Suknot et al., 2014; Willmetts, 2018).

Unlike literature or cinema, video games can add an explicitly interactive dimension to these concerns. In video games, the “player” does not passively observe surveillance; they experience it through participation and, in some cases, actively contribute to it. More specifically, non-player characters (NPCs) in such games “watch” and react to the player’s behavior, simulating the logic of predictive systems and causality. This dynamic exemplifies what Bogost calls procedural rhetoric: Games make arguments through the design and enforcement of rules and systems, not only through narrative settings and stories (Bogost, 2007). We also draw on Galloway’s notion of the allegorithm (Galloway, 2006), in which allegory and algorithm converge, to emphasize that surveillance in games is simultaneously representational and procedural. Such interactions transform gameplay into a mechanism of ethical engagement, where players navigate systems that anticipate and respond to their actions, often without complete transparency or foreknowledge. This structure aligns closely with Der Derian’s cultural logic of simulation and “preemptive seeing” in a world governed by speed, control, and predictive modeling (Der Derian, 1990).

Hayles’s account of the posthuman subject (distributed, datafied, and fragmented) offers a conceptual anchor for understanding this shift (Hayles, 1999). Within a game’s virtual world, players often inhabit hybrid identities shaped by narrative choices, algorithmic modeling, and in-game data capture that tracks and responds to their actions. This tension between distributed subjectivity and the player’s felt sense of autonomy highlights the paradox of posthuman play: Games simultaneously fragment identity and invite experiences of agency. As technologies and capabilities advance, games increasingly simulate “intelligent” NPCs that track, record, and adjust to the player’s behavior, blurring the lines between simulation, subjectivity, and system. Players often tolerate or even embrace such monitoring when it yields personalization or convenience, a dynamic echoed across digital platforms where the comforts of prediction obscure the costs of surveillance.

At the same time, the broader gaming industry reproduces the very concerns its narratives critique. As Zuboff’s account of surveillance capitalism explains, once collected, behavioral data becomes an asset to be extracted, predicted, and sold (Zuboff, 2019, p. 8). Game companies collect increasingly vast quantities of engagement and behavioral data to build player profiles, often under opaque terms of service, and use the resulting behavioral models to develop monetization strategies (González López, 2020). Two uses of player data thus coexist: Within the game, real-time data monitors and manages behavior to produce an interactive narrative; outside it, developers exploit the same data in ways that exceed privacy norms. This duality reveals deeper asymmetries of power, knowledge, and consent. Thus, even as games depict surveillance and the loss of autonomy as dystopian cautionary tales, they often and increasingly replicate these structures in the real world, a parallel that players are unlikely to notice.

This paper presents three contributions. First, we examine how games stage surveillance through both allegorical narratives and algorithmic systems, linking procedural rhetoric to allegorithmic critique. Second, we ground this argument in focused case studies, including Little Nightmares (atmospheric disciplinary surveillance), The Sims (systemic normalization), and Cobra Club (intimate consent and data leakage). Third, we situate these dynamics within surveillance studies, from Foucault’s Discipline and Punish (Foucault, 1977) to Deleuze’s “Postscript on the Societies of Control” (Deleuze, 1992), and connect them to Zuboff’s The Age of Surveillance Capitalism (Zuboff, 2019). In doing so, we show that games do not merely reflect ethical dilemmas; they enact them, habituating players to trade-offs between data and immersion while opening space for critical reflection. We focus in particular on what we term the “procedural ethics of transparency”: The ways games make visible (or conceal) the algorithmic processes that govern player experience, and how these mirror broader concerns about opaque data systems in real-world AI and surveillance infrastructures.

Fictional Surveillance Worlds

Although widely recognized as a fundamental right, privacy is a contested and contingent value shaped by technological, legal, and cultural systems. In the age of surveillance capitalism, privacy is increasingly reframed not as a right but as a resource to be extracted and monetized (Zuboff, 2019). Fictional narratives, particularly in science fiction literature and games, reflect and amplify this shift, offering symbolic critiques of surveillance regimes that normalize the collection and control of data. In media such as Black Mirror (Brooker, 2011), The Matrix (Wachowski & Wachowski, 1999), and Super Sad True Love Story (Shteyngart, 2010), privacy is portrayed as a fragile, nearly obsolete construct, undermined by intrusive technologies and sociopolitical manipulation (Willmetts, 2018). These narratives invite audiences to reflect on the erosion of autonomy under surveillance and to consider how such systems disproportionately impact marginalized groups. Works such as Zamyatin’s We and von Donnersmarck’s The Lives of Others serve as enduring critiques of totalitarian surveillance, reminding us that the gaze of power is rarely neutral (von Donnersmarck, 2006; Zamyatin, 1924).

Video games extend this critique into an interactive and procedural form. Games transform abstract ethical concerns into experiential challenges by allowing players to inhabit roles within surveillance systems. In titles like Deus Ex (Ion Storm, 2000), Watch Dogs (Ubisoft, 2014), and Immaculacy: A game of privacy (Suknot et al., 2014) (a critique of data transparency), players encounter environments where privacy is compromised or actively weaponized (Solberg, 2022; Whitson & Simon, 2014). These game worlds embed surveillance into core mechanics, prompting players to negotiate trade-offs between visibility, power, and freedom. Crucially, these interactions are not merely narrative devices, but systemic in nature.

Drawing on the concept of procedural rhetoric, we can understand such games as arguments enacted through play (Bogost, 2007). As Galloway’s allegorithm suggests (Galloway, 2006), these systems fuse allegorical critique with algorithmic enforcement, dramatizing how code itself functions as surveillance. For example, in Watch Dogs: Legion (Ubisoft, 2020), players manipulate an open surveillance system, taking on the roles of both the watcher and the watched. This dynamic aligns with Der Derian’s account of “simulated surveillance,” in which players participate in systems that mirror the logic of control operating in contemporary geopolitics (Der Derian, 1990, pp. 29–30).

Moreover, these game mechanics increasingly blur the line between fictional play and real-world datafication, where algorithmic identity is not a static profile but a constantly inferred and adjusted model based on data traces (Cheney-Lippold, 2017). Many games now track player decisions, behaviors, and preferences, creating internal surveillance systems that shape how narratives unfold, often without the player’s full awareness. This surveillance is evident in morality systems, reputation scores, and behavioral branching: Gameplay mechanisms that mirror real-world scoring systems used in policing and marketing. Whitson’s work on gamification clarifies that such systems do not just “reward” players but normalize monitoring as pleasurable surveillance (Whitson, 2013, p. 172; 2015, p. 539). In the real world, comparable scoring and social credit systems disproportionately impact vulnerable populations, contributing to a “digital poorhouse” (Eubanks, 2018; Noble, 2018). Ironically, the industry that develops these games mirrors the systems its games are seen to critique. Extensive data collection enables developers and their so-called “surveillance partners” to track and profile players for monetization and behavioral prediction in the name of efficient advertising (Kröger et al., 2023).

This data collection creates an ironic tension: Games that present surveillance and the suppression of autonomy as dystopian mechanics often reproduce them as a business model. While some games aspire to raise awareness of these issues, others exploit their aesthetics for commercial gain. Themes of privacy and AI are increasingly deployed as branding strategies rather than ethical commitments (González López, 2020; O’Donnell, 2014). The commodification of critique within gaming culture reflects the normalization of surveillance logic, even in ostensibly oppositional media. Yet, games remain potent tools for engaging with the ethics of surveillance. Through interactivity, they allow players to test boundaries, resist systems, or participate in their reproduction. This dual potential for critique and complicity is what makes gaming a unique site of cultural negotiation. Posthuman subjectivity emerges not in opposition to technology but through entanglement with it (Hayles, 1999). Švelch’s notion of “contained monstrosity” helps explain this ambivalence: Games render surveillance sublime at first but quickly codify it into manageable, rule-bound systems (Švelch, 2023, p. 14). Games, then, do not simply depict posthuman surveillance; they instantiate it, making players complicit in the systems they explore while simultaneously subjecting them to those systems.

The convergence of fictional and real digital worlds thus invites us to consider how speculative environments shape public attitudes toward surveillance, privacy, and agency. Whether on the page, on the screen, or through a hand-held controller, design fiction and science fiction offer critical blueprints for imagining alternatives to dominant surveillance paradigms (Wong et al., 2017). These insights, however, are not confined to narrative alone; they are embedded in the interactive logic of game systems, raising urgent questions about the ethics of AI and player behavior modeling.

To illustrate this convergence, our analysis does not draw solely on prominent “AAA” titles such as Deus Ex, Cyberpunk 2077, and Watch Dogs. Instead, we contrast these with more intimate or experimental works such as Little Nightmares, The Sims, and Cobra Club. Together, these examples demonstrate how games embody both Foucault’s disciplinary surveillance (Foucault, 1977) and Deleuze’s control societies (Deleuze, 1992), situating players in systems that are at once allegorical, algorithmic, and complicit (Galloway, 2006).

Case Studies

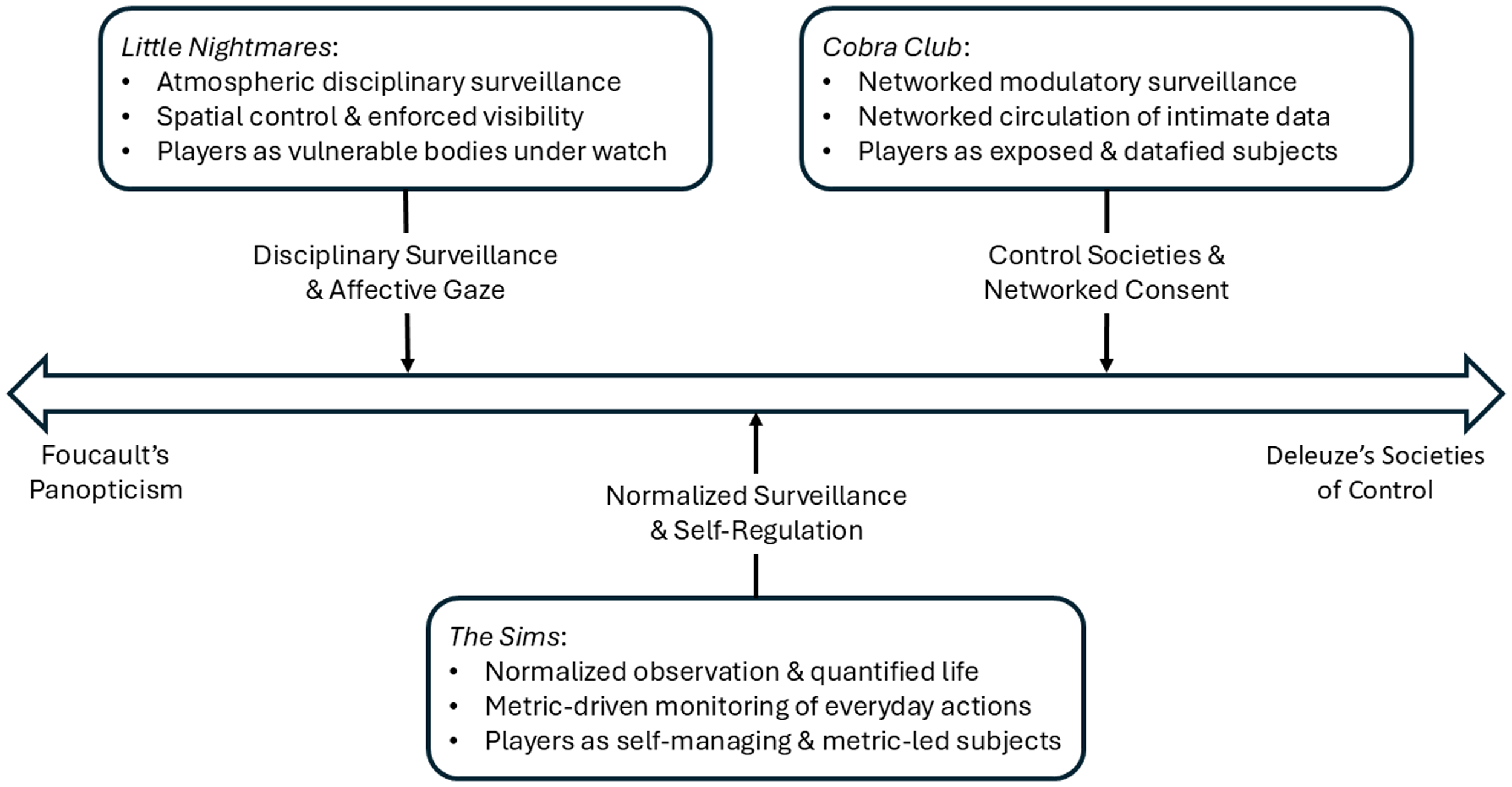

Building on the theoretical distinctions outlined previously, this section examines the three contrasting case studies introduced above, Little Nightmares, The Sims, and Cobra Club, each of which embodies a distinct configuration of power and materializes a different modality of surveillance. The sequence follows a theoretical trajectory (see Figure 1) from Foucault’s enclosed, panoptic architectures of discipline (Foucault, 1977) to the distributed and affective logics of Deleuzian control societies (Deleuze, 1992). In tracing this movement, we reveal how games translate theories of surveillance into embodied, procedural, and affective experiences.

Continuum of surveillance across case studies. Points under each case study denote the surveillance logic, the core mechanism through which it operates, and the resulting player position.

Little Nightmares: Disciplinary Surveillance and the Affective Gaze

Little Nightmares (Tarsier Studios, 2017) places players in a world saturated by fear, control, and constant observation, see Figure 2. The player embodies Six, a small, childlike figure navigating ‘The Maw’—a surreal vessel teeming with grotesque adults and insatiable appetites. As both setting and system, The Maw is a metaphor for surveillance capitalism: It is not merely a backdrop but a living apparatus of consumption, discipline, and control. Although it does not feature explicit digital surveillance infrastructures like the unsubtle Watch Dogs series, it is precisely this atmospheric and embodied quality that makes Little Nightmares significant: It stages surveillance as a disciplinary regime in Foucault’s sense of panopticism, where visibility itself is a mechanism of control (Foucault, 1977, pp. 200–202).

Annotated screenshot highlighting Little Nightmares’ panoptic surveillance system, where visibility determines safety, movement, and bodily consequence.

Visually and mechanically, the game transforms abstract critiques of AI surveillance and data extraction into tangible, affective experiences. Claustrophobic level design, ambient soundscapes, and perpetual risk of detection create a mood of anxious hyper-vigilance. The recurring motif of the eye—emblazoned on doors, fixtures, and mechanized sentinels—serves as both a mechanical threat and a symbolic index of panoptic power. These eyes exemplify the normalizing gaze: They do not simply watch, but sort the player’s actions into categories of visible/unseen, compliant/resistant. These eyes function similarly to technologies like the Pentagon’s “Gorgon Stare,” a real-world airborne surveillance platform capable of tracking hundreds of targets simultaneously (Weinberger, 2019). Just as Gorgon Stare erases anonymity in urban landscapes, the game’s ocular devices erase agency, turning players into objects of constant scrutiny.

The game’s stealth mechanics demand a particular kind of ethical engagement. Players are not given tools of resistance but must instead evade, adapt, and submit to the rhythms of surveillance. This mechanism echoes claims that ethical gameplay often emerges not through explicit moral choices but through the systems that constrain player action (Sicart, 2011). Combat is impossible; victory lies instead in remaining unseen. The mechanics thus force players into procedural enactments of powerlessness, conveying what it feels like to live under systemic observation. Surveillance in Little Nightmares is visual, bodily, and spatial. The grotesque, oversized inhabitants of The Maw (The Janitor, The Twin Chefs, The Guests, and The Lady) literalize the asymmetry of power. Their sheer scale reinforces the impossibility of confrontation. These characters act as algorithmic enforcers, patrolling, reacting, and pursuing without reflection. Their hunger and mindless routines reflect the logic of extraction under surveillance capitalism, where systems consume data without regard for subjectivity or consent (Zuboff, 2019). In Foucauldian terms, these NPCs embody the disciplinary function of the guard: They routinize surveillance into constant, predictable enforcement, producing docile subjects through fear and habituation.

If the grotesque figures of The Maw embody the extractive gaze of surveillance capitalism, then stealth becomes the player’s only viable form of resistance: An embodied strategy of evasion that reflects contemporary anxieties surrounding being captured, tracked, and predicted within algorithmic systems. Little Nightmares stages this condition: The player must avoid becoming legible to systems that do not care who they are, only that they are detectable. In this sense, stealth functions as an adaptive mode of survival in asymmetric power dynamics, requiring constant alertness and self-modification. Though it may appear passive, each act of hiding enables active, forward movement through hostile environments (Pape, 2024). These moments of invisibility constitute tactical intrusions, wherein the player must dismantle themselves as a readable subject, in line with Pape’s notion of stealth as the selective shaping of one’s information signature. In this way, stealth in Little Nightmares operates as a form of counter-surveillance, enabling the player to navigate the oppressive structures without fully submitting to their gaze. This is precisely the disciplinary mode that Deleuze distinguishes from control: Rather than continuous modulation across networks (Deleuze, 1992), Little Nightmares exemplifies the older disciplinary enclosure, training players through repetition and constraint.
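To make the logic of legibility concrete, the kind of detection check common in stealth games can be sketched as follows. This is a hypothetical illustration, not Tarsier Studios’ implementation: the `Observer` class, the view-cone width, the sight range, and the rule that darkness shortens sight distance are all assumptions. The point is that the player is detected only when every condition lines up, so each variable the player can influence (position, light, cover) is a lever for shaping their information signature.

```python
import math
from dataclasses import dataclass


@dataclass
class Observer:
    """A hypothetical watcher (an eye, a guard) with a cone of vision."""
    x: float
    y: float
    facing_deg: float        # direction the watcher is looking
    fov_deg: float = 90.0    # assumed width of the view cone
    range_: float = 10.0     # assumed maximum sight distance in full light


def is_legible(obs: Observer, px: float, py: float, light: float, hidden: bool) -> bool:
    """Return True if the player at (px, py) becomes readable to the observer.

    `light` is an assumed 0..1 illumination level at the player's position.
    """
    if hidden:  # cover removes the player from view entirely
        return False
    dx, dy = px - obs.x, py - obs.y
    dist = math.hypot(dx, dy)
    if dist > obs.range_:
        return False
    bearing = math.degrees(math.atan2(dy, dx))
    # Smallest signed angle between facing and bearing, in [-180, 180)
    diff = (bearing - obs.facing_deg + 180) % 360 - 180
    if abs(diff) > obs.fov_deg / 2:
        return False
    # Hypothetical rule: darkness shortens the effective sight distance.
    return dist <= obs.range_ * light
```

For example, an eye at the origin facing east sees a fully lit player five units ahead, but not the same player standing in shadow (`light=0.3`) or crouched behind cover (`hidden=True`).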

An escalating negotiation between submission and resistance shapes Six’s journey. Her acts—evading capture, exploiting the cages of other imprisoned children, choosing which meat to consume—illustrate how survival under surveillance often requires morally ambivalent behavior. These choices reveal the affective dimensions of surveillance: Fear, isolation, and moral compromise. The player’s complicity becomes part of the ethical terrain. This complicity aligns with notions of the posthuman subject: One constituted through distributed systems of perception, control, and response (Hayles, 1999, pp. 288–291). The final confrontation with The Lady—a spectral figure who hides her face and shuns mirrors—encapsulates the game’s ethical critique. The Lady cannot bear her reflection, a metaphor for systems of surveillance that resist accountability. Her downfall comes when Six uses a mirror as a weapon, symbolically turning the gaze back on the watcher. This moment resonates with broader calls for transparency and reflexivity in surveillance systems. When surveillance cannot reflect on itself—when it remains opaque and unaccountable—it collapses under its own contradictions.

Through its design, Little Nightmares stages a procedural allegory of life under constant digital monitoring. The game enacts what is described as “instrumentarian power”—a system that observes and modifies behavior at scale (Zuboff, 2019, pp. 353–373). Yet, it also reveals the emotional toll such systems take on those subjected to them. By placing the player in a world where every move is judged and punished, the game offers more than a dystopian narrative: It becomes a speculative ethics simulator, prompting reflection on autonomy, consent, and the psychological weight of living under the gaze. In this way, Little Nightmares offers a compelling story and an effective model of posthuman surveillance life. It also justifies its place alongside more obvious examples like Watch Dogs or Cobra Club, since it demonstrates how surveillance is not only informational but atmospheric and embodied, training players through visibility, fear, and repetition in a disciplinary mode. It exemplifies how games can embody ethical critique through procedural systems and affective experience, extending the discourse on privacy, control, and digital subjectivity beyond narrative into the play itself.

The Sims: Normalized Surveillance and Self-Regulation

The Sims (Maxis, 2000) has often been read as a sandbox of domestic fantasy, see Figure 3. Yet, it also exemplifies what Foucault terms disciplinary power, as outlined earlier: A regime of normalization that trains subjects. Unlike games that dramatize surveillance through explicit infrastructures of cameras or data leaks, The Sims reveals the banality of surveillance: Everyday life governed by invisible metrics of satisfaction, hygiene, productivity, and consumption. The player is compelled to act not by narrative demands but by system indicators, which constantly measure and display bodily needs, job performance, and social success. These meters operate as what Foucault describes as the examination: A perpetual feedback system that both observes and qualifies the subject (Foucault, 1977).

Annotated screenshot of The Sims showing how player routines are steered by quantified needs and objects that script behavior within the gaming environment.

As Whitson argues, all video games necessarily surveil their players because interfaces must track inputs and states to function (Whitson, 2013, pp. 163–165; 2015, p. 18). The Sims makes this surveillance visible through its meters and icons, normalizing monitoring as pleasurable play. In this sense, it prefigures contemporary gamification systems, where achievements, dashboards, and progress bars govern behavior by reframing surveillance as entertainment. The ethics of The Sims therefore lie not in discrete moral choices but in the systemic valorization of particular lifestyles. Players succeed not by exercising autonomy in an open field but by aligning with the model of a “good life” encoded in the system: One of consumerist aspiration, heteronormative family structures, and labor discipline. The simulation’s surveillance thus operates as a form of cultural pedagogy, teaching what it means to thrive within its boundaries. Critics have noted that the apparent openness of The Sims belies this underlying system logic: Complete freedom is impossible, since behavior is always evaluated within the metrics of the game (Jayemanne, 2017, pp. 17–19, 31–33). Jayemanne’s distinction between felicitous and infelicitous play helps make sense of this: Acts are judged not by player intent but by whether they fit the game’s framing devices. Here, play is felicitous when players comply with system demands and infelicitous when they resist, producing breakdowns or failures.
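The examination-like feedback loop described here can be sketched as a small simulation. This is a hypothetical model, not Maxis’s code: the four needs, their decay rates, the object effects, and the threshold of 50 that separates “felicitous” from “infelicitous” play are illustrative assumptions. What the sketch shows is that the system never asks about intent; it only measures whether routines keep the meters within bounds.

```python
from dataclasses import dataclass, field

# Hypothetical per-tick decay rates; not values taken from The Sims.
DECAY = {"hunger": 4, "hygiene": 3, "energy": 2, "social": 3}

# Hypothetical objects and the needs they restore: objects "script" behavior.
OBJECT_EFFECTS = {
    "fridge": {"hunger": 40},
    "shower": {"hygiene": 50},
    "bed": {"energy": 60},
    "phone": {"social": 30},
}
NEED_TO_OBJECT = {need: obj for obj, eff in OBJECT_EFFECTS.items() for need in eff}


@dataclass
class Sim:
    needs: dict = field(default_factory=lambda: {n: 100 for n in DECAY})

    def tick(self) -> None:
        """Each tick every need decays: the system is always measuring."""
        for need, rate in DECAY.items():
            self.needs[need] = max(0, self.needs[need] - rate)

    def use(self, obj: str) -> None:
        """Using an object restores the needs it is scripted to satisfy (capped at 100)."""
        for need, gain in OBJECT_EFFECTS.get(obj, {}).items():
            self.needs[need] = min(100, self.needs[need] + gain)

    def mood(self) -> float:
        """One summary score that qualifies the subject, like Foucault's examination."""
        return sum(self.needs.values()) / len(self.needs)


def attend_lowest_need(sim: Sim) -> None:
    """A compliant routine: always service whichever meter is lowest."""
    lowest = min(sim.needs, key=sim.needs.get)
    sim.use(NEED_TO_OBJECT[lowest])


def judge(sim: Sim, threshold: float = 50.0) -> str:
    """Play is judged by the metrics alone, never by the player's intent."""
    return "felicitous" if sim.mood() >= threshold else "infelicitous"


compliant, resistant = Sim(), Sim()
for _ in range(20):
    compliant.tick()
    resistant.tick()
    attend_lowest_need(compliant)  # the resistant player ignores the meters

print(judge(compliant), judge(resistant), resistant.mood())  # felicitous infelicitous 40.0
```

A player who always tends to the lowest meter remains “felicitous”, while one who ignores the meters for 20 ticks ends with a mood of 40.0 and is judged “infelicitous”, regardless of what they were trying to do.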

By framing everyday life as a surveilled system of routines, The Sims demonstrates how normalization functions as a subtle but powerful form of surveillance. It belongs alongside games like Little Nightmares, Watch Dogs, and Cobra Club because it foregrounds the mundane metrics and feedback loops through which surveillance becomes naturalized. In doing so, it highlights how games act as laboratories not only for spectacular dystopias but also for the everyday mechanics of control.

Cobra Club: Control Societies and Networked Consent

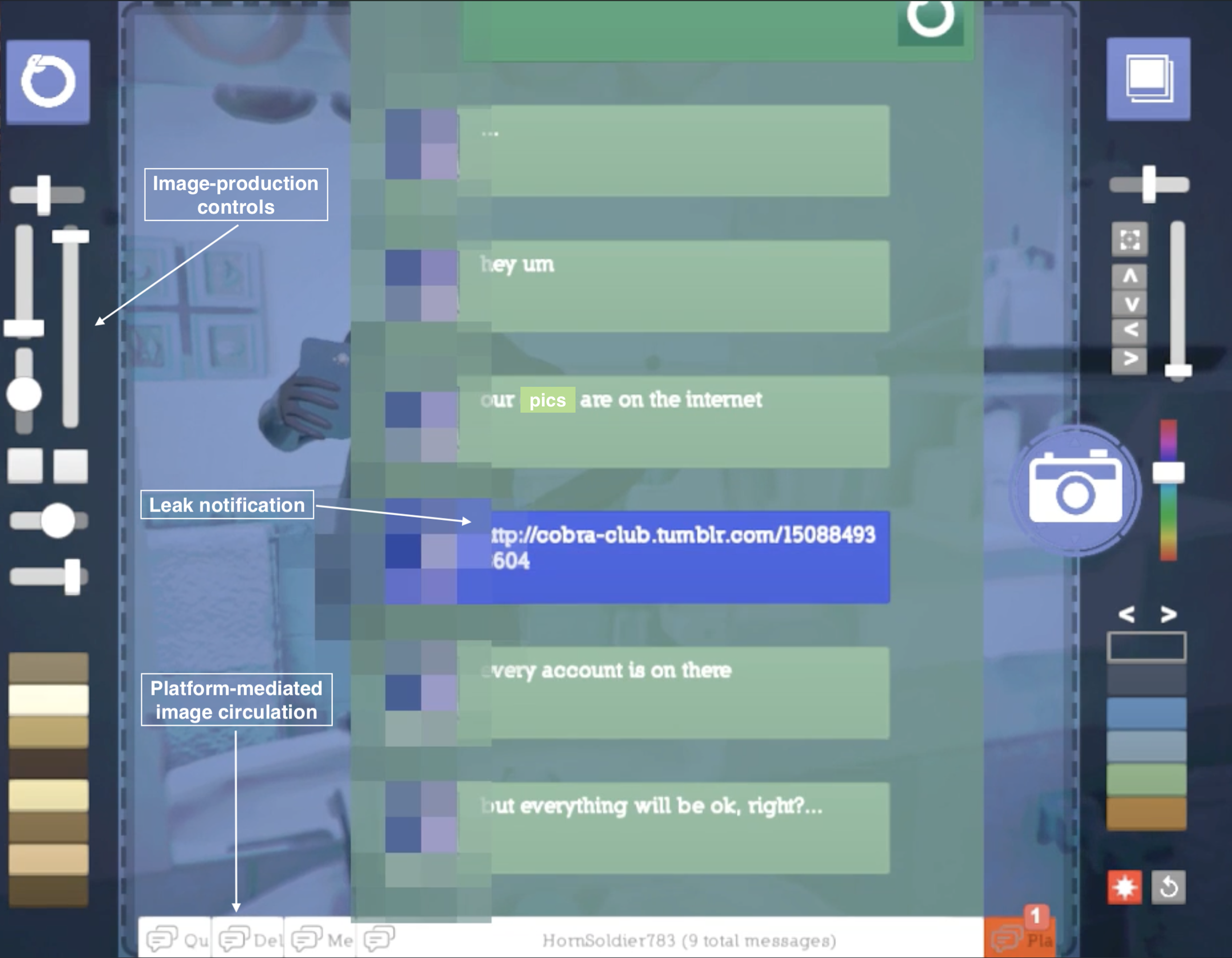

Cobra Club (Yang, 2015) is a short, experimental game that stages surveillance not through ambient atmospheres or normalized systems, but through the intimate act of sharing nude selfies, see Figure 4. The player customizes and photographs a digital penis, choosing angles, lighting, and filters before sending images to a fictional dating app. At first playful, the experience soon ruptures: The player’s images are leaked and uploaded to a government database, dramatizing the fragility of consent and the porousness of networked infrastructures.

Annotated screenshot of Cobra Club revealing the interface through which players produce and share images, drawing attention to platform-level circulation, leak notifications, and the precariousness of digital self-exposure.

Gallagher positions Cobra Club as a deliberately bawdy but explicitly political game, foregrounding what he calls “networked privates” rather than hidden depths of subjectivity (Gallagher, 2017). Unlike psychological games that treat the player as a subject to be analyzed (e.g., Silent Hill: Shattered Memories, with its psychometric profiling via the CANOE model), Cobra Club treats the subject as a “black box”: Inputs and outputs circulate across networks, while the inner self matters less than the flows of visibility, context, and leakage. This emphasis highlights how identity online is relational and infrastructural, not only individual. The game’s twist enacts what Boyd calls context collapse: Media created with one audience in mind is suddenly exposed to unintended ones (Boyd, 2010). Gallagher argues that this collapse is central to Cobra Club (Gallagher, 2017), as the sudden leakage underscores the opacity of the infrastructures through which intimate data circulates. The game, therefore, does not merely depict surveillance but implements it procedurally: The player’s choices to share or not share are always already embedded in architectures that exceed their control. This raises broader questions about consent in digital systems. As Whitson’s work on gamification shows, surveillance often becomes palatable because it is framed as play, self-tracking, or voluntary sharing (Whitson, 2013, 2015). Cobra Club pushes this logic to an extreme: The pleasurable act of playful exhibition is converted into infrastructural exploitation, revealing how quickly voluntary sharing becomes involuntary exposure.

From Discipline to Control: The Evolution of Game Surveillance

Taken together, these case studies trace a continuum of surveillance in games, moving from the disciplinary enclosures of Foucault’s panopticism to the distributed modulation of Deleuze’s control societies—what begins as the fear of being seen becomes the algorithmic logic of being known. In sum, when placed alongside games like The Sims and Little Nightmares, Cobra Club expands the scope of surveillance in game studies. Where Little Nightmares exemplifies disciplinary surveillance through atmospheres of fear, and The Sims demonstrates normalization through metrics and routines, Cobra Club dramatizes the logics of control societies (Deleuze, 1992), where data circulates across networks, audiences collapse, and consent is always precarious. By implicating players in this collapse, the game exemplifies how surveillance capitalism thrives not only on coercion but also on the seductions of play, intimacy, and self-exposure.

Together, these case studies demonstrate the progression outlined in Section “Fictional Surveillance Worlds”, from disciplinary surveillance to control societies. They show how games materialize surveillance through distinct modalities: Atmospheric discipline in Little Nightmares, normalized self-monitoring in The Sims, and networked consent and exposure in Cobra Club. The following section extends this analysis by considering how adaptive and AI-driven systems operationalize these logics procedurally.

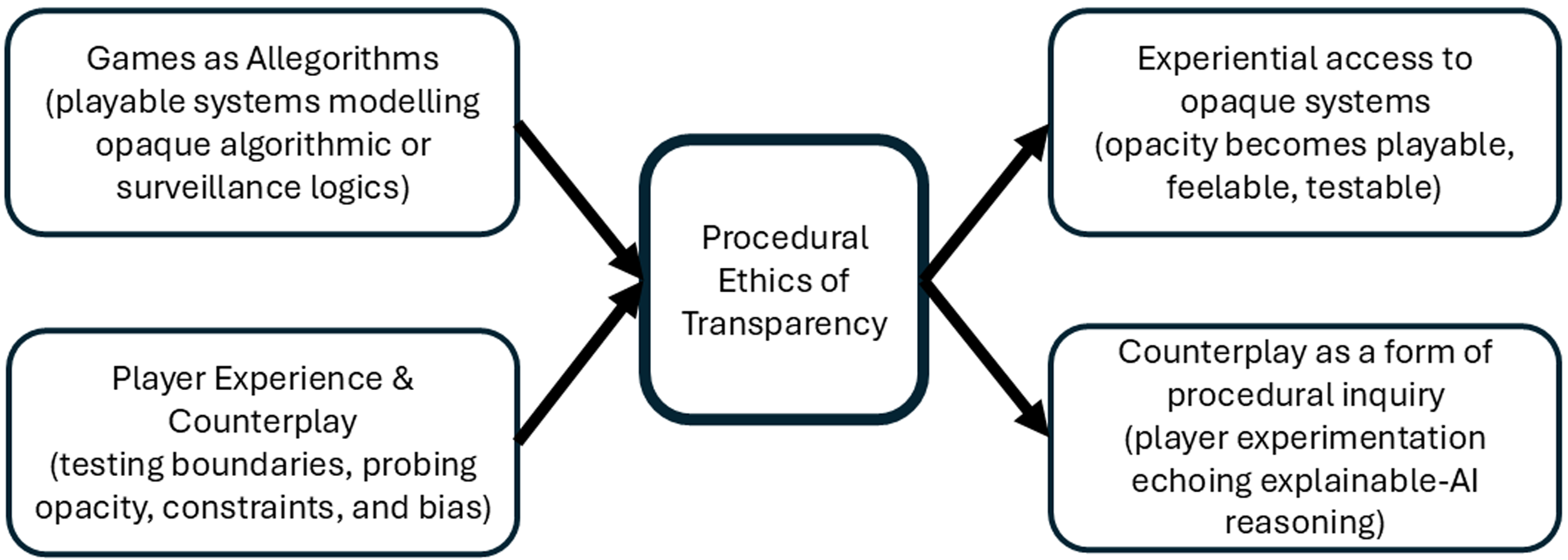

This leads us to what we term the “procedural ethics of transparency”—the way game systems enact (or withhold) knowledge about how decisions are made, how players are categorized, and what forms of agency are permitted. In some cases, games offer only subtle cues or symbolic feedback, creating an affective atmosphere of uncertainty or helplessness. In others, the rules of interaction are discoverable, but only retroactively: After the player has already acted within a rigged system. These dynamics do not simply reproduce narrative tropes of control; they simulate the conditions of informational asymmetry that characterize real-world AI systems. In doing so, such games do not just tell stories about surveillance—they make players feel it, procedurally.
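The difference between a system that withholds its reasoning and one that discloses it can be sketched in a few lines. Under stated assumptions (the features `deaths_last_hour` and `purchases_last_week`, their thresholds, and the outcomes are hypothetical and not drawn from any particular game), the same rule-based adaptation runs in an opaque mode that reports only its outcome and in a transparent mode that also reports the data and rule that triggered it. The decision is identical in both modes; only the player’s knowledge of it changes.

```python
from typing import NamedTuple


class Decision(NamedTuple):
    outcome: str
    reasons: tuple  # empty when the system withholds its reasoning


# Hypothetical rules: (observed feature, threshold, outcome). First match wins.
RULES = [
    ("deaths_last_hour", 5, "lower_difficulty"),
    ("purchases_last_week", 3, "show_premium_offer"),
]


def adapt(observed: dict, transparent: bool) -> Decision:
    """Categorize the player from observed behavior and choose an adaptation.

    In opaque mode only the outcome is returned; in transparent mode the
    feature value and rule that produced the outcome are disclosed as well.
    """
    for feature, threshold, outcome in RULES:
        value = observed.get(feature, 0)
        if value >= threshold:
            reason = (f"{feature}={value} >= {threshold}",)
            return Decision(outcome, reason if transparent else ())
    return Decision("no_change", ("no rule matched",) if transparent else ())


player_log = {"deaths_last_hour": 6, "purchases_last_week": 4}
print(adapt(player_log, transparent=False))
# Decision(outcome='lower_difficulty', reasons=())
print(adapt(player_log, transparent=True))
# Decision(outcome='lower_difficulty', reasons=('deaths_last_hour=6 >= 5',))
```

In opaque mode the player cannot learn which behavior was measured or which category they were placed in, which is the informational asymmetry described above.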

From Simulation to System

Having examined how surveillance manifests across narrative and affective registers in the selected case studies, we now turn to games that do not merely represent surveillance but algorithmically enact it. In these systems, the logic of observation and adaptation operates procedurally: Every player input becomes data, every response a training signal. This shift from representation to computation marks the point where the ethics of surveillance become embedded in the architecture of play itself.

So far, we have explored how fictional and interactive narratives represent surveillance as an oppressive force: Visualized through dystopian aesthetics, embedded in allegorical storytelling, and embodied by characters who must navigate omnipresent control. Games like Little Nightmares, Watch Dogs (Ubisoft, 2014), and Deus Ex (Ion Storm, 2000) dramatize the ethical consequences of life under surveillance, inviting players to inhabit roles shaped by systems of observation and control. These games reflect contemporary anxieties about, and exploitations of, privacy, autonomy, and algorithmic governance, but they also foreshadow a shift in how such systems are implemented in gameplay itself. The next stage in our analysis turns away from symbolic critique and toward procedural systems: The architectures of game design that model behavior, learn from interaction, and adapt responsively to players. If earlier sections asked what surveillance looks like in games, we now ask how games function as surveillance systems in their own right. This move parallels the theoretical shift traced earlier, from Foucault’s enclosed disciplinary apparatuses to Deleuze’s open, networked control societies, in which modulation replaces enclosure and feedback replaces command (Deleuze, 1992; Foucault, 1977).

As NPC behavior, dialogue, memory, and environmental response are increasingly governed by algorithms, and as developers appear poised to adopt AI techniques that mirror realistic conversation and action, the line between simulation and system begins to dissolve. What emerges is not only a model of surveillance but a playable interface for interacting with algorithmic reasoning. The NPC becomes an agent of predictive modeling, shaping player experience while subtly gathering behavioral data in return. This convergence between fictional surveillance and real-time algorithmic feedback signals a new paradigm in game ethics. No longer merely critiquing surveillance from within the diegesis, games now operationalize surveillance logic through behavioral inference, adaptive mechanics, and, more recently, AI-driven emotional response. In doing so, they raise urgent questions about transparency, consent, and the normalization of tracking in both virtual and physical spaces.
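The claim that every input becomes data can be illustrated with a minimal profiling loop in which raw input events are aggregated into a playstyle profile that an NPC then reads. The event names, the mapping to playstyles, and the greeting rules are hypothetical assumptions for illustration, not taken from any specific game. The sketch shows how adaptation and data gathering are the same operation: The NPC can respond “intelligently” only because the player has been profiled.

```python
from collections import Counter

# Hypothetical mapping from raw input events to inferred playstyles.
EVENT_STYLE = {
    "attack": "aggressive", "shoot": "aggressive",
    "crouch": "stealthy", "hide": "stealthy",
    "talk": "social", "gift": "social",
}

# Hypothetical NPC attitudes keyed by the player's dominant playstyle.
GREETING = {"aggressive": "wary", "stealthy": "suspicious", "social": "friendly"}


def infer_profile(events: list) -> dict:
    """Aggregate an event log into playstyle proportions (unknown events ignored)."""
    styles = Counter(EVENT_STYLE[e] for e in events if e in EVENT_STYLE)
    total = sum(styles.values())
    return {style: count / total for style, count in styles.items()} if total else {}


def npc_greeting(profile: dict) -> str:
    """The NPC adapts to the inferred profile; the player never sees the model."""
    if not profile:
        return "neutral"
    dominant = max(profile, key=profile.get)
    return GREETING[dominant]


log = ["hide", "crouch", "talk", "hide", "jump"]
profile = infer_profile(log)
print(profile, npc_greeting(profile))  # {'stealthy': 0.75, 'social': 0.25} suspicious
```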

What follows details how such systems operate in practice and why their ethical stakes matter, before tracing their bleed-over into adjacent, real-world infrastructures. We examine this convergence by focusing on the ethical implications of AI surveillance in contemporary games. We explore how NPCs and procedural systems simulate, test, and reinforce norms of data collection, behavior profiling, and adaptive interaction—revealing the complex role that games play in reflecting and shaping the evolving ethics of AI surveillance.

Ethical AI Surveillance in Gaming

Contemporary games increasingly embed surveillance not just as a theme but as a function of play. Yet, unlike traditional data systems, these procedural structures rarely make space for explicit consent—instead, they embed behavioral assumptions and adaptive feedback as implicit participation, normalizing surveillance through interactivity itself. For example, through AI-driven systems, NPCs observe, remember, and react to player behavior, constructing feedback loops that resemble real-world predictive modeling. In games such as Red Dead Redemption 2 (Rockstar Games, 2018), The Sims (Maxis, 2000), and the Elder Scrolls series (Bethesda, 1994, 1996, 2002, 2006, 2011), NPCs adapt to players’ actions over time, building complex relationships that simulate social intelligence (Millington, 2019; Umarov & Mozgovoy, 2014). Moreover, as Švelch observes, such systems transform monsters and NPCs into “agents of fairness” whose behavior must be legible and predictable to maintain player trust (Švelch, 2023). As noted earlier, Jayemanne similarly frames these adaptive architectures as performative “framing devices” that communicate whether play is felicitous or infelicitous, training players to internalize the evaluative logic of the system (Jayemanne, 2017, pp. 37–39). These interactions do more than increase immersion—they encode a logic of monitoring and response that mirrors the structure of AI surveillance systems.

For instance, Red Dead Redemption 2 (Rockstar Games, 2018) implements an “Honor” system that tracks the player’s morality based on behavior, using calculations that remain largely hidden from the player. NPC reactions shift according to this opaque metric, subtly reinforcing ethical norms through procedural means. This system mirrors “algorithmic identity,” wherein users are continuously modeled and categorized based on inferred behaviors rather than fixed attributes (Cheney-Lippold, 2017). The game’s responsive system mediates the player’s self-perception, which is shaped not by direct feedback but by opaque calculations and gradual environmental shifts. In Alien: Isolation (Creative Assembly, 2014), the eponymous alien uses AI to “hunt” the player, not through scripted patterns but by responding in real time to environmental cues such as sound, door usage, and movement. Because this behavior is simulated separately from, and independently of, the rest of the game’s rules, the alien appears to possess its own intent and intelligence, producing what Der Derian describes as “preemptive surveillance”: An anticipatory system that infers proximity and potential threats, fostering a pervasive sense of uncertainty and paranoia (Der Derian, 1990).
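To make the logic of an opaque behavioral metric concrete, the following is a minimal, hypothetical sketch of a hidden reputation score of the kind described above. It is not Rockstar’s implementation; the action names, weights, clamping range, and reaction thresholds are all invented for illustration. What it shows is structural: the player’s actions are logged and weighted silently, and the only thing the player experiences is the downstream change in how NPCs respond, which is the sense in which identity is inferred from behavior rather than declared.

```python
from dataclasses import dataclass, field
from typing import List

# Hypothetical weights for how each observed action shifts the hidden score.
# None of these values come from an actual game; the player never sees this table.
ACTION_WEIGHTS = {
    "help_stranger": 2,
    "pay_bounty": 1,
    "rob_store": -3,
    "attack_civilian": -5,
}


@dataclass
class HiddenReputation:
    score: int = 0
    history: List[str] = field(default_factory=list)  # every observed action is logged

    def observe(self, action: str) -> None:
        """Log an action and silently adjust the score; unknown actions count as 0."""
        self.history.append(action)
        self.score += ACTION_WEIGHTS.get(action, 0)
        self.score = max(-10, min(10, self.score))  # clamp to an assumed [-10, 10] range

    def npc_reaction(self) -> str:
        """Map the hidden score to the category that NPCs respond to."""
        if self.score >= 5:
            return "friendly"
        if self.score <= -5:
            return "hostile"
        return "neutral"


if __name__ == "__main__":
    player = HiddenReputation()
    for act in ["help_stranger", "rob_store", "attack_civilian"]:
        player.observe(act)
    # The player experiences only the outcome, never the score or the weights.
    print(player.npc_reaction())  # prints: hostile
```

In this toy run, one robbery and one assault outweigh an earlier good deed and tip the score past the hostile threshold. Nothing in the interface explains why townsfolk now react differently, which is the opacity at issue here.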

Similarly, Echo (Ultra Ultra, 2017) extends this logic further: Every enemy is an AI clone that learns directly from the player’s actions, turning self-observation into a recursive system of control. The game thus literalizes Deleuze’s modulation by collapsing the difference between observer and observed: Every act of resistance becomes new training data for the system. Players are compelled to adopt cautious, adaptive behavior, engaging in embodied algorithmic negotiation. We Happy Few (Compulsion Games, 2018) similarly blends narrative and system, forcing players to navigate a society dependent on behavioral conformity and pharmaceutical compliance. Here, NPCs act as enforcers of ideological normalcy, reacting to non-compliance in ways that parallel real-world algorithmic policing or social credit systems. These examples illustrate how games increasingly serve as spaces where players encounter systems that infer, categorize, and discipline, much like AI surveillance in broader digital life.

While such systems enhance gameplay and immersion, they raise complex ethical concerns about consent, transparency, and normalization. Players implicitly consent to being monitored within the diegetic frame of gameplay, expecting that any tracking and data collection is part of a larger system that will reward them within the game, but this consent extends only as far as the game’s narrative frame. In practice, the same data is frequently used outside the gameplay context, for monetization, profiling, or even employment screening, without renewed or meaningful user consent. Players rarely consider how closely the procedural structures that systematically collect and act on their behavior behind the scenes resemble real-world systems that also use data to track, monitor, and manipulate individuals. Those systems constitute a different kind of “surveilled game,” in which “instrumentarian power” operates not through coercion but by modifying behavior through feedback systems engineered for extraction and control (Zuboff, 2019, p. 353).

As games, and the AI systems they use to simulate real-world behavior and functionality, grow in complexity, they increasingly resemble the structures of power and control already present in the physical world, blurring the line between narrative immersion and behavioral conditioning. Real-world systems are often constrained by technical limitations and by socio-economic rules, within which behavior remains governed by individual autonomy and free will. In games, by contrast, the same person acts within the limits of an artificial system that cleverly imitates freedom while working behind the scenes to manipulate and guide the player’s actions as part of intentionally designed gameplay. This gap between perceived agency and procedural control raises questions about awareness and, ultimately, consent, both within games, where systems and algorithms carry out surveillance to shape gameplay, and in the real world, where “gamification” is used as an incentive to coerce specific behaviors (Fitzpatrick & Marsh, 2022, p. 400).

It has been observed that games rarely implement “consent mechanics”: Systems that allow players to explicitly opt in or out of behavioral tracking and adaptive feedback (Nguyen & Ruberg, 2020). Instead, such systems are often buried within terms of service or presented as “features,” leaving players unaware of the extent to which their play is observed, profiled, and potentially monetized. While such data collection may be justified as necessary to deliver the intended gameplay mechanics, the data is also used outside the contextual norms of playing that game. Game developers, for example, increasingly treat this data as a rich source for understanding their players’ needs and behavioral patterns, often on the mistaken assumption that anonymized gameplay data requires no consent under privacy norms and regulations. At the same time, there has been a marked increase in the tracking and profiling of players during play, and in the sharing of this data with third parties who have no role in the gameplay (Kröger et al., 2023), which also happens without awareness or consent.

Studies have demonstrated that players’ acceptance of in-game surveillance increases over time, suggesting a desensitization effect that mirrors trends in broader digital culture (Canossa, 2014; Melhart et al., 2023). A similar phenomenon occurs with online tracking: When understanding and managing consent is made difficult, people develop “consent fatigue” over time and come to accept whatever is presented to them (Utz et al., 2019). Interestingly, games and online consent mechanisms share common manipulative techniques, such as highlighting suggested interactive components and introducing intentional friction into activities. In games, these techniques guide players toward intended paths or calibrate challenge; in consent and cookie dialogues, by contrast, they are used to “dissuade” users from making more privacy-aware choices. In both cases, the designer intends to create an environment where the person thinks they have freedom, but in reality, they are only “playing the game” by someone else’s rules.

Such built-in coercion and manipulation also shape player expectations about autonomy and agency. This illusion of agency not only reinforces system logic but also undermines meaningful autonomy: Players may feel in control while their behavior is subtly nudged or constrained, not unlike algorithmic governance systems outside the game that present manipulative choice architectures under the guise of freedom. In games like The Sims (Maxis, 2000), NPCs simulate social interaction in ways that appear organic but are governed by probabilistic models. As players engage with these systems, they develop behavioral patterns, refine their relationships, and adjust their decisions accordingly. The result is a feedback loop in which players learn to navigate by system logic rather than personal intent, mirroring how users adapt their behavior on social media platforms to satisfy opaque algorithmic incentives. Yet this loop is never fully closed. Apperley (2010) argues that play is always situated (embodied, local, and rhythmic), so even tightly governed systems are continually reinterpreted through player practice. Counterplay arises when players modulate or re-time these rhythms, exploiting the gaps between algorithmic control and lived experience. Such actions reveal that procedural governance is never total: Rhythm breaks, glitches, and emergent tactics make the game’s underlying logic newly legible, translating systemic opacity into momentary transparency.

The ethical implications of such design extend beyond the game world, as players become habituated to participating and “succeeding” according to a limited but invisible set of rules. While learning any game involves mastering its rules, disciplinary habituation arises when those rules are opaque, adaptive, or extend beyond the game’s frame, that is, when learning to play well also means internalizing invisible constraints that mirror real-world algorithmic governance. Yet players also exercise forms of resistance and counterplay. As Meades (2015) and Apperley (2010) note, even the most prescriptive systems can be exploited, subverted, or played against their intended logic, and high-level or “glitch” play often exposes the boundaries of algorithmic control. Still, we argue that when game systems normalize behavioral tracking and adaptive surveillance, they risk habituating players to mechanisms similar to those found in the real world. This effect can be observed in the convergence of gaming and surveillance technologies, rooted in military simulation and big data, which together create robust systems of social sorting, nudging, and control (Solberg, 2022; Whitson & Simon, 2014). In such systems, emotional elicitation, transparency, and player profiling become not just design features but mechanisms of influence.

Such systems also draw on and exploit players’ apparent “morality” at moments of choice, where certain outcomes are reachable only if the player is willing to commit particular acts. Perhaps the best-known demonstration of how such “gamified morality” reaches into the real world is MIT’s “Moral Machine” experiment, which asked people to decide how an autonomous car should act in gamified scenarios that involve sacrificing some people in order to save others (Awad et al., 2018). This research suggests that “player behavior” is a resource with uses beyond games and that, ultimately, AI surveillance in games functions as a testbed for broader debates about autonomy, consent, and the ethics of algorithmic governance.

In this sense, games prefigure broader debates around algorithmic accountability and explainability: Just as machine learning systems generate outputs that are opaque even to their designers, adaptive gameplay produces emergent behaviors that challenge player understanding and consent. As such, the ethics of surveillance in games foreshadow those of AI governance more generally: Issues of transparency, auditability, and asymmetrical power between data subjects and data controllers. Therefore, we propose that games mirror and prototype real-world data collection architectures by operationalizing surveillance through procedural systems. This dual role—as both critique and rehearsal—makes the gaming space a crucial domain for examining the social, emotional, and political dimensions of living with intelligent systems. As games evolve, designers and players must grapple with the question: When does immersive design become a form of infrastructural control?

We suggest a “procedural ethics of transparency” for exploring how contemporary games represent and enact surveillance systems, moving from symbolic critique to procedural participation. Through mechanics that monitor behavior, adapt dynamically to player actions, and reinforce norms through responsive AI, games operationalize surveillance capitalism within their structures. What begins as narrative engagement, such as navigating dystopias in Watch Dogs (Ubisoft, 2014), avoiding predation in Little Nightmares (Tarsier Studios, 2017), or conforming in We Happy Few (Compulsion Games, 2018), transforms into systemic reinforcement. Games teach (often young) players how to anticipate algorithmic logic, optimize for unseen rules, and accept tracking as the price of interaction. In doing so, they prototype behavioral norms that resonate far beyond the screen.

This convergence of storytelling, simulation, and systems reflects the evolution of games into ethical laboratories—spaces where players encounter and engage with algorithmic governance. Crucially, these systems do not remain confined to virtual play. As we have explored, the architectures of game surveillance are increasingly blurring into real-world applications, from player data used in recruitment to psychometric profiling and behavior-informed monetization strategies. To examine this convergence explicitly, we may also ask: How do the infrastructures of surveillance in games extend outward—into labor, law, and everyday algorithmic life? And what does this mean for the ethics of design, play, and technological accountability?

The Convergence of Fictional and Real AI Surveillance

The increasingly sophisticated AI systems in gaming—particularly those that track, adapt, and respond to player behavior—underscore a convergence between game mechanics and real-world surveillance. These systems blur the boundaries between in-game immersion and out-of-game data infrastructures, transforming play into a site of behavioral modeling. AI-driven NPCs now simulate surveillance systems akin to those used in targeted advertising, social scoring, and predictive policing. By incorporating observation, memory, and adaptive response, these characters turn fictional surveillance into functional simulation (Whitson & Simon, 2014).

Games often embed surveillance in mechanics that feel natural, even playful. Hide-and-seek dynamics and omnipresent “cyborg vision” interfaces enable players to embody and interact with systems of control, often without recognizing their ethical implications (Koskela & Mäkinen, 2016; Solberg, 2022). These interactions mirror and normalize constant monitoring in digital life, facilitating the gradual “normalization of instrumentarian power” (Zuboff, 2019). In this model, the gamer becomes both subject and contributor to a system of observation that extends well beyond entertainment.

This convergence becomes ethically fraught when data collected through gameplay is repurposed for uses outside its original context. While players may accept in-game tracking as part of the immersive experience, few anticipate that behavioral data, such as decision-making patterns, hesitation, aggression, and cooperation, could be repurposed for advertising, recruitment, or risk assessment. As such, privacy becomes contextual: Information shared within one domain may become ethically problematic when shifted to another without explicit consent (Nissenbaum, 2004). Even when legal consent exists, often buried in dense terms of service, the ethical expectations of players are frequently violated, particularly when surveillance extends into real-world systems.

This surveillance becomes more concerning with the rise of biometric and embodied gaming, including VR headsets and wearable sensors that gather sensitive data such as gaze, heart rate, and movement characteristics (Giaretta, 2024). These technologies provide immersive feedback but simultaneously intensify surveillance, creating detailed psychographic profiles from which emotional states and behavioral tendencies can be inferred (Kröger et al., 2023). As games evolve into live, data-rich services, the distinction between in-game modeling and real-world profiling becomes increasingly tenuous (Newman & Jerome, 2014). Emerging applications outside traditional gaming contexts reinforce this blurring. Corporations, including law firms and media companies, have begun using game-based assessments to evaluate applicants’ risk tolerance, decision-making, and emotional intelligence (Harver, 2024; O’Melveny, 2018). Vendors like Pymetrics (now Harver) create psychometric profiles from gameplay, comparing applicants to organizational archetypes derived from employee data. While such techniques claim objectivity, they embed the same opacity and bias risks that plague AI systems elsewhere (Hunkenschroer & Luetge, 2022; Seif El-Nasr & Kleinman, 2020).
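The archetype-matching step described above can be illustrated with a deliberately simplified sketch. It does not reproduce any vendor’s method: The three trait names, the use of a per-trait mean as the “archetype,” and cosine similarity as the matching score are all assumptions chosen for clarity. What the sketch does show is how bias can be inherited, since the archetype is computed only from current employees and applicants are therefore scored by their resemblance to whoever was hired before.

```python
import math
from typing import Dict, List, Sequence, Tuple

# Hypothetical traits inferred from gameplay, each scaled to [0, 1]:
# (risk_tolerance, decision_speed, persistence). Real vendors use other, undisclosed features.
Traits = Tuple[float, float, float]


def archetype(employees: List[Traits]) -> List[float]:
    """Average existing employees' trait vectors into one 'ideal' profile."""
    n = len(employees)
    return [sum(e[i] for e in employees) / n for i in range(3)]


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity between two trait vectors (1.0 means same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def rank_applicants(applicants: Dict[str, Traits], employees: List[Traits]) -> List[str]:
    """Order applicants by resemblance to the archetype, most similar first."""
    ideal = archetype(employees)
    return sorted(
        applicants,
        key=lambda name: cosine_similarity(applicants[name], ideal),
        reverse=True,
    )


if __name__ == "__main__":
    staff = [(0.9, 0.8, 0.2), (0.8, 0.9, 0.3)]  # current employees: fast, risk-tolerant
    pool = {"A": (0.9, 0.85, 0.2), "B": (0.2, 0.3, 0.9)}  # B is slower but highly persistent
    print(rank_applicants(pool, staff))  # prints ['A', 'B']
```

Applicant B ranks last not because persistence is undesirable but because the existing workforce happened to score low on it. Nothing in the score explains this to either applicant, which is the opacity and bias risk the paragraph identifies.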

In this context, gaming becomes

It has been argued that games could lead the way by implementing consent mechanics: Systems that foreground data collection and offer meaningful, contextualized choices to players (Nguyen & Ruberg, 2020). Instead, players are often nudged toward normalization, becoming habituated to behavioral tracking as a standard interactivity feature (Canossa, 2014). This habituation risks extending into broader digital life, where acceptance of surveillance becomes a precondition for participation. Moreover, as Large Language Models (LLMs) and generative AI enter gaming environments—powering dialogue systems, dynamic narratives, or player support—new challenges emerge regarding accuracy, bias, and manipulation, where these systems may unintentionally reinforce stereotypes, misinterpret behavior, or offer emotionally disturbing feedback under the guise of interactivity (Sas, 2024).

Ultimately, the convergence between fictional and real AI surveillance in gaming demands critical reflection. Games no longer merely represent systems of control; they build and operate them. As behavior modeling and prediction platforms, they intersect with entertainment, labor, and surveillance capitalism. Ethical standards that apply to algorithmic systems in education, employment, and healthcare must now extend to game design and player data practices. Gaming is no longer separate from the infrastructure of algorithmic life; it is increasingly a foundational part of it.

Procedural Ethics of Transparency: Video Games As a Lens for Ethics and Politics

Fictional narratives, particularly games, do more than depict dystopian futures or critique surveillance culture. They function as speculative frameworks through which the public, designers, and policymakers can engage with the ethical dimensions of AI, surveillance, and data governance. As immersive environments that blend narrative with system logic, games are uniquely positioned as “procedural rhetorics”: Persuasive systems that make arguments through interaction, not just storytelling (Bogost, 2007). Complementing this, Galloway’s notion of the allegorithm highlights how allegory is enacted through code, such that political claims are articulated by algorithmic operations themselves (Galloway, 2006). Through these systems, players confront and participate in ethical challenges: Navigating NPC surveillance, managing visibility, negotiating consent, and experiencing algorithmic bias firsthand. Such moments of resistance and exposure amount to an informal ethics of transparency (Apperley, 2010; Meades, 2015): Counterplay transforms play into an investigative practice that mirrors demands for explainable AI, making opaque systems graspable through participation (see Figure 5). Titles such as Deus Ex (Ion Storm, 2000), Cyberpunk 2077 (CD Projekt Red, 2020), and Watch Dogs (Ubisoft, 2014), with its proceduralized urban hacking, embed ethical tension within mechanics, not just within the plot. The result is a form of experiential ethics, the “ethical architecture” of gameplay, wherein moral reflection emerges from constraint, feedback, and ambiguity (Sicart, 2011). Read through the lens of ethics and politics, these architectures situate players within shifting regimes of discipline, normalization, and control rather than merely narrating them.

Figure 5. Procedural ethics of transparency.

These experiences offer insight into the lived consequences of AI systems, insight that policy frameworks too often treat abstractly. Speculative fiction can anticipate and prefigure ethical dilemmas before they fully materialize in the real world (see Hayles, 1999, pp. 24–25, 246–248; Cave et al., 2020, pp. 10–11). Games operationalize this anticipation by letting players inhabit, simulate, and contest algorithmic environments in ways that traditional discourse cannot. They thus serve not only as mirrors of society’s technological anxieties but also as simulations through which new ethical paradigms can be tested. This perspective aligns with research on design fiction and critical play, which uses speculative artifacts (games, scenarios, or mock platforms) to foreground the ethical implications of emerging technologies (Ballard et al., 2019b; Wong et al., 2017). Games like Immaculacy: A game of privacy (Suknot et al., 2014), Judgment Call (Ballard et al., 2019a), and AI or Nay-I? (Jr et al., 2019) exemplify this potential: They challenge players to deliberate over data consent, medical surveillance, and algorithmic regulation through interactive dilemmas. Such experiences make abstract concerns about fairness, agency, and control feel urgent and embodied. In doing so, they translate speculative foresight into procedural practice, surfacing frictions around contextual integrity, informed consent, and explainability.

Video games also provide feedback loops that can inform AI policy. As players respond to NPC surveillance, adaptive dialogue, or emotion-sensing systems, designers capture data on what users find intuitive, invasive, and ethically troubling. This information can inform broader efforts to define ethical standards for AI, particularly in terms of transparency, autonomy, and emotional impact. Yet these same loops risk normalizing observation and nudging as baseline interaction, a point underscored by the work on gamification and governance discussed above. At the same time, the immersive qualities that make games compelling also carry ethical risks. Games normalize behavioral tracking, desensitize players to opaque surveillance, and reproduce algorithmic bias. The convergence of narrative critique and datafication shows that games are not just allegorical: They are algorithmic systems in their own right. Indeed, the extraction and prediction of behavior have become central to new forms of economic and technological governance (Zuboff, 2019, pp. 68, 203). Games must be understood as active participants in this system, not as observers outside it. Consequently, lessons drawn from play bear directly on debates about data minimization, purpose limitation, and meaningful consent beyond entertainment domains.

This dual nature positions gaming as a critical interface between AI policy and public ethics. On the one hand, games provide speculative spaces for education, critique, and participatory reflection. On the other hand, they function as behavioral laboratories that operationalize the very logic of surveillance they sometimes oppose. Understanding this contradiction is crucial for effectively leveraging games in the development of ethical AI. Concretely, this implies design and policy interventions that treat play as a site for consent mechanics (granular, contextual opt-ins), transparency by design (in-situ disclosures of what is tracked and why), and explainable adaptation (player-facing accounts of how behavior influences models), alongside data minimization and purpose limitation for any telemetry beyond core functionality (Nguyen & Ruberg, 2020; Nissenbaum, 2004). To develop AI systems that are equitable, accountable, and responsive to human values, we must bring cultural practices such as gaming within the scope of ethical design. Games are not distractions from the AI policy conversation; they are its experimental terrain. Analyzing games as allegorithms as well as narratives clarifies how governance operates procedurally; this, in turn, yields actionable strategies, prototyped in play, for shaping more human-centered AI futures in the physical world.
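As a rough illustration of how some of these principles might translate into a game’s data layer, the sketch below gates telemetry on granular, per-purpose consent, strips every field not needed for the declared purpose, and exposes a player-facing disclosure of what is currently tracked. The purpose names, field lists, and the `TelemetryGate` class are hypothetical and are not drawn from any engine, platform, or regulation; a real system would also need durable consent records, retention limits, and auditing, and explainable adaptation is not modeled here.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional, Set

# Hypothetical purposes, each limited to the fields it actually needs
# (purpose limitation and data minimization).
ALLOWED_FIELDS: Dict[str, Set[str]] = {
    "core_gameplay": {"checkpoint", "difficulty"},
    "adaptive_difficulty": {"deaths", "time_on_level"},
    "analytics": {"session_length"},
}


@dataclass
class TelemetryGate:
    # Granular opt-ins: only core gameplay is enabled by default.
    consents: Dict[str, bool] = field(default_factory=lambda: {"core_gameplay": True})

    def set_consent(self, purpose: str, granted: bool) -> None:
        """Record the player's choice for one declared purpose."""
        if purpose not in ALLOWED_FIELDS:
            raise ValueError(f"unknown purpose: {purpose}")
        self.consents[purpose] = granted

    def collect(self, purpose: str, event: dict) -> Optional[dict]:
        """Return a minimized copy of the event, or None if the player has not opted in."""
        if not self.consents.get(purpose, False):
            return None
        allowed = ALLOWED_FIELDS[purpose]
        return {k: v for k, v in event.items() if k in allowed}

    def disclosure(self) -> List[str]:
        """Player-facing summary of what is currently tracked and for which purpose."""
        return [
            f"{purpose}: {', '.join(sorted(ALLOWED_FIELDS[purpose]))}"
            for purpose, granted in sorted(self.consents.items())
            if granted
        ]


if __name__ == "__main__":
    gate = TelemetryGate()
    event = {"deaths": 3, "time_on_level": 120, "heart_rate": 88, "checkpoint": 4}
    print(gate.collect("adaptive_difficulty", event))  # None: no opt-in yet
    gate.set_consent("adaptive_difficulty", True)
    print(gate.collect("adaptive_difficulty", event))  # heart_rate is never kept
    print(gate.disclosure())
```

The design choice worth noting is that the heart-rate reading in the example event is discarded even after the player opts in, because no declared purpose needs it; consent widens collection only within the boundaries of a stated purpose.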

Taken together, these insights point to a clear conclusion: If games already serve as laboratories for experimenting with algorithmic ethics, then recognizing their dual role as sites of critique and as infrastructures of control becomes essential for imagining more accountable futures of AI design and governance.

Conclusion

This paper has traced the evolving role of video games in representing, simulating, and enacting surveillance and AI systems. Beginning with speculative fiction and dystopian game worlds, we explored how narrative environments critique the commodification of privacy and the normalization of control. Yet, as we moved deeper into game mechanics and player experience, it became clear that these systems do not merely represent surveillance; they enact it. From NPCs that monitor and adapt to player behavior to feedback loops that shape emotion and action, contemporary games operationalize surveillance logic through procedural systems. These systems mirror and anticipate real-world infrastructures of algorithmic profiling, behavior prediction, and data monetization. In doing so, games become both cultural critiques of surveillance capitalism and platforms where that very capitalism is rehearsed and refined.

In Foucauldian terms, contemporary game architectures blur the distinction between representation and discipline, producing subjects who learn to internalize the gaze through play. Following Deleuze, this dynamic extends beyond enclosed spaces toward the continuous modulation of control societies, where player data circulates across networks and platforms. The theoretical trajectory from enclosure to modulation thus finds its cultural expression in the evolution from single-player simulation to live service ecosystems and biometric tracking.

This convergence—between fictional imagination and real technological implementation—poses critical ethical challenges. Players implicitly consent to in-game observation but often remain unaware of how their behaviors are tracked, modeled, and potentially repurposed. As biometric tracking, emotion sensing, and LLMs become integrated into gameplay, these concerns become increasingly urgent. Games are increasingly shaping the ethical expectations of surveillance, influencing how we respond to and internalize it. And yet, games also offer profound opportunities. As procedural interaction systems, they allow players to engage directly with algorithmic structures, confront dilemmas of consent and autonomy, and reflect on the power of data-driven decision-making. In this sense, games act as speculative laboratories for AI ethics, where users can test, contest, and imagine alternative futures. Fiction, in this context, becomes a tool not only for critique but also for civic imagination. At the same time, games themselves become subjects of debate about the design of systems that perpetuate the same actions they are trying to critique, and thereby provide an opportunity to discuss a system both from within and from the outside.

To advance this conversation, we must move beyond treating games as merely illustrative of ethical problems and instead recognize them as procedural experiments in governance. As Whitson notes, the game industry’s reliance on metrics and datafication constrains not only players but also developers themselves, who navigate the same surveillance logics they simulate (Whitson, 2013, 2015). Similarly, Švelch’s and Jayemanne’s work reminds us that systems of fairness, performance, and framing are not neutral: They encode values that shape what counts as ethical, felicitous, or efficient behavior. Understanding these procedural ethics as forms of governance situates game design squarely within the politics of AI accountability.

If games are to serve as ethical laboratories, they must not only simulate dilemmas but also confront their own complicity in eroding meaningful consent and autonomy. Future design ethics must center explicit, contextualized consent—not just in mechanics, but in the data architectures that support them. This requires what we call a procedural ethics of transparency: An approach that makes visible the flows of data, the conditions of feedback, and the politics of adaptation embedded in play. Therefore, to meet the ethical demands of our AI-saturated present, we must take seriously the systems that shape public understanding—video games among them. Future research will extend this concept by analyzing emergent uses of generative AI in game design, where adaptive storytelling and procedural ethics converge in real time—turning the algorithm itself into a co-author of surveillance narratives. By recognizing the dual role of games as both agents and critics of surveillance, we open new avenues for participatory ethics, inclusive policy, and human-centered design. In the gaming systems we build and the stories we tell through them, we glimpse the technologies of control and the futures we still have time to choose.

Footnotes

Funding

The author(s) received the following financial support for the research, authorship, and/or publication of this article: This article was supported by the Horizon Europe Framework Program (HORIZON) under grant agreement 101070109. This work was also conducted with the financial support of the Research Ireland Centre for Research Training in Digitally-Enhanced Reality (d-real) under Grant No. 18/CRT/6224. For the purpose of Open Access, the authors have applied a CC BY public copyright licence to any Author Accepted Manuscript version arising from this submission. Harshvardhan J. Pandit is part of the AI Accountability Lab, which has been funded under the AI Collaborative, an Initiative of the Omidyar Group; the Bestseller Foundation; and the John D. and Catherine T. MacArthur Foundation; and the ADAPT Centre for Digital Media Technology is funded by Research Ireland through the Research Centres Programme and is co-funded under the European Regional Development Fund (ERDF) through Grant No. 13/RC/2106 P2.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.