Abstract

Hate speech, harassment, and an increasing prevalence of right-wing extremism in online game spaces are of growing concern in the United States. Understanding trends in how and to what extent extremist groups utilize online gaming spaces is vital in taking action to protect players. To synthesize the current state of extant research and suggest future directions, we conduct a systematic review of the literature on right-wing extremism in videogames. We detail our search protocol, selection criteria, and analysis of the collected work, and then summarize the findings. Important themes include how and why extremists’ targeting of online game communities began, the role of Gamergate in this process, and the industry and market context in which such activities emerged. We describe the current nature of the problem, with extremist language and ideology providing a kind of on-ramp for radicalizing disenfranchised gamers. We conclude with a summary of responses from industry and legislators.

In November 2021, the children's gaming platform Roblox filed a lawsuit against content creator Ruben Sim for organizing a cybermob to terrorize the platform, its players, and content developers (Carpenter, 2021). Ruben Sim had been banned from Roblox for several years previously for right-wing extremist rhetoric and player harassment, yet continued to access the platform through borrowed accounts. Sim's rhetoric escalated to ominous threats of violence against the Roblox Developers Conference in San Francisco in October 2021, causing a temporary lockdown of the event. Similar to other titles, Roblox struggled with extremism long before this terror threat and ensuing lawsuit. As early as 2009, it had already faced escalating extremist fan-made content, language, and in-game behavior (D’Anastasio, 2021).

Roblox is far from alone. According to a report from the Anti-Defamation League (2021a), hate speech and hate-based harassment in online games increasingly undermine their positive effects. Within the United States, roughly one in 10 players (10% for teens, 8% for adults) encounter white supremacist ideology in online games, including claims of white superiority and Holocaust denial (Anti-Defamation League, 2019). Three out of five (60%) teenage online players (ages 13–17) and five out of six (83%) adult online players experienced harassment, an increase of 9% in just two years. Women (49%), Black or African American (42%), and Asian American (38%) players all faced increasing harassment in the past year (Anti-Defamation League, 2021a).

This increasing presence of white supremacist rhetoric in online games and the potential normalization of extremist beliefs and hate-based harassment are of growing public concern given the centrality of videogames in today's entertainment ecosystem. The videogame industry is now the largest sector in entertainment, with revenue exceeding that of the film and music industries combined (Richter, 2020). Nearly 227 million Americans play videogames: two-thirds (67%) of the adults and three-fourths (76%) of the youth (Entertainment Software Association, 2021). Playing online with others has also become more popular, as the notable increase in average weekly online gameplay indicates (from 6.5 to 7.5 hr).

Extremist groups are known for being early adopters of novel technologies, and now they take advantage of gaming culture the same way they adopted the internet and social media for their benefit (Bliuc et al., 2018; Condis, 2019a; Conway et al., 2019). Right-wing extremists utilize online gaming platforms for various purposes, including recruitment (Khosravi, 2017; Lakhani & CIVIPOL, 2021; Vaux, Gallagher, & Davey, 2021), community building (King & Leonard, 2016; Lakhani & CIVIPOL, 2021; Vaux, Gallagher, & Davey, 2021), reinforcing ideologies (Lakhani & CIVIPOL, 2021; Robinson & Whittaker, 2020), and normalizing white supremacist beliefs (Anti-Defamation League, 2020a; Barlow, 2019; D'Anastasio, 2020; Hansson & Keck, 2019). A comprehensive understanding of right-wing extremism in online games is crucial to preventing the proliferation and normalization of hate-based ideologies. To date, however, no synthesis or summary of the extant research base exists. In this article, we review the literature on extremism in gaming within the United States through September 2021. We identify common themes and conclusions across the extant research base in terms of when, how, and why extremists began targeting online game communities; the role of Gamergate in that process; the industry and market context in which this troubling trend arose; aspects of the game industry that catalyzed that trend; how the norms and values of gaming culture served as a nurturing seedbed for extremism to take root and grow; and the nature of the problem we have now, with extremist language, ideology, recruitment, and enculturation serving as an on-ramp for the radicalization of disenfranchised gamers. We then detail the lack of substantive response from the games industry and legislators.

Methods

Defining Terms

Our focus is on the relationship between right-wing extremism and mainstream videogames. We use the Anti-Defamation League's official definition of extremism: “religious, social or political belief systems that exist substantially outside of belief systems more broadly accepted in society (i.e., ‘mainstream’ beliefs). Extreme ideologies often seek radical changes in the nature of government, religion or society” (Anti-Defamation League, n.d.). Extremism can also refer to the radical wings of movements with contrasting orientations, such as the anti-abortion movement or the environmental movement. And though not all are inherently “bad” in intent, they exist outside of the mainstream because many of their views and/or tactics are perceived as objectionable. We are specifically interested in the right-wing extremist movements within the United States. Right-wing extremism is an umbrella term referring to many different groups and organizations in the United States. Jackson (2019) describes these movements as falling within three different ideologies: racist extremism (e.g., anti-Black), nativist extremism (e.g., anti-Islam or anti-Semitic), and anti-government extremism (e.g., sovereign citizens). While he notes some fluidity and overlaps amongst these groups and their goals, they all “[seek] to restore a (perceived) past ‘golden age’” (p. 4). As such, these groups often operate independently of one another and with their own variations of common white supremacist ideologies (Anti-Defamation League, 2018; Lavin, 2020), but with the shared agenda of a continued regime of white-only authority that positions minority populations as the other and a threat rather than equal citizens. We focus on these groups because the overwhelming majority of extremist terror committed in the United States is fueled by right-wing extremism. According to the Anti-Defamation League's (2018) report on American white supremacy, 95% of all “domestic extremist related murder in the United States” between 2008 and 2017 was committed by right-wing extremists (p. 58).

We use the term mainstream videogames to refer to dominant game titles in the marketplace, as defined by sales, subscriptions, revenue generated, time spent playing, and cultural influence. Included in this definition are commercially successful games (e.g., Minecraft (2011), Roblox (2006), The Sims (2000)) and games with broad player bases and broader cultural reach (e.g., Among Us (2018), Candy Crush (2012), Tetris (Pajitnov, 1984)). While lower barriers to entry and digital distribution have begun pushing many independent (indie) games into the mainstream, we focus primarily on titles developed by larger AAA companies due to their mass distribution, significant financial investment, and broad cultural import.

Data Collection

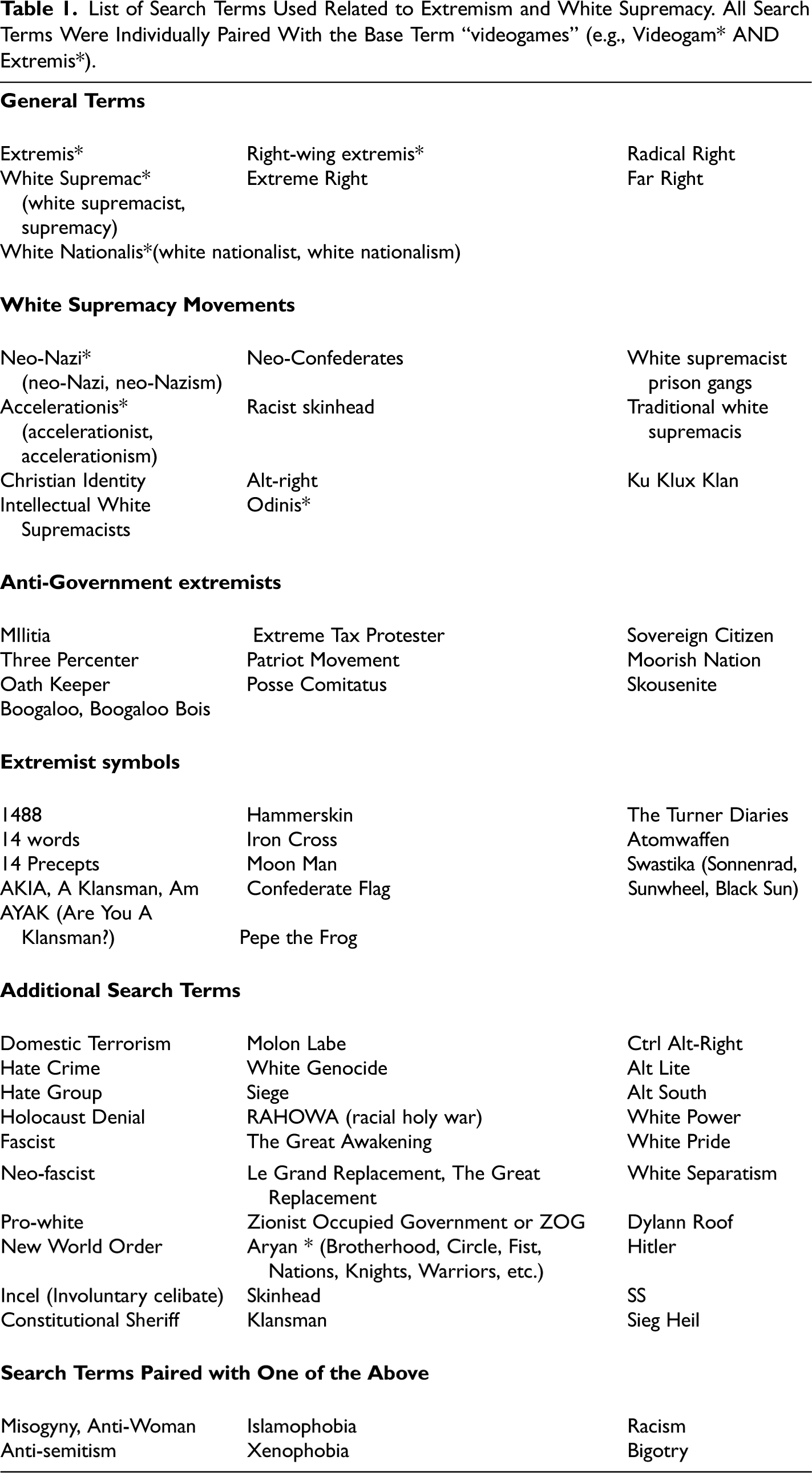

The search protocol and selection criteria were defined in advance to reduce any selection bias. To identify relevant articles on extremism in videogames, we conducted a three-stage search of the extant research base between March and September 2021. The first search was a preliminary assessment of the core literature available through the following academic databases: Community Abstracts, Web of Science, Scopus, Compendex, Inspec, Alternative Press Index, Anthropology Plus, Education Source, LISTA, American History & Life, Academic Search Complete, Business Search Complete, Library Search, JSTOR, ProQuest Dissertations, and Google Scholar. The search terms focused exclusively on games while capturing all forms of right-wing extremist and white supremacist groups and behaviors. To accomplish this, we searched for “gam-,” “video gam-,” and all related permutations in combination with 76 key terms relevant to the topics of white supremacy, the alt-right, and domestic extremism within the United States (Appendix Table 1). We focused on the United States because while many international groups’ political philosophies can be regarded as right-wing extremism, interpreting their behaviors requires a deep understanding of their regional histories and cultural contexts. These differences necessitate a narrower focus.

Appendix Table 1. List of Search Terms Used Related to Extremism and White Supremacy. All Search Terms Were Individually Paired With the Base Term “videogames” (e.g., Videogam* AND Extremis*).
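The pairing of base terms with key terms can be sketched programmatically. The following is a minimal illustration in Python, not the tooling used for this review: the function name `build_queries` and the short term lists are hypothetical stand-ins (the full set of 76 key terms is given in Appendix Table 1), and the truncation symbol and Boolean syntax are assumptions that vary from database to database.

```python
from itertools import product

# Base terms for games. The "*" truncation symbol is an assumption;
# each database has its own wildcard syntax.
BASE_TERMS = ["videogam*", "video gam*"]

# Illustrative stand-ins only. "extremis*" follows the example in the
# table caption; the other two are hypothetical and merely echo topics
# named in the text (white supremacy, the alt-right).
KEY_TERMS = ["extremis*", "supremac*", "alt-right"]


def build_queries(base_terms, key_terms):
    """Pair every base term with every key term using Boolean AND."""
    return [f"{base} AND {key}" for base, key in product(base_terms, key_terms)]


if __name__ == "__main__":
    for query in build_queries(BASE_TERMS, KEY_TERMS):
        print(query)
```

Generating the pairs exhaustively, rather than typing them by hand, makes it easier to confirm that every key term was run against every base term in every database.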

We excluded discussions of “garden variety” racism, sexism, and violence in videogames with no explicit ties to right-wing extremism. While still relevant, the literature bases for these topics are too large to sufficiently summarize in this review. The subsequent two searches were supplementary in nature and used to adjust and refine our terms in order to ensure no relevant work was omitted.

The inquiry was limited to English language publications from peer-reviewed journals, thesis papers, manuscripts, white papers, and book chapters as well as a selection of relevant journalistic articles from mainstream and reputable media outlets (The New York Times, National Public Radio, etc.). We then compared our database to the reference sections of the most relevant articles in the corpus to verify the sample. Additional searches were periodically conducted until February 2023 to check for any newer publications or related newsworthy events.

Narrowing and Analyzing the Corpus

We identified 243 potential pieces and organized them to record primary information, such as the source database, author(s), title, journal, publication year, publication type, link to the article, full citation, and the reason for exclusion, if applicable. Further, we extracted data regarding the applied method, the research question(s), findings or arguments, future direction, and additional notes. The research team reviewed each publication to identify those directly addressing the research question. Applying our selection criteria, we only included papers with a substantial focus on right-wing extremism, videogames, and their relationship. Articles that mentioned videogames in passing as an example of broader online extremism trends but did not make any further points or claims about them were excluded.

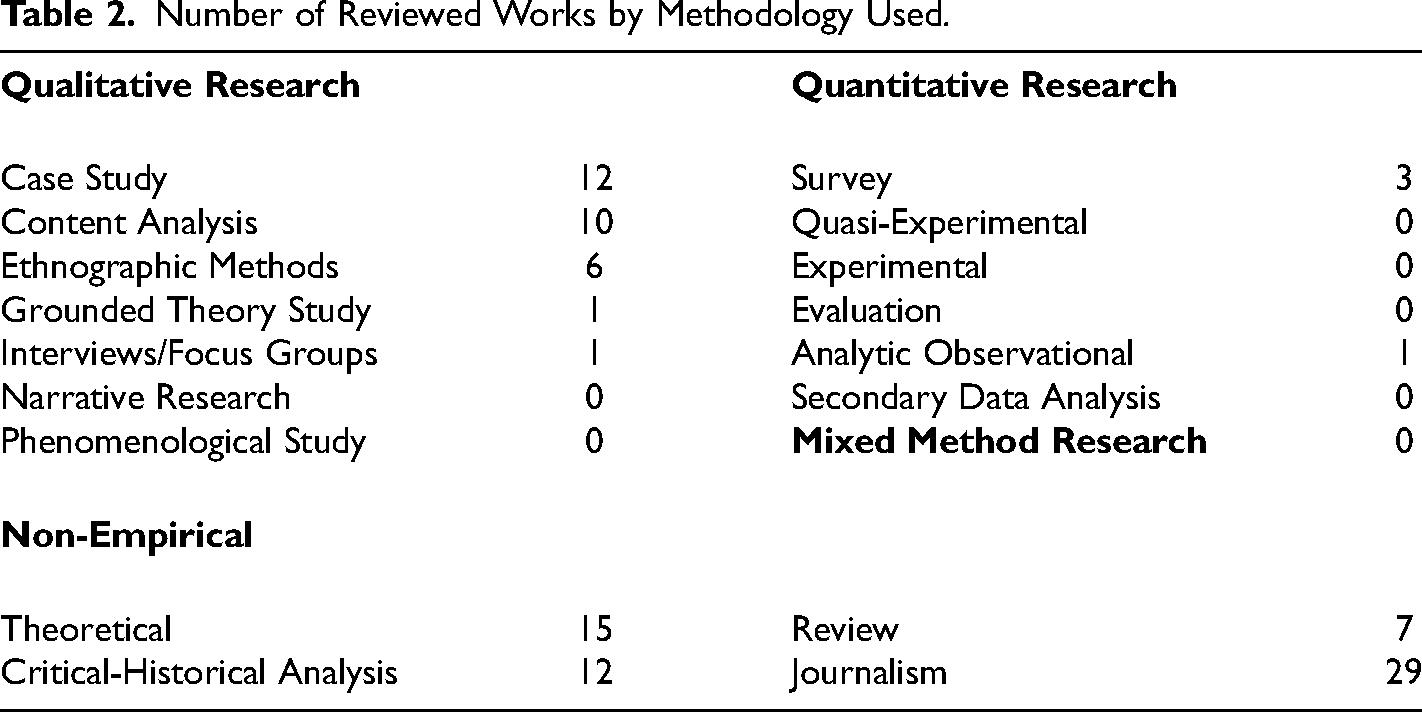

The final literature corpus consisted of 97 works: 37 academic articles, 28 web articles & periodicals, 12 book chapters, nine dissertation theses, six books, and five reports. Appendix Table 2 categorizes works by methodology. The majority of the papers were non-empirical (64.9%; for example, theoretical treatises and critical-historical analyses) or qualitative in nature, with case studies, content analyses, and ethnographic approaches accounting for nearly all empirical studies (28.9%). Journalistic accounts of newsworthy events were included for their relevance and impact on the conversation in the academic literature. Quantitative research on extremism and games was exceedingly rare at the time of this review. The compiled literature spans a wide range of research traditions and includes different types of academic and journalistic work.

Appendix Table 2. Number of Reviewed Works by Methodology Used.
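The reported corpus figures can be cross-checked with a few lines of arithmetic. The sketch below is only a sanity check under stated assumptions: the counts by publication type, the 243 candidates, and the two percentages come from the text, whereas the per-methodology counts (63 and 28) are back-calculated here and are not reported figures, and reading 28.9% as the qualitative share is our interpretation. The names `WORKS_BY_TYPE` and `share` are hypothetical.

```python
# Counts by publication type, as reported in the text.
WORKS_BY_TYPE = {
    "academic articles": 37,
    "web articles & periodicals": 28,
    "book chapters": 12,
    "dissertation theses": 9,
    "books": 6,
    "reports": 5,
}

CANDIDATES = 243      # potential pieces identified before screening
REPORTED_TOTAL = 97   # size of the final corpus


def share(count, total):
    """Return count as a percentage of total, rounded to one decimal."""
    return round(100 * count / total, 1)


total = sum(WORKS_BY_TYPE.values())
assert total == REPORTED_TOTAL  # the six categories add up to the corpus size

# Share of each publication type in the final corpus.
for kind, count in WORKS_BY_TYPE.items():
    print(f"{kind}: {count} ({share(count, total)}%)")

# Share of screened candidates that survived the selection criteria.
retention = share(total, CANDIDATES)

# Back-calculated (NOT reported): counts implied by the stated percentages.
implied_non_empirical = round(0.649 * total)
implied_qualitative = round(0.289 * total)
implied_remainder = total - implied_non_empirical - implied_qualitative
print(retention, implied_non_empirical, implied_qualitative, implied_remainder)
```

Under these assumptions the implied counts round back to the reported percentages, which suggests the figures are internally consistent and leaves only a small remainder for all other methodologies.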

To review this extant literature base, we collaboratively conducted close reading and thematic analysis of each article and then synthesized their primary topics and arguments into shared themes and cohesive claims. At least two reviewers independently read each publication in the corpus and then compared annotations; when differences between primary readers were found, a third reader reviewed the manuscript and together the triad negotiated to resolve the differences. Over 10 weeks, two principal investigators led full team discussions to review and organize the emerging arguments reflected in the literature corpus. In what follows, we summarize the collective claims and arguments reflected in the literature corpus.

Limitations

The design of this review inherently works under the assumption that there is indeed an existing relationship between right-wing extremism and videogames. While the literature included in this report provides general support for this belief, it is possible that studies refuting this relationship also exist but remain unpublished through the “file drawer problem” (Rosenthal, 1979). Andrews (2023) stands as one example, arguing for researchers to consider alternative explanations before asserting a theoretical relationship between gamification and extremist attacks.

While research on the topic of extremism in videogames has increased in recent years in response to the growing problem, the lack of substantial empirical studies available for this review reflects a field still in its infancy. Work to date has focused primarily on theoretical, critical-historical, and journalistic work with some analyzed case studies but few quantitative or qualitative analyses of data. Significant research remains ahead.

It is also important to note the somewhat artificial limitations set in our bounding of the problem under investigation. The ideology of right-wing extremism is built upon several different types of hate, including but not limited to racism, misogyny, and anti-Semitism. These topic areas each have their own expansive sets of literature within games research. While our strict parameters focused this review, they also inevitably excluded literature with import on the topic, however indirect. Incorporating findings from these areas of study would add depth to these results and provide a broader, contextualized understanding of the complex interactions at play.

Finally, by focusing primarily on right-wing extremism in the United States, we lose the ability to assess how other extremist and terrorist organizations utilize videogames across the globe and how these global extremist groups compare and intersect. Here too, a broader review that goes beyond U.S. national borders may provide additional key insights into the nature of the problem and, potentially, how it might be effectively addressed. Limiting our scope geopolitically allowed for deeper analysis into a specific national and political context than would have been possible with the inclusion of outside groups.

Findings

Enabling Conditions in the Game Industry & Market

Lack of Industry Diversity

The games industry is overwhelmingly dominated by White, heterosexual men (Daniels & LaLone, 2012; Euteneuer & Meints, 2020; Hammar, 2020; Nakamura, 2012), with disproportionate under-representation of African-American developers (Hammar, 2020; Ong, 2016). A similar disparity prevails regarding female industry professionals. Women are severely underrepresented, especially in leading positions and content-driven roles, and they frequently earn less than their male colleagues (Bezio, 2018; Tomkinson & Harper, 2015).

As a consequence, women in the games industry are often expected to work in hostile environments where they are at risk of being misrecognized, disregarded, and treated in a manner rarely tolerated in other mainstream industries (Campbell, 2018; Tomkinson & Harper, 2015). Inappropriate behavior has historically been minimized, covered up, dismissed as misunderstandings on the part of the injured parties, or unreported by intimidated victims (Tomkinson & Harper, 2015). Recent lawsuits such as Department of Fair Employment and Housing v. Activision Blizzard, Inc. (2021) highlight these ongoing industry issues for consumers, investors, and the public at large.

Privileging the White Male Gamer

This homogeneity across the mainstream professional game industry has implications for the products they design. From the early 1990s, mainstream games were marketed exclusively to white, male, suburban youth through both imagery and content (Bezio, 2018; Cassell & Jenkins, 1998). This exclusion of marginalized groups and female players was evident in game narratives (Nae, 2018), representations (Russworm, 2018), and mechanics (Salvati, 2019). War, science-fiction, and fantasy themes rose in prevalence, as well as stories revolving around an exceptional white male protagonist fighting enemy invasion or conquering land or space (Harrer, 2020; Leonard, 2006; Nae, 2018). The games that portrayed women at all rendered them either weak, sexualized, or both (Euteneuer & Meints, 2020; Leonard, 2003).

Although the games industry is constantly evolving in terms of gaming platform, genre, and mechanics, this gendered contestation continues without significant abatement (Maloney, Roberts & Graham, 2019). In 2021, an Entertainment Software Association report touted increasing diversity among players in terms of age, ethnicity, and gender, with nearly half of all U.S. game players (45%) identifying as female (Entertainment Software Association, 2021). Yet despite shifts in gamer demography, racist and sexist gatekeeping in the games industry persists: marketing continues to cater to young White males (Bezio, 2018; Braithwaite, 2016; Euteneuer & Meints, 2020), emboldening these players to enforce gaming culture as a white male space (Ratan et al., 2015).

Misogyny at the Root

Gendering games as a male space confers male-identified players a sense of superiority and foments an in-group out-group divide (Euteneuer & Meints, 2020; Hammar, 2020). The increasing female presence in gaming spaces challenges this traditionally masculine custodianship and becomes seen as an incursion into gamer culture rather than an integral part of it. As a result, some male gamers justify racist and sexist behavior as self-defense of their “geek” identity (Campbell, 2018; Tomkinson & Harper, 2015). Humor, sexualization, and objectification are typical instruments of trivializing and excluding gamers from minoritized groups (Euteneuer & Meints, 2020). In more extreme cases, rape threats and death threats are administered instead (Bezio, 2018; Tomkinson & Harper, 2015). In the words of one hate campaign survivor, “They’re [male gamers are] starting to freak out because they feel deeply entitled to this space. They talk about it like it's the last bastion of masculinity. Like, ‘These feminazis have taken everything from us, now they are coming for our games’” (Anita Sarkeesian, cited by Campbell, 2018).

Hardcore Gamers & the Manosphere

Over time, the “hardcore gamer” identity comes to include resentment and aggrievement—a sense that they themselves are the victims and not perpetrators of discrimination. Such sentiments align with broader fears in other reactionary white male organizations about the feminization of school, work, and play spaces (Campbell, 2018); about a general loss of status and identity (Anahita, 2006; Campbell, 2018; Nagle, 2017); and about supremacy (Anahita, 2006; Condis, 2018). It is these feelings of threat and rejection that tie gamers to other predominantly white-male organizations and that make them prime targets for right-wing extremist recruitment.

Members of reactionary white male organizations also blame women for their perceived loss of privilege in cultural spaces historically framed as “theirs” (Anahita, 2006; Campbell, 2018; Condis, 2018). Men's rights activists and alt-right radicals appeal to that motif of fighting against others invading digital spaces by naming white-male gamers as victims of women and minorities looking to deny them their political and social capacities (Bezio, 2018; Condis, 2018). (White) masculinity comes to demarcate the “true” gamer from the “other” (often a minoritized group), who then faces hostility and exclusion as a result (Bezio, 2018; Gray, 2016; Tomkinson & Harper, 2015). It is this same struggle to secure dominance over gaming culture that makes these players particularly susceptible to white supremacist ideas (Bezio, 2018; Condis, 2019b; Kamenetz, 2018). Hardcore gamers blaming women and minorities for their perceived loss of status align with far-right extremists seeking to normalize their fringe worldviews and expand their membership. That alignment is their means to advance a shared narrative of disempowerment in relation to not only videogames but to broader social, cultural, and political life (Condis, 2019a, 2019b; Hansson & Keck, 2019; Kamenetz, 2018).

The Role of Gamergate

The events of Gamergate are often framed as a watershed moment in the relationship between hardcore gamer culture and right-wing extremism (Braithwaite, 2016; Condis, 2018; Harley, 2020; Salvati, 2019). Gamergate was a mass online movement where gamers harassed and abused feminist activists advocating for diversity and equality within gaming communities. The movement began in response to a controversy where a female game developer was harassed for her relationship with a journalist (who had no role in reviewing or covering her work), which allowed Gamergaters to operate under the guise of fighting for “ethics in video game journalism” (Braithwaite, 2016; Quinn, 2017). As Ferguson and Glasgow (2021) note, the intricacies of the situation were complex and many supporters of the movement fell outside of the hardcore gamer stereotype. Nonetheless, right-wing extremists saw Gamergate as an opportunity to recruit like-minded individuals. As Peckford (2020) observes, “opinions expressed by Gamergate supporters … mirror those of right wing extremists; namely, the ‘us versus them’ mentality of feeling disenfranchised, as well as the opinion that their community largely made up of white men had been ‘invaded’ by women and people of colour” (p. 67).

Steve Bannon and Milo Yiannopoulos, leaders in the right-wing extremist movement then working at the conservative news website Breitbart, saw an opportunity to co-opt the erupting hate campaign. They leveraged their resources to provide mainstream coverage and support to the movement (Harley, 2020; Lees, 2016). This strategy appeared fruitful, as subsequent analyses of Reddit sub-communities have found substantial overlap between Gamergaters and fervent Donald Trump supporters (Maloney, Roberts, & Graham, 2019; Martin, 2017).

Over time, online right-wing extremists adopted the harassment tactics of Gamergaters. From the use of terms like “safe space” and “snowflake” for dismissing progressive views (Bezio, 2018) to increased incidents of “doxxing, trolling, and overt threats of violence,” the tactics used in Gamergate are now commonly being implemented by these extremist groups online (Russworm, 2018). Such tactics have become “part of their online routine,” resulting in today's online discourse where “online harassment to silence minorities and especially women is no longer an odd episode, as was initially the case with Gamergate, but has become a ‘normal’ part of the internet” (Aghazadeh et al., 2018). Thus, the Gamergate controversy became the official debut of organized right-wing extremism on the contemporary center stage of youth culture that is games.

Right-Wing Extremism in Mainstream Games

Extremist Early Tech Adoption

White supremacist organizations have always been quick to adopt new technologies as tools for accomplishing their goals (Bliuc et al., 2018; Conway & MacDonald, 2021). The advent of the internet, coupled with its regulators’ unyielding commitment to free speech and self-regulation, has given them a powerful tool to spread their ideologies (Back, 2002; Brown & Hennis, 2019; Hale, 2012). Moving their operations online has made the “dissemination of hate materials quicker and more cost effective” (Hale, 2012). It provided an efficient avenue for fundraising (Fisher, 2021; Squire, 2021), normalizing and publicizing their ideologies amongst the general public (Hale, 2012), and radicalizing and recruiting new members (Conway et al., 2019; Hale, 2012). Extremists exploit the anonymity, ephemerality, and lack of content moderation of digital platforms to spread their messaging with relatively little pushback (Aghazadeh et al., 2018; Beram, 2019; Conway et al., 2019). As videogames and gaming culture have ascended to mainstream prominence, white supremacists have naturally pivoted toward exploiting these spaces for their own benefit. This has resulted in an increasing entrenchment of right-wing extremist politics in mainstream online games and gaming communities (Barlow, 2019; Campbell, 2018; Davey, 2021; Salvati, 2019).

Normalizing Extremist Discourse & Ideology

Right-wing extremist gamers inhabit the same online gaming spaces as millions of other players, providing ideal conditions for normalizing radical ideologies (Anti-Defamation League, 2020a; Barlow, 2019; D'Anastasio, 2020; Hansson & Keck, 2019) and spreading propaganda (Davey, 2021; Robinson & Whittaker, 2020). Two of the most discussed strategies of these groups are filtering their beliefs through humor (Condis, 2019a; Daniels & LaLone, 2012; Euteneuer & Meints, 2020) and using political dog whistles (Condis, 2019a; Lopez, 2015; Peckford, 2020; Salvati, 2019). By presenting their beliefs as jokes and memes, extremists hope to appeal to their young target audience while disguising hate as trolling or sarcasm. As Condis (2019a) describes, it allows them “to showcase their beliefs while also utilizing a thin veil of plausible deniability, making it intentionally unclear whether a particular racist message comes from a place of sincere hatred or if it is just some troll shitposting ‘for the lulz’” (p. 147). Dog whistles work similarly; adopting a more sophisticated rhetoric and coded language allows them to sneak their ideologies past content moderators. One striking example is in the massively popular multiplayer game World of Warcraft (2004) where racist guilds have been said to refer to black players as “Orcs,” hiding their racism within the game's own terminology to dodge accountability (Makuch, 2019).

Such tactics reflect the efforts by white supremacist groups to “soft-sell” their views (Hale, 2012). They utilize pop culture touchstones like games and music to connect with their target audience and then gradually introduce them to extremist ideology (Fisher, 2021). As blatant bigotry and hateful expression have become nearly universally derided, such groups have softened their rhetoric in order to improve their public image and sneak their ideology into mainstream discourse (Bliuc et al., 2018). In both the gaming community and society at large, such furtive efforts allow right-wing extremists to normalize their views and attract potential recruits who might otherwise have been repelled. This approach simultaneously makes it difficult for authorities, regulators, and moderators to find explicit offenses on which to act.

Right-Wing Extremist Games

In addition to normalizing extremist ideology and evangelizing extremist views, white supremacists have designed videogames based on their worldviews (Khosravi, 2017) and made them readily available for download on popular white supremacist websites (Robinson & Whittaker, 2020). In these games, players often assume the role of a cisgender white male protagonist as he is tasked with violently eliminating perceived enemies coming in the form of people of color, people of different sexual orientations, and people of Jewish or Muslim faiths (Selepak, 2010). Some view these games as attempts at appealing to younger audiences (King & Leonard, 2016), while others perceive their function more in terms of community-building and mobilizing established members already familiar with the symbols, iconography, and underlying ideology (Robinson & Whittaker, 2020). Regardless of their ultimate function or purpose, extremist developers’ general lack of game development expertise lends many such titles an overall poor production value that, in turn, limits their popularity, even among other like-minded individuals (Běláč, 2018; Robinson & Whittaker, 2020).

As a result, there is a more recent shift in focus from developing extremist videogames wholesale to instead injecting extremist content into existing mainstream titles through modding. The modders will insert changes, such as “Nazi themes, graphics, and game objects” (Salvati, 2019), Ku Klux Klan skins (Hernandez, 2020), and NPCs designed to look like members of targeted minority groups that players can attack (Khosravi, 2017). The most frequently targeted games for modding are popular first-person shooters such as Doom (1993) and Counter-Strike (2000) (Condis, 2019a; Khosravi, 2017), the same genre used in most white supremacist-developed titles.

For other game mods, their extremist nature and intent are less overt and easier to plausibly deny. Extremist mods of historical strategy games like Hearts of Iron IV (2016) and Civilization (1991) (Salvati, 2019; Winkie, 2018) adjust gameplay restrictions so that players can “recreate” history to better reflect their own fringe worldviews, for example, by making Hitler-led Nazi Germany a playable nation or setting the extermination of certain countries or ethnic groups as an in-game win state. As Salvati (2019) states, “the practice of modding strategy games to include explicitly racist, ethno-nationalist, or neo-fascist themes (often defended by their creators on grounds of comedic irony or free expression) has become something of a cottage industry in recent years” (pp. 156–157). Whether intended to spread white supremacist propaganda or to allow players to indulge in right-wing extremist fantasies, such game mods provide hate groups with potential recruitment and dissemination tools with far more mainstream appeal than less polished games developed wholly on their own.

Mainstream Games as Soft Sell White Supremacy

Mainstream videogames are culturally appealing to right-wing extremists beyond a shared sense of white male aggrievement and home-grown extremist game builds or mods. Many mainstream games advance in-game representations, narratives, and mechanics that reflect existing racial and structural inequities and even align with extremist worldviews (Harrer, 2020; King & Leonard, 2016; Leonard, 2003, 2006; Nae, 2018; Salvati, 2019). As King and Leonard (2016) describe, “Games such as World of Warcraft (2004), Resident Evil (1996), Killzone (2004), Grand Theft Auto III (2001), Grand Theft Auto: Vice City (2002), and various World War II games are ubiquitously cited as both sources of fun and games that allow for the celebration or promotion of white nationalism” (p. 113). Some extremists view such titles as educational tools showing the true, criminal nature of people of color (King & Leonard, 2016; Leonard, 2006), as opportunities to play out anti-Semitic and anti-Muslim ideological fantasies (Davey, 2021; Vaux, Gallagher, & Davey, 2021), or even as training modules to prepare for race war (King & Leonard, 2016).

Game developers help promote and maintain white dominance as the social norm by allowing stereotypes to guide depictions of individuals from marginalized groups (Aguilera, 2022; Daniels & LaLone, 2012; Harrer, 2020; King & Leonard, 2016; Leonard, 2006; Russworm, 2018; Salvati, 2019). Asians are often depicted as criminals or martial artists (Hutchinson, 2017; Leonard, 2006), African Americans appear as violent antagonists or athletes (Daniels & LaLone, 2012; Leonard, 2003; Ong, 2016), and female characters are often sexualized and positioned as victims or prostitutes (Daniels & LaLone, 2012; Euteneuer & Meints, 2020; Leonard, 2003). Such design choices perpetuate racial biases and misogyny found in gamer culture and society more broadly. Leonard (2006) emphasizes this point, arguing that “such stereotypes do not merely reflect ignorance or the flattening of characters through stock racial ideas but dominant ideas of race, thereby contributing to our commonsense ideas about race, acting as a compass for both daily and institutional relations” (p. 85).

The setting and narrative of mainstream games also often promote notions of white superiority. War games situated in the Middle East (e.g., Call of Duty (2003), Medal of Honor (1999)) are seen to justify American imperialism and to promote the notion of a war against black and brown people in real-life conflict zones (Grewal, 2017; Harrer, 2020; Leonard, 2006; Mckeand, 2021; Steeby, 2019). Strategy games such as Civilization (1991) and Hearts of Iron (2016) often appeal to right-wing extremists for their emphasis on playing out fantasies of nationalism and imperialism (Khosravi, 2017; Salvati, 2019; Vaux, Gallagher, & Davey, 2021). Such games are characterized as applauding settler colonialism and promoting Western conceptions of progress where “it is presumed that technological modernity is the ideal state of human society, and its pursuit justifies annihilation of the game's ‘unproductive’ minor tribes” (Salvati, 2019, p. 161). Even games like Far Cry 5, designed to critique right-wing radical trends in modern politics, may have helped these groups by depicting them as “inept and ineffective” rather than outright condemning their beliefs (Green, 2022, p. 1033). Such white-centric perspectives in mainstream commercial games may normalize and soft-sell right-wing extremist views to millions of players.

Insufficient Response From Leadership

From the inception of the internet (Back, 2002; Brown & Hennis, 2019; Hale, 2012) to the first steps of virtual reality (Harley, 2020), the purveyors of modern communication technologies have long prioritized free speech and self-regulation at the expense of minoritized groups of consumers targeted by harassment. Unfortunately, as Condis (2019a) argues, “this desire on the part of technology companies to wash their hands of their responsibility to moderate their platforms is a key feature for white supremacists and other hate groups to exploit” (p. 152).

The Apolitical is Political

While game companies often claim an apolitical stance (Pfister, 2018), their silence on the misogyny in the industry and market, the targeted harassment of marginalized communities, and the alarming presence and promotion of extremist ideologies arguably reflects the opposite (Aghazadeh et al., 2018; Anti-Defamation League, 2019; Condis, 2019a). Their refusal to take action against white supremacy allows extremist groups to openly normalize their ideologies and indoctrinate players into them (Condis, 2019a; D’Anastasio, 2021; Makuch, 2019).

Game-related technology and service providers are culpable as well. The digital game store Steam (Anti-Defamation League, 2020b; Campbell, 2018; D’Anastasio, 2020; Vaux et al., 2021) and communication platform Discord (Davey, 2021) are both frequently cited as spaces harboring extremist activity within their forums and private servers. Discord even served as the organizing hub for the deadly 2017 “Unite the Right” rally in Charlottesville, Virginia (Barlow, 2019; Conway et al., 2019; D’Anastasio, 2020; Salvati, 2019; Theriault, 2019), and only removed the responsible accounts after the fact (Roose, 2017).

Lack of Content Moderation

Despite the evidence of right-wing extremists on games and game-related platforms, companies generally avoid addressing online hate or implementing effective moderation practices (Brown & Hennis, 2019; Davey, 2021; Makuch, 2019). Consequently, the industry fails to protect its communities, leaving members vulnerable to harassment and exposure to extremist propaganda (Condis, 2019a; Makuch, 2019).

Content moderation is a daunting task for companies, particularly when trying to implement it on a global scale (Kamenetz, 2018; Roberts, 2019; Theriault, 2019). However, both Condis (2019a) and Hammar (2020) note there may be other factors at play. They argue that ignoring white supremacist activity increases profits through the heightened engagement that hate speech inevitably generates while simultaneously circumventing the expenses associated with addressing it. The players producing hate speech can often be game companies’ most passionate consumers, further incentivizing a hands-off approach to moderation.

Players are given flags and reporting systems to file complaints against bad actors (Kamenetz, 2018; Nakamura, 2012). Such practices allow game companies to delegate the moderation burden to community volunteers, who then must invest their time and labor in monitoring and reporting malevolence (Brown & Hennis, 2019). Reporting, however, can only confront hate-based harassment retroactively (Nakamura, 2012), and many players still choose not to report, citing the perceived ineffectiveness of the existing systems (Anti-Defamation League, 2020a).

The failure to eliminate such behavior has created an environment that tacitly condones it. Videogame companies allow their services to continue without impediment or intervention, even when overrun by extremist, sexist, or racist speech (Gray, 2016). As a result, many players have simply resigned themselves to accepting disruptive behavior as an expected or normal part of gameplay (Anti-Defamation League, 2020a). When players shout or type explicitly racist, sexist, or antisemitic slurs during gameplay, though, dismissing it as harmless only helps normalize extremist rhetoric.

Lack of External Law and Policy

Legislative regulation fails to keep up with the recruitment strategies employed by right-wing extremist groups. The United States has long-standing general harassment laws that have been retrofitted to cover online hate and harassment like cyberbullying, doxing, and swatting (Anti-Defamation League, 2021b; Hinduja & Patchin, 2021). However, these laws are inconsistent in their coverage, often unenforced, and, as Aghazadeh et al. (2018) note, they generally do not address mob behaviors like those used in Gamergate. In methods of mob harassment with “multiple individuals each contributing a small piece to the online torrent of abuse, law enforcement officials are stymied when they attempt to prosecute one person for the attacks” (Aghazadeh et al., 2018, p. 193). Further, any new laws will likely still see challenges trying to address more subtle provocations, including those hidden in memes and dog whistles. Regulators’ strict adherence to the concept of free speech in the First Amendment often allows hate speech to slip through the cracks (Cohen-Almagor, 2018; Lamberg, 2001), exposing at-risk groups to abuse online. By eschewing stronger regulations against hate speech in favor of a broader interpretation of free speech, the federal government “helps to normalize or even authorize the relevant hate speakers to carry on doing what they are doing” (Cohen-Almagor, 2018, p. 45). Likewise, communication and VoIP apps like Discord are protected under 47 U.S.C. § 230: Protection for private blocking and screening of offensive material (1996), which frees platforms from liability for user-generated content, allows them to screen content without being labeled a publisher, and demotivates the policing of content (Communications Decency Act of 1996, 1996). This enables platforms to harbor extremist and white supremacist ideology with little fear of government oversight.

Politicians have made efforts to introduce legislation that punishes these offenses and holds companies accountable, although with limited results (Aghazadeh et al., 2018; Makuch, 2019). With these past struggles for legislative regulation in mind, Roblox's lawsuit against Ruben Sim (Carpenter, 2021) represents an intriguing step in determining how game companies might utilize legal means to hold players accountable.

Conclusions

Many mainstream games reflect inherent equity and inclusion problems in the industry. Those games then circulate cultural models that position non-White, non-male persons as outsiders. This, in turn, has fomented toxicity and harassment toward marginalized players and allowed gaming culture to become one where once-fringe, anti-democratic, right-wing extremist views are increasingly normalized. As a result, misogynists and white supremacists continue to operate in the open with impunity. The long-term impacts of a generation of youth witnessing, if not participating directly in, such a culture without consequence are difficult to predict. The immediate impacts of hate directed towards marginalized players continue. Much work remains as we identify and understand the conditions, mechanisms, consequences, and enablers of this process.

Acknowledgments

This work was generously supported by a small gift from the Anti-Defamation League, although the opinions, conclusions, and recommendations listed herein may not necessarily reflect their views. We would also like to thank Julia Gelfand, University of California-Irvine School of Information and Computer Sciences Librarian, for her assistance in identifying and obtaining many of the articles cited herein.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Author Biographies

Agnes was a curator at the Semmelweis Museum in Budapest, Hungary, and before coming to UCI, she worked as a web developer and UX designer in Okinawa, Japan.

Agnes’s research focuses on how game design and added moderation tools impact the social dynamics in online multiplayer games. She seeks to find ways to reduce disruptive behavior and hate-based harassment and create more welcoming, inclusive, and safe environments for all. In her current research, she examines game companies’ policies and moderation mechanisms to understand how to improve online safety and the well-being of the gaming community.

Constance formerly served as Senior Policy Analyst under the Obama administration in the White House Office of Science and Technology Policy, advising on videogames and digital media. She is the founder of the Federal Games Guild, a working group across federal agencies using games and simulations as tools for thought, and the Higher Education Video Games Alliance, an academic not-for-profit organization of game-related programs in higher education. Her research has been funded by the Anti-Defamation League, the Samueli Foundation, the MacArthur Foundation, the Gates Foundation, the National Academy of Education/Spencer Foundation, the National Science Foundation, and the Universities of Cambridge, Wisconsin-Madison, and California-Irvine. She has published over one hundred articles and book chapters including six conference proceedings, four special journal issues, and two books. She has worked closely with the National Research Council and National Academy of Education on special reports related to videogames, and her work has been featured in Science, Wired, USA Today, New York Times, LA Times, ABC, CBS, CNN, NPR, BBC, and The Chronicle of Higher Education.

Constance has a PhD in Literacy Studies, an MS in Educational Psychology, and three Bachelor’s Degrees in Mathematics, English, and Religious Studies. Her dissertation was a cognitive ethnography of the MMOs Lineage I and II where she ran a large siege guild. Her husband Dr. Kurt Squire is Co-Director of the GLS center at UCI. They live with their two adolescent gamers in Southern California where they enjoy surfing, trail running, camping, and all manner of headset-wearing, dps-flinging, computer-screened mayhem.