Abstract

Defence often uses rationalistic approaches for the adoption of AI, but we question whether this is appropriate. By studying adoption using naturalistic approaches in the work environment where AI is actually employed, we can better capture situated issues around trust and adoption. We conducted a longitudinal study of expert intelligence analysts adopting a machine-vision Object Detection and Recognition Overlay (ODRO) within their mission teams and tooling, premised on improving analyst functions. We employ and adapt naturalistic decision-making models to identify key situational challenges, showing that naturalistic approaches can better inform Defence’s AI adoption. Specifically, we find that organisational rewards for accuracy influence analysts’ willingness to adopt and rely on imperfect tools, and that analysts develop a non-uniform relationship with ODRO over time; we translate both findings into design implications. Crucially, our results support a model of interdependence over prevalent task-allocation paradigms that treat humans and AI as functionally divided, rational entities.

Keywords

Introduction

Defence organisations face a paradox: conceptual thinking and technology suppliers promise that Artificial Intelligence (AI) will provide a decision advantage, yet its adoption remains slow and uneven across the organisation. Internally, the Defence Concepts Teams (Ministry of Defence, 2018) have proposed that automated tools such as machine vision must be adopted to achieve a decision advantage over adversaries, and recent reports have identified the need to ‘rapidly up our digital game’, highlighting the gaps between ambition and observed practical adoption (House of Commons, 2025). Allies share this view, including NATO, which has identified AI as a cross-cutting capability that will embed itself across operational processes, including the intelligence function, in the coming decades (Wells, 2022). There is therefore a strategic imperative to adopt AI, yet achieving that adoption in practice remains difficult.

We report a longitudinal naturalistic study of expert intelligence analysts as they adopt a machine vision tool. The analysts’ role is to identify and recognise objects, scenes, and activities within live and historical video footage. This human analytical role is time- and resource-consuming; it can be monotonous, with lengthy video streams often containing little to no recognisable activity. At other times, high levels of concentration are required to interpret and understand busy environments. Analysts therefore make decisions in complex environments under time pressure, accountability, high stress, and uncertainty. The machine vision tool that the intelligence analysts are adopting is an Object Detection and Recognition Overlay (ODRO) that is being introduced into existing workflows. ODRO provides visual outputs in the form of bounding boxes around detected objects, classification labels, and confidence percentage indicators overlaying the video feed. We focus on the situated factors that shape operator trust as analysts adopt this tool and embed it into their work practice.

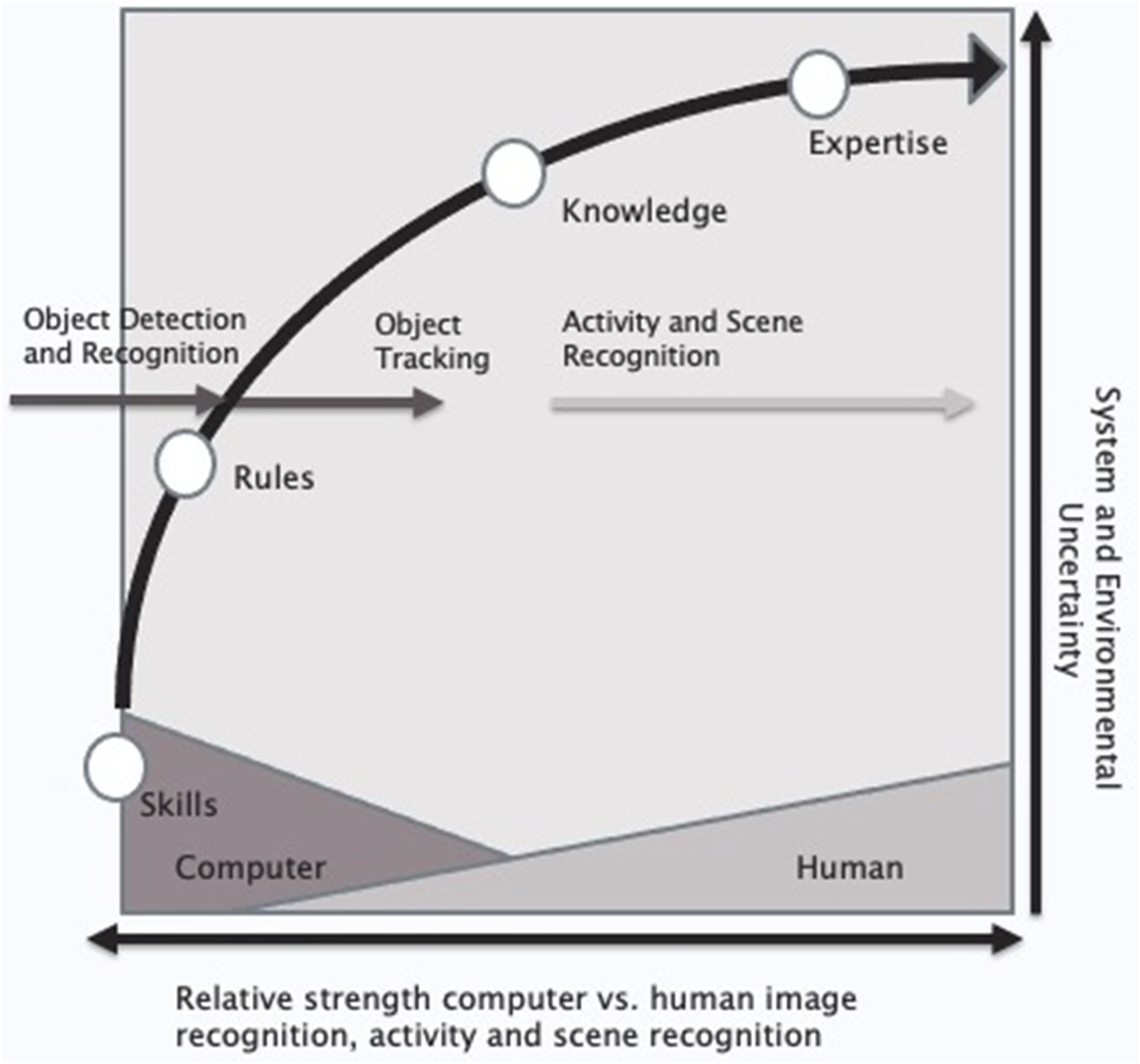

ODRO is designed to detect, recognise, and track objects. It cannot perform activity or scene recognition, which is beyond its relative strength (Cummings, 2018), but it supports the analyst’s activity recognition. For example, ODRO can detect, classify, and track a vehicle. It is for the analysts to recognise the scene, and they hold responsibility for any decisions made on the basis of that recognition. Thus, there is an interdependence between analysts and ODRO, as the analysts’ decision-making is informed by the object detection and tracking that ODRO performs. It should be noted that ODRO is imperfect: it makes errors in its detections, it misses objects, and it incorrectly identifies and classifies objects. Depending upon the situation, these errors could have severe consequences if analysts acted upon them.

We focus on three related but distinct constructs.

Firstly, trust, which has been conceptualised as a relational construct (Castelfranchi & Falcone, 1998; Mayer et al., 1995). Trust is informed by an individual’s willingness to depend on another party. A dependence belief may be hard, manifesting as a perceived necessity (‘I must depend on the party to achieve the desired outcome’), or soft, manifesting as a flexible preference (‘I will achieve a better outcome by depending on the party’) (Castelfranchi & Falcone, 1998). ‘Trust’ is therefore the analyst’s dynamic belief state governing their willingness to rely appropriately on ODRO. It is a relational state influenced by technical factors (system accuracy and predictability), situational factors (mission criticality), and organisational factors (rewards). Specifically, we examine how the organisational imperative for accuracy acts as a reward mechanism that shapes the analysts’ willingness to adopt and rely on the tool. Trust is continually earned and re-calibrated through the analyst’s ongoing experience, particularly as they learn the boundaries of system failure.

Secondly, interdependence. Hoffman and Johnson studied the interdependence between humans and machines (Hoffman & Johnson, 2019), building on Cummings’ observation that machines and humans each have relative strengths (Cummings, 2018) and that the strengths of each need to be leveraged. Considered as a method, interdependence can be used to understand the relationship between analysts and tool, and to inform the design approach. Under this approach, the question becomes how we build and support interdependence relationships, rather than how we allocate functions (Johnson et al., 2018). ‘Interdependence’ denotes the ongoing, mutual shaping of the human’s and the tool’s contributions over time within the socio-technical system. It recognises that the relationship is not a simple rationalistic allocation of functions but an evolving coupling that leverages the relative strengths of both participants: ODRO in object detection and tracking, and the analyst in scene understanding and decision-making. This is manifested in the analyst’s sense of being informed by the system rather than simply using it.

Thirdly, ‘adoption’ refers to the act of integrating ODRO into the analyst’s practices and product development, representing a behavioural and practical commitment where the tool is embedded into the daily workflow. This process is dynamically shaped by the analysts’ evolving perceptions of ODRO, which we discuss as a shift from ‘teammate’ to ‘tool’. By clarifying the distinctions between our three constructs, we can interpret how analysts calibrate their reliance on ODRO and reshape their workflows as it is adopted.

Consequently, this study aims to identify and articulate the factors of interdependence that intelligence analysts perceive as influencing their trust and the adoption of the ODRO tool. This, in turn, facilitates the development of an interdependent relationship in the pursuit of decision advantage. Hence, we propose two research questions:

- R1. What situated factors shape perceived trust and adoption when expert analysts begin using ODRO in complex environments?
- R2. How do trust and adoption perceptions change, with experience and across situational contexts, for example, the perceived criticality or risk to mission?

R1 examines the perceived factors that influence trust and adoption in complex settings. While trust and adoption have been studied extensively in the context of human–machine interaction, our research diverges by exploring them in complex, highly critical, risky Defence environments that are characterised by stress and uncertainty, and in which tools are used in team-based settings. R2 was developed to address the research gap on the dynamic and situated nature of trust (manifesting as undertrust and overtrust) and its impact on informed decision-making and information sharing between analysts and ODRO.

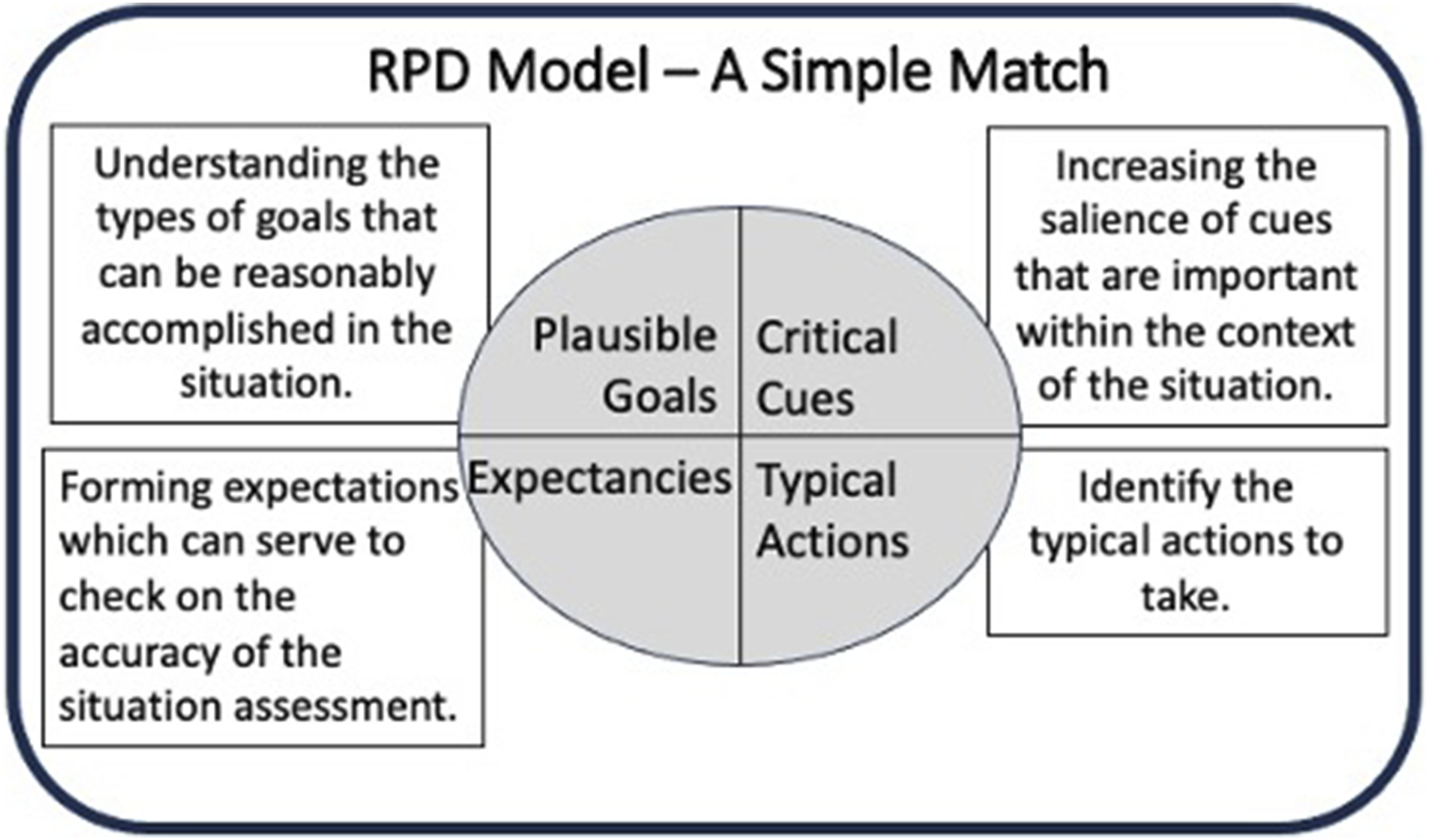

In this study, we have adapted and applied Klein’s recognition-primed decision model to deconstruct the decision-making process, allowing us to understand how the tool informs analysts’ decision-making. Klein’s model was chosen because its application has been shown (Bond & Cooper, 2006) in situations where expert analysts continually strive to make sense of the situations they observe, leveraging their experience to recognise patterns within these situations. This process aligns with the way analysts describe the construction of an evidence-based shared narrative when they are performing their role.

This study makes three contributions. First, it identifies the specific, situated factors of ODRO adoption as perceived by the analysts: the accuracy of the object identification; the analysts’ knowledge of the boundary conditions, that is, understanding and predicting when and why the tool will fail; the tool’s configurability, in particular setting the thresholds for object identification; and the organisational rewards that shape the analysts’ behaviours such that they are willing to rely on the tool and integrate it into their workflow. Second, we identify and document a non-uniform reframing among the analysts, who initially conceived of ODRO as a ‘teammate/advisor’ before adoption and viewed it as a ‘tool’ after adoption, highlighting how role, risk, and context change the interdependence they have with ODRO. Third, we translate these insights into design and adoption implications for Defence, exposing the requirement for configurability that is informed by an analyst’s understanding of when a system such as ODRO may fail. This is the first longitudinal, naturalistic study of ODRO adoption in operational defence settings, providing unique insights into how trust and interdependence evolve in real-world analyst teams.

Related work

In this section, we examine the literature that informs our understanding of trust, interdependence, and the adoption of AI tools such as ODRO. We focus on how these constructs have been conceptualised and operationalised in complex environments, particularly those characterised by uncertainty, risk, and human–machine collaboration. Our aim is to identify frameworks and findings that support the development of situated interdependence between analysts and AI tools, and to explore how these insights can inform Defence’s approach to adoption.

Trust in automation and AI

The literature on trust in automation and AI is developing apace (Bradshaw et al., 2013; Hoff & Bashir, 2015; Hoffman et al., 2018; Hoffman & Johnson, 2019; Parasuraman et al., 2000) as a result of the drive to delegate more aspects of our daily routine to machines; this creates a need for mutual trust between humans and intelligent machines (Andras et al., 2018). Foundational research has identified key components of trust, which we define as the analyst’s belief state governing their willingness to rely on ODRO. Trust is informed by predictability, dependability, and faith (Lee & See, 2004; Muir, 1987); it informs the decision to rely on a tool and so calibrates reliance on an automated system. Users build trust in an automated system through processes similar to the way trust forms between humans (Muir, 1987). Further work has provided principles of trust (Castelfranchi & Falcone, 1998) that inform a decision to delegate. Although developed for multi-agent systems, these principles provide a helpful construct for the trustor’s belief that they should delegate. Much of this foundational research assumed that trust is static and uniformly distributed among users. Our findings differ: trust in ODRO was dynamic and situated within context, evolving over time as the analyst gained experience of the tool in varying operational settings with varying demands. Recent work has recognised that trust is not a static attribute; it is a dynamic belief state that changes over time, updated by the experience and interaction that one has with the tool. Most recently, Li et al. (2024) proposed a multidimensional framework that includes the trustor, the trustee, and the interactive context, which we refer to as the situated environment. Here, it is suggested that trust is shaped by both technical performance and the situational environment in which the system is employed. Furthermore, the underlying provenance of this technical performance is critical. 
Research into data-driven industrial applications indicates that the method of model development directly impacts configurability and accuracy. Addula and Sajja demonstrate that utilising Automated Machine Learning (AutoML) to automate hyperparameter optimisation and model selection can mitigate the human variables typically found in manual model design (Addula & Sajja, 2024). By streamlining these complex configuration steps, development pipelines can achieve higher repeatability and closer adherence to theoretical optimums (Addula & Sajja, 2024), thereby establishing the baseline of technical reliability that operational users, such as our analysts, must assess. Our study extends this by showing that in Defence settings, trust is shaped not only by the technical performance of the system and its operating context, but also by organisational ‘rewards’ (Galbraith, 2009) that shape the analyst’s behaviour, and by the analyst’s evolving understanding of the system boundaries, such that they perceive that they know when the tool will fail. Afroogh et al. argue that trust and distrust act as regulators of AI adoption, and that trustworthiness must be understood in both technical and ethical dimensions (Afroogh et al., 2024). We complement this with our empirical evidence, where analysts describe trust as being earned over time, informed by their perception of the tool’s accuracy and by understanding the limitations of the system when they expect ODRO to fail.

Interdependence and human–machine teaming

Traditional models of automation often focus on task/function allocation, determining which tasks are best performed by humans and which by machines, an approach captured in the phrase MABA-MABA, ‘men are better at, machines are better at’ (Fitts, 1951). This rationalistic approach forms the foundation of current AI design philosophies that see the AI (ODRO) and the analyst as two rationalistic entities in a relationship predicated purely on fixed functions and task allocation. This overlooks the fundamental point that the analyst’s decision-making is naturally dynamic and situationally driven, necessitating a theoretical shift. The models and arguments on automation have moved on, with the recognition that substitution was not appropriate, as ‘designers accept that automation will transform the practice of people and be prepared to learn from these transformations as they happen’. As a result, the question needed to change to how to design for coordination between people and automation (Dekker & Woods, 2003). More recently, Hoffman and Johnson have strengthened this argument, contending that design must shift toward design for interdependence, where human and machine capabilities are entwined and mutually leveraged, thereby reframing the design challenge from task/function allocation to relationship building (Hoffman & Johnson, 2019). While these models provide the theoretical foundation, there is a gap regarding how interdependence is actually experienced in operational Defence environments. Our findings address this gap by documenting how analysts’ perceptions of ODRO shift from viewing it as a teammate or advisor to seeing it as a tool, and how this reframing is influenced by the situated context. We perceive that this reframing towards design for interdependence is critical, as it describes the mutual shaping of the human’s and the tool’s contributions over time within the socio-technical system. 
Interdependence is not static; while augmentation focusses on the additive capability of tools, interdependence addresses the dynamic teaming required. In the situated complex operating environment, the tool’s detections are imperfect; to employ the tool, analysts must therefore recognise and dynamically mitigate its imperfections.

Boundary conditions

For a comprehensive review of the challenges of adopting automation, we recommend Lee and See’s review (Lee & See, 2004). Most importantly, they developed a conceptual model that described how trust is governed and the effect that this has on the user’s reliance on automation. Lee and See introduced the term ‘boundary condition’, to include situations where there is uncertainty and complexity when using automation, when it is not possible to evaluate all possible options. They identified a need to define these conditions to inform design and adoption approaches and to ensure appropriate trust is used (Lee & See, 2004). We question whether these boundary conditions can be sufficiently defined to ensure appropriate trust is possible in the context of Defence’s complex operating environments. We must question how they will be learnt over time by users, given that ‘each interdependent situation can be unique’ (Gerpott et al., 2018). Our research suggests that users will need to develop and update their patterns of interdependence with ODRO, informed by direct experience with varied and unpredictable boundary conditions. This is a key area where our findings extend the literature by showing how boundary conditions are learnt and operationalised in practice, rather than simply defined in advance.

Augmentation and trust

The work of Bradshaw et al. is applicable to our context, as it describes the challenges of adopting automation and autonomy within complex military settings (Bradshaw et al., 2013). We note that we are progressing beyond automation to a situation of interdependence (Johnson et al., 2018). This occurs because, while ODRO can detect and track, it does not have the relative strength (Cummings, 2018) for scene recognition, which is performed by the analyst. Designing for such interdependence requires us to understand the machine’s critical contributions upon which the analysts will depend. Castelfranchi and Falcone describe this as a dependence belief: a strong dependence when an analyst perceives that they need to depend on the tool, or a weak dependence when they perceive that it is better to rely on it than not (Castelfranchi & Falcone, 1998). In either case, the relationship must be appropriate to deliver efficient and effective outcomes (Lee & See, 2004). When considering what informs this, if we accept that environmental complexity exists, boundary conditions must be explored sufficiently to be perceived as being understood. Makarius et al. extend the concept of boundary conditions to include people in the context, covering both the role they fulfil and the technical readiness of the adopted solution (Makarius et al., 2020). Our study examines the role element, acknowledging both the role of the user and the tool as a situational context, and adds to this literature by empirically demonstrating how analysts calibrate their dependence on ODRO based on three key factors: firstly, their perception of its technical capabilities; secondly, the changing situational demand; and finally, their own perceived competence. This situational dependence is not static; it evolves as analysts adapt to their mission, changing environments, and shifting operational requirements.

Frameworks for situated AI adoption

Adoption frameworks for AI often draw on rationalistic models, in which performance metrics and utility inform the adoption of tools. However, we perceive that naturalistic decision-making models offer a more holistic framework for the situated adoption of these tools. Thus, Klein’s Recognition-Primed Decision (RPD) model (Figure 1) was adopted to understand the analyst’s process of expert decision-making in naturalistic settings, and to help define where and how interdependence occurs between ODRO and the analysts as decisions are made. The model was selected because it has been shown to describe decision-making in emergency and critical situations (Bond & Cooper, 2006) and because it accounts for the operational setting and the experience of the decision maker. The Cummings model of skills, rules, knowledge, and expertise (Figure 2; Cummings, 2018), although criticised for being too general to be of use (Hoffman & Johnson, 2019), was selected and adapted because it was designed to support individuals from differing technical backgrounds as they conceptualise the functional allocation that occurs between human decision makers and autonomous systems. When adapted using Kamkar et al.’s taxonomy of detection, recognition, tracking, and scene recognition (Kamkar et al., 2020), the model helps to describe the limitations of ODRO’s capabilities and where the analysts’ expertise best fits.

Figure 1. Klein’s RPD model for adoption, used to identify where ODRO was supporting the analyst’s expert decision-making.

Figure 2. An adapted relative strength model.

Domain specific considerations

A significant portion of the trust literature focuses on domains such as autonomous driving. Our study differs because the domain we research presents unique challenges worthy of study, and our study of ODRO adoption in an operational setting provides the opportunity to identify how trust and interdependence are shaped by them:
• High pressure: Analysts are required to make rapid decisions based on continually changing visual cues.
• False alarm rates: ODRO misclassifies objects, requiring vigilance from the analyst and imposing additional cognitive load.
• Consequences of error: Misidentifications could have significant implications.

There is a notable gap in the literature regarding how trust and adoption are negotiated in such high-pressure, complex Defence contexts. Our work addresses this gap by providing empirical evidence from a longitudinal, naturalistic study of expert analysts, highlighting the situated and evolving nature of trust, adoption, and interdependence in real-world operational environments.

Method

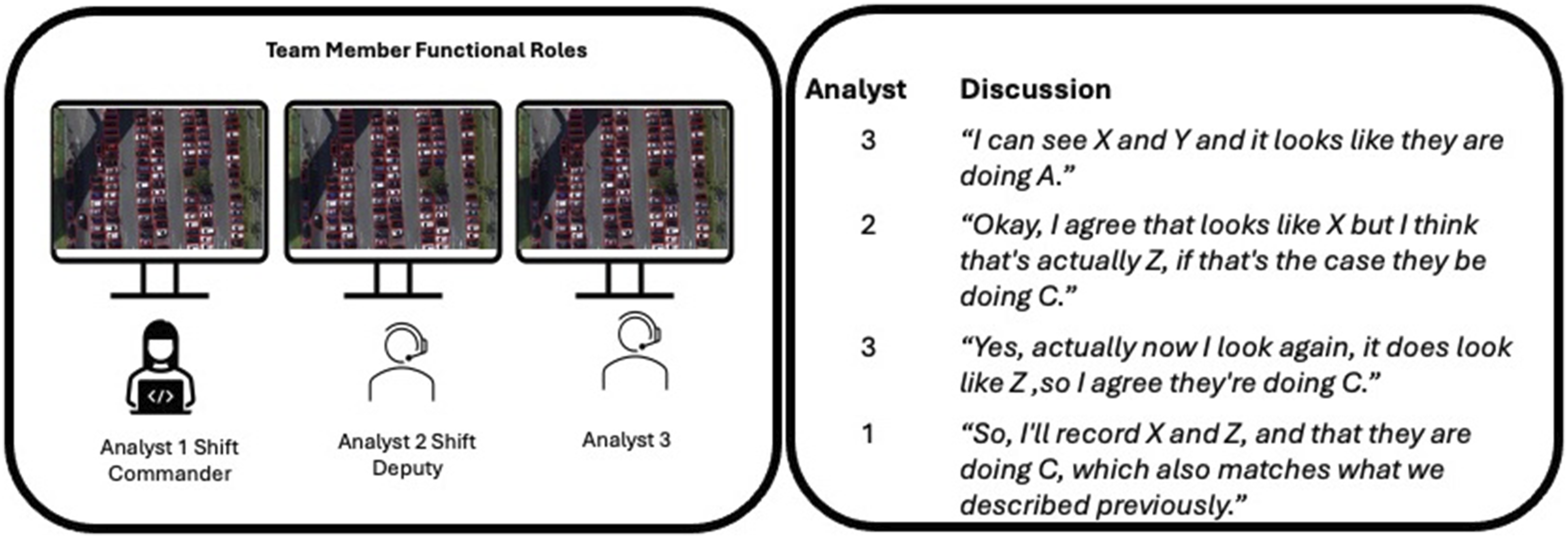

This qualitative study was developed and undertaken collaboratively with experts from various disciplines, including machine learning, human-computer interaction, and defence. As a result, we were able to obtain detailed and open insights from the participants in the functional roles described in Figure 3.

Figure 3. Team members and their functional roles.

Participants

A total of 12 participants were recruited for this study. All participants were intelligence analysts serving in the armed forces.

The analysts were drawn from four teams on two shifts and had previously worked together. Participation in the study was voluntary, with no direct incentives or coercion. Potential participants were approached via an internal email; each was provided with an informed consent form and then briefed on the study’s aim, its confidentiality, and their anonymity before becoming a study participant. The inclusion criteria required analysts to be in date for their intelligence training and to have at least six months’ experience within their current role. There was no exclusion on the basis of rank, gender, or age. Demographic data was collected but will not be shared as part of the study. The research was approved by the University of Southampton Ethics Panel (ERGO 57535) and had a favourable opinion from the Ministry of Defence Ethics Committee (1096/MODREC/20).

Three participants moved organisations during the study; while unforeseen, this is a common occurrence. As a result, nine participants completed the longitudinal study.

At the start of the study, participants had between 9 months and 2 years of experience in their current role and between 1 and 20 years of total experience as intelligence analysts. All participants were performing object detection and recognition tasks at the time of this study, and no participants had used ODRO before.

Study design and procedure

This was a two-phase longitudinal qualitative study.
• Pre-deployment interviews captured the analysts’ baseline attitudes, expectations, and previous experience with automation and AI tools.
• Deployment interviews were conducted after a three-week interval comprising two weeks of hands-on ODRO training and one week of operational use.

All interviews were conducted individually, using Microsoft Teams in a voice-only setting, by the same researcher to ensure consistency. The interviews lasted between 45 and 90 minutes; the audio was recorded with the consent of the participant and subsequently transcribed verbatim. On completion of the pre-deployment interviews, ODRO training was delivered through practical exercises by a field service representative of the organisation that provided the ODRO tool. During the practical exercises, participants were able to explore the capabilities and limitations of ODRO and develop ways of working that allowed them to integrate it into their workflow.

During both the pre- and post-deployment phases, analysts worked in their current team configurations. Teams comprised three roles. Two analysts actively monitored the video feed: one described what was seen, while the second annotated the observed activity. The third role, the team commander, then took the output from the two analysts to develop an intelligence product. Throughout their shift, analysts engaged in continuous verbal, non-verbal, and visual coordination, sharing, challenging, and refining their recognition of objects and activities. ODRO was integrated into this workflow, providing detections that analysts would discuss, verify, and incorporate into their intelligence products. This workflow and team structure reflect actual real-world operation, thereby ensuring ecological validity and relevance to operational practice.

Figure 3 illustrates the typical flow of information and interaction among team members; while difficult to depict, box 2 shows an example of the continual conversation that occurs between the analysts as they share and challenge and form a recognition of what is occurring within the scene.

Design

Semi-structured questioning investigated trust, adoption, and analysts’ view of ODRO’s productivity. Structured questioning investigated how ODRO was perceived to support the analyst’s RPD process. Specifically, interview probes were designed to correspond to the four RPD quadrants (Plausible Goals, Critical Cues, Expectancies, and Typical Actions; Klein, 1993), allowing us to deconstruct where the tool informed decision-making. The interviews were conducted individually, with data collection occurring in two distinct phases. The pre-deployment interviews aimed to capture the analysts’ predisposition to this technology and their view of the planned adoption of ODRO. The deployment interviews were conducted after the participants had completed an initial two-week training package in ODRO and one week of operational use, to understand how the participants’ views of the system had changed since the pre-deployment baseline interview.

Interview protocol

A copy of the interview questions can be found at Questions. The semi-structured interview guide was developed through a review of the literature and was piloted with two senior analysts not included in the main study. The interview consisted of five sections:
• (1) Prior experience and attitudes towards automation, drawing from the work of Glass et al. and Verame et al. (Glass et al., 2008; Verame et al., 2018).
• (2) Perceptions of trust and reliability, questioning what was informing their belief that they should trust the tool (Castelfranchi & Falcone, 1998).
• (3) Team processes and interdependence, to identify processes that could be shared between analysts and tool (Van Diggelen et al., 2018), and analyst decision-making, to understand where the tool supported analyst decision-making (Klein, 1993).
• (4) Adoption and workflow integration, questioning their motivation for the delegation of agency and what was sustaining their motivation for delegation (Verame et al., 2016).
• (5) Reflections on system boundaries and failure modes, and questions about what informed their belief that they should trust the tool (Castelfranchi & Falcone, 1998; Lee & See, 2004).

Open-ended and probing questions were used to obtain detailed responses. All core questions were asked in both phases, with additional probes in the deployment phase to explore changes in the analysts’ perception.

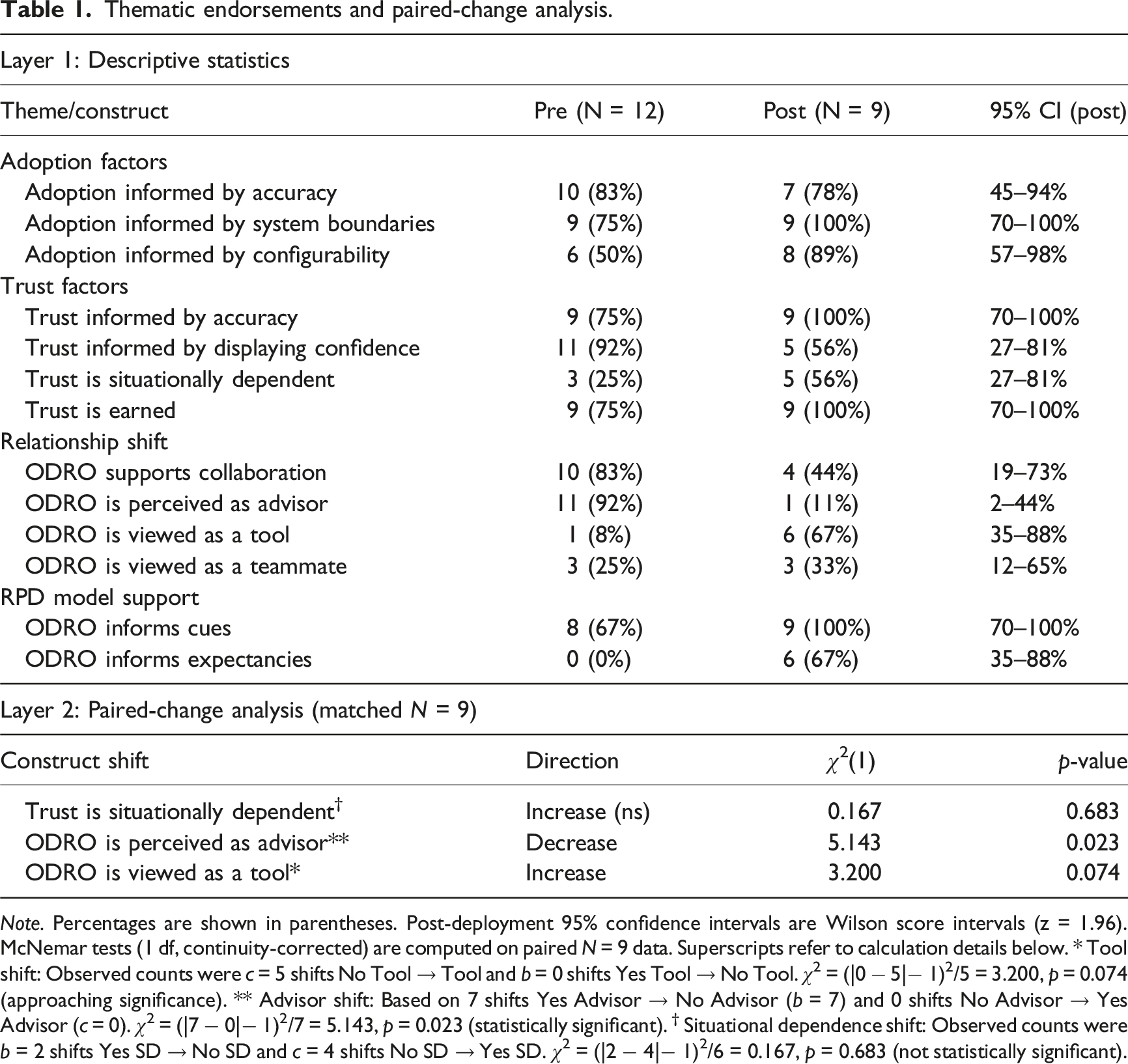

Data analysis

Table 1. Thematic endorsements and paired-change analysis.

Note. Percentages are shown in parentheses. Post-deployment 95% confidence intervals are Wilson score intervals (z = 1.96). McNemar tests (1 df, continuity-corrected) are computed on paired N = 9 data. Superscripts refer to calculation details below. * Tool shift: Observed counts were c = 5 shifts No Tool → Tool and b = 0 shifts Yes Tool → No Tool. χ² = (|0 − 5| − 1)²/5 = 3.200, p = 0.074 (approaching significance). ** Advisor shift: Based on 7 shifts Yes Advisor → No Advisor (b = 7) and 0 shifts No Advisor → Yes Advisor (c = 0). χ² = (|7 − 0| − 1)²/7 = 5.143, p = 0.023 (statistically significant). † Situational dependence shift: Observed counts were b = 2 shifts Yes SD → No SD and c = 4 shifts No SD → Yes SD. χ² = (|2 − 4| − 1)²/6 = 0.167, p = 0.683 (not statistically significant).
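The continuity-corrected McNemar statistics in the note above can be checked with a short sketch. It is illustrative only and is not the analysis script used in the study. The function name `mcnemar_continuity` and the `shifts` dictionary are assumptions introduced here; the discordant counts b and c are taken from the note. For one degree of freedom, the chi-square tail probability reduces to the complementary error function, so only the standard library is needed.

```python
import math
from typing import Tuple


def mcnemar_continuity(b: int, c: int) -> Tuple[float, float]:
    """Continuity-corrected McNemar test on discordant pair counts b and c.

    Hypothetical helper for illustration; returns (chi-square, p-value).
    """
    n = b + c
    if n == 0:
        raise ValueError("no discordant pairs; the statistic is undefined")
    chi2 = (abs(b - c) - 1) ** 2 / n
    # With 1 degree of freedom, P(X > x) = erfc(sqrt(x / 2)).
    p_value = math.erfc(math.sqrt(chi2 / 2))
    return chi2, p_value


# Discordant counts (b, c) as reported in the table note.
shifts = {
    "tool": (0, 5),
    "advisor": (7, 0),
    "situational dependence": (2, 4),
}

for theme, (b, c) in shifts.items():
    chi2, p_value = mcnemar_continuity(b, c)
    print(f"{theme}: chi2 = {chi2:.3f}, p = {p_value:.3f}")
```

With so few discordant pairs, an exact binomial version of the test would be a reasonable alternative; the sketch follows the continuity-corrected form because that is the one reported.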

Results

A total of twelve analysts participated in the pre-deployment phase, with nine completing the post-deployment phase. The results are displayed both as the absolute number of analysts who endorse each theme and as the corresponding proportions to aid interpretation. Where appropriate, theme changes are highlighted to reflect how individual analysts’ perception of ODRO shifted over time.

Thematic findings

Table 1 summarises the main themes identified in both phases, showing the number and proportion of analysts who endorse each theme. Each count represents the number of unique analysts endorsing the theme in that phase. For the post-deployment phase, proportions are calculated out of nine (the number of analysts who completed both phases). Where possible, within-theme changes are described in the text; these were analysed for the nine analysts who completed both phases. As an example, seven analysts who initially viewed ODRO as an advisor ceased to view it as such post-deployment. Similarly, four analysts who did not initially describe trust as situationally dependent did so after deployment.

Adoption

• Accuracy: Most analysts cited system accuracy as a key factor informing adoption, both before (10/12, 83%) and after (7/9, 78%) deployment.
• System Boundaries: Understanding ODRO’s operational boundaries was perceived as being more important post-deployment (pre: 9/12, 75%; post: 9/9, 100%).
• Configurability: The perceived importance of configurability increased after deployment (pre: 6/12, 50%; post: 8/9, 89%).

Trust

• Accuracy: Trust was consistently associated with perceived accuracy (pre: 9/12, 75%; post: 9/9, 100%).
• Displaying Confidence: Fewer analysts cited the display of system confidence as informing trust post-deployment (pre: 11/12, 92%; post: 5/9, 56%).
• Situational Dependence: More analysts described trust as situationally dependent after deployment (pre: 3/12, 25%; post: 5/9, 56%). However, this increase was not statistically significant (McNemar, p = 0.683).
• Trust is Earned: The belief that trust must be earned increased (pre: 9/12, 75%; post: 9/9, 100%).

Relationship

• Collaboration: The perceived view that ODRO supports team collaboration decreased post-deployment (pre: 10/12, 83%; post: 4/9, 44%).
• Advisor Role: Fewer analysts saw ODRO as an advisor after deployment (pre: 11/12, 92%; post: 1/9, 11%).
• Tool vs. Teammate: The number of analysts viewing ODRO as a tool increased (pre: 1/12, 8%; post: 6/9, 67%), while those viewing it as a teammate remained stable (pre: 3/12, 25%; post: 3/9, 33%).

RPD model support

• Cues and Expectancies: More analysts reported that ODRO informed their cues and expectancies post-deployment (cues: pre: 8/12, 67%; post: 9/9, 100%; expectancies: pre: 0/12, 0%; post: 6/9, 67%).

Given the small sample size, we employ Wilson Score Confidence Intervals (95% CI) to ensure rigorous interpretation of proportions. The change in endorsement rates between pre- and post-deployment for the nine matched participants was also evaluated for statistical significance using McNemar’s test for paired categorical data. The results, which should be considered exploratory and indicative of trends, are presented with 95% CIs in Table 1. For example, the proportion of analysts who viewed ODRO as a tool post-deployment was 67% (6/9; 95% CI: 35%–88%).
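The Wilson score interval quoted above can be reproduced with a few lines of Python. This is a minimal sketch rather than the authors' analysis code: the function name `wilson_interval` and the rounded normal quantile z = 1.959964 are assumptions introduced for illustration.

```python
import math


def wilson_interval(successes: int, n: int, z: float = 1.959964) -> tuple[float, float]:
    """Wilson score confidence interval for a binomial proportion.

    successes: number of analysts endorsing a theme
    n: number of analysts in that phase
    z: standard normal quantile (about 1.96 for a 95% interval)
    """
    if n <= 0 or not 0 <= successes <= n:
        raise ValueError("require n > 0 and 0 <= successes <= n")
    p_hat = successes / n
    denom = 1 + z ** 2 / n
    centre = (p_hat + z ** 2 / (2 * n)) / denom
    half_width = z * math.sqrt(p_hat * (1 - p_hat) / n + z ** 2 / (4 * n ** 2)) / denom
    # Clamp to [0, 1] to absorb floating-point error at the extremes.
    return max(0.0, centre - half_width), min(1.0, centre + half_width)


# Analysts viewing ODRO as a tool post-deployment: 6 of 9.
low, high = wilson_interval(6, 9)
print(f"{low:.0%} to {high:.0%}")  # 35% to 88%
```

Unlike the simple normal-approximation interval, the Wilson interval stays within 0% and 100% and remains informative at the extremes (for example 0/12 or 9/9), which is why it suits samples of this size.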

What is informing adoption

Participants were asked to describe what informed their adoption of ODRO. Initially, they repeatedly described their experience of the system as informing their adoption. Further questioning identified that, with respect to R1, adoption was informed by accuracy, understanding the system’s boundaries (row 2) and configurability (row 3). In particular, on deployment, all participants expressed the need to understand the boundaries and thresholds of ODRO in different situations, so that they could identify when ODRO would provide incorrect detections and compensate for them, thereby developing an appropriate interdependence with ODRO. For example, participant 2 described: ‘once I understand where the reliability threshold really is and where it needs to increase, but at the moment it’s not quite there’ (participant 2, deployment interview).

What is informing trust

We asked participants what informed their trust, questioning reliability and predictability as defined by Daronnat et al. (2020). We then asked about ODRO displaying confidence in its predictions and how this informed the participants’ trust in ODRO, building on the work of Verame et al. (2016) to identify trust factors informing R1. Row 4 identifies accuracy as increasingly informing trust. We perceive this is because accuracy is an organisational reward, a motivation for the individual analysts, teams, and organisation. As an example, participant 10 described during the deployment interview: ‘Again, it’s going back to you, and you can see what it is quite clearly telling you and that’s something that’s completely different and so you’re questioning why are you telling me this? In that regard can I trust you’.

With respect to R2, row 6 shows that, on deployment, participants increasingly described that they would be less likely to trust the system in situations they viewed as being critical or high risk. For instance, participant 5 mentioned during the deployment interview: ‘because depending on what’s going on at the time, I would feel nervous using it. I’d delegate to it less if the situation was critical’.

When asked what was informing trust in ODRO prior to and post-deployment, participants described experience as the critical factor, with the belief that trust must be earned increasing on deployment (row 7). Notably, participants described that their trust in ODRO would be established as it is for other team members: over time, through observing reliability and accuracy as participants build an understanding of ODRO’s boundaries. Participant 1 described: ‘It needs to earn its credentials however; that’s the same for anybody that joins us on the shift, we don’t know who they are how good they are so when they identify things, we the team will look at what it is that they are identifying and whether they’re getting these right, at which point we will know that they know what they’re doing, they know what they’re looking for, so again we need to know its credibility’.

Analysts’ perceived relationship with ODRO

In row 8, pre-deployment participants expected ODRO to support team collaboration, as described by Van Diggelen et al. (2018), and to advise them, helping them overcome human fallibility. However, both expectations decreased markedly post-deployment. The notable reframing of ODRO from ‘Advisor’ to ‘Tool’ post-deployment highlights the analysts’ shift toward a more calibrated, interdependent relationship. The initial ‘advisor’ perception (92% pre-deployment) reflects a default human tendency to anthropomorphise AI and expect collaboration. However, direct experience with ODRO’s imperfections and boundary conditions forced analysts to recalibrate, leading to the view that ODRO is a practical tool to be actively managed, rather than a fully trusted teammate. This finding supports the NDM perspective: trust must be earned and is regulated by the analyst’s situated understanding of the system’s operational constraints.

However, those who had viewed ODRO as a teammate before deployment (row 11) continued to view it in this manner post-deployment, suggesting that the relationship with ODRO was not uniform across the participants, nor did it change uniformly. Participant 7 (deployment interview) described their relationship with the tool: ‘You then start a process of second-guessing each other such that you can narrow it down, at the minute it’s almost like having another imagery analyst with us’.

How is ODRO perceived to support analyst Recognition-Primed Decision-Making (RPD)?

Analysts described a further benefit of the system: on occasion, ODRO identified objects beyond the analysts’ focus that should be considered. However, these detections and recognitions are subject to error, so the provision of broader situational context comes at a cost. For instance, Participant 1 discussed during the deployment phase: ‘So it was helping me to understand what was going on as a pattern of life around the surrounding area, and so while it wasn’t necessarily helpful for the main event, it was helping to highlight things that I would disregard immediately, and tell me that there is an object here or there which you ought to be focusing your attention to’.

No participants described ODRO as informing their actions to take prior to deployment, a function that is beyond the system’s relative strength (Cummings, 2018). However, on deployment, three participants described ODRO as informing their actions to take; this could be because the analysts were integrating ODRO more deeply into their decision-making process and thus perceived that its output was informing their actions. Before deployment, analysts did not describe ODRO as helping to inform their expectancies. This perception shifted once ODRO was put into operation, and the shift could be attributed to a variety of factors. One possibility is that practical experience with ODRO in real-world scenarios provided insights and tangible identifications that analysts may not have anticipated during the pre-deployment phase. As analysts interacted with the deployed ODRO system, they probably observed its impact on their decision-making processes, data interpretation, or task automation, leading them to recognise its role in shaping their expectations. Post-deployment, six of nine analysts (67%) identified that ODRO helped to inform their situational expectancies. This additional situational awareness informed their situational assessment, against which they would check whether their expectations were being violated. Participant 10 (deployment interview) described: ‘absolutely yes, it keeps going back to you it is telling you different things it’s that conversation in your head, between the two and you it’s telling you one thing and you’re either agreeing with it or because of XY and Z, no I think because of X and Y and said I think it’s this and then a few seconds later you might find that it changes his mind’.

Discussion

With respect to R1, we found that the trust between the analyst and ODRO is informed by what the analysts describe as their experience. When asked what they mean by experience, the analysts describe this as the accuracy of the tool. Accuracy of recognition was therefore the most stated informing factor, as it contributed to task and team performance. We expected to find that Castelfranchi’s view of agent trust, comprising competence, disposition, dependence, and fulfilment beliefs (Castelfranchi & Falcone, 1998), would categorise trust between ODRO and team members. However, our findings correlated with only the competence belief, a perception that ODRO is useful to the goal that the analysts and team are undertaking. This is informed by the perception that ODRO’s contribution is imperfect; thus, the analyst has a weak dependence belief (Castelfranchi & Falcone, 1998). The analysts’ perception is that a better outcome can be achieved by trusting ODRO than by not trusting it. This belief is sustained by the analysts’ recognition of relative strengths (Cummings, 2018); they accept ODRO’s imperfect object tracking because it augments their own strength in scene recognition. The collaboration is therefore defined not by blind reliance, but by a strategic interdependence where the analyst compensates for ODRO’s weaknesses to achieve a superior combined outcome.

When considering why accuracy was the most stated factor, we perceive that it is necessary to consider the situated nature of accuracy’s importance, which in part comes from the organisation of which the team is part. Here we note that Galbraith describes the organisation through five interconnected organisational design considerations, one of which is the reward that individuals receive (Kates & Galbraith, 2010). Given that analysts produce intelligence products, and that these products are rewarded for accuracy, we see how in this case an organisational design consideration is informing participant adoption. Further experimentation, where organisations reward differing individual actions, could be undertaken to validate this.

With respect to R2, we found that analysts described their delegation as being dependent upon the severity of the situation, a component of the operating environment. They would be less likely to trust and delegate in situations where there could, for example, be a risk to life. Thus, trust was found to be situationally dependent, and the analysts’ weak dependence belief was likewise informed by the situation.

We also found that an analyst’s dependence belief is informed by the analyst’s self-recognition of their individual competence; they too make errors. Thus, a better outcome can be achieved through a weak dependence on an inaccurate tool, when the tool is used to inform shared object detection and recognition alongside the analyst’s activity and scene recognition. Further work could identify how the degree of self-awareness of perceived self-competence informs the analyst’s dependence belief. Castelfranchi discusses putting aside the trust in beliefs and in the knowledge source (Castelfranchi & Falcone, 1998). We propose that our study has investigated this and that trust and belief in the knowledge source are what the analysts describe as knowing the system’s boundaries. Analysts want to understand when the system will fail. Once they understand these situations, they compensate for ODRO when the situations occur. We perceive that knowing the system’s boundary conditions becomes an individual knowledge source that analysts employ to update their competence and dependence beliefs in ODRO. Further study should be undertaken to understand how these knowledge sources are informed and change over time, such that they are sufficiently accurate for the situations the analyst may encounter.

We found that the design of tools such as ODRO can be informed by adapting Cummings’ relative strength model and employing Klein’s RPD model. We adapted Cummings’ model (Figure 2) using Kamkar’s description of object detection, recognition, object tracking, and activity and scene recognition (Kamkar et al., 2020) to describe the functions that the analysts undertake, replacing the function of information-processing for which the model was originally designed. We observed that the processes the analysts were undertaking with ODRO to understand what was occurring within scenes mapped correctly to the model; for example, it was the analysts’ knowledge and expertise that provided activity and scene recognition, as ODRO has no knowledge of the environment and thus is limited to skills and rules; it cannot provide knowledge or expertise.

We perceive that Cummings’ diagram could be further expanded. It identifies uncertainty as a factor that drives the need to move from skills to rules, knowledge and expertise. Given that ODRO is an imperfect tool, the relative strength of the tool was perceived to be lower depending upon the perceived criticality of the situation. Further research could look to refine this model, identifying the relationship between the uncertainty of the external environment and uncertainty within the internal system, which in this situation included the data collated, the tool, and the analyst.

When considering the RPD model, our findings indicated that most analysts perceived that ODRO was provisioning them with relevant cues and that it was informing their expectancies, which informed their overall situational assessment and supported their identification of whether they misunderstood the situation. We did not find that ODRO could provision the goals that analysts could achieve, nor did ODRO inform their actions to take. Thus, the model defines where the current ODRO supports and informs decision-making. By identifying this, it is possible to further design around these strengths and understand the roles that ODRO and the analyst fill within the interdependent decision-making process. Further research could investigate how current tools that recommend actions to take could be improved through the application of the RPD model, noting that these actions are informed by goals, cues and expectancies that would be distributed across the analyst and the tool. The application of NDM models, such as Klein’s RPD model, in design ensures that AI tools support the analyst’s meta-cognitive skills such as recognition, adaptation, and perceptual judgement, rather than simply automating discrete tasks. This contrasts directly with the task-allocation approach, which, by dividing functions cleanly, risks reducing the analyst’s role and inhibiting the development of expertise. The Relative Strengths approach inherently supports a more active and meaningful division of labour, where responsibilities are dynamically allocated based on context, expertise, and performance, allowing the socio-technical system to balance strengths and offset risks.

Our findings suggest that successful AI adoption in Defence and similar complex operating environments requires more than technical system performance; it requires transparency and configurability that support continual user learning. We recommend that designers and policymakers prioritise user-centred approaches that support human learning of a tool’s boundary conditions, together with mechanisms that help trust calibration over time. Continual research will be required to ensure that AI adoption strategies remain responsive to the evolving needs and experiences of expert users in complex environments.

Conclusion

Our longitudinal naturalistic study has questioned experts as they adopt an AI tool. This has provided a unique opportunity to understand what is informing their trust and adoption of this imperfect tool. Our examination of existing research identified current open challenges that we could explore, forming the questions we investigated with the experts. We found that FMV analysts have a weak dependence belief in the ODRO tool: they perceive that they will trust and employ the tool as long as it provides a better outcome. The employment of the tool is situated within a dynamic operating environment with varying complexity and operational risk. In situations where there is a risk to life, analysts describe that they are less likely to employ the tool. A self-recognition of competence was found to be an informing factor of the analysts’ weak dependence belief. This relationship between self-awareness of competence and how it can appropriately inform trust and adoption requires further study.

We identified that there is a relationship between organisational reward and the trust and adoption of the ODRO tool. When considering adoption of tools such as ODRO, it is therefore helpful to understand what the organisation rewards, as this will inform what the adopter values. We have also shown that existing models, in our case the relative strength model and the recognition-primed decision model, can be adapted to inform appropriate adoption. By framing adoption through the lens of NDM, our work moves beyond the rationalistic task-allocation models that assume fixed, divisible functions. The NDM perspective, particularly through the concept of interdependence, is demonstrably better suited to the complex and dynamically uncertain nature of real-world intelligence work, ensuring that human-AI teaming elevates, rather than reduces, human potential. Cummings’ relative strength model neatly described the relationship between ODRO and the analysts when adapted with Kamkar’s description of detection, recognition, tracking and scene recognition, the functions that the tool and analyst are undertaking. Cummings’ model has been critiqued for being too general to be of use (Hoffman & Johnson, 2019). We would argue that when holistically describing the challenge of adoption in the ‘real world’, that is, the situation in which we have employed it, the model has helpfully informed the role that both human and machine assumed. It could be further extended to inform the relationship between uncertainty within the system (human and machine) and the external situation, helping us to delineate uncertainty across a tripartite relationship of human, machine and the relevant environment.

An important limitation of this study is that we interviewed only 12 participants prior to deployment and nine participants on deployment. This limitation on participant numbers is a result of the nature of the environment under investigation, a small team in a specific setting. This does not detract from it being an important research setting. We therefore perceive a requirement for further work to refine qualitative research methods for limited case studies such as this specialised defence case. Once undertaken, this would provide a better understanding of how to suitably apply scientific research methods to the important research opportunities that the real world offers. We recognise that we have not addressed the generalisation question with respect to our findings. Our key contribution is therefore the articulation of a situated, NDM-based framework informed by rich qualitative data. Further study in a related intelligence field is required to understand which findings from this work correlate and thus are generalisable to the field of intelligence, thereby building a body of evidenced work that Defence can draw upon to inform its approach to the adoption of AI.

Footnotes

Acknowledgements

The authors would like to thank the intelligence analysts who participated in this study for their time and insights.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.