Abstract

We agree with Skraaning and Jamieson’s assertion that the “failure” construct is not always clearly defined in human-automation interaction research. We applied their proposed taxonomy to explore how failures have been characterized in driving automation research based on a recent scoping review we conducted. We discuss the insights gained and the challenges of using the taxonomy to characterize driving automation failures: (1) Utilizing the taxonomy confirmed that driving automation research is limited in failure scenarios tested. (2) Applying the taxonomy to empirical studies on driving automation is challenging due to limited information on underlying failure mechanisms. (3) Failures can be difficult to classify due to the complexity of technology and environmental factors. (4) Researchers and designers should know failure mechanisms, but drivers will not.

Introduction

Skraaning and Jamieson (2023) argue that the concept of “automation failures” has been poorly defined in human-automation interaction research. We agree with their assertion that more explicit and detailed definitions of this construct are needed and support their efforts to develop a taxonomy of automation failures to inform future research and design endeavors.

As human factors researchers studying surface transportation, we have witnessed a surge in research on driving automation. The introduction of automation to driving can enhance safety and convenience, with systems like forward collision warning and automatic emergency braking demonstrating active safety benefits (e.g., Cicchino, 2023). However, as automation takes over more aspects of the driving task, such as steering and speed control, failures can result in fatal outcomes (e.g., National Transportation Safety Board, 2020). There has been much hype surrounding “autonomous” vehicles, which many now realize was unjustified, but manufacturers have provided few technical details and little clear guidance about the inner workings and operation of these vehicles.

In addition to the scarcity of technical details about how new automated systems function, automation in driving differs from automation in other domains of study in that it is a safety-critical system operated by members of the general public, who have a wide range of abilities and characteristics and little or no training. Unlike other safety-critical domains, automation in driving is partly introduced as a consumer product or “add-on feature” to its operators (e.g., Super Cruise, 2023). Thus, user satisfaction and convenience are important considerations, and marketing plays a big role in users’ perceptions and understanding of the technology (Teoh, 2020), sometimes to the detriment of safety (Dixon, 2020). Our research has revealed that even the owners of advanced automation functionalities have significant gaps in their knowledge about the limitations of these systems (DeGuzman & Donmez, 2021). Finally, driving is mostly an automatic, skill-based task in which responses are required on the order of seconds (Michon, 1985). Unless they can anticipate an impending failure, drivers do not have the time to problem-solve when vehicle control automation fails.

Utilizing a recent scoping review we performed, we explored how Skraaning and Jamieson’s proposed taxonomy can be applied to empirical research on driving automation that focused on automation failures. Given the abovementioned distinctions of the driving domain, we expected to provide useful insights to this discourse. We report our findings and discuss lessons learned from and challenges in applying the taxonomy. We also identify future research needs for a more comprehensive and systematic investigation of failures in driving automation.

Automation Failures in Driving: Automation-to-Manual Takeovers

Recent literature on driving automation has largely focused on emerging technologies that provide sustained vehicle control support but still require some level of human operator involvement, which can be classified as Level 2–4 according to the taxonomy proposed by the Society of Automotive Engineers (SAE International, 2021). When using Level 2–4 automation, the driver may be required to resume control after a period when the automation is controlling the vehicle, often at a point when the automation fails to navigate a particular situation and disengages. Such transfers of control are referred to as takeovers and have been widely investigated (De Winter et al., 2021), with extensive research efforts focusing on takeover performance and quality measures (e.g., McDonald et al., 2019; Weaver & DeLucia, 2022; Zhang et al., 2019). Takeovers also include non-failure cases such as control transfers that are driver-initiated (e.g., McCall et al., 2019).

There have been various efforts to classify different takeover situations. Chen et al. (2023) recently reviewed these classifications and proposed their own framework, categorizing takeovers based on whether they are associated with limitations in the automation’s operational design domain (ODD), the operating conditions behind them, their scheduling, their initiation source, and their level of urgency. Based on this framework, we adopt the view that scheduled takeovers that occur when the automation reaches known or anticipated external conditions beyond its ODD (e.g., car reaching a work zone or highway exit) are not automation failures.
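The working rule adopted above can be sketched as a small decision function. This is only an illustration under stated assumptions: the `Takeover` record, its field names, and the `Initiator` enum are hypothetical and cover just three of the dimensions in Chen et al.'s framework, and treating every other takeover as a failure is a simplification rather than part of that framework.

```python
from dataclasses import dataclass
from enum import Enum


class Initiator(Enum):
    AUTOMATION = "automation"
    DRIVER = "driver"


@dataclass(frozen=True)
class Takeover:
    """Hypothetical record of one takeover scenario (field names are assumptions)."""
    beyond_odd: bool      # triggered by conditions outside the ODD?
    scheduled: bool       # known or anticipated in advance?
    initiator: Initiator  # who started the transfer of control


def is_automation_failure(t: Takeover) -> bool:
    """Scheduled ODD exits and driver-initiated transfers are not failures.

    Everything else is treated as a failure, which is a simplifying assumption.
    """
    if t.initiator is Initiator.DRIVER:
        return False
    if t.beyond_odd and t.scheduled:
        return False
    return True


# Reaching a highway exit: anticipated ODD limit, so not a failure.
highway_exit = Takeover(beyond_odd=True, scheduled=True, initiator=Initiator.AUTOMATION)
# Faded lane markings: unexpected condition beyond the ODD, so a failure.
faded_markings = Takeover(beyond_odd=True, scheduled=False, initiator=Initiator.AUTOMATION)

print(is_automation_failure(highway_exit), is_automation_failure(faded_markings))
```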

Characterizing Failures in a Representative Sample of Driving Automation Studies

Sample Overview

We recently completed a scoping review aimed at understanding how driver eye gaze measures were used to evaluate driving automation systems. The review followed the framework proposed by Arksey and O’Malley (2005) and the Preferred Reporting Items for Systematic reviews and Meta-Analyses extension for Scoping Reviews (PRISMA-ScR) guidelines (Tricco et al., 2018). For this commentary, we use the findings to explore how failures have been characterized in the empirical literature on vehicle control automation.

The review focused on SAE Levels 2–4 automation, and although it does not encompass the entire breadth of empirical studies on driver-automation interaction, we consider the sample of identified studies to be representative of the wider literature on the topic, given the scale of the search and diversity of included articles. After a systematic literature search and screening process, 164 articles were identified as meeting the eligibility criteria for the review. Relevant data were extracted, including information on any takeover scenarios included in the studies. Of these studies, 81 focused on driving automation that can be classified as SAE Level 3 or 4 (automated driving), 69 focused on SAE Level 2 driver support systems, and 14 described some combination of automated driving and driver support. A total of 137 studies were conducted using a driving simulator, with relatively few others having an on-road (10), test track (8), or naturalistic driving (6) component.

Identifying Articles Reporting on Failures

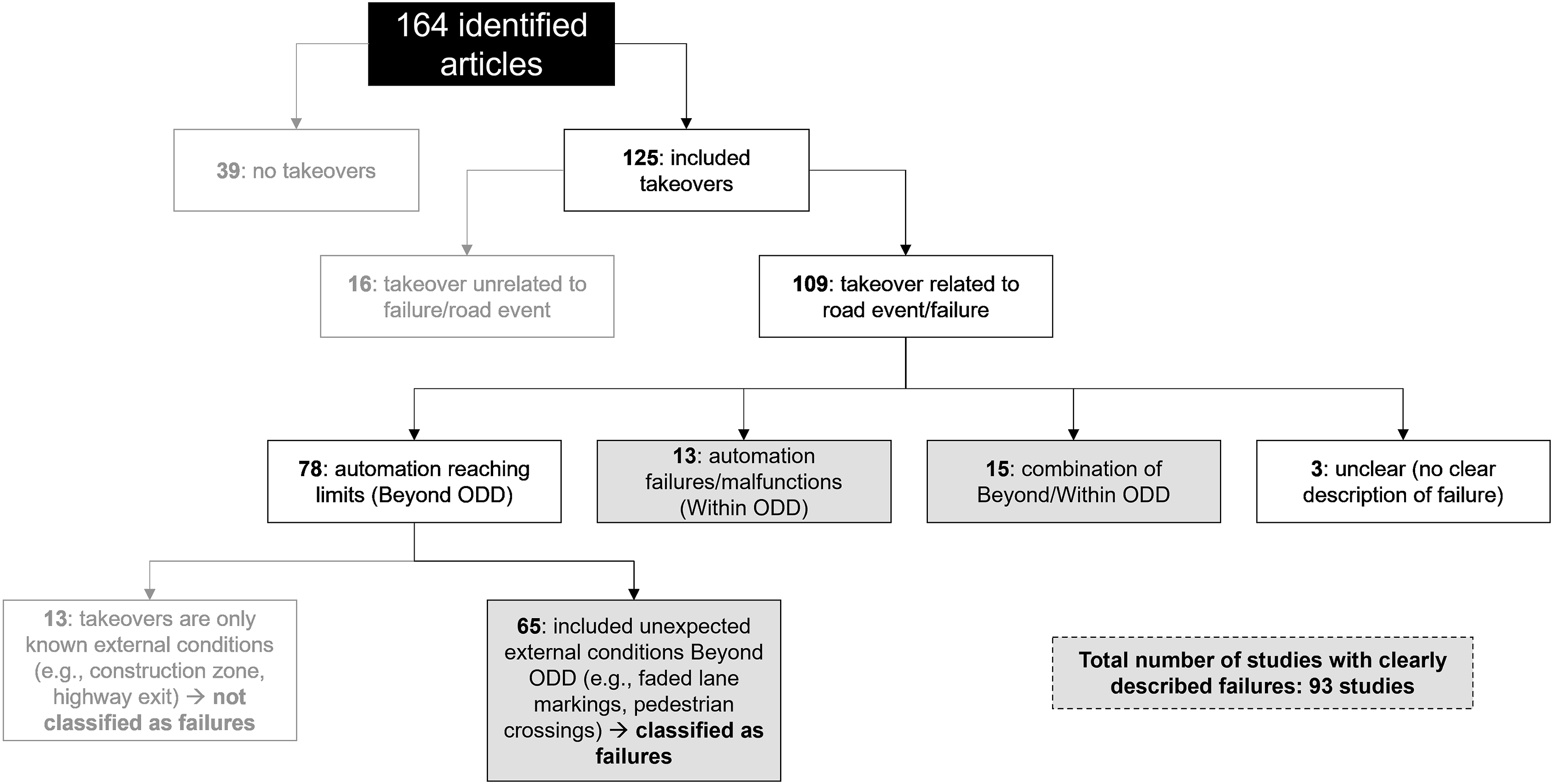

Figure 1 provides an overview of the takeover scenarios in the articles identified in the review. Of the 164 articles, 125 reported on studies that included takeovers, of which 109 described takeovers that are related to automation failures or events occurring on the road. The takeovers in the remaining studies were mostly either driver-initiated (e.g., Akash et al., 2020) or unrelated to any event (drivers were just prompted to take over control without an associated failure; for example, Rauffet et al., 2020). Overall, out of the initial set of 164 studies, 93 studies were identified that involved an automation failure: 65 had failures due to unexpected external conditions beyond the automation’s operational design domain (ODD), 13 had failures that could occur while the vehicle is within the automation’s ODD, which could represent hardware or software malfunctions, and 15 included a combination of takeovers within and beyond the ODD.

Figure 1. Classification of takeover scenarios in the included studies into automation failures and non-failures.
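As a quick consistency check, the counts reported above can be tallied in a few lines. The variable names are ours, and the three remainders are derived here by subtraction; they are not reported directly in the text.

```python
# Counts as reported in the review summary.
total_studies = 164
with_takeovers = 125
failure_or_event_related = 109

# Breakdown of the studies that involved an automation failure.
failure_breakdown = {
    "beyond_odd_unexpected": 65,
    "within_odd": 13,
    "within_and_beyond_odd": 15,
}
with_failures = sum(failure_breakdown.values())

# Derived by subtraction (not stated in the text).
no_takeover = total_studies - with_takeovers
takeover_not_event_related = with_takeovers - failure_or_event_related
event_related_non_failure = failure_or_event_related - with_failures

print(with_failures)  # 93
print(no_takeover, takeover_not_event_related, event_related_non_failure)  # 39 16 16
```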

Classification of Automation Failures Using Skraaning and Jamieson’s Taxonomy

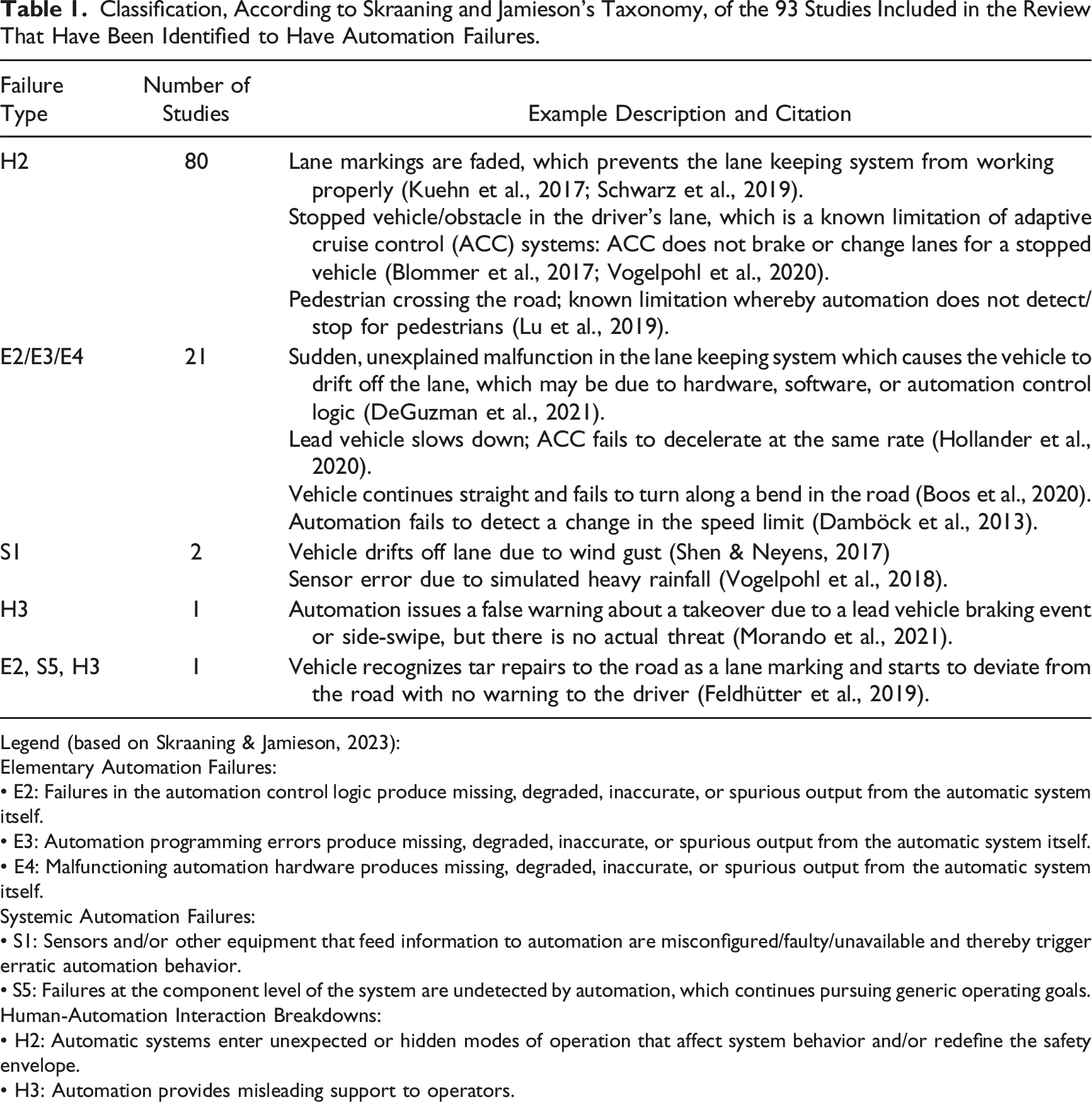

Table 1. Classification, According to Skraaning and Jamieson’s Taxonomy, of the 93 Studies in the Review Identified as Involving Automation Failures.

Legend (based on Skraaning & Jamieson, 2023):

Elementary Automation Failures:

• E2: Failures in the automation control logic produce missing, degraded, inaccurate, or spurious output from the automatic system itself.

• E3: Automation programming errors produce missing, degraded, inaccurate, or spurious output from the automatic system itself.

• E4: Malfunctioning automation hardware produces missing, degraded, inaccurate, or spurious output from the automatic system itself.

Systemic Automation Failures:

• S1: Sensors and/or other equipment that feed information to automation are misconfigured/faulty/unavailable and thereby trigger erratic automation behavior.

• S5: Failures at the component level of the system are undetected by automation, which continues pursuing generic operating goals.

Human-Automation Interaction Breakdowns:

• H2: Automatic systems enter unexpected or hidden modes of operation that affect system behavior and/or redefine the safety envelope.

• H3: Automation provides misleading support to operators.

Most of the studies (88%, n = 82) investigated human-automation interaction breakdowns, specifically situations where the automation enters “unexpected or hidden modes of operation that affect system behavior and/or redefine the safety envelope” (Skraaning & Jamieson, 2023). Based on our interpretation, this category of failures most closely describes failures in which the automation encounters situations it cannot navigate, or what Chen et al. (2023) refer to as “unexpected external conditions” beyond the ODD (e.g., lane keeping system disengages due to faded lane markings on the road). About 24% of the studies (n = 22) focused on takeover scenarios that could be caused by failures in the automation control logic, the programming, or the hardware, and that can be attributed to elementary automation failures that “produce missing, degraded, inaccurate, or spurious output from the automatic system itself” (Skraaning & Jamieson, 2023). An example is a sudden disengagement of the lane keeping system that causes the vehicle to drift off the road for no apparent reason (e.g., Wilkie et al., 2019). Relatively few studies investigated other failure types, particularly those that can be classified as systemic automation failures. Because a single study could involve more than one type of failure, these categories are not mutually exclusive.
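The shares above can be recomputed from the reported counts, taking the 93 studies with automation failures as the denominator. The names below are ours, and the overlap figure is only a lower bound implied by the two counts, not a number reported in the review.

```python
failure_studies = 93  # studies identified as involving an automation failure

# Study counts per top-level taxonomy category, as reported in the text.
category_counts = {
    "human-automation interaction breakdown": 82,
    "elementary automation failure": 22,
}


def share(n: int, total: int) -> float:
    """Percentage of `total` represented by `n`, rounded to one decimal."""
    return round(100 * n / total, 1)


shares = {name: share(n, failure_studies) for name, n in category_counts.items()}

# The two counts sum to more than 93, so some studies fall in both categories.
# Inclusion-exclusion gives the minimum number of such studies.
overlap_lower_bound = max(0, sum(category_counts.values()) - failure_studies)

for name, pct in shares.items():
    print(f"{name}: {pct}%")
print("studies in both categories (at least):", overlap_lower_bound)
```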

Insights Gained

Applying Skraaning and Jamieson’s taxonomy to a large sample of driving automation studies has revealed interesting insights and directions for future research.

Utilizing the Taxonomy Confirmed That Driving Automation Research is Limited in Failure Scenarios Tested

A majority of the studies investigated situations where the automation reached ODD limits (e.g., drifting off-road due to faded lane markings). Most of the remaining studies focused on sudden failures that cause the automation to disengage for no explainable reason. Relatively few studies examined takeovers that can be categorized as systemic automation failures, such as sensor errors due to weather conditions (e.g., Vogelpohl et al., 2018). It appears that the scope of research on vehicle control automation is limited to a few types of failures from Skraaning and Jamieson’s taxonomy, and many of the studies examined similar takeover scenarios that may not capture the variety of failures that occur in the real world.

For example, rear-end collisions due to automated braking account for a high proportion of collisions (Biever et al., 2019), but have rarely been used as experimental scenarios. As driving automation technology is evolving and proliferating with limited available technical details about its functioning, researchers interested in “upcoming” or “emerging” technologies may have selected scenarios based on speculation about which elements of the automation may fail, or based on specific experimental needs (i.e., to answer specific research questions). In some cases, potential failures are unforeseen even by designers of the technology, such as the recent incident involving a Cruise “Robotaxi” and a pedestrian already struck by another vehicle (Hawkins, 2023). The lack of variety in the scenarios investigated may also be due to the limited resources available to researchers or to convenience (De Winter et al., 2021). Other researchers have pointed out this lack of variety and realism in investigated scenarios (De Winter et al., 2021) and have advocated for stronger collaborations with industry, particularly with automated vehicle manufacturers, to inform more systematic investigations of driving automation failures based on real-world failure scenarios. Attempts to define and categorize automation failures, such as Skraaning and Jamieson’s proposed taxonomy, could support such efforts.

Applying the Taxonomy to Empirical Studies on Driving Automation is Challenging Due to Limited Information on Underlying Failure Mechanisms

Although we found the taxonomy helpful in characterizing driving automation failures investigated in empirical studies, this was not without its challenges. Given that the majority of studies were controlled experiments conducted in simulators, the failures were designed and programmed to test specific automation limitations or unexplained malfunctions/software errors. The underlying mechanisms behind the failures, which the taxonomy aims to describe, are unknown or not even necessarily relevant in experimental settings. For example, in the case of the elementary automation failures in Table 1, only the observed outcome (e.g., the vehicle drifts out of its lane) is known, and it is unclear whether that outcome would be due to a software error or a hardware malfunction.

When more details were provided, we were able to characterize the failure more accurately. For example, in Feldhütter et al. (2019), the vehicle recognized tar repairs as lane markings and deviated off the lane. We concluded that this failure was a combination of elementary and systemic automation failures, as well as human-automation interaction breakdowns. The automation control logic failed to distinguish between the tar repairs and actual lane markings. This elementary failure was undetected by the automation, which made the vehicle drift off the lane and follow the path of the tar repairs, a systemic failure whereby the automation “continues pursuing generic operating goals.” This failure can also be characterized as a human-automation interaction breakdown, as the automation provided misleading support by not following the correct lane marking, and not warning the driver, as it “thought” it was following the correct lane.
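The multi-label nature of this example can be illustrated with a small lookup structure. The code descriptions are abbreviated from the legend above; assigning the tar-repair case to codes E2, S5, and H3 is our reading of the description in this paragraph, and the helper name `categories` is hypothetical.

```python
# Taxonomy codes from the legend (descriptions abbreviated).
TAXONOMY = {
    "E2": "failure in the automation control logic",
    "E3": "automation programming error",
    "E4": "malfunctioning automation hardware",
    "S1": "faulty or unavailable sensors/equipment feeding the automation",
    "S5": "component-level failure undetected by the automation",
    "H2": "unexpected or hidden mode of operation",
    "H3": "misleading support to operators",
}

# The first letter of each code identifies its top-level category.
CATEGORY = {
    "E": "elementary automation failure",
    "S": "systemic automation failure",
    "H": "human-automation interaction breakdown",
}


def categories(codes: set[str]) -> list[str]:
    """Return the sorted top-level categories covered by a set of codes."""
    unknown = codes - TAXONOMY.keys()
    if unknown:
        raise KeyError(f"unknown taxonomy codes: {sorted(unknown)}")
    return sorted({CATEGORY[code[0]] for code in codes})


# Tar repairs mistaken for lane markings (assumed code assignment).
tar_repair_case = {"E2", "S5", "H3"}

for category in categories(tar_repair_case):
    print(category)
```

A single scenario can therefore map to all three top-level categories at once, which is why study counts per category need not sum to the number of studies.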

Despite some of the challenges with obtaining data on real-world incidents (Associated Press, 2023), efforts have been made to standardize and regulate their reporting (National Highway Traffic Safety Administration, 2021). The challenges we encountered applying the taxonomy to published studies may not be as prominent for real-world events.

Failures Can be Difficult to Classify Due to the Complexity of Technology and Environmental Factors

Another challenge in applying the taxonomy to both empirical studies and real-world failures, which Skraaning and Jamieson acknowledge in their article, is that multiple failure mechanisms can be combined. The increasing complexity of the technology, and the wide variety of events that can occur on the road, can make it difficult to isolate one single cause or mechanism of failure. Chen et al. (2023) also warned that classifying a takeover scenario/driving automation failure is not a straightforward process, and proposed a fault-tree analysis approach that also considers driver-related factors including driver state and knowledge about automation limitations. When we applied the taxonomy to published studies, the lines between whether failures could be attributed to ODD limits or internal malfunctions were often blurred, and could depend on a variety of factors not reported in the articles or not extracted in our review, including road environment (e.g., surrounding traffic and infrastructure) and the design of the automation (e.g., the automation capabilities and SAE Level classification).

Researchers and Designers Should Know Failure Mechanisms, But Drivers Will Not

The taxonomy was helpful in obtaining a sense of how failures have been characterized in driving automation research. Driving automation studies that test how humans react to automation failures may not need to incorporate the underlying mechanisms into their design because, in most cases, drivers will not know and will not need to know these mechanisms. As we argued earlier, the driving domain is unique in that it affords its operators little to no time to problem-solve and instead requires them to act within seconds. Given that drivers receive little to no formal training on automation, it might not be practical to apply the same training and practices used in domains like aviation (e.g., Casner & Hutchins, 2019), or to expect drivers to learn and remember numerous limitations (or failure mechanisms) of the automation. One alternative is to focus on the driver’s role (a responsibility-focused approach). When we compared limitation-focused and responsibility-focused approaches to training drivers for Level 2 systems, we found that the responsibility-focused approach outperformed the limitation-focused one (DeGuzman, 2023; DeGuzman & Donmez, 2022; DeGuzman et al., 2023). Researchers and designers, however, do need a better understanding of underlying failure mechanisms, for example, when designing in-vehicle displays to increase automation transparency, as previous research has found additional information about the reasons for takeovers to be useful (e.g., He et al., 2021). Even so, what can be conveyed to the driver is limited by the time available to process the information.

Supplemental Material

Supplemental Material - How Are Automation Failures Characterized in the Driving Domain? Insights From a Scoping Review


Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Supplemental Material

Supplemental material for this article is available online.

