Abstract

Attacker psychology is currently under-examined in cybersecurity research. A prior large-scale study sought to understand attackers’ behavior by testing both technological and psychological deception. Professional “red team” members participated over two days in various conditions. These data were examined for further evidence that cognitive biases, a potential disruption for attackers, may be present and may be affecting outcomes. A novel, applied methodology for measuring confirmation bias and framing effects in this realistic dataset is presented. Under this interpretation, both confirmation bias and the framing effect occurred. The framing effect appears to have reduced attacker interactions with systems in the network, which may benefit cyber defenders. These results provide additional, exploratory evidence that biases in the decision-making of cyber attackers could be used as part of a defensive cyber strategy. Limitations of the approach and directions for future study of attackers are discussed.

Introduction

Human cognition is responsible for many of the most important system-level actions, both offensive and defensive, across the vastness of cyberspace. A better understanding of the human operators in cybersecurity through the lens of human cognition (memory, attention, and decision-making) is critical. The community of cyber operators and researchers generally agrees that psychological components account for a key source of variance that hardware- or software-oriented security solutions do not address. These components are understudied but often highly impactful (Borghetti et al., 2017; Gutzwiller et al., 2019).

Researchers have only begun to study humans in their various roles across all operational segments of cyber, from the naïve user to the advanced professional (Bennett et al., 2018; Buchler et al., 2018; Ferguson-Walter et al., 2019; Gutzwiller et al., 2015, 2018, 2019; Johnson, 2022; Sawyer et al., 2014; Trent et al., 2016, 2019). More recently, this research has expanded to offense-related cybersecurity operations, studying hackers and penetration testers and even developing early models of their behavior (Aggarwal et al., 2016, 2017; Cranford et al., 2020a, 2020b). Cognitive science might be able to create conditions that distract and disrupt attacker thinking and behavior (Gutzwiller et al., 2018). Understanding biases in attacker decision-making is the central focus of this article.

Prior research in operational cybersecurity shows that biases and heuristics often affect the decision-making of trained, professional analysts. Most of this evidence comes from defense-oriented cyber work, which involves teams of operators and focuses on protecting devices and networks against intrusion and exploitation. Lemay and Leblanc (2018) found evidence of defensive operators exhibiting the base-rate fallacy (the tendency to ignore base-rate frequency information even when it is relevant to estimating likelihood; Bar-Hillel, 1980). They also found evidence of confirmation bias (the tendency to search for and interpret information in ways that confirm, rather than disconfirm, preexisting beliefs; Nickerson, 1998) and of hindsight bias (viewing past events as having been predictable at the time; Fischhoff, 1975).

In a particularly compelling applied example, Bos et al. (2016) tested biases in the management of a business network under attack as part of a test of prospect theory (Tversky & Kahneman, 1991). Prospect theory predicts that people are generally loss averse, meaning they are more likely to work to avoid losses than to pursue perceived gains. Bos et al. (2016) provided evidence that such gain/loss framing effects operate in cyber defense decision-making. In their experiment, participants were given a network that had been compromised by a cyber-attack. The network started either in a “net-down” state, in which all capabilities were shut down and quarantined, or in an “operating” (“net-up”) state, in which services were still running for customers. Participants were told to protect the network while reducing risk to the company and avoiding undue business costs. This created a fundamental tradeoff between leaving machines up for customers (but vulnerable to attack) and keeping them offline (avoiding attack but damaging the business’s bottom line). Loss aversion predicted that operators would avoid business losses rather than seek out gains, and indeed, the professional cybersecurity participants in their experiment were highly averse to quarantining new machines in the “net-up” scenario, compared with removing machines from quarantine when starting from the “net-down” situation.

Others have found biases such as recency bias when monitoring networks for threats. Recency effects refer to the heightened fluency of recent memories and experiences in recollection. This fluency leads people to overweight recent events or experiences when predicting the future, similar to the availability heuristic (Tversky & Kahneman, 1974). Safi and Browne (2022) found that cyber operators performing security checks were more likely to re-check if the previous check had caught an incident, even though there was no relationship between incidents. Another study pointed out the dangers of action bias (the tendency to act rather than not act) for cyber incident response in general (Dykstra et al., 2022). Yet most evidence and examples are defender-centric; far less is known about attackers.

There is some limited conceptual evidence that several biases affect attackers (Johnson et al., 2021). For example, attackers are notionally often loss averse when it comes to using zero-day attacks. They may fixate or show sunk-cost effects in capture-the-flag (CTF) exercises, or in any of the time-bound cyber challenges frequently found in more difficult certifications. If attackers exhibit biased responses within a network, this would give defenders two advantages. First, it may slow down attacker operations by wasting their time on elements within the network that are not valuable (Ferguson-Walter, 2020; Ferguson-Walter et al., 2021b). One can easily imagine biasing selection, or other decisions, toward less-valuable elements in the network. There is also evidence that these approaches can influence attackers’ affect and performance by making them feel frustration and confusion (Ferguson-Walter, 2020; Ferguson-Walter et al., 2021a). Second, a more thorough understanding of attacker cognition and decision-making enables new methods of network design that could be specifically developed to produce biased behavior. This in turn could make attackers more identifiable, because attackers would be more likely than other users to follow the induced pattern.

Biases influence behavior beyond laboratory-based experiments (Gächter et al., 2009; Gigerenzer, 2002). The best evidence for biases in cyber attackers comes from a prior examination of a small red team experiment (red teams simulate offensive cyber actors; Ferguson-Walter et al., 2017). In that experiment, researchers showed that decision-making biases are present and identifiable: the communications of these experts showed that they committed confirmation bias. These red teamers also showed anchoring effects (Gutzwiller et al., 2019) by becoming fixated on machines on the network that they thought were particularly vulnerable.

Goal of the Study

The goal was to show evidence that attackers commit decision-making biases, to learn about the surrounding conditions, and to learn how to measure biased behaviors. Previously, a list of 89 biases was surveyed (Johnson et al., 2020). The team of Subject Matter Experts (SMEs) reviewing biases for investigation consisted of cognitive psychologists (RSG, HR, and CJ) and cybersecurity professionals (KFW and MM). These SMEs reviewed biases in the literature and determined that both confirmation bias and framing were likely to emerge (see Ferguson-Walter, 2020), because participants made decisions about different machines on the network that were framed by the task instructions for their condition. These decisions could be traced for their relationship to beliefs and evidence seeking (see the Methods section for details). Two characteristics guided the initial selection of biases to look for in the dataset: whether each bias was robust enough to appear in a noisy, realistic environment, and whether there was prior evidence of it in cyber contexts.

Confirmation bias, the tendency for people to look for what they want to see and to seek only evidence supporting their existing beliefs while ignoring or disregarding what does not agree (Nickerson, 1998), is common and has a very long history of observation (Thucydides, 1996).

Framing effects reflect shifts in risk preference that can occur when the same information is presented from a gain versus a loss perspective: people tend to be risk averse when outcomes are framed as gains and risk seeking when they are framed as losses (Druckman, 2001; Tversky & Kahneman, 1981). Famous examples are often word problems that present people with choices between courses of action, with anticipated results, and measure risk aversion under a positive (gains) and a negative (losses) frame. From Tversky and Kahneman (1981), a brief introduction to a problem: “Imagine that the U.S. is preparing for the outbreak of an unusual Asian disease, which is expected to kill 600 people. Two alternative programs to combat the disease have been proposed. Assume that the exact scientific estimate of the consequences are as follows”

This is then followed by one of two framed choices:

Positive Frame

“If Program A is adopted, 200 people will be saved. If Program B is adopted, there is 1⁄3 probability that 600 people will be saved, and 2⁄3 probability that no people will be saved.”

Negative Frame

“If Program C is adopted, 400 people will die. If Program D is adopted, there is 1⁄3 probability that nobody will die and 2⁄3 probability that 600 people will die.” (Tversky & Kahneman, 1981, p. 453).
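The two frames describe the same outcomes, which is what makes the reversal in choices a framing effect rather than a difference between the options themselves. With 600 people at risk, A and C describe the same certain outcome, and B and D describe the same gamble with the same expected value:

```latex
\begin{aligned}
\text{A (gain frame, certain):} \quad & 200 \text{ saved} \\
\text{B (gain frame, risky):} \quad & \tfrac{1}{3}(600) + \tfrac{2}{3}(0) = 200 \text{ saved in expectation} \\
\text{C (loss frame, certain):} \quad & 400 \text{ die} \;\Rightarrow\; 600 - 400 = 200 \text{ saved} \\
\text{D (loss frame, risky):} \quad & \tfrac{1}{3}(0) + \tfrac{2}{3}(600) = 400 \text{ die in expectation} \;\Rightarrow\; 200 \text{ saved in expectation}
\end{aligned}
```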

The finding is that participants overwhelmingly choose the certain option (A) under the positive frame but the risky option (D) under the negative frame (Tversky & Kahneman, 1981). The framing thus biases participants toward certain gains (lives saved) but toward riskier options when outcomes are framed as losses (lives lost). In other words, people are more likely to take risks under uncertainty when outcomes are framed as losses. Framing was chosen because one key manipulation in the experiment framed the network, for some groups of participants, around the possible presence of deception. The possible presence of deception shifts the perceived gains and losses of interacting with machines on the network toward uncertainty. Framing was also observed in prior research, in which telling red team members that deception may be present in the network, versus not, led them to investigate machines further to determine whether they were real or fake, even when that was not the central mission (Ferguson-Walter et al., 2017; Gutzwiller et al., 2019).

Finally, the SME team agreed that these decision-making biases could be revealed in the text reports participants generated as part of normal activity. These report data from Ferguson-Walter et al. (2019a) were the sole source for understanding participant decision-making processes in this article. While initial methods for bias measurement were tested in Ferguson-Walter (2020), this article greatly improves on them with a completely new, more rigorous assessment.

Hypotheses

First, it was expected that confirmation bias could be empirically observed within the text report data, because cyber actors are human and remain susceptible to decision-making biases regardless of their training or expertise (in this case, highly trained red teamers).
• H1. Confirmation bias can be empirically observed within the text report data.

Next, a framing effect was expected around the use of deception. As mentioned above, the framing effect shows that participants make different decisions based on the “frame” of the options (as certain or uncertain gains and losses), even when the same conceptual information is presented. In the cyber study dataset, participants were given instructions that implied a need to remain undetected (Ferguson-Walter et al., 2019a); they would therefore be likely to view detection as aversive and to be more careful at the onset of the experiment. This caution would most likely manifest as greater uncertainty about machines under deception conditions. Participants on Day 1 (of two) of this experiment were sorted into four groups, manipulating the actual presence of deceptive technology (decoy systems; either present or absent) and psychological deception (informed participants were told that deception may be present; uninformed participants were not). These conditions led to the final hypotheses:
• H2a. Informed (negative frame) groups will be more likely to initially rate IPs as uncertain.
• H2b. Informed (negative frame) groups will be more likely to initially rate IPs as uncertain when decoys are present.

Dataset Properties and Methods

The examination described in this manuscript used data from an experiment on professional red teamers operating on realistic networks over two days (the “Tularosa” dataset; Ferguson-Walter et al., 2019a, 2019). The realism of the dataset offered clear advantages over CTF-style events (Ferguson-Walter et al., 2019). The main goal of the experiment was to manipulate both psychological deception and network deception (Ferguson-Walter et al., 2019b). Basic details of the study needed to understand the bias methodology are described next; for rich detail on the experiment design, measures, and outcomes, the interested reader is referred to Ferguson-Walter (2020) and Ferguson-Walter et al. (2019a, 2019b, 2021a, 2021b). The study team was covered under the IRB protocol for the original study.

The Tularosa dataset included 138 professional participants. Each participant was instructed to report any significant network information to a fictitious teammate throughout the exercise using the Mattermost chat client. Red team participants were given the following goals and orientation: “You represent an APT group attempting to gather information.... You have achieved an initial foothold on the company network, and now must discover as much as you can about potentially valuable targets on the network. You will conduct recon on the network and locate vulnerable services, misconfigurations, and working exploits.... Your objective is to collect as much relevant information about the target network as you can in the allotted time without compromising future network operations… When you learn potentially useful information about target systems on this network you will immediately report this information to your team.” (Ferguson-Walter et al., 2019a, p. 3)

As part of the experiment, participants were instructed to include in their chat messages “The last 2 octets of the IP address, why you believe the host is interesting, how you obtained this information, [and] estimate its value to future operations” (Ferguson-Walter et al., 2019a, p. 3). These instructions provided a consistent reference for the decisions and information participants were considering whenever they made a report.

Deception Conditions

The key manipulations in the experiment were deception conditions. Participants on Day 1 of the experiment were sorted into the four initial deception conditions, manipulating the actual presence of decoys as well as psychological deception (informed participants were told that deception may be present; uninformed were not). This created four conditions total: present informed (PI), present uninformed (PU), absent informed (AI), and absent uninformed (AU) for Day 1.

On Day 2, most groups moved into an absent, uninformed (AU) condition to test the persistence of Day 1 effects. The Day 1 AU group transitioned to a present, uninformed (PU) condition to test the effect of adding technological deception.
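The 2 × 2 design and the Day 2 reassignment can be written down compactly. The Python sketch below is only an illustrative encoding, not part of the original study materials: the `Condition` enum and `day2_condition` function are hypothetical names, and "most groups" is read here as the three Day 1 groups other than AU.

```python
from enum import Enum


class Condition(Enum):
    """Day 1 deception conditions: decoys present/absent x informed/uninformed."""
    PI = ("present", "informed")
    PU = ("present", "uninformed")
    AI = ("absent", "informed")
    AU = ("absent", "uninformed")

    @property
    def decoys_present(self) -> bool:
        return self.value[0] == "present"

    @property
    def informed(self) -> bool:
        return self.value[1] == "informed"


def day2_condition(day1: Condition) -> Condition:
    """Day 2 assignment as read from the text: AU moves to PU; other groups move to AU."""
    return Condition.PU if day1 is Condition.AU else Condition.AU
```

For example, `day2_condition(Condition.PI)` returns `Condition.AU`, while `day2_condition(Condition.AU)` returns `Condition.PU`.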

Methods

To look for cognitive biases, the chat messages that individual participants sent to the fictitious “teammate” were scored. In general, a thematic analysis approach was taken. The team scored over 4000 chat reports against an exhaustive set of specific keywords and elements. Validity was supported in two main ways. First, multiple cybersecurity SMEs (KFW and MM) validated and improved the scoring method itself (i.e., the relevant terms and the review of cases). Second, the data were finalized by seeking unanimous agreement among the cyber and psychologist SMEs (RSG, HR, KFW, CJ, and MM) on a large sample of scores. These agreement checks were both extensive (covering the vast majority of cases) and frequent (most cases were checked multiple times, over no fewer than six data check “sessions,” until the entire dataset was coded in complete agreement).
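One way to picture each agreement "session" is as a pass that flags every item whose codes are not yet identical across all raters; flagged items are discussed and re-coded until the set is empty. The Python sketch below assumes a hypothetical data layout (a dict from rater to `{item_id: code}`) and is not the team's actual tooling.

```python
def items_needing_review(scores_by_rater):
    """Return the IDs of items whose codes are not identical across all raters.

    scores_by_rater maps each rater to {item_id: code} (hypothetical layout).
    An item that a rater has not yet coded counts as a disagreement, because
    that rater's code is treated as None.
    """
    raters = list(scores_by_rater.values())
    all_items = set().union(*(scores.keys() for scores in raters))
    return {
        item for item in all_items
        if len({scores.get(item) for scores in raters}) > 1
    }
```

In this framing, complete agreement corresponds to `items_needing_review(...)` returning an empty set.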

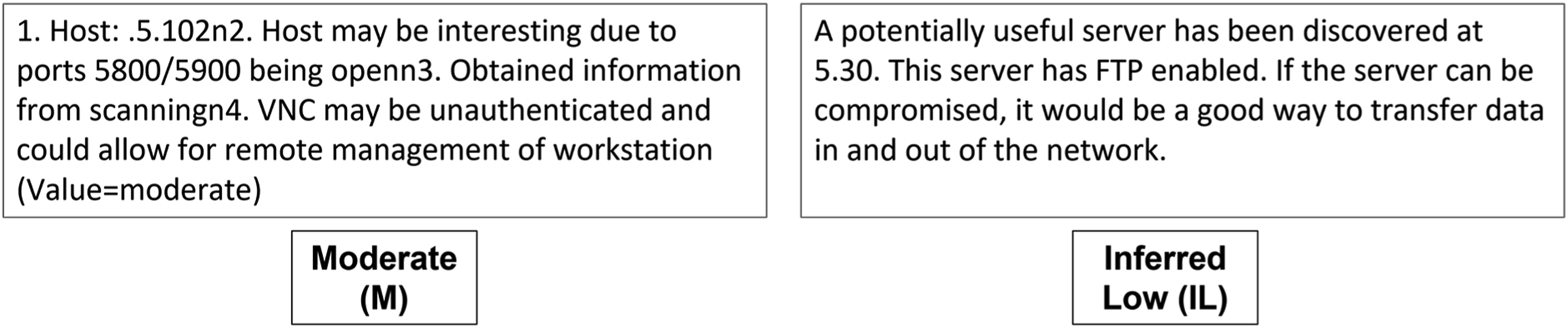

Chats often included specific, stated decisions participants had made and the outcomes of those decisions (e.g., the selection and failure of a particular exploit on a machine with a specific IP address on the network). Participants also stated their perceived value when referring to these machines. Value can be viewed as a statement about whether a system is real (vs. a decoy or honeypot). Because the instructions asked participants to “estimate … value to future operations,” value can be multidimensional. However, a participant who believes an IP is fake is unlikely to report it as a valuable machine without also noting its deceptive nature. In some cases, value was inferred from the surrounding context; in those cases, cybersecurity SMEs (KFW and MM) examined the contextual information to reach consensus. Inferred and participant-stated values were distinguished within the dataset as described below. A clarifying example is provided in Figure 1: in the left example chat report, the value is stated by the participant; in the right example, the value is inferred and scored accordingly.

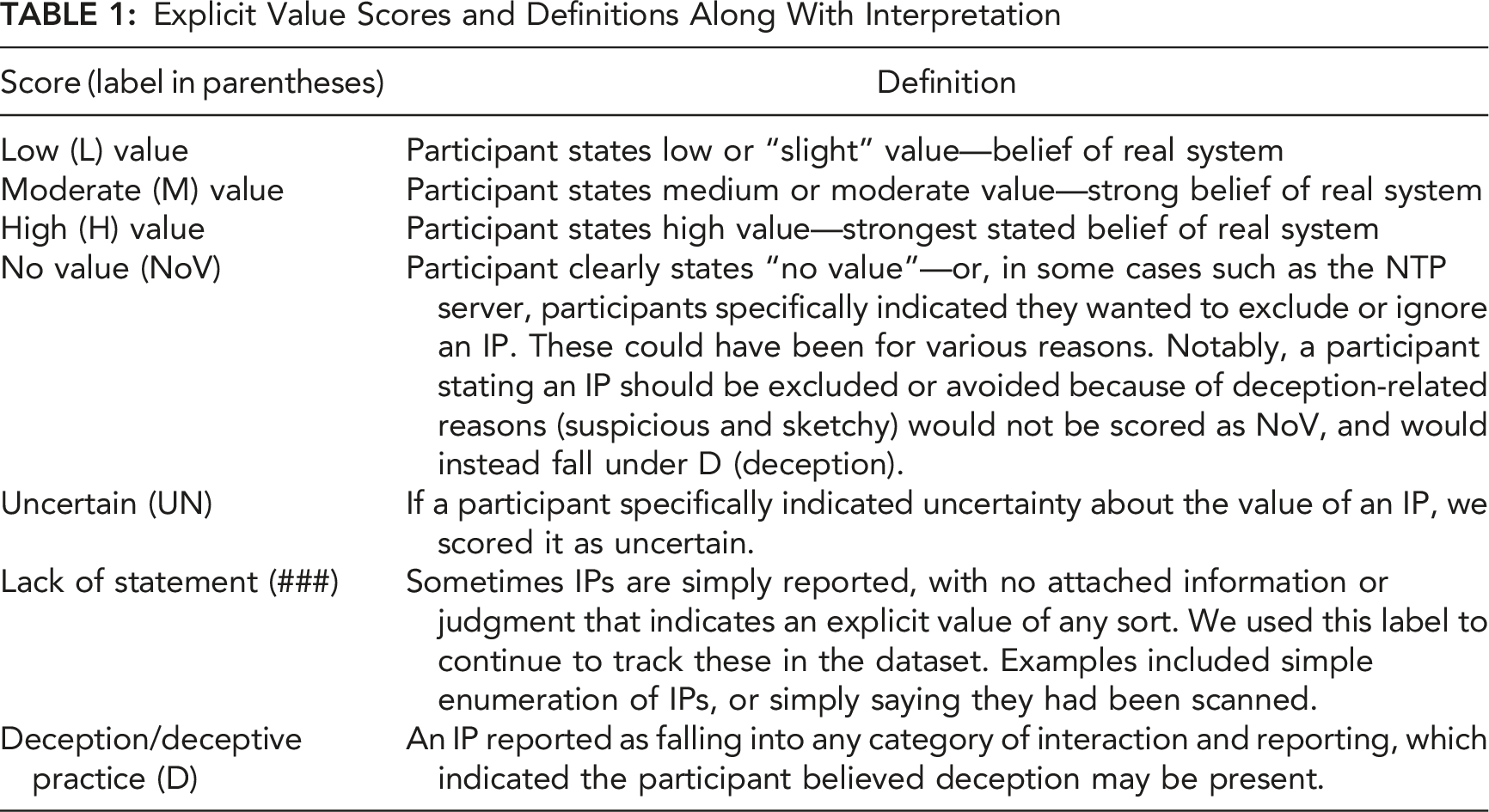

Explicit Value

Explicit Value Scores and Definitions Along With Interpretation

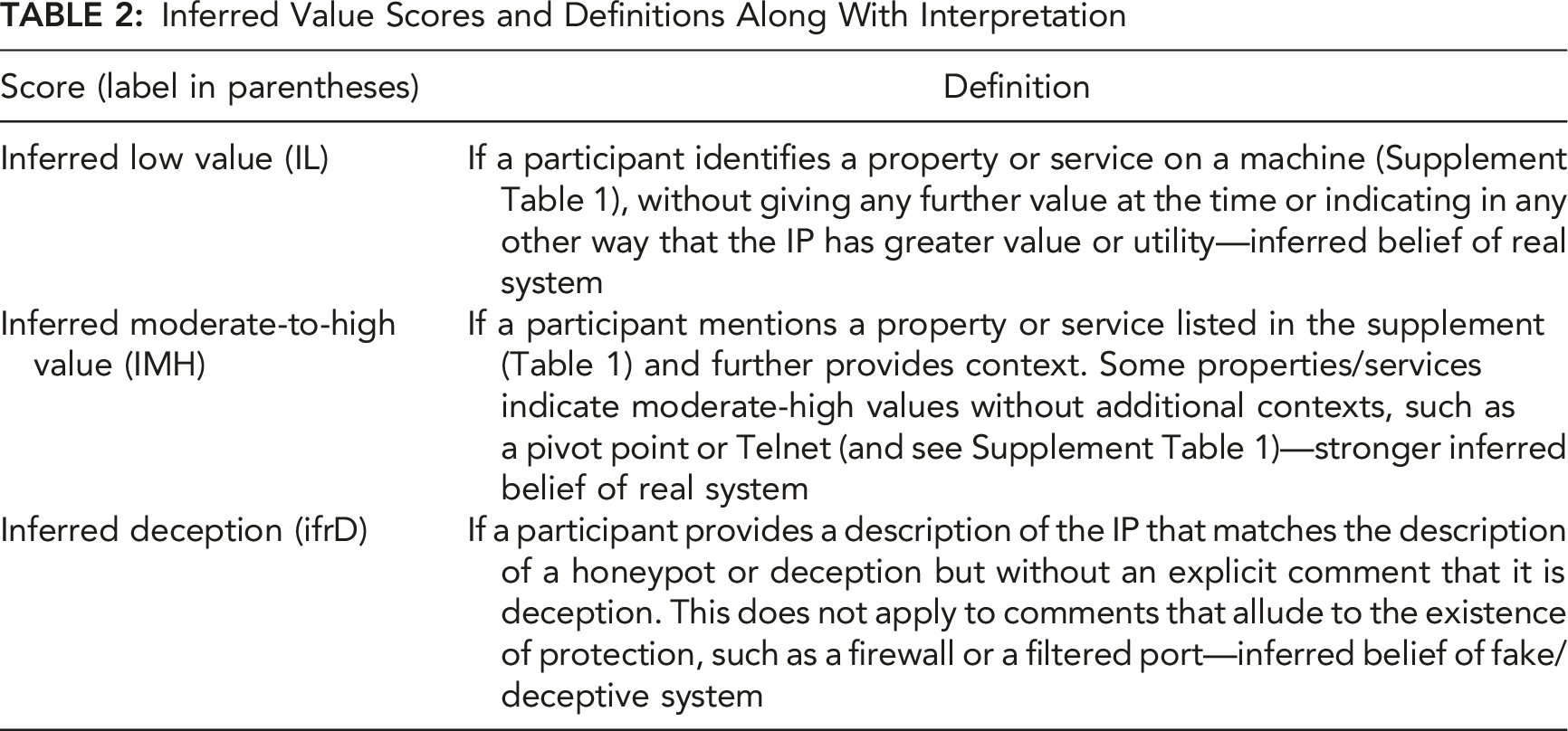

Inferred Values

Inferred Value Scores and Definitions Along With Interpretation

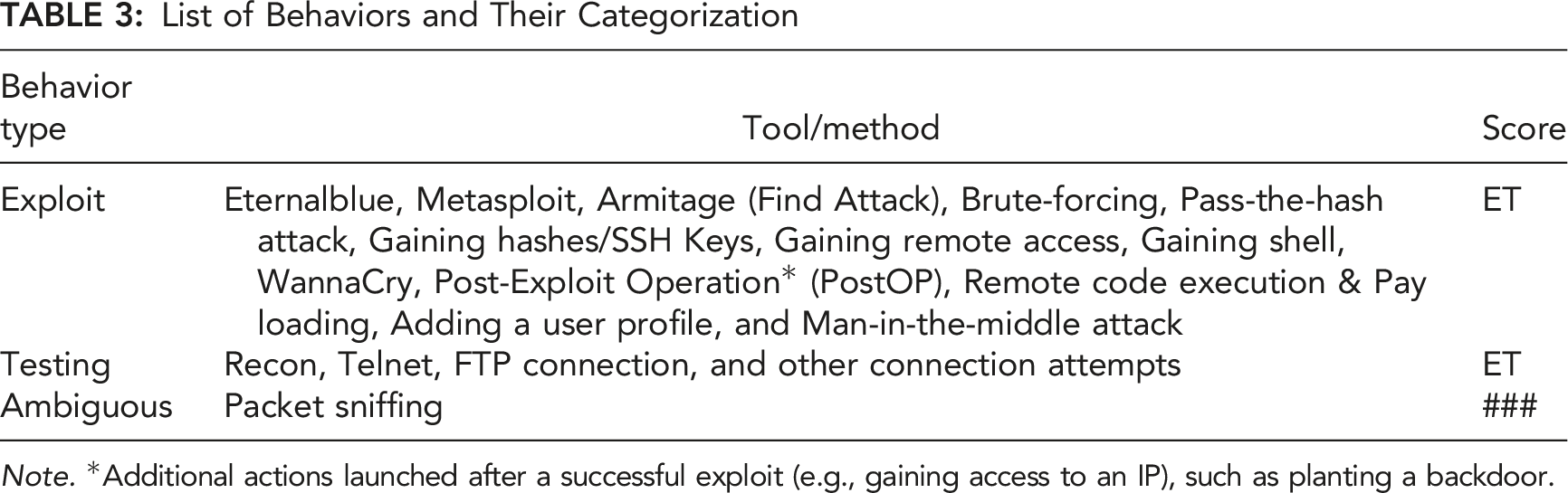

Exploit and Testing Behaviors

List of Behaviors and Their Categorization

Note. *Additional actions launched after a successful exploit (e.g., gaining access to an IP), such as planting a backdoor.

These scores are inferred, since the data relied on participants reporting their own behaviors. Inferred scores were assigned by KFW, MM, and HR until they reached concurrence. Table 3 lists the behaviors (tools and methods) that were categorized as exploit, testing, or ambiguous behaviors.

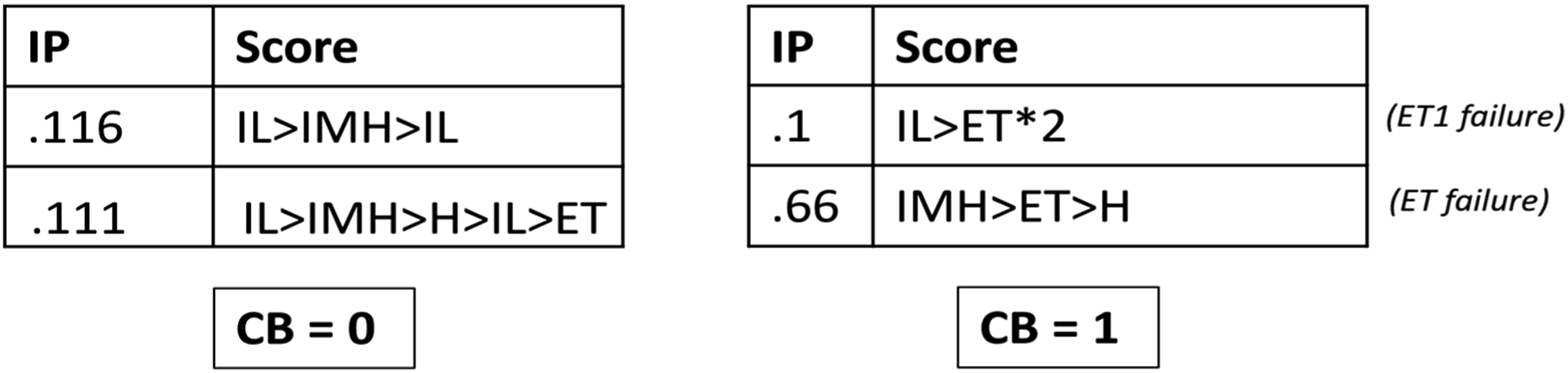

Confirmation Bias Scoring

Using the chat reports, the interactions reported for each IP were grouped into a linear “case” over time. The result is a timeline of the participant’s explicit or inferred value judgments and reported exploit or testing behavior for that IP (see Supplemental Material A and B for more detail, and example cases for several IPs in Figure 2). This scoring method was based specifically on the definition of confirmation bias (CB) as the tendency for people to look for what they want to see and look only for evidence supporting their existing beliefs, while ignoring or disregarding what does not agree when it is present (Nickerson, 1998).

Example of CB scoring based on the belief patterns shown by the value scores. In the left examples, the top IP case is continuously believed to have some value but is never tested (IL>IMH>IL). The bottom IP is eventually tested, but no statement was made afterward to indicate whether the belief was updated. Neither left example shows CB. In the right examples, the top IP is believed to have some value; this is followed by a stated failed exploit attempt, after which the participant attempts another exploit, from which value can be assumed (e.g., ET>ET, annotated as ET*2). The bottom IP shows an initial belief of value, a stated failed exploit attempt, and then another statement showing that the participant still believes the IP has high value.
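As a rough illustration of the case construction described above, the following Python sketch groups scored reports into one time-ordered case per participant and IP and renders it in the arrow notation used in the examples. The `ScoredReport` fields (including the timestamp), `build_cases`, and `render` are hypothetical names chosen for this sketch; only score codes such as IL, IMH, and ET come from the text, and they are treated here as opaque labels.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class ScoredReport:
    """One scored chat report (hypothetical structure, not the study's schema)."""
    participant: str
    ip: str           # last two octets of the reported IP, e.g. "12.34"
    timestamp: float  # time of the chat message in seconds (hypothetical field)
    code: str         # score assigned by the coders, e.g. "IL", "IMH", "ET"


def build_cases(reports):
    """Group reports into {(participant, ip): [codes in time order]}."""
    cases = defaultdict(list)
    for report in sorted(reports, key=lambda r: r.timestamp):
        cases[(report.participant, report.ip)].append(report.code)
    return dict(cases)


def render(codes):
    """Render a case in the arrow notation, e.g. ["IL", "IMH", "IL"] -> "IL>IMH>IL"."""
    return ">".join(codes)
```

Sorting by timestamp before grouping keeps each case in the order the participant reported it, regardless of the order in which the scored rows were stored.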

A case was scored as confirmation bias if the participant’s chat entries showed a belief remaining the same despite the participant’s own report of evidence that the belief should change. Such a case must include an initial belief (stated or inferred), followed by evidence that could counteract that belief, followed by the same belief (stated or inferred) (see Supplemental Material B for more details). Cases that began with a stated or inferred belief that a machine was deceptive, followed by any test or exploit attempt and then a continued belief of deception, were also examined and scored. Figure 2 shows example cases in which a participant either showed no evidence of CB (left examples, one case per IP) or did show evidence (right examples).
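The decision rule above can be sketched as a scan over a single case. In this Python sketch, the `Event` type, its `"belief"`/`"evidence"` kinds, and labels such as `"high_value"` are hypothetical. It assumes coders have already encoded inferred beliefs (such as a repeated exploit attempt implying value) as belief events, and have encoded only potentially counteracting evidence (such as a failed exploit, or any test of a machine believed to be deceptive) as evidence events. A changed belief after evidence is reported as disconfirmation, the pattern examined separately in the Results.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class Event:
    kind: str   # "belief" (stated or inferred) or "evidence" (e.g. a failed exploit)
    label: str  # hypothetical labels, e.g. "high_value", "deceptive", "failed_exploit"


def classify_case(events: Sequence[Event]) -> Optional[str]:
    """Return "confirmation", "disconfirmation", or None for one per-IP case.

    Looks for: a belief, then at least one evidence event, then the next belief.
    Holding the same belief afterwards is scored as confirmation bias; a changed
    belief is scored as disconfirmation. Only the first such pattern is reported.
    """
    prior: Optional[str] = None  # most recent belief label
    saw_evidence = False         # has evidence followed that belief yet?
    for event in events:
        if event.kind == "evidence":
            if prior is not None:
                saw_evidence = True
        elif event.kind == "belief":
            if prior is not None and saw_evidence:
                return "confirmation" if event.label == prior else "disconfirmation"
            prior = event.label
            saw_evidence = False
    return None
```

Under this sketch, a case of beliefs only (like IL>IMH>IL) or a belief followed by a test with no later statement both return `None`, matching the left-hand examples that show no CB.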

Framing Effect Scoring

The frame in Tularosa was created by the psychological deception manipulation. Recall that in the informed conditions, participants were told that deception might be present on the network. This suggestion acts as a negative frame because it adds uncertainty and risk to decision-making and related behavior (e.g., interactions with machines). Participants in the uninformed conditions, in contrast, viewed the network without this frame and may have been more likely to interact with machines generally. To test whether the frame influenced behavior, the first report of each IP was examined. The framed (informed) groups were expected to rate more IPs as “uncertain” (UN) on first report and to be more likely to report deception.
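The first-mention analysis can be sketched as follows. This Python sketch assumes hypothetical `(participant, ip, timestamp, code)` tuples and a hypothetical `condition_of` mapping from participant to condition; it keeps only each participant's earliest report of each IP, counts those scored "UN", and averages over all participants in a condition (including participants with no UN first mentions), which is one plausible reading of the per-condition means reported later.

```python
from collections import Counter


def first_mentions(reports):
    """Map (participant, ip) to the code of that IP's earliest report.

    reports: iterable of (participant, ip, timestamp, code) tuples (hypothetical).
    """
    first = {}
    for participant, ip, _ts, code in sorted(reports, key=lambda r: r[2]):
        first.setdefault((participant, ip), code)  # keep only the earliest code
    return first


def uncertain_counts_by_condition(reports, condition_of):
    """Count first mentions scored "UN", grouped by each participant's condition."""
    counts = Counter()
    for (participant, _ip), code in first_mentions(reports).items():
        if code == "UN":
            counts[condition_of[participant]] += 1
    return counts


def mean_uncertain_by_condition(reports, condition_of):
    """Mean number of "UN" first mentions per participant in each condition."""
    totals = uncertain_counts_by_condition(reports, condition_of)
    n_participants = Counter(condition_of.values())
    return {c: totals[c] / n for c, n in n_participants.items()}
```

Restricting the analysis to first mentions follows the expectation that framing acts at the first decision opportunity; later reports of the same IP are ignored by `first_mentions`.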

Results

Data from ten participants were removed entirely from both days because an experiment administrator error placed them on the wrong network on both days. Of the remaining participants on Day 1, 17 did not provide chat data, 3 were removed because they were assigned to the wrong environments, and 2 used incorrect IPs or failed to mention IPs in their reports. This left usable data from 106 participants for Day 1.

On Day 2, 13 participants did not report chat data, and 5 failed to specify IPs in their reports or referred to non-existent IPs, leaving usable data from 109 participants. This led to slight differences in numbers across conditions, as seen below.

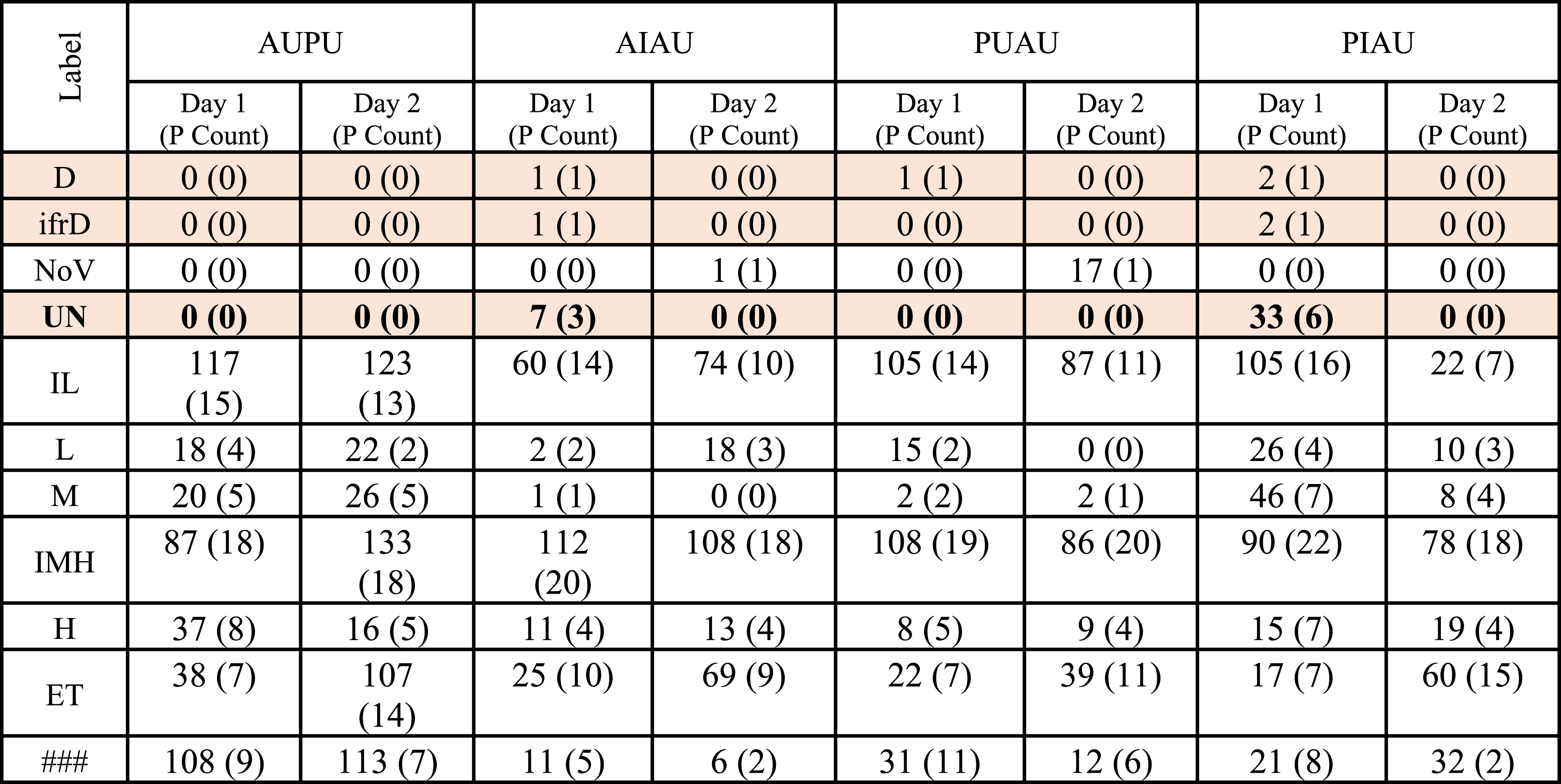

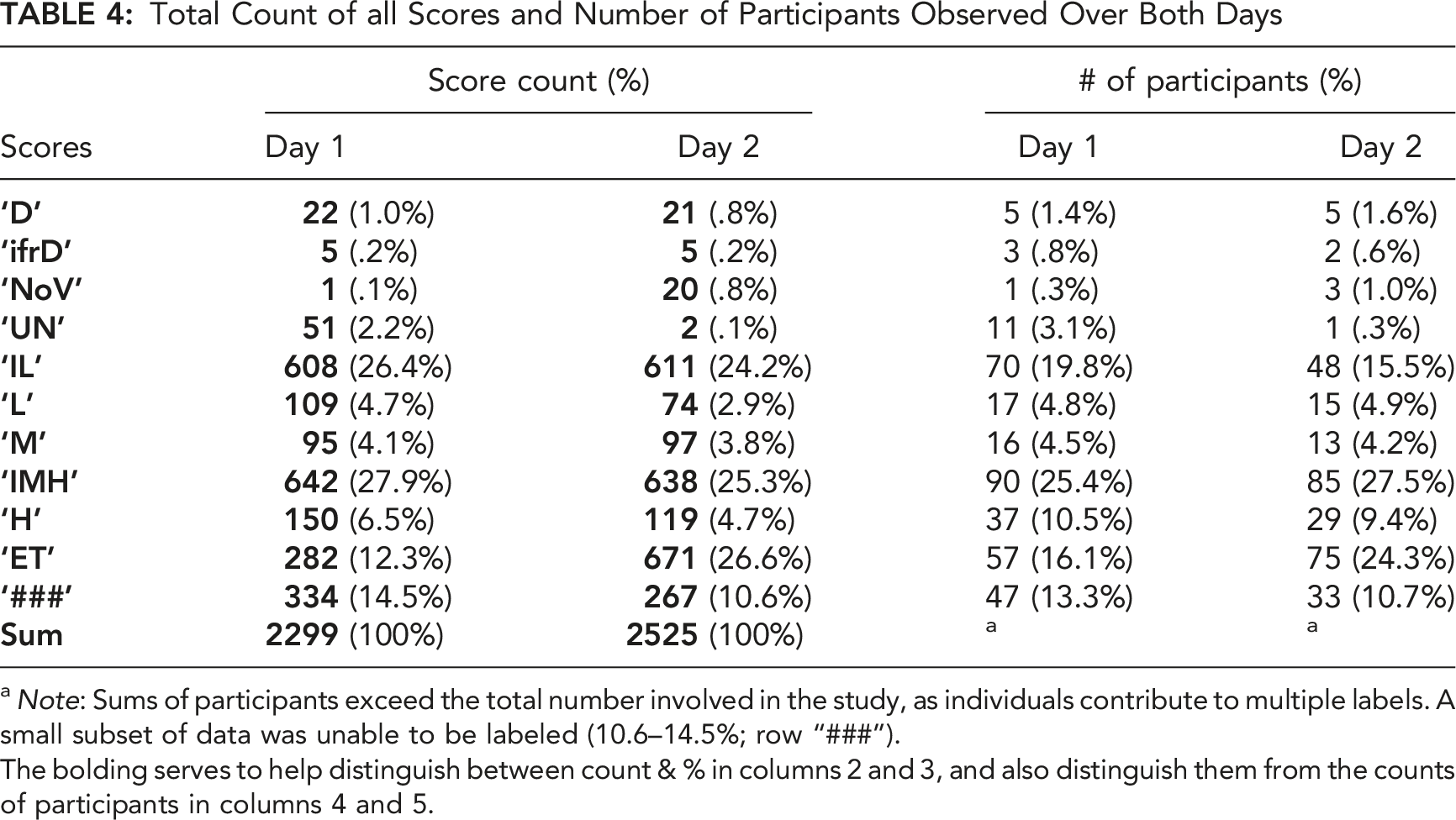

Total Count of all Scores and Number of Participants Observed Over Both Days

a Note: Sums of participants exceed the total number involved in the study, as individuals contribute to multiple labels. A small subset of data could not be labeled (10.6–14.5%; row “###”).

Bold type distinguishes counts from percentages in columns 2 and 3 and distinguishes both from the participant counts in columns 4 and 5.

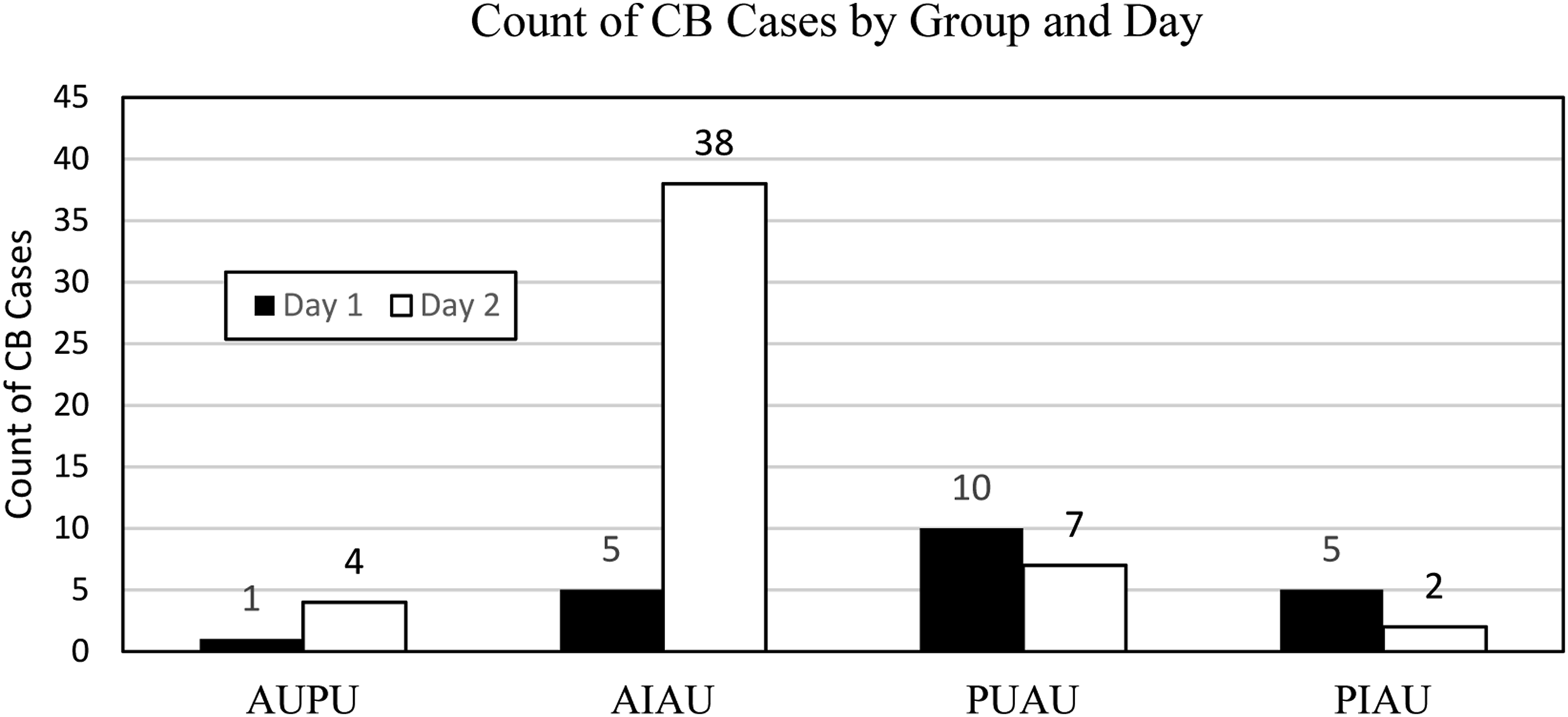

Confirmation Biases Cases

A total of 72 cases of confirmation bias were found in the dataset, across 28 unique participants (Figure 3; see also Supplemental Material Table 4). The number of cases appeared to vary between conditions, and a few participants on Day 2 accounted for more than half of the total. It is likely that, given the requirement for testing and exploitation, participants on this day were simply more likely to perform those behaviors, although it is unclear why more cases were not observed in other groups.

Confirmation bias cases by group and day.

To gauge how common CB really was, counts were weighted by the number of IPs each participant interacted with in the network. When the number of IPs where CB was found was divided by the total number of IPs the participant reported interacting with, the proportion of CB cases ranged from less than 1% to over 7%. While this indicates the presence of CB, as hypothesized, it represents a small portion of interactions overall. Nevertheless, as noted above, the method of scoring confirmation bias required that participants attempt a test or exploit of a system. Since testing and exploitation are later stages in attacks, these numbers may under-represent the total amount of CB occurring. Furthermore, the experiment was not designed to elicit these biases, and the sample was limited by self-reported data, including the frequency and completeness with which participants chose to report. Participants presumably did not report every interaction they had throughout the two days.
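The per-participant weighting above is a simple proportion. A minimal Python sketch, assuming a hypothetical function name and inputs given as lists of reported IP identifiers:

```python
def cb_proportion(cb_ips, reported_ips):
    """Share of a participant's reported IPs that had at least one CB case.

    cb_ips: IPs where a confirmation bias case was scored (hypothetical input).
    reported_ips: every IP the participant reported interacting with.
    """
    reported = set(reported_ips)
    if not reported:
        raise ValueError("participant reported no IPs")
    return len(set(cb_ips) & reported) / len(reported)
```

For instance, one CB IP among four reported IPs gives 0.25; the observed range of under 1% to over 7% implies far more reported IPs per CB case than in this toy example.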

Confirmation bias also appeared in most of the cases in the data that involved testing or exploit behavior at any level (72 in total). When explicitly looking for cases in which participants changed their minds about a machine or system’s value after a test or exploit (which would be evidence of disconfirmation), only four cases were found. Of those four, three involved participants believing they had found a decoy system when the system was real. These limited results suggest that, at least as reflected in their reports, operators more often confirm than test their beliefs about system value. However, the report-based measure has limitations, including its reliance on our scoring method, its restriction to reports that participants submitted when they chose rather than on a required basis, and the narrowed view of behavior imposed by the reporting goals.

For the purposes of this manuscript, comparative analyses between the deception conditions were moved to the supplementary material. Briefly, both decoy-present conditions and psychologically informed groups had more confirmation bias cases on average than absent conditions on Day 1, where the comparison is more easily interpreted, but not on Day 2 (see Supplement Section B).

Framing Effect Results

The framing effect assessment analyzed the first report of each IP and the case it began. Those values were then used to examine two hypotheses: first, that the informed groups would be more likely to initially rate IPs as uncertain, and second, that this would be more likely when the informed condition was combined with decoys. Framing is expected to occur at the first decision opportunity (Druckman, 2001; Tversky & Kahneman, 1981, 1986). However, participants were allowed to make as many decisions as necessary before reporting what they found important about each machine. On the one hand, repeated decisions are a more natural way of interacting with the world and may help explain the frequency of bias occurrences. On the other hand, each decision that went unreported was a lost opportunity for observation and made individual differences difficult or impossible to interpret.

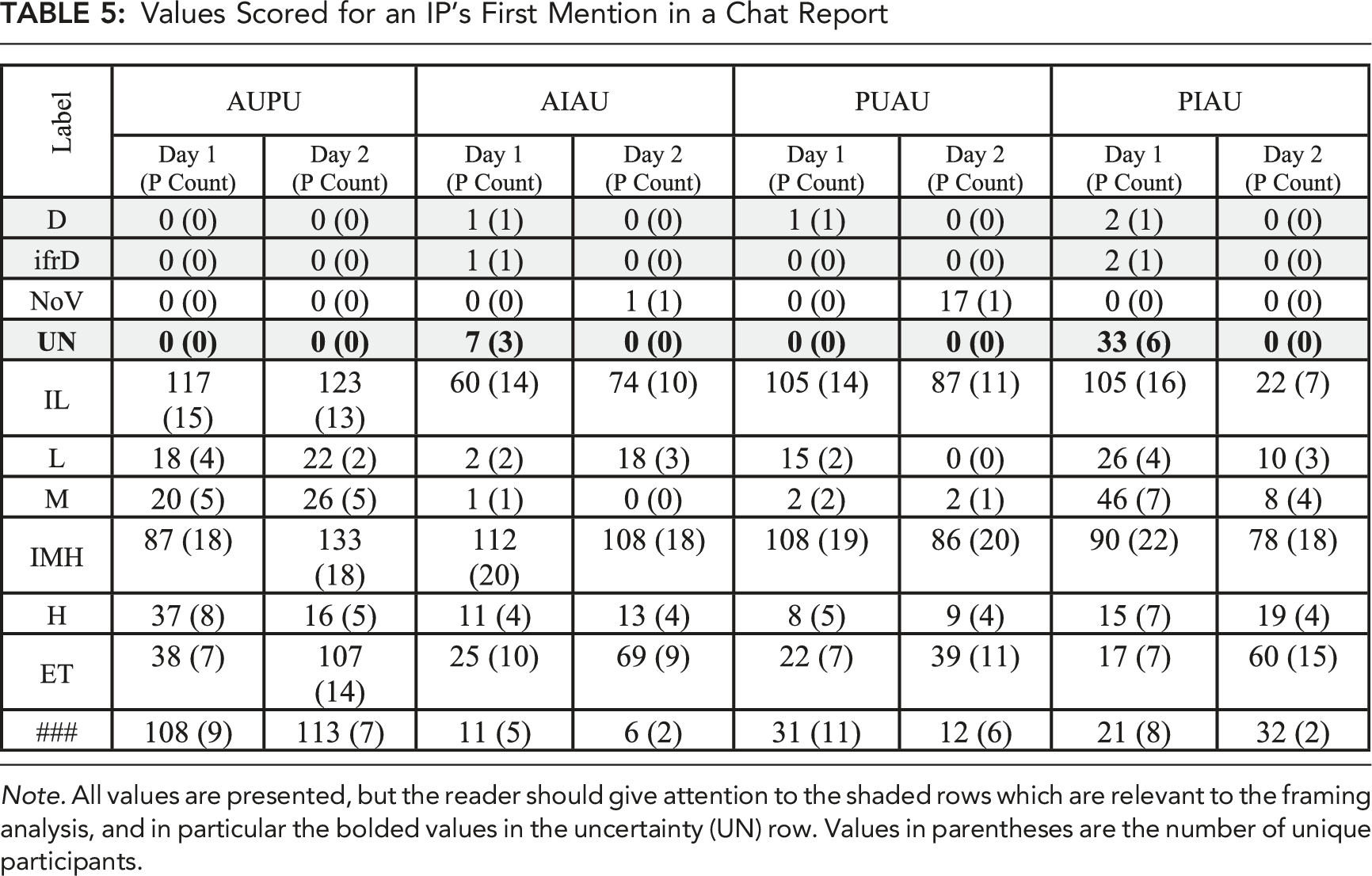

Values Scored for an IP’s First Mention in a Chat Report

Note. All values are presented, but the reader should give attention to the shaded rows which are relevant to the framing analysis, and in particular the bolded values in the uncertainty (UN) row. Values in parentheses are the number of unique participants.

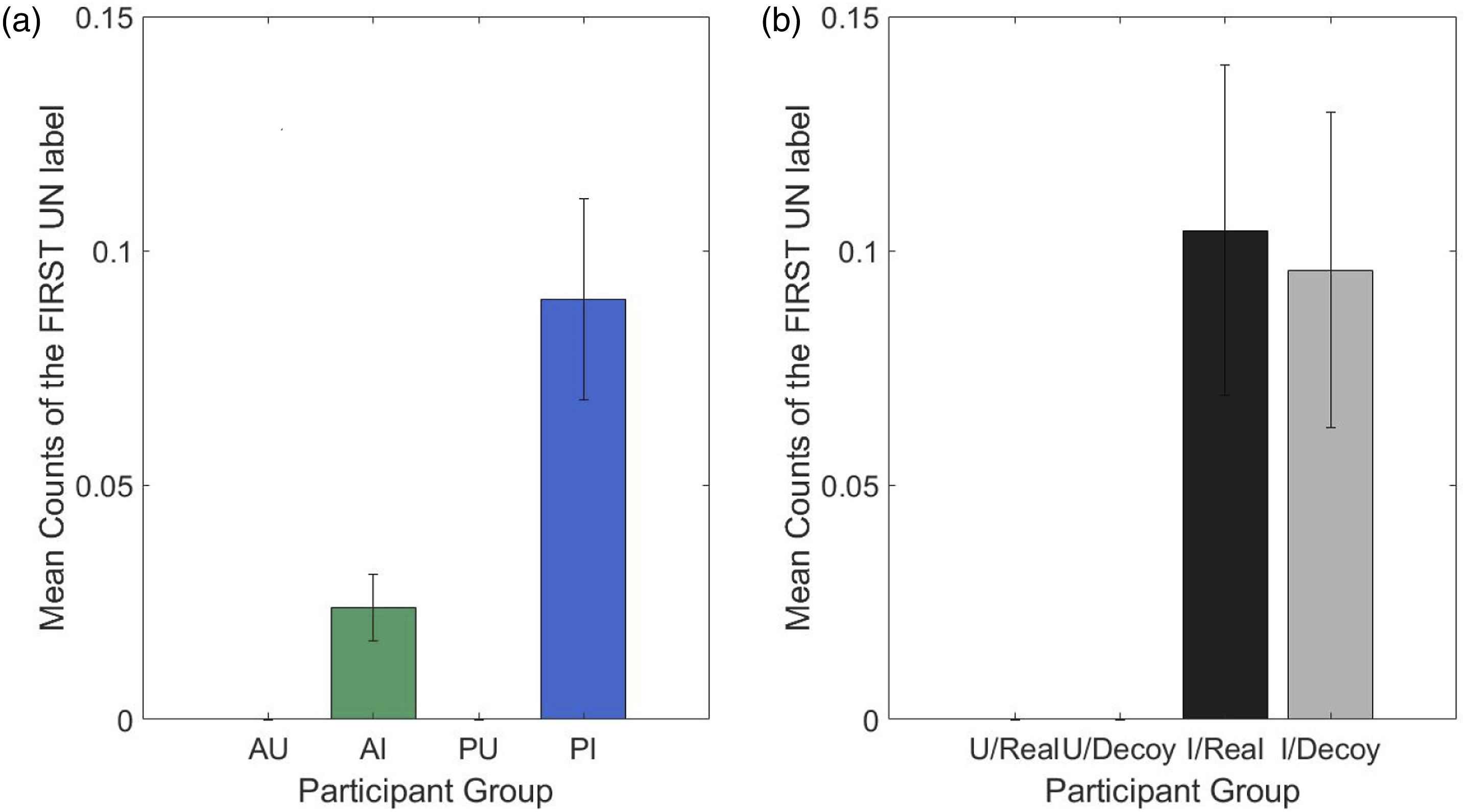

Graph (4a) shows mean counts of UN on Day 1 for an IP’s first mention in a chat report, by condition (none were found on Day 2); graph (4b) shows the same counts by information condition and by whether the machine was actually real or a decoy. Error bars are standard error.

The framing appeared to work similarly on decoy and real systems and appeared more effective when decoys were also present (7 UN counts in the AI condition vs. 33 in the PI condition). In fact, the two informed conditions were the only ones in which uncertain (UN) labels were found.

Discussion

These data offer an exciting and rare view into human performance and attacker cognition in cybersecurity. The presence of two decision-making biases in cyber professionals was revealed. This discovery is a first step toward systematically biasing would-be attackers, which is potentially of great benefit to defenders. Cyber attackers in this experiment exhibited both confirmation bias and framing effects, supporting prior work (Ferguson-Walter, 2020; Ferguson-Walter et al., 2021b; Gutzwiller et al., 2019). These biases were necessarily interpreted and scored in an exploratory fashion, but the scoring followed a novel, reproducible method developed for this context that was still based strongly on the accepted definitions of Tversky and Kahneman and of Nickerson. We note that decision-making biases generally do not have “standard metrics” associated with them and are often interpreted in applied contexts, although theoretical accounts are maturing (Berthet, 2021; Klayman, 1995; Oeberst & Imhoff, 2023).

Confirmation bias, as scored and interpreted with this study’s novel method, occurred in 72 cases and generally occurred for real machines. Confirmation-biased interactions meant that participants held an initial belief (stated or inferred), encountered evidence that could counteract that belief (such as a failed attack), and then held the same belief (stated or inferred). Although CB could also have occurred with initial reports of deceptive machines, followed by any test or exploit attempt and a continued belief of deception, we did not find any such cases in the data. Only four cases of disconfirmation were found across both days. Together, these findings suggest that, at least as reflected in participants’ reports and our interpretation of bias, several participants repeatedly reported the same belief despite evidence that it should change.

The utility of such behaviors to defenders is that attackers keep taking actions in the network (repeated attack attempts, scans, or other interactions) and fail to “move on” to other machines or attack vectors. This persistence is more detectable by network defenders and delays attacker progress; other analyses of the network data from Ferguson-Walter et al. (2019a) show that progress was impeded and attack success diminished in deception-present conditions (Ferguson-Walter et al., 2021b). Attacker detectability also increased, especially in the combined present-informed condition (Ferguson-Walter et al., 2021b). The existence of both confirmation bias and framing effects, as interpreted and scored with our method, may help explain these phenomena.

For defenders, the utility of confirmation bias is that attackers may waste time. The more attackers interact with machines, testing them when they do not need to, the longer they remain in the network, where defenders have a chance to catch them. This tendency to persist despite contradictory evidence has further implications for attackers working in teams. In more team-centric operations, information about the attack plan and status is handed off to a new or incoming operator for further work. The data examined are representative of this type of handoff information. Confirmation bias in operator A may influence the next operator, B, through A’s report: continuing to represent a machine as useful or valuable even after spending time testing it, attempting exploits, and otherwise interacting with it may slow down the team. It also suggests that the machine will be returned to later, potentially exposing the attacker to network security. A pattern of return access may help identify coordinated efforts to attack enterprise networks.

Operational Consequences of Framing Effects

For the framing effects observed, the value to a defender of biasing decisions is more obvious. The data show that framing, according to our scoring method, increased participants’ uncertainty about machines in the scenarios and thereby decreased the likelihood that attackers ever reached a clear understanding of a machine and its value. Such judgments will have operational consequences for attackers (such as whether they inadvertently avoid real systems or touch decoys). As mentioned above, informed conditions targeted more decoy systems (Ferguson-Walter et al., 2021b). Far more “uncertain” ratings were given to real systems on Day 1 (24) than to decoy systems (9), despite a similar number of each being available in the network. More uncertainty about real systems suggests that attackers may ignore real systems under these conditions. Indeed, initial confusion or uncertainty about a machine led to many of these machines being ignored for the rest of the experiment (in 32 of 36 cases, participants did not discuss that IP again in their reports after reporting uncertainty).

Interestingly, when surveyed, participants did not say they would ignore machines when encountering potential deception (Ferguson-Walter et al., 2023). Participants could also have simply avoided making concrete statements about those machines in their reports while continuing to interact with the IPs in other ways later. However, convergent evidence suggests this was not the case: informing participants about deception led them to self-report more confusion and to attempt fewer instances of the most common working exploit (Ferguson-Walter et al., 2021b). Participants informed about deception also made fewer log-in attempts (Ferguson-Walter et al., 2021b).

Limitations

All of the analyses herein were limited by the nature of the data, which were collected as part of a prior investigation into deception. Participants were not required to report on every IP on the network (or every IP they may have interacted with); they reported as instructed, at their own pace, based on their own experience and motivation. Our determinations of confirmation bias and framing effects are subject to these limitations. Restricting the confirmation bias scoring method to interactions with machines is a further limitation: while decision-making is a promising avenue for disrupting attackers, interactions with machines are by no means the only place CB may be present. Finally, our focus on confirmation bias and framing effects limited our examination of decision-making biases more broadly, despite the existence of other cyber-relevant biases (Johnson et al., 2020; Johnson et al., 2022).

Scoring relied on the judgment of cybersecurity experts and cognitive psychologists. Complete agreement among scorers was required, and strict definitions of biased behaviors were adopted, to mitigate the risk of cherry-picking or overclaiming. As a result, the generalizability of the methods could be questioned. However, outside of specific certification “tests” such as the Offensive Security Certified Professional (OSCP) exam, every realistic study contains some unique elements stemming from the design and build of the network and the configuration of the services, ports, and so on that are used. A generalizable scoring method for biases would nonetheless be useful.
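
The complete-agreement requirement is effectively a unanimity rule: a behavior counts as evidence of bias only if every scorer labels it that way, and any disagreement leaves it without a consensus label. A minimal Python sketch, assuming each scored behavior is stored as a mapping from rater to label (the rater names, labels, and ratings are hypothetical):

```python
def consensus_label(ratings):
    """Return the label every rater assigned, or None if they disagree.

    `ratings` maps a (hypothetical) rater name to that rater's label for one
    reported behavior, e.g., "CB" or "no CB".
    """
    labels = set(ratings.values())
    if len(labels) == 1:
        return labels.pop()
    return None  # disagreement (or no ratings): no consensus label


def count_consensus(items, target):
    """Count behaviors that all raters unanimously labeled `target`."""
    return sum(1 for ratings in items if consensus_label(ratings) == target)


# Hypothetical ratings for three reported behaviors (not study data).
items = [
    {"cyber_expert": "CB", "psychologist": "CB"},
    {"cyber_expert": "CB", "psychologist": "no CB"},
    {"cyber_expert": "no CB", "psychologist": "no CB"},
]
print(count_consensus(items, "CB"))  # 1
```

A rule this strict trades sensitivity for fewer false positives, which is consistent with the stated aim of avoiding overclaiming.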

A few minor limitations concern the framing effect. The manipulation of informing participants deviated slightly from standard framing by including only a negative frame. This choice stemmed from the recognition that a positive frame (telling participants there was “no deception”) could have backfired, leading participants to explicitly believe that deception was being used. Most participants in this dataset said they would not typically believe a message stating that deception is present in networks (Ferguson-Walter et al., 2023).

Regarding application, these data come entirely from participant reports, and actual attackers are not going to provide reports to defenders. Although the red team participants were behaving like attackers, they were not actual members of advanced persistent threat (APT) groups, they were not operating in teams, and they were present on the network for a relatively short period of time. A more targeted and controlled study of attackers would help us understand the nature of bias in more detail. However, the dataset was far more realistic and natural than typical experiments and exercises such as capture-the-flag (CTF) competitions. The next step is to use these types of experiments and data collection events to learn to detect and induce such behaviors using only the cyber data that a defender will have access to.

We have evidence that, in some cases, single individuals committed numerous biased behaviors. As a result, the distribution and amount of bias data (each point of evidence of CB or framing) are probably insufficient to explore alternative explanations in depth or to draw strong conclusions. It is certainly possible that the behaviors identified by our exploratory bias scoring are related to a variety of factors, including fatigue, experience, and risk-taking propensity (potentially a multifaceted component incorporating goals, experience, and motivations), as well as the goals or motivations of the attackers themselves. Many of these data were indeed collected in this study (as reported in Ferguson-Walter et al., 2017). However, we did not consider it prudent to relate the two, and we believe that more frequent (and, in the future, perhaps required) sampling of participants’ decisions is necessary to assess the potential influence of these factors on bias.

Future Directions

Advancing the study of cyber attackers will require combining experiments that have high fidelity in both environment and participant population with the targeted use or manipulation of behavior through biases. A longer period of performance may also be necessary to understand the full defensive impact. The next important step is to assess the deliberate employment or creation of bias itself. Given the relative success of general psychologists and behavioral economists at creating biased behaviors in the laboratory, this is likely to be successful. However, designing such manipulations appropriately requires expertise in multiple fields, including cybersecurity and human factors.

Take-Away Findings

• As part of red teaming, cyber operators may be confirming their beliefs about machines and do not appear to engage in as much disconfirmation behavior, as measured in reports.
• Providing information about possible deception on a network increased the occurrence of confirmation bias under our interpretation and scoring method. This co-occurs with negative outcomes for attackers, such as more targeting of decoys, delays in progress, and a reduced likelihood of successful attack.
• A framing effect manipulation (alerting operators to possible deception) increased reporting of uncertainty, regardless of whether decoys were present in the network. As hypothesized, framing under our interpretation was more effective when actual deceptive systems were present on the network.
• Framing effects increased uncertainty about machine validity and resulted in less interaction with those machines, regardless of their value. This could provide an advantage to defenders.

Supplemental Material

Supplemental Material, sj-pdf-1-edm-10.1177_15553434231217787 for Exploratory Analysis of Decision-Making Biases of Professional Red Teamers in a Cyber-Attack Dataset by Robert S. Gutzwiller, Hansol Rheem, Kimberly J. Ferguson-Walter, Christina M. Lewis, Chelsea K. Johnson and Maxine Major in Cognitive Engineering and Decision Making

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was funded by the Laboratory for Advanced Cybersecurity Research.

Supplemental Material

Supplemental material for this article is available online.

References
