Abstract

A richer approach to studying Decision Support System (DSS) interactions is required to understand and predict the nature of actual use in the workplace. We used questionnaire and interview techniques to examine workers’ experiences relating to DSS use in naturalistic settings. We aimed to: 1) Reveal what workers perceive to be the most important factors when deciding whether to accept support from a DSS and 2) Elicit patterns that emerge from DSS users’ recounted experiences using the systems, which may impact their future use. Current and prospective DSS users (N = 93) from numerous industries responded to a questionnaire relating to the factors they perceive to influence their use of DSSs. Subsequently, a retrospective interview protocol was employed to investigate the experiences of a subset of DSS users (N = 10). The questionnaire results underscore a range of factors considered to be very important to the acceptance of DSSs (i.e. decision quality; decision importance; decision risk; historical accuracy; decision accountability; and system comprehension). Further, a series of interconnected themes relating to workers’ use of DSSs were identified from the interview transcripts using thematic analysis. We discuss how these issues may impact workers’ intentions to use DSSs in the workplace, and advocate for the use of naturalistic decision-making techniques to study technology acceptance.

Introduction

Decision Support Systems (DSSs) typically comprise computerised information systems designed to support individuals, teams and/or organisations in making decisions (Zhang et al., 2015). A well designed DSS will help decision makers by collating, presenting, and/or integrating useful information from an array of sources and modalities (Morrison et al., 2010). DSSs are currently used across a range of industries and sectors. For instance, in healthcare, computer-based DSSs can advise medical practitioners of drug–drug contraindications prior to prescribing medications (Chaffee & Zimmerman, 2010), while in finance DSSs can recognise trading signals and advise users on decisions to ensure they maximise their return on investment based on recent market activity (Chiang et al., 2016).

The effectiveness of DSSs can be largely attributed to two factors. Firstly, their capacity to reduce the complexity of a task for the user, for instance, by comparing the critical features associated with multiple options (Allen & Abate, 1999). Secondly, DSSs can guide inexperienced users in a manner that protects the integrity of the system (Liu et al., 2021). As a result, some DSS interfaces can fulfil a training function for less experienced workers (Perry et al., 2013).

Although the nature of guidance provided by DSSs will differ across device and context, they are generally designed to lead users to one or more plausible courses of action. For instance, DSSs are currently being used to predict the path of bush fires (Zarghami & Dumrak, 2021), incorporating a range of real-time factors into their calculations (e.g. wind and precipitation), and providing users with projections on the way a fire is likely to spread. Such forecasts can assist in the combat of bush fires in a way not previously possible. However, as the use of DSSs and the consideration of their outputs is typically discretionary in nature (Davern & Kauffman, 2000; Parkes, 2012), users’ acceptance of support is often suboptimal. For instance, users of clinical DSSs have been found to over-rely on system suggestions in uncertain settings, demonstrating an automation bias, even when they are of poor quality (Bussone et al., 2015). Comparatively, under-reliance on DSS warnings has been observed in some domains, such as aviation, which has resulted in catastrophic outcomes (Gallagher, 2002). Relatedly, aversion to accepting judgements produced by algorithm-based systems has been frequently cited in the literature (Burton et al., 2020; Dietvorst & Bharti, 2020; Mahmud et al., 2022).

The Technology Acceptance Model (TAM; Bagozzi et al., 1992; Davis, 1989) and its variations (e.g. TAM2; Venkatesh & Davis, 2000) conceptualise a worker’s intention to use and adopt a new technology as being predominantly determined by its perceived ease of use (i.e. the degree to which an individual perceives the technology to be easy to learn, use and understand) and usefulness (i.e. the degree to which an individual perceives the technology to be relevant and beneficial to their needs). According to the TAM, these factors directly influence an individual’s attitude toward using a technology, which in turn influences their intention to adopt it, and ultimately their actual usage.

This framework has been used extensively in studying workers’ willingness to use newly introduced DSSs at work (e.g. Al-Rahmi et al., 2019; Dulcic et al., 2012; Hu et al., 1999; Mir & Padma, 2020; Nunes et al., 2018). However, despite its prolific application, the TAM has been criticised as being too simplistic, ignoring the complexities associated with real-world use of technology, including individual differences in preferences and attitudes among users and their interactions with workplace and organisational influences, and other structural imperatives (Ajibade, 2018; Bagozzi, 2007; Chuttur, 2009), as well as the complex relationships between these factors. Indeed, the relatively modest predictive capacity for models like the TAM (Legris et al., 2003) has underlined the absence of significant factors in these models and instigated a move to alternative paradigms that may afford a richer approach to studying DSS interactions, which may be better equipped to understand and predict the nature of DSS use in the workplace.

The current study sought to broaden the range of factors considered in this field beyond users’ perceptions of usefulness and ease of use (akin to the TAM; Davis, 1989), to capture a wider array of factors that have proven influential in users’ decisions to accept or reject the technology in the workplace. In doing so, we employed a mixed-methods approach whereby we used: 1) a purpose-built questionnaire that was designed to assess a sample of current and prospective DSS users’ perceptions of the technology, including quantitative ratings of workers’ perceived importance of numerous factors (drawn from the human factors and human–computer interaction literatures) considered when deciding whether to accept DSSs and 2) a semi-structured interview protocol that was grounded in a naturalistic decision-making perspective.

The Naturalistic Decision-Making (NDM; Klein, 2008) paradigm prioritises the study of decision-making in naturalistic, real-world conditions. The approach emphasises the examination of how people make critical decisions in complex environments, characterised by a range of challenges and constraints (e.g. variations in time-pressure, uncertainty, experience, and contextual and organisational influences). These challenges and constraints typically shape people’s recalled decision processes and, in turn, the processes they apply when faced with similar cases in the future.

An NDM lens and its associated procedures (e.g. variations of Cognitive Task Analysis; Crandall & Hoffman, 2013) have previously been applied to inform training development (e.g. in professional sport; Johnston & Morrison, 2016) and the design of DSSs that align with users’ cognitive decision processes (e.g. in the nuclear power industry; Zsambok, 2014). However, they have rarely been used to diagnose existing challenges associated specifically with DSS use during critical decisions. Doing so would extend previous approaches to understanding workers’ acceptance of DSS technology by: 1) situating DSS use within complex, real-world operations, and relatedly 2) eliciting a rich account of the features of DSSs that are implicated by users as impactful challenges or enablers to successful decision-making, and are therefore likely to impact future system use (i.e. technology acceptance).

Current Study

This study aimed to 1) Reveal what workers perceive to be the most important factors when deciding whether to accept support from a DSS and 2) Elicit patterns that emerge from DSS users’ recounted experiences of critical incidents involving DSSs, which may impact their future use of DSSs. The study adopted a mixed-methods approach, which captured quantitative ratings of perceived importance of numerous factors, as well as in-depth qualitative accounts of lived experiences that have proven influential in workers’ decisions to use DSSs in real-world environments. The questionnaire was conducted prior to the interviews and was designed to generate a descriptive snapshot of current and prospective DSS users’ perceptions of DSS technology. The intention was to establish a somewhat generalisable view of the types of factors that were most prominent across a range of users, industries, and contexts. We then invited a subset of these participants to complete the interview method to both triangulate and deepen the findings from the questionnaire.

For the interviews, a retrospective interview protocol based on the Critical Decision Method (CDM; Hoffman et al., 1998; Klein et al., 1989; Militello & Hutton, 1998) was designed to investigate the experiences of several DSS users, and specifically the challenges that they recall arising from using DSSs in making critical decisions in their workplace. To capture a broad cross-section of DSS experiences not limited to specific work contexts or DSSs, the questionnaire and interviews were conducted with workers from a broad range of industries (e.g. airline operations, ambulance and patient transport, health and welfare, and security services). In this research we aimed to answer the following questions: 1) What do workers perceive to be the most important factors when deciding whether to accept support from a DSS? and 2) What patterns – concepts and relationships between concepts – emerge from DSS users’ recounted experiences that may impact their future use of DSSs?

Method

Participants

Questionnaire

Respondents (47 women, 46 men) comprised a sample of adult workers recruited via several means, including: targeted invitations to the research team’s professional networks; advertisement on an online dedicated social media site; advertisement on the Centre for Work Health and Safety’s (CWHS) social media site; and the Prolific recruitment platform (https://www.prolific.co/). Prolific respondents were monetarily reimbursed for their time spent completing the questionnaire. All questionnaire participants were also invited to take part in the subsequent interview method pending eligibility (i.e. having had experience in using a DSS in their workplace). Women participants ranged in age from 18 to 70 years (M = 32.45, SD = 12.69), and men from 18 to 80 years (M = 36.24, SD = 11.79). Respondents reported an average of 9.58 years (SD = 10.26; range 1–44 years) of experience in their field of work, which was situated across 17 industry categories.

Interviews

Ten DSS users (six men and four women) comprised a sample of industry professionals with 7–36 years of operational experience. Participants comprised a subset of the questionnaire sample who responded to an invitation to participate that was included at the end of the questionnaire. Participants’ professional roles varied and included a commercial pilot, a psychologist, a paramedic, an actuary, two cardiac sonographers, an Emergency Department (ED) physician, an Intensive Care Unit (ICU) nurse, a wound care nurse, and an airport security officer. This broad range of workers from multiple industries was chosen to identify commonalities that might transcend specific work settings or industries and avoid a potentially infinite number of localised investigations.

Participation was sought from those who were fluent in English, were a minimum of 18 years of age, and possessed firsthand operational experience in using DSSs within their work role. A $100 gift voucher was offered to participants who completed the interview as a reimbursement for their time.

Materials

Questionnaire

The questionnaire was developed by the research team to assess a sample of current and prospective DSS users’ perceptions of the technology, including quantitative ratings of workers’ perceived importance of 25 factors considered when deciding whether to accept DSS-generated decisions. The measure comprised a mix of open-ended and ordinal rank items capturing information relating to worker demographics (i.e. workers’ age, gender, industry and years of experience), awareness of, and experience using DSSs, knowledge of DSSs, and the factors impacting DSS acceptance (rated in relation to relative importance on a 5-point Likert-type scale ranging from ‘not important’ to ‘extremely important’). The factors impacting user acceptance were identified as being influential in the acceptance of DSS-related technology within the extant literature, including a systematic literature review conducted by the research team. The provision of open-ended items within the questionnaire provided an opportunity for factors, previously unidentified, to be tabled by participants as influential factors in their acceptance of DSS-generated outputs. A readability analysis (Reading ease: 52.1; and Grade Level: 8) and cognitive interview (n = 2) were employed to ensure a good level of reader comprehension.
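The text does not name the readability formulas behind the reported scores. As an illustrative sketch only, assuming the standard Flesch Reading Ease and Flesch–Kincaid Grade Level formulas were used (an assumption, not something the source confirms), the two scores can be computed from simple text counts as follows. The function names and the example counts are hypothetical.

```python
def flesch_reading_ease(words: int, sentences: int, syllables: int) -> float:
    """Flesch Reading Ease: higher scores indicate easier text."""
    return 206.835 - 1.015 * (words / sentences) - 84.6 * (syllables / words)


def flesch_kincaid_grade(words: int, sentences: int, syllables: int) -> float:
    """Flesch-Kincaid Grade Level: approximate US school grade."""
    return 0.39 * (words / sentences) + 11.8 * (syllables / words) - 15.59


# Hypothetical counts for a short passage (not the study questionnaire).
ease = flesch_reading_ease(words=100, sentences=5, syllables=150)
grade = flesch_kincaid_grade(words=100, sentences=5, syllables=150)
print(round(ease, 1), round(grade, 1))
```

Counting syllables reliably requires a dictionary or a heuristic, so the sketch takes the counts as inputs rather than deriving them from raw text.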

Interview

The research employed a semi-structured retrospective interview protocol based on the Critical Decision Method (CDM; Hoffman et al., 1998; Klein et al., 1989; Militello & Hutton, 1998). CDM methodology requires that participants first recall a specific incident from their past work experiences that holds special significance relative to other ‘everyday’ occurrences. During recall, the interviewer constructs a timeline of the event thereby identifying several key decision points that will be of ongoing interest in the interview. The interviewer then uses probing questions to target more specific information regarding interviewees’ decision-making process (e.g. the strategies used, arising challenges and common or anticipated errors). In the current study, special emphasis was placed on eliciting details relating to users’ perceived challenges in using the technology now and into the future. The interviewer also asked additional ‘hypothetical’ questions to respondents to determine the potential impacts of incident variations (e.g. how might your experience have differed under conditions of increasing time-pressure?). The interview protocol can be seen in the supplemental materials.

Procedure

After participants’ initial engagement with the researcher, whether through targeted invitations or advertisement, they were provided with the study’s information statement, which outlined the purpose of the study and described the questionnaire processes, the management of data, and their right to withdraw without penalty. Participants were directed to complete the questionnaire via the online Qualtrics platform. After completion of the questionnaire, all participants were invited to take part in the subsequent interview method pending eligibility (i.e. having had experience in using a DSS). At this point participants were provided with information about the interview process, including the time commitment, use of a pseudonym during the interview, audio recording process and an invitation for the participant to ‘member check’ the text-based interview transcript following the interview (details below). This information also advised participants on the management of the data being captured during the study, and the support services available if participants experienced discomfort while disclosing adverse experiences (e.g. workplace accidents).

Following participants’ review of the information statement, they were asked to indicate their willingness to participate in the study by providing their email address. Participants were then contacted by the researcher via email to schedule a time and date for the interview to take place. Participants were sent an online meeting invitation, which included a reminder that they would be asked to recall a particular event or situation where they interacted with a DSS in their workplace. The incident was to come from their own personal lived experience, and to be one that was complex and nonroutine in nature (consistent with conventional CDM protocol; Militello & Hutton, 1998).

All interviews were conducted individually via the online platform Zoom (Zoom Video Communications, 2021). Interviews ran for between one and one and a half hours. Permission was sought from participants to record the interview, and all participants were asked to assume a pseudonym to ensure that no identifying information was captured.

At the commencement of the interview, participants were asked to provide an overview of their role and years of experience, and to describe the DSS they would be discussing in their account. The interviewer then took the participants through the stages of the CDM protocol (see Materials section). The audio recording of the interview was then transcribed to text and emailed to participants to review and make any amendments. This review formed part of the ‘member-checking’ process (Birt et al., 2016), which aimed to improve the trustworthiness of the data (by correcting any transcription errors) and to enhance privacy and confidentiality by allowing participants to remove any aspects of their transcript they were no longer comfortable having disclosed. Participants had seven days to review and request any amendment to the transcript or decide to withdraw their data from the final study altogether.

Design and Analysis

The study used a mixed-methods approach comprising quantitative and qualitative components. For the questionnaire data, a series of frequency analyses were conducted to generate a descriptive snapshot of current and prospective DSS users’ perceptions of DSS technology and the factors that affect their intention to use it. For the interview data, following the transcript validation process, transcripts were subjected to a thematic analysis approach (Braun & Clarke, 2006), which involved an exploration of the data to identify broad patterns (i.e. concepts and relationships between concepts) across DSS users. This approach was chosen as there was no pre-existing framework that would sufficiently define the parameters for such a varied range of workers, work domains, and decision scenarios.

Consistent with the procedure outlined by Braun and Clarke (2006), a six-phase process of analysis was undertaken. Firstly, upon receiving the transcribed interviews, two coders (BM and KB) familiarised themselves with the data by reading the transcripts to gather the breadth and depth of the content, searching for meaning, patterns and initial theme ideas. The second phase involved the generation of initial codes from the data, with the purpose of organising them into meaningful groups. A feature of the data was coded if it appeared interesting to the coders and may have recurred throughout the transcript. The two coders then met to discuss their resultant codes and identify any areas of discord.

Phase three required each coder to re-focus the analysis at a broader level, by sorting the different codes into potential themes. Once the codes were collated into potential themes, phase four involved refinement of the themes. This is an iterative process whereby each coder moved back and forth between codes and themes to ensure the integrity of the developing thematic map. This also included undertaking a disconfirming case analysis. Phase five saw the development of the final thematic map wherein theme names, descriptions, and relationships were identified (NM). To help reveal the prevalence of themes in the sample, as well as their frequency of relationship with other themes, counts were generated for codes within each theme for each participant, as well as for when features from themes co-occurred in the transcripts (BM). For example, where an interviewee described an experience relating to their degree of freedom when using a DSS, and how it was influenced by the system’s explainability, this was counted as instances of two codes from the separate resultant themes, as well as an instance of relationship between them. This process is similar to that described by Bradley et al. (2007). Finally, phase six involved the write-up of the themes, providing sufficient evidence of the themes to demonstrate their relevance.
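The counting step described above can be sketched in code. This is a minimal illustration under stated assumptions, not the authors' procedure: the segment structure, participant labels, and the `count_themes` and `theme_shares` helpers are hypothetical, and each coded passage is assumed to carry at most one code per theme.

```python
from collections import Counter
from itertools import combinations

# Hypothetical coded passages: each entry lists the themes whose codes
# were applied to one passage of one participant's transcript.
coded_segments = [
    {"participant": "P1", "themes": ["Control and Autonomy", "System Transparency"]},
    {"participant": "P1", "themes": ["System Capability"]},
    {"participant": "P2", "themes": ["Control and Autonomy", "System Capability"]},
    {"participant": "P2", "themes": ["Control and Autonomy"]},
]


def count_themes(segments):
    """Count coded instances per participant and theme, and theme co-occurrences."""
    code_counts = Counter()  # (participant, theme) -> coded instances
    pair_counts = Counter()  # (theme_a, theme_b) -> co-occurrences in one passage
    for segment in segments:
        themes = sorted(set(segment["themes"]))
        for theme in themes:
            code_counts[(segment["participant"], theme)] += 1
        # Every pair of themes in the same passage is one relationship instance.
        for pair in combinations(themes, 2):
            pair_counts[pair] += 1
    return code_counts, pair_counts


def theme_shares(code_counts):
    """Proportion of all coded instances that fall under each theme."""
    totals = Counter()
    for (_participant, theme), n in code_counts.items():
        totals[theme] += n
    grand_total = sum(totals.values())
    return {theme: n / grand_total for theme, n in totals.items()}


code_counts, pair_counts = count_themes(coded_segments)
shares = theme_shares(code_counts)
```

In this toy data, a passage tagged with two themes contributes one instance to each theme and one instance to their pairing, mirroring the degree-of-freedom and explainability example in the text.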

Results

Questionnaire

DSS Usage

More than a third of respondents (37.8%) reported that DSSs were currently available for use in their workplace, with 26.7% of all respondents reporting that they have used DSSs before. Of those that reported DSSs not being presently available in their field of work (62.2%), 50% anticipated that they were likely to be used in their field of work in the future.

Factors Impacting DSS Usage

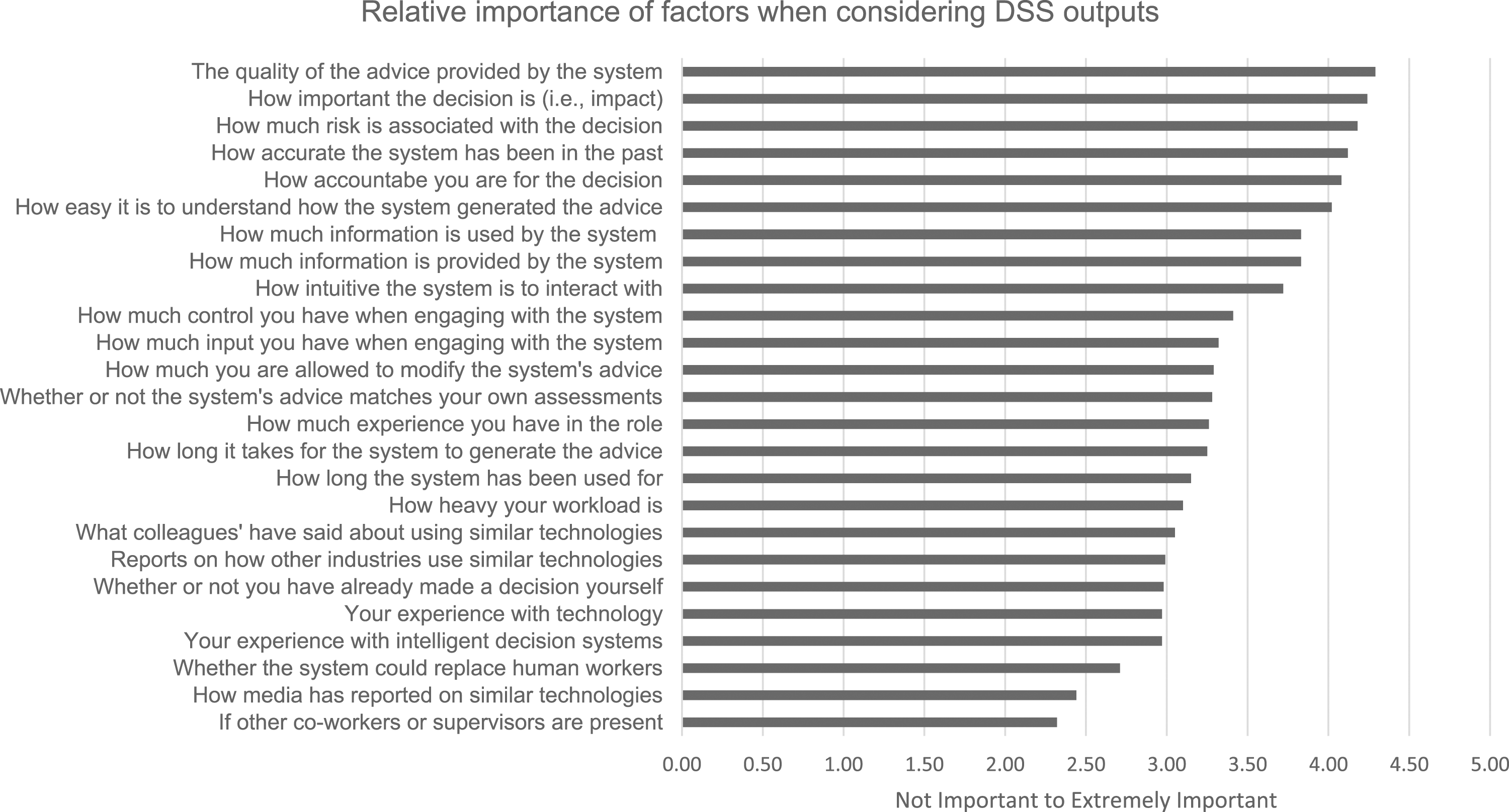

The mean ratings for each of the 25 factors are shown in Figure 1. Six of the 25 factors were considered to, on average, have a relatively high level of importance for respondents (i.e. rated as very important to extremely important), including the quality of the advice provided by the system (M = 4.28, SD = .92); how important the decision is (M = 4.21, SD = .86); how much risk is associated with the decision (M = 4.18, SD = .92); how accurate the system has been in the past (M = 4.11, SD = .93); how accountable you are for the decision (M = 4.08, SD = .89); and to what degree the system can be comprehended (M = 4.02, SD = 1.04). Disproportionate respondent numbers across the industry categories precluded an inferential analysis of factor importance by industry. Participants’ ratings of relative importance of factors when considering DSS use.
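The descriptive summary reported here (a mean and standard deviation per factor, with the most important factors singled out) can be sketched as follows. The ratings are invented for illustration and are not study data. The cut-off of 4.0 for "very important" is an assumption inferred from the 5-point scale, and the `summarise` helper is hypothetical.

```python
from statistics import mean, stdev

# Hypothetical ratings on the 5-point scale
# (1 = not important, 5 = extremely important). Not study data.
ratings = {
    "quality of advice": [5, 4, 5, 4, 3],
    "hypothetical factor B": [2, 3, 3, 4, 2],
}


def summarise(ratings, threshold=4.0):
    """Return mean, sample SD, and a high-importance flag for each factor."""
    summary = {}
    for factor, scores in ratings.items():
        m = mean(scores)
        summary[factor] = {
            "mean": round(m, 2),
            "sd": round(stdev(scores), 2),  # sample standard deviation
            "high": m >= threshold,         # assumed cut-off for "very important"
        }
    return summary


summary = summarise(ratings)
for factor, stats in summary.items():
    print(factor, stats)
```

Because the ratings are ordinal, a frequency table or median per factor would be a reasonable alternative summary; the mean and SD are used here only because that is what the text reports.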

Participants reported an additional 19 influential factors in their open-ended responses, including: financial cost (n = 4); job requirements (n = 1); system reliability (n = 8); degree of users’ professional knowledge (n = 1); evidence-base for system efficacy (n = 3); understanding of training data used in algorithms (n = 1); the uncertainty of the situation (n = 1); who the developers were, including their expertise and reputation (n = 4); the capacity and rate of learning (n = 2); how up-to-date the system is (n = 1); user’s mood (n = 1); whether error-free (n = 1); legal obligations (n = 2); data storage (n = 1); ethical considerations (n = 3); human involvement with data (i.e. sense-checking) (n = 1); whether offensive or defensive responses used (n = 1); data quality (n = 1); and standard operating procedures (n = 1).

Interviews

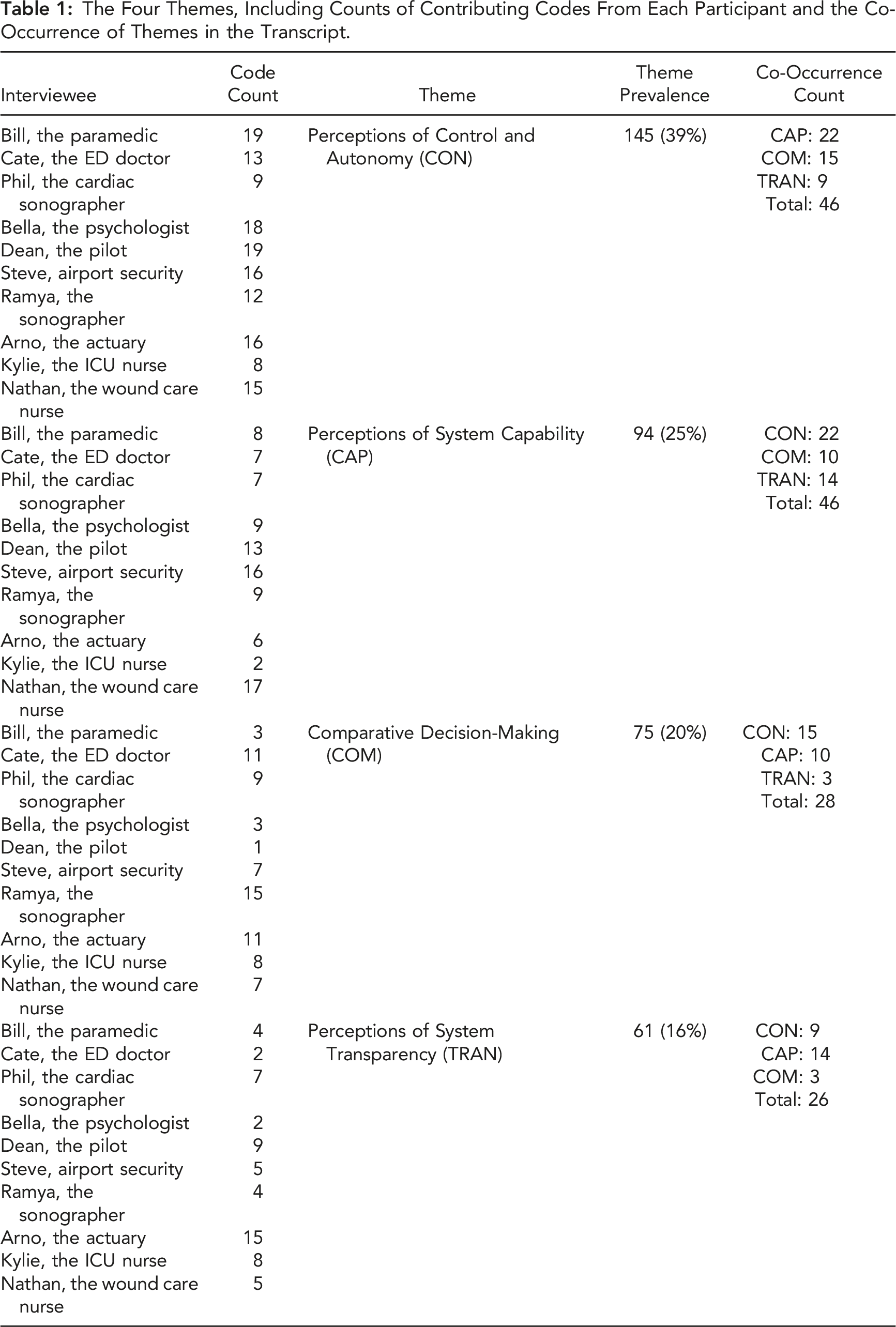

Table 1. The Four Themes, Including Counts of Contributing Codes From Each Participant and the Co-Occurrence of Themes in the Transcript.

As can be seen in Table 1, Perceptions of Control and Autonomy was found to be a highly prevalent issue discussed among interviewees, accounting for 39% of the codes identified. Further, it was regularly discussed alongside features from the other themes (almost a third of the time), most notably Perceptions of System Capability (15%). Perceptions of System Capability was similarly prevalent (25% of codes) and was also found to be frequently related to the other themes (almost half the time). Discussions of Comparative Decision-Making were common (20%), and often connected to other themes (over a third of the time) but were rarely related to Perceptions of System Transparency, which overall was the least prevalent of the themes (16%). Interestingly, Perceptions of System Transparency was less related to Perceptions of Control and Autonomy than the other themes, and more so to Perceptions of System Capability. Commentary on the themes and relationships, including examples drawn directly from the transcripts, is provided below.

Themes

(1) Perceptions of Control and Autonomy

A salient finding that emerged from the interviews was that users perceived themselves to be in control of their decisions, and so this first theme of perceptions of control and autonomy pervades and greatly informs the other three themes identified here. Indeed, respondents regularly positioned the user as having autonomy to either accept, reject, or modify the system’s outputs, and appeared to take solace in the fact that they could override the system’s decision if required, on the assumption that the system had limits that they as operators were not subject to. For instance, Phil the sonographer reported that: “…there's definitely a human override to that. So it [the DSS] will churn out the results, it'll churn out the finding and it's up to the users who'll go, "Yes, I actually accept this finding." Or, "No, I actually believe it's probably this"”.

In most cases, it was apparent that the user had the option to modify the system’s decisions to be more aligned with their own, suggesting comparison to their own judgements (explored further in theme 3: Comparative Decision-Making). For instance, Ramya, a cardiac sonographer, notes: “[I] returned to the automation and slightly altered the borders and got the measurement that I was hoping to get”.

Dean, the pilot, underlines the critical importance of users being able to assert their control of the system when it is advocating a conflicting (and seemingly erroneous) strategy: “But that program, or that artificial intelligence told the airplane, “Hey, you're stalling and even though you're only 1000 feet above the ground, I'm going to push the nose down.” And the pilots had no control over it. So that's a classic example of automation going wrong and refusing to let the pilots take over. And we're talking about modern times, we're talking two years ago where these airplane computers have killed people. Because the pilot had no override mechanism. He couldn't say, "Forget this, I'm taking over." The aircraft kept pushing down. The pilot pulled back, the airplane kept pushing down”.

As with Dean’s example, the perceived importance of user control seemed to be centred on users’ reflections on the limitations of the systems, relative to their own knowledge and skills, and the perceived need to be able to override the system. For instance, both Bill the paramedic and Phil the sonographer reflected on the knowledge that they possess as workers, that the machine will not possess (explored further in Theme 2: Perceptions of System Capability). While control is undoubtedly critical to users’ experiences with DSSs, challenges exist in finding an appropriate degree of user input. As Cate, the ED doctor, reflects: “…the response that you get is only as good as the data that you put into it.”

While most respondents perceived control and autonomy as a desirable element of DSS interactions, we must also note that some concerns were raised. In particular, several of the senior workers mentioned the importance of the worker’s decision to either accept or reject the DSS-generated decision, and the need to reflect on their own reasoning in the process. While they believed that such control was a necessity of best practice, they also raised personal concerns about how such decisions would be handled by less experienced workers. Specifically, they expressed concern that, without the opportunity to develop extensive experience in a work setting and the resultant sophisticated reasoning strategies, their junior colleagues may be inclined to over-rely on the tools (i.e. exhibit automation bias). This would change the relationship between DSS and worker, who would no longer use the machine as a ‘sounding board’ against their own reasoning but would instead anchor their reasoning to the machine’s projections. Nathan, the wound care nurse, stated that “I definitely think it can dumb people down. People won’t start using their own clinical judgment. I fear that that is one of the problems with many assistive sort of products”. Nathan’s concern about skill paucity as an outcome of DSS use was shared by Steve, the airport security officer, when he spoke about an over-reliance on DSSs and the resultant potential for system failures: “People become too reliant on it [the DSS]. I think that is where [we] become too relaxed. It's like if it hasn't flagged it as a bad thing, then it's not a bad thing. Oh yeah, it's an anomaly, but we'll just let it pass. We'll set up a dummy laptop so that the machine is not quite sure if it's an IED [improvised explosive device] or not, and we'll screen it through. You'll get another person, and someone says, "Oh yeah, it's not a thing. It's just a laptop in the tray," and they'll let it go. And then… you pull the officer off the machine and say, "No, this was actually an IED and you should have picked it up." From that perspective, sometimes people can become a little bit too relaxed with them where it's like, "Oh, well, it didn't say it was bad, so it must be okay," whereas they need to… reassess the situation”.

It is important to note that while perceptions of control and autonomy permeated discussions across all participants, subtle differences were observed across DSS users, which seemed to stem from context-specific factors. For instance, the importance of control was often mentioned from a perspective of worker accountability; however, workers’ core motivations and values were shown to differ across work roles and contexts. These differences speak to a layered understanding of DSS interactions and acceptance, which considers a myriad of context-specific factors, such as worker and organisational values.

(2) Perceptions of System Capability

Throughout numerous discussions, respondents positioned the system as being something that was unable to know or do things that they (or other human workers) could. For instance, Bill the paramedic regularly reported ‘knowing’ things that the DSS did not: “…Bowral Hospital comes up [as an option on the DSS], but we know that after, I think it's 5:00 PM or 6:00 PM, security actually goes home and the hospital orderlies take over... So we overrode that to go to Campbelltown”.

Similarly, Kylie the ICU nurse reflected on the effectiveness of the DSS operating in a broader system that included human workers who may commit errors that could not be anticipated by a machine: “It’s [the DSS] integrated and doing it [running insulin for patient] automatically but then you've also got the human at the bedside who is making sure that once you've got insulin running, you have to have something running alongside it that's putting glucose into the body, whether it be nutrition, whether it be dextrose or glucose. And depending on the person, the human, if there's an error in any part of that, if say, feeds were turned off or they put saline up instead of glucose and then you've still got ... How would the system know that?”.

Similarly, Ramya the cardiac sonographer reflected that the system itself struggled in conditions of complexity and uncertainty when she stated “… it was not able to detect the borders more accurately as a human eye could” and that she would have to anticipate these conditions and intervene. Dean the pilot also questioned whether DSSs could manage all arising situations: “A human being, a human brain has to be in command of that aircraft. This is because there's too many combinations and permutations that can happen out there in the real world which haven't been programmed into the computer, complex and dynamic scenarios that the designers didn't think of. There's a first time for everything, in other words”.

And so, users’ perceptions of the performance limitations of DSSs appear to be central to their acceptance.

For optimal compatibility between human users and DSSs, users would need to possess an accurate understanding of what the DSS can and cannot do. This understanding may be constrained by a lack of system transparency, familiarity, and education and training regarding device operations, and training appeared to be limited for several workers. For instance, Bill the paramedic noted: “There's actually no training… in order to use it, there's no real training. It's basically, push a button and follow the directions”.

A common undercurrent in discussions of system capability was a rather explicit cynicism about the future of AI in the workplace more generally. While most respondents reported the helpfulness of the DSS, several were somewhat wary of future advancements with AI technologies. For instance, several workers reported a strong belief that AI could not integrate data to formulate its own holistic understandings of situations and would always need the human worker to perform the task. Cate the ED doctor shared: “I think that there still needs to be room for humans to ultimately make decisions because we bring with us additional sets of skills and expertise. And it's a multifactorial process I think, although we may not realise it or recognise it at the time, when we are making important clinical decisions, we take on board lots of different things, including family history, examination findings, investigation findings, radiology, that kind of thing. And so, we're bringing together information from multiple areas together and then formulating a decision based on that”.

Dean the pilot echoed these sentiments, positioning a clear ceiling on the potential of the technology in his workplace: “Even if it’s machine learning, it's just too risky. You need the human element to stand back and say, "I know what parameters you're looking at. I know why you're giving me these instructions and advice. And I know you’re just about to take over control of my aircraft and passengers. But I'm not going to let you do this because of A, B, and C." I've done that a couple of times, whereby I've had advice from the aircraft and we've done something completely different, because we can look at the big picture. Because the computer's looking at zeros and ones, right? But it's not looking at the big overall picture and taking into account every possible variable that could occur in a short space of time. You need a human brain for that”.

Overall, these discussions suggest that some workers believe that only ‘weak’ or ‘narrow’ versions of AI (i.e. that which is limited to specific tasks; Innes & Morrison, 2021) will ever be used in the workplace, and that ‘strong’ or ‘general’ versions (i.e. that which can learn any cognitive task undertaken by a human) are either something to emerge well into the future, or even an impossibility, as Dean the pilot believes: “No matter how intelligent the systems are on board, they're not going to stop you flying into a mountain… We're looking at the weather ahead, possible turbulence, mountainous terrain, other aircraft, restricted airspace - all of these things. These parameters are so variable and dynamic that I think it's almost impossible - you'd have to have a skyscraper full of computers to be able to handle all the permutations”.

In some cases, respondents expressed concerns around an increased reliance on the technology in traditionally ‘human’ or ‘soft-skill’ work domains. For instance, Bella the psychologist remarked: “I mean, that's a scary... I heard on the news that they're going to make like robot therapists... So, I wouldn't want there to be because I think you need that humanistic side to be able to explain things or to be able to give your clinical impression...”.

Taken together, these reflections underline a need to explore how users’ persistent attitudes and beliefs about the capability of the technology may impact their interactions with, and acceptance of, the technology.

(3) Comparative Decision-Making

Another pervasive theme observed in respondents’ recollections pertained to the way in which they considered the DSS’s decisions. Specifically, several respondents reported that they will check the system’s decision against their own. For instance, Phil the cardiac sonographer reported that the “…system advice was helpful because it correlated… with all the expectations. It came out with what I wanted to actually see come through as the result for the patient”.

Cate the ED doctor also reported using the DSS in a way to supplement her own judgement, stating ‘I would never use it to completely replace my own decision-making, but certainly, to complement and to supplement it. I think it’s a really good tool’. Similarly, Ramya the cardiac sonographer discussed how ‘the automated imaging was more than what I anticipated…’ and described the need to ‘compare [the system’s output] with your clinical judgment’. As such, a great degree of emphasis was placed on the users’ own judgement being somewhat of a reference point or decision anchor, while the machine was used as a form of validation. Further, several interviewees reported receiving a boost in confidence when there was a good alignment between their decision and the DSS-generated decision. For instance, when asked about how well the system complements her expertise, Cate the ED doctor reported that: “…it [the system] complemented it well…in terms of…giving me a lot more confidence in my diagnosis…it's an easy way of getting a second opinion in our decision-making… It's almost like a stroke to the ego when you get a diagnosis right and it matches with something that's artificially generated as well”.

Kylie the ICU nurse echoed the comparative sentiment, and expressed a natural scepticism towards the DSS-generated decision, which ultimately stemmed from previously discussed perceptions of control and autonomy: “Look, generally speaking, I take things with a grain of salt because I know a lot about what I'm looking at already. So I go, "Okay, do I want to listen to this or not?" And for me, when I'm looking at if it's accurate or not… I don't need to say, "Oh yeah, that's what I'll do because the app tells me that." And so often, I will say, "No, I'm going to change it to something different." Because there's other little nuances that I'm looking at”.

Conversely, we saw increased instances of overreliance in situations characterised by uncertainty. For instance, Bill the paramedic described situations in which he will simply ‘go off [the system], because we just basically don’t know otherwise’. Ultimately, the fact that workers will regularly impose their own decision as a point of reference in DSS interactions again speaks to the overarching theme of users’ perceptions of control and autonomy (Theme 1).

(4) Perceptions of System Transparency

The degree of perceived system transparency associated with the DSS was a notable theme. Specifically, in instances where users experienced dissonance between their own expectations and the machine’s outputs, they reported on a need to know ‘why’ and ‘how’ the system came up with a particular decision. For instance, Bella the psychologist discussed her experience with questioning how the system derives its decision, saying: “I guess you would have to look back at why the advice was problematic and how it was problematic. And so if it was problematic in terms of a diagnosis, you would worry about is it asking for the right input data versus if the advice was more around treatment or management, then you would, I guess, question not so much the diagnostic ability of the software, but its ability to apply that or extrapolate that diagnosis further into offering sensible treatment regimens or all strategies. And so yeah, if it was completely bizarre, I would certainly question its utility and why it was asking input of particular data”.

The transparency theme also reinforces the problems identified in Theme 2 (Perceptions of System Capability), as users’ assumptions about what a DSS can and cannot do are not sufficiently showcased during interactions. Indeed, it became apparent in conversations with several workers that they had a limited understanding of the inner workings of the system they were engaging, and as a result made several assumptions about what it was unable to do (e.g. form an integrated view or ‘big overall picture’ of the problem). Arno the actuary, who is active in the development of DSSs, reflects on the importance of transparency in gaining users’ trust and acceptance: “…what's very important in my line of business, is that the tools that you build, you can actually explain them and you can reason about them, and that they actually make sense before they get adopted. So it's definitely not the case that we just build whatever most sophisticated AI model is out there, and we just plug it in without thinking about it because you wouldn't trust it… So still the decisions are in the hand of the humans, but we are explaining what the AI model is thinking, and we're also showing them, okay, because of these features, and actually in the past, here are 10 claims that we've seen in the past that has similar characteristics that we actually found to be fraudulent. So we refresh their memory in terms of saying, "Look, this combination makes us think it's fraudulent. And here are examples of this pattern where it was fraudulent in the past." And that helps them to accept what the model is saying or what it's reasoning”.

Critically, Arno tempers his enthusiasm for increased system transparency by noting that too much transparency may lead to a degree of complacency and even overreliance among users: “Then [it’s] challenging to make sure that the outputs that we are getting from this model, are not just blindly followed, but are actually really constantly critically challenged...”.

Discussion

As part of a mixed-methods approach, we used questionnaire and interview techniques to examine workers’ experiences relating to DSS use in naturalistic settings (i.e. ‘in the wild’). Our aim was twofold: to 1) Reveal what workers perceive to be the most important factors when deciding whether to accept support from a DSS in the workplace and 2) Elicit patterns that emerge from DSS users’ recounted experiences of critical incidents involving DSSs, which may impact their future use of DSSs (i.e. technology acceptance). Below we discuss the findings in relation to each of our research questions, and note several limitations and directions for extending the research.

Important Factors in DSS Acceptance

We surveyed workers’ perceptions of the most important factors when deciding whether to accept advice from a DSS. Notably, all factors offered for assessment were shown to, on average, possess some importance for respondents (i.e. all were considered to be at least slightly important). Further, an additional 19 factors were identified among workers in open-ended responses. This result suggests that workers’ compliance with DSSs may be markedly moderated by a substantial range of variables relating to factors featuring prominently in the literature (e.g. system accuracy, transparency and compatibility), as well as a range of other less visible, and often context-specific, factors (e.g. ethical considerations, developer bias and organisational policy), which would typically fall outside of the scope of other usability frameworks (e.g. the TAM; Davis, 1989).

Marked differences in mean ratings were identified. Six of the 25 factors were considered to, on average, have a relatively high level of importance for respondents (i.e. rated as very important to extremely important), including: the quality of the advice provided by the system; how important the decision is; how much risk is associated with the decision; how accurate the system has been in the past; how accountable you are for the decision; and how easy it is to understand how the system generated the advice. These factors are somewhat aligned to the existing literature on algorithm aversion (Burton et al., 2020; Dawes et al., 1989; Dietvorst et al., 2015; Einhorn, 1986; Grove & Meehl, 1996; Highhouse, 2008), and add value to this literature base by demonstrating their generalisation beyond highly controlled behavioural experiments to real-world work settings.

Interestingly, we also note an apparent ordering of priorities based on a theming of the items. For instance, several of the highest ranked factors pertain to the context of the decision being made (e.g. importance, risk, and accountability) and the quality of the system used (e.g. advice quality and accuracy). This may imply that contextual variables will be key moderators in the human-DSS relationship and suggest the need for empirical evaluation studies to be performed at a localised level (i.e. specific to work role and industry); indeed, we caution against a one-size-fits-all approach to understanding DSS use. Other highly ranked clusters of items refer largely to system usability (e.g. ease of understanding, amount of information provided and degree of intuition in use), which underlines a need to consider the relative cognitive compatibility between users and DSSs in investigations of system acceptance, as has been noted here and elsewhere (Hollnagel & Woods, 2005; Inagaki, 2008; Liu et al., 2021; Lyons, 2013; Rushby, 2002). The fact that user-related factors, such as relative experience with technology and/or DSSs, are perceived to be relatively less important among workers emphasises the need to consider a human-centred approach to the design of DSSs, and potentially the need to develop systems that are ‘forgiving’ of user errors that may arise from a lack of experience, overconfidence, or similar.

Emerging Patterns from Users’ Experiences with DSSs

The interview data showed a strong alignment with the clusters of items identified via the questionnaire. For instance, issues of system capability were prominent. Indeed, users’ perceptions of the performance limitations of DSSs appear central to their acceptance, as is regularly cited in the algorithm aversion literature (Dietvorst et al., 2015; Saragih & Morrison, 2022). As such, developers should consider the ways in which users may be better informed of the capacities and limitations of DSSs, to ensure that they are not incorrectly weighting or over-weighting factors in the determination of system utility.

Perceptions of system capability were found to be frequently associated with issues of user control and autonomy, which were also rated highly in the questionnaire (e.g. degree of control in use, input, and modification). Users’ choice to use, and their trust in, DSSs was continually framed within discussion of what the DSS was able or unable to do. At times, this also appeared to be moderated by the degree of users’ comprehension of how the system worked (i.e. its perceived transparency). Users often reported an attempt to attain system visibility via their own interactions (e.g. inputs) with the system. In this sense, transparency would become a lever that moderated users’ need for control. Consequently, we posit that the apparent need for a high degree of user control, which in some cases will be problematic (i.e. system under-reliance), may be alleviated by demystifying the inner workings of DSSs (e.g. via greater incorporation of explainable and ‘white-box’ AI; Loyola-Gonzalez, 2019). However, we must also emphasise the sizable challenges in determining the extent to which users can understand DSS processes, and to which the user should have control in system interactions. Critically, the precise impacts of such variability in system use on workers’ own health and wellbeing (e.g. cognitive strain, frustration and wellbeing) remain largely unexplored (Morrison et al., 2023).

These challenges may also partly explain users’ prominent tendency to use DSSs as a means to check the accuracy of their own judgements, rather than as a source of independent option generation. While several users reported concerns of automation bias among less experienced users, these were only second-hand reports, with most users reporting behaviours that imply a degree of under-reliance. Indeed, interviewees regularly reported basing their acceptance of DSS outputs on the degree to which they correlated with their own judgement, placing a premium on worker expertise.

In the advice acceptance literature, the differential information theory proposes that advice discounting stems from the fact that, unlike with people’s own opinions, they are not aware of advisors' internal reasons for their opinions and so are less likely to fully accept them (Yaniv & Kleinberger, 2000). A lack of transparency at the DSS-user interface may cause a similar discounting effect among these workers, and in turn, represents an emerging risk in advanced technology work environments that incorporate increasingly opaque systems (e.g. those incorporating artificial intelligence and machine learning).

Under-reliance on DSSs could represent a significant barrier to effective real-time operations, as well as to users’ capacity to learn from system interactions. Similar reactions have been observed among champion players of the Chinese board game ‘Go’ when they were recently pitted against an artificially intelligent player, Google’s AlphaGo (Metz, 2016). A now infamous move by AlphaGo (‘Move 37’) during a high stakes game against a human opponent was initially widely interpreted as a ‘mistake’, one which deeply shocked players and onlookers. However, despite the apparent ‘strangeness’ of the move, it was ultimately key to AlphaGo’s victory over its human counterpart. This highlights a fundamental problem in the human-DSS relationship: human users will be regularly confronted with outputs that are counterintuitive. Critically, once human players, even those already considered experts, could ‘unpack’ the machine’s moves post-game, they were able to successfully leverage the experience to greatly improve their understanding of the game (and in some cases improve their professional rankings substantially; Metz, 2016). Therefore, we underline the importance of finding ways to temper intuitions that would see users erroneously dismiss machine advice based on seemingly extreme incompatibilities in initial outputs. This is expected to be particularly challenging among users who have developed their own degree of expertise, which was heavily emphasised in the comparative decision-making theme we identified. Taking these factors into account, we argue that developers should look to design systems that afford the user an optimal degree of control in the input of data and provide enough transparency for users to understand how their actions are influencing the system’s decisions. Ideally, users should also be able to interact with the inputs in a way that matches their natural information processing strategies (Liu et al., 2021; Morrison et al., 2010). However, despite advances in the field of explainable and ‘white-box’ AI (XAI; Loyola-Gonzalez, 2019), the extent to which users can understand DSS processes, and to which the user should have control in system interactions, remain challenging questions for developers.

Finally, we also note a strong degree of cynicism expressed among interview respondents regarding the future of AI in the workplace more generally, and in particular, its potential to fully supplant human workers. This finding underlines a paucity of consideration of workers’ beliefs and attitudes in human-DSS interactions in the literature and highlights the case for exploring their influence on DSS acceptance in the workplace (Morrison et al., 2023).

Extending the Study of Technology Acceptance via NDM

While the utility and usability features that dominate the TAM appeared to resonate in the themes of perceptions of system capability and transparency here, the current approach to feature elicitation, grounded in the NDM paradigm, successfully extended the scope of user considerations elicited. For instance, while perceptions of control and autonomy were found to be related to these features (around 20% of the time), their prominence as an influential and pervasive factor in user-DSS interactions beyond these factors (around 70% of the time) is clear in the current findings. Indeed, we revealed that this theme encapsulated an array of contextual individual motivations and attitudes, organisational and regulatory influences, as well as an indication of the ways that such features interact with others to influence users’ behaviours. These insights can inform system design and implementation, as they make it possible to infer the likely barriers to user acceptance in practice. Further, while it is not our intention to propose specific revisions to the TAM here, the findings do support an extension of the scope of features typically considered in the model. This may involve the inclusion of distinct factors (e.g. control and autonomy), contextual factors (e.g. motivations and organisational influences), and the potential for interaction between such factors in the influence of users’ attitudes and behaviours when engaging with new technologies.

The tendency for users to use the system’s outputs as a point of comparison with their own sensemaking, judgements and decision-making provides insights into the prevalent approaches to actual DSS use, which often extend beyond design intentions and conceptions of optimal use and are only readily revealed when considering behaviour through a naturalistic lens. This firmly establishes the utility of the NDM approach and its methodology (e.g. CDM) in investigations of technology acceptance.

Limitations and Future Directions

The qualitative approach employed is a deviation from the typical research in this field, which frequently favours the use of quantitative surveys (e.g. usability surveys). The benefit of employing qualitative methods is that they enable the in-depth examination of participants’ past experiences, and a level of insight into their cognitions, motivations and attitudes that is rarely possible with quantitative work alone. Indeed, the current retrospective interview and thematic approach successfully illuminated a series of pervasive concepts and patterns among workers using DSS technology in the workplace. However, it is recognised that such techniques may be vulnerable to rationalisation (Lucas & Ball, 2005) and/or social desirability (Bergen & Labonté, 2020) effects. Nevertheless, the mixed-methods approach used here has enabled a triangulation of key phenomena and a more nuanced understanding of the interplay and gaps between workers’ perceptions and real-life experiences. This is recommended as best practice moving forward.

Relatedly, while the analysis revealed four thematic components, our discussion illuminates the complex interactions between them. Although such overlap is not surprising, the qualitative, descriptive approach employed precludes an assessment of distinctness and redundancy among the components. An extension that captures quantitative data would enable insights into the structure of these constructs via factor analytic techniques.

A further limitation relates to the sample of participants recruited. This study intentionally selected a broad range of workers from multiple industries to identify commonalities that might transcend specific work settings, industries, or DSSs and avoid a potentially infinite number of localised investigations. Participant testimonies detailed variations in the DSSs used, organisational context, and roles and tasks, which also manifested in differences in information processing strategies engaged by workers (i.e. the degree of analytical versus rapid forms of cognition required of workers). This decision to target a broad population of workers, DSSs and settings precluded the meaningful decomposition and interpretation of context-specific cognitive features, which are regularly yielded in CDM studies (e.g. environmental cues and cognitive demands; Wong, 2004). Similarly, the heterogeneity of the sample meant that pervasive patterns that relate to industry or organisation-specific variables (e.g. pressures that arise from organisational and cultural influences) were not afforded an opportunity to emerge in the current findings.

Conclusions

The questionnaire results underscore a range of factors considered to be very important to the acceptance of decisions made by DSS technology (i.e. decision quality; decision importance; decision risk; historical accuracy of system; decision accountability; and system comprehension). The interview data yielded several interconnected themes that illustrate patterns that emerge from DSS users’ recounted experiences, which likely influence their intention to use DSSs in future practice (i.e. perceptions of control and autonomy, perceptions of system capability, comparative decision-making and perceptions of system transparency). These findings validate an extension of technology acceptance research methodology beyond traditional usability approaches that focus predominantly on usefulness and ease of use, by highlighting other salient areas of influence not frequently considered (e.g. ethical considerations, developer bias, organisational policy and values, and cynicism towards technologies), as well as their complex relations. The use of an NDM lens enables a richer approach to studying technological interactions, which is required to best understand and predict the nature of actual behaviour and use in the workplace. Such understandings can help inform the design, development, and implementation of effective, safe, and sustainable DSSs and other new technologies ‘in the wild’.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Centre for Work Health and Safety.