Abstract

Autism spectrum disorder, cerebral palsy, brain stem stroke, and neurological injury are examples of conditions that may limit vocal communication. Augmentative and alternative communication (AAC) systems can provide a communication pathway to users with such complex communication needs, facilitating their societal participation and supporting some ability to direct their own care. We adapted the cognitive work analysis (CWA) framework to a linguistic domain to gain insight into an AAC design that best supports users’ communication. First, we applied work domain analysis (WDA) to a popular commercial AAC system, Proloquo2Go. Data were gathered from guided AAC system use, domain experts, and the syntactic rules of the English language. The WDA exposed unmet needs in the commercial system. We then applied worker competency analysis to consider different approaches to presenting information and supporting user actions. The design comprised graphic forms and process views, and their integration into viewports and the workspace. Our novel application of CWA uncovered new considerations in AAC interface design and opens a nascent area of investigation, namely, AAC displays that more effectively support users’ goals. Future work will compare the mental workload imposed by this AAC interface with that of current commercially available systems.

Introduction

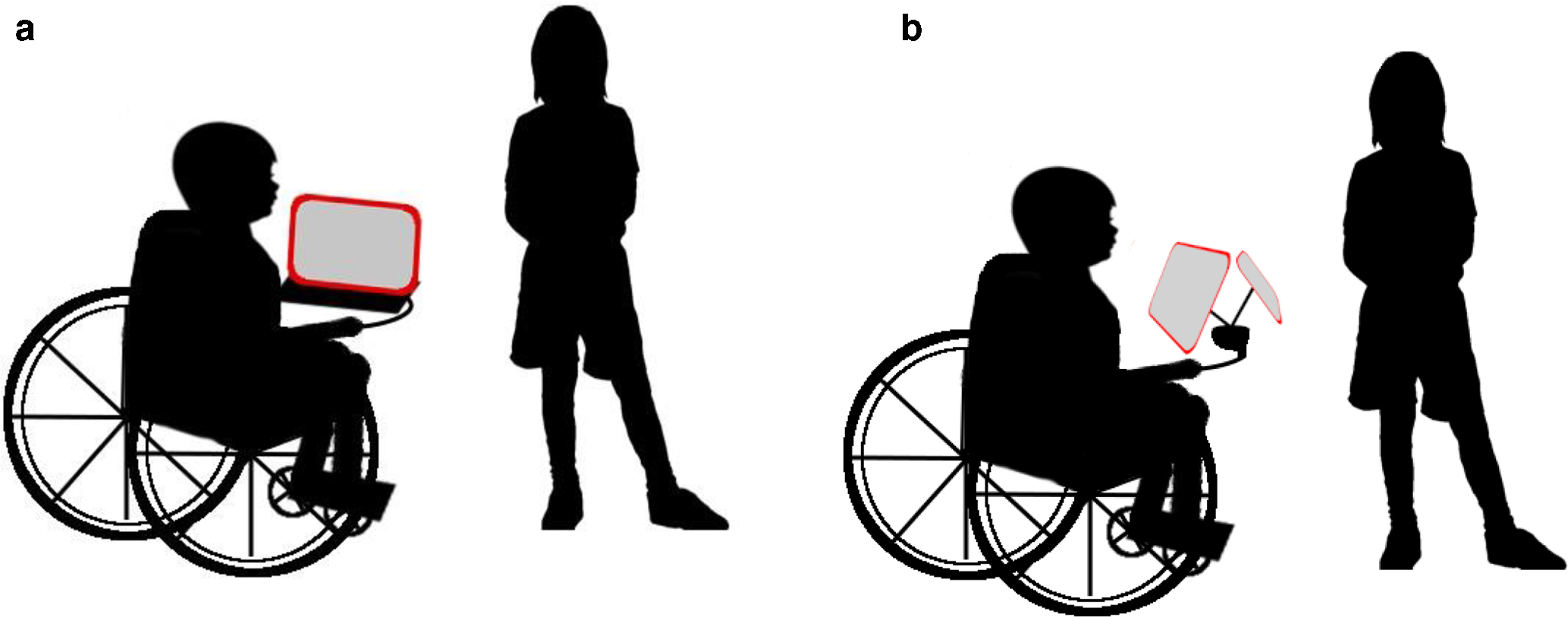

Communication is the intentional or unintentional act of providing or receiving information (National Joint Committee for the Communication Needs of Persons With Severe Disabilities, 1991). Access to communication, and its full realization in education, the development of personal interests, and the sustained enjoyment of life, is considered a human right. In particular, as a condition of healthy development, children should be given the ability to express their views and be included in social and educational activities (United Nations, 2006). Autism spectrum disorder, cerebral palsy, brain stem stroke, and acquired central nervous system injury are among the conditions that may limit a child’s or adult’s verbal communication (Light & McNaughton, 2012). Individuals with complex communication needs may use diverse systems as an alternative pathway to speech; such approaches are known as augmentative and alternative communication (AAC; Orhan et al., 2016). An electronic speech-generating device (SGD) is a popular example of an AAC technology. A typical configuration of an electronic speech-mediated conversation is shown in Figure 1a. In the simplest case, a user triggers the machine enunciation of one of a small number of predetermined messages; in a more advanced deployment, the user selects combinations of symbols, pictures, phrases, words, or letters to compose a message that is subsequently machine articulated. Both approaches enable communication by generating language. Current commercially available electronic speech-generating AAC devices are controlled through a digital user interface, which users access either directly, with a touch screen, or through a switch that leverages voluntarily controllable actions.

(a) A typical setup for a conversation between an AAC user and a conversational partner. (b) Orientation for a user interface (left screen) for the AAC user and a communication partner user interface (right screen) for the conversational partner for AAC-mediated communication.

Augmentative and Alternative Communication Interfaces

The design of AAC user interfaces strongly affects device performance and is an important consideration for a system that acts as the primary communication pathway for its users (Akcakaya et al., 2014; Paas et al., 2012; Tullis & Albert, 2013; Yan et al., 2017). Research into effective AAC visual interfaces has become more prevalent in recent literature. Light et al. (2019) emphasized the importance of developing empirical, research-based guidelines to support the development of AAC interfaces. However, to date, most research has focused on visual scene displays (VSDs). VSD interfaces present communication options in a visual and contextual representation, as items or actions associated with an image shown in the viewport. VSDs are well suited to AAC users who are early in language development because they support pragmatic and semantic language development (Light et al., 2019). Grid-based displays, such as Proloquo2Go and the proposed AAC interface, instead present communication options as words and/or symbols organized in a grid. As grid-based displays afford the opportunity to exercise more advanced literacy skills to communicate a greater diversity of messages (Light et al., 2019), we focused exclusively on this genre of AAC interface. Specifically, we limited our consideration to SGD software that provides an alternative English-language communication pathway and that is, at the time of writing, eligible for reimbursement through the Government of Ontario’s Assistive Devices Program (Ontario Ministry of Health, 2019; Ontario Ministry of Health and Long-Term Care, 2016). AssistiveWare’s Proloquo2Go software is a grid-based iOS and macOS application that presents vocabulary in color-coded word-and-symbol cells organized thematically (AssistiveWare B.V, 2019).
A number of other SGD apps, including Chat Fusion, Nova Chat, Accent, the TouchChat app, Grid 3, and the Tobii DynaVox Compass app, use the WordPower language system, which prioritizes core words in a grid-based combination of color-coded word cells and word-and-symbol cells while offering spelling and sentence prediction (Inman Innovations, 2000). Jabbla’s MindExpress similarly presents vocabulary in grid-based, color-coded word-and-symbol cells and thematic categories. Because of its commercial popularity, affordability, and accessibility, and because its presentation and navigation are comparable to those of other grid-based SGD software, Proloquo2Go was chosen as the representative commercial electronic AAC interface in this study.

Menu ordering use case: Example commercial AAC interface in action

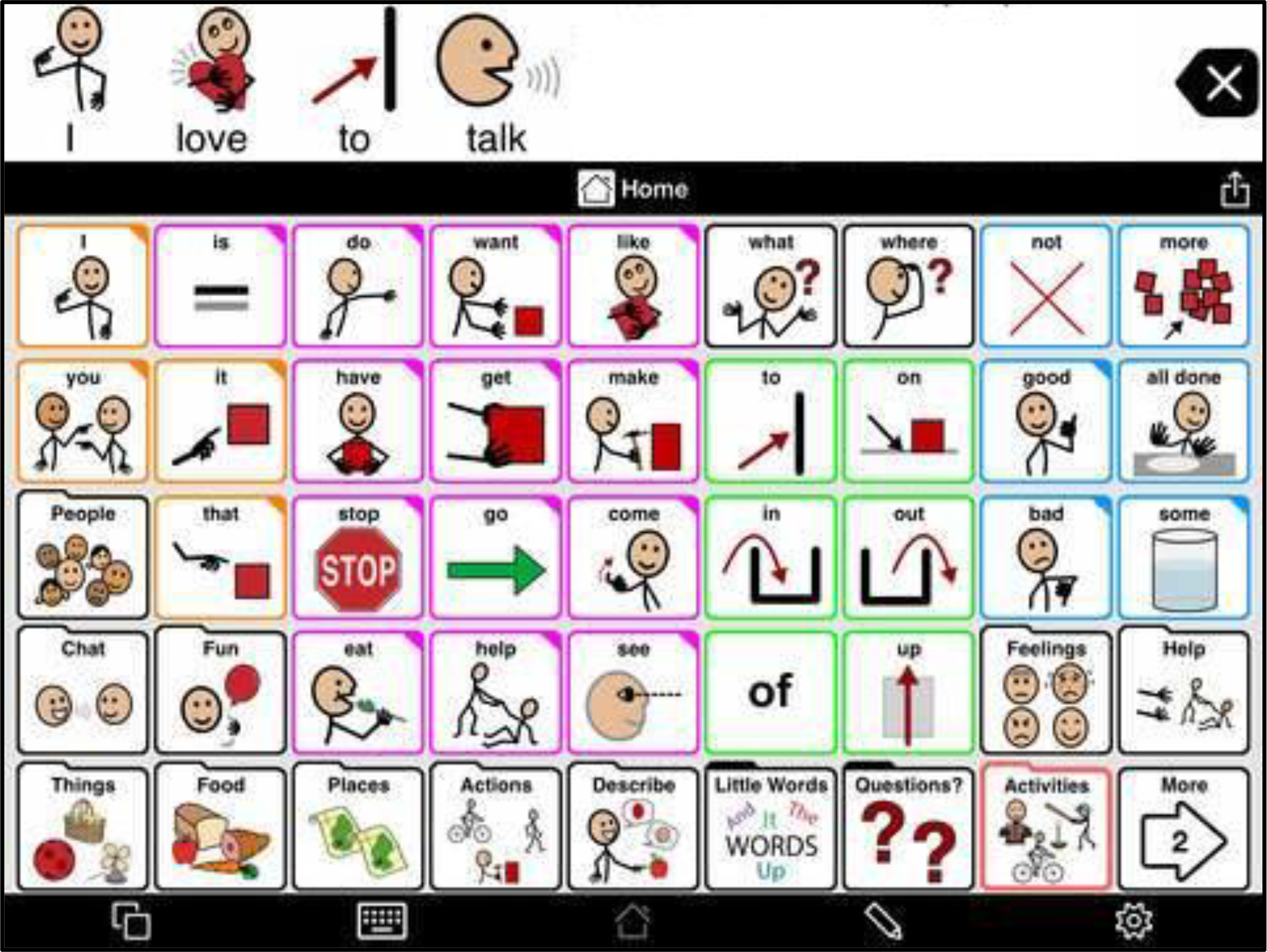

Figure 2 depicts a typical home screen of the Proloquo2Go interface. The following example illustrates how a user of a commercial AAC device might order a drink. The user is non-speaking and, without loss of generality, makes selections by touching the screen directly. To order a coffee, for example, the AAC user aims to compose the phrase “small coffee.” In the AAC system’s idle state, the customer’s interface shows a home screen consisting of a grid of colorful word and category cells with a blank message bar above it.

A sample of a possible home screen of the Proloquo2Go Apple application. Retrieved from (AssistiveWare B.V, 2019) on May 10, 2021.

If the user needs to capture the attention of a server, they use gestures or vocalizations within their ability. The user may also leverage a word or phrase on the home screen as an “attention grabber” message.

Because “small” is not a frequently used word, the customer searches for and selects the “Describe” category. Words and categories within the “Describe” category replace some of the words on the home screen. The user searches for and selects the word cell corresponding to the adjective “small.” The system enunciates “small,” which now also appears in the message bar, and the interface automatically reverts to the top level of the message hierarchy. The user then searches for the “Food” category; once it is selected, the display updates with types of food. The user subsequently searches for the “Drinks” subcategory (not shown in Figure 2) and finally selects the noun “coffee,” which the system then enunciates.

Once the phrase is complete, the customer presses the message bar, and the system articulates the selected words again in sequence, that is, “small coffee.”

The server asks for clarification in the form of a yes/no question. The AAC user searches for and selects the “Little Words” category, whose words replace some of the words on the home screen. The customer then searches for and selects the “yes” or “no” word cell.
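The number of selections in this walkthrough can be tallied with a small model of a hierarchical vocabulary. The Python sketch below is illustrative only: `VOCABULARY_TREE` is a hypothetical, heavily reduced stand-in containing just the categories and words named above (not the actual Proloquo2Go layout), and the helper names are our own. It assumes, as described, that the display reverts to the home screen after each word and that one press on the message bar speaks the whole message; it counts selections only, not the visual search that precedes each one.

```python
from collections import deque

# Hypothetical, heavily reduced vocabulary tree containing only the
# categories and words named in the walkthrough.
VOCABULARY_TREE = {
    "Home": ["Describe", "Food", "Little Words"],
    "Describe": ["small"],
    "Food": ["Drinks"],
    "Drinks": ["coffee"],
    "Little Words": ["yes", "no"],
}


def selections_to_word(word: str, root: str = "Home") -> list[str]:
    """Cells the user must select, starting from the home screen, to reach
    `word` (breadth-first search over the category tree)."""
    queue = deque([(root, [])])
    while queue:
        node, path = queue.popleft()
        for child in VOCABULARY_TREE.get(node, []):
            child_path = path + [child]
            if child == word:
                return child_path
            queue.append((child, child_path))
    raise KeyError(f"{word!r} is not in the vocabulary")


def selections_for_message(words: list[str]) -> int:
    """Selections for a message, assuming the display reverts to the home
    screen after every word and one press on the message bar speaks it."""
    return sum(len(selections_to_word(w)) for w in words) + 1


if __name__ == "__main__":
    print(selections_to_word("coffee"))
    print(selections_for_message(["small", "coffee"]))
```

Under these assumptions, “small coffee” takes six selections (two for “small,” three for “coffee,” and one on the message bar), and answering the server’s yes/no question takes two more.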

Interface Shortcomings of Existing AACs

From the above example and upon scrutinizing Figure 2, a number of observations are evident. The home screen in Figure 2 contains numerous buttons that are not conducive to memorization or rapid visual scanning. While color coded, the categorical grouping is ambiguous (e.g., the green colored buttons include prepositions, adjectives, and adverbs) and the location of specific buttons is nonintuitive (e.g., “what,” “where,” and “who” appear at the top of the grid, whereas the category for “Questions” is situated at the bottom of the grid). Ideally, the user interface would mitigate unwanted attention capture, constantly maintain the user’s mental model of the system of speech-based communication, reduce procedural traps, support memory of events that occur within the system, maximize error tolerance, and provide feedback for actions to support user understanding (Rasmussen, 1985). The example in Figure 2 seems to fall short of these requirements.

Users of AAC devices require extra time to plan and initiate their communication (e.g., time for word finding) and sufficient accommodation from dialog partners (e.g., waiting patiently between turns or providing discrete choices), for a socially inclusive conversation (Remington-Gurney, 2013). These conversational constraints are likely attributable in part to the AAC interface, with which accurate and fast spontaneous interactions can be challenging. For users of AAC technology, an unanticipated event such as a clarifying question or an unexpected need for assistance requires the effortful composition of an unplanned message. Fundamentally, as communication is spontaneous by nature (Andzik et al., 2016), accurate and efficient communication cannot be comprehensively modeled within an AAC interface. In other words, the interface cannot account for every possible communicative task and is thus not suited to exhaustive description of goals and plans (Salmon et al., 2010).

From the case example above, one can argue that current AAC interfaces primarily support knowledge-based communicative behaviors. Actions taken by the user (behaviors) can be broken down by mental workload levels into three groups: (1) skill-based behavior, which is highly automatic with low attentional requirements; (2) rule-based behavior, which is a procedural sequence of actions as a result of a specific cue; and (3) knowledge-based behavior, which is problem-solving that demands the highest mental workload (McIlroy & Stanton, 2015; Rasmussen, 1985). Control of a system often requires different user behaviors at different system states, so all levels of behaviors must be supported in an interface design. With current AAC interfaces, automatic and routine responses can be challenging to master.

Improving AAC Interfaces: The Potential of EID

Cognitive work analysis (CWA), as discussed by Vicente (1999), is a framework that can be applied to socio-technical interactions between humans and information to model the essential characteristics of complex information exchange systems (Naikar, 2013). Through a formative analysis, CWA identifies the constraints imposed on a system by its work domain, control tasks, strategies, organizational structures, and individual or technical capacities (Flach & Voorhorst, 2016). The framework deconstructs the overall goal of a system through different phases of analysis (Vicente, 1999). CWA has been widely applied to systems in aviation, the military, and health care; however, its application in health care has focused primarily on acute care, while continuing and rehabilitation care have received less attention (Jiancaro et al., 2014). Ecological interface design (EID) applies the work domain analysis (WDA) and worker competency analysis (WCA) phases of CWA to develop a user interface that considers the system constraints imposed by the environment (Burns & Hajdukiewicz, 2004). Knowledge of the structure of a system provides the ability to generalize information for more efficient processing (Rasmussen, 1985). Using abstraction decomposition, WDA relates the goals of a system to the functional capabilities of its components, identifying both the constraints imposed by the system’s work domain and the information the system must provide to support user control (Rasmussen, 1985; Vicente, 1999). As the actions required for system control during a novel event cannot be planned, the interface design must support creative solutions that arise through user adaptation (Naikar, 2013). WCA determines how skill-, rule-, and knowledge-based behaviors can be supported in interface design to optimize human cognition (McIlroy & Stanton, 2015).
In sum, EID presents a structured framework for modeling the cognitive demands of a system through the constraints imposed by the work environment to obtain user-interface design features (Birrell et al., 2012; Flohr et al., 2018; St-Cyr & Kilgore, 2008).

Our proposed application of EID is novel and necessitates some extension of its methods to the linguistic domain of AAC. Because this study emphasizes interface design, we sought to address several shortcomings of current electronic AAC interfaces through the EID framework. Specifically, we anticipated that an EID-driven approach would yield an interface that could support unanticipated events and afford opportunities for the development of skill- and rule-based behaviors.

This paper describes a prototype AAC interface created through the application of the EID framework to speech-based communication. Here, AAC devices are viewed as a part of a conversational system that includes the user and at least one conversational partner. The scope of this analysis focuses on how information is presented to the user of the AAC device and a single conversational partner.

Methods

WDA and WCA were applied to guide the design of the AAC interface’s graphic forms and their integration into a user interface. The AAC work domain was investigated through interviews, exploratory operation, and examination of English grammar rules.

Language is a widely used tool for communication, defined by traits associated with its articulation by a speaker and its subsequent understanding by a communication partner. An individual’s perception of context, sound characteristics, and the concepts related to articulated words all influence their understanding (Dessalles, 2008). Words are elements of language that represent concepts. They are composed of phonetic units organized in phonological patterns with morphological structure. Words, in turn, are ordered into phrases according to syntax and thereby carry semantic information (Fay et al., 2014; Fromkin et al., 2011; Huddleston & Pullum, 2002; Santorini & Kroch, 2007). Providing the ability to construct phrases to convey thoughts may improve understanding between communication partners and thus improve communication efficiency through an increased information transfer rate.

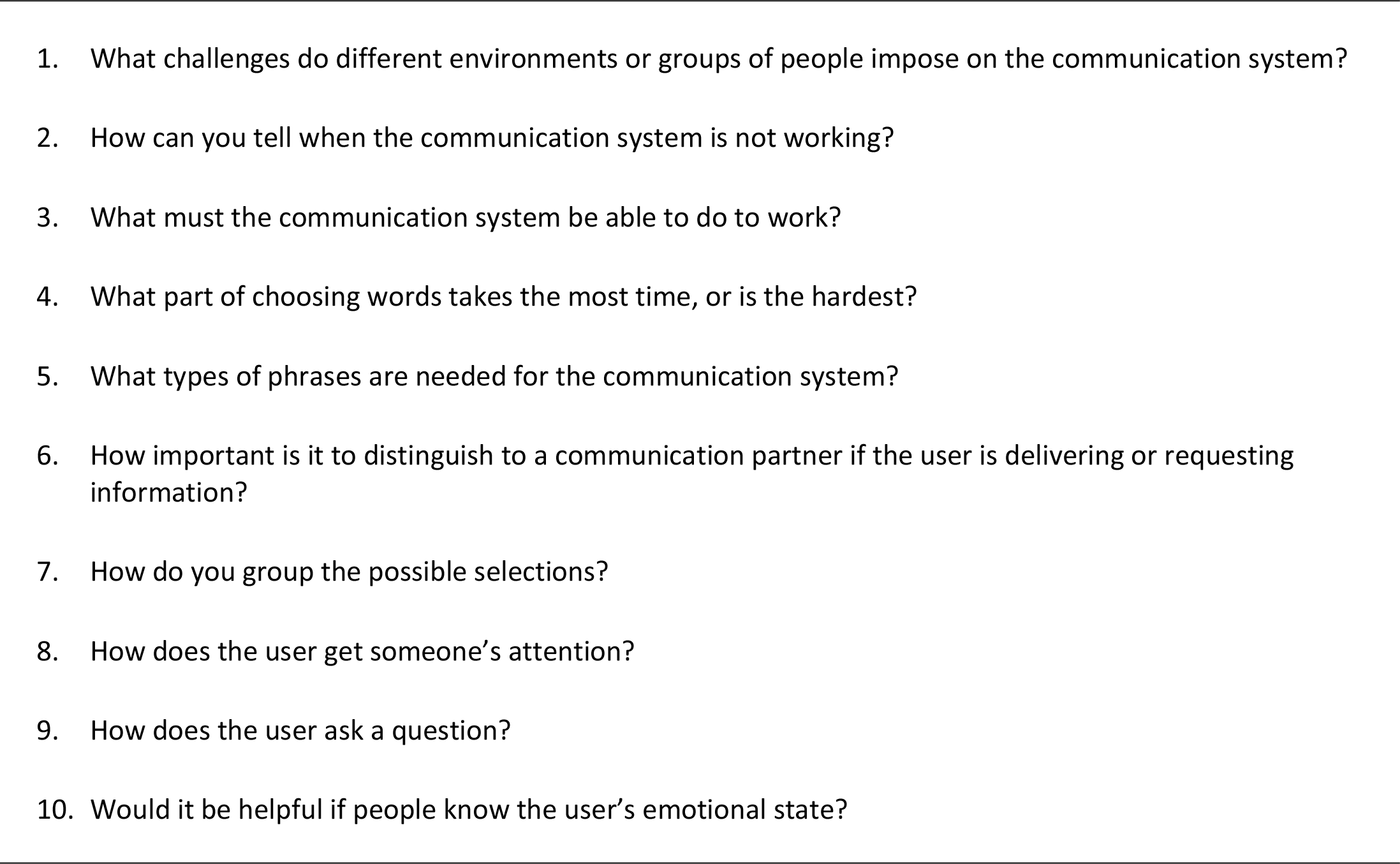

Practical pathways that AAC users apply to accomplish communication were determined through consultation with domain experts: a speech-language pathologist and two adults from families of pediatric AAC device users. The family-member interviews were conducted through a family engagement in research program. Although we connected with only two family partners, we were able to consult them throughout the design process, and they provided descriptive information on their children’s AAC use. Interviews included the questions outlined in Figure 3, adapted slightly to refer to the interviewee’s relationship with the AAC device user. Interviews with domain experts were valuable in providing insight into essential functions of communication that existing AAC devices do not support. During the interviews, interviewees described situations in which AAC device users employed unique techniques to convey the nature of a communication act when conversing with familiar conversational partners. Relying on these techniques with unfamiliar conversational partners, however, posed barriers to independent communication. We also found that phrases are often condensed to only those words whose meaning cannot be inferred from context. For example, the question “What did you do at work today?” was paraphrased as “What do work today?”

Questions posed to parents of children who use AAC systems as a communication pathway.

To supplement domain expert interviews, secondary data were collected (Stewart & Kamins, 1993) to build the researchers’ understanding of the AAC-mediated speech-based communication system. Exploratory operation of the Proloquo2Go (AssistiveWare B.V, 2019) and Grid 3 (Smartbox Assistive Technology, 2020) AAC interfaces was guided by setup guides, manuals, and online resources such as manufacturer webinars. Manufacturer information and instructions indicated which features the AAC systems offered. Secondary research data and domain expert insights were combined to provide an understanding of the elements and relationships within an AAC system work domain.

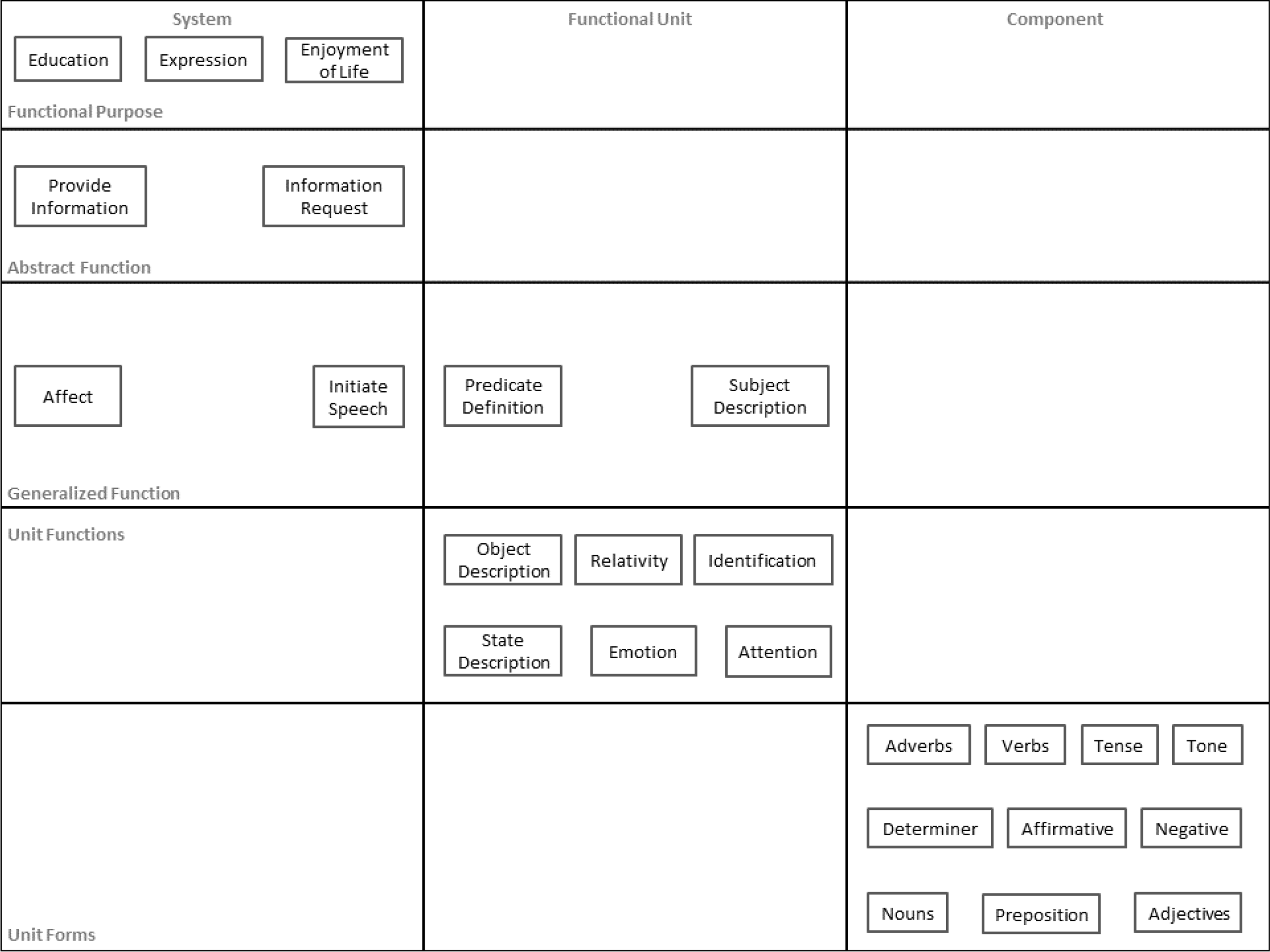

Abstraction Decomposition

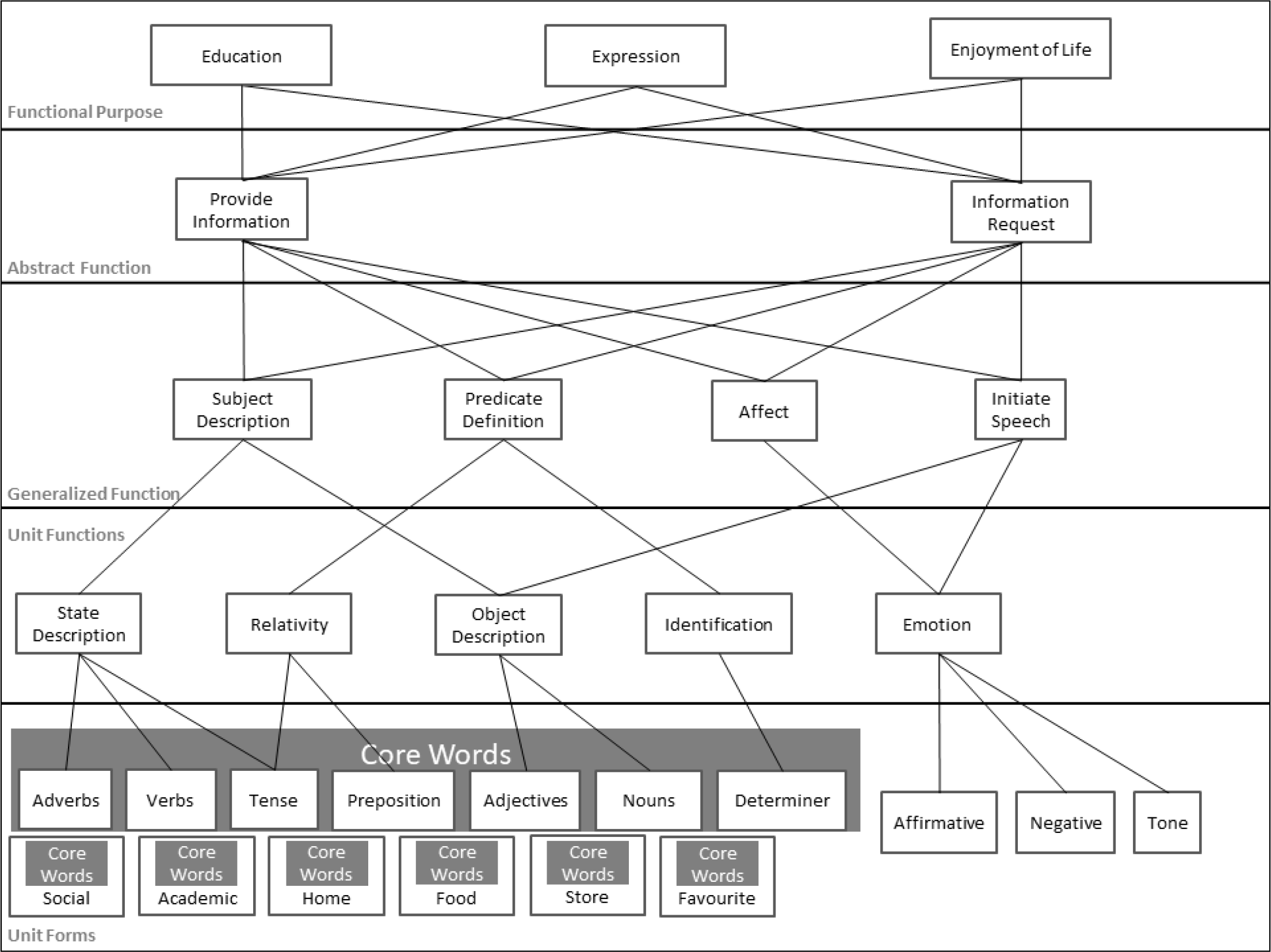

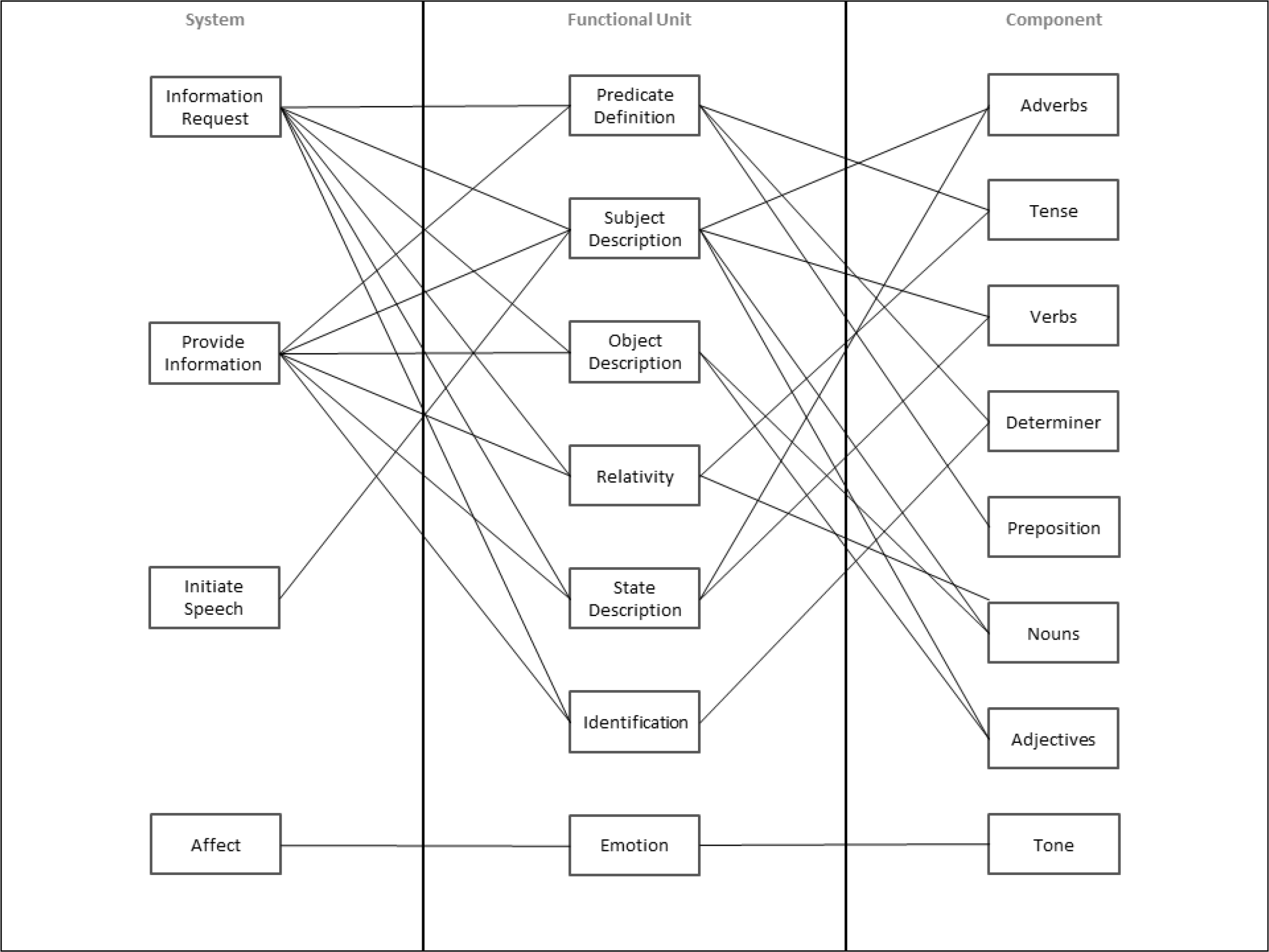

As seen in Figure 4, the AAC interface was decomposed into three levels of resolution, from whole to part: the system, functional unit, and component levels of a speech-based communication system. The abstraction-decomposition space additionally comprised five levels of abstraction: functional purpose, abstract function, generalized function, unit function, and unit form. These abstraction level names deliberately differ from the traditional titles, which were developed to describe the physical systems analyzed in the inaugural applications of CWA (Rasmussen, 1985; Vicente & Rasmussen, 1992). The elements of a speech-based communication system are not physical, so they are not well described by traditional titles that reference “physical form” or “physical function.” The term “unit” was chosen to represent the base construct of communication, consisting of morphemes that fulfill functions that accomplish purposes (Tenny, 2016). The decomposition of the AAC system was governed by the syntactic rules of the English language. Elements within the abstraction decomposition were connected between abstraction levels through means-ends links (shown in Figure 5) and across decomposition levels through part-whole links (shown in Figure 6).

Abstraction decomposition of an augmentative and alternative communication (AAC) system.

Abstraction hierarchy of an augmentative and alternative communication (AAC) system showing means-ends relationships.

Decomposition hierarchy of an augmentative and alternative communication (AAC) system showing part-whole relationships.

Consider first the means-ends links of our hierarchy (Figure 5). The highest abstraction level described the purposes of language and communication according to the United Nations Convention on the Rights of Persons with Disabilities (CRPD; United Nations, 2006). Specifically, the AAC system was modeled as affording the user the functional purposes of “education,” “expression,” and “enjoyment of life” through the communication acts, that is, abstract functions, of either “providing information” or making an “information request.” The two communication acts of providing information and making an information request are fundamental principles of communication. An “information request,” also described as

Mand and tact communication acts are realized through the formation of word sequences (Bondy, 2019). However, the communicative goal does not always align directly with the literal meaning of the articulated words (Christiansen & Chater, 2008). Consequently, we included among the generalized functions of the AAC user interface “subject description” and “predicate definition,” that is, words sequenced according to specific syntactic rules (Fromkin et al., 2011), in addition to “affect.” Words defining identification, relativity, states, and objects were organized to convey simulated concepts and thoughts referencing topics beyond the immediate situation (Ferm et al., 2005). Furthermore, as previously mentioned, communication extends beyond a simple utterance to a nuanced understanding of the communicator’s affect and the communication partner’s comprehension of the communication act (National Joint Committee for the Communication Needs of Persons With Severe Disabilities, 1991). Thus, the unit function of “emotion” ensured the communication of “affect”; in verbal communication, the communicator’s emotion can be realized through affirmative or negative language and through utterance traits such as “tone” (Christiansen & Chater, 2008). Finally, spontaneous word application is a stage of communication development (Bondy, 2019). Thus, the unit functions of “object description,” “emotion,” and “attention” can, independently or collectively, “initiate speech” for unprompted communication acts.
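In CWA, moving up a means-ends link answers why an element exists, and moving down answers how it is achieved (Vicente, 1999). The Python sketch below illustrates that navigation over a partial, hypothetical link set reconstructed only from relationships named in the text above; the complete links are those shown in Figure 5. In particular, linking every communication act to every functional purpose, and every generalized function to both communication acts, is our simplifying assumption, and the function names are illustrative.

```python
# Hypothetical, partial means-ends links: each key is a means, and each value
# lists the ends it serves one abstraction level higher.
MEANS_ENDS = {
    "providing information": ["education", "expression", "enjoyment of life"],
    "information request": ["education", "expression", "enjoyment of life"],
    "subject description": ["providing information", "information request"],
    "predicate definition": ["providing information", "information request"],
    "affect": ["providing information", "information request"],
    "emotion": ["affect"],
}


def why(element: str) -> list[str]:
    """Ends that `element` serves (one level up the abstraction hierarchy)."""
    return MEANS_ENDS.get(element, [])


def how(element: str) -> list[str]:
    """Means that achieve `element` (one level down the hierarchy)."""
    return [means for means, ends in MEANS_ENDS.items() if element in ends]


def why_chain(element: str) -> set[str]:
    """Every end reachable upward from `element`, up to the functional purposes."""
    reached: set[str] = set()
    frontier = [element]
    while frontier:
        for end in why(frontier.pop()):
            if end not in reached:
                reached.add(end)
                frontier.append(end)
    return reached
```

For example, `why_chain("emotion")` traces the unit function of emotion through “affect” and both communication acts to all three functional purposes.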

In considering part-whole links (Figure 6), the total system resolution level (leftmost panel) models a speech-based communication system in terms of the communicator’s intended communication acts. These acts can form the basis of utterances and spontaneous word applications, as well as of sound characteristics (Bondy, 2019; Christiansen & Chater, 2008). Thus, in our top-down decomposition, the communication acts were linked to “subject description” and “predicate definition” phrases or to smaller sets of ordered words, such as state description, relativity, object description, and identification. These phrases are collectively formed through the application of syntactic language rules and are the functional units of a speech-based system (Bondy, 2019; Fromkin et al., 2011). The communication of affect to the communication partner comprises utterance characteristics associated with emotions (Christiansen & Chater, 2008). Morphemes, the base units of communication, are formed through the application of morphological language rules (Tenny, 2016); they represent the most detailed resolution, that is, the component level, of our model of AAC-based communication. Similarly, “tone” represents the most granular utterance characteristic and constitutes the communication of emotion to the communication partner (Christiansen & Chater, 2008).
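The part-whole structure can likewise be sketched as a tree whose depth corresponds to the three resolution levels. In this hypothetical Python sketch, the functional units are flattened into a single level beneath the communication act, each word-based unit is given “morphemes” as its component, and “affect” is given “tone”; this is our simplified reading of the text, not a transcription of Figure 6, and the class and function names are illustrative.

```python
from dataclasses import dataclass, field


@dataclass
class Element:
    name: str
    parts: list["Element"] = field(default_factory=list)


# Resolution levels of the decomposition, ordered from whole to part.
RESOLUTION_LEVELS = ("system", "functional unit", "component")


def word_unit(name: str) -> Element:
    """A word-based functional unit whose component is morphemes."""
    return Element(name, [Element("morphemes")])


# Hypothetical, flattened reading of the part-whole links described in the text.
COMMUNICATION_ACT = Element("communication act", [
    word_unit("subject description"),
    word_unit("predicate definition"),
    word_unit("state description"),
    word_unit("relativity"),
    word_unit("object description"),
    word_unit("identification"),
    Element("affect", [Element("tone")]),
])


def elements_at(root: Element, level: str) -> list[str]:
    """Distinct element names at one resolution level, in breadth-first order."""
    nodes = [root]
    for _ in range(RESOLUTION_LEVELS.index(level)):
        nodes = [part for node in nodes for part in node.parts]
    return list(dict.fromkeys(node.name for node in nodes))


def wholes_containing(root: Element, component: str) -> list[str]:
    """Functional units that have `component` as a direct part."""
    return [unit.name for unit in root.parts
            if any(part.name == component for part in unit.parts)]
```

Queried at the component level, the tree yields only “morphemes” and “tone,” and `wholes_containing(COMMUNICATION_ACT, "tone")` returns `["affect"]`, mirroring the statement that tone constitutes the communication of emotion.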

The proposed abstraction decomposition mapped the units of natural language to the goals of communication to describe the work domain of a speech-based AAC system. The functions necessary for successful communicative interactions were represented through means-ends links between elements across different levels of abstraction. Likewise, the speech components comprising a communication act were represented through part-whole links across three levels of resolution. We contend that, to support user interaction with an AAC system work domain, an interface design must reflect the hierarchical nature of the proposed abstraction decomposition and accommodate the interactions between system elements.

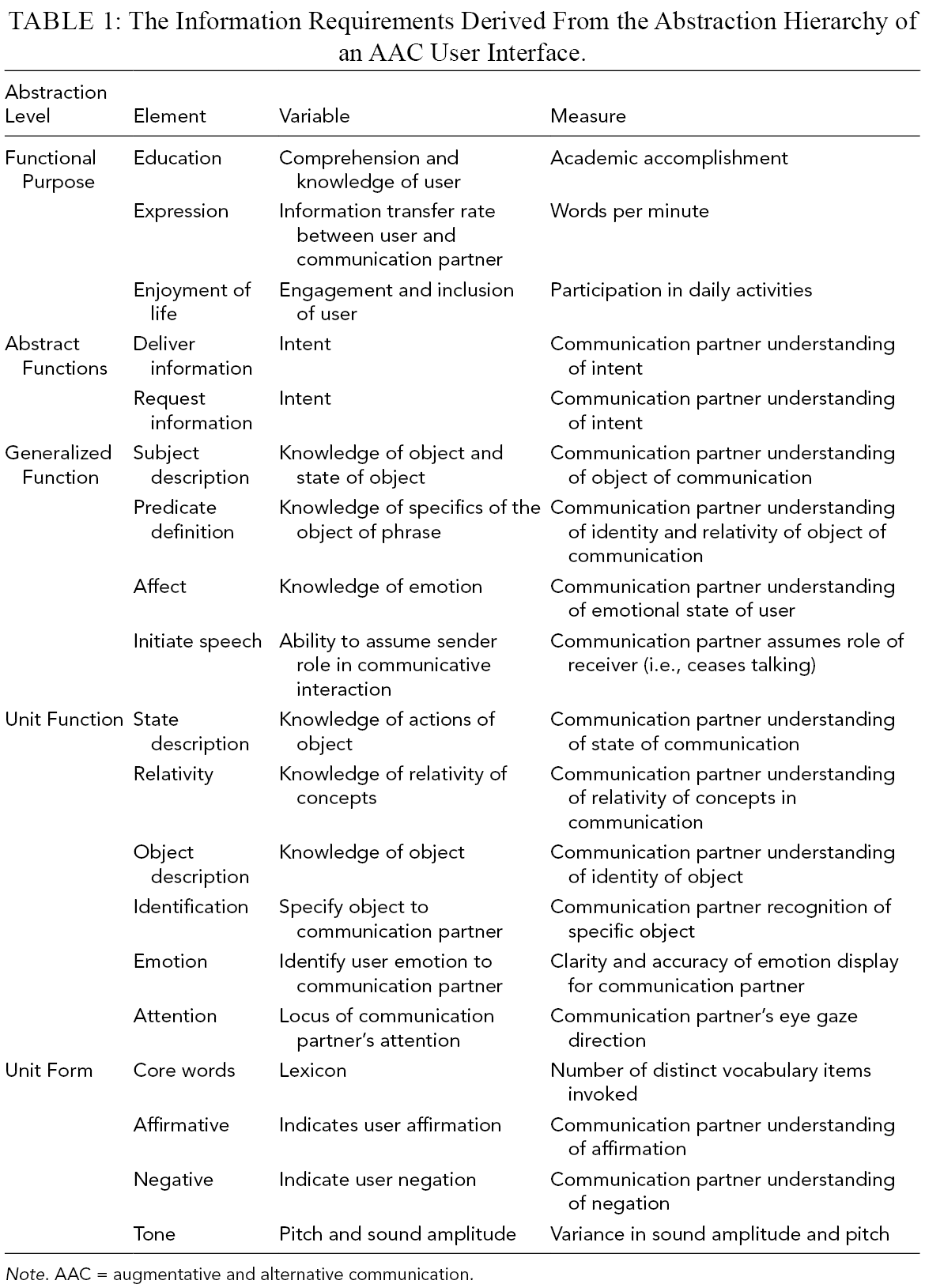

Information Requirements

Following Vicente (1999), the WDA allows designers to identify information requirements for user interface design. Every element in the abstraction decomposition (Figure 4) corresponds to variables that may be measured to provide information about the system. Table 1 lists the abstraction level, variables, and measurement for each element of the abstraction decomposition. Collectively, the elements of each level describe the entire system. The variables used to depict abstraction-decomposition elements define the information required to understand the system and its function; these information requirements therefore specify the constraints of the AAC system work domain. Our interface was designed to satisfy the identified information requirements and thereby enhance user interaction with a speech-based AAC system.

The Information Requirements Derived From the Abstraction Hierarchy of an AAC User Interface.

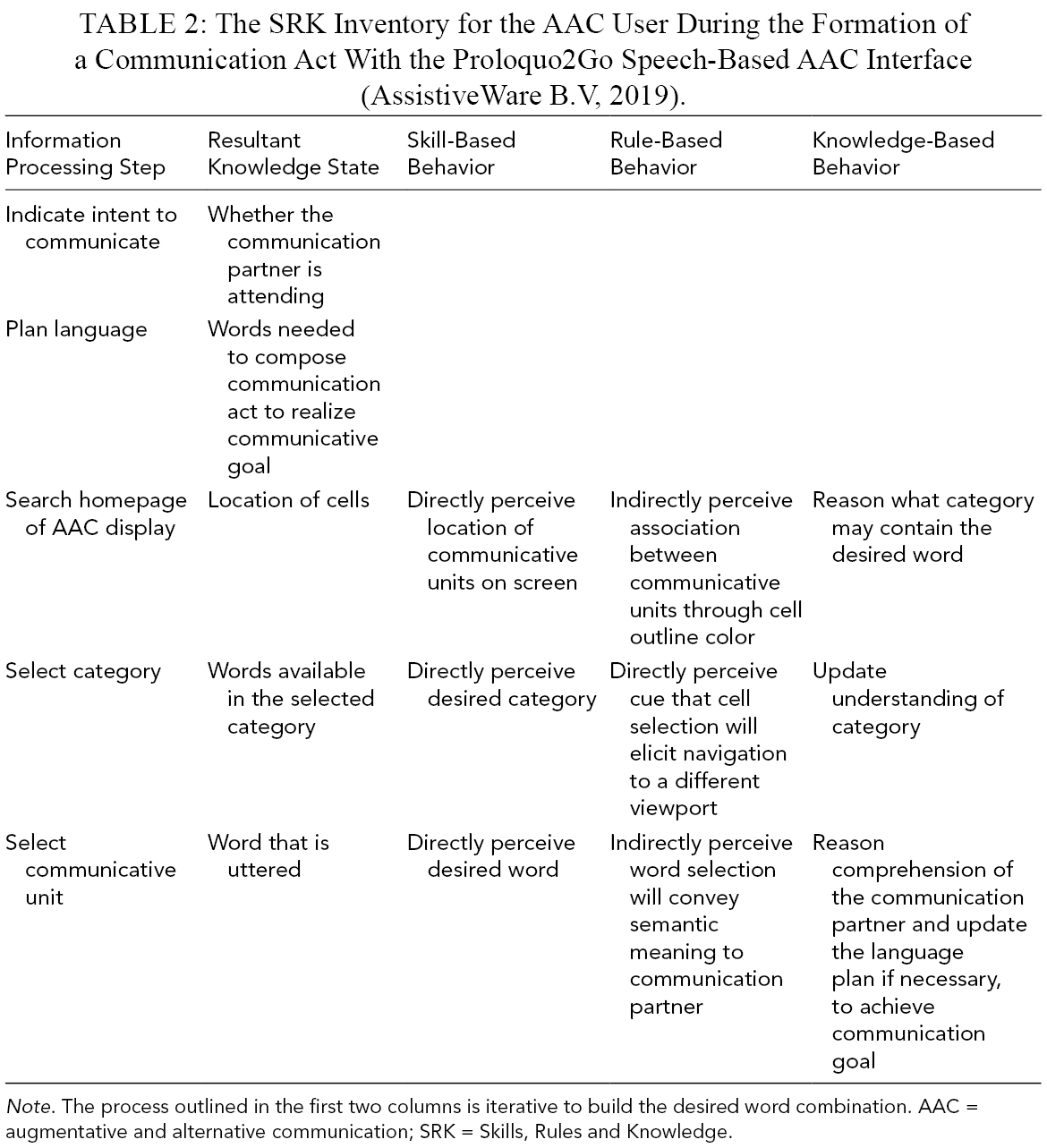

Worker Competency Analysis

A WCA was performed using the Skills, Rules and Knowledge (SRK) Inventory on the task of conveying a communication act with the commercial speech-based AAC device: Proloquo2Go (AssistiveWare B.V, 2019). Figure 2 shows a viewport of the Proloquo2Go system.

From the AAC user’s perspective, the Proloquo2Go interface comprises cells that contain either words or the names of categories. No forms reflect the hierarchical nature of language, which contributes to a lack of behavioral support for language formation in the AAC user context. The AAC user can leverage only a semantic understanding of base communicative units for system interaction. Additionally, the category names are ambiguous and require knowledge-based behavior to rationalize the contents of the categories and to select appropriate navigation actions. The lack of rule-based behavioral support results in frequent procedural traps. For example, the user may not associate the word “coffee” with “food” and might instead search under the “things” category (see Figure 2). Incorrect category selections may persist across months of use, and domain experts described them as a main source of frustration. To successfully convey a communication act in a speech-based system, the communicator must articulate communication units, and the communication partner must subsequently understand the communication goal. A communication act requires fulfillment of the functions of all components of a speech-based AAC work domain (refer to Figure 4).

As communication is an interaction between at least two communication partners, we considered the information provided to the communication partner as well as to the AAC device user. Proloquo2Go provides the communication partner with information only on the words or phrases the AAC user selects. It does not reflect the multiple levels of abstraction of a speech-based communication system (e.g., emotion or the type of communication act). Consequently, the communication partner lacks skill- and rule-based support for determining the context of a communication act, which can lead to misinterpretation of information and misunderstanding of utterances. For example, a user’s question may be interpreted as a statement.

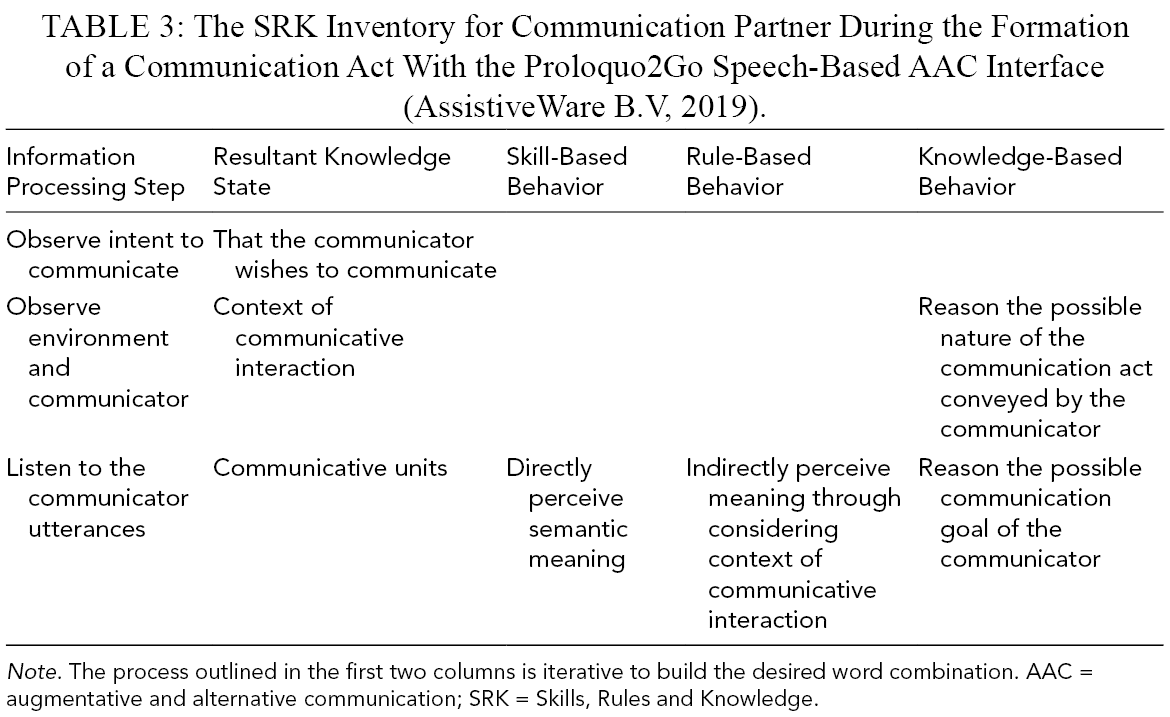

The completed SRK inventories are shown in Tables 2 and 3 for the AAC user and communication partner, respectively. The SRK inventories describe the skill-, rule-, and knowledge-based behaviors that Proloquo2Go supports for each information-processing step, and corresponding knowledge state, of a communication act. The listed behaviors inform the constraints on user competencies, whereas empty cells indicate unsupported behaviors. For instance, current commercially available AAC systems support no skill-, rule-, or knowledge-based behaviors for the user to indicate their intent to communicate to their communication partner or to plan the language to fulfill their communication goal. Additionally, the communication partner is unsupported in determining whether the AAC user intends to communicate and must rely on knowledge-based behavior to interpret the context of the communication act. To support the delivery and comprehensive understanding of communication, we decided to design two interfaces: (1) one for the AAC device user and (2) one for the communication partner (Figure 1b).
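An SRK inventory can be treated as a table of information-processing steps against the three behavior levels, in which an empty cell marks an unsupported behavior. The Python sketch below encodes only the rows paraphrased in the paragraph above (the full inventories are Tables 2 and 3); the step wording, the knowledge-based cell text, and the helper functions are our own illustrative assumptions.

```python
from typing import Optional

# Behavior levels ordered from lowest to highest mental workload.
BEHAVIOR_LEVELS = ("skill", "rule", "knowledge")

# Each information-processing step maps to one cell per level;
# None marks an empty cell, i.e., a behavior the interface does not support.
Inventory = dict[str, dict[str, Optional[str]]]

# Illustrative rows for the AAC user (full inventory: Table 2).
USER_INVENTORY: Inventory = {
    "indicate intent to communicate": {"skill": None, "rule": None, "knowledge": None},
    "plan language to fulfill the communication goal": {"skill": None, "rule": None, "knowledge": None},
}

# Illustrative rows for the communication partner (full inventory: Table 3).
PARTNER_INVENTORY: Inventory = {
    "determine whether the AAC user intends to communicate": {
        "skill": None, "rule": None, "knowledge": None,
    },
    "interpret the context of the communication act": {
        "skill": None,
        "rule": None,
        "knowledge": "infer context from the selected words alone",
    },
}


def unsupported_steps(inventory: Inventory) -> list[str]:
    """Steps with no supported behavior at any level (every cell empty)."""
    return [step for step, cells in inventory.items()
            if all(cells[level] is None for level in BEHAVIOR_LEVELS)]


def lowest_supported_level(inventory: Inventory, step: str) -> Optional[str]:
    """Least demanding level at which `step` is supported, or None."""
    for level in BEHAVIOR_LEVELS:
        if inventory[step][level] is not None:
            return level
    return None
```

Because the levels are ordered from skill to knowledge, `lowest_supported_level` reports the least demanding behavior the interface supports for a step, following the workload ordering described in the Introduction; for interpreting context, it returns only “knowledge.”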

The SRK Inventory for the AAC User During the Formation of a Communication Act With the Proloquo2Go Speech-Based AAC Interface (AssistiveWare B.V, 2019).

The SRK Inventory for Communication Partner During the Formation of a Communication Act With the Proloquo2Go Speech-Based AAC Interface (AssistiveWare B.V, 2019).

The WCA characterized competencies that are required by communicators and the communication partner as imposed by the constraints of the system’s environment, and identified user behaviors that are not supported by the current AAC interface (St-Cyr & Kilgore, 2008). Extrapolations from the SRK inventories inform how required information can be integrated into the AAC interface design to support the different levels of cognitive processes.

Results

The information requirements from the abstraction decomposition model (Table 1) and SRK competencies identified through the WCA (Tables 2 and 3) informed the design of the ecological interface. WCA also highlighted behavioral aspects of speech-based communication that the Proloquo2Go interface failed to support.

Proposed AAC Interface

We constructed graphical forms by considering the variables required for each element of the WDA and the design features appropriate to support the skill-, rule-, and knowledge-based behaviors identified in the WCA (Kilgore & St-Cyr, 2006). The designs were guided by Vicente and Rasmussen’s guidelines for increasing error tolerance in a user interface, and the color and shape of the forms were informed by Gestalt principles (Vicente & Rasmussen, 1992). Additionally, means-ends links, as defined in the abstraction hierarchy (Figure 5), and part-whole relationships, as defined in the decomposition hierarchy (Figure 6), informed the structure and layout of graphical forms in the workspace. Our proposed AAC interface prototype communicates information pertaining to multiple levels of communication abstraction to convey a comprehensive understanding of the user’s communication goal. Low-fidelity versions of the graphic forms, process views, viewports, and workspace were discussed among the authors.

Graphic forms and process view

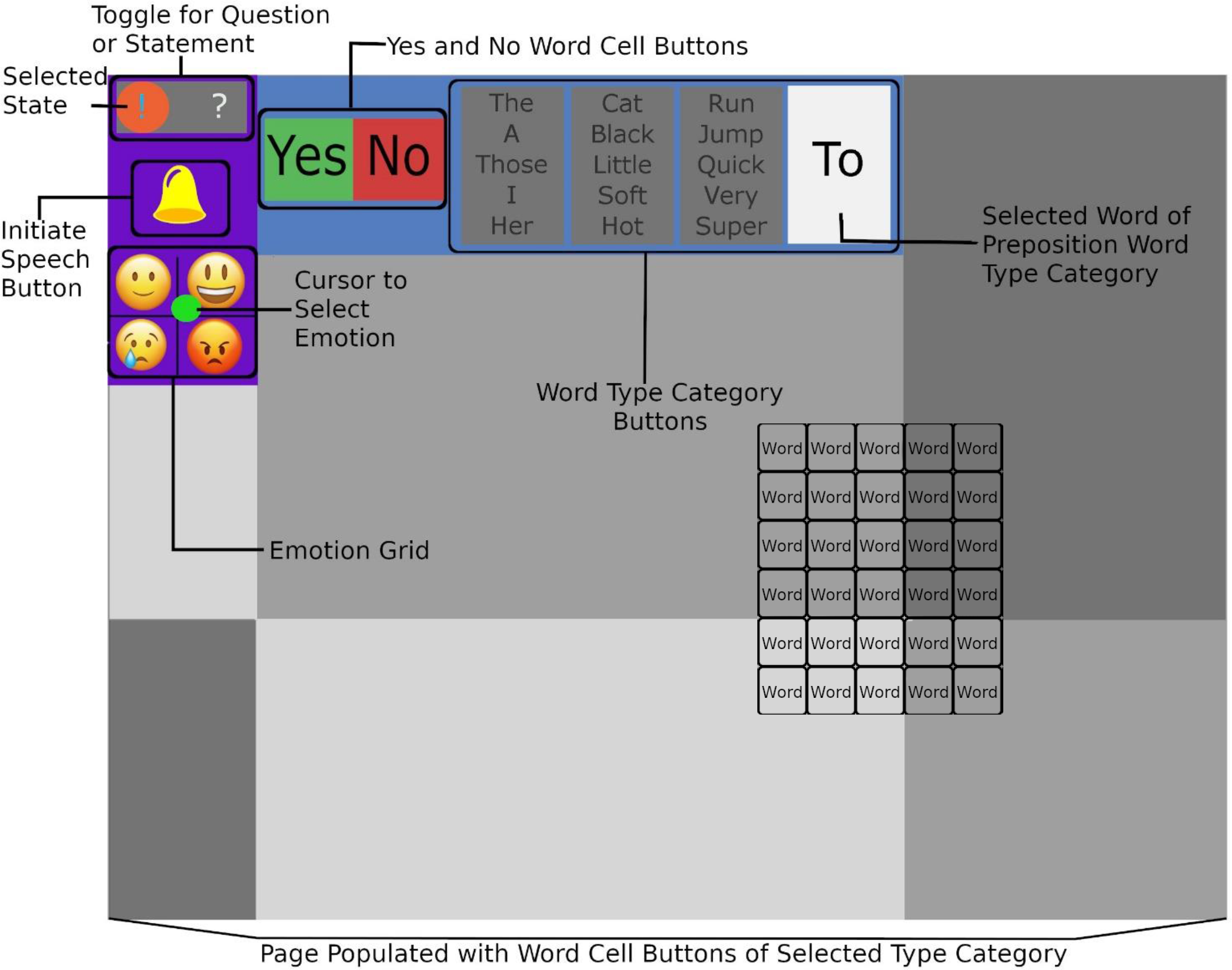

We designed the following components to communicate the system’s information requirements to the AAC user through propositional, analog, and iconic information mapping. The Question-Statement Toggle shown in Figure 7 applies iconic mapping of the question mark and exclamation mark to support skill-based behavior when choosing to request or deliver information. The symbol representing the delivery of information was originally a period, but, without context, the period was not recognizable as punctuation. A selection causes a distinct change in salience, from a white character on a gray background to a green character on an orange background, to clearly indicate the selected mode. To communicate the intent to the communication partner, an outward-facing display relays the selected icon, as seen at the top of Figure 8.
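The toggle’s behavior amounts to a small piece of state shared by the user’s viewport and the outward-facing display. A minimal Python sketch, assuming hypothetical class and constant names, and assuming that no mode is selected until the user first touches the toggle (the text does not specify a default); the icons and colors are those described above.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Mode(Enum):
    """Toggle options and the icon each relays to the partner display."""
    STATEMENT = "!"  # delivering information (left side of the toggle)
    QUESTION = "?"   # requesting information (right side of the toggle)


# (character color, background color), as described in the text.
UNSELECTED_STYLE = ("white", "gray")
SELECTED_STYLE = ("green", "orange")


@dataclass
class QuestionStatementToggle:
    # Assumption: nothing is selected until the user first touches the toggle.
    mode: Optional[Mode] = None

    def select(self, mode: Mode) -> None:
        self.mode = mode

    def style(self, option: Mode) -> tuple[str, str]:
        """Colors for one side of the toggle; only the selected side is salient."""
        return SELECTED_STYLE if option is self.mode else UNSELECTED_STYLE

    def partner_icon(self) -> str:
        """Icon shown on the outward-facing communication partner display."""
        return self.mode.value if self.mode is not None else ""
```

Keeping a single `mode` value as the source of truth ensures that the salient side of the toggle and the icon on the partner display cannot disagree.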

An annotated view of a viewport of the proposed AAC interface developed through the application of EID. The Question-Statement Toggle communicates to the user the ability to clarify their conversational goal of delivering (left) or requesting (right) information to their conversational partner; the user’s selection corresponds to the icon at the top of the communication partner display. The Initiate Speech Button communicates the option to initiate speech; selecting the button produces a sound to attract attention and indicate the desire to speak. The Emotion Grid enables the communication of the user’s emotion to the conversational partner. The emergent green dot is a cursor that can be moved throughout the grid to select an emotion based on the degree of sadness or happiness (y-axis) and the emotion’s intensity (x-axis); for example, extreme happiness would be represented in the top right of the grid. The “Yes” and “No” word cell buttons communicate to the user the ability to convey “yes” or “no” to a communication partner.

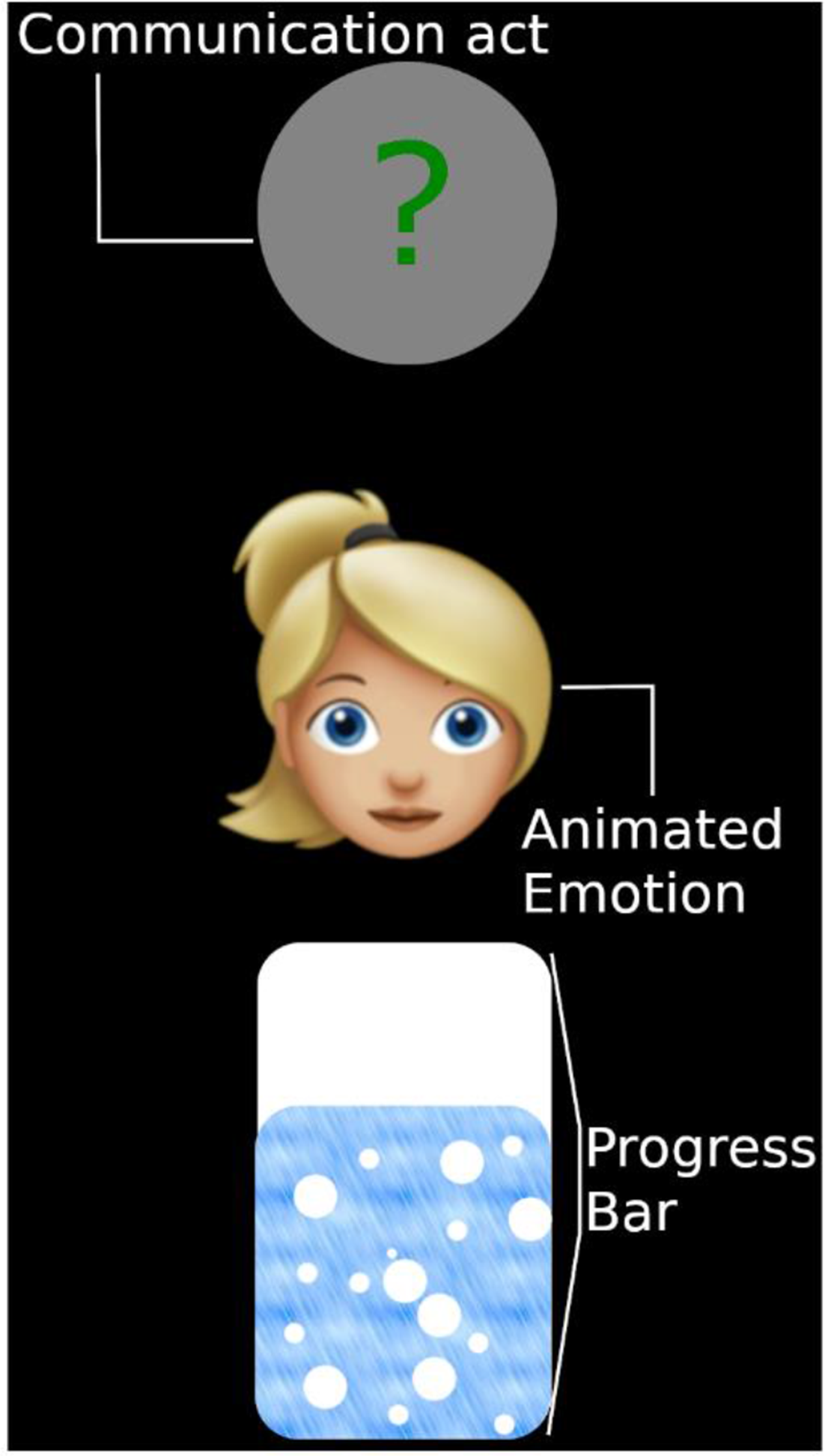

The interface design that presents the high-level abstraction concepts of communication to the conversational partner of the AAC user, referred to as the “communication partner display.”

The graphic form of the Initiate Speech Button shown in Figure 7, a yellow bell on a bright purple background, uses iconic mapping to support the user's recognition of its purpose: initiating speech. Selecting the icon activates an audio signal, during which the bell would turn gray to indicate the selection.

The multivariate Emotion Grid integrates information relating to affect, emotion, and tone in a grid of cartoon emotional faces, as depicted in Figure 7. The graphic form uses iconic mapping; it also uses analogical mapping for selecting the desired affect by moving the cursor across the grid. The cursor used to navigate the Emotion Grid is highly salient within the graphic form. In earlier iterations, the Emotion Grid occupied much more of the viewport, but area for word cells was later prioritized. The emotional state is communicated to the communication partner (Figure 8) through iconic mapping.
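The analogical mapping of the cursor can be made concrete with a short sketch. It assumes a discrete grid (the 5 × 5 size is hypothetical; the text does not state the prototype's dimensions), with happiness increasing toward the top and intensity increasing toward the right, as described in the Figure 7 caption. The class name and the numeric valence and intensity scales are illustrative assumptions.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class EmotionGrid:
    rows: int = 5  # hypothetical grid size; not stated in the text
    cols: int = 5
    row: int = 2   # cursor starts at the centre cell
    col: int = 2

    def move(self, d_row: int, d_col: int) -> None:
        """Move the cursor by whole cells, clamped to the grid edges."""
        self.row = min(max(self.row + d_row, 0), self.rows - 1)
        self.col = min(max(self.col + d_col, 0), self.cols - 1)

    def affect(self) -> tuple[float, float]:
        """Return (valence, intensity) for the cell under the cursor.

        valence runs from +1.0 (happy, top row) to -1.0 (sad, bottom row);
        intensity runs from 0.0 (left column) to 1.0 (right column).
        """
        valence = 1.0 - 2.0 * self.row / (self.rows - 1)
        intensity = self.col / (self.cols - 1)
        return valence, intensity


grid = EmotionGrid()
print(grid.affect())  # (0.0, 0.5): neutral valence, moderate intensity
grid.move(-10, 10)    # push the cursor to the top right corner
print(grid.affect())  # (1.0, 1.0): extreme happiness
```

Because the cursor position itself encodes both dimensions, a single movement changes the selected affect continuously. This is the property that distinguishes the grid's analogical mapping from a list of discrete emotion labels.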

Propositional mapping is used to define the affirmative and negative communication components, and the colors green and red support understanding of the words (Figure 7). The graphic form is given low salience to avoid capturing attention. Selecting “Yes” or “No” triggers audible enunciation of the corresponding word.

Viewport and workspace

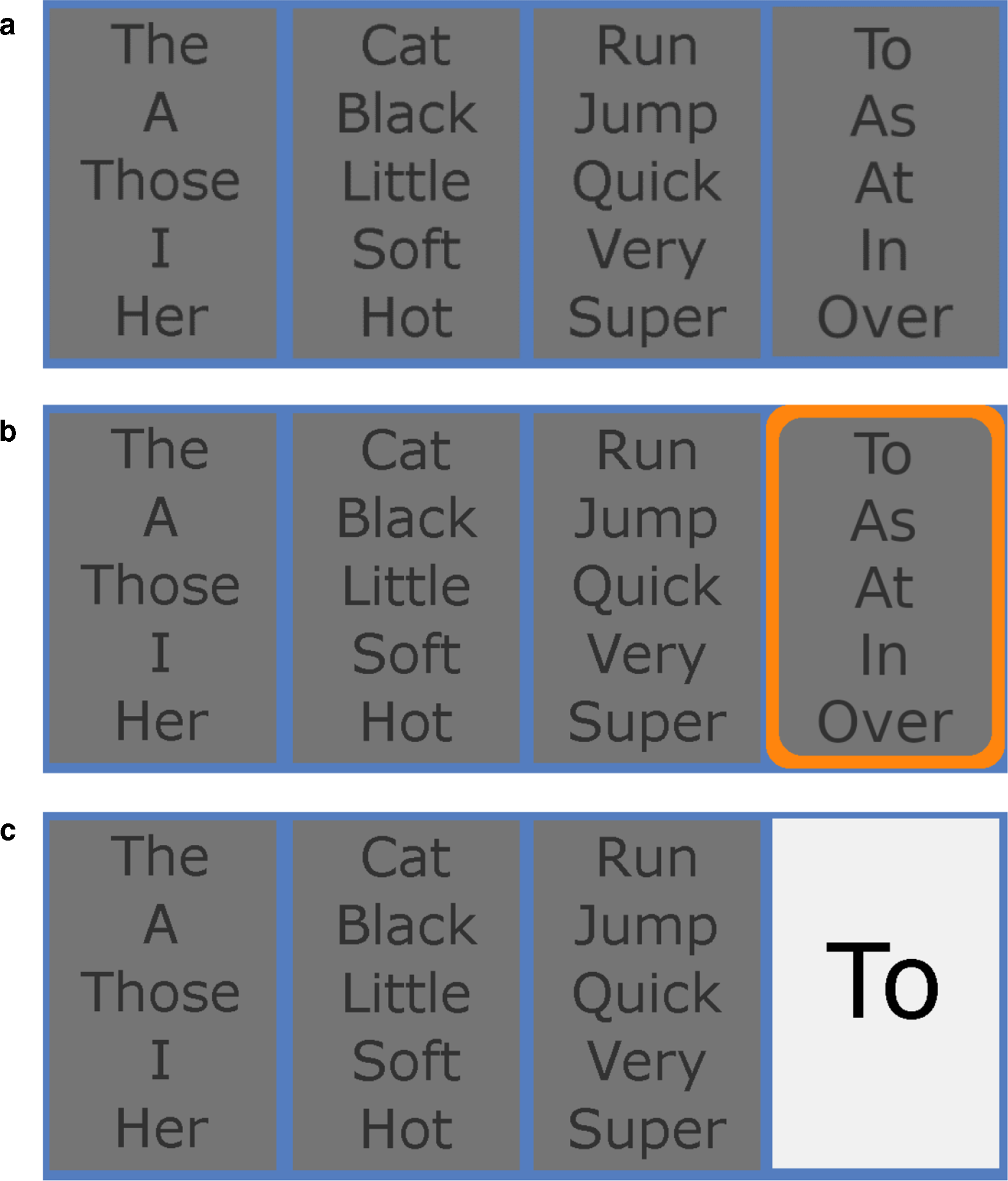

The information requirements of subject description, predicate definition, state description, relativity, object description, and identification are integrated into the multivariate Word Type Category Buttons shown in Figure 9a. Propositional mapping is used to describe the grammatical type of the words contained in each selection, and this information is displayed with low salience. The selected category is highlighted with a highly salient orange border to clearly convey its selected state (Figure 9b). Selecting a category populates a single page of word cells with the words of that category. When a word in the category is selected, it is enunciated to the communication partner and displayed in the high-contrast (black-on-white) category box (Figure 9c). The word categories are ordered according to typical English syntax.

Screen captures from the iOS integration of the prototype interface, showing the graphic form that provides the user with information on word type categories and guides sentence formation. (a) The Word Type Category Buttons. (b) A bright emergent feature highlights the selected category. (c) Upon selection of a word, the word appears in high contrast in the category overview.
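The category-to-word interaction described above behaves like a small state machine: a rest state with no word cells, a category state that shows one page of cells, and a return to rest after each word is enunciated. The sketch below models that cycle under stated assumptions. The category order is taken from the list in the text and assumed to be the on-screen order, `speak` stands in for speech synthesis, and the class name and the assignment of example words to categories are purely illustrative.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional

# Categories in the order listed in the text, assumed here to be their
# on-screen (syntactic) order.
CATEGORIES = [
    "subject description",
    "predicate definition",
    "state description",
    "relativity",
    "object description",
    "identification",
]


@dataclass
class WordBoard:
    vocabulary: Dict[str, List[str]]         # hypothetical words per category
    speak: Callable[[str], None]             # stand-in for speech synthesis
    selected: Optional[str] = None           # None means the rest state
    category_box: Dict[str, str] = field(default_factory=dict)

    def visible_cells(self) -> List[str]:
        """Word cells on screen; the rest state shows none."""
        if self.selected is None:
            return []
        return self.vocabulary.get(self.selected, [])

    def select_category(self, category: str) -> None:
        if category not in CATEGORIES:
            raise ValueError(f"unknown category: {category}")
        self.selected = category  # populates a single page of word cells

    def select_word(self, word: str) -> None:
        if word not in self.visible_cells():
            raise ValueError(f"{word!r} is not on the current page")
        self.speak(word)                         # enunciate to the partner
        self.category_box[self.selected] = word  # show in the category box
        self.selected = None                     # return to the rest state

    def phrase(self) -> str:
        """Words from the category box, read in category order."""
        return " ".join(self.category_box[c] for c in CATEGORIES
                        if c in self.category_box)


spoken: List[str] = []
board = WordBoard(
    {"object description": ["small", "large"],  # illustrative assignments
     "identification": ["coffee", "tea"]},
    speak=spoken.append,
)
board.select_category("object description")
board.select_word("small")
board.select_category("identification")
board.select_word("coffee")
print(spoken)          # ['small', 'coffee']
print(board.phrase())  # small coffee
```

Reading the category box in category order, rather than in selection order, is one way the syntactic ordering of the buttons could scaffold phrase formation. That reading is an interpretation for illustration, not a documented feature of the prototype.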

The word cells represent the components of the system, that is, the lowest level of the decomposition hierarchy. The word cells of each category are presented on a single viewport. The words are grouped in independent regions by themes to maintain a spatial relationship between words that are commonly linked in language. A sample of the word cell graphic form is shown in Figure 7. Word cells only populate the screen when a Word Type Category is chosen. Therefore, the rest state of the prototype consists of a mostly empty viewport.

The graphic forms are organized into a viewport of the user interface, shown in Figure 7. The most spatially salient location of the viewport, the top left, is occupied by the most frequently used forms, that is, those that reference higher levels of the system abstraction. The word cells, as the lowest-abstraction feature, occupy the center and bottom right of the screen, and they take up most of the display. Burns (2000) found that users experience the highest usability with an interface that displays all options in a single window. Because most of the display is dedicated to presenting word cells, we anticipate that all words of each category can be displayed in a single window.

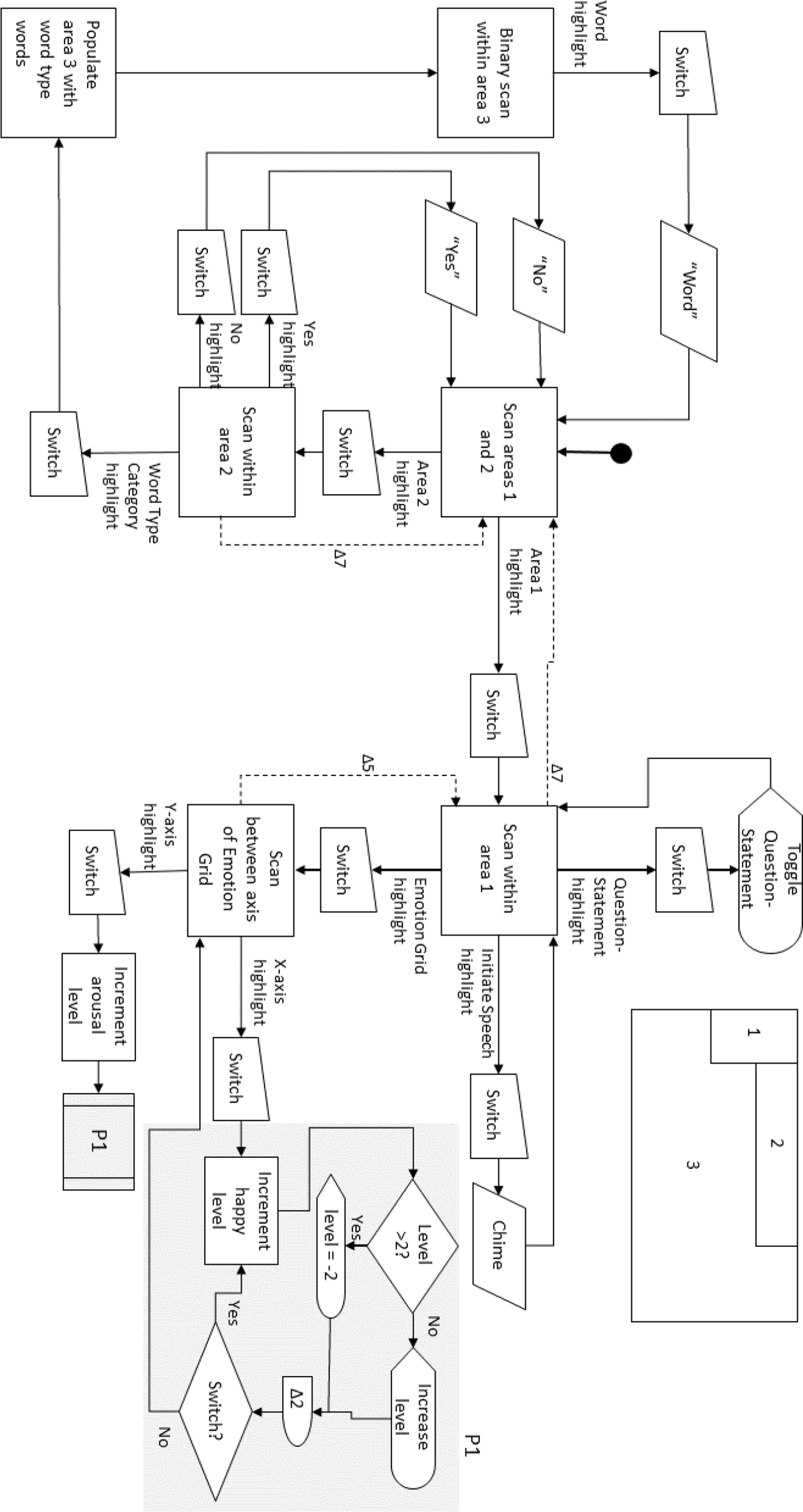

We integrated the interface prototype into an iOS 9 application written in Objective-C. User interaction with the prototype interface is optimized for both direct access and single-switch access to maximize accessibility. A process flow diagram detailing single-switch operation of the prototype user interface is shown in Figure 10.

A process flow diagram of the user interface prototype (Figure 7) when programmed for single-switch access. Areas of the user interface are identified in the diagram at the top right of the figure. Timeouts are indicated by dashed lines and occur after a dwell time, ΔN, chosen in the iOS Accessibility Settings.
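Figure 10 is not reproduced here, so the following is only a generic sketch of single-switch group-item scanning, a common switch-access technique. It assumes that the interface areas are scanned first and the items within the chosen area second, and that the highlight advances after each timeout. The `dwell` parameter plays the role of ΔN. The function name, area names, and two-level structure are assumptions, and the prototype's actual flow may differ.

```python
from __future__ import annotations

from typing import Dict, List, Optional, Sequence, Tuple


def group_item_scan(
    areas: Dict[str, List[str]],
    dwell: float,
    press_times: Sequence[float],
) -> List[Tuple[str, str]]:
    """Simulate two-level (group-item) automatic scanning with one switch.

    Scanning first steps through the areas, advancing the highlight each
    time `dwell` seconds elapse without a press (a timeout) and wrapping
    around at the end. A press selects the highlighted area and scanning
    restarts at that area's first item; a second press selects the
    highlighted item and scanning returns to the area level.
    """
    names = list(areas)
    selections: List[Tuple[str, str]] = []
    level_start = 0.0             # time at which the current scan level began
    area: Optional[str] = None    # None while scanning at the area level
    for t in sorted(press_times):
        steps = int((t - level_start) // dwell)  # timeouts since level start
        if area is None:
            area = names[steps % len(names)]
        else:
            items = areas[area]
            selections.append((area, items[steps % len(items)]))
            area = None
        level_start = t
    return selections


areas = {  # hypothetical grouping of interface elements
    "controls": ["Initiate Speech", "Question-Statement Toggle", "Emotion Grid"],
    "answers": ["Yes", "No"],
    "categories": ["subject description", "object description"],
}
# Press after one timeout (selects "answers"), then before the next timeout.
print(group_item_scan(areas, dwell=1.0, press_times=[1.5, 2.2]))
# [('answers', 'Yes')]
```

Two-level scanning reduces the number of timeouts a user must wait through compared with stepping linearly over every element. This matters when each step costs a full dwell period.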

The purpose of the communication partner display, shown in Figure 8, is to support recognition of communication intent and comprehension of the AAC user’s communication goal. The display communicates multiple levels of speech-based communication elements, arranged in the order of the abstraction hierarchy within a single viewport. The first graphic form, at the top of the viewport, communicates the goal of the communication act through icons. The second communicates the affect of the communicator through an animated emotion, and the third shows the communicator’s progress in language formation.
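The ordering described above can be illustrated by reducing the partner display's state to three fields rendered from top to bottom. This sketch assumes text labels in place of the icons, the animated emotion, and the progress bar. The class name, field names, and label strings are hypothetical.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class PartnerDisplay:
    act_icon: str = ""        # "?" (request) or "!" (statement)
    emotion: str = "neutral"  # hypothetical label for the animated emotion
    planning: bool = False    # True while the AAC user is forming language

    def render(self) -> List[str]:
        """Return the three graphic forms from top to bottom, i.e. from the
        communication goal (highest abstraction) to language-formation
        progress (lowest)."""
        progress = "planning" if self.planning else "complete"
        return [
            f"communication act: {self.act_icon or '-'}",
            f"emotion: {self.emotion}",
            f"progress: {progress}",
        ]


display = PartnerDisplay(act_icon="?", emotion="happy", planning=True)
for line in display.render():
    print(line)
```

Fixing the order in a single `render` method keeps the abstraction hierarchy explicit. The partner always reads the communication goal first, then the affect, and then the progress.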

Menu Ordering Use Case: Proposed AAC Interface in Action

The following example describes direct-access use of the features shown in Figures 7 and 8. Consider a customer ordering a coffee from a server in a cafe: the sentence “Can I please have a small coffee?” would be paraphrased as “small coffee.” In the system rest state, the customer’s interface is a mostly blank gray screen with purple and blue bars containing the Emotion Grid, Initiate Speech Button, Question-Statement Toggle, Yes and No Word Cells, and Word Type Category Buttons. The communication partner interface shows a completed progress bar, along with whichever emotion and communication act were previously set.

The customer is engaging in mand behavior (making a request), which they communicate by pressing the Question-Statement Toggle. The communication partner interface now shows that the customer has a question or request, informing the server of the context of the upcoming communication.

If the customer needs to capture the server's attention, they press the Initiate Speech Button, which produces a chime indicating that the customer wants attention.

The customer now selects the Word Type Category containing adjectives, and the customer’s interface is populated with adjectives clustered by theme. The progress bar of the communication partner interface indicates that the customer is planning language. The customer searches for and locates the adjective “small” and presses its word cell. The system enunciates the word “small” and returns to the rest state. These actions are repeated for the noun “coffee.”

If the server asks clarifying questions, the customer can respond by pressing the Yes or No Word Cells.

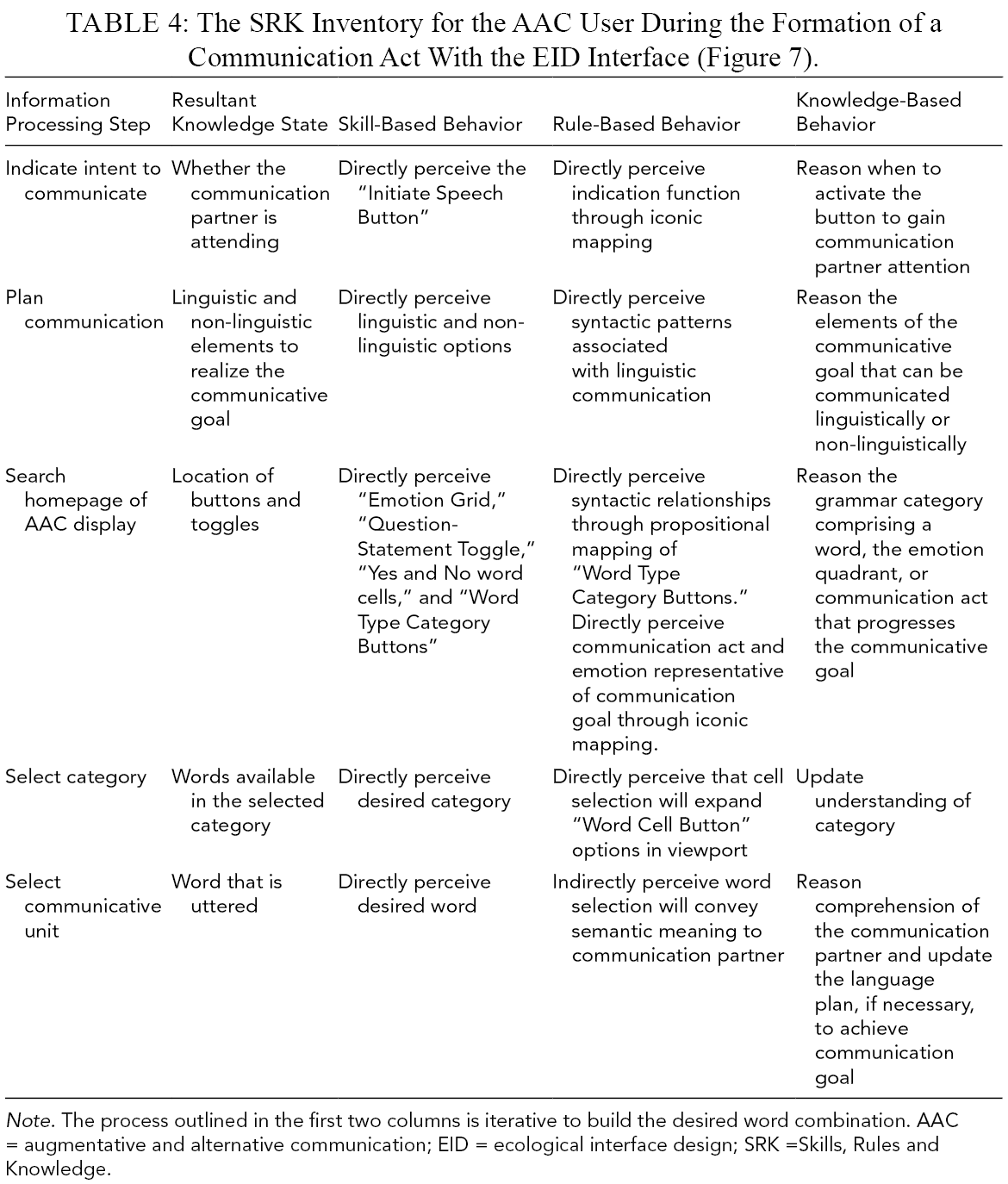

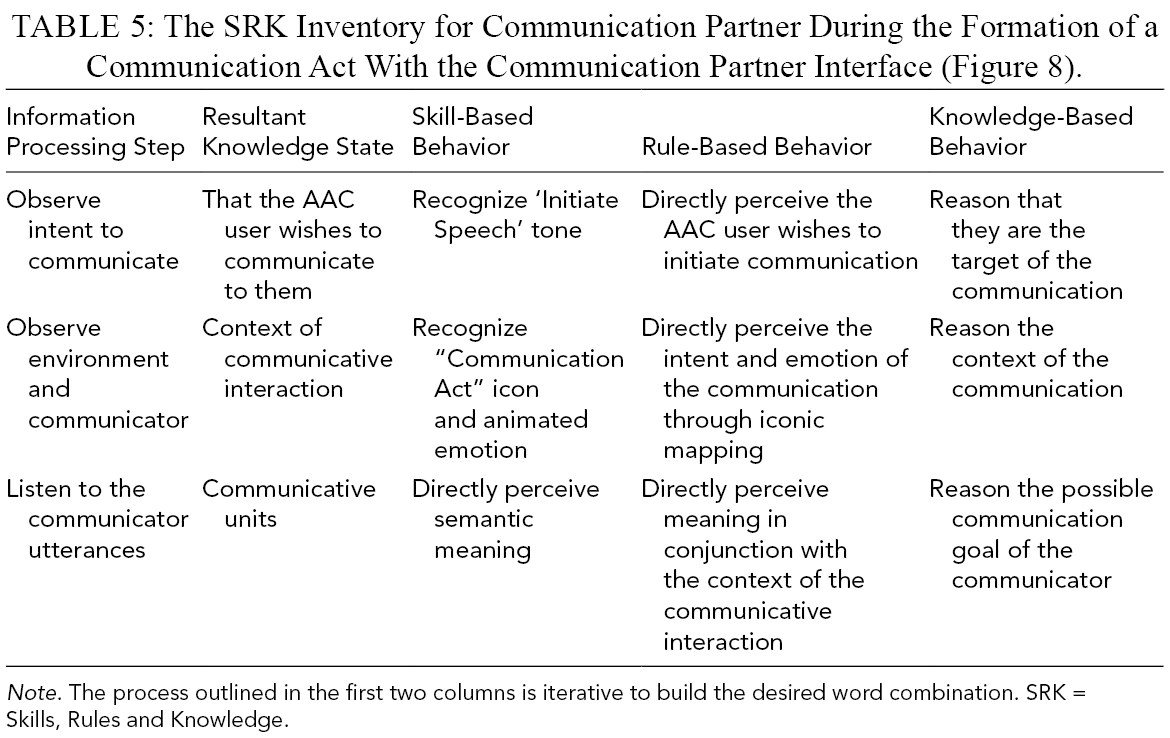

Summary of Interface Changes Resulting From EID Application

Gaps in the commercial interface's support for user behaviors appear as vacant cells in Tables 2 and 3. To examine the changes in user support, the EID interface elements are summarized through the lens of WCA in Tables 4 and 5. The SRK inventories show the behaviors supported by the commercial and EID interfaces. Elements of the EID interface improve support for both the AAC user and the communication partner during communication. The EID interface supports AAC user behaviors associated with indicating intent to communicate and with planning communication, which were previously unsupported. It also provides the AAC user with expressive options beyond language (e.g., emotion) to realize their communication goal. For the communication partner, this translates into support for behaviors associated with understanding the context of the communication act.

The SRK Inventory for the AAC User During the Formation of a Communication Act With the EID Interface (Figure 7).

The SRK Inventory for Communication Partner During the Formation of a Communication Act With the Communication Partner Interface (Figure 8).

Discussion

There are close to half a million morphemes in the English language. Basic comprehension of English involves approximately 3000 of them, and 347 morphemes make up 74% of casual conversation (Tenny, 2016). However, the appropriate use of 50 communicative units is considered robust functional communication (Bondy, 2019). Enabling people who use speech-based AAC systems to respond quickly with relevant language in unplanned scenarios allows them to participate independently in successful communicative interactions during day-to-day activities (Hoag et al., 2008). Our novel application of the EID framework to a linguistic domain, the system of speech-based communication, identified and addressed previously unrecognized barriers to communication faced by users of current commercially available AAC interfaces.

Research Basis of Current AAC Interface Designs

User interactions with assistive technology have commonly been defined through the human activity-assistive technology (HAAT) model (Cook & Polgar, 2014). The HAAT model considers the target activity, the abilities of the individual, and the context in which the device is used, and from these it determines the suitability of an assistive device. For assistive technology to be suitable, the device must fulfill the desired function for the user within the environment (Cook & Polgar, 2014). Our adapted application of EID explores constraints of a speech-based communication system imposed by environmental context and user competencies. Gaps in behavioral support show that Proloquo2Go, a popular commercial AAC interface, does not sufficiently support speech-based communication (Tables 2 and 3). Indeed, inadequate support of AAC users is also suggested by the various challenges encountered by users of several text-based AAC devices (Baxter et al., 2012). Realizing the potential of AAC technology requires that the device leverage the skills of the user (Light et al., 2019). Because Proloquo2Go leverages only semantic skills, there is untapped communication potential that could be realized, for example, by supporting syntactic phrase formation.

Impact of AAC Interface Design

The goals of AAC use are not simply the application of communication units, but ultimately participation and inclusion in daily living activities (McNaughton et al., 2019). Research has shown that participation in conversational interactions, from healthcare to social environments, remains challenging for individuals with complex communication needs (Thunberg et al., 2009). In particular, the capacity to communicate confidently in healthcare environments is important for individual autonomy. However, health professionals other than speech-language pathologists (SLPs) lack confidence in communicating with individuals who experience complex communication needs, as well as the ability to adapt their communication strategies (Cameron et al., 2018). The lack of AAC system transparency for the communication partner, identified in our application of WDA and WCA, corroborates previous findings; such a lack of transparency can lead to negative communicative interactions (Baxter et al., 2012). Adding a communication partner interface may encourage more frequent and successful communicative interactions between an AAC user and unfamiliar conversational partners.

AAC Prototype Interface Design

Providing the ability to initiate speech through the Initiate Speech Button supports the AAC user’s ability to reason about when to commence a communication act and to engage spontaneously in a communicative interaction through knowledge-based behavior. The bell shape of the button cues rule-based recognition of an alert for the user, and the subsequent salience change upon selection allows direct interaction and recognition that supports skill-based behavior.

With respect to language planning, the viewport containing the Emotion Grid, Question-Statement Toggle, Yes and No Word Cells, and Word Type Category Buttons supports the AAC user’s understanding of speech-based communication and, accordingly, supports knowledge-based reasoning about how to leverage the different elements to achieve a communication goal (Vicente & Rasmussen, 1992). The Word Type Category Buttons provide an isomorphic representation of language bounds, supporting rule-based behavior in language planning. The grammatical overview of the language units in the different viewports cues the user to apply syntactic rules in phrase formation during communication. The Word Type Category Buttons also aggregate lower-level system information to provide the user with visual momentum and to support skill-based behavior in recalling navigation events (Vicente & Rasmussen, 1992). This transparent taxonomy allows the user to test their navigation path through the example words.

The proposed AAC interface supports syntactic as well as semantic competencies, affording the AAC user insight into multiple levels of communication system information. Applying syntactic rules to form phrases enables the elaboration of concepts and thoughts beyond the immediate environment (Ferm et al., 2005).

The proposed AAC system increases support for the communication partner during a communicative interaction with an AAC user. The alerting tone supports skill-, rule-, and knowledge-based behavior associated with recognizing communicative intent on the part of the AAC user. On recognizing the tone through skill-based behavior, the communication partner is cued through rule-based behavior to attend to the AAC user. They are then given an indication that supports a knowledge-based judgment that they are the target of the subsequent communication. Knowledge-based behavior is leveraged to reconcile the information about the nature of the communication act and the emotion displayed in the communication partner interface in order to understand the context of communication. The communication act and emotion forms both act as cues that help the communication partner interpret the utterances of the AAC user. In the future, support for skill-based behavior could be improved through signal information mapping, for example, by varying the tone of the generated words according to the emotional state.

Input from domain experts influenced the integration of graphic forms. In the interviews, quick affirmative and negative responses were described as important speech acts that occur frequently during communication. For this reason, although they are components of the system, the Yes and No Word Cells were designed with higher salience in terms of color and spatial layout.

The proposed AAC interface was developed through the application of EID, a research-based framework in the field of Human Factors. However, the research informing EID's perspectives on human behavior and information processing is based on investigations of typically developing individuals. This presents a potential limitation for applying the EID framework to systems that support neurodivergent individuals. It is important to investigate interface design with respect to different age groups and diagnoses (Light et al., 2019). Recent research on grid-based AAC interfaces has explored design effectiveness with respect to two different diagnoses. Brown et al. (2015) investigated grid presentation of nouns and found no difference in visual search latency between individuals with and without traumatic brain injury. Given the findings of Brown et al. (2015) and the scaffolding of behavioral supports described above, we anticipate that the proposed AAC interface has the potential to accommodate neurodivergent development.

Conclusion

The articulation of communicative intent, and its subsequent understanding, is vital for successful interactions between communication partners. Current commercial speech-based AAC interfaces do not optimally support AAC users and their conversational partners in communicative interactions. We designed an AAC interface through the novel application of EID to speech-based communication. Through an abstraction decomposition framework, we developed a model of AAC communication that relates the functional purposes to the competencies of the system. Elements of work domain analysis were used to derive the information required to provide the user with a hierarchical understanding of AAC communication. Graphic forms and their integration into viewports were then designed to present these information requirements. We anticipate that the prototype AAC user interface produced by applying the EID framework to AAC communication will improve communication performance compared to currently available AAC systems. The novel insights gained through this study support further application of EID and CWA to advance AAC interface design in a way that better supports user performance.

Future research will compare the performance of the proposed EID-informed AAC user interface against that of an interface from a currently available AAC system. To determine the influence of applying EID to communication systems, we will consider interface interaction behavior, information transfer rate, and the mental workload of interface use. The proposed EID AAC interface will be evaluated through an experimental protocol simulating a conversation between a participant and their communication partner, mediated by either the commercial or the proposed EID AAC interface.


Acknowledgments

I would like to acknowledge the financial support for this research provided by Brain Canada and Kids Brain Health. I would also like to thank the family leaders who were invaluable partners during the research process. Beth Dangerfield and Megan Dawson-Rait provided expert insight into the goals, purposes, and functions of AAC devices that contributed to an understanding of the system. The information and feedback provided by Beth and Megan ensured that the correct value was placed on the information available in the system and that common challenges were understood.