Abstract

Existing approaches for sensor network tasking in space situational awareness (SSA) rely on techniques dating from the 1950s, address limited application areas, and require significant human-in-the-loop involvement. Increasing numbers of space objects, sensors, and decision-making needs create a demand for improved methods of gathering and fusing disparate information to resolve hypotheses about the space object environment. This work focuses on the cognitive work in SSA sensor tasking approaches. The application of a cognitive work analysis for the SSA domain highlights capabilities and constraints inherent to the domain that can drive SSA operations toward decision-maker goals. A control task analysis is also conducted to derive requirements for cognitive work and information relationships that support the information fusion and sensor allocation tasks of SSA. A prototype decision-support system (DSS) is developed using a subset of the derived requirements. This prototype is evaluated in a human-in-the-loop experiment using both a hypothesis-based and a covariance-based scheduling approach. Results from this preliminary evaluation show that operators are able to address SSA decision-maker hypotheses using the prototype DSS with both scheduling approaches.

Introduction and Motivation

Space situational awareness (SSA) is concerned with accurately representing the orbital state (position and velocity) of space objects “to predict, avoid, deter, operate through, recover from, and/or attribute cause to the loss and/or degradation of space capabilities and services” (Hart et al., 2016). Currently, the space object catalog, a central database of orbit states for space objects large enough to track, contains more than 20,000 objects (Liou, 2014; Shoots, 2018), ranging from decommissioned rocket bodies to active telecommunications satellites to university science and technology experiments. The number of cataloged objects is expected to grow considerably in the near future due to increased launch capabilities (Rendleman & Mountin, 2015), improved sensor sensitivities, and continued debris generation (Cox, Degraaf, Wood, & Crocker, 2005). Maintaining SSA is essential to the safe operation of satellites in earth orbit, especially as the space object environment continues to become more congested and contested (Blake, Sanchez, Krassner, Georgen, & Sundbeck, 2012; Ianni, Aleva, & Ellis, 2012; Kelso, 2009).

The space object catalog is generated and updated through sensor (e.g., telescopes, radar) observations from networks such as the Space Surveillance Network (SSN); therefore, one active research area in SSA is the allocation of sensor resources to gather the evidence required to answer SSA questions in a timely manner. Current tasking techniques used in the SSN rely largely on models and applications from the 1950s (Hoots & Schumacher, 2004). Many of these approaches are highly task-specific and rigid (e.g., observing a specific subset of satellites each night), requiring manual human-in-the-loop involvement for analysis and planning. In particular, anomalous events such as missed observations are difficult to reconcile automatically with existing systems. Consequently, SSA tasking research increasingly focuses on improved methods for gathering and processing actionable knowledge to acquire and maintain SSA. Emerging approaches for sensor tasking typically incorporate information-based techniques to provide flexible and automated tasking. Many of the proposed sensor tasking approaches focus on minimizing the uncertainties in the estimates of position and velocity for objects in the catalog. This process, known as space object catalog maintenance, allows improved predictions of space object motion into the future. Although uncertainty in position and velocity is an appropriate measure for certain SSA problems, such as collision avoidance, other decision-making questions require different metrics; for instance, the brightness of a satellite, measured over time, can be used to estimate the orientation and active mode of a space object (Coder, Wetterer, Hamada, Holzinger, & Jah, 2018). In addition, the questions decision makers would like to answer have become more sophisticated. Previously, questions about an object focused primarily on position and trajectory, and corresponding hypotheses were straightforward to generate.
Now, decision makers want to know intent, operational mode, and other characteristics not necessarily confined to position or trajectory knowledge. The expansion of usable metrics and questions about satellites creates a need for more robust techniques that can represent diverse hypothesis knowledge and fuse information from a wide variety of sources, both to improve the overall understanding of the space object environment and to answer specific SSA decision-maker questions (e.g., “is satellite X currently in a ground-observation mode?”).

Real operational scenarios require a proposed sensor tasking framework and associated decision-support system (DSS) to reliably support an operator’s cognitive work. This work uses cognitive work analysis (CWA) to derive generalized requirements for DSS development specific to the SSA domain, particularly to support decision-maker hypothesis resolution. The requirements derivation provides a process by which the work of SSA operators can be systematically analyzed and the resulting needs of the work domain formalized into system requirements. This bridges a gap between the growing SSA domain, with active research in sensor scheduling and information fusion algorithms, and the cognitive engineering domain, with a rich history in DSS research and development. This paper presents the design and preliminary evaluation of a prototype DSS for SSA. The design uses a CWA to derive 14 cognitive work requirements, each paired with an information relationship requirement, and a subset of these requirements guides the prototype. The work domain analysis (WDA) identifies capabilities and constraints present within both the SSA operator’s work domain and the SSA environment as a whole, and the control task analysis (ConTA) aids in the derivation of a set of decision-support design requirements for information fusion and sensor allocation tasks. The prototype DSS is implemented using two different methods for scheduling sensor observations and evaluated in simplified SSA-relevant scenarios for situation awareness, cognitive support, and workload effects.

Background

The following sections present background material to familiarize the reader with the SSA and cognitive engineering topics discussed in this paper. The primary objectives of SSA involve discovering, tracking, and characterizing earth-orbiting objects (Hussein, DeMars, Fruh, Jah, & Erwin, 2012), often through accurate estimation of orbital states (e.g., position and velocity). In general, situation awareness as defined by Endsley (1988) is “the perception of the elements in the environment within a volume of time and space, the comprehension of their meaning, and the projection of their status in the near future,” or, more simply, “knowing what is going on around you” (Endsley, 2000). Inherent in Endsley’s definition of situation awareness is an understanding of what is important (Endsley, 2000), so the design of support systems for situation awareness must begin with establishing the goals and purposes of the work domain.

Description of the SSA Work Domain

The work domain relevant to SSA consists of numerous social and technical components. SSA is particularly concerned with accurately representing the state knowledge of objects in the space environment to provide better prediction capabilities for threats such as potential conjunction (i.e., collision) events. Although situation awareness, as defined by Endsley (2000), typically refers to an individual’s internal understanding and ability to project forward in time, SSA refers more broadly to a community understanding of what is happening in the earth orbit environment and what is likely to happen in the near future. Operators in SSA operations centers, such as the National Space Defense Center (NSDC), often start with limited training in orbital mechanics, sensor phenomenologies, data fusion, or other relevant SSA subfields. The operators are then responsible for aggregating data on a diverse space object population, ranging from active satellites to orbital debris, and for conducting analyses to predict events (e.g., conjunctions) or scheduling follow-on observations to maintain a catalog of space objects. The catalog typically contains information on the orbit state (i.e., position and velocity) of each object, allowing analysts to predict its motion into the future. These data are gathered from a network of sensors (primarily telescopes and radar), many of which are controlled by independent entities, which makes it difficult to gather timely data on specific events. Sensors in the SSN are geometrically diverse and relatively sparse, especially compared with the more than 20,000 resident space objects (RSOs) currently tracked (Shoots, 2018). For instance, data collections for an object in low earth orbit (LEO) from one ground station might only occur over two 5-min-long passes in 1 day due to observability constraints (e.g., line of sight, cloud cover).
This leaves significant time wherein anomalies (e.g., maneuvers, on-orbit break-ups) can occur without being directly observed.
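The size of that unobserved window follows from simple arithmetic. The sketch below assumes two hypothetical pass start times (05:00 and 17:00) and a schedule that repeats daily; it computes the fraction of the day spent observing and the longest gap between passes.

```python
def coverage_and_longest_gap(passes, day_minutes=1440.0):
    """Fraction of a day spent observing and the longest unobserved interval.

    passes: non-overlapping (start_minute, duration_minutes) tuples within one day.
    The schedule is assumed to repeat daily, so the last gap wraps into the next day.
    """
    passes = sorted(passes)
    observed = sum(duration for _, duration in passes)
    # Gaps between consecutive passes on the same day.
    gaps = [s2 - (s1 + d1) for (s1, d1), (s2, _) in zip(passes, passes[1:])]
    # Wrap-around gap from the end of the last pass to the first pass the next day.
    last_start, last_duration = passes[-1]
    gaps.append(day_minutes - (last_start + last_duration) + passes[0][0])
    return observed / day_minutes, max(gaps)


# Two hypothetical 5-min passes at 05:00 and 17:00 (minutes after midnight).
coverage, longest_gap = coverage_and_longest_gap([(300.0, 5.0), (1020.0, 5.0)])
```

Under these assumptions the object is observed for 10 of 1,440 minutes (under 1% of the day), and nearly 12 hours can pass between consecutive observations.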

In general, the sensor tasking problem addresses how to obtain, process, and utilize information about the state of the environment (McIntyre & Hintz, 1996). The sensor tasking problem is a high-dimensional, multiobjective, mixed-integer, nonlinear optimization problem, and current approaches focus on tractable subproblems (e.g., single objectives, limited target objects, limited sensors). Potential SSA sensor tasking needs include maintaining catalogs of space object state observations (DeMars, Hussein, Frueh, Jah, & Scott Erwin, 2015; Hobson, 2014), detecting maneuvers or other anomalies (Jaunzemis, Mathew, & Holzinger, 2016), and estimating control modes or behavior (Coder, Holzinger, & Linares, 2018; Hart et al., 2016; Holzinger, Wetterer, Luu, Sabol, & Hamada, 2014). These objectives are generally not complementary, especially given limited sensor resources, and different objectives require different tasking approaches; for instance, characterization (e.g., anomaly detection) favors many observations of a small subset of the catalog, whereas catalog maintenance favors a diverse set of observations of as many objects as possible. Discourse and activity in SSA increasingly focus on decision-making in the presence of limited resources, uncertain and ambiguous information, and a contested space environment (Hart et al., 2016). Limited measurement opportunities from geographically diverse sensors, together with sensor phenomenologies that have specific unobservabilities (e.g., electro-optical systems such as telescopes lack range and range-rate measurements), lead to uncertain and ambiguous data. Often, this results in a proliferation of uncorrelated tracks (UCTs), which cannot be associated with existing cataloged objects and can cause false alarms for anomalous events, spurious new object generation, and cross-tagging of cataloged objects. The goal of aggregating all these data is to support SSA judgment, so the quality of the data must be taken into account.

Candidate Sensor Network Scheduling Approaches

This section introduces the two candidate approaches for scheduling sensor network observations that are used throughout this paper. Existing SSA sensor tasking approaches are task-specific and largely rely on models and applications from the 1950s (Hoots & Schumacher, 2004). Both approaches presented in this paper are information-based methods currently being advocated as improvements to the existing sensor tasking architecture.

Covariance-based sensor tasking

The covariance-based approach for sensor network scheduling attempts to reduce orbit state estimate uncertainty as measured by the orbit state covariance. Each sensor measurement has various sources of noise (e.g., pixel width for electro-optical sensors), which contribute to state uncertainty, and the orbit state covariance matrix is a common product of fusing and filtering these measurements into a state estimate. A simple covariance-based scheduling algorithm selects the next sensor actions to minimize the weighted sum of state covariances (see Figure 1; Jaunzemis, 2018). The weighting parameters represent space object prioritization, so that actions target higher priority assets, quickly reducing their state uncertainties and maintaining that level through follow-on observations.

Illustration of higher state uncertainty at higher altitudes.
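The selection rule described above can be illustrated with a greedy one-step sketch. It is not the algorithm of Jaunzemis (2018); it assumes that the "weighted sum of state covariances" is scalarized as a weighted sum of covariance traces, that each sensor action is a single linear Kalman measurement update, and that no propagation occurs between actions. The object names, covariances, weights, and measurement model are hypothetical.

```python
import numpy as np


def posterior_cov(P, H, R):
    """Covariance after one linear Kalman measurement update."""
    S = H @ P @ H.T + R                    # innovation covariance
    K = np.linalg.solve(S, H @ P).T        # Kalman gain P H^T S^-1 (P and S symmetric)
    return (np.eye(P.shape[0]) - K @ H) @ P


def weighted_trace_cost(covs, weights):
    """Assumed scalarization: priority-weighted sum of covariance traces."""
    return sum(weights[obj] * float(np.trace(P)) for obj, P in covs.items())


def select_next_action(covs, weights, actions):
    """Greedily pick the action whose update yields the lowest weighted cost."""
    best_action, best_cost = None, float("inf")
    for action, (target, H, R) in actions.items():
        trial = dict(covs)
        trial[target] = posterior_cov(covs[target], H, R)
        cost = weighted_trace_cost(trial, weights)
        if cost < best_cost:
            best_action, best_cost = action, cost
    return best_action, best_cost


# Hypothetical example: [position, velocity] state per object, position-only sensor.
covs = {"GEO-1": np.diag([100.0, 1.0]), "LEO-1": np.diag([10.0, 0.1])}
weights = {"GEO-1": 1.0, "LEO-1": 5.0}
H_pos = np.array([[1.0, 0.0]])
R = np.array([[1.0]])
actions = {
    "observe GEO-1": ("GEO-1", H_pos, R),
    "observe LEO-1": ("LEO-1", H_pos, R),
}
best, cost = select_next_action(covs, weights, actions)
```

In this toy case the lower-priority GEO-1 object is still selected, because its much larger uncertainty outweighs the fivefold weight on LEO-1. This is the kind of trade-off the weighting parameters are meant to tune.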

Covariance-minimizing sensor tasking approaches have been proposed primarily for catalog maintenance and collision avoidance tasks (Hobson, 2014; Linares & Furfaro, 2016). The covariance-based approach is effective in addressing decision-maker needs that can be directly mapped to state estimate uncertainty; however, not all decision-maker needs meet this condition. Support for decision-making should provide quantifiable and timely evidence of behaviors related to specific hypotheses (e.g., threats). To support this hypothesis-resolution activity, existing and proposed approaches largely focus on collecting observations to identify physical states or parameters; however, many complex hypotheses require RSO behavior prediction that takes into account other RSOs, physics knowledge, and indirect information from nonstandard sources. As such, SSA judgment, that is, using information fusion and emerging technologies to ingest varied, sparse data sets and form a coherent story of the space environment, together with techniques for better conveying this story to decision makers, is an active avenue of research.

Hypothesis-based sensor tasking

An alternative method for sensor network scheduling, called the hypothesis-based approach, directly quantifies decision-making questions as testable hypotheses that can be interrogated using evidence gathered from sensor observations (Jaunzemis, Holzinger, Chan, & Shenoy, 2019; Jaunzemis et al., 2017). Typically, a hypothesis belief structure is either converted to a probability distribution (as a Bayesian approximation of the belief structure; Cobb & Shenoy, 2006) or collapsed to a probability formulation that allows a decision maker to place bets on the hypothesis given the available evidence using familiar Bayesian constructs (Smets & Kennes, 1994; Tessem, 1993). However, recent work has shown the utility of quantifying and incorporating ambiguity (Jaunzemis et al., 2019, 2017), and evidential reasoning methods such as Dempster–Shafer theory (Shafer, 1986) are more expressive in representing ambiguity than Bayesian approaches (Jirousek & Shenoy, 2018). Hypothesis entropy reduces a complex belief structure to a single measure that represents both the ambiguity (or nonspecificity) and the conflict inherent in the current hypothesis knowledge; entropy can therefore be considered the hypothesis-resolution uncertainty, analogous to the state covariance being the orbit state uncertainty. For further information on the specifics of Dempster–Shafer theory and hypothesis entropy, refer to Shafer (1986) and Jirousek and Shenoy (2018).
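To make "hypothesis entropy" concrete, the sketch below computes one entropy definition for a Dempster–Shafer mass function. It assumes the Jirousek and Shenoy (2018) form, which is the Shannon entropy of the plausibility transform (conflict) plus the Dubois–Prade nonspecificity term. The text does not state which entropy JER uses, so treat this choice as an assumption. The hypothesis frame and mass values are hypothetical.

```python
import math
from typing import Dict, FrozenSet

# A mass function maps focal sets (subsets of the frame) to belief mass.
Mass = Dict[FrozenSet[str], float]


def plausibility_transform(m: Mass, frame: FrozenSet[str]) -> Dict[str, float]:
    """Normalize singleton plausibilities Pl({x}) into a probability distribution."""
    pl = {x: sum(v for focal, v in m.items() if x in focal) for x in frame}
    total = sum(pl.values())
    return {x: p / total for x, p in pl.items()}


def js_entropy(m: Mass, frame: FrozenSet[str]) -> float:
    """Assumed Jirousek-Shenoy entropy in bits: conflict term + nonspecificity term."""
    pl_p = plausibility_transform(m, frame)
    conflict = sum(-p * math.log2(p) for p in pl_p.values() if p > 0)
    nonspecificity = sum(v * math.log2(len(focal)) for focal, v in m.items() if v > 0)
    return conflict + nonspecificity


# Hypothetical hypothesis: "is satellite X currently in a ground-observation mode?"
frame = frozenset({"ground_obs", "other"})
vacuous = {frame: 1.0}                                                # total ignorance
split = {frozenset({"ground_obs"}): 0.5, frozenset({"other"}): 0.5}   # pure conflict
resolved = {frozenset({"ground_obs"}): 1.0}                           # fully resolved
```

With these definitions, the vacuous belief scores 2 bits, the evenly split Bayesian belief scores 1 bit, and the resolved belief scores 0. The extra bit for total ignorance is the ambiguity that a purely Bayesian representation cannot express.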

Consider, as an illustrative example of hypothesis-based sensor tasking, a simplified version of the Judicial Evidential Reasoning (JER) algorithm (Jaunzemis, Holzinger, Chan, & Shenoy, 2018; Jaunzemis et al., 2019, 2017). For this hypothesis-based approach, entropy becomes the primary measure of effectiveness, as low entropy signifies that the gathered evidence points toward a consistent and specific hypothesis resolution. When using the hypothesis-based scheduler, the next sensor actions are selected to minimize the weighted sum of hypothesis entropies (Jaunzemis, 2018). Here, the weighting parameters allow direct prioritization of decision-maker hypotheses, which automates the selection of specific space object targets toward the goal of reducing hypothesis-resolution uncertainty.
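The selection step can be sketched in the same greedy, one-step style as the covariance-based example. This is not the JER algorithm. It assumes that evidence is fused with Dempster's rule of combination, that entropy follows the Jirousek–Shenoy form, and that each candidate action yields a single predicted evidence mass. A fuller treatment would take an expectation over possible observation outcomes. The hypotheses, weights, sensor names, and evidence masses are hypothetical.

```python
import math
from typing import Dict, FrozenSet, Optional, Tuple

Mass = Dict[FrozenSet[str], float]


def dempster_combine(m1: Mass, m2: Mass) -> Mass:
    """Dempster's rule: intersect focal sets, then renormalize away the conflict."""
    combined: Mass = {}
    conflict = 0.0
    for a, va in m1.items():
        for b, vb in m2.items():
            inter = a & b
            if inter:
                combined[inter] = combined.get(inter, 0.0) + va * vb
            else:
                conflict += va * vb
    if conflict >= 1.0 - 1e-12:
        raise ValueError("sources are in total conflict")
    return {s: v / (1.0 - conflict) for s, v in combined.items()}


def js_entropy(m: Mass, frame: FrozenSet[str]) -> float:
    """Assumed entropy: Shannon entropy of the plausibility transform + nonspecificity."""
    pl = {x: sum(v for a, v in m.items() if x in a) for x in frame}
    total = sum(pl.values())
    conflict = sum(-(p / total) * math.log2(p / total) for p in pl.values() if p > 0)
    return conflict + sum(v * math.log2(len(a)) for a, v in m.items() if v > 0)


def select_action(hypotheses, predicted_evidence) -> Tuple[Optional[str], float]:
    """Greedy choice of the action minimizing the weighted sum of hypothesis entropies.

    hypotheses: name -> (frame, current mass function, priority weight)
    predicted_evidence: action -> {hypothesis name -> predicted evidence mass}
    """
    best_action, best_cost = None, math.inf
    for action, evidence in predicted_evidence.items():
        cost = 0.0
        for name, (frame, mass, weight) in hypotheses.items():
            updated = dempster_combine(mass, evidence[name]) if name in evidence else mass
            cost += weight * js_entropy(updated, frame)
        if cost < best_cost:
            best_action, best_cost = action, cost
    return best_action, best_cost


fx = frozenset({"ground_obs", "other"})            # H1: is satellite X in ground-observation mode?
fy = frozenset({"maneuvered", "not_maneuvered"})   # H2: has satellite Y maneuvered?
hypotheses = {"H1": (fx, {fx: 1.0}, 2.0), "H2": (fy, {fy: 1.0}, 1.0)}
predicted_evidence = {
    "telescope_A->X": {"H1": {frozenset({"ground_obs"}): 0.6, fx: 0.4}},
    "radar_B->Y": {"H2": {frozenset({"maneuvered"}): 0.6, fy: 0.4}},
}
best, cost = select_action(hypotheses, predicted_evidence)
```

Both candidate actions offer equally informative evidence, so the higher weight on H1 decides the choice. This illustrates how the weights let a decision maker prioritize hypotheses directly instead of prioritizing individual space objects.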

SSA Work Domain Challenges

In addition to selecting an appropriate method for scheduling sensor networks, presenting all relevant information (available sensors, the locations of target space objects and the most up-to-date information on them, and active working hypotheses on the space environment) to a decision maker in SSA creates a big-data and data-visualization problem. Problematically, the collected data products are often affected by adverse observation conditions, uncertainties, biases, and unobservable states that may lead to further ambiguity in evidence. The SSA domain exhibits many of the dimensions of complexity outlined by Vicente (1999). These include a large problem space of many constantly varying dynamic states with gaps in measurement opportunities; geographically distributed sensor networks and social organizations with varying needs and interests; measurement uncertainty and unobservability resulting in less than full-state knowledge; and disturbances where anomalous events (e.g., missed observations and loss of custody) may lead to high-risk scenarios with significant consequences (e.g., collisions at relative speeds in excess of 7 km/s, such as the 2009 Iridium–Cosmos event; Kelso, 2009). To derive guidelines that support decision-making and address these complexities, the following sections apply CWA techniques to the SSA domain.

CWA APPLIED TO SSA

This work begins by applying the first two phases of CWA: WDA to analyze the broader purposes of decision support in SSA and the means available to accomplish those goals, and ConTA to develop insights for decision-support requirements. As well-understood goals are central to the development of situation awareness (Endsley, 2000), this work uses WDA to derive requirements for supporting complex work, applying the principles of WDA to uncover purposes, capabilities, and constraints within the SSA work domain.

Abstraction Hierarchy

WDAs are employed as the first step of CWA to establish broad understandings and identify constraints that exist within the domain (Naikar, Hopcroft, & Moylan, 2005; Vicente, 1999). It is often useful to decompose the work domain into multiple abstraction hierarchies to separately examine different aspects of the environment and domain activities at these varying levels of detail (Burns, Bryant, & Chalmers, 2005; Miller, McGuire, & Feigh, 2017). The SSA problem can be appropriately decomposed using two abstraction hierarchies: the SSA work domain and the SSA environment.

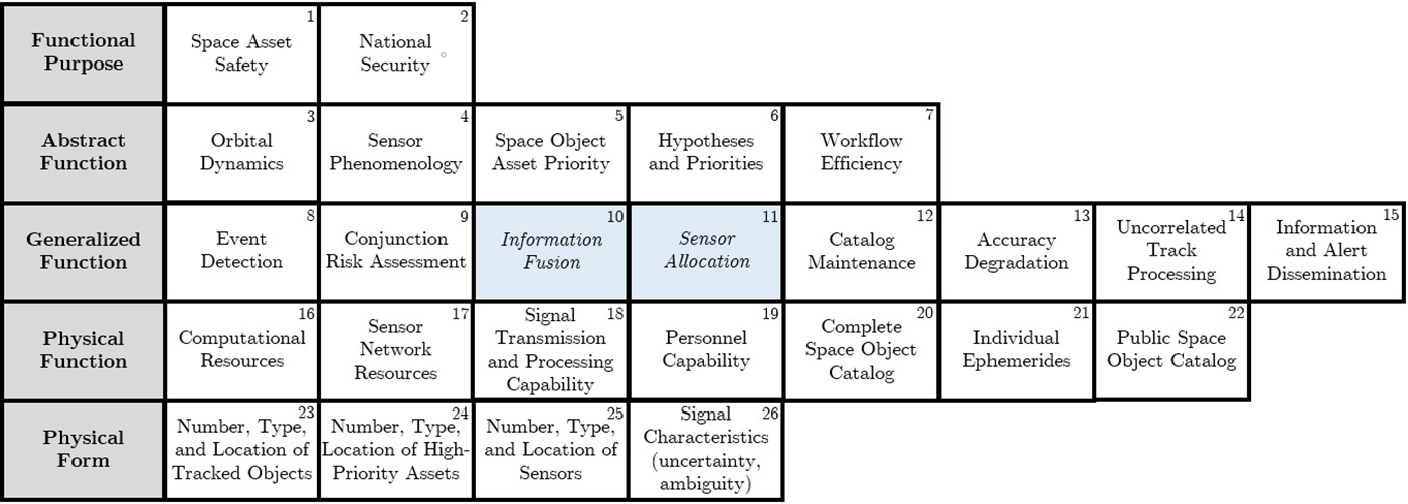

The SSA work domain hierarchy in Figure 2 highlights the means-ends relationships that connect physical measurables (e.g., numbers of objects and signals such as orbit state estimates or light-curves) to common SSA functions (e.g., event detection or catalog maintenance) and subsequently to overarching goals of SSA operators. Historically, SSA operators have been primarily concerned with maintaining safety of more than 1,300 active satellites in earth orbit; however, the U.S. Air Force has also recognized the space domain as a valuable asset in addressing national security concerns through communications and surveillance. These goals are frequently addressed by formulating hypotheses to test and assigning priorities to these hypotheses or specific space objects, which can then be interrogated through sensor allocation methods or other specific SSA functions such as event detection. The chosen functions are carried out by processing physical signals from specific measurements into updated space object catalogs and ephemerides.

Abstraction hierarchy of the SSA work domain (WDA-D).
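Navigating an abstraction hierarchy means following means-ends links. Moving up a level answers "why" an element exists, and moving down answers "how" a purpose is achieved. The sketch below encodes only the links described in the prose above. The node names are paraphrases, and the WDA-D element identifiers and the full link set of Figure 2 are not reproduced, so the graph is a hypothetical simplification.

```python
from collections import deque

# Hypothetical encoding: each element maps to the "ends" it serves one level up.
SERVES = {
    "orbit state estimates": ["space object catalogs and ephemerides"],
    "light-curves": ["space object catalogs and ephemerides"],
    "space object catalogs and ephemerides": ["sensor allocation", "event detection"],
    "sensor allocation": ["hypotheses and priorities"],
    "event detection": ["hypotheses and priorities"],
    "hypotheses and priorities": ["space asset safety", "national security"],
    "space asset safety": [],   # functional purposes: top of the hierarchy
    "national security": [],
}


def purposes_served(node):
    """'Why' query: breadth-first search upward to the top-level purposes."""
    seen, queue, purposes = {node}, deque([node]), set()
    while queue:
        current = queue.popleft()
        ends = SERVES[current]
        if not ends:
            purposes.add(current)
        for end in ends:
            if end not in seen:
                seen.add(end)
                queue.append(end)
    return purposes


def means_for(end):
    """'How' query: the elements one level down that serve `end`."""
    return sorted(node for node, ends in SERVES.items() if end in ends)
```

For example, `purposes_served("light-curves")` traces a raw physical signal all the way up to both SSA purposes. This multi-level chain is the kind of abstraction that the insights below argue a DSS should perform for the operator.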

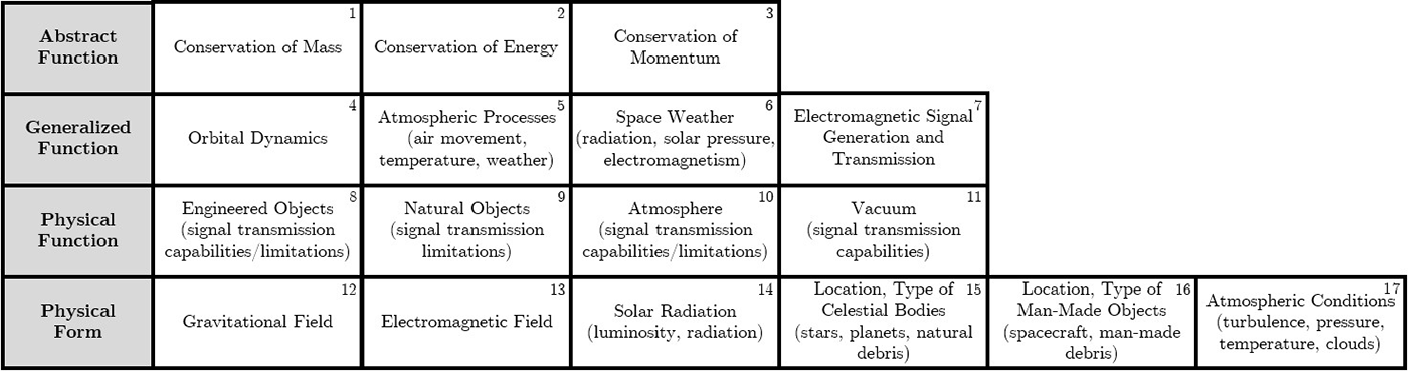

The SSA environment hierarchy in Figure 3 highlights means-ends relationships between different phenomena relevant to SSA operators. Unlike operators in the work domain, the environment does not have any functional purpose or goals, so the top level of the traditional decomposition is omitted. However, the SSA environment still contains many dynamic elements that affect decision-support design considerations, all governed by the laws of conservation of mass, momentum, and energy. The conservation laws drive the orbital dynamics that prescribe space object motion, space weather, atmospheric properties, and signal transmission. Each of these affects SSA data collection by limiting observability. The physical function level of the environmental decomposition contains elements of the operational environment, including natural and man-made objects that provide a means for, or potentially obscure, signal transmission. Similarly, the atmosphere affects ground-based measurements differently than the vacuum of space does, distorting the propagation of electromagnetic signals. These phenomena directly affect SSA operations and space assets through earth’s gravitational, electromagnetic, and radiation environments, atmospheric conditions, and the locations of both natural and man-made objects.

Abstraction hierarchy of the SSA environment (WDA-E).

For detailed derivation of each of these abstraction hierarchies, refer to Appendix A.

Insights for SSA DSS Development

By carefully considering the goals of SSA operators, as well as constraints imposed by the domain, this analysis develops several insights for SSA decision support:

Data aggregation should account for the fusion of disparate sensor resources and various signal characteristics, including considerations of uncertainty, ambiguity, and unobservability. State estimates (WDA-D-21) are only as good as the quality of the physical data products (WDA-D-26), so the signal processing (WDA-D-18) and information fusion (WDA-D-10) must be able to account for these difficult aspects of the SSA observation problem. More importantly, however, these data must be fused to address actionable decision-maker needs derived from the SSA domain purposes of asset safety (WDA-D-1) and national security (WDA-D-2). This requires a complete understanding of the characteristics of these physical signals, the limitations of the signal transmission and processing capabilities, and how to account for them in information fusion.

Sensor allocation approaches should be able to directly address varied decision-maker hypotheses. Space asset safety (WDA-D-1) and national security goals (WDA-D-2) are addressed through the formulation of testable hypotheses (WDA-D-6), which are in turn interrogated through functions such as sensor allocation (WDA-D-11) and information fusion (WDA-D-10). These hypotheses may be widely varied, ranging from detecting specific events on individual objects (WDA-D-21) to maintaining a catalog of many space objects (WDA-D-20, WDA-D-22). In particular, there is a need for sensor allocation approaches that not only address the catalog maintenance function (WDA-D-12) but also directly address other decision-maker hypotheses related to asset safety and national security. Therefore, SSA sensor allocation approaches must be flexible enough to be considered a means for resolving a variety of decision-maker hypotheses.

Fused data should be reflected through updated hypothesis knowledge states. The proliferation of different sensor technologies (WDA-D-17, WDA-D-18) means that the physical form of sensor data is widely varied (WDA-D-26). For instance, evidence toward certain hypotheses may be extracted from nontraditional sources such as news articles. In addition, constraints imposed by the functions of the environment, such as space weather phenomena (WDA-E-6) that impact the atmospheric conditions (WDA-E-10, WDA-E-17), can affect the transmission of these signals (WDA-E-7) and thereby the gathered signals (WDA-D-26). The algorithms and processes responsible for sensor allocation (WDA-D-11) should translate these physical measurables into their respective impacts on relevant hypotheses (WDA-D-6). This allows human analysts to perform the abstract- and functional-level processing of prioritizing between different hypotheses as a means of addressing different space asset safety (WDA-D-1) and national security goals (WDA-D-2). An effective DSS for SSA must be able to ingest and fuse data from disparate sources (WDA-D-10); however, the method of conveying that information to decision makers is also a concern. Displaying fused data products at the level of the physical signals (WDA-D-26) or updated state and covariance estimates (WDA-D-21) requires the operator to abstract from these physical functions to the functional purposes of the domain. To avoid the cognitive work required for this abstraction, fused data should instead update hypothesis knowledge states that address the decision-maker concerns. In this way, the technical systems aid in the abstraction from physical forms and functions to the functional purposes of the domain.

Impact of WDA Insights on Sensor Network Scheduling Methods

Applying the WDA insights to the candidate sensor network scheduling methods described above reveals that covariance-based sensor allocation and information fusion approaches, predicated on state uncertainty minimization, do not provide a robust or clear mapping to the decision-maker goals of maintaining space asset safety and addressing national security concerns. Not all domain goals are readily expressed in terms of state uncertainty, and abstracting from physical data products and state or covariance estimates to domain goals requires added cognitive effort. Consequently, a covariance-driven sensor network can address only a limited set of the SSA work domain functional goals, and the products of such tasking schemes are physical-form elements (e.g., orbit state uncertainties, light-curves) or physical-function elements (e.g., space object catalogs and ephemerides). Human analysts must then perform the abstraction from these physical data to the functional purposes of the work domain. In contrast, a hypothesis-based approach provides a quantifiable means of formulating domain goals, and resolving these hypotheses requires less abstraction from sensor tasking results to domain goals. This indicates an opportunity for improvement by allocating sensors to address specific hypotheses related to these SSA domain goals.

ConTA Applied to SSA

Using the results of the WDA, the second phase of the CWA, the ConTA, is conducted to further develop insights for decision-support requirements. Previous work by the Air Force Research Laboratory (AFRL) Human Effectiveness Directorate has investigated how new fusion technologies could be incorporated into the SSA workflow (Aleva, Ianni, & Schmidt, 2012; Ianni et al., 2012), including a ConTA study used to inform designs for several prototype screens for evaluation by NSDC operators (Aleva & McCracken, 2009). The full results of their analysis are under distribution restriction (Aleva & McCracken, 2009). In contrast, this work seeks to derive generalized requirements for SSA DSS development.

Decision Ladders

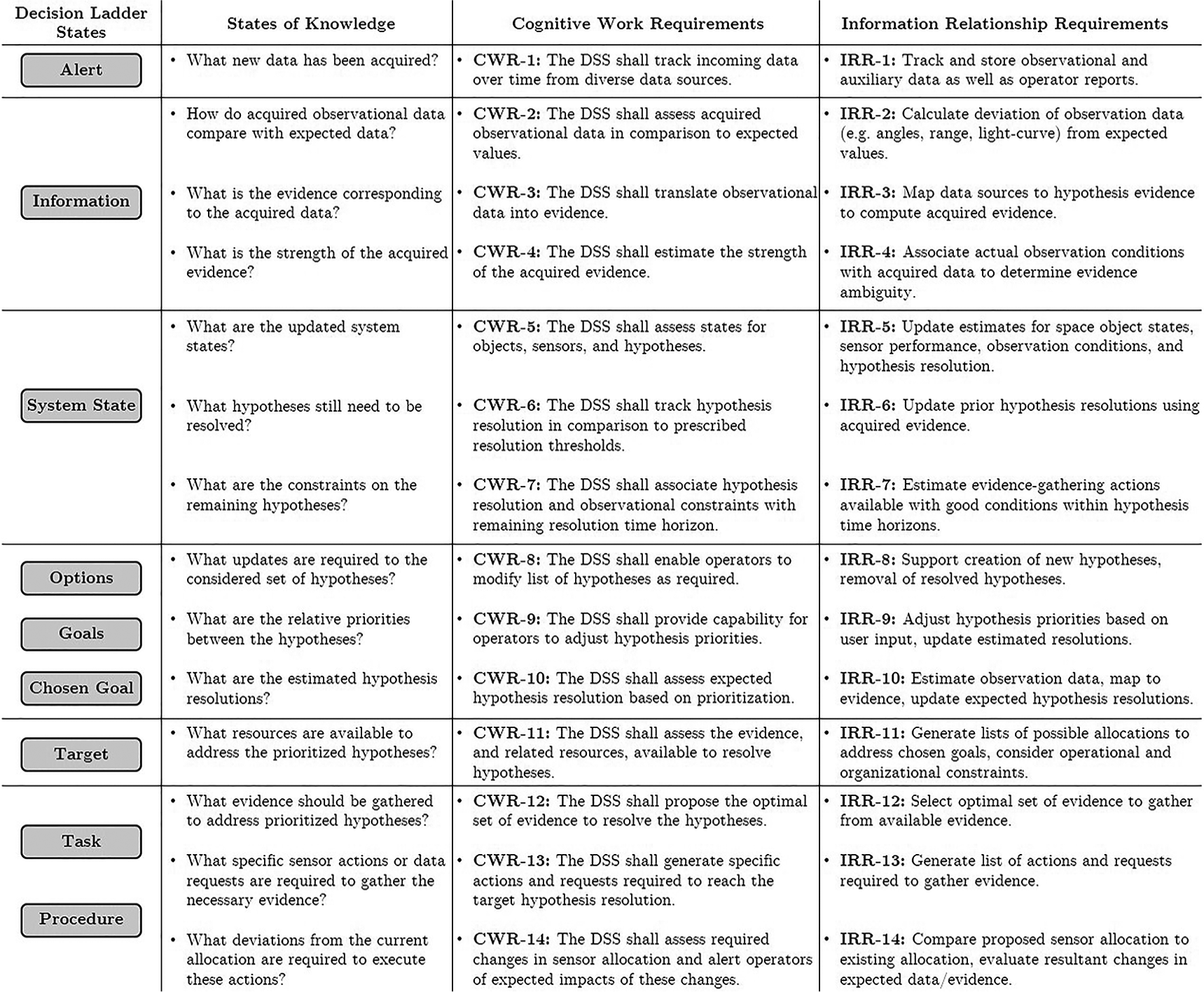

The decision ladder, a popular choice for control task modeling, maps the information processing actions and states of knowledge throughout a control task to model the cognitive processes required to complete the task. It also encourages flexibility and expertise by not adhering to a strict linear, procedure-like approach (Bisantz & Burns, 2008; Jenkins, Stanton, Salmon, & Walker, 2011; Rasmussen, 1986; Vicente, 1999). Beginning in the bottom-left of the ladder, the analysis phase involves ingestion of alerts and observations to identify the system state. The judgment phase, at the top of the ladder, models the selection of a particular target goal through the consideration of options and their consequences. Then, descending toward the bottom-right of the ladder, the planning phase of the task selects actions to execute based on the stated goals, and the task terminates in the execution of that plan.

The control task decision ladders can be leveraged to generate two different types of design requirements. A cognitive work requirement (CWR) specifies cognitive demands, tasks, and decisions that must be supported by the DSS; an information relationship requirement (IRR) specifies the context for required data, which translates that data into the actionable information that the decision-maker requires (Elm, Potter, Gualtieri, Roth, & Easter, 2003; Potter, Gualtieri, & Elm, 2003).

Miller et al. (2017) demonstrate how to translate from the states of knowledge in the decision ladder to CWRs and IRRs. In their study, each state of knowledge generates at least one CWR, and each CWR has a corresponding IRR. A similar approach is followed in this work. For each applicable decision ladder state, states of knowledge articulate questions relevant to the SSA operator. Each state of knowledge generates a CWR outlining some functionality the DSS must provide to address this state of knowledge. Similarly, the CWR generates an IRR more explicitly stating the data products required to generate the necessary information.
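The traceability rule above (each state of knowledge yields at least one CWR, and each CWR has a corresponding IRR) can be checked mechanically once requirements are recorded as data. The sketch below is a hypothetical bookkeeping structure, not part of the cited method. The CWR wording paraphrases the information fusion requirements described in this paper, but the assignment of CWRs to ladder states and all IRR text are illustrative assumptions. CWR-7 is deliberately given an empty IRR and the "Options" state has no CWR, to show what the check reports.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Requirement:
    cwr_id: str
    cwr: str   # cognitive work the DSS must support
    irr: str   # information relationship (data in context) that supports it


@dataclass
class LadderState:
    name: str
    question: str
    requirements: List[Requirement] = field(default_factory=list)


def check_traceability(states: List[LadderState]) -> List[str]:
    """Flag ladder states with no CWR and CWRs with no corresponding IRR."""
    problems = []
    for state in states:
        if not state.requirements:
            problems.append(f"state '{state.name}' has no CWR")
        for req in state.requirements:
            if not req.irr.strip():
                problems.append(f"{req.cwr_id} has no IRR")
    return problems


# Illustrative entries; IRR wording is hypothetical.
states = [
    LadderState("Alert", "Has new data affected any hypothesis?", [
        Requirement("CWR-1", "Alert on new data (successful or missed observations) affecting hypotheses",
                    "New observations linked to the hypotheses they touch"),
    ]),
    LadderState("System state", "Which hypotheses need further evidence?", [
        Requirement("CWR-6", "Identify system states requiring attention and further evidence",
                    "Hypotheses ranked by priority and resolution uncertainty"),
        Requirement("CWR-7", "Show constraints such as line-of-sight opportunities", ""),
    ]),
    LadderState("Options", "Which allocation options exist?"),
]
problems = check_traceability(states)
```

Holding requirements as data rather than prose lets gaps in the CWR-to-IRR mapping surface automatically as the ladder is extended to other WDA functions.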

ConTA Application: Information Fusion and Sensor Allocation

Recalling the SSA work domain abstraction hierarchy in Figure 2, any number of these elements may be identified for further inspection through ConTA. This paper focuses on the generalized functions of information fusion (WDA-D-10) and sensor allocation (WDA-D-11), highlighted in Figure 2, to support SSA decision-making. Information fusion and sensor allocation may be related in one decision ladder, as the information fusion function generally relates to the analysis phase while sensor allocation relates to judgment, planning, and execution.

In addition, the WDA above identified that the formulation and resolution of hypotheses can form a means of maintaining space asset safety and addressing national security concerns. Therefore, this application of ConTA focuses on the abstract function of hypothesis resolution (WDA-D-6) to address these SSA purposes.

The CWRs and IRRs for the information fusion portion of this task (analysis) are shown in the alert, information, and system state portions of Figure 4. The information fusion process begins with alerts generated by any new data (e.g., successful or missed observations) that affects decision-maker hypotheses (CWR-1). These data must be transformed into actionable evidence (CWR-3) that can be applied to specific hypotheses (CWR-5) while considering deviations from expectations (CWR-2). The DSS should then clearly identify which system states require attention and further evidence (CWR-6) as well as any constraints affecting these system states such as line-of-sight opportunities for sensor observation (CWR-7).

Requirements derivation for SSA information fusion and sensor allocation.
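The alert step of the analysis phase (CWR-1, CWR-2) can be sketched as a filter over incoming observations. This sketch assumes that "deviation from expectation" is measured as a scalar normalized residual compared against a 3-sigma gate, and it treats a missed observation as an alert in its own right. The object identifiers, hypothesis labels, gate value, and measurement values are hypothetical. A real system would likely use a multivariate (Mahalanobis) residual instead.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional, Tuple


@dataclass
class Observation:
    object_id: str
    predicted: float            # predicted measurement (hypothetical units)
    sigma: float                # predicted measurement standard deviation
    measured: Optional[float]   # None means the scheduled observation was missed


def generate_alerts(
    observations: List[Observation],
    hypotheses_by_object: Dict[str, List[str]],
    gate: float = 3.0,          # assumed deviation threshold in standard deviations
) -> List[Tuple[str, str, List[str]]]:
    """Return (object, reason, affected hypotheses) for data that should alert the operator."""
    alerts = []
    for ob in observations:
        affected = hypotheses_by_object.get(ob.object_id, [])
        if not affected:        # only data that affects a hypothesis raises an alert
            continue
        if ob.measured is None:
            alerts.append((ob.object_id, "missed observation", affected))
            continue
        z = abs(ob.measured - ob.predicted) / ob.sigma
        if z > gate:
            alerts.append((ob.object_id, f"residual {z:.1f} sigma", affected))
    return alerts


observations = [
    Observation("SAT-X", predicted=10.0, sigma=0.5, measured=10.4),   # within the gate
    Observation("SAT-Y", predicted=3.0, sigma=0.5, measured=None),    # missed observation
    Observation("SAT-Z", predicted=0.0, sigma=1.0, measured=5.0),     # large deviation
    Observation("DEBRIS-1", predicted=1.0, sigma=0.1, measured=9.0),  # no linked hypothesis
]
alerts = generate_alerts(observations, {"SAT-X": ["H1"], "SAT-Y": ["H2"], "SAT-Z": ["H3"]})
```

Linking each alert to the hypotheses it affects, rather than only to the object, is what allows the next steps (CWR-3, CWR-5) to convert the data into evidence for specific hypotheses.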

Sensor allocation picks up from this stage to follow through with judgment, planning, and execution. The CWRs and IRRs for this task are shown in the remaining portions of Figure 4.

SSA judgment is typically an iterative process involving considerations of many allocation options to address overall SSA goals and purposes (CWR-10). Operators must prioritize between newly generated and existing hypotheses (CWR-8, CWR-9). The established priorities define the evidence required to address the selected goals (CWR-11), considering operational and organizational constraints (e.g., requesting data from sensors controlled by other organizations). An optimal course of action must be formulated to gather the necessary evidence (CWR-12, CWR-13) and adjust existing tasking (CWR-14).

In total, this ConTA of the information fusion and sensor allocation functions generated 14 CWRs and 14 corresponding IRRs. Appendix B contains a more detailed derivation of each CWR to provide context for the development of each CWR and IRR.

Development of a Prototype DSS for SSA

The CWA conducted in the previous sections helped derive a number of DSS design insights and requirements that can be leveraged to improve decision support in SSA, especially in relating the information fusion and sensor allocation generalized functions to the domain goals. Recall one of the primary insights: Proposed sensor tasking methods should provide robust and clear mappings to decision-maker goals. This indicates an opportunity for improvement by allocating sensor resources to address specific hypotheses related to these goals. The following sections focus on a subset of the design requirements derived through the ConTA, used in the development and validation of a prototype DSS for SSA. In particular, the design requirements selected for further analysis are those that allow further investigation of how hypothesis resolution supports decision-making in SSA.

Design Requirements Addressed

As discussed previously, many existing methods of sensor tasking in SSA focus on reducing orbit state uncertainty. Although this tasking goal correlates well with some SSA goals (e.g., conjunction analysis where analysts try to avoid collisions between objects), not all SSA questions can easily be mapped to state covariance estimates. Therefore, state uncertainty minimization methods alone do not provide a sufficient basis for tasking to resolve decision-maker hypotheses related to the overarching SSA goals of space asset safety and national security.

To make connections between covariance estimates and other SSA hypotheses, many current and proposed methods require decision makers to do significant knowledge-based reasoning in the judgment phase. Specifically, when evaluating potential courses of action, the decision maker must consider which objects are related to high-priority hypotheses, whether those objects are available for tasking, and how much their state uncertainty can or should be reduced. Recalling Figure 2, this requires reasoning on several different levels of abstraction: state covariances on the physical function level, sensor allocation and information fusion on the generalized function level, and hypotheses and priorities on the abstract function level. These are three very different levels of detail of the SSA problem, and requiring the decision maker to move between them quickly (and especially iteratively, as in the judgment phase) often leads to reduced situation awareness and increased measures of workload (e.g., effort, mental demand, frustration).

Conversely, a DSS that suggests tasking assignments based directly on resolving hypotheses allows the decision maker to remain at the abstract function level, considering trades between priorities for the different hypotheses without having to be directly concerned with sensor allocation or state measurements. It also frees the decision maker to spend more mental effort on formulating hypotheses that directly support the dynamic list of SSA goals. Humans outperform automation at abstract tasks such as prioritization, so this cognitive task is likely a better use of human decision-maker effort.

Based on the results of the CWA, we hypothesize that SSA decision-making, situation awareness, and workload are improved when using a hypothesis-based tasking algorithm as opposed to a covariance-based scheduler. To investigate this claim, a prototype DSS was developed that incorporated a number of the design requirements from the ConTA. The following CWRs are considered in this DSS development:

CWR-3: The DSS shall translate observational data into evidence.

CWR-6: The DSS shall track hypothesis resolution in comparison to prescribed thresholds.

CWR-9: The DSS shall provide capability for operators to adjust hypothesis priorities.

CWR-10: The DSS shall assess expected hypothesis resolution based on current prioritization.

CWR-13: The DSS shall generate specific actions and requests required to reach the target hypothesis resolution.

A primary function of hypothesis-based sensor tasking, applied to SSA, is to analyze candidate tasking schedules to estimate hypothesis resolution based on the current hypothesis prioritization (CWR-10). Proposed approaches, such as JER (Jaunzemis et al., 2018, 2019, 2017), are predicated upon developing mappings from acquired data to evidence, so any evidential reasoning application must satisfactorily address CWR-3. Through the use of hypothesis entropy, quantifying both conflict and ambiguity, evidential reasoning provides a means for tracking hypothesis resolution against prescribed thresholds (CWR-6). In addition, adjusting hypothesis weights allows decision makers to prioritize actions that appropriately address hypotheses (CWR-9). Finally, the end result of a hypothesis-based tasking approach is the list of actions that gather the required evidence to resolve the hypotheses (CWR-13). Therefore, an evidential reasoning approach to sensor tasking, such as JER, appropriately addresses these five CWRs.
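As an illustration of how hypothesis entropy can track resolution against a threshold (CWR-6), the following minimal Python sketch computes Deng entropy, one published Dempster-Shafer entropy measure that combines a conflict-like term with a nonspecificity term. It stands in for the JER entropy, whose exact form is not given in this section, so treat the measure as an assumption; the hypothesis alternatives and the 0.5 threshold are hypothetical.

```python
from math import log2
from typing import Dict, FrozenSet

# A mass function maps focal sets (frozensets of alternatives) to belief mass.
MassFunction = Dict[FrozenSet[str], float]


def deng_entropy(m: MassFunction) -> float:
    """Deng entropy of a Dempster-Shafer mass function.

    H(m) = -sum_A m(A) * log2(m(A) / (2**|A| - 1))
         = [-sum_A m(A) * log2 m(A)]          (conflict-like term)
         + [ sum_A m(A) * log2(2**|A| - 1)]   (nonspecificity term)
    Zero entropy means all mass sits on a single alternative.
    """
    entropy = 0.0
    for focal_set, mass in m.items():
        if mass > 0.0:  # zero-mass focal sets contribute nothing
            entropy -= mass * log2(mass / (2 ** len(focal_set) - 1))
    return entropy


def needs_more_evidence(m: MassFunction, threshold: float) -> bool:
    """Flag a hypothesis whose entropy is still above its resolution threshold."""
    return deng_entropy(m) > threshold


SAFE, COLLISION = "safe pass", "collision"
vacuous = {frozenset({SAFE, COLLISION}): 1.0}                       # no evidence yet
conflicted = {frozenset({SAFE}): 0.5, frozenset({COLLISION}): 0.5}  # evidence disagrees
resolved = {frozenset({SAFE}): 1.0}                                 # fully resolved

for name, m in [("vacuous", vacuous), ("conflicted", conflicted), ("resolved", resolved)]:
    print(name, round(deng_entropy(m), 3), needs_more_evidence(m, threshold=0.5))
```

Both total ignorance (mass on the whole frame) and evenly split, conflicting evidence leave the hypothesis above the threshold; only a committed answer drives the entropy to zero.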

The five selected CWRs also apply to covariance-based tasking; however, in contrast to a hypothesis-based approach, the covariance-based approach supports the selected CWRs on a more limited basis, namely for hypotheses that are readily mapped to orbit state uncertainty. For example, orbit state uncertainties can be translated into collision probabilities (CWR-3), and reductions in orbit state uncertainty naturally help answer collision-related questions (CWR-6). Therefore, targeting high-uncertainty space objects (CWR-9, CWR-10) generates a sensible set of tasking actions (CWR-13). However, as mentioned previously, not all SSA questions are readily linked to orbit state uncertainty, meaning the operator is left to do the abstract reasoning to convert diverse SSA needs into state uncertainty-based target prioritizations. The hypotheses tested in this study include some that are readily linked to orbit state uncertainty (e.g., collision) and some that are not (e.g., propulsion system status) to investigate the decision-making support of each scheduler in diverse conditions.
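To make the covariance-to-collision mapping (CWR-3) concrete, the sketch below estimates a short-encounter, two-dimensional collision probability by integrating the encounter-plane Gaussian over a hard-body circle. This is a standard textbook formulation swapped in for illustration, not the scheduler used in this study; the miss distance, covariances, and hard-body radius are hypothetical values.

```python
from math import cos, exp, pi, sin


def collision_probability_2d(miss_x, miss_y, sigma_x, sigma_y,
                             hard_body_radius, n_r=200, n_theta=200):
    """Short-encounter 2D collision probability (sketch).

    Integrates a Gaussian relative-position density in the encounter plane
    (mean = predicted miss vector, diagonal covariance) over a disk of
    radius `hard_body_radius` centred on the primary, using the midpoint
    rule in polar coordinates. Units only need to be consistent (e.g. m).
    """
    norm = 1.0 / (2.0 * pi * sigma_x * sigma_y)
    dr = hard_body_radius / n_r
    dtheta = 2.0 * pi / n_theta
    total = 0.0
    for i in range(n_r):
        r = (i + 0.5) * dr
        for j in range(n_theta):
            theta = (j + 0.5) * dtheta
            x, y = r * cos(theta), r * sin(theta)
            q = ((x - miss_x) / sigma_x) ** 2 + ((y - miss_y) / sigma_y) ** 2
            total += norm * exp(-0.5 * q) * r  # polar area element is r dr dtheta
    return total * dr * dtheta


# Same 100 m predicted miss, before and after tracking shrinks the covariance.
pc_loose = collision_probability_2d(100.0, 0.0, 50.0, 50.0, hard_body_radius=10.0)
pc_tight = collision_probability_2d(100.0, 0.0, 10.0, 10.0, hard_body_radius=10.0)
print(f"loose covariance Pc = {pc_loose:.2e}, tight covariance Pc = {pc_tight:.2e}")
```

Shrinking the covariance around a 100 m predicted miss drives the collision probability toward zero, which is why covariance reduction maps naturally onto collision hypotheses. The mapping is not monotone in general: when the covariance is large relative to the miss distance, reducing it can raise the computed probability before the answer resolves.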

As covariance-based approaches are commonly proposed as next-generation sensor tasking schemes, the prototype DSS was designed to operate using both hypothesis-based and covariance-based scheduling methods. This demonstrates that the CWA process used to develop the generalized SSA DSS requirements in the previous sections can be applied to both hypothesis-based and covariance-based approaches. The covariance-based scheduler does not map to the CWRs as naturally as the hypothesis-based approach, which was designed directly with the CWRs in mind, but it provides the best comparator to other proposed information-based methods.

Each scheduling approach may also naturally address more of the CWRs than the selected subset, but the analysis is limited to these five design requirements as they can apply to both scheduling approaches and they limit the complexity of the operational task (e.g., not requiring operators to spawn new hypotheses to address CWR-5). The following sections describe the specifics of the prototype DSS design, including the two sensor tasking schedulers and an overview of the functionality of the simulation environment.

Sensor Tasking Schedulers

As presented in the background, two common sensor scheduling approaches are covariance-based and hypothesis-based techniques. In the covariance-based mode, the operator can prioritize specific space objects to increase or reduce the tasking actions taken on a particular object. When a given space object is prioritized, the weighting parameters of all prioritized objects are equalized. In the hypothesis-based mode, the operator can prioritize hypotheses directly to increase or reduce the tasking actions taken against a particular hypothesis. As in the covariance-based mode, when a given hypothesis is prioritized, the weighting parameters of all prioritized hypotheses are equalized. Because each hypothesis may have more than one relevant space object, prioritizing a related hypothesis does not guarantee any observations of a given object.

In both modes, the operator’s assigned priorities may affect the scheduled sensor actions; however, the operator is not able to directly assign any space object or hypothesis to any sensor. Therefore, if the object’s covariance is already sufficiently small or the evidence available is weak, the operator may not be able to override the algorithm.
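The indirect nature of operator control can be illustrated with a hypothetical one-step greedy scheduler, sketched below in Python. Operator weights scale each candidate observation's expected reduction in uncertainty or entropy, and each sensor takes the highest-scoring visible object. The greedy rule, function names, and numbers are assumptions for illustration, not the schedulers used in the prototype.

```python
from typing import Dict, List, Optional, Tuple

Weights = Dict[str, float]                            # prioritized item -> weight
Reduction = Dict[Tuple[str, str], Dict[str, float]]   # (sensor, object) -> {item: expected reduction}


def greedy_schedule(weights: Weights, reduction: Reduction,
                    visibility: Dict[str, List[str]]) -> Dict[str, str]:
    """Hypothetical one-step scheduler: each sensor observes the visible
    object whose observation maximizes the weighted expected reduction in
    the prioritized items (object uncertainty or hypothesis entropy).
    Operators only set `weights`; they never pair sensors with objects."""
    schedule: Dict[str, str] = {}
    for sensor, visible_objects in visibility.items():
        best_obj: Optional[str] = None
        best_score = 0.0
        for obj in visible_objects:
            gains = reduction.get((sensor, obj), {})
            score = sum(weights.get(item, 0.0) * gain for item, gain in gains.items())
            if score > best_score:  # zero-score actions are never scheduled
                best_obj, best_score = obj, score
        if best_obj is not None:
            schedule[sensor] = best_obj
    return schedule


# Hypothesis-based mode: H1 and H2 are prioritized with equal weight.
weights = {"H1": 0.5, "H2": 0.5}
visibility = {"S1": ["obj-A", "obj-B"], "S2": ["obj-B"]}
reduction = {
    ("S1", "obj-A"): {"H1": 0.30},
    ("S1", "obj-B"): {"H1": 0.05, "H2": 0.10},
    ("S2", "obj-B"): {"H2": 0.0},  # S2 has poor observation conditions
}
print(greedy_schedule(weights, reduction, visibility))
```

Because S2's observation is expected to yield no entropy reduction under poor conditions, no operator weight can make this scheduler select it, mirroring the limitation described above. Prioritizing H2 alone moves S1 to obj-B only because obj-B carries H2 evidence.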

Simulation Environment

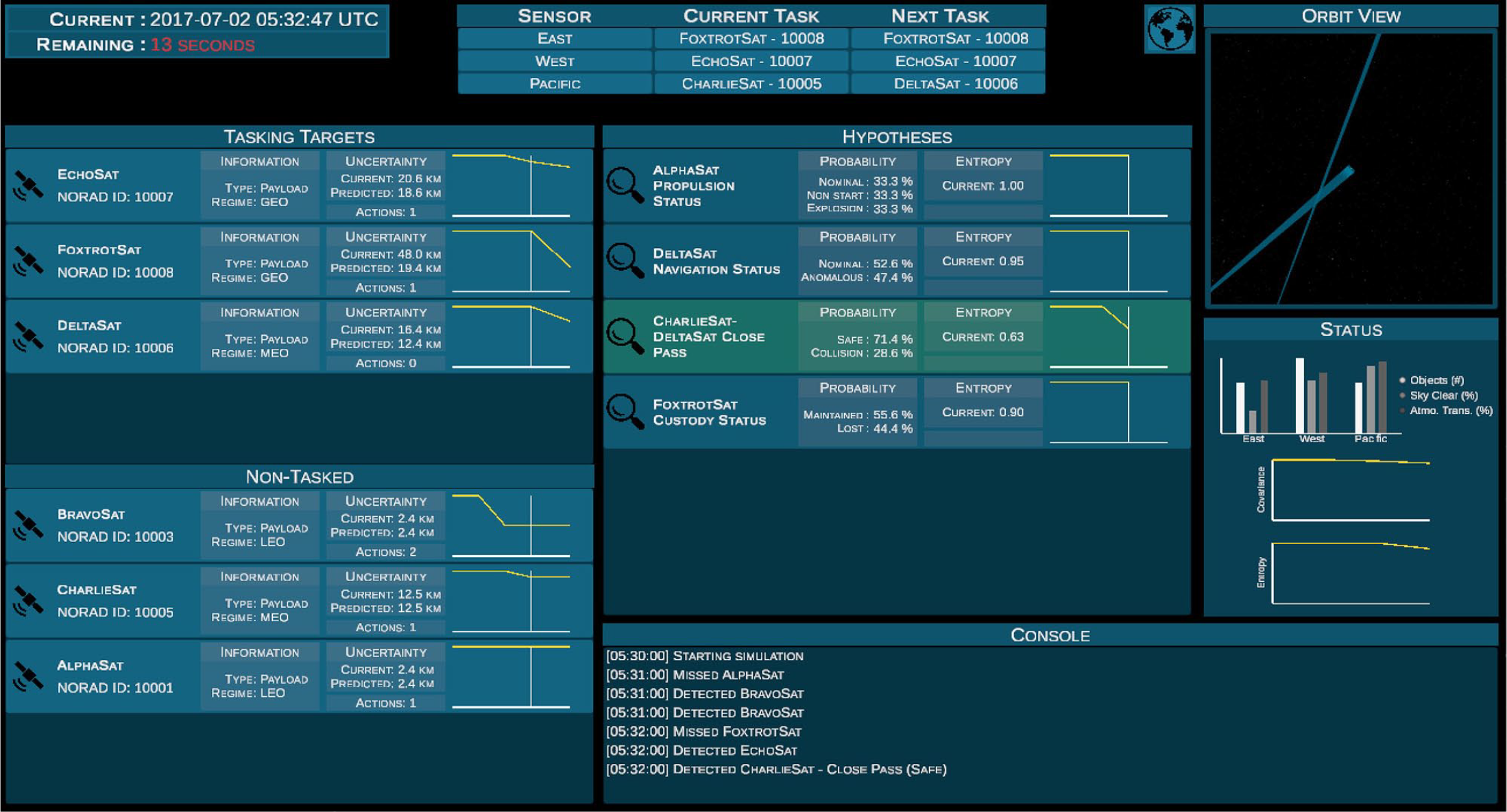

The final prototype SSA DSS is shown in Figures 5 and 6. This prototype DSS can be operated in either a hypothesis-based or covariance-based scheduling mode. The majority of the interface is devoted to displaying relevant space object, hypothesis, and sensor network information. The top-left panel contains information on the current simulation time and the time remaining until the sensor network executes its next planned actions. The top-middle panel contains the current and proposed sensor tasking schedules, based on the operator’s selections in the tasking lists.

Screenshot of the prototype SSA DSS, using the hypothesis-based scheduler.

Screenshot of the prototype SSA DSS, using the covariance-based scheduler.

The tasking panel on the left of both interfaces allows for drag-and-drop prioritization, while also showing current and predicted information on each object or hypothesis. All items in the “tasked” panel receive an equal weighting, while all items in the “nontasked” panel receive a weight of 0. The drag-and-drop mechanic works the same for both scheduler types, differing only in which type of item receives weightings for that particular scheduler. The scheduling logic takes these weightings into account when generating the list of next tasks (shown at the top of the screen), but the operator does not directly assign sensors to targets.
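The panel-to-weight rule can be written as a small function. The sketch assumes the equal weights are normalized to sum to 1, which the text does not specify, and uses hypothetical hypothesis labels.

```python
from typing import Dict, Iterable


def panel_weights(tasked: Iterable[str], nontasked: Iterable[str]) -> Dict[str, float]:
    """Equal weight for every item in the "tasked" panel, zero otherwise.

    Normalizing the equal weights to sum to 1 is an assumption; any common
    positive value gives the same ranking in a weighted-sum scheduler.
    Each item is assumed to sit in exactly one panel.
    """
    tasked = list(tasked)
    weights = {item: 0.0 for item in nontasked}
    if tasked:
        share = 1.0 / len(tasked)
        weights.update({item: share for item in tasked})
    return weights


print(panel_weights(["H1", "H3"], ["H2", "H4"]))
# Dragging H2 into the tasked panel re-equalizes all tasked weights.
print(panel_weights(["H1", "H3", "H2"], ["H4"]))
```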

In the hypothesis-based mode (shown in Figure 5), the operator indicates high-priority hypotheses by moving them into the “tasking targets” section. Each hypothesis element shows current hypothesis resolution and a time-history of entropy since the start of the simulation, as well as predicted entropy from the next proposed tasking cycle. The middle panel (labeled “Space Objects”) shows only the current and historical space object state uncertainty. In the covariance-based mode (shown in Figure 6), the operator indicates high-priority space objects by moving them into the “tasking targets” section. Each space object element shows current, historical, and predicted orbit state uncertainty. The middle panel (labeled “Hypotheses”) shows only the current and historical hypothesis entropy information.

The status panel on the right side of the interface displays quick at-a-glance information on the sensor network, including graphical summaries of the sensor observation conditions, total space object uncertainty, and total hypothesis entropy. Below this panel, on the bottom-right of the interface, is the console that displays read-outs of any attempted actions and resultant gathered evidence.

Human-in-the-Loop Data Collection

The goal of the human-in-the-loop testing was to provide a first evaluation of the prototype DSS, implemented using both hypothesis-based and covariance-based schedulers. As described in the DSS design, the test participant’s only control over the tasking algorithm is through the modification of relative priorities on either space objects or hypotheses. Several subjective and objective measures of performance, situation awareness, workload, and cognitive support were collected to facilitate comparisons of decision support using the different schedulers.

Participants

Prior to this study, a power analysis for repeated-measures analyses of variance (ANOVAs) was performed to determine target sample sizes. To achieve a statistical power (1−β) of .80 and a Type I error rate (α) of .05, assuming conservatively large standard deviations for the unknown sample distributions, this power analysis showed that 49 paired samples would be required for each analysis measure. Twelve students were recruited as participants from the aerospace engineering program at the Georgia Institute of Technology, though only 11 participants completed all sessions. As each participant completed five scenarios with each scheduler and answered each analysis prompt after each scenario, 55 paired samples were collected for each measure. Recruitment was limited to undergraduate and graduate students who had completed, or were currently enrolled in, undergraduate- or graduate-level orbital mechanics. All participants provided written consent for their participation and received pro-rated compensation based on the number of sessions completed.
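For readers who want to see how such a target arises, the sketch below uses the common normal-approximation formula for a paired, two-sided comparison. The effect size assumed in the study is not reported here, so d_z = 0.5 is a hypothetical value and the result is not expected to reproduce the 49-sample figure.

```python
from math import ceil
from statistics import NormalDist


def paired_sample_size(effect_size_dz: float, alpha: float = 0.05, power: float = 0.80) -> int:
    """Normal-approximation number of paired samples for a two-sided test.

    n = ((z_{1 - alpha/2} + z_{power}) / d_z) ** 2, where d_z is the mean
    paired difference divided by the SD of the differences. The exact
    noncentral-t calculation gives a slightly larger n for small samples.
    """
    z = NormalDist()  # standard normal
    z_alpha = z.inv_cdf(1.0 - alpha / 2.0)
    z_power = z.inv_cdf(power)
    return ceil(((z_alpha + z_power) / effect_size_dz) ** 2)


n_required = paired_sample_size(0.5)  # hypothetical medium effect size
n_collected = 11 * 5                  # 11 participants x 5 scenario pairs
print(n_required, n_collected, n_collected >= n_required)
```

Halving the assumed effect size roughly quadruples the required number of pairs, which is why conservative variance assumptions push the target upward.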

Test Sessions

In a 90-min training session, participants were given an abbreviated background on the SSA problem and instructed on the use of the DSS for gathering evidence using sensor observations to answer a set of questions. Each participant was familiarized with the objective of resolving hypotheses and the operation of both scheduling algorithms through practice simulation scenarios. At the end of the session, each participant completed a qualification test scenario to ensure he or she could successfully operate the DSS to gather evidence and answer the questionnaires in a simplified scenario.

Two 60-min data collection sessions were conducted for each participant, one session each using the covariance- and hypothesis-based schedulers. Each participant was reminded that the primary task was to answer questions about the state of the objects being observed (i.e., hypothesis resolution). Each data collection session consisted of five test scenarios, each with slightly different initial conditions (e.g., number of space objects, sensor observation conditions, hypothesis types and resolutions). Each participant performed each scenario with both schedulers, leading to a total of 55 pairwise data points for each dependent variable. To control for learning effects, each participant was randomly assigned the scheduler type for the first day of testing, so that six participants used the covariance-based scheduler on the first test day and five used the hypothesis-based scheduler. In addition, the order of the test scenarios was randomized for each participant and each test day, ensuring the users did not encounter the same scenarios in the same order.
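A counterbalancing plan of this kind can be generated as sketched below. The shuffle-then-split assignment (which yields exactly six covariance-first participants out of eleven) and the rule that re-draws a participant's second-day scenario order until it differs from the first are assumptions about how the randomization could be implemented.

```python
import random
from typing import Dict, Iterable, List, Optional, Tuple

SCENARIOS = ["A", "B", "C", "D", "E"]
Session = Tuple[str, List[str]]  # (scheduler, scenario order)


def assign_sessions(participant_ids: Iterable[int],
                    seed: Optional[int] = None) -> Dict[int, List[Session]]:
    """Counterbalance scheduler order and randomize scenario order per day."""
    rng = random.Random(seed)
    ids = list(participant_ids)
    rng.shuffle(ids)
    n_cov_first = (len(ids) + 1) // 2  # 6 of 11 start with the covariance-based scheduler
    plan: Dict[int, List[Session]] = {}
    for rank, pid in enumerate(ids):
        first, second = (("covariance", "hypothesis") if rank < n_cov_first
                         else ("hypothesis", "covariance"))
        day1 = rng.sample(SCENARIOS, k=len(SCENARIOS))
        day2 = rng.sample(SCENARIOS, k=len(SCENARIOS))
        while day2 == day1:  # never repeat the same order on the second day
            day2 = rng.sample(SCENARIOS, k=len(SCENARIOS))
        plan[pid] = [(first, day1), (second, day2)]
    return plan


plan = assign_sessions(range(1, 12), seed=7)
for pid, sessions in sorted(plan.items()):
    print(pid, sessions)
```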

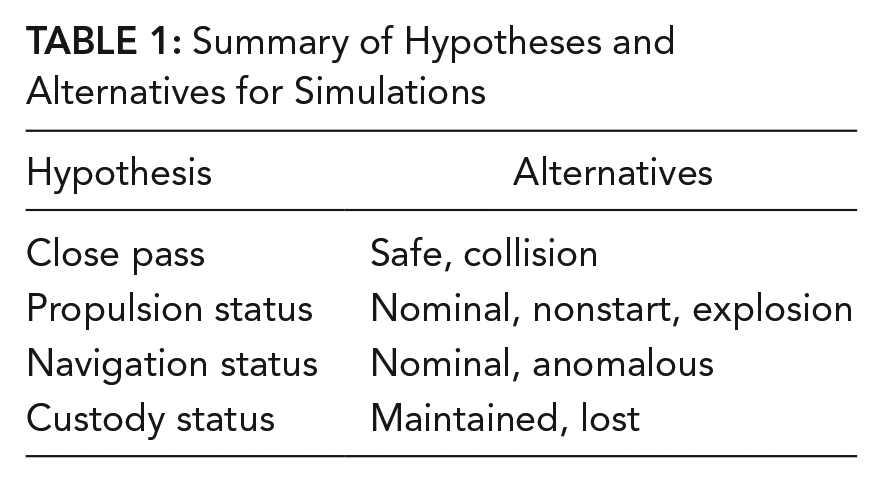

Each test scenario allowed a total of seven sets of actions to be scheduled and executed on the sensor network at 1-min intervals over the 7½-min simulation. Each scenario contained three ground-based sensors with variable observation conditions, where poor observation conditions cause observations to miss and gather no evidence. Each scenario also contained six or seven space objects spanning the LEO, medium earth orbit (MEO), and geostationary earth orbit (GEO) regimes. Finally, each scenario consisted of five or six relevant hypotheses; the types of hypotheses allowed and their respective propositions, or alternatives, are summarized in Table 1. A close pass between two space objects could result either in a safe pass or in a collision, which generates debris and causes missed observations of the expected objects. A propulsion event could have executed nominally or resulted in either a nonstart, which leaves the space object in its premaneuver orbit, or an explosion, which generates debris. The navigation system could execute nominally, leaving the space object in its nominal predicted orbit, or experience an anomaly, leaving the space object in a different orbit. Finally, the custody of a space object could be maintained, resulting in a successful observation, or lost, resulting in missed observations. Specifics for each scenario are detailed in Appendix C. Recall that the gathered evidence is ambiguous in nature: Missed observations can also be caused by poor sensor conditions and therefore do not necessarily indicate anomalous conditions, and successful observations might correspond to debris objects, resulting in spurious nominal detections. Therefore, multiple pieces of evidence must be fused to increase belief in any particular hypothesis resolution.
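The ambiguity of observation-based evidence can be sketched with Dempster-Shafer mass functions for a custody hypothesis. In this hypothetical model, a hit or a miss supports one alternative, Shafer discounting weakens that support when sensor conditions are poor, and Dempster's rule fuses the observations. The support values and reliabilities are invented for illustration and are not the evidence mappings used in the prototype.

```python
from typing import Dict, FrozenSet

Mass = Dict[FrozenSet[str], float]
MAINTAINED, LOST = "custody maintained", "custody lost"
FRAME = frozenset({MAINTAINED, LOST})

# Hypothetical evidence model: how strongly one observation outcome supports
# an alternative under ideal conditions; the remainder stays on the whole frame.
BASE_SUPPORT = {"hit": (MAINTAINED, 0.7), "miss": (LOST, 0.6)}


def observation_to_evidence(outcome: str, sensor_reliability: float) -> Mass:
    """Map an observation outcome to a mass function with Shafer discounting:
    mass on the supported alternative is scaled by the sensor reliability and
    the discounted remainder moves to the frame (i.e. to ignorance)."""
    alternative, support = BASE_SUPPORT[outcome]
    discounted = sensor_reliability * support
    return {frozenset({alternative}): discounted, FRAME: 1.0 - discounted}


def dempster_combine(m1: Mass, m2: Mass) -> Mass:
    """Dempster's rule: multiply masses, keep non-empty intersections, and
    renormalize by 1 - K, where K is the product mass falling on conflict."""
    fused: Mass = {}
    conflict = 0.0
    for a, mass_a in m1.items():
        for b, mass_b in m2.items():
            overlap = a & b
            if overlap:
                fused[overlap] = fused.get(overlap, 0.0) + mass_a * mass_b
            else:
                conflict += mass_a * mass_b
    if conflict >= 1.0:
        raise ValueError("totally conflicting evidence cannot be combined")
    return {s: v / (1.0 - conflict) for s, v in fused.items()}


weak_miss = observation_to_evidence("miss", sensor_reliability=0.2)    # poor conditions
strong_miss = observation_to_evidence("miss", sensor_reliability=0.9)  # good conditions
two_misses = dempster_combine(strong_miss, strong_miss)
print(weak_miss[frozenset({LOST})], two_misses[frozenset({LOST})])
```

A single miss from a poorly conditioned sensor commits only 0.12 of the mass to custody lost, whereas two misses from a well-conditioned sensor commit about 0.79, showing why several pieces of evidence must be fused before a hypothesis resolves. Dempster's rule assumes the combined observations are independent.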

Summary of Hypotheses and Alternatives for Simulations

Independent Variables

The only independent variable for this study was the scheduler type: covariance-based or hypothesis-based. The scheduler types were implemented in the DSS as described previously. In both versions, the user could always see current information such as space object state uncertainty, hypothesis entropy, and sensor conditions. When operating in the covariance-based mode, the DSS also displayed predicted changes in the space object state uncertainty from the planned actions. Conversely, when operating in the hypothesis-based mode, the DSS displayed predicted changes in hypothesis entropy from the planned actions. This allowed the user to reprioritize space objects (in covariance-based mode) or hypotheses (in hypothesis-based mode) to achieve a desired result.

Dependent Variables

Dependent variables included performance, situation awareness, cognitive support, and workload, measured primarily using questionnaires.

Performance

The quality of the user’s ability to gather data to answer questions is best measured through hypothesis entropy, which captures both nonspecificity and conflict in the answer to the relevant question. This metric provides an indication of the hypothesis resolution with respect to prescribed thresholds (CWR-6) based on current object or hypothesis prioritization (CWR-10). The ideal sensor scheduling would lead to full specificity and zero conflict in the resultant answer to the question, represented by zero hypothesis entropy. Therefore, the hypothesis entropy after each set of actions was stored to allow for analysis of the evolution of hypothesis knowledge throughout the scenario. The average entropy at the end of the simulation provides the primary measure that can be used to compare hypothesis-resolution performance between the scheduler types.
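Written out, the performance measure can be expressed as below. The notation is ours: H_i(t_k) is the entropy of hypothesis i after the k-th of the seven action sets, and N_h is the number of hypotheses in the scenario (five or six).

```latex
\bar{H}(t_k) = \frac{1}{N_h} \sum_{i=1}^{N_h} H_i(t_k), \qquad k = 1, \dots, 7,
\qquad
\bar{H}_{\mathrm{end}} = \bar{H}(t_7)
```

The per-step values \bar{H}(t_k) give the within-scenario trajectory, and \bar{H}_{\mathrm{end}} is the single number compared between schedulers for each scenario run.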

Situation awareness

Situation awareness was assessed during each scenario according to Endsley’s (2000) SAGAT (situation awareness global assessment technique) methodology, pausing for an initial questionnaire without warning during the middle of the scenario. Participants were asked 10 questions relating to the scenario and hypotheses before returning to the simulation, indicating the participant’s awareness of how the gathered data are translating to evidence (CWR-3) and affecting current and predicted hypothesis resolution (CWR-6, CWR-10).

The specific prompts ranged from perception-related (Level 1) to comprehension-based (Level 2) questions and had deterministic answers, avoiding rater judgment. For instance, the participant might be asked which space object is related to a Custody Status hypothesis, or which sensor has the worst observation conditions, both of which can be related to perception of specific elements on the display. Alternately, the participant might be asked to compare the hypothesis resolution of a set of hypotheses and report the most- or least-resolved, which is more related to comprehension of the situation based on incoming information.

Projection-based (Level 3) questions were not included in this initial evaluation of the DSS due to additional requirements for participant familiarity with SSA and requisite additional training and evaluation time. Instead, this study focused on evaluating DSS effectiveness in supporting awareness of the immediate situation in both nominal and anomalous events. However, projection of SSA states to the future is also an important aspect of SSA analysis (e.g., collision avoidance predictions) and would be an appropriate improvement for future SSA DSS development studies. This would also allow the inclusion of further CWRs, such as spawning new hypotheses (CWR-8).

After completion of the first stage of SAGAT questions, the simulation resumed until the end of the scenario, at which point 10 different questions were asked. Participants were introduced to each of the potential situation awareness questions during the initial training session.

NASA–task load index (NASA-TLX)

The workload questionnaire is the widely used NASA-TLX (Hart & Staveland, 1988), which assesses the participant’s perceived workload. Per NASA-TLX convention, participant responses are on a 21-point scale, where lower rating responses correspond to lower perceived workload (e.g., 1 = perfect performance). This set of questions was asked at the end of each test scenario.

Cognitive support

The cognitive support questionnaire assesses the participant’s opinion on the ability of each scheduler to support various cognitive objectives derived from the CWA insights and design requirements (CWRs and IRRs). Participant responses to the cognitive support questions are on an 11-point scale, with 1 being not at all effective and 11 being extremely effective. The seven questions asked in this questionnaire were as follows: How effective was this scheduler’s support for . . .

Identifying which questions you still need to answer? (CWR-6)

Proposing sensor tasking assignments that answer relevant questions? (CWR-3, CWR-13)

Ensuring actions gather strong evidence? (CWR-3, CWR-13)

Identifying which questions have high uncertainty (entropy)? (CWR-6)

Allowing modifications of the scheduled tasking assignments as needed? (CWR-9)

Assessing the sensor resources available to answer questions? (CWR-13)

Adapting to sensor observation conditions? (CWR-13)

This set of questions was asked at the end of each test scenario.

Results and Discussion

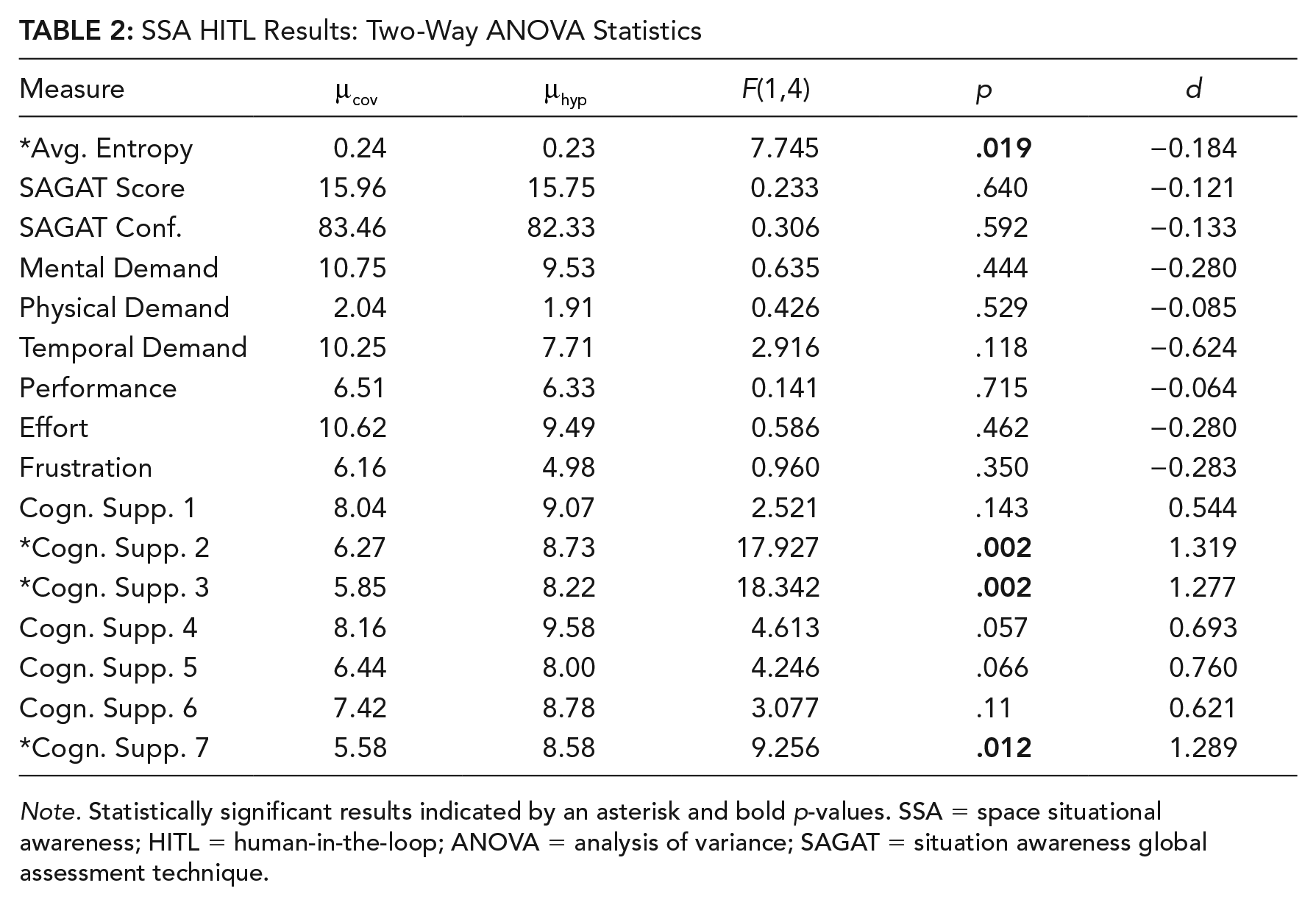

The data obtained from all 11 participants were compiled, and both the objective and subjective measures were statistically analyzed using repeated-measures (or “within-participants”) ANOVAs. The primary factor is the scheduler type: covariance-based or hypothesis-based. Significance was set at a Type I error rate (α) of 5%. In the results below, any statistically significant responses are marked with an asterisk, and the scheduler that provided the better performance or responses is also marked with an asterisk. The additional factor of scenario (A through E) was also analyzed for significance, but as the scenarios were intentionally different in scope and difficulty (see Appendix C), statistically significant differences between the scenarios are not surprising.
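With a single two-level within-participants factor, a repeated-measures ANOVA reduces to a paired comparison in which F(1, n - 1) equals the squared paired t statistic. The sketch below shows that simplified case only: the study's analysis also included the scenario factor, and using Cohen's d_z as the effect size is an assumption, since the effect-size definition behind Table 2 is not reproduced here. The ratings are hypothetical.

```python
from math import sqrt
from statistics import mean, stdev
from typing import Sequence, Tuple


def paired_rm_anova(covariance_scores: Sequence[float],
                    hypothesis_scores: Sequence[float]) -> Tuple[float, int, int, float]:
    """One-factor, two-level repeated-measures ANOVA via paired differences.

    With two within-subject levels, F(1, n - 1) equals the squared paired t
    statistic. Also returns Cohen's d_z = mean(diff) / sd(diff); positive
    values mean the hypothesis-based scores are higher.
    """
    if len(covariance_scores) != len(hypothesis_scores) or len(covariance_scores) < 2:
        raise ValueError("need at least two matched pairs")
    diffs = [h - c for c, h in zip(covariance_scores, hypothesis_scores)]
    n = len(diffs)
    d_bar, s_d = mean(diffs), stdev(diffs)
    if s_d == 0:
        raise ValueError("differences have zero variance")
    t = d_bar / (s_d / sqrt(n))
    return t * t, 1, n - 1, d_bar / s_d


# Hypothetical cognitive-support ratings (1 to 11) for five matched runs.
cov = [5, 6, 4, 7, 5]
hyp = [7, 7, 6, 8, 6]
F, df1, df2, d_z = paired_rm_anova(cov, hyp)
print(f"F({df1}, {df2}) = {F:.2f}, d_z = {d_z:.2f}")
```

If SciPy is available, the corresponding p-value is `scipy.stats.f.sf(F, df1, df2)`. For entropy, where lower is better, a negative d_z under this sign convention favors the hypothesis-based scheduler.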

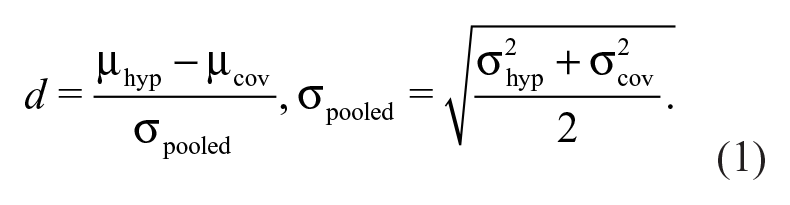

Table 2 contains the results for the scheduler type comparisons, with the significant results indicated by an asterisk. The final column,

Note that a positive effect size

SSA HITL Results: Two-Way ANOVA Statistics

Note. Statistically significant results indicated by an asterisk and bold p-values. SSA = space situational awareness; HITL = human-in-the-loop; ANOVA = analysis of variance; SAGAT = situation awareness global assessment technique.

Performance

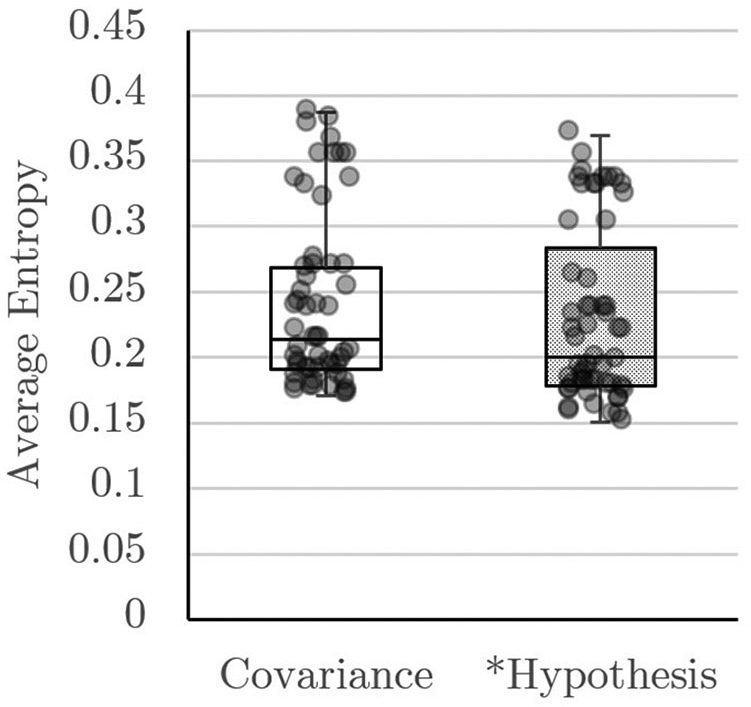

As the operator’s primary goal is to answer questions about the world state, the average entropy value of all hypotheses at the end of each scenario provides an objective measurement of the participant’s performance. Figure 7 summarizes the average entropy results using both the covariance- and hypothesis-based schedulers, including an overlay of each data point. Although trends are hard to establish from this particular figure, the average entropy ANOVA result in Table 2 shows significance,

Average entropy versus scheduler type.

Situation Awareness

Responses to the SAGAT questionnaires provide a measurement of situation awareness. The score component is computed as the number of correct responses, with a maximum of 20 possible for each test case (10 mid-simulation and 10 at the end). Each situation awareness question was accompanied by a slider for the participant to indicate his or her confidence in correctly answering the question. The distributions of SAGAT responses are similar as indicated by the ANOVA results for both score,

NASA-TLX

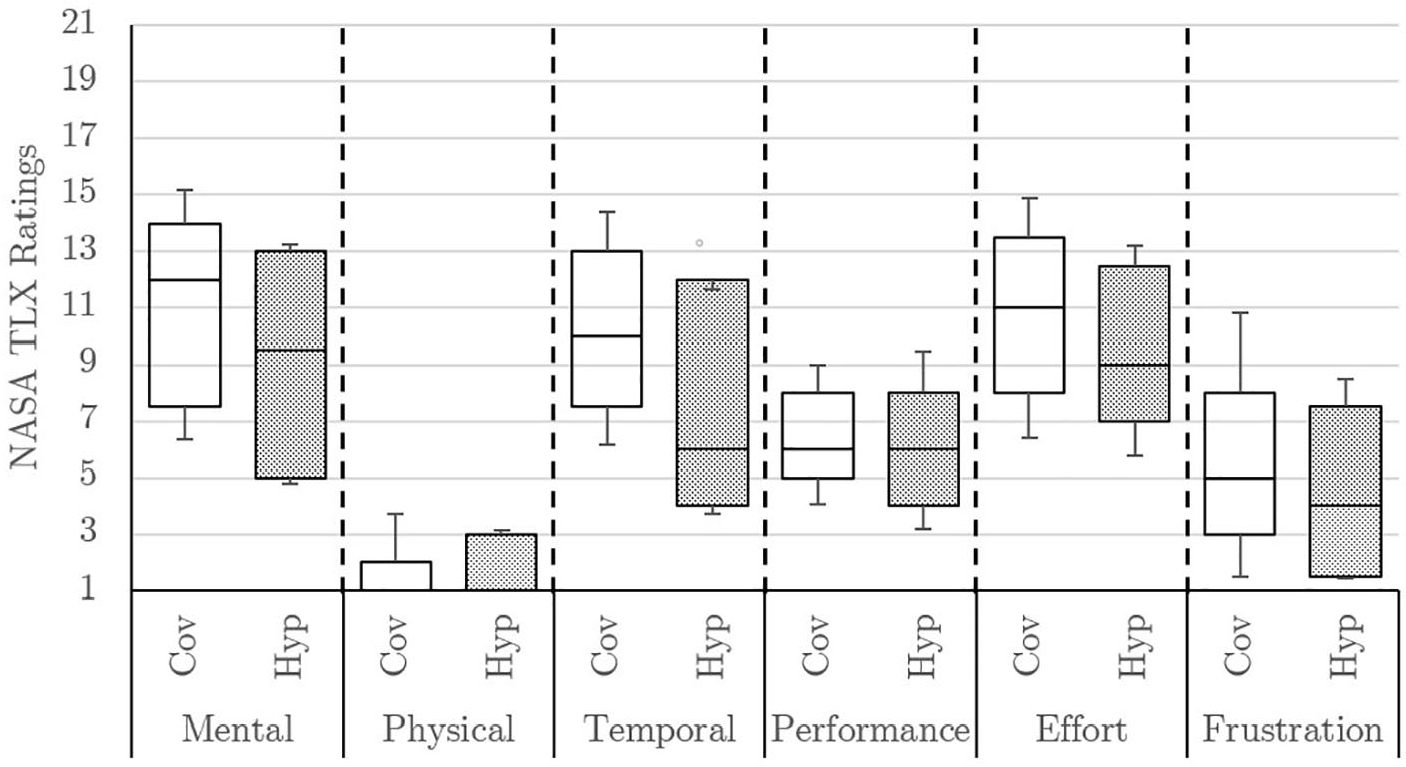

Figure 8 summarizes the responses to the NASA-TLX questionnaires. In these questionnaires, possible scores range from 1 to 21, where lower scores indicate better perceived workload or performance. Although multiple measures appear to indicate improved scores for the hypothesis-based scheduler, none of the measures have statistically significant differences. Temporal demand (

NASA-TLX responses versus scheduler type.

Of note, the least significant result from the NASA-TLX responses is related to the user’s subjective measurement of his or her own performance. Although the objective measurement of performance, average entropy, showed statistical significance, the 1% difference is too small to be readily apparent to the operator. One area of potential improvement for a hypothesis-resolution DSS is to provide more direct feedback on the user’s performance in the task of resolving hypotheses. More thorough indications of the change in entropy or state uncertainty over time, or simpler comparative indicators of the hypothesis-resolution quality, might address this issue.

Cognitive Support

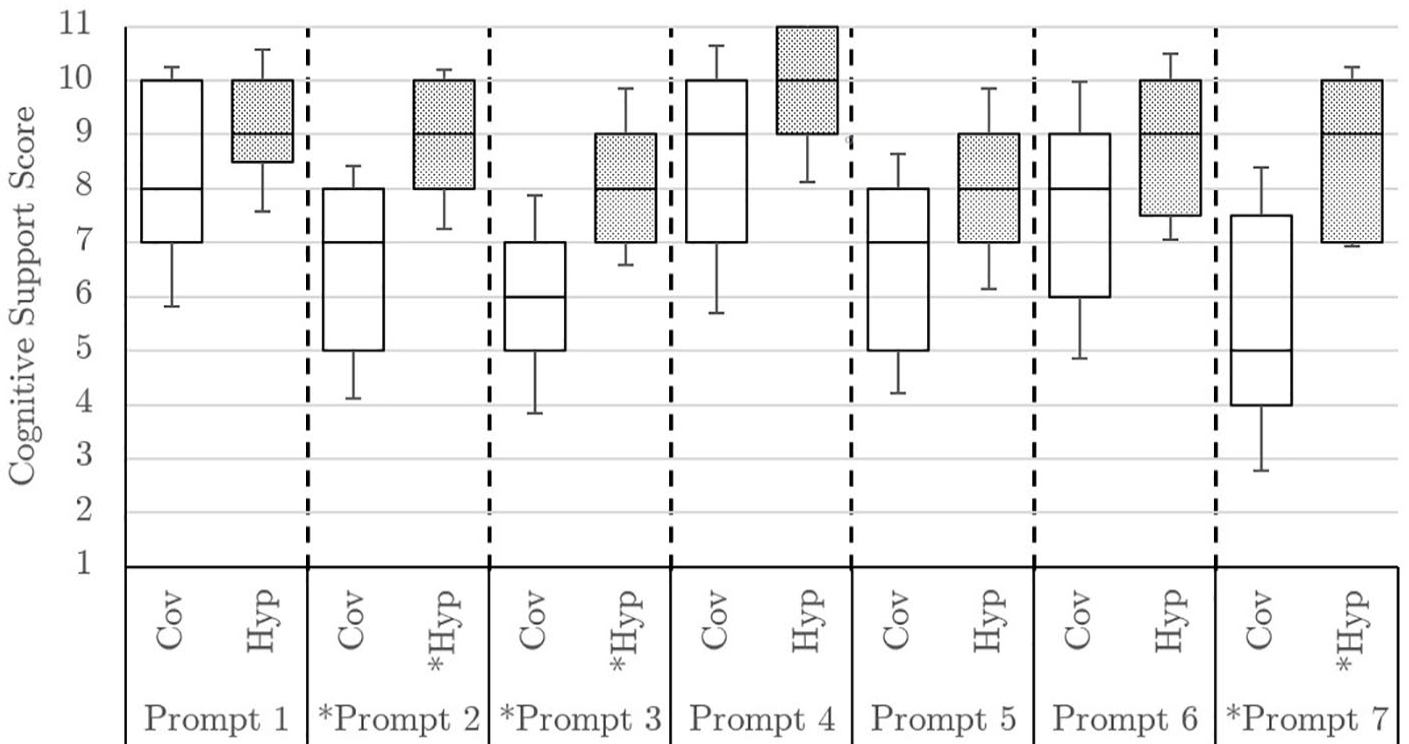

Figure 9 summarizes the responses to the cognitive support questionnaires. In these questionnaires, possible scores range from 1 to 11, where higher scores indicate better perceived cognitive support. The trends can be readily seen in these box-and-whisker plots: The response means are higher for the hypothesis-based scheduler for all cognitive support prompts, and effect sizes greater than 0.5 suggest moderate-to-large systematic effects of the scheduler type. The cognitive support questions were selected to address areas of concern in developing a hypothesis-resolution DSS for SSA. Responses for Prompts 2, 3, and 7 are statistically significant: Users felt strongly that the hypothesis-based scheduler better supported their work to answer relevant questions, ensure actions gather strong evidence, and adapt to sensor observation conditions. These particular prompts map well to strengths in the natural mapping from the CWRs to the hypothesis-based scheduler, such as avoidance of weak evidence from poor observation conditions.

Cognitive support responses versus scheduler type.

Discussion

The human-in-the-loop study provides an initial validation that the prototype DSS derived using the CWA in this paper supports SSA-related decision-making. The prototype DSS developed addresses the selected design requirements by automating the translations from physical data products to evidence (CWR-3) and applying that evidence to update hypothesis knowledge (CWR-6). In addition, when operated using the hypothesis-based scheduler, the prototype DSS allows the operator to directly prioritize hypotheses (CWR-9) and estimates the expected hypothesis resolution (CWR-10) from the generated set of sensor network actions (CWR-13). The covariance-based approach can only directly address CWR-9, CWR-10, or CWR-13 for specific hypotheses that are closely related to orbit state uncertainty, whereas the hypothesis-based approach addresses all five selected design requirements for a diverse set of hypotheses.

Although the prototype DSS was designed to address all five selected design requirements, primarily with hypothesis-based scheduling in mind, comparisons between the different scheduling modes, summarized in Table 2, show that the operators can still perform reasonably well using the covariance-based scheduler. The average entropy difference, though statistically significant, is small in magnitude, and the NASA-TLX questionnaires do not indicate a significant change in participants’ subjective performance.

The individual scenarios, outlined in detail in Appendix C, emphasize different aspects of the prototype DSS and schedulers. For instance, Scenarios C and D feature poor observation conditions, weakening evidence gathered by one of the three sensors, which is indicated both in the sensor network status panel and through the strength of the returned evidence after an observation. The hypothesis-based scheduler accounts for this weakened evidence directly, leading to improved cognitive support responses, but participants still performed reasonably well with both schedulers. Scenarios B and C also feature more space objects, providing more potential tasking targets to complicate the evidence landscape, but participants again diagnosed the scenario quickly using both schedulers and focused observations on the relevant objects and hypotheses.

As an initial evaluation of the DSS prototype, these results show overall similar performance using both the hypothesis-based and covariance-based scheduler. Further studies could be conducted to understand the differences between the support provided by each scheduler type. Specifically, more operationally relevant and complicated scenarios, with broader ranges of available evidence and using practiced SSA operators as participants, could further illuminate these measured differences.

Limitations

Although many steps were taken to ensure these tests are representative of a realistic SSA decision-making operation, the results should be interpreted carefully with appropriate caveats in light of the limited fidelity of laboratory simulation to avoid misleading or invalid conclusions. Each participant was required to have completed at least one semester of undergraduate- or graduate-level orbital mechanics, but operators in SSA decision-making typically receive at least 3 to 6 months of in-depth training specifically on the SSA field. Therefore, the scenarios presented to the test participants were simplified versions of realistic SSA decision-making problems, not intending to represent the full breadth of activities involved in SSA decision-making. Performing well in more difficult and realistic SSA scenarios would require the participants to already be comfortable with the orbital mechanics, sensor phenomenologies, and information fusion intrinsic in SSA operations. Actual SSA operators were not available for recruitment due to the classification-sensitive nature of their jobs. Due to limited available training and testing time, simplified operational scenarios, and laboratory setting, these results are primarily intended to identify trends for the development of more in-depth human-in-the-loop tests closer to operational scenarios.

Perhaps the most significant caveat is the lack of a baseline comparison point for the developed SSA DSS. Although some existing spacecraft systems engineering tools (such as the Systems Tool Kit) can be adapted to help address SSA concerns, existing software for SSA decision-making is custom-built, often classified, and limited in applicability to specific use cases. The field has only recently begun focusing on information fusion and hypothesis resolution as an important aspect of maintaining SSA, so any existing tools are likely in their infancy and are not readily available for academic comparison. Therefore, this display was developed as a prototype applicable to the simplified scenarios developed in this study and refined through pilot testing. The prototype demonstrates how the SSA-specific DSS requirements can be applied to both covariance-based and hypothesis-based scheduling approaches with reasonable performance from both in the simplified scenarios. These results may be considered a starting point for further human-in-the-loop considerations in the development of more mature SSA hypothesis-resolution DSSs, and more specific tests should be performed as these systems are developed and refined to ensure decision-maker support.

Conclusion

This work derives and applies a generalized set of requirements for DSS development in the SSA domain, as a complement to ongoing SSA research into sensor allocation and information fusion approaches. This work presents an analysis of the SSA domain from a cognitive work perspective, beginning with a CWA. The analysis of the work domain highlighted deficiencies in existing approaches to sensor tasking, including a lack of robust and clear mappings from sensor tasking priorities (i.e., catalog maintenance) to overarching domain goals and priorities. By focusing on the information fusion and sensor allocation tasks, a set of 14 CWRs and 14 corresponding IRRs is developed to provide guidance for the development of a DSS.

A subset of these design requirements is used to develop a prototype DSS. The DSS is designed with particular consideration of how sensor network data products are translated into evidence used to update knowledge of the world state and answer SSA questions. The DSS prototype is used in a human-in-the-loop study to assess operator performance, situation awareness, workload, and the cognitive support provided by each scheduling algorithm. Both a hypothesis-based scheduler, developed according to the hypothesis-resolution goals outlined through the CWA, and a covariance-based scheduler, representing a commonly proposed emerging sensor tasking approach in SSA, are implemented in the prototype DSS. As a first validation of this DSS, the human-in-the-loop study results show that operators can perform reasonably well using both scheduling approaches. Because DSSs for hypothesis resolution in SSA are still in their infancy, these results should be used primarily to identify trends that inform more in-depth human-in-the-loop tests closer to operational scenarios.

Appendix A

Appendix B

Appendix C

Andris D. Jaunzemis is a senior technical staff member at Johns Hopkins University’s Applied Physics Laboratory. He earned his PhD in aerospace engineering from the Georgia Institute of Technology in 2018. His current research interests include hardware and algorithm development for miniaturized spacecraft instruments, evidential reasoning, and sensor tasking and decision support for space situational awareness.

Karen M. Feigh is a professor of cognitive engineering at the Georgia Institute of Technology. She earned her PhD in industrial and systems engineering from the Georgia Institute of Technology and her MPhil in aeronautics from Cranfield University in the United Kingdom. Her current research interests include human-automation interaction and using work domain analysis in systems engineering.

Marcus J. Holzinger is an associate professor in the Smead Aerospace Engineering Sciences Department at the University of Colorado Boulder. His research focuses on theoretical and empirical aspects of space domain awareness, in which he has authored or coauthored more than 100 conference and journal papers. He has made fundamental advances in low signal-to-noise ratio detection and tracking, telescope tasking to resolve hypotheses, lightcurve inversion, and reachability theory.

Dev Minotra serves Alberta Health Services (AHS) as a human factors specialist. He consults with various entities in AHS on problems associated with human error and usability. His work focuses on human factors and cognition, and he has conducted research in task management and interruptions. Prior to his appointment at AHS, he was a postdoctoral fellow at the School of Aerospace Engineering at the Georgia Institute of Technology. He earned his PhD in information sciences and technology from The Pennsylvania State University (University Park) in 2012.

Moses W. Chan is a technical fellow at Lockheed Martin. He earned his PhD in electrical and computer engineering from Purdue University. His current research interests include integrating physics-based models in machine learning algorithms for multisensor fusion, multitarget tracking, and space situational awareness.