Abstract

We study the performance of the diffusion LMS (Least-Mean-Square) algorithm for the distributed parameter estimation problem over sensor networks with quantized data and random topology, where the data are quantized before transmission and the links fail at random times. To achieve unbiased estimation of the unknown parameter, we add dither (small noise) to the sensor states before quantization. We first propose a diffusion LMS algorithm with quantized data and random link failures. We then analyze the stability and convergence of the proposed algorithm and derive closed-form expressions for the MSD (Mean-Square Deviation) and EMSE (Excess Mean-Square Error), which characterize its steady-state performance. We show that the convergence of the proposed algorithm is independent of the quantized data and the random topology. Moreover, the analytical results reveal which factors influence the network performance, and we show that quantization is the main cause of performance degradation in the proposed algorithm. Finally, we provide computer simulation results that illustrate the performance of the proposed algorithm and verify the theoretical analysis.

1. Introduction

Wireless sensor networks have recently been studied by researchers in communications, computer technology, signal processing, control, and many other fields [1–3], owing to their many applications [4–7]. In wireless sensor networks, distributed parameter or state estimation is an important research topic. In recent years, distributed adaptive estimation has attracted considerable attention because it is both useful in applications and challenging to analyze. Adaptive networks [8, 9] are a prevailing solution to the distributed adaptive estimation problem. An adaptive network consists of a collection of nodes that observe temporal data arising from different sources with possibly different statistical profiles. The nodes are interconnected and must estimate and infer parameters of interest from noisy measurements in a collaborative manner. The existing strategies that enable learning and adaptation over such networks can be roughly classified into incremental strategies [10–18], consensus strategies [19, 20], and diffusion strategies [8, 9, 21–28]. In the incremental strategy, a cyclic path through the network is first determined, and information is then passed from one node to the next along this path until all nodes have been visited. However, such a cyclic path is difficult to determine in a general network. Consensus and diffusion strategies do not require a cyclic path. For distributed parameter estimation, diffusion strategies outperform consensus strategies. Moreover, when constant step sizes are used to enable continuous learning, diffusion strategies enhance adaptation performance and widen the stability range [29]. For these reasons, we focus on diffusion strategies in this paper.

In wireless sensor networks, communication with unquantized values is impractical because power and bandwidth constraints prevent the exchange of high-precision (analog) data among sensors. Therefore, data usually must be quantized before being exchanged between nodes [30–32]. Moreover, a node that receives data from its neighbors loses some information, because quantization introduces noise into the original data. This makes the problem more challenging than the one considered in previous work [21]. In distributed parameter estimation, quantization has been considered for consensus strategies [32–34], but not for diffusion strategies. Meanwhile, because the wireless environment is complicated, nodes and links may fail. Random network topology is therefore an important characteristic of wireless networks, and it has been considered for diffusion algorithms in [35]. In short, in wireless sensor networks the data must be quantized and the network topology is random.

However, existing work on diffusion algorithms does not consider the effect of quantization (see [21–28] and references therein). Because each sensor node is constrained in power and bandwidth, quantization must be taken into account, and because of the wireless environment, random topology must be taken into account as well. These issues motivate this paper. To the best of our knowledge, this paper presents the first performance analysis of the diffusion LMS algorithm that considers quantization together with random topology. Our objective is to analyze the convergence and steady-state performance of the proposed algorithm and to derive closed-form expressions for the MSD and EMSE that characterize its steady-state performance.

The main contributions are as follows:

Theoretical results are derived for the diffusion LMS algorithm with quantized data and random topology, and models for its transient and steady-state behavior are obtained. Explicit expressions are derived for the variance relation, which contains moments that represent the effects of quantization and random topology. We study the performance of diffusion LMS with quantized data and random topology by deriving closed-form expressions for the Mean-Square Deviation (MSD) and Excess Mean-Square Error (EMSE) for Gaussian data and sufficiently small step sizes; these expressions also describe the steady-state performance at each individual node. We prove the convergence of the dithered quantized diffusion LMS algorithm in networks with random links. Finally, we show that the quantization error is the main cause of performance degradation of the diffusion LMS algorithm.

The remainder of this paper is organized as follows. Section 2 briefly describes the diffusion LMS algorithm without quantized data and with fixed topology. In Section 3, we propose a diffusion LMS algorithm with quantized data and random topology. In Section 4, we analyze the mean-square convergence of the proposed diffusion algorithm. The steady-state performance is analyzed in Section 5. Simulation results and analysis are given in Section 6. Finally, conclusions are drawn in Section 7.

Notation. To distinguish between deterministic and random variables, we use boldface letters for random quantities and normal font for deterministic (nonrandom) quantities. In this paper, all vectors are column vectors, with the exception of the regression vector, which is a row vector. We use

2. Diffusion Algorithm without Quantized Data: Fixed Topology

We consider a network of N nodes distributed over a spatial domain. In a connected network, two nodes are said to be neighbors if they are connected directly by an edge, that is, if they can share information directly. For a network with a fixed topology, we define the neighborhood of node k as the set consisting of the neighbors of node k and node k itself. The neighborhood of node k is denoted by
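The neighborhood definition can be made concrete with a short Python sketch, assuming the topology is stored as a symmetric 0/1 adjacency matrix with zero diagonal. The helper names and the 4-node path graph are hypothetical, and the uniform weights c_{kl} = 1/|N_k| are only one common choice of combination rule, not necessarily the one used in this paper.

```python
import numpy as np

def neighborhoods(adj):
    """Return N_k for every node k: the neighbors of k together with k itself."""
    adj = np.asarray(adj)
    return [sorted(set(np.flatnonzero(adj[k]).tolist()) | {k}) for k in range(adj.shape[0])]

def uniform_weights(nbhd):
    """Hypothetical combination rule: c_{kl} = 1/|N_k| if l is in N_k, else 0 (rows sum to one)."""
    n = len(nbhd)
    C = np.zeros((n, n))
    for k, members in enumerate(nbhd):
        C[k, members] = 1.0 / len(members)
    return C

# Path graph 0 - 1 - 2 - 3 (illustrative only)
adj = np.array([[0, 1, 0, 0],
                [1, 0, 1, 0],
                [0, 1, 0, 1],
                [0, 0, 1, 0]])
nbhd = neighborhoods(adj)
print(nbhd)              # [[0, 1], [0, 1, 2], [1, 2, 3], [2, 3]]
C = uniform_weights(nbhd)
print(C.sum(axis=1))     # every row sums to one
```

Including node k in its own neighborhood is what lets each node keep part of its own estimate when it combines information from its neighbors.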

The objective of the network is to estimate the column vector

In this section, we have briefly described the diffusion LMS algorithm without quantized data and with fixed topology. However, resources (e.g., power and bandwidth) are limited and the topology is random in wireless sensor networks. Therefore, we consider a diffusion LMS algorithm with quantized data and random topology in Section 3.
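One common form of diffusion LMS over a fixed topology is the combine-then-adapt (CTA) recursion phi_{k,i-1} = sum_{l in N_k} c_{kl} w_{l,i-1}, followed by w_{k,i} = phi_{k,i-1} + mu_k u_{k,i}^T (d_k(i) - u_{k,i} phi_{k,i-1}). The Python sketch below implements it under illustrative assumptions: real-valued data following the linear model d_k(i) = u_{k,i} w^o + v_k(i), white Gaussian regressors and noise, and a row-stochastic combination matrix C with uniform weights. The exact recursion, combination rule, and data model of this paper may differ.

```python
import numpy as np

def diffusion_lms_cta(C, w_o, mu, n_iter, sigma_u2, sigma_v2, rng):
    """Combine-then-adapt diffusion LMS over a fixed topology (illustrative sketch).

    C: (N, N) row-stochastic combination matrix, C[k, l] > 0 only for l in N_k.
    w_o: (M,) unknown parameter; mu, sigma_u2, sigma_v2: (N,) per-node values.
    Returns the network MSD, mean_k ||w_o - w_{k,i}||^2, at each iteration.
    """
    N, M = C.shape[0], w_o.size
    W = np.zeros((N, M))                                  # row k holds w_{k,i-1}
    msd = np.empty(n_iter)
    for i in range(n_iter):
        phi = C @ W                                       # combine step
        U = rng.standard_normal((N, M)) * np.sqrt(sigma_u2)[:, None]  # row regressors u_{k,i}
        d = U @ w_o + rng.standard_normal(N) * np.sqrt(sigma_v2)      # d_k(i) = u_{k,i} w_o + v_k(i)
        e = d - np.sum(U * phi, axis=1)                   # e_k(i) = d_k(i) - u_{k,i} phi_{k,i-1}
        W = phi + (mu * e)[:, None] * U                   # adapt step
        msd[i] = np.mean(np.sum((w_o - W) ** 2, axis=1))
    return msd

# Example: 4-node path graph, uniform weights over each neighborhood (illustrative values)
adj = np.array([[0, 1, 0, 0], [1, 0, 1, 0], [0, 1, 0, 1], [0, 0, 1, 0]])
A = adj + np.eye(4)
C = A / A.sum(axis=1, keepdims=True)
rng = np.random.default_rng(0)
msd = diffusion_lms_cta(C, np.array([1.0, -0.5]), np.full(4, 0.02), 2000,
                        np.ones(4), np.full(4, 1e-3), rng)
print(10 * np.log10(msd[-200:].mean()))                   # steady-state network MSD in dB
```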

3. Diffusion LMS Algorithm with Quantized Data: Random Topology

In this section, we consider the effects of quantization and random topology on the diffusion LMS algorithm. We first establish a random topology model and a quantization model and then derive the diffusion LMS algorithm with quantized data and random topology.

3.1. Random Topology Model

In this paper, we consider an undirected graph for simplicity; that is,

Because link failures occur in the network, we assume a normal topology

Since the topology matrix
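As an illustration of what a random topology model can look like, the Python sketch below draws one topology realization from a nominal undirected graph, letting every link fail independently with probability p_fail. This Bernoulli failure model, the uniform weights over the surviving neighborhood, and the function names are assumptions for illustration, not the model defined in this paper. Averaging many draws shows that the expected adjacency is (1 - p_fail) times the nominal one, while every realized combination matrix remains row-stochastic.

```python
import numpy as np

def sample_topology(adj_nominal, p_fail, rng):
    """One realization of a random undirected topology (hypothetical Bernoulli failure model).

    Each link of the nominal graph fails independently with probability p_fail.
    Failures are drawn on the upper triangle and mirrored, so the graph stays undirected.
    """
    n = adj_nominal.shape[0]
    up = np.triu(rng.random((n, n)) >= p_fail, 1)    # True = link survives
    survive = up | up.T
    return (adj_nominal.astype(bool) & survive).astype(int)

def uniform_combination(adj):
    """Row-stochastic weights c_{kl} = 1/|N_k(i)| over the surviving neighborhood, k included."""
    A = adj + np.eye(adj.shape[0], dtype=int)
    return A / A.sum(axis=1, keepdims=True)

rng = np.random.default_rng(1)
adj0 = np.ones((5, 5), dtype=int) - np.eye(5, dtype=int)   # nominal topology: complete graph
draws = np.array([sample_topology(adj0, 0.3, rng) for _ in range(4000)])
mean_adj = draws.mean(axis=0)     # off-diagonal entries should be close to 1 - 0.3 = 0.7
C_i = uniform_combination(draws[-1])
print(np.round(mean_adj, 2))
print(C_i.sum(axis=1))
```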

3.2. Dithered Quantization Model

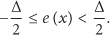

In this paper, we assume that each internode communication channel uses a uniform quantizer with quantization step Δ. To model the communication channel, we introduce the quantizing function,
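The effect of dither can be illustrated with a uniform quantizer of step Δ. The Python sketch below assumes a rounding quantizer without overload and dither drawn uniformly on [-Δ/2, Δ/2), independently of the data; this is the classic non-subtractive dither setting, and the function names are hypothetical. Under these assumptions, for x = Δ(m + f) with integer m and 0 ≤ f < 1, the dithered output equals Δ(m + 1) with probability f and Δm otherwise, so its mean is exactly x and its error variance is Δ²f(1 - f) ≤ Δ²/4. Without dither, the quantization error is a deterministic function of x, so the quantized value is generally biased.

```python
import numpy as np

def quantize(x, delta):
    """Uniform quantizer with step delta (rounds to the nearest multiple of delta; no overload)."""
    return delta * np.round(np.asarray(x, dtype=float) / delta)

def dithered_quantize(x, delta, rng):
    """Add dither uniform on [-delta/2, delta/2), then quantize."""
    x = np.asarray(x, dtype=float)
    nu = rng.uniform(-delta / 2, delta / 2, size=x.shape)
    return quantize(x + nu, delta)

rng = np.random.default_rng(0)
delta, x = 0.5, 0.37
print(quantize(x, delta))                       # plain quantization maps 0.37 to 0.5 (biased)
samples = dithered_quantize(np.full(200_000, x), delta, rng)
print(samples.mean())                           # close to 0.37
# Error variance delta^2 f (1 - f), with f = x/delta - floor(x/delta) = 0.74 here
print(samples.var(), delta**2 * 0.74 * 0.26)
```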

3.3. Dither Quantized Diffusion with Random Topology

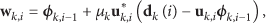

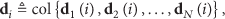

We now return to the formulation of the diffusion LMS algorithm with random topology and quantized data, in which both quantization and random topology are taken into account. To this end, we introduce the

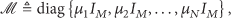

To facilitate the analysis, we introduce the following quantities:

In this section, we derived the diffusion LMS algorithm with quantized data and random topology. In Sections 4 and 5, we analyze the performance of the proposed algorithm.
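Putting the pieces of this section together, one possible form of a diffusion LMS algorithm with dithered quantization and random links can be sketched in Python. The sketch makes several assumptions for illustration: the combine-then-adapt (CTA) ordering, Bernoulli link failures with probability p_fail drawn independently at each iteration, uniform weights over the surviving neighborhood, and a node using its own estimate unquantized while receiving dithered, quantized estimates from its neighbors. It is meant only to show qualitatively how quantization and random links enter the recursion, not to reproduce the paper's exact algorithm. The usage lines compare the steady-state network MSD with and without quantization for illustrative parameter values.

```python
import numpy as np

def quantized_diffusion_lms(adj, w_o, mu, delta, p_fail, n_iter, sigma_u2, sigma_v2, seed=0):
    """CTA diffusion LMS with dithered quantization and random link failures (sketch).

    Hypothetical modelling choices, not taken from the paper:
      - each link of the undirected nominal graph `adj` fails independently
        with probability p_fail at every iteration;
      - node k weights its surviving neighborhood uniformly, c_{kl} = 1/|N_k(i)|;
      - node k keeps its own estimate exact and receives Q(w_l + nu) from each
        surviving neighbor l, with nu uniform on [-delta/2, delta/2);
        delta = 0 switches quantization off.
    Returns the network MSD (average over nodes) at every iteration.
    """
    rng = np.random.default_rng(seed)
    N, M = adj.shape[0], w_o.size
    W = np.zeros((N, M))
    msd = np.empty(n_iter)
    for i in range(n_iter):
        # 1) random topology: symmetric Bernoulli link failures
        up = np.triu(rng.random((N, N)) >= p_fail, 1)
        A = (adj.astype(bool) & (up | up.T)).astype(float)
        C = (A + np.eye(N)) / (A.sum(axis=1, keepdims=True) + 1.0)
        # 2) dithered quantization of the estimates each node broadcasts
        if delta > 0:
            nu = rng.uniform(-delta / 2, delta / 2, size=W.shape)
            Wq = delta * np.round((W + nu) / delta)
        else:
            Wq = W
        # 3) combine: own estimate exact, neighbors' estimates quantized
        c_self = np.diag(C)
        phi = c_self[:, None] * W + (C - np.diag(c_self)) @ Wq
        # 4) adapt with fresh data d_k(i) = u_{k,i} w_o + v_k(i)
        U = rng.standard_normal((N, M)) * np.sqrt(sigma_u2)[:, None]
        d = U @ w_o + rng.standard_normal(N) * np.sqrt(sigma_v2)
        e = d - np.sum(U * phi, axis=1)
        W = phi + (mu * e)[:, None] * U
        msd[i] = np.mean(np.sum((w_o - W) ** 2, axis=1))
    return msd

adj = np.array([[0, 1, 1, 0, 0], [1, 0, 1, 1, 0], [1, 1, 0, 1, 1],
                [0, 1, 1, 0, 1], [0, 0, 1, 1, 0]])
N = adj.shape[0]
common = dict(w_o=np.array([1.0, -0.5]), mu=np.full(N, 0.02), p_fail=0.2,
              n_iter=3000, sigma_u2=np.ones(N), sigma_v2=np.full(N, 1e-3))
msd_q = quantized_diffusion_lms(adj, delta=0.1, **common)
msd_0 = quantized_diffusion_lms(adj, delta=0.0, **common)
for name, m in (("quantized", msd_q), ("unquantized", msd_0)):
    print(name, 10 * np.log10(m[-500:].mean()), "dB")
```

In this toy setting the quantized run settles at a noticeably higher MSD than the unquantized one, which is qualitatively in line with the observation that quantization is the dominant source of degradation.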

4. Mean-Square Convergence Analysis

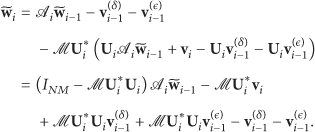

It is well known that analyzing the performance of a single stand-alone adaptive filter is a challenging task. Here we face a network of adaptive nodes that influence each other's behavior, so the performance analysis is more complicated than that of a single adaptive filter. To proceed with the analysis, we introduce the weight error vector:

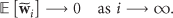

Theorem 1.

Assume that data model (27) and the regression data

Proof.

It follows from (33) that the weight error vector converges in the mean to zero if and only if the matrix
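A standard way to check mean stability numerically is to form the matrix B that propagates the mean weight error and verify that its spectral radius is below one. The Python sketch below assumes the CTA mean recursion E[w~_i] = (I - D R)(C̄ ⊗ I_M) E[w~_{i-1}], where D = diag{mu_k I_M}, R = blockdiag{R_{u,k}}, and C̄ is a nonnegative row-stochastic (expected) combination matrix; it also assumes that dithered quantization noise is zero-mean and therefore drops out of the mean recursion. Under these assumptions, the block maximum norm gives ρ(B) ≤ max_k ρ(I - mu_k R_{u,k}), so 0 < mu_k < 2/λ_max(R_{u,k}) suffices whatever C̄ is. This is consistent with the claim that mean convergence does not depend on the quantized data or the random topology. The covariances and step sizes are randomly generated for illustration.

```python
import numpy as np

def mean_error_matrix(C, R_list, mu):
    """B = (I - D R)(C kron I_M) for an assumed CTA mean recursion E[w~_i] = B E[w~_{i-1}]."""
    N, M = len(R_list), R_list[0].shape[0]
    DR = np.zeros((N * M, N * M))
    for k, R_k in enumerate(R_list):
        DR[k * M:(k + 1) * M, k * M:(k + 1) * M] = mu[k] * R_k   # block-diagonal mu_k R_{u,k}
    return (np.eye(N * M) - DR) @ np.kron(C, np.eye(M))

def spectral_radius(B):
    return np.max(np.abs(np.linalg.eigvals(B)))

rng = np.random.default_rng(3)
N, M = 4, 3
R_list = []
for _ in range(N):
    X = rng.standard_normal((M, M))
    R_list.append(X @ X.T + 0.1 * np.eye(M))                       # random SPD covariances
mu = np.array([1.0 / np.linalg.eigvalsh(R).max() for R in R_list])  # inside (0, 2/lambda_max)
bound = max(np.abs(1 - m * np.linalg.eigvalsh(R)).max() for m, R in zip(mu, R_list))

radii = []
for _ in range(20):                    # many row-stochastic C, e.g. different link statistics
    C = rng.random((N, N))
    C /= C.sum(axis=1, keepdims=True)
    radii.append(spectral_radius(mean_error_matrix(C, R_list, mu)))
print(max(radii), "<=", bound, "< 1")

mu_bad = mu.copy()
mu_bad[0] = 2.5 / np.linalg.eigvalsh(R_list[0]).max()              # violates mu_0 < 2/lambda_max
print(spectral_radius(mean_error_matrix(np.eye(N), R_list, mu_bad)))  # > 1 without cooperation
```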

5. Steady-State Performance Analysis

5.1. Variance Relations

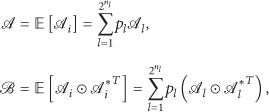

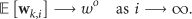

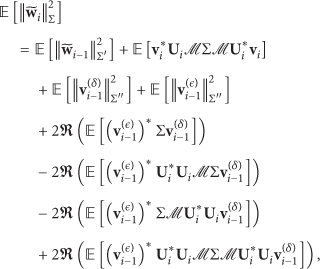

To study the mean-square performance of the diffusion LMS algorithm with quantized data and random topology, we introduce the variance relation of the weight error vectors
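Variance relations of this type usually involve a weighting matrix that is updated through products such as Σ' = B^T Σ B plus data-dependent terms. A common step, assumed here rather than taken from the paper, is to vectorize with vec(XΣY) = (Y^T ⊗ X) vec(Σ), which turns the matrix update into a linear recursion σ' = Fσ on σ = vec(Σ). The Python sketch checks this for the simplified update Σ' = B^T Σ B with a random illustrative B, and also checks that ρ(F) = ρ(B)², so for this term the variance recursion is stable whenever the mean recursion is.

```python
import numpy as np

def vec(X):
    """Stack the columns of X into a single vector (column-major order)."""
    return X.reshape(-1, order="F")

def rho(A):
    """Spectral radius."""
    return np.max(np.abs(np.linalg.eigvals(A)))

rng = np.random.default_rng(4)
M = 3
B = 0.5 * rng.standard_normal((M, M))   # stands in for a mean-error matrix (illustrative)
Sigma = np.eye(M)                       # initial weighting matrix

F = np.kron(B.T, B.T)                   # vec(B^T Sigma B) = (B^T kron B^T) vec(Sigma)
sigma = vec(Sigma)
for _ in range(5):
    Sigma = B.T @ Sigma @ B             # matrix form of the weighting update
    sigma = F @ sigma                   # equivalent linear recursion on sigma = vec(Sigma)
print(np.allclose(vec(Sigma), sigma))   # True
print(np.isclose(rho(F), rho(B) ** 2))  # True: eigenvalues of B^T kron B^T are lambda_i * lambda_j
```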

5.2. Gaussian Data

To evaluate the mean-square performance of each individual node by using the above variance relation, we need to calculate the data moments. In particular, the last terms in (42) and (43) are difficult to calculate in closed form for an arbitrary data distribution, so we assume that the regressors arise from zero-mean circular Gaussian sources [36]. We define the transformed quantities as follows:
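The kind of moment that is hard to handle for arbitrary data is a fourth-order term of the form E[u^T u Σ u^T u]. For zero-mean real Gaussian regressors with covariance R and symmetric Σ, Isserlis' theorem gives E[u^T u Σ u^T u] = 2RΣR + R Tr(ΣR); for circular complex Gaussian regressors the factor 2 becomes 1. The Python sketch below checks the real-valued version by Monte Carlo. Whether exactly these terms appear in (42) and (43) is an assumption, and R and Σ are random illustrative matrices.

```python
import numpy as np

rng = np.random.default_rng(5)
M, n = 3, 500_000
L = rng.standard_normal((M, M))
R = L @ L.T / M + 0.5 * np.eye(M)          # regressor covariance (illustrative)
S = rng.standard_normal((M, M))
Sigma = S @ S.T / M + 0.1 * np.eye(M)      # symmetric positive-definite weighting matrix

# Row regressors u with u^T ~ N(0, R), real-valued and zero-mean
X = rng.multivariate_normal(np.zeros(M), R, size=n)
q = np.einsum("ni,ij,nj->n", X, Sigma, X)  # u Sigma u^T for every sample
empirical = (X * q[:, None]).T @ X / n     # sample mean of u^T u Sigma u^T u
closed_form = 2 * R @ Sigma @ R + R * np.trace(Sigma @ R)
rel_err = np.linalg.norm(empirical - closed_form) / np.linalg.norm(closed_form)
print(rel_err)                             # small: Monte Carlo error only
```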

For Gaussian data sources, expression (45) can be rewritten as the following recursion:

5.3. Steady-State Performance

We now analyze the steady-state performance of the diffusion LMS algorithm with quantized data and random topology. We consider the steady-state quantities MSD and EMSE, which are defined as

To analyze the performance of each individual node, we introduce the following quantities:
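Steady-state MSD and EMSE are commonly defined per node as MSD_k = lim E||w~_{k,i-1}||² and EMSE_k = lim E|u_{k,i} w~_{k,i-1}|², with network values taken as averages over the N nodes. Assuming these definitions (the paper's exact definitions may differ, for example in which intermediate estimate is used), the Python sketch below estimates them from simulated trajectories by averaging over independent runs and over a final window of iterations. The array layout and the function name `steady_state_metrics` are hypothetical. The usage part feeds it synthetic errors and regressors for which both quantities equal M·s2.

```python
import numpy as np

def steady_state_metrics(err, U, window):
    """Per-node and network steady-state MSD and EMSE in dB (sketch).

    err: (runs, T, N, M) weight errors w~_{k,i-1} = w_o - w_{k,i-1}
    U:   (runs, T, N, M) regressors u_{k,i} used at the same time steps
    window: number of final iterations treated as steady state
    """
    def _db(v):
        return 10 * np.log10(v)

    e_w, u = err[:, -window:], U[:, -window:]
    msd_k = np.mean(np.sum(e_w ** 2, axis=-1), axis=(0, 1))        # E||w~_k||^2 per node
    emse_k = np.mean(np.sum(u * e_w, axis=-1) ** 2, axis=(0, 1))   # E|u_k w~_k|^2 per node
    return _db(msd_k), _db(emse_k), _db(msd_k.mean()), _db(emse_k.mean())

# Synthetic check: i.i.d. errors with variance s2 per entry and unit-variance white
# regressors independent of the errors, so MSD_k and EMSE_k should both equal M * s2.
rng = np.random.default_rng(6)
runs, T, N, M, s2 = 50, 500, 4, 2, 1e-3
err = rng.standard_normal((runs, T, N, M)) * np.sqrt(s2)
U = rng.standard_normal((runs, T, N, M))
msd_db, emse_db, net_msd_db, net_emse_db = steady_state_metrics(err, U, window=400)
print(msd_db, emse_db, 10 * np.log10(M * s2))
```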

6. Simulation and Analysis

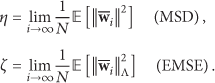

In this section, we compare the theoretical expressions with computer simulation results. To this end, we consider a sensor network with

Network topology.

Statistical profiles for simulation.

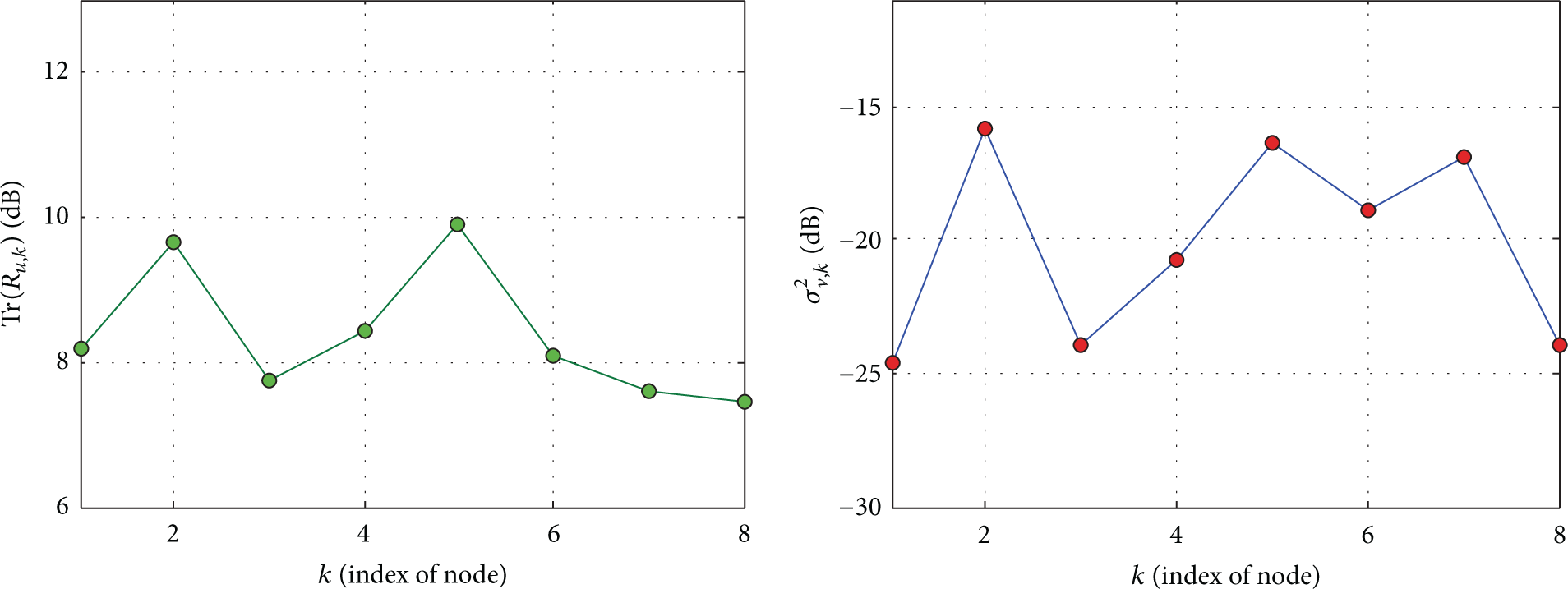

The global MSD and EMSE curves of the diffusion LMS algorithm with static topology and unquantized data, and of the diffusion LMS algorithm with random topology and quantized data, are shown in Figures 3 and 4; the curves are obtained by averaging

Global MSD curve for diffusion LMS algorithm.

Global EMSE curve for diffusion LMS algorithm.

The local MSD and EMSE curves of the diffusion LMS algorithm, which show the steady-state performance at each individual node, are shown in Figures 5 and 6. As these figures show, the closed-form expressions match the simulation results very well.
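The methodology of averaging learning curves over independent runs and comparing the steady state with a closed-form value can be illustrated on the simplest special case: a single node with no cooperation and no quantization, i.e., stand-alone LMS. The Python sketch below assumes real white Gaussian regressors with variance s_u2 that are i.i.d. over time, for which the steady-state MSD of LMS is known in closed form as mu·s_v2·M / (2 - mu·s_u2·(M + 2)). This is not the paper's network expression, and all parameter values are illustrative.

```python
import numpy as np

rng = np.random.default_rng(7)
runs, T, M = 200, 2000, 4
mu, s_u2, s_v2 = 0.01, 1.0, 1e-2
w_o = rng.standard_normal(M)

W = np.zeros((runs, M))                 # one independent LMS filter per run
curve = np.empty(T)
for i in range(T):
    U = rng.standard_normal((runs, M)) * np.sqrt(s_u2)      # white Gaussian regressors
    d = U @ w_o + rng.standard_normal(runs) * np.sqrt(s_v2)
    e = d - np.sum(U * W, axis=1)
    W = W + mu * e[:, None] * U
    curve[i] = np.mean(np.sum((w_o - W) ** 2, axis=1))      # ensemble-averaged MSD at time i

sim_db = 10 * np.log10(curve[-1000:].mean())                # time-average over the steady state
theory_db = 10 * np.log10(mu * s_v2 * M / (2 - mu * s_u2 * (M + 2)))
print(sim_db, theory_db)
```

Averaging over runs produces the learning curve, and additionally averaging over the final iterations reduces the variance of the steady-state estimate before it is compared with theory.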

Local MSD curve for diffusion LMS algorithm.

Local EMSE curve for diffusion LMS algorithm.

7. Conclusion

In this paper, we studied the distributed estimation problem in wireless sensor networks with quantized data and random topology using the diffusion LMS (Least-Mean-Square) algorithm. First, we established a weighted spatial-temporal energy conservation relation. We then analyzed the mean stability of the diffusion LMS algorithm with quantized data and random topology; the analysis shows that mean stability is independent of the quantized data and the random topology. We also derived a variance relation, and closed-form expressions describing the steady-state performance in terms of the MSD (Mean-Square Deviation) and EMSE (Excess Mean-Square Error) were obtained. The results show that quantization is the main cause of performance degradation of the diffusion LMS algorithm with quantized data and random topology. Finally, the simulation results show good agreement with the closed-form expressions.


Appendix

Competing Interests

The authors declare that they have no competing interests.

Acknowledgments

This work was partially supported by the National Basic Research Program of China (973 Program) under Grant no. 2013CB329102, by the National Science and Technology Major Project under Grant no. 2015ZX03003002-002, by the National Natural Science Foundation of China (NSFC) under Grant nos. 61372112, 61232017, 61522103, 61370221, and U1404611, and in part by the Program for Science & Technology Innovation Talents in the University of Henan Province under Grant no. 16HASTIT035.