Abstract

To meet the demand for efficient computing services in big data scenarios, a cloud edge collaborative computing allocation strategy based on deep reinforcement learning is proposed that draws on the powerful computing capabilities of the cloud. First, taking computing resources, bandwidth, and migration decisions into account, an optimization problem is constructed that minimizes the weighted sum of the execution delay and energy consumption of all user tasks. Second, a dynamic offloading scheduling algorithm based on

Keywords

Introduction

Mobile cloud computing migrates the complex computing tasks of mobile devices over mobile networks, offloading them to cloud data centers with powerful computing and storage capabilities to meet the needs of complex emerging mobile applications.1 However, the mobile cloud computing architecture has limitations: the cloud data center is far from mobile terminal devices, and a task must pass through the access network and the backhaul link before it reaches the cloud.2–4 This leads to excessively long service delays in mobile cloud computing and makes it impossible to achieve the 5G design goal of providing users with millisecond end-to-end latency.5 In addition, the centralized mobile cloud computing architecture weakens the network's resistance to risks and attacks. For example, mobile cloud computing cannot meet the low-latency and high-reliability requirements of emerging applications such as Internet of Things (IoT), Industry 4.0, and telemedicine.6–9 Therefore, it is necessary to design a new computing service architecture in the mobile network to support the deployment and development of new mobile applications.10

By sinking the computing and storage resources of the cloud to the edge of the network, Mobile Edge Computing (MEC) provides services to mobile devices close to the edge network.11–14 MEC can improve network data processing throughput, achieve low-latency and high-reliability data processing services, and solve the dilemma faced by mobile cloud computing.15–18

In the MEC environment, to better satisfy users’ Quality of Service (QoS) requests and improve the quality of user experience, how to efficiently compute and allocate IoT resources is a widely studied hot issue.19,20 Reference 21 proposed to optimize the energy consumption of edge computing networks under delay constraints. It formulated the problem as a mixed-integer programming problem, further transformed it into an integer programming problem, and solved it with a dynamic programming algorithm, verifying its superiority compared with the branch and bound method. Reference 22 designed a delay-sensitive microservice deployment system, which involves communication delay, calculation delay, and data migration delay. The shortest path algorithm and

When the number of tasks is huge, existing approaches are limited by edge node resources and do not consider cloud edge collaboration, so they often struggle to meet the high-efficiency data processing requirements of big data scenarios. This article therefore proposes a cloud edge collaborative computing allocation strategy based on deep reinforcement learning. When making migration decisions, the proposed algorithm fully considers the resource requirements of mobile terminal applications and the remaining resources of the MEC server. It migrates each task to the most suitable computing resource wherever possible, so as to balance the resource utilization of the MEC server and deploy more virtual machines to perform tasks.

System modeling

Network model

The edge computing load balancing problem is first considered in the data analysis application scenario of IoT devices in a large-scale cloud edge collaborative network. The architecture of this network is shown in Figure 1. The huge amount of data generated by the underlying IoT devices is transmitted and processed over wireless and wired networks, and finally converges at the cloud data center for big data analysis and processing. Large-scale intelligent networks, such as smart homes and smart cities, need to analyze the data collected by IoT device nodes to complete the corresponding intelligent processing. The centralized processing solution of the traditional cloud data center cannot meet existing big data processing needs: the massive amount of data generated by the huge number of IoT devices poses a huge challenge to the transmission capacity of the backhaul link and the processing capacity of the core network. Thus, on one hand, it is necessary to take advantage of the proximity of edge nodes to meet the low-latency requirements of IoT applications. On the other hand, collaboration between edge nodes and cloud data centers is needed to cope with the arrival of intensive data and spread the pressure of data processing, which ensures the normal operation of the network.

Figure 1. Large-scale edge cloud collaborative network architecture.

Figure 1 shows the system model of the problem being studied. The system is a three-tier architecture (i.e. it includes three types of nodes), from bottom to top: IoT devices, edge nodes, and cloud data centers. The underlying IoT devices generate a large number of tasks that require big data analysis and processing. These tasks are transmitted to the corresponding edge server nodes via wireless links, and then converge upward to the uppermost cloud data center node for centralized big data analysis and processing. The edge server nodes in the middle (such as base stations, Wi-Fi access points, switches, routers, and gateways) are connected by wires to form an edge cloud. Edge nodes have abundant computing resources. When transmitting the data generated by the underlying IoT devices, they can use their own computing resources to preprocess the data and transmit the preprocessing results to the cloud data center. In the process of data processing and transmission, multiple edge nodes can process and transmit data in a collaborative manner.

The set of cloud data centers and edge nodes in the network is

Calculation model

Based on the definition of network model in the previous section, suppose

where

Each edge node updates and maintains the above-mentioned task table synchronously within the edge cloud. Synchronization only requires that, after an edge node makes a task migration decision, it updates the table information and broadcasts it to all edge nodes in the same edge cloud. Therefore, the total task set

The total task set

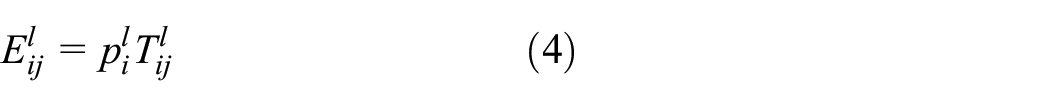

Local execution

When the local calculation time is less than the maximum allowable delay of user

where

where

Combined with the local calculation delay formula and corresponding energy consumption formula, the total cost of local calculation can be expressed as

where

Migration execution

When the local calculation time of user task

Because each user migrates to a different edge node with different uplink and downlink rates, suppose the uplink rate of task

where

Assuming that the downlink and uplink have the same channel environment and noise, the downlink rate can be expressed as

According to the above steps, the transmission delay of user

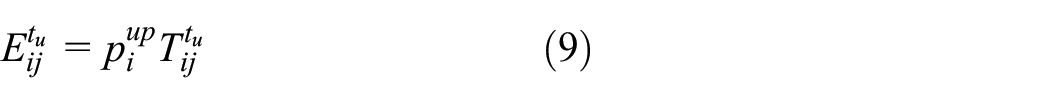

According to the transmission delay, the corresponding transmission energy consumption can be obtained

where

When the data are transmitted to edge node, CPU resources allocated to the task by edge node are used for calculation, and the calculation time of tasks for user

where

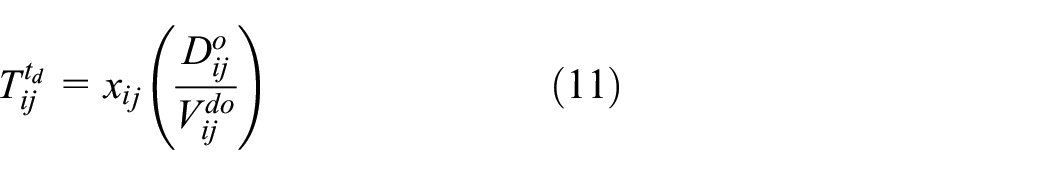

When edge node

where

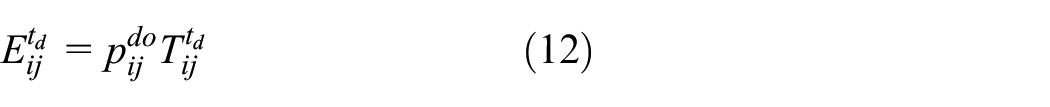

Based on the transmission delay of edge node

where

Combining equations (8), (10), and (11), we can get the total delay of execution process of user task

Therefore, it can be obtained that in the process of user task

where

Combining equations (9), (12), and (14), it can be obtained that the total energy consumption of user side in the process of migrating task

Finally, combined with the total delay and total energy consumption during the execution of task migration for user

where

Optimization problem description

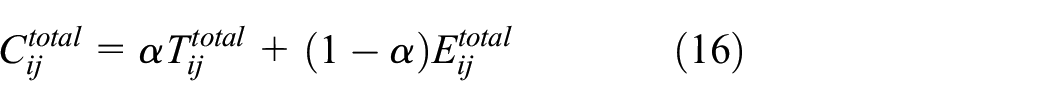

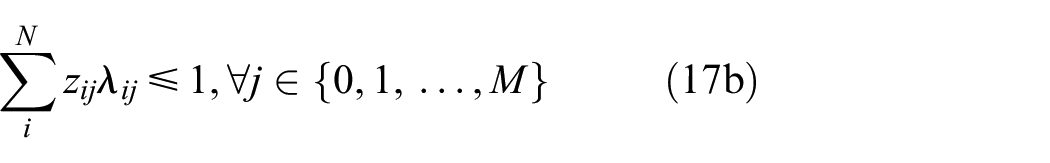

Latency and energy consumption are the two core indicators for measuring network performance. The optimization objectives of this article are mainly focused on the completion time and energy consumption of all tasks at user level. The specific optimization goal is to minimize the weighted sum of task execution delay and energy consumption of all users, that is, the total cost

s.t.

In the above optimization problem, the objective function (17) minimizes the weighted sum of the completion time of all tasks and the energy consumption of the user side, expressed by the total cost
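The weighted-sum objective described above can be illustrated with a minimal sketch. It is not the article's formulation: the class `TaskCost`, the function `total_cost`, the weight names `w_delay` and `w_energy`, and all numeric values are hypothetical, and the constraints of the optimization problem are not modeled.

```python
from dataclasses import dataclass


@dataclass
class TaskCost:
    """Hypothetical per-task delay (s) and user-side energy (J) for both options."""
    local_delay: float
    local_energy: float
    offload_delay: float
    offload_energy: float


def total_cost(tasks, offload, w_delay=0.5, w_energy=0.5):
    """Sum the weighted delay and energy over all tasks.

    offload[i] is True when task i is migrated and False when it runs locally.
    The weights are illustrative assumptions, not values from the article.
    """
    cost = 0.0
    for task, migrate in zip(tasks, offload):
        delay = task.offload_delay if migrate else task.local_delay
        energy = task.offload_energy if migrate else task.local_energy
        cost += w_delay * delay + w_energy * energy
    return cost


# Two illustrative tasks: the first is cheaper to migrate, the second to run locally.
tasks = [TaskCost(2.0, 4.0, 1.0, 1.0), TaskCost(1.0, 1.0, 3.0, 2.0)]
cost_mixed = total_cost(tasks, [True, False])
cost_all_local = total_cost(tasks, [False, False])
```

In this toy instance, choosing the cheaper option per task gives a lower total cost than running everything locally, which is the kind of trade-off the migration decision has to resolve.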

Dynamic offloading method of edge computing based on reinforcement learning

Reinforcement learning

Reinforcement learning is an important branch of machine learning in which the agent learns how to obtain the maximum reward through interaction with the environment. Unlike supervised learning, reinforcement learning cannot learn from samples provided by an experienced external supervisor. Instead, it must learn from its own experience, even though it faces greater uncertainty in the environment. Reinforcement learning is defined not by a learning method but by a learning problem: any method suited to solving this problem can be considered a reinforcement learning method. A reinforcement learning problem generally includes the following elements:

State: it describes the current situation of the environment. For example, in a Go program, the state is the position of the pieces on the board. The state space refers to all possible environmental conditions.

Action: it represents the actions that the agent may perform in each state. The action space refers to all possible actions of the agent.

Reward: the feedback obtained after performing a certain action in a certain state. The reward may be positive or negative (i.e. punishment).

State transition probability: it indicates the probability that the system transitions to the next state after performing a certain action in a certain state.

Strategy: it indicates the mapping relationship between states and actions, that is, which action to execute in a certain state, usually expressed as

Value function: reinforcement learning focuses on the maximization of long-term rewards, not the maximization of instant rewards. An agent that only maximizes the instant reward will choose the action with the largest instant reward each time, which is a simple greedy strategy. To represent the long-term reward accumulated from the current moment until the state reaches the goal, a value function is used.
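The difference between instant and long-term reward in the list above can be made concrete with a small sketch. It assumes the common discounted-return form of the long-term reward; the discount factor `gamma`, the function name `discounted_return`, and the reward sequences are illustrative assumptions rather than quantities taken from this article.

```python
def discounted_return(rewards, gamma=0.5):
    """Accumulate rewards from the current step onward, discounting later ones.

    Computes r0 + gamma * r1 + gamma**2 * r2 + ... by folding from the last
    reward backwards.
    """
    total = 0.0
    for reward in reversed(rewards):
        total = reward + gamma * total
    return total


# A greedy choice takes the larger instant reward (3 versus 1) ...
greedy_path = [3.0, 0.0, 0.0]
# ... while the alternative pays off later.
patient_path = [1.0, 0.0, 10.0]

greedy_value = discounted_return(greedy_path)
patient_value = discounted_return(patient_path)
```

With these illustrative numbers the patient path has the higher discounted return even though its first reward is smaller, which is why a value function rather than the instant reward guides the choice of action.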

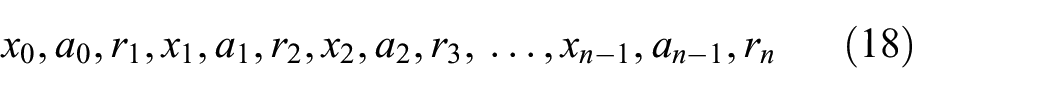

A reinforcement learning problem can usually be described as the optimal control of a Markov Decision Process (MDP), but the state space, transition probability, and reward function do not need to be known in advance. Thus, reinforcement learning is very effective in dealing with complex problems in the real world. An MDP consists of a finite number of states, actions, and rewards, which can be expressed as

where

Reinforcement learning has two outstanding features: trial-and-error search and delayed reward. Trial-and-error search implies a trade-off between exploration and exploitation. The agent prefers actions that it has tried in the past and found effective in generating rewards, but it must also explore new actions that may generate higher returns in the future. The agent must therefore try a variety of actions and gradually favor those that yield the most reward. The other feature is that the agent must consider not only the immediate reward but also the long-term accumulated reward, that is, the value function.
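One common way to balance exploration and exploitation is an epsilon-greedy rule, sketched below. The article does not state which exploration rule it uses, so this is only an illustration; the function name `epsilon_greedy` and the parameter `epsilon` are assumptions.

```python
import random


def epsilon_greedy(action_values, epsilon, rng=random):
    """Pick an action index from a list of estimated action values.

    With probability epsilon a random action is explored; otherwise the
    action with the highest current estimate is exploited.
    """
    if rng.random() < epsilon:
        return rng.randrange(len(action_values))
    return max(range(len(action_values)), key=action_values.__getitem__)


# Illustrative estimates for three actions; index 1 currently looks best.
estimates = [0.2, 0.9, 0.4]
rng = random.Random(0)
exploit_choice = epsilon_greedy(estimates, epsilon=0.0, rng=rng)
explore_choice = epsilon_greedy(estimates, epsilon=1.0, rng=rng)
```

Setting `epsilon` to 0 makes the agent purely greedy and setting it to 1 makes it purely random; in practice a small value, often decayed over training, is used.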

Generally speaking, depending on whether the environmental elements (i.e. state transition probability and reward function) are known, reinforcement learning can be divided into model-free reinforcement learning and model-based reinforcement learning. Model-free reinforcement learning has recently been combined successfully with deep neural networks: the original state is fed directly into the deep neural network, which learns strategies for more difficult tasks. In contrast, model-based reinforcement learning learns the system model with the help of supervised learning and optimizes the strategy under this model. In recent years, elements of model-based reinforcement learning have been incorporated into model-free deep reinforcement learning to increase the learning speed without losing the advantages of model-free learning.

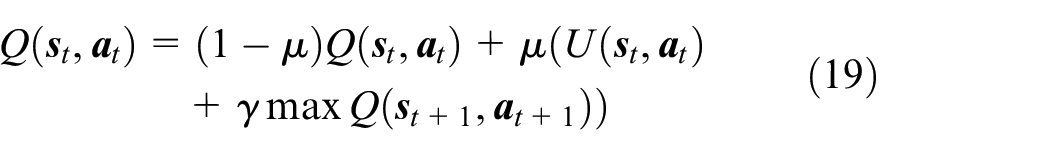

Dynamic offloading scheduling algorithm based on Q-learning

Under normal circumstances, because the network environment changes dynamically, conventional dynamic programming algorithms or model-based algorithms cannot effectively solve such optimization problems without prior knowledge of the environment, since the agent cannot predict the next state of the environment before acting. Thus, this article uses a model-free reinforcement learning method, Q-learning, to study the offloading optimization problem.
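For orientation, the standard tabular Q-learning update can be sketched as follows. This is the textbook rule, not necessarily the exact formulation used in this article; the state and action labels, the reward value, the learning rate `alpha`, and the discount factor `gamma` are all illustrative assumptions.

```python
from collections import defaultdict


def q_update(q, state, action, reward, next_state, actions, alpha=0.1, gamma=0.9):
    """Apply one tabular Q-learning step and return the updated entry.

    The entry for (state, action) moves a fraction alpha toward the
    temporal-difference target: reward plus the discounted best value
    reachable from next_state.
    """
    best_next = max(q[(next_state, a)] for a in actions)
    td_target = reward + gamma * best_next
    q[(state, action)] += alpha * (td_target - q[(state, action)])
    return q[(state, action)]


# Hypothetical offloading decision with two actions. The reward is taken as a
# negative cost here (an assumption), so lower cost means higher reward.
q_table = defaultdict(float)
actions = ["local", "offload"]
updated = q_update(q_table, "s0", "offload", reward=-1.0,
                   next_state="s1", actions=actions)
```

No transition probabilities appear anywhere in the update, which is what makes the method model-free: the agent only needs the observed reward and next state.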

where

In the process of learning and training of

Since offloading optimization is considered in a dynamic network environment, it is necessary to determine which one or more tasks can be executed at the same time in the time slot

Figure 2. Task scheduling process in dynamic scenario.

Based on Figure 2, this article gives the specific steps of task scheduling in a dynamic environment:

Step 1: after the IoT service is started by remotely issuing instructions, the preparation time and completion time of each task are initialized as RT table and FT table, respectively, and scheduling queue

Step 2: when

Step 3: update RT table according to inter-task dependency information

Step 4: search the RT table for the unscheduled tasks with the smallest value. There may be none, one, or more tasks that meet the conditions; add these tasks to scheduling queue

Step 5: check whether the scheduling queue is empty. If it is not empty, it means that there are still tasks to be calculated. Repeat Steps 2–5 in the next time slot
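The steps above can be sketched as a small dependency-aware scheduling loop. This is a simplified illustration under stated assumptions: queued tasks are assumed to run in parallel without resource contention, the offloading decision itself is omitted, and the names `schedule`, `durations`, and `deps` are hypothetical.

```python
def schedule(durations, deps):
    """Schedule tasks by earliest ready time, respecting dependencies.

    durations maps task -> execution time; deps maps task -> set of
    predecessor tasks. Returns the scheduling order and the finish times.
    """
    # RT table: tasks without predecessors are ready at time 0.
    ready_time = {t: 0.0 for t in durations if not deps.get(t)}
    finish_time = {}  # FT table
    order = []
    while len(finish_time) < len(durations):
        # Collect the unscheduled tasks with the smallest ready time (Step 4).
        pending = {t: rt for t, rt in ready_time.items() if t not in finish_time}
        if not pending:
            raise ValueError("cyclic or unsatisfiable dependencies")
        earliest = min(pending.values())
        queue = [t for t, rt in pending.items() if rt == earliest]
        # Execute the queued tasks and record their finish times.
        for task in queue:
            finish_time[task] = earliest + durations[task]
            order.append(task)
        # Update the RT table from the dependency information (Step 3).
        for task in durations:
            preds = deps.get(task, ())
            if task not in ready_time and all(p in finish_time for p in preds):
                ready_time[task] = max(finish_time[p] for p in preds)
    return order, finish_time


# Illustrative workflow: task "c" depends on both "a" and "b".
order, finish = schedule({"a": 1.0, "b": 2.0, "c": 1.0}, {"c": {"a", "b"}})
```

A task becomes ready only when its last predecessor finishes, so in this example "c" cannot start before "b" completes even though "a" finishes earlier.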

Simulation results and analysis

In the simulation scenario of this article, it is assumed that there are three edge nodes with bandwidths of 100, 150, and 200 MHz, respectively. The computing capabilities of the edge nodes are 150, 200, and 250 Mb/s, and their computing energy consumption per unit time is 0.001, 0.002, and 0.003 J, respectively. The number of user terminals with tasks to be calculated is assumed to be 30. The task data size of each user terminal is randomly generated between 100 and 500 Mb. The distance between each user terminal and edge node is also randomly generated. Furthermore, it is assumed that the local computing capability

Figure 3 evaluates the impact of different learning rates on the reward value in the deep neural network. Two observations can be made from the figure. (1) As the learning rate decreases, the convergence of the reward value gradually slows down. This is because, when the learning rate is too small, each optimization iteration makes too little progress. Thus, the learning rate in deep neural networks cannot be too low. (2) When the learning rate is larger, the optimal value may be overshot as the number of iterations increases, causing oscillations near the optimal value. Thus, the learning rate of the deep neural network in this article can be neither too low nor too high. Based on the results of multiple simulations, the learning rate finally selected in this article is 0.001.

Figure 3. Convergence of reward value under different learning rates.
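The two learning-rate effects described for Figure 3 can be reproduced on a toy problem. The sketch below runs plain gradient descent on f(x) = x^2, not the deep neural network of this article; the function name `gradient_descent` and the specific learning rates are illustrative assumptions chosen to make the effects visible.

```python
def gradient_descent(lr, steps=20, x0=1.0):
    """Minimize f(x) = x**2 from x0 and return the visited points."""
    x = x0
    path = [x]
    for _ in range(steps):
        gradient = 2.0 * x  # derivative of x**2
        x -= lr * gradient
        path.append(x)
    return path


# A very small rate barely moves toward the minimum at x = 0.
slow = gradient_descent(lr=0.01)
# A moderate rate converges quickly.
moderate = gradient_descent(lr=0.3)
# A rate close to 1 overshoots the minimum at every step, so the iterates
# alternate in sign and oscillate around x = 0.
large = gradient_descent(lr=0.95)
```

After the same number of steps the slow run is still far from the minimum, and the large-rate run flips sign on each iteration, mirroring the slow convergence and the oscillation discussed above.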

Figure 4 shows the relationship between the total energy consumption of IoT devices and the number of IoT devices participating in the service under different algorithms. In a dynamic scenario, the number of tasks

Figure 4. Relationship between total energy consumption of IoT devices and number of IoT devices.

Figure 5 illustrates the impact of different average data sizes on the total energy consumption of IoT devices. As the average data size increases, the energy consumption of IoT devices under all three methods increases approximately linearly, and the energy consumption of the proposed algorithm is the smallest of the three. This shows that the proposed algorithm can adaptively adjust the offloading strategy according to data size and thereby efficiently optimize the total energy consumption of IoT devices. However, because of the dependency between tasks, the energy consumed while waiting becomes non-negligible. The comparison methods show poor energy consumption performance when the average data size is large, which directly increases the energy consumption of other IoT devices. Across the different average data sizes, the proposed algorithm achieves the best energy-saving effect.

Figure 5. Impact of different average data sizes on the total energy consumption of IoT devices.

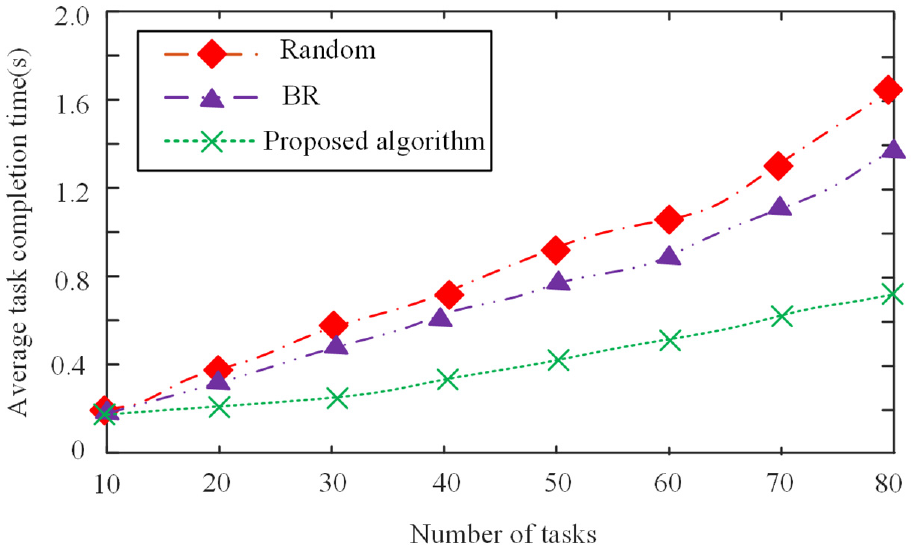

To analyze the performance of the IoT resource computing allocation strategy based on edge computing, two other migration location selection strategies are used for comparison. One is the fastest response time strategy (Best Response, BR for short), in which the node with the shortest completion time in the previous round of task processing is selected as the migration destination. The other is the random selection strategy (Random), in which a service node in the MEC platform is selected at random as the migration destination. Figure 6 compares the average task completion time of these three strategies in the MEC environment when different numbers of application tasks participate in migration. The task completion time is the interval from when the task is submitted to when it is completed and the result is returned to the user.

Figure 6. Comparison of task completion time of three strategies.
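The two comparison strategies can be sketched in a few lines. This is a minimal illustration of the descriptions given above, not the code used in the simulations; the function names, node labels, and completion times are hypothetical.

```python
import random


def best_response(last_completion_time):
    """BR: pick the node whose previous round finished fastest.

    last_completion_time maps node name -> completion time of the previous
    round of task processing.
    """
    return min(last_completion_time, key=last_completion_time.get)


def random_selection(nodes, rng=random):
    """Random: pick any service node as the migration destination."""
    return rng.choice(list(nodes))


# Illustrative completion times (seconds) from a previous round.
previous_round = {"edge-1": 3.2, "edge-2": 1.5, "edge-3": 2.0}
br_target = best_response(previous_round)
random_target = random_selection(previous_round, rng=random.Random(1))
```

BR reacts only to the previous round's completion times and Random ignores node state entirely, so neither accounts for the resources a node has left when the next task arrives.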

It can be seen from Figure 6 that the IoT resource computing allocation strategy based on edge computing always outperforms the Random strategy and the BR strategy: its average task completion time is always the smallest. When the number of migration tasks is small, the advantage of the proposed algorithm in average task completion time is not obvious. This is because the available resources are relatively sufficient at the beginning, the resource utilization is relatively balanced, and the corresponding virtual machines can be deployed. As the number of tasks involved in migration increases, the average task completion time of the proposed algorithm becomes much lower than that of the other two strategies. This is because the total amount of MEC resources is fixed, and the proposed algorithm makes full use of the computing power of both sides and effectively meets the demand for efficient computing services in IoT scenarios. When the environment of the edge nodes in the edge cloud changes dynamically, the proposed algorithm can adaptively adjust the migration strategy, which improves the efficiency of resource allocation.

Figure 7 compares the average task overhead of the three strategies. Task overhead refers to the actual scheduling cost that users pay to complete task migration in the MEC environment.

Figure 7. Comparison of task overhead of three strategies.

It can be seen from Figure 7 that as the number of tasks increases, the competition between tasks becomes fierce and the task waiting time grows. Therefore, the average task completion time increases, the resource unit price also increases dynamically, and the average task overhead increases accordingly. The average task migration overhead of the computing allocation strategy based on edge computing for IoT resources is significantly lower than that of the Random strategy and the BR strategy. This is because the proposed algorithm considers the utilization and price of computing resources when making migration decisions, and it tends to migrate tasks to computing nodes that match their resource requirements and have relatively low prices. This reduces the migration overhead of application tasks to a certain extent.

Conclusion

This article constructs the problem of minimizing the weighted sum of user task execution delay and energy consumption, and then proposes a computing allocation strategy based on edge computing for IoT resources to solve this optimization problem. The proposed algorithm shows efficient energy-saving performance under different numbers of IoT devices. The comparison methods do not consider cloud edge collaboration, and they often struggle to make the optimal decision in a dynamic and highly diverse environment.

Future work will proceed in two directions. The first is to consider the mobility of mobile terminals: predict their moving direction and trajectory, and calculate the connection time between the mobile terminal and computing resources. After judging whether the task migration can be completed within this connection time, further migration decisions can be made. The second is to consider the allocation of other physical layer resources, such as spectrum and power.

Footnotes

Acknowledgements

The author thanks the reviewers for their helpful suggestions, which greatly improved the presentation of this article.

Handling Editor: Yanjiao Chen

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This study was financially supported by the National Education Information Technology Research 2018 project funding, project no. 186130014.