Abstract

Classification of imbalanced data is a vastly explored issue of the last and present decade and still keeps the same importance because data are an essential term today and it becomes crucial when data are distributed into several classes. The term imbalance refers to uneven distribution of data into classes that severely affects the performance of traditional classifiers, that is, classifiers become biased toward the class having larger amount of data. The data generated from wireless sensor networks will have several imbalances. This review article is a decent analysis of imbalance issue for wireless sensor networks and other application domains, which will help the community to understand WHAT, WHY, and WHEN of imbalance in data and its remedies.

Keywords

Introduction

One of the important challenges in data mining is handling of imbalanced data in classification.1–4 We know that classification is an important technique of data mining, in which unknown class samples are assigned to some class based on previous knowledge from training samples.5,6 Imbalance appears when data are unequally distributed into classes; some classes may have large quantity of data called as majority classes and some may have just few instances of data called minority classes. This uneven distribution causes biased performance of traditional classifiers because they consider the error rate not the distribution of data, and due to having little quantity of data instances, minority classes get ignored in overall classification result. This issue appears in many real-world applications, 7 such as healthcare sector,8,9 detection of oil spill, 10 fraud detection in usage of credit cards, 11 modeling of cultures, 12 intrusion detection in networks, categorization of texts, and so on. Figure 1 is a representational picture of imbalanced data.

Imbalance in a binary dataset.

Many solutions are proposed to solve this issue in previous years in different ways. In this regard, high quality of review papers have been published in the last decade on imbalanced data and their related aspects, containing information starting from the definition of imbalance in data space including the characteristics, types, and effect on classification performance to all possible ways to deal with the issue. These review and research articles are the valuable sources of knowledge to understand the problem of imbalance comprehensively. Thorough literature survey is bringing out the enormous study and research on imbalance of data. Following are some examples of some popular research areas dealing with this natural case of data distribution.

Wide ranges of applications

Learning from imbalanced data was mainly driven by numerous applications in real life where we have to deal with the problem of incompatible data representation. The minority class is usually the most important in such cases, and therefore, we need methods to improve their recognition rates. This is closely linked with major issues like preventing malicious attacks, wireless sensor networking, detecting life-threatening diseases, managing atypical behavior in social networks, or handling rare cases in monitoring systems.

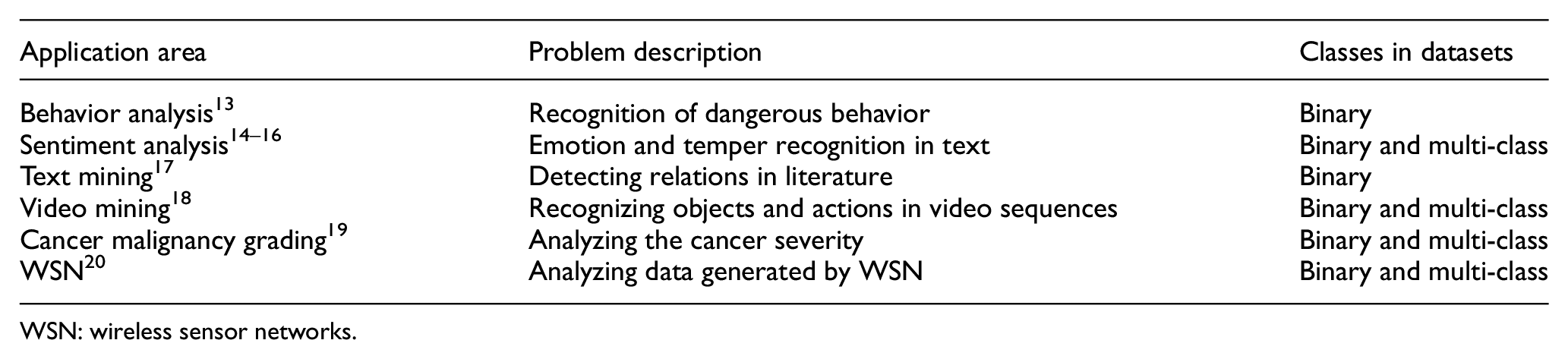

A list of selected, recent real-life applications presenting data imbalance.

This article analyzes review or survey papers followed by original research articles that are finding potential solutions to the problem in specific manners. Especially, nearest neighbor and their fuzzy versions are considered for discussion. This work suggests several techniques that can be used to balance the imbalances generated by sensors in wireless sensor network (WSN).

Following sections of the article are organized to explain the literature from basics of imbalance to solutions offered to the problem recently, and some wide range of applications in this area has discussed. Section “Literature review on handling imbalanced data at a glance” provides the nuggets of imbalance learning literature, from some of the important base papers. Section “Review of solutions to the problem” concentrates on the discussion of various solutions to the imbalance problem, that is, rebalancing of data, algorithm modification, and so on in its subsections. Section “Imbalanced data in wireless sensor networks” discusses about the imbalanced data and their effect on analyzing WSN data. Section “Lessons learned and approaches suggested for handling imbalanced data in WSN” discusses briefly about the suggestions to improve imbalanced data. Finally, the conclusion and future direction are discussed in section “Conclusion and future direction.”

Literature review on handling imbalanced data at a glance

Chawla et al. 21 provide an overview of the imbalance in an editorial issue that consists of the information about imbalance available to date with existing solutions and the extracts of important workshops and conferences organized that time by knowing the criticalness of the problem. They reviewed some of the research articles too. The purpose of this editorial was to ensure the awareness among the data mining community about imbalance present in datasets as its natural in most cases.

WSN: wireless sensor networks.

Visa and Ralescu 22 attempted in a similar way to analyze workshops and conference held in past on imbalanced datasets and came out with the discussion on imbalanced datasets, research gaps, and future research directions. Guo et al. 23 have presented a review on approaches proposed for imbalanced learning on four different levels. They have seen about the evaluation measures for such datasets and relate the other issues to conclude that other factors are also responsible for degradation in classifiers’ performance with imbalances such as small disjuncts.

The review article written by He and Garcia. 2 presented an analysis that becomes a milestone for the researchers of imbalanced datasets for comprehensive knowledge of imbalance issue from elementary definitions of the terms to state-of-the-art solutions and particular evaluation measure. Also, possible future research directions and scope were discussed brilliantly.

Review of the classification of imbalanced data by Fernández et al. 24 was aimed on two important matters: first, the discussion of solutions of the problem with pre-processing and cost-sensitive approaches was provided, and then, a look was given on intrinsic data properties that affect the classifiers’ performance for data imbalance such as existence of small disjuncts, small sample size, class overlapping, and so on.

López et al. 25 have discussed the issue of imbalanced learning with focusing on two targets: first, the attention given on pre-processing, cost-sensitive, and ensemble learning approaches for imbalanced datasets with experimental examples and second, the intrinsic characteristics put on the light that play important role in classification, that is, presence of small disjuncts, small sample size or low density, class overlap, noisy data, borderline examples, and data shift.

Prati et al. 26 has designed an experimental setup to evaluate classifiers’ performances for various degrees of imbalance and came to the conclusion that degree of imbalance is proportionate to the performance of classifiers, implying that higher misclassification for higher degree of imbalance and vice versa. They have also introduced a confidence interval method to judge the performance of such classifiers. They found that existing remedies for imbalanced datasets are partially able to resolve the issue.

Review of solutions to the problem

The issue of imbalance is directly related to distribution of data into classes, and therefore, classifiers have to compromise with the performances. Literature suggests that imbalance in the data can be dealt intrinsically by either balancing the data, also known as re-sampling, or pre-processing of data and then applying traditional classifiers or to modify classifier to find correct classification results from imbalanced data. In the first approach, data pre-processing or re-sampling is applied on data so this could be known as “Data-Level Solution” approach; here, only data are altered, and no changes are performed in classifiers. Other strategy considers natural distribution of data as it is and classifiers are modified for the specific case of imbalance. This approach performs changes in classification algorithms so could be termed as “Algorithmic-Level Solution.” Other known strategies are “Cost-Sensitive Approaches” and “Ensemble Techniques.” Also, imbalance is dealt with feature selection and evolutionary approaches. Subsequent sections of this article contain some good and notable contributions of researchers in all categories of solutions followed by the review of related articles for this article on nearest neighbor and its variants. Following Figure 2 shows the four solutions of imbalance classification:

Strategic solutions for classification of imbalanced data.

Balancing of data or re-sampling pre-processing

Batista et al. 27 have evaluated the behavior of different methods for dealing with oversampling and undersampling in learning from imbalanced data and suggested that the sampling methods perform well on different cases of imbalance. Also, they proposed two oversampling approaches, and both are good in extracting results from datasets having a less number of positive examples.

Similarly, García et al. 28 analyzed the imbalance ratio effect and classifier properties on various re-sampling approaches. They concluded through experiments that for a low imbalance ratio, performance of oversampling as well as undersampling approaches is equivalent but for high imbalanced cases, oversampling should be preferred for better classification. Also, they found that the influence of classifiers on efficiency of re-sampling strategies is negligible.

A systematic review on the class imbalance issue is done by Menardi and Torelli. 29 They discussed that how various existing classifiers are failing in learning from imbalanced datasets. They emphasized the need of model estimation and model evaluation with refined measures specifically for such skewed environment. Also one re-sampling method is proposed in this research that is leading to boosting and bagging and improves the accuracy estimation in severe imbalanced situations.

Chawla et al. 30 proposed a novel technique, synthetic minority oversampling technique (SMOTE), which is remarkable research in the area of oversampling used in many applications. They used the feature-based similarity to generate synthetic examples among minority examples. This method makes traditional classifier to enhance the decision boundary close to minority examples.

He et al. 31 proposed ADASYN approach using the weighted distribution of different minority class examples on the basis of their difficulty level of learning. The minority class instances that are harder to learn expect more synthetic instance generation in comparison to easier minority examples, so this approach reduces the biasness by shifting the decision boundary aiming the difficult minority instances.

Zhang and Li. 32 have evaluated the effect of oversampling on three traditional classifiers and concluded that the oversampling significantly influences the performances of classifiers and, thus, is helpful in classification of imbalanced datasets. They have also proposed random walk oversampling approach that takes less time to generate synthetic samples than SMOTE because from standard deviation and RWO, mean is calculated by the use of minority data.

Wang et al. 33 proposed a combination of an oversampling SMOTE, an optimization technique particle swarm optimization (PSO), and classifiers C5, 1-nearest neighbor, and linear regression that improves performance of the classification over imbalanced dataset of breast cancer survival for 5 years. They found that the hybridization of SMOTE, PSO, and C5 brought best results for this case.

An extension of SMOTE named SMOTE-IPF is proposed by Sáez et al. 34 that considers borderline example and noise as important factors with class imbalance. They used the iterative partitioning; IPF noise filter to handle the SMOTE-generated noise occurred in creating synthetic data. This approach makes boundaries of classes clearer.

An inverse random undersampling strategy is proposed by Tahir et al., 35 and in this method, inverse undersampling is performed to get many training datasets. Then, for an individual training set, a decision boundary is identified that separates the minority class from the majority class. This leads to decide a common and complex boundary in the combination phase; this is very applicable in multi-class classification.

Wong et al. 36 proposed an undersampling approach for large imbalanced datasets, where fuzzy logic is used to select samples from the majority class. Then, an evolutionary computational model of cross-generational elitist selection, heterogeneous recombination, and cataclysmic mutation (CHC) is employed to shrink the majority class by undersampling. Classification of this modified datasets is then performed by support vector machine (SVM).

So data balancing techniques alter the original distribution of data to achieve better classification for imbalanced datasets. Various sampling strategies are used to balance the data, either to undersample large class or oversample the small one or to use the combination of both. Table 1 provides a tabular look of these data balancing techniques with their key features.

Data balancing algorithms.

PSO: particle swarm optimization; SMOTE: synthetic minority oversampling technique. ROSE: random over sampling examples; RWO: random walk over; IPF: iterative-partitioning filter; CHC: cross-generational elitist selection, heterogenous recombination, and cataclysmic mutation.

Algorithm modification

Xu et al. 37 extended the I-algorithm to E-algorithm to work with data imbalance. The “extended” and fuzzy rule induction is modified for imbalanced datasets. Comparison is done for I-algorithm and also E-algorithm by applying them to Duke Energy outage. The extended algorithm performs better for the majority and minority classes.

Antonelli et al. 38 performed comparison on three evolutionary fuzzy rule base classification approaches (EFC) for imbalanced datasets. First approach is an embedded feature selection and granularity learning that included the rule base generation method. The second EFC is an algorithm for genetic programming that creates rule base for the hierarchical fuzzy rule base classifier. The third EFC proposed by authors is a multi-objective evolutionary algorithm that is enhanced so that rule base and membership function parameters could be run simultaneously for a set of fuzzy rule base classifiers. The comparison summed up that the third approach is the best strategy for imbalanced classification.

García et al. 39 proposed evolutionary generalized instance selection by CHC (EGIS-CHC), an evolutionary based nested example learning approach to classify imbalanced data more appropriately. Accumulation of instances in Euclidean n-space is done to perform learning of nested examples in this method.

Improved K-nearest neighbor algorithms

AS-KNN approach is proposed by Yang et al. 40 For event tracking, they modified several nearest neighbor methods and then divided the sum of similarity in every each class with the total number of instances of every class from nearest neighbors. Then, the combination of nearest neighbor approaches reduces the performance variation for the event tracking system.

Different K values have been chosen based on adaptive K-nearest neighbor algorithm that was proposed by Baoli et al. 41 This approach is proposed to find correct categories in text data which many have imbalanced text corpus.

Tan 42 proposed a neighbor weighted nearest neighbor strategy to classify imbalanced text data. In this approach, small weights are allocated to the class having more instances, that is, the majority classes and large weights are assigned to small or minority classes having comparatively less number of data instances. This strategy balances the weightage of instances from both classes.

DragPushing for KNN (DP-KNN) is another method proposed by Tan. 43 In this method, features’ weights are increased or decreased to deal with misclassified data for that training errors are used to improve the KNN by drag and push operations using weights.

Wang et al. 44 presented a K-nearest neighbor method evidence theory. They brought new concepts of global frequency and local frequency estimation, in short GE and LE. This approach deals with class imbalance to a certain level without using re-sampling.

Liu and Chawla 45 suggested an improvement in the weighted K-nearest neighbor strategy for classification of imbalanced datasets. They introduced class confidence weights to find posterior probabilities for this issue. Class confidence weights were determined using mixture modeling and Bayesian networks.

DCM KNN 46 applies the traditional nearest neighbor approach on training data and decomposes them into misclassified and correctly classified data and finds a suitable nearest neighbor method for these sets. And for test data, this approach checks that to which set test instances will belong, misclassified or correctly classified, and then applies the appropriate nearest neighbor method.

Kriminger et al. 47 used the local geometric structure in data in a class algorithm named class conditional nearest neighbor distribution that diminishes the imbalance effect exist in data. The algorithm can be applied for different degrees of imbalance and also to perform with any number of classes. This approach facilitates to add new instances of training datasets.

Dubey and Pudi 48 proposed a KNN-based approach which considered distribution of classes for neighbors of any test instance. Initially, classification is performed by the KNN algorithm, and then, it is used to calculate the weight for all classes. This weighing strategy improves the classification performance for imbalanced data.

KNN’s hubness effect is discussed by Tomašev and Mladenić 49 that minority class instances are the reason for the major misclassification rate in high-dimensional datasets, whereas in small and medium dimensional datasets, misclassification occurs due to majority class instances.

Ryu et al. 50 proposed an HISSN method to predict cross-project defect. In such cases, class imbalance exists in source and target project distributions. In this approach, the KNN algorithm is used to learn local information, and global information is gained by applying naive Bayes approach.

Ando 51 proposed an instance-based learning approach with a model based on mathematics that improves the performance for training data. They designed a class-based weighting strategy to deal with class imbalance, and for these weights, they proposed a convex optimization technique to find out weight parameters.

Patel and Thakur 52 proposed a novel approach which is a hybrid of adaptive nearest neighbor concept and neighbor weighted approach. Large K and small weights are taken for the majority class, and small K and large weights are taken for the minority classes. Table 2 provides the short description of these previously proposed improved nearest neighbor approaches with their key features.

Improved K-nearest neighbor algorithms.

KNN: K-nearest neighbor.

Fuzzy and weighted variants of K-nearest neighbor

Prominent work has been done on fuzzy KNN approaches for imbalanced data. In general, fuzzy concept improves the performance of nearest neighbor classifiers by finding the membership of an instance into a class for normal or balanced data, so for imbalance issue, this could be helpful to use fuzzy membership concept with some strategy to deal with imbalance. These strategies could be alteration in K or some weighing applications. Some notable contributions in this research area fuzzy logic and its variants with K-nearest neighbor are discussed here. Fernández et al. 53 performed an analysis of the fuzzy rule–based classification system for imbalanced environment of datasets which use an adaptive inference system. Genetic algorithms were used to learn parameters of this adaptive inference system. They applied adaptive parametric conjunction operators for varying imbalance ratio and achieved better classification outcomes. This study is the extension work of Fernández et al., 54 where distinctive setups were concentrated for fuzzy rule–based classification systems keeping in mind the end goal to decide the most suitable model for imbalanced datasets. Moreover, they demonstrated the need to apply a re-sampling method; particularly, they found a decent conduct on account of the Synthetic Minority Over-Sampling Technique.

One fuzzy-rough algorithm is proposed by Han and Mao 55 that considers the existing fuzziness and roughness in data. They proposed a membership function in favor of the minority class to minimize the dominance of the majority class on the minority class and also defined an equivalent relation between instances of unknown classes and their nearest neighbors.

Liu et al. 56 proposed a fuzzy KNN approach to handle unevenly distributed categorical data that have bonds between attributes, classes, and other cases. A fuzzy-rough-based approach is proposed for KNN by Ramentol et al. 57 for imbalance in binary classes with six weight vectors. They have also designed indiscernibility relations to unite these weight vectors. The algorithm is applicable on datasets of different imbalance ratios.

An improved weighted algorithm is proposed by Patel and Thakur 58 in that large weights are assigned to small classes and small weight are assigned to larger classes, and when merging with fuzzy logic, the algorithm provides efficient classification results for imbalanced data.

One step ahead, an optimal fuzzy weighted nearest neighbor concept was proposed by Patel and Thakur, 59 and they have taken into consideration the advantages of both, the optimal weights and embedded fuzzy concept to achieve better classification results of imbalanced data.

Patel and Thakur 60 have proposed an approach which takes an adaptive concept of different K values for different classes to calculate more accurate membership of data into classes merged with fuzzy nearest neighbor. Their results show the improved classification results on various imbalanced datasets.

Very less number of fuzzy nearest neighbor approaches is applied for imbalance issue until now. It could be seen that modification in K, weights, and fuzzy concepts all together perform better for imbalanced data. In this research study, some of these combinations are proposed to classify imbalanced data with improved performance of nearest neighbor classifiers. Table 3 gives an instant look on these fuzzy and weighted KNN algorithms for imbalanced data.

Fuzzy and weighted KNN algorithms for imbalanced data.

KNN: K-nearest neighbor.

Cost-sensitive and ensemble approaches

Ensemble is the concept of merging different approaches intended to achieve the same objective with better accuracy and more reliable results. In the past year, many ensemble approaches have been proposed for classification of imbalanced data. Also, cost matrix plays an important role in classification. This section contains various ensemble techniques, including re-sampling, classifiers, and cost-sensitive approaches to learn from imbalanced datasets.

Zhou and Liu 61 studied the effect of sampling and other factors in training of cost-sensitive neural networks. These factors include undersampling, oversampling, SMOTE threshold-moving, and hard and soft ensembles. They concluded that threshold-moving and soft ensemble performs better for cost-sensitive neural networks. Also, cost-sensitive learning is convenient on binary data in comparison to multi-class data.

Nguyen et al. 62 proposed a feedforward neural network approach for imbalanced datasets. In this method, clustering is used to undersample the majority class instances with the concept of weighted cluster centers and its desired output.

Köknar-Tezel and Latecki 63 proposed a supervised learning-based oversampling approach that creates and puts synthetic instances into distance space directly. This strategy is very useful for data where general distance measures cannot be used and so SMOTE cannot be applied, for example, time series. Then, they used SVM for classification. This approach performed good on such cases.

Milaré et al. 64 proposed a hybrid evolutionary algorithm to deal with class imbalance issue. In this method, they developed various balanced datasets from minority class instances and a sample from the majority class. The machine learning approach induces rules and these rules are used to select classifier by applying evolutionary algorithm. This approach reduces the overfitting of oversampling and information loss occurred due to undersampling.

Chen et al. 65 proposed a Probabilistic Classification based on Association Rules (PCAR) to classify imbalanced data more correctly. PCAR performs changes in the pruning method, scoring procedure and rule sorting index of CBA to achieve such purpose.

Another associative classification algorithm for imbalanced learning by improving the scoring based on association (SBA) approach is proposed by Chen et al. 66 This improvement is done by combining the scoring with pruning of association rules in probabilistic classification based on associations (PCBA). Confidence is increased using undersampling and deciding different minimum support and confidence for rules of each class on the basis of distribution to adjust CBA for the forming of PCBA that also removes the pruning rules for the least error rate.

A review article on ensemble methods for classification of imbalanced data is written by Galar et al. 67 They developed taxonomy of ensembles for imbalance learning. They found that the use of ensemble technique performs well on imbalanced data using sampling and single classifier. With more classifiers, it becomes complex but yielding better performance for these unevenly distributed datasets. They also concluded that bagging and boosting approaches provide better classification of imbalanced data.

López et al. 68 conducted an analysis on the performances of data sampling and cost-sensitive approaches for learning from imbalanced data. After experiments, they came to the result that, in general, both strategies yield well and equal results for class imbalance and do determine the best among both; further data intrinsic characteristic analysis is needed.

Chen et al. 69 have carried out a study on the effect of different measures on classification of a dataset based on French bankruptcy and concluded that these measures rigorously affect the classification performance.

Wu et al. 70 proposed a random forest ensemble approach for categorization of imbalanced text data. This strategy contains feature subspaces that are stratified sampled and use SVM to split tree nodes. Stratified sampling is done to find out most important features for both minority and majority classes, and SVM in the learning tree model ensures the better classification of imbalanced text data.

Maldonado and López 71 proposed a cost-sensitive second-order cone programming SVM that is founded on linear programming SVM (LP-SVM) principle. For this, they relaxed the Vapnik–Chervonenkis (VC) bound conditions and maximized the margins directly with two margin variables for both majority and minority classes. By removing conic constraint, this method becomes less complex.

Shao et al. 72 proposed an efficient weighted Lagrangian twin support vector machine (WLTSVM). This approach constructs two proximal hyperplanes by using different training points. WLTSVM first performs graph-based undersampling to maintain proximity information and second Lagrangian twin support vector machine (TWSVM) is improved by applying weights to diminish biasness. Finally, they proved the convergence of the proposed algorithm.

Peng et al. 73 brought up a data gravitational based classification method for imbalanced data and called it imbalanced data gravitation-based classification (IDGC). Gravitation computing is done by a new amplified gravitation coefficient that consists of information about class imbalance and it causes strengthening and weakening of minority and majority class gravitation fields. In weight optimization, they defined the evaluation function to make it sure for parameters to be improved for class imbalance.

A cost-sensitive decision tree based on ensemble methods is proposed by Krawczyk et al. 74 Classifier is designed based on cost matrix, and to select a classifier, assignment of weights, an evolutionary algorithm is applied. Training of classifier is done in random feature subspace, and parameters of cost matrix are chosen from receiver operating characteristic (ROC) analysis. This optimization technique performed well on imbalanced datasets.

Qian et al. 75 proposed a novel approach based on re-sampling ensemble that performs oversampling for minority classes and undersampling for majority classes. Scale of re-sampling is decided on the ratio of min class and max class instances. Results show that the performance of algorithm correlated to the ratio of the number of records in classes and number of attributes. This performs well on the ratio above than 3.

Krawczyk et al. 19 have proposed an ensemble of three image segmentation approaches to detect malignancy for breast cancer even for early biopsy images. This approach is implemented with boosting and evolutionary undersampling to achieve balanced data.

Table 4 contains short description of cost-sensitive and ensemble algorithms.

Cost-sensitive and ensemble algorithms.

SVM: support vector machine; PCAR: Probabilistic Classification based on Association Rules; SBA: scoring based on association; PCBA: probabilistic classification based on associations; CBA: classification based on associations; IDGC: imbalanced data gravitation-based classification.

Feature selection and evaluation measures

Maldonado et al. 76 proposed a set of approaches in that feature selection is done by successive hold out steps based on the backward elimination method, for which measure of contribution is derived from balanced loss function. The intension of this work is to perform better feature selection and deal with imbalance issue in parallel.

Maratea et al. 77 suggested an enhanced SVM for imbalanced data classification and a modified evaluation measure for evaluation of classifiers for such datasets. To cope up with data imbalance, an asymmetric space is developed in class surroundings by applying the first-step approximation and appropriate kernel transformation. The proposed accuracy measure takes care of imbalance nature of data. The scenario is designed for binary classification.

Imbalanced data in WSNs

WSN has several applications in health care, agriculture, weather forecasting, forest fire detection, Internet of Things, and so on.78–82 Even when analyzing the routing protocols for WSN, there are many chances of imbalanced data being generated. Consider agriculture application using WSN.83–92 The sensors used in this application include soil moisture detection sensors, location sensors, humidity detection sensors, temperature sensors, optical sensors, electrochemical sensors, airflow sensors, and so on. If the sensors are collecting the temperature in a tropical country like African countries, most of the times, the temperature will be on a higher side. Hence, while analyzing the temperature data generated through these sensors, the balance will be titled toward high temperatures. Analysis related to lower temperatures in this case is difficult as the number of instances having low temperature is less. In this section, we discuss the work done by several researchers to handle imbalanced data in WSNs.

Yala et al. 93 evaluated WSN data from homes with various re-sampling methods like SMOTE-CSVM, CS-SVM, OS-CSVM along with soft-margin SVM to handle imbalanced data. They proved that SMOTE-CSVM and OS-SVM outperformed other state-of-art approaches. Also, they proved that OS-CSVM is marginally better than SMOTE-SVM when classifying using ubiquitous and binary sensors.

Asur S and Parthasarathy S 94 have used an ensemble classification model to detect rare events by handling of imbalanced data in WSN. They implemented their ensemble model on low-energy adaptive clustering hierarchy (LEACH), a cluster-based WSN architecture. Their approach yielded better accuracy and improved energy utilization.

Zhou H and Yu KM 95 used a model based on KNN and adaptive synthetic sampling (ASS), where KNN is used for imputation of missing values, and ASS is used for treating imbalanced data. Then, they used a feedforward network for prediction of products which are defective on industrial WSN generated data.

Radivojac P et al. 20 used machine learning approaches for detecting intrusion in WSNs. They used two approaches, namely, LEACH and unified network protocol framework (UNPF) for handling imbalance in the data generated by WSN sensors. Their experimentation shows that handling of imbalanced data using machine learning mechanism significantly optimized energy consumption of the WSN.

Yang H et al. 96 used naïve Bayes predictors in the decision tree algorithm at the leaf level for handling imbalanced classes generated by sensors in WSN. They have applied their approach at the training phase, and fine-tuning the prediction accuracy using weighted naive Bayes predictors at leaf nodes.

Yu J et al. 97 proposed a routing protocol based on clusters in WSN to handle imbalanced node distribution to improve the energy consumption. This approach uses energy-aware distributed clustering (EADC), a routing algorithm based on energy-aware clustering approach for non-uniform distributed nodes in WSN. The experimental results proved that their approach balanced energy utilization of nodes resulting in increased lifetime of network.

Tripathi M and Taneja A 98 applied cross-validation technique based on k-fold approach for handling imbalanced data in WSN. Then, random forest classifier is applied on the resultant data for classification on balanced data. Their experimentation results proved that classification of balanced data yielded better results when compared to classification of unbalanced data.

Rodda S and Erothi USR 99 studied the presence of imbalanced data in intrusion datasets from benchmark NSL_KDD. They used four prominent classification approaches to study the impact of imbalanced data on intrusion datasets using WSN.

M’hamed BA and Fergani B 100 proposed an updated version of multi-class weighted SVM model to deal with imbalance data problem in WSN. They gathered the data from three houses with different number of sensors and different layouts.

Lessons learned and approaches suggested for handling imbalanced data in WSN

In this section, we discuss about the lessons learned about handling of imbalanced data in WSN.

Very few works have concentrated on handling of imbalanced data in WSNs. When the data are generated by wide range of sensors, continuously, there is every chance that the data generated from some of these sensors may be discrete; thereby, generated data from those sensors can be sparse. This makes the dataset generated from these sensors imbalanced. Extraction of patterns from these imbalanced datasets can then become biased. To handle these kinds of situations, the following approaches, which were used by several researchers for handling data imbalance in traditional datasets, can be extended to WSNs.

(a) K-fold cross-validation: It is an approach used during training the machine learning algorithms. This approach re-samples the dataset during the training phase of machine learning. This approach splits the datasets into k different groups. One of these groups is considered as testing data and remaining groups are considered as training data. This approach gives equal priority to imbalanced or rare data also.

(b) Ensemble re-sampled datasets: This approach re-samples the dataset in such a way that the data which are scarce or rare will be oversampled. In this way, the overall data can be balanced, and the results achieved by machine learning approaches will be fair and unbiased.

(c) Reduce the weight of the attributes with higher presence and increase the weight of attributes with fewer instances: In this approach, every attribute is assigned a weight. To balance the data to get fair results, attributes which have more presence will be given more weights and attributes with less presence will be given less weight. In this way, imbalances in datasets can be handled efficiently.

(d) Cost-sensitive learning: This approach incorporates misclassification costs in data mining for minimizing the total cost. This approach avoids pre-selection of hyper-parameters adjusting them dynamically.

(e) Combined class methods: This approach uses a fusion of several methods to handle imbalanced data effectively. This approach can eliminate the noise in imbalanced datasets. This approach ensures that useful information will not be lost.

Conclusion and future direction

Imbalance is a very common issue in today’s scenario which causes severe deviation in the performances of traditional classifiers. This review article presents a thorough review on imbalance problem. Both, review and research, articles taken here give a deep insight into the imbalance problem and its various solutions. All considered contributions are systematically arranged in the manner so the differences could be easily understood. In this review, special emphasis is given to the improved KNN approaches proposed for classification of imbalanced data. Nearest neighbor approach is chosen for its simplicity. This literature study facilitates a background that assists for further research in this area and for improvement of new nearest neighbor-approaches. Also some open issues have been discussed here. In summary, the research community should consider the following directions when further developing solutions to imbalanced learning problems:

Introduce solution for imbalanced learning for multi-class problem which will take account of different class relationships.

Reflect on the structure and existence of situations of minority classes to gain a better understanding of the cause of learning problems.

Introduce new approaches based on the specific organized existence of these problems for binary problem and multi-class problems.

Propose new solutions for multi-instance and multi-label learning that are based on specific structured nature of these problems.

Proposed efficient classification techniques for WSN in various scenarios.

This article shows that the vast field of imbalanced learning needs the research community’s attention and intensive growth. There are still many fields that are untouched where this problem exists and solution is also required.

Footnotes

Handling Editor: Mohamed Elhoseny

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the National Research Foundation of Korea (NRF) grant funded by the Korea government (MSIT; No. NRF-2018R1C1B5045013)