Abstract

With the growing popularity of fifth-generation-enabled Internet of Things devices with localization capabilities, as well as on-building fifth-generation mobile network, location privacy has been giving rise to more frequent and extensive privacy concerns. To continuously enjoy services of location-based applications, one needs to share his or her location information to the corresponding service providers. However, these continuously shared location information will give rise to significant privacy issues due to the temporal correlation between locations. In order to solve this, we consider applying practical local differential privacy to private continuous location sharing. First, we introduce a novel definition of

Keywords

Introduction

With the development of fifth-generation (5G) wireless communication technology, 5G-enabled Internet of Things (5G IoT) with ubiquitous connection is expected to implement various applications. 1 Benefited from connections and mobility of 5G IoT devices, location-based applications have been significantly increasing by the growing popularity of 5G IoT devices (e.g. smartphones and self-driving vehicles) with localization capabilities. In order to enjoy relevant services, users have to contribute their locations to the corresponding service providers or third parties. For example, if one wants to access services of mobile edge computing (MEC) which is a crucial part of 5G technology, then he or she needs to share his or her location information to the MEC service provider. However, location information gives rise to significant privacy issues.2,3 Since location information itself is sensitive and it can also be easily associated with other sensitive information, this will result in user privacy leakage.

In a recent decade, a large body of location privacy preservation mechanisms (LPPMs)4,5 have been emerged in the setting of location-based service (LBS) or location sharing where a user shares his or her location to an unknown/untrusted server or other party to enjoy some services while guaranteeing location privacy of the user. One solution is cryptography-based LPPM to prevent revealing individual locations.

6

However, such method tends to be computationally expensive. Most LPPMs have concentrated on location obfuscation that transforms the real location of individual to a region (e.g. location generalization)

7

or a perturbed location (e.g. location perturbation).

8

The commonly used location obfuscation methods depend on syntactic privacy models (e.g.

Differential privacy 9 is a state-of-the-art, stringent and provable privacy notion irrelevant to an adversary’s background knowledge and computing power. There are a large number of works adopting differential privacy to location privacy preservation.1,10 Most of them, for example, Al-Hussaeni et al., 11 Qardaji et al., 12 and Fanaeepour and Rubinstein, 13 have applied the traditional differential privacy (or centralized differential privacy, CDP) 9 on location or trace data for data publishing or aggregation with a trusted server. As privacy matters are raised frequently, local differential privacy (LDP) 14 has been considered in many application fields due to its more stringent and practical privacy. In LDP model, a user does not trust anybody else and requires that his or her own data be moderately protected before sharing them. In other words, the user’s exact data only allowed to be accessed by himself or herself. From the perspective of model, LDP is more suitable for the setting of private location sharing. Randomized response (RR) is the principal mechanism of LDP and suitable for perturbing classified data (e.g. discrete location data).

Adopting LDP for location privacy preservation is still in its infancy. Recently, few works15,16 have applied LDP on location data for data aggregation and without considering the temporal correlation between locations. More previous works, for example, Andrés et al. 8 and Bordenabe et al., 17 have adopted LDP model in the setting of private location sharing. Andrés et al. 8 proposed a novel privacy notion of geo-indistinguishability for LBSs, which ensures that any two locations in a circle are geographically close. In fact, geo-indistinguishability is a special notion of event-level differential privacy, and the neighborhood is defined with Euclidean distance. It is implemented by planar Laplacian mechanism to inject noise drawn from a two-dimensional Laplace distribution into a real location. Bordenabe et al. 17 proposed an optimal geo-indistinguishability mechanism to improve the utility by linear programming techniques. However, the notion of geo-indistinguishability is vulnerable to inference attacks if an adversary can learn the correlation between locations over time.

Motivations and contributions

In this article, we study the problem of continuous private location sharing under LDP. As shown in Figure 1, a user who has a set of continuous sensitive locations generated by his or her mobile device shares on-the-fly the locations with an untrusted server to enjoy LBSs, while guaranteeing his or her location privacy at any timestamp. A user’s real locations are only known by himself. The perturbed locations generated by the privacy preservation mechanisms are visible to the untrusted server and adversaries. To this end, we apply LDP to private continuous location sharing under temporal correlations, which cannot be hided from adversaries and hence are assumed to be public. Compared to the existing most similar work by Xiao and Xiong 18 which proposed a traditional differential privacy–based location privacy preservation framework for continuous location sharing, our main contributions are summarized as follows.

Continuous private location sharing.

First, we propose a novel definition of

Second, we present an efficient generalized randomized response (GRR) to achieve

Third, we experimentally evaluate the performance of GRR over real-world datasets through the continuous location sharing framework and the results show that GRR provides superior location utility, compared with PIM.

Related work

Location privacy

A large number of works have been conducted on preserving location privacy. In general, location privacy preservation method can be classified into three categories: cryptography, anonymization, and obfuscation.

19

One of the most widely used definitions of location privacy is the notion of

The other significant solution for preserving location privacy is location obfuscation (e.g. spatial cloaking), which transforms and maps a user’s real location to a region or one or more perturbed location. Andrés et al. 8 proposed a novel notion of geo-indistinguishability for location privacy, which protects a location within a small radius and requires the closer any location pairwise are, the more indistinguishable they are. Nevertheless, such location perturbation mechanism may not be rational in the setting of continuous location privacy due to not considering the correlation among locations. To prevent loss of privacy due to the correlation between locations in the trace, Chatzikokolakis et al. 21 proposed a predictive differential privacy mechanism to reduce privacy budget consumption rate for trace obfuscation. Similarly, Ma et al. 22 proposed an AGENT mechanism for continuous location privacy preservation by introducing R-tree to achieve the reusability of previously perturbed locations. The two mechanisms satisfy the notion of geo-indistinguishability and make use of the previously reported locations to save privacy budget for obfuscated trace. However, the correlation of the two works considered is only used to reduce the privacy budget for improving the utility, not for preventing inference attacks.

Many works have adopted Markov model for modeling users’ mobility and reasoning their locations or traces. Ardagna et al. 23 proposed an approach for preserving sensitive location information in continuous LBSs. The approach first modeled the characteristics of a user’s moving preference by Markov chain and then developed a simple obfuscation method based on his characteristics. Shokri et al. 24 presented a quantifying location privacy framework, which provides a Markov model for reconstructing prior knowledge (user mobility) of an adversary to be used in various attacks. In the most similar work 18 to ours, Xiao et al. proposed a PIM for single-user location sharing under temporal correlations modeled by Markov Chain. Hence, we consider adopting the commonly used Markov model for modeling users’ mobility in our work. In fact, PIM is similar to Laplace mechanism and suitable for perturbing numerical data. Nevertheless, since Markov model is a discrete state model and RR is a perturbation mechanism for categorical (discrete) data, PIM based on noise addition will bring more loss of utility than RR in modeling users’ mobility based on Markov model.

Local differential privacy

Differential privacy is the state-of-the-art, stringent and provable privacy technique. It was initially proposed to protect aggregated statistics in the trusted server setting, which is regarded as centralized differential privacy (CDP). But recent works have been focusing on LDP with stronger privacy preservation, since it requires that a user locally perturbs his data and sends the perturbed data to an untrusted server. In recent years, Google has shown its applicability through RAPPOR, 25 which is a practical LDP solution for protecting users’ settings in the browser. Hereafter, Apple 26 and Microsoft 27 have also applied LDP to iOS10 and Windows 10, respectively. LDP has become the most promising privacy preservation technique.

Different from CDP, which basically uses Laplace mechanism and exponential mechanism, LDP mainly adopts randomized response (W-RR)

28

as the fundamental perturbation mechanism, which is first proposed by Warner in 1965. However, since the W-RR is only suitable for binary variables, Kariouz et al.

29

proposed a staircase mechanism, also known as

Most works of LDP, for example, Kairouz et al., 29 Bassily and Smith, 32 and Nguyên et al., 33 have concentrated on the study of locally differentially private simple data, namely, single numeric or categorical attribute. Recently, some researches have applied LDP for preserving more complex data. Qin et al. 34 have applied LDP on set-valued data which is a set of items (values) for heavy hitter estimation. Wang et al. 35 proposed an optimized LDP mechanism for set-valued data aggregation. Several recent works have adopted LDP to protect location privacy. Chen et al. 15 first applied LDP to solve the private location (spatial) data aggregation problem. Sei and Ohsuga 16 proposed a Bayesian-based multiple dummies method against untrusted server, which satisfies LDP. The method can also be used to protect single location privacy. Our contribution is to extend LDP to the setting of continuous location sharing for single user whose locations are temporally correlated. To our best knowledge, we first apply LDP to continuous location sharing. We will achieve higher utility in practice while guaranteeing location privacy of users over time.

Preliminaries and privacy definition

We use normal letters, bold lowercase letters, and bold uppercase letters to denote scalar variables, vectors, and matrices, respectively. The summary of some significant symbol notations is shown in Table 1.

Summary of some significant symbol notations.

In order to represent a location for Markov model, we use state coordinate system. Space domain is denoted by

Coordinate systems.

Noting that they can be interconverted, we skip the interconverted process. Over time, a trajectory can be represented by a bunch of locations,

Mobility and inference model

In our problem setting, a user’s real locations are only observable by himself, but not by others. The perturbed locations generated by the privacy mechanism are observable to the service provider who might be untrusted. From the perspective of an adversary, the above-mentioned process is a Hidden Markov Model (HMM).

Assume that a vector

where

Transition probability

Emission probability

Given a real location

Inference and evolution

The inference process at each timestamp can be availably derived by forward–backward algorithm in HMM.

Combining the characteristic of LDP model, we consider two different types of

LDP and RR mechanism

The LDP was proposed for the local setting in which there is an untrusted server which is not allowed to access the private data. In general, users only share their information to service provider for enjoying the corresponding services. And yet, users do not trust anyone except themselves and tend to guarantee their privacy at the root that the shared information has been properly sanitized before sharing it themselves. LDP requires that no matter which data value an individual user possesses, the data collector (e.g. an untrusted server) should get almost identical information. Therefore, an adversary with any background knowledge cannot distinguish the individual real value by accessing to the sanitized information. Formally, the definition of LDP is given below.

Definition 1: (Local differential privacy)

A randomized algorithm is

where

LDP provides stringent privacy preservation in local setting, but its utility is bounded by the privacy domain. If the privacy domain size

Randomized response

The RR is the fundamental mechanism to achieve LDP. We present the RR by a simple example that is given below. Given a binary attribute, one report a real value with probability

Composability

In our problem setting, we need to only release one perturbed location at a timestamp, so the sequential composition property is unavailable. However, for multiple releases at a timestamp, the composition property is applicable. In addition, privacy guarantee for the whole trajectory (e.g. release a set of perturbed locations

-location set

To capture the temporal correlations between locations, we introduce the concept of

For instance, assuming that a prior probability

Privacy definition

The nature of

Definition 2: (

-Local differential privacy)

At any timestamp

The above definition makes the real location protected within the

Proposed framework

GRR mechanism

The GRR mechanism is a general version of the RR mechanism. In a particular case where the value is binary, that is

Algorithm 1 shows the pseudocodes of GRR mechanism for location. Given privacy budget

Theorem 1

Algorithm 1 satisfies

Proof

This completes the proof.

Continuous private location sharing framework

Location obfuscation algorithm is shown in Algorithm 2. At any timestamp

Since the drawback of

Note that the surrogate method does not leak any information of the real location. Because

Theorem 2

At any timestamp

Proof

In our method, although a drift happens, the real location is still in

The above algorithm is single private location sharing. Here, we show the framework of continuous private location sharing in Algorithm 3. To enable the framework, we first initialize the initial prior probability

Noting that the two

Privacy and performance analysis

The privacy analysis is presented in Theorem 2. We will present the utility of GRR.

Theorem 3

The upper bound of error for Algorithm 2 is

Proof

Moving model construction and location inference

We discuss the construction (learning) of Markov model. Maximum likelihood estimation and expectation maximization method in HMM can be adopted to obtain the transition matrix. However, depending on the power of adversaries, two typical

Inference methods rest with specific perturbed algorithms. We implement the inference for GRR in the light of the formula (1) and calculate the probability

Experimental evaluation

The experimental evaluation is described in this section. All algorithms are implemented in the MATLAB R2018a on a PC with 3.6 GHz Intel Core i3 CPU and 8 GB RAM. We compare the performance of GRR with PIM that is the most relevant to our work.

Experimental setup

Datasets

We use a public real-world Geolife dataset

36

as the experimental dataset. The dataset was gathered from 182 users during the 3 years. It recorded a wide range of users’ mobility behaviors, represented by a batch of records including timestamp, latitude, and longitude. The locations data were updated at relatively high frequency, ranging from 1 to 60 s. We extract all trajectories within roughly third ring of Beijing (116.3017E~116.4577E, 39.848N~39.9680N) to train the Markov model. Note that the area is divided into cells of

Metrics

We evaluate the performance of GRR and PIM in following metrics. On the one hand, since our privacy definition is related to privacy budget

Evaluation results

1. Performance over time

In order to present the performance of a perturbed mechanism while a user moves over time, including how

Real trace.

We evaluate both GRR and PIM at each timestamp with

Performance over time under public matrix: (a) PIM perturbed trace, (b) GRR perturbed trace, (c) size of

Performance over time under personal matrix: (a) PIM perturbed trace, (b) GRR perturbed trace, (c) size of

Size of

Distance over time with public and personal matrix is shown in Figures 4(d) and 5(d), respectively, from which we can figure out that GRR provides better utility than PIM. The distance between perturbed location and real location over time bounds within a few hundred meters.

In order to present the GRR perturbed trace under different parameter

2. Parameters influence

GRR perturbed trace with public matrix under different parameter

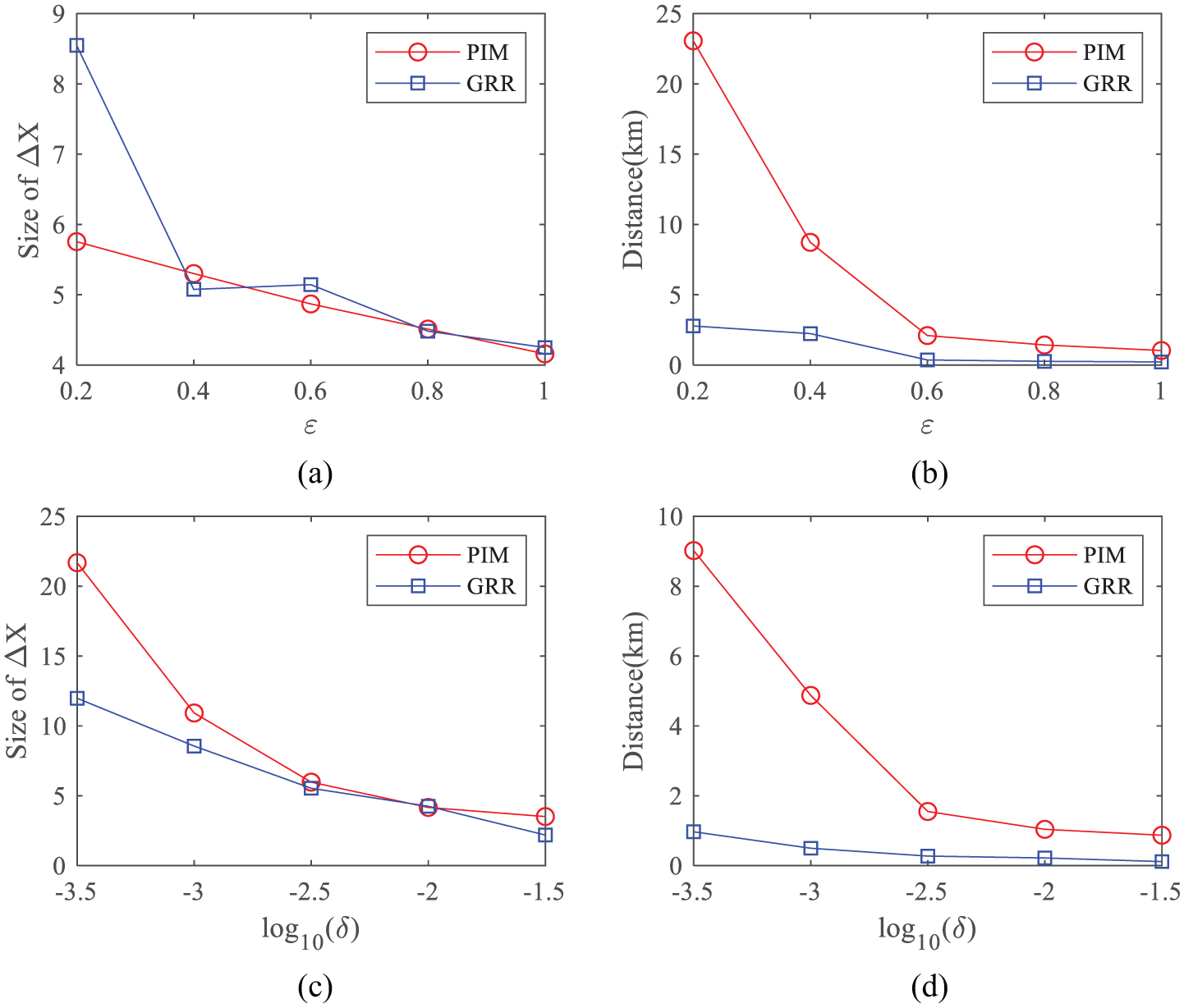

We further evaluate the overall performance and parameters influence. Each evaluation runs 10 times and the average is given. The performances are shown in Figure 7 (public matrix) and Figure 8 (personal matrix). The default parameters values are

Different Markov model. Comparing Figure 7 on public matrix and Figure 8 on personal matrix, we can perceive the influence of different Markov model. GRR can achieve better utility than PIM in both public and personal model. And it can achieve better utility in personal model than in public model.

Parameters influence under public matrix: (a)

Parameters influence under personal matrix: (a)

Conclusion and future work

This article investigated locally differentially private continuous location sharing problem. Specifically, we proposed a novel definition

As future work directions, we will be interested in adopting LDP to general continuous data sharing and continuous location aggregation problems. In addition, we will investigate the influence on the proposed framework in other more advanced mobility model.

Footnotes

Handling Editor: Feng Ye

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work is supported by National Natural Science Foundation of China (Grant No. 61872431), Major Technical Innovation Project of Hubei (Grant No. 2018AAA046), and Applied Basic Research Project of Wuhan (Grant No. 2017060201010162).