Abstract

Human activity recognition deals with the integration of sensing and reasoning, aiming to better understand people’s actions. Moreover, it plays an important role in human interaction, human–robot interaction, and brain–computer interaction. Developing these approaches draws on efforts from both signal processing and artificial intelligence. In that sense, this article aims to present a concise review of signal processing in human activity recognition systems and to describe two examples and applications in both human activity recognition and robotics: human–robot interaction and socialization, and imitation learning in robotics. In addition, it presents ideas and trends in the context of human activity recognition for human–robot interaction that are important when processing signals within those systems.

Introduction

Computer science and the integration of signal processing in embedded devices have been advancing rapidly. In particular, mobile devices and the miniaturization of sensors enable research and development in the field of context-aware systems for different application domains. The identification of daily activities carried out by people is of particular importance. For instance, applications of activity recognition are relevant in pervasive and mobile computing, surveillance-based security, context-aware computing, robotics, health, and ambient assistive living, 1 among others.2–4

In this regard, human activity recognition (HAR) deals with the integration of sensing and reasoning, aiming to understand people’s actions better. 5 The goal of HAR is to classify and identify activities based on the collected data from different devices such as sensors or cameras, mainly processed by machine learning methods and pattern recognition techniques.3,6

HAR plays an important role in human interaction, human–robot interaction (HRI), and brain–computer interaction (BCI). It is significant because it provides information related to the profile of a person, their personality and psychological state, and their behavior within the environment. 7 Directly or indirectly, HAR allows building more robust and complete tasks to understand and implement computer interactions with humans.

Depending on the level of recognition, activities can be classified as simple or complex. Simple activities are daily life actions that most people perform easily, such as “walking” or “running,” and they are relatively easy to recognize. 7 Complex activities are typically composed of simple activities and are considered high-level or context tasks, for example, “drink a cup of coffee” or “cooking sushi.” 7 Normally, complex activities are very difficult to recognize, even if the activity can be decomposed into simple tasks.

In order to recognize activities, HAR systems can be developed under the following approaches:2,8,9 sensor-based systems, vision-based systems, acoustic-based systems, and multimodal. Each approach has advantages and weaknesses 9 as well as challenges such as the number of sensors used, the localization of the devices, the organization of the data retrieved, the signal processing methods used, and others. From the point of view of signal processing, in general, HAR systems perform the following steps: data acquisition, windowing, feature extraction, feature selection, building activity models, and classification.

In that sense, this article aims to present a review on the different steps of signal processing in the context of HAR. It provides two examples and applications in signal processing dedicated to HAR and robotics: (1) HRI and socialization, highlighting the importance of recognizing human activities for the context of robots and how those interact in a social way with humans; and (2) imitation learning, as one high-level task in robots to extract knowledge from humans to learn and later to recognize and to perform demonstrated actions for better interaction between humans and robots.

There are many surveys on HAR,4,9,10 HRI,11,12 and imitation learning. 13 However, to our knowledge, there is no review that focuses on the application of HAR in these fields.

Signal processing in HAR

The intention of this section is to provide a general overview of signal processing in HAR. In that sense, we adopted the methodology reported in Bulling et al. 14 and complemented in Ponce et al., 5 namely, the activity recognition chain (ARC) approach, which describes the full process in HAR. The HAR methodology is shown in Figure 1. Each step is explained below.

Figure 1. Block diagram of the main steps in a human activity recognition system.

Data acquisition

The source of data is relevant in HAR. In particular, there are different types of sources: inertial sensors, microphones, infrared sensors, video, Kinect, brainwave-based helmets, force sensors, smart-watches, and mobile phones, among others. In any case, different challenges must be faced to acquire data and extract relevant information from them. These challenges are related to signal processing and inherent to HAR. Challenges specific to HAR include selecting the number and characteristics of the subjects, mainly in real-world environments. Regarding sensors, we can mention the selection of the type of sensors, the location of the sensors, calibration, synchronization of several sensors, and noise. 2

There are several issues related to determining the source of data. 2 There are multiple affordable modalities and types of sensors well-suited for HAR, as mentioned above: wearable and body sensors, smart phones and bracelets, cameras, depth sensors, motion capture sensors, ambient sensors, and so on. An important challenge is which type of sensor or combination of sensors to select for a given activity recognition task. In addition, deciding their location (on the body, in the environment, in devices, etc.) is another important task to be performed beforehand. Each activity recognition system can rely on different sensor signals with particular settings and locations.

Data synchronization, data pre-processing, and data inconsistency are inherent challenges during the data acquisition stage.15,16 Since multiple heterogeneous sensors are used for activity recognition, data synchronization and data buffering are always a challenge given the diverse sampling rates, sensing periodicity, and sensor platforms.
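As a minimal sketch of one way to align streams with different sampling rates, the following Python example linearly interpolates one sensor stream onto the timestamps of another. The function name `resample_linear`, the clamping behavior at the edges, and the example values are illustrative assumptions, not a method taken from the reviewed works.

```python
from bisect import bisect_right


def resample_linear(timestamps, values, target_times):
    """Linearly interpolate a sampled signal at each target time.

    Assumes `timestamps` is strictly increasing and has the same length
    as `values`. Target times outside the sampled range are clamped to
    the first or last value (an assumption made for this sketch).
    """
    resampled = []
    for t in target_times:
        if t <= timestamps[0]:
            resampled.append(values[0])
        elif t >= timestamps[-1]:
            resampled.append(values[-1])
        else:
            # Index of the first timestamp strictly greater than t.
            i = bisect_right(timestamps, t)
            t0, t1 = timestamps[i - 1], timestamps[i]
            v0, v1 = values[i - 1], values[i]
            resampled.append(v0 + (v1 - v0) * (t - t0) / (t1 - t0))
    return resampled


# Hypothetical example: a slow stream aligned to a faster time base.
slow_t = [0.0, 1.0, 2.0]
slow_v = [0.0, 10.0, 20.0]
fast_t = [0.5, 1.0, 1.5]
aligned = resample_linear(slow_t, slow_v, fast_t)
```

Once all streams share one time base, samples from different sensors can be stacked per timestamp before windowing. Real systems may also need clock-offset correction, which this sketch does not address.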

Data inconsistency is also a common issue to be addressed due to wireless communication, deprivation (e.g. sensor failure), limited spatial coverage, imprecision from individual sensors, and uncertainty when features are missing. 17 Multi-sensor fusion has been presented as a solution to deal with individual sensor issues. 17

Several studies 18 have shown that noise in the data in particular degrades the performance of the overall system, resulting in poor activity recognition. This is a concern not only to HAR developers but also, in general, to people working on signal processing. Furthermore, real-world applications of HAR must handle noisy data caused by several factors, such as device miscalibration, dead or blocked devices, location of devices (on the body and in the environment), noisy environments, and interleaved activities.

Windowing and feature extraction

Once the data are acquired by the sensors, this information has to be processed. To do that, the sensor signals can be divided into variable or fixed time windows,2,3 and time windows can also be overlapping or disjoint. 2
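The fixed-size case can be sketched in a few lines of Python. The function name `sliding_windows`, the `overlap` parameter expressed as a fraction, and the choice to drop trailing samples that do not fill a window are assumptions made for illustration.

```python
def sliding_windows(signal, window_size, overlap=0.0):
    """Split a sequence of samples into fixed-size windows.

    `overlap` is the fraction of each window shared with the next one:
    0.0 yields disjoint windows, 0.5 yields 50% overlapping windows.
    Trailing samples that do not fill a complete window are dropped.
    """
    if window_size <= 0:
        raise ValueError("window_size must be positive")
    if not 0.0 <= overlap < 1.0:
        raise ValueError("overlap must be in [0, 1)")
    # Number of samples to advance between consecutive windows.
    step = max(1, int(round(window_size * (1.0 - overlap))))
    last_start = len(signal) - window_size
    return [signal[s:s + window_size] for s in range(0, last_start + 1, step)]


samples = list(range(10))
disjoint = sliding_windows(samples, window_size=4)
overlapping = sliding_windows(samples, window_size=4, overlap=0.5)
```

With ten samples and a window of four, the disjoint call yields two windows and the 50% overlapping call yields four.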

Fixed windowing is the most reported approach in HAR.2,3,5,14,18 Each window contains information that is then employed to infer a human activity. In principle, this information should be compared from window to window to determine the right activity. However, directly comparing segments of sensor signals contained in different windows is considered complicated. Instead, representative quantities are measured inside each window, so that windows can be compared quantitatively. 2 This step is known as feature extraction.

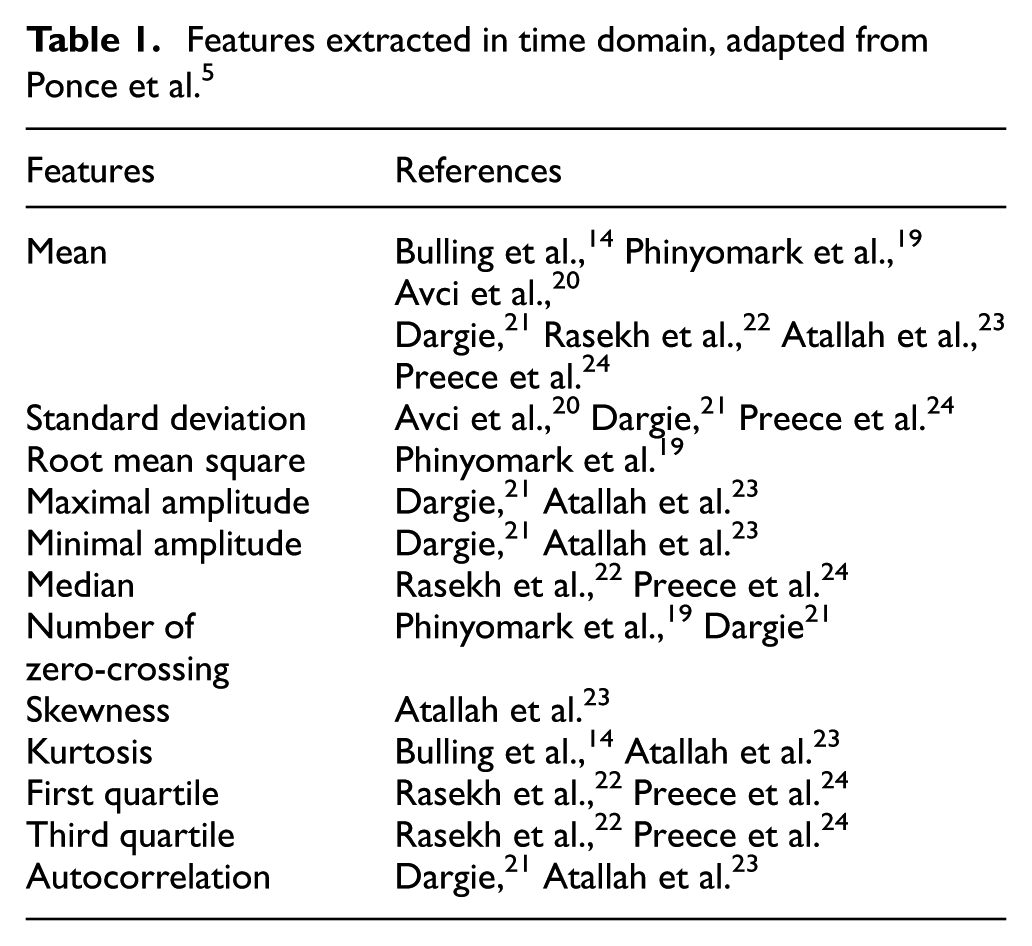

There are different features extracted for HAR systems reported in the literature. Table 1 summarizes common time-domain features and Table 2 lists common frequency-domain features. As noted, these measurements are mostly statistical quantities that contain the overall information of the signal in a window.

Table 1. Features extracted in time domain, adapted from Ponce et al. 5

Table 2. Features extracted in frequency domain, adapted from Ponce et al. 5
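To make the idea of per-window statistical features concrete, the following Python sketch computes a few time-domain and frequency-domain quantities for a single window. The particular features chosen here (mean, standard deviation, root mean square, minimum, maximum, spectral energy, dominant frequency), the function names, and the energy normalization are assumptions for illustration and are not necessarily the entries of Tables 1 and 2.

```python
import cmath
import math
import statistics


def time_features(window):
    """Return a few statistical time-domain features of one window."""
    return {
        "mean": statistics.fmean(window),
        "std": statistics.pstdev(window),
        "rms": math.sqrt(sum(x * x for x in window) / len(window)),
        "min": min(window),
        "max": max(window),
    }


def frequency_features(window, sampling_rate_hz):
    """Return spectral energy and dominant frequency of one window.

    Uses a naive O(n^2) discrete Fourier transform to stay dependency
    free; the window must contain at least two samples. The mean is
    removed first so the constant component does not dominate.
    """
    n = len(window)
    mean = statistics.fmean(window)
    centered = [x - mean for x in window]
    magnitudes = []
    for k in range(1, n // 2 + 1):  # positive-frequency bins only
        coeff = sum(x * cmath.exp(-2j * cmath.pi * k * i / n)
                    for i, x in enumerate(centered))
        magnitudes.append(abs(coeff))
    # One possible normalization of spectral energy (an assumption).
    energy = sum(m * m for m in magnitudes) / n
    peak_bin = 1 + max(range(len(magnitudes)), key=magnitudes.__getitem__)
    return {
        "spectral_energy": energy,
        "dominant_frequency_hz": peak_bin * sampling_rate_hz / n,
    }


# Hypothetical window: a 2 Hz sine sampled at 32 Hz for one second.
wave = [math.sin(2 * math.pi * 2 * i / 32) for i in range(32)]
features = {**time_features(wave), **frequency_features(wave, 32.0)}
```

Each window is thus reduced to a small dictionary of numbers, which is what makes windows quantitatively comparable.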

Although features allow the system to model and detect activities more efficiently than raw signals do, conventional feature extraction has several drawbacks identified in Wang et al.: 10 (1) feature extraction relies on human expertise and implies a longer time to build an activity recognition system; (2) shallow features (see Table 1) can be used to recognize low-level activities, but it is difficult to recognize high-level activities with them; and (3) conventional approaches need labeled data to train models, in contrast to deep generative networks, which train models from unlabeled examples. For instance, deep learning can alleviate the effort of designing features.

Feature selection

Once feature extraction is done, two issues may arise: (1) redundancy among features and (2) too many computed features. In the first case, redundant features can influence the classification of activities positively or negatively. In practice, different methods are used for selecting a suitable set of features. For example, the literature reports the usage of the Bayesian information criterion, minimum description length, minimum redundancy and maximum relevance, and correlation-based feature selection, among others. 2
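The redundancy issue can be illustrated with a deliberately simplified filter that greedily drops any feature that is highly correlated with a feature already kept. This is not the correlation-based feature selection or minimum redundancy and maximum relevance algorithm named above; the function names and the 0.95 threshold are assumptions chosen for the sketch.

```python
import math
import statistics


def pearson(a, b):
    """Pearson correlation of two equally long sequences (0.0 if constant)."""
    mean_a, mean_b = statistics.fmean(a), statistics.fmean(b)
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    var_a = sum((x - mean_a) ** 2 for x in a)
    var_b = sum((y - mean_b) ** 2 for y in b)
    if var_a == 0.0 or var_b == 0.0:
        return 0.0
    return cov / math.sqrt(var_a * var_b)


def drop_redundant(features, threshold=0.95):
    """Greedily keep features that are not redundant with kept ones.

    `features` maps a feature name to its list of values (one value per
    window). A feature is kept only if its absolute correlation with
    every previously kept feature is below `threshold`.
    """
    kept = []
    for name, column in features.items():
        if all(abs(pearson(column, features[k])) < threshold for k in kept):
            kept.append(name)
    return kept


# Hypothetical feature table: "mean_x2" is a scaled copy of "mean".
table = {
    "mean": [1.0, 2.0, 3.0, 4.0],
    "mean_x2": [2.0, 4.0, 6.0, 8.0],
    "zero_crossings": [1.0, -1.0, 1.0, -1.0],
}
selected = drop_redundant(table)
```

Note that this filter ignores the activity labels entirely, so it addresses redundancy only, not relevance.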

Not only redundancy but also a large number of features can affect the recognition accuracy of activities. In that sense, feature reduction, such as principal component analysis, 5 is employed to reduce the number of elements in the feature set, or to compose another set with fewer features.
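As a rough illustration of feature reduction, the sketch below extracts only the first principal component of a feature matrix using power iteration on the sample covariance matrix. This is a pedagogical shortcut under stated assumptions: the function name, the fixed iteration count, and the uniform starting vector are illustrative, and a full principal component analysis would normally rely on a linear algebra library.

```python
import math


def first_principal_component(rows, iterations=200):
    """Return (direction, scores) for the first principal component.

    `rows` is a list of feature vectors (at least two rows). The
    direction is a unit vector; scores are the projections of the
    mean-centered rows onto it. Power iteration can fail if the start
    vector is orthogonal to the leading component (not handled here).
    """
    n, d = len(rows), len(rows[0])
    means = [sum(r[j] for r in rows) / n for j in range(d)]
    centered = [[r[j] - means[j] for j in range(d)] for r in rows]
    # Sample covariance matrix of the centered features.
    cov = [[sum(c[i] * c[j] for c in centered) / (n - 1) for j in range(d)]
           for i in range(d)]
    direction = [1.0 / math.sqrt(d)] * d
    for _ in range(iterations):
        product = [sum(cov[i][j] * direction[j] for j in range(d))
                   for i in range(d)]
        norm = math.sqrt(sum(x * x for x in product))
        if norm == 0.0:
            break  # all features are constant
        direction = [x / norm for x in product]
    scores = [sum(c[j] * direction[j] for j in range(d)) for c in centered]
    return direction, scores


# Hypothetical data: the second feature is exactly twice the first,
# so one component captures all of the variance.
data = [[1.0, 2.0], [2.0, 4.0], [3.0, 6.0], [4.0, 8.0]]
direction, scores = first_principal_component(data)
```

Here the two original features collapse into a single score per window without losing information, which is the best case for this kind of reduction.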

In the end, feature selection and reduction are applied to the extracted features in order to get a new dataset of relevant features.

Building activity models and activity classification

Next, an activity model has to be designed. This model contains the information and the methods to classify the sensor signals for determining the different activities performed by a human.

In this regard, machine learning has been widely used in HAR systems to build patterns and to analyze, describe, and predict data. 2 In HAR in particular, supervised learning is applied using a set of training data with instances (observations from sensors) and labels (e.g. activities) that allow the system to learn patterns for classification.25,26 In the end, these learning methods build activity models.
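The supervised setting of instances and labels can be sketched with a very small classifier. A k-nearest neighbors rule is used here only because it fits in a few lines (KNN is named later in this article among commonly used methods); the function name, the feature values, and the activity labels are hypothetical.

```python
import math
from collections import Counter


def knn_predict(train_features, train_labels, query, k=3):
    """Predict the label of `query` by voting among its k nearest instances.

    `train_features` is a list of feature vectors, `train_labels` the
    matching activity labels. Distances are Euclidean.
    """
    neighbors = sorted(
        (math.dist(x, query), label)
        for x, label in zip(train_features, train_labels)
    )
    votes = Counter(label for _, label in neighbors[:k])
    return votes.most_common(1)[0][0]


# Hypothetical training set: two features per window, two activities.
X = [[0.1, 0.2], [0.2, 0.1], [0.0, 0.3],
     [2.0, 2.1], [2.2, 1.9], [1.9, 2.0]]
y = ["standing", "standing", "standing",
     "walking", "walking", "walking"]

prediction = knn_predict(X, y, query=[2.1, 2.0])
```

In this sketch the stored training instances themselves play the role of the activity model; other supervised methods would instead fit parameters from the same instances and labels.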

The literature reports different supervised machine learning methods employed in HAR:2,3,5,27,28 stochastic gradient boosting, AdaBoost, decision trees, 29 rule-based classifier, single rule classification, support vector machines (SVM), and random forest, among others.

Finally, these models are employed to recognize the activities performed by the users. Since windows are normally short in contrast to the time over which an activity is performed, a classical methodology to summarize the estimated activity per window is the majority voting approach. 2 It outputs the most frequent estimated activity in a sequence of windows. This technique greatly improves the recognition accuracy, as shown in Ponce et al. 5
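A minimal sketch of majority voting over consecutive windows follows. The function name `majority_vote`, the non-overlapping grouping, and the `group_size` parameter are assumptions; the cited works may group windows differently.

```python
from collections import Counter


def majority_vote(window_predictions, group_size):
    """Summarize per-window predictions by their most frequent label.

    Consecutive predictions are split into non-overlapping groups of
    `group_size` windows (the last group may be shorter), and each
    group is replaced by its most frequent label. Ties are resolved in
    favor of the label seen first within the group.
    """
    summarized = []
    for start in range(0, len(window_predictions), group_size):
        group = window_predictions[start:start + group_size]
        summarized.append(Counter(group).most_common(1)[0][0])
    return summarized


# Hypothetical per-window outputs of a classifier.
per_window = ["walk", "walk", "run", "walk", "run", "run", "run", "walk", "run"]
per_group = majority_vote(per_window, group_size=3)
```

Isolated misclassifications inside a group are outvoted by their neighbors, which is why the summarized sequence tends to be more accurate than the raw per-window output.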

HAR in robotics

Interest in HAR research has grown rapidly in the past decades due to the great versatility in the application of action, activity, and behavior understanding. In their surveys of HAR, Lara and Labrador 2 and Aggarwal and Ryoo 32 discuss the applicability of HAR in medical, security, entertainment, military and tactical, and human interface systems.

In the healthcare and medical domain, HAR enables monitoring patients, elderly and/or children. HAR is an important task for assistive living applications where daily and abnormal activities are identified to aid elderly people. Avci et al. 20 also reviewed several medical applications of activity recognition for healthcare, well-being, and sports systems.

Recognition of human activities is necessary in security systems for intrusion detection, surveillance of public places, abnormal activity detection, and video analysis. Vishwakarma and Agrawal 33 provide a survey of activity recognition in video surveillance. The authors identify diverse applications of HAR for surveillance, namely, behavioral biometrics, content-based video analysis, automatic recognition of abnormalities, interactive applications, learning, and simulation environments.

Tactical and military applications are closely related to the security applications. HAR can be useful for training of military soldiers and public service personnel, soldiers’ activity recognition, location, and health condition.

All the previously mentioned applications can be considered from the robotics point of view, since robots must recognize human activities in order to socialize with people34–36 and for imitation learning purposes. 37 Robots perform many tasks to improve human life in the health care, security, entertainment, military, and tactical domains. In different situations, robots must perceive, represent, recognize, and understand everything from simple human actions and gestures to complex activities and behaviors. Ke et al. 38 described important actions and behaviors to be recognized in vision systems. As robots are intended to interact in surveillance, assistive health and safety, and entertainment situations, similar human activities and behaviors must be identified and understood by robots. Table 3 summarizes activity recognition of one person, multiple people, and crowd behavior for different application systems.

Table 3. Human activities recognized in different application domains.

HAR: human activity recognition.

Krüger et al. 37 analyzed action recognition from three points of view: computer vision, robotics, and artificial intelligence (AI) community. They discussed the interpretation and recognition of actions in robotics for robot movement and imitation learning. Other authors highlight the importance of HAR in robots for a successful HRI and socialization.35,39,40

In the rest of the article, we analyze two applications in which HAR is indispensable from the robotics’ point of view: (1) HRI and socialization and (2) imitation learning.

HRI and socialization

Goodrich and Schultz 34 state that the HRI problem is to “understand and shape the interactions between one or more humans and one or more robots.” The authors distinguish applications that require mobility, physical manipulation, or social interaction. Industrial and agricultural areas also benefit from applying HRI.11,12 Action and activity recognition is relevant in all these categories of applications, but it is crucial for robots’ social interaction.

Depending on the autonomy of the robot, the interaction with a human can vary from direct control and teleoperation to dynamic autonomy, in which robots interact as partners, peers, or assistants. In the full range of possible scenarios of HRI, action and activity recognition can be useful—from simple command understanding for robot control to peer-to-peer interaction.

Social robots must be able to perceive and interpret the world as humans do. 40 Service robots interact directly with people, so it is important to find natural and easy-to-use interfaces. 36 Fong et al. 40 emphasize that social robots’ perceptions need to be human-oriented: “optimized for interacting with humans and on a human level.” 40 Depending on the application nature, robots must be able to track human bodies, faces, and hands. They need to represent, recognize, and interpret speech, facial expressions, gestures, and human activity. Human-oriented perception implies detecting and understanding, according to the needs, from simple commands with gestures or actions to complex activities and behaviors.

According to Goodrich and Schultz, 34 one important robot design decision is the way information is exchanged between a human and a robot. The primary media of communication between humans and robots are based on three senses: seeing, hearing, and touching. 34 Despite differences in the nature of the interaction between human and robot, in all scenarios, the robot needs sensory inputs to capture movements and intelligence to understand the meaning of those movements. 37 Some applications are based on speech recognition, but in this section, we focus on human action and activity recognition performed by a robot. We present an overview of representative works that use different types of perception.

Vision-based approaches

The vision-based approach is the most frequently used for activity recognition by robots. A good example of action recognition for HRI is robots used for assisted living. These robots monitor people in daily life, identify their activities and abnormal events, and improve human life, particularly in the case of older adults. Assistive robots usually rely on vision systems. Stavropoulos et al. 41 present a HAR method for assistive robots based on the EigenJoints descriptor. 42 The authors use information extracted from depth video captured by color and depth (RGB-D) cameras. 43 Assistive robots have also been used as therapy tools for autism. 44 In cases of autism, the robot must sense and recognize the body parts and motion of the child in order to imitate the movement accurately. 44 Similarly, Fasola and Mataric 45 present the design, implementation, and evaluation analysis of an assistive robot that motivates elderly users to engage in physical exercise. They used a vision-based robot to recognize arm gestures and poses in real time.

In order for a robot to interact with humans, it must understand humans’ intentions, behaviors, and even emotions. This recognition and interpretation is performed with the robot as an observer, enabling it to react if it is threatened by human actions or to respond in a socially adequate way to human behavior. Xia et al. 46 referred to this problem as robot-centric activity recognition and presented a framework and algorithm to analyze RGB-D videos captured by the robot while interacting with humans in daily living environments.

Sidobre et al. 47 presented a solution to exchange objects between a human and a robot based on motion capture technology. They show that in order to exchange objects between a human and a robot in a natural way, the robot must be capable of adapting to human motion and grasp in real time. Robot motion has to be executed in a human-aware way to avoid collisions and ensure human safety.

Gesture recognition is an important task in HRI that requires recognition of the motions of human body parts. For a long time, gesture recognition has been used to enable HRI. 48 For instance, Mitra and Acharya 49 identify the different body parts involved in gesture identification, for example, (1) motions of hands and arms are helpful for the interpretation of sign language, (2) head and face motions are related to gestures such as nodding, and (3) body motions as a whole can be useful for tracking or analyzing people moving or interacting. HRI using gesture recognition can be found when developing medical devices for the hearing impaired and automatically monitoring emotional states and stress levels in patients, or when monitoring drivers’ alertness and drowsiness, as well as in lie detection. 49

Sensor-based approaches

There are two main approaches for gesture recognition: computer vision techniques and cameras, 50 and sensor-based approaches.51,52 As an example, Rautaray and Agrawal 50 identified hand gesture recognition as a core application for controlling and programming robots, such that these can imitate motions and interact with humans.

A wide variety of sensors are used to perceive face, hands, and body motion. 53 Sensor-based approaches are also commonly used for HAR. Zhu and Sheng 54 proposed a robot-assisted living system for elderly people, patients, and people with disabilities. Their method is based on the fusion of wearable inertial sensors to achieve activity recognition, combining neural networks and hidden Markov models. A new robot-automated semantic mapping system based on wearable sensors was presented in Sheng et al. 55 The aim of the system is to enable a robot to build metric maps and identify furniture. Wearable motion sensors attached to the human body were used to recognize several daily human activities. The authors avoid using vision approaches to overcome their high computational cost and their possible problems with environment, light, and occlusion.

Acoustic-based approaches

Although vision approaches are most commonly used by robots for activity recognition, sound produced by humans or human–object interaction provides rich information about ongoing context, events, and behaviors. 56 Acoustic signals can be used as a complement to vision information or as the main source of data for activity recognition. Maxime et al. 56 address the problem of sound and recognition of domestic events with the humanoid robot NAO. 57 They performed a comparative analysis of several classification methods in order to identify events occurring in the domestic environment. Stork et al. 35 developed a method to classify human activities from the sounds they produce in order to enable a robot to infer those activities. They proposed the non-Markovian ensemble voting method 35 to classify 22 human activities performed in the bathroom and kitchen contexts.

Tactile-based approaches

Tactile HRIs have also gained interest as a way of keeping the operation of the robot safe around humans, as a way for the human to guide or partner with the robot, and as a necessary element for robots’ behavior development.58,59

A good review of what is detected and how the perceptions are used can be found in Argall and colleagues.58,59 Human and robot tactile interactions can be unexpected or unintended interfering with the robot’s behavior execution. The robot must be able to recover from disturbance and react to physical contact. In these cases, the robot may passively react to the interference. 60 On the contrary, robots can actively predict the effects of human contact and identify the best behavior to operate safely and efficiently around humans.

When human tactile interaction with the robot is deliberate, the interpretation of human intentions by the robot is very important.58,59 In social robots, particularly those made for “psychological enrichment,” 61 sensing and interpretation is very important to improve the human behavior. 62 Paro De Seal 63 and Kasper 64 are good examples.

Multimodal-based approaches

The majority of related works only analyze human actions and events from one point of view. Rodomagoulakis et al. 8 presented a multimodal action recognition system in assistive HRI for elderly persons. Based on inputs from a microphone and visual input from high-definition and depth cameras, the robot can recognize audiovisual human commands. Accordingly, Han et al. 65 discussed methods and technologies for non-verbal HRI with the NAO robot. 57 They reviewed built-in technologies to detect face and head, and track people; sonar sensors and speech direction detection; and tactile sensors and object recognition. Likewise, Pieropan 66 argued that robots must be able to perceive and understand the environment autonomously out of multiple perception modalities, as humans do. The authors proposed a method for audiovisual recognition of human manipulation actions. In addition, they presented an RGB-D-audio dataset of humans making milk and cereals.

Imitation learning

In the last decades, robots have been gaining ground in human lives. Robots can be found not only in industry; a new generation of service and social robots is also appearing in everyday life environments.67,68 Social robots must relate to humans and cooperate in new scenarios, doing different tasks in possibly unknown situations. Hence, robots should be able to adapt and learn new behaviors.

Bandera et al. 68 say that robots must be provided with a learning system that is natural and intuitive for humans and that enables the robots to expand their behavior repertoire quickly and efficiently. Trial-and-error approaches require reward functions for each task, and even in simple tasks, the possibilities grow exponentially. 13 One of the motivations for research on robot imitation learning is the need for an intuitive way of communication between robots and humans. Imitation learning is a key technology for applications where robots are expected to work closely with humans, such as manufacturing, elder care, and the service industry. Osa et al. 69 and Schaal 70 state that imitation learning is a means to speed up the learning of humanoid robots. “The goal of imitation learning is to develop robot systems that are able to relate perceived actions of another (human) agent to its own embodiment in order to learn and later to recognize and to perform the demonstrated actions.” 37

According to Krüger et al., 37 for a robot to imitate another agent’s (human or not) actions and activities, it must be able to perceive, analyze, and recognize continuous human movements and actions. This also entails identifying objects relevant to a task and analyzing the changes in the environment caused by human actions.

The perception of movement is most commonly done with vision-based inputs;68,71,72 however, these inputs can be complemented or replaced with magnetic tracking systems, 73 motion capture technologies, or proximity and infrared sensor technologies.

Mainly, a robot has to identify what, how, when, and who to imitate. The expert is also acting in a given context and environment sometimes interacting with other people or objects.

What to imitate

First of all, a robot must decide what to imitate when perceiving a demonstration. An important research problem is how the robot determines what observed movements and actions of the selected model are relevant to the task, and what movements are only circumstantial. 74 The robot must also understand what effects certain actions have on the environment of the actor. 37 It has to recognize objects and their manipulation.

An interesting approach to determine what is observed was presented in Pieropan. 66 He proposed an audiovisual approach to understand an observed action. This approach tries to mitigate visual limitations (occlusion, luminosity, actions performed out of sight) with multimodal perception. He also focused on learning manipulation activities and object discovery, tracking, and object affordance for objects that can be grasped.

When and who to imitate

According to Breazeal and Scassellati, 74 the robot must not only decide what to imitate but also when and who to imitate. Depending on the social context, the availability of a good model, and the robot’s internal motivation, the robot decides whether to engage in imitation. In the data collection phase, common problems are noise and sensor errors, and incomplete or inaccurate demonstrations.

In Burns et al., 75 the experimenter aims to determine the effect of robotic imitation to increase the engagement of the human participant during a structured social setting. This experiment was not a task-oriented interaction.

How to imitate

Once the robot has identified what to imitate, it has to determine how to imitate the observed action; it has to map these movements and actions into movements that its body can perform. Calinon et al. 72 addressed the problems of “what to imitate” and “how to imitate.” The first problem is to identify which features are relevant for achieving the task, and the second problem refers to transferring the perceived action into primitive movements performed with the robot’s body. The authors presented a method to extract the constraints of a task in order to determine an imitation strategy. They used a humanoid platform to show a goal-directed imitation.

Nehaniv and Dautenhahn 76 define this as the correspondence problem. In order to physically imitate a certain action, the robot has to map the observed action onto movements (primitive movements)58,59 that it is capable of performing with its embodiment. Argall et al.58,59 use the word

Some authors apply different kinematic-based approaches to deal with the correspondence problem. These approaches perform offline optimization to determine the corresponding configurations 77 or use real-time techniques for imitation. 78 Jin et al. 79 proposed a framework based on sparsely sampled correspondences extracted from raw data that allows imitation in real time.

Where to imitate

One step further for the robot is to understand the context in which the actions are being performed. Chella et al. 80 proposed a framework to not just reproduce the movements of a human teacher but also understand the environment and perceived actions. Their cognitive architecture for imitation learning is able to learn natural movements and generate action plans.

A different approach for physical HRI 81 consists of an efficient machine learning algorithm and two human-in-the-loop learning scenarios inspired by human parenting behavior. The test subject was asked to assist a robot in a standing-up and walking assistance task, improving the HRI.

Finally, the robot must be able to learn and improve its performance over time. Eventually, the robot must be able to evaluate its actions and establish the similarity of the outcome of its actions in comparison to the observed demonstration.

Challenges and trends

According to Fong et al., 40 socially interactive robots are important because, in some domains, robots must interact with humans as peers to perform a specific task, or are used to change human attitudes or behavior. In order to achieve this peer-to-peer relation with humans, robots must develop social skills. Fong et al. 40 identified important design issues in social robot systems. In particular, human-oriented perception, natural HRI, and real-time performance are design issues closely related to the domain of HAR.

Human-oriented perception

A great variety of activities have to be recognized by robots according to different application systems (see Table 3). As social robots are becoming part of our daily lives, they have to be able to recognize and to understand simple gestures related to human activities, object manipulation, complex human behaviors, and social conventions.

Several approaches based on different types of perception are used for HRI, and each of them has advantages and limitations. RGB-D systems are low cost, but they are highly sensitive to lighting changes and environmental factors. These approaches are also limited under viewpoint changes. When cameras are integrated into robots, a special challenge is camera motion, as reported in Yazdi and Bouwmans. 82 Motion capture systems provide a good representation of the action, but they are expensive and have high computational costs. These systems require markers and calibration, are usually invasive, and have to be used in controlled spaces.67,68

Wearable sensors are obtrusive and uncomfortable if they have to be worn for prolonged periods of time. They are limited to certain activities, sensitive to sensor location, and can produce noisy data. On the other hand, sensors do not share all the drawbacks of vision (e.g. occlusion, fixed location and fixed views, blurring, external conditions such as lighting, high amount of data to process), and they can be used in different environments. We can observe a trend to combine different types of perception in multimodal systems 83 to overcome the mentioned limitations at the cost of increasing the computational complexity.

Natural HRI

To interact with humans as peers, robots must communicate and act in a way that is friendly to humans. Human behavior is complex and rich, and robots must understand and act according to social conventions and norms. 40 The robot’s behavior must be believable. Natural embodiment, natural language and dialog, smooth natural motions, and an understanding of human emotions are desirable. Some of these requirements become relevant depending on the application, and they increase the performance complexity. According to Ishiguro and Nishio, 84 HRI researchers have neglected the appearance of robots, prioritizing behavior over appearance. In recent years, there has been a shift toward building more humanoid robots that interact with humans through natural communication. The Sophia humanoid from Hanson Robotics is the most frequently mentioned example of this trend. 85

Transfer learning for HAR 86 can be helpful to cope with the correspondence problem in HRI.

Real-time performance

“Socially interactive robots must operate at human interaction rates.” 40 This means that a robot must be able to identify and interpret activities and situations, plan and take decisions, and learn as rapidly as humans do.

Regarding learning techniques, hidden Markov models are frequently used to train the robot’s policy when a direct state-action mapping is required, but this is often not enough for different behaviors. 13 Bandera et al. 68 described the following drawbacks of this approach: (1) the complexity of training and inference limits the number of states that can be modeled and (2) a huge amount of training data is needed. Some commonly used methods for classification and regression are artificial neural networks (ANN), KNN, locally weighted regression (LWR), and SVM.
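As an illustration of one of the classification methods listed above, the following minimal sketch implements a KNN classifier for activity labels. The feature vectors (mean and standard deviation of acceleration magnitude per window), the activity names, and the function name knn_predict are assumptions made for this example and are not taken from the reviewed works.

```python
import math
from collections import Counter


def knn_predict(train, query, k=3):
    """Return the majority label among the k training examples nearest to query.

    train is a list of (feature_vector, label) pairs.
    """
    # Rank training examples by Euclidean distance to the query vector.
    ranked = sorted(train, key=lambda example: math.dist(example[0], query))
    # Count the labels of the k closest examples and pick the most frequent one.
    votes = Counter(label for _, label in ranked[:k])
    return votes.most_common(1)[0][0]


# Hypothetical training set: (mean, std) of acceleration magnitude per window.
TRAIN = [
    ((1.00, 0.02), "standing"),
    ((1.01, 0.03), "standing"),
    ((0.99, 0.04), "standing"),
    ((1.10, 0.30), "walking"),
    ((1.15, 0.35), "walking"),
    ((1.08, 0.28), "walking"),
]

if __name__ == "__main__":
    print(knn_predict(TRAIN, (1.12, 0.31)))
```

KNN needs no training phase, but every prediction scans the whole training set, which matters when the robot has to respond at human interaction rates.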

Imitation learning is an important attempt to speed up a robot’s learning. 70 Hussein et al. 13 reviewed the learning methods used for imitation learning, which are generic to robot motion learning tasks. The authors claim that there is a need for specialized learning algorithms to represent and predict human action in order to be able to emulate these motor functions. In several applications of human activity recognition, data are collected with vision, sensor, or multimodal perception, and this information is processed to obtain features associated with labels. These features and labels are the learning examples for activity recognition.
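The step from raw data to labeled learning examples can be sketched as follows, assuming a one-dimensional sensor signal with one activity label per sample. The window size, the step, the choice of mean and standard deviation as features, and the function names are illustrative assumptions, not a method prescribed by the cited works.

```python
import statistics


def window_features(signal, size, step):
    """Split a 1-D signal into windows and return (mean, std) for each window."""
    features = []
    for start in range(0, len(signal) - size + 1, step):
        window = signal[start:start + size]
        features.append((statistics.fmean(window), statistics.pstdev(window)))
    return features


def make_examples(signal, labels, size, step):
    """Pair each window's features with the most common label of its samples."""
    examples = []
    for i, feature in enumerate(window_features(signal, size, step)):
        start = i * step
        # statistics.mode returns the first mode encountered if there is a tie.
        examples.append((feature, statistics.mode(labels[start:start + size])))
    return examples


# Hypothetical acceleration magnitudes with per-sample activity labels.
SIGNAL = [1.0, 1.0, 1.0, 1.0, 0.5, 1.5, 0.5, 1.5]
LABELS = ["standing"] * 4 + ["walking"] * 4

if __name__ == "__main__":
    for feature, label in make_examples(SIGNAL, LABELS, size=4, step=4):
        print(feature, label)
```

Each resulting pair of feature vector and label is one learning example that a classifier can be trained on.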

In imitation learning, there are states, which represent the status of an agent, and actions. Imitation learning depends on expert demonstrations, that is, repeated interactions from which sequential prediction must be achieved. 87 The learning process begins by capturing the actions of the teacher via different sensing methods. State-action pairs are captured using wearable sensors, motion capture systems, force gloves, movement and position sensors, tactile devices, and cameras, among others. This information is also processed to extract features that describe the state of the performer and task-related information about the surroundings. From these features, the robot must learn a policy to imitate the demonstrated behavior. 13 The policy can be refined for continuous improvement.
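A minimal sketch of learning a policy from demonstrations is given below. It uses supervised regression on state-action pairs (often called behavioral cloning) with a linear least-squares fit, which is only one of many possible choices. The one-dimensional state (distance to a target), the action (speed command), the demonstration values, and the name fit_linear_policy are hypothetical.

```python
def fit_linear_policy(demos):
    """Fit action = w * state + b by least squares from (state, action) pairs.

    Requires at least two demonstrations with distinct states.
    """
    n = len(demos)
    mean_state = sum(state for state, _ in demos) / n
    mean_action = sum(action for _, action in demos) / n
    covariance = sum((s - mean_state) * (a - mean_action) for s, a in demos)
    variance = sum((s - mean_state) ** 2 for s, _ in demos)
    w = covariance / variance
    b = mean_action - w * mean_state

    def policy(state):
        # The learned policy maps an observed state to an action.
        return w * state + b

    return policy


# Hypothetical demonstrations: state = distance to target (m),
# action = speed command chosen by the teacher (m/s).
DEMOS = [(0.0, 0.0), (0.5, 0.25), (1.0, 0.5), (2.0, 1.0)]

if __name__ == "__main__":
    policy = fit_linear_policy(DEMOS)
    print(policy(1.5))
```

The fitted policy generalizes to states that were not demonstrated, but only as far as the linear assumption holds; richer behaviors call for richer models and for later refinement of the policy.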

According to Hussein et al., 13 classification, regression, and apprenticeship learning methods are used for learning the policies that determine the action units or movement primitives 59 that the robot must perform. For policy refinement, active learning, apprenticeship learning, reinforcement learning, transfer learning, structured prediction, and optimization can be used. 13

Learning techniques for HRI must meet strict response-time requirements, since the robot has to perform within a very limited amount of time, typically in real time. In that sense, learning techniques have issues with training time, mostly offering only offline options for robots, and there is an urgent need for novel methods for online training. For models that have already been trained, in contrast, many methods offer a fast and efficient response. In this regard, future work on HRI is mostly focused on new paradigms not only for training learning techniques but also for implementing signal processing techniques at a low level.
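To make the distinction between offline and online training concrete, the following sketch shows a classical online learner, the perceptron, which updates its parameters one sample at a time instead of requiring the full data set in advance. It is offered only as an illustration of the online setting, not as one of the novel methods called for above. The single feature (standard deviation of acceleration per window), the label coding, and the stream values are assumptions.

```python
class OnlinePerceptron:
    """Binary linear classifier trained one sample at a time."""

    def __init__(self, n_features, learning_rate=1.0):
        self.weights = [0.0] * n_features
        self.bias = 0.0
        self.learning_rate = learning_rate

    def predict(self, x):
        score = sum(w * xi for w, xi in zip(self.weights, x)) + self.bias
        return 1 if score > 0 else -1

    def update(self, x, y):
        """Process one labeled sample (y is -1 or +1).

        Returns True if the sample was misclassified and the model changed.
        """
        if self.predict(x) == y:
            return False
        step = self.learning_rate * y
        self.weights = [w + step * xi for w, xi in zip(self.weights, x)]
        self.bias += step
        return True


# Hypothetical stream: feature = std of acceleration per window,
# label -1 = standing, +1 = walking. The same four samples repeat five times.
STREAM = [((0.2,), -1), ((3.0,), 1), ((0.3,), -1), ((3.5,), 1)] * 5

if __name__ == "__main__":
    model = OnlinePerceptron(n_features=1)
    mistakes = sum(model.update(x, y) for x, y in STREAM)
    print(mistakes, model.predict((0.25,)), model.predict((3.2,)))
```

Each update costs time proportional to the number of features, so the model can keep learning while the robot operates; the price is that the perceptron only converges when the classes are linearly separable.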

The important design issues discussed above lead us to consider the balance between the robot’s complexity and its computational and investment costs. More complex multimodal perception and more natural and complex communication and behavior demand more computational complexity and higher costs. This also means that real-time performance is still an issue for complex robot needs. There will always be a trade-off between complex multimodal perception and natural robot behavior on the one hand and real-time performance on the other, which has to be analyzed for each application.

Footnotes

Handling Editor: Paolo Bellavista

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research has been funded by Universidad Panamericana through the grant “Fomento a la Investigación UP 2018,” under project code UP-CI-2018-ING-MX-04.