Abstract

We mathematically model a wireless sensors’ connectivity scenario and analyze it using capacity provision mechanisms, with the objective of maximizing the profits of a network operator. The scenario comprises several sensors’ clusters, each with one sink node that uploads the sensing data gathered in the cluster through the wireless connectivity of a network operator. Using game theory, the scenario is analyzed both as a static game and as a dynamic game, each with two stages. The sinks’ behavior is characterized by a utility function related to the mean service time and to the price paid to the operator for the service. The objective of the operator is to maximize its profits by optimizing the network capacity. In the static game, the sinks’ subscription decision is modeled using a population game. In the dynamic game, the sinks’ behavior is modeled using an evolutionary game and the replicator dynamic, while the operator’s optimal capacity is obtained by solving an optimal control problem. The scenario is shown to be feasible from an economic point of view. In addition, dynamic capacity provision optimization is shown to be a valid mechanism for maximizing the operator’s profits, as well as a useful tool for analyzing evolving scenarios. Finally, the dynamic analysis opens the possibility of studying more complex scenarios through its extension to differential games.

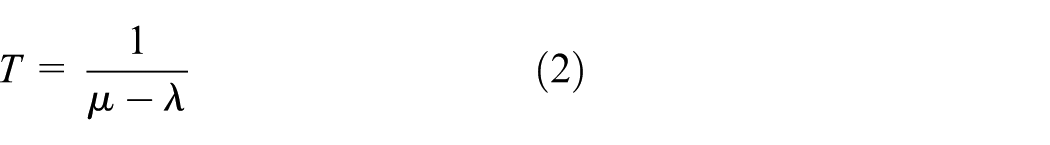

Keywords

Introduction

The concept of the Internet of Things (IoT) as a revolutionary paradigm is not new.1 However, the broad concept of IoT as we know it today was not defined until the past decade.2 The number of connected devices is growing, driven by this paradigm; in fact, according to Cisco, there will be 5.5 billion mobile devices connected to the Internet by 2020,3 with a wide range of applications in areas such as education, healthcare, industry, infrastructure, smart homes, and smart cities,4,5 among others. In this context of a huge density of devices connected to wireless networks, research on the network capacity provision problem has focused on optimizing bandwidth usage through different approaches, such as algorithms and programming,6–8 protocol modifications,9 and game theory.10–13 Nevertheless, given that the main actors in the capacity provision problem are the network operators (OP), the solutions must be justified not only from an efficiency point of view but also from the point of view of operator profit.

The OP profit maximization problem has been addressed several times in the literature as a pricing problem.14–17 Some papers analyze only monopolistic scenarios,18 but it is also common to analyze competitive scenarios using game theory.17,19 The analysis is typically solved statically, and the results are obtained at the equilibrium, where the actors have no incentive to change their decisions.20,21 However, some studies analyze dynamic problems, where the system parameters may vary over time and the optimization is carried out within a time interval.22,23 In our work, we tried to extend the scenario analyzed by Sanchis-Cano et al.24 by solving a dynamic optimization problem with the price as the control variable. However, the model was not controllable because the Hamiltonian function depends linearly on the price. To solve this problem, we decided to analyze the profit maximization problem in an IoT scenario using the capacity provision as the control variable,11 instead of the price.

Paper contributions and outline

In this article, we analyze a wireless sensors’ connectivity scenario from an economic point of view using mathematical modeling and game theory. We first analyze the scenario using a static model as an approximation, and then we propose a more realistic dynamic model that uses evolutionary games and optimal control theory to solve the problem of capacity provision for sensors’ connectivity. We analyze a scenario with several sensors’ clusters that transmit the gathered data through a network operator (OP), which provides wireless connectivity. The behavior of the sensors is modeled using a delay-sensitive utility function. For the static model, the sensors’ population equilibrium is found using population games, and the OP optimal leased capacity is obtained through a maximization problem. The static model is solved using backward induction, and a Nash equilibrium is found. In the dynamic model, the population behavior is modeled using the replicator dynamic, while the OP capacity decision is obtained by solving an optimal control problem using the Pontryagin maximum principle (PMP).25,26 The aim of this article is to show the feasibility of the proposed IoT scenario. To achieve this objective, we maximize the profits of the network operator in a given time interval, using the capacity provision as the maximization variable. We provide detailed mathematical procedures, not only for optimization problems with fixed parameters but also for problems where the parameters may vary over time. In addition, we provide graphical results that demonstrate the efficiency of our dynamic capacity provision method for wireless sensors’ connectivity and the feasibility of the scenario.

One real-life scenario where our work may be useful is one in which an operator provides wireless connectivity to different kinds of sensors in a city. If the operator is able to estimate the sensors’ mean lifetime or can predict new sensor deployments, it can optimize the leased capacity over a long time period. In addition, if it is able to lease the capacity in advance, it may obtain a price reduction and, therefore, a reduction in its investment costs.

The main contributions of the article can be summarized as follows:

The provision of wireless sensors’ connectivity is shown to be economically feasible for all the actors if the investment costs of the service provision are bounded (sections “Game I: static analysis” and “Results and discussion”).

The capacity provision is a valid alternative to pricing techniques in profit maximization scenarios (section “Results and discussion”).

Dynamic optimization using optimal control is shown to be more efficient than optimization using equilibrium concepts (section “Results and discussion”).

Dynamic optimization makes it possible to optimize not only static but also changing IoT scenarios (section “OP optimal control and sinks’ distribution with dynamic parameters”).

The rest of this article is organized as follows: in section “General model,” we describe in detail the scenario, the behavior of the actors involved, the utility of the sinks, and the operator profit. In section “Game analysis,” the scenario is analyzed using a static and a dynamic model; the sinks’ subscription problem and the OP profit maximization problem are solved using game theory and optimization. Section “Results and discussion” presents and discusses the results, and section “Conclusion” draws the conclusions.

General model

We consider the IoT scenario depicted in Figure 1, in which several clusters upload their sensing data to the Internet through a network operator (OP). Each cluster has a large number of sensing nodes connected through a multi-hop wireless network27 and a sink node, which transmits the data collected by all the nodes in the cluster to the Internet through the OP. Our scenario is based on the work by Sanchis-Cano et al.24 and analyzes the interaction between the sinks and the OP. The analyzed model has the following market actors:

Sinks.

Network operator (OP).

Analyzed scenario with all the actors of the market.

Sinks

Each sink belongs to only one cluster and is responsible for transmitting all the data collected by the sensors in its cluster to the Internet. The sinks are the clients of the wireless connectivity service offered by the OP. The number of sinks is

In order to model the utility perceived by the sinks that subscribe to the OP, we use a quality function

where

where

We propose a utility function that models the sinks’ perception of the service offered by the OP as the difference between the quality perceived by the sinks and the price charged by the OP. This utility function is also known as compensated utility and is commonly used in telecommunications.28,34–36

where we have re-written the arrival rate as the traffic generated by all the sinks being served

The utility must be non-negative
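The compensated utility (quality minus price) can be illustrated numerically. The sketch below is not the quality function of the model, whose equations are given above; it assumes, hypothetically, that the traffic of the subscribed sinks is served as an M/M/1 queue with mean time in system T = 1/(C − λ), and that quality decreases linearly with delay, q(T) = α − βT. The names `sink_utility`, `alpha`, and `beta` and all numerical values are illustrative assumptions.

```python
import math


def mm1_mean_time(capacity: float, arrival_rate: float) -> float:
    """Mean time in system of an M/M/1 queue, 1/(C - lambda); infinite if unstable."""
    if arrival_rate >= capacity:
        return math.inf
    return 1.0 / (capacity - arrival_rate)


def sink_utility(x: float, n_sinks: int, rate: float, capacity: float,
                 price: float, alpha: float, beta: float) -> float:
    """Hypothetical compensated utility u = q(T) - p, with q(T) = alpha - beta*T.

    x is the share of sinks that subscribe, so the aggregate arrival rate is
    the traffic generated by all the sinks being served, x * n_sinks * rate.
    """
    t = mm1_mean_time(capacity, x * n_sinks * rate)
    if math.isinf(t):
        return -math.inf  # an overloaded system gives no useful service
    return alpha - beta * t - price


# Illustrative (made-up) parameter values.
params = dict(n_sinks=100, rate=1.0, capacity=110.0, price=5.0, alpha=10.0, beta=100.0)
for share in (0.5, 0.9, 1.0):
    u = sink_utility(share, **params)
    # A sink keeps its subscription only while its utility is non-negative.
    print(f"x = {share:.1f}: u = {u:+.3f}, subscribe = {u >= 0}")
```

With these values, the utility falls from about 3.33 at x = 0.5 to 0 at x = 0.9 and to −5 at x = 1, which is the congestion effect that drives the subscription equilibrium.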

Network operator

The OP offers a wireless connectivity service to the sinks that allows them to transmit the data collected and charges a price

The objective of the OP is to maximize its own profit by choosing the system capacity in order to provide a service ratio

where

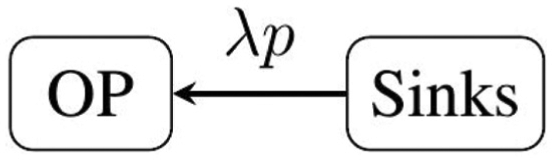

Figure 2 shows the payment flow described in this section: the amount of money received by the OP is proportional to the traffic generated by all the sinks being served, multiplied by the price that each sink pays per data unit.

Model payment flow and actors involved.
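The payment flow reduces to simple arithmetic. The sketch below assumes, following the Conclusion, a quadratic investment cost, written here as c·C² with a hypothetical coefficient `cost_coef`; the function name and all numbers are illustrative and are not the values of Table 1.

```python
def op_profit(x: float, n_sinks: int, rate: float, price: float,
              capacity: float, cost_coef: float) -> float:
    """Hypothetical OP profit: revenue from served traffic minus a quadratic cost.

    Revenue is the traffic of the subscribed sinks (x * n_sinks * rate data
    units per unit time) times the price per data unit; the investment cost
    is assumed to be cost_coef * capacity**2.
    """
    traffic = x * n_sinks * rate
    revenue = price * traffic
    investment = cost_coef * capacity ** 2
    return revenue - investment


# 90 served sinks sending 1 data unit each at price 5 give revenue 450;
# the assumed cost is 0.01 * 110**2 = 121.
print(op_profit(0.9, n_sinks=100, rate=1.0, price=5.0, capacity=110.0, cost_coef=0.01))
```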

Game analysis

The model described in the previous section can be analyzed as two games, each with two stages. The first game is a static analysis of the model, while the second is a dynamic analysis. Both games have the following structure: first, an optimization stage where the OP chooses the capacity that maximizes its profits, and second, a sinks’ subscription stage. The games are summarized in Figure 3.

Description of the game stages.

The games were solved as follows. First, Game I was solved: a static analysis was conducted and the equilibrium solutions were obtained. Second, Game II was solved, obtaining the optimal OP decisions and the social state as functions of time.

Both games were solved using backward induction, which allows us to find a subgame perfect Nash equilibrium (SPNE) of the proposed games. Backward induction consists of reasoning backward from the end of a problem to its beginning to infer a sequence of optimal actions. Any Nash equilibrium found using backward induction is a Nash equilibrium in every subgame or, equivalently, an SPNE.24,39
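Backward induction can be sketched for a finite leader-follower game: the follower’s best response is computed first for every leader action (Stage II), and the leader then picks the action that is best given those anticipated responses (Stage I). The toy payoffs below, with three capacity levels and a single follower that either subscribes or not, are hypothetical and only show the procedure.

```python
from typing import Callable, Dict, Hashable, Sequence, Tuple


def backward_induction(
    leader_actions: Sequence[Hashable],
    follower_actions: Sequence[Hashable],
    leader_payoff: Callable[[Hashable, Hashable], float],
    follower_payoff: Callable[[Hashable, Hashable], float],
) -> Tuple[Hashable, Dict[Hashable, Hashable]]:
    """Return the leader's SPNE action and the follower's best-response map."""
    # Stage II (solved first): best response of the follower to each leader action.
    best_response = {
        a: max(follower_actions, key=lambda b: follower_payoff(a, b))
        for a in leader_actions
    }
    # Stage I: the leader anticipates the follower's reaction to each action.
    a_star = max(leader_actions, key=lambda a: leader_payoff(a, best_response[a]))
    return a_star, best_response


# Hypothetical toy game: capacity level a in {1, 2, 3}; the follower subscribes (b = 1) or not (b = 0).
def follower(a, b):
    return b * (a - 1.5)  # subscribing only pays off with capacity >= 2


def leader(a, b):
    return 4.0 * b - a    # revenue 4 if the follower subscribes, minus a capacity cost


a_star, br = backward_induction([1, 2, 3], [0, 1], leader, follower)
print(a_star, br)
```

The pair (a_star, br) is the SPNE: the best-response map specifies the follower’s optimal action in every subgame, not only on the equilibrium path.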

Game I: static analysis

This game analyzes our scenario using a static model, where all the parameters are fixed. In this game, the actors act with perfect rationality and their decisions are instantaneous. The solution of this game is a Nash equilibrium, where no actor has an incentive to change its own decisions.

Stage II: sinks’ subscription game

This stage is played once the OP has fixed its

Population game

Strategies:

Social state:

Payoffs:

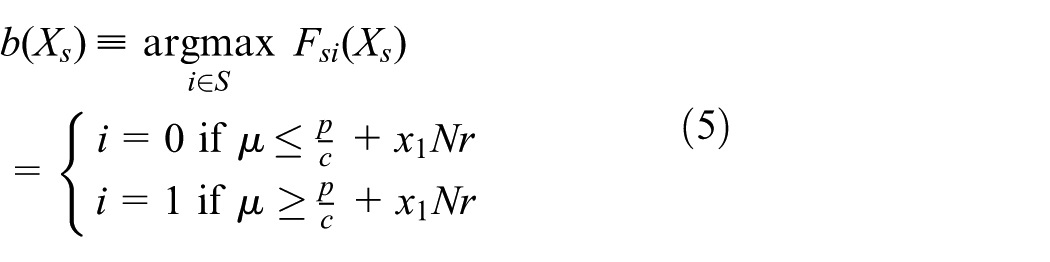

Pure best response

The pure best response

where

Mixed best response

The mixed best response

where

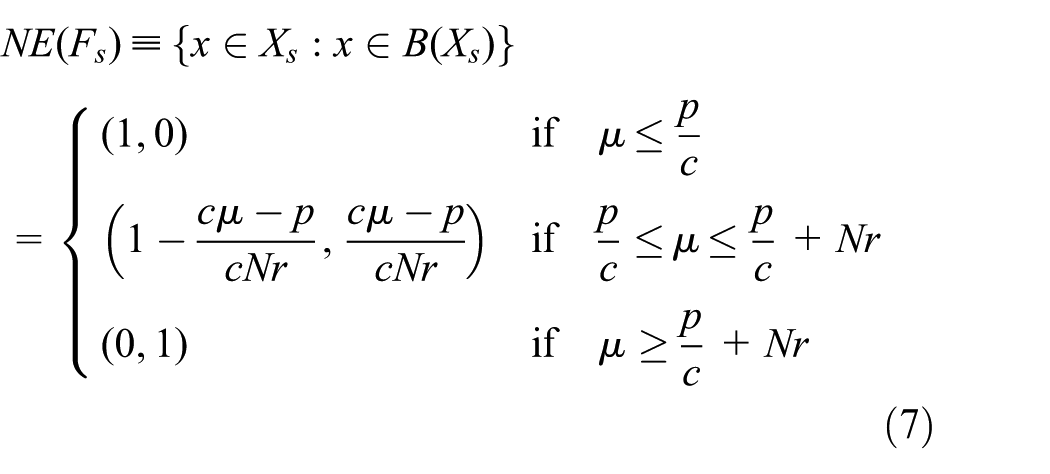

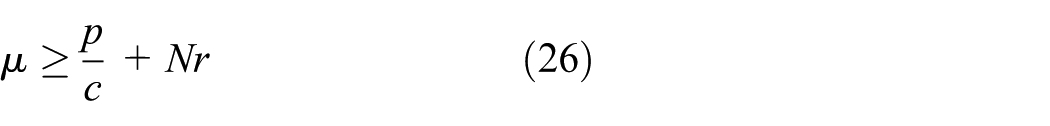

Nash equilibrium

At this point, social state

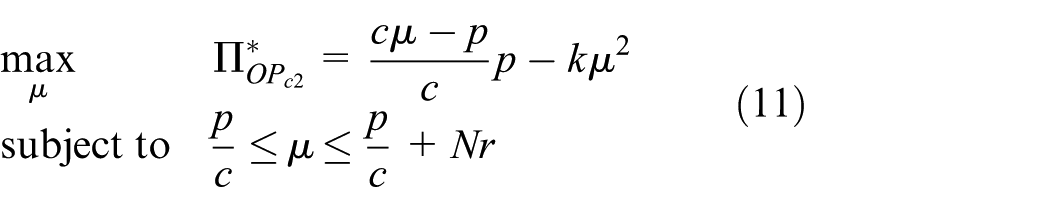

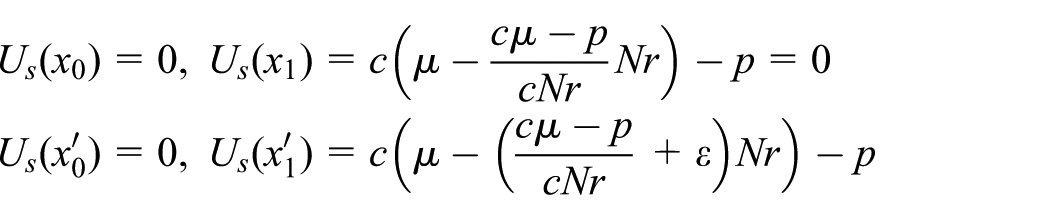
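For a two-strategy population game in which not subscribing yields zero utility and the utility of subscribing decreases as more sinks subscribe (congestion), the Nash equilibrium share of subscribers falls into three cases: nobody subscribes, everybody subscribes, or an interior share at which subscribing and not subscribing give the same utility. The sketch below finds that share by bisection. It uses a hypothetical congestion utility, u(x) = α − β/(C − xNλ) − p, whose form and parameter values are illustrative assumptions rather than the model’s equations.

```python
import math


def sink_utility(x, n_sinks=100, rate=1.0, capacity=110.0, price=5.0, alpha=10.0, beta=100.0):
    """Hypothetical utility of subscribing when a share x of the sinks subscribes."""
    load = x * n_sinks * rate
    if load >= capacity:
        return -math.inf
    return alpha - beta / (capacity - load) - price


def population_equilibrium(u, tol=1e-12):
    """Nash equilibrium share of subscribers for a utility u(x) decreasing in x."""
    if u(0.0) <= 0.0:
        return 0.0            # even the first subscriber would not benefit
    if u(1.0) >= 0.0:
        return 1.0            # subscribing pays off even when everybody subscribes
    lo, hi = 0.0, 1.0         # mixed case: u(lo) > 0 > u(hi)
    while hi - lo > tol:
        mid = 0.5 * (lo + hi)
        if u(mid) > 0.0:
            lo = mid
        else:
            hi = mid
    return 0.5 * (lo + hi)


print(population_equilibrium(sink_utility))                                # mixed
print(population_equilibrium(lambda x: sink_utility(x, capacity=200.0)))   # all subscribe
print(population_equilibrium(lambda x: sink_utility(x, price=10.0)))       # none subscribe
```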

Stage I: OP capacity optimization

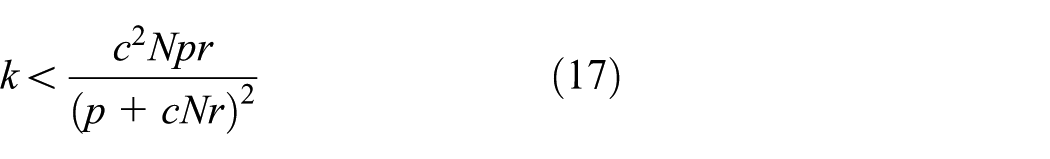

In this stage, the OP wants to maximize its profit given by equation (4) using

Case 1:

where

Note that in this case, it is not possible to obtain positive profit.

Case 2:

The problem in equation (11) is solved using Karush–Kuhn–Tucker (KKT) conditions and its solution is as follows

Case 3:

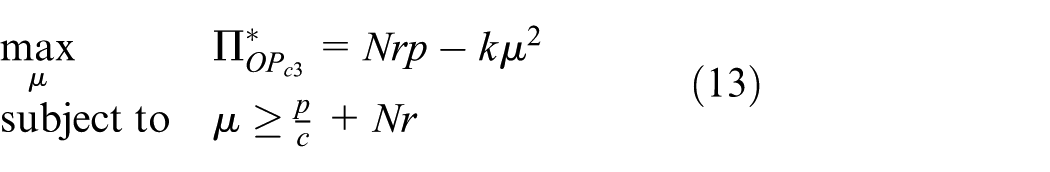

The problem in equation (13) is solved again using KKT conditions and its solution is as follows

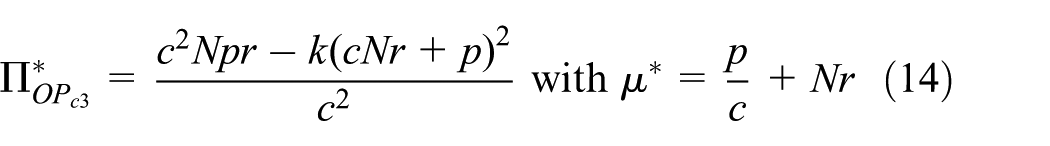

Given that the first part of equation (12) is always greater than equation (14) under the problem constraints, the OP optimal profit can be summarized as follows

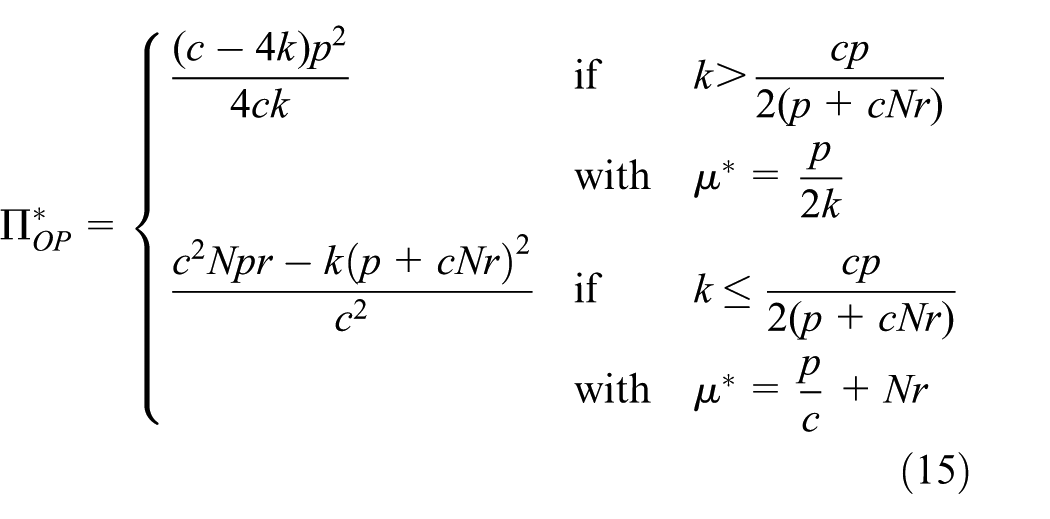

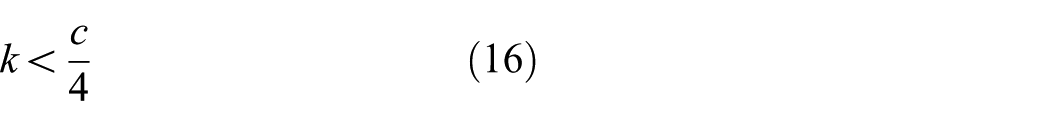

Analyzing the previous results, we observe that

Case

Case

In this case, there are two possible interpretations, depending on which condition is more restrictive: equation (17) or

As shown in the previous analysis, the value of
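Stage I can also be checked numerically by backward induction: for each candidate capacity, the sinks’ equilibrium share is computed first, and the OP then keeps the capacity with the highest profit. The sketch below uses a plain grid search instead of the KKT conditions, together with a hypothetical congestion utility and an assumed quadratic investment cost c·C²; all functional forms, names, and numbers are illustrative assumptions.

```python
def sink_utility(x, capacity, n_sinks=100, rate=1.0, price=5.0, alpha=10.0, beta=100.0):
    """Hypothetical utility of subscribing: alpha - beta / (C - x*N*rate) - price."""
    load = x * n_sinks * rate
    if load >= capacity:
        return float("-inf")
    return alpha - beta / (capacity - load) - price


def equilibrium_share(capacity, tol=1e-12):
    """Stage II: Nash equilibrium share of subscribers for a given capacity."""
    def u(x):
        return sink_utility(x, capacity)

    if u(0.0) <= 0.0:
        return 0.0
    if u(1.0) >= 0.0:
        return 1.0
    lo, hi = 0.0, 1.0
    while hi - lo > tol:
        mid = 0.5 * (lo + hi)
        lo, hi = (mid, hi) if u(mid) > 0.0 else (lo, mid)
    return 0.5 * (lo + hi)


def op_profit(capacity, cost_coef, n_sinks=100, rate=1.0, price=5.0):
    """Stage I objective: revenue from the equilibrium traffic minus c*C**2."""
    x = equilibrium_share(capacity)
    return price * x * n_sinks * rate - cost_coef * capacity ** 2


def optimal_capacity(cost_coef, c_max=200.0, n_points=4000):
    """Grid search over C in [0, c_max]; returns (best capacity, best profit)."""
    grid = [c_max * i / n_points for i in range(n_points + 1)]
    best = max(grid, key=lambda c: op_profit(c, cost_coef))
    return best, op_profit(best, cost_coef)


for c in (0.05, 0.01):
    cap, profit = optimal_capacity(c)
    print(f"cost_coef = {c}: C* = {cap:.2f}, profit = {profit:.2f}, x* = {equilibrium_share(cap):.3f}")
```

With these made-up numbers, the larger cost coefficient gives an interior optimum (C* = 50, x* = 0.3), while the smaller one pushes the capacity up to the point where all sinks subscribe (C* = 120, x* = 1), which illustrates the kind of case distinction that the KKT analysis handles analytically.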

Game II: dynamic analysis

This game analyzes our scenario using a dynamic model, where the parameters and the decisions of the actors may change over time. The dynamic analysis was conducted using evolutionary game theory for the sinks’ subscription stage, while optimal control theory and the PMP were used for the OP capacity optimization stage.

Stage II: sinks’ evolutionary subscription game

In order to maximize the user utility described in equation (3), we define the following evolutionary game:

Strategies:

Social state:

Payoffs:

The sinks use a set of rules to update their strategies. This set of rules is known as a revision protocol40 and determines the evolutionary dynamic. There are several revision protocols, but we are interested in imitative protocols and direct selection protocols. In imitative protocols, users update their strategies taking into account the strategies chosen by other users, but imitative protocols admit boundary rest points that are not Nash equilibria of the underlying game.41 On the other hand, direct selection protocols are not directly influenced by the choices of others, and this characteristic prevents such boundary rest points. In this work, we have chosen an imitative protocol, given that it is analytically tractable and widely used in the literature. However, we need to be cautious about the boundary rest points.

The revision protocol used in this work can be described by the following action:

At the time instant

This revision protocol was introduced by Schlag42 in a population game context. Under this protocol, a user switches its strategy only if the other user obtains a higher utility. The switching rate is proportional to the difference in utility and to the number of users in the destination strategy. The protocol has D2 data requirements.41
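The revision protocol can be simulated directly with agents. The sketch below is one possible discrete-time implementation of pairwise proportional imitation for the two strategies (subscribe or not): a randomly chosen sink observes another randomly chosen sink and, if that sink uses the other strategy and obtains a higher utility, switches with probability equal to the utility difference divided by a constant K that bounds all such differences. The congestion utility, the population size, K, and the random seed are hypothetical choices for illustration.

```python
import random


def sink_utility(x, n_sinks=1000, rate=0.1, capacity=110.0, price=5.0, alpha=10.0, beta=100.0):
    """Hypothetical utility of subscribing when a share x of the sinks subscribes."""
    load = x * n_sinks * rate
    if load >= capacity:
        return float("-inf")
    return alpha - beta / (capacity - load) - price


def simulate_imitation(n_agents=1000, x0=0.2, revisions=200_000, k=10.0, seed=1):
    """Pairwise proportional imitation; returns the final share of subscribers."""
    rng = random.Random(seed)
    subscribers = round(x0 * n_agents)
    for _ in range(revisions):
        x = subscribers / n_agents
        u_sub, u_out = sink_utility(x, n_sinks=n_agents), 0.0  # not subscribing yields 0
        reviser_subscribed = rng.random() < x   # revising agent drawn uniformly
        model_subscribed = rng.random() < x     # observed agent drawn uniformly
        if reviser_subscribed == model_subscribed:
            continue                            # same strategy: nothing to imitate
        gain = (u_out - u_sub) if reviser_subscribed else (u_sub - u_out)
        # Switch with probability proportional to the positive utility difference
        # (k must be at least the largest possible difference, here 5).
        if gain > 0 and rng.random() < gain / k:
            subscribers += -1 if reviser_subscribed else 1
    return subscribers / n_agents


print(simulate_imitation())
```

Because the observed sink is drawn uniformly, switching towards a strategy happens at a rate proportional to the share of users already playing it, which is what makes the protocol imitative.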

The mean dynamic can be derived from the proposed revision protocol (equation (18)) as follows

where

Given that

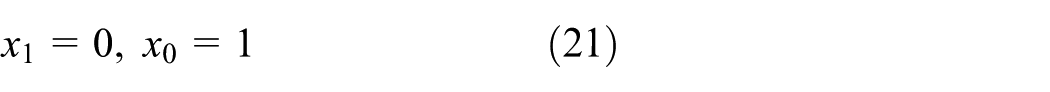

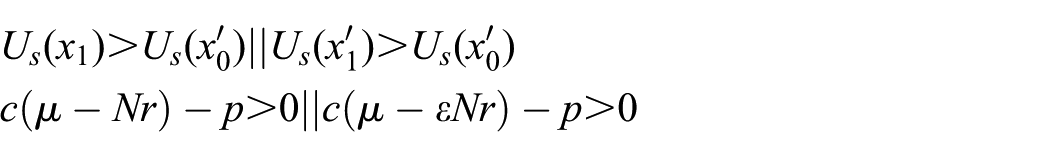
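For two strategies, the replicator dynamic reduces to a single equation for the share x of subscribers, dx/dt = x(1 − x)[u_S(x) − u_N(x)], where u_N = 0 is the utility of not subscribing; the mean dynamic of pairwise proportional imitation has this form up to a constant rescaling of time. The sketch below integrates it with a fixed-step fourth-order Runge–Kutta scheme under a hypothetical congestion utility (an illustrative assumption, not the model’s equation). It also exhibits the caveat about imitative protocols: the boundary states x = 0 and x = 1 are rest points even when they are not Nash equilibria.

```python
def sink_utility(x, n_sinks=100, rate=1.0, capacity=110.0, price=5.0, alpha=10.0, beta=100.0):
    """Hypothetical utility of subscribing; not subscribing yields 0."""
    load = x * n_sinks * rate
    if load >= capacity:
        return float("-inf")
    return alpha - beta / (capacity - load) - price


def replicator(x):
    """Two-strategy replicator dynamic: dx/dt = x (1 - x) (u_S(x) - 0)."""
    return x * (1.0 - x) * (sink_utility(x) - 0.0)


def integrate(x0, t_end=50.0, dt=0.01):
    """Fixed-step RK4 integration of the replicator dynamic from x(0) = x0."""
    x = x0
    for _ in range(round(t_end / dt)):
        k1 = replicator(x)
        k2 = replicator(x + 0.5 * dt * k1)
        k3 = replicator(x + 0.5 * dt * k2)
        k4 = replicator(x + dt * k3)
        x += dt * (k1 + 2 * k2 + 2 * k3 + k4) / 6
    return x


for x0 in (0.0, 0.1, 0.5, 0.99, 1.0):
    print(f"x(0) = {x0:.2f} -> x(50) = {integrate(x0):.6f}")
```

With these made-up numbers, every interior starting share approaches x = 0.9, whereas trajectories starting exactly at x = 0 or x = 1 never move, although neither boundary state is a Nash equilibrium here (u_S(0) > 0 and u_S(1) < 0).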

Dynamic stationary points

The dynamic reaches a stationary point when no user is willing to change its strategy or, equivalently, when

Solving the previous equation and assuming that

Case 1

Case 2

Case 3

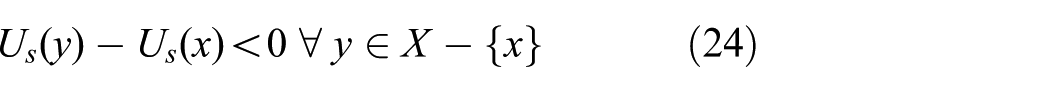
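For the two-strategy dynamic, the stationary points are x = 0, x = 1, and, when it exists, the interior share at which the utility of subscribing equals zero. A quick local classification uses the sign of the derivative of the right-hand side at each point (negative means locally attracting). The sketch below does this with finite differences for a hypothetical congestion utility; it complements, but does not replace, the GESS analysis that follows, which is a global criterion.

```python
def sink_utility(x, n_sinks=100, rate=1.0, capacity=110.0, price=5.0, alpha=10.0, beta=100.0):
    """Hypothetical utility of subscribing; not subscribing yields 0."""
    load = x * n_sinks * rate
    if load >= capacity:
        return float("-inf")
    return alpha - beta / (capacity - load) - price


def rhs(x):
    """Right-hand side of the replicator dynamic for the share of subscribers."""
    return x * (1.0 - x) * sink_utility(x)


def interior_rest_point(tol=1e-12):
    """Interior share where u_S(x) = 0, or None if u_S does not change sign."""
    lo, hi = 0.0, 1.0
    if not (sink_utility(lo) > 0.0 > sink_utility(hi)):
        return None
    while hi - lo > tol:
        mid = 0.5 * (lo + hi)
        lo, hi = (mid, hi) if sink_utility(mid) > 0.0 else (lo, mid)
    return 0.5 * (lo + hi)


def slope(x, h=1e-6):
    """Finite-difference derivative of rhs, one-sided at the boundaries."""
    if x <= 0.0:
        return (rhs(h) - rhs(0.0)) / h
    if x >= 1.0:
        return (rhs(1.0) - rhs(1.0 - h)) / h
    return (rhs(x + h) - rhs(x - h)) / (2 * h)


points = [0.0, 1.0]
x_star = interior_rest_point()
if x_star is not None:
    points.append(x_star)
for p in points:
    s = slope(p)
    # Only the sign is used; a slope of exactly zero would be inconclusive.
    print(f"x = {p:.4f}: slope = {s:+.4f} -> {'stable' if s < 0 else 'unstable'}")
```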

Stability of stationary points

Once we have found the stationary points, it is necessary to characterize their stability. Consider a steady state

which means that the utility perceived by the sinks which did not switch their strategy from state

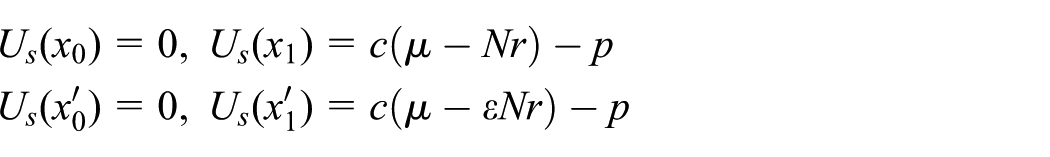

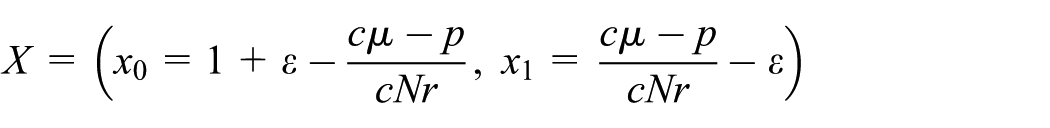

Case 1:

Consider that a number of sinks

The utility of sinks in both states is as follows

This steady state is a GESS if

For all the possible values of

Case 2:

Consider that a number of sinks

The utility of sinks in both states is as follows

This steady state is a GESS if

For all the possible values of

Case 3:

Consider that a number of sinks

The utility of sinks in both states is as follows

The necessary conditions to be a GESS are as follows

For all the possible values of

On the other hand, if we analyze the case when a number of sinks

The utility of sinks in both states is as follows

The necessary conditions to be a GESS are as follows

For all the possible values of

With equations (27) and (28), we have the sufficient conditions under which this state is a GESS

In the previous analysis, we have demonstrated that there is a GESS for all the possible values of the control variable

Note that when one of the steady states deduced in equations (21)–(23) is a GESS, it is unique. Figure 4 shows a particular case when the GESS is the mixed strategy equilibrium (equation (23)).

Replicator dynamic convergence when the GESS is a mixed equilibrium.
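In population games, a state x* is a globally evolutionarily stable state (GESS) if (y − x*) · F(y) < 0 for every other state y, where F collects the payoffs of the strategies. With two strategies and zero utility for not subscribing, this reduces to (y − x*) u_S(y) < 0 for all y ≠ x*: whenever the share of subscribers is above x*, subscribing must be worse than not subscribing, and vice versa. The sketch below checks this condition on a grid for a hypothetical congestion utility; a grid check is only a numerical indication, not a proof like the analysis above.

```python
def sink_utility(x, n_sinks=100, rate=1.0, capacity=110.0, price=5.0, alpha=10.0, beta=100.0):
    """Hypothetical utility of subscribing; not subscribing yields 0."""
    load = x * n_sinks * rate
    if load >= capacity:
        return float("-inf")
    return alpha - beta / (capacity - load) - price


def is_gess(x_star, u=sink_utility, n_grid=1000, tol=1e-9):
    """Grid check of (y - x*) * (u_S(y) - 0) < 0 for all states y != x*."""
    for i in range(n_grid + 1):
        y = i / n_grid
        if abs(y - x_star) <= tol:
            continue                      # the condition excludes y = x* itself
        if (y - x_star) * u(y) >= 0.0:
            return False                  # this deviation is not pushed back towards x*
    return True


for candidate in (0.0, 0.9, 1.0):
    print(candidate, is_gess(candidate))
```

With these made-up numbers, only the mixed state x* = 0.9 passes the check; the boundary rest points x = 0 and x = 1 fail it, consistent with the uniqueness of the GESS noted above.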

Stage I: OP dynamic capacity optimization

The capacity optimization stage was solved using optimal control theory,26 which allows us to perform a dynamic optimization within a time horizon and not only at the steady states. As a result of the dynamic optimization, we obtained a control function in every instant of time

where

In order to solve the previous problem, we used the PMP, which provides the necessary conditions to find the candidate optimal strategies for the open-loop case. The Hamiltonian function of the OP is defined as follows

where

Following the PMP, all candidate optimal strategies must satisfy the necessary conditions

where equation (32) is the maximality condition, equation (33) is the replicator dynamic, which models the behavior of the sinks, equation (34) is the adjoint equation, and equation (35) is the transversality condition. Solving equation (32), we obtain the candidate optimal strategy in terms of the state

Substituting the optimal candidate strategy, equation (36), into the remaining PMP conditions and adding the initial state condition, we obtain the system of ordinary differential equations (ODEs) shown in equation (37)

This system is a two-point boundary value problem (TPBVP) and cannot be solved using traditional initial value methods for ODEs, given that it does not have initial conditions for all its variables. Instead, it has an initial condition and an end condition. This problem was solved numerically using the shooting method.44

Given that the shooting method requires a good initial estimate for the value of
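The shooting method turns a two-point boundary value problem into a root-finding problem: guess the unknown initial costate, integrate the state and costate equations forward, and adjust the guess until the transversality condition holds at the final time. The sketch below applies it to a deliberately simple toy problem, not to the system in equation (37): maximize the integral from 0 to T of −(x² + u²)/2 subject to dx/dt = u and x(0) = 1, with x(T) free. The PMP gives u* = λ, dλ/dt = x, and λ(T) = 0, so the exact answer λ(0) = −tanh T is available for checking. The toy problem, the bisection bracket, and the step counts are illustrative assumptions.

```python
import math


def pmp_rhs(t, y):
    """State and costate equations of the toy problem after using u* = lam."""
    x, lam = y
    return [lam, x]  # x' = u* = lam (maximality), lam' = -dH/dx = x (adjoint)


def rk4(f, y0, t0, t1, steps):
    """Fixed-step fourth-order Runge-Kutta integration of y' = f(t, y)."""
    h = (t1 - t0) / steps
    t, y = t0, list(y0)
    for _ in range(steps):
        k1 = f(t, y)
        k2 = f(t + h / 2, [yi + h / 2 * ki for yi, ki in zip(y, k1)])
        k3 = f(t + h / 2, [yi + h / 2 * ki for yi, ki in zip(y, k2)])
        k4 = f(t + h, [yi + h * ki for yi, ki in zip(y, k3)])
        y = [yi + h / 6 * (a + 2 * b + 2 * c + d)
             for yi, a, b, c, d in zip(y, k1, k2, k3, k4)]
        t += h
    return y


def terminal_costate(s, x0=1.0, horizon=1.0, steps=200):
    """lam(T) obtained by integrating from x(0) = x0 with the guess lam(0) = s."""
    return rk4(pmp_rhs, [x0, s], 0.0, horizon, steps)[1]


def shoot(lo=-2.0, hi=2.0, tol=1e-12):
    """Bisection on lam(0) until the transversality condition lam(T) = 0 holds."""
    f_lo = terminal_costate(lo)
    if f_lo * terminal_costate(hi) > 0:
        raise ValueError("the bracket does not contain a sign change")
    while hi - lo > tol:
        mid = 0.5 * (lo + hi)
        f_mid = terminal_costate(mid)
        if (f_mid > 0) == (f_lo > 0):
            lo, f_lo = mid, f_mid
        else:
            hi = mid
    return 0.5 * (lo + hi)


s = shoot()
print(s, -math.tanh(1.0))  # shooting estimate vs. analytic lam(0) for T = 1
```

For the OP problem, the same loop would integrate the replicator and adjoint equations with the maximality condition substituted; there, the terminal costate may depend strongly and nonlinearly on the initial guess, which is why a good starting estimate and a valid bracket matter.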

Results and discussion

In this section, we present the numerical results for the static and dynamic games analyzed in the previous section. The results were obtained for the case when the equilibrium is a mixed strategy. The figures were calculated for the values shown in Table 1 unless otherwise specified.

Reference Case 1—static parameters.

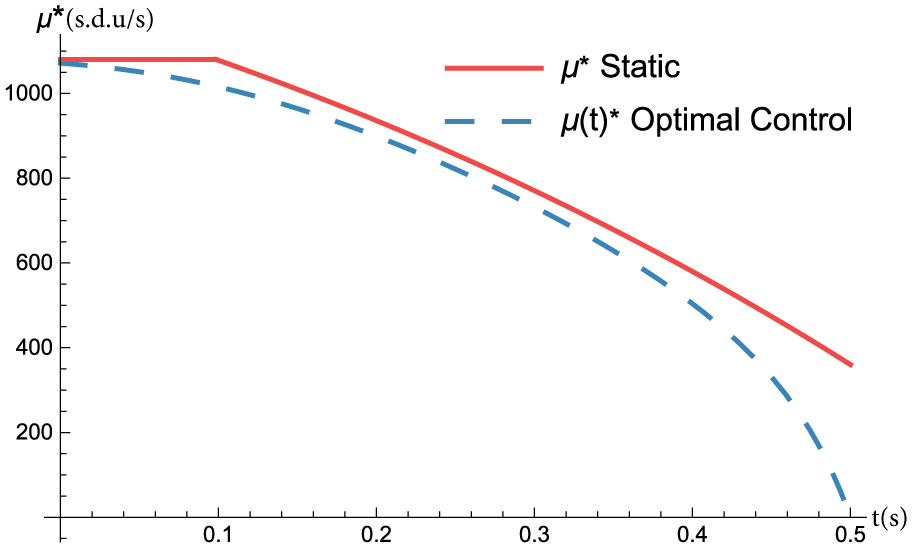

OP optimal control and sinks’ distribution with static parameters

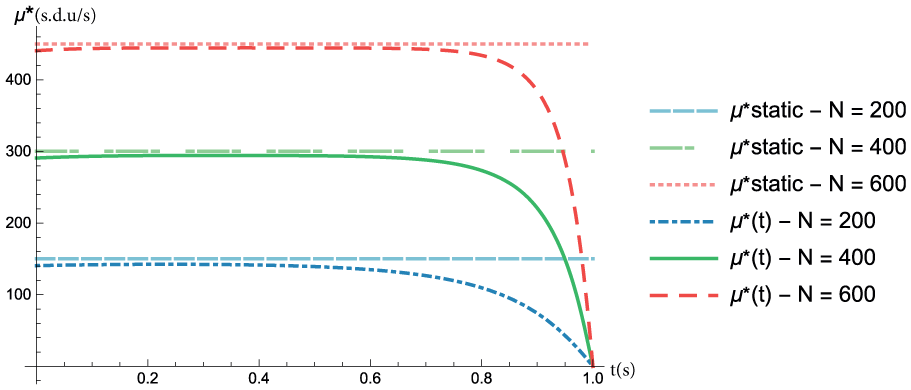

In order to study the static and dynamic results, we show the optimal capacity

Figure 5 shows the OP optimal capacity in the static case and in the dynamic case for different values of

OP optimal capacity in the static and dynamic cases for different values of

Social state in the static and dynamic cases for different values of

OP optimal control and sinks’ distribution with dynamic parameters

In this section, we show the evolution of the optimal capacity

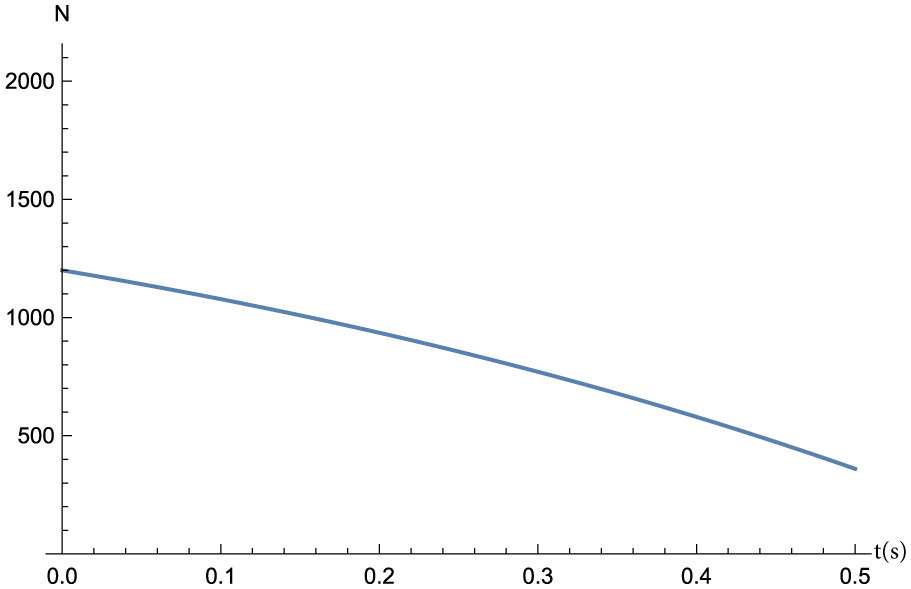

Scenario 1: evolution of the number of sinks

Scenario 1: OP optimal capacity in the cases with static and dynamic optimization as a function of

Scenario 1: social state in the three studied cases as a function of

Scenario 1: evolution of the OP profits for different strategies as a function of

Scenario 2: evolution of the number of sinks

Scenario 2: OP optimal capacity in the cases with static and dynamic optimization as a function of

Scenario 2: social state in the three studied cases as a function of

Scenario 2: evolution of the OP profits for different strategies as a function of

Reference Case 2.1—dynamic common parameters.

Three different cases are shown in both scenarios:

Case 1. In this case, the values of

Case 2. In this case, the value of

Case 3. In this case, the values of

Scenario 1

This scenario models a decreasing number of sensors over time due to sensor failures during their lifetime, as shown in Table 3 and Figure 7. The figures were calculated for the values shown in Tables 2 and 3.

Reference Case 2.1—dynamic non-common parameters.

Because the number of sensors N varies, the optimal decision for the OP may change over time. Figure 8 shows how the system is able to adapt its decisions to variations not only in the distribution of the sinks but also in the system parameters. The difference between Cases 1 and 2 and Case 3 is small for small values of

Scenario 2

This scenario models an increasing number of sensors over time due to a progressive deployment of new sensors, as shown in Table 3 and Figure 11. The figures were calculated for the values shown in Tables 2 and 3.

As in the previous scenario, the change in the number of sensors alters the OP optimal static solution

Conclusion

A capacity provision scenario for wireless sensors’ connectivity has been studied using mathematical modeling. The scenario was studied using both a static model and a more complex, but also more realistic, dynamic model. The analysis was conducted using concepts such as game theory, replicator dynamics, optimal control, and optimization.

The behavior of the sensors was modeled through a utility function based on a congestion model, while the subscription decision was modeled using both the static equilibrium and the replicator dynamic. The network operator profit was modeled using the revenues obtained from the sensors and a quadratic investment cost function. The optimal profit in a defined time interval was obtained by solving an optimal control problem, using the network capacity as the control variable, and compared against the static optimization.

It has been shown that optimization using optimal control, when the users are modeled using the replicator dynamic, allows the OP to obtain higher profits than optimization using the equilibrium solution. In addition, dynamic optimization allowed the operator to optimize its profits not only in a scenario with fixed parameters but also in a scenario where the system parameters, such as the number of sensors, change over time. Given the obtained results, we can conclude that the proposed scenario is feasible from an economic point of view for all the actors. In addition, we have shown that optimal control theory is a profitable and powerful tool for maximizing network operator profits in dynamic IoT scenarios.

Future work will involve the dynamic profit optimization of more complex scenarios with several competing operators using differential games.

Footnotes

Handling Editor: Jaime Lloret

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Spanish Ministry of Economy and Competitiveness through project TIN2013-47272-C2-1-R; AEI/FEDER, UE through project TEC2017-85830-C2-1-P; and co-supported by the European Social Fund BES-2014-068998.