Abstract

The original fruit fly optimization algorithm, as well as some of its improved versions, may fail to find the function extremum when it falls far from the origin point or in the negative range. To address this problem, in this article, we propose a new fruit fly optimization algorithm, named as the traction fruit fly optimization algorithm, which is mainly based on the combination of “traction population” and dynamic search radius. In traction fruit fly optimization algorithm, traction population consists of the worst individual recorded in the iterative process, the individual in the center of the interval, and the best fruit flies individual through different transformations, which is used to avoid the algorithm stopping at a local optimal. Moreover, our dynamic search radius strategy will ensure a wide search range in the early stage and enhance the local search capability in the latter part of the algorithm. Extensive experiment results show that traction fruit fly optimization algorithm is superior to fruit fly optimization algorithm and its other improved versions in the optimization of extreme values of continuous functions. In addition, through solving the service composition optimization problem, we prove that traction fruit fly optimization algorithm can also obtain a better performance in the discrete environment.

Keywords

Introduction

Algorithms based on swarm intelligence (SI) serve as an effective way of solving complex optimization problems. 1 Compared with traditional intelligent algorithms, such as a genetic algorithm (GA), SI shows a better behavior in terms of simplicity and effectiveness. Many SI-based algorithms have been widely used in both scientific and production fields. 2 These algorithms mimic the social behavior of species or natural mechanisms to find the best possible result for the given problem. The most popular SI algorithms include ant colony optimization (ACO), 3 which is inspired by the information exchange mechanism of ants when foraging; particle swarm optimization (PSO), 4 which simulates the foraging behavior of bird swarms; locust swarm algorithm (LSA), 5 which was extended from PSO; and the fish swarm algorithm (FSA), 6 which is inspired by the natural aggregation behavior of fishes.

However, all the algorithms mentioned above have their shortcomings. For example, due to excessive dependence on the parameter settings, PSO is prone to converge to a local optimum, ACO weakens its performance due to slow convergence, LSA requires additional steps to solve the problem, while the intricacy of FSA hinders itself from practical applications.

Fruit fly optimization algorithm (FOA) is a novel global optimization algorithm presented by Pan in 2011, which is inspired by the foraging behavior of fruit flies. Fruit flies have an astute sense of smell and sight in comparison with other similar species. By identifying and collecting the odors floating in the air, fruit files are able to locate food sources or fellow creatures, and then with the help of acute vision, they can be directed toward their destination. 7 This technique has been widely applied in real-world problems, such as the solution to function extremum, 8 the enhancement of the accuracy of enterprises financial crisis warning, 9 the improvement of generalized regression neural network, and the PID parameter optimization. 10

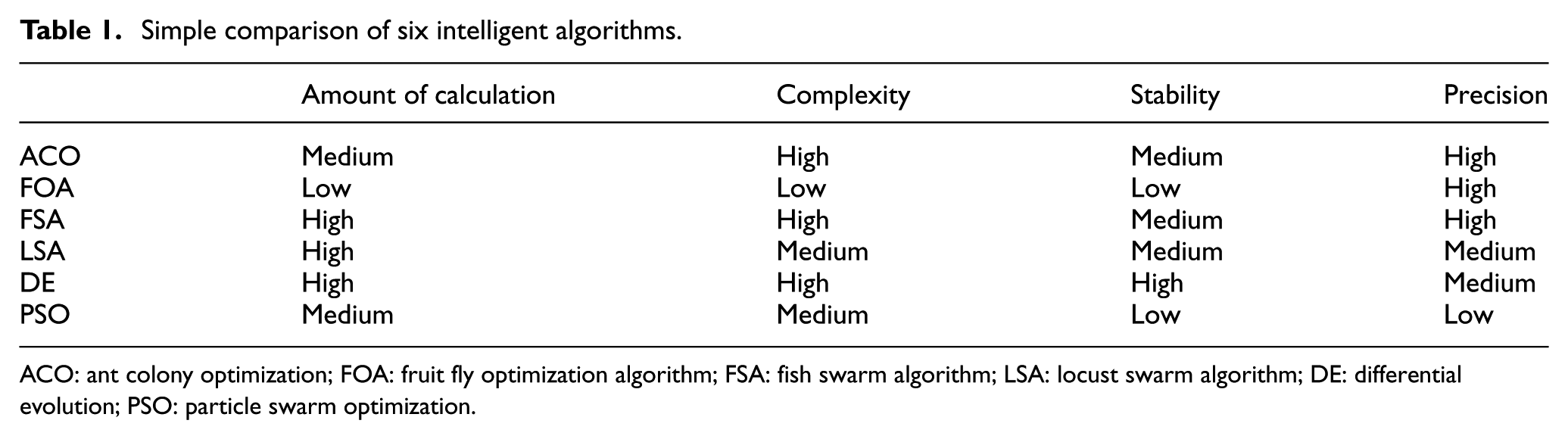

The performance of FOA was compared with ACO, FSA, LSA, differential evolution (DE) algorithm,11,12 and PSO, as shown in Table 1.

Simple comparison of six intelligent algorithms.

ACO: ant colony optimization; FOA: fruit fly optimization algorithm; FSA: fish swarm algorithm; LSA: locust swarm algorithm; DE: differential evolution; PSO: particle swarm optimization.

In general, FOA features are simple to implement, require less parameters to be set, and have strong local searching ability.

However, FOA also has its limitations. Specifically, the diversity of an individual is easy to lose, which leads to the premature problem of the algorithm. In addition, the original FOA cannot find the function extremum when it falls either in the negative range or not around the origin point. Furthermore, the local search capability of the FOA is weak because of the improper osphresis operation and vision operation.13,14 To tackle the above problems, the chaotic mechanism is introduced into the optimization algorithm named chaotic fruit fly optimization algorithm (CFOA).

7

The major limitation of CFOA is that it cannot find the extremum in some complex benchmark functions. QK Pan et al.

8

proposed an improved fruit fly optimization algorithm (IFFO) by improving the method of individual generation. The performance of IFFO was analyzed comprehensively using six benchmark functions. However, its convergence is still very slow. Dan Shan et al.

14

proposed a new improved fruit fly algorithm named linear generation mechanism of candidate solution (LGMS)-FOA based on a linear mechanism, which directly substitutes the individual into a fitness function and generates a new fruit fly individual by dynamically adjusting the parameter

To tackle the limitations of FOA, this article presents an improved FOA algorithm, called the traction fruit fly optimization algorithm (TFOA). The main contributions are as follows:

The deficiency of the original algorithm is analyzed in detail. The reason why FOA has a tendency to fall into a local optimum is explained.

TFOA can escape from a local optimum and achieve a much better global optimum using a traction population and a dynamic search radius mechanism.

Extensive experiments were conducted to evaluate the effectiveness of TFOA and showed that TFOA outperformed the original FOA as well as its other extensions in both the continuous and discrete environments.

The rest of the article is organized as follows. In section “FOA,” the basic FOA is presented. The TFOA is described in detail in section “The Improved Algorithm—TFOA.” TFOA is evaluated experimentally in section “Experimental evaluations.” Section “Conclusion” delivers the conclusion and expectations of this study.

FOA

FOA can be summarized thoroughly with the following seven steps:

where

The improved algorithm—TFOA

This section presents an improved FOA algorithm by introducing a traction mechanism and a dynamic search radius. The algorithm for the traction mechanism is stated in detail first, followed by an elaboration of the TFOA algorithm.

The generation of the traction population

Figure 1 displays the basic optimization process of the algorithm. The algorithm will be trapped in a local optimum without finding the global minimum. TFOA records the worst individual of each iteration round. When the algorithm cannot find a better solution, using the recorded individuals to explore a larger solution space in the opposite direction of the evolution, it will find a better solution, as shown in Figure 2. When the algorithm finds a better solution, it will change the location of the fruit fly population and restart the basic search in the FOA algorithm, thus finding the real minimum value in the end.

FOA optimization process.

The method used in TFOA to escape a local optimum.

This article applies the above to the generation of a traction population. The purpose of the traction population is to guide the algorithm to jump out of the local optimum. To further enhance the diversity of the traction population and improve the convergence rate, the algorithm also creates some individuals around the center solution space. The entire generation process of the traction population is demonstrated in Algorithm 1.

The above algorithm first calculates the direction of evolution according to equation (8) and then follows the transformation rule in line 5 to generate a new individual. After finding the center position (line 11), multiple individuals are randomly generated around it. The final output is the population (

The dynamic search radius strategy

In the original FOA, the search radius is fixed and always searches in one direction. This leads to a slow convergence speed and a tendency to fall into a local optimum. An appropriate search radius is located in the early stages of the algorithm to ensure that the algorithm finds as many solutions as possible. In the latter stages of the algorithm, we can find new solutions and an optimal solution. Therefore, the search radius should be closely related to the number of iterations and the size of the search interval.

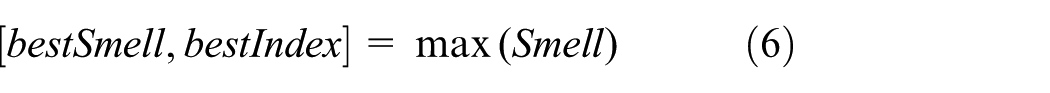

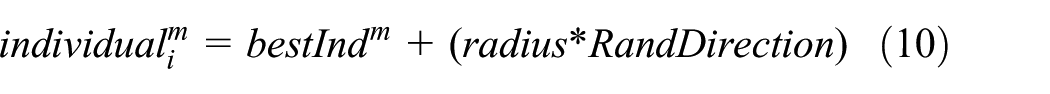

A new method for calculating the search radius presented in this article is shown in equation (9) in the process of generating new individuals by adding a random direction to avoid single direction search

where

New individuals are generated according to equation (10), where

Implementation of the TFOA algorithm

By combining the two strategies mentioned above with the basic FOA algorithm, Algorithm 2 illustrates the entire process of the TFOA via an example of finding the minimum value of a function.

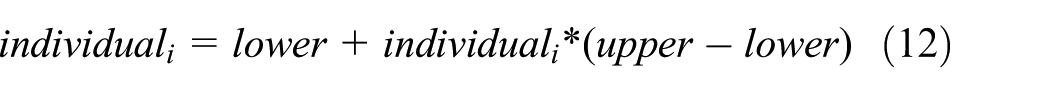

Since the convergence speed is greatly affected by the initial position, a new method for locating the initial population position is applied in TFOA. According to equations (11) and (12), a chaotic mechanism is used to generate the first fruit fly population. The best individual is then chosen as the group’s initial position

where

The execution sequence of TFOA is performed as follows:

Time complexity analysis of TFOA

The following is a brief analysis of the time complexity of TFOA, mainly focusing on the effect of traction on the performance of the algorithm. When the algorithm falls into a local optimum, it is necessary to carry out a traction operation; this operation is performed up to

The time complexity of TFOA is higher than the original algorithm due to the time taken to jump out of a local optimum. As the traction mechanism is used, the algorithm needs to generate traction population to search again. For instance, if the algorithm converges at the

Experimental evaluations

Experiments in continuous and discrete environments were carried out to evaluate the performance of the TFOA. In continuous environments, we evaluated the stability and global optimization ability of TFOA in comparison with the most recent improved versions of FOA, including IFFO, CFOA, and LGMS-FOA, based on 10 benchmark functions. In discrete environments, through the solution of the web service composition problem, the optimization ability of the algorithm is proven.

Algorithm performance test in continuous environments

This section compares TFOA with FOA and three existing improved versions of FOA, including IFFO, CFOA, and LGMS-FOA, based on 10 benchmark functions. The searching ability of TFOA in continuous environments is evaluated.

Experimental setup and parameter configuration

The experiments were conducted on a machine running Windows 7 (64 bit) with Intel® Core™ i5-2430M CPU 2.40 GHz and 4G of RAM. MATLAB R2014a was implemented. The parameters of each algorithm are listed in Table 2. The experimental parameters of the other four algorithms are set according to the corresponding papers. The population size of each algorithm was set to 50, and the number of iterations was set to 500 times.

Parameter settings.

FOA: fruit fly optimization algorithm; TFOA: traction fruit fly optimization algorithm; IFFO: improved fruit fly optimization algorithm; CFOA: chaotic fruit fly optimization algorithm; LGMS_FOA: linear generation mechanism of candidate solution_FOA.

Benchmark functions

To evaluate the ability of TFOA in solving function extrema, 10 test functions whose local extremum are diversely distributed were selected, which is demanding for algorithm’s performance of optimization. The 10 test functions are listed in Table 3.

Benchmark functions.

Experimental results

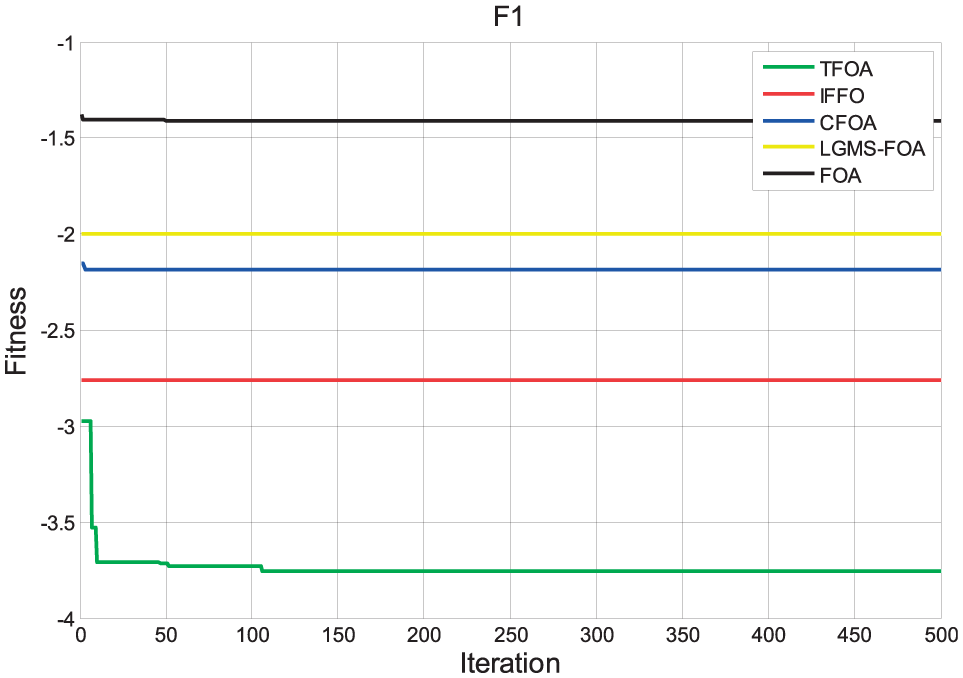

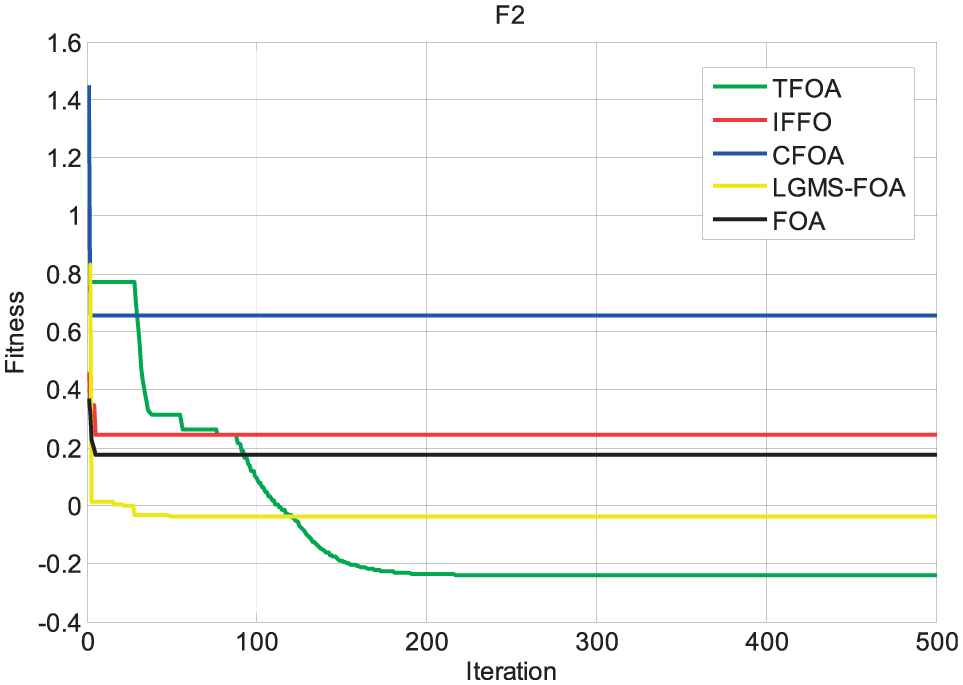

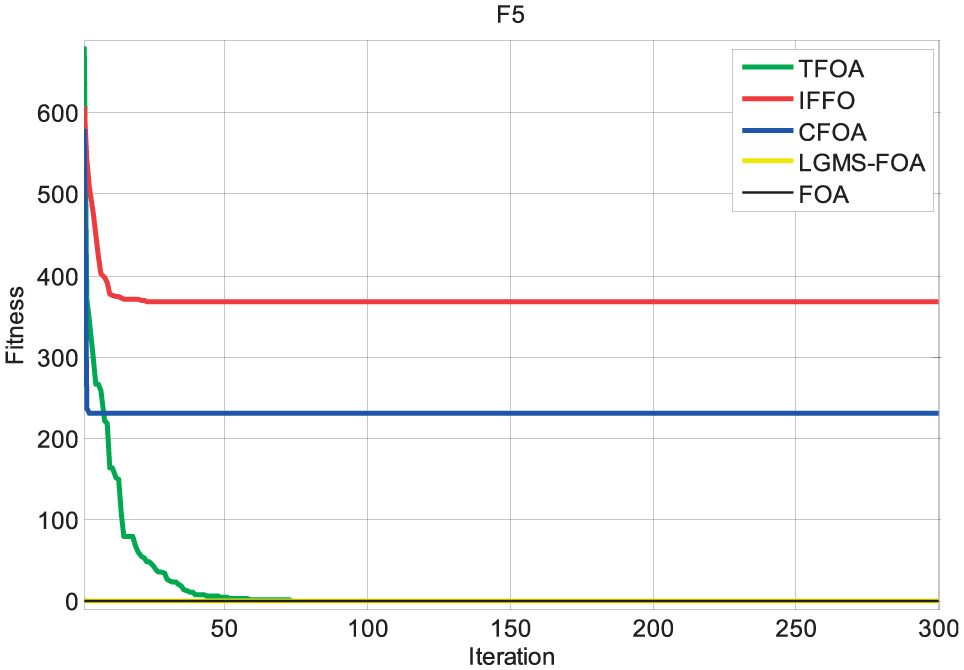

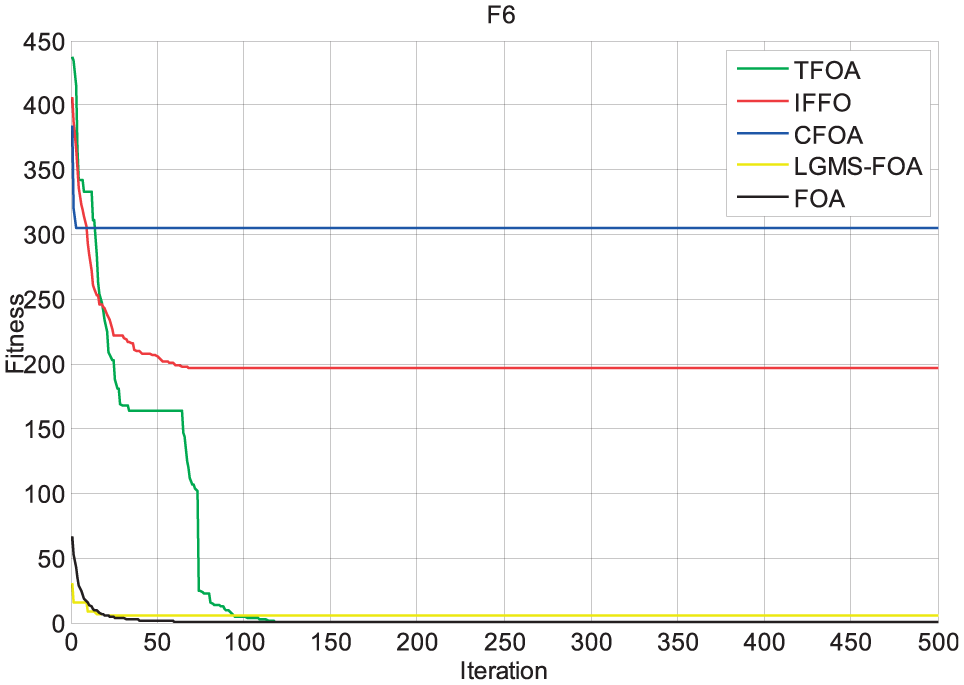

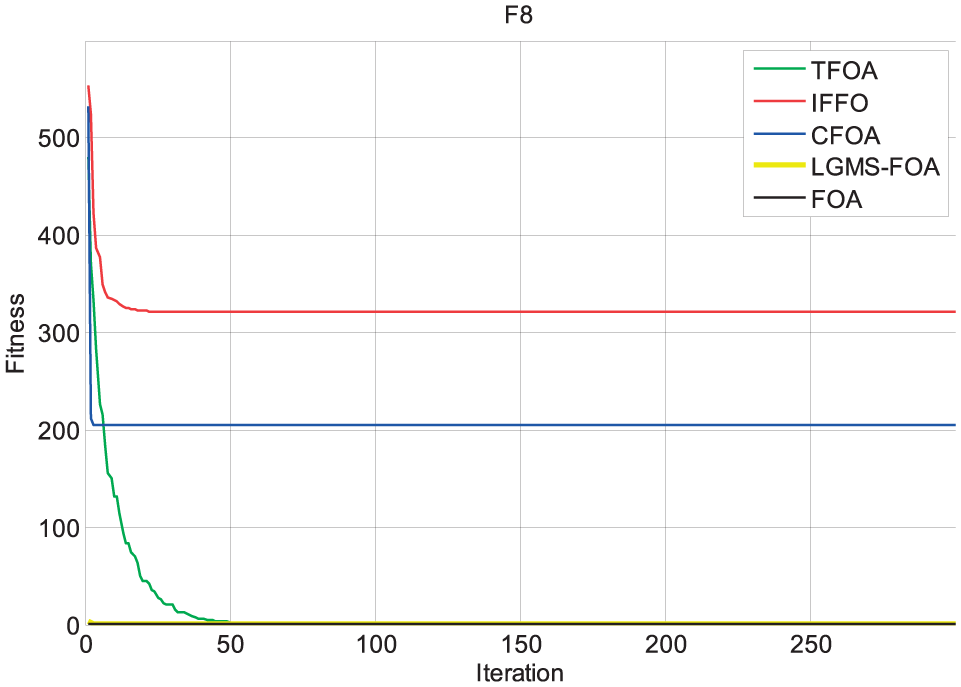

To evaluate the performance in finding the extreme value of the above 10 functions, means and variance yielded after the algorithms had run 50 times were, respectively, recorded and are shown in Table 4. The iterative optimization curves were drawn accordingly, as shown in Figures 3–12.

Mean and variance of algorithm.

FOA: fruit fly optimization algorithm; TFOA: traction fruit fly optimization algorithm; IFFO: improved fruit fly optimization algorithm; CFOA: chaotic fruit fly optimization algorithm; LGMS_FOA: linear generation mechanism of candidate solution_FOA.

Bold numbers indicates that the corresponding algorithm has the best experimental result.

Function F1 optimization curve.

Function F2 optimization curve.

Function F3 optimization curve.

Function F4 optimization curve.

Function F5 optimization curve.

Function F6 optimization curve.

Function F7 optimization curve.

Function F8 optimization curve.

Function F9 optimization curve.

Function F10 optimization curve.

The experimental results of F1, F2, F3, and F4 show that all algorithms except for TFOA cannot accurately find the extreme value of the functions. The reason is that these test functions have many “local extremum points,” which cause the algorithms to easily fall into a local optimum. Additionally, these function extrema are hard to find because they are far from the origin. On the contrary, the results prove that TFOA can find the function extrema values with small variances, which demonstrates its performance in terms of finding low-dimensional function extrema.

As for F5, F6, F7, F8, F9, and F10, six high-dimensional functions, the experimental results show that the algorithms IFFO and CFOA exhibited the premature phenomenon but that TFOA succeeded in finding the extremum. Relatively speaking, TFOA outperforms the original FOA, IFFO, and CFOA in terms of not only the accuracy of the solution but also the variance.

By observing the optimization curve of TFOA, it can be seen that when converging, the number of iterations is higher than other algorithms due to the traction mechanism. Specifically speaking, TFOA needs a certain number of iterations to perform traction operations to find a better solution. The other three algorithms lack proper measures to deal with the problem that the algorithm falls into the local optimum, so they converge to a local extremum and fail to find the global optimal solution.

In conclusion, it can be seen that for a multimodal, low-dimensional function, TFOA can perform both accurately and stably. As for high-dimensional functions, TFOA displays a comparatively better performance as well. Therefore, the effectiveness of the improved FOA algorithm, TFOA, has been proven.

Algorithm performance test in discrete environments

Web services are software components designed to support interoperable machine-to-machine interaction over a network. 20 With the number of web services increasing, the selection of a web service for a composition is not an easy problem because many web services are equal in functionality but different in Quality of Service (QoS).21–30 Web service composition aims to choose a combination service which meets the needs of a user’s service quality. Currently, special attention has been given to the solution of the web service composition problem through SI algorithms, with the proposal of algorithms such as PSO, ACO, and firework. However, the convergence rate and optimization ability of the above algorithms can be further improved. In this article, the effectiveness of TFOA is evaluated and compared with the results of dynamic discrete particle swarm optimization (DDPSO) proposed by Zhang et al. 26

Modeling service composition algorithm

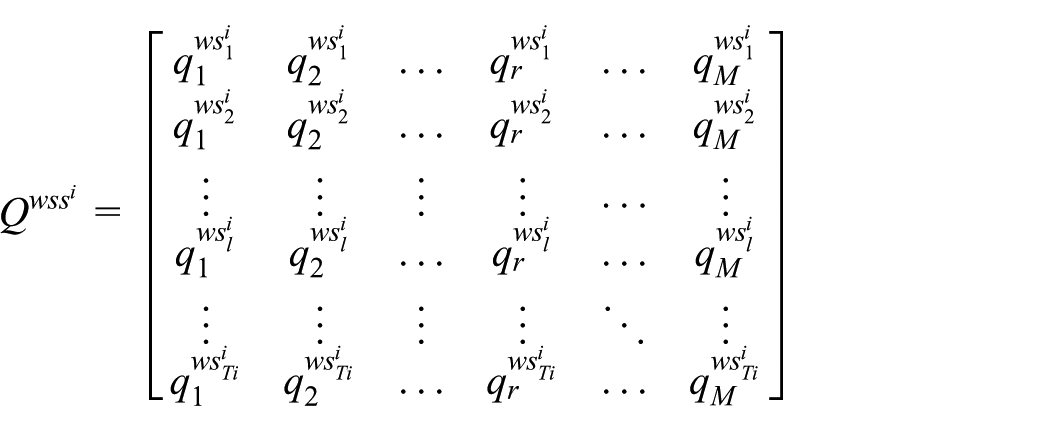

Assume that task

where

In TFOA, individual coding is expressed as {

A fitness function is employed to evaluate the usefulness of web service composition. As shown in equation (14), the fitness function used in this article is as follows

where

In a discrete environment, using a dynamic radius will generate a decimal and so a crossover operation is applied instead. The operation process is shown in Figure 13, where

Learning from the best individual in TFOA.

A dynamic radius strategy will be used continually in the generation of a traction population. If a decimal number is generated in the calculation process, we will round the decimal number to a positive integer.

Experimental simulations

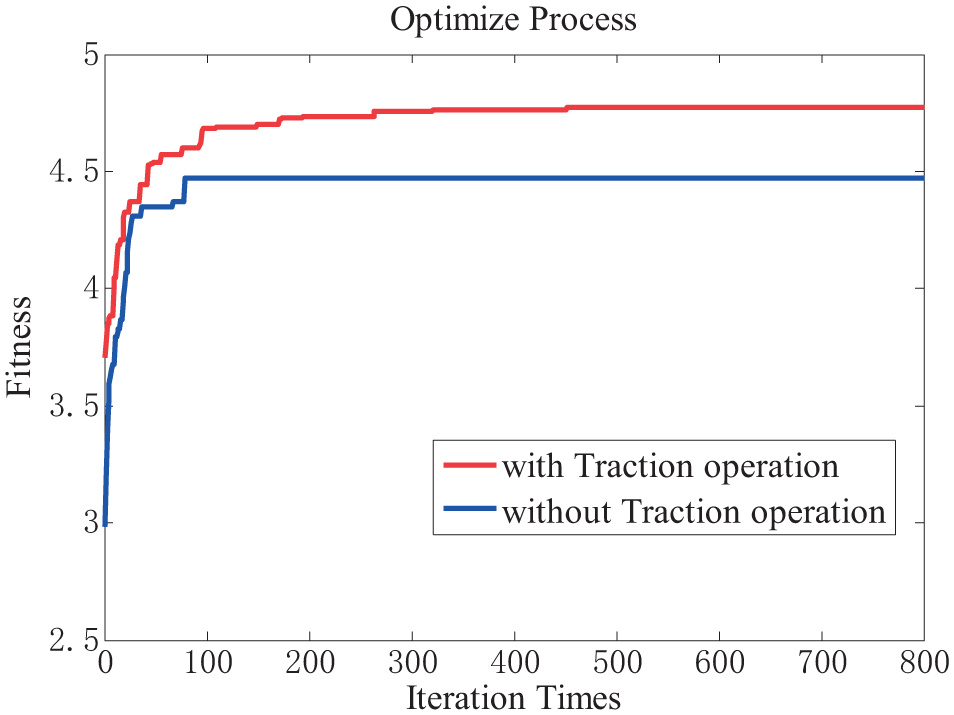

The experiment will mainly focus on the performance of the algorithm, including the influence of the traction group, the optimization ability, the convergence speed, and the stability.

To verify how much the algorithm can be improved by a traction group, a comparative experiment was carried out. The number of candidate services was set to 500. The number of subtasks was set to 7 and the iteration number was 800. As shown in Figure 14, TFOA with a traction group significantly outperforms TFOA without a traction population, which proves that the performance of TFOA has been greatly improved.

Effect of traction on the performance of TFOA.

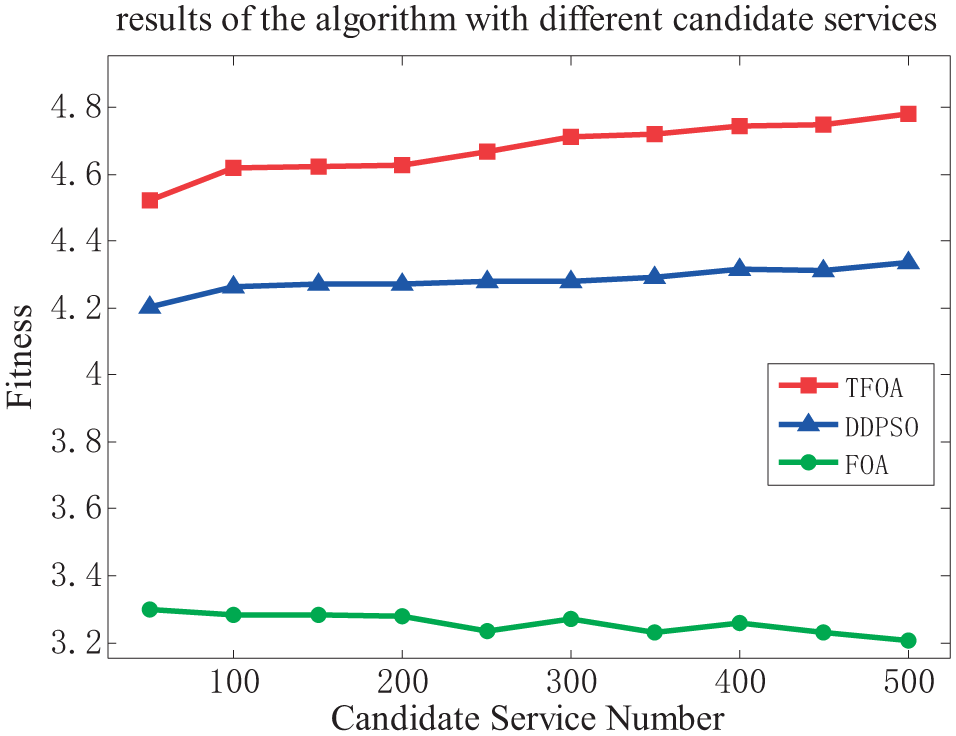

In the second controlled experiment, TFOA was compared with DDPSO proposed by Zhang and Cui 15 and the original FOA algorithm with 50 independent runs. The number of iterations (800) and population size (50) were fixed under an environment with different candidate service numbers (50, 100, 150, 200, 250, 300, 350, 400, 450, and 500). The results are presented in Figure 15.

Optimization results of the algorithm with different candidate services.

It can be seen that for a different number of candidate service, the final fitness values of TFOA are noticeably higher than that of the other two algorithms.

The third experiment mainly focused on the volatility of the algorithm. SI algorithms often have the problem of large fluctuations in the results. In an environment that was the same as that for the second experiment, the fitness variances obtained with 50 independent runs are shown in Table 5. It can be seen that TFOA, with a smaller variance, is more stable than the other two algorithms.

Variance of the fitness of the algorithm under different candidate service numbers.

FOA: fruit fly optimization algorithm; TFOA: traction fruit fly optimization algorithm; DDPSO: dynamic discrete particle swarm optimization.

The fourth set of experiments was used to measure the convergence speed of the algorithm. The number of candidate services (350) was fixed. The experimental results are shown in Figure 16. By examining the experimental results shown in Figure 16, it can be concluded that the convergence rate of TFOA is higher than that of the DDPSO algorithm and the original FOA algorithm.

Optimization results of the algorithm with different iteration times.

The conclusion derived from the above four experiments is that in terms of the solution stability, convergence speed, and solution result, TFOA is superior to DDPSO and the original FOA algorithm. Therefore, the usefulness of TFOA in solving the web service composition problem is proven.

Conclusion

In this article, a new improved fruit fly optimization algorithm named TFOA is proposed. TFOA uses a “traction population” to guide the algorithm to jump out of the local optimum. In addition, the convergence speed and the solving ability of the algorithm are also improved by combining dynamic step size and chaotic mechanism. The performance of the proposed algorithm is tested in both continuous and discrete environments. By solving the extreme value of various functions and through comparisons with three other improved optimization algorithms, that is, LGMS-FOA, IFFO, and CFOA, it can be seen that TFOA features a high accuracy, wide application range, and high stability. Compared with the DDPSO algorithm when solving the problem of web service composition, TFOA is better in terms of accuracy and speed of convergence.

In the case of service composition, the result yielded by TFOA algorithm is unqualified when the number of candidate services exceeds 10,000. Therefore, in addition to reducing TFOA’s time complexity, future work will focus on improving its performance especially when it comes to a large data set.

Footnotes

Handling Editor: Daniel Gutierrez-Reina

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the National Key Technology R&D Program (2015BAK24B01).