Abstract

Uncovering hidden radioactive materials remains a major challenge in global supply chains. Recent research has not adequately investigated practical Internet of Things (IoT)-based approaches for improving and implementing efficient data fusion techniques. Current systems often allocate resources inefficiently, leaving security gaps in routine operations. Our research examines the fundamental principles of detection using both single- and multiple-sensor configurations, adopting a probabilistic method for merging data. We introduce a model aimed at accelerating the detection of radiation emissions in real port operations. The results highlight the model’s effectiveness in rapid identification and identify the most favorable conditions for its application to stacked containers, whether on ships or in storage areas.

1. Introduction

1.1. Problem statement

Over the past decade, containerization has witnessed an unparalleled surge, redefining the contours of global trade and logistics.1,2 Originating as a simple concept of transporting goods in standardized containers, containerization has grown to become the backbone of modern trade, with its potential only amplifying as years progress.3 This upswing is evident in the sheer volume of containerized cargo, which has seen an exponential increase.4 According to the World Shipping Council, in the early 2010s, the volume of goods transported via containers stood at around 100 million TEU (Twenty-Foot Equivalent Units). By the close of the decade, this number had soared to nearly 200 million TEU, effectively doubling the scale of operations. This staggering growth underscores not just the logistical advantages of containerization, but also its economic impact, as it substantially reduces costs, streamlines operations, and accelerates transit times.5 Such a meteoric rise in containerized trade is not merely a result of logistical advancements but is intrinsically tied to the evolving geopolitical landscape.6 As emerging economies, especially in Asia and Africa, began to integrate more deeply into the global supply chain, the demand for containerized transportation burgeoned. Countries like China and India, with their vast consumer bases and rapidly expanding manufacturing sectors, have been pivotal in driving this growth. China’s Belt and Road Initiative (BRI), which aims to create a modern-day Silk Road, further accentuates the geopolitical underpinnings of this trend. However, it is not just the rise of emerging economies that is contributing to this trend. The shifting sands of global geopolitics, marked by trade wars, regional alliances, and the renegotiation of trade agreements, have made containerized trade more critical than ever.
As nations grapple with the challenges of protectionism, tariffs, and economic nationalism, containerization offers a degree of flexibility and efficiency that is unparalleled. The ability to swiftly reroute cargo, adjust to changing trade dynamics, and leverage economies of scale makes containerization a preferred choice for businesses and governments alike.

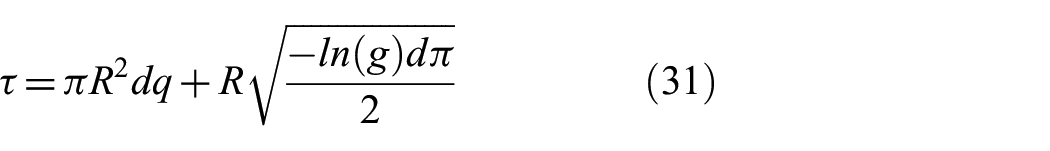

Looking to the future (see Figure 1), the potential for containerized trade seems even more promising. As technology continues to evolve, we are on the cusp of witnessing a new era in container logistics. Innovations like smart containers equipped with IoT devices, autonomous ships, and digital ports promise to revolutionize the industry, making it more efficient, transparent, and resilient.7–10

Tree diagram of the evolution of marine containers with future trends.

Moreover, the ongoing geopolitical realignments, be it the rise of regional trade blocs, the potential thawing of relations on the Korean Peninsula, or the African Continental Free Trade Area, are bound to create new avenues for containerized trade. In essence, containerization, which started as a logistical innovation, is now at the heart of global trade and geopolitics. Its impressive growth over the past decade is a testament to its significance, and given the current trajectories in technology, economics, and geopolitics, its role is only set to become more pivotal in the coming years. Yet with such rapid developments in trade and containerization worldwide, new security and safety threats emerge.

Dirty bombs, also known as radiological dispersal devices (RDDs), pose a significant threat to global security, especially in marine container transportation.11,12 These devices combine conventional explosives with radioactive materials, intending to spread radiation over a large area upon detonation. While they may not cause immediate fatalities on the scale of a nuclear bomb, the long-term health consequences and panic they can induce make them a weapon of choice for those aiming to disrupt and terrorize. Marine container transportation, a cornerstone of global trade, becomes a vulnerable target given the massive volume of containers that move across the world’s oceans daily. Detecting such threats amid this vast logistical network is like finding a needle in a haystack. Traditional detection methods, often based on stationary checkpoints and manual inspections,13 are not only resource-intensive but also inefficient given the sheer number of containers that need to be screened.

1.2. Review of advancements

With the introduction of novel autonomous systems14–17 and the advent of the Internet of Things (IoT) and smart sensors,18–20 a revolution is underway in how we approach the problem. Smart sensors equipped with advanced radiation detection capabilities can pick up even low levels of radiation emissions, at scales ranging from local to global, that might go unnoticed in conventional systems. When integrated into a larger IoT framework,21 these sensors can wirelessly transmit data in real time to centralized monitoring stations,22–25 enabling immediate action if an anomaly (a deviating parameter) is detected. Such a system not only speeds up the screening process but also reduces the chances of human error,26–29 a crucial factor given the potential consequences of missing a threat.30 Furthermore, wireless communication adds another layer of efficiency. For instance, sensors on stacked containers can communicate with each other, forming a mesh network.31–33 If one sensor detects an anomaly, it can alert nearby sensors to increase their sensitivity or change their scanning parameters, creating a dynamic, responsive detection network.34 This decentralized approach ensures that even if one sensor fails or is compromised, the overall system remains robust and functional.

Prior research empirically demonstrated the feasibility of scaling sensing capabilities to expansive environments, specifically to entire container terminals within maritime port operations,35–37 so that containers become more reliable and intelligent, able to monitor their environment on their own by collecting sensory data on vibration and signal strength and transmitting it remotely to a central base station using LoRaWAN technology.38 Moreover, in 2023, Hernández-Gutiérrez et al.39 demonstrated a low-cost embedded system that detects nuclear materials and sends detection events to the cloud in real time, with tracking capabilities. The system uses state-of-the-art electronics, including a silicon photomultiplier, a trans-impedance amplifier, an ARM Cortex-M4 microcontroller, an ESP8266 IoT module, an optimized MQTT protocol, a MySQL database, and a Python handler program. In 2022, Tran-Quang and Dao-Viet40 presented an Internet of Radiation Sensor System (IoRSS) aimed at detecting radioactive sources that are out of regulatory control, particularly in scrap metal. This system integrates various types of detectors, including semiconductor detectors, proportional counters, Geiger-Müller tubes, and scintillator detectors such as NaI(Tl) and CsI(Tl), to identify gamma emitters and special nuclear materials (SNMs). The project emphasized detecting gamma rays and neutrons simultaneously to increase sensitivity against natural background radiation. This comprehensive approach demonstrated the critical role of advanced detection technologies in strengthening security at border control points and within the maritime industry, where targets range from explosives and illicit drugs to SNM.

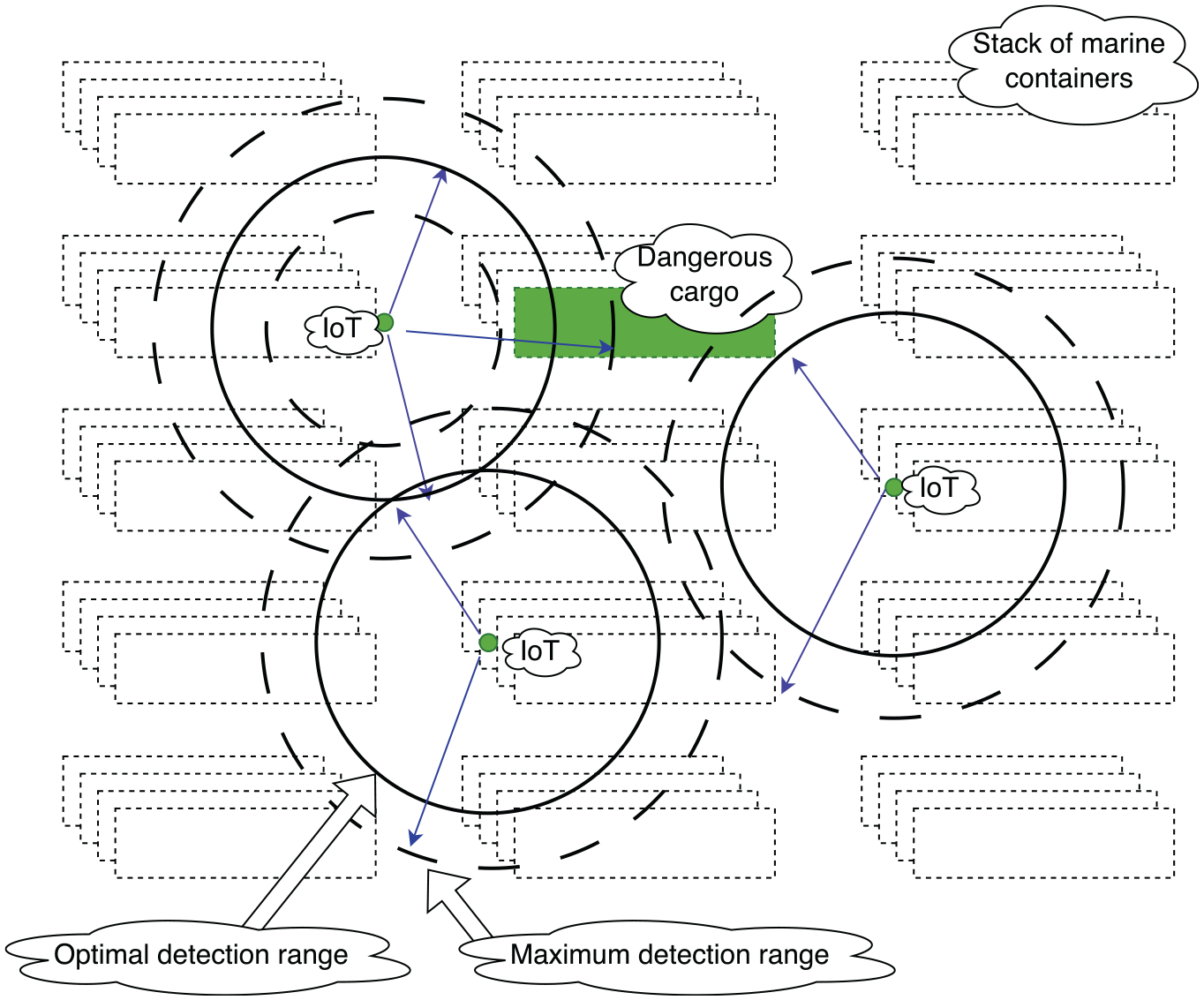

Integrating these sensory units into the intelligent container, in accordance with regulations set by the European Union and other international jurisdictions, can substantially enhance detection efficacy. Such integration is expected to significantly advance the system’s agility, adaptability, and sustainability, in line with the vision of fully autonomous port operations (Figure 2).41,42

A conceptual depiction of how smart containers are incorporated into a container terminal, highlighting their interconnectivity when piled up and while being moved around the terminal for handling.

However, technology alone cannot solve the problem. Regulations play a crucial role in ensuring that all stakeholders in the marine container transportation industry adhere to best practices.43 The US Container Security Initiative (CSI) is a pioneering effort in this direction. Run by US Customs and Border Protection under the Department of Homeland Security, CSI aims to pre-screen containers that pose a potential threat before they reach US shores. By collaborating with major ports worldwide, the initiative ensures that high-risk containers are identified and inspected, leveraging technology and intelligence sharing. On the other side of the Atlantic, the European Union has its own set of container security regulations. The EU emphasizes a multi-layered approach, combining technological solutions with intelligence and risk assessment methodologies. Such regulations not only set the standard for security measures but also promote international collaboration, which is essential for a problem of this magnitude. Current geopolitical trends underscore the urgent need for a rigorous and systematic approach that yields accurate and reliable results in practical applications.

1.3. Paper structure statement

In this paper, we first review the current challenges in detecting hidden radioactive materials in maritime port operations, emphasizing the need for enhanced detection methodologies, together with recent advancements in IoT applications for security and detection systems (Section 1.2). We then introduce our IoT-enabled probabilistic model, detailing its conceptual framework and the approach it employs for rapid detection. The remainder of the paper is structured as follows. Section 2 presents the methodology: the physical and systemic assumptions behind the model, the probabilistic framework for detection by a single sensor, and the data fusion technique together with an estimate of its effectiveness. Section 3 reports the results, showing how sensor density, spacing, sensitivity, and background noise affect detection time and confidence in stacked-container scenarios. Section 4 concludes with a discussion of the implications for maritime security, the wireless technologies suited to deployment, and directions for future research. Through this structured analysis, we aim to underscore the potential of IoT-enabled solutions in enhancing maritime port security against the threat of hidden radioactive materials.

2. Methods

2.1. Examination of physical and systematic properties of the following mathematical framework

Recent studies of radiation detection have critically examined the isotropic assumptions underlying simplistic event models.44,45 Such models are pivotal for detecting hazardous radiation sources inside standard shipping containers. This study proposes a model built primarily around gamma radiation, emphasizing its isotropic nature.46 Owing to its intrinsic characteristics, gamma radiation readily penetrates the relatively thin steel walls of shipping containers and radiates uniformly in all directions. Other forms of radiation could also be measured, but their propagation is largely impeded by denser materials such as thick metal plates, rendering them non-isotropic. Given the potential repercussions of such radiation, its presence is treated here as an event of significant importance. A typical scenario is one in which sensors deployed in a container yard identify anomalies by detecting shifts in photon flux. Photon arrivals at a sensor follow a Poisson distribution. The temporal dimension is crucial in this model: the time a sensor needs to confirm the presence of a radiation source is the time required to establish an elevation of the long-term background photon flux that is attributable to the anomalous source.

Furthermore, detection time decreases as the flux enhancement grows: a minuscule surge in photon flux may require an extended observation period, whereas a pronounced spike can shorten detection considerably. For Poisson counting, the time needed to reach a fixed statistical significance is roughly inversely proportional to the excess count rate when the source dominates the background, and inversely proportional to its square when the background dominates. The spatial dimension also plays a crucial role. Because flux diminishes with the inverse square of the distance from the source, detection time grows at least quadratically as the sensor moves farther from the anomalous source. Incorporating statistical analysis further accentuates the complexity of the problem. Given the stochastic nature of photon interactions and the many variables at play, probabilistic models are indispensable for predicting detection times with reasonable accuracy. Factors such as sensor sensitivity, background radiation levels, and the inherent variability of the radiation source further complicate the model. In addition, real-world scenarios might involve multiple radiation sources, each with its own isotropic or non-isotropic characteristics, necessitating a more intricate multi-source model.
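The scaling described above can be made concrete with a common counting-statistics criterion, used here as an assumption rather than the paper’s own detection rule: a source is declared once the expected excess count S·t reaches k standard deviations of the total Poisson count, which gives t = k²(S + B)/S² for excess rate S and background rate B. The function names and the default k = 3 are hypothetical.

```python
import math


def detection_time(signal_rate: float, background_rate: float, k: float = 3.0) -> float:
    """Time (s) at which the expected excess count S*t reaches k standard
    deviations of the total Poisson count: S*t = k*sqrt((S + B)*t),
    which gives t = k**2 * (S + B) / S**2."""
    if signal_rate <= 0:
        return math.inf  # no excess flux: the source is never distinguishable
    return k**2 * (signal_rate + background_rate) / signal_rate**2


def signal_at_distance(rate_at_1m: float, r: float) -> float:
    """Excess count rate from an isotropic point source at distance r (m),
    following the inverse-square law (attenuation ignored)."""
    return rate_at_1m / r**2


# Source-dominated (B = 0): halving S doubles the detection time.
print(detection_time(0.5, 0.0) / detection_time(1.0, 0.0))
# Background-dominated (B >> S): halving S roughly quadruples it.
print(detection_time(0.5, 1e4) / detection_time(1.0, 1e4))
# Moving from 1 m to 2 m quarters the excess count rate.
print(signal_at_distance(100.0, 2.0))
```

Combining the two functions, detection time grows as r² when the source dominates and as r⁴ when the background dominates, which is why the distance dependence is stated as "at least quadratic".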

This discourse underscores the intricacies involved in understanding the isotropic assumptions of simplistic event models concerning radiation detection in containerized environments. While gamma radiation stands out due to its isotropic behavior and ability to penetrate standard shipping containers, other radiation forms, hindered by dense materials, exhibit non-isotropic patterns. The challenges lie not just in detecting these radiation sources but in doing so in a timely and accurate manner, factoring in both temporal and spatial dimensions, and accounting for the probabilistic nature of photon interactions. The models presented herein serve as foundational frameworks, but further empirical validation and refinements are necessary to enhance their applicability in real-world scenarios.

Addressing the intricacies of sensor-based systems requires a comprehensive understanding of the multi-faceted challenges inherent to their operation. Three predominant challenges command attention:

Volume: This pertains to the sheer number of sensing units integrated within a system. As systems grow in complexity, the number of sensors often increases proportionally, which can introduce logistical and data management complexities. Ensuring synchronized operation and effective communication among a vast array of sensors becomes paramount.

Noise: Noise, whether electronic or environmental, can significantly impact the accuracy and reliability of sensor readings. Electronic noise emanates from inherent fluctuations in electrical signals, while environmental noise can be attributed to external factors like temperature variations, humidity, or other ambient interferences. Mitigating these disturbances is crucial for obtaining precise sensor outputs.

Trade-offs: In the realm of sensor technology, trade-offs often refer to the balancing act between parameters like sensitivity, power consumption, cost, and operational lifespan. Decisions made in one area can have cascading effects on other parameters, necessitating a holistic approach to sensor design and deployment.

When delving deeper into the specific types of errors associated with sensors, they can be broadly categorized into:

Detection error: This type of error arises when electronic disturbances or environmental interference cause the sensor to report events that have not occurred (false positives) or to miss events that have occurred (false negatives), compromising the integrity of the data.

Location error: Location errors manifest when a sensor inaccurately determines or reports its position or the position of a detected event. Ensuring precise geolocation capabilities and calibrating sensors to account for potential spatial inaccuracies is vital.

Timing error: Timing discrepancies, often stemming from clock drift in sensors, can result in sensors misreporting the exact timestamp of an event. As systems rely on synchronized operations, even minor deviations in time reporting can lead to significant operational challenges. Implementing robust clock synchronization protocols and regularly calibrating sensor clocks can mitigate these errors.
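To illustrate how small clock deviations accumulate, the sketch below assumes a free-running clock with constant drift expressed in parts per million (ppm); the 20 ppm figure and the 1 ms tolerance are illustrative values, not parameters from this study, and the function names are hypothetical.

```python
def clock_offset_s(drift_ppm: float, elapsed_s: float) -> float:
    """Timestamp error accumulated by a free-running clock with constant drift."""
    return drift_ppm * 1e-6 * elapsed_s


def max_resync_interval_s(drift_ppm: float, tolerance_s: float) -> float:
    """Longest interval between synchronizations that keeps the error within tolerance."""
    return tolerance_s / (drift_ppm * 1e-6)


print(clock_offset_s(20.0, 86_400))        # roughly 1.728 s after one day
print(max_resync_interval_s(20.0, 1e-3))   # roughly 50 s for a 1 ms budget
```

Under these assumptions, keeping event timestamps within a millisecond of each other across sensors would require synchronization roughly every minute.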

The accuracy of sensor-based detection is a critical area of study, given the increasing reliance on such systems across industries and applications. At its heart is the sensing unit’s ability to distinguish actual events from erroneous readings. A sensing unit deployed within a region near the emitting source continuously monitors and analyzes the data received from multiple sensors. An actual event, which we term a “true positive” (TP) event, is declared when the number of sensors reporting events within that region exceeds a threshold, typically set as a multiple of the number of sensors in the same region that report no event. This rule rests on the principle that a genuine event is likely to be detected by a significant proportion of nearby sensors, while sensors farther away or obstructed may not register it. The technique has drawbacks, however. Sensors may produce “false positive” (FP) reports of events that have not taken place, owing to electronic noise, interference, or environmental factors affecting sensor performance, as discussed above. In unfortunate circumstances, false detections from different sensors can coincide, leading the sensing unit to mistake them for a true event. Such overlapping erroneous readings complicate event detection and can significantly reduce the accuracy of the measuring system.
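The region-level decision rule and the risk of coincident false positives can be sketched as follows. This assumes sensors produce false positives independently with a common probability p_fp (in practice, shared interference can correlate them); the names `declare_event`, `coincident_false_alarm_prob`, and the `multiple` parameter are hypothetical.

```python
from math import comb


def declare_event(reports: list[bool], multiple: float) -> bool:
    """Region-level decision: the number of reporting sensors must exceed
    `multiple` times the number of silent sensors in the same region."""
    n_report = sum(reports)
    n_silent = len(reports) - n_report
    return n_report > multiple * n_silent


def coincident_false_alarm_prob(n: int, p_fp: float, multiple: float) -> float:
    """Probability that independent false positives alone satisfy the rule:
    the binomial probability of every count j with j > multiple * (n - j)."""
    return sum(
        comb(n, j) * p_fp**j * (1 - p_fp) ** (n - j)
        for j in range(n + 1)
        if j > multiple * (n - j)
    )


print(declare_event([True, True, True, False], multiple=2.0))      # True
print(coincident_false_alarm_prob(n=3, p_fp=0.1, multiple=1.0))    # about 0.028
```

Raising `multiple` lowers the coincident false alarm probability but also makes genuine, weakly observed events harder to declare.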

The rate at which these FPs occur is not constant and can be influenced by several factors. One primary factor is the uncertainty associated with the propagation of the event. Events can manifest and propagate in various ways, depending on their nature. For instance, a seismic event might spread differently than a thermal one. The inherent constraints on the speed of detection also play a role. Some events might be fleeting, requiring sensors to detect them almost instantaneously, while others might be more prolonged, allowing sensors a larger window for accurate detection. Noise in the sensors is another significant contributor to the rate of FPs. This noise can be intrinsic, stemming from the electronic components of the sensors themselves, or extrinsic, originating from external sources like electromagnetic interference, temperature fluctuations, or even physical obstructions. As the level of noise increases, the clarity and reliability of the sensor readings diminish, making it increasingly challenging to discern between true and false events. Furthermore, the spatial arrangement and density of the sensors within a monitoring region can also impact detection accuracy significantly. If sensors are too sparsely placed, they might miss out on detecting an event entirely, leading to false negatives. Conversely, if they are too densely packed without adequate calibration, they might amplify the noise, leading to increased FPs. To compound the complexity, the very definition of what constitutes an “event” can vary based on the application and the parameters set for detection. This subjectivity can introduce another layer of uncertainty in certain categories of event detection.

2.2. The mathematical framework behind the detection

This research develops a framework for, and examines the detection capabilities of, an individual sensor. We use a simplified model of a typical sensor, omitting other parameters that affect the calculation and their probabilistic variations. The operating principle of the proposed solution is straightforward: the sensory unit triggers an alert when the measurement obtained by the sensor at a given instant (time t) exceeds a predetermined threshold value, denoted as

To further provide a more holistic view of the matter, we can examine two distinct scenarios or occurring alternatives:

Background noise dominance: In this scenario, no discernible event has transpired at the specified time,

Event occurrence: Contrary to the first scenario, here, a tangible event, represented by

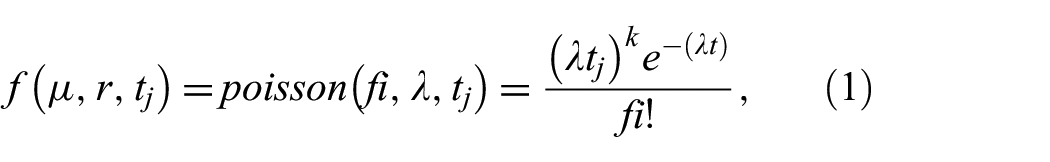

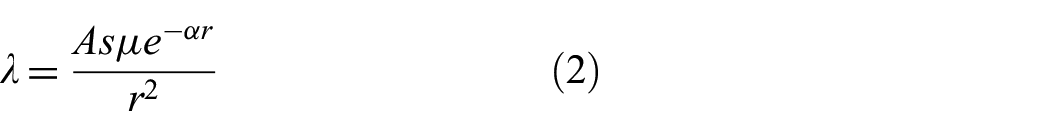

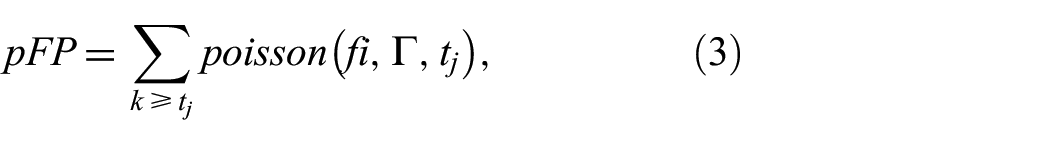

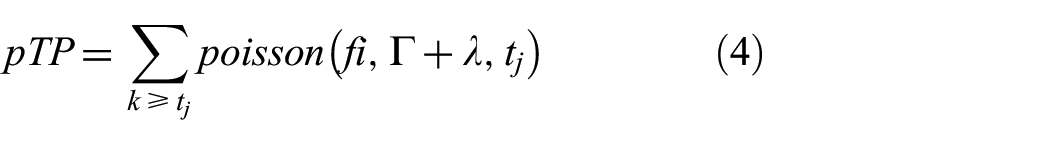

Each alarm raised by a sensor is either an FP (a detection with no source present) or a TP (a correct detection). The accuracy of these sensors, especially when detecting radiation, depends on many factors. A predominant one is the intrinsic quality and calibration of the sensor, captured by the sensor detection function, denoted as

With the effective source strength measure expressed as Equation (2):

Let pFP be the probability of an FP. The probability of observing

Let pTP be the probability of a TP. The probability of observing

In this study, we assume that when the number of events fi exceeds 10, the Poisson distribution is closely approximated by a normal distribution with minimal discrepancy. Let

Furthermore, define

When we have equality

Here, we formulate a system Equation (8):

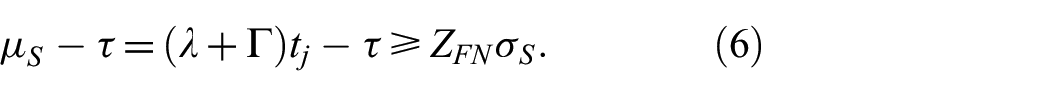

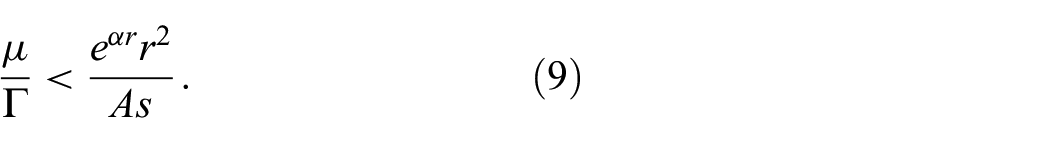

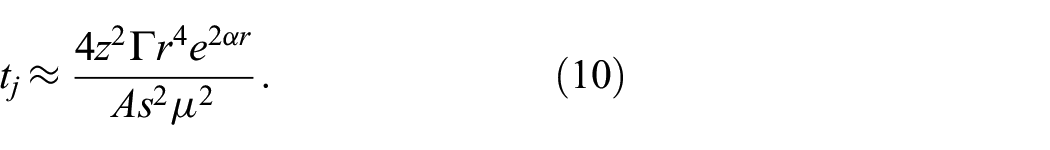

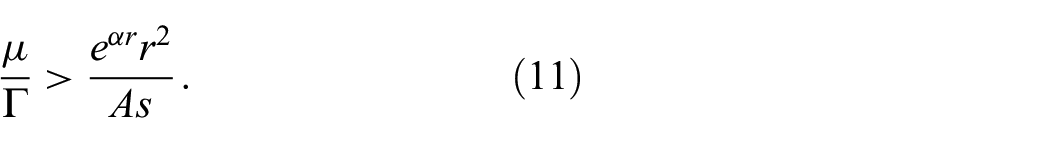

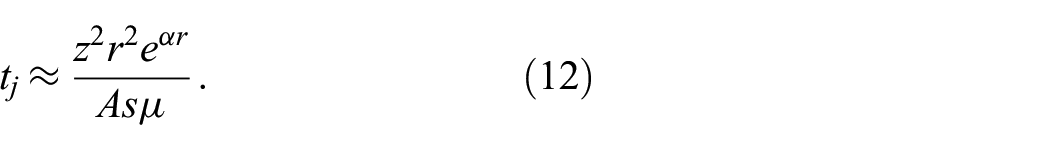
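The single-sensor threshold test above can be illustrated numerically. The sketch below compares, for a threshold on the count collected in an observation window, the exact Poisson probabilities of an FP (background only) and a TP (background plus source) with the normal approximation that the text adopts for more than about 10 events. Because the symbols of the derivation are not recoverable here, the variable names, the continuity correction, and the rates (5 counts/s background, 2 counts/s source, 10 s window, threshold 65) are hypothetical choices for illustration.

```python
import math


def poisson_sf(threshold: int, mean: float) -> float:
    """P(N > threshold) for N ~ Poisson(mean), from the exact CDF."""
    term = math.exp(-mean)  # P(N = 0); fine for means well below ~700
    cdf = term
    for n in range(1, threshold + 1):
        term *= mean / n    # P(N = n) from P(N = n - 1)
        cdf += term
    return max(0.0, 1.0 - cdf)


def normal_sf(threshold: int, mean: float) -> float:
    """Normal approximation N(mean, variance = mean) with a continuity correction."""
    z = (threshold + 0.5 - mean) / math.sqrt(mean)
    return 0.5 * math.erfc(z / math.sqrt(2.0))


background, signal, t = 5.0, 2.0, 10.0  # counts/s, counts/s, s (hypothetical)
tau = 65                                # alarm if more than tau counts in t

p_fp = poisson_sf(tau, background * t)
p_tp = poisson_sf(tau, (background + signal) * t)
print(f"exact:  p_FP={p_fp:.4f}  p_TP={p_tp:.4f}")
print(f"normal: p_FP={normal_sf(tau, background * t):.4f}  "
      f"p_TP={normal_sf(tau, (background + signal) * t):.4f}")
```

With means of 50 and 70 counts, the two approaches differ by well under one percentage point, consistent with the normal approximation being adequate once the event count is large.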

Manipulating the equations above, we derive a relationship for the observation time

where

For a swift response to a genuine yet weak event, a high-density sensor network is essential. Utilizing a dense network increases the likelihood of positioning a sensor in proximity to an event, simplifying the detection challenge addressed in the previous sections. In this context, the density of sensors and the background noise exert minimal influence on the observation duration, as per Equation (11):

The relation with time becomes clear in Equation (12):

2.3. Inclusion of the data fusion technique and its effectiveness estimation

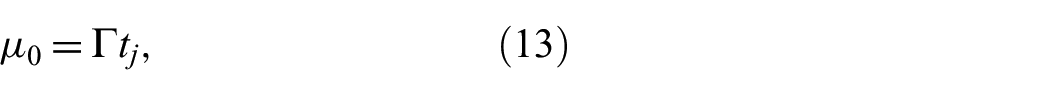

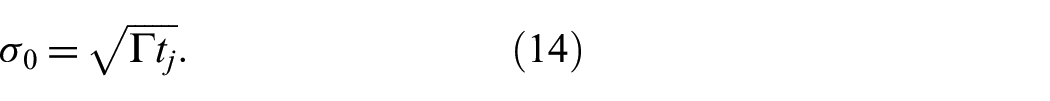

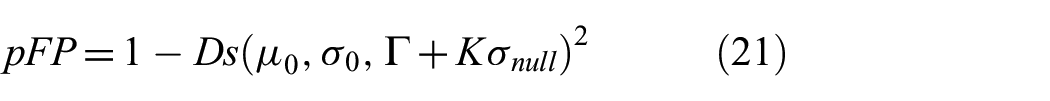

In this study, we posit that the accurate detection of an event occurs with a probability greater than a baseline true positive rate

For secondary screening scenarios, the approach involves using two sensors. Here, it is assumed that

In this context, the fixed parameter K is set based on the limits of

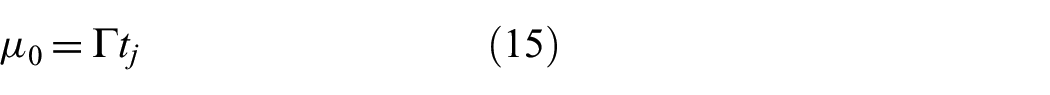

In the scenario of secondary screening or when fusing data from multiple sources, we use parameters (15) and (16):

Similarly, the scenario where an event is detected is represented by Equations (17) and (18):

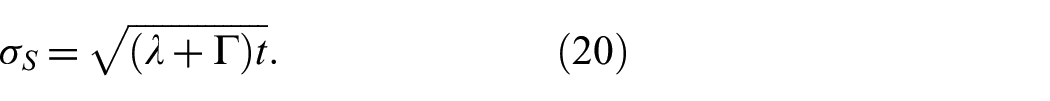

For the secondary screening, which involves data fusion, we use Equations (19) and (20):

Therefore, for the situation

To estimate the standard deviations and mean values for two separate containers, we use the

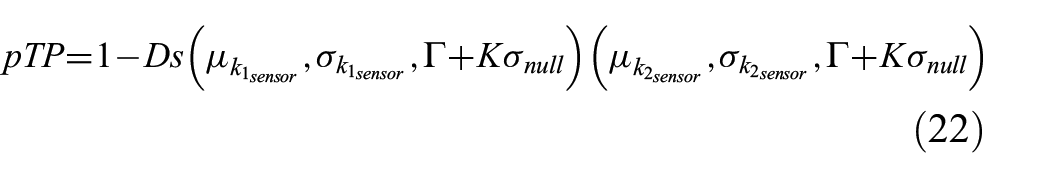

We can identify multiple potential solutions for the

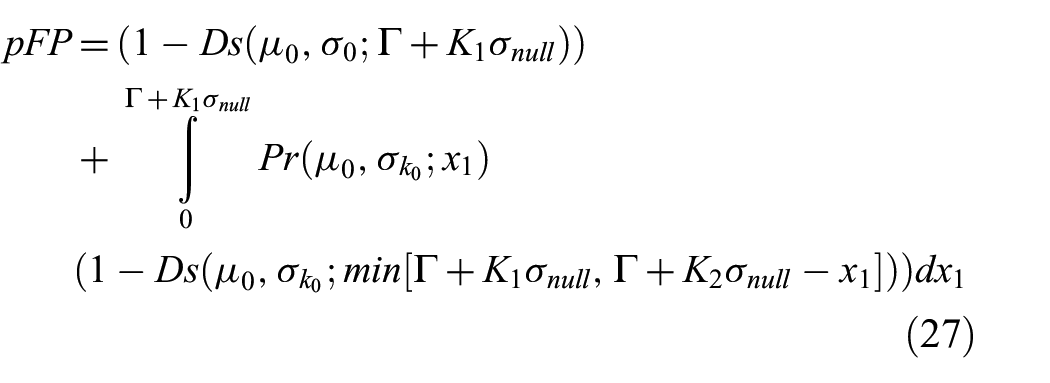

Hence, accurate probabilities of true events can be calculated with Equations (27) and (28):

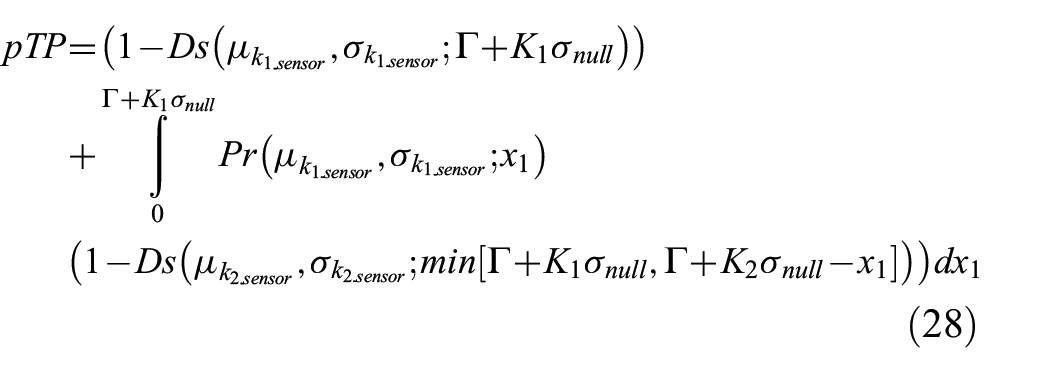
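The general effect of two-sensor fusion can be illustrated with a simple, assumed fusion rule: summing the raw counts of the sensors and applying the normal approximation, with the alarm threshold fixed so that the background alone triggers it with probability p_fp. This is not the formulation of Equations (15) to (28); the function name and the rates below are hypothetical.

```python
from statistics import NormalDist


def p_tp_at_fixed_pfp(signals, backgrounds, t, p_fp):
    """Sum the counts of all listed sensors (normal approximation, variance =
    mean), set the threshold so that background alone exceeds it with
    probability p_fp, and return the probability that source + background does.
    Background rates must be positive."""
    b = sum(backgrounds) * t   # mean (and variance) of the summed background count
    s = sum(signals) * t       # mean excess count contributed by the source
    threshold = NormalDist(b, b ** 0.5).inv_cdf(1.0 - p_fp)
    return 1.0 - NormalDist(b + s, (b + s) ** 0.5).cdf(threshold)


# One sensor versus two sensors that both see the source (rates in counts/s).
single = p_tp_at_fixed_pfp([1.0], [20.0], t=10.0, p_fp=0.01)
fused = p_tp_at_fixed_pfp([1.0, 0.8], [20.0, 20.0], t=10.0, p_fp=0.01)
print(f"single sensor p_TP = {single:.3f}, two-sensor fusion p_TP = {fused:.3f}")
```

If the second sensor sees almost none of the source, summing its background lowers p_TP instead (for example, signals `[1.0, 0.0]` give a smaller value than the single sensor), which is why the choice of which node to fuse with matters.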

Presuming a source is randomly positioned along the line connecting two spaced sensors within containers, altering

When sensors are distributed uniformly across the container area with density d, and the false alarm rate across the entire network of IoT sensors must not exceed

Therefore,

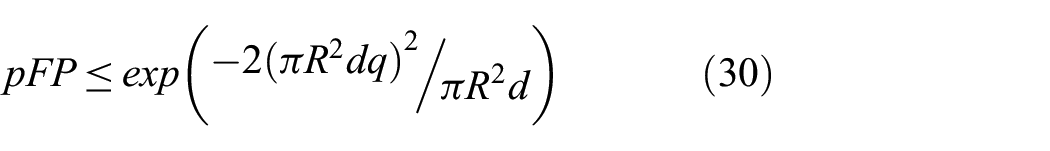

Let K denote the number of sensors that have registered a detection. The system signals a detection only if K exceeds a certain threshold

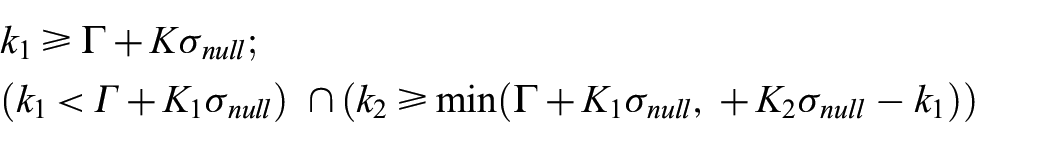

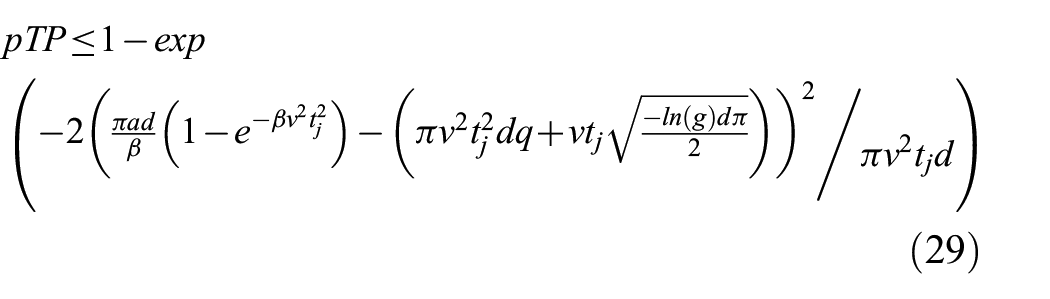

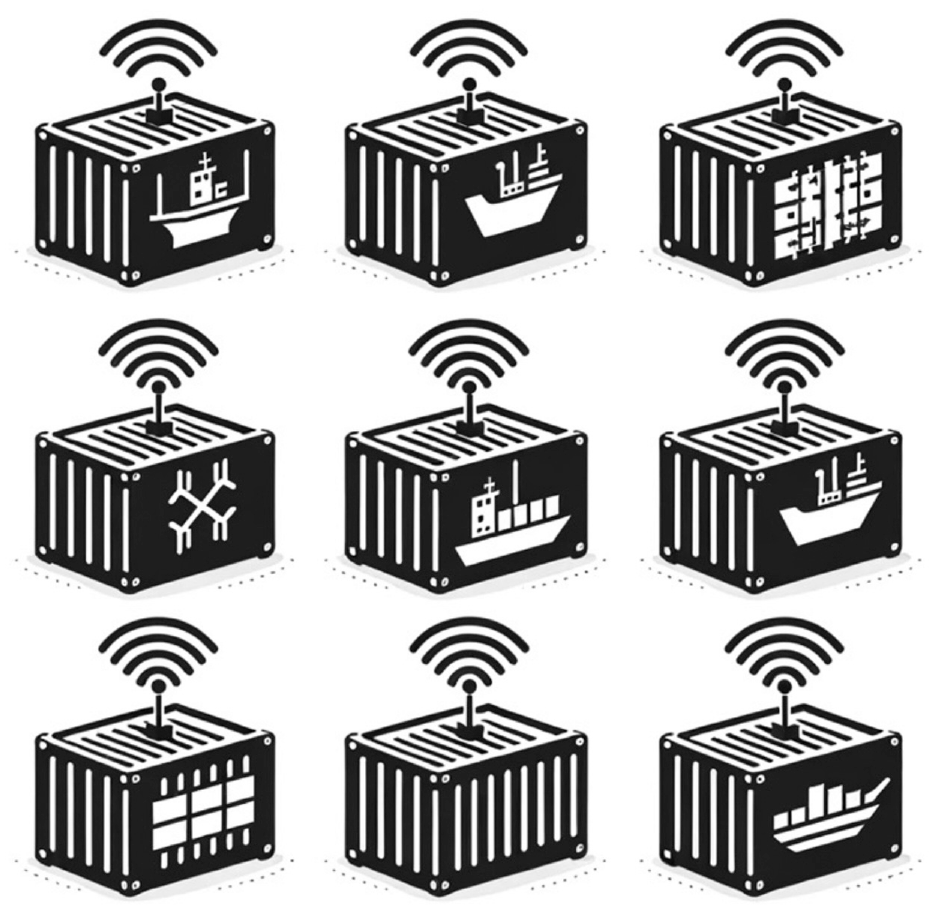

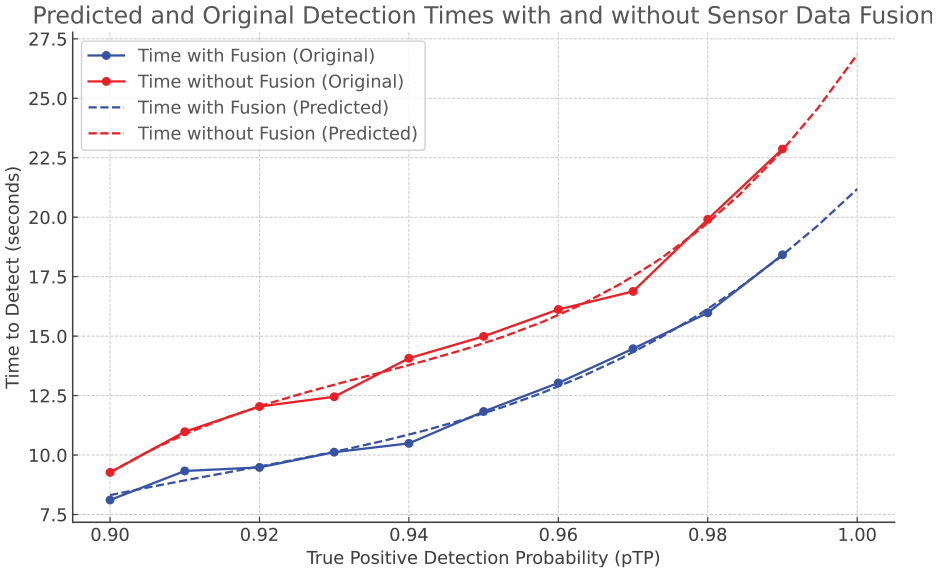
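Choosing the count threshold under a network-wide false alarm budget can be sketched as below, assuming N independent sensors that each raise a false positive with probability p_fp in the observation window, so that the number of false alarms is binomial. The original symbols for the threshold and the budget are not recoverable here, so `smallest_k_threshold` and its parameters are hypothetical names.

```python
from math import comb


def binomial_tail(n: int, p: float, k_min: int) -> float:
    """P(X >= k_min) for X ~ Binomial(n, p)."""
    return sum(comb(n, j) * p**j * (1 - p) ** (n - j) for j in range(k_min, n + 1))


def smallest_k_threshold(n_sensors: int, p_fp: float, max_network_fa: float) -> int:
    """Smallest threshold such that the probability of more than `threshold`
    simultaneous false alarms stays within the network-wide budget."""
    for threshold in range(n_sensors + 1):
        if binomial_tail(n_sensors, p_fp, threshold + 1) <= max_network_fa:
            return threshold
    return n_sensors  # not reached for a non-negative budget


print(smallest_k_threshold(n_sensors=10, p_fp=0.01, max_network_fa=1e-3))  # 2
```

Here one or two coincident false alarms among ten sensors are still too likely (about 0.4% for two or more), so the system must require more than two detections before signaling.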

Comparative computational results for

Table 1 demonstrates a comparative analysis where the time required to achieve a true positive detection probability (

With Fusion: The times required to detect a given

Without Fusion: In contrast, detection times without fusion also increase with higher

Comparative efficiency of radiological threat detection with and without sensor data fusion in maritime port operations.

These results show the advantage of integrating sensor data fusion into detection systems for port security. Comparing the two scenarios, the graph shows that data fusion significantly improves the speed and accuracy of detecting hidden radioactive materials, contributing to more robust and efficient port security operations. This improvement is essential for balancing the facilitation of global trade against the need to secure maritime port operations from potential radiological threats.

The results underscore that a dual-node detection system not only enhances the probability of accurate detection but also reduces the time to detection, effectively lowering the operational threshold for the detection process. However, extending the analysis to configurations involving three or more sensors reveals a diminishing return on detection time reduction. Although a decrease in detection time might be anticipated with an increased number of sensors, the actual outcome is a marginal increase in the overall time. This increment can be attributed to the additional networking and computational time that cumulatively extends the total detection threshold time. Therefore, in a practical operational setting, the final detection time must be evaluated by considering these additional time components within the system’s architecture.
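The trade-off described above can be illustrated with a deliberately simple model, which is an assumption rather than the paper’s timing analysis: fusing n sensors that see the source equally divides the statistical detection time by n (as for summed background-dominated Poisson counts), while each additional node adds a fixed networking and computation overhead. The function names and numbers are hypothetical.

```python
def total_time(n_nodes: int, t_single: float, overhead_per_node: float) -> float:
    """Assumed model: statistical detection time t_single / n plus a fixed
    networking/computation overhead for every node beyond the first."""
    return t_single / n_nodes + overhead_per_node * (n_nodes - 1)


def best_node_count(t_single: float, overhead_per_node: float, max_nodes: int) -> int:
    """Node count in 1..max_nodes that minimizes the total detection time."""
    return min(range(1, max_nodes + 1),
               key=lambda n: total_time(n, t_single, overhead_per_node))


for n in range(1, 5):
    print(n, round(total_time(n, t_single=10.0, overhead_per_node=3.0), 2))
print("best:", best_node_count(10.0, 3.0, max_nodes=6))
```

In this model the optimum lies near n = √(t_single / overhead), so with a 10 s single-sensor time and 3 s of overhead per extra node the minimum is at two nodes, and adding a third or fourth node increases the total time. Sensors farther from the source contribute less signal than assumed here, which would shift the optimum lower still.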

3. Results

The integration and operation of smart containers within a container terminal depend heavily on the supporting technological infrastructure. When the containers are monitored by a sparse interconnected sensor network, the system can detect anomalies or changes in just 10 s, but only with a relatively low confidence level of 30%. In such scenarios, the system may miss crucial changes or raise false alarms. By contrast, when the smart container stack uses a denser sensor deployment scheme, with twice the number of sensors per square foot and sensors that are six times more sensitive, the detection capability rises sharply: in the same 10-s timeframe, the confidence level reaches 95%, drastically reducing the chance of errors. The results also shed light on the cost-effectiveness of deploying sensors available on the market. A budget-constrained container terminal that opts for more affordable sensors, which have noisier outputs and lower sensitivity, need not compromise the overall efficiency of the system. These inexpensive sensors can still match the performance of their high-end counterparts, provided they are deployed densely enough: by significantly increasing the number of cheaper sensors in the container stack, the system can achieve the same performance as one built with pricier, high-quality sensors. In essence, whether a terminal opts for state-of-the-art or economical sensors, deployment density and strategy play a pivotal role in ensuring optimal performance and reliability. This insight can aid container terminals in decisions about technology investments and operational strategies.
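One way to see why density can compensate for cheaper hardware is sketched below under two assumptions not stated in the paper: detection time scales as B/As² (background-dominated Poisson counting, with noise level B and sensitivity As in relative units), and summing the counts of n identical sensors that see the same source divides that time by n. The function names are hypothetical, and the sketch ignores the other benefit of density, namely placing sensors closer to the source.

```python
import math


def relative_detection_time(sensitivity: float, noise: float) -> float:
    """Assumed background-dominated scaling t ∝ B / As**2 (relative units)."""
    return noise / sensitivity**2


def cheap_sensors_to_match_one_premium(cheap_sensitivity: float, cheap_noise: float,
                                       premium_sensitivity: float = 1.0,
                                       premium_noise: float = 1.0) -> int:
    """Number of identical cheap sensors whose summed counts reach the premium
    sensor's detection time, assuming t_n = t_1 / n for n fused sensors."""
    ratio = (relative_detection_time(cheap_sensitivity, cheap_noise)
             / relative_detection_time(premium_sensitivity, premium_noise))
    return math.ceil(ratio)


# A sensor with half the sensitivity and twice the noise of the premium unit:
print(cheap_sensors_to_match_one_premium(0.5, 2.0))  # 8
```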

In deploying sensors within a self-communicating container network (smart containers), it is essential to estimate the impact of sensor arrangement and density within the area. We investigated how the distance between sensors affects the time needed to obtain initial detection results, examining how changing sensor spacing within the containers could speed up or slow down the identification of and response to security events. Specifically, we assessed the effect of varying the distance between sensors distributed in containers spanning a length of 15 meters. A crucial parameter in this context is the distance between sensors, denoted r. Our findings indicate a non-linear relationship between r and the time

Concept demonstration of emission detection by a single IoT sensor within an operational radius within a stack of marine containers.

Moreover, the experiment showed that reducing sensor sensitivity by 50%, with all other variables held constant, had a significant effect: tj, the time required for event detection, increased roughly fourfold (to about 400% of its original value). This underscores the critical importance of calibrating sensor sensitivity to optimize the speed of event detection. Increasing background noise levels by 100% led to a roughly 100% increase in the decision-making time. Consequently, to retain rapid decision-making while keeping other parameters constant, As must be raised to approximately 140% of its original value. It is also worth noting the relationship between sensor density and the distance r between sensors in a network. Preliminary approximations suggest that sensor density is proportional to the inverse square of r, i.e.,

These findings emphasize the importance of placing suitable sensors thoughtfully inside containers to improve the speed and accuracy of detecting safety events. Our study aims to provide clear guidelines for configuring such sensor networks in marine containers so that they respond quickly and detect accurately in future deployments on a worldwide scale.
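The sensitivity and noise figures reported in this section are mutually consistent if detection time in the background-dominated regime scales as tj ∝ B/As², where B denotes the background noise level. This proportionality and the symbol B are assumptions of the following worked check, not equations reproduced from the paper; the last line assumes a planar square sensor layout with spacing r.

```latex
\begin{aligned}
t_j &\propto \frac{B}{A_s^{2}} \\
A_s \to \tfrac{1}{2}A_s &\;\Rightarrow\; \frac{t_j'}{t_j} = \frac{1}{(1/2)^{2}} = 4 \\
B \to 2B &\;\Rightarrow\; \frac{t_j'}{t_j} = 2 \\
B \to 2B,\; A_s \to \lambda A_s,\; t_j' = t_j &\;\Rightarrow\; \frac{2}{\lambda^{2}} = 1 \;\Rightarrow\; \lambda = \sqrt{2} \approx 1.41 \\
\text{square grid of spacing } r &\;\Rightarrow\; d = \frac{1}{r^{2}}
\end{aligned}
```

Under this assumption, a factor of about 1.41 corresponds to raising As to roughly 140% of its original value; other planar layouts, such as a hexagonal grid, change only the constant in d ∝ 1/r².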

4. Conclusion and discussions

In conclusion, while the threat of dirty bombs in marine container transportation is real and ever-present, advances in technology, coupled with stringent regulations, offer a beacon of hope. By leveraging smart IoT sensors with optimized data fusion and knowledge extraction techniques, preferably as an edge computing solution, together with novel wireless communication and cybersecurity techniques, we can help ensure that global trade continues unhindered, keeping the world’s economy safe and the transport chain secure. A pragmatic detection approach should use a multi-container system for sensor data fusion to yield preliminary optimal results.47–49 However, the number of containers involved must be kept small to limit communication delays and data packet loss in the IoT wireless environment, as these can increase rapidly with network complexity, potentially leading to system overloads and delays. Advances in edge computing are expected to reduce the computational burden and increase the autonomy of the solution, supporting the smart digitization of security check procedures.

Assessing the feasibility of sensing and communication for container monitoring and tracking in maritime port operations requires a brief look at the wireless media involved. LoRaWAN and NB-IoT are central here because their characteristics are tailored to IoT applications. LoRaWAN stands out for its long range: devices can transmit data over several kilometers with minimal power consumption, which makes it well suited to sensor nodes that must run on battery power for extended periods. This is particularly relevant in large container terminals, where power sources may not be readily accessible. By contrast, NB-IoT, which is backed by major cellular operators and vendors, offers better indoor coverage, support for a massive number of low-throughput devices, and improved power efficiency, although it typically covers shorter distances than LoRaWAN. Both technologies have distinct advantages for container tracking and monitoring: LoRaWAN suits wide-area coverage with sparser sensor deployments, whereas NB-IoT benefits from existing cellular infrastructure and provides reliable data transmission even in densely packed container environments. The choice between them depends on operational requirements, including range, data rate, power consumption, and available infrastructure, which together determine their feasibility in a given sensing and communication scenario in port operations.
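
On LoRaWAN, data rate and power consumption are tied to the spreading factor (SF): higher SFs extend range but lengthen each transmission's time on air, which costs energy and channel capacity. The sketch below implements the LoRa time-on-air formula published by Semtech for its SX127x transceivers; it is offered as background for comparing configurations, not as part of this study. Here `payload_bytes` is the full PHY payload (a LoRaWAN uplink adds 13 bytes of MAC overhead to the application data when no MAC options are present). The defaults (125 kHz bandwidth, coding rate 4/5, 8-symbol preamble, explicit header, CRC on) and the 20-byte reading in the usage loop are assumptions.

```python
import math
from typing import Optional


def lora_time_on_air_ms(payload_bytes: int, sf: int, bw_hz: float = 125_000.0,
                        coding_rate: int = 1, preamble_symbols: int = 8,
                        explicit_header: bool = True, crc: bool = True,
                        low_dr_optimize: Optional[bool] = None) -> float:
    """LoRa packet time on air in milliseconds (Semtech SX127x formula).

    coding_rate: 1..4 for 4/5..4/8.
    """
    t_sym = (2 ** sf) / bw_hz  # symbol duration in seconds
    if low_dr_optimize is None:
        # Low data rate optimization is mandated when the symbol time exceeds 16 ms
        # (SF11 and SF12 at 125 kHz).
        low_dr_optimize = t_sym > 0.016
    ih = 0 if explicit_header else 1
    de = 1 if low_dr_optimize else 0
    t_preamble = (preamble_symbols + 4.25) * t_sym
    numerator = 8 * payload_bytes - 4 * sf + 28 + 16 * int(crc) - 20 * ih
    denominator = 4 * (sf - 2 * de)
    payload_symbols = 8 + max(math.ceil(numerator / denominator) * (coding_rate + 4), 0)
    return (t_preamble + payload_symbols * t_sym) * 1000.0


if __name__ == "__main__":
    # Hypothetical 20-byte sensor reading plus 13 bytes of LoRaWAN MAC overhead.
    for sf in range(7, 13):
        print(f"SF{sf}: {lora_time_on_air_ms(33, sf):7.1f} ms on air")
```

The same reading takes over twenty times longer on air at SF12 than at SF7, which is why range gains on LoRaWAN are paid for in battery life and channel occupancy.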

From a technical perspective, sensor performance is also sensitive to variations in operating conditions, which can cause malfunctions and erroneous readings. Countering this requires extensive computation to determine the optimal operating radius within which the sensors can reliably identify anomalies such as gamma-ray emissions (GREs), which are commonly associated with the illicit radioactive substances used to construct dirty bombs. In addition, the time required to detect a true event may grow geometrically under certain conditions. Adding inputs from adjacent sources to the data set improves the quality of the detection outcome, but at the cost of longer overall processing time, which may exceed the permissible operational window. Consequently, detection reliability remains uncertain and depends on the data supplied by the selected neighboring node. Selecting those nodes is a genuine combinatorial optimization problem, which we leave for future research.
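
One way to frame the neighbor-selection problem is as a 0/1 knapsack: each candidate neighbor contributes an evidence gain (for instance, a log-likelihood ratio, which adds across reports if they are conditionally independent) and costs processing and communication time, and the total time must stay within the permissible window. The sketch below solves that formulation exactly by dynamic programming over integer millisecond costs. The formulation, the function name `select_neighbors`, and the example numbers are illustrative assumptions, not the authors' method; real gains are unlikely to be strictly additive.

```python
from typing import List, Tuple


def select_neighbors(gains: List[float], costs_ms: List[int],
                     budget_ms: int) -> Tuple[float, List[int]]:
    """0/1 knapsack: pick neighbors maximizing total evidence gain within a time budget.

    Returns (best total gain, sorted indices of the chosen neighbors).
    """
    n = len(gains)
    # best[i][t]: max gain using the first i neighbors with total cost <= t
    best = [[0.0] * (budget_ms + 1) for _ in range(n + 1)]
    for i in range(1, n + 1):
        g, c = gains[i - 1], costs_ms[i - 1]
        for t in range(budget_ms + 1):
            best[i][t] = best[i - 1][t]  # option 1: skip neighbor i-1
            if c <= t and best[i - 1][t - c] + g > best[i][t]:
                best[i][t] = best[i - 1][t - c] + g  # option 2: include it
    # Backtrack to recover which neighbors were included.
    chosen: List[int] = []
    t = budget_ms
    for i in range(n, 0, -1):
        if best[i][t] != best[i - 1][t]:
            chosen.append(i - 1)
            t -= costs_ms[i - 1]
    chosen.reverse()
    return best[n][budget_ms], chosen


if __name__ == "__main__":
    # Hypothetical gains and per-neighbor processing times with an 80 ms window.
    total, picked = select_neighbors([3.0, 2.0, 4.0], [40, 30, 50], 80)
    print(f"gain {total}, neighbors {picked}")
```

Exact dynamic programming is practical only for modest budgets and neighbor counts; larger networks would call for heuristics, which is part of the open problem noted above.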

To ensure preparedness in the event of a high-probability detection, additional procedural and legal frameworks must be established. These detection methods can be fully implemented and integrated into operational standards only if a substantial proportion of containers are equipped with the detection systems. A pilot study could be conducted at a single port, using a designated set of equipped containers from one company interspersed with standard containers, to validate the system's effectiveness empirically in a controlled setting.
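
Why a substantial share of equipped containers matters can be seen with a binomial calculation. Assuming, for illustration only, that each neighboring slot around a container independently holds an equipped container with probability f (the equipped fraction of the fleet), the chance of having at least m equipped neighbors out of n is a binomial tail. The neighbor count n = 6 used below (one slot on each face of a stacked container), the threshold m = 2, and the function name are hypothetical.

```python
from math import comb


def prob_at_least_m_equipped(n_neighbors: int, m: int, fraction: float) -> float:
    """Binomial tail: P(at least m of n neighbors are equipped), independent placement assumed."""
    return sum(
        comb(n_neighbors, i) * fraction**i * (1.0 - fraction) ** (n_neighbors - i)
        for i in range(m, n_neighbors + 1)
    )


if __name__ == "__main__":
    for f in (0.1, 0.3, 0.5, 0.8):
        p = prob_at_least_m_equipped(6, 2, f)
        print(f"equipped fraction {f:.0%}: P(>= 2 equipped neighbors) = {p:.3f}")
```

At low equipped fractions, most containers would have too few equipped neighbors for multi-container fusion, which is consistent with the need for broad adoption before operational integration.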

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.