Abstract

Analysis of the literature related to wargaming identifies a requirement for, or a perceived benefit of, immersion and engagement in wargaming. The references generally indicate that the computer is less able than a manual system to facilitate collective engagement; however, there is as yet little empirical evidence to support this. There are also suggestions that players perceive manual games differently to computer wargames. An experiment, derived from the previous analysis, was performed to address the research question: Is there a discernible difference between the levels of players’ engagement in computer wargames versus manual wargames? The experiment provides empirical evidence that there is a difference in players’ engagement with a computer wargame compared with a manual game, and in particular that the manual game provides greater engagement with other players. Hence, where engagement between players is regarded as an important aspect of a wargame for defense applications and is to be encouraged, this provides evidence that the manual approach can indeed be better.

1. Introduction

Military campaigns and operations have been represented in defense and security analysis using a variety of wargaming methods and tools.1–3

The methods used to implement wargames vary quite widely from highly computerized wargames to simple discussion-based wargames with no computer support. The Rapid Campaign Analysis Toolset (RCAT) is an example of a manual wargame. 4

A number of references provide guidance and advice on wargaming implementation and characteristics. One major point on which this guidance is inconclusive is the degree to which manual (table-top) wargames should be used, and are suitable, compared with computer-based or computer-supported wargames. A second point is the identification of a requirement, or at least a perceived benefit, for greater immersion and engagement in wargaming. Immersion is described as part of the player involvement that a wargame needs in order to be useful: unengaged players will not participate properly, and the wargame will therefore not provide satisfactory outputs.

The UK Wargames Handbook 5 produced by the Development, Concepts and Doctrine Centre (DCDC) in the United Kingdom describes a series of example wargames and also covers some key points. It includes good guidance on what works and summarizes the steps of the design process. The Handbook’s guidelines for good wargaming include the need for an adversarial nature; the need for some representation of chance and uncertainty; the recognition that player decisions have primacy; the need for overall control of a wargaming event to ensure the appropriate outputs; an environment in which the participants are aware that it is “safe to fail”; and the need to ensure that the wargame is engaging. The Handbook is written by recognized experts in the field; however, it cites only Perla, 6 Sabin, 7 and McHugh Francis. 8

Sabin 7 states that computerization (of wargames) offers costs as well as benefits, which he discusses at several points throughout his book. He advocates a combination of computer and manual methods, as both have pros and cons; in particular, he maintains that computer displays do not automatically deliver benefits over analogue solutions. One interesting comment concerns students using wargames to explore a historical event: the author states that “hardcopies are used for team playtests since they are easier for groups to use.”

Perla 9 discusses technology and proposes that it is a means of supporting the essence of wargaming, the clash of human wills. He states that electrons “are not always the best the most useful technology to apply.”

Perla, 6 in his original work, proposes that wargame design is partly an art form and quotes Dunnigan’s assertion that there are two fundamentals in wargame design: realism and playability (to make games meaningful).

Longley Brown 10 quotes Sabin and Perla and adds an example from his support of a computer-based military wargame (an analysis game) with a large user group, in which the commanders involved used his manual map display as a better way of briefing their staffs and building common understanding. He also posits that engagement is a key (if not the key) characteristic of a good game; indeed, he devotes a chapter to engagement, which begins “This chapter is central to professional wargames.”

Dunnigan 11 describes computer wargame development in his book, albeit at a time when computerization was in its early stages. More notably, he also (as cited by Perla) provides some basic guidance on design, in particular the need for playability to ensure engagement.

Salen and Zimmerman 12 explore game fundamentals in their Rules of Play. They describe the nature of game play as including experiential, social and representational, and cultural aspects, and they discuss the concept of meaningful play. They note that Huizinga 13 in Homo Ludens states that all play means something and that there needs to be some sense to the play. The goal of any game is meaningful play.

1.1. Summary

In summary, all the modern references generally propose that the computer game or wargame is a very valuable mode of delivery, but there is a supposition that this mode is less able than manual systems to facilitate collective engagement and engagement with the other players in a game.

A further proposition concerns players’ perception of the validity or acceptance of manual games as opposed to computer games: the suggestion is that players perceive the numbers produced by the latter as “more credible.”

Two important elements are inherent in these references. The first is that the guidance is usually presented as based on experience and insight, with a recognition that there is little, if any, primary evidence. The second is the statement that immersion and engagement are vital elements of a game or wargame.

This study was therefore undertaken to explore and evaluate computer and manual games in response to these two themes.

The work investigated the level of immersion and engagement of players, as perceived by those players, within a computer wargame in comparison with a manual wargame.

The specific experiment was derived from the previous analysis with the following research question:

Is there a discernible difference between the levels of players’ engagement in computer wargames versus manual wargames?

This article describes an experiment-based intervention that investigates users’ perceptions of manual and computer wargames and compares their feedback, in order to inform the above guidance with some experimentally derived evidence.

2. Research method

The approach taken to establish whether there is a discernible difference in the levels of players’ engagement was a trial in which a manual wargame and a computer-based wargame were both played and the players were then asked about their level of perceived engagement.

2.1. Potential methods

One method is to play a manual wargame and a computer wargame under a set of consistent conditions and characteristics, ask the players about their level of engagement, and compare the results. The players could be questioned using a survey questionnaire or interviewed. An alternative would be to measure player engagement during play using some form of biometric measurement, for example by measuring parameters that indicate individuals’ level of concentration or arousal and by measuring the amount of communication.

This experiment was intended to be conducted with students in a class environment, so recording individuals’ biometric data during the events would have been a very high and unexpected level of imposition on the players. It also risked reducing the number of participants, since the preparation and specialist equipment required might have deterred some from taking part. Moreover, the act of collecting biometric data could itself affect players’ engagement with the game, potentially distorting the results in a manner similar to the Hawthorne effect. Finally, the researcher and the associated staff involved in the exercises lacked the skills needed to measure these types of data. Therefore, this option was not chosen.

2.2. Chosen method

The approach taken was to create two alternative but similar wargames, to run a set of students of a wargame class through both, and then to ask them to complete an anonymous questionnaire about their levels of engagement against four main criteria. The games were used as part of a class on wargaming and combat modeling, and the students were there to learn about and experience the different methods. The questionnaire was provided afterwards and its completion was optional. The students in the class included serving and retired military officers and other ranks, defense-related civilians, and non-defense-related civilians. To minimize the time needed to complete the questionnaires, they were kept very short, and the only background data collected about participants was their level of experience with computer and manual games.

2.3. Experimental design

The two games were chosen to be as similar as possible in scale, scope, complexity, and length. Team sizes were also the same in both cases, and team members were seated similarly close together; the main difference was that people were seated individually at a PC in the computer case and around a table in the manual case. The test subjects were the available and willing members of the four courses involved. Each course ran each game once, with two courses running the manual game first and two running the computer game first. Questionnaire response was optional, and the two games were sometimes played on different days, so although the same people played both games, a paired analysis was not possible because not everybody responded to both. There is also a risk that non-response indicates non-engagement.

2.4. The wargames

The manual and computer-based wargames used to conduct this experiment are described below.

2.4.1. Manual wargame

The manual wargame, which may also be regarded as a board game (it will be referred to as a manual game from here on), was developed at Cranfield University by the first author as a very simple introductory tactical military game in which a Blue force is set against a Red force. The system is applicable to engagements of any size and to most military conflict settings. In this case, it was used to represent a scenario in which a Red force is positioned in a defensive location and the Blue force attempts to find the Red force, engage it, and push past it to a further objective. The terrain used in this experiment was near Salisbury in the United Kingdom. The wargame rules amount to 2 pages in total, and for this experiment each exercise was introduced by the first author, who explained and demonstrated the rules for around 20 min.

The students were then randomly divided into groups of four to six people and asked to conduct the game within a period of about 1 h. Each group sat together around a map board of about 1 m². The players were divided into two teams, a Red command and a Blue command, but all remained at the same table, with the game run as an “open” game.

The manual wargame system is designed to be used with minimal preparation: all that is required is a method of determining line of sight (LOS) and of measuring distances, usually on an enlarged 1:25,000 map printed on paper.
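As a rough illustration of the two measurements the manual game relies on, the Python sketch below converts a distance measured on the paper map into a ground distance and performs a simple line-of-sight test over a grid of terrain heights. This is an assumed digital analogue, not part of the game as described: the function names, the 2 m eye and target heights, the enlargement factor, and the nearest-cell sampling along the sight line are all illustrative choices.

```python
def ground_distance_m(map_distance_cm: float, scale_denominator: int = 25_000,
                      enlargement: float = 1.0) -> float:
    """Ground distance in metres for a distance measured on a (possibly enlarged) map."""
    # On a 1:25,000 map, 1 cm represents 25,000 cm = 250 m. Enlarging the print by a
    # factor k means each printed centimetre represents 1/k of that ground distance.
    return map_distance_cm / enlargement * scale_denominator / 100.0


def has_line_of_sight(heights: list[list[float]], start: tuple[int, int],
                      end: tuple[int, int], eye_height_m: float = 2.0,
                      target_height_m: float = 2.0, samples: int = 100) -> bool:
    """True if no intermediate terrain cell rises above the straight sight line."""
    (r0, c0), (r1, c1) = start, end
    z0 = heights[r0][c0] + eye_height_m     # observer's eye level
    z1 = heights[r1][c1] + target_height_m  # top of the target
    for i in range(1, samples):
        t = i / samples
        # Nearest grid cell to this point on the line between the two positions.
        r = round(r0 + t * (r1 - r0))
        c = round(c0 + t * (c1 - c0))
        if (r, c) in (start, end):
            continue  # the observer's and target's own cells never block
        if heights[r][c] > z0 + t * (z1 - z0):
            return False
    return True
```

For example, 4 cm measured on an unenlarged 1:25,000 sheet corresponds to 1 km on the ground; on a print enlarged by a factor of 2, the same 1 km measures 8 cm.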

The force elements (FEs) are represented by counters, as shown in Figure 1. They represent groups of three or four vehicles or groups or squads of troops (usually four to eight people). A set of combat factors for different ranges is written at the top left of the counter, and a defense factor at the lower right (4 in the example). The system is turn-based, with each side acting alternately, and turns are repeated until the end of the game, which is determined by the participants.

Figure 1. Example force element.

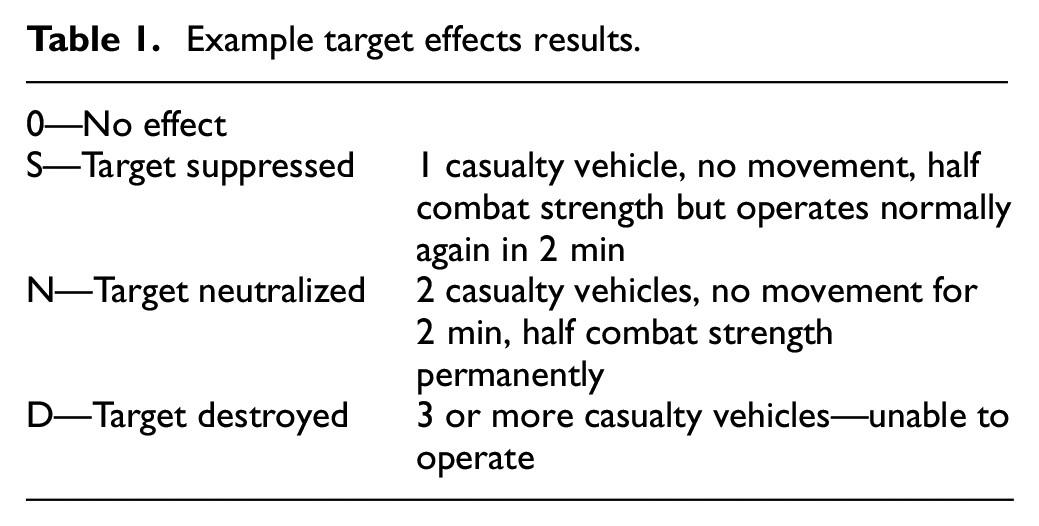

When engaging a target unit, the combat numbers are used to generate a ratio; the attacker then rolls a 10-sided die (or uses a 1–10 random number generator) and consults a combat table to determine the outcome (Table 1).
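The engagement procedure can be sketched in Python as follows. This is a hypothetical reconstruction: the range bands, the reading of the “ratio” as the attacker’s combat factor at the current range divided by the target’s defense factor, and especially the combat table values and outcome names are placeholders invented for illustration; they are not the contents of Table 1.

```python
import random
from dataclasses import dataclass


@dataclass
class ForceElement:
    """A counter: combat factors by range band plus a single defense factor."""
    name: str
    combat_factors: dict[int, int]  # hypothetical: max range of band (m) -> combat factor
    defense: int


# HYPOTHETICAL placeholder table, NOT the paper's Table 1.
# Rows are checked in order: (minimum attack:defense ratio, {outcome: minimum d10 roll}).
COMBAT_TABLE = [
    (3.0, {"destroyed": 7, "suppressed": 3}),
    (1.0, {"destroyed": 9, "suppressed": 5}),
    (0.0, {"destroyed": 11, "suppressed": 8}),  # 11 on a d10 means "cannot destroy"
]


def combat_factor_at(fe: ForceElement, range_m: int) -> int:
    """Combat factor of the shortest range band that covers range_m (0 if out of range)."""
    for max_range in sorted(fe.combat_factors):
        if range_m <= max_range:
            return fe.combat_factors[max_range]
    return 0


def resolve_engagement(attacker: ForceElement, target: ForceElement,
                       range_m: int, roll: int) -> str:
    """Turn the attack:defense ratio and a 1-10 die roll into an outcome."""
    if not 1 <= roll <= 10:
        raise ValueError("roll must be between 1 and 10")
    attack = combat_factor_at(attacker, range_m)
    if attack == 0:
        return "out of range"
    ratio = attack / target.defense
    # First row whose minimum ratio the engagement meets (the 0.0 row always matches).
    thresholds = next(t for min_ratio, t in COMBAT_TABLE if ratio >= min_ratio)
    # Check the hardest-to-achieve outcome first.
    for outcome, min_roll in sorted(thresholds.items(), key=lambda kv: -kv[1]):
        if roll >= min_roll:
            return outcome
    return "no effect"


if __name__ == "__main__":
    blue = ForceElement("Blue troop", {500: 8, 1500: 4}, defense=3)
    red = ForceElement("Red section", {1000: 6}, defense=4)
    print(resolve_engagement(blue, red, 1200, random.randint(1, 10)))
```

Passing the die roll in as an argument, rather than rolling inside the function, keeps the resolution step deterministic and easy to check against a printed table.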

Table 1. Example target effects results.

2.5. Computer wargame

The computer wargame used was CONTACT, a computer-based wargame developed in the United Kingdom and used by the UK MoD and several overseas militaries. In education, CONTACT is used to provide a simple introduction to a computer-based military wargame, so only a limited set of its functionality is exposed and used.

The CONTACT scenario used for this study pits a Blue force against a Red force. CONTACT is applicable to forces of up to battalion level as a whole and represents individual vehicles or groups of vehicles at troop level (three or four vehicles).

In this case, it was used to represent the same scenario as that used in the manual game, with a Red force positioned in a defensive location near Salisbury and the Blue force attempting to find the Red force, engage it and push past it to a further objective. The scenario setting and goals were deliberately almost identical to the manual game.

The exercise was introduced by a lecturer, who explained the mechanics of the computer game, including how to move systems and conduct engagements, and demonstrated them for around 20 min. This lecturer was not the same individual who had led the manual wargame exercise.

The students were then randomly divided into groups of five to six people, with each student in front of a computer screen linked to those of the other four or five group members. They sat next to each other, approximately 1 m apart. Each player had a set of units associated with their workstation and under their sole control, and players in the same group could communicate with each other to complete the mission. The exercise was conducted using CONTACT within a period of about 1 h, the same duration as the manual game.

2.6. Data gathering—questionnaire for manual versus computer wargame

The questionnaire was developed as a very quick, optional post-exercise data-gathering tool, with the emphasis on minimal time and burden for the students. The questionnaires were not tied to individuals, so the number completed could be (and was) different between the computer and manual wargame sessions. The analysis therefore compared the responding groups as a whole; no paired analysis was possible.

The first set of questions asked about the respondent’s level of experience of PC-based computer games and, separately, of boxed/table-top games. Each was asked on a 3-point scale:

Not at all;

A little/sometimes;

Regular player.

The second, and main, set of questions asked about the perceptions of the respondents about their levels of engagement. These were as follows:

During the exercise how immersed did you feel as an individual in your role?

During the exercise how engaged did you feel as an individual in your role?

During the exercise how engaged did you feel with other participants in the exercise?

During the exercise how credible did you feel the simulation/game system was?

All were asked using a 4-point scale as follows:

Not at all;

Somewhat;

Considerably;

Completely.

The first three questions were designed to elicit a simple response allowing the players to gauge their own level of engagement and immersion. No definition was given of the words “immersion” and “engagement”; the first two questions were therefore designed to identify whether players, using their own definitions of the words, perceived any difference between the two. The first two questions related to the individual and their interaction with the game system; the third extended this to the other participants in the exercise. The fourth question addressed the subjects’ perception of the credibility of the tools.

3. Experiments and data formats

There were four separate experimental runs conducted at Cranfield University, led by the same people in every case. The scenarios and tools remained identical across the four occasions; the only differences were the subjects and the order in which the games were played. The subjects were students in four different classes, each within a short course that included exposure to wargaming. These short courses all ran within a single year.

The first trial was in May 2019 with the computer wargame run first and the manual game a day later.

The second was on two consecutive days in June 2019 with the manual game first.

The third was in September 2019 with the manual game run 2 days ahead of the computer exercise.

The final run was conducted in November 2019 with the computer wargame run first and the manual game a day later.

The questionnaire results for each trial were captured, recorded by date of trial, and then formatted for analysis.

The responses of the participants who completed the questionnaires were then converted into numerical form for each of the six questions, as follows.

The level of experience was converted into a value of 1, 2, or 3 according to the response:

Not at all = 1;

A little/sometimes = 2;

Regular player = 3.

For the other four questions, which relate to engagement, a response of “not at all” is scored 0 and the other responses are given escalating values of 1, 2, and 3, as follows:

Not at all = 0;

Somewhat = 1;

Considerably = 2;

Completely = 3.

The average score on these scales was then calculated for each group and for the combined group. The results were first examined by inspection, and analysis of variance (ANOVA) was then used to establish whether the differences were statistically significant.
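The coding and averaging steps can be expressed as a short Python sketch. The two dictionaries mirror the scales listed above; the function names and the list-of-strings input format are assumptions for illustration. The combined mean here pools all individual responses (so larger groups carry more weight), which is an assumption about how the combined group was computed.

```python
from statistics import mean

# Numerical codes for the two questionnaire scales described above.
EXPERIENCE_CODES = {"Not at all": 1, "A little/sometimes": 2, "Regular player": 3}
ENGAGEMENT_CODES = {"Not at all": 0, "Somewhat": 1, "Considerably": 2, "Completely": 3}


def mean_score(responses: list[str], codes: dict[str, int]) -> float:
    """Convert verbal responses to their numerical codes and return the average."""
    return mean(codes[response] for response in responses)


def group_means(responses_by_group: dict[str, list[str]],
                codes: dict[str, int]) -> dict[str, float]:
    """Mean score for each group, plus a pooled mean over all responses."""
    result = {group: mean_score(rs, codes) for group, rs in responses_by_group.items()}
    pooled = [r for rs in responses_by_group.values() for r in rs]
    result["combined"] = mean_score(pooled, codes)
    return result
```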

4. Analysis

4.1. Overall combined results

Across the 4 experimental sessions, 79 questionnaires were returned in total: 44 for the computer wargame and 35 for the manual wargame. These came from a pool of 53 students (the sum of the four class sizes), many of whom responded for both games.

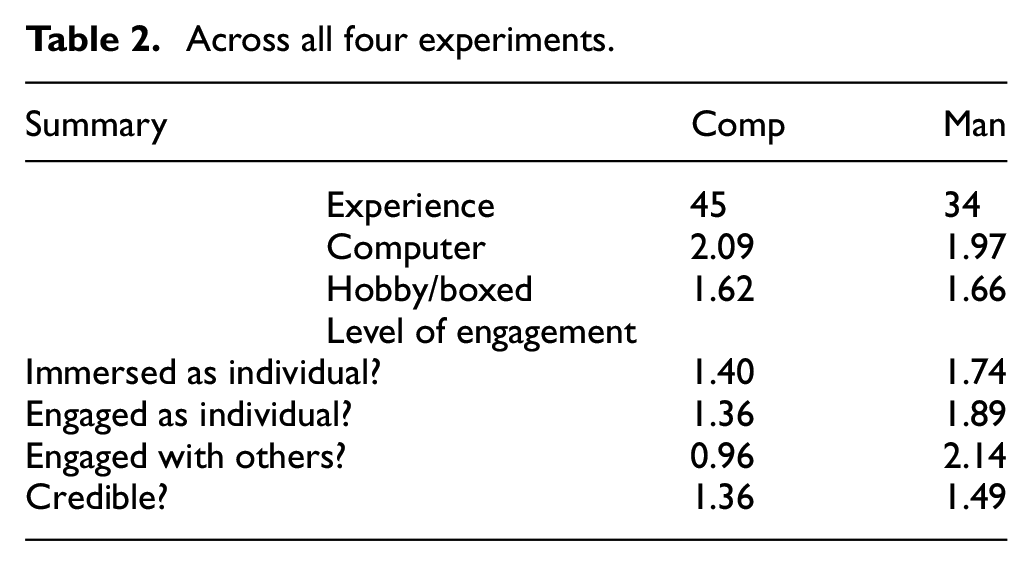

Table 2 shows the results for the scores against experience and the levels of engagement.

Table 2. Results across all four experiments.

The first observation concerns the participants’ level of experience. Using the numerical scores, the computer-game and manual-game respondents have very similar levels of experience: close to 2 (“a little/sometimes”) for computer simulations, and close to 1.6 for boxed/hobby games, roughly mid-way between no experience and a little/some. Overall, therefore, the group had more experience of and familiarity with computer simulations than with boxed/hobby games.

The results averaged across all respondents for the engagement questions show higher scores for the manual game on every question. The score for “how immersed you felt as an individual” averages 1.40 for the computer game and 1.74 for the manual game.

The next question, “How engaged were you as an individual,” shows a similar pattern and difference. This time the computer result is 1.36, very close to its immersion score, while the manual game, at 1.89, is slightly higher than its immersion score.

The next question asks about the level of engagement with others in the exercises. Recall that these are team exercises with groups of four to six players in the same game. For this question, the computer responses indicate a level of engagement of 0.96 (i.e., less than “somewhat”), while the manual game level is 2.14, above the level of “considerably.”

The final question concerns credibility. The level for the computer game is 1.36 versus 1.49 for the manual game, a very slightly higher value for the manual game.
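Subtracting the combined means quoted above gives the raw manual-minus-computer difference for each question. The Python sketch below simply reproduces that arithmetic from the reported figures; the dictionary layout and function name are illustrative choices. The resulting raw differences (0.34, 0.53, 1.18, and 0.13) can be compared with the course-adjusted differences from the fitted ANOVA model reported later, which also account for the test run.

```python
# Combined means (computer, manual) as reported in the text above.
COMBINED_MEANS = {
    "immersion (individual)": (1.40, 1.74),
    "engagement (individual)": (1.36, 1.89),
    "engagement with others": (0.96, 2.14),
    "credibility": (1.36, 1.49),
}


def raw_differences(means: dict[str, tuple[float, float]]) -> dict[str, float]:
    """Manual-minus-computer difference in mean score for each question."""
    return {q: round(manual - computer, 2) for q, (computer, manual) in means.items()}


for question, diff in raw_differences(COMBINED_MEANS).items():
    print(f"{question}: {diff:+.2f}")
```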

There now follows a brief discussion of the individual experiments and the variations between them.

4.2. Discussion of results by each experiment

4.2.1. Experiment 1 May 2019

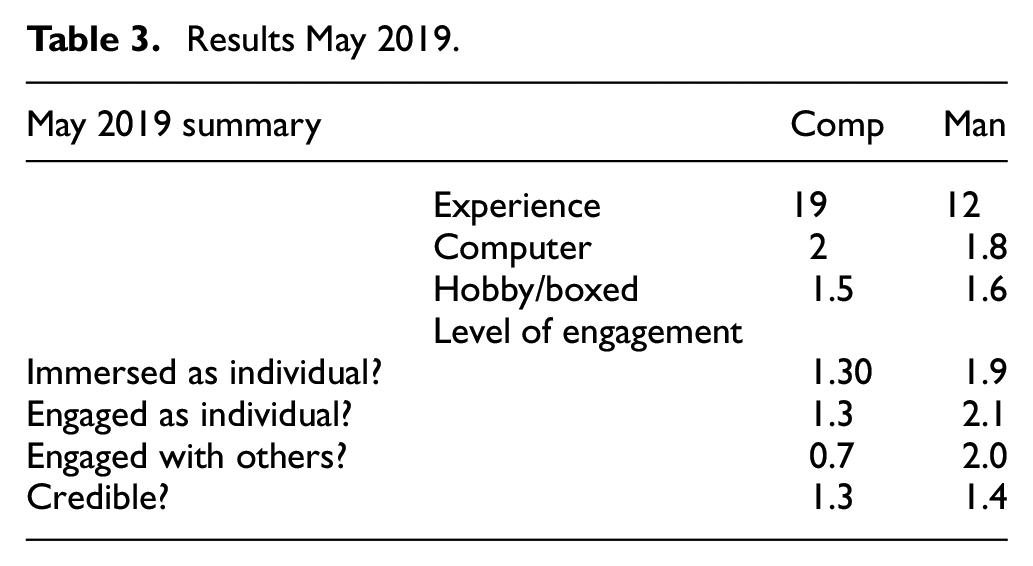

For this experiment, the total class size was 20. Some 19 respondents completed the questionnaires for the computer wargame and 12 for the manual wargame. The computer experiment was run 1 day before the manual one. Table 3 shows the results for the scores for the levels of experience and the levels of engagement.

Table 3. Results, May 2019.

The first observation is in the level of experience attached to the groups. Using the numerical scores, there is again a very similar score for the overall (combined) level of experience in computer simulations (close to a score of 2, “a little/some” experience and also again for the Boxed/Hobby Games which is close to 1.6—mid-way between no experience and a little/some).

The results averaged across all respondents for the engagement questions again show higher scores for the manual game on all questions.

The scores for “how immersed you felt as an individual” and “How engaged were you as an individual” show a similar pattern, with a similar difference between the games. This provides another indication that these subjects were consistent and interpreted immersion and engagement as the same.

For engagement with others, the computer responses show a level of 0.7, lower than in the combined data and below “somewhat,” while the manual game level is 2, exactly the level of “considerably.”

The credibility question shows a level of 1.3 for the computer versus 1.4 for the manual game, so the scores are very close, with the same sort of small “lead” for the manual game.

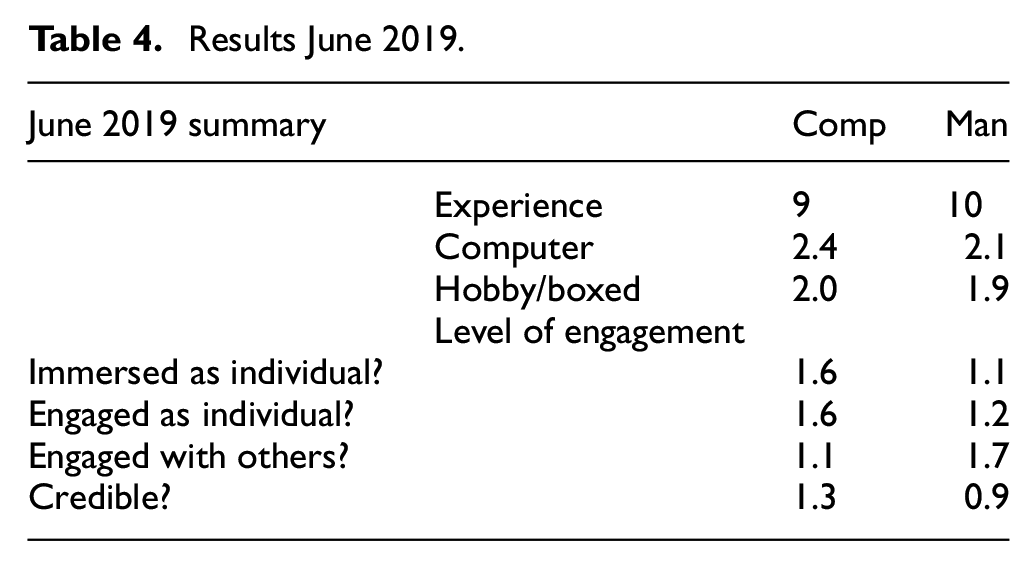

4.2.2. Experiment 2 June 2019

For this experiment, the total class size was 11. Of these, some 9 respondents completed the questionnaires for the computer wargame and 10 for the manual wargame. In this case, the computer experiment was run 1 day after the manual one. Table 4 shows the results for the scores against experience and the levels of engagement.

Table 4. Results, June 2019.

The experience scores for computer simulations are again similar between the two respondent groups, at 2.4 and 2.1, a little higher than the overall average and above the “a little/sometimes” level. The scores for boxed/hobby games are also higher, at close to 2.

The results averaged across all respondents in this experiment differ from those of the first experiment and of the overall combined group. Here, the score for “how immersed you felt as an individual” averages 1.6 for the computer game and 1.1 for the manual game. The 1.6 is mid-way between “Somewhat immersed” and “Considerably immersed,” while the manual level is close to “somewhat.”

The next question “How engaged were you as an individual” has a similar set of scores and difference to the overall.

The next question asks about the level of engagement with others in the exercises. Here, the computer responses indicate a level of 1.1 (just above “somewhat”), but the manual game is again higher at 1.7, approaching the level of “considerably.”

The final question for credibility shows a level for the computer as 1.3 versus the manual at 0.9 and so the manual game is lower overall this time by a notable margin.

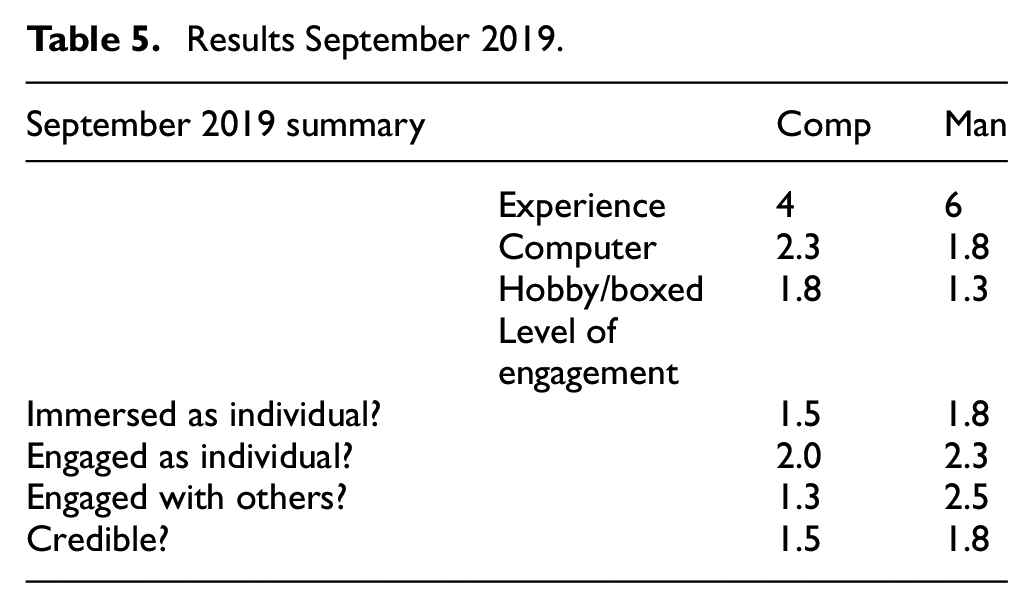

4.2.3. Experiment 3 September 2019

For this experiment, the total class size was 7. Four respondents completed the questionnaires for the computer wargame and six for the manual wargame. The computer experiment was run 3 days after the manual one (i.e., in the same order as experiment 2). Table 5 shows the results for the scores against experience and the levels of engagement.

Table 5. Results, September 2019.

The first observation concerns the participants’ level of experience. In this case, the two respondent groups differ in their experience of computer simulations, scoring about 2.3 (computer respondents) and 1.8 (manual respondents). For boxed/hobby games, the scores are 1.8 and 1.3. The group overall therefore has more experience of computer games.

The results averaged across all respondents for the engagement questions show higher scores for the manual game on all questions.

The score for “how immersed you felt as an individual” averages 1.5 for the computer game and 1.8 for the manual game. The manual score is close to a 2, that is, “Considerably immersed.”

The next question, “How engaged were you as an individual,” has higher scores overall; this time the computer result is 2. This is clearly different from the computer score of 1.5 for immersion, which is a concern because it suggests that respondents may have interpreted immersion and engagement inconsistently.

For engagement with others, the computer responses indicate a level of 1.3, just above “somewhat,” while the manual game level is 2.5, well above the level of “considerably.”

The final question for credibility levels shows a level for the computer as 1.5 versus the manual at 1.8 and so is close with a small “lead” for the manual.

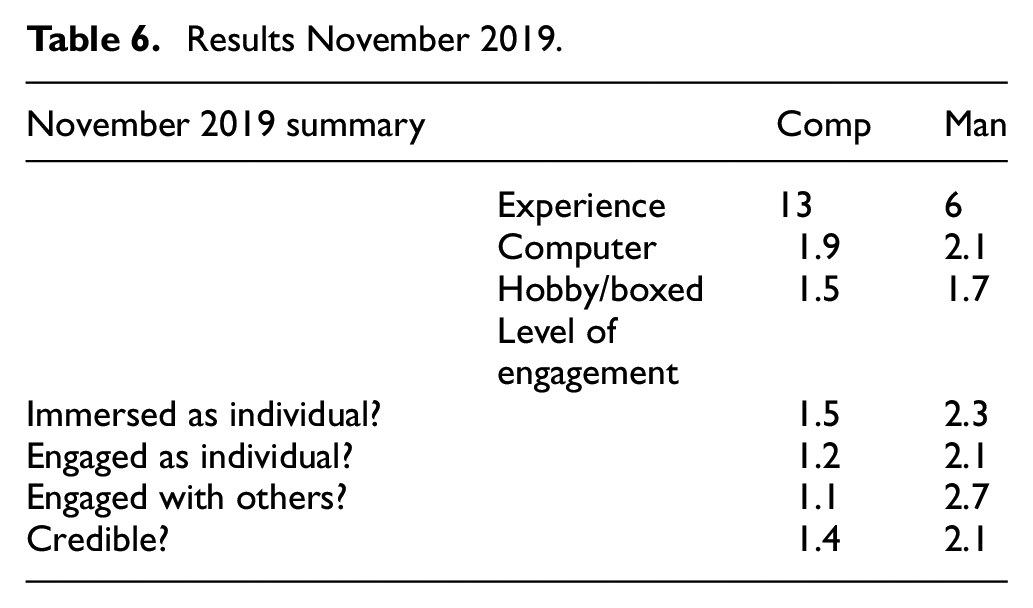

4.2.4. Experiment 4 November 2019

Some 13 respondents completed the questionnaires for the computer wargame and 6 for the manual wargame. The course size was 15. Table 6 shows the results for the scores against experience and the levels of engagement. In this case, the computer experiment was run 1 day before the manual.

Table 6. Results, November 2019.

The first observation is in the level of experience attached to the groups. Using the numerical scores, there is again a very similar score for the level of experience in computer simulations (close to a score of 2, “a little/some” experience and also again for the Boxed/Hobby Games which is close to 1.6—mid-way between no experience and a little/some).

The score for “how immersed you felt as an individual” averages 1.5 for the computer game and 2.3 for the manual game. The 1.5 is mid-way between “Somewhat immersed” and “Considerably immersed” while the manual score is 2.3, a little above “Considerably immersed.” This is a bigger difference than the overall average.

The scores for “How engaged were you as an individual” show a similar pattern and difference, although slightly lower; this time the computer result is 1.2, a little below its score for immersion.

For engagement with others, the computer responses indicate a level of 1.1 (just above “somewhat”), while the manual game level is 2.7, well above “considerably” and approaching “completely”: a considerable difference in this instance.

The final question concerns credibility. Here again, the difference is larger than in the other experiments, with a level of 1.3 for the computer versus 2.1 for the manual game, so the manual game was regarded as more credible.

4.3. Analysis of variance

ANOVA was applied to the combined data from all four tests, with each test included as a factor within the overall analysis. The data were formatted as a set of scores for each respondent with two explanatory variables: the experimental run (courses 1–4) and whether the response was for the computer or the manual game. The response variables were the level of engagement as an individual, the level of immersion as an individual, the level of engagement with others, and the degree of credibility.

The ANOVA examined the difference between the manual and computer sets of data, allowing for possible differences between test runs (courses), in the outputs for the degree of engagement, immersion, and credibility. For all four response variables, standard residual plots showed approximate normality and constant variance, so that the results should be valid. For all four response variables, the factor for test run was not statistically significant, indicating no evidence of a difference between the four courses. When this was recoded as manual first versus computer first, there was also no evidence of a difference.
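One way to reproduce the kind of additive model described here is an ordinary least-squares fit of each response on course and game mode, reading off the mode coefficient and its standard error. The NumPy sketch below rests on assumptions about the model form (treatment coding, course and mode as additive factors, and the 0–3 scores treated as numeric, as in the text); the software the authors used is not stated. Because game mode has one degree of freedom, the F test for mode adjusted for course equals the square of the coefficient’s t statistic. The same model could also be fitted with statsmodels, using `ols("score ~ C(course) + C(mode)", data).fit()` followed by `anova_lm`.

```python
import numpy as np


def fit_mode_effect(course, manual, score):
    """Fit score ~ course + mode by least squares.

    Returns the manual-minus-computer coefficient, its standard error, and the
    residual degrees of freedom.
    """
    course = np.asarray(course)
    manual = np.asarray(manual, dtype=float)  # 1 = manual game, 0 = computer game
    y = np.asarray(score, dtype=float)        # coded 0-3 questionnaire scores
    # Treatment coding: intercept, a dummy for every course except the first, mode dummy.
    columns = [np.ones_like(y)]
    columns += [(course == level).astype(float) for level in np.unique(course)[1:]]
    columns.append(manual)
    X = np.column_stack(columns)
    beta, *_ = np.linalg.lstsq(X, y, rcond=None)
    n, p = X.shape
    residuals = y - X @ beta
    sigma2 = residuals @ residuals / (n - p)  # residual mean square
    cov = sigma2 * np.linalg.inv(X.T @ X)     # covariance of the coefficients
    return float(beta[-1]), float(np.sqrt(cov[-1, -1])), n - p
```

Given the returned coefficient, standard error, and degrees of freedom, a two-sided p value could be obtained as `2 * scipy.stats.t.sf(abs(coef / se), df)`. The function has only been checked here on synthetic data, not on the study’s responses.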

Consider first the result for the credibility of the manual and computer wargames. The p value for this comparison is 0.23 (23%), much higher than the conventional 5% significance level, so the ANOVA does not suggest any significant difference in perceived credibility between the manual and computer wargames.

The results for individual immersion are, however, more interesting. The inspection above indicated an overall slightly higher level for the manual game, but only marginally so; the ANOVA indicates that the difference is significant, with the manual games more individually immersive than the computer games (p < 0.05). The fitted model gives a difference between computer and manual of 0.39 (standard error, 0.17).

The result for individual engagement is similar, again indicating a significant difference, with the manual games more engaging than the computer games (p < 0.01). The fitted model gives a difference between computer and manual of 0.51 (standard error, 0.19), similar to the immersion result.

The final question regarding the engagement with others shows a very clear difference in favor of manual games (p < 0.001). The fitted model shows that the difference between computer and manual is 1.19 (standard error, 0.19).

5. Conclusion

The initial analysis of themes in the literature identified a requirement for, or perceived benefit of, immersion and engagement in wargaming. This study was undertaken in response and investigated the level of immersion and engagement of players, as perceived by those players, within a computer wargame in comparison with a manual wargame.

The references generally indicate that the computer-based mode is less able to facilitate collective engagement and engagement with other players than the manual system. There are also suggestions that players perceive manual games differently to a computer wargame.

The experiment has been derived from the previous analysis with the following research question:

Is there a discernible difference between the levels of players’ engagement in computer wargames versus manual wargames?

This experiment has provided empirical evidence that there is a difference in players’ engagement with a computer wargame compared with a manual game, both as individuals and, more starkly and clearly, in engagement with others.

For the complete set of responses, there is a clear difference between the computer and manual games in the degree of engagement with others in the exercises. Across all the exercises, the computer responses indicate a level of engagement below 1 (i.e., less than “somewhat”), while the manual game level is 2.14, above the level of “considerably.” This difference is present in the average across the whole set of experiments and also occurred within each individual experiment, and it is clearly confirmed by the ANOVA. Since wargames are often described as primarily an act of communication, this finding for “Engagement with others” bears directly on that function.

For the combined data set, the computer game is rated lower than the manual game for “how immersed you felt as an individual.” This is not a large difference, and in one experiment it was reversed. The results for “How engaged were you as an individual” are very similar. The ANOVA indicates that this difference, although small, is statistically significant.

The final question concerns credibility. The level for the computer is 1.36 versus 1.49 for the manual game. There is a small “lead” for the manual games, but it is very small, and it is consistent across the experiments except for one case. The ANOVA, however, indicates that there is no significant difference.

The experiments have successfully investigated the research question. Possible improvements would be to increase the sample size and to compare individuals’ paired scores, but anonymity requirements and agreements precluded this in this case. Additionally, the number of players in each manual game was small, and engagement levels may differ with larger groups.

This work was not intended to provide definitive evidence but to investigate the perceived differences between computer-based and manual wargames, especially with respect to engagement. The intention was to provide an evidence base that would either support or oppose the existing recommendations. The results have shown that the levels of engagement are measurably higher with the manual game mode, by a considerable margin. The results within this paper are intended to add quantified and valid data to an important area.

The results provide evidence to support some guidance for game design and configuration. However, it should be recognized that while manual games do appear to offer the advantage of greater engagement, a computer game, a manual game, or a combination of the two may each be appropriate for specific purposes where other factors or requirements need to be met. Where a computer wargame is used, the consequent risk of reduced engagement should be noted and mitigations employed as appropriate.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.