Abstract

Academics have lamented that practitioners do not always adopt scientific evidence in practice, yet while academics preach evidence-based management (EBM), they do not always practice it themselves. This paper extends prior literature on the difficulties of engaging in EBM with insights from behavioral integrity (i.e., the study of what makes individuals and collectives walk their talk). We focus on leader development, which is widely used but often critiqued for lacking evidence. Analyzing 60 interviews with academic directors of leadership centers at top business schools, we find that the selection of programs does not always align with scientific recommendations, nor do schools always engage in high-quality program evaluation. Respondents further indicated a wide variety of challenges that help explain the disconnect between business schools claiming A but practicing B. Behavioral integrity theory would argue that these difficulties are rooted in the lack of an individually owned and collectively endorsed identity, namely that of an evidence-based leader developer (EBLD). A closer inspection of our data confirmed that the lack of a clear and salient EBLD identity makes it difficult for academics to walk their evidence-based leader development talk. We discuss how these findings can help facilitate more evidence-based leader development in an academic context.

A content analysis of 21st-century mission statements of top business schools indicates that the majority sees leader development as critical (Kniffin et al., 2020). Most schools argue that, as important suppliers and gatekeepers of the leadership pipeline, business schools play a crucial role in the formation of future leaders of industries and, more broadly, society. These claims that schools make concerning the importance of leader development may be construed as window dressing (Bromley & Powell, 2012); however, accreditation institutes, prospective students, corporate partners, and broader societal stakeholders (Khurana, 2007) likely expect business schools to live up to their espousal of high-quality leader development.

Prior theory and research suggest that business schools may be in an optimal position to produce better leaders (Day & Dragoni, 2015; Lacerenza et al., 2017; Reyes et al., 2019). For instance, students are often in a life stage that is optimal for development, schools have the appreciable time – often year(s) – required for behavioral change, and there is a wide range of initiatives and a large team of educators to help cultivate students as leaders. Additionally, most business schools hire expert academics who have close knowledge of the empirical basis for what does (not) work in terms of leadership development, and there are academic standards to guarantee quality education. Business schools can thus claim a unique position: In the massive market for leader development (Schwartz et al., 2014), they have the possibility of making a unique selling proposition of being evidence-based – meaning that the programs they offer are based on what has been shown to “work” in research (i.e., are effective at developing leaders), whereas the broader market seems to be flooded with “fads and fashions” (Simons, 1999) that may hold great promise but often lack evidence in support of their effectiveness.

Some, however, have questioned whether leader development programs (LDPs) at business schools are truly as evidence-based as would be expected from academic institutions (DeRue et al., 2011; Klimoski & Amos, 2012; Pfeffer, 2015; Vermeulen, 2011). For instance, LDPs are not always taught by experts with relevant academic training (e.g., Charlier et al., 2011). Additionally, LDPs continue to use popular tools (e.g., Myers–Briggs Type Indicator [MBTI]) that have little academic basis (Grant, 2013). Finally, LDPs are often not rigorously evaluated, focusing on student satisfaction (Kaiser & Curphy, 2013; Pfeffer, 2015; Tews & Noe, 2019) rather than, for instance, demonstrating behavioral change. In their review of LDPs in higher education, Reyes et al. (2019) concluded: “in practice, LD programs generally use approaches that are convenient and inexpensive rather than rooted in science” (p. 10). We extend prior investigations by examining the extent to which business schools live up to the promise of “leader development that works.”

Beyond the descriptive RQ1, we were interested in exploring what drives academics to forgo evidence-based management (EBM). Researchers have highlighted a variety of reasons why practitioners do not adopt EBM (e.g., not being well-trained in evidence-based thinking; Bartunek, 2011; Briner & Walshe, 2013; Giluk & Rynes-Weller, 2012; Pfeffer & Sutton, 2006), but there is little research exploring the extent to which, and the reasons why, academics do or do not adopt EBM. Considering their academic training and academic institutional context, we assume that academics have more ability, motivation, and opportunity (Rousseau & Gunia, 2016) to engage in EBM than their practitioner counterparts. As a result, when even academics fail to adopt EBM, it may highlight additional challenges to adopting EBM.

To answer these two research questions, we performed a qualitative analysis of in-depth interviews conducted with 60 academic directors of leadership centers in renowned schools. To foreshadow the findings, we show, in regard to RQ1, a less-than-ideal situation for EBM, both in terms of selection (choice of programs and teaching methods) and evaluation (assessment of programs). In regard to what drives the nonadoption of EBM (RQ2), our qualitative analysis first revealed different rationales (e.g., insufficient resources) explaining nonadoption of EBM, which were linked to a higher order set of challenges (e.g., lack of external monitoring of quality). Finally, as a root cause of these rationales and challenges, we highlight the importance of an individually owned and collectively endorsed identity of “evidence-based leader developer” (EBLD); that is, defining oneself as evidence-based in (1) what (i.e., content) and (2) how (i.e., method) one develops leaders, and using prior evidence to (3) select as well as (4) evaluate one's own LDPs to refine and add to prior evidence regarding effectiveness.

Our findings extend prior literature on EBM by drawing insights from the study of the drivers of behavioral integrity (BI), that is, the extent to which individuals as well as larger social entities walk their talk (Argyris, 1997, 1998; Bromley & Powell, 2012; Kerr, 1978; Simons, 2002). Although not walking one's talk in organizations is quite common (Effron et al., 2018), it is also detrimental in terms of credibility (Simons, 2002). At an individual level, walking one's talk is an extension of who one is (i.e., one's authentic self), and thus what one really cares about (Leroy et al., 2012). This requires the individual to have a clear and salient identity to help align what they have espoused with what is enacted (Simons, 2002). However, even when the EBLD identity is clear to an individual, there can be competing institutional priorities that might prevent enacting that identity, such that only when the identity is collectively endorsed will people be able to consistently walk their EBLD talk (Bromley & Powell, 2012).

Although the importance of the strength of one's individual (Rousseau & Gunia, 2016) and collective (Cascio, 2007; Rynes et al., 2007) EBM identity has been suggested by prior research, we more fully clarify its importance in aligning evidence-based identity espousal and enactment. Much of the work on EBM has focused on the need to train and improve critical thinking as a competency in order to promote the use of evidence-based practices (e.g., Rousseau & Gunia, 2016), and while these are the building blocks required to be more evidence-based, identity is another factor that shapes individuals’ behavior. Identities have a motivational capacity to hold individuals accountable to their self-view (Ashforth & Mael, 1989; Festinger, 1957; Hogg & Terry, 2000; Lord & Hall, 2005), and when identities are collectively shared, group members create structures and procedures to help solidify and protect the identity's meaning (Bromley & Powell, 2012). Our qualitative analysis of 60 interviews suggests that being evidence-based is not only a competency to develop (Rousseau & Gunia, 2016) but also a professional identity that can help people make choices between competing demands.

Building on these findings and insights, we discuss what is needed to build stronger and clearer individual and collective identities around being an “evidence-based leader developer.” We offer a multilevel, multistakeholder approach to addressing this issue. These solutions range from challenging our readers to reflect on the extent to which they identify with being an EBLD, to reconsidering the extent to which an evidence-based teaching identity should be part of our (philosophical) training as researchers and teachers, to considering how existing (e.g., accreditation) as well as potentially new (e.g., awards) incentives could put EBLD more firmly in the spotlight. Ultimately, these efforts could help business schools live up to their claim that they promote “leader development practices demonstrated to work,” thus making them a clearer differentiator and exemplar in the leader development industry.

Evidence-Based Leader Development in Business Schools

Leader development can be defined as “the expansion of the capacity of individuals to be effective in leadership roles and processes” (Day & Dragoni, 2015, p. 134). We explicitly focus on the development of individual leaders (the primary focus of business schools) and not on leadership development, which is focused on the leadership capacity of a collective. We adhere to this definition but limit the scope of LD practices under investigation. Specifically, we are interested in leader development as it occurs in a business school context, including formal leadership curricula as well as more informal (e.g., action learning) and extracurricular (e.g., coaching) activities in degree (undergraduate and graduate) as well as nondegree (executive education) programs. Although there are parallels with other organizational contexts, a key distinguishing feature is that business schools are part of an academic institution where being “evidence-based in leader development” is explicitly or at least implicitly part of the educational proposition (i.e., we know what works). As such, we deemed this an appropriate context to study challenges in walking one's evidence-based talk (Simons, 2002).

A second focus of this study is on “evidence-based leader development.” As we highlight later, our respondents have a wide variety of ideas of what it means to be “evidence-based” that do not always correspond with the literature on evidence-based thinking (Rousseau & Gunia, 2016). For instance, some view being evidence-based as only applicable to their niche of research, but others extend it further to their teaching role. In defining evidence-based leader development, we focus on examining all of the above, including evidence-based thinking in what one develops (e.g., knowledge, skills, attitudes, abilities, values, leader identity), as well as how one develops. Having clarified our definition, to maintain an open perspective, we broadly pooled our participants’ perspectives on development that has been “demonstrated to work.”

Methodological Approach

To better understand the current state of quality standards of leader development in business schools, we interviewed 60 academic directors of leadership centers from top-ranked business schools (as determined by the Financial Times top 100 MBA World Ranking in 2019). The majority of these directors were located in the United States (60%) and Europe (30%), with a small percentage from Asia (5%) and Australia (5%). Twenty percent were female; 80% were Full Professors and 20% were Associate Professors. Each academic director had a significant track record in publishing leadership research. These academic directors are thus in a crucial position to provide insight into our two research questions (Kumar et al., 1993). Furthermore, the academic directors in our sample indicated that in their role they are typically a central connector within business schools between leadership professors, clinical faculty, administrators, potential students, and marketeers who are selling programs. In other words, they can offer important insight into how and why things work as they do within their school.

Our interview consisted of two parts. First, in relation to RQ1, we asked these directors about the leader development curricula at the school, the evidence they or others have collected to support the choice of these curricula, and the standards for professional quality they had in place to ensure that high-quality leader development occurred. Information gathered from the interviews was cross-referenced and complemented with information found on the leadership center websites and additional documentation that was provided by interviewees. Second, and consistent with RQ2, we asked the center directors what drives the (lack of) adoption of evidence-based leader development. To warm up respondents to this question (part of RQ2), we first asked respondents: “What evidence do you have that your program works as espoused?” A full listing of the questions used in our interviews is presented in Appendix A.

Data Analysis

Our data analyses and results are both descriptive and interpretative. For RQ1, we used the interviews as a fact-finding tool, trying to accurately identify which practices are used and which methods are in place for evaluating their effectiveness across the 60 leadership centers. Our interview approach (as compared with alternative approaches such as a survey) allowed us to check that respondents and interviewers had the same understanding of the questions, thus enhancing the validity of the data collected. We also analyzed archival data (e.g., websites, course documents) to augment the validity and generalizability of our data. For RQ2, we employed a more interpretative stance and conducted a thematic analysis to explore prevalent themes across the interviews (Miles & Huberman, 1994). Initial analyses were done by the first and second authors, who checked differences in code names and interpretations until consensus was reached (Gioia et al., 2013). In a second stage, we pooled the perspectives of our coauthors to debate and clarify our initial findings, further refining them to ensure that we had reached saturation.

Our coding was done in three steps. First, we coded directors’ rationales for their programs either being or not being evidence-based. Second, we discussed these concepts and organized them into second-order themes, clarifying them and identifying the links between related concepts, to arrive at a set of challenges that could help explain why their leader development programs are or are not evidence-based. Third, we compared the findings to prior theory and research on BI to see if we could identify a root cause that addressed the key challenges identified in step two. Drawing on the themes that emerged and the literature on BI, we identified the identity of an “evidence-based leader developer” as a key root cause driving misalignment between espousal and enactment. We then revisited our data and confirmed challenges around fully adopting an EBLD identity for many respondents and their institutions.

Findings

To assess the extent to which LDPs in business schools are evidence-based, we needed a clear understanding of what it means to be evidence-based. Based on ideas in evidence-based medicine (Pfeffer & Sutton, 2006), evidence-based management is often equated with using the best quality evidence available for teaching about managerial decision making. One source of evidence particularly relevant to an academic context is systematic research on leader development. This would include the science of leadership as well as the science of leader development. Whereas the science of leadership informs us which approaches to leadership are effective and under which conditions (DeRue et al., 2011; Lord et al., 2017), the science of leader development highlights the methods by which we can effectively develop specific knowledge, skills, and abilities, but also values and identities, in leaders (Day & Dragoni, 2015; Lacerenza et al., 2017). Although the evidence produced by leader-development science is high-quality, with reviews and meta-analyses highlighting the closest approximation to the truth of “what works” within an ever-evolving state of scientific advancement (Avolio et al., 2009; Collins & Holton, 2004; Day et al., 2014; Lacerenza et al., 2017; Reyes et al., 2019), the field is nascent enough that there remain many unresolved questions. This means that an assessment of what is “evidence-based” will be nuanced and relative to the current state of what we know in terms of the evolution of the science. Following this logic, as a first step in answering RQ1, we will look at the extent to which existing programs adopt the current state of the scientific literature. We call this the selection side of the evidence-based equation.

At the same time, scholars studying EBM have highlighted that there are several reasons why we cannot rely on what is offered by prior scientific research alone, and thus that EBM needs to consider a wider range of evidence. Evidence-based thinking means not just relying upon prior scientific evidence, but reflects a critical stance to any type of evidence in (dis)confirmation of one's ideas (Briner & Rousseau, 2011) by asking the right question, acquiring the right information, appraising the quality of that evidence, applying that evidence to practice through an intervention, and assessing the outcomes of that intervention (Rousseau & Gunia, 2016). Assessing the outcomes of interventions is important as some leader development interventions may be so novel that the science to support these approaches has neither been fully developed nor implemented (e.g., certain gamifications), in which case the onus lies on the user to gather whatever evidence they can on whether their program works (Rousseau, 2020).

More broadly, because the science of leader development is neither static nor always clear-cut (often with two sides to the debate on the effectiveness of a certain intervention), it requires researchers to engage with the existing research in a nuanced and critical way to see how the collected evidence applies to what they hope to achieve with their leader development program(s). Research across scientific disciplines, as well as in leadership and its development, highlights that “what works” is often driven by contingencies, such that selecting what works depends on the specific context in which you apply the intervention and the makeup of the participants. This suggests that even when one can choose the highest quality theories, assessments, and intervention strategies/programs, as indicated by prior research (the selection side of the evidence-based equation), it is critical to evaluate the quality of those programs on an ongoing basis. We call this the evaluation side of the evidence-based equation.

Selection-side of the EB-Equation

As a first step, we asked leadership center directors how they ensure their programs are evidence-based. We discovered a wide range of answers (detailed in this section), ranging from low (not using prior evidence when designing their programs) to high (using substantial evidence to guide their choices). For instance, some respondents stated that they did not always have control over the LDPs (especially when these were outsourced to external consultants) and expressed significant frustration that practitioners are still teaching concepts, models, and measures that research clearly demonstrates are outdated (e.g., MBTI; Grant, 2013). This category comprised 28.30% of respondents.

The majority of respondents (58.33%) highlighted that they have slowly “taken back” leader development in their schools, with the goal of making it more evidence-based. This means not only that respondents expressed a need to have more academics teach leader development but also that, in their role as academic director, they sought to systematically review curricula with faculty, who may or may not have substantial research training, in order to weed out models and methods that have been proven to be ineffective in developing leaders.

A small percentage (13.33%) highlighted that they consider not just what leadership approach has been proven to effectively work (e.g., create higher follower motivation), but also the evidence about how to best develop it. For instance, knowing about the outcomes and moderators of empowering leadership is not the same as effectively developing empowering leaders. In other words, interviewees made a distinction in terms of what type of evidence they considered. Whereas the majority of the directors focused on educating people about effective leadership, these directors use practices based on the science of development.

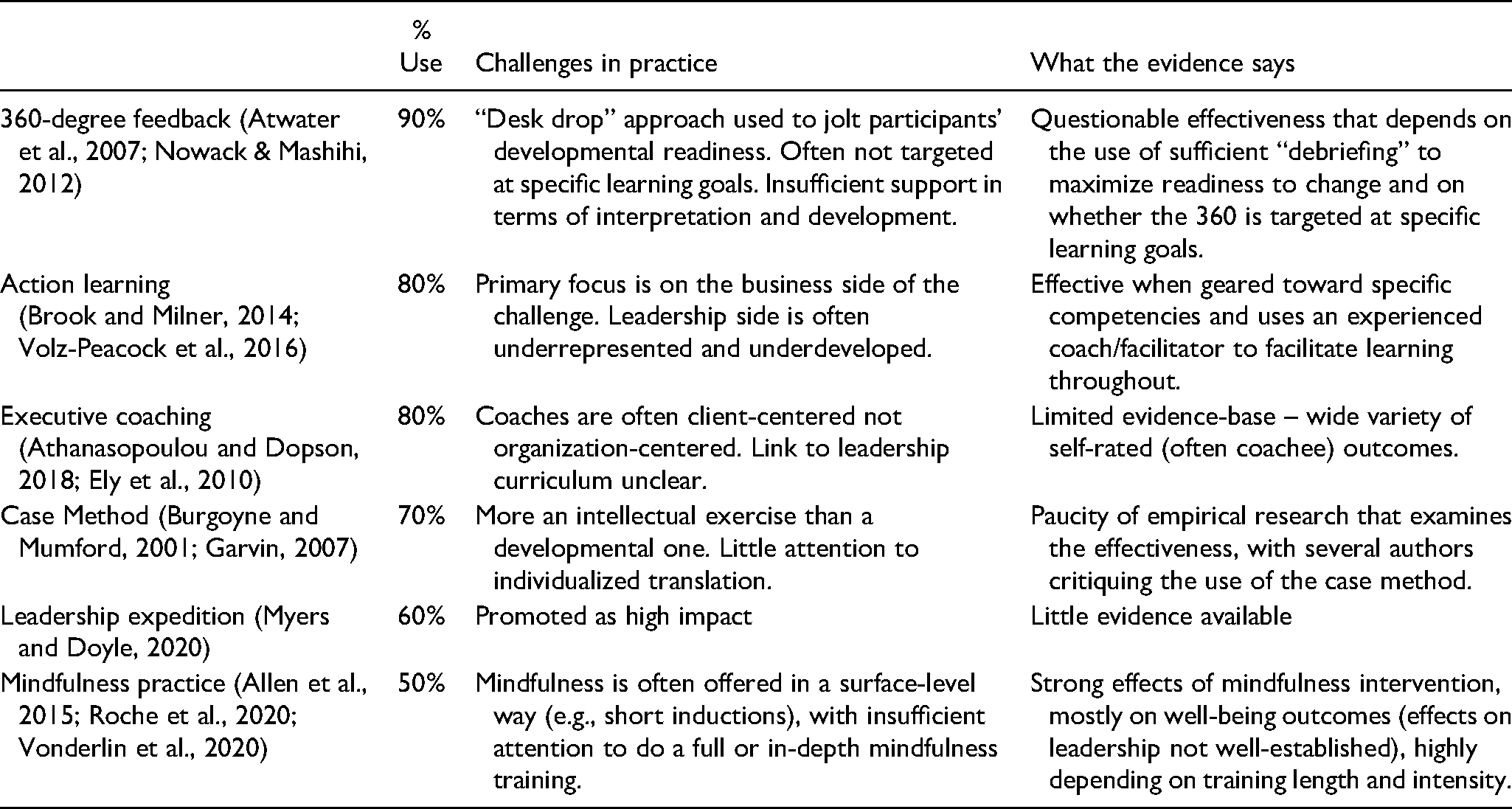

In a second lens on this research question, we also examined the actual practices adopted by business schools (from what the directors told us, cross-referenced with course manuals and websites). Table 1 provides an overview of the seven broad practices that emerged from the interviews and additional archival evidence as being most common across schools. Columns 1 and 2 of Table 1 highlight the extent to which these practices represent a cross-section of the curricula offered by business schools. Please note that this is not an exhaustive list: There may be additional practices being used at business schools, for instance, self-reflection assignments, which were not included as they emerged from the interviews as a method of evaluation rather than as a method of development. At first glance, business schools seem to be more evidence-based in their practices compared to other schools in higher education institutions (Reyes et al., 2019). Indeed, Table 1 indicates that business schools typically follow the models and methods that have been shown to improve the quality of leader development effectiveness as highlighted by Lacerenza et al.'s (2017) evidence-based “guide for practitioners when developing a leadership training program” (p. 19). For instance, column 1 indicates that these schools typically use multiple delivery methods from case study to action learning (vs. relying solely on in-class lectures) and that these methods are commonly delivered in a face-to-face and interactive way. Additionally, directors mentioned the frequent use of personal developmental feedback throughout the program, for instance using 360-degree feedback assessment. Finally, programs tended to be designed in such a way as to allow for spaced training sessions throughout the developmental curriculum, which was connected to a larger vision of a stepwise development of the person as a leader throughout the program. In sum, the business schools in our sample do seem to follow many of the suggestions of prior literature for evidence-based practices (Lacerenza et al., 2017; Reyes et al., 2019; Salas et al., 2012).

Table 1. Common Leader Development Practices in Business Schools.

A finer-grained analysis of Table 1 (columns 3–4), however, suggests clear room for improvement. For each of the practices mentioned in Table 1, we considered how these directors described the practices being used in their business school and compared this to the accumulated scientific evidence in the research literature. Prior literature on the effectiveness of each practice suggests contingencies for when a certain approach works better or worse, making the case that whether something “works” is nuanced. Table 1 compares the common use of these practices in business schools and the conditions highlighted by prior research as (sub)optimal for leader development program effectiveness. This comparison reveals a (mis)match between the conditions under which a program is offered and the best practices for implementation.

The review presented in Table 1 suggests that there is a clear disconnect between the current use of a certain practice and the scientific contingencies established in the literature for the use of that practice. For instance, research on 360-degree feedback suggests that its effectiveness is questionable and that it works best when geared toward specific learning objectives and when end-users receive sufficient support to make sense of their results, such as coaching support (Atwater et al., 2007; Nowack & Mashihi, 2012). However, the implementation of 360-degree feedback typically does not seem to follow these evidence-based recommendations. Another example is mindfulness training, which has become part of many leader development programs (Roche et al., 2020). Although such practices have been shown to reduce stress and increase individuals’ well-being and resilience (e.g., Vonderlin et al., 2020), the empirical evidence on whether they improve leader effectiveness seems to be mixed (Reitz et al., 2020; Rupprecht et al., 2019).

Evaluation-side of the EB-Equation

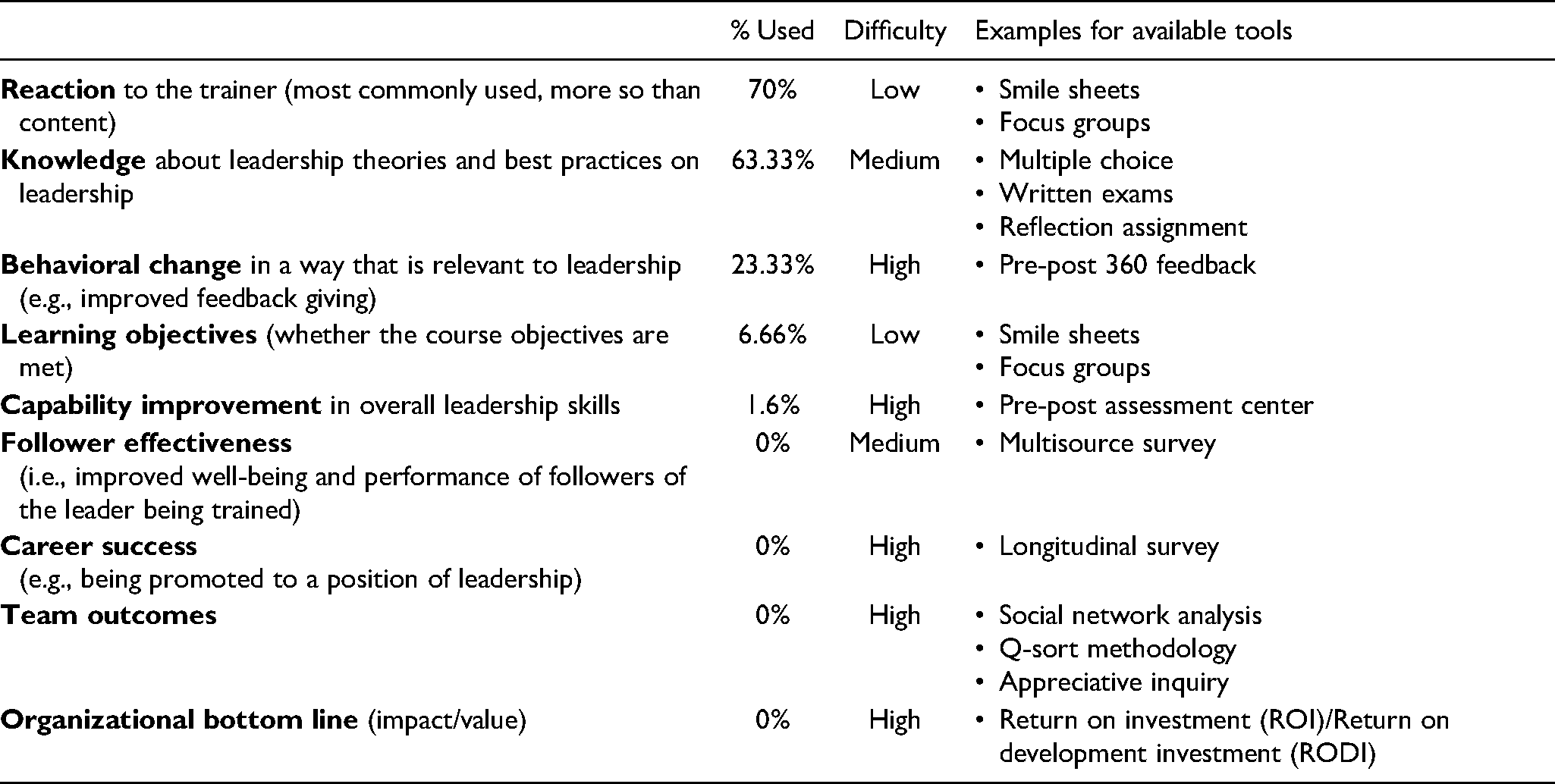

Table 2 provides an overview of the most commonly used criteria to assess the effectiveness of LDPs. Most often (70%), leader development programs in business schools are evaluated using student reactions to the program, commonly known as “smile sheets” (see also Kaiser & Curphy, 2013; Pfeffer, 2015). Typically, participants complete a short survey about their experience with training, including aspects such as training venue, facilitators, workload, and materials. Most course evaluations thus center around reactions (Kirkpatrick & Kirkpatrick, 1994) indicative of how entertaining the instructor is or how enjoyable and exciting participants found the sessions to be (labeled by three of our respondents as “infotainment”). Interestingly, the most important point mentioned by directors as the key source of information for decision-making in the school (e.g., promotions) was not whether the participants liked the course/program, but the evaluation of the trainer.

Table 2. Evaluation Criteria Used in Assessing Leader Development Programs.

The findings above relate to those respondents (6.66%) who used rather subjective means of assessing students’ satisfaction but who also focused on examining what information was meaningful in terms of program effectiveness. Typically, these respondents argued for clear learning objectives to be included in the design of the LDP upfront, and then evaluated reactions (see above) in light of those objectives. These respondents acknowledged that reactions are not a comprehensive evaluation method but argued that there were practical reasons why a more extensive program evaluation was not possible in their school: asking for reactions is relatively easy compared to alternative methods (discussed next).

Another set of respondents (63.33%) highlighted their use of an assignment or test associated with their leader development programs, to assure learning had taken place (as compared to offering a program as extracurricular or pass-fail). Typically, these assurances of learning are done in the form of multiple-choice or open-ended question exams, where students demonstrate how much they learned about effective leadership. Some also used situational cases for students to analyze and demonstrate that they understood what constituted effective leadership and could apply that knowledge to the situation they were addressing. Other programs used reflection assignments, where respondents made sense of their past or current leadership challenges using the course materials. Finally, some programs were more action-oriented in asking students to apply the course materials to a real-life problem (e.g., aiding a nonprofit organization's rise to power), but these were typically assessed using a reflection report.

About one quarter of our sample (23.33%) reported a conscious effort to independently evaluate their leader development efforts using concrete metrics that have been shown to be associated with effective leader development interventions. Most often, these approaches focused on subjective self-ratings (e.g., measuring improvements in leadership identity). Other schools reported looking at improvements in pre- and post-360-degree feedback scores, as their students progressed through their cohort experiences spanning the program. However, when probed, none of those directors indicated that these 360-degree scores were actually used as criteria to assure learning. Instead, the scores were given to students for use in their own development, not used to assess program effectiveness.

Only one school (i.e., 1.6%) reported using development centers, whereby the program assessed the individual's leadership capacities (e.g., through various leadership challenges) at the start and at the end of the program, tracking the evolution throughout. Finally, although they mentioned them as important, none of the center directors reported assessing longitudinal indicators of effectiveness, such as students’ leadership in future careers, follower development, or societal impact.

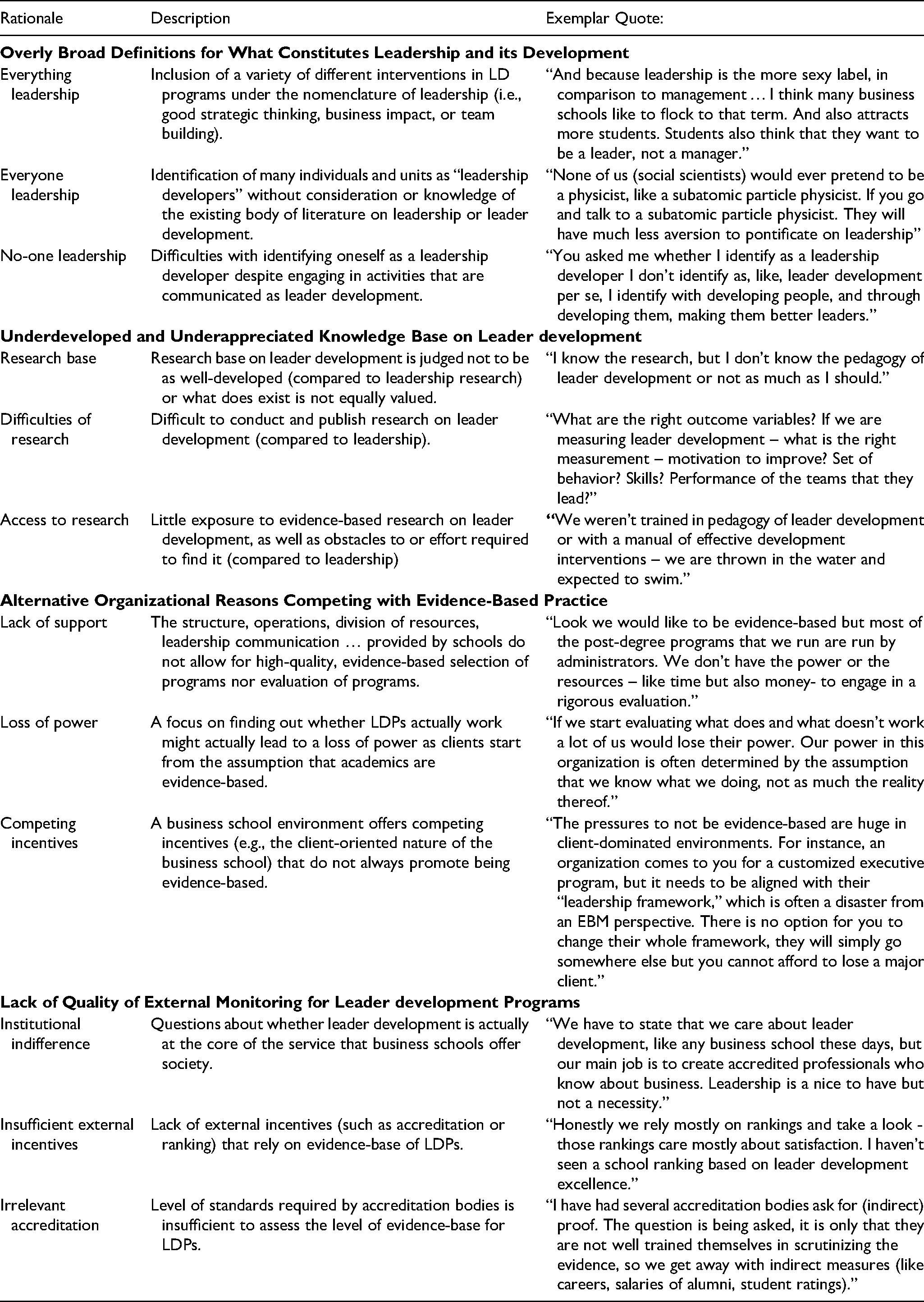

In the first step toward answering the second research question, we considered the rationales that our respondents offered to explain what drives them to (dis)engage from an evidence-based leader development approach. When respondents explained their approach to EBLD, they offered a wide variety of rationales such as a lack of time or money to do proper evaluations (i.e., lack of internal resources), lack of knowledge of leader development science (i.e., lack of research base), and absence of external monitoring agencies to ensure the quality of LD programs (i.e., independent external monitoring). For an overview of all codes and exemplary quotes, please refer to Table 3.

Table 3. Reasons for Not Walking Our Evidence-Based Talk.

In the second step of our analysis, we engaged in higher order coding of these initial codes by considering which codes cluster together, and in our coding of these clusters, we considered which challenges may drive a similar set of codes. These are summarized in Table 3 under the higher order codes highlighting the need for better or clearer: (1) definitions, (2) science, (3) governance, and (4) monitoring. We discuss these in more detail in the next section, referring back to the underlying rationales where appropriate.

In the third and final step of our analysis, we considered how the higher order key challenges are interlinked with each other, by considering past theory and research on behavioral integrity in organizations. In doing so, we identified that a root cause of our respondents’ rationales relates to a lack of an identity as an EBLD. When revisiting our data, we found evidence of identity challenges for many of our respondents and we provide various examples of how these identity challenges play out for our respondents.

Overly Broad Definitions for What Constitutes Leadership and its Development

Leadership is commonly defined as a process of “influencing and facilitating individual and collective efforts to accomplish shared objectives” (Yukl, 2013, p. 66). Considering the romantic preference for anything labeled “leadership” (Kniffin et al., 2020), people typically include a lot of different interventions in their school under the nomenclature of leadership (e.g., good strategic thinking, business impact, or team building). As a result, the word “leadership” has become a container that serves as a symbol of anything that seems impactful but, ironically, that ambiguity makes it hard to determine the impact of those developmental efforts: “Look, think about it – leader development is pretty (much) any side activity that we run, often not even in our own hands but in students’ hands … how can we ever hope to get a good view of that?” We label this perspective “everything leadership.” When everything is labeled as “leadership,” the concept loses meaning and/or gets muddied, undermining our ability to provide quality development and our understanding of what leadership is and how it is measured.

Leadership and its development are typically viewed as a domain that cuts across many fields, often to such an extent that it is “owned” by everyone in the school (e.g., accounting, marketing, operations, and finance). As an example, one of our interviewees explained “We had a large corporate sponsor who gave us a lot of money to study more effective leadership. Guess what, in no time everyone in the school became a leadership researcher.” This perspective is labeled as “everyone leadership.” The field of leadership is certainly cross-disciplinary but this suggests the term is being used too loosely, where some academics who do not really know the existing literature still call themselves leadership scholars or developers.

The above would suggest that we need to be very clear on what falls inside and outside of the academic curriculum in terms of what constitutes leader development. Without clear boundaries, anything can be considered as leader development, and anyone as a leader developer. Consider these two quotes from respondents: “Look, the truth is that we want alumni who had a great experience in college and are willing to give back. Now you can be evidence-based all you want but great alumni are built through high satisfaction scores.” or “We do leader development because those experiential activities allow us to build more coherence and connections between people. It is not as much about developing individuals as facilitating to create connections between people.”

If someone wants to build the cohort or alumni networks, those are fruitful efforts but there is a clear danger of calling these leader development activities. To be clear, we could see cohort/alumni-building as an example of more collective leadership development (Day et al., 2014), but only when that is done with a clear intention to develop leaders. Unfortunately, the same respondents (cf. supra) argued that: “We do these additional activities because it drives us up in the rankings, no more no less.”

In sum, the preceding arguments suggest the need for a much more precise definition of what constitutes leadership and leader development. Although different schools or academics may employ different definitions, each school or academic could still develop a clear definition of what leadership and its development means for them, and then be held accountable to that definition. In the absence of clear definitions and boundaries, leadership center directors can find themselves continually “herding a thousand different cats all of which call themselves leadership developers.” At the same time, the leader development field should develop a more common understanding of what is leadership and its development, so that center directors have an agreed-upon standard to start from. We consider this issue next.

Underdeveloped and Underappreciated Knowledge Base on Leader Development

Some respondents also argued that the science around leader development is still in an early stage of development, such that it is hard to definitively know what does and does not work and under which conditions. Most notable here is the comparison between research on leadership and its development. Our interviewees stated that generally “we know a lot more about effective leadership than we know about effective leader development.” Although research on leadership is not without its methodological and theoretical problems, a century of leadership research has demonstrated a fairly solid base captured in reviews (e.g., Lord et al., 2017) and handbooks of leadership (e.g., Yukl, 2013). Much less established is literature on leader development (Day & Dragoni, 2015; Lacerenza et al., 2017; Wallace et al., 2021), thus suggesting a limited “research base.”

Unfortunately, not all respondents consider the difference between the science of leadership and leader development when considering whether they are evidence-based. Consider this quote: “We are evidence-based. The concepts we talk about in our classes are derived from research so surely that makes us evidence-based.” This would suggest that the knowledge base around leader development that does exist (e.g., Day & Dragoni, 2015; Lacerenza et al., 2017) is not used. Indeed, in response to RQ1, our data revealed that the majority of respondents rely on the science of leadership (i.e., the science of how to influence others; Yukl, 2012), not the science of leader development (i.e., expansion of the capacity of individuals to be effective leaders; Day & Dragoni, 2015). Although educating people about leadership is a first step, it is not the same as developing them as better leaders; a focus on the science of leadership (and not leader development) therefore does not guarantee that LD programs are effective. In sum, while the science of leader development needs development, that does not fully explain why academics do not use the scientific base that is available, given that respondents equate leadership education with leader development.

Respondents highlighted that doing academic research on leader development is more difficult than doing research on leadership, which helps explain both why leader development research is relatively sparse and why there is insufficient effort to rigorously evaluate LDPs. Indeed, as one interviewee noted, intervention research is hard to set up and execute. For example, to provide evidence of LD effectiveness, we would need to employ longitudinal methods as the effects of an LD program may manifest over the longer term (due to internalizing and developing processes or to the fact that it may take time for students to be in leadership positions). Moreover, to show the effectiveness of a program (over other factors), an experimental intervention design is essential. Such intervention research, however, is often held to the highest standards of medical research (e.g., double-blind, placebo) that may be difficult to implement for organizational interventions. Our academic system typically rewards other types of research: “Let's be honest – it is much easier to demonstrate that a leadership style is present and that it affects others than to go in with an intervention and show that you can change it, no matter that the latter might actually be the more important thing to know in practice.” This perspective is what we label as “difficulties of research.” As the field of leader development continues to grow, we hope this will result in leader development having its own “dedicated top journal outlet and academic conferences,” such that publishing leader development research is more easily facilitated and rewarded.

As the research base continues to grow, our respondents highlighted the importance of accessibility. Indeed, while there is research available, that does not mean that this research is always easy to access (Judge, 2019). In a world where we find ourselves overloaded with information and changing complexities, it is often hard to get up-to-speed with what does (not) work as noted by one director:

In sum, the preceding suggests two challenges for those seeking to be more evidence-based in their LD programs: an underdeveloped base of knowledge regarding effective leader development, and the fact that the knowledge that does exist is underappreciated and difficult to access and implement (compared to the science of leadership). These factors can also decrease the motivation to be more evidence-based and further undermine efforts to improve the quality of such programs. In this regard, Lewis (2015) pleaded for a shift from a descriptive science to an improvement science, namely shifting from the knowledge of a particular scientific discipline (i.e., leadership) to knowledge about the instruction of such scientific discipline (i.e., leader development), which can provide the evidence necessary to be more effective in developing leaders. Or as put by Kurt Lewin – “If you want to truly understand something, try to change it.” This underappreciation and underutilization of the leader development science is not just limited to program design and selection but also includes program evaluation. Considering our skills as researchers to set up adequate empirical tests, it is remarkable that LDP evaluation remains underdeveloped.

Alternative Organizational Reasons Competing with Evidence-Based Practice

As indicated at the outset of this article, top-ranked business schools increasingly espouse in their mission statements that they develop better leaders (Kniffin et al., 2020). However, this does not necessarily equate with being evidence-based in terms of leader development. Consider two of the more extreme and contrasting quotes below expressed by our respondents: “We are in the business of knowledge creation and dissemination, not in the business of shaping individuals, leave that to religion and family. There is a danger of becoming cultish when you call yourself the school of whatever-leadership.” and “As academic institutes we have a role to play to shape the whole of the human being not just impart academic knowledge. This evidence-based, Tayloristic approach gets us away from this holistic thinking.”

The first statement claims leader development does not serve the core purpose of the school, namely knowledge creation and dissemination. The second suggests that the purpose of a business school inherently goes beyond knowledge transfer to more holistic cultivation of human beings. Neither statement, however, suggests a strong drive toward being evidence-based. The lack of precision is not just there in the words of a mission, but often also in how it is enacted. Many educators find that the academic system does not allow for rigorous evaluation of teaching – even in academic institutes where faculty receive a generous amount of time and resources allocated for research: “Look we would like to be evidence-based but most of the post-degree programs that we run are run by administrators. We don’t have the resources – like time but also money – to engage in a rigorous evaluation.” This perspective is labeled as “lack of support.”

Ideally, demonstrating that what one teaches inside or outside the classroom actually “moves the needle” on leader development would be a unique selling point for business schools. Unfortunately, business schools are not always set up in a way to really move the needle. As one interviewee explained – engaging in and revealing whether or not practices actually work may burst the bubble that we as academics always know what it is that we are doing:

Despite taking pride in their academic roots, business school leaders may actually discourage being evidence-based in leader development programs for a variety of reasons. For instance, the client-oriented nature of a business school environment may advocate for good student and alumni satisfaction scores, but satisfaction may not always support development (Alliger et al., 1997) and short-term satisfaction may lead to long-term dissatisfaction when students experience that their training does not help them overcome the leadership challenges they ultimately face. To make sure that a school actually prioritizes evidence-based programs, there may also be a need for outside monitoring.

Lack of Quality External Monitoring for Leader Development Programs

Beyond internal misalignment between mission and practices, there seem to be few if any external monitoring systems to identify high-quality leader development programs. Although we have accrediting bodies in place, the quality of leader development is not always a core focus. Said one respondent: “You know what I have never heard professional accreditation tell us – well you state you care about leader development but we don’t see the evidence for it. Honestly, if they don’t care why should we care?” This perspective is labeled as “irrelevant accreditation.”

To some extent, this is surprising as one could imagine that being able to differentiate business schools in terms of how they develop leaders could be key to their ability to attract students and thus enhance their reputation. However, as one interviewee noted:

Interestingly, our respondents had mixed perspectives on the role of accreditations (“They make the job of senior leaders an administrative nightmare.”) and rankings (“Rankings are inherently competitive and don’t always bring out the best in people.”). At the same time, respondents acknowledged that rankings and accreditations are part of our world and they can help the consumer simplify a very complex decision-making process about which school could help them in their developmental journey. Respondents argued, however, that ranking and accreditation systems would benefit from more specificity, in that a general “who is best?” seems like an inaccurate reflection of reality where being good at one aspect (e.g., leader development) undoubtedly means fewer resources for other areas (e.g., scholarships). Thus, while accreditation may be beneficial, in order for it to be useful and advance EBLD, more work is required to develop the right type of accreditation and ranking.

Finally, respondents argued that current rankings and accreditation systems have room for improvement. In particular, the evidence base and developmental nature of rankings were questioned: “To what extent are rankings based on accurate evidence that one school outcompetes another?” and “To what extent do accreditations actually pool for evidence of high-quality development versus an administrative, ticking-the-boxes exercise?” This further links to their developmental value: Pushing schools to be clear and accurate about, for instance, whether they actually develop leaders should aid the school in its objective of improving its leader development efforts. However, organizations offering accreditations and rankings often have a self-reinforcing incentive to set these up in a general way, so that a broad constituency of “clients” wants to be associated with their accreditation. The limits of this client orientation highlight the need for “independent external monitoring” (as noted by one respondent).

In sum, one last challenge identified here is that external monitoring specific to leader development excellence does not exist or that such monitoring is not always of the highest quality nor developmental. In other words, there is no formal external carrot or stick in terms of accreditation to motivate schools to prioritize evidence-based leader development over other priorities in the school. This is problematic because without a clear external incentive, in the face of limited resources, other priorities will take over, even though schools themselves set LD as one of their main priorities (Kniffin et al., 2020). Although the agenda for higher quality leadership development can be pushed onto schools to some extent through accreditation, it is equally important to create an external pull from the external market (e.g., a ranking of schools on leader development that helps students in their school selection, and donors in targeting their giving).

Root Cause Analysis: Individual and Collective Identity of Evidence-Based Development

In the final stage of our data analysis, we considered what underpins the previous four challenges (definition, science, governance, and monitoring). Although these factors are unique to some extent, they are also interconnected. For instance, it is difficult to have external standards of “excellent leadership development” (challenge 4), if people do not agree on what leadership development entails (challenge 1) or if there is not ready access to a clear body of scientific knowledge to make that difference (challenge 2). Additionally, professional external standards and external metrics are necessary for schools to revisit their own structure to reward academics to become more evidence-based in their LD (challenge 3). And, of course, without the proper internal incentives within schools, academics will not be motivated to spend much time addressing challenges 1 and 2.

Considering the interconnection between these key challenges, we considered if there are root causes that could help address all of these challenges simultaneously. In reviewing the disconnect between academics promoting being evidence-based, but not fully living up to that ideal, we were struck by how similar the situation described above is to those described in the literature on behavioral integrity in an organizational context – the extent to which individuals and larger entities “walk their talk” (Argyris, 1990; Bromley & Powell, 2012; Effron et al., 2018; Kerr, 1987; Simons, 2002; Zohar, 2010). This literature highlights that not walking one's talk is quite prevalent in an organizational context and that misalignment between words and deeds is the result of a complex interplay between micro- and macro-drivers (Effron et al., 2018). We consider this literature in more depth in an attempt to find a root cause that helps explain the rationales and key challenges identified earlier.

At the microlevel, a lack of BI is driven by individuals lacking self-awareness and self-regulation (Simons, 2002): BI requires a clear and authentically held identity (Leroy et al., 2012) as well as careful identity management to ensure that espousal and enactment remain aligned. As we explain in more detail below, without the clear identity of EBLD, faculty and administrators may still espouse evidence-based leadership development, but not be motivated to forgo easier, pragmatic alternatives in favor of the labor- and time-intensive path of EBLD. Additionally, at a more macro level, the literature on BI is clear that the onus is not just on the individual actor; there are also many external factors that drive alignment between policy and practice: “rules and law, in areas such as accounting and auditing standards, consumer safety, labor regulations, or protection for the natural environment. But pressures also stem from “soft” laws, including numerous forms of standards, ratings, rankings, and the rights-based claims of social movements, as well as general social or professional norms.” (Bromley & Powell, 2012, p. 488). Organizational rewards, incentives, goals, resource allocations, and other organizational factors also influence whether the talk is walked. A key consideration within the BI literature is that organizations need to be careful when espousing aspirational identities (e.g., evidence-based leadership development) without fully considering competing pressures (Quinn & McGrath, 1985). A collective identity needs to be rooted in the culture of the organization (not just its strategy; Schein, 1990). Without such rooting, managers should not be surprised when the organization espouses A but employees ultimately do B (Kerr, 1978).

In sum, one key driver of people walking the talk mentioned by BI theory and research is that a clear and salient identity, individually held and collectively endorsed, can drive espousal as well as enactment. Applied to our specific setting, the identity of an EBLD, and whether it is individually held or collectively endorsed, may thus be a root cause of the aforementioned challenges facing evidence-based leadership development.

We saw evidence for the importance of such an identity in our data. Consider this quote by one of our respondents in the key challenge of definitions (labeled as no-one leadership, Table 3): “Yes, I do run the leadership center here and sure I teach several of the core classes on leadership and to the outside world I tag my research as leadership, but I would never call myself a leadership researcher or developer. Leadership is just a word needed for the outside world.” Although actions demonstrate that this person teaches leadership, he or she has not internalized being a leadership developer (Deci & Ryan, 2000), and therefore avoids claiming such an identity (DeRue & Ashford, 2010). More broadly, we found that respondents adopted a wide variety of strategies (Petriglieri, 2011) to justify a disconnect between word and deed; including identity deletion (“I teach leadership but would never call myself a leadership developer.”), compartmentalization (“I am only a leadership developer when talking to corporate sponsors.”) and lowering it in hierarchy (“My first priority is happy students.”).

Beyond challenges with the leadership developer identity, we also noticed clear challenges with people adopting the idea of being an evidence-based developer. Consider the following quote: “I guess I've never thought about myself as evidence-based and I know that's a weird thing to say. … but I guess I am a full cycle academic: It's not just that I worked to create it, but I worked to then teach in an honest way … in the classroom and then I evaluate whether or not what I'm doing is working. And then I adjust and so it's as a cycle.” More broadly, we noticed that while individual academics are often focused on evidence in their own research specialty, that evidence-based thinking does not always extend to other spheres of influence (such as effective educational or developmental practices). Although all respondents are scholarly academics (AACSB, 2020), and being evidence-based is likely a core part of their professional identity, it is not necessarily extended to their identity as developers (Rousseau & McCarthy, 2007).

In addition to the importance of academics internalizing the identity of an EBLD, we found evidence of academics highlighting the need for a more collective identity of “evidence-based leader development.” Consider the following quote in the key challenge of governance: “I can shout about the importance of EBM [in my school], but when no one really cares about it, no one hears me shouting” (labeled as “institutional indifference” in Table 3). If the organization does not adopt and endorse the identity of being evidence-based in its LDPs, it is in danger of creating a disconnect between rhetoric and practice. Research-oriented schools that are set up to value research above all else are at particular risk of being seen as hypocritical when their academic roots suggest being evidence-based but their practice suggests something different: “I would not claim that our programs are evidence-based. We will also avoid claims about “our LDP demonstrably works” to avoid legal claims and litigation.”

Not only is the identity of being evidence-based frequently absent in words or deeds, there is also confusion about what it means to be evidence-based. As one respondent noted: “being evidence-based is often used too loosely” so that people have lost sight of what it means to actually judge to what extent they are evidence-based in their teaching. As noted earlier, the evidence is not always clear-cut such that the extent to which someone is evidence-based requires a careful and nuanced assessment. Thus, different people can claim to be evidence-based yet use quite different criteria, with varying levels of rigor, to support their claim. As one respondent noted: “It is very hard to make that difference visible if everyone claims the same thing and provides “statistics” to support that claim and the statistics for that claim from consultancy businesses look much nicer.” This suggests that ideally the identity of being evidence-based is not just individually owned but is also collectively endorsed and understood in such a way that alleviates confusion over what it means.

Prior research suggests that identities can be a strong driver of behavior when they are also collectively endorsed (Ashforth et al., 2011; Ashforth & Schinoff, 2016; DeRue & Ashford, 2010). For instance, a collective understanding of what it means to be an EBLD would give academics a sense of pride and shared social norms to maintain that identity. Other professions (e.g., medicine, psychotherapy) have fought long and hard to achieve this collective status of being an evidence-based profession. Furthermore, when a shared identity finds itself institutionalized formally (e.g., leader developer as a certified profession), academics may find it easier to adopt and enact that identity, or make it more salient/higher in the hierarchy of their self-concept (Ramarajan, 2014).

The importance of these collective identities can extend well beyond the boundaries of an organization. Consider the following quote in the key challenge of monitoring: “Don’t you think it's strange – when we go to a doctor or a psychotherapist we want to make sure that these people are licensed practitioners. But when it comes to leadership development, we don’t care who teaches us what as long as we are entertained.” A clear collective professional identity facilitated by external entities aids with tackling all four key challenges identified earlier. First, a clear professional certification that reinforces one's identity can help to distinguish leadership development from other, similar professions (e.g., executive coaching standards set by ICF). Second, professional standards also have a solid scientific base to build on with codes of practice (e.g., moral code) to help guide behavior. Third, professional certification ensures that institutes can organize themselves around those certifications. Fourth, it is easier to monitor who follows the standards of professional certification and to what extent, thus providing incentives for business schools to be EBLD. In sum, a clearer individual and collective identity around being an evidence-based leadership developer could help tackle the key challenges identified herein. However, it is important to note that this assumes that the accrediting institutes rely on the best available evidence such that they are qualified in their certification.

Beyond the importance of understanding the role of identity in enhancing the use of evidence-based leadership development content and methods, focusing on EBLD identity (or lack thereof) and drawing on BI research can offer a novel contribution to the EBM literature. These findings suggest that being an EBLD does not result only from having the knowledge and skills (Rousseau & Gunia, 2016), but is also shaped by individuals' perception of themselves as EBLDs, that is, their identity. As identity has a significant role in shaping behavior (Festinger, 1957; Higgins, 1997; Lord & Hall, 2005), exploring its effects on adopting evidence-based practices can provide novel insight into the barriers for such behavior, as well as potential avenues to address them. We elaborate on the implications of this next.

Discussion

Business schools have largely advocated that leadership and its development are central to their schools’ mission statements. Our overriding concern guiding this work is whether business schools are truly practicing what they preach. Without agreed upon professional standards of quality, based on solid evidence on what does and does not work, the academic leader development industry risks becoming viewed as hypocritical to the extent there is a gap between what is espoused and what is enacted. This is unacceptable if we assume that effective leadership development plays an important role in generating the business leaders that the world needs.

Our analysis of the state of professional standards of leader development across a broad range of business schools does not suggest a “Wild West” context where the frontier and territories are lawless; however, we have identified some clear challenges around the definition, science, governance, and monitoring of LDPs. Our analyses are not just descriptive in highlighting the state of affairs, but also offer some interesting micro (e.g., confusion around what is effective leader development) and macro (e.g., incentives that push developers away from being evidence-based) rationales into why such a lack of professional standards may exist in business schools. At the highest level of analysis, we identified the lack of a clear identity of being an EBLD, individually held and collectively endorsed, as an important roadblock to improving evidence-based leader development in business schools.

We defined an EBLD-identity as defining oneself as being evidence-based in what (i.e., content) and how (i.e., method) one develops leaders. This further implies being evidence-based in choosing content and methods based on the available evidence (i.e., selection), as well as evaluating whether chosen interventions work as intended (i.e., evaluation). We highlighted examples of low- and high-quality evidence-based thinking in both the selection and evaluation process, to help academics and business schools reflect on where they stand in their own EBLD-identity. Furthermore, we highlighted various identity strategies employed by respondents that may rationalize scoring relatively low on EBLD-competency while maintaining the image of being evidence-based overall. We hope that our overview of rationales will help academics better understand their own rationalizations and thus encourage them to take ownership over the matter and help them better align words and deeds; either by scoring higher on EBLD-actions or scoring lower on EBLD-attitudes (Festinger, 1957) – that is, either walk the talk or change the talk.

Additionally, we highlighted the importance of EBLD-identity as being collectively endorsed or held. When there is shared understanding amongst developers of what practices have more or less impact and, perhaps more importantly, a shared mindset of remaining critical of what does (not) work, humble and nuanced about one's own efforts, an individual's EBLD-identity likely further reduces discrepancies between espousal and enactment because it is collectively endorsed. Beyond that, creating a collective identity (e.g., through a professional group or network) can provide a source for individuals to draw on as they develop their individual identities (Brewer & Gardner, 1996). Ideally, such collective endorsement does not start from an avoidance control- or prevention-perspective that highlights what individuals and institutes cannot do, but an approach- or promotion-perspective (Higgins, 2012) that emphasizes a shared identity of the added value of leader developers remaining curious about what really works (Ashforth & Mael, 1989; Tajfel & Turner, 1986). Therefore, perhaps what is needed is less a ranking or accreditation than a collective movement intrinsically motivated by “getting better at leadership development collectively.”

Theoretical Implications and Avenues for Future Research

Our work makes several theoretical contributions to past work on EBM. First and foremost, our paper highlights the importance of an EBLD-identity. Prior work has primarily focused on identifying and developing the competency of EBM (Briner & Rousseau, 2011). Although this captures the ability to be evidence-based, it does not fully capture the motivation or opportunity to do so (Rousseau & Gunia, 2016). An identity would be better suited as a driver of the motivation (individual identity) or opportunity (collective identity) to engage in EBLD. For instance, imagine a credo (similar to a medical doctor's oath to “do no harm”) that invites leader developers to a core set of principles or values that guide their practice. One statement offered by one respondent in such a credo could be: “Developers avow to keep up to speed on the collective science on what works and does not work in terms of leader development, to give clients the best possible developmental experiences.” The appeal of this statement lies in promoting EBLD as a clear identity that people can adopt that would help them to align words and deeds, especially in difficult or ambiguous situations.

Interestingly, this statement further indicates the adoption of an evidence-based identity is not just related to the academic endeavor of “knowing what works and what does not” or discovering “the truth.” Instead, a deeper passion toward an evidence-based identity comes from the desire to have an impact on society in a responsible way. As the example credo-statement above shows, the adoption of EBM is not for the sake of science, which would be mostly self-reinforcing (i.e., we believe in what we do), but is offered in service of making a meaningful and sustainable impact on the world. A better understanding of the different values associated with being evidence-based would help clarify people's motivation toward or away from being evidence-based.

Understanding the situated importance of an EBLD-identity also matters for attempts to develop such an identity. Although past work has made great strides in developing the competencies to think in an evidence-based manner (Bartunek, 2011; Briner & Walshe, 2013; Erez & Grant, 2014; Giluk & Rynes-Weller, 2012; Pfeffer & Sutton, 2006; Wright et al., 2016; Wright et al., 2018), capturing the heads of the crowd may not mean the same as capturing their hearts. Ironically, the science on leader development could help point the way by switching from more instrumental to more transformative developmental exercises (Avolio et al., 2009; Avolio et al., 2010; Petriglieri et al., 2011; Petriglieri & Petriglieri, 2015). Transformative development is less concerned with skill development and focuses more on changing mindsets, that is, changing the way people look at themselves and the world. Beyond encouraging more use of these and other methods, we advocate that those who engage in such developmental efforts adopt methods that have been studied systematically and are thus best suited to guide participants to an evidence-based identity.

Future work should also try to capture more fully what exactly an identity of EBLD entails. Our work suggests that the identity of being evidence-based is domain-specific, such that one can be evidence-based in one's own narrow research specialty, but it may not translate to the dissemination of that knowledge. Indeed, many respondents highlighted that they see themselves as evidence-based in their research efforts, but not in their teaching efforts (i.e., they do not know, nor do they apply the state-of-the-science in development science). This need not be a problem if espousal is aligned with enactment. Often a general evidence-based identity (e.g., at the school level) is assumed without specifying in which areas this applies. This overgeneralization is problematic in that outsiders see the person as saying A (e.g., I work at a university thus I care about being evidence-based) while doing B (e.g., I implement MBTI).

Finally, our paper also contributes to prior literature on leader development. In recent decades, the leader development literature has made important strides in showing that leader development not only works but also yields considerable return on investment (e.g., Arvey et al., 2007; Zhang et al., 2009). These are great advances, but they yield few consequences if that knowledge is not picked up by academics (let alone practitioners) in their efforts to develop better leaders. For others to adopt their efforts, those in the leader development “profession” will need to go beyond additional intervention research and address bigger research questions that help to establish it as a legitimate science and professional practice. For instance, some common understanding of what leader development includes (and excludes) would help set a clearer scope, ideally in a way that is easily accessible for others to use. Furthermore, we hope that those in leader development science are the first adopters of the identity of an evidence-based leadership developer and will work together to collectively enforce and protect this identity.

Practical Implications

Beyond the theoretical implications of this work, we consider how our findings lead to more practical steps that can be taken by business schools and leadership centers to make LDPs more evidence-based. First, this work offers a general framework to think about and examine LDPs as evidence-based, in that it highlights the need to examine both the content and methods used in such programs, as well as examining the extent to which content and methods are both selected and evaluated in terms of their effects on participants’ development as leaders. Therefore, this can be a starting point for those who wish to examine their LDPs and evaluate the extent to which they are evidence-based, as well as providing some information as to how to improve on these two aspects.

Additionally, taking a more system-level view, in Appendix B we offer a series of reflection questions and corresponding schematics that organize the most important challenges identified in our work into a step-by-step framework for critically reflecting on whether one's school is evidence-based in its LD programs. Here we elaborate further on those factors that address the root cause by fostering the EBLD identity within academia.

First, those engaged in leader development do not always identify as evidence-based leadership developers, and so the first important step would be to address this issue and work to create a stronger identity in a way that would be appealing for those engaged in the work of leader development. Ideally, we can create a collective identity that leadership developers could derive their individual identity from (Ashforth et al., 2011), by developing EBLD as an independent field. This involves having dedicated publication outlets (academic as well as practitioner-oriented) geared toward EBLD. Alternatively, existing journals could push more toward developmental intervention studies to counterbalance a more dominant focus on descriptive (leadership) science. Additionally, there could be awards for evidence-based leader development programs or efforts to highlight schools or universities outstanding in their efforts toward leader development. Over time, these could be translated to existing accreditation or rankings, with the caveat that this does not take away from the intrinsic, developmental appeal of engaging in these self-reflections. Ideally, this extends beyond the academic community to include online or offline communities as well as professional associations dedicated to EBLD.

Even in anticipation of widespread acceptance of the importance of a collective EBLD identity, practical realities keep academics from becoming more evidence-based despite the best intentions to do so (e.g., lack of time or resources to implement more rigorous evidence-based methods and assessment). Here we would like to highlight the positive stories we collected in our interviews of the relatively simple, creative, and low-investment strategies with which some directors made their programs more evidence-based. This involves thinking about data that are already being collected in a different way (e.g., administering 360-degree surveys multiple times throughout the program and examining individual progress on its dimensions), or other types of data that may be relatively easy to collect. For example, systematic efforts to collect data on alumni's career trajectories post-graduation would be valuable, such as how many years it took alumni to advance to a leadership position in their organization (or to reach executive positions). Although there are likely to be a plethora of factors affecting promotion beyond leadership skills acquired at school, such data can provide some evidence of the effectiveness of LDPs. Such metrics are relatively easy and cheap to collect and have the potential of being instrumental in demonstrating results in order to receive more resources, which will allow collecting more developmental metrics. Similarly, it requires substantial investment in terms of time and money to transform complete programs, making this quite a monumental challenge for most center and program directors. Yet, starting small can be a more successful strategy in many cases. It is easier to choose a specific program or class to update, create a circle of faculty allies with knowledge of evidence in this area, rigorously assess the specific component that has been modified, and then share this with other faculty in the business school. Having successful outcomes, or simply providing a demonstration that such change can be done, can encourage others to do the same. Interestingly, our respondents highlighted that the adoption of the identity of an EBLD is likely key in convincing people to take the first step, because when you believe in the importance of this: “You can’t in good conscience not do more.”

Limitations

As with any research, our study has limitations. A first clear limitation is the representativeness of the sample, as our respondents come from a specific set of business schools (top 100 schools). Not all business schools have the same focus or resources as do top-tier research schools, and thus some of our insights need to be contextualized. However, we propose that all schools could become more evidence-based in their LD programs, as many schools prioritize student development. Additionally, there are restrictions in terms of cross-cultural perspectives, as most of our sample was “Western,” predominantly located in the US. This is problematic, in that views around why things are evidence-based may differ across cultural boundaries. For instance, prior research has shown that in Eastern cultures that score high on power distance there may be greater trust in authority figures (Hofstede, 1997) and hence less distrust resulting from not walking one's talk (Effron et al., 2018; Friedman et al., 2018). Related to this is the fact that we only interviewed center directors, yet there are others in schools who are involved with decision-making and training in LDPs and who may have different perspectives. Future work should extend our findings to a larger and more diverse sample, likely using different methods (e.g., large-scale survey research), to look at the generalizability of our findings. One key consideration here is whether a different sample would lead us to expand on the key challenges and root causes that our paper has identified.

Addressing these challenges in academia first may be key to improving EBLD in the broader leadership development industry. Hannah et al. (2014) argue that what we teach students in an academic setting, often at the start of their career, has the tendency and potential to become normative later in one's career; that it becomes self-fulfilling. In this case, that would mean that if we could impose higher standards on evidence-based leadership development in a business school context, over time, we could expect those students to enforce similar standards on their own dealings with leadership development programs, whether it entails selecting programs for themselves or selecting them for their organization.

Conclusion

The academic leader development industry is in clear need of more professional standards of quality in order to establish, maintain, or enhance its credibility as a high-quality service provider. Our analysis of leader development in business school contexts reveals not only the state of the academic industry but also various reasons why many business schools may have found themselves out of compliance with their own stated missions, reasons that we argue center on the absence of a clear professional identity, individually held and collectively endorsed, of an evidence-based leadership developer. This is important because academics, and the (business) schools they reside in, stand to lose trust by not walking their academic, evidence-based talk if this is not properly addressed as markets for students become increasingly competitive. Our results thus challenge academics to consider their identity as not just an evidence-based scholar of leadership science, but also as an evidence-based developer.

Footnotes

Authors’ Note

Hannes L. Leroy, Moran Anisman-Razin, Pissita Vongswasdi, and Johannes Claeys conceptualized the article and wrote the original and revised versions, with multiple rounds of input, editing, and review by each additional coauthor. These coauthors, who assisted with developing deeper understanding and insight into the research, are (listed alphabetically by last name): Bruce J. Avolio, Henrik Bresman, J. Stuart Bunderson, Ethan R. Burris, James Detert, Lisa Dragoni, Steffen Giessner, Kevin Kniffin, Thomas Kolditz, Gianpiero Petriglieri, Nathan C. Pettit, Sim B. Sitkin, and Niels Van Quaquebeke.

Acknowledgments

The authors want to acknowledge the helpful comments of Denise Rousseau, Brad Bell, Frederik Anseel, and Lindy Greer in preparing this manuscript. We also would like to thank Sean Hannah (the editor) for useful insights.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship and/or publication of this article.

Notes

Correction (June 2023):

Article updated to correct affiliation of author Johannes Claeys from “IESEG Business School, France” to “IESEG School of Management, France”.

Author Biographies

Prior to entering academia, Professor Bresman worked in several roles as a manager, consultant, and entrepreneur. He co-founded a venture capital firm focused on early-stage technology businesses.

In the classroom, Kniffin enjoys "meeting students where they are" by engaging students' experiences in the pursuit of studying evidence-based principles of individual and organizational behavior. Winner of the Innovative Teacher Award from the College of Agriculture and Life Sciences (CALS) in 2020, Kniffin employs an interdisciplinary approach to both Organizational Behavior (AEM 3245/6245) and Leadership and Management in Sports (AEM 3320/6325). Kniffin has presented "Ten Bottom-Line Lessons from the Big Leagues" as the University's Faculty Homecoming Speaker (2016) and speaks regularly for alumni, governmental, and business organizations.

He holds a Bachelor’s degree in Psychology and Sociology from Vanderbilt University, three Master’s degrees, and a PhD in Psychology from the University of Missouri.