Abstract

The fidelity of interventions is essential for research, but there is little understanding about how intervention fidelity can be promoted and monitored in clinical practice. This review aimed to establish the methods used to promote and monitor the fidelity of occupational therapy interventions implemented within clinical practice. The scoping review followed Arksey and O’Malley’s (2005) five-stage methodological framework. Four databases were searched, and included papers had a primary focus on the fidelity of an occupational therapy intervention implemented within clinical practice. Fifty-nine articles described a combination of supports, including therapist training (n = 39), manuals or protocols (n = 24), supervision or mentoring (n = 24), checklists and rating scales (n = 46), and video and audio recordings (n = 18). Implementation fidelity of occupational therapy interventions within clinical practice requires a combination of approaches, with the use of checklists and discussion within supervision as a sustainable strategy.

Plain Language Summary

We wanted to find out what occupational therapists can do to make sure they deliver treatments correctly. We searched the literature and found 59 articles. The findings identify two main ways to do this. First, there were ways to gain the knowledge and skills. This includes training, using manuals or protocols, and learning from someone more experienced. Second, there were ways to check how well the treatment was delivered. This includes using checklists, rating scales, and video or audio recordings. Occupational therapy treatments can often be complex, and the best results are achieved when they are delivered correctly. This review helps therapists identify how to build their knowledge and skills and what they can use to check that the treatment was delivered correctly.

Introduction

Treatment fidelity is defined as the degree to which an intervention is implemented as it was intended (Bellg et al., 2004) and is important to ensure delivery of effective services. Occupational therapy interventions are often complex in nature (Nielsen et al., 2022) and considered difficult to quantify and measure (Hildebrand et al., 2012; Powers et al., 2022). Therefore, fidelity measures used within occupational therapy interventions have traditionally been poorly described (Hildebrand et al., 2012) and lack clear strategies to ensure the intervention is implemented as intended (Shin et al., 2022; Toglia et al., 2020). These complexities are often due to occupational therapy interventions being context dependent, influenced by unique client-therapist interactions, and individualized professional reasoning (Breckenridge & Jones, 2015). Nonetheless, fidelity measures strengthen treatment implementation (Hand et al., 2018) and allow for a more confident interpretation of treatment outcomes (Powers et al., 2022).

Within the literature, fidelity is often discussed in relation to the efficacy of trials and outcomes research (Hand et al., 2018; Toglia et al., 2020). Recommendations such as those from the National Institutes of Health Behavior Change Consortium (NIH BCC) (Bellg et al., 2004) are designed to support and monitor treatment fidelity within research. Bellg and colleagues (2004) propose five key components of fidelity: (a) design of intervention; (b) training of providers; (c) delivering the treatment; (d) receipt of treatment; and (e) enacting treatment skills. Because they were created for the purpose of increasing research rigor, these recommendations and other frameworks do not directly translate to implementation fidelity, that is, the extent to which a therapist delivers an intervention as it was intended in a clinical context (Powers et al., 2022).

The importance of examining treatment fidelity in efficacy studies is recognized (Bowyer & Tkach, 2019; Murphy & Gutman, 2012). However, approaches to fidelity can differ depending on whether the purpose is for research efficacy or implementation into clinical practice (Toglia et al., 2020). The purpose of this review was to explore the methods used to promote and monitor the fidelity of interventions implemented within the context of occupational therapy practice.

Method

This scoping review was guided by the methodological framework originally developed by Arksey and O’Malley (2005) and later revised by Levac et al. (2010). The framework involves six steps: (a) identifying the research question; (b) identifying relevant studies; (c) selecting studies; (d) charting the data; (e) collating, summarizing, and reporting results; and (f) an optional stage of consultation (Arksey & O’Malley, 2005). The optional stage of consultation was not completed in this review, as the group of focus, occupational therapists, was represented in the authorship team.

Stage 1: Identifying the Research Question

The research question guiding this review was “What methods are used to promote and monitor fidelity of occupational therapy interventions implemented within clinical practice?”

Stage 2: Searching for Relevant Studies

The search strategy was developed in consultation with an experienced librarian. Systematic searches of EMBASE, CINAHL, MEDLINE, and OTSEEKER were completed in December 2022, restricted to English-language, peer-reviewed articles, with the search terms adjusted as required by each database. No date restrictions were imposed to ensure all relevant studies were identified. Search terms included fidelity OR “fidelity measure*” OR “intervention fidelity” OR “fidelity protocol” OR “implementation fidelity” OR “treatment integrity” OR “best practice guideline*” AND intervention* OR protocol OR approach* OR treatment* OR “practice pattern*” OR technique* OR program* AND “occupational therap*” OR OT. Search terms were developed to capture the literature widely, including terms other than fidelity that may be used to describe the concept.
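The search terms above form three concept groups joined by AND. The following is a minimal sketch of how such a string might be assembled so that each OR group is explicitly parenthesized. The parenthesization is an assumption about the intended grouping (the source lists the terms without parentheses), and the helper names `or_group` and `build_query` are hypothetical. Actual syntax varies by database and would need adjusting for each one.

```python
# Term groups copied from the search strategy described above.
FIDELITY_TERMS = [
    'fidelity',
    '"fidelity measure*"',
    '"intervention fidelity"',
    '"fidelity protocol"',
    '"implementation fidelity"',
    '"treatment integrity"',
    '"best practice guideline*"',
]
INTERVENTION_TERMS = [
    'intervention*',
    'protocol',
    'approach*',
    'treatment*',
    '"practice pattern*"',
    'technique*',
    'program*',
]
PROFESSION_TERMS = ['"occupational therap*"', 'OT']


def or_group(terms):
    """Join the terms of one concept with OR and wrap them in parentheses."""
    return "(" + " OR ".join(terms) + ")"


def build_query(*groups):
    """Combine the concept groups with AND (assumed grouping, see lead-in)."""
    return " AND ".join(or_group(group) for group in groups)


QUERY = build_query(FIDELITY_TERMS, INTERVENTION_TERMS, PROFESSION_TERMS)
print(QUERY)
```

Keeping each concept in its own list makes it straightforward to add or remove a synonym without disturbing the Boolean structure.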

Stage 3: Selecting Studies

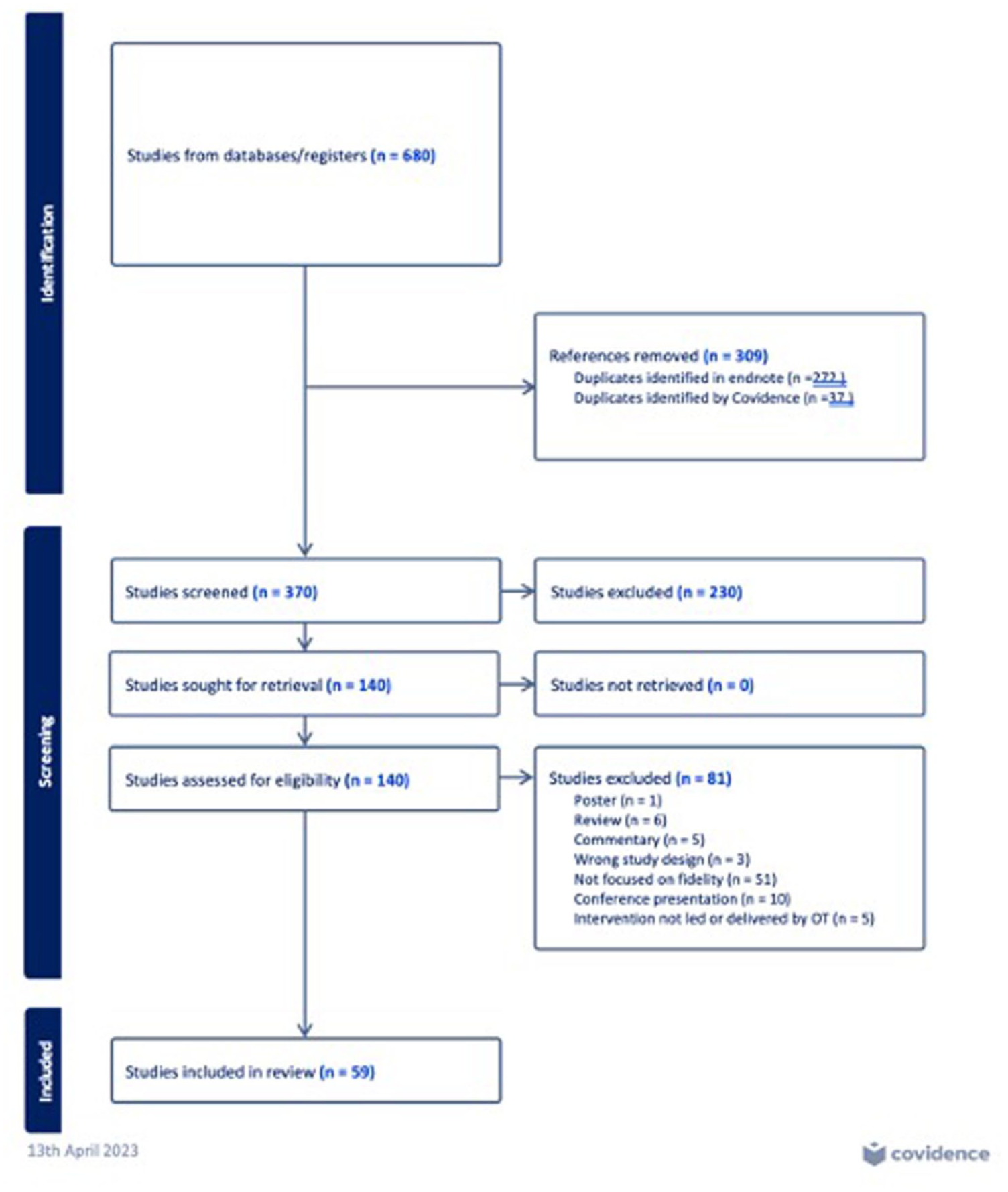

Identified studies were downloaded to EndNote, duplicates were removed, and the remaining records were exported to Covidence (Veritas Health Innovation, 2023). Study titles and abstracts were screened by two authors to determine if they met the eligibility criteria. Papers were included if they: involved interventions that were led or completed by an occupational therapist; had a primary focus on how to measure or support the fidelity of the intervention when implemented within clinical practice; or were implementation studies with a focus on fidelity; or were protocol papers that provided required details on the measurement or support of fidelity for studies that had been completed or were underway. Papers were excluded if they were a scoping or systematic review, had the main objective of reporting the findings of a randomized controlled trial, or were a conference abstract. Two authors completed full-text screening of 140 articles, with any conflicts discussed and resolved with a third author when required. The reference lists of the 59 included articles were reviewed, and no new articles meeting the eligibility criteria were identified. Figure 1 outlines the Preferred Reporting Items for Systematic Reviews and Meta-Analyses (PRISMA) diagram of study selection.

Figure 1. Preferred Reporting Items for Systematic Reviews and Meta-Analyses Flowchart for the Selection of Studies for the Scoping Review.

Stage 4: Charting the Data

A data charting form was collectively developed by the research team utilizing Covidence (Veritas Health Innovation, 2023). Two authors independently extracted data from five articles to ascertain consistency with the research question, then collaborated to further refine the extraction template. Key information regarding the following was extracted and tabulated: study characteristics (journal of publication, aim of the study, study design, and participant cohort), implemented intervention, practice setting, and information regarding how fidelity was promoted or monitored.

Stage 5: Collating, Summarizing, and Reporting Results

Results were collated and summarized by the primary author and discussed with the research team. Data were extracted and summarized using the data extraction tool and an Excel spreadsheet.

Results

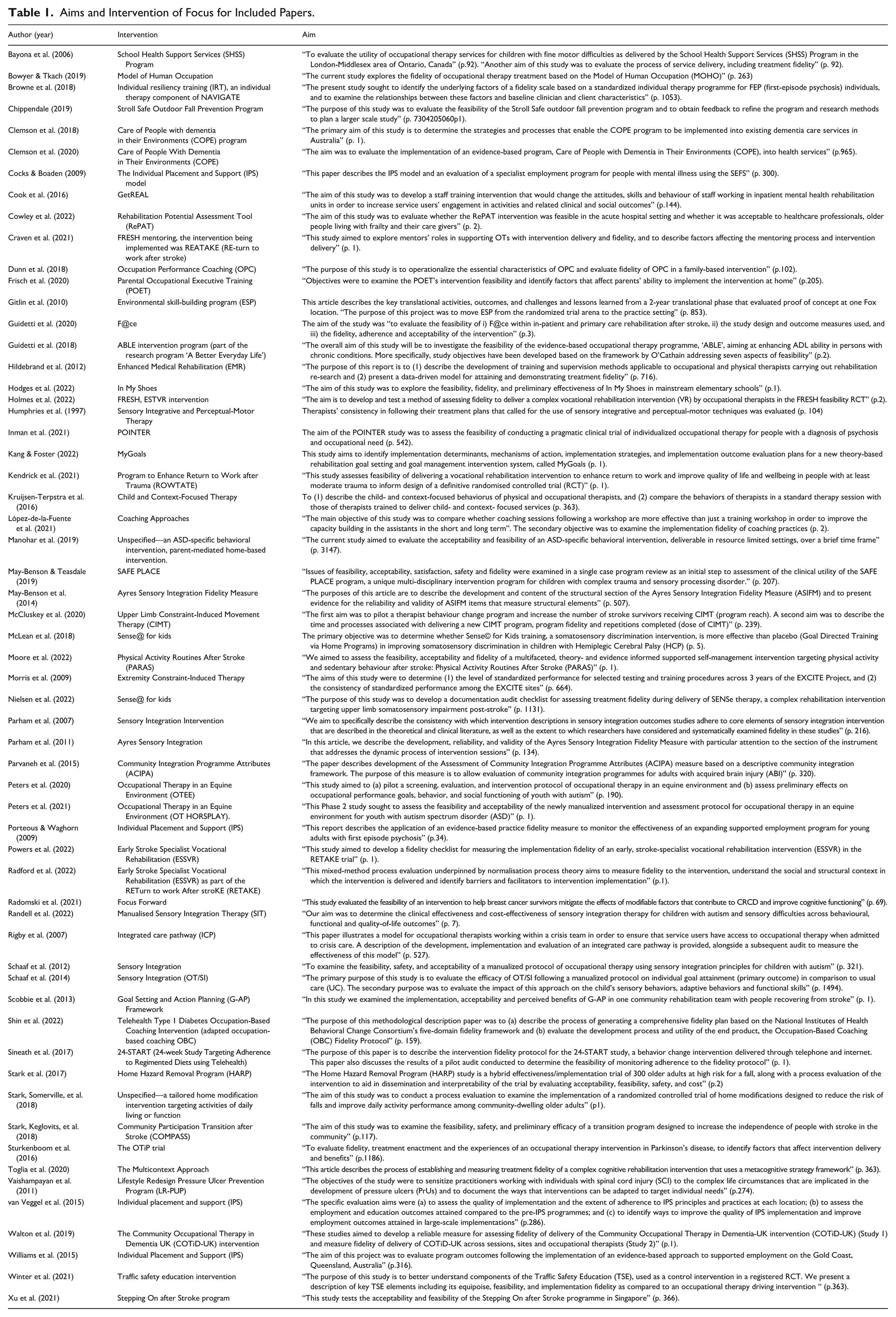

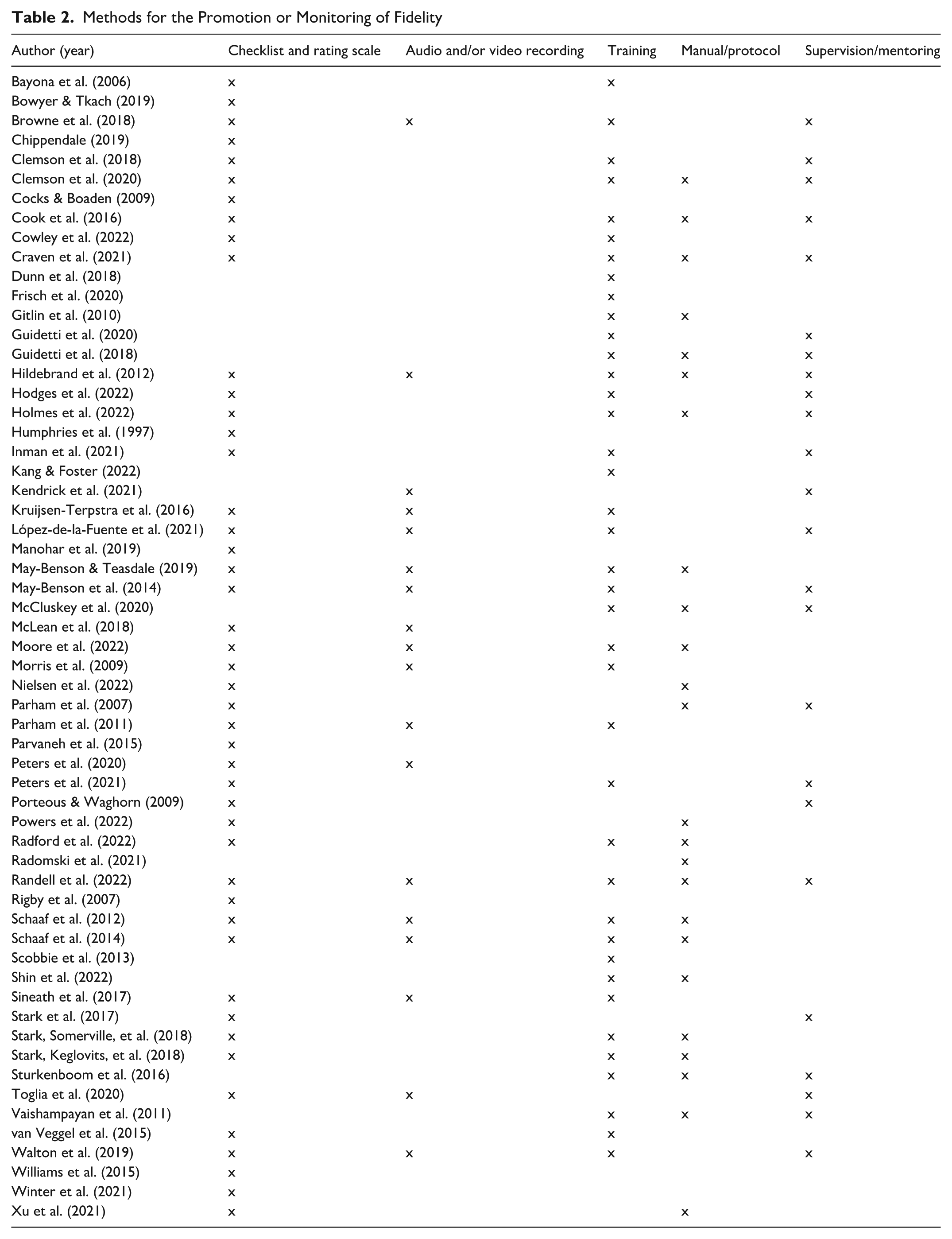

The 59 studies (Table 1) were published across 33 journals. The top four journals were the British Journal of Occupational Therapy (13.5%), the American Journal of Occupational Therapy (10.2%), BMC Geriatrics (6.7%), and Pilot and Feasibility Studies (6.7%). Nearly half of the studies originated from the United Kingdom (23.7%), Australia (15.2%), and the United States (7.3%). Articles were published between 1997 and 2022, but most studies were published from 2015 to 2022 (74.5%). Interventions (n = 54) were in pediatric (28.8%), stroke (18.6%), and mental health (13.5%) settings, and 11 (20.4%) were related to Sensory Integration. Table 2 provides an overview of the methods for the promotion or monitoring of fidelity in the included studies.

Table 1. Aims and Intervention of Focus for Included Papers.

Methods for the Promotion or Monitoring of Fidelity

Methods that Promote Fidelity

Training

Thirty-nine (66.1%) studies discussed training that ranged from a 5-hr training session (May-Benson & Teasdale, 2019) to a 9-day workshop (Morris et al., 2009). Training often included introduction of the intervention manual and procedural aspects of the intervention (e.g., Craven et al., 2021; Frisch et al., 2020; van Veggel et al., 2015) and practical learning experiences (e.g., Cook et al., 2016; Gitlin et al., 2010; Guidetti et al., 2020; Parham et al., 2011). Education on the theoretical underpinnings of the intervention, information on intervention components, and how to deliver them was regularly included (e.g., Bayona et al., 2006; Clemson et al., 2020; Peters et al., 2021; Vaishampayan et al., 2011). A smaller proportion of studies (10.2%) identified the inclusion of follow-up training to enhance treatment fidelity over time. For instance, Holmes et al. (2022) and Radford et al. (2022) included an additional day of training 6 months after intervention implementation.

Notably, training often occurred in conjunction with a range of other approaches to support and monitor fidelity. For example, Kang and Foster (2022) described training that included educational meetings and was accompanied by resources identifying how the intervention could be adapted, shadowing of clinicians, auditing of intervention components, provision of feedback, and ongoing consultation.

Intervention Manual or Protocol

Twenty-four (40.7%) studies discussed the use of an intervention manual or protocol. Manuals were designed to outline the key components of the intervention (e.g., Craven et al., 2021; May-Benson & Teasdale, 2019; Parham et al., 2007; Radomski et al., 2021; Randell et al., 2022), and papers often referred to how to access the manuals (e.g., Clemson et al., 2020; Cook et al., 2016; McCluskey et al., 2020). The adopted approaches ranged from a single-page prompt sheet with additional treatment examples (Hildebrand et al., 2012) to manuals that addressed procedures, session content, and a toolbox of intervention components (e.g., Guidetti et al., 2018). Other resources for use alongside the manual included guiding scripts, treatment documentation forms, and a logbook for notes (e.g., Gitlin et al., 2010; Holmes et al., 2022; Inman et al., 2021; Kendrick et al., 2021; Nielsen et al., 2022; Powers et al., 2022). These resources were often reviewed and audited to monitor adherence to the intervention protocol (e.g., Frisch et al., 2020; Scobbie et al., 2013). It was noted that although intervention manuals were important, therapist training to support understanding of the manual and core techniques, in combination with direct observations and videotaping of intervention sessions, was also essential to ensure intervention fidelity (Hildebrand et al., 2012).

Supervision and Mentoring

Mentoring and supervision often followed the training to support and assist in quality management of intervention implementation (e.g., Clemson et al., 2018; Craven et al., 2021; Kendrick et al., 2021; López-de-la-Fuente et al., 2021; May-Benson et al., 2014; Randell et al., 2022; Stark, Somerville, et al., 2018). Described in 24 (40.7%) articles, the format of mentorship was dependent on the availability of a suitable supervisor or mentor and could take the form of peer support, individual, or group supervision (Walton et al., 2019). Group meetings were commonly used to provide support and encourage collective problem solving (e.g., Clemson et al., 2020; Guidetti et al., 2020; McCluskey et al., 2020; Vaishampayan et al., 2011).

Discussing fidelity outcomes within supervision or mentoring sessions was beneficial as a feedback mechanism (Randell et al., 2022) or to address and clarify difficulties with applying and adhering to the manual in clinical practice (e.g., Browne et al., 2018; Cook et al., 2016; Guidetti et al., 2018; Holmes et al., 2022). Discrepancies between observed adherence to intervention components and the manual or protocol were addressed with the practicing therapist and provided as feedback to ensure behavior change (e.g., Browne et al., 2018; Hildebrand et al., 2012; Peters et al., 2021; Porteous & Waghorn, 2009; Stark et al., 2017). Technology was used to support and supplement mentoring and supervision. Examples included small group teleconference mentoring sessions (Radford et al., 2022), an online discussion board with the involvement of an experienced coach (Sineath et al., 2017), and ongoing supervision through email correspondence and telephone calls in addition to on-site feedback (Toglia et al., 2020).

Monitoring of Fidelity

Checklists and Rating Scales

Forty-six studies (77.9%) discussed the use of fidelity checklists and/or rating scales. Checklists were used to record adherence to key components of the intervention (e.g., Cook et al., 2016; Cowley et al., 2022; Hildebrand et al., 2012; Holmes et al., 2022; Peters et al., 2020; Powers et al., 2022; Toglia et al., 2020). Audit tools were used to review therapists’ case records and documentation (e.g., Frisch et al., 2020; Nielsen et al., 2022; Parham et al., 2007; Radford et al., 2022; Scobbie et al., 2013). Other checklists were designed as self-report tools for use before, during, or after the intervention (e.g., Manohar et al., 2019; Morris et al., 2009; Rigby et al., 2007; Sineath et al., 2017; Stark et al., 2017).

Examples were often included to assist with coding guidelines and scoring (Walton et al., 2019). Kruijsen-Terpstra et al. (2016) developed a rating manual specific to their Pediatric Rehabilitation Observational Measure of Fidelity. Rating scales, particularly 3- or 5-point Likert-type scales (e.g., Bowyer & Tkach, 2019; Humphries et al., 1997; López-de-la-Fuente et al., 2021; Xu et al., 2021), were commonly used in conjunction with a fidelity checklist to rate consistency and adherence to core intervention components and the protocol (e.g., Clemson et al., 2018; Kruijsen-Terpstra et al., 2016; May-Benson & Teasdale, 2019; McLean et al., 2018; Parvaneh et al., 2015; Porteous & Waghorn, 2009).

Williams et al. (2015) developed a fidelity scale specific to their intervention that rated 15 items across three domains (i.e., staffing, organization, and services) using a 5-point Likert-type scale. The Ayres Sensory Integration Fidelity Measure (ASIFM) was used in six studies (10.2%). The ASIFM addresses structural and process components of intervention fidelity (Parham et al., 2011). Structural components include factors such as therapist credentials and training, environmental components (e.g., resources and physical space), and the presence of collaborative goal setting. Process elements refer to the therapist’s adherence to the 10 primary components of Sensory Integration and key therapeutic strategies (Parham et al., 2011). Each section includes a total score, which then translates to an overall score; an 80% pass rate is needed to demonstrate successful adherence to the fidelity protocol (e.g., May-Benson et al., 2014; Parham et al., 2011; Randell et al., 2022; Schaaf et al., 2012).
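To illustrate how a percentage pass threshold of this kind operates, the sketch below converts a set of item ratings into a percentage of the maximum possible score and compares it with an 80% cut-off. It is a generic illustration only. The item ratings, the 4-point maximum per item, and the function names are hypothetical, and the sketch does not reproduce the ASIFM's actual items or scoring rules.

```python
def adherence_percent(item_ratings, max_per_item):
    """Return the total rating as a percentage of the maximum possible total."""
    if not item_ratings or max_per_item <= 0:
        raise ValueError("need at least one item and a positive maximum rating")
    return 100.0 * sum(item_ratings) / (len(item_ratings) * max_per_item)


def meets_threshold(item_ratings, max_per_item, threshold=80.0):
    """True if the adherence percentage reaches the pass threshold."""
    return adherence_percent(item_ratings, max_per_item) >= threshold


# Hypothetical ratings for two sessions, 10 items each, rated out of 4.
SESSION_A = [4, 3, 4, 2, 4, 4, 3, 4, 4, 4]   # total 36 of 40
SESSION_B = [2, 3, 2, 3, 3, 2, 3, 3, 2, 3]   # total 26 of 40

print(adherence_percent(SESSION_A, 4), meets_threshold(SESSION_A, 4))  # 90.0 True
print(adherence_percent(SESSION_B, 4), meets_threshold(SESSION_B, 4))  # 65.0 False
```

A session falling below the threshold, such as the second one here, is the kind of result that the included studies describe feeding back to the therapist through supervision.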

Audio and/or Video Recording

Eighteen (30.5%) studies identified the use of audio- and video-recorded sessions. In all studies using the ASIFM, fidelity was scored by assessing video-taped sessions (e.g., May-Benson et al., 2014; Parham et al., 2011; Randell et al., 2022; Schaaf et al., 2012, 2014). Hildebrand et al. (2012) reviewed segments of video-taped sessions alongside the treatment manual at weekly meetings to provide the therapist with feedback and to clarify intervention procedures. Other studies described the use of video recordings to rate and examine sessions (e.g., Kendrick et al., 2021; Kruijsen-Terpstra et al., 2016; López-de-la-Fuente et al., 2021; Walton et al., 2019), often including the dissemination of feedback following analysis (e.g., Browne et al., 2018; Morris et al., 2009). Moore et al. (2022) included audio recordings alongside a fidelity checklist to ascertain whether desired behavioral content was present, and this was then used as feedback before subsequent delivery of the intervention.
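The proportions reported for each method are counts out of the 59 included studies. As a quick arithmetic check, the sketch below recomputes them from the counts given in the text. The dictionary keys and the `percent` helper are illustrative names only.

```python
TOTAL_STUDIES = 59

# Counts of studies reporting each method, as stated in the text.
METHOD_COUNTS = {
    "training": 39,
    "manual_or_protocol": 24,
    "supervision_or_mentoring": 24,
    "checklists_and_rating_scales": 46,
    "audio_or_video_recording": 18,
}


def percent(count, total=TOTAL_STUDIES):
    """Percentage of the total, rounded to one decimal place."""
    return round(100 * count / total, 1)


PERCENTAGES = {method: percent(n) for method, n in METHOD_COUNTS.items()}

for method, value in PERCENTAGES.items():
    print(f"{method}: {value}%")
```

Rounded to one decimal place, 46 of 59 gives 78.0%; the 77.9% reported for checklists and rating scales is consistent with truncating rather than rounding.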

Discussion

The purpose of this review was to explore how occupational therapists promote and monitor implementation fidelity of occupational therapy interventions within clinical practice. Although the findings indicate a range of approaches, these were rarely used in isolation; authors often reported that approaches to establish knowledge and skills were combined with approaches to monitor fidelity. Training combined with mentoring and supervision supports therapists to develop their knowledge and ensures adherence to the original conceptualization of the intervention (Hildebrand et al., 2012; Toglia et al., 2020). However, incorporation of manuals, checklists, audits, observations, and audio- or video-recorded sessions is promoted as the gold standard to identify skill-building topics for discussion in supervision (Holmes et al., 2022). Promoting and monitoring fidelity using multiple approaches enables the identification of key components that were not delivered as intended and is considered essential to enhancing outcomes from occupational therapy interventions (Powers et al., 2022). However, it has been estimated that 1.5 to 2 days were required to complete fidelity measures such as observations, interviews, and review of documents within a clinical setting (Parvaneh et al., 2015). This is an extensive commitment of resources within the context of the workforce shortages currently challenging the delivery of occupational therapy services across 65% of the World Federation of Occupational Therapists (WFOT) member countries or territories (WFOT, 2022).

Objective fidelity checklists may provide a more practical approach due to their reported quick and easy use (Powers et al., 2022). The refinement of fidelity checklists into self-reflection tools, such as the self-report tool to track the use of motivational interviewing in telephone calls (Sineath et al., 2017), may address some of the concerns regarding resourcing and the practicalities of maintaining fidelity. One criticism of self-report tools is that without objectivity and critical reflection, or discussion through a critical lens in supervision, there is an increased risk of bias and less constructive feedback. The scoping review findings highlight that the outcomes of fidelity measures were commonly discussed in supervision and/or mentoring sessions. Therefore, the incorporation of self-reflection tools into supervision may support discussions that guide discovery and enhance implementation fidelity.

Implications for Occupational Therapy Practice

The findings highlight that there is no single way to ensure fidelity of an intervention within clinical practice and that implementation fidelity includes both approaches to promote and monitor adherence to the required elements of an intervention. Decisions about what to use should be considered relative to the practice context and the intervention; however, fidelity checklists that inform discussions in supervision sessions may form a sustainable approach.

Limitations

This scoping review was limited to articles with a specific focus on implementation fidelity of interventions led by occupational therapists. However, this may be considered a strength, as we only included papers that provided significant levels of detail on the adopted approaches as opposed to a one-paragraph description within a larger methodology. Furthermore, restricting inclusion to peer-reviewed articles and studies published in the English language may have limited the inclusion of other forms of knowledge and reinforced a Western perspective. Finally, a full critical appraisal of each included study was not conducted, as the focus of the research question was on types of fidelity supports and measures and not study outcomes.

Conclusion

This review aimed to establish the methods used to promote and monitor the fidelity of occupational therapy interventions. Of the 59 included articles, the most common methods used to support fidelity include training; intervention manuals or protocols; and supervision and mentoring. Methods to support fidelity are beneficial because they facilitate the development of occupational therapists’ knowledge, skills, and confidence to implement interventions as intended. Common fidelity measures were checklists, rating scales, and audio and video recordings. Such measures promote consistency and adherence to key intervention components. Video- and audio-recorded sessions were previously considered gold standard practice; however, studies suggest that for pragmatic reasons, this is not always suitable or sustainable within clinical practice. Occupational therapists should be adequately supported when implementing interventions with approaches to fidelity that are appropriate for the context and intervention.

Footnotes

Ethical Considerations and Consent to Participate

Not applicable for a scoping review.

Author Contributions

All authors were involved in the development of the scoping review protocol and review process. The first author led the drafting of the manuscript with revisions by the remaining team members. All authors approved the submitted manuscript.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data Availability Statement

No new data were generated by this review.