Abstract

Objective

The American College of Surgeons National Surgical Quality Improvement Program (NSQIP) is an important data source for observational studies. While there are guides to ensure appropriate study reporting, there has been no evaluation of NSQIP studies in vascular surgery. We sought to evaluate the adherence of vascular surgery-related NSQIP studies to best reporting practices.

Methods

In January 2022, we queried PubMed for all vascular surgery NSQIP studies. We used the REporting of studies Conducted using Observational Routinely-collected Health Data (RECORD) statement and the JAMA Surgery (JAMA-Surgery) checklist to assess reporting methodology. We also extracted the Journal Impact Factor (IF) of each article.

Results

One hundred and fifty-nine studies published between 2002 and 2022 were identified and analyzed. The median score on the RECORD statement was 6 out of 8. The most commonly missed RECORD statement items were describing any validation of codes and providing data cleaning information. The median score on the JAMA-Surgery checklist was 2 out of 7. The most commonly missed JAMA-Surgery checklist items were identifying competing risks, using flow charts to help visualize study populations, having a solid research question and hypothesis, identifying confounders, and discussing the implications of missing data. We found no difference in the reporting methodology of studies published in high vs low IF journals.

Conclusion

Vascular surgery studies using NSQIP data demonstrate poor adherence to research reporting standards. Critical areas for improvement include identifying competing risks, including a solid research question and hypothesis, and describing any validation of codes. Journals should consider requiring authors to use reporting guides to ensure their articles have stringent reporting methodology.

Introduction

The American College of Surgeons National Surgical Quality Improvement Program (NSQIP) is a large-scale registry that is an important data resource for surgical research.1–4 Nationwide registries like the NSQIP are powerful data sources because of the significant amount of information they contain: the 2021 NSQIP participant use file (PUF) contains demographic, procedural, and outcome-related data on 983,851 cases from 685 different sites, while 15 other separate NSQIP PUFs contain over 9.6 million procedures. 5 Although the size and breadth of the procedures and variables are strengths of the NSQIP, the inherent complexity of NSQIP and other analogous large-scale databases predisposes them to unwanted variation in research reporting methodology. 6 Inadequate research reporting can obscure sources of biases, mask the strengths and limitations of data sources, and lead to faulty conclusions. 7 In recent years, two research reporting guides have been created specifically for observational studies based on datasets such as NSQIP to help authors follow strong reporting standards. In 2015, the REporting of studies Conducted using Observational Routinely-collected Health Data (RECORD) statement was created as an extension of the Strengthening the Reporting of Observational Studies in Epidemiology (STROBE) statement and focuses on reporting items specific to the needs of studies that use large-scale databases. 7,8 Separately, in 2018, the editors of JAMA Surgery created a checklist (JAMA-Surgery) as a guide for authors using the NSQIP and ten other databases. 9

Although other investigators have examined reporting methodology in different surgical specialties, none have examined vascular surgery studies based on NSQIP data.10–13 As such, we sought to use the RECORD statement and JAMA-Surgery checklist to appraise the quality of reporting among all published vascular surgery NSQIP studies. Furthermore, we sought to understand the association between Journal Impact Factor (IF) and article reporting quality, given that Journal IF has historically been associated with article quality. 14,15 We hypothesized that vascular surgery NSQIP studies would demonstrate poor to moderate adherence to the RECORD statement and JAMA-Surgery checklist reporting criteria. In particular, based on our prior work, we expected identifying competing risks and clearly defining study inclusion/exclusion criteria to be the most frequently missed reporting items. 14

Methods

Study Selection

In January 2022, we queried PubMed for all NSQIP studies. We included only vascular surgery studies that used the NSQIP. We excluded all non-vascular surgery clinical research studies, studies that used multiple data sources, and studies that qualitatively described the NSQIP. We also excluded all editorials, commentaries, and reviews.

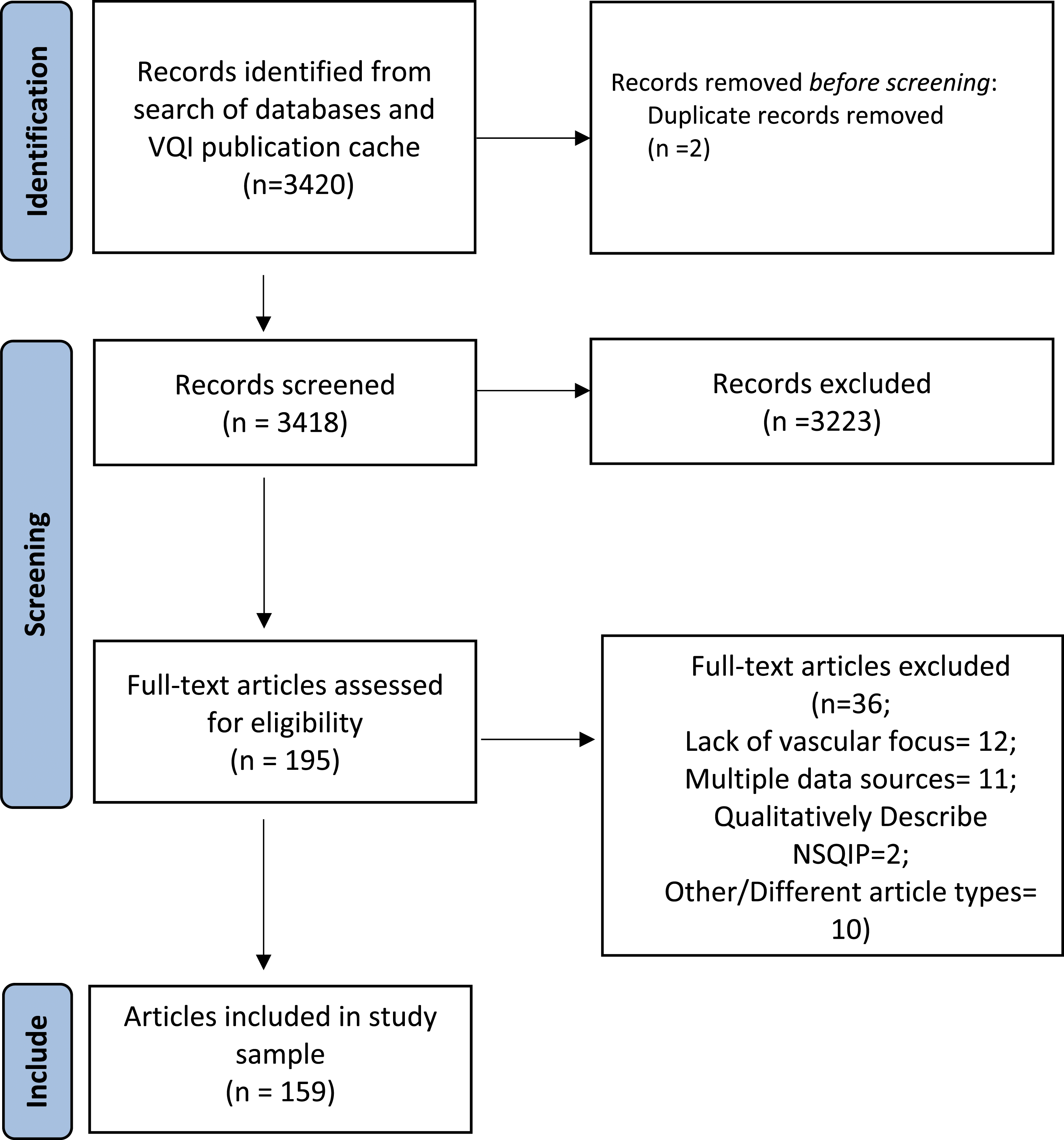

Following study retrieval and duplicate removal, six study team members (AM, WU, KL, AD, JR, CV) reviewed the titles and abstracts of all studies for potential inclusion. The title and abstract of each study were reviewed by two reviewers, and both had to approve a study for it to proceed to full-text review. A third reviewer was consulted for any disagreements. All six reviewers took part in the full-text review. Two reviewers needed to approve each article during full-text review, and a third study team member was consulted to resolve any disagreements about study inclusion/exclusion. Our study sample selection process followed the Preferred Reporting Items for Systematic Reviews and Meta-analyses (PRISMA) methodology. 16 This study was deemed exempt from review by the University of Florida Institutional Review Board (IRB202101723). Informed consent was not obtained or needed for this study since our study sample was made up of scientific articles available to the public.

Evaluation of Article Reporting Quality

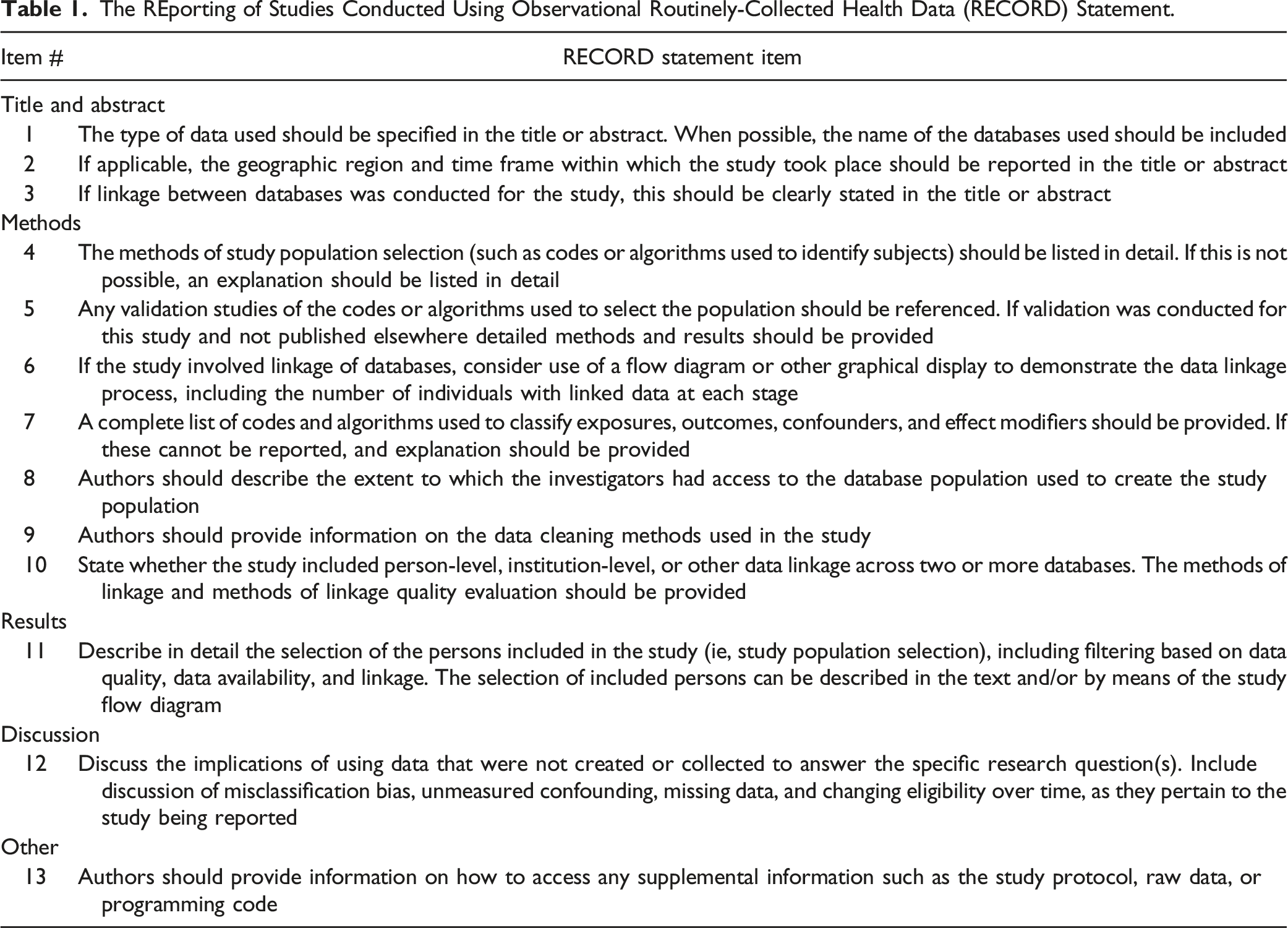

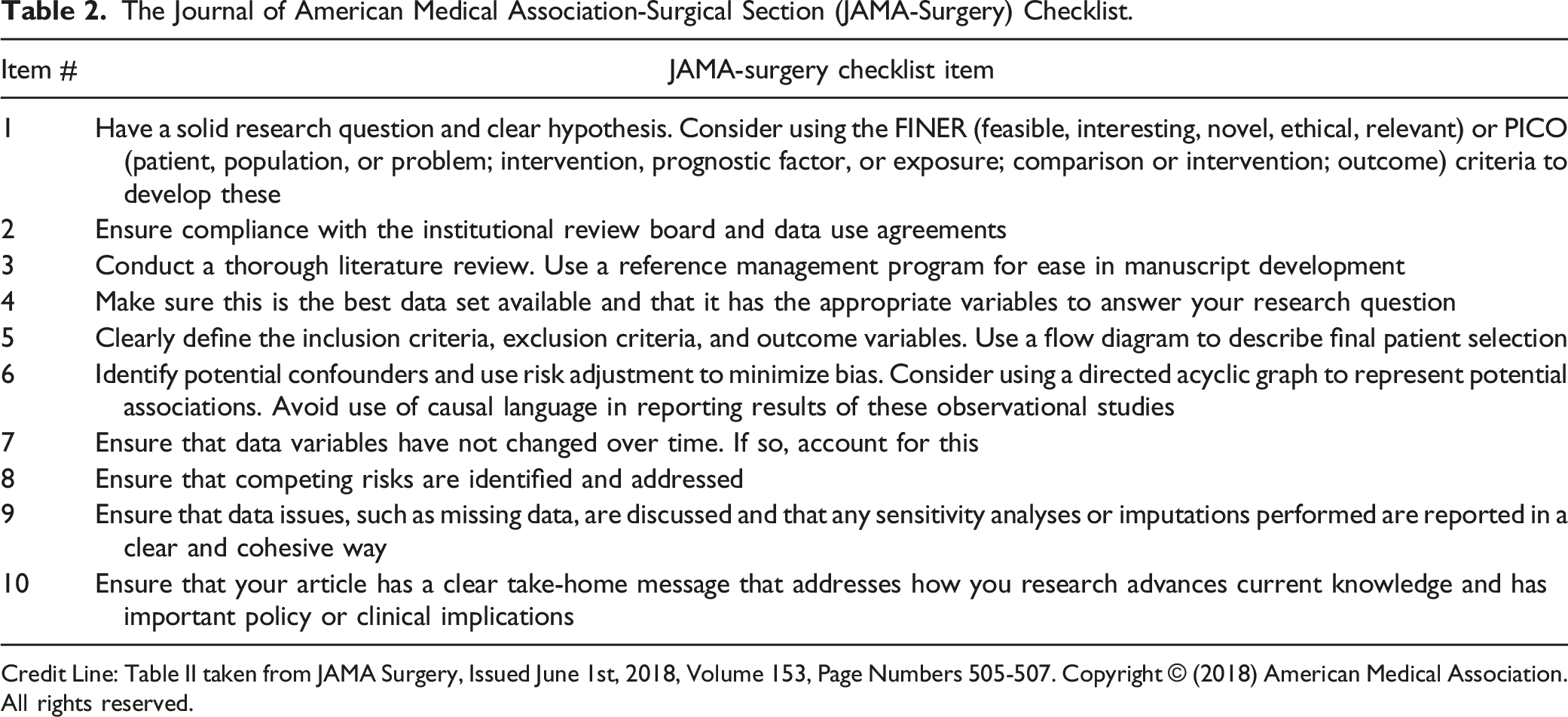

After consulting the literature for the most commonly used checklists in appraising research reporting, we chose to utilize two appraisal guides to analyze each study:11-14

1. The REporting of studies Conducted using Observational Routinely-collected Health Data (RECORD) statement: The RECORD statement is made up of thirteen unique items (Table 1). We excluded five items (Table 1 items 3, 6, 8, 10, and 13) due to their lack of relevance to NSQIP studies. The RECORD statement was created by an international group of 100 health data researchers collectively known as the RECORD Initiative. 8

2. JAMA Surgery (JAMA-Surgery) Checklist: The JAMA-Surgery checklist is made up of 10 items (Table 2). Three items from the JAMA-Surgery checklist were excluded from our analysis (Table 2 items 3, 4, and 7) because we were unable to assess them accurately.

Table 1. The REporting of Studies Conducted Using Observational Routinely-Collected Health Data (RECORD) Statement.

Table 2. The Journal of American Medical Association-Surgical Section (JAMA-Surgery) Checklist. Credit Line: Table II taken from JAMA Surgery, Issued June 1st, 2018, Volume 153, Page Numbers 505-507. Copyright © (2018) American Medical Association. All rights reserved.

Both reporting guides were used to appraise each study. Each item on the RECORD statement and JAMA-Surgery checklist was assigned one point. After excluding items from both checklists, scores out of 7 (JAMA-Surgery) and out of 8 (RECORD) total points were assigned. Six study team members (AM, WU, KL, AD, JR, CV) scored papers. Each paper was independently scored by two team members, and a third team member was consulted for any disagreements. After scoring was complete, pooled Cohen’s kappa scores were calculated. 17 We obtained journal IF percentiles from Journal Citation Reports. 18 We classified journals with an IF greater than or equal to the 90th percentile of one of their core journal categories as having high IF. 18 We classified all other journals as low IF.
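The scoring and classification rules described above can be illustrated with a minimal Python sketch. It is not the authors' code: the function names are hypothetical, the ratings are made-up values, and the kappa shown is the plain unweighted Cohen's kappa for a single pair of raters. The pooling step is not shown because the text does not specify how the kappa scores were pooled.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Unweighted Cohen's kappa for two raters scoring the same items."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("ratings must be non-empty and of equal length")
    n = len(rater_a)
    # Observed proportion of items on which the two raters agree.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Agreement expected by chance, from each rater's marginal frequencies.
    counts_a, counts_b = Counter(rater_a), Counter(rater_b)
    expected = sum(counts_a[k] * counts_b[k] for k in counts_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both raters used one identical category throughout
    return (observed - expected) / (1.0 - expected)


def total_score(item_flags):
    """One point per fulfilled checklist item (True means fulfilled)."""
    return sum(1 for fulfilled in item_flags if fulfilled)


def classify_impact_factor(percentile):
    """'high' if the journal IF percentile is at or above the 90th, else 'low'."""
    return "high" if percentile >= 90 else "low"


# Hypothetical ratings of ten papers on one checklist item (1 = fulfilled).
rater_1 = [1, 1, 0, 0, 1, 0, 1, 1, 0, 0]
rater_2 = [1, 1, 0, 1, 1, 0, 1, 0, 0, 0]
print(round(cohens_kappa(rater_1, rater_2), 2))
print(total_score([True, False, True, True, False, True, True, True]))
print(classify_impact_factor(92.5))
```

Because each retained item carries one point, `total_score` ranges from 0 to 8 for the RECORD statement and from 0 to 7 for the JAMA-Surgery checklist.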

Statistical Analysis

Continuous variables are represented by medians and interquartile ranges (IQR), and categorical variables are presented using percentages and frequencies. Fisher’s exact test was used as indicated. Pearson’s correlation coefficient was used to assess for a correlation between scores on the RECORD statement and the JAMA-Surgery Checklist. The significance threshold for all analyses was a 2-tailed P-value ≤.05. All statistical analysis was conducted using R (R Foundation for Statistical Computing, Vienna, Austria) and Microsoft Office Analysis ToolPak (Microsoft Corporation, Redmond, Washington).
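For readers who want to see the named tests in concrete form, the following Python sketch reimplements them with the standard library only. It is an illustration under stated assumptions, not the authors' analysis (which was run in R and the Analysis ToolPak): the function names and all input values are hypothetical, and the two-sided Fisher P-value uses the common convention of summing the probabilities of all tables no more likely than the observed one.

```python
from math import comb, sqrt
from statistics import median, quantiles


def median_iqr(values):
    """Median and (Q1, Q3), using linear interpolation between order statistics."""
    q1, _, q3 = quantiles(values, n=4, method="inclusive")
    return median(values), (q1, q3)


def fisher_exact_two_sided(a, b, c, d):
    """Two-sided Fisher's exact P-value for the 2x2 table [[a, b], [c, d]]."""
    row1, row2, col1 = a + b, c + d, a + c
    denom = comb(row1 + row2, col1)

    def prob(x):
        # Hypergeometric probability of x in the top-left cell, margins fixed.
        return comb(row1, x) * comb(row2, col1 - x) / denom

    lowest, highest = max(0, col1 - row2), min(row1, col1)
    p_observed = prob(a)
    # Sum every table that is no more likely than the observed one.
    total = sum(p for p in map(prob, range(lowest, highest + 1))
                if p <= p_observed * (1 + 1e-7))
    return min(1.0, total)


def pearson_r(x, y):
    """Pearson's correlation coefficient for two equal-length sequences."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two sequences of equal length >= 2")
    mean_x, mean_y = sum(x) / len(x), sum(y) / len(y)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    syy = sum((yi - mean_y) ** 2 for yi in y)
    if sxx == 0 or syy == 0:
        raise ValueError("correlation is undefined for a constant sequence")
    return sxy / sqrt(sxx * syy)


# Hypothetical data: checklist scores and a fulfilled/not-fulfilled 2x2 table
# (rows: high vs low IF journals; columns: item fulfilled vs not fulfilled).
record_scores = [4, 5, 6, 6, 7, 7, 8]
jama_scores = [1, 2, 2, 3, 2, 4, 3]
print(median_iqr(record_scores))
p_value = fisher_exact_two_sided(3, 1, 1, 3)
print(round(p_value, 4), "significant" if p_value <= 0.05 else "not significant")
print(round(pearson_r(record_scores, jama_scores), 3))
```

Quartile conventions differ between software packages, so the IQR from this sketch may not match other tools exactly on small samples.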

Results

Search Results

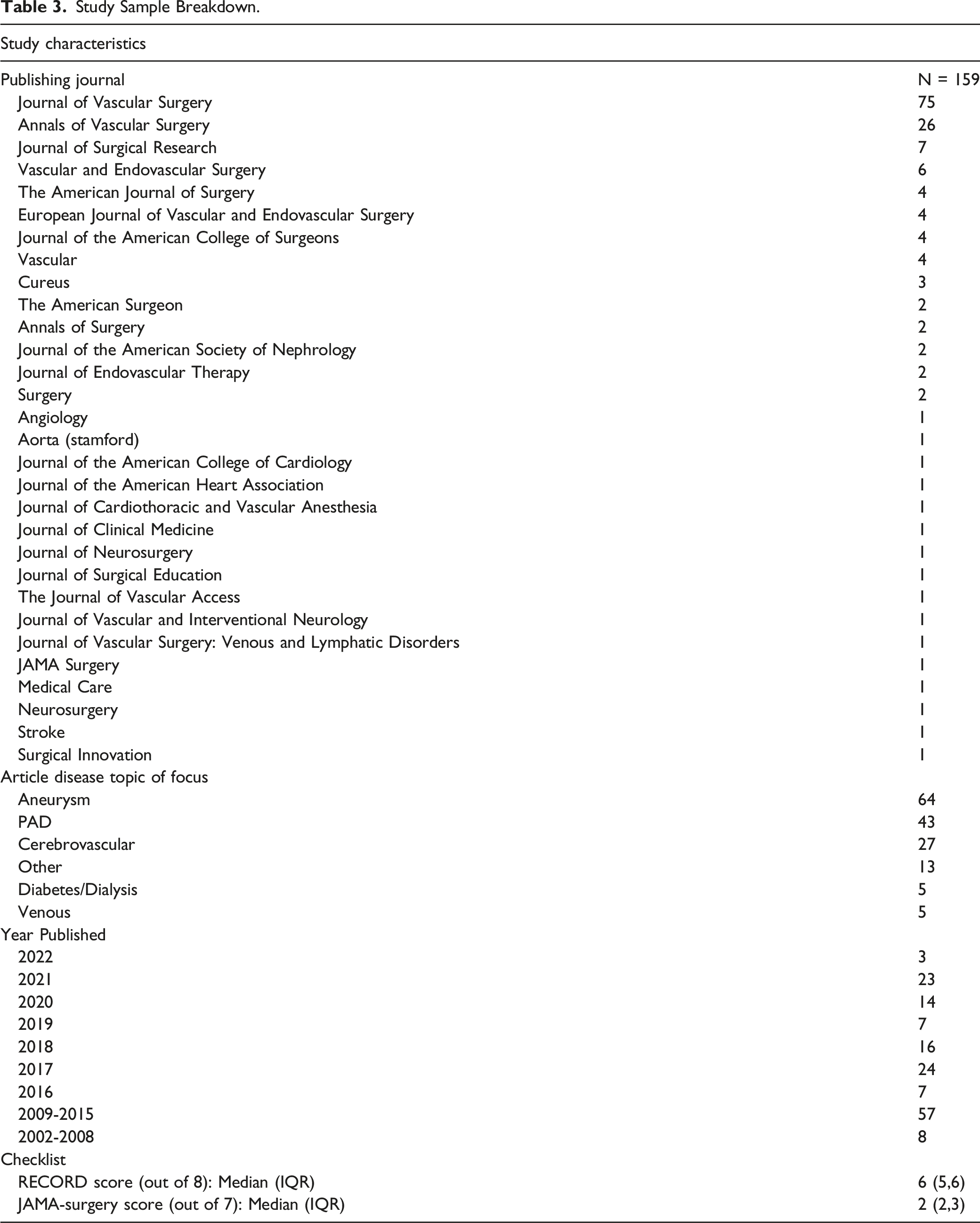

As of January 2022, we found 3420 NSQIP articles indexed in PubMed. After duplicate removal, 3418 articles remained. After a title and abstract review, we excluded 3223 studies, which left 195 articles for full-text review. After a full-text review of all 195 articles, we excluded a further 36 articles, which left a final study sample of 159 studies (Figure 1). Our sample includes articles published between 2002 and 2022. Thirty unique journals are represented in our study sample, and nearly half of all NSQIP vascular surgery studies were published in the Journal of Vascular Surgery (n = 75). Aneurysm research (n = 64) was the most common topic among the articles in our study sample. Table 3 displays the characteristics of our study sample.

Figure 1. Preferred reporting items for systematic reviews and meta-analyses diagram illustrating study sample selection process.

Table 3. Study Sample Breakdown.
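The counts reported in this subsection can be checked with simple arithmetic. The short Python sketch below only restates the numbers given in the text; the function and key names are hypothetical.

```python
def selection_flow(identified, after_dedup, excluded_screening, excluded_full_text):
    """Derive the intermediate and final counts of the study selection flow."""
    full_text_reviewed = after_dedup - excluded_screening
    return {
        "duplicates_removed": identified - after_dedup,
        "full_text_reviewed": full_text_reviewed,
        "final_sample": full_text_reviewed - excluded_full_text,
    }


# Counts as reported in the text above.
flow = selection_flow(identified=3420, after_dedup=3418,
                      excluded_screening=3223, excluded_full_text=36)
print(flow)

# Share of the final sample published in the Journal of Vascular Surgery (n = 75).
jvs_share = 75 / flow["final_sample"]
print(f"{jvs_share:.0%}")
```

The derived values agree with the reported 195 full-text articles and 159 included studies, and the Journal of Vascular Surgery share works out to roughly 47%, consistent with "nearly half".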

Reporting Guideline Criteria Fulfilment

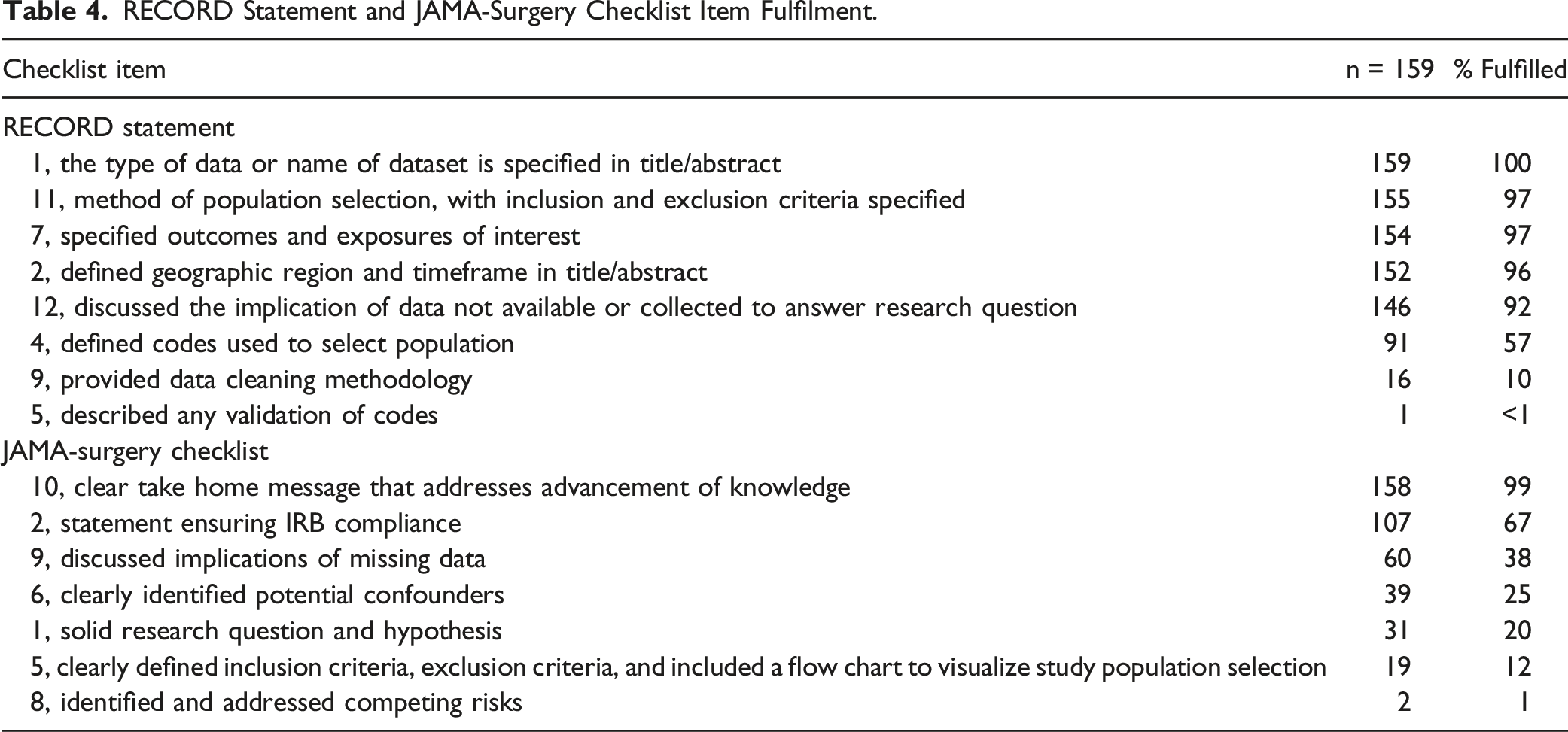

RECORD Statement and JAMA-Surgery Checklist Item Fulfilment.

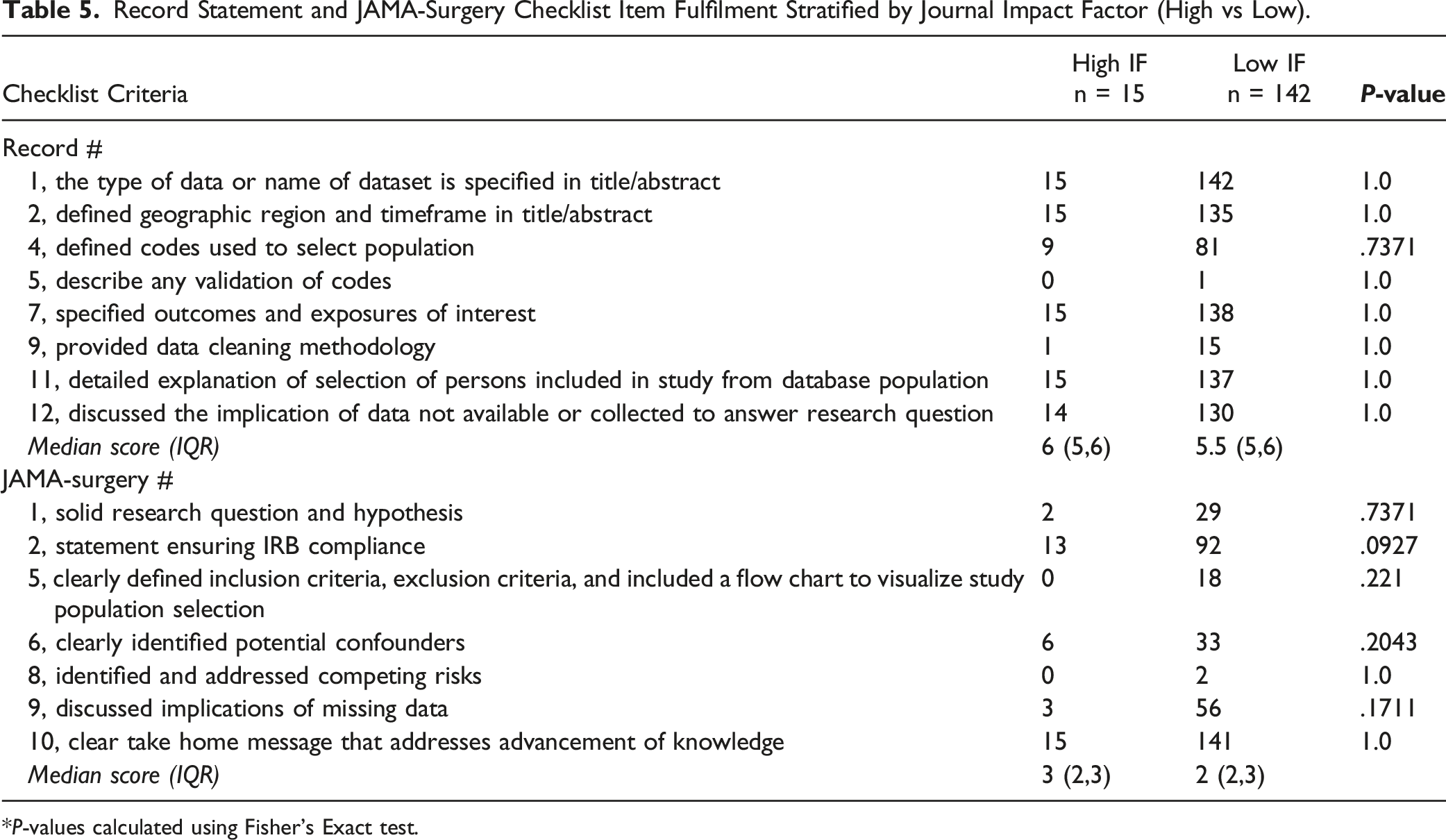

High vs Low Impact Factor (IF) Journals

RECORD Statement and JAMA-Surgery Checklist Item Fulfilment Stratified by Journal Impact Factor (High vs Low).

*P-values calculated using Fisher’s Exact test.

Discussion

Our data confirm our hypothesis that vascular surgery studies that use NSQIP data have poor adherence to research reporting standards. In particular, NSQIP studies frequently failed to identify competing risks, use flow charts to help visualize study populations, have a solid research question and hypothesis, identify confounders, and discuss the implications of missing data. In addition, vascular surgery NSQIP studies neglected to describe any validation of codes or data cleaning efforts.

Previously published studies investigating the reporting standards of NSQIP articles have shown varied research reporting adherence. For example, a study by Yolcu et al investigating neurosurgery NSQIP studies and another by Khachfe et al assessing pancreatic surgery NSQIP articles found that NSQIP studies have satisfactory reporting. 11,12 In contrast, a study by El Moheb et al found that NSQIP emergency general surgery articles demonstrate suboptimal reporting. 13 Similar to our findings that two of the most frequently missed reporting items were using flow charts to help visualize study populations (12% fulfilled) and describing code validation (<1% fulfilled), El Moheb et al noted the same criteria were fulfilled in only 11% and 3% of emergency general surgery studies, respectively. 13 These limited data points showing similar areas of deficient reporting quality suggest that common areas for improvement exist among different surgical subspecialties. Furthermore, our previous investigation of reporting standards among Vascular Quality Initiative (VQI) articles also found suboptimal reporting. 15 Our similar findings among vascular surgery articles using two different databases suggest that improved research reporting may be broadly needed within the vascular surgery literature.

Although there were several checklist criteria that were missed in most studies, we highlight a few that are especially important below. The most commonly missed item from the JAMA-Surgery checklist, the identification of competing risks, was addressed in only 1% of studies in our study population. Competing risks are events that “compete” with one another, such that the occurrence of one event prevents the occurrence of another “competing” event.19-21 An example of a competing risk would be mortality in a study of major limb amputation among patients with advanced peripheral arterial disease. A cohort study by Abdel-Qadir et al investigating stroke risks in patients with atrial fibrillation found that neglecting competing risks led to biased estimates and a stroke incidence estimate that was overestimated by 39%. 19 Similar neglect in vascular surgery NSQIP studies could lead to misleading results.
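To make the effect of ignoring competing risks concrete, the following Python sketch compares two estimates on a small made-up cohort: the naive estimate (one minus Kaplan-Meier, treating deaths as censored) and the cumulative incidence function that accounts for death as a competing event (the Aalen-Johansen estimator). The data, event coding, and function name are hypothetical and chosen only for illustration; this is not an analysis from any of the cited studies.

```python
def amputation_incidence(times, events, cause=1):
    """Return (naive 1 - KM, competing-risks CIF) for `cause` at end of follow-up.

    events: 0 = censored, 1 = amputation (event of interest), 2 = death (competing).
    """
    data = sorted(zip(times, events))
    at_risk = len(data)
    km_cause_only = 1.0  # Kaplan-Meier survival with competing events censored
    overall_surv = 1.0   # Kaplan-Meier survival free of any event
    cif = 0.0            # cumulative incidence of the event of interest
    i = 0
    while i < len(data):
        t = data[i][0]
        tied = [e for tt, e in data if tt == t]
        d_cause = sum(e == cause for e in tied)
        d_any = sum(e != 0 for e in tied)
        # CIF increment: probability of being event-free just before t,
        # multiplied by the cause-specific hazard at t.
        cif += overall_surv * d_cause / at_risk
        km_cause_only *= 1 - d_cause / at_risk
        overall_surv *= 1 - d_any / at_risk
        at_risk -= len(tied)
        i += len(tied)
    return 1 - km_cause_only, cif


# Hypothetical cohort of ten patients followed for up to ten months.
follow_up = [1, 2, 3, 4, 5, 6, 7, 8, 9, 10]
outcome = [2, 1, 2, 1, 2, 0, 1, 2, 0, 1]
naive, cif = amputation_incidence(follow_up, outcome)
print(f"naive 1 - KM: {naive:.3f}, competing-risks CIF: {cif:.3f}")
```

In this toy cohort the naive estimate is considerably higher than the competing-risks estimate, because patients who die are treated as if they could still go on to have an amputation. The naive estimate is never lower than the cumulative incidence function, which is the direction of bias described above.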

Another important but frequently missed reporting item from the JAMA-Surgery checklist is item 1, which recommends including a solid research question and hypothesis; it was fulfilled in only 20% of studies. Having both a research question and hypothesis is important to adequately define the population of interest and narrow the scope of the study. 9 This explicitly helps to avoid scientific “fishing expeditions.” We acknowledge that some studies are intended to be “hypothesis generating” and may not have clear hypotheses. We believe this should be noted explicitly in these studies rather than left ambiguous. Moreover, large-scale registry databases like NSQIP generally should not be used for exploratory analyses, since multiple statistically significant associations with little clinical relevance can commonly be identified; it is the authors' opinion that only a minority of reports should be submitted without clear hypotheses.

The most commonly missed item from the RECORD statement, describing any validation of codes, was also fulfilled in only 1% of our study sample. By not describing or citing validation studies, the transparency and reliability of studies are severely diminished. 7 Put plainly, if there are no validation studies for the codes used to select the study population, readers cannot be sure that the study sample accurately represents the population of interest. In cases where there is no code validation data, authors should explicitly note this in the methods and discussion. While not every reporting criterion holds the same importance in strong research reporting, the aforementioned items are especially impactful and should be addressed in future NSQIP studies to ensure appropriate rigor and reliability.

Interestingly, our study also found that Journal IF was not associated with differences in reporting standards and that studies published in high IF journals did not have statistically better reporting adherence. None of the RECORD statement or JAMA-Surgery checklist items had a reporting adherence that varied significantly between articles in high and low IF journals. Our findings agree with El Moheb et al, who found that reporting among emergency general surgery NSQIP articles generally does not vary by journal IF. 13 In addition, in our previous study that used the RECORD statement and JAMA-Surgery checklist to appraise articles that used another large-scale database, the Vascular Quality Initiative (VQI), we found no association between Journal IF and reporting adherence. 14 While our IF analysis may be skewed by a relatively small number of high IF articles, based on the cumulative findings of this investigation and of similar studies, we believe that issues with research reporting affect both high and low IF journals alike.

While not every reporting criterion on the RECORD statement and JAMA-Surgery checklist weighs equally in the evaluation of robust research reporting, our study highlights key takeaways, such as addressing competing risks, including a solid research question and hypothesis, and using validated codes, that could significantly improve the reporting standards of NSQIP articles. To ensure that future studies adhere to strong research reporting standards, we encourage journals to require authors submitting articles that use large-scale databases to follow the reporting criteria of both the RECORD statement and the JAMA-Surgery checklist. Incorporating reporting guides into the peer-review process should improve the reporting rigor and transparency of future NSQIP articles.

Our study has certain limitations. First, we only queried PubMed to create our study population; therefore, it is possible that we missed some NSQIP articles that are not indexed in MEDLINE and the other online sources PubMed queries. In addition, the majority of articles in our study sample were published before 2015; this may limit the generalizability of our findings to more recently published NSQIP articles. Second, while we assigned each reporting item one point for fulfillment, we concede that the reporting items are not equally important. Finally, while the RECORD statement and JAMA-Surgery checklist are thorough, the two reporting guides we used do not capture every domain of robust research reporting. As a result, our appraisal is not a comprehensive analysis of every research reporting criterion.

Conclusion

Vascular surgery NSQIP articles demonstrate poor adherence to research reporting standards. Areas to improve the reporting rigor of vascular surgery NSQIP studies include identifying competing risks, using flow charts to help visualize study populations, having a solid research question and hypothesis, identifying confounders, discussing the implications of missing data, and describing any validation of codes and data cleaning efforts. Future efforts should focus on ensuring journals work with authors to incorporate standards of robust reporting methodology in scientific articles.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

This work was supported by the National Institutes of Health [K23HL161428]. The sponsor played no role in the design, methods, data collection, analysis, or preparation of this article.