Abstract

Coefficient plots are a popular tool for visualizing regression estimates. The appeal of these plots is that they visualize confidence intervals around the estimates and generally center the plot around zero, meaning that any estimate that crosses zero is statistically nonsignificant at least at the alpha level around which the confidence intervals are constructed. For models with statistical significance levels determined via randomization models of inference and for which there is no standard error or confidence intervals for the estimate itself, these plots appear less useful. In this article, I illustrate a variant of the coefficient plot for regression models with p-values constructed using permutation tests. These visualizations plot each estimate’s p-value and its associated confidence interval in relation to a specified alpha level. These plots can help the analyst interpret and report the statistical and substantive significances of their models. I illustrate using a nonprobability sample of activists and participants at a 1962 anticommunism school.

1 Introduction

Visual alternatives to traditional estimate tables have become increasingly popular in the social sciences, especially with the growing interest in data science and computational social science (Healy 2019; Wickham and Grolemund 2017). One visualization that is supplanting more traditional regression tables is the coefficient plot (Gelman et al. 2018; Jann 2014; Lander 2018). Coefficient plots can provide a more parsimonious method for reporting regression coefficients, especially when many independent variables (IVs), models, or both make tables difficult to read or present. Thus, these plots have become quite popular in sociological research, ranging in application from cultural sociology (for example, Lizardo and Skiles [2016a,b]; Stewart, Edgell, and Delehanty [2018]) and social networks (Bähr and Abraham 2016) to the sociology of education (Schührer, Carbonaro, and Grodsky 2016) and the sociology of work and occupations (Friedman and Laurison 2017; Laurison and Friedman 2016).

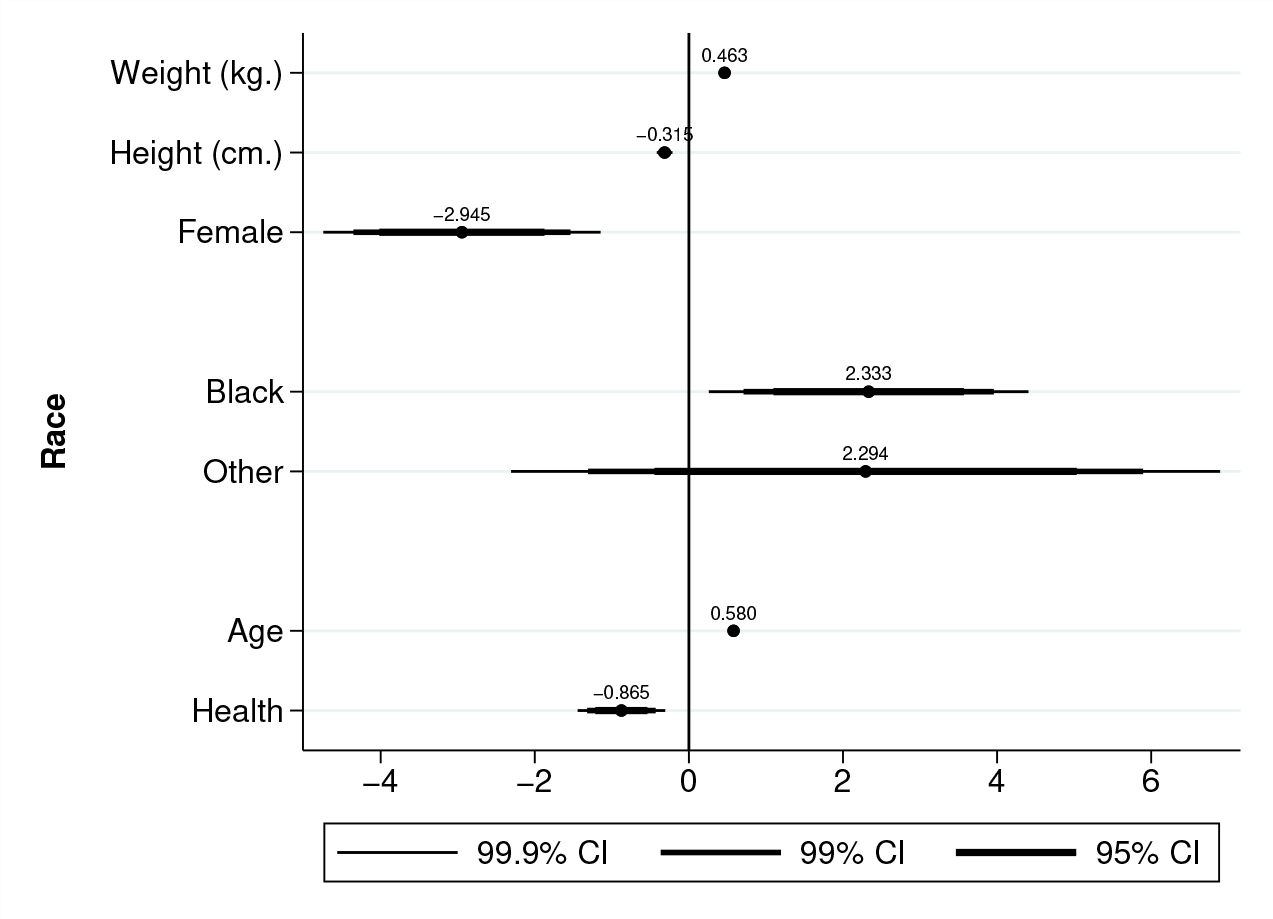

Coefficient plots visualize regression estimates and their corresponding confidence intervals (CIs). The graphs are generally centered around zero, meaning that any estimate whose CI crosses zero is statistically nonsignificant at least at the alpha level around which the CIs are constructed. Consider, for example, the coefficient plot in figure 1, which was generated from a basic linear-additive multiple ordinary least-squares (OLS) model regressing systolic blood pressure readings on a vector of covariates using the National Health and Nutrition Examination Survey data available from StataCorp. The plot is interpreted like a regular regression table: black individuals, for example, have systolic blood pressure readings that, on average, are about 2.333 mmHg higher than white individuals’ readings, net of their weight, height, sex, age, and self-reported health. The plot also shows that this difference is statistically significant at least at the α = 0.001 level because the thinnest band does not cross zero (the vertical line).

Figure 1. Coefficient plot of OLS estimated effects on systolic blood pressure.

For all their utility, coefficient plots still come with assumptions about the inference statistics accompanying the estimates—namely, coefficient standard errors (SEs) and their CIs. This is, of course, a valid assumption for the majority of analysts using probability samples and relying on the asymptotic properties of random sampling distributions. However, analysts using nonprobability samples must adopt a different inference model. Such an alternative—the “randomization model” (Ernst 2004; Ludbrook and Dudley 1998) or what might be termed the “process inference” model (Darlington and Hayes 2017, 513–514)—allows for sample-specific inferences by using Monte Carlo permutation tests to derive empirical p-values. These p-values indicate the proportion of test statistics—say, a specific coefficient—from the models computed from the randomly permuted samples that are greater than or equal to the observed test statistic (in the case of a right-tailed test).
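The empirical p-value described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the article's implementation: it uses a bivariate OLS slope as the test statistic, permutes the dependent variable, and takes a two-tailed comparison. The function names, the seed, and the toy data are all hypothetical.

```python
import random


def ols_slope(x, y):
    """Return the OLS slope from regressing y on x (with an intercept)."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    return sxy / sxx


def permutation_p_value(x, y, k=1000, seed=12345):
    """Two-tailed Monte Carlo permutation p-value for the slope of y on x.

    Returns (observed slope, count of extreme permuted slopes, p-value).
    """
    rng = random.Random(seed)
    observed = ols_slope(x, y)
    y_perm = list(y)
    extreme = 0
    for _ in range(k):
        rng.shuffle(y_perm)  # break any X-Y association by design
        if abs(ols_slope(x, y_perm)) >= abs(observed):
            extreme += 1
    return observed, extreme, extreme / k


# Hypothetical toy data, not the article's data.
x = [1, 2, 3, 4, 5, 6, 7, 8, 9, 10]
y = [1.2, 1.9, 3.4, 3.8, 5.1, 6.3, 6.8, 8.4, 9.1, 9.7]
b, c, p = permutation_p_value(x, y, k=1000)
print(f"slope = {b:.3f}, extreme permutations = {c}, p = {p:.3f}")
```

The p-value here is simply the share of the k permuted slopes that are at least as large in absolute size as the observed slope. A right-tailed version would drop the absolute values.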

The randomization model poses problems for the standard coefficient plot. Specifically, unlike the conceptually similar bootstrap technique, SEs derived from Monte Carlo permutation tests are with respect to the p-value—not the coefficient itself. Thus, there are no coefficient-specific CIs to report and no way to visualize the effect’s proximity to zero without explicit reference to the effect’s p-value.

In this article, I illustrate a variant of the coefficient plot for regression models with p-values constructed using permutation tests and discuss its utility in interpreting and presenting research. These visualizations, which I refer to as permutation p-value (PPV) plots, plot each estimate’s p-value and its associated CI in relation to an alpha level of the analyst’s choice. These plots can help the analyst interpret and report the statistical and substantive significance of their models with permutation-based inference without recourse to tables. This article unfolds as follows: First, I overview the logic behind Monte Carlo permutation tests and how they can be used to construct sample-specific inferences, noting especially how they introduce complications for how regression estimates and their associated predicted values are visualized. Second, I introduce PPV plots, illustrate how they can be generated, and demonstrate their utility for visualizing regression estimates and predicted values. PPV plot illustrations are made using a series of models predicting heightened perceptions of communist threat among a nonprobability convenience sample of people who participated in the Christian Anti-Communism Crusade’s (CACC’s) San Francisco Bay Region School of Anti-Communism in January and February of 1962 (Wolfinger 1992). I then conclude with an outline of how PPV plots can be further expanded for data visualization.

2 Randomization inference

2.1 Using Monte Carlo permutation tests to generate p-values

The traditional “population model” (Ernst 2004; Ludbrook and Dudley 1998) of statistical inference to which most social scientists are accustomed is not always appropriate when the sample is constructed using nonprobabilistic procedures or when the sample suffers from nonrandom systematic error that cannot be mitigated with bias-correcting statistical adjustments. When such “prestatistical” methodological constraints are present, a different model must be adopted if the analyst wishes to use the language of inferential statistics. One option is the “randomization” (Ernst 2004; Ludbrook and Dudley 1998; Manly 2007, 1–4) or “process inference” (Darlington and Hayes 2017, 513–514) model, a strategy that has, unfortunately, been slow to gain ground in sociological research using observational data, save for work in social network analysis. The key distinction between the population model and the randomization model is the “thing” to which statistical inferences are being made. In both cases, the p-value, its associated CI (for the coefficient in the population model and the p-value itself in the randomization model), or both are used to assess the confidence in the inference.

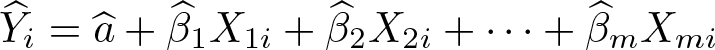

Consider the following simple OLS estimation equation:

On one hand, if p is the p-value attached to some estimated coefficient

where β is the true but unobserved estimate of the effect of X on Y. This p-value expresses the probability that (if the coefficient was truly generated from a random sample from the target population and with no systematic error) an effect of at least the absolute size of

On the other hand, under the randomization model with 1,000 Monte Carlo permutation tests and again assuming a two-tailed test,

where k is the number of permutations,

2.2 Complicating the coefficient plot

Regression estimates generated under the randomization model pose unique problems for visualization. For the popular coefficient plot, the problem is quite fundamental: there are no SEs or CIs for the coefficient,

The fact that SEs and CIs for p-values are still valid provides leverage for constructing a new type of coefficient plot for visualizing regression estimates and their subsequent predicted values under the randomization model. This new series of plots, which I refer to collectively as PPV plots, is outlined in the following section.

3 The PPV plot toolkit

A PPV plot can be thought of as a coefficient plot for regression models with significance levels determined via permutation tests. Its structure is quite simple: instead of arraying model coefficients within a variable-by-Y -unit space, the p-values for each model coefficient are arrayed within a variable-by-[0, 1] space. Unlike a traditional coefficient plot centered around 0 to indicate no statistically significant effect, the PPV plot is centered around an analyst-determined alpha level. In this article, I use a combination of Stata’s

I illustrate how PPV plots can be used to visualize regression coefficients and postestimation predicted values. Each strategy is addressed in turn below. First, however, I provide a brief overview of the case that I use for illustration: perceptions of communist threat among a nonprobability convenience sample of far-right activists who participated in the 1962 San Francisco Bay Region School of Anti-Communism (Wolfinger 1992).

3.1 The case: An anticommunism school

The Christian Anti-Communism Crusade (CACC) was founded in 1953 by Fred Schwarz. Schwarz, an Australian originally trained in medicine, spearheaded the “second wave of religious anti-Communism” in the United States (Herzog 2011, 207) and started CACC in an attempt to illuminate and teach on the insidious activities of communism—which he believed to be “the very mask of Satan” (The Schwartz Report 2018). CACC generated considerable funds by “promoting the belief that no bilateral negotiation could exist with communism” (Aiello 2005), largely through their tax-exempt and tuition-based “anti-Communism schools” that the organization would put together. In these schools, Schwarz and other organizers would teach on the un-American perils of communism and put on fundraising activities (Wolfinger et al. 1964, 22).

The fleeting nature of these meetings posed problems for academic researchers wishing to survey the attendees at these “schools”. No clearly definable sampling frame of participants existed, and, even if it did, it was unlikely that such a list would have been provided to researchers given the high skepticism of colleges and universities amid the McCarthyism of the time. Nonetheless, Wolfinger and colleagues wished to know just who these school attendees were and what they believed. To this end, they adopted purposive sampling techniques to gather data from attendees at the San Francisco Bay Region School of Anti-Communism, held from January 29, 1962, to February 2, 1962, at the Oakland Auditorium in Oakland, California. Wolfinger and colleagues describe their data-collection process as follows:

> We were able to conduct a study of the personal characteristics and political attitudes and activities of several hundred people who attended this Crusade school. Under our direction two dozen Stanford undergraduates interviewed members of the audience and distributed questionnaires (similar to the interview forms) which could be completed later and mailed back to Stanford. (Wolfinger et al. 1964, 23)

As the excerpt suggests, respondents constituted a convenience (and clearly nonprobabilistic) sample. Given that participants were not known beforehand and were there for activities completely unrelated to data-collection efforts, data had to be gathered in a way that was not optimally systematic. Using the population model to draw statistical inferences with this dataset would be inappropriate because the sample was not a random “draw” from a defined target population of American anticommunists (or even the “population” of anticommunism school attendees), meaning that asymptotic distributional properties of probability sampling cannot be assumed. The randomization model, however, can be used to draw sample-specific inferences on the likelihood that statistical associations and relationships could arise from a random data-generating process. These data (Wolfinger 1992) are used in the remainder of this article to illustrate the utilities of the PPV plot toolkit. More specific information on the variables used is discussed below.

3.2 PPV plots and regression coefficients

Consider the following pseudo-research question: Among the participants at this school, what were some of the sociodemographic factors that predicted a heightened perception of impending communist threat (given, of course, an already heightened baseline perception of communist threat relative to the general population because they self-selected into an anticommunism school)? To address this question, I constructed factor scores from a series of dummy variables related to different perceptions of communist dangers (for example, communism in academia and communism in the Democratic Party). Details on how these variables were constructed can be found in appendix A; higher scores indicate higher perceptions of communist threat. This variable was then regressed on the following variables: degree of political engagement, party identification, level of educational attainment, union member status, and whether the respondent was strictly self-employed. All predictor variables were observed variables, save for the political engagement score, which was also constructed using factor analysis on a series of dummy variables. (Details on how these factor scores were constructed are in appendix A.)

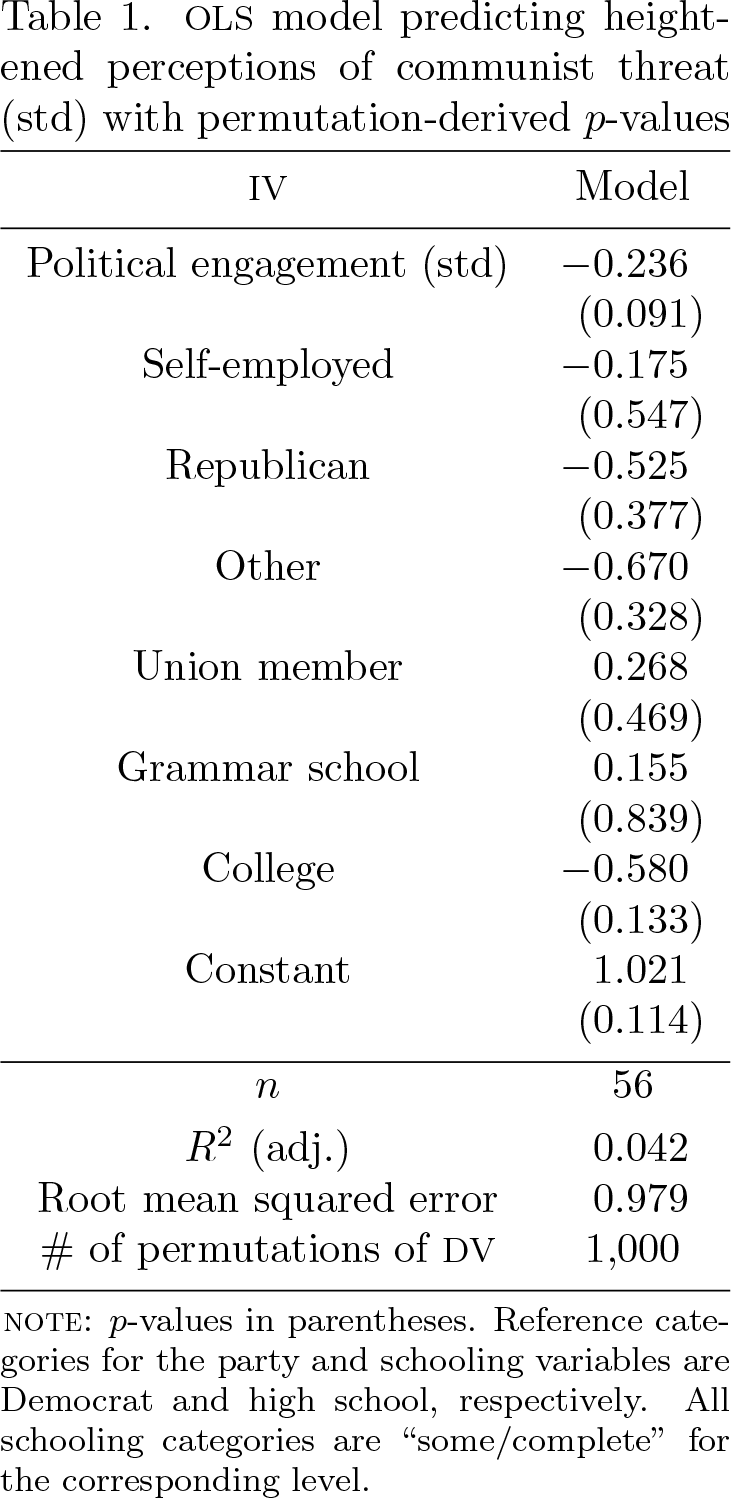

The results from this OLS model could be arranged within a table, as shown in table 1. This table is similar to a traditional regression table, with the only consequential difference being that the coefficient p-values rather than SEs are reported in the parentheses.

Table 1. OLS model predicting heightened perceptions of communist threat (std) with permutation-derived p-values

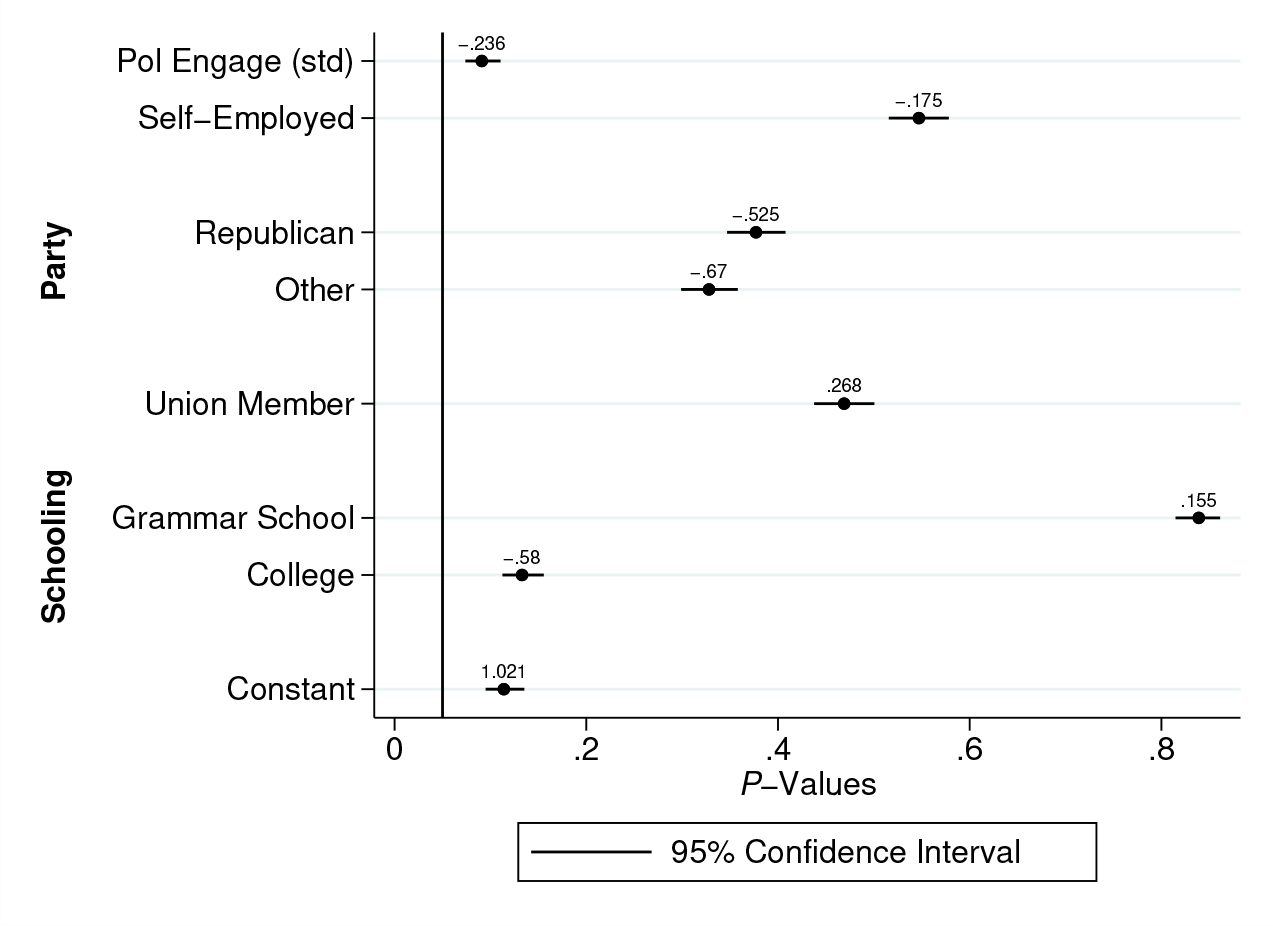

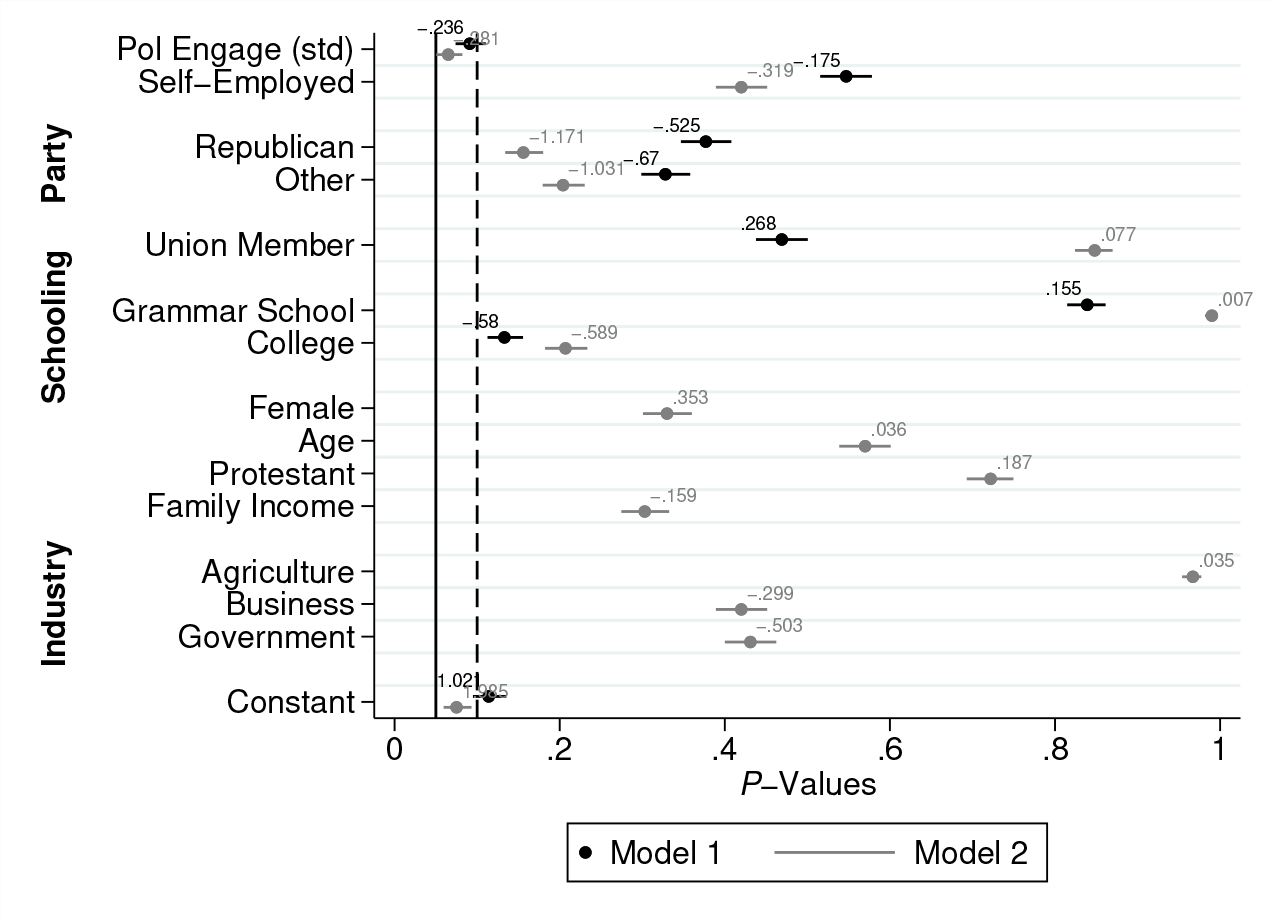

Alternatively, the model could be represented graphically as a simple PPV plot, as illustrated in figure 2. Like figure 1, the y axis lists the predictor variables. Unlike figure 1, the x axis can vary within the [0, 1] interval and is used to plot the coefficient p-values and their associated CIs. The markers are the p-values, and the numbers above them are the coefficients themselves. The vertical line represents α = 0.05, meaning that any coefficient with a p-value to the left of the line is statistically significant at least at the 0.05 level. For instance, consider the self-employment coefficient,

Figure 2. PPV plot of OLS estimated effects on perception of communist threat.
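The layout of a coefficient PPV plot can be mimicked with a rough text rendering: one row per predictor, a [0, 1] horizontal axis, a marker at the p-value, a span for its CI, and a reference line at alpha. The sketch below is only meant to make that geometry concrete. The function name, the column resolution, and every number in `rows` are hypothetical and are not results from the article.

```python
def ppv_text_plot(rows, alpha=0.05, width=61, label_width=22):
    """Render a rough text PPV plot: one row per predictor on a [0, 1] axis.

    rows: iterable of (label, coefficient, p, ci_lower, ci_upper)
    '-' spans the p-value CI, 'o' marks the p-value, '|' marks alpha.
    """
    def col(value):
        # Map a value in [0, 1] to a column index in [0, width - 1].
        return round(value * (width - 1))

    lines = []
    for label, coef, p, lower, upper in rows:
        cells = [" "] * width
        for i in range(col(lower), col(upper) + 1):
            cells[i] = "-"
        cells[col(alpha)] = "|"
        cells[col(p)] = "o"  # the p-value marker wins if it shares a column
        lines.append(f"{label:<{label_width}}{''.join(cells)}  b = {coef:+.3f}")
    return "\n".join(lines)


# Hypothetical estimates for illustration only.
rows = [
    ("Political engagement", -0.210, 0.030, 0.020, 0.043),
    ("Union member", 0.150, 0.480, 0.449, 0.511),
    ("Self-employed", 0.320, 0.045, 0.033, 0.060),
]
print(ppv_text_plot(rows))
```

With only 61 columns the resolution near small alpha levels is coarse, so a real plot would use a graphics library or a rescaled axis. The point is that the coefficient is carried as a label while the p-value and its CI determine the marker's position.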

Conceptually, a PPV plot such as figure 2 is constructed in the following simple steps. First, the regression model is fit. Assume, for instance, the following linear-additive estimation model,

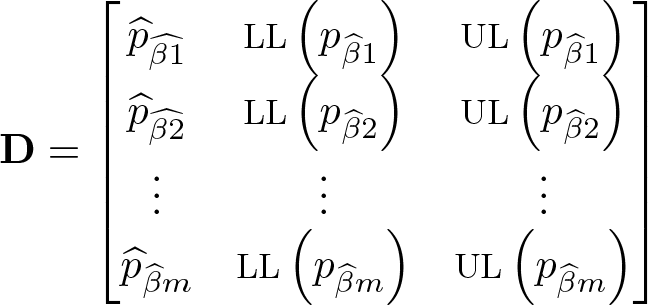

Second, the data (in the examples in this article, the DV) are randomly permuted k number of times, with the p-value for each

where

Lastly, any coefficient plot function can be used to plot

The fact that

Figure 3. Nested PPV plot of OLS estimated effects on perception of communist threat.

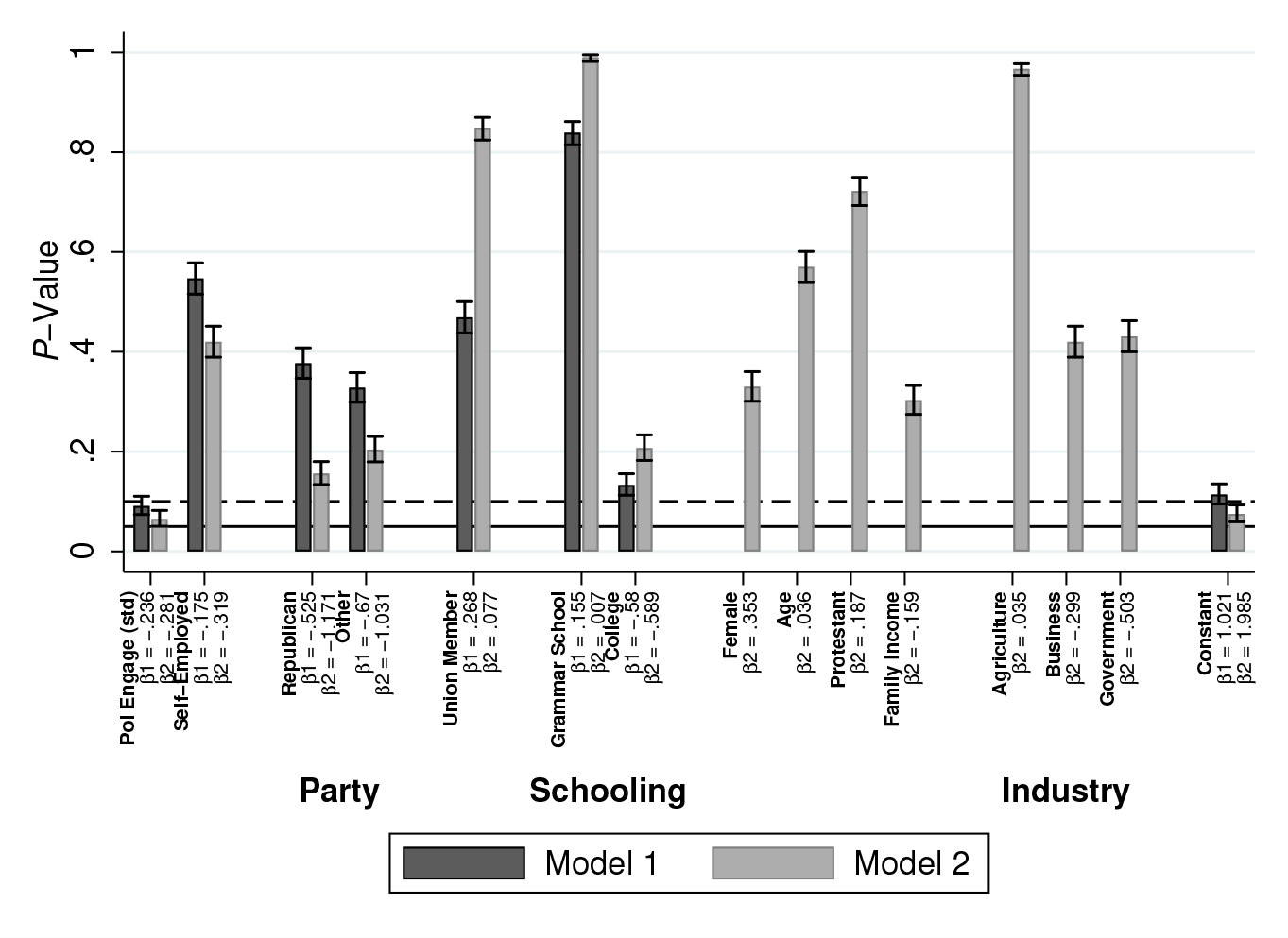

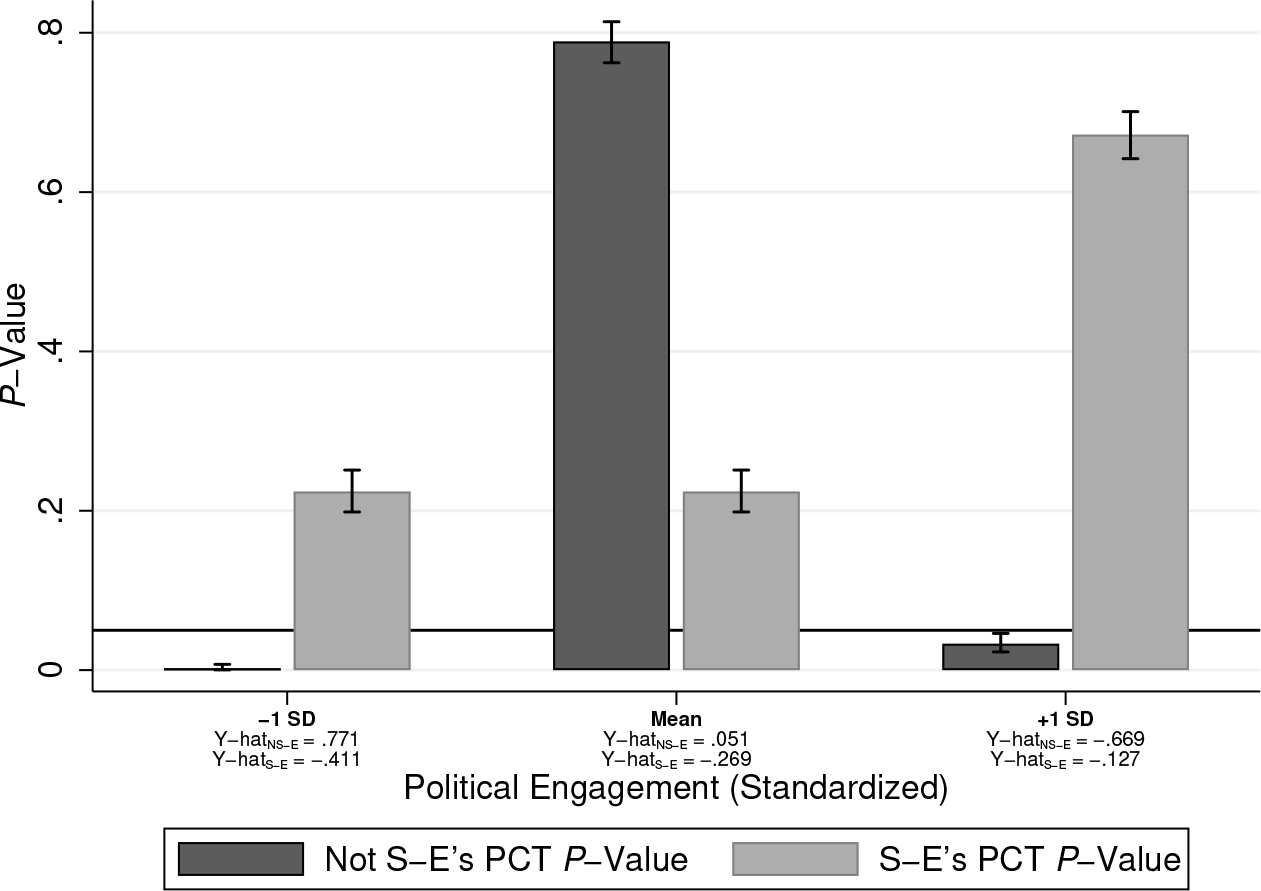

Similarly, the analyst can reconfigure the matrix of p-values and CIs into other plot types. For example, figure 3 can be displayed as a bar chart (figure 4), where a p-value below 0.05 (now a horizontal solid line) indicates statistical significance with at least α = 0.05. In this plot, spikes above the 0.05 reference line indicate an observed coefficient

Figure 4. PPV plot reconfigured as a bar chart.

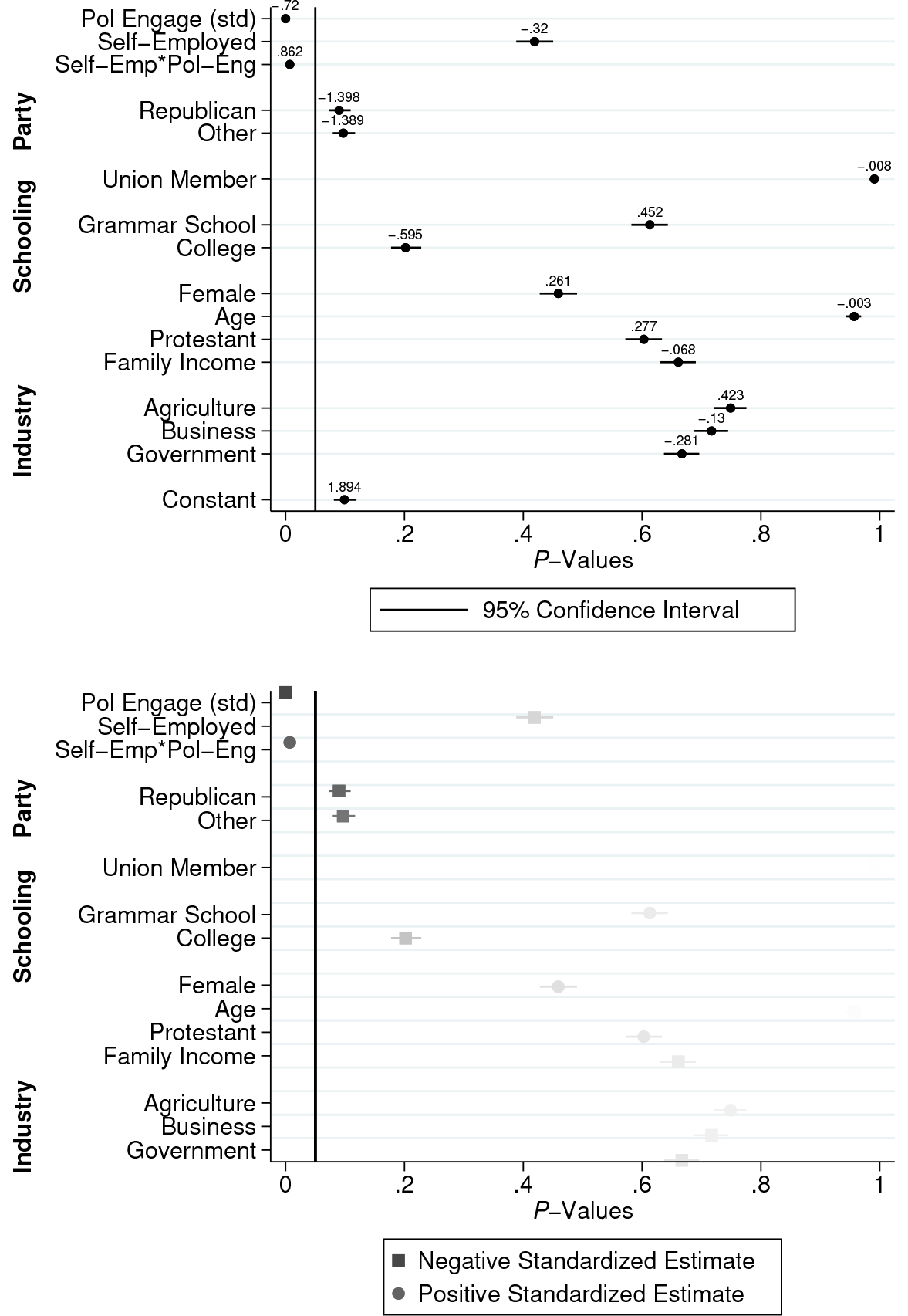

The substantive significance of the estimates can also be incorporated into PPV plots using standard graphical options. For example, assume an analyst is interested in the gap in perceptions of communist threat between those who were strictly self-employed and those who were not. They include an interaction between the political engagement scale and the dichotomous self-employment variable. They use 1,000 permutation tests and construct the PPV plot in the top panel of figure 5, which shows a statistically significant interaction (

Figure 5. Multiplicative model with and without effect-size visualization.

3.3 PPV plots and predicted values

p-values for postestimation predicted values may be of little use under the population model of inference when zero is not a meaningful value in the units of Y. This is because the prediction p-values are typically derived from one-sample t- or z-tests of the null hypothesis that the “true” value for the hypothetical case in question is zero. The (two-tailed) prediction p-value, then, is just the probability of generating a predicted value of at least that absolute size if the true value for a case with that profile of IV characteristics were zero, again assuming that the sample used to derive that prediction is a random draw from the target population. This means that for DVs without a meaningful zero, most empirically plausible predictions with reasonable SEs will generate large test statistics and therefore small p-values. Further, because a statistically nonsignificant predicted value under the population model simply indicates a probability of α or greater of observing a predicted value of at least that absolute size when the “true” value is 0, a predicted value for a DV with a meaningful zero (such as a standardized variable) could easily have p > 0.05 but still indicate a “nonzero” prediction.
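The population-model logic for prediction p-values can be illustrated with a short z-test sketch. The function name and the example predictions and SEs are hypothetical. The block only shows how a test against zero behaves for a DV without a meaningful zero compared with a standardized DV.

```python
from statistics import NormalDist


def prediction_p_value(prediction, se):
    """Two-tailed z-test p-value for H0: the true predicted value is zero."""
    z = prediction / se
    return 2 * (1 - NormalDist().cdf(abs(z)))


# Hypothetical values: a DV without a meaningful zero (blood pressure in mmHg)
# versus a standardized DV, for which zero is the sample mean.
print(prediction_p_value(125.0, 2.0))  # very large z statistic
print(prediction_p_value(0.15, 0.20))  # small z statistic
```

In the first case the test statistic is so large that the p-value is effectively zero, which says little beyond the fact that blood pressure is not zero. In the second case the p-value is large even though the prediction itself is not zero.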

With that said, it might be important for scholars basing statistical significance on permutation-based p-values to report the p-values for predictions. This is because permutation-based p-values are based on the differences between the observed prediction and the predictions from the permuted models and not on the difference of the prediction from zero. Thus, a two-tailed permutation-based p-value for a single observed prediction following a regression model indicates the proportion of predictions from the regressions on the permuted samples that are greater than or equal in absolute size to that observed prediction. This p-value, then, provides an estimate of the extent to which the observed prediction deviates from the predictions generated from models where the association between the DV and the IVs is random by design.

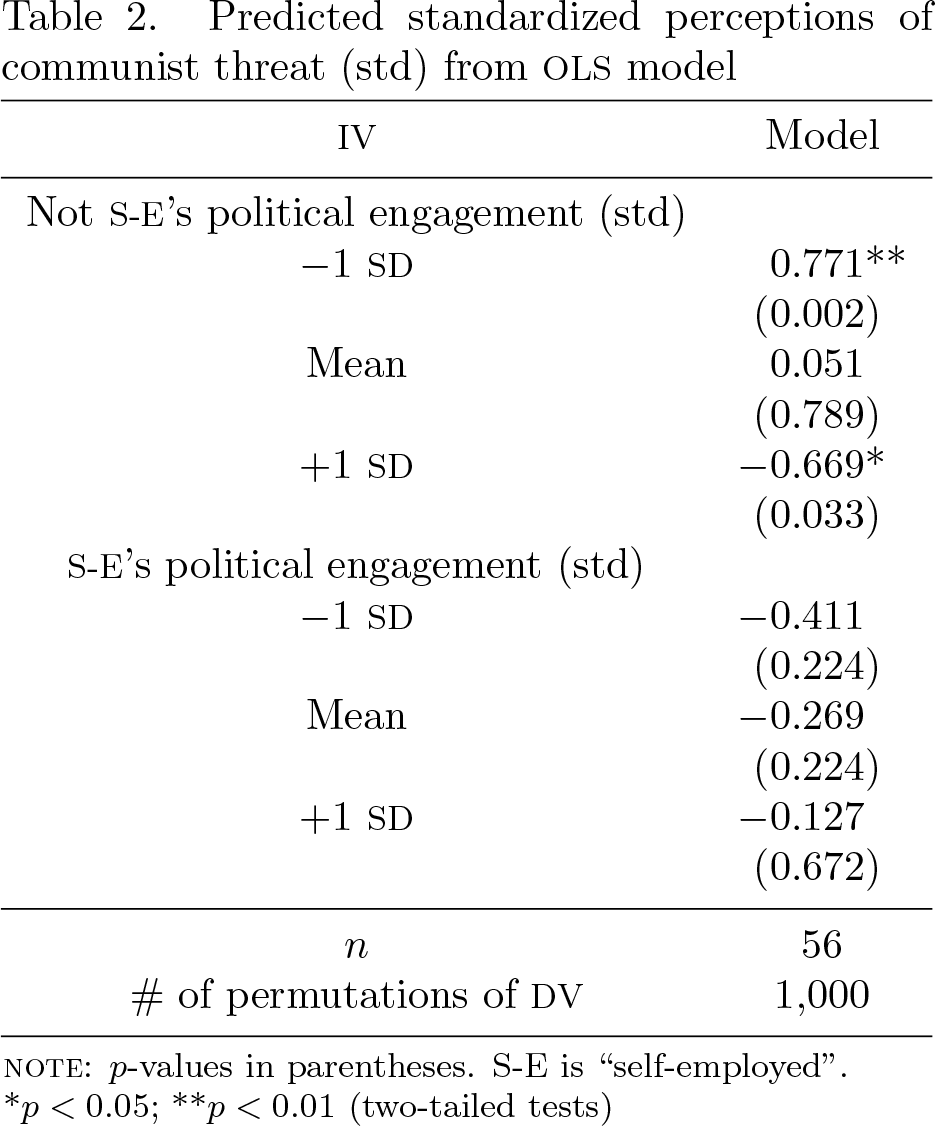

PPV plots can also be used to visualize these permutation-based prediction p-values. One can accomplish this by first writing a program that adds the calculation of predicted values as an extra step to each of the 1,000 permutation tests. Consider again, for instance, the multiplicative model used to construct the PPV plots in figure 5, which showed a statistically significant interaction between an attendee’s level of political engagement and their self-employment status when it comes to heightened perceptions of communist threat. The analyst can now add one additional step to his or her permutation procedure for obtaining permutation-derived p-values for postestimation predictions: after the analyst randomly permutes the DV k times and fits the regression model, a series of predictions can be generated for each permutation and compared with the predictions from the observed regression model. This analysis could result in something akin to table 2, which presents predicted perceptions of communist threat (on a standardized scale) for both the self-employed and those who are not, within a standard deviation (SD) of the sample mean political engagement scale.
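The extra prediction step described above can be sketched as follows. This is a simplified illustration rather than the article's program: it assumes a bivariate OLS model instead of the multiplicative model, permutes the DV, refits the model, and compares each permuted prediction with the observed prediction in absolute size. The function names, seed, profile values, and toy data are hypothetical.

```python
import random


def fit_line(x, y):
    """Return (intercept, slope) from an OLS regression of y on x."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return mean_y - slope * mean_x, slope


def prediction_p_values(x, y, profiles, k=1000, seed=2020):
    """Two-tailed permutation p-values for predictions at each profile value.

    Returns a list of (profile, observed prediction, p-value).
    """
    rng = random.Random(seed)
    a, b = fit_line(x, y)
    observed = [a + b * x0 for x0 in profiles]
    extreme = [0] * len(profiles)
    y_perm = list(y)
    for _ in range(k):
        rng.shuffle(y_perm)                # permute the DV
        a_p, b_p = fit_line(x, y_perm)     # refit on the permuted sample
        for j, x0 in enumerate(profiles):  # extra step: predict and compare
            if abs(a_p + b_p * x0) >= abs(observed[j]):
                extreme[j] += 1
    return [(x0, obs, c / k) for x0, obs, c in zip(profiles, observed, extreme)]


# Hypothetical toy data, not the article's data.
x = list(range(-5, 6))
y = [6, 5, 5, 3, 2, 1, -1, -2, -4, -5, -6]
for x0, pred, p in prediction_p_values(x, y, profiles=[-5, 0, 5]):
    print(f"x = {x0:+d}: prediction = {pred:+.3f}, p = {p:.3f}")
```

Note that in this bivariate sketch every permuted model predicts the same value at the mean of x, because an OLS line always passes through the point of means. A prediction at the mean therefore cannot deviate from its permuted counterparts, whereas predictions away from the mean can.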

Table 2. Predicted standardized perceptions of communist threat (std) from OLS model

*p < 0.05; **p < 0.01 (two-tailed tests)

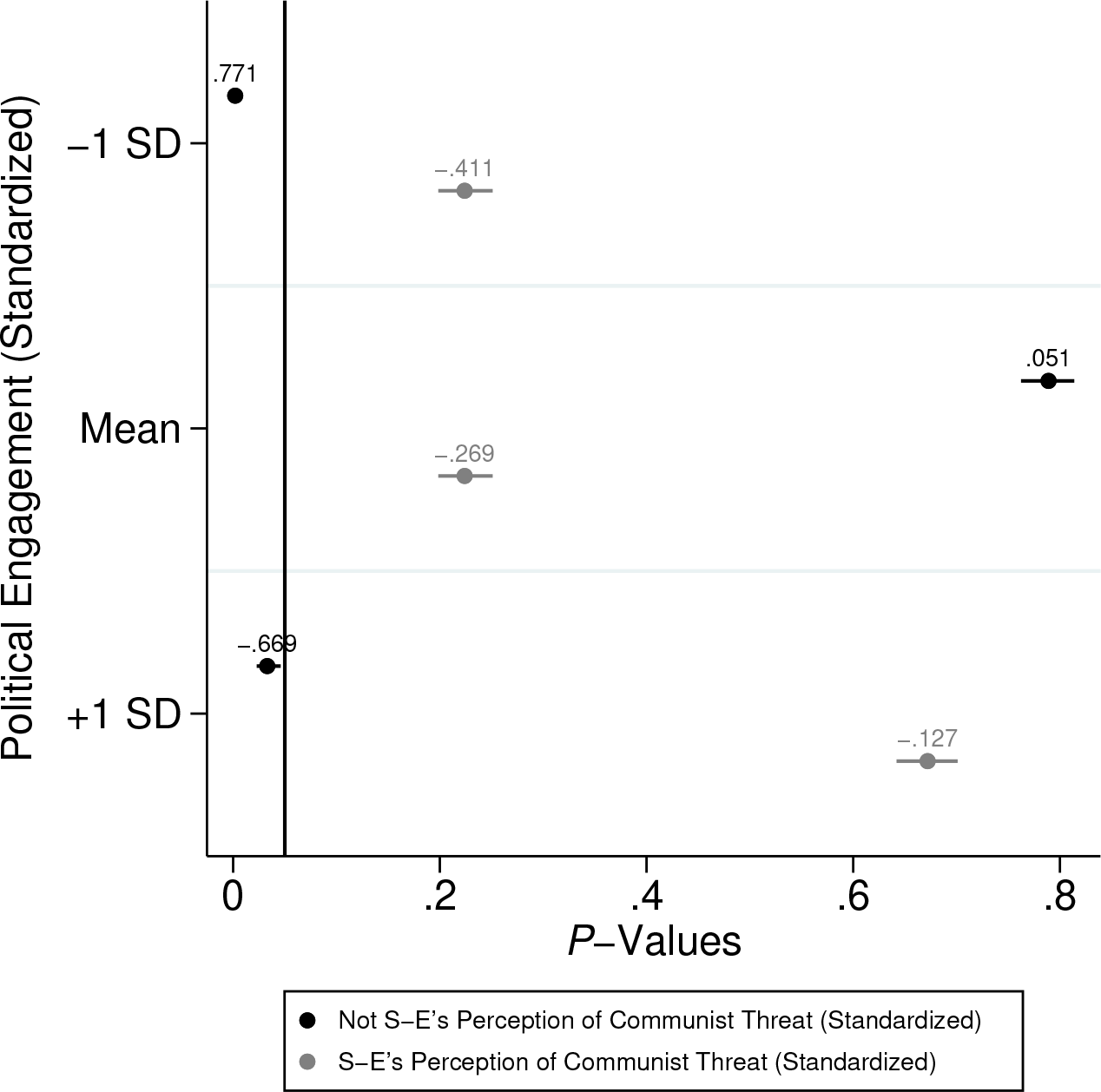

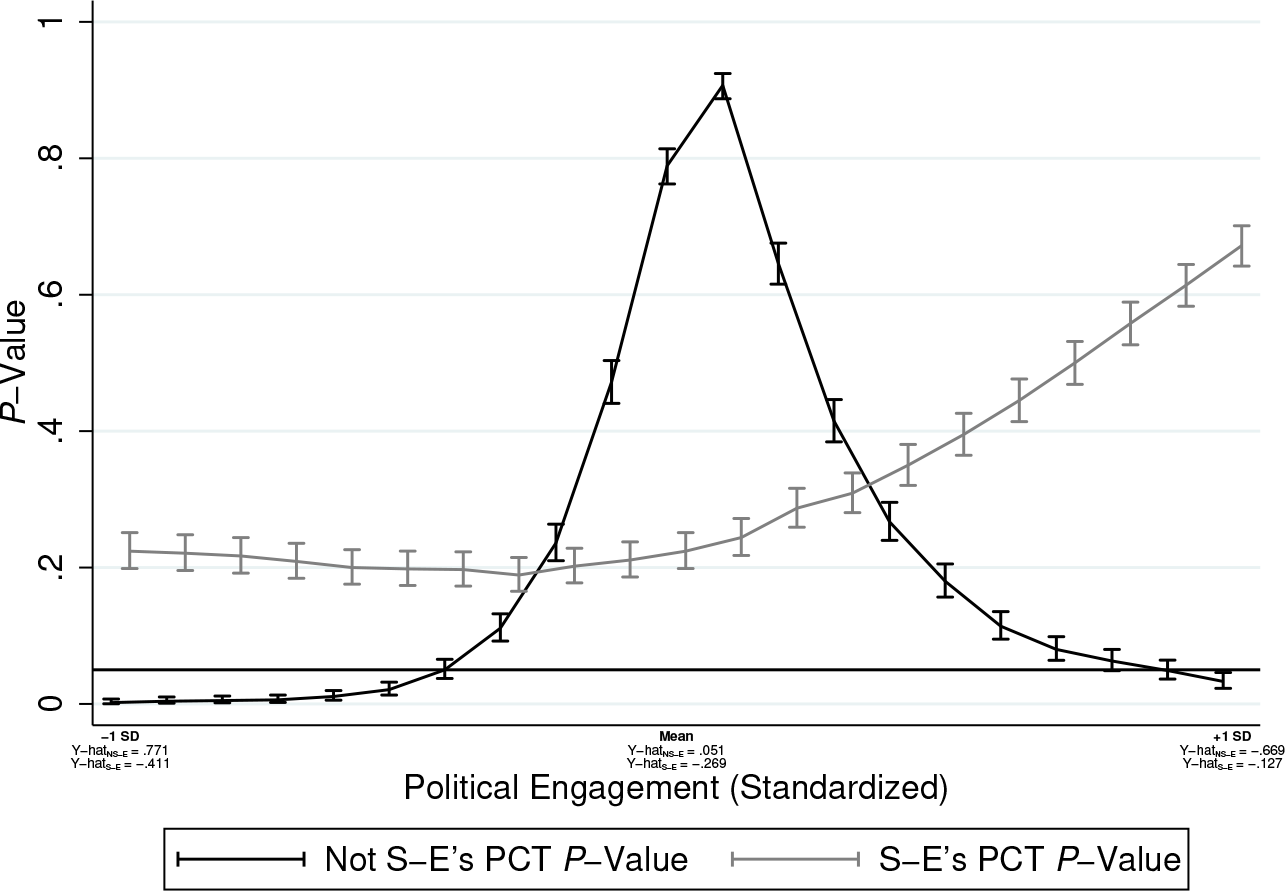

Alternatively, these predictions could be displayed as a PPV plot that allows for a visual comparison of the relative statistical significance levels, as shown in figure 6. These predicted-value PPV plots can also be easily reconfigured into other plot types—for example, as a bar chart (figure 7) similar to the coefficient PPV bar chart in figure 4. Or, alternatively, the prediction p-values can be displayed as a line graph for continuous variables. Figure 8 provides an example, with separate political engagement p-value “slopes” for hypothetical respondents of each employment category.

Figure 6. PPV plot of predicted standardized perceptions of communist threat by self-employment and level of political engagement

Figure 7. Predicted value PPV plot reconfigured as a bar chart

Figure 8. Predicted value PPV plot reconfigured as a line graph

Figures 6, 7, and 8 indicate that higher levels of political engagement are negatively associated with higher perceptions of communist threat for those who are not strictly self-employed (according to the switch in signs for marker labels in figure 6 and the x-axis labels in figures 7 and 8 for those that are not self-employed), while the estimate remains negative for the self-employed but loses some of its effect size (as indicated by the smaller negative effect sizes for those that are self-employed). However, these figures suggest that this interaction may be asymmetric. Among these 56 school attendees, only those who are not strictly self-employed with lower than average and higher than average levels of political engagement have predicted levels of perceptions of communist threat that deviate from what would be expected under the condition of a random data-generating process. Non–self-employed (NSE) respondents with lower than average levels of political engagement exhibit slightly above average perceptions of threat (

The NSE with average levels of political engagement and the self-employed at any level of political engagement have predicted threat perceptions that never deviate from what we would expect if the predictions were computed from a model of random associations. If this hypothetical analyst were hoping to draw substantive conclusions from these results—and assuming a properly specified regression model (which these models, because they are for illustrative purposes, may not be)—he or she may conclude something to the following effect: Among these 56 school attendees, those who were not strictly self-employed but who were less politically engaged than the average participant were more likely to have higher than average perceptions of a communist threat—but, when they were more politically engaged than the average participant, they tended to have some of the weakest perceptions of communist threat.

The asymmetry of this interaction would likely have been more difficult to pick up without adding prediction calculations to the permutation tests, and PPV plots certainly make this task of comparing effects across groups that much easier.

4 Summary and conclusions

Recent interest in data analytics and computational social science has sparked a flurry of activity in data visualization among social scientists (Healy 2019; Wickham and Grolemund 2017). One popular graph type for displaying regression models is the coefficient plot. While coefficient plots are useful for simultaneously visualizing both the statistical and substantive significance of the estimates, their applicability assumes that statistical significance is determined under the population model of inference—that is, that the coefficients have SEs and CIs, derived either with reference to theoretical sampling distributions or to some asymptotically based resampling technique such as the bootstrap or jackknife (Shao and Tu 1995). The PPV plot “toolkit” introduced in this article offers a way of leveraging the intuitiveness, parsimony, and aesthetic simplicity of the coefficient plot for regression models with statistical significance levels derived via the randomization model of inference, where SEs and CIs are reported for the coefficient p-values rather than the coefficients themselves and where a plot centered at zero is less useful.

In this article, I illustrated the utility of PPV plots for visualizing permutation-based regression estimates and postestimation predictions using the case of heightened perceptions of threat among 56 anticommunists at an anticommunism school. In both cases, PPV plots leverage the same benefits of coefficient plots in that they visualize the direction and size of the estimates while concomitantly incorporating estimate uncertainty into the graph. For coefficient plots, the uncertainty is captured with the CIs around the estimates: the wider the interval, the more uncertainty there is about where the true population parameter may be. For PPV plots, the uncertainty is captured with the CI around the p-value. Specifically, the wider the interval, the more uncertainty there is about where the true p-value would be had every possible permutation been accounted for. Accounting for this uncertainty is particularly important for boundary estimates: that is, when a p-value indicates a statistically significant estimate with a CI that spans across alpha levels or a nonsignificant estimate that spans to the left of the alpha level necessary to establish statistical significance. PPV plots can be particularly helpful in the case of a model with many predictors because they can simultaneously display the number of boundary estimates. Importantly, because the size of the p-value CIs is a function of the number of permutations performed, PPV plots in this case can also provide a general sense of how much closer the analyst should approximate an exact test (that is, account for every possible permutation) to shrink the CIs so that the number of boundary estimates is minimized.
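The idea of a boundary estimate, and of adding permutations until it resolves, can be sketched as follows. The classification rule follows the description above. The permutation-count rule is a rough planning heuristic that assumes a normal approximation to the binomial SE of the p-value and treats the current p-value as if it were the true one. All names and numbers are hypothetical.

```python
from math import floor
from statistics import NormalDist


def classify_estimate(lower, upper, alpha=0.05):
    """Classify a permutation p-value by where its CI falls relative to alpha."""
    if upper < alpha:
        return "significant"
    if lower > alpha:
        return "nonsignificant"
    return "boundary"


def permutations_needed(p, alpha=0.05, level=0.95):
    """Smallest k for which a normal-approximation CI around p excludes alpha."""
    if p == alpha:
        raise ValueError("p equals alpha; no finite k separates them")
    z = NormalDist().inv_cdf(1 - (1 - level) / 2)
    return floor(z**2 * p * (1 - p) / (p - alpha) ** 2) + 1


# Hypothetical p-value CIs from a model with three predictors.
intervals = {"x1": (0.020, 0.043), "x2": (0.033, 0.060), "x3": (0.449, 0.511)}
labels = {name: classify_estimate(lo, hi) for name, (lo, hi) in intervals.items()}
print(labels)
print(permutations_needed(0.04))
```

Counting the "boundary" labels gives the number of estimates whose significance is not yet settled at the current number of permutations. The closer a p-value sits to alpha, the more permutations are needed before its CI clears the reference line.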

There will undoubtedly be the question of how much traction visualization strategies for randomization inference will get in sociology, where this type of statistical inference is not the disciplinary norm. Indeed, if the population model is far and away the more common mode of inference, then what real leverage do PPV plots provide? This concern is itself symptomatic of a fundamental question about quantitative inferential reasoning: namely, is it really inference if there is no population to which one can infer things (compare Ludbrook and Dudley [1998, 128])? Such a perspective is likely a partial function of the fact that the inferential statistics curricula for most statistics courses in sociology are predicated on the logic that inference is synonymous with generalizing to a population. Ironically, though, it is the other type of inference that seems to predominate the actual practice of sociological research, because, as network analysts have observed for some time, “most social science aims at studying mechanisms rather than describing specific populations” (Snijders 2011, 135). Perhaps to no surprise, this implicit reliance on mechanistic reasoning seems particularly important in subfields where datasets constructed using rigorous probabilistic sampling procedures are by far the exception rather than the norm—for example, cultural sociology, social movement studies, and organizational sociology. Following this, I conclude this article with a more general call for quantitative sociologists to reflect critically on what sort of statistical inferences are appropriate given their sample design and, by extension, on how they choose to “see” this significance.


6 Acknowledgments

I thank the editorial team at the Stata Journal and an anonymous reviewer for their helpful feedback. I also thank Omar Lizardo and Dustin S. Stoltz for their thoughts on earlier versions of this article.