Abstract

Background:

Cancer patients increasingly use YouTube for nutritional guidance, yet information quality varies substantially. Existing text-based assessment tools fail to capture audiovisual content characteristics. This study aimed to (1) develop a video-specific assessment tool, (2) evaluate German-language YouTube videos on cancer nutrition, and (3) identify quality indicators for laypersons.

Methods:

A 20-criteria assessment tool integrating established instruments and video-specific elements was developed. The first 30 YouTube videos on cancer nutrition were systematically evaluated. Spearman correlation and Kruskal-Wallis tests identified associations between video characteristics and quality scores. Interrater reliability was assessed.

Results:

The intraclass correlation coefficient indicated good to excellent interrater reliability (95% CI: 0.87-0.96). Overall video quality was poor (mean: 38.6/60, SD: 5.3). Videos from hospitals (P = .002) and healthcare organizations (P = .006) scored significantly higher than those from independent persons. Videos with clearly formulated goals (rs = 0.71, P < .001) and cited references (rs = 0.43, P = .019) demonstrated stronger evidence-based content. High-quality videos more frequently addressed missing evidence (rs = 0.51, P = .004). Quality scores inversely correlated with likes (rs = −0.55, P = .002) and views (rs = −0.46, P = .01).

Conclusion:

YouTube videos on cancer nutrition exhibit substantial quality deficits, even from institutional providers. The validated assessment tool identifies observable quality indicators including clear objectives, scientific citations, transparent discussion of evidence gaps, and institutional authorship. However, no single feature reliably predicts quality. Strengthening digital health literacy and improving evidence-based content production and visibility remain essential priorities.

Introduction

According to the World Health Organization (WHO), ~10 million people worldwide died of cancer in 2022, and an estimated 20 million new cancer cases were recorded globally that same year. 1 Projections indicate that by 2050, the number of new cancer cases will rise to 30 million globally. These figures underscore the critical need to expand targeted prevention measures. 2 According to Rock et al, a healthy diet represents an important preventive measure for reducing cancer risk. For breast and colorectal cancer specifically, moderate evidence suggests that risk can be reduced through healthy dietary patterns and avoidance of processed meats. Evidence also indicates that healthy nutrition may reduce risk for other cancer types, such as liver, lung, pancreatic, prostate, and gastric cancers. 3

Nutrition plays a crucial role not only in cancer prevention but also for individuals already diagnosed with cancer. Malnutrition is a frequent problem in this population and negatively affects quality of life, treatment outcomes, and ultimately patient survival.4,5 Between 10% and 20% of cancer patients die not from their tumor itself, but from malnutrition-related complications. Globally, cancer-specific malnutrition continues to be frequently overlooked and inadequately treated in clinical practice.4,6

Despite this clinical gap, nutrition remains a topic of high importance and motivation for cancer patients, who actively seek information with the hope of improving their treatment outcomes and quality of life. 6 Due to insufficient attention to nutrition counseling in clinical settings, 6 an increasing number of cancer patients turn to social media for online research. 7

YouTube is currently the second most visited website in the world, following the search engine Google. 8 A systematic review of health information on YouTube revealed that the platform hosts a vast amount of health-related content. 9 Unfortunately, much of this information is inaccurate, misleading, low in quality, or lacking in validity.9-11 The quality of cancer-related videos has been similarly characterized in the literature as questionable to poor.12-14 In a study by Loeb et al, 67% of bladder cancer videos demonstrated moderate to poor quality. 15 The likelihood that health consumers will encounter such information during their research is high. 9 Concurrently, the majority of cancer patients report feeling uncertain about their ability to assess the quality of information they encounter online. 7 Especially in the context of cancer, false or misleading information about diet can even worsen the prognosis of cancer patients.5,16 Nevertheless, evidence-based videos are available on YouTube and hold significant potential to assist the public in making informed health decisions. The challenge lies in ensuring that they can be easily identified. 10 Assessing the quality of Internet-based information is challenging for both laypersons and medical professionals. 17

While several tools exist to facilitate systematic assessment, none have been specifically designed for evaluating YouTube videos or for use by laypersons. The literature includes numerous YouTube analyses covering a wide range of topics. Typically, the same tools are used to evaluate these videos, such as DISCERN, the Global Quality Scale (GQS), the Journal of the American Medical Association Score (JAMAS), and the Patient Education Materials Assessment Tool (PEMAT).18-25

These tools are not entirely transferable to video content. Even tools specifically created for video evaluation are incomplete and fail to address all the criteria necessary for assessing videos with medical content. The same tools are often employed in varying configurations, sometimes supplemented with disease-specific assessment instruments. 26 The heterogeneity of these assessment tools has already been noted in systematic reviews.27,28 Given the continuous increase in YouTube video analyses, 28 standardization of evaluation methods is urgently needed. Standardized tools would improve the comparability of data, enabling more concrete and reliable conclusions to be drawn.

Given the identified gaps in video-specific quality assessment and the lack of guidance for laypersons, this study pursued 3 interconnected objectives:

Tool Development: To develop and validate a comprehensive, video-specific assessment tool that addresses the limitations of existing text-based instruments (eg, DISCERN, JAMAS) by incorporating criteria specific to audiovisual medical content.

Quality Assessment: To systematically evaluate the quality of the first 30 German-language YouTube videos addressing nutrition for cancer patients, quantifying the prevalence of evidence-based information versus potentially misleading content.

Guidance for Laypersons: To identify observable video characteristics that correlate with high-quality content, enabling laypersons without medical expertise to make informed judgments about video reliability.

By achieving these objectives, this study contributes both a methodological advancement (validated video assessment tool) and practical insights (quality landscape of German cancer nutrition videos and actionable guidance for patients) to address the growing challenge of health misinformation on social media platforms.

Methods

Study Design

The research was conducted by J.H., S.H., and A.G. Literature review and development of the assessment tool started in November 2021. A systematic search of the videos was conducted on YouTube (www.youtube.com) on February 22, 2022. The German translation of the search term “nutrition in cancer” was selected to simulate a layperson’s search. The default “relevance” setting on YouTube was used, as it is the most commonly selected option. To avoid bias, a public computer with no browsing history or cookies was employed. Given that users typically do not look beyond the first page of search results, 29 the first 30 videos were screened, corresponding approximately to the content of 1 page. Videos were included if they met the following criteria: German language, content specifically addressing nutrition for cancer patients (any cancer type), and original, non-duplicate material (ie, no re-uploads and no multiple videos with identical information from the same source). Exclusion criteria included videos in other languages, content unrelated to cancer nutrition, repetitive videos from the same channel covering identical topics, and commercial advertisements or sponsored promotional content.

Development of the Assessment Tool

Our tool was based on the assessment tool for websites by Liebl et al. 30 This is composed of the Initiative Health on the Net Foundation (Stiftung Initiative Gesundheit im Netz; HONCode), 31 DISCERN, 32 transparency criteria of the Action forum on health information system (Aktionsforum Gesundheitsinformationssystem; afgis), 33 guidelines of the German Agency for Quality in Medicine (Gesellschaft für Qualität in der Medizin; ÄZQ), 34 publications of Steckelberg et al on “criteria for evidence-based patient information” 35 provided by the German Network for Evidence-based Medicine (Deutsches Netzwerk für evidenzbasierte Medizin; EbM Network) and Maddock et al, who assessed the needs of cancer patients seeking health information via the Internet. 36

DISCERN is primarily a tool for assessing the quality of patient information about treatment alternatives. It lacks consideration of illustrations, layout, and evidence. Additionally, since DISCERN was designed for written information, the evaluation does not consider audio quality. 32

The GQS was developed for the evaluation of videos. It assesses the overall quality, usefulness to the patient, relevance of the subject, and flow of the information presented. The evaluation criteria are very imprecisely defined. For example, there is no detailed description of when exactly a video is of good or bad quality. 19

The JAMAS instrument assesses the following 4 items: authorship, attribution, disclosure, and currency. Content criteria such as evidence, layout, comprehensibility, and audio quality are not considered. 37 With the help of PEMAT, it is possible to assess the understandability and actionability of written or audiovisual materials. Because it covers only these 2 criteria, this tool alone cannot adequately assess the quality of a video.

The tool by Liebl et al 30 was revised to make it usable for videos that deal with medical content. The criteria of audio quality and visual quality, comprehensibility, and actionability were adopted from PEMAT.21,22 Audio quality was assessed by examining whether distracting background noise was avoided, the volume and speech rate were appropriate, and the speaker was easy to understand. Visual quality covered adequate lighting, image sharpness, appropriate camera movement, and the readability of any text shown in the video. Comprehensibility referred to both structure and language. Structural comprehensibility evaluated whether the sequence of information supported understanding and whether visuals (eg, diagrams or illustrations) effectively complemented the spoken content. Linguistic comprehensibility assessed the use of clear, concise sentences and medical terminology only when necessary for understanding or patient preparation.

Feasibility (actionability) focused on whether the video clearly identified at least 1 actionable step for users, addressed them directly when describing activities, broke down tasks into manageable steps, and used language that supported user participation and communication with clinicians.

From the assessment tool JAMAS, the criterion authorship was adopted because the other criteria already coincided with the assessment tool for websites by Liebl et al. 30 In addition, a modified form of the Title-Content Consistency Index (TCCI) was added, which checks the degree of conformity of the title with the content of a video or an article. 20 The complete assessment tool can be found in Supplementary File 1.

Several existing tools—including the Video Information and Quality Index (VIQI), 38 usefulness score, 19 Medical Information and Context Index (MICI), 38 and accuracy score 39 —were reviewed but not adopted, as they either lacked transferability to video format evaluation, focused on highly specific content areas, or overlapped with criteria already included in our tool.

The assessment tool consists of 3 parts—content-related, user-oriented, and formal criteria—to each of which the criteria were assigned. In total, the assessment tool consists of 20 criteria. Table 1 provides an overview of the criteria included in the assessment tool, along with the respective sources from which each criterion is derived.

Table 1. Summary of Criteria With Their Respective Sources of Origin.

Sources: Liebl et al, DISCERN, Steckelberg et al, PEMAT, ÄZQ, HONCode, afgis, JAMAS.

Each criterion was scored on a 3-point Likert scale: 3 = criterion is fully and comprehensively met; 2 = criterion is partially met (certain aspects are present but lack precision or could be improved); 1 = criterion is inadequately met or not met at all. With 20 criteria, each video could therefore receive a minimum of 20 and a maximum of 60 points, and a higher score corresponds to a better result. We divided the scores into 4 groups: very poor (20 to 29.9 points), poor (30 to 39.9 points), good (40 to 49.9 points), and very good (50 to 60 points).
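The scoring rules above can be illustrated with a short Python sketch. It is not part of the published tool; the function names are hypothetical, and the helper that averages the totals of several raters is an assumption, since the text at this point does not state how individual ratings were combined into one overall result.

```python
from statistics import mean

N_CRITERIA = 20  # number of criteria in the assessment tool, as stated in the text


def rater_total(item_scores):
    """Sum one rater's item scores; each item must be 1, 2, or 3."""
    if len(item_scores) != N_CRITERIA:
        raise ValueError("expected exactly 20 item scores")
    if any(score not in (1, 2, 3) for score in item_scores):
        raise ValueError("each item score must be 1, 2, or 3")
    return sum(item_scores)


def overall_result(rater_totals):
    """Average several raters' totals, rounded to 1 decimal place.

    Assumption: the overall result is the mean of the rater totals.
    """
    return round(mean(rater_totals), 1)


def quality_category(points):
    """Map a score between 20 and 60 to the 4 quality groups from the text."""
    if not 20 <= points <= 60:
        raise ValueError("score must lie between 20 and 60")
    if points < 30:
        return "very poor"
    if points < 40:
        return "poor"
    if points < 50:
        return "good"
    return "very good"
```

For example, `quality_category(38.6)` falls into the "poor" group, which matches the classification the paper reports for the mean overall result.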

Before conducting the formal video analysis, we pilot-tested the assessment tool to evaluate its functionality, usability, and applicability across different user groups. A diverse panel of 11 assessors was recruited for this pilot phase, including 3 oncologists with experience in nutrition care, 2 medical students, 1 psycho-oncologist, 1 patient representative, and 4 laypersons. This heterogeneous composition was specifically designed to determine whether the tool could be reliably applied by both medical professionals and laypersons. During pilot testing, assessors independently evaluated a subset of videos using the tool and provided feedback on criterion clarity, scoring procedures, and practical challenges encountered. This process revealed that while medical professionals could consistently apply all criteria, laypersons encountered substantial difficulties, particularly with criteria requiring evaluation of evidence-based content, interpretation of scientific references, and assessment of nutritional recommendations’ clinical validity. Laypersons reported that some criteria required specialized medical knowledge they did not possess, leading to low confidence in their ratings and significant inconsistencies compared to medical professionals’ assessments.

Based on these pilot findings, we concluded that comprehensive application of our assessment tool requires medical expertise. While layperson input was valuable for refining tool clarity and presentation aspects, accurate evaluation—particularly of evidence-based information quality—necessitates medical background knowledge. Consequently, we determined that only medically trained personnel should conduct the formal video assessments.

The development of the assessment tool was realized in cooperation with Huchel et al. 40

To our knowledge, the development of our assessment tool is the first scientific approach that combines several validated instruments into a comprehensive tool specifically for the assessment of videos.

Assessment of the Videos

Following pilot testing, the formal quality assessment of all 30 videos was conducted by 4 independent raters with medical backgrounds: 2 oncologists (J.H., C.K.) and 2 medical students (A.G., S.H.). These 4 raters were selected from the original pilot panel based on their medical expertise and familiarity with the assessment tool gained during pilot testing. Each video was independently rated once by all 4 raters.

For analysis, the following video characteristics were noted:

Duration of the video

Provider (3 categories): hospitals, healthcare organizations, and independent persons

Time since the upload

Numbers of views, comments, and likes

Providers were categorized as follows: Registered nonprofit organizations dealing with health issues were included in the group of healthcare organizations. Video channels dealing with medical topics, but not based on a scientific foundation, were not included. This refers to channels that are not affiliated with any recognized organization and focus on topics such as alternative medicine, conspiracy theories, or the promotion of medical products and advice from unqualified, nonmedical personnel. The group of independent persons included, for example, laypersons and individual professionals with an academic degree who did not operate within the framework of an official organization.

Raters were not blinded to video metadata (provider type, views, likes), as this information was integral to evaluating certain tool criteria (eg, transparency, source credibility). However, to minimize bias, several quality assurance measures were implemented: (1) standardized evaluation criteria using the assessment tool, (2) transparent documentation of all rating decisions, (3) independent assessment by 4 raters without consultation, (4) calculation of the intraclass correlation coefficient (ICC) to assess inter-rater reliability, and (5) no post-hoc modifications to evaluation criteria or results, even in cases of substantial inter-rater variation.

Statistical Analysis

Video quality was assessed using the assessment tool, with each criterion rated on a 3-point Likert scale, generating ordinal scores for individual items and overall video quality. For descriptive and statistical analysis of these data, we used IBM SPSS Statistics 28. The 95% confidence intervals of the intraclass correlation coefficient (ICC) and its estimates were also calculated on the basis of the mean-rating (k = 4), 2-way mixed-effects model and absolute agreement. 41 The following guidelines provided by Koo and Li were used to assess the degree of reliability:

>0.90: excellent reliability

0.75 to 0.9: good reliability

0.5 to 0.75: moderate reliability

<0.5: poor reliability 41
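As an illustration of the reliability analysis described above, the following Python sketch computes the point estimate of a 2-way, absolute-agreement, mean-rating ICC from a videos-by-raters table and maps it to the Koo and Li categories. The authors used IBM SPSS Statistics; this is only a hedged re-implementation of the standard mean-squares formula, it does not compute the confidence interval, and the function names and the handling of values exactly on a threshold are assumptions.

```python
def icc_absolute_agreement_mean(ratings):
    """Point estimate of the 2-way, absolute-agreement, mean-rating ICC.

    ratings: list of rows, one row per video and one column per rater.
    """
    n = len(ratings)      # number of rated videos
    k = len(ratings[0])   # number of raters
    if n < 2 or k < 2 or any(len(row) != k for row in ratings):
        raise ValueError("need a complete table with at least 2 rows and 2 columns")

    grand = sum(sum(row) for row in ratings) / (n * k)
    row_means = [sum(row) / k for row in ratings]
    col_means = [sum(row[j] for row in ratings) / n for j in range(k)]

    # Sums of squares of the 2-way ANOVA without replication
    ss_rows = k * sum((m - grand) ** 2 for m in row_means)
    ss_cols = n * sum((m - grand) ** 2 for m in col_means)
    ss_total = sum((x - grand) ** 2 for row in ratings for x in row)
    ss_error = ss_total - ss_rows - ss_cols

    ms_rows = ss_rows / (n - 1)
    ms_cols = ss_cols / (k - 1)
    ms_error = ss_error / ((n - 1) * (k - 1))

    denominator = ms_rows + (ms_cols - ms_error) / n
    if denominator == 0:
        raise ValueError("ICC is undefined for these ratings")
    return (ms_rows - ms_error) / denominator


def koo_li_label(icc):
    """Koo and Li categories; boundary handling at 0.5, 0.75, 0.9 is an assumption."""
    if icc > 0.90:
        return "excellent"
    if icc >= 0.75:
        return "good"
    if icc >= 0.5:
        return "moderate"
    return "poor"
```

Applied to the bounds of the reported 95% confidence interval, `koo_li_label(0.87)` gives "good" and `koo_li_label(0.96)` gives "excellent".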

To calculate correlations between 2 independent numeric variables, the Spearman test (rs) was used. Given the ordinal nature of our data, the assumption of normality was not appropriate. Moreover, a monotonic relationship was observed, which led us to employ the Spearman test for statistical analysis. A significance level of P < .05 (2-sided) was used.
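For readers unfamiliar with the Spearman coefficient, the sketch below shows one common way to compute rs: both variables are converted to ranks (tied values share their average rank), and the Pearson correlation of the ranks is taken. This is an illustrative plain-Python version with hypothetical function names, not the SPSS procedure used in the study, and it does not compute the P value.

```python
from math import sqrt


def average_ranks(values):
    """Rank values from 1 to n; tied values receive the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j to the end of the current run of tied values
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        shared_rank = (i + j) / 2 + 1
        for pos in range(i, j + 1):
            ranks[order[pos]] = shared_rank
        i = j + 1
    return ranks


def spearman_rs(x, y):
    """Spearman rank correlation: Pearson correlation of the average ranks."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("x and y must have the same length of at least 2")
    rx, ry = average_ranks(x), average_ranks(y)
    mean_x = sum(rx) / len(rx)
    mean_y = sum(ry) / len(ry)
    covariance = sum((a - mean_x) * (b - mean_y) for a, b in zip(rx, ry))
    spread_x = sqrt(sum((a - mean_x) ** 2 for a in rx))
    spread_y = sqrt(sum((b - mean_y) ** 2 for b in ry))
    if spread_x == 0 or spread_y == 0:
        raise ValueError("rs is undefined when one variable is constant")
    return covariance / (spread_x * spread_y)
```

Because only ranks enter the calculation, any strictly increasing relationship yields rs = 1 and any strictly decreasing one yields rs = −1, regardless of the raw scale of views, likes, or scores.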

For comparisons involving 3 or more groups, the Kruskal-Wallis test was applied, as the ordinal nature of the data precluded the use of parametric tests that assume a normal distribution. The significance level for the global test across all groups was set at P < .05. For pairwise comparisons, significance values were adjusted for multiple comparisons, and this adjusted significance (a.s.) was evaluated against the same threshold of .05.
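The group comparison can be sketched in Python as follows. The code computes the tie-corrected Kruskal-Wallis H statistic and, for the special case of exactly 3 groups (2 degrees of freedom), the chi-square approximation of the P value, which reduces to exp(−H/2). The Bonferroni helper is an assumption: the text states only that pairwise significance values were adjusted, not which adjustment was applied. All function names are hypothetical.

```python
from collections import Counter
from math import exp


def average_ranks(values):
    """Rank values from 1 to n; tied values receive the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        for pos in range(i, j + 1):
            ranks[order[pos]] = (i + j) / 2 + 1
        i = j + 1
    return ranks


def kruskal_wallis_h(groups):
    """Tie-corrected Kruskal-Wallis H statistic for a list of groups."""
    pooled = [value for group in groups for value in group]
    n_total = len(pooled)
    ranks = average_ranks(pooled)

    weighted_rank_sums = 0.0
    start = 0
    for group in groups:
        rank_sum = sum(ranks[start:start + len(group)])
        weighted_rank_sums += rank_sum ** 2 / len(group)
        start += len(group)
    h = 12 / (n_total * (n_total + 1)) * weighted_rank_sums - 3 * (n_total + 1)

    # Tie correction: divide by 1 - sum(t^3 - t) / (N^3 - N)
    tie_term = sum(t ** 3 - t for t in Counter(pooled).values())
    correction = 1 - tie_term / (n_total ** 3 - n_total)
    if correction == 0:
        raise ValueError("H is undefined when all values are identical")
    return h / correction


def p_value_three_groups(h):
    """Chi-square upper-tail probability with 2 degrees of freedom (3 groups only)."""
    return exp(-h / 2)


def bonferroni_adjust(p, n_comparisons):
    """Bonferroni-adjusted P value (assumed adjustment method), capped at 1."""
    return min(1.0, p * n_comparisons)
```

With 3 provider groups there are 3 pairwise comparisons, so under this assumed adjustment each unadjusted pairwise P value would be multiplied by 3 before being compared with .05.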

Ethics Statement

We confirm that all methods were carried out in accordance with relevant guidelines and regulations. As this is not a clinical trial, no vote by an ethics committee of the University Hospital Jena was required.

Results

Quality of Our Assessment Tool

Following Koo and Li’s guidelines, the intraclass correlation coefficient calculated for evaluating interrater reliability demonstrated good to excellent reliability, with a 95% confidence interval of 0.87 to 0.96. 41 The intraclass correlation coefficients for pairwise comparisons between the 4 independent raters are shown in Table 2.

Table 2. ICC Between Raters.

Abbreviation: ICC, intraclass correlation coefficient.

Oncologist.

Medical student.

To assess the validity of our assessment tool, Spearman’s correlation test was used. The analysis indicated that higher overall video results were strongly associated with better evidence-based information (rs = 0.87; P < .001) and more transparent communication regarding missing evidence (rs = 0.62; P < .001).

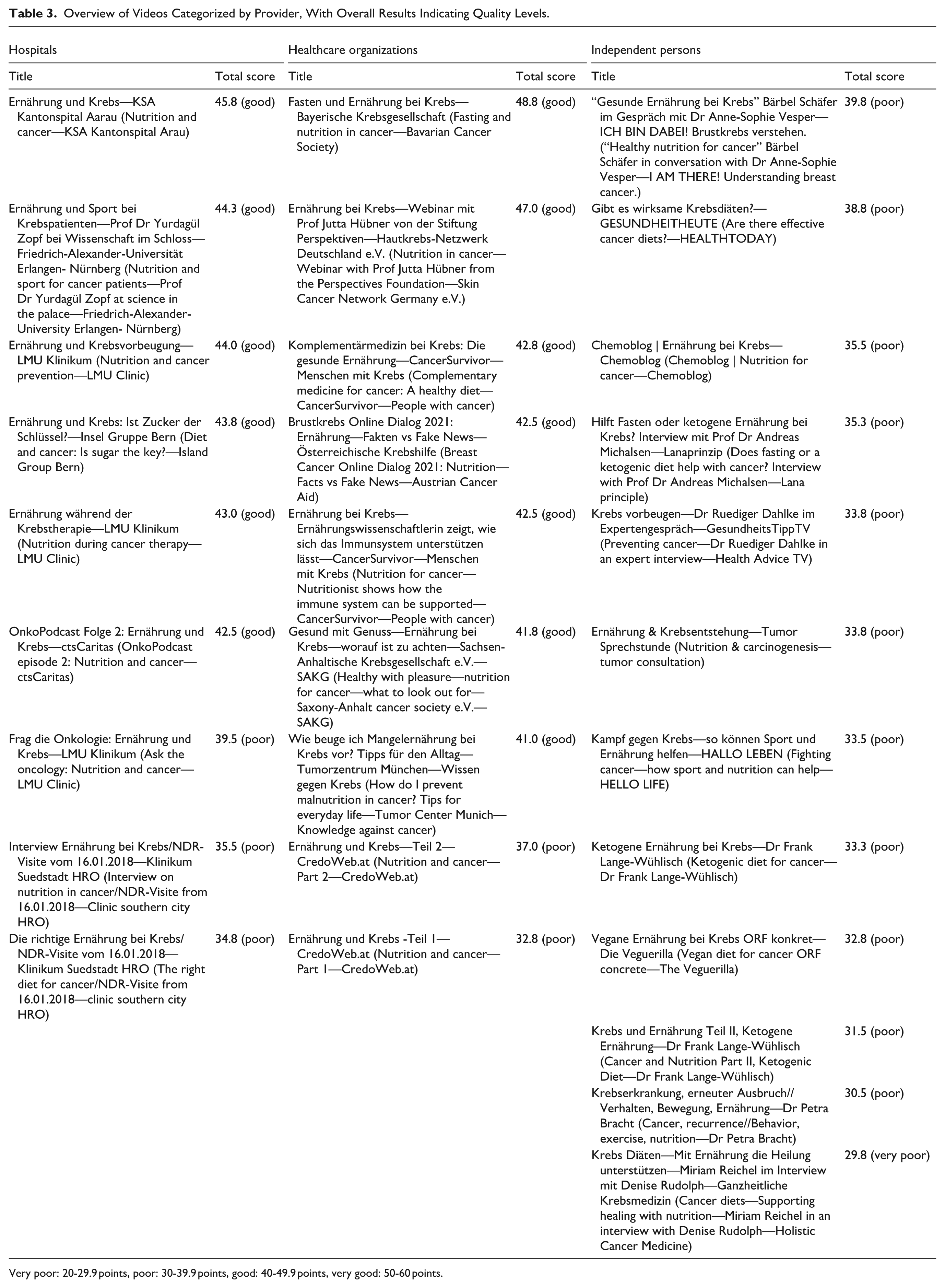

General Video Quality

A total of 30 videos were evaluated in the analysis. Table 3 presents an overview of the videos along with their corresponding overall results. The videos were classified on the basis of the type of provider: 30% (n = 9) were uploaded by hospitals, another 30% (n = 9) by healthcare organizations, and the remaining 40% (n = 12) by independent persons. A flowchart of the video selection and categorization process is presented in Figure 1.

Table 3. Overview of Videos Categorized by Provider, With Overall Results Indicating Quality Levels.

Very poor: 20-29.9 points, poor: 30-39.9 points, good: 40-49.9 points, very good: 50-60 points.

Figure 1. Flowchart of video selection and categorization.

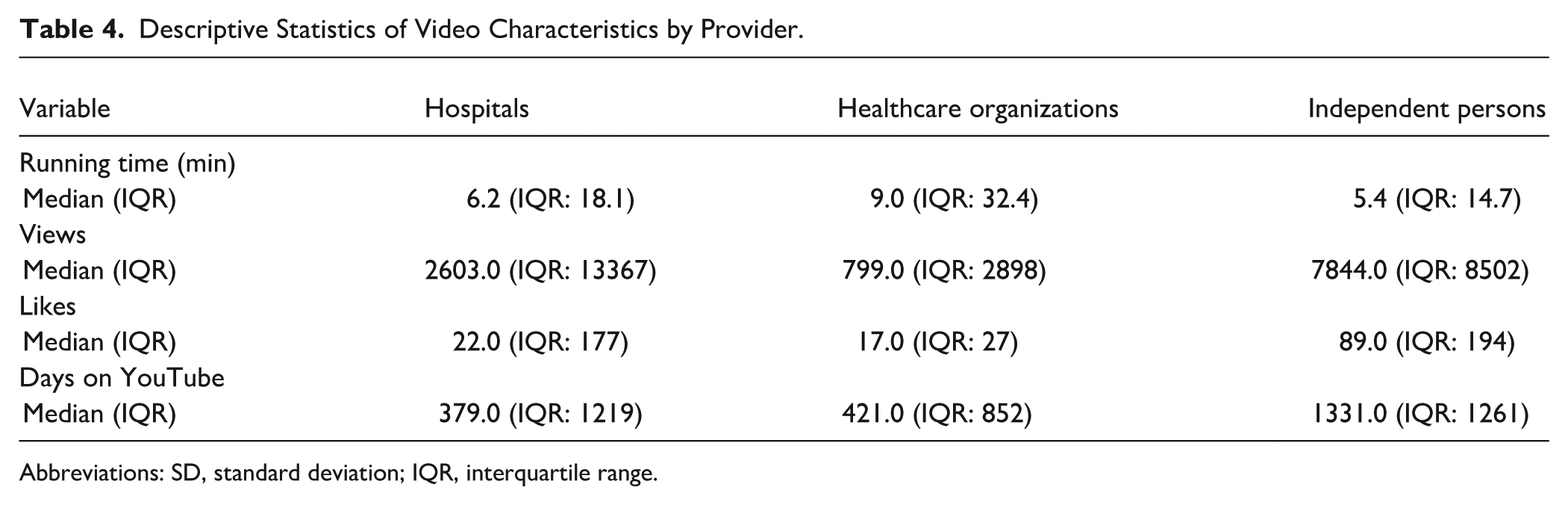

Videos in the hospital category were uploaded by hospitals from both Germany and Switzerland. The healthcare organizations group can be further subdivided into 3 types: cancer societies, specialized networks and centers, and health platforms. Eight of the 9 organizations focus specifically on cancer, while 1 platform serves as a general health platform. Videos in the independent persons group were uploaded by individual physicians, health/counseling channels, specialized programs, patients, and nutrition-focused blogs. Please refer to Table 4 for the basic characteristics of the videos by source.

Table 4. Descriptive Statistics of Video Characteristics by Provider.

Abbreviations: SD, standard deviation; IQR, interquartile range.

To ensure clarity, the points for each result were rounded to 1 decimal place.

The overall result ranged from a minimum of 29.8 points (very poor) to a maximum of 48.8 points (good), with a mean result of 38.6 points (poor; SD: 5.3) across all videos. Consequently, no video achieved a very good rating. For an overview of the descriptive statistics of video quality scores rated by each reviewer, please refer to Table 5.

Table 5. Descriptive Statistics of Video Quality Scores by Reviewer.

When analyzed by provider type, independent persons had a mean score of 34 points (SD: 2.9; poor), whereas hospitals and healthcare organizations scored 41.4 (SD: 3.9) and 41.8 (SD: 4.8) points, respectively, both of which fall into the good category. Figure 2 illustrates the rating of videos ranging from very good to very poor quality, categorized by their respective providers.

Figure 2. Percentage of videos rated from very good to very poor quality by provider (N = 30).

Characteristics of High-Quality Videos

Characteristics of high-quality videos were analyzed, with high quality defined as a high overall result or strong evidence-based information. Videos with higher overall results tended to have longer running times (rs = 0.47, P = .008). With respect to video providers, videos from hospitals (a.s. = .002) and healthcare organizations (a.s. = .006) achieved better overall results than videos from independent persons. Significant differences between provider types were also found for the inclusion of evidence-based information: videos from hospitals (a.s. = .001) and healthcare organizations (a.s. = .022) were more likely to present evidence-based information than those from independent persons.

With respect to evidence quality, videos with clearly formulated goals (rs = 0.71, P < .001) and those that cited references (rs = 0.43, P = .019) provided stronger evidence-based information. Additionally, videos that presented high-quality evidence were more likely to address missing evidence openly (rs = 0.51, P = .004). Videos that cited references tended to communicate missing evidence more transparently (rs = 0.47, P = .009).

The overall result was inversely correlated with the number of likes (rs = −0.55, P = .002) and views (rs = −0.46, P = .01).

No significant correlations were found between the number of views and video length or between the number of likes and visual quality. Additionally, no significant correlation was observed between the criteria of clearly formulated goals and the communication of missing evidence.

Discussion

Validity of the Assessment Tool

The assessment tool developed by our group showed good to excellent interrater reliability, as indicated by the intraclass correlation coefficient. Verifying reliability is a crucial step in implementing a newly developed measurement instrument, such as this assessment tool for YouTube videos. Without reliability, the results cannot be considered dependable or accurately interpreted.

The positive correlations between the overall result and the level of evidence (rs = 0.87, P < .001), as well as the criterion of openly communicated missing evidence (rs = 0.62, P < .001), provide strong indicators of the validity of our assessment tool. The level of evidence and the disclosure of missing evidence are the most important criteria for avoiding misinformation or even disinformation.

Similar results were reported in a parallel study by our group in which the same tool was used for the assessment of videos on alternative and complementary medicine. 40

Overall Video Quality

The evaluation revealed predominantly poor video quality, with a mean score of 38.6 points (SD: 5.3) and scores ranging from 29.8 (very poor) to 48.8 points (good). Notably, no video achieved a “very good” result.

Our results are broadly in line with 2 previously published YouTube analyses by Segado et al 42 and Sütcüoğlu et al. 43 Both authors assessed videos on cancer and nutrition or real food and cancer, respectively. The real food concept is a movement that promotes a diet of fresh and minimally processed foods. 42

However, our study provides a more nuanced quality assessment through the newly developed assessment tool, which identified specific deficits not captured by previous assessments. For video analysis, Sütcüoğlu et al used DISCERN, modified DISCERN, JAMAS, and the GQS. 43 Segado et al used the modified DISCERN and the GQS. 42 Because of the assessment tools used, several criteria covered in our work were not considered in either study. For example, neither study considered visual or audio quality. These criteria have a major influence on the comprehensibility of a video 44 and therefore should be considered. The criterion of evidence is not directly recorded in the work of Sütcüoğlu et al. 43 Although the GQS is used to assess the quality of a video, it does not describe in detail what is meant by quality. This poses the risk of a highly subjective rating that depends on the respective evaluator. Furthermore, the quality of a video cannot be assessed comprehensively without taking evidence into account. The criterion of comprehensibility is also insufficiently considered by the assessment tools used in both studies. The new assessment tool, by contrast, assesses comprehensibility separately for the language and the structure of the video. This more detailed differentiation also reduces the subjectivity of the ratings.

Importantly, the absence of “very good” videos in our sample (maximum score: 48.8/60) suggests that even institutional providers have substantial room for improvement. The complexity of nutritional oncology—involving tumor-specific recommendations, treatment-phase considerations, and individual patient factors—may explain why even professionally produced videos struggle to meet all quality criteria comprehensively.

Qualitative Analysis—Contrasting High- and Low-Quality Video Characteristics

To illustrate the practical implications of our quality assessment, we present detailed examples of videos representing different quality tiers, highlighting specific elements that distinguished superior from inferior content.

Case Study 1

High-quality video example “Fasten und Ernährung bei Krebs—Bayerische Krebsgesellschaft” (Fasting and Nutrition in Cancer—Bavarian Cancer Society), overall score: 48.8/60 (good)—highest in our sample.

Strengths

1. Clear Evidence-Based Framework: The video explicitly references 6 studies on which the information presented in the lecture is based. In addition, further sources are provided at the end of the video.

2. Balanced Communication of Missing Evidence: When discussing fasting during chemotherapy—a topic with limited evidence—the presenter explicitly stated “current research is insufficient to make definitive recommendations” and outlined both theoretical benefits and potential risks, modeling appropriate scientific humility.

3. Structured Content Delivery: The video followed a clear and coherent structure, moving from foundational concepts to more specific guidance and practical considerations, which facilitated viewer comprehension and retention.

4. Professional Production Quality: High audio clarity, professionally designed graphics illustrating complex concepts, and clear on-screen text reinforcing key messages.

Remaining Limitations

Even this highest-rated video had deficits, including unclear quality assurance procedures—such as whether the content had been validated or scheduled for future updates—and suboptimal presentation of numerical data and outcomes, which would have strengthened the evidence supporting its statements.

Case Study 2

Low-quality video example “Krebs Diäten—Mit Ernährung die Heilung unterstützen” (Cancer Diets—Supporting Healing with Nutrition), overall score: 29.8/60 (very poor)—lowest in our sample.

Critical Deficits

Absence of Evidence-Based References: No citations to scientific literature, guidelines, or credible sources—content appeared based solely on personal opinion or anecdotal evidence.

Failure to Communicate Missing Evidence: Did not acknowledge the lack of evidence for “cancer diets” or mention potential risks of restrictive dietary approaches (malnutrition, quality-of-life impact).

Lack of Transparency: Creator’s qualifications were unclear—no medical, nutritional, or scientific expertise was evident.

Poor Structural Organization: Content jumped between topics without logical flow, making information difficult to follow and retain.

Failure to Point Out Limitations of the Medium: Failed to advise consultation with healthcare professionals before implementing dietary changes, particularly concerning for cancer patients whose nutritional needs are complex and individualized.

Patient Safety Implications

This video exemplifies content that could directly harm patients by encouraging restrictive diets without professional supervision, potentially exacerbating malnutrition risk—a leading cause of mortality in cancer patients. Despite its low quality, this video had accumulated significant views, illustrating the quality-popularity paradox discussed later.

Provider Type and Quality

The analysis revealed significant quality differences based on provider type: hospitals (mean: 41.4, SD: 3.9) and healthcare organizations (mean: 41.8, SD: 4.8) achieved “good” results, while independent persons scored substantially lower (mean: 34, SD: 2.9).

These findings corroborate previous research by Cakmak and Mantoglu 23 and Memioglu and Ozyasar, 45 who similarly found higher-quality content from institutional sources. In this study, healthcare organizations scored marginally higher than hospitals (41.8 vs 41.4 points), but this difference was not statistically significant. It is therefore at most a tentative indication that specialized cancer organizations may have additional expertise in creating patient-facing content. The institutional quality advantage likely stems from multiple factors: multi-professional content review processes, reputational accountability, access to evidence-based resources, and potentially dedicated health communication staff. However, it is crucial to note that even institutional videos achieved only “good” rather than “very good” ratings, indicating systematic quality gaps across all provider types.

The Quality-Popularity Paradox

The finding of negative correlations between video quality and both views (rs = −0.46, P = .01) and likes (rs = −0.55, P = .002) represents one of the most concerning patterns in this study. This inverse relationship has been consistently documented across multiple studies examining health information on YouTube.19,23-25,43
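The rank correlation reported above can be illustrated with a short sketch. The code below computes Spearman's rs as the Pearson correlation of average ranks, which also handles ties. The quality scores and view counts are invented for illustration and are not the study data, and the function names are hypothetical.

```python
from math import sqrt


def average_ranks(values):
    """Rank values from 1 to n, giving tied values the mean of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks


def spearman_rs(x, y):
    """Spearman rank correlation: Pearson correlation applied to the ranks."""
    rx, ry = average_ranks(x), average_ranks(y)
    n = len(x)
    mean_x, mean_y = sum(rx) / n, sum(ry) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(rx, ry))
    sd_x = sqrt(sum((a - mean_x) ** 2 for a in rx))
    sd_y = sqrt(sum((b - mean_y) ** 2 for b in ry))
    return cov / (sd_x * sd_y)


# Hypothetical data for six videos, NOT the study data.
quality_scores = [44, 41, 39, 36, 33, 31]
view_counts = [1200, 800, 5400, 3000, 25000, 90000]

rs = spearman_rs(quality_scores, view_counts)
print(f"rs = {rs:.2f}")  # a negative value means popular videos tend to score lower
```

Because only ranks enter the calculation, a single video with an extreme view count does not dominate the result, which suits heavily skewed popularity metrics.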

The success of YouTube is largely driven by factors such as the number of viewers, frequency of visits, and duration of engagement with the platform. 46 The proliferation of low-quality content is, in part, fueled by competition among YouTubers for likes, subscribers, and other metrics. 10 This focus on views and likes can, therefore, lead viewers to adopt unhealthy behaviors and make misguided health decisions, 47 potentially causing harm. Hospitals and healthcare organizations require support and strategies to improve their presence on YouTube with higher-quality content. 47

Additional factors have been proposed to explain the negative correlation between video quality and the number of views and likes. One argument is that laypersons are unable to judge the quality of the information in a video.19,24,25 Mueller et al also argued that high-quality videos may tend to be too complex and less entertaining. The same study suggests that users may seek out unconventional content that differs from standard medical information or advice, possibly driven by the hope of discovering alternative treatment options with fewer side effects.24,43 Our analysis revealed that good-quality videos were longer than poor-quality videos, most likely because presenting complex, high-quality information takes more time. Longer videos might in turn be watched less and receive fewer likes, because they demand greater attention and motivation to be watched through to the end. However, our analysis did not reveal any negative correlations between video length and the number of views or likes. Further research is therefore needed to identify the criteria by which YouTube users select their videos.

YouTube’s Trustworthiness Labeling and Its Limitations

Recently, YouTube has begun exploring ways to make trustworthy sources and videos more visible to users. Since early 2023, informational panels providing context about the source of health information have been displayed beneath certain videos. This labeling aims to help users quickly assess the origin and reliability of the sources.

YouTube channels that want to be labeled as reliable sources must go through an application process and meet certain criteria and principles. 48 These principles were developed by a panel of experts from external health authorities, such as the Council of Medical Specialty Societies (CMSS), National Academy of Medicine (NAM), and World Health Organization (WHO). 49

At the time of our video analysis, this function did not exist. Since then, 7 of the 30 videos have received this label, all of them from providers assigned to the hospital or healthcare organization groups.

However, a major problem remains: the individual videos are not assessed for quality by an independent committee consisting of experts on the respective topic. Videos of poor quality or with false information can therefore still appear under sources or providers labeled as trustworthy, perhaps misleading users even more. This was not the case in our analysis; however, in another study, Huchel et al 40 used the same assessment tool and analyzed videos on the topic of complementary and alternative medicine. Further approaches are therefore needed to protect users of platforms such as YouTube from misinformation or even disinformation.

Possible Orientation Features of Good-Quality Videos for Laypersons on YouTube

Given the substantial variability in the quality of health-related YouTube content and the limited feasibility of applying comprehensive assessment tools such as DISCERN for everyday users, it is essential to identify easily recognizable features that may help laypersons distinguish higher-quality videos from misleading or low-quality content. The following indicators emerged as potentially useful quick-assessment cues.

First, the type of provider appears to offer an initial point of orientation. Although quality differences among provider categories have been described above, it is important to emphasize that institutional sources—such as hospitals or healthcare organizations—are generally more likely to follow established review processes and adhere to evidence-based standards. For laypersons, verifying the uploader’s affiliation is a simple and time-efficient step. Nevertheless, this criterion should not be interpreted as sufficient in itself, as comparable patterns were not consistently observed across all medical topics in previous research.

Second, evidence-related features may offer additional guidance. Higher-quality videos more frequently present clearly formulated objectives and provide transparent references to underlying sources. These elements are easily identifiable and thus accessible to non-experts. However, such cues can be imitated without guaranteeing factual accuracy, which limits their reliability as stand-alone indicators.

A third aspect concerns the communication of missing or uncertain evidence. Explicit statements about the limits of current knowledge are characteristic of scientifically sound communication but are more difficult for laypersons to evaluate. This criterion may therefore serve as a supplementary rather than a primary orientation feature.

Fourth, popularity metrics—such as views or likes—should not be used as proxies for quality. Despite their visibility, these indicators showed a negative association with video quality in multiple studies, likely reflecting the platform’s preference for entertainment-driven rather than evidence-driven content. This underscores the need for users to exercise caution when relying on such metrics for decision-making.

YouTube’s recent efforts to highlight “authoritative health sources” may improve the visibility of reliable content; however, these labels do not involve quality assessment of individual videos. As a result, misleading content may still appear under otherwise trustworthy channels.

Guler et al 50 also developed a tool for assessing YouTube videos with medical content. Their study was published after our literature review and tool development were completed. Many criteria overlap with ours. Their tool offers the advantage of being usable by laypersons, unlike ours. However, as noted above, a critical criterion is not considered: the evidence base of the presented information. Without assessing the evidence, the quality of medical video content cannot be comprehensively evaluated. Videos might appear high-quality while still containing misinformation.

Taken together, these observations illustrate that while certain features—such as the provider category, stated objectives, source citation, and transparent communication of evidence—may support users in identifying higher-quality content, no single criterion is sufficiently reliable on its own.

User Health Literacy and the Need for Critical Appraisal Skills

As it is currently not possible to ensure consistently high-quality health information on the Internet, users must remain vigilant in critically evaluating the information they encounter. 51 A study conducted in the United States reported that 36% of adults had average or insufficient health literacy, 52 which was associated with poorer health outcomes and a higher mortality rate. 53 Furthermore, the study revealed that a significant portion of individuals, including those with adequate or high health literacy, relied on low-quality online sources for health information. 54 Even when users can distinguish between credible and unreliable information, they tend to select sources that are more easily understood. 54 Therefore, fostering critical thinking skills should be a key priority. Such skills can be cultivated 55-57 and should be integrated into school curricula to enhance health literacy. Additionally, individuals and organizations with substantial social media influence should be held accountable for producing accurate and reliable content. 51 Research has demonstrated that misinformation spreads more rapidly and widely than factual information does. 58-61 Consequently, it is essential to promote the dissemination of high-quality, comprehensible health information across multiple channels to counteract the spread of misinformation and improve users’ critical appraisal skills.

Limitations

Sample Size and Temporal Constraints

Our sample size of 30 videos is relatively small, limiting statistical power and generalizability. Our data from February 2022 are now ~3 years old. The YouTube landscape evolves rapidly—new videos are uploaded, algorithms change, and scientific evidence advances—potentially affecting the current relevance of our specific findings. While our methodological framework and quality patterns likely remain applicable, the specific video landscape may have shifted. Future longitudinal studies with repeated sampling are needed to assess the temporal stability of findings.

Methodological and Statistical Considerations

Due to the ordinal nature of our assessment data, derived from 3-point Likert scale ratings, we employed non-parametric statistical methods throughout our analysis. While this approach is methodologically appropriate, ordinal scales inherently carry less information than continuous measurement scales. Normally distributed continuous data analyzed with parametric tests can potentially provide greater statistical power and more precise parameter estimates. However, given that quality assessment criteria are inherently categorical rather than continuous, the Likert scale approach represented the most suitable and practical method for our evaluation framework. The strong interrater reliability observed (ICC: 0.811-0.909) supports the consistency of our ordinal measurements.
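As an illustration of how interrater reliability of this kind can be quantified, the sketch below computes a two-way random-effects, absolute-agreement, single-rater intraclass correlation, often written ICC(2,1). This is an assumption made for illustration: the ICC model used in this study is not restated here and may differ. The ratings are invented and the function name is hypothetical.

```python
def icc_2_1(ratings):
    """ICC(2,1) for a table where ratings[i][j] is the score rater j gave video i."""
    n = len(ratings)        # number of videos
    k = len(ratings[0])     # number of raters
    grand_mean = sum(sum(row) for row in ratings) / (n * k)
    row_means = [sum(row) / k for row in ratings]
    col_means = [sum(row[j] for row in ratings) / n for j in range(k)]

    # Two-way ANOVA decomposition without replication.
    ss_rows = k * sum((m - grand_mean) ** 2 for m in row_means)
    ss_cols = n * sum((m - grand_mean) ** 2 for m in col_means)
    ss_total = sum((x - grand_mean) ** 2 for row in ratings for x in row)
    ss_error = ss_total - ss_rows - ss_cols

    ms_rows = ss_rows / (n - 1)
    ms_cols = ss_cols / (k - 1)
    ms_error = ss_error / ((n - 1) * (k - 1))

    return (ms_rows - ms_error) / (
        ms_rows + (k - 1) * ms_error + k * (ms_cols - ms_error) / n
    )


# Hypothetical total scores from three raters for five videos, NOT the study data.
ratings = [
    [40, 41, 39],
    [35, 36, 34],
    [44, 43, 45],
    [31, 33, 32],
    [38, 38, 37],
]

icc = icc_2_1(ratings)
print(f"ICC(2,1) = {icc:.2f}")
```

Values close to 1 indicate that most of the variance in scores comes from differences between videos rather than from differences between raters.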

Video Metrics Limitations

View counts have limited validity, as they may reflect clicks rather than actual viewing, and should be interpreted cautiously as engagement indicators.

Tool Validation and Generalizability

The tool we developed has not yet undergone external validation by independent research groups. While our internal validation results, including good to very good interrater reliability, indicate potential validity, comprehensive external validation remains necessary. Assessment may also have been influenced by raters’ varying expertise levels (oncologists vs medical students), especially when evaluating evidence-based content.

It is uncertain whether this tool performs effectively in other languages (eg, English) or can be generalized to platforms beyond YouTube. Further studies are needed to confirm cross-linguistic and cross-platform applicability.

Usability for Laypersons

Although it incorporates elements of the HONCode and DISCERN assessment tools, which were designed for laypersons, the complete assessment tool likely requires medical expertise, particularly for evaluating the evidence base of nutritional recommendations. Laypersons would require substantial training to accurately apply all assessment criteria, limiting the tool’s direct utility for patients.

Evaluation Subjectivity and Geographic Factors

The evaluation process contains inherent subjectivity, despite our efforts to minimize bias through multiple independent raters and standardized criteria. Raters were not blinded to video metadata (provider, views, likes), potentially introducing expectation bias in quality assessments. Additionally, “geofencing” algorithms may result in different videos being displayed in different geographic regions, potentially affecting which content is analyzed. The specific videos selected and the evaluators’ backgrounds can influence results.

Accessibility and Demographic Limitations

This study specifically addresses a German-speaking audience with visual and auditory capabilities. Consequently, results may not be fully applicable to all demographic groups. For individuals with sensory disabilities, alternative criteria may define high-quality video content. Future tool development should incorporate accessibility assessment criteria, and platforms like YouTube should enhance accessibility features for sensory-impaired audiences.

Conclusions

This study set out to achieve 3 aims: (1) to develop and validate a comprehensive, video-specific assessment tool for evaluating medical content, (2) to systematically assess the quality of the first 30 German-language YouTube videos addressing nutrition for cancer patients, and (3) to identify observable video features that may help laypersons recognize reliable content. All 3 aims were met.

First, the newly developed assessment tool demonstrated strong reliability and validity, confirming its suitability for evaluating medical video content in a structured and reproducible manner. By incorporating criteria unique to audiovisual formats—such as visual quality, audio quality, and video description—it addresses important limitations of existing text-based instruments as well as previously used video assessment tools.

Second, applying this tool revealed that the overall quality of available YouTube videos on cancer nutrition is predominantly poor, with substantial deficits in evidence citation, transparency regarding missing evidence, and comprehensibility. Even videos produced by institutional providers rarely reached the highest quality categories.

Third, the analysis identified a set of observable features, such as clear goal formulation, source citation, and provider type (eg, hospitals and healthcare organizations), that were associated with higher-quality videos and may serve as practical orientation cues for laypersons. At the same time, easily visible popularity metrics such as views and likes should not be used as proxies for quality, because both this study and prior work found negative associations between these indicators and video quality, likely reflecting the platform’s preference for entertainment-driven material. Taken together, these findings show that no single feature is sufficiently reliable on its own, underscoring the need to strengthen users’ critical appraisal skills.

These findings have important implications for clinical practice. Given the widespread use of online platforms, healthcare professionals should actively guide their patients towards trustworthy digital resources and address potential misinformation during consultations. Institutions, in turn, can use the criteria of the assessment tool as a guide to improve the quality of their own educational videos.

Future research should further refine and expand video-specific assessment tools to ensure their applicability across different medical topics, languages, and digital formats. Given the growing relevance of short-form content on platforms such as YouTube Shorts and TikTok, examining how such formats can be systematically evaluated is particularly important. Future studies could also incorporate analyses of user comments through sentiment analysis or deep learning approaches, allowing audience perceptions to be integrated into evaluation models. In addition, user-centered studies are needed to understand how laypersons apply observable video features when judging reliability and which indicators most effectively support accurate decision-making. Finally, evaluating the impact of platform-level interventions—such as labeling authoritative health sources—will be crucial for developing evidence-based strategies to reduce misinformation and enhance the safety of digital health environments.

Supplemental Material

Supplemental material, sj-docx-1-ict-10.1177_15347354261422762 for Development of an Assessment Tool for YouTube Videos With Medical Content: A Focus on Nutrition and Cancer by Alina Grumt, Sophia Huchel, Christian Keinki, Viktoria Mathies, Lukas Käsmann and Jutta Huebner in Integrative Cancer Therapies

Footnotes

Acknowledgements

We extend our heartfelt gratitude to Lena Josfeld, Dr Christoph Stoll, Niklas Best, Isabel Denzel, Lena Prehn, Rieke Stehr, and the Kidney Cancer Network Germany e.V. (Nierenkrebs-Netzwerk Deutschland e.V.) for their invaluable contribution to our project. Their diligent efforts in testing the functionality of our assessment tool prior to the actual analysis have significantly enhanced its reliability and efficacy. We would also like to express our gratitude to Dipl.-Math. oec. Lisa Wedekind for her assistance with the statistical analysis. On behalf of the Working Group Prevention and Integrative Oncology of the German Cancer Society, we deeply appreciate their commitment and collaboration, which have been instrumental in advancing our research endeavors.

Abbreviations

CI: Confidence interval

WHO: World Health Organization

GQS: Global Quality Scale

JAMAS: Journal of the American Medical Association Score

PEMAT: Patient Education Materials Assessment Tool

HONCode Initiative: Health on the Net Foundation

Afgis: Action forum on health information system

ÄZQ: Agency for Quality in Medicine

EbM Network: German Network for Evidence-based Medicine

TCCI: Title-Content-Consistency Index

VIQI: Video Information and Quality Index

MICI: Medical Information and Context Index

ICC: Intraclass Correlation Coefficient

rs: Spearman correlation

a.s.: Adjusted significance

SD: Standard deviation

Ethical Considerations

This article does not contain any studies with human or animal participants.

Author Contributions

Material preparation and data collection were performed by A.G. The collaboration of A.G., J.H., and S.H. enabled the development of the assessment tool. The analysis was performed by A.G., J.H., C.K., and S.H. Tables and figures were created by A.G., L.K., and S.H. Supervision was performed by J.H. The first draft of the manuscript was written by A.G., and all the authors commented on previous versions of the manuscript. A.G., S.H., C.K., V.M., L.K., and J.H. read and approved the final manuscript.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data Availability Statement

The datasets used and/or analyzed during the current study are available from the corresponding author (Alina Grumt) upon reasonable request.

Supplemental Material

Supplemental material for this article is available online.

References
