Abstract

Students with mathematics difficulty (MD) often struggle with both computation and word-problem solving, which are foundational skills emphasized in national standards such as the Common Core State Standards. As most past error-analysis research has focused on a single mathematical topic, little is known about whether students with MD demonstrate error patterns consistently across both computation and word problems. The present study examined the types and consistency of errors that Grade 4 and 5 students with MD made on 22 computation problems and 10 word problems. Using a researcher-developed coding protocol adapted from prior literature, we identified that the most common errors were miscalculation, regrouping, subtract smaller integer, and wrong operation in computation; and wrong schema, miscalculation, copy, and regrouping in word problems. The majority of students did not demonstrate overlapping errors.

Outlined in mathematics standards in the United States are expectations that students develop computational fluency with whole number and decimal operations as well as fraction addition and subtraction and apply these skills to solve word problems by the end of elementary school (i.e., Grade 5; National Governors Association Center for Best Practices & Council of Chief State School Officers [NGA & CCSSO], 2010). Of importance is the strong link between proficiency with these skills and later life successes such as graduation and employment opportunities (e.g., Cai & Lester, 2010; Hein et al., 2013). However, solving computation and word problems is challenging for many students, especially students experiencing persistent mathematics difficulty (MD; Kingsdorf & Krawec, 2014; Nelson & Powell, 2018a, 2018b). In the present study, we explored common errors that elementary students with or at risk for MD made when working on computation and word problems to inform instructional decisions. In this introduction, we define students with MD, discuss computation and word-problem solving, review past error analyses, and present the purpose and research questions of the present study.

Students Experiencing MD

MD is an umbrella term that refers to students who struggle with mathematical concepts and procedures and who often demonstrate mathematics performance below their peers without MD. In addition to those with a formal diagnosis of a mathematics learning disability or dyscalculia, students with MD encompass those who score below a certain percentile (e.g., the 35th percentile) on standardized assessments or who are identified as having persistent difficulty with mathematics by their teachers, school, or district (e.g., Fuchs et al., 2004; Nelson & Powell, 2018a). Often, students with MD also demonstrate difficulty with reading and vocabulary (Forsyth & Powell, 2017; Vukovic et al., 2010), as well as difficulties in cognitive areas such as working memory, attention, phonological processing, and nonverbal problem-solving (Fuchs et al., 2005; Peng et al., 2018).

Computation

In this study, we defined computation problems as mathematical problems with one of the four operations (i.e., addition, subtraction, multiplication, and division) using numerals and symbols (e.g., 28.4, the equal sign [=]). By the end of Grade 5, students should be able to read and solve computation problems accurately (e.g., 48.2 × 9.5 = 457.9) by applying their understanding of the place value system, arithmetic facts, and number properties (Mabbott & Bisanz, 2008; NGA & CCSSO, 2010). As students advance mathematically, beginning in late elementary and middle school, attention shifts from computational accuracy toward fluency and automaticity to prioritize higher-order cognitive reasoning (i.e., algebraic reasoning; National Mathematics Advisory Panel [NMAP], 2008; NGA & CCSSO, 2010). This shift occurs to reduce the cognitive load on working memory (e.g., counting, retrieving arithmetic facts, recalling the meaning of operations) and allow for deeper understanding of complex mathematical concepts and relations (Mabbott & Bisanz, 2008).

A key characteristic of students with MD when performing computation, however, is their difficulty with arithmetic fact retrieval (e.g., 9 + 4, 12 – 8, 3 × 7, 25 ÷ 5), which often results in compromised accuracy and fluency (Gersten et al., 2005; Peng et al., 2018; Vukovic & Siegel, 2010). With weak arithmetic fact fluency, students with MD rely on inefficient strategies, such as finger counting or counting from one, which increases cognitive load and leads to miscalculations (Dowker, 2019; Gersten et al., 2005). Students with MD also exhibit limited number sense, the conceptual framework that connects mathematical relationships (e.g., place value, regrouping), properties (e.g., commutativity, identity), and procedures (e.g., differentiating addition from subtraction, correctly manipulating fractions; Case et al., 1992; Castaldi et al., 2020; Nelson & Powell, 2018a). Deficits in number sense can contribute to rigid and error-prone mathematical reasoning, and students struggle to apply problem-solving strategies (Castaldi et al., 2020). Subsequently, students with MD experience increased cognitive load when attempting to grasp more complex mathematical concepts, leading to weaker conceptual and procedural understanding, as well as greater chance for making mathematical errors (Castaldi et al., 2020; Peng et al., 2018). Therefore, students with MD often demonstrate lower computation performance than students without MD (Zhang et al., 2014).

Word-Problem Solving

We define word problems as text-based mathematical problems that describe real-world scenarios without providing mathematical notation such as an operation sign (Boonen et al., 2016). Word problems require students to identify relevant information, construct a number sentence or equation that accurately reflects the problem, and solve for the unknown (Powell, 2011). For example, Mary and John have 48 carnival tickets in all. Each has the same number of carnival tickets. How many carnival tickets does each person have? To solve this problem, a student must read and understand the problem enough to generate the number sentence, 2 × _ = 48, or 48 ÷ 2 = _, that accurately represents the narrative and solve for the unknown. Therefore, word-problem solving not only relies on students’ ability to perform mathematical computation (such as 48 ÷ 2 = _), but it also relies on the student’s level of understanding of the text of the word problem (Fuchs et al., 2008).

As the body of literature on word-problem solving has continued to grow over the last few decades (see Myers et al., 2022, 2023), researchers have examined, developed, and determined that using schemas as a framework (e.g., via schema-based instruction) led to improved word-problem solving outcomes. Students use schemas to identify the type of the word problem based on the underlying structure presented in the problem (Marshall, 1995; Powell, Berry, et al., 2022). For example, the problem, Jenny ate

Nevertheless, students with MD struggle with word-problem solving (Powell, 2011). First, the linguistic complexity of word problems presents a major obstacle, especially for students with concurrent reading difficulty (RD; Fuchs et al., 2008). In many word-problem solving studies, researchers recognized that reading comprehension challenges often impact students’ understanding and interpretation of the problem, leading them to use ineffective strategies (e.g., tying key words to operations; Powell, Namkung, & Lin, 2022). Second, word-problem solving requires multiple steps (e.g., Powell et al., 2020), creating hurdles for students with MD.

Error Analysis

Error analysis is a valuable tool for recognizing students’ error patterns, which often derive from misconceptions or a weak conceptual and procedural foundation, to make instructional decisions (Ashlock, 2010). It provides researchers and teachers insights into the degree to which students understand and can perform mathematical concepts and procedures. For the present study, we reviewed and adapted past error analyses and approaches to guide our understanding and interpretation of predetermined errors.

Computation

To investigate errors among students with MD, reading difficulty (RD), co-occurring MD + RD, and neither, Raghubar et al. (2009) conducted an error analysis with a sample of 291 Grade 3 and 4 students using a computation measure with 12 multi-digit addition and subtraction problems. In their study, Raghubar et al. classified errors into four categories: arithmetic fact errors (e.g., incorrect single-digit computations), procedural errors (e.g., misapplication of arithmetic procedures), visual-spatial errors (e.g., misreading numbers, column alignment), and switching (i.e., wrong operation). Upon analyzing errors among different student groups, the researchers identified that arithmetic fact errors were associated more so with students’ severity of MD than with their reading status. Moreover, students with MD and MD + RD made more procedural errors (e.g., subtract smaller integer and borrowing across zero), indicating a lack of conceptual and procedural knowledge of subtraction and place value. To the researchers’ surprise, students with RD and MD + RD made more visual-spatial errors than students without MD or RD, which may be attributed to poor reading and processing of alphanumeric symbols. Finally, the researchers determined that operation switch errors were common across student groups, regardless of MD or RD status, which contrasted with past research.

Nelson and Powell (2018b) analyzed the responses of 478 Grade 3 students with and without MD to identify errors made on a 40-item computation measure. Using descriptive analysis, the researchers identified a total of 2,427 errors, of which 705 (29.05%) were random. They observed that the most frequent error was wrong operation (20.02%), that is, performing a different operation from the intended one. This was followed by adding all digits across (9.35%), miscalculation (8.32%), subtracted smaller integer (7.79%), and regrouping (7.09%) errors. For wrong operation errors, Nelson and Powell further broke down the specific operation that students used incorrectly: adding instead of the intended operation (10.14%), subtracting (8.74%), and multiplying (1.15%). Based on these results, the researchers emphasized the need for explicit instruction on operational symbols (e.g., distinguishing addition, subtraction, and multiplication), place value, and regrouping. Moreover, not only did students with MD make more errors overall, but they also exhibited greater variability in their incorrect responses on assessment items than students without MD, suggesting a broader range of misconceptions or inconsistent problem-solving strategies.

Word-Problem Solving

From her error analyses on word problems, Newman (1977, 1983) developed a hierarchical model that identifies and describes the necessary stages for solving word problems: (a) read the problem (reading recognition), (b) comprehend what is read (comprehension), (c) carry out a mental transformation from the words of the question to the selection of an appropriate mathematical strategy (transformation), (d) apply the process skills demanded by the selected strategy (process skills), and (e) write the answer in an acceptable written form (encoding). These steps, Newman argued, are hierarchical because failure at any stage would prevent students from moving on to the next stage and arriving at the correct solution. Raduan (2010) used the Newman model to analyze errors on fraction word problems among 374 Grade 5 students. Results showed that most of the errors occurred during comprehension (52.91%), followed by transformation (22.37%), process skills (15.55%), encoding (8.84%), and reading (0.34%). Raduan determined that most errors in word-problem solving stemmed from the early stages of understanding and representing the problem.

A similar approach to analyzing word-problem errors is Mayer’s (1985) model, which entails four stages. Distinct from Newman’s model, Mayer’s model explicitly lists the knowledge and skill required for each stage. The first stage, problem translation, is reading and interpreting the information from text; this stage requires linguistic and factual knowledge, and the skill to identify relevant numbers. The second stage, problem integration, involves organizing identified information in a logical way for problem-solving; it requires schematic knowledge and the skill to select the right operation(s) to use. The third stage, solution planning, involves creating a discrete plan for solving the problem; it enlists strategic knowledge and the skill of identifying the order of numbers and steps. The final stage, solution execution, carries out the plan developed in the previous stages; it calls for algorithmic knowledge and computational skills. Adapting this model, Kingsdorf and Krawec’s (2014) error analysis with 76 Grade 7 and 8 students with and without MD revealed that students with MD committed number selection, operation, missing step, and random errors more frequently than students without MD, with a marginally significant difference in computation errors. These differences in the frequency of errors between students with and without MD suggest that students with MD demonstrate difficulty with conceptual understanding in word-problem solving more so than students without MD and highlight a need to train teachers to provide corrective instruction that remedies students’ misconceptions.

The Present Study

Both computation and word-problem solving are essential components of students’ overall mathematics proficiency (NGA & CCSSO, 2010). Although mathematics error analysis is a well-established method for identifying gaps in students’ mathematical understanding, few studies have conducted analyses of both computation and word-problem solving among students with MD. Specifically, to our knowledge, no prior studies have systematically investigated the consistency of errors across these two important domains. Given that computation underlies successful word-problem solving, understanding whether students make similar errors across both types of tasks can yield valuable insights into the persistence and nature of their conceptual and procedural difficulties. Investigating the consistency of students’ errors across computation and word-problem solving strengthens inferences about students’ persistent misconceptions and informs targeted instructional responses. Specifically, we asked the following research questions:

Method

Participants

A larger intervention study took place in a district of 79 elementary schools in a Southwestern state. The intervention trained in-service general and special education teachers on using evidence-based practices through coaching and professional development; student mathematics performance was used as an outcome variable. Students were eligible to participate in the study if their state assessment results from the previous school year were identified as Approaches Grade Level or Did Not Meet Grade Level. We categorized these students as experiencing MD. A total of 992 students with MD from 65 Grade 4 and 5 classrooms participated in the larger intervention study in the school year 2022 to 2023. Demographic information was available for 97% of the participants (n = 965). Of these, 52% were female, 22% received special education services, and 36% were identified as English learners. Overall, approximately 7% of the students identified as Black, 31% as White, 56% as Hispanic/Latino, 3% as multiracial, and 2% as Asian. For the present study, we randomly selected approximately 20% of the sample (n = 206) and analyzed their pretest data (i.e., before any intervention occurred). The 20% subsample was selected to allow for a manageable yet representative analysis of student errors. Demographic information was available for 88% of the participants (n = 176). Of these, about 51% were female, 51% were English learners, and 23% received special education services. About 4% of the students identified as Black, 21% as White, 70% as Hispanic/Latino, 4% as multiracial, and 1% as Asian. Of these randomly selected students, 72% were Grade 4 and 28% were Grade 5 students. We included students from both grade levels to reflect the instructional context of the larger intervention study, which included teachers and education specialists teaching both grade levels.

Measures

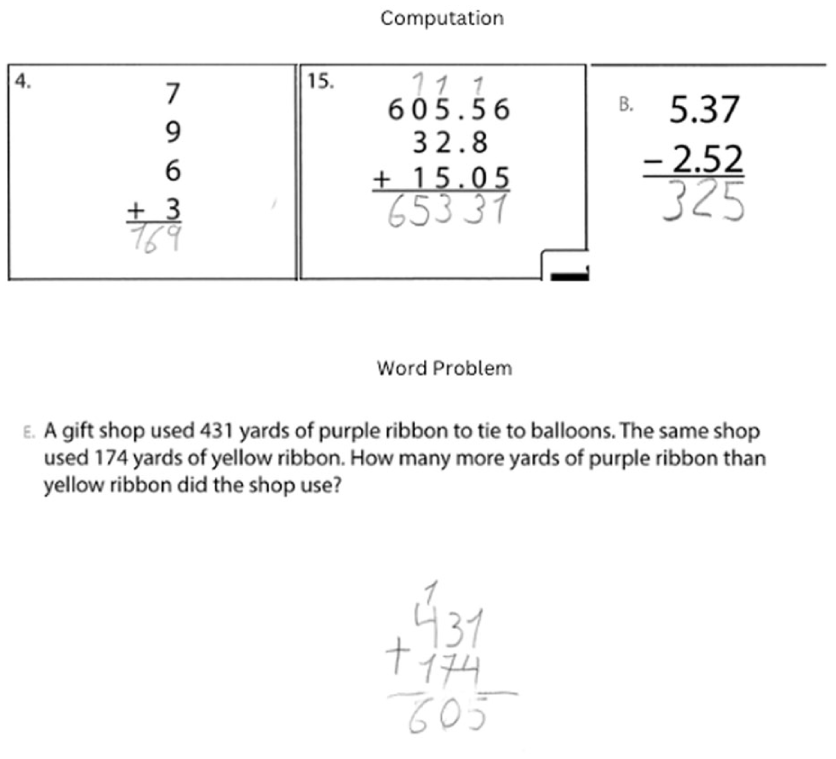

We administered two measures: one focused on computation and the other on word problems. Figure 1 shows computation and word-problem examples. The Test of Mathematical Abilities, Third Edition (TOMA-3; Brown et al., 2012) is a standardized, norm-referenced assessment designed to evaluate the mathematical abilities of students ages 8 to 18 years. We used the Computation subtest of the TOMA-3 to examine students’ computation skills. This two-page subtest contained 30 computation problems that cover a wide range of mathematical topics and increase in difficulty. The topics ranged from single- and multi-step operations with whole numbers (e.g., 11 – 2 = _; 5 × 30 = _), rational numbers (e.g.,

Figure 1. Examples of Computation Problems and a Word Problem.

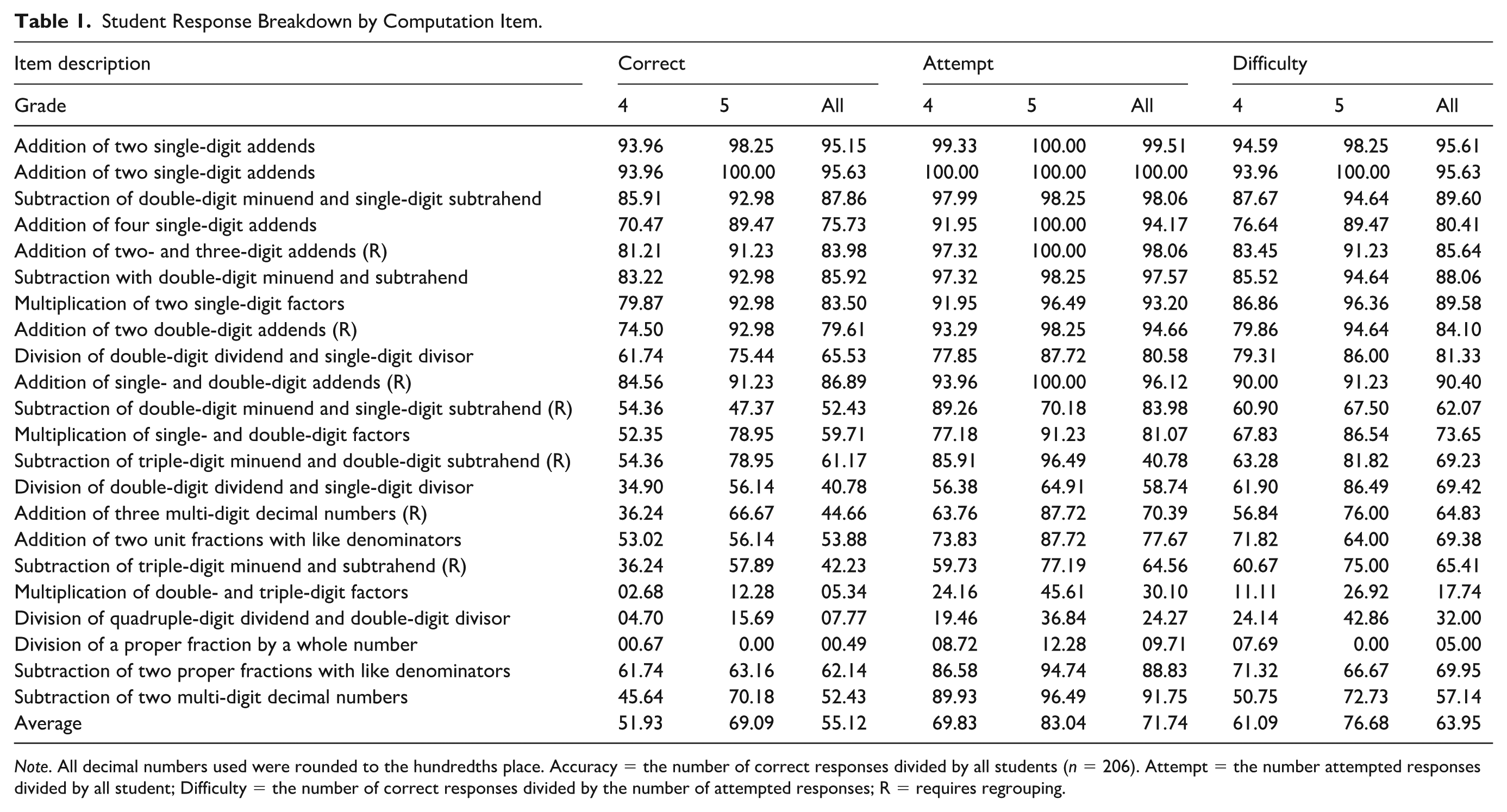

Table 1. Student Response Breakdown by Computation Item.

Note. All decimal numbers were rounded to the hundredths place. Accuracy = the number of correct responses divided by the number of all students (n = 206); Attempt = the number of attempted responses divided by the number of all students; Difficulty = the number of correct responses divided by the number of attempted responses; R = requires regrouping.
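The three rates defined in the table note can be illustrated with a minimal sketch. The response coding (1 = correct, 0 = incorrect, `None` = not attempted), the function name `item_rates`, and the example data are assumptions made for illustration and do not come from the study; rates are expressed as percentages rounded to the hundredths place.

```python
from typing import Optional, Sequence


def item_rates(responses: Sequence[Optional[int]]) -> dict:
    """Return accuracy, attempt, and difficulty for one item, as percentages.

    Assumed (hypothetical) coding: 1 = correct, 0 = incorrect,
    None = the student did not attempt the item.
    """
    if not responses:
        raise ValueError("at least one student response is required")

    n_students = len(responses)
    attempted = [r for r in responses if r is not None]
    n_correct = sum(attempted)

    # Accuracy and attempt use all students as the denominator.
    accuracy = 100 * n_correct / n_students
    attempt = 100 * len(attempted) / n_students
    # Difficulty uses attempted responses only; undefined if nobody attempted.
    difficulty = 100 * n_correct / len(attempted) if attempted else None

    return {
        "accuracy": round(accuracy, 2),
        "attempt": round(attempt, 2),
        "difficulty": None if difficulty is None else round(difficulty, 2),
    }


# Made-up responses from 10 students on a single item.
example = [1, 1, 0, None, 1, 0, None, 1, 0, 1]
print(item_rates(example))
```

In this made-up example, 5 of 10 students answered correctly and 8 attempted the item, so accuracy and difficulty diverge because only difficulty excludes skipped responses.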

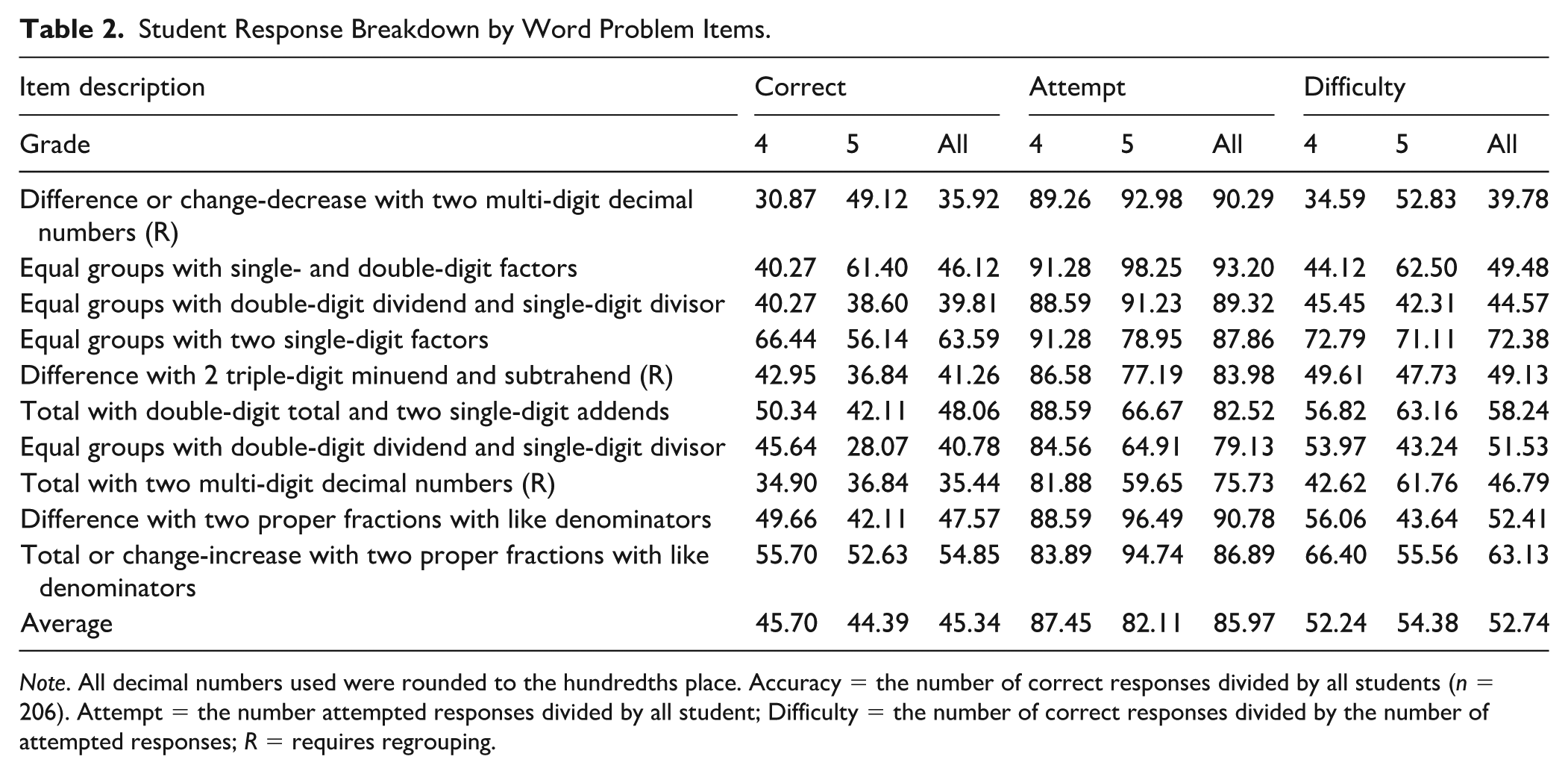

The SAT-10 is a norm-referenced standardized measure designed to determine whether K-12 students meet national or state standards in reading, mathematics, and language. We used 10 items from the SAT-10 Intermediate 1 and 2 to create the Word Problems-Abbreviated to evaluate students’ routine word-problem solving performance. These items were purposefully selected based on their alignment with common schemas (i.e., total, difference, change, and equal groups), as teachers in the larger intervention study were being trained to emphasize schema-based instruction when teaching word-problem solving. The selected items reflect single-step problems involving single- and multi-digit whole numbers and rational numbers (e.g., Nick saved $27 each week for 6 weeks. How much money did he save in all?). Table 2 describes all word-problem items. For the 10 word problems, Cronbach’s alpha for this sample was .83.
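For readers unfamiliar with the reliability index reported above, the following is a minimal sketch of the standard Cronbach's alpha formula, alpha = k / (k - 1) × (1 - sum of item variances / variance of total scores). The function name and the tiny data set are hypothetical; this is not the authors' analysis code, and the study's item-level data are not reproduced here.

```python
from statistics import variance
from typing import Sequence


def cronbach_alpha(scores: Sequence[Sequence[float]]) -> float:
    """Compute Cronbach's alpha; scores[s][i] is student s's score on item i."""
    k = len(scores[0])  # number of items
    if k < 2 or len(scores) < 2:
        raise ValueError("need at least two items and two students")

    # Sample variance of each item across students.
    item_vars = [variance([row[i] for row in scores]) for i in range(k)]
    # Sample variance of students' total scores.
    total_var = variance([sum(row) for row in scores])

    return (k / (k - 1)) * (1 - sum(item_vars) / total_var)


# Made-up 0/1 scores for 4 students on 3 items (illustration only).
toy_scores = [
    [1, 1, 1],
    [1, 1, 0],
    [1, 0, 0],
    [0, 0, 0],
]
print(round(cronbach_alpha(toy_scores), 2))  # 0.75
```

Values closer to 1 indicate that items vary together across students, which is the sense in which the 10 selected word problems are described as internally consistent.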

Table 2. Student Response Breakdown by Word Problem Items.

Note. All decimal numbers were rounded to the hundredths place. Accuracy = the number of correct responses divided by the number of all students (n = 206); Attempt = the number of attempted responses divided by the number of all students; Difficulty = the number of correct responses divided by the number of attempted responses; R = requires regrouping.

Data Collection

Before intervention implementation, throughout the first 3 weeks of September 2022, the research team delivered scripted pretest packets to participating teachers, who administered the pretest assessments over two sessions to their students during regular or intervention class periods. The teachers administered the pretests by reading the directions aloud, and the students worked quietly and independently on the paper-and-pencil assessments. Upon completion of the pretests, research team members collected the pretests from the teachers in person, scanned them, and uploaded the scanned documents into an encrypted folder protected by the university. The authors of the present study obtained student pretests by signing a data use agreement with the principal investigator of the intervention project.

Coding Procedures

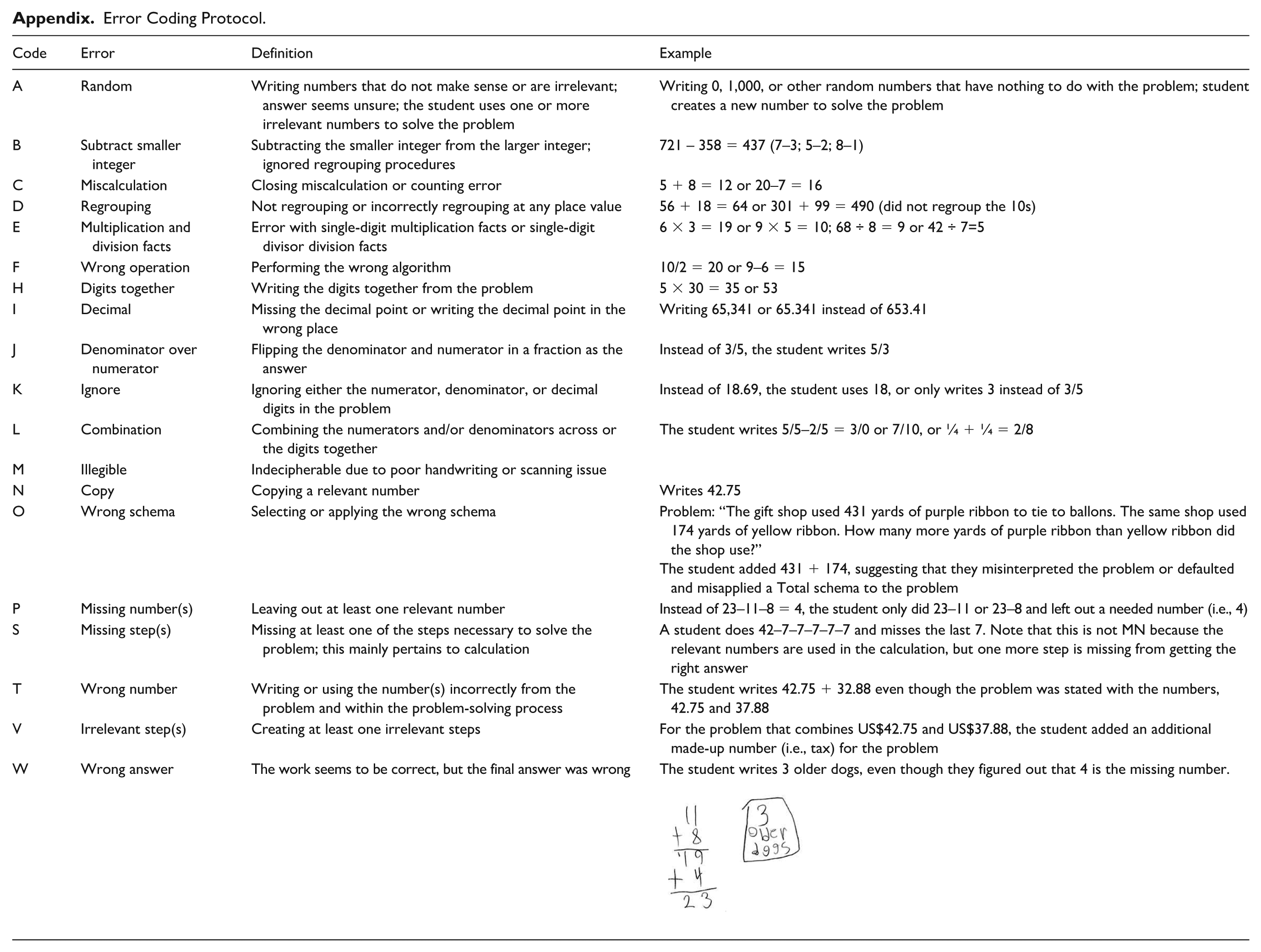

We scored student responses from the computation and word-problem items as correct or incorrect (i.e., 1 or 0) and determined the error type using an error coding protocol (see Appendix). Students’ writing errors (e.g., writing numbers backwards or misspelling) were not penalized. From the literature, we identified the following error types to be prevalent among computation problems: wrong operation, regrouping error, miscalculation, subtracted smaller integer, multiplication facts error, copy prompt, did not understand prompt, and did not understand exponents (e.g., Nelson & Powell, 2018b). To capture the error types exhibited in our sample data more precisely, we used multiplication and division facts error to denote multiplication facts error (Nelson & Powell, 2018b) and math fact errors (Raghubar et al., 2009), and random error instead of did not understand prompt (Nelson & Powell, 2018b). Then, one of the authors piloted the error coding on 20 student assessments and added codes to the protocol for errors that did not fit the predetermined error types, resulting in a total of 13 computation error types (see Codes A–N in the Appendix). For word problems, we referenced Newman’s and Mayer’s models and identified error types at a more distinct level than the model stages, resulting in a total of 22 distinct word-problem error types (see Codes A through W in the Appendix). As for interpreting schema application, which encapsulates the comprehension and interpretation stages (Mayer, 1985; Newman, 1983) of word-problem solving, all researchers had received prior training on schema types for word problems in a doctoral-level special education mathematics course and received additional review and clarification from the first author, who had experience providing schema-based intervention. As computation errors are also probable in word-problem solving, we applied the entire coding protocol when coding word-problem errors.

Interrater Reliability Agreement

To ensure coding reliability, the first author coded an initial set of 20 student assessments to establish the codes and compiled the coding protocols for computation and word problems. Next, the first author met with the rest of the author team for an interrater reliability agreement (IRA) training. In the training, the author team coded 10 student assessments together to establish an understanding of the coding protocols. Once we obtained 100% IRA in the training, we independently coded about 40 to 50 student assessments (i.e., scoring student response). Two of the authors coded all 206 computation responses and double-coded 35% of the responses, obtaining an initial 94.1% IRA. Three of the authors coded all 206 word-problem responses and double-coded 35% of the word-problem responses and obtained an initial 93.4% IRA. The two teams of authors met separately to rectify error coding discrepancies and reached 100% IRA.
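The text does not state how the IRA percentages were computed. One common approach, assumed here purely for illustration, is point-by-point agreement: the number of responses on which two coders assigned the same code, divided by the number of double-coded responses, multiplied by 100. The function name and the example codes below are hypothetical.

```python
from typing import Sequence


def percent_agreement(coder_a: Sequence[str], coder_b: Sequence[str]) -> float:
    """Point-by-point agreement between two coders, as a percentage.

    Assumption: each position holds the error code one coder assigned
    to the same double-coded student response.
    """
    if len(coder_a) != len(coder_b) or not coder_a:
        raise ValueError("both coders must code the same non-empty set of responses")

    agreements = sum(a == b for a, b in zip(coder_a, coder_b))
    return 100 * agreements / len(coder_a)


# Made-up codes for five double-coded responses (illustration only).
first_coder = ["miscalculation", "regrouping", "wrong operation", "random", "copy"]
second_coder = ["miscalculation", "regrouping", "wrong operation", "random", "ignore"]
print(percent_agreement(first_coder, second_coder))  # 80.0
```

Under this definition, resolving every discrepancy through discussion, as the two coding teams did, raises agreement to 100%.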

Data Analysis

We conducted descriptive analysis to answer our research questions (Langford, 2006). First, we calculated the frequency of each computation and word-problem error. We also calculated the percentages of students who attempted and solved problems accurately and those who attempted and solved the problem inaccurately to observe patterns among problems. In answering our second research question, we calculated error consistency by using the frequency of each error type at the student level across computation and word problems (i.e., Errors A–N in the appendix), adapting the approach to creating a contingency table. First, we calculated the frequency of a student’s error (e.g., subtract smaller integers) among computation problems and word problems using the Excel formula,
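As a rough illustration of the student-level consistency tabulation described in this section, the sketch below classifies each student, for a given error type, as making the error in computation only, in word problems only, in both, or in neither. It is not the spreadsheet formula used in the study. The data layout, the names `classify` and `consistency_table`, and the rule that at least one occurrence in each domain counts as "both" are all assumptions.

```python
from collections import Counter
from typing import Mapping


def classify(comp_count: int, word_count: int) -> str:
    """Place one student in a contingency cell for a single error type."""
    if comp_count > 0 and word_count > 0:
        return "both"
    if comp_count > 0:
        return "computation only"
    if word_count > 0:
        return "word problems only"
    return "neither"


def consistency_table(
    students: Mapping[str, Mapping[str, Mapping[str, int]]], error_type: str
) -> Counter:
    """Count students per cell for one error type."""
    table = Counter()
    for record in students.values():
        comp = record["computation"].get(error_type, 0)
        word = record["word_problems"].get(error_type, 0)
        table[classify(comp, word)] += 1
    return table


# Made-up error frequencies for four hypothetical students.
students = {
    "s1": {"computation": {"miscalculation": 2}, "word_problems": {"miscalculation": 1}},
    "s2": {"computation": {"miscalculation": 1}, "word_problems": {}},
    "s3": {"computation": {}, "word_problems": {"miscalculation": 3}},
    "s4": {"computation": {"regrouping": 1}, "word_problems": {"wrong schema": 2}},
}

table = consistency_table(students, "miscalculation")
percent_both = 100 * table["both"] / len(students)
print(dict(table), percent_both)
```

The percentage of students in the "both" cell corresponds to the notion of error consistency used in this study, under the stated assumption about what counts as an occurrence.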

Results

We examined Grade 4 and 5 student responses (n = 206) on 22 computation problems and 10 word problems. On average, these students with MD attempted 17 computation and 10 word problems; they solved 13 computation and four word problems correctly. Grade 4 students had an average of 52% accuracy and 70% attempt rates on computation problems, and 46% accuracy and 87% attempt rates on word problems. Grade 5 students had an average of 69% accuracy and 83% attempt rates on computation problems, and 44% accuracy and 82% attempt rates on word problems. Tables 1 and 2 detail student response rates by problem (including the difficulty rate, measured as the number of correct responses divided by the number of attempted responses, multiplied by 100).

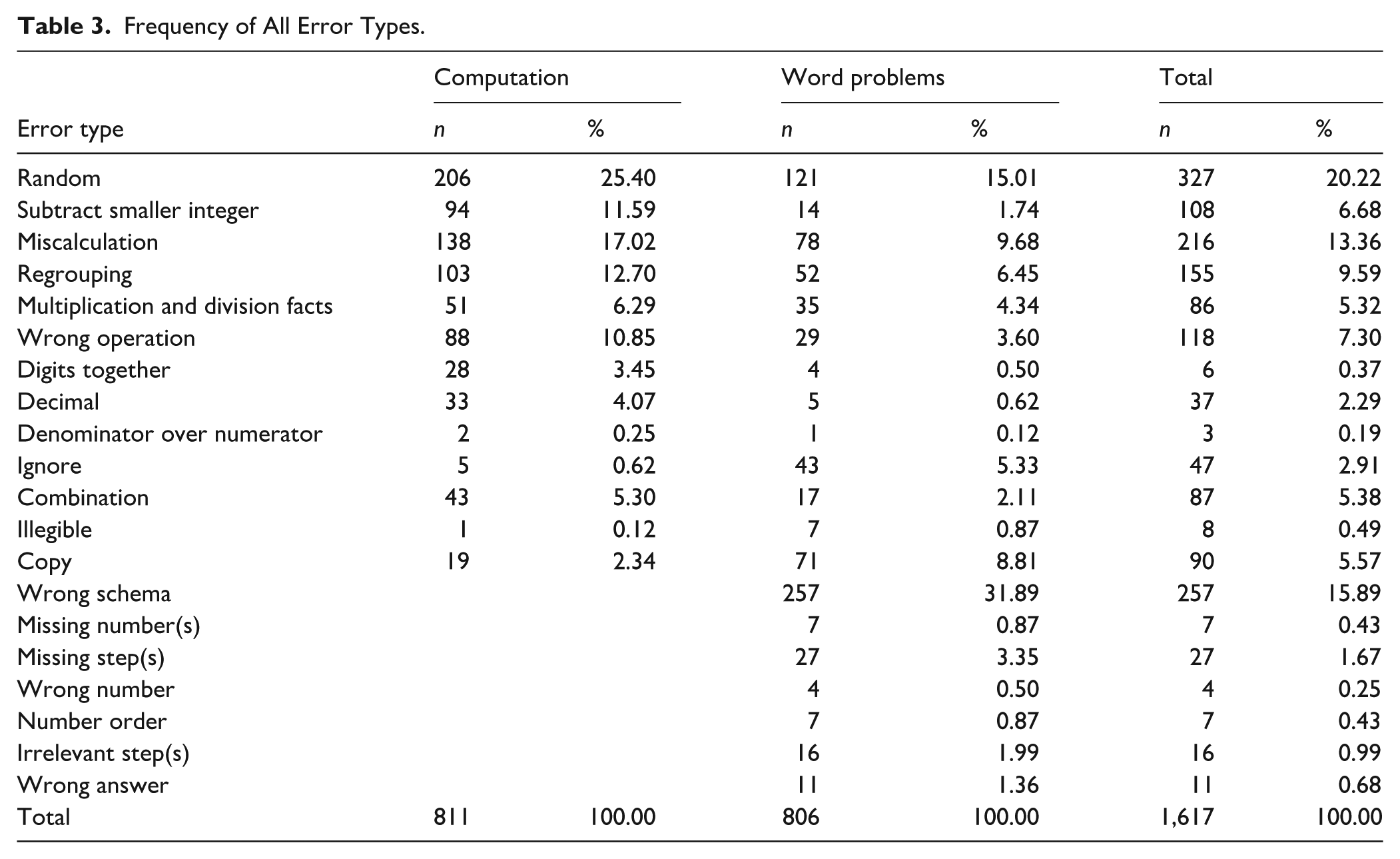

Research Question 1: Common Errors

Students made a total of 1,646 errors in this study, including 327 (19.9%) random errors. Table 3 displays the frequency of each error type. Besides random errors, the error type with the highest frequency among computation problems was miscalculation (n = 138; 17.0%), followed by regrouping (n = 103; 12.7%), subtract smaller integer (n = 94; 11.6%), and wrong operation (n = 88; 10.9%). The error type with the highest frequency among word problems was wrong schema (n = 257; 30.8%), followed by miscalculation (n = 216; 25.9%), regrouping (n = 155; 18.6%), wrong operation (n = 118; 14.1%), and subtract smaller integer (n = 108; 12.9%).

Table 3. Frequency of All Error Types.

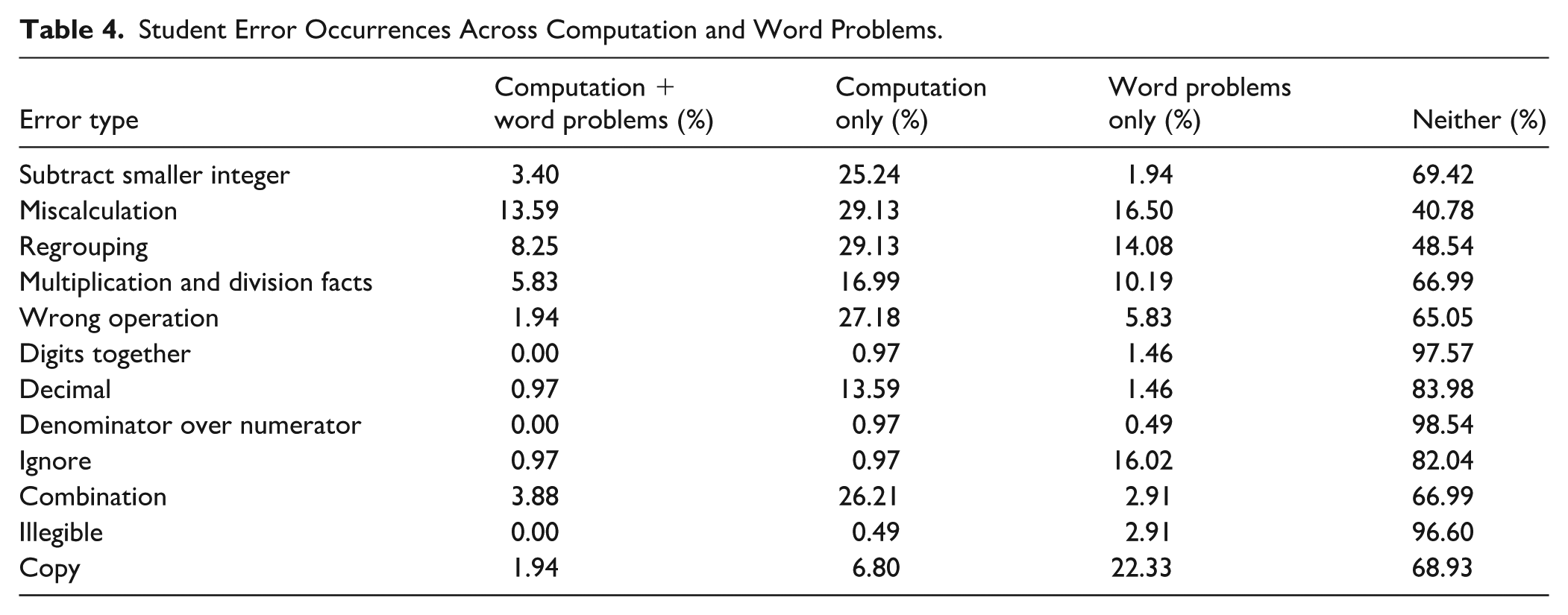

Research Question 2: Consistency of Errors

Among our sample of 206 students, few students made consistent errors between computation and word problems. Table 4 details the proportion of error-making patterns. Of the error types applicable to both computation and word problems, miscalculation had the highest consistency (n = 28; 13.6%) between computation and word problems. A smaller proportion of the students demonstrated consistency in making regrouping (n = 17; 8.3%) and multiplication and division facts (n = 12; 5.8%) errors.

Table 4. Student Error Occurrences Across Computation and Word Problems.

Discussion

We examined errors that 206 Grade 4 and 5 students with MD made on computation and word problems. We identified these students as having MD based on their state assessment performance being Approaches Grade Level or Did Not Meet Grade Level. On average, students with MD solved over half of the 32 problems (55.6%) correctly, with fewer than two thirds of the 22 computation problems (60.2%) and fewer than five of the 10 word problems (45.3%) answered correctly. We identified a total of 1,646 errors, 20% of which were indecipherable (i.e., random); our discussion focuses on the rest of the errors.

Common Errors

Consistent with the literature, miscalculation (17.0%), regrouping (12.7%), subtract smaller integer (11.6%), and wrong operation (10.9%) were the most common computation errors (Nelson & Powell, 2018b; Raghubar et al., 2009). As expected, students in this sample may not have had strong arithmetic fact fluency and thus used inefficient counting strategies that resulted in miscalculation (Gersten et al., 2005). Combined with their limited number sense, particularly of the place value system, this weak fluency may have led students to subtract without regard to the place value of each digit and to make regrouping errors (Case et al., 1992; Raghubar et al., 2009). As for solving computation problems using the wrong operation, students may have defaulted to addition rather than the intended operation because they were exposed to addition first in their mathematics learning and had more practice opportunities with addition than with other operations (Nelson & Powell, 2018b).

The most common word-problem errors involved wrong schema (30.8%), followed by miscalculation (9.3%), copy (8.5%), and regrouping (6.2%). Consistent with the literature, most students with MD demonstrated errors in the initial stage of the word-problem solving process, where they struggled to represent their comprehension and interpretation of the problems mathematically (Fuchs et al., 2008; Kingsdorf & Krawec, 2014; Raduan, 2010). As shown in Figure 1, the word problem required a Difference schema, which asked students to compare the amounts of ribbon used (i.e., “how many more”). However, the student added the two quantities, indicating that they misinterpreted the problem as a Total problem. This shows that the student did not comprehend and capture the underlying structure of the problem (i.e., comparing amounts for a difference) even though they selected the needed numbers and demonstrated accurate computation. Copy was another error type that suggested students struggled at the early stage of word-problem solving, where they may not have understood what the problem was about and, in an attempt to show their engagement with the problem, copied number(s) from the problem.

Many students with MD also exhibited computational errors (e.g., miscalculation and regrouping errors) in their word-problem solving. Although word problems place heavier demands on reading comprehension and accurate mathematical representations (Fuchs et al., 2008), students’ procedural weaknesses (i.e., miscalculation and regrouping) continue to prevail (Gersten et al., 2005). In other words, even when students correctly interpret and represent a word problem, they may still arrive at incorrect answers due to computational errors. Moreover, the presence of computational errors within word problems may reflect cognitive overload (Tolar et al., 2016). Word problems often require students to read and comprehend the text, identify and hold relevant information, and determine an appropriate mathematical strategy, all before executing the computation(s). For students with MD, these layers of word-problem demands likely increased the chance of inaccuracy (Peng et al., 2018).

Error Consistency

Results related to our second research question revealed that a small proportion (less than 14%) of the students with MD made errors consistently between computation and word problems. Table 4 not only shows students’ consistent errors applicable to both computation and word problems but also compares the percentages of students who made each of the errors in computation and word problems. We highlight select error patterns for this discussion.

Miscalculation, Regrouping, and Fact

Of the 206 students with MD, about 14% exhibited miscalculation errors, 8% regrouping errors, and 6% multiplication and division facts errors across both computation and word problems. This was expected, as miscalculation was the most common computational error in computation problems and word problems separately (Gersten et al., 2005; Nelson & Powell, 2018b; Raghubar et al., 2009). Together with regrouping and multiplication and division facts errors, the rates of these error patterns suggest that students with MD had yet to develop strong place value knowledge and multiplication fact fluency, which was evident in some students’ use of lower-level strategies such as repeated addition and tally marks on the assessments.

Although miscalculation, regrouping, and fact errors were prevalent in this study, it is encouraging that, within our sample of students identified as having MD, only a small proportion demonstrated error consistency, that is, less than 14% (and that was only for one error type). This means that only a handful of the students with MD carried the same weak arithmetic fact fluency and number sense across different problem contexts. Teachers should provide targeted instruction on these error-prone computational skills to help students develop fluency with computational strategies to access advanced mathematical topics (e.g., fractions), thus relieving them from cognitive overload (Peng et al., 2018; Tolar et al., 2016).

Wrong Operation

Of interest was that more students with MD made the wrong operation errors in computation problems (27.2%) than those who made the error on word problems only (5.8%) or both (1.9%). At first, this may seem counterintuitive, as word problems require students to determine the operation based on the schema. However, results suggested that students may have misapplied operations during computation due to confusion about operational cues or habits rather than true conceptual misunderstanding. For example, a student might have added by default when seeing a minus sign because they were more comfortable and confident performing addition (Nelson & Powell, 2018b). During word-problem solving, however, students generated an operation based on their comprehension of the text that was internalized, subsequently leading them to perform the correct operation. The contrast in occurrence rates highlighted a potential over-reliance on procedural cues without attention to the meaning of mathematical symbols.

Copy, Ignore, and Digits Together

Copy, ignore, and digits together were the only errors that occurred more often on word problems (22.3%, 16.0%, and 1.4%, respectively) than on computation problems (6.8%, 1%, and 1%) or on both (1.9%, 1%, and 0%). This aligned with literature indicating that students with MD often experience cognitive overload related to difficulties with phonological processing, working memory, and attention (Fuchs et al., 2005; Peng et al., 2018), particularly given the multi-step nature of word-problem solving (Mayer, 1985; Newman, 1977, 1983). We suspected that students with MD may have copied number(s) from word problems, ignored parts of a numeral (e.g., using 18 as opposed to 18.69), or simply combined digits (e.g., 5 × 30 = 53 or 35) because they felt overwhelmed or intimidated, thus disengaging from the cognitively demanding word problem without attempting to proceed or finish (Fuchs et al., 2008; Tolar et al., 2016).

Limitations

This error analysis study is not without limitations. First, the interpretation and coding of errors may not have fully reflected students’ conceptual and procedural errors. Because assessment directions did not ask students to show work and the allotted space for computation problems was limited (see Figure 1), we could not be fully certain of students’ thought processes when identifying error type(s). Furthermore, researcher bias may have been introduced by the inclusion of new error types during pilot coding. To minimize misinterpretation, however, we created a coding protocol in which most of the error types were derived from past literature (e.g., Kingsdorf & Krawec, 2014; Nelson & Powell, 2018b), operationally defined each error type with example(s), piloted the coding protocol on a sizeable amount of student data (n = 20), and double-coded samples to achieve 100% reliability among multiple coders. Future research on error analysis should employ measures that capture students’ work process or conduct student interviews to better identify error types and patterns.

Second, we used a descriptive analysis, which could limit generalizability. Although we explored error consistency using Excel formulas, other statistical approaches (e.g., regression and chi-square) may provide deeper insights into student error patterns across the different mathematical topics. Because this was an initial error analysis study examining error types and patterns across two mathematical topics among students with MD, we determined that descriptive analysis could provide preliminary results that inform future research exploring errors across topics. For instance, future research may consider exploring predictors of prominent errors among distinct student groups (e.g., with MD, MD + RD, with mathematics learning disability; Fuchs et al., 2008; Raghubar et al., 2009) or use latent class analysis to identify root misconceptions based on patterns of response.
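To make the descriptive consistency tally concrete, the following is a minimal sketch in Python of how students might be sorted into "computation only," "word problems only," and "both" for a given error code. It is not the Excel procedure used in this study: the data layout (one set of error codes per student per assessment), the function name `consistency_counts`, and the four example students are all hypothetical and shown only for illustration.

```python
from collections import Counter


def consistency_counts(comp_errors, word_errors, code):
    """Tally students who showed `code` on computation only, on word
    problems only, on both assessments, or on neither.

    comp_errors / word_errors: dict mapping a student ID to the set of
    error codes observed for that student (hypothetical layout).
    """
    counts = Counter()
    for student in comp_errors.keys() | word_errors.keys():
        in_comp = code in comp_errors.get(student, set())
        in_word = code in word_errors.get(student, set())
        if in_comp and in_word:
            counts["both"] += 1
        elif in_comp:
            counts["computation_only"] += 1
        elif in_word:
            counts["word_only"] += 1
        else:
            counts["neither"] += 1
    return counts


# Hypothetical data for four students (codes follow the Appendix: C = miscalculation).
comp = {"s1": {"C", "D"}, "s2": {"F"}, "s3": set(), "s4": {"C"}}
word = {"s1": {"C"}, "s2": {"O"}, "s3": {"C", "N"}, "s4": set()}

counts = consistency_counts(comp, word, "C")
n_students = len(comp.keys() | word.keys())
percent_both = 100 * counts["both"] / n_students
print(dict(counts), percent_both)
```

In this made-up example, only one of four students shows miscalculation on both assessments, which mirrors the kind of "both" percentage reported in the discussion. A cross-tabulation of this form could also serve as the input to the chi-square approaches mentioned above.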

Third, we aggregated all error types across all computation problems, which included various topics such as single- and multi-digit operations and fraction and decimal addition and subtraction. Because certain errors can only occur on specific problems (e.g., decimal errors on decimal items, subtract smaller integer on subtraction problems), this approach may overlook item-specific patterns. Although our goal was to provide an overview of common error types to inform instructional planning and decision-making, we acknowledge that item-level differences in structure and content may influence the types of errors students make. Future research could build upon this work by conducting an item-by-item analysis to examine how particular problem topics or features (e.g., operation type, whole vs. rational numbers, position of the unknown [i.e., equal sign knowledge]) are associated with distinct error patterns.

Fourth, our comparison of errors between computation and word problems was exploratory in nature. The computation and word-problem assessments were not designed to align in content or difficulty level, which limits our ability to draw conclusions about skills transfer or consistency across item types. Future research could investigate this question more directly by designing matched item pairs wherein a computation problem and a word problem target the exact same skill at a similar difficulty level to examine how students apply strategies across mathematical contexts and whether the same errors and misconceptions persist.

Finally, we coded only the primary error for each problem, rather than capturing all possible errors within the same incorrect response. This decision was made to focus on the initial misstep that most directly contributed to the incorrect answer, particularly in cases where one error likely influenced subsequent ones. However, this approach may overlook the complexity of multi-step error patterns, especially in word-problem solving, where students may make both schematic and procedural errors. Future research may consider developing or adapting a multi-error coding scheme to provide a richer picture of students’ problem-solving processes.

Practice Implications

The results of this study inform the following practice implications. First, frequent errors such as miscalculation, regrouping, and subtract smaller integer highlight the need for teachers to bolster arithmetic fact fluency (i.e., addition and subtraction within 20, and multiplication and division facts) and place value understanding for students with MD. If possible, teachers should build brief (e.g., 2–5 min) and regular (e.g., daily) fact fluency practice into their instructional routine (Fuchs et al., 2021; NMAP, 2008). Second, given that students with MD also made operational errors by applying the wrong operation despite symbolic signals (i.e., +, −, ×, and ÷), teachers may provide additional review and discussion during instruction on the meaning of operations and provide strategies (e.g., a checklist) to prevent procedural oversight. Third, on word problems, many students showed that they either did not understand the underlying problem type (i.e., wrong schema) or may have felt overwhelmed or intimidated (i.e., copy and ignore) by the textual nature of word problems, such that they performed more poorly on word problems than on computation problems (i.e., 45.34% vs. 55.12% accuracy, respectively). Teachers should provide schema instruction, as schemas have been shown to support students’ understanding of word-problem structures and to help them strategize and plan their problem-solving process more precisely (Powell, Berry, et al., 2022). Finally, item-level error analysis can ensure that intervention aligns with students’ demonstrated areas of struggle. By integrating error analysis into progress monitoring, teachers can track changes in the types of errors students make over time.

Beyond classroom instruction, school-based professionals such as instructional coaches, school psychologists, and mathematics specialists can also play a meaningful role in helping teachers leverage error analysis to improve student learning. These professionals often have specialized training in interpreting assessment data and connecting it to instructional decision-making (e.g., Grapin & Benson, 2019; Ruhter & Karvonen, 2023). They can help teachers review student error patterns, identify learning challenges, interpret results, and develop instructional strategies (e.g., reteaching and small-group interventions). By providing opportunities for collaborative analysis, these professionals help ensure that error data lead to actionable next steps for teachers.

Conclusion

This error analysis study identified common errors that upper elementary students with MD make on computation and word problems. Our results showed that students with MD persistently exhibited miscalculation and regrouping errors on both computation and word problems. Students also solved word problems with a low accuracy rate because they struggled to understand the problem and the problem type.

Appendix

Error Coding Protocol.

| Code | Error | Definition | Example |
|---|---|---|---|
| A | Random | Writing numbers that do not make sense or are irrelevant; answer seems unsure; the student uses one or more irrelevant numbers to solve the problem | Writing 0, 1,000, or other random numbers that have nothing to do with the problem; student creates a new number to solve the problem |
| B | Subtract smaller integer | Subtracting the smaller integer from the larger integer; ignored regrouping procedures | 721 – 358 = 437 (7–3; 5–2; 8–1) |
| C | Miscalculation | Closing miscalculation or counting error | 5 + 8 = 12 or 20 – 7 = 16 |
| D | Regrouping | Not regrouping or incorrectly regrouping at any place value | 56 + 18 = 64 or 301 + 99 = 490 (did not regroup the 10s) |
| E | Multiplication and division facts | Error with single-digit multiplication facts or single-digit divisor division facts | 6 × 3 = 19 or 9 × 5 = 10; 68 ÷ 8 = 9 or 42 ÷ 7 = 5 |
| F | Wrong operation | Performing the wrong algorithm | 10/2 = 20 or 9 – 6 = 15 |
| H | Digits together | Writing the digits together from the problem | 5 × 30 = 35 or 53 |
| I | Decimal | Missing the decimal point or writing the decimal point in the wrong place | Writing 65,341 or 65.341 instead of 653.41 |
| J | Denominator over numerator | Flipping the denominator and numerator in a fraction as the answer | Instead of 3/5, the student writes 5/3 |
| K | Ignore | Ignoring either the numerator, denominator, or decimal digits in the problem | Instead of 18.69, the student uses 18, or only writes 3 instead of 3/5 |
| L | Combination | Combining the numerators and/or denominators across or the digits together | The student writes 5/5 – 2/5 = 3/0 or 7/10, or ¼ + ¼ = 2/8 |
| M | Illegible | Indecipherable due to poor handwriting or scanning issue | |
| N | Copy | Copying a relevant number | Writes 42.75 |
| O | Wrong schema | Selecting or applying the wrong schema | Problem: “The gift shop used 431 yards of purple ribbon to tie to balloons. The same shop used 174 yards of yellow ribbon. How many more yards of purple ribbon than yellow ribbon did the shop use?” |
| P | Missing number(s) | Leaving out at least one relevant number | Instead of 23 – 11 – 8 = 4, the student only did 23 – 11 or 23 – 8 and left out a needed number (i.e., 4) |
| S | Missing step(s) | Missing at least one of the steps necessary to solve the problem; this mainly pertains to calculation | A student does 42 – 7 – 7 – 7 – 7 – 7 and misses the last 7. Note that this is not MN because the relevant numbers are used in the calculation, but one more step is missing from getting the right answer |
| T | Wrong number | Writing or using the number(s) incorrectly from the problem and within the problem-solving process | The student writes 42.75 + 32.88 even though the problem was stated with the numbers 42.75 and 37.88 |
| V | Irrelevant step(s) | Creating at least one irrelevant step | For the problem that combines US$42.75 and US$37.88, the student added an additional made-up number (i.e., tax) for the problem |
| W | Wrong answer | The work seems to be correct, but the final answer was wrong | The student writes 3 older dogs, even though they figured out that 4 is the missing number |
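As a worked illustration of Code B (subtract smaller integer), the short Python sketch below reproduces the faulty procedure column by column: in each place value, the smaller digit is subtracted from the larger digit and no regrouping occurs. The function name `subtract_smaller_integer` is hypothetical, and the sketch assumes non-negative whole numbers; it models the error pattern only and is not part of the coding protocol.

```python
def subtract_smaller_integer(minuend: int, subtrahend: int) -> int:
    """Model the Code B error: in every column, subtract the smaller
    digit from the larger digit, ignoring regrouping entirely.
    Assumes non-negative whole numbers."""
    width = max(len(str(minuend)), len(str(subtrahend)))
    top = str(minuend).zfill(width)       # pad so columns line up
    bottom = str(subtrahend).zfill(width)
    digits = [abs(int(t) - int(b)) for t, b in zip(top, bottom)]
    return int("".join(str(d) for d in digits))


erroneous = subtract_smaller_integer(721, 358)  # columns: 7-3, 5-2, 8-1
correct = 721 - 358
print(erroneous, correct)  # 437 363
```

The erroneous result, 437, matches the example in the table, whereas the correct difference is 363. Comparing the two values for a given item is one way a coder might check whether an incorrect response fits this pattern.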

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.