Abstract

The original Social Emotional Distress Survey–Secondary (SEDS-S) assesses adolescents’ past month’s experiences of psychological distress. Given the continued need for and use of brief measures of student social-emotional distress, this study examined a five-item version (SEDS-S-Brief) to evaluate its use for surveillance of adolescents’ wellness in schools. Three samples completed the SEDS-S-Brief. Sample 1 was a cross-sectional sample of 105,771 students from 113 California secondary schools; responses were used to examine validity evidence based on internal structure. Sample 2 consisted of 10,770 secondary students who also completed the Social Emotional Health Survey-Secondary-2020, Mental Health Continuum–Short Form, Multidimensional Student Life Satisfaction Scale, and selected Youth Risk Behavior Surveillance items (chronic sadness and suicidal ideation). Sample 2 responses examined validity evidence based on relations to other variables. Sample 3 consisted of 773 secondary students who completed the SEDS-S-Brief annually for 3 years, providing response stability coefficients. The SEDS-S-Brief was invariant across students based on sex, grade level, and Latinx status, supporting its use across diverse groups in schools. Additional analyses indicated moderate to strong convergent and discriminant validity characteristics and 1- and 2-year temporal stability. The findings advance the field toward comprehensive mental health surveillance practices to inform services for youth in schools.

The December 2021 U.S. Surgeon General’s Advisory provided a direct call to action in support of youth mental health, identifying schools as a critical setting for supporting the mental health of all students. Staggering statistics from the Youth Risk Behavior Surveillance Survey (YRBSS) of the Centers for Disease Control indicate that one in three students in high school has reported persistent feelings of sadness or hopelessness, with an increase of 40% from 2009 to 2019 (Centers for Disease Control and Prevention, 2021). Similarly, there was a significant increase in suicidal behaviors, with approximately one in six students making a suicide plan in 2019, representing a 44% increase since 2009. Additional research has shown significant increases in anxiety, depression, and other post-traumatic symptoms during the COVID-19 pandemic (de Miranda et al., 2020), with substantial negative impacts on students from marginalized and minoritized communities (Song et al., 2021).

As adolescents’ mental health challenges gain recognition as a public health concern, schools and communities must have appropriate epidemiological surveillance tools for all students. They need practical measures with strong evidence supporting their use to assess current needs accurately and monitor youth mental health trends. Surveillance measures are helpful to monitor the prevalence of conditions (e.g., sadness, suicidality) within a population (e.g., nationwide, statewide, or schoolwide), measure progress toward achieving health objectives, assess trends in behaviors, or evaluate the impact of interventions at a population-based level (Kann, 2001). Relatedly, school-based mental health screening has been advised as a population-based approach to inform and monitor youth mental health needs (Dowdy et al., 2010). This approach, built on a public health framework, gathers mental health indicators from all students to monitor their mental health status. Screening measures in educational contexts evaluate student-specific needs for support services, whereas anonymous surveillance measures more broadly assess population (national, state, or local) level trends.

The Centers for Disease Control and Prevention uses the YRBSS as a population-based surveillance instrument to examine national and state behavioral trends that pose a health risk to students, including substance use, violence-related behaviors, and sexual activity. Despite limited information collected explicitly related to youth mental health, the YRBSS gathers information on sadness, hopelessness, and suicidal behaviors on a biennial basis. Some states also collect surveillance information on student behaviors. For example, the California Healthy Kids Survey (CHKS, see www.wested.org/chks) is currently the largest statewide surveillance survey used to gather data on the prevalence of mental health indicators in schools. Data are gathered at a minimum from Grades 7, 9, and 11 and include YRBSS items. Additionally, some schools opt to collect data more frequently and with different grade levels to help examine the prevalence and needs of students in California schools. In recognizing the need for additional information on youth mental health, other supplementary modules of the CHKS have been offered to schools to gather more detailed information on social-emotional health and well-being.

Study Context and Purpose

As part of collaborative efforts across California, the Social Emotional Distress Survey-Secondary (SEDS-S; Dowdy et al., 2018) and the Social Emotional Health Survey–Secondary (SEHS-S-2020; Furlong et al., 2020) were included in the CHKS to examine youth mental health symptoms more comprehensively. These two measures were part of a complete mental health approach to universal mental health surveillance, with the SEHS-S-2020 measuring youths’ strengths and the SEDS-S measuring youths’ distress symptoms. Historically, mental health has been defined and measured as a unidimensional construct: the presence or absence of psychopathology. In moving beyond a unidimensional model, researchers have proposed complete mental health models (Dual-Factor Model: Furlong et al., 2022; Greenspoon & Saklofske, 2001; Suldo & Shaffer, 2008; Dual-Continua Model: Keyes, 2005, 2006, 2013) offering a strengths-focused approach that simultaneously considers social-emotional strengths and psychological distress indicators. The complete mental health framework provides a culturally appropriate approach to surveillance (Romer et al., 2020) while yielding a fuller picture of diverse learners’ psychosocial functioning conducive to schools’ efforts to alleviate identified youths’ negative psychological symptoms (Dowdy et al., 2018). The current emphasis on complete mental health underscores the need for distress and strengths measures to ensure appropriate use with diverse youth subgroups.

Optimally efficient measures are needed to responsively assess the effects of emerging educational and social contexts, such as remote instruction or the additional challenges many communities face during the COVID-19 pandemic. Due to the pandemic and the increasing demands placed on schools and students, a short version of the SEDS-S, named the SEDS-S-Brief, was created as a youth mental health surveillance tool to include in statewide CHKS administrations. This study examines the short version of the SEDS-S for use in schoolwide surveillance of mental wellness.

Social Emotional Distress Survey-Secondary-Brief

The original SEDS-S had 10 items with satisfactory unidimensional model fit (Dowdy et al., 2018); however, a shorter version might adequately accomplish schools’ epidemiological surveillance needs. In addition, a shorter version would be responsive to the desires of school personnel to reduce the length and burden of surveys. It would allow more flexibility to add items to statewide school wellness surveillance as critical issues arise. This reduced length could be essential as school administrators recognize that biennial surveys often insufficiently monitor students’ mental health changes. Additionally, the SEDS-S queries past month experiences instead of lifetime prevalence, which allows it to assess change across assessment administrations.

The SEDS-S was designed “not to measure syndrome patterns, but to broadly assess youth personal emotional distress within the school context” (Dowdy et al., 2018, p. 242). This emphasis on non-pathological emotional distress, instead of more specific low-incidence diagnostic symptoms, lends itself to broad-based surveillance. Additionally, the SEDS-S was designed for surveillance contexts. The original 10-item version had solid psychometric properties in an initial study—high-reliability estimates, good unidimensional model fit, significant positive relations with symptoms of anxiety and depression, and significant negative relations with life satisfaction and personal strengths scores (Dowdy et al., 2018).

Modern validity theory conceptualizes validity as a unified construct. Validation is a continuing process (Messick, 1995) informed by evidence specific to a measure’s test content, response processes, internal structure, relation to other variables, and consequences of testing (American Educational Research Association, American Psychological Association, & National Council on Measurement in Education, 2014). Additionally, cross-cultural examination of measures is considered a best practice for scale development (You et al., 2014). Because K–12 enrollment is increasingly diverse (U.S. Department of Education, 2019), school-based assessments need to be specifically examined for use with today’s culturally heterogeneous youths. It is even more relevant for state education agencies serving diverse populations, such as California’s public schools. A necessary measurement validation step is to examine the SEDS-S-Brief for use with secondary school students. Because it is administered universally to all students, it is essential to explore its use with various sociocultural groups in order to promote valid score inferences for all intended examinees. The current study extends previous research and evaluates several types of psychometric evidence for the SEDS-S-Brief by examining the following research items:

validity evidence based on internal structure for youth across sex, grade level, and Latinx identification;

validity evidence based on relationships with other variables (convergent and discriminant); and

temporal stability over 1- and 2-year periods.

These analyses are needed to determine if the SEDS-S-Brief has essential assessment characteristics for population-based mental health surveillance. Based on prior investigations with the long-form (Dowdy et al., 2018), we anticipated unidimensional fit, invariance across important subgroups, strong convergent and discriminant validity, and acceptable stability estimates.

Method

Samples and Procedures

This report draws on three independent samples to examine the SEDS-S-Brief for validity evidence based on internal structure, validity evidence based on relations to other variables (convergent and discriminant), and temporal stability (AERA, APA, & NCME, 2014).

Sample 1: Cross-sectional sample to examine internal structure

Participants

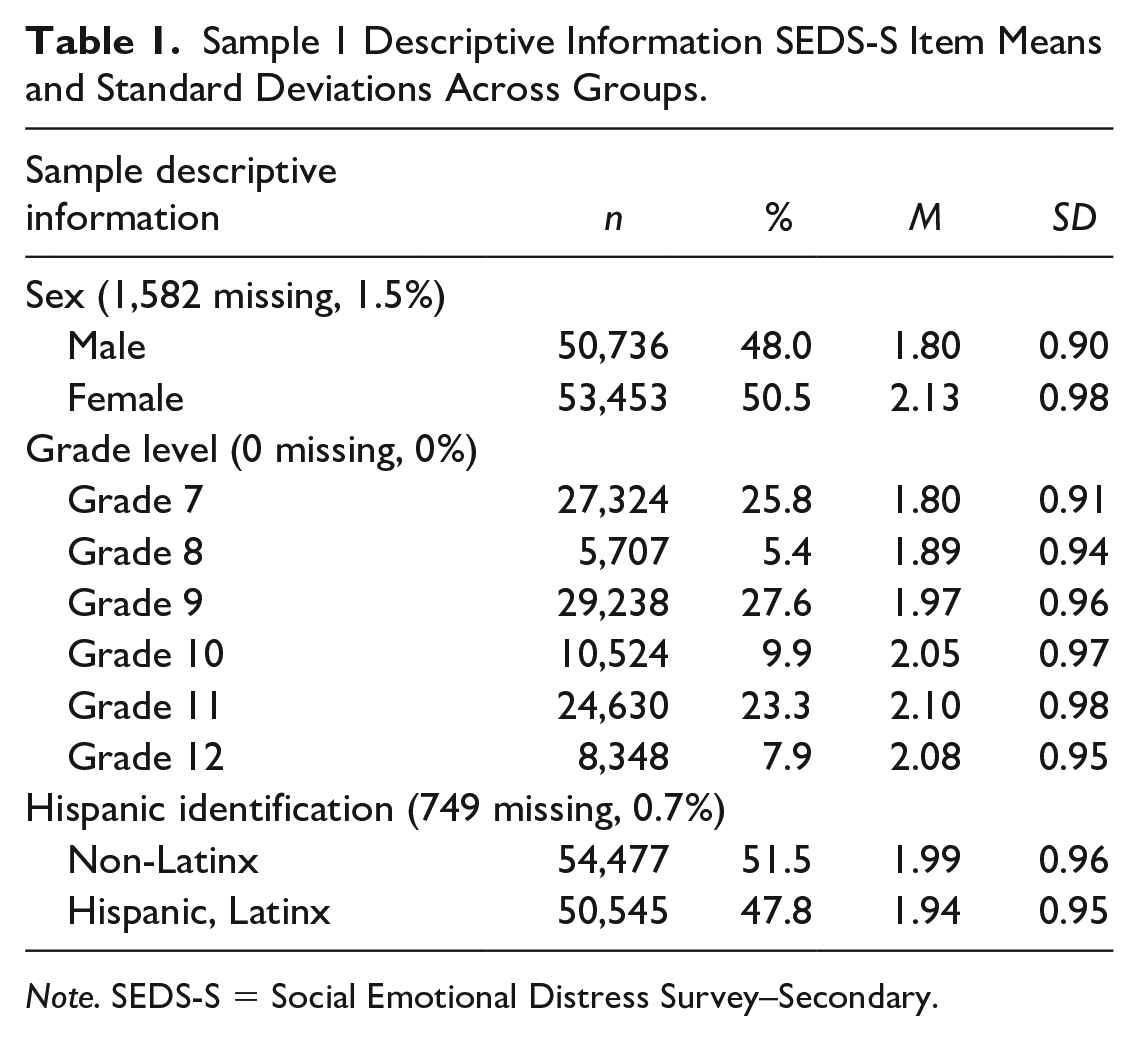

The detailed CHKS survey management guide directs each school site survey manager to seek a minimum of 60% student participation to obtain valid results. Student responses were from 113 secondary schools (in 34 of California’s 58 counties, including 82 school districts) located in urban, suburban, and rural communities. This cross-sectional sample contained 105,771 students. Random subsamples of this overall cross-sectional sample were used to examine internal structure. See Table 1 for Sample 1 demographics and descriptive information.

Table 1. Sample 1 Descriptive Information and SEDS-S Item Means and Standard Deviations Across Groups.

Note. SEDS-S = Social Emotional Distress Survey–Secondary.

Procedures

Data collection was part of the California State Department of Education’s initiative to provide local education agencies with school quality indicators. WestEd administered and managed the CHKS. Student responses were collected between October 2017 and June 2019. The CHKS is a comprehensive school-based surveillance survey used in California for over 20 years. The SEDS-S-Brief was administered as part of regular annual CHKS surveys. Parents of the student participants received an introductory letter and a consent form. Consistent with CHKS procedures, parents provided passive consent, and students provided assent. A school-site administrator coordinated the CHKS online survey administration during a regular class session (see https://calschls.org/survey-administration/). All students in Grades 7, 9, and 11 in attendance on the survey administration day had the opportunity to take the survey. Based on school preference, some schools offered all students (including Grades 8, 10, and 12) the option to take the survey. All measures were self-report, including demographic questions, and were delivered via an online platform. This study included responses to the English version of the survey.

Sample 2: Cross-sectional sample to examine relations to other variables

Participants

This sample consisted of 10,770 students in Grades 9 to 12 attending 15 California high schools. The survey participation rate was 57.3%, and 91.3% of students who attempted the survey provided usable responses. The sample was evenly balanced by sex (48.0% male, 50.5% female, 1.5% missing). There was diversity in student ethnicity identification (36.3% White, 34.5% two or more group identities, 11.6% Asian, 5.9% American Indian/Alaskan Native, 3.7% Black/African American, and 2.1% Native Hawaiian/Pacific Islander). Forty-eight percent of the sample identified as Hispanic/Latino/a/x.

Procedures

The SEDS-S-Brief items were part of a social-emotional health module added to the 2017 and 2018 California Healthy Kids Survey core module. The secondary schools included in this sample were the first 15 randomly selected schools that agreed to ask their students to complete the wellness module, including additional indicators used to examine relations to other variables. In addition to the SEDS-S-Brief, students completed the Mental Health Continuum–Short Form (MHC-SF; Keyes, 2005, 2006), SEHS-S-2020 (Furlong et al., 2020), Brief Multidimensional Student Life Satisfaction Scale (BMSLSS; Huebner et al., 2006), a global life satisfaction item from the Student Life Satisfaction Scale (SLSS; Huebner, 1991), and suicide ideation and chronic sadness items from the CHKS (see descriptions in the “Measures” section). The online survey used the standard CHKS procedures (see https://calschls.org/survey-administration/), which include multiple steps to maximize the quality of the data. For example, the CHKS includes case reject criteria that remove students with inconsistent or impossible response patterns. We addressed low-effort responders by excluding students who completed the survey in <10 min. Students who reported answering “hardly any” items honestly were also excluded.

Sample 3: Longitudinal sample to examine temporal stability

Participants

To evaluate the temporal stability of the SEDS-S-Brief, we used the responses of 783 (28% of total enrollment) students who completed a California school district’s annual wellness surveillance survey in October 2019, 2020, and 2021. This sample’s survey was independent of the annual CHKS administration. The Sample 3 students attended one of five secondary schools and in 2019 (Year 1) were in Grades 6 (17.6%), 7 (15.5%), 8 (15.2%), 9 (32.7%), and 10 (19.0%). The sample was 47.9% male, 46.6% female, 2.6% non-binary, and 2.9% other. Most students identified as White (56.6%) or Latinx (26.2%).

Procedures

Following university human participants committee approval, passive parental consent, and student permission, students in the stability sample completed an online Qualtrics formatted survey using tablets in a classroom or school computer lab. Student responses were not used for the current study if the parents requested they not be used or if the student did not provide assent. Mirroring CHKS procedures, multiple steps increased data quality, including teacher scripts, several items checking for inconsistent response patterns, and items designed to identify mischievous responders (Furlong et al., 2017). The survey portal was open for two weeks, allowing students multiple opportunities to complete the survey. All survey administrations used the same online format and survey URL link. In 2019 and 2021, the surveys were administered by school staff during a regular class session using a standard script. In 2020, students completed the survey remotely because the schools were using remote instruction due to the COVID-19 pandemic.

Measures

Social Emotional Distress Survey–Secondary-Brief

The Social Emotional Distress Survey–Secondary-Brief (SEDS-S-Brief) includes five items from the original 10-item SEDS-S measure of internalizing psychological distress. Item selection considered multiple factors, including

expert input (i.e., three doctoral-level school psychologists with expertise in school wellness surveillance);

item performance from prior investigations with the longer measure (i.e., selected the four items with the highest loadings and an additional highly loaded item with broader construct coverage; the frequency of affirmative responses to ensure items had a range of endorsement); and

theory (i.e., desired balance of a range of the desired construct of emotional distress experiences).

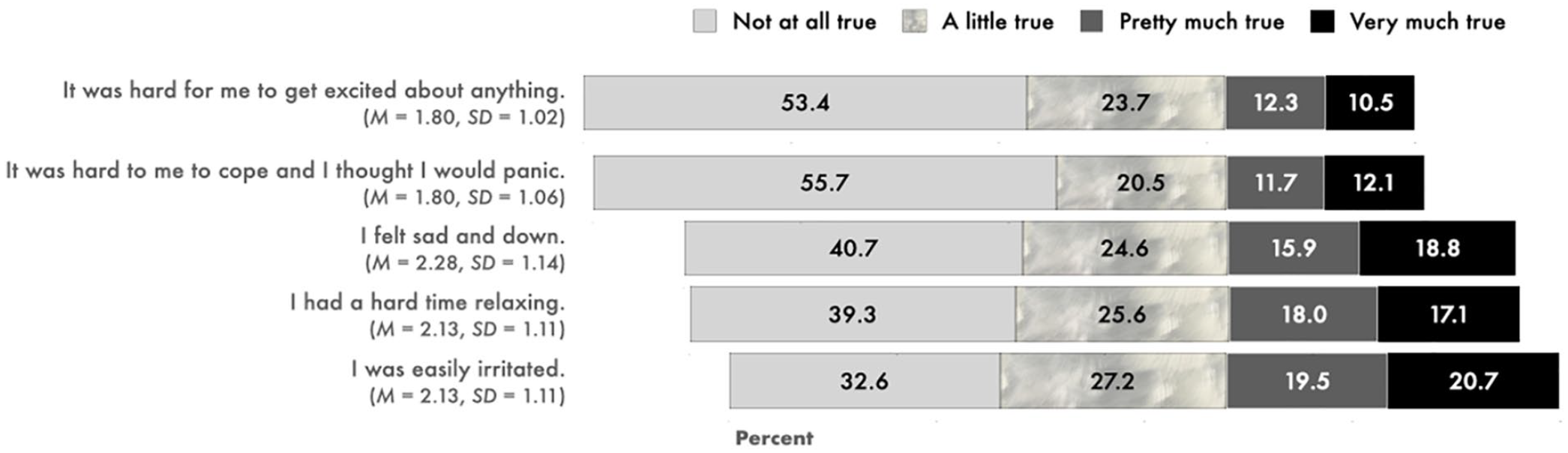

Students rated the degree to which they experienced distress symptoms in the past month (e.g., In the past month, it was hard for me to get excited about anything) on a 4-point response scale (1 = not at all true, 2 = a little true, 3 = pretty much true, and 4 = very much true). A confirmatory factor analysis (CFA) supported a unidimensional factor structure of the 10-item version with high internal consistency (α = .91; Dowdy et al., 2018). In the present study, the five-item version had comparable internal consistency for the entire sample (α = .90). See Figure 1 for SEDS-S-Brief items, category percentages, item means, and standard deviations.

Figure 1. SEDS-S-Brief Items, Category Percentages, Item Means, and Standard Deviations.
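The internal consistency coefficient (α) reported for the SEDS-S-Brief can be illustrated with a minimal sketch of Cronbach's alpha. The function name `cronbach_alpha` and the toy responses are hypothetical and are not the study data; the sketch only shows the standard formula applied to a respondents-by-items matrix of 1 to 4 ratings.

```python
from statistics import variance


def cronbach_alpha(rows):
    """Cronbach's alpha for a list of respondents, each a list of item scores."""
    n_items = len(rows[0])
    if n_items < 2 or len(rows) < 2:
        raise ValueError("need at least two items and two respondents")
    if any(len(r) != n_items for r in rows):
        raise ValueError("every respondent must answer every item")
    # Sample variance of each item across respondents.
    item_vars = [variance([r[j] for r in rows]) for j in range(n_items)]
    # Sample variance of the summed total score.
    total_var = variance([sum(r) for r in rows])
    if total_var == 0:
        raise ValueError("total scores have zero variance")
    return n_items / (n_items - 1) * (1 - sum(item_vars) / total_var)


# Hypothetical five-item responses on the 1-4 scale (illustration only).
toy = [
    [1, 1, 2, 1, 1],
    [2, 2, 2, 3, 2],
    [4, 3, 4, 4, 3],
    [1, 2, 1, 1, 2],
    [3, 3, 4, 3, 3],
    [2, 1, 2, 2, 1],
]
print(round(cronbach_alpha(toy), 2))
```

Alpha rises as items covary more strongly relative to their individual variances, which is why a short scale with uniformly high loadings can retain reliability close to that of the longer form.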

Social Emotional Health Survey–Secondary

The Social Emotional Health Survey–Secondary (SEHS-S) 2020 is a 36-item self-report measure with 12 subscales that assesses psychosocial strengths informed by social-emotional learning and positive youth development research. It was previously co-administered with the SEDS-S for use in complete mental health screening (see Furlong et al., 2022 for a description of the use of these measures in complete mental health screening). The SEHS-S-2020 was used in this study’s discriminant validity analyses to evaluate how the SEDS-S-Brief relates to measures of social-emotional health. The SEHS-S-2020 contains 12 subdomains that are associated with four correlated general positive social-emotional health domains (i.e., Belief-in-Self, Belief-in-Others, Emotional Competence, Engaged Living) and assess a higher-order covitality latent construct. The items use the same 4-point response format as the SEDS-S (1 = not at all true, 2 = a little true, 3 = pretty much true, and 4 = very much true). Students with more SEHS-S-2020 strengths report positive mental well-being and low levels of emotional risk behaviors (Furlong et al., 2020). The current sample reported high reliability (α = .95, ω = .95) for the covitality total score.

Mental Health Continuum–Short Form

The 14 items of the MHC-SF (Keyes, 2005) measure emotional (EWB), psychological (PWB), and social (SWB) well-being. Previous studies support a three-factor structure (Keyes, 2006). The MHC-SF was used in discriminant validity analyses to evaluate how the SEDS-S-Brief relates to this widely used criterion mental health indicator. The question stem is, During the past month, how often did you feel the following ways: (a) an example item for emotional well-being is . . . happy; (b) an example item for psychological well-being is . . . that you liked most parts of your personality; and (c) an example item for social well-being is . . . that society is a good place or becoming a better place for all people. Response options are 0 = never, 1 = once or twice, 2 = about once a week, 3 = 2 or 3 times a week, 4 = almost every day, and 5 = every day. Students are classified as having flourishing mental health when they respond “every day” or “almost every day” to at least one of the three EWB items and at least 6 of the 11 PWB-SWB items. Students are classified as having languishing mental health when they respond “never” or “once or twice” to at least one of the three EWB items and at least 6 of the 11 PWB-SWB items. All other response patterns are classified as moderate mental health. For Sample 2, the three subscales had high reliability (EWB α = .88, SWB α = .90, and PWB α = .92).
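The classification rule described above can be sketched in a few lines. This is an illustrative implementation under stated assumptions: the function name `classify_mhc_sf` and the argument layout (three EWB responses, then the 11 PWB-SWB responses) are hypothetical, and responses use the 0 to 5 coding given in the text.

```python
from typing import Sequence

HIGH = {4, 5}  # "almost every day", "every day"
LOW = {0, 1}   # "never", "once or twice"


def classify_mhc_sf(ewb: Sequence[int], pwb_swb: Sequence[int]) -> str:
    """Return 'flourishing', 'languishing', or 'moderate' for one student."""
    if len(ewb) != 3 or len(pwb_swb) != 11:
        raise ValueError("expected 3 EWB items and 11 PWB-SWB items")
    if any(not 0 <= r <= 5 for r in (*ewb, *pwb_swb)):
        raise ValueError("responses must be coded 0-5")

    def meets(levels) -> bool:
        # At least 1 of 3 EWB items and at least 6 of 11 PWB-SWB items.
        return (sum(r in levels for r in ewb) >= 1
                and sum(r in levels for r in pwb_swb) >= 6)

    # Both rules cannot hold at once: 6 high plus 6 low PWB-SWB
    # responses would require 12 items, and there are only 11.
    if meets(HIGH):
        return "flourishing"
    if meets(LOW):
        return "languishing"
    return "moderate"


print(classify_mhc_sf([5, 3, 3], [4] * 6 + [2] * 5))
```

Because the two criteria are mutually exclusive, the order of the checks does not affect the result; every remaining pattern falls into the moderate group.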

Brief Multidimensional Student Life Satisfaction Scale

We used the BMSLSS (Huebner et al., 2006) items that asked students to judge their overall satisfaction for five broad life domains: Friends, Family, Self, Living Environment, and School. Research supports its internal consistency among high school students (α = .81; Zullig et al., 2001). Convergent validity is documented with the comprehensive Multidimensional Students’ Life Satisfaction Scale (r = .69, Seligson et al., 2003, 2005; r = .62). Factor analyses support a single factor structure (Seligson et al., 2003, 2005). In the current study, the response options were: 0 = strongly dissatisfied, 1 = moderately dissatisfied, 2 = mildly dissatisfied, 3 = mildly satisfied, 4 = moderately satisfied, and 5 = strongly satisfied. Sum scores range from 0 to 25, with higher scores indicating greater life satisfaction. The reliability of the BMSLSS total life satisfaction score for Sample 2 was .81.

Student Life Satisfaction Item

The SLSS was designed specifically for youth ages 8 to 18 years old (Huebner, 1991). We used one item from Huebner’s (1991) SLSS to assess general, global life satisfaction: My life is going well. The 6-point response options were: 1 = strongly disagree, 2 = moderately disagree, 3 = mildly disagree, 4 = mildly agree, 5 = moderately agree, and 6 = strongly agree.

Chronic Sadness and Suicide Ideation Items

The CHKS survey included two YRBSS self-report items (Kann et al., 2018) to examine convergent validity. The items asked students (yes or no) whether, during the past 12 months: Did you ever seriously consider attempting suicide? Did you experience periods of persistent feelings of sadness or hopelessness (i.e., almost every day for 2 weeks or more in a row so that the student stopped doing some usual activities)?

Data Analysis Plan

Validity evidence based on internal structure

Using Sample 1 responses, CFA with Mplus 8.6 (Muthén & Muthén, 1998–2021) evaluated the internal structure of the SEDS-S-Brief. The CFA used a random subsample of n = 10,000 students, with items treated as categorical (1–4). Subsamples were extracted from the overall cross-sectional Sample 1 to prevent the same participants from being included in each analysis. We used a weighted least square mean and variance adjusted (WLSMV) estimator (Asparouhov & Muthén, 2010). Model fit was assessed using recommendations from the literature: a comparative fit index (CFI) > .95, root mean square error of approximation (RMSEA) < .05, and standardized root mean square residual (SRMR) < .05 indicated satisfactory model fit (Hu & Bentler, 1999).

Using Sample 1 responses, measurement invariance (MI) testing assessed whether the instrument (i.e., the SEDS-S-Brief) measures the same construct across groups (Millsap, 2012). Evaluating MI related to universal surveillance practices is essential to ensure appropriate use and assess the precision of information obtained through an instrument used across diverse groups. When established, MI increases an instrument’s generalizability, indicating that items function similarly within the factor model across different subgroups in a larger sample. Without MI, it is unclear whether observed subpopulation differences result from demographic or structural differences (Millsap, 2012). To test if the SEDS-S-Brief is invariant across various demographic subgroups, multigroup CFA examined MI for (a) sex, (b) grade level, and (c) Latinx status. This analysis used Mplus version 8.6 (Muthén & Muthén, 1998–2021) with the WLSMV estimator and unit variance identification. Using random subsamples of n = 2,500 for each subgroup (i.e., sex = male, female; grade level = 7, 9, 11; Latinx status = Latinx, non-Latinx) from the overall cross-sectional Sample 1, separate CFAs first established each subgroup’s model fit. Subsequently, multigroup CFAs evaluated MI (Chen, 2007).

Three levels of invariance were examined: (a) configural invariance (same number of factors and pattern of fixed and freely estimated parameters hold across groups); (b) metric invariance (equivalence of factor loadings indicating that respondents from multiple groups attribute the same meaning to the latent construct); and (c) scalar invariance (invariance of both factor loadings and item intercepts, indicating that the meaning of the construct and the levels of the underlying items are equal across groups). Configural invariance confirms that the model structure fits the data well for each subgroup. Metric invariance is then established if the ΔCFI < .01 and ΔRMSEA < .015 or ΔSRMR < .03 when compared with the configural model (Chen, 2007). Lastly, scalar invariance demonstrates that participants’ scores on the latent construct and observed variable will be similar across groups. Scalar invariance is confirmed when the comparison to the metric model yields a ΔCFI < .01 and ΔRMSEA < .015 or ΔSRMR < .03 (Chen, 2007). A chi-squared difference test for WLSMV was estimated to compare nested models; however, given documented concerns with the use of this difference test for invariance (see, for example, Counsell et al., 2020), we relied more on the goodness of fit indices to assess if there was evidence of MI.
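The change-in-fit decision rule stated above can be written as a small helper. This is a sketch, not the authors' procedure: the function name `supports_invariance` is hypothetical, and the grouping of the conditions as ΔCFI combined with either ΔRMSEA or ΔSRMR is an assumed reading of the cutoffs as written in the text. Absolute changes are compared so the sign convention of the difference does not matter.

```python
def supports_invariance(d_cfi: float, d_rmsea: float, d_srmr: float,
                        cfi_cut: float = 0.01,
                        rmsea_cut: float = 0.015,
                        srmr_cut: float = 0.03) -> bool:
    """Apply the change-in-fit cutoffs to one nested-model comparison.

    Assumed reading: invariance is supported when |dCFI| is below its
    cutoff AND at least one of |dRMSEA| or |dSRMR| is below its cutoff.
    """
    return abs(d_cfi) < cfi_cut and (
        abs(d_rmsea) < rmsea_cut or abs(d_srmr) < srmr_cut
    )


# Hypothetical comparisons (illustration only).
print(supports_invariance(0.001, 0.008, 0.001))  # small changes on all indices
print(supports_invariance(0.020, 0.001, 0.001))  # CFI change too large
```

The same helper applies to both steps of the sequence: configural versus metric, then metric versus scalar.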

Validity evidence based on relations to other variables

Using Sample 2 responses, receiver operating characteristic (ROC) analysis examined the association of the continuous SEDS-S-Brief score with the binary suicide ideation and chronic sadness items. A significant and substantial ROC area under the curve (AUC) finding would be a positive validity indicator (Mossman & Somoza, 1991). The MHC-SF also provided validity evidence by comparing the SEDS-S-Brief mean scores for the Languishing, Moderate Mental Health, and Flourishing groups. A finding that the mean SEDS-S-Brief score for the Languishing group was significantly higher than for the other two MHC-SF groups would provide validation evidence. Similarly, we compared the SEDS-S-Brief mean scores across the six SLSS item response options. Higher SEDS-S-Brief mean values for students responding moderately or strongly disagree would provide validation evidence. Finally, we evaluated discriminant evidence by computing the Pearson correlations of the SEDS-S-Brief total score with BMSLSS total scores and SEHS-S-2020 covitality scores. All validity analyses used Sample 2 and SPSS v28.01 and were interpreted using Cohen’s effect size conventions.

Temporal stability

The Sample 3 students answered the SEDS-S-Brief items three times (October 2019, 2020, and 2021), providing 1-year (2019–2020, 2020–2021) and 2-year (2019–2021) stability coefficients. These correlation coefficients were computed with SPSS v28.01 and interpreted using Cohen’s effect size conventions.

Results

Validity Evidence Based on Internal Structure

The CFA for the SEDS-S-Brief demonstrated good model fit, χ2(5) = 201.267, p < .001, CFI = .998, RMSEA = .063, 95% confidence interval (CI) = [.055, .070], and SRMR = .008. The standardized factor loadings for the one-factor model were all large, ranging from .84 to .91.
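As a worked check on the reported fit, the RMSEA point estimate can be recovered from the chi-square statistic with the standard single-group formula. This is a sketch under assumptions: it takes n = 10,000 from the Data Analysis Plan, the function name `rmsea` is hypothetical, and Mplus computations under WLSMV may differ in detail from this textbook formula.

```python
import math


def rmsea(chi2: float, df: int, n: int) -> float:
    """Point estimate: sqrt(max(chi2 - df, 0) / (df * (n - 1)))."""
    if df <= 0 or n <= 1:
        raise ValueError("df must be positive and n must exceed 1")
    return math.sqrt(max(chi2 - df, 0.0) / (df * (n - 1)))


# Reported values: chi-square = 201.267 with df = 5; assumed n = 10,000.
print(round(rmsea(201.267, 5, 10_000), 3))  # 0.063
```

The result matches the reported RMSEA of .063 and illustrates why a model with very high CFI can still exceed the .05 RMSEA guideline when the model has few degrees of freedom.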

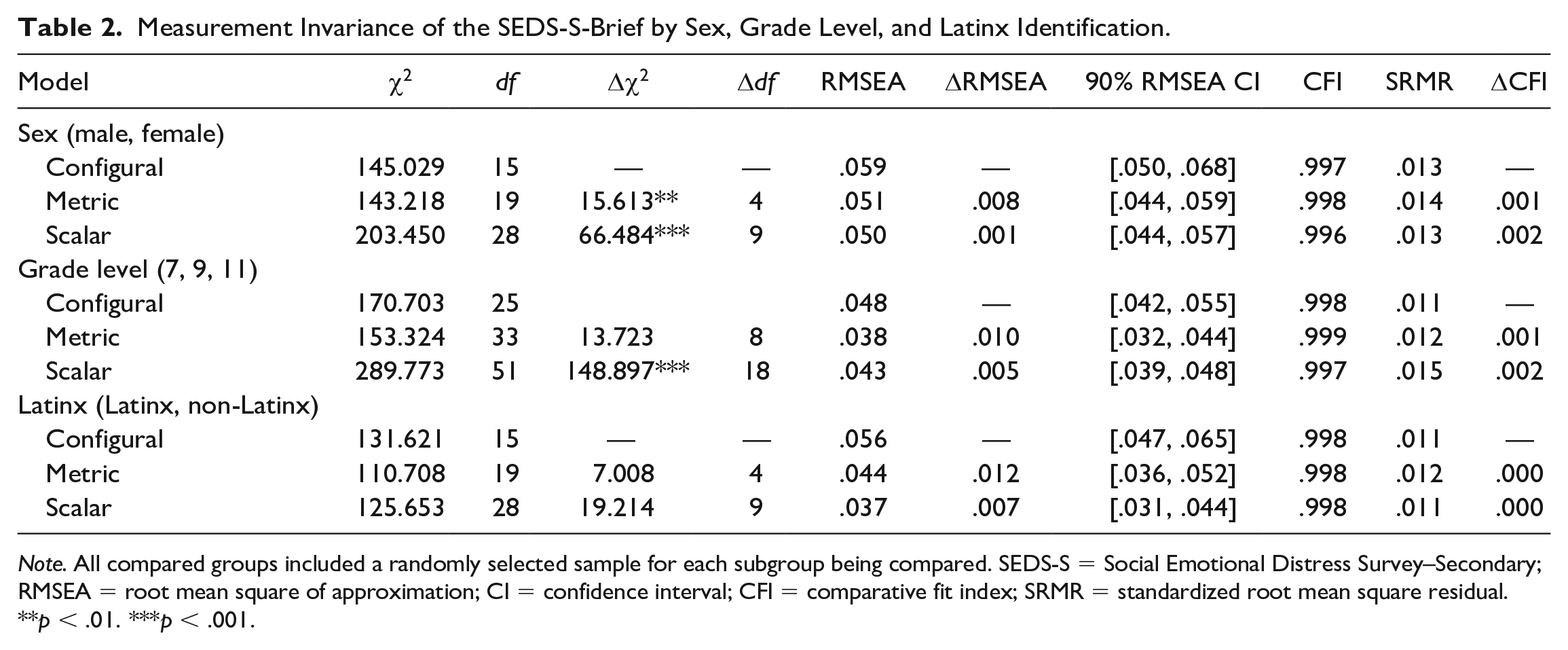

To examine measurement invariance (MI) across sex, we compared the configural CFA model to the model with metric invariance across sex. Metric invariance was established (ΔCFI = .001, ΔRMSEA = .008, ΔSRMR = .001), suggesting that constraining the factor loadings to be equal across males and females does not significantly increase model misfit. Both ΔCFI and ΔRMSEA show a minimal shift in model fit. Thus, there is evidence that metric invariance holds. Equality of the unstandardized item intercepts was then tested across groups and compared with the metric model. The scalar model fit well and did not meaningfully decrease model fit (ΔCFI = .002, ΔRMSEA = .001, ΔSRMR = .001); hence, scalar invariance was supported (see Table 2).

Table 2. Measurement Invariance of the SEDS-S-Brief by Sex, Grade Level, and Latinx Identification.

Note. All compared groups included a randomly selected sample for each subgroup being compared. SEDS-S = Social Emotional Distress Survey–Secondary; RMSEA = root mean square error of approximation; CI = confidence interval; CFI = comparative fit index; SRMR = standardized root mean square residual.

**p < .01. ***p < .001.

Next, to examine MI across grade levels, we compared the configural CFA model to the metric invariance model across three grade levels (7, 9, and 11), as these are the grades required in California for administration on a biennial basis. Metric invariance was established (ΔCFI = .001, ΔRMSEA = .01, ΔSRMR = .001), suggesting that constraining the factor loadings to be equal across grade levels does not significantly increase model misfit. Both ΔCFI and ΔRMSEA show a minimal shift in model fit. Thus, there is evidence that metric invariance holds. Equality of the unstandardized item intercepts was then tested across groups and compared with the metric model. The scalar model fit well and did not meaningfully decrease model fit (ΔCFI = .002, ΔRMSEA = .005, ΔSRMR = .003), suggesting that scalar invariance was supported with this sample when considering grade level differences.

Finally, we compared the configural CFA model to the metric invariance model across Latinx status. Evidence supported metric invariance (ΔCFI = .000, ΔRMSEA = .012, ΔSRMR = .001), suggesting that constraining the factor loadings to be equal across Latinx status does not significantly increase model misfit. Both ΔCFI and ΔRMSEA show a minimal shift in model fit. Thus, there is evidence that metric invariance holds. Equality of the unstandardized item intercepts was then tested across groups and compared with the metric model. The scalar model fit well and did not meaningfully decrease model fit (ΔCFI = .000, ΔRMSEA = .007, ΔSRMR = .001), suggesting that scalar invariance was supported with this sample when comparing Latinx and non-Latinx samples. Overall, tests for MI showed invariance across sex, grade level, and Latinx status (see Table 2).

Validity Evidence Based on Relations to Other Variables

We first examined the Sample 2 SEDS-S-Brief association with YRBSS convergent validity indicators—36.0% of the students reported past-year chronic sadness, and 18.2% reported past-year suicidal ideation. The ROC analyses showed that the SEDS-S-Brief sum value used as a binary classifier was substantially associated with chronic sadness, AUC = .850, 95% CI = [.843, .858], and suicidal ideation, AUC = .818, 95% CI = [.808, .828].
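The AUC values above can be read as the probability that a randomly chosen student who endorsed the criterion item has a higher SEDS-S-Brief sum score than a randomly chosen student who did not, with ties counted as one half. The sketch below computes AUC from that pairwise definition. The function name `roc_auc` and the toy scores are hypothetical and are not the study data; the study analyses were run in SPSS, and this pairwise loop is only practical for small illustrations.

```python
def roc_auc(scores, labels):
    """AUC as the share of (positive, negative) pairs ranked correctly.

    labels: 1 = endorsed the criterion item, 0 = did not.
    Ties between a positive and a negative score count as 0.5.
    """
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative case")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


# Hypothetical sum scores and yes/no criterion responses (illustration only).
toy_scores = [3, 8, 5, 11, 5, 0, 9, 6]
toy_labels = [0, 1, 0, 1, 1, 0, 1, 0]
print(roc_auc(toy_scores, toy_labels))  # 0.90625
```

An AUC of .50 indicates chance-level separation and 1.00 indicates perfect separation, so values above .80 reflect substantial overlap between distress scores and the criterion items.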

A second analysis contrasted the total SEDS-S-Brief scores for the three MHC-SF global well-being groups. The mean SEDS-S-Brief responses for the Languishing (L; M = 8.55, SD = 4.70, n = 1,828), Moderate Mental Health (MMH; M = 6.29, SD = 4.25, n = 3,577), and Flourishing (F; M = 3.59, SD = 3.66, n = 4,415) groups were significantly different, F(2, 9,817) = 1,061.39, p < .0001, η2 = .178 (large effect size; L > MMH > F).

To further aid interpretation of the SEDS-S-Brief values, a third analysis examined the mean SEDS-S-Brief values by response options for the SLSS item My life is going well. The mean SEDS-S-Brief values were significantly different across the six response options: strongly disagree (SD; M = 9.12, SD = 5.31, n = 433), moderately disagree (ModD; M = 9.85, SD = 4.10, n = 543), mildly disagree (MD; M = 8.90, SD = 4.00, n = 788), mildly agree (MA; M = 7.21, SD = 4.02, n = 2,020), moderately agree (ModA; M = 4.68, SD = 3.74, n = 3,144), and strongly agree (SA; M = 2.82, SD = 3.40, n = 2,671), F(5, 9,593) = 709.35, p < .0001, η2 = .27 (large effect size; ModD > SD, MD > MA > ModA > SA).
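For a one-way ANOVA, η2 can be recovered directly from the F statistic and its degrees of freedom, which offers a quick consistency check on the two effect sizes reported above. The function name `eta_squared_from_f` is hypothetical; the identity η2 = (F × df_between) / (F × df_between + df_within) is standard for one-way designs.

```python
def eta_squared_from_f(f: float, df_between: int, df_within: int) -> float:
    """Eta squared for a one-way ANOVA, from F and its degrees of freedom."""
    if f < 0 or df_between <= 0 or df_within <= 0:
        raise ValueError("F must be non-negative and dfs must be positive")
    return (f * df_between) / (f * df_between + df_within)


# MHC-SF group comparison: F(2, 9817) = 1061.39
print(round(eta_squared_from_f(1061.39, 2, 9817), 3))  # 0.178
# SLSS response-option comparison: F(5, 9593) = 709.35
print(round(eta_squared_from_f(709.35, 5, 9593), 2))   # 0.27
```

Both values agree with the reported effect sizes, and the within-group degrees of freedom equal the summed group sizes minus the number of groups in each analysis.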

To evaluate discriminant validity, we examined associations between the SEDS-S-Brief total score and measures of positive psychological states. The correlations with the total SEHS-S-2020 covitality score (r = −.32) and the total BMSLSS life satisfaction score (r = −.47) were significant and in the expected negative direction.

Temporal Stability

The 1-year (2018–2019: r = .55, 95% CI = [.49, .60]; 2020–2021: r = .60, 95% CI = [.55, .64]) and 2-year (2019–2021: r = .49, 95% CI = [.43, .54]) coefficients indicated that the Sample 3 student responses were moderately stable, even over a 2-year period. The 1-year coefficients represented large effect size associations, and the 2-year coefficient represented a moderate effect size association.
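For readers who wish to approximate confidence intervals of this kind, the sketch below uses the Fisher z transformation, one common approach for a Pearson correlation. Whether this method was used for the intervals above is an assumption, and the pairwise sample size for each wave comparison is not reported in this section, so `HYPOTHETICAL_N` is a placeholder and the output should not be expected to reproduce the reported bounds exactly.

```python
import math


def fisher_ci(r, n, z_crit=1.959964):
    """Approximate 95% CI for a Pearson r via the Fisher z transformation."""
    if n <= 3 or not -1.0 < r < 1.0:
        raise ValueError("requires n > 3 and -1 < r < 1")
    z = math.atanh(r)                # transform r to the z scale
    se = 1.0 / math.sqrt(n - 3)      # standard error on the z scale
    return math.tanh(z - z_crit * se), math.tanh(z + z_crit * se)


# ASSUMPTION: placeholder pairwise n; the actual n per wave pair is not given here.
HYPOTHETICAL_N = 700

lower, upper = fisher_ci(0.55, HYPOTHETICAL_N)
print(f"r = .55, n = {HYPOTHETICAL_N}: 95% CI = [{lower:.2f}, {upper:.2f}]")
```

Because the interval is built on the z scale and transformed back, it is slightly asymmetric around r, which is consistent with the asymmetric bounds reported above.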

Discussion

As more adolescents experience mental health disorders (Whitney & Peterson, 2019), mental wellness programs created for them must be informed by comprehensive prevalence information. Additionally, there is a strong need for short measures used in epidemiological efforts to assess and monitor youth’s mental health. The school-based mental health literature indicates that universal screening enhances early prevention and intervention efforts, and brief surveillance measures can enhance a school’s ability to monitor well-being trends (Furlong et al., 2020). The current study supported the cross-cultural use of the shortened five-item SEDS-S-Brief for epidemiological surveillance use within school contexts.

Considering Sample 1’s size and data collection context, we expected that the unidimensional distress model would hold for various contexts and sociocultural groups. Calibration, validation, and MI CFA results robustly replicated the original longer form’s single-factor structure. Initial CFAs for each group and subgroup had satisfactory model fit. Invariance testing indicated that SEDS-S-Brief items measured the psychological distress construct similarly across demographic subpopulations, which is critical to support schoolwide surveillance efforts in which all students are included. This finding parallels the cross-group MI we have previously reported for the SEHS-S-2020 (Furlong et al., 2020), a strength-grounded measure included in the CHKS. This finding has practical implications for the applicability and utility of the SEDS-S-Brief, especially as it relates to population-level complete mental health surveillance using measures of personal strengths (e.g., SEHS-S-2020) and distress (e.g., SEDS-S-Brief). Accordingly, the SEDS-S-Brief may be used among diverse students in general and in varied contexts, which can help researchers and practitioners evaluate how sex, grade level, race/ethnicity, and other diversity considerations play a role in mental health differences.

Examining validity evidence related to other variables with Sample 2 is also essential to support the SEDS-S-Brief for schoolwide surveillance efforts. Similar to the initial study of the longer SEDS-S version (Dowdy et al., 2018), the briefer version is meaningfully related to other indicators of distress and well-being. In particular, as measured on the SEDS-S-Brief, distress is related in anticipated ways to life outcomes, including the YRBSS chronic sadness and suicidal ideation items routinely included in national and state surveillance efforts. The SEDS-S-Brief distress items provide information on student functioning to inform prevention and wellness programming. Additionally, student responses with higher levels of distress were also associated with lower levels of overall mental health, with students more likely to be categorized as Languishing than Flourishing and more likely to report lower levels of life satisfaction and personal strengths.

We also examined the stability of the SEDS-S-Brief. The SEDS-S-Brief items ask students to reflect and comment on their past month’s distressing emotional experiences, thereby assessing a student’s emotional experiences that might fluctuate in response to changing life circumstances. Since adolescence is a developmental stage associated with growth, change, and adaptation, one might expect SEDS-S-Brief responses to be more transient. The moderate temporal stability we found with Sample 3 probably does not mean that the SEDS-S-Brief measures a trait-like construct. Instead, it could be that the SEDS-S-Brief items function as intended; that is, the moderate stability is an indicator that the items ask students about non-pathological distressing experiences, ones that adolescents commonly experience. Findings support its use in surveillance efforts. We note that previous research examining the stability of adolescents’ emotions with the Positive and Negative Affect Schedule reported stability coefficients of .47 to .68 for positive affect and .39 to .71 for negative affect (Watson & Levin-Aspenson, 2018). Another possible factor is that, in 2020, the students were attending school virtually because of the COVID-19 pandemic. In the 2021 survey, they had been in regular school attendance for less than 2 months. The challenge of distance learning and coping with the pandemic might have heightened student distress, making SEDS-S-Brief responses similar even over a more extended period. Future research examining stability following remote learning and changing contexts due to the pandemic is warranted.

Implications for Schoolwide or Statewide Wellness Surveillance

Most surveillance surveys use individual items. A notable exception illustrating how a brief psychometrically sound measure can influence youth wellness surveillance is the School Connectedness Scale (SCS) from the Adolescent Longitudinal Health Study (Resnick et al., 1997). Eventually formatted as a five-item scale with strong psychometric evidence (Furlong et al., 2011; Libbey, 2004), the SCS provides an important school belonging indicator included in statewide surveillance surveys (e.g., CHKS). With this information, the Centers for Disease Control and Prevention created practical resources supporting school and community efforts to foster students’ human bonds at school (https://bit.ly/3nXCw0c). The SEDS-S-Brief items offer a similar potential advantage for universal wellness surveillance of a student distress construct. The item wording is not linked directly to mental illness diagnosis because it asks about emotional discomfort, which all people can experience. This item wording is optimal for a universal surveillance survey because it increases the relevance of the items for all students, emphasizing student adaptation, coping, and wellness.

Compared with individual surveillance items (e.g., chronic sadness and suicidal ideation), the SEDS-S-Brief scale is arguably more reliable and has testable psychometric properties. Additionally, researchers can use it in analyses that use robust latent variables and a general school climate indicator (Aldridge et al., 2016).

Limitations

A limitation of the current study is that the samples were limited to California high school students. Consequently, while the findings are promising, they warrant further investigation across other regions within the United States and beyond. Demographic information on who consented to participate versus who did not provide consent was not available; this did not allow for examining selection bias within the sample. We also recognize many additional areas for fruitful evaluation that will further inform the appropriate use of this measure across students with various intersecting identities. The United States boasts significant diversity, far beyond what was examined in this study by categorizing students as Latinx or not. Even within the Latinx community, there is significant diversity, and additional studies are needed to ensure that mental health surveillance tools are appropriate and sensitive for youth from various racial/ethnic backgrounds and intersecting identities (Galindo, 2021). To eliminate disparities in students’ service access, educators require access to carefully designed and thoroughly examined surveillance measures. Most importantly, surveillance measurement researchers must improve their examination of MI and conception beyond simple categorization based on race or ethnicity. With the recent adoption of the SEDS-S-Brief into the California Healthy Kids Survey with its large samples, we will be able to perform more granular analyses of its psychometric properties, respecting students’ diverse and intersecting identities. Also, since this surveillance measure was administered as part of the CHKS procedures, improved questions related to gender identity, as opposed to sex, have become available; it will be critical to evaluate the MI of the SEDS-S-Brief across gender. Additionally, a thorough investigation into the psychometric properties of this measure translated into other languages will be foundational prior to recommended use and interpretation.

Nevertheless, considering the current study’s large, diverse sample, the analyses provided initial validity evidence based on internal structure and comparability of the SEDS-S-Brief across sociocultural groups. Furthermore, the study expands the literature base supporting the measure’s use to monitor students’ distress as a part of different universal surveillance programs.

Conclusion

Considering that the five-item SEDS-S-Brief will be used annually with over 250,000 students across California schools, the current study’s findings provide crucial support for the ongoing use of the SEDS-S-Brief across diverse student subgroups. Beyond California, the results advance the field toward considering enhanced ways to engage in surveillance for youth’s mental health in direct response to the U.S. Surgeon General’s call to action to protect youth’s mental health.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The research reported here was supported in part by the Institute of Education Sciences, U.S. Department of Education, through Grant # R305A160157 to the University of California, Santa Barbara. The opinions expressed are those of the authors and do not represent views of the Institute of Education Sciences or the U.S. Department of Education.