Abstract

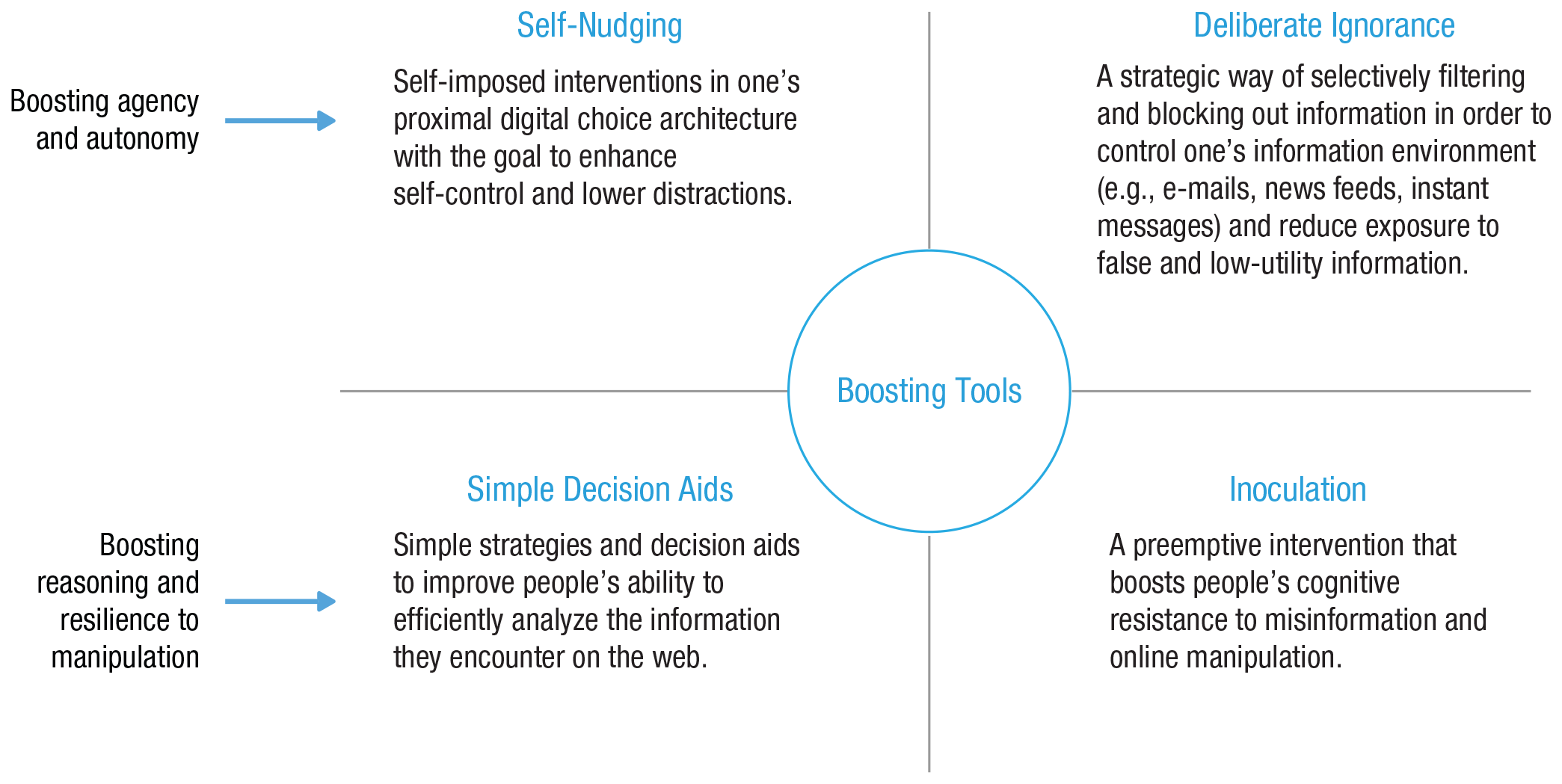

The Internet has evolved into a ubiquitous and indispensable digital environment in which people communicate, seek information, and make decisions. Despite offering various benefits, online environments are also replete with smart, highly adaptive choice architectures designed primarily to maximize commercial interests, capture and sustain users’ attention, monetize user data, and predict and influence future behavior. This online landscape holds multiple negative consequences for society, such as a decline in human autonomy, rising incivility in online conversation, the facilitation of political extremism, and the spread of disinformation. Benevolent choice architects working with regulators may curb the worst excesses of manipulative choice architectures, yet the strategic advantages, resources, and data remain with commercial players. One way to address some of this imbalance is with interventions that empower Internet users to gain some control over their digital environments, in part by boosting their information literacy and their cognitive resistance to manipulation. Our goal is to present a conceptual map of interventions that are based on insights from psychological science. We begin by systematically outlining how online and offline environments differ despite being increasingly inextricable. We then identify four major types of challenges that users encounter in online environments: persuasive and manipulative choice architectures, AI-assisted information architectures, false and misleading information, and distracting environments. Next, we turn to how psychological science can inform interventions to counteract these challenges of the digital world. After distinguishing among three types of behavioral and cognitive interventions—nudges, technocognition, and boosts—we focus on boosts, of which we identify two main groups: (a) those aimed at enhancing people’s agency in their digital environments (e.g., self-nudging, deliberate ignorance) and (b) those aimed at boosting competencies of reasoning and resilience to manipulation (e.g., simple decision aids, inoculation). These cognitive tools are designed to foster the civility of online discourse and protect reason and human autonomy against manipulative choice architectures, attention-grabbing techniques, and the spread of false information.

Keywords

In 1969, the year Neil Armstrong became the first person to walk on the moon, the precursor to the Internet—then known as ARPANET—was brought online. The first host-to-host message was sent from a computer at the University of California, Los Angeles, to a computer at Stanford University, and it read “lo”: The network crashed before the full message, “login,” could be transmitted. Fast forward half a century from this first step into cyberspace, and the Internet has evolved into a ubiquitous global digital environment, populated by more than 4.5 billion people and entrenched in many aspects of their professional, public, and private lives.

The Role and Responsibility of Psychological Science in the Digital Age

The evolution of digital technologies has given rise to possibilities that were largely inconceivable in 1969, such as instant worldwide communication, mostly unfettered and constant access to information, democratized production and dissemination of information and digital content, and the ability to coordinate global political movements. The COVID-19 pandemic and resulting lockdowns serve as a striking example of just how indispensable the Internet has become to the global economy as well as to citizens’ well-being and livelihood. With much of the world stuck at home, the Internet is one of the most important tools for connecting with others, finding entertainment and information, and learning and working from home. But as the popular adage goes, there is no such thing as a free lunch. Digital technology has also introduced challenges that imperil the well-being of individuals and the functioning of democratic societies, such as the rapid spread of false information and online manipulation of public opinion (e.g., Bradshaw & Howard, 2019; Kelly et al., 2017), as well as new forms of social malpractice, such as cyberbullying (Kowalski et al., 2014) and online incivility (A. A. Anderson et al., 2014). Moreover, the Internet is no longer an unconstrained and independent cyberspace but, notwithstanding appearances, a highly controlled environment. Online, whether people are accessing information through search engines or social media, their access is regulated by algorithms and design choices made by corporations in pursuit of profits and with little transparency or public oversight. Government control over the Internet is largely limited to authoritarian regimes (e.g., China, Russia); in democratic countries, technology companies have accumulated unprecedented resources, market advantages, and control over people’s data and access to information (Zuboff, 2019).

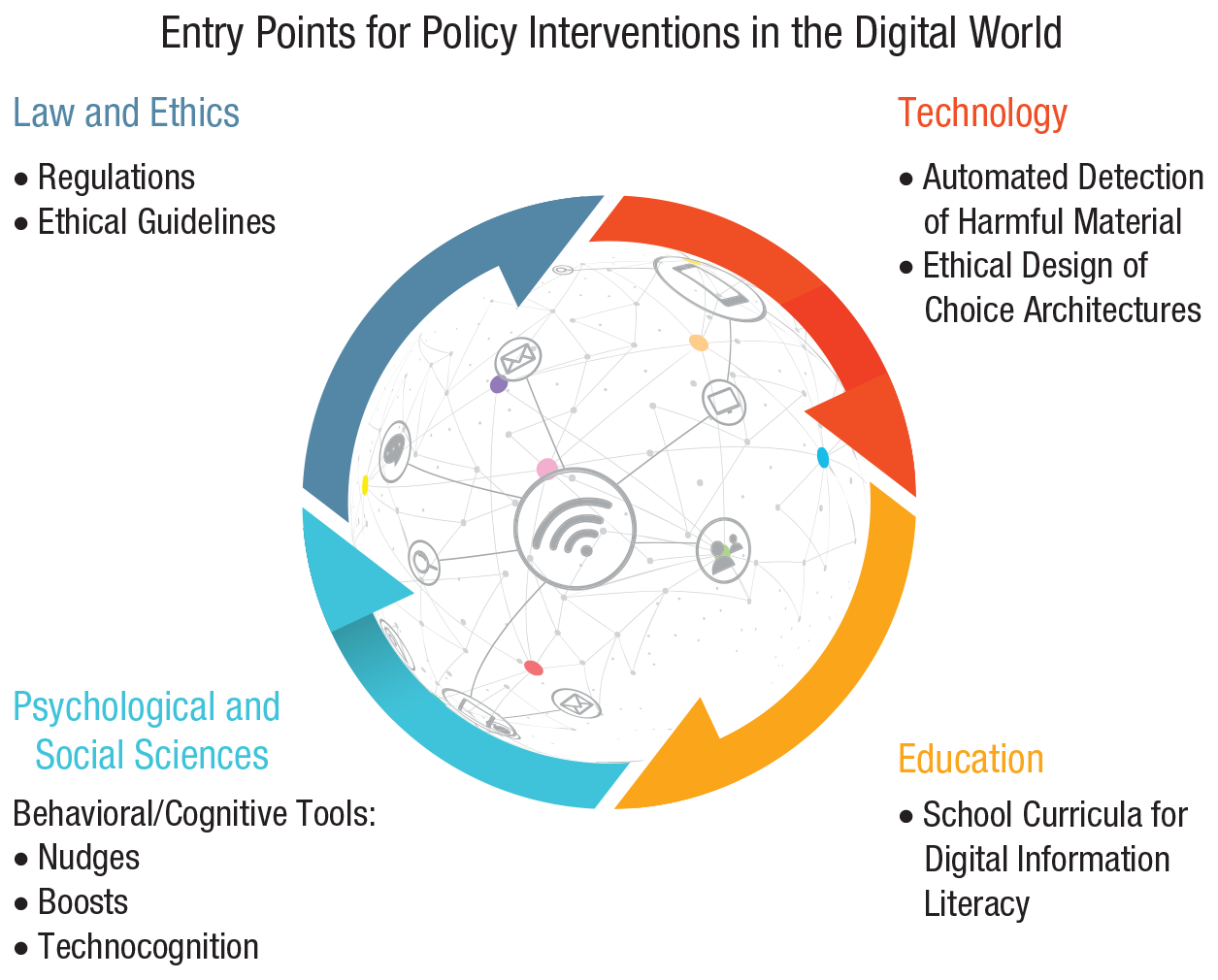

This hidden commercial regulation has been brought into sharp focus by several scandals implicating the social-media giant Facebook in unethical dealings with people’s data (“The Cambridge analytica files,” 2018). Regulators and the general public have awakened to the extent to which digital technologies and tech companies can infringe on people’s privacy and control access to information. Furthermore, these scandals have revealed the manipulative power of techniques such as “dark ads” (advertising messages that are visible only to those who are targeted by them) and microtargeting (customizing advertisements to particular individuals), which are meant to influence people’s decision-making and voting behavior, by exploiting their psychological vulnerabilities and personal identities (e.g., Matz et al., 2017). There is clearly no panacea for solving these problems. Instead, there are multiple entry points for addressing the existing and emerging challenges (Fig. 1; see also Lazer et al., 2018). We argue that psychological science is indispensable in the analysis of the key challenges to human cognition and decision-making in the online world but also in the design of ways to respond to them.

Entry points for policy interventions in the digital world: legal and ethical, technological, educational, and socio-psychological. Each entry point is shown with examples of potential policy measures and interventions. Entry points can inform each other; for instance, an understanding of psychological processes can contribute to the design of interventions for any entry point, and regulatory solutions can directly constrain and inform the design of technological and educational agendas. Icons are used under license from Adobe Stock.

The first entry point for interventions comes from the normative realm of law and ethics; this includes legislative regulations and ethical guidelines—for example, ethics guidelines for trustworthy AI by the High-Level Expert Group on Artificial Intelligence (2019) or the European Union (EU) Code of Practice on Disinformation (European Commission, 2018); for an overview of misinformation legislative actions worldwide, see also Funke and Flamini (2019). Regulatory interventions can, for instance, introduce transparent rules for data protection (e.g., the EU’s General Data Protection Regulation [GDPR]; European Parliament, 2016) or for political campaigning on social media and can impose significant costs for violating them; they can also implement serious incentives (and disincentives) for tech firms and the media to ensure that shared information is reliable and online conversation is civil. Regulatory initiatives should strive to create a coherent user-protection framework instead of the fragmentary legislative landscape currently in place (e.g., for Germany and the EU, see Jaursch, 2019). 1

The second entry point for interventions is technological: Structural solutions are introduced into online architectures to mitigate adverse social consequences. For example, social-media platforms can take technological measures to remove fake and automated accounts, ensure transparency in political advertisement, and detect and limit the spread of false news using automated or outsourced fact-checking (e.g., Harbath & Chakrabarti, 2019; G. Rosen et al. 2019). However, such measures are mainly self-regulatory, depend heavily on the company’s good will, and are often introduced only after considerable public, political, and regulatory pressure.

The third entry point for interventions is educational. These interventions are directed at the public as recipients and producers of information—for example, school curricula for digital-information literacy that teach students how to search, filter, evaluate, and manage data, information, and digital content (e.g., Breakstone et al., 2018; McGrew et al., 2019).

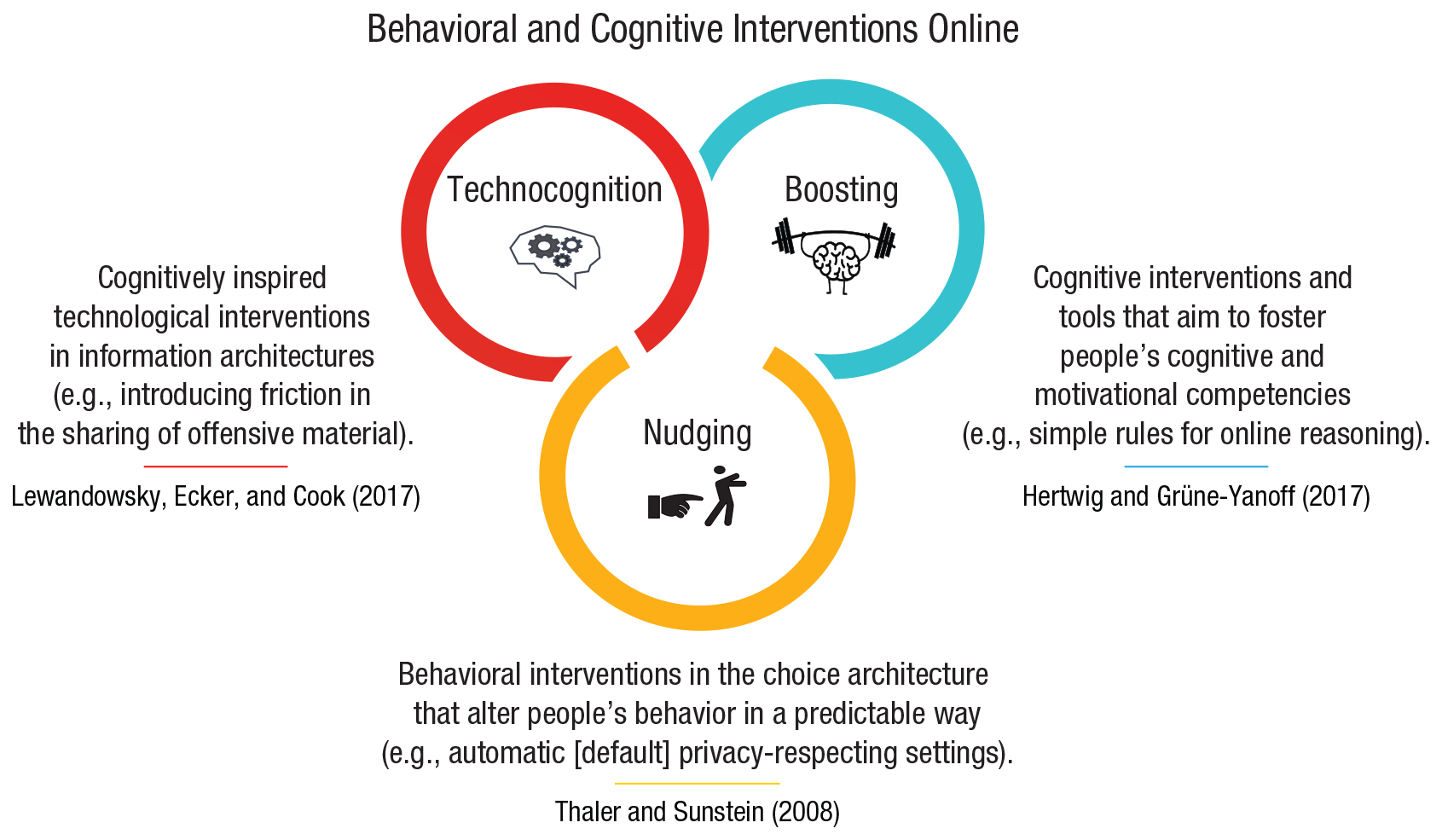

Finally, the fourth entry point for interventions comes from psychological and social sciences and includes behavioral and cognitive interventions: Here, nonregulatory, nonmonetary policy measures are implemented to empower people and steer their decision-making toward greater individual and public good. In online behavioral and cognitive policy making, which is the main focus of this article, there are three notable approaches to designing interventions. The first is

These four entry points for interventions—coming from law, technology, education, and psychological science—are interrelated, and they can and should inform each other. For example, regulations on the ethical design of digital technologies should inform technological, educational, and behavioral interventions. Moreover, understanding psychological processes is essential for all four approaches; for instance, behavioral and cognitive insights can be useful for designing both educational and technological tools as well as regulatory interventions.

In this article we are concerned specifically with behavioral and cognitive interventions. Our main aim is to identify key challenges to people’s cognition and behavior in online environments and then to present a conceptual map of our preferred cognitive intervention: boosting. We focus on boosts for several reasons. First, we hold that the philosophy of the Internet is one of empowerment (e.g., Deibert, 2019; Diamond, 2010; Khazaeli & Stockemer, 2013). This is reflected in the EU’s approach, which highlights citizen empowerment as a goal of European digital policy (European Commission, 2020). The president of the European Commission echoed this sentiment, stating that “Europe’s digital transition must protect and empower citizens, businesses and society as a whole” (von der Leyen, 2020, para. 11). Second, although the call to increase people’s ability to deal with the challenges of online environments is growing louder (e.g., Directorate-General for Communications Networks, Content and Technology, 2018; Lazer et al., 2018), there has been no systematic account of interventions based on insights from psychological science that could form the foundation of future efforts. Third, the Internet is a barely constrained playground for commercial policy makers and choice architects acting in accordance with financial interests; in terms of power and resources, benevolent choice architects in the public sector are at a significant disadvantage. It is therefore crucial to ensure that psychological and behavioral sciences are employed not to manipulate users for financial gain but instead to empower the public to detect and resist manipulation. Finally, and crucially, boosts are probably the least paternalistic measures in the toolbox of public-policy makers and potentially the most resilient in the face of rapid technological change, in that they aim to foster lasting and generalizable competencies in users.

We begin by comparing online and offline environments to prepare the ground for considering the impact that new digital environments have on human cognition and behavior (Systematic Differences Between Online and Offline Environments section). Second, we consider the challenges that people encounter in the digital world and show how they affect users’ cognitive and motivational abilities. We distinguish four types of challenges: persuasive and manipulative choice architectures, AI-assisted information architectures, false and misleading information, and distracting environments (Challenges in Online Environments section). Third, we turn to the question of how to counteract these challenges. We briefly review the types of behavioral and cognitive interventions that can be applied to the digital world (Behavioral Inventions Online: Nudging, Technocognition, and Boosting section). We then identify four types of cognitive tools:

Systematic Differences Between Online and Offline Environments

The Internet and the devices people use to access it represent not just new technological achievements but also entirely new artificial environments. Much like people’s physical surroundings, these are environments in which people spend time, communicate with each other, search for information, and make decisions. Yet the digital world is a recent phenomenon: The Internet is 50 years old, the Web is 30, and the advanced social Web is merely 15 (for definitions, see Table 1). New adjustments and features are added to these environments on a continuous basis, making it nearly impossible for most users, let alone regulators, to keep abreast of the inner workings of their digital surroundings.

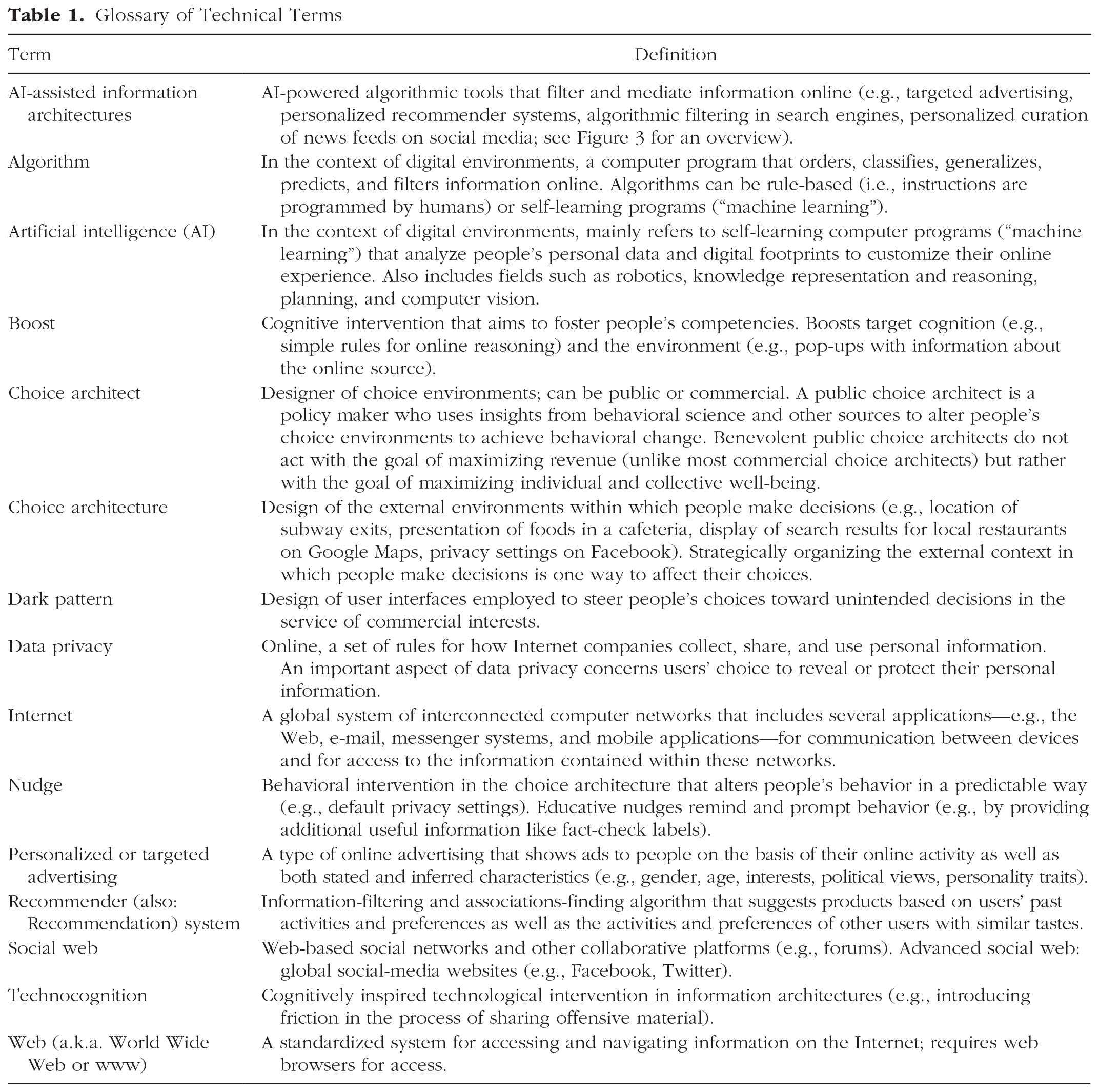

Glossary of Technical Terms

Online reality tends to be seen as different from the physical world, and computer-mediated social activities are often described as inferior substitutes for real-life or face-to-face interactions (for an overview, see Green & Clark, 2015). However, this presumed dualism between online and offline worlds is becoming more problematic—and possibly obsolete—as the line separating the two environments continues to blur. The ubiquitous nature of computing 2 and the integration of digital devices and services into material objects (e.g., cars) and actions in the physical world (e.g., navigation) make it difficult to delineate when one is truly online or offline—a phenomenon that Floridi (2014) called the “onlife experience” (p. 43). This effect is highly visible in computerized work environments, where more and more of people’s working time is spent online. According to a report by the European Commission (2017), the use of digital technologies has increased significantly in the past 5 years in more than 90% of workplaces in the EU, and most jobs now require at least basic computer skills.

That said, the digital world differs from its offline counterpart in ways that have important consequences for people’s online experiences and behavior. We will proceed by outlining several ways in which online ecologies do not resemble offline environments. A systematic understanding is required not only to fill the gaps in knowledge of the psychologically relevant aspects of the digital world but also to ensure that psychological interventions take into account the specifics of these new environments and the particular challenges that people are likely to face there. First steps have already been made. Marsh and Rajaram (2019) identified 10 properties of the Internet—including accessibility, unlimited scope, rapidly changing content, and inaccurate information—that they organized into three categories: (a) content (what information is available), (b) Internet usage (how information is accessed), and (c) the people and communities that create and spread the content (who drives information). They argued that these properties can affect cognitive functions, such as short-term and long-term memory, and reading, and have an effect on social influence online. Other relevant classifications summarizing the differences between online and offline environments in the context of social media include those provided by McFarland and Ployhart (2015) 3 and Meshi et al. (2015). 4

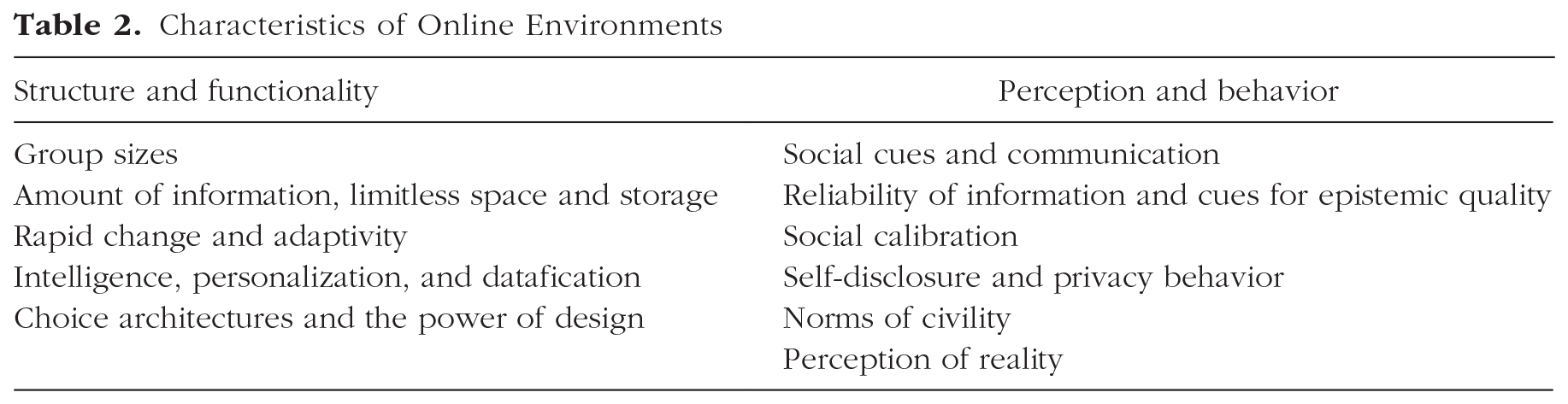

We expand on these classifications by focusing on two broad types of differences between online and offline ecologies: differences in structure and functionality and differences in perception and behavior (i.e., how people perceive the online and offline worlds and how their behavior might differ accordingly). A list of characteristics of online environments can be found in Table 2, which is followed by a detailed discussion of each characteristic.

Characteristics of Online Environments

Differences in structure and functionality

Group sizes

In 2020, there are more than 4.6 billion people (or almost 60% of the global population) and around 30 billion devices connected to the Internet. 5 Digital technologies have changed the public sphere, connecting people separated in both time and space and creating the “digital public” (Bunz, 2014). Indeed, one of the predominant uses of the Internet is for communication. The social Web boasts impressive numbers of users: In the third quarter of 2020, Facebook alone had 2.7 billion active monthly users (Statista, 2020c), and the Chinese WeChat more than 1.2 billion (Statista, 2020d). According to Our World in Data, “social media platforms are used by one-in-three people in the world, and more than two-thirds of all Internet users” (Ortiz-Ospina, 2019, para. 2). Online, one can broadcast a message to audiences of millions, whereas in face-to-face communication, there are physical limits to how many people can join a conversation (Barasch & Berger, 2014). Yet even though social media enables people to establish larger social networks and profit from greater global connectivity, the structures of social circles online and the number of close friends people have online do not significantly differ from their offline counterparts (Dunbar et al., 2015). 6 In online social networks, the average number of friends (between 100 and 200) as well as the number of friends who are considered to belong to the two closest circles (typically around five and 15, respectively) do not differ from the values for offline inner circles (Dunbar, 2016; Dunbar et al., 2015). This suggests that the cognitive and temporal constraints that “limit face-to-face networks are not fully circumvented by online environments” (Dunbar, 2016, p. 7).

Amount of information, limitless space and storage

Digital environments are not subject to the same constraints on information proliferation and storage found in physical surroundings. Online space is virtually limitless, contains several layers (e.g., surface Web and dark Web), and can grow at a high pace. Consider that when Sergey Brin and Larry Page launched Google in 1998, they archived 25 million individual pages. In 2013 that number had grown to 30 trillion and, by 2016, had reached more than 130 trillion (Schwartz, 2016). At the time of this writing in the second quarter of 2020, there were 1.8 billion websites on the Internet and approximately 4.5 billion Google searches a day. 7 Moreover, the potential for speed and scope of information propagation is much higher online, where the same message can be effortlessly and immediately copied to reach vast audiences. For example, the most shared tweet to date 8 reached 4.5 million retweets, most of which happened in the 24 hr after the initial posting. New technologies have systems made for processing and storing information superior to any previously available systems (Clowes, 2013). This feature of digital technology also implies that information does not have an expiration date and can be stored more or less indefinitely—a situation that prompted the EU to establish what is commonly referred to as the “right to be forgotten,” which provides European citizens with a legal mechanism for ordering the removal of their personal data from online databases (European Parliament, 2016, Article 17).

Rapid change and adaptivity

Digital environments develop at a high rate, especially compared with most offline environments. The document-based Web 1.0 was replaced by the more interactive Web 2.0 in the beginning of the 2000s, and an increasingly more sophisticated and AI-powered Web of data is being introduced (Aghaei et al., 2012; Fuchs et al., 2010). Online content can be added, removed, or changed in seconds, and digital architectures can rapidly adapt to new demands and challenges. Even small changes in the structure of online architectures can have major societal consequences: For example, introducing some friction into the process of sharing information (i.e., increasing the investment in time, effort, or money required to access or spread information) can significantly decrease the likelihood of citizens engaging with the affected sources, as the Chinese government’s attempt to manage and censor information shows (see Roberts, 2018). Clicks, likes, and other types of social information shared online—as insignificant as they may seem individually—can collectively amount to sizable changes (e.g., for election results, which can in some cases be decided by just a few votes). For example, in a large-scale experiment on their users’ newsfeeds, Facebook showed that including social information in an “I voted” button (in this case, displaying faces of friends who had clicked on the button) affected both click rates and real-world voting—people who saw social information were 2.08% more likely to click on the button compared with those who saw nonsocial information, and they were 0.39% more likely to vote than were people who saw an informational message or no message at all—suggesting that social signals from friends on social networks (especially close friends) contributed to the overall effect of the message on people’s voting behavior (Bond et al., 2012).

Intelligence, personalization, and datafication

The latest developments in the evolution of the Internet increasingly depend on datafication (the transformation of many aspects of the world and people’s lives into data 9 ) and mediation of content by algorithms and other intelligent technologies (we expand on this in the AI-Assisted Information Architectures section). Increasing datafication leads to increasing surveillance and control over people’s information diets (Zuboff, 2019), and rapidly developing machine-intelligence technology spurs a gradual relinquishing of both public control and transparency surrounding the technology. For example, search engines and recommender systems (e.g., video suggestions on YouTube) routinely rely on machine-learning systems that outperform humans in many respects (e.g., RankBrain in Google). Such algorithms are both complex and nontransparent—sometimes for designers and users alike (Burrell, 2016). The opacity of machine-learning algorithms stems from their autonomous and self-learning character: They are given input and produce output, but the exact processes that generate these outputs are hard to interpret. This has led some to describe these algorithms as “black boxes” (Rahwan et al., 2019; Voosen, 2017). Modern-day online environments, unlike their offline counterparts, possess autonomous intelligence—be it purely domain-specific machine intelligence, crowdsourced human intelligence, or a powerful combination of both.

Choice architectures and the power of design

Another feature that distinguishes online environments from physical surroundings is the ubiquity and the power of the design that mediates people’s online experience. The design of an interface in which people encounter the complexity of interconnected information online—the “human interface” (Berners-Lee et al., 1992)—presupposes that it has a decisive role in how people perceive the information presented. In other words, there is no Internet without ubiquitous

Differences in perception and behavior

Differences between online and offline environments are to be found not only in their structural characteristics and functionality but also in people’s perception of them and the way their behavior might change online in light of these perceptions.

Social cues and communication

Online communication differs from face-to-face communication in several ways, including the potential for anonymity and asynchronicity, the ability to broadcast to multiple audiences, and the availability of audience feedback (Misoch, 2015). Another characteristic of online communication that was emphasized in early research into Internet communication concerns the lack of nonverbal or physical cues—such as body language or vocal expressivity—that are important for conveying and understanding emotion in face-to-face communication. This raised concerns that increased use of computer-mediated communication would lead to impoverished social interaction (the reduced-social-cues model; e.g., Kiesler et al., 1984). However, it has now been recognized that users adapt to the medium and substitute the lack of nonverbal cues in digital communication with other verbal cues, thereby achieving equal levels of affective content (Walther et al., 2005, 2015). Online environments also contribute to the development of social cues, offering additional nonverbal cues such as emoticons, “likes,” and shares to enrich online communication. However, social cues can mean different things to users and platforms: To a user, a “like” button signifies appreciation or attention; to a tech firm, it is a useful data point. In addition, digital social cues can leak more information—and more sensitive information—than people intend to share (e.g., sexual orientation, personality traits, political views), including information that can be exploited to psychologically target and manipulate users (Kosinski et al., 2013; Matz et al., 2017).

Reliability of information and cues for epistemic quality

Information available online often lacks not only the typical social cues found in face-to-face interaction but also the cues to its epistemic quality that are generally available offline, such as an indication of sources or authorship. One reason for this is that the Internet—“an environment of information abundance”—is no longer subject to traditional filtering through professional gatekeepers (Metzger & Flanagin, 2015, p. 447). Modern-day digital media replaces expert gatekeeping with either crowdsourced gatekeeping (e.g., Wikipedia) or automated gatekeeping (e.g., algorithms on social media; Tufekci, 2015). Although some online platforms deliberately construct information ecosystems that favor indicators of quality (e.g., references to sources, fact-checking) and have rules for content creation (e.g., Logan et al., 2010), much of the content shared on social networks and online blogs does not give users sufficient cues to judge its reliability. Allcott and Gentzkow (2017) pointed out that because of the low costs of producing content, information online can be relayed among users with no significant third party filtering, fact-checking, or editorial judgment. An individual user with no track record or reputation can in some cases reach as many readers as Fox News, CNN, or

Moreover, social-media platforms contribute to the phenomenon of “snack news”—“a news format that briefly addresses a news topic with no more than a headline, a short teaser, and a picture” (Schäfer, 2020, p. 1). Schäfer (2020) argued that frequent exposure to snack news can indirectly lead to the formation of strong convictions that are based on an illusory feeling of being informed. This phenomenon is not rare; an analysis by Bakshy et al. (2015) of 10.1 million Facebook profiles showed that users follow the links of only 7% of the news posts that appear in their news feeds. Moreover, manipulative use of certain cues—for instance, creating fake-news websites, impersonating well-known sources and social-media accounts, inflating emotional content (Crockett, 2017), or creating an illusion of consensus (Yousif et al., 2019)—can lead to dubious or outright false claims and ideas being disseminated.

Unreliable and intentionally fabricated false information is not found only in the digital world. Deception such as lying or impersonation is common offline as well (e.g., Gerlach et al., 2019). But because of some of the structural features we have discussed here (e.g., group size), deceptive acts can reach a much larger audience online than in face-to-face interactions. Impersonating an individual is easier when the important and hard-to-fake cues that are normally used to verify a person’s identity (e.g., voice, appearance, behavior) are not readily available. The perception (accurate or inaccurate) that false information and deception is more prevalent in the online world may exact far-reaching consequences. For instance, Gächter and Schulz (2016) recently showed, using cross-societal experiments from 23 countries, that the prevalence of rule violations across societies may impair individual intrinsic honesty, which is crucial for the functioning of a society.

Social calibration

The Internet can also affect social calibration—that is, perceptions about the prevalence of opinions in the general population. Offline, one gathers information about how others think from the limited number of people with whom one interacts, and most of these people live nearby. In the online world, physical boundaries cease to matter; one can connect with people around the world. One consequence of this global connectivity is that small minorities of people can form a seemingly large, if dispersed, community online. This in turn can create the illusion that even extreme opinions are widespread—thereby contributing to “majority illusion” (Lerman et al., 2016) and “false consensus” effects (the perception of one’s views as relatively common and of opposite views as uncommon; Leviston et al., 2013; Ross et al., 1977). Although one may find it very difficult to meet people in real life who believe the Earth is flat, the same is not true online among Facebook’s billions of users, where indeed there are some who do share this belief—or other equally exotic ones—and they can now find and connect with each other. Still another consequence of global connectivity is that online social media “dramatically increase the amount of social information we receive and the rapidity with which we receive it, giving social effects an extra edge over other sources of knowledge” (O’Connor & Weatherall, 2019, p. 16).

Self-disclosure and privacy behavior

The emergence and development of new online environments has consequences not only for how people communicate with others or how they evaluate information but also for the way they disclose information about themselves. Early studies on self-disclosure (revealing personal information to others) reported higher levels of sharing in visually anonymous computer-mediated communication than in face-to-face communication (Joinson, 2001; Tidwell & Walther, 2002). People also tend to be more willing to disclose sensitive information in online surveys that have the reduced social presence of the surveyor (Joinson, 2007). A systematic literature review by Nguyen et al. (2012) reported mixed evidence: Although most experimental studies (four of six) that measured self-disclosure showed more disclosure in online than in face-to-face interactions, in survey studies, participants reported more disclosure and willingness to share information with their offline friends (six of nine surveys). One may speculate that although the level of closeness, trust, and depth of interactions may prompt people to disclose personal information in offline relationships, the anonymity afforded by online communication can enhance people’s willingness to share. The benefits of online anonymity include the elimination of hierarchical markers (e.g., gender and ethnicity) that may trigger hostility (Young, 2002) and a sense of control people have over the information they share that stems from a belief that it will not be linked to their real personas. However, this sense of control can backfire. For example, one study showed that increasing individuals’ perceived control over the release and access of private information can increase their willingness to disclose sensitive information (the control paradox; Brandimarte et al., 2013).

Another paradox in people’s privacy behavior online is the

Norms of civility

The “online disinhibition effect” describes “a lowering of behavioral inhibitions in the online environment” (Lapidot-Lefler & Barak, 2012, p. 434) that is not seen offline. Online disinhibition can be both benign and toxic (Suler, 2004): It can inspire acts of generosity and help shy people socialize, but it can also lead to increased incivility in online conversations—as behavior “that can range from aggressive commenting in threads, incensed discussion and rude critiques, to outrageous claims, hate speech, and more severe forms of harassment such as purposeful embarrassment and physical threats” (Antoci et al., 2019, p. 84). One of the most common examples of incivility is trolling, a type of online harassment that involves “posting inflammatory malicious messages in online comment sections to deliberately provoke, disrupt, and upset others” (Craker & March, 2016, p. 79). Trolling can be used strategically to disrupt the possibility of constructive conversation. Incivility is pervasive online: A survey by the Pew Research Center revealed that 44% of Americans have personally experienced online harassment, and 66% have witnessed it being directed at others (Duggan, 2017). Although incivility in online comments can polarize how people perceive issues in the media (A. A. Anderson et al., 2014) and can disproportionally affect female politicians and public figures (Rheault et al., 2019) and members of minority groups (Gardiner, 2018), it seems to be perceived as the norm, rather than the exception, for online interaction (Antoci et al., 2019). One may speculate that actions in the online sphere might be perceived as less influential: For instance, insulting and even threatening anonymous users in online forums may be perceived as less harmful and consequential—for both the victim and the perpetrator—than threatening someone face to face.

Perception of reality

In contrast to the offline world, the Internet and social media are immaterial, virtual environments that do not exist outside of the human-created technology that supports them (McFarland & Ployhart, 2015). This relative lack of anchoring in the material world allows for multiple realities to be constructed for, or by, different audiences and media online (Waltzman, 2017), so that any reference to the objective truth and shared reality can be replaced by alternative narratives (e.g., “systemic lies” created to promote a hidden agenda; McCright & Dunlap, 2017). The impact of the Internet on the media landscape—along with several other factors, such as rising economic inequality and growing polarization—is likely to have contributed to the emergence of the “posttruth” environment, an alternative epistemic space “that has abandoned conventional criteria of evidence, internal consistency, and fact-seeking” (Lewandowsky, Ecker, & Cook, 2017, p. 360). In this alternative posttruth reality, deliberate falsehoods can be described as “alternative facts,” and politicians and media figures (on both sides of the Atlantic) can claim that objectivity “is a myth that is proposed and imposed on us” (Dmitry Kiselev, as quoted by Yaffa, 2014, para. 8), that “there’s no such thing, unfortunately, anymore as facts” (Scottie Nell Hughes, as quoted by Holmes, 2016, para. 3), or that “truth isn’t truth” (Rudy Giuliani, as quoted by Pilkington, 2018, para. 2; see also Lewandowsky, 2020b; Lewandowsky & Lynam, 2018). These environments are conducive to the dissemination of false news and rumors, which in turn undermine public trust in any information and erode the basis of shared reality (Watts & Rothschild, 2017), thereby creating an atmosphere of doubt that serves as a fertile ground for conspiracy theories (more on this in the False and Misleading Information section).

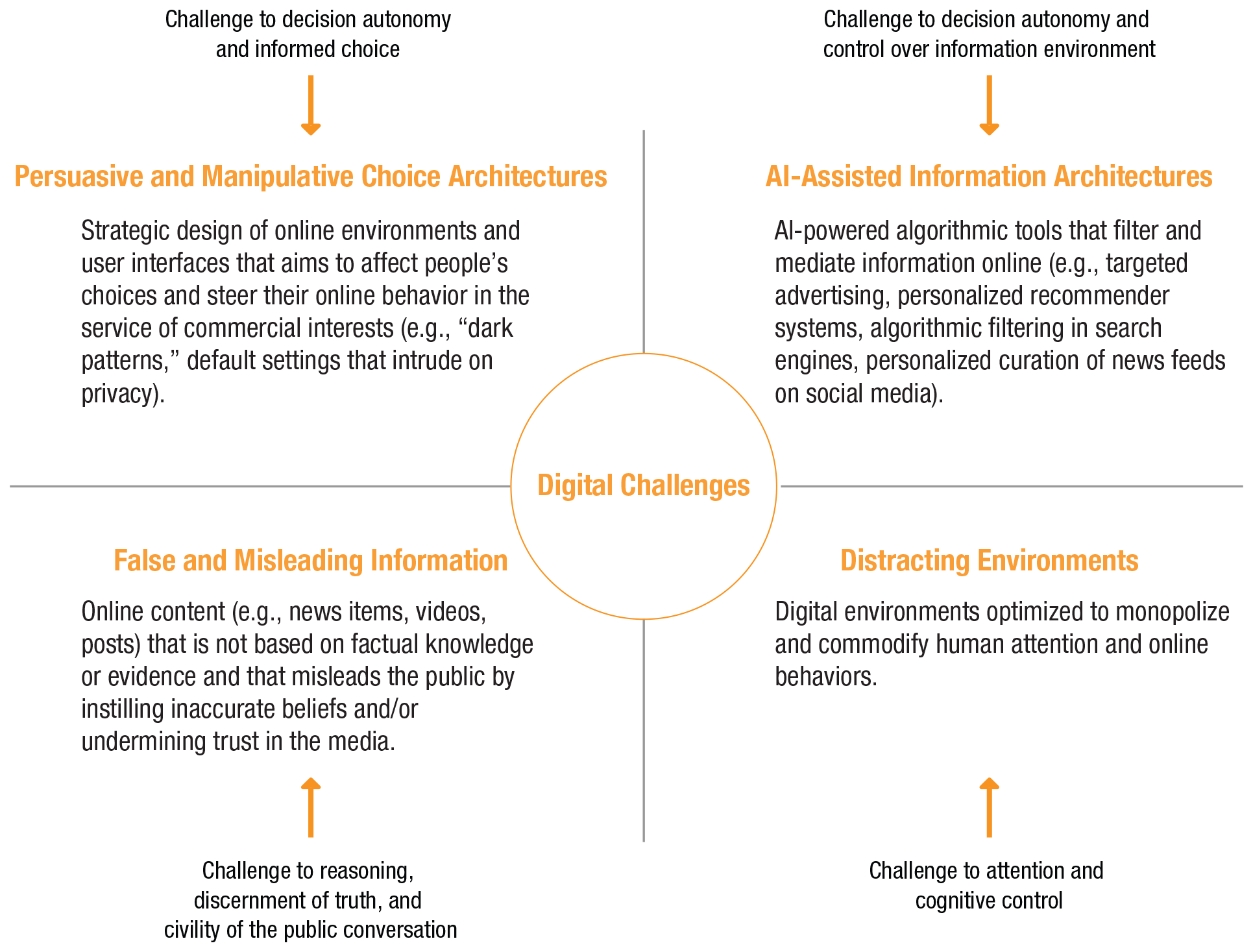

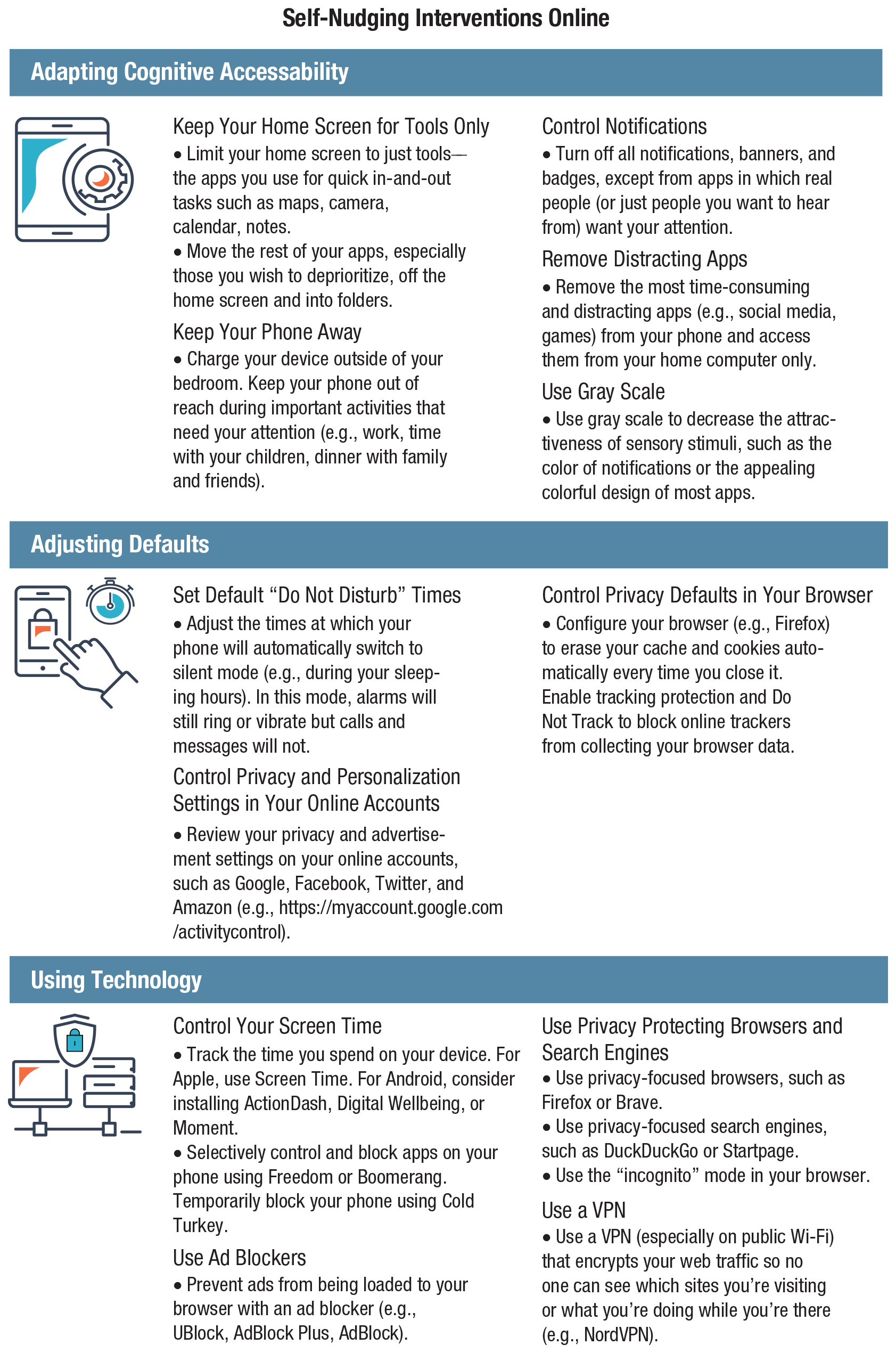

To summarize, online and offline worlds differ in psychologically and functionally relevant ways. The online world appears to trigger perceptions that can render it different from the offline world. When people and online architectures are brought into contact (without much public oversight and democratic governance), pressure points will emerge. We next review four such challenges (outlined in Fig. 2): persuasive and manipulative choice architectures, AI-assisted information architectures, the proliferation of false and misleading information, and distracting environments.

Challenges in the digital world.

Challenges in Online Environments

Persuasive and manipulative choice architectures

Modern online environments are replete with smart, persuasive choice architectures that are designed primarily to maximize financial return for the platforms, capture and sustain users’ attention, monetize user data, and predict and influence future behavior (Zuboff, 2019). For example, Facebook’s business model relies on exploiting user data to the benefit of advertisers; the goal is to maximize the likelihood that an ad captures its target’s attention. To stretch the time people spend on the platform (thus producing behavioral data and watching ads), Facebook employs a variety of design techniques that aim to change users’ attitudes and behavior via persuasive choice and information architectures (e.g., Eyal, 2014; Fogg, 2003). It is no coincidence that notifications are red; the color incites a sense of urgency. The “like” button triggers a quick sense of social affirmation. The bottomless news feed, with no structural stop to scrolling (i.e., infinite scroll), prompts people to consume more without noticing. These examples illustrate that a witting or unwitting awareness (via massive A/B testing) of human psychology underlies persuasive choice architectures and commercial nudging. Benefiting from an abundance of data on human behavior, these architectures are continuously being adapted to offer ever-more-appealing user interfaces to compete for human attention (e.g., Harris, 2016).

The main ethical ambiguity of persuasive choice architectures and commercial nudging resides in their close ties to other types of influence, such as coercion and, in particular, manipulation. Coercion is a type of influence that does not convince its targets but rather compels them by eliminating all options except for one (e.g., take-it-or-leave-it choices). Manipulation is a hidden influence that attempts to interfere with people’s decision-making processes to steer them toward the manipulator’s ends. It neither persuades people nor deprives them of their options; instead, it exploits their vulnerabilities and cognitive shortcomings (Susser et al., 2019). Manipulation thus undermines both people’s control and their autonomy over their decisions—that is, their sense of authorship and their ability to identify with the motives of their choices (e.g., Dworkin, 1988). It also prevents people from choosing their own goals and pursuing their own interests. Not all persuasive choice architectures are manipulative—only those that exploit people’s vulnerabilities in a nontransparent, covert manner. Below we consider two cases in which persuasive design in online environments borders on manipulation: dark patterns and hidden privacy defaults.

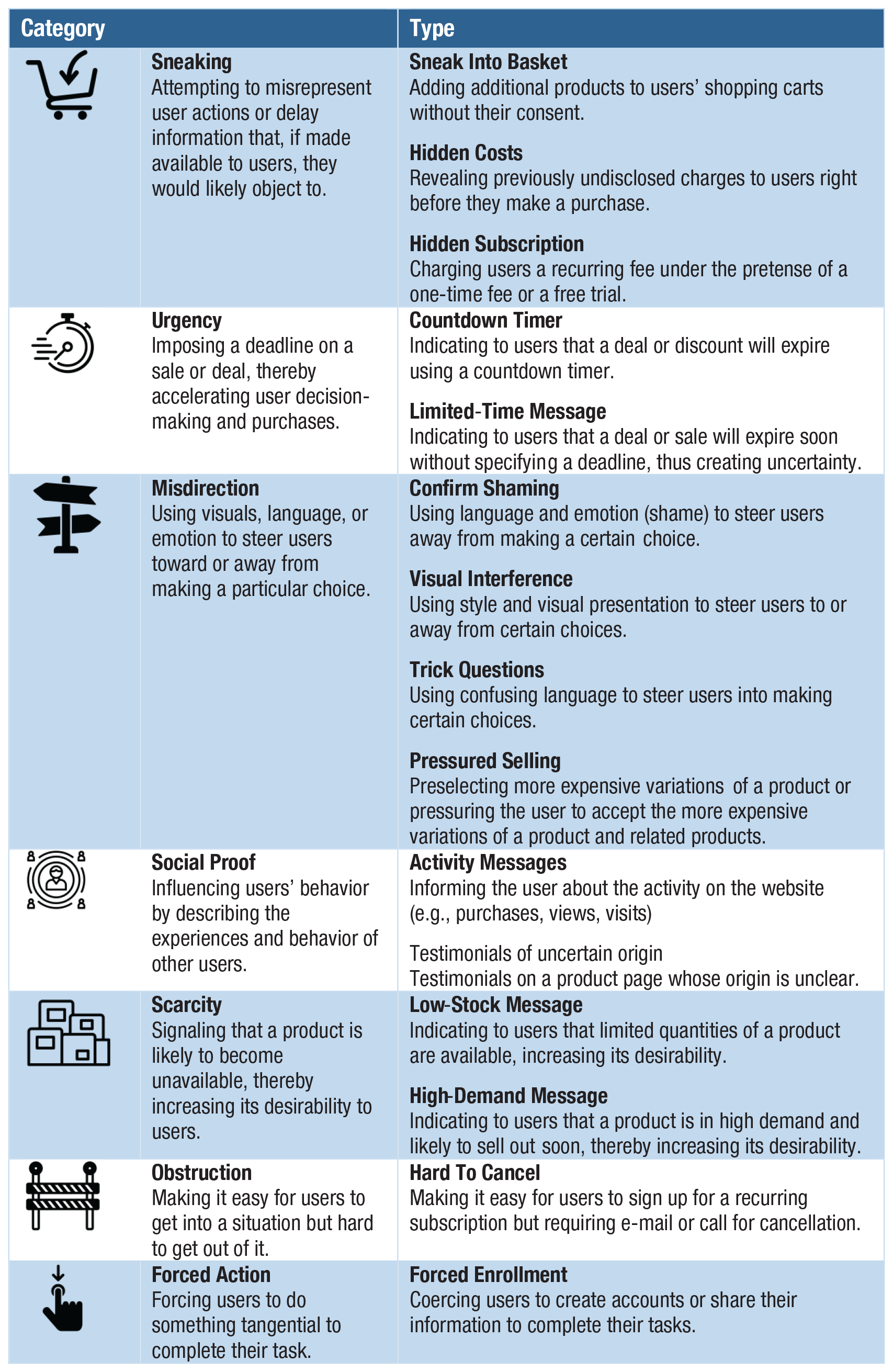

Dark patterns—a term coined by designer and user-experience researcher Harry Brignull (see Brignull, 2019; Gray et al., 2018; Mathur et al., 2019)—are a manipulative and ethically questionable use of persuasive online architectures. “Dark patterns are user interface design choices that benefit an online service by coercing, steering, or deceiving users into making unintended and potentially harmful decisions” (Mathur et al., 2019, p. 1). One notorious example of dark patterns is the “roach motel,” unglamorously named after devices used to trap cockroaches. The roach motel makes it easy for users to get into a certain situation, but difficult to get out (in Fig. 3 it falls under the type “hard to cancel”). Many online subscription services function that way. For instance, creating an Amazon account requires just a few clicks, but deleting it is difficult and time consuming: The user must first hunt for the hidden option of deleting an account, then request this procedure by writing to customer service. This asymmetry in the ease of getting in and out borders on manipulation and retains customers. Another example is “forced continuity”: subscriptions that, after an initial free trial period, continue on a paid basis without notifying users in advance and without giving them an easy way to cancel the service. 10

Categories and types of dark patterns. Source and visual materials: Dark Patterns Project at Princeton University (https://webtransparency.cs.princeton.edu/dark-patterns); see also Mathur et al. (2019). The icons are used with permission of the Dark Patterns Project.

Dark patterns are anything but rare. In a recent large-scale study, Mathur et al. (2019) tested automated techniques that identified dark patterns on a sizeable set of websites. They discovered 1,818 instances of dark patterns from 1,254 websites in the data set of 11,000 shopping websites. Mathur et al.’s findings revealed 15 types of dark patterns belonging to seven broader categories (see Fig. 3), such as misdirection, applying social pressure, sneaking items into the user’s shopping basket, and inciting a sense of urgency or scarcity (a strategy often used by hotel-booking sites or airline companies).

Another case of persuasive design that borders on manipulation is hidden default settings. Hidden defaults present a particularly strong challenge because they trick people into accepting settings without being fully (if at all) aware of the consequences. For example, online platforms are often designed to make it difficult to discontinue personalized advertising or choose privacy-friendly settings. Default settings can also lead users to unwittingly share sensitive data, including location information that can be used to infer attributes such as income or ethnicity (see, e.g., Lapowsky, 2019). Default data-privacy settings do not even have to follow dark-patterns strategies: Most users, lacking the time or motivation to go several clicks deep into the settings labyrinth, will not change their defaults unless they have a specific reason to do so. Hidden defaults raise clear ethical concerns, but these practices continue despite the introduction of the GDPR in Europe in 2016, which stresses the importance of privacy-respecting defaults and insists on a high level of data protection that does not require users to actively opt out of the collection and processing of their personal data (European Parliament, 2016, Article 25).

However, attempts to game the rules of informed consent and privacy by default have found to be a major challenge to GDPR implementation. Nouwens et al. (2020) reported that dark patterns and hidden defaults in the form of implied consent are ubiquitous on new consent-management platforms (in the United Kingdom) and that only 11.8% meet minimal requirements of GDPR for valid consent (e.g., no prechecked boxes, explicit consent, rejecting as easy as accepting). According to a report by the Norwegian Consumer Council (2018), tech companies such as Google, Facebook, and—to a lesser extent—Microsoft use design choices in “arguably an unethical attempt to push consumers toward choices that benefit the service provider” (p. 4). On the topic of privacy, key findings of the report include the use of privacy-intrusive default settings (e.g., Google requires that the user actively go to the privacy dashboard to disable personalized advertising), framing and wording that nudges users toward a choice by presenting the alternative as ethically questionable or highly risky (e.g., on Facebook: “If you keep face recognition turned off, we won’t be able to use this technology if a stranger uses your photo to impersonate you”), giving users the illusion of control (e.g., Facebook allows users to control whether Facebook uses data from partners to show them ads, but not whether the data are collected and shared in the first place), take-it-or-leave-it choices (e.g., a choice between accepting the privacy terms or deleting an account), and design of choice architectures in which choosing the privacy-friendly option requires more effort from the users (Norwegian Consumer Council, 2018). Such design choices might also contribute to the privacy paradox by actively discouraging users from behaving in a way that reflects their concern for their privacy. Users’ halfhearted privacy-protecting behavior might be due not to laziness or a lack of skills but rather to the unnecessarily complicated nature of protecting one’s privacy online.

In sum, persuasive designs and commercial nudges can go far beyond transparent persuasion and enter the territory of hidden manipulation when they rely on dark patterns (Mathur et al., 2019), default settings that intrude on user privacy (Norwegian Consumer Council, 2018), and the exploitation of people’s biases and vulnerabilities (Susser et al., 2019). These practices affect not only how users access information but also what information they agree to share. Moreover, online manipulation undermines people’s control and autonomy over their decisions by nudging them toward behaviors that benefit commercial actors or by hiding relevant information (e.g., settings for discontinuing personalized advertisement).

AI-assisted information architectures

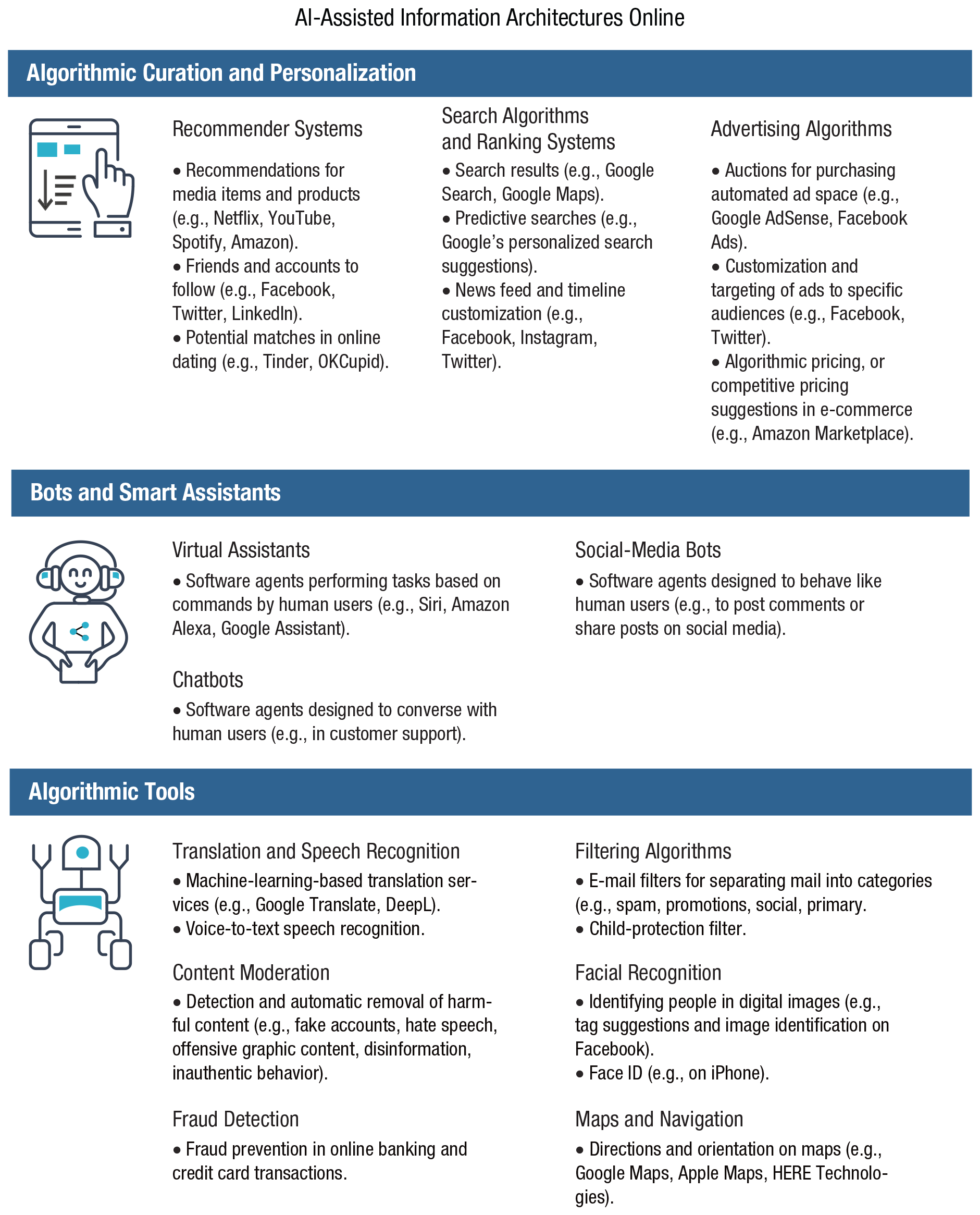

Another challenge of online information and choice architectures comes with the use of machine learning and smart algorithms. We use the term

Examples of AI-assisted information architectures online. Icons are used under license from Adobe Stock.

One general problem is that decision-making is being delegated to a variety of algorithmic tools without clear oversight, regulation, or understanding of the mechanisms underlying the resulting decisions. For example, ranking algorithms and recommender systems are considered proprietary information, and therefore neither individual users nor society in general has a clear understanding of why information in search engines or social-media feeds is ordered in a particular way (Pasquale, 2015). Other factors contribute further to the lack of transparency,

11

such as the inherent opacity of machine-learning algorithms (the black-box problem) and the complexity of algorithmic decision-making processes (de Laat, 2018; Turilli & Floridi, 2009). Delegating decision-making this way not only results in impenetrable algorithmic decision-making processes but also precipitates people’s gradual loss of control over their personal information and a related decline in human agency and autonomy (J. Anderson & Rainie, 2018; Mittelstadt et al., 2016; Zarsky, 2016). Relatedly, data privacy and its protection in the context of AI-assisted information environments should be seen not merely as an individual good but as a public good (Fairfield & Engel, 2015). As algorithmic inferences from data collected from users can be used to predict personal information of nonusers (known as

Consistent delegation of choice and shifting autonomy from users to algorithms leaves open the question of responsibility and accountability (Diakopoulos, 2015). Because artificial agents are capable of making their own decisions and because no one has decisive control over their actions, it is difficult to assign responsibility for the outcomes (e.g., the responsibility gap; see Matthias, 2004). Consider the decisions of a recommender system employed on YouTube (boasting about 2 billion users, it is the second most popular social network and the second most visited website worldwide

12

). The recommender algorithm—based on deep neural-network architecture—offers video recommendations to YouTube users with the predominant purpose of increasing watching time (Covington, Adams, & Sargin, 2016). However, one unintended consequence happened to be that the system promoted videos that tended to radicalize their viewers with every step. For example, Tufekci (2018) reported how after showing videos of Donald Trump during the 2016 presidential campaign, YouTube started to recommend and autoplay videos featuring White supremacists and Holocaust denialists. After playing videos of Bernie Sanders, YouTube suggested videos on left-wing conspiracies (e.g., that the U.S. government was behind the 9/11 attacks). An investigation by

Who, then, should be held accountable for decisions made by autonomous recommender systems that suggest ever more radical content on social networks: the developers of the algorithms, the owners of the platforms, or the content creators? YouTube recently vowed to limit recommending conspiracy theories on its platform (Wong & Levin, 2019), a move that highlights the tech industry’s unilateral power to shape their users’ information diets. In a recent empirical audit of YouTube recommendations, Hussein, Juneja, and Mitra (2020) found that the YouTube approach indeed limited recommendations of selected conspiracy theories (e.g., the flat-earth narrative) or medical misinformation (videos promoting vaccine hesitancy), but not of other misinformation topics (e.g., the chemtrail conspiracy narrative).

Another closely related concern is the impact of AI-driven algorithms on choice architectures—for instance, when algorithms function as gatekeepers, deciding what information should be presented and in what order (Tufekci, 2015). Be it personalized advertising or filtering information to present the most relevant items, the results directly affect people’s choices by narrowing their options (Newell & Marabelli, 2015) and steering their decisions in a particular direction or reinforcing existing attitudes (e.g., Le et al., 2019). The consequences loom large for societies as a whole as well as for individuals: Epstein and Robertson (2015) showed, using a simulated search engine, that rankings favoring a particular political candidate can shift voting preferences of undecided voters by 20% or more. Given that four of the past five U.S. presidential elections resulted in margins between the Democrats and Republican of below 4% and that the 2016 election, for instance, was decided by razor-thin margins in a few swing states (six states were won by margins of less than 2%), the impact of potential search-engine biases should not be ignored.

Microtargeted advertisement on social media, especially in the context of political campaigning, is another case in point. This method relies on automated targeting of messages on the basis of people’s personal characteristics (as extracted from their digital footprints) and a use of private information that stretches the notion of informed consent (e.g., psychographic profiling; see Matz et al., 2017). The resulting microtargeted political messages, which are seen only by the targeted audience, can exploit people’s psychological vulnerabilities while evading public oversight. Findings show that data collected about people online can be used to make surprisingly accurate inferences about people’s sexual orientation, personality traits, and political views (Kosinski et al., 2013). For instance, algorithmic judgments about people’s personalities that are based on information extracted from digital fingerprints (e.g., Facebook likes) can be more accurate than judgments made by relatives and friends (Youyou et al., 2015), and just 300 likes are sufficient for an algorithm to predict users’ personalities more accurately than their own spouses can (Youyou et al., 2015). In a systematic review of 327 articles, Hinds and Joinson (2018) showed that multiple pieces of demographic data could be reliably inferred from people’s digital footprints, including ethnicity, occupation, and sexual orientation. Hinds and Joinson (2019) also demonstrated that computer-based predictions of personality traits (e.g., extraversion, neuroticism) from digital footprints are more accurate than human judges’ predictions. This information can be used to create a dangerous “personality panorama” (Boyd et al., 2020) of people’s behavior online that, consequently, can be employed to persuade and manipulate users; for example, advertising messages can be adjusted to match people’s introversion or extroversion score (Matz et al., 2017). A former employee reported that Cambridge Analytica used personality profiling during Donald Trump’s 2016 presidential campaign to target fear-based messages (e.g., “Keep the terrorists out! Secure our borders!”) to people who scored high on neuroticism (Amer & Noujaim, 2019).

The impact of this manipulation on the outcomes of the Brexit vote and the 2016 U.S. election is a major cause for concern and an argument for stricter regulation of online platforms (e.g., Jamieson, 2018; Persily, 2017; Digital, Culture, Media and Sport Committee, 2019). Sixty-two percent of social-media users in the United States agree that it is not acceptable for social-media platforms to use their data to deliver customized messages from political campaigns (Smith, 2018b). Recent surveys in Germany, the United Kingdom, and the United States (Ipsos MORI, 2020; Kozyreva et al., 2020) also provide evidence that people consider personalization and targeting in political campaigning unacceptable. The impact of microtargeting is often exacerbated by the lack of transparency in political campaigning on social media: It is nearly impossible to trace how much has been spent on microtargeting and what content has been shown (e.g., Dommett & Power, 2019).

Another challenging consequence of algorithmic filtering is algorithmic bias (e.g., Bozdag, 2013; Corbett-Davies et al., 2017; Fry, 2018). Here ethical concerns touch on both the generation of biases in data processing and the societal consequences—such as discrimination—of implementing biased algorithmic decisions (Mittelstadt et al., 2016; Rahwan et al., 2019). One particularly disturbing set of examples concerns deeply rooted gender or racial biases that can be picked up by data-processing algorithms. One study of personalized Google advertisements demonstrated that setting the gender to female (rather than male) in simulated user accounts resulted in fewer ads related to high-paying jobs (Datta et al., 2015). Another study found that online searches for “Black-identifying” names were more likely to be associated with advertisements suggestive of arrest records (e.g., “Looking for Latanya Sweeney? Check Latanya Sweeney’s arrests”; Sweeney, 2013, p. 3). Names such as Jill or Kristen did not elicit similar ads even when arrest records existed for people with those names. Striking examples of racial biases in algorithmic decision-making are not limited to online environments; they also have consequential effects offline, for instance in policing and health (e.g., Obermeyer et al., 2019).

Algorithms are designed by human beings, and they rely on existing data generated by human beings. They are therefore likely not only to generate biases because of technical limitations but also to reinforce existing biases and beliefs (Bozdag, 2013), which in turn can deepen ideological divides and exacerbate political polarization. Along the same lines, it has been argued that personalized filtering on social-media platforms may be instrumental in creating “filter bubbles” (Pariser, 2011) or “echo chambers” (Sunstein, 2017); echo chambers are information environments “in which individuals are exposed only to information from like-minded individuals” (Bakshy et al., 2015, p. 1130), whereas filter bubbles refer to content selection “by algorithms according to a viewer’s previous behaviors” (p. 1130). Both echo chambers and filter bubbles tend to amplify the confirmation bias—a way to search for and interpret information that reinforces preexisting beliefs and increases political polarization (e.g., Bail et al., 2018) and radicalization. Not everyone agrees about the existence of filter bubbles, however; some researchers argue that news-audience fragmentation is less prevalent than is often assumed (Flaxman et al., 2016) or that face-to-face interaction is currently even more segregated than online discourse (Gentzkow & Shapiro, 2011). Bakshy et al. (2015) found that individual choices, not algorithms, limit exposure to attitude-challenging views among Facebook users. But because recommender systems typically “learn” users’ preferences, psychological tendencies in information selectivity and algorithmic amplification of those tendencies are likely to reinforce one another.

From a psychological perspective, many factors could motivate online segregation and polarization, including confirmation bias, selective exposure to information, and selective engagement with online content (e.g., Garrett & Stroud, 2014). Although exposure might be not as segregated as is commonly claimed, social-media environments show signs of selective engagement (in the form of likes, shares, and comments; see Schmidt et al., 2017), leading to “highly segmented interaction with social media content” (Garrett, 2017, p. 371). The extent to which such selective exposure and engagement can distort people’s information diets and influence democratic processes is highly debated—we return to this topic in the next section.

False and misleading information

Another challenge presented by online environments and social networks is the increasing speed and scope of false-information proliferation and its resulting threat to the rationality and civility of public discourse—and ultimately to the very functioning of democratic societies. In this section we explore three questions: (a) What is the extent of the “false news” problem? (b) What are useful taxonomies of false and misleading information? (c) What are the psychological mechanisms underlying receptivity to false content online? Before we proceed, let us briefly mention our terminological choices. We use the term

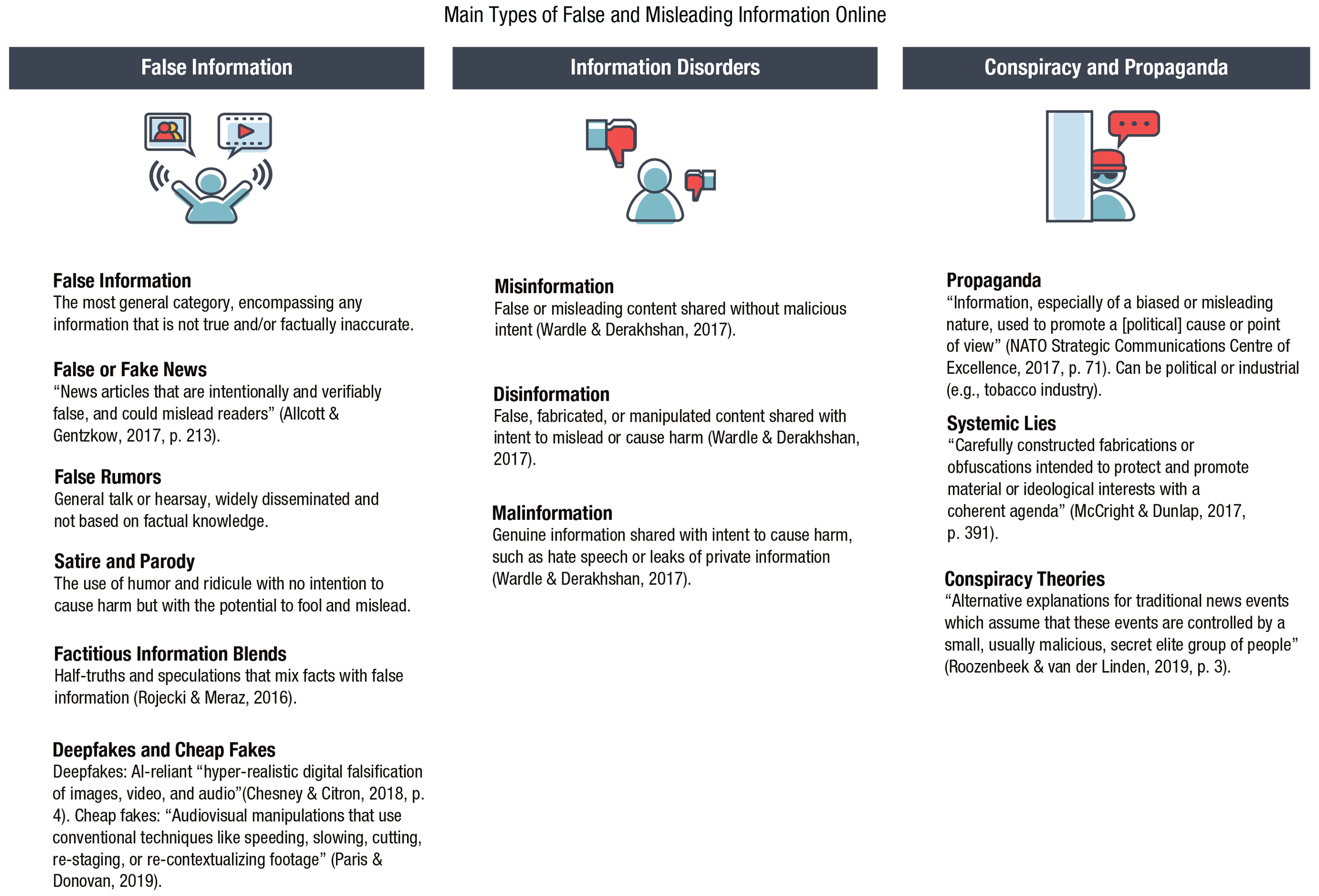

Main types of false and misleading information in the digital world. Icons are used under license from Adobe Stock.

Scope of the problem

We begin our review of the challenge of false and misleading information with an examination of its scope. A recent report by Bradshaw and Howard (2019) showed that in the past 2 years alone, the number of countries with disinformation campaigns more than doubled (from 28 in 2017 to 70 in 2019), and that Facebook remains the main platform for those campaigns. At least half of all Internet users rely on online and social media as their primary sources of news and information, including 36% who access Facebook for news (Newman et al., 2020). False and unverified claims online can therefore lead not only to false beliefs and misguided actions but also to an erosion of trust in the information ecosystem—ultimately threatening a society’s ability to hold evidence-based conversations and reach a consensus. There is much concern that the spread of false news and rumors on Facebook and Twitter influenced the U.S. presidential election and the Brexit referendum in 2016 (see Digital, Culture, Media and Sport Committee, 2019; Persily, 2017). For instance, Allcott and Gentzkow (2017) estimated that the average U.S. adult read and remembered at least one fake-news article during the election period. They also compiled a database of fake-news articles that circulated in the 3 months before the 2016 election (115 pro-Trump and 41 pro-Clinton) and that, together, were shared 38 million times in the week leading up to the election. As Silverman’s (2016) analysis showed, in the 3 months before the 2016 U.S. presidential election, the most popular false-news stories were more widely shared on Facebook than the most popular mainstream news stories. The 20 top-performing false election stories from hoax sites and hyperpartisan blogs generated 8,711,000 shares, reactions, and comments, whereas the 20 top-performing election stories from legitimate news websites generated 7,367,000 reactions. A single false story about the Pope endorsing Donald Trump was liked or shared on Facebook 960,000 times.

Online disinformation and misleading claims can have deadly real-world consequences: The Pizzagate conspiracy theory, which alleged that Hillary Clinton and her top aides were running a child-trafficking ring out of a Washington pizzeria, was floated during the 2016 presidential campaign on Reddit, Twitter, and fake-news websites. It led to repeated harassment of the restaurant’s employees and eventually prompted an armed 28-year-old man to open fire inside the pizzeria (Aisch, Huang, & Kang, 2016). On a broader—and even more disturbing—scale, the Myanmar military orchestrated a propaganda campaign on Facebook that targeted the country’s Muslim Rohingya minority group, inciting violence that forced 700,000 people to flee (Mozur, 2018). Encrypted messenger networks such as WhatsApp are also vulnerable to manipulation: False rumors about child kidnappers shared in Indian WhatsApp groups in 2018 incited at least 16 mob lynchings, leading to the deaths of 29 innocent people (Dixit & Mac, 2018). Most recently, the COVID-19 pandemic has given rise to multiple conspiracy theories and misleading news stories that gain credibility among members of the public by exploiting their fears and uncertainty; for instance, 29% of Americans believe that COVID-19 was created in a lab (Schaeffer, 2020), and there have been up to 50 attacks on mobile-phone masts in the UK since the spread of coronavirus was fallaciously linked to the country’s rollout of the 5G mobile network (Adams, 2020).

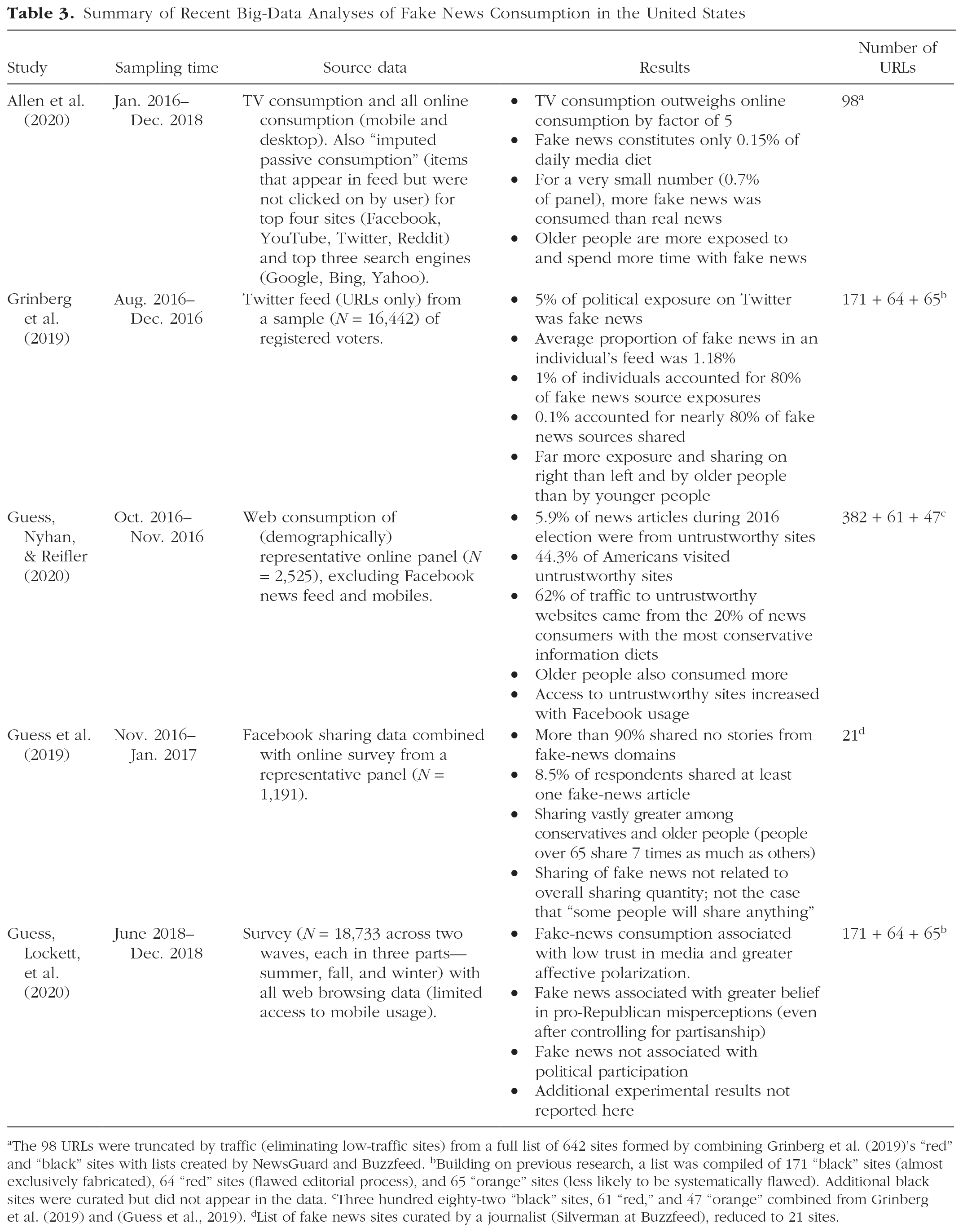

Several recent analyses have suggested that the problem of fake news is not as serious as was initially believed in the aftermath of Brexit and the 2016 U.S. election (Allen et al., 2020; Grinberg et al., 2019; Guess et al., 2019; Guess, Lockett, et al., 2020; Guess, Nyhan, & Reifler, 2020). Table 3 summarizes these articles, which all used big-data analyses to measure Americans’ exposure to fake news and concluded that the limited prevalence of fake news online (of the type examined in these articles) 13 may not present cause for alarm.

Summary of Recent Big-Data Analyses of Fake News Consumption in the United States

The 98 URLs were truncated by traffic (eliminating low-traffic sites) from a full list of 642 sites formed by combining Grinberg et al. (2019)’s “red” and “black” sites with lists created by NewsGuard and Buzzfeed. bBuilding on previous research, a list was compiled of 171 “black” sites (almost exclusively fabricated), 64 “red” sites (flawed editorial process), and 65 “orange” sites (less likely to be systematically flawed). Additional black sites were curated but did not appear in the data. cThree hundred eighty-two “black” sites, 61 “red,” and 47 “orange” combined from Grinberg et al. (2019) and (Guess et al., 2019). dList of fake news sites curated by a journalist (Silverman at Buzzfeed), reduced to 21 sites.

Although there is notable heterogeneity among the articles shown in Table 3, particularly in the source of data, the analyses identify at least three consistent attributes of the problem of fake news: First, the distribution of fake-news consumption and sharing is extremely lopsided; most people are not involved at all, and a small number of users are responsible for the lion’s share of consumption and sharing. Second, age appears to be an important variable: People over the age of 65 share far more fake news than do younger adults. Finally, the political distribution is highly asymmetrical. Although some fake news appeals to left-wing views, the majority of fake news is consonant with right-wing attitudes. Accordingly, people on the far right, and Trump supporters in particular, share considerably more fake news than do moderates or liberals.

The articles also share a methodological commonality that reveals a strong limitation: They all operationalize exposure to or sharing of fake news by counting visits to or shares of a limited number of specific websites (Table 3, final column). Fake-news outlets were defined as sites that have the trappings of legitimately produced news but lack the editorial standards or processes to ensure accuracy (Grinberg et al., 2019). Examples included conservativetribune.com, wnd.com, and rushlimbaugh.com. Lists of those sites were carefully curated by a variety of sources (Table 3, footnotes) and cross-checked against fact-checker performance (e.g., Guess, Nyhan, & Reifler, 2020). One can therefore state with confidence that those sites were purveyors of fake news. Most articles listed in Table 3 also showed that the authors’ conclusions were robust to extensions and alterations of their lists. Yet the articles did not consider any other forms of political material online as potential sources of fake news; false advertisements, unchecked false statements by politicians, and false or misleading information in mainstream media were not included in the analyses. Moreover, looking at click-through rates considerably underestimates exposure to false news because most people do not follow the link in the headlines that they see on their social-media feeds (see e.g., Bakshy et al., 2015).

The results in Table 3 therefore present a lower bound on exposure to false and misleading information online. Their converging suggestion that few people visit or share material from fake-news sites does not speak to the magnitude of the disinformation problem overall (as noted by Allen et al., 2020). Concern about the widespread effects of misinformation on society is therefore justified (e.g., Bradshaw & Howard, 2019; Roozenbeek & van der Linden, 2018; Zerback et al., 2020). These legitimate concerns are fueled by a number of issues. For example, Facebook has an explicit policy against fact-checking political advertisements (Wagner, 2020), which was—unsurprisingly—exploited during the December 2019 election in the UK. According to fact-checkers, 88% of Facebook ads posted by the Conservative party during a sampling period immediately before the election were misleading, compared with around 7% of those posted by the Labour party (Reid & Dotto, 2019). In addition, even a small dose of fake news can set agendas in “its ability to ‘push’ or ‘drive’ the popularity of issues in the broader online media ecosystem” (Vargo et al., 2018, p. 2043). Vargo et al. (2018) showed that although fake news did not dominate the media landscape from 2014 through 2016, it was intertwined with American partisan media (e.g., Fox News); each influenced the other’s agendas across a wide range of topics, including the economy, education, the environment, international relations, religion, taxes, and unemployment. 14

Last but not least, people’s perceived exposure to misinformation and disinformation online is high: 15 In the EU, “in every country, at least half of respondents [in the sample of 26,576] say they come across fake news at least once a week” (Directorate-General for Communication, 2018, p. 2). In the United States, “about nine-in-ten U.S adults (89%) say they often or sometimes come across made-up news intended to mislead the public, including 38% who do so often” (Mitchell et al., 2019, p. 15). Globally (across 40 countries), 56% of respondents are concerned about what is real or fake when it comes to online news, and almost four in 10 (37%) said they had come across a lot or a great deal of misinformation about COVID-19 on social media, such as Facebook and Twitter (Newman et al., 2020).

Taxonomies of false and misleading information

What is online false and misleading information? Clearly, it is not a single homogeneous entity. For instance, dangerously misleading online content might arise from deliberate attempts to manipulate public opinion or emerge as an unintended consequence of sharing unverified rumors and false news. Focusing on information falseness and the intent to mislead, Wardle and Derakhshan (2017) distinguished among three types of “information disorders”

16

:

Although this classification establishes some useful general distinctions, the landscape of online falsehoods and propaganda is much more complicated. For example, the difference in intent between misinformation and disinformation is often hard to establish, and the real consequences of both can be equally harmful. Both are therefore usually considered to be false information—or, if presented as news, false (or fake) news. Moreover, there are additional categories of misleading content, such as online political propaganda and “systemic lies” (McCright & Dunlap, 2017); the latter are created and curated by organized groups with vested interests (e.g., fossil-fuel companies denying climate science). Likewise, motivations for creating false content can be financial as well as ideological: Recent findings by the Global Disinformation Index (2019) showed that online ad spending on disinformation domains amounted to $235 million a year.

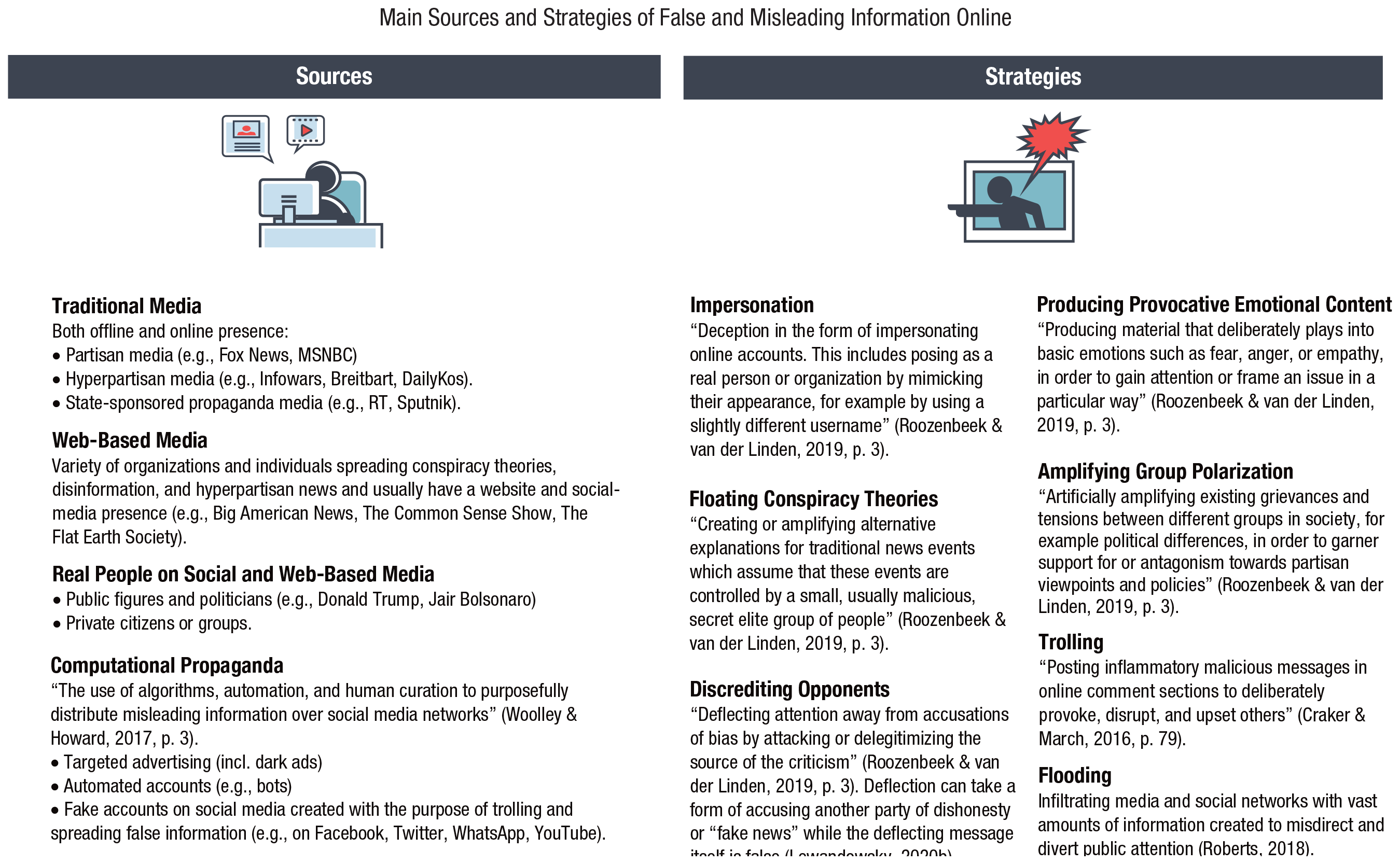

Creating and disseminating false information relies on several common practices that can be catalogued and used to develop tools to counteract disinformation (e.g., inoculation; see Roozenbeek & van der Linden, 2019, and the Inoculation: Boosting Cognitive Resilience to Misinformation and Manipulation section). Figure 5 lists the main categories of false and misleading information in the digital sphere; Figure 6 lists the main sources and strategies used for its creation and dissemination. We have compiled these classifications from a wide range of sources (indicated in the figures). One likely reason for controversies in the literature on the impact and significance of false information is the use of narrow definitions of fake news that exclude many manipulative sources as well as half-truths and other misleading techniques. At the same time, the “type of misinformation on the margins” (Warzel, 2020, para. 33)—that is, “believable information [that] is interspersed with unverifiable claims” (para. 37)—is the most difficult to trace, debunk, and verify. We have therefore chosen to include a variety of sources and types of false and misleading information instead of focusing on a narrow definition of fake news or a more abstract definition of “information disorders.”

Main sources and strategies of false and misleading information in the digital world. Icons are used under license from Adobe Stock.

Propaganda, rumors, conspiracy theories, and other kinds of misleading information are not novel phenomena, nor are they exclusive to online environments (see Uberti, 2016): As early as 1275, England’s First Statute of Westminster (English Parliament, 1275) outlawed spreading false news, stating that “none shall report slanderous news, whereby discord may arise” (Chapter 34). Numerous fake-news stories were published in newspapers in the 19th century, including the Great Moon hoax published in the New York tabloid

Psychology of fake news and receptivity to false information

Recent research has found that false news on Twitter spreads faster, deeper, and broader than does truth (Vosoughi et al., 2018). 17 Fake news appears to press several psychological hot buttons. One is negative emotions and how people express them online. For instance, Vosoughi et al. (2018) found that false stories that “successfully” turned viral were likely to inspire fear, disgust, and surprise; true stories, in contrast, triggered anticipation, sadness, joy, and trust. The ability of false news to trigger negative emotions may give it an edge in the competition for human attention, and digital media may, as Crockett (2017) argued, promote the expression of negative emotions such as moral outrage “by inflating its triggering stimuli, reducing some of its costs and amplifying many of its personal benefits” (p. 769). More generally, people are more likely to share messages featuring moral–emotional language (Brady et al., 2017). It is possible that this type of loaded language and content, which feeds on humans’ negativity bias (i.e., the human proclivity to attend more to negative than to positive things; Soroka et al., 2019), succeeds in “attentional capture”—that is, it manages to shift cognitive resources to particular stimuli (Brady et al., 2019). 18

Another hot button that fake news can press is the human attraction to novelty and surprise. Anything that is new, different, or unexpected is bound to catch a person’s eye. Indeed, neuroscientific studies suggest that stimulus novelty makes people more motivated to explore (e.g., Bunzeck & Düzel, 2006). Vosoughi et al. (2018) found that false stories were significantly more novel than true stories across various metrics. They also found that people noticed this novelty, as indicated by the fact that false stories inspired greater surprise (and greater disgust). One interpretation of these findings is that falsehood’s edge in the competition for limited attention is that it feeds on a highly adaptive human bias toward novelty.

Still another factor in the dissemination dynamics of false and misleading information is that the business models behind social media rely on immediate gratification; deliberation and critical thinking slow users down, which is generally detrimental to a social-media organization’s internal goals. Recently, Pennycook and Rand (2019b) showed that insufficient analytic reasoning—rather than politically motivated reasoning—is what appears to drive people’s belief in fake news. 19 People might believe fake news because they are discouraged from taking the time to think critically about it—but their political leanings affect whether they share it: “Participants were only slightly more likely to consider sharing true headlines than false headlines, but much more likely to consider sharing politically concordant headlines than politically discordant headlines” (Pennycook et al., 2019, p. 3; see also Pennycook et al., 2020). Deliberation and analytic reasoning thus seem to play a role in judging accuracy, but other factors contribute to sharing information, including one’s political loyalty. Political partisanship has also been shown to be a significant factor in selective sharing of news (Weeks & Holbert, 2013) and fact-checking messages (Shin & Thorson, 2017). Moreover, ideologically motivated reasoning has been shown to play a role in endorsement of conspiracy theories (Miller, Saunders, & Farhart, 2016, p. 837); U.S. conservatives are significantly more likely than liberals to endorse specific conspiracy theories or to espouse conspiratorial worldviews in general (van der Linden et al., 2020).

A final factor in the dissemination dynamics of false information is which people are especially susceptible to it. Several recent studies have shown that increasing age is associated with increasing susceptibility to false information (see Table 3). A study of fake news on Facebook found that Americans over age 60 were much more likely to visit fake-news sites compared with younger people (Guess et al., 2019). Furthermore, the vast majority of both shares of and exposures to fake news on Facebook were attributable to relatively small fractions of the population, predominantly older adults (Grinberg et al., 2019). Allen et al. (2020) also found that false news was “more likely to be encountered on social media . . . and that older viewers were heavier consumers than younger ones” (p. 4). Brashier and Schacter (2020) argued “that cognitive declines alone cannot explain older adults’ engagement with fake news” (p. 321), but that gaps in digital literacy and social motives may play a bigger role. The role of analytic reasoning and deliberation may also offer hints as to who is particularly susceptible to false information. For instance, it is possible that users who rely more on reason than on emotions when making decisions may be less vulnerable to fake news; indeed, there is some evidence that is consistent with this possibility (Martel et al., 2020).

Distracting environments

We now turn to a final challenge of online environments: the way they shape not only information search and decision-making but also people’s ability to concentrate and allocate their attention efficiently. As early as 1971, Herbert Simon understood that in an information-rich world, an abundance of information goes hand in hand with a scarcity of attention on the part of individuals and organizations: “A wealth of information creates a poverty of attention and a need to allocate that attention efficiently among the overabundance of information sources that might consume it” (Simon, 1971, pp. 40–41). Information overload and scarcity of attention became even more salient with the rapid evolution and proliferation of the Internet and media technologies. The original goals behind the Web were to create a user interface that would facilitate access to information and to simplify the process of information accumulation in the interconnected online space (Berners-Lee et al., 1992). Organizing information and making it accessible is also part of Google’s official mission statement (Google, 2020).

However, as new informational environments evolved and business models of Internet companies were refined, the goals and incentives of Internet design shifted as well. Human collective attention became a profitable market resource for which different actors compete. Fierce competition for human attention has led to the growing fragmentation of collective attention, with ever greater proliferation of novelty-driven content and shorter attention intervals allocated to particular topics (Lorenz-Spreen et al., 2019). By analyzing the dynamics of collective attention that is spent on cultural items such as Twitter hashtags, Google queries, or Reddit comments, Lorenz-Spreen et al. (2019) showed that across the past decade, the rate at which the popularity of items decreased or increased has grown. For example, in 2013, a hashtag on Twitter was popular on average for 17.5 hr; in 2016, its popularity lasted only 11.9 hr. The authors’ explanation is that when an excess of information meets limited attentional capacities, people’s thirst for novelty leads to accelerated ups and downs for each item and a higher frequency of alternating items. In other words, the amount of collective attention allocated to each single topic is decreasing and more topics are attended to in the same amount of time.