Abstract

Tube yarn is also called glass fiber spun yarn. Owing to the excellent properties of glass fiber, glass-fiber-based industrial products are used in a wide range of industries. Because yarn quality is the most visible factor affecting product quality, quality inspection of glass fiber yarns is very important for manufacturers. Traditional defect detection relies on the experience and subjective judgment of workers, so the same defect is often assessed differently by different inspectors. Some existing methods are restricted to specific defect types and cannot accurately detect a variety of defects. In this paper, we propose a comprehensive tube yarn defect detection system that combines machine vision and deep learning. In the system, we first check the weight with a weight sensor. Then, we propose a multi-scale cross-fusion attention module to improve MobileNetV2 and combine it with a machine vision feature extraction method for hairiness detection. Next, the modified MobileNetV2 network is used as the backbone of the YOLOX network, which makes YOLOX lighter and enables more efficient stain detection on tube yarn. Finally, the detection results are used to determine whether the glass tube yarn passes inspection. In addition, we establish an effective and sufficiently large tube yarn defect dataset. The experimental results show that the proposed hairiness detection algorithm achieves 96% accuracy at 160+ FPS, and the surface stain detection algorithm achieves 0.89 mAP at 71+ FPS on the tube yarn dataset. The system is efficient and precise, and can be applied to actual production.

Introduction

In recent years, with the rapid development of the manufacturing industry, more and more manufacturers have paid increasing attention to product quality control. Defect detection has become an integral part of the production process: it helps keep manufacturing processes under control, and its feedback indicates whether they are working as expected. Depending on the nature and extent of the detected defects, appropriate corrective actions can be taken so that the performance of the manufacturing process remains satisfactory. In production, defects are mostly caused by machine failure, aging, or improper operation by workers, so defect detection can be regarded as a precursor to the diagnosis stage of machine maintenance. Defect detection is also an important part of the inspection process, as it determines the acceptance or rejection of a product produced by a process or delivered by a supplier. Additionally, it enables part rework and repair, reducing material waste. 1 In general, defect detection is very important in the production of products.

With the increasing development of industrial automation, traditional manual quality inspection has become increasingly difficult to adapt to high-speed factory assembly lines. More and more researchers are applying machine vision methods to industrial defect detection, and these methods have demonstrated powerful capabilities in many fields.2–10 Some researchers have also designed defect detection systems based on machine vision for specific products and defects.11–21 Jing et al. 2 detected textile fabric defects with an optimal Gabor filter. In Ref. 3, a method for detecting fabric defects using a thermal camera was presented. Kazim proposed a method based on principal component analysis for dimensionality-reduction-based classification of fleece fabric images. 4 In Ref. 5, an objective grade evaluation method for E-glass filament yarn was proposed based on analysing cross-sectional images of the yarn. Zhang et al. 6 constructed a two-channel deep neural network for apple disease detection that extracts features from the HSV and RGB color spaces. Zeng et al. 7 proposed an atrous spatial pyramid pooling-balanced-feature pyramid network and used it to detect small defects on printed circuit board surfaces. Dang et al. used a detection transformer to achieve end-to-end sewer defect detection. 8 Jing et al. 9 used depthwise separable convolution to improve U-Net and proposed a lightweight end-to-end fabric defect segmentation network. In Ref. 10, a rail boundary guidance network was proposed for rail surface defect detection, which addressed the blurry rail edges and inaccurate detection that affect methods based on fully convolutional networks (FCN).

Alongside the development of defect detection methods, many researchers have designed detection systems for different products. A comprehensive description of defect detection systems was given in Ref. 11, which described the components of a system and the selection of cameras, light sources, lenses, etc. In Ref. 12, it was pointed out that detection in smart manufacturing often requires multiple sensors to work together, and a fog-computing-based system for detecting product defects on smart engineering production lines was designed. In agriculture, a machine learning-based system for detecting surface defects in apples was presented in Ref. 13. In Ref. 14, an online skin defect detection system for jujubes based on hyperspectral images was proposed using machine vision methods. In industry, a machine vision-based steel surface defect detection system was proposed in Ref. 15, which uses support vector machines to learn defect features and achieves real-time steel surface defect detection. A system for detecting defects such as dirt, scratches, burrs and wear on the surface of a part was proposed in Ref. 16, where a CNN is used to detect defects on the part surface and each defect is then classified using traditional machine learning methods. A fully automated system for detecting defects on the surface of tube yarns and fabrics was presented in Ref. 17. In Ref. 18, a machine vision-based online defect detection system for the printing quality of fluorescent coatings on metal plates was designed, which used color space conversion, blob analysis, sub-pixel edge extraction and template matching to detect the common defects that appear on such coatings. In Ref. 19, a tunnel surface detection system was proposed, which used a Faster-RCNN based multi-scale feature fusion network to achieve real-time tunnel image defect detection. A collaborative cloud-edge fabric defect detection system based on the MobileNetV-SSDLite method was presented in Ref. 20, which improved the detection accuracy for small defects and complex background images in resource-constrained situations. A wire arc additive manufacturing defect detection system based on incremental learning was proposed by Li et al. 21

As the needs of society continue to evolve, new composite materials are playing an increasingly important role in everyday life. One example is glass tube yarn, also known as glass fiber spun yarn, which is a yarn body formed by winding electronic-grade glass fiber yarn around a tube for ease of packaging and transportation. During the manufacturing and transportation of glass tube yarns, the complexity of the production environment and external factors inevitably produce defects such as hairiness and stains on the surface of the tube yarn. These defects affect the surface quality of the product, its sales, and the company’s performance. In addition, defective yarn can cause further problems in the glass fiber products made from it, which in turn harms the company’s sales and may cause economic disputes or legal issues. Therefore, it is essential to detect defects on the surface of the tube yarn. As described above, researchers have proposed many methods for defect detection in industrial products, but they often target only one type of defect, such as tube yarn hairiness detection,22,23 or surface stain detection,24,25 and few systems or devices achieve comprehensive detection of multiple defects in tube yarns. To address these issues, we have designed a glass tube yarn surface defect detection system that combines software and hardware for efficient and accurate tube yarn defect detection. For the hardware component, we design a data collection system to capture images of multiple defect types on the tube yarn. For the software component, we propose a combination of conventional and deep learning algorithms for tube yarn hairiness detection. In addition, we propose a deep learning-based surface defect detection algorithm for tube yarn surface stains. Finally, corresponding operating software is designed to control the hardware.

In general, our contributions are summarized as follows. (i) A mature tube yarn defect detection system has been designed and used in production practice. Field testing shows that it can basically meet the needs of factory inspection and can replace manual tube yarn defect detection. (ii) A plug-and-play multi-scale attention module is proposed to improve the MobileNetV2 algorithm for fast and accurate classification of tube yarn hairiness. (iii) An improved YOLOX network is proposed for accurate and fast detection of surface defects such as stains and spots on tube yarns. (iv) A tube yarn hairiness defect classification dataset containing more than 5000 images is constructed. The defects include hairiness, hair clips, loops and other defects, which basically cover all the defects that may occur in industrial tube yarn manufacturing.

The rest of this paper is organized as follows. In Section 2, we present the hardware design of the entire system. In Section 3, the two datasets for tube yarn defects and the algorithms for detecting tube yarn hairiness and surface stains are described in detail. The software design of the system is presented in Section 4. Section 5 provides an overall summary of the article.

The hardware system design

According to our market research, tube yarn surface quality inspection in actual industrial production needs to detect hairiness, surface stains, and other defects. Therefore, the system we designed uses different positions to detect different defects. The online detection system mainly detects and evaluates whether the surface of G75 tube yarn is qualified, and provides the following functions. (i) Automatic adsorption of yarn threads, which avoids the influence of loose threads on hairiness detection, reduces the workload of manual thread winding, and improves overall detection efficiency. (ii) Automatic dust and static removal, which prevents dust from adhering to the tube yarn and affecting surface defect detection. (iii) Automatic weighing, which ensures that the amount of yarn wound on each tube is basically the same and complies with regulations. (iv) Detection and classification of tube yarn hairiness defects, with a score based on the size and number of defects. (v) Detection of stains and spots on the tube yarn surface, scored, as for hairiness defects, according to the size of the stains and the number of spots. (vi) Statistical analysis of the tube yarn surface data: the product is judged against the evaluation criteria, and qualified and unqualified yarns are automatically separated.

Our automated detection system consists of three modules, as shown in Figure 1. Each module fulfils a different function in the overall detection procedure. “A” represents the feedstock module, which feeds tube yarn through the conveyor into the static elimination device (“D”) and then into “B”. “B” is the tube yarn detection module and the most important part of our design, where ①, ② and ③ represent the three positions used for weight verification, hairiness detection and surface stain detection of the tube yarn, respectively. The inspected tube yarn then flows into section “C”, where unqualified tube yarn (such as “E”) is automatically pushed to the unqualified area by a pneumatic pusher, and qualified tube yarn (such as “F”) flows into the next section.

Topology of our designed system. The system consists of three parts, A, B and C: the feedstock and pre-processing module, the defect detection module, and the module for sorting qualified and unqualified tube yarn.

Defect detection and product classification are the most important parts of the system. The overall architecture and working procedure are shown in Figure 2. First, images of the tube yarn are captured by four GigE cameras at the two detection positions. These images are then transmitted to the central processing server via a Gigabit network cable. On the server, the images are processed to detect hairiness and stain defects, and the yarn is then judged as qualified or unqualified according to the results from the hairiness and stain detection positions.

The procedure of defect detection and product classification in our system. The whole procedure can be divided into three parts: obtaining images of the tube yarn, detecting defects in these images, and classifying the tube yarn.

In summary, our system first measures the weight of the tube yarn to check whether it conforms to the standard weight, because when the weight is up to standard, the length of yarn carried on each tube also meets the manufacturing standard. Second, surface defects, including hairiness, stains and other surface defects, are detected by our proposed defect detection methods. Finally, the tube yarn is sorted according to the detection results.
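The three-stage flow summarised above can be sketched as a simple decision function. This is only a minimal illustration, not the system's actual control code: the names `InspectionResult`, `weight_within_tolerance` and `sort_tube_yarn`, the nominal weight, the tolerance, and the boolean verdicts of the two vision stations are all hypothetical placeholders.

```python
from dataclasses import dataclass


@dataclass
class InspectionResult:
    weight_ok: bool
    hairiness_ok: bool
    stain_ok: bool

    @property
    def qualified(self) -> bool:
        # A tube yarn passes only if every station accepts it.
        return self.weight_ok and self.hairiness_ok and self.stain_ok


def weight_within_tolerance(measured: float, nominal: float, tolerance: float) -> bool:
    """Position 1: compare the measured net weight with the nominal weight."""
    return abs(measured - nominal) <= tolerance


def sort_tube_yarn(measured, nominal, tolerance, hairiness_ok, stain_ok) -> str:
    """Combine the three station verdicts and return the sorting lane."""
    result = InspectionResult(
        weight_ok=weight_within_tolerance(measured, nominal, tolerance),
        hairiness_ok=hairiness_ok,  # verdict from position 2 (hairiness detection)
        stain_ok=stain_ok,          # verdict from position 3 (stain detection)
    )
    return "qualified" if result.qualified else "unqualified"
```

For example, with an assumed nominal weight of 1000 units and a tolerance of 5 units, a yarn measured at 1010 units would be routed to the unqualified lane regardless of its surface condition.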

The hardware platform of the designed system is shown in Figure 3. The main components include holding devices, LED strip lights, cameras, a weight sensor, etc. The weight of the tube yarn is verified at position ① in Figure 3. A weight sensor is placed underneath the detection position, and the net weight of the tube yarn is measured directly after the weight of the load-bearing tray has been subtracted during calibration. The measured weight is displayed on an electronic screen for workers to view. At the second detection position, two cameras capture images in real time to detect defects such as yarn hairiness (“maoyu”), yarn loops (“maoquan”) and yarn clusters (“maojia”) on the oblique and vertical sides of the tube yarn, as shown in ② of Figure 3. The third detection position is used to detect surface stains on the tube yarn, which is rotated at this position while the camera transmits the captured images in real time to the central server for processing and defect detection, as shown in ③ of Figure 3.

Hardware view of the system, where the different colored circles indicate the different vision devices. Main components include holding devices, LED strip lights, cameras, a weight sensor, etc.

Specification and number of visual components.

The detection algorithms

In this section, the tube yarn defect datasets are described first. Then, the algorithms used in our system are described in detail, covering both the tube yarn hairiness defect detection algorithm and the tube yarn surface stain detection algorithm.

Establishing a dataset of tube yarn defects

The details of the hairiness defects dataset.

Types of hairiness defects and their presentation. The dataset includes six defects: “maoyu”, “maoquan”, “mohu”, “xiantou1”, and “xiantou2”.

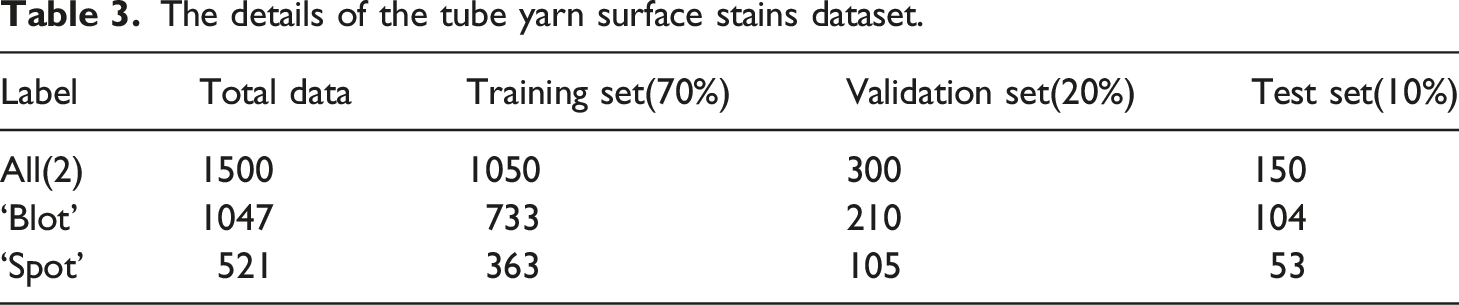

The details of the tube yarn surface stains dataset.

Types of the tube yarn surface stains and their presentation. The dataset includes two surface defects: “blot” and “spot”.

Tube yarn hairiness defect detection

Tube yarn hairiness defect detection consists of three main parts, defect extraction, defect classification and defect statistics, as shown in Figure 6. All possible defects in the image are first extracted using machine vision feature extraction methods, then these defects are classified, and finally the detected defects are counted to achieve a graded evaluation of the product.

Method for detecting tube yarn hairiness defects. The detection consists of three processes: extracting defect information, cropping image blocks, and defect classification.

In the hairiness defect detection stage, feature points in the images acquired by the camera are detected using the method from our previous work (Ref. 26). Since the yarn is imaged against a black background, the feature points are concentrated where the black background meets the white yarn, i.e. at the edge of the tube yarn. These dense feature points are traced to obtain the edge of the tube yarn, which approximates a straight line. The feature points and other lines above the edge are then treated as defective parts and cropped out to obtain image blocks containing defects. These blocks are classified to determine the defect type, and the defects in all images are counted to provide a basis for grading the tube yarn. For defect classification, the image blocks are classified by our proposed deep learning classification network. We adopt this two-step design because deep learning image classification algorithms are well established and many hardware devices are very good at accelerating convolutional neural networks. In addition, deep learning algorithms are more robust than traditional algorithms, so we do not rely on traditional machine vision algorithms to precisely identify every type of defect. In short, we use a traditional machine vision feature extraction method to extract defect information and then use a classification network to determine which defect each block belongs to. The detection process is efficient and accurate.
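The edge-tracing and cropping steps described above can be illustrated with a short sketch. It assumes that the feature points have already been extracted (the detector from Ref. 26 is not reproduced here), that the yarn edge is roughly horizontal with the black background above it, and that an ordinary least-squares line fit is an acceptable stand-in for the edge tracing. The function names, the margin and the block size are hypothetical.

```python
def fit_edge_line(points):
    """Least-squares fit of y = a * x + b through (x, y) edge feature points."""
    n = len(points)
    if n < 2:
        raise ValueError("at least two points are required")
    mean_x = sum(x for x, _ in points) / n
    mean_y = sum(y for _, y in points) / n
    sxx = sum((x - mean_x) ** 2 for x, _ in points)
    if sxx == 0:
        raise ValueError("points must not share a single x coordinate")
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in points)
    a = sxy / sxx
    b = mean_y - a * mean_x
    return a, b


def defect_boxes(points, a, b, margin=10.0, half_size=32, width=None, height=None):
    """Return crop boxes (x0, y0, x1, y1) around points lying above the edge.

    Image y grows downwards, so "above the edge" means y < a * x + b - margin.
    """
    boxes = []
    for x, y in points:
        if y < a * x + b - margin:
            x0, y0 = int(x) - half_size, int(y) - half_size
            x1, y1 = int(x) + half_size, int(y) + half_size
            if width is not None:   # clip the box to the image width
                x0, x1 = max(x0, 0), min(x1, width)
            if height is not None:  # clip the box to the image height
                y0, y1 = max(y0, 0), min(y1, height)
            boxes.append((x0, y0, x1, y1))
    return boxes
```

Each returned box would then be cropped from the image and passed to the classification network. A plain least-squares fit is sensitive to outliers, so a real implementation would probably fit the line on the dense edge points only or use a robust estimator.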

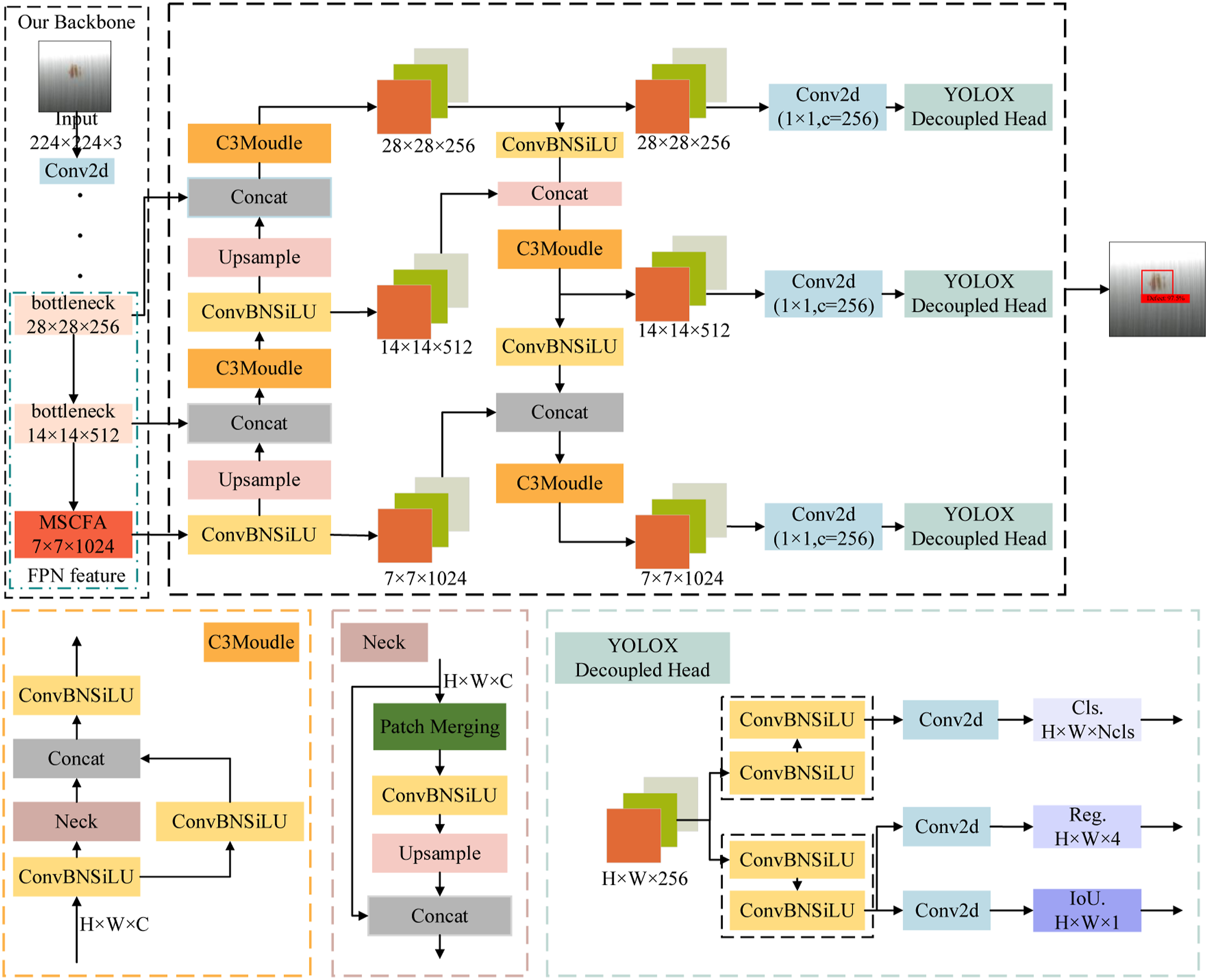

We use a modified MobileNetV2 network 27 for defect classification. Thanks to the development of neural networks, most networks can meet the classification accuracy requirements. However, when deep learning networks are applied to industrial production, the real-time requirement is very high, and many networks cannot be used in industrial processes because they cannot meet it. MobileNetV2 is a lightweight network, and its classification of defect images already meets the requirements of most industrial processes. We modified the network structure of MobileNetV2 by reducing the number of network layers to improve its efficiency. Then, our proposed Multi-Scale Cross-Fusion Attention Module (MSCFA) is added to significantly improve the classification accuracy without affecting the classification speed of the original network.

Multi-scale cross-fusion attention module

The attention module serves two main purposes: deciding which parts of the input features deserve more attention, and allocating limited information processing resources to those important parts, which effectively improves the network’s ability to extract valid information. Inspired by attention modules such as SE, 28 CBAM 29 and ECA, 30 we have designed a Multi-Scale Cross-Fusion Attention Module, a plug-and-play module whose structure is shown in Figure 7.

Structure of our proposed Multi-Scale Cross-Fusion Attention Module, where GAP is global average pooling and GMP is global max pooling.

First, the input feature map is convolved with three convolution kernels of different sizes, which achieves multi-scale feature extraction. This is equivalent to extending a single-scale feature map to multiple scales, which better fuses the contour features and detail features of the target. Then, global average pooling (GAP) and global max pooling (GMP) are applied to the feature map at each scale. Next, all the GMP results are cross-fused and all the GAP results are cross-fused, yielding two 1 × 1 × C feature sequences. The two feature sequences are passed through different excitation layers in parallel and then fused. The fused result is passed through a fully connected layer and an activation function layer in turn to obtain a set of weights.
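To make the data flow of the module more concrete, the following PyTorch sketch follows the steps listed above. It is not the authors' implementation. Several details are not given in the text and are assumed here: the kernel sizes (1, 3, 5), the use of depthwise convolutions, cross-fusion modelled as an element-wise sum over scales, the excitation layers modelled as small fully connected bottlenecks, a sigmoid as the final activation, and the application of the resulting weights to the input channels. The class name `MSCFASketch` is hypothetical.

```python
import torch
import torch.nn as nn


class MSCFASketch(nn.Module):
    """Illustrative multi-scale channel attention block (details are assumptions)."""

    def __init__(self, channels: int, kernel_sizes=(1, 3, 5), reduction: int = 4):
        super().__init__()
        # One depthwise convolution per scale; padding keeps the spatial size.
        self.branches = nn.ModuleList([
            nn.Conv2d(channels, channels, k, padding=k // 2, groups=channels, bias=False)
            for k in kernel_sizes
        ])
        hidden = max(channels // reduction, 1)
        # Separate excitation layers for the GAP and GMP descriptors.
        self.excite_avg = nn.Sequential(nn.Linear(channels, hidden), nn.ReLU(inplace=True))
        self.excite_max = nn.Sequential(nn.Linear(channels, hidden), nn.ReLU(inplace=True))
        # Fully connected layer followed by an activation that yields channel weights.
        self.fc = nn.Linear(hidden, channels)
        self.gate = nn.Sigmoid()

    def forward(self, x: torch.Tensor) -> torch.Tensor:
        feats = [branch(x) for branch in self.branches]
        # Cross-fusion is modelled as a sum of the per-scale descriptors (assumption).
        gap = torch.stack([f.mean(dim=(2, 3)) for f in feats]).sum(dim=0)  # (N, C)
        gmp = torch.stack([f.amax(dim=(2, 3)) for f in feats]).sum(dim=0)  # (N, C)
        fused = self.excite_avg(gap) + self.excite_max(gmp)
        weights = self.gate(self.fc(fused))                                # (N, C)
        # Re-weight the input channels with the learned attention weights.
        return x * weights[:, :, None, None]
```

Because the block preserves the input shape, it can be dropped between existing layers of a backbone, which is what makes this kind of module plug-and-play.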

In MSCFA, we use GMP and GAP together because the two pooling operations behave differently, although both reduce features and parameters and help maintain invariance to rotation, translation, scaling, etc. Average pooling is mainly used when all the information in the feature map should be considered, while max pooling is mainly used to reduce the effect of useless information. For example, max pooling is often used in the shallow layers of a network, because the first few layers contain more information that is irrelevant to the image features. At the same time, max pooling reduces the feature dimension while extracting features with stronger semantic information. We use global pooling instead of a fully connected layer, which greatly reduces the number of network parameters and helps avoid overfitting. Moreover, each feature map then corresponds to one output feature, and this feature represents an output class. In addition, average pooling reduces the increase in estimate variance caused by the limited neighborhood size and preserves more of the background information of the image, whereas max pooling reduces the estimate bias caused by parameter errors in the convolution layer and preserves more of the texture information.
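The different behaviour of the two pooling operations is easy to see on a toy single-channel feature map. The values below are made up purely for illustration: a uniform low background with one strong local response.

```python
def global_average_pool(feature_map):
    """Mean of all activations: reflects the overall (background) level."""
    values = [v for row in feature_map for v in row]
    return sum(values) / len(values)


def global_max_pool(feature_map):
    """Largest activation: reflects the strongest local (texture) response."""
    return max(v for row in feature_map for v in row)


feature_map = [
    [0.1, 0.1, 0.1, 0.1],
    [0.1, 0.9, 0.1, 0.1],
    [0.1, 0.1, 0.1, 0.1],
]
gap = global_average_pool(feature_map)  # dominated by the background values
gmp = global_max_pool(feature_map)      # picks out the single strong response
```

Here the average stays close to the background level while the maximum equals the isolated peak, which is why the two descriptors are complementary.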

Network structure

The network structure of our improved MobileNetV2.

Tube yarn surface stain detection

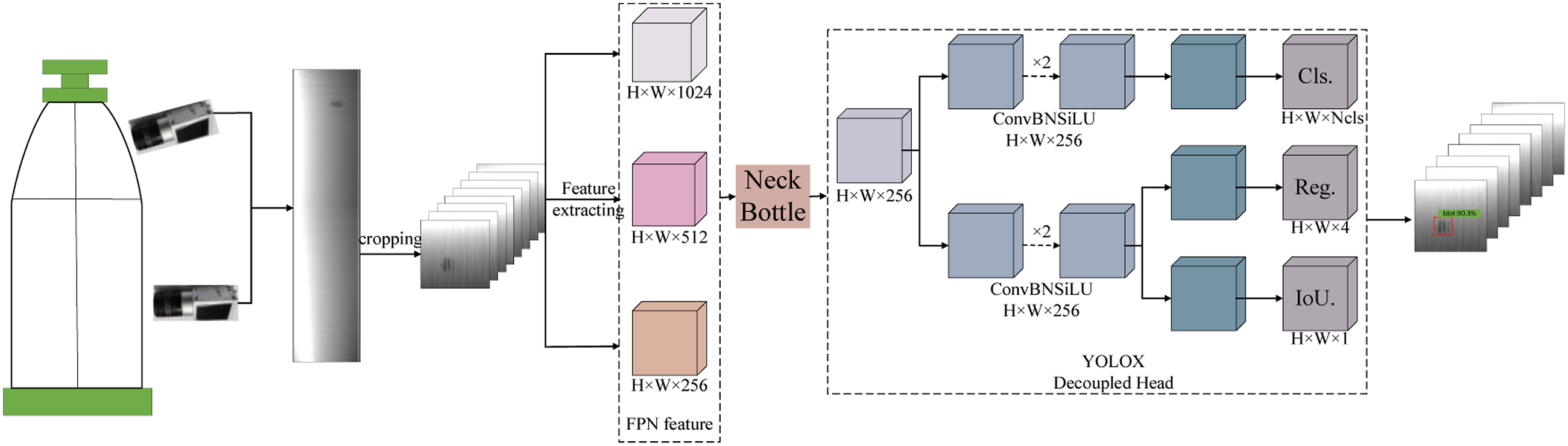

Apart from hairiness defects, there are also surface defects caused during the forming of the tube yarn. These stain defects on the tube yarn surface are caused by an improper site environment and improper operation by workers. We use deep learning-based object detection methods to detect stains. Deep learning-based object detection algorithms are mainly divided into one-stage and two-stage algorithms. One-stage algorithms are usually faster than two-stage algorithms, but their detection accuracy is often lower. Conversely, two-stage methods often have high detection accuracy but are not as fast as one-stage methods. In industrial applications, a method must reach the required detection speed while meeting a certain detection accuracy before it can be applied to actual production. Therefore, we adopt a one-stage object detection algorithm, because it has a clear advantage in processing speed over two-stage methods. With the development of deep learning, the detection accuracy of one-stage algorithms also basically meets the requirements of actual production, so one-stage methods can be applied to actual detection equipment. Our detection process for tube yarn stain defects is shown in Figure 8.

Process for detecting surface stains on tube yarn. The whole detection process can be divided into three parts: cropping the image into a series of image blocks, FPN feature extraction, and the decoupled detection head.

Images of the tube yarn surface are first captured by the industrial camera. These images are then cropped into individual blocks because the original images are high-resolution and have an unbalanced width-to-height ratio. We then improve the YOLOX 33 object detection method to detect stain defects. Our improved network structure is shown in Figure 9. The original YOLOX network uses the Darknet53 network for feature extraction. In our improvement, we use the modified MobileNetV2 network described above as the backbone instead.

The structure of our detection network. It consists of FPN feature extraction, bottle network and decoupled head, which together achieve tube yarn stain detection.
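The cropping step mentioned above, splitting a high-resolution image with an unbalanced aspect ratio into blocks, can be sketched as follows. The tile size and overlap are not stated in the text, so the values used here are hypothetical, as is the function name `tile_boxes`.

```python
def tile_boxes(width, height, tile, overlap=0):
    """Return (x0, y0, x1, y1) boxes that together cover a width x height image."""
    if not 0 <= overlap < tile:
        raise ValueError("overlap must satisfy 0 <= overlap < tile")
    step = tile - overlap

    def starts(length):
        # Start offsets along one axis; the last tile is aligned with the border.
        if length <= tile:
            return [0]
        positions = list(range(0, length - tile, step))
        positions.append(length - tile)
        return positions

    return [
        (x, y, min(x + tile, width), min(y + tile, height))
        for y in starts(height)
        for x in starts(width)
    ]
```

For a 1000 × 300 image and 256-pixel tiles this yields eight boxes, with the last row and column shifted back so that every tile stays inside the image. Detections from each block would then need to be mapped back to full-image coordinates by adding the block's `(x0, y0)` offset.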

Software system design

A complete system consists of a hardware part and a software part; the software part of our system is described in this section. For our defect detection system, we have designed operating software that visualises the data in real time during detection, which makes it easy for the operator to view and manage. The operating software interface is shown in Figure 10. Panel (a) is the main interface, showing the overall detection information, including the product information of the currently inspected samples and the defect information; the rightmost part of the interface shows the total number of products inspected so far, the number of qualified products, the number of non-conforming products, and the current pass rate, which is convenient for workers to view in real time. Panel (b) is the parameter setting interface, which is mainly used to set the weight assigned to each defect when evaluating and grading the product. It also allows the administrator to set the maximum number of each defect allowed on a tube yarn; a product is directly judged as non-conforming when the number of detected defects exceeds the set threshold. Panel (c) is the data view interface, which shows the detection data over a period of time, such as the product type, the product performance, and the equipment and worker responsible for detection, which facilitates subsequent traceability of responsibility. Panel (d) shows the detected defects as images, which are saved in a local folder when image saving is enabled on the main page. These images help workers study defect characteristics and can be used as a dataset in subsequent studies.

The operating software interface, which contains a total of four screens: (a) the home page, (b) the parameters setting screen, (c) the data view page and (d) the image view page, each implementing a different function.
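The grading rule configured on the parameter setting screen, per-defect weights plus a maximum allowed count per defect, can be sketched as follows. The exact scoring formula is not given in the text, so the weighted sum of counts, the score limit, the function name `grade_tube_yarn` and all numeric values in the usage note are assumptions. The text also mentions defect size as a scoring factor, which this sketch leaves out for brevity.

```python
def grade_tube_yarn(defect_counts, weights, max_counts, score_limit):
    """Return (qualified, score) for one tube yarn.

    defect_counts: mapping defect name -> number of detected defects
    weights:       mapping defect name -> weight set by the administrator
    max_counts:    mapping defect name -> maximum allowed count
    score_limit:   largest weighted score that is still acceptable (assumed)
    """
    # Hard rule: exceeding the allowed count of any defect rejects the product directly.
    for defect, count in defect_counts.items():
        if count > max_counts.get(defect, float("inf")):
            return False, None
    # Soft rule: weighted sum of defect counts compared with the score limit.
    score = sum(weights.get(defect, 0.0) * count for defect, count in defect_counts.items())
    return score <= score_limit, score
```

With hypothetical weights `{"maoyu": 1.0, "maoquan": 2.0, "spot": 0.5}` and a score limit of 6.0, a yarn with two "maoyu", one "maoquan" and two "spot" defects scores 5.0 and passes, while a yarn with three "maoquan" defects is rejected directly if the maximum allowed count for that defect is two.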

Experiments and analysis

In this section, we verify the effectiveness of the entire system using defective and qualified yarns from the factory for defect detection and product rating tests, which is equivalent to evaluating the performance of our system under conditions of prior knowledge. First, the performance of the yarn hairiness detection algorithm is examined: the accuracy and speed of the hairiness defect classification algorithm are analysed and compared with some mainstream algorithms. Then, the effectiveness of the surface defect detection algorithm is analysed and compared with a series of state-of-the-art algorithms. Finally, the overall operation of the system is tested, including classification, detection, product rating and software operation. All experiments are implemented on a local workstation. We implemented our models in PyCharm using the open source toolkit PyTorch 1.9. The experimental environment is Windows 10 with an Intel Core i9-10900X CPU @ 3.70 GHz and an NVIDIA GeForce RTX 3090 GPU. The compared algorithms are all from the model library provided by PaddleX, a fully functional open source deep learning platform developed by Baidu. It integrates the core framework, tool components and service platform of deep learning, simplifies the complex steps in deep learning, and enables easy implementation of the pipeline from dataset construction to model selection, training and result prediction.

Tube yarn hairiness defect classification experiment

In industrial production, there are two main factors to be considered: detection speed and detection accuracy. Therefore, when our method is compared with other state-of-the-art methods, both accuracy and frame rate are considered. Accuracy (acc) is an important reference indicator for classification. It is the proportion of correctly classified samples among all samples, i.e.

$$\mathrm{acc} = \frac{N_{\mathrm{correct}}}{N_{\mathrm{total}}},$$

where $N_{\mathrm{correct}}$ is the number of correctly classified samples and $N_{\mathrm{total}}$ is the total number of samples.
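Both quantities used in this comparison, accuracy and frame rate, are simple to compute. The sketch below is only illustrative: `classify` stands for any callable that classifies one image block, and the timing loop is a simplified assumption about how a frame rate could be measured, not the procedure used in the experiments.

```python
import time


def accuracy(predictions, labels):
    """Proportion of correctly classified samples among all samples."""
    if len(predictions) != len(labels) or not labels:
        raise ValueError("predictions and labels must be non-empty and of equal length")
    correct = sum(p == t for p, t in zip(predictions, labels))
    return correct / len(labels)


def frames_per_second(classify, images, repeats=1):
    """Average number of images processed per second by `classify`."""
    start = time.perf_counter()
    for _ in range(repeats):
        for image in images:
            classify(image)
    elapsed = max(time.perf_counter() - start, 1e-12)  # guard against a zero interval
    return repeats * len(images) / elapsed
```

When timing a GPU model, the device would additionally need to be synchronised before reading the clock, and a few warm-up iterations are usually excluded from the measurement.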

Accuracy and frame rate performance comparison of different models.

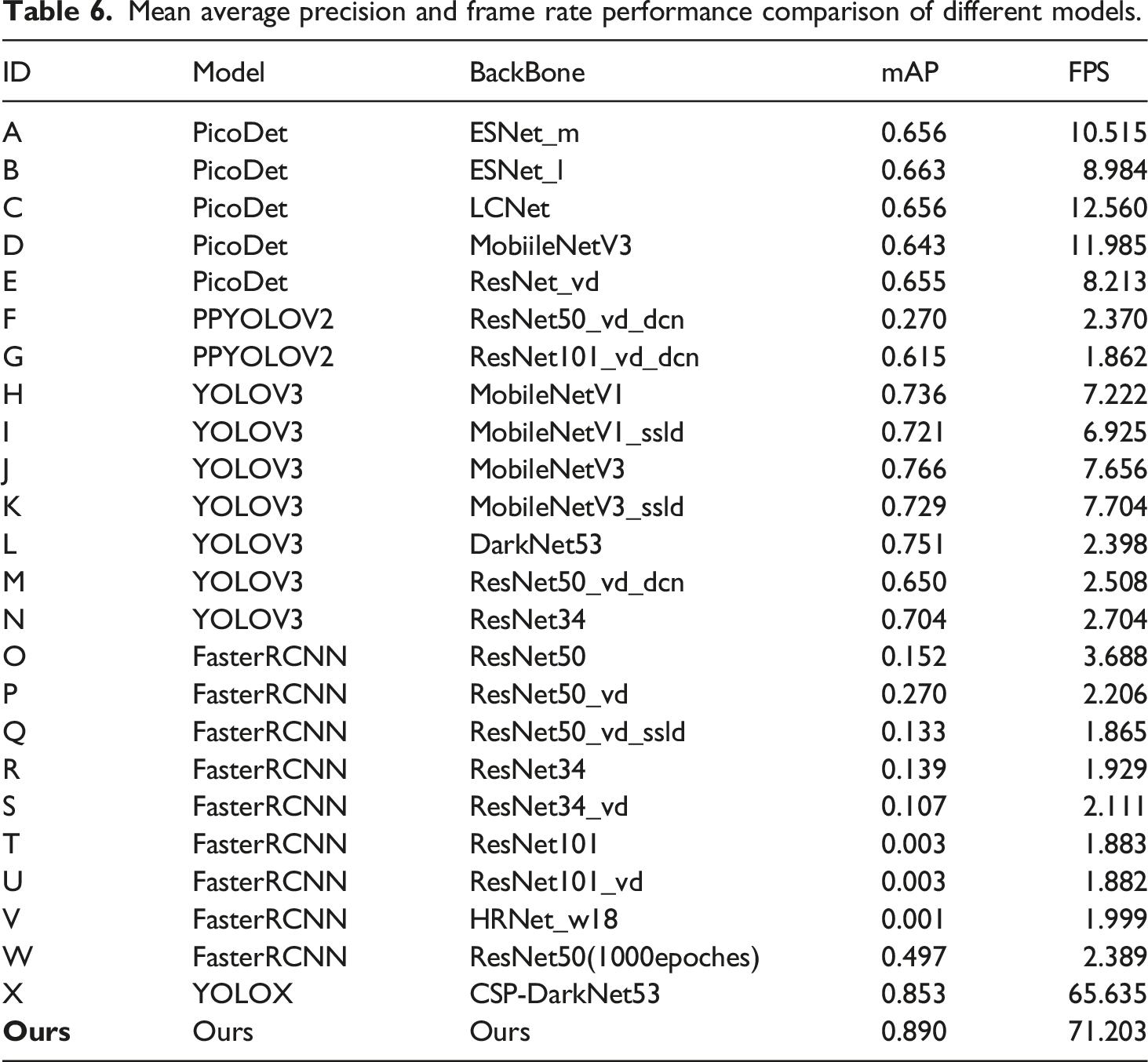

Surface defect detection experiment

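For the defect detection task, mean Average Precision (mAP) is usually employed to quantify the performance of the algorithm. It combines precision and recall to provide a comprehensive assessment of the network model and is calculated from the well-known metrics as follows:

$$P = \frac{TP}{TP + FP}, \qquad R = \frac{TP}{TP + FN},$$

$$AP = \int_0^1 P(R)\,\mathrm{d}R, \qquad mAP = \frac{1}{N}\sum_{i=1}^{N} AP_i,$$

where $TP$, $FP$ and $FN$ are the numbers of true positives, false positives and false negatives, $P$ is precision, $R$ is recall, and $N$ is the number of defect classes.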
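As a worked illustration of how average precision is obtained from ranked detections, the sketch below computes the area under the precision-recall curve for one class and averages it over classes. It assumes that each detection has already been matched against the ground truth (for example by an IoU threshold) and uses all-point interpolation; the text does not state which interpolation or matching rule was used, so both are assumptions, as are the function names.

```python
def average_precision(scores, is_true_positive, num_ground_truth):
    """Area under the precision-recall curve for one class (all-point interpolation)."""
    if num_ground_truth == 0 or not scores:
        return 0.0
    # Rank detections by descending confidence.
    order = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    tp = fp = 0
    recalls, precisions = [], []
    for i in order:
        if is_true_positive[i]:
            tp += 1
        else:
            fp += 1
        recalls.append(tp / num_ground_truth)
        precisions.append(tp / (tp + fp))
    # Replace each precision by the maximum precision at any higher recall.
    for k in range(len(precisions) - 2, -1, -1):
        precisions[k] = max(precisions[k], precisions[k + 1])
    # Sum precision over the recall increments.
    ap, previous_recall = 0.0, 0.0
    for recall, precision in zip(recalls, precisions):
        ap += (recall - previous_recall) * precision
        previous_recall = recall
    return ap


def mean_average_precision(per_class_ap):
    """Mean of the per-class AP values, e.g. for the classes "blot" and "spot"."""
    return sum(per_class_ap.values()) / len(per_class_ap)
```

For three detections with scores 0.9, 0.8 and 0.7, of which the first and third are true positives, and two ground-truth objects, the function returns 5/6.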

Mean average precision and frame rate performance comparison of different models.

Detection results of different models: GT (Ground Truth), a (PicoDet + ESNet m), b (YOLOV3 + MobileNetV1), c (FasterRCNN + ResNet50 with 1000 training epochs), d (YOLOX) and f (Ours).

The experimental results show that, with the development of deep learning networks, newer networks achieve higher accuracy and detection speed for the same amount of training. For some two-stage networks, such as the FasterRCNN network in the experiment, the detection accuracy rises as the number of training epochs increases. When we increase the number of training epochs from 300 to 1000, its accuracy rises by 30%+, but its detection speed does not change significantly. Considering both training cost and detection speed, one-stage networks have a greater advantage in practical applications. In this experiment, our improved YOLOX network achieves the best results at the same training cost. With its high detection accuracy and speed for tube yarn defect detection, our method can basically meet the needs of the industry.

Overall system test experiment

After completing the system design and the defect detection algorithms, we combine all modules to form a complete detection system and verify the overall operation and integrity of the system. During detection, the LED lights up when a product arrives at the detection position, and the product is rotated at that position to ensure that the camera captures the entire surface of the yarn. The real-time detection information is displayed on the GUI. The actual running performance of our system is shown in Figure 12, where (a) is the working condition of the hairiness defect detection position, (b) is the working condition of the surface stain detection position and (c) is the real-time detection information. In addition, the system is equipped with an alarm device. When any fault occurs in the vision component or the motion control component during operation, the alarm light immediately turns red and the system stops working, whereas it shows green when the system works normally, as shown in (d) and (e).

System operation display: (a) is an illustration of the operating status of the hairiness defect detection position, (b) is an illustration of the operating status of the surface defect detection position, (c) is a display of product detection information in the GUI, (d) is the red alarm when the system is abnormal, and (e) is the alarm status when the system is operating normally.

Actual running test results of our design system.

Conclusion

In this paper, we design a defect detection system for glass tube yarn based on machine vision and deep learning. The hardware detection platform includes three detection positions: the weight verification position, the tube yarn hairiness detection position and the tube yarn stain detection position. For weight verification, we use a weight sensor to check that the weight of the tube yarn under inspection complies with the production standard. For tube yarn hairiness detection, we combine a machine vision image feature extraction method with an improved MobileNetV2 that includes the proposed Multi-Scale Cross-Fusion Attention Module, which achieves excellent detection results. For stain detection, we modify YOLOX by replacing its original backbone network with a more lightweight feature extraction network. With this improvement, our stain detection method reaches higher detection speeds while maintaining good detection results. The system uses machines instead of humans to carry out tube yarn defect detection, basically meets the needs of industrial production, and can be applied in factories. In future work, defect detection could be achieved with a lighter network, and the real-time performance of the system could be further improved with other lightweight detection networks. The aim is for the system to meet the detection requirements of most products and to be applicable to a wider range of applications.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported in part by Innovation Capability Support Program of Shaanxi (No.2021TD-29), in part by the Youth Innovation Team of Shaanxi Universities, in part by the National Natural Science Foundation of China (No.62176204), and in part by Key research & development plan of Shaanxi province (2022GY-066).