Abstract

In this article, we examine Person of Interest (POI; CBS, 2011–2016), a television series about global surveillance, to reflect on popular understandings of surveillance and to account for its representation of artificial intelligence (AI) and algorithmic surveillance. Drawing on television, media, and surveillance studies, we focus on the power of representations of social relations and diegetic technologies in possible and imagined futures to explore the role of cultural representations in shaping social order. Through the Machine, the AI character that embodies algorithmic surveillance, we evaluate the show’s critique of algorithmic autonomy and contend that, as a media technology of surveillance, the show participates in the hype of big data as a panacea while banalizing surveillance. We argue that POI could facilitate a comprehensive analysis of the politics of algorithmic surveillance but fails to do so because of its uncritical representation of artificial intelligence agencies in detecting security risks.

Introduction

First launched in the fall 2011 season as one of CBS’s flagship TV programs, 1 Person of Interest (POI) is a science fiction drama about global surveillance and prediction machines that spanned five seasons and 103 episodes. The show introduces Team Machine, a self-appointed group of secret operatives using top-level algorithmic surveillance 2 developed by the US government to counter terrorism and preempt violent crimes. Although POI is science fiction, it resonated strongly with its times (Rothman 2014; Takacs 2016), all the more so in the wake of the 2013 Snowden leaks, which revealed extensive government surveillance programs conducted by the National Security Agency. 3 As Jonathan Nolan, the show’s creator and first executive producer, stated, POI is “maybe the only show. . . that started out science fiction, and then by season three was basically a documentary.” 4

Of course, POI did not become a documentary, but it invites viewers into a broader discussion about the use of surveillance for national security purposes. For Nolan, the show consciously and explicitly adopted a critical posture toward surveillance and its possible consequences for civil liberties. In an interview with The New Yorker, Nolan defended his show against the charge of being an “apologia for the surveillance state.” As he averred: “I totally reject that. If there’s a cynicism to the show, it’s in the premise not just that this is inevitable but that it’s already happened. The science fiction part of the show is that the Machine is accurate, but the invasion of civil liberties is not imaginary” (Rothman 2014). In this article, we examine POI and popular culture to reflect on popular understandings of the political and social consequences of surveillance and to account for the show’s representation of artificial intelligence (AI) and algorithmic surveillance. We explore how the show, as popular media acting both as a media technology of surveillance and as a representation of one, participates in the hype of big data as a panacea while simultaneously banalizing surveillance. Even if Nolan sees science fiction as shielding the show from criticism, we argue that POI partly falls short of its critical aspirations.

Rather than analyzing the show “as text” for its realist depiction of surveillance, we take into account the role of television as a cultural and political force in the social fabric of American life, and we focus on the power of representations of social relations and diegetic technologies in possible and imagined futures, drawing on interdisciplinary contributions from television (Casey et al. 2008; D’Acci 2004; McCabe 2011; Mittell 2009; Newcomb 2006; Steenberg and Tasker 2015), media (Couldry 2000), and surveillance studies (Lyon 2018; Monahan 2022; Zimmer 2015) to make our case. These contributions share a concern for the role of cultural representations in shaping social order. We contend that POI’s procedural and serial format, together with its content, could facilitate a comprehensive analysis of the politics of algorithmic surveillance but fails to do so because of an uncritical representation of AI agencies in detecting security risks. The show’s seemingly intimate representation of algorithmic surveillance, its proposed opening of the notorious AI black box, remains incomplete. In the end, notwithstanding the critical intention of its author, POI reproduces what boyd and Crawford (2012) identify as the “mythology of big data” and participates in the normalization of surveillance practices (such as facial recognition technology; Andrejevic and Volcic 2021; Gates 2002; Hill et al. 2022). For boyd and Crawford, big data is as much a matter of new technologies (more powerful computing) and new methods of analysis (pattern recognition and anomaly detection) as it is a “mythology: the widespread belief that large data sets offer a higher form of intelligence and knowledge that can generate insights that were previously impossible, with the aura of truth, objectivity, and accuracy” (p. 663). By reproducing this mythology, POI tends to heighten belief in the accuracy of surveillance practices.
In this sense, whether audiences accept this belief matters less than its representation in the show, which normalizes the use of algorithmic surveillance by security institutions: in other words, POI itself is an expression of the mythology that boyd and Crawford posit. 5

In the following sections, we first set out our theoretical framework and contextualize our analysis, examining the politics of televisual popular culture and explaining how it may contribute to both the normalization and the critique of the national security state. We then explore POI’s representation of surveillance and how POI may be understood as a surveillant narration device for studying algorithmic surveillance. In the third section, we open the black box of algorithmic surveillance to show how it works, deepening the analysis of its portrayal through the character of the Machine. This allows us to argue that POI normalizes surveillance by the national security state, before we turn to the show’s critique of algorithmic autonomy.

Setting the Context and Situating the Analysis

Representing Surveillance Technologies and Possible Futures on Television

This piece assesses the representations of surveillance technologies in a single television program and is concerned with the power of media representation. Media studies emphasize the critical analysis of media to understand how societies function and change, and how media structure social reality (Couldry 2000). Media studies coalesce around the influence of media power and “the conditions and power relations of the societies in which media operate,” as Ouellette (2013) argues in the introduction to her Media Studies Reader (p. 1). This piece is therefore grounded in both media studies and television studies.

Television studies examines, among other things, “the role of television in shaping social order” and emphasizes how “it has the capacity to help construct the society in which it sits and that, in turn, gives television and the agencies which produce it a remarkable power” (Casey et al. 2008, x). Most importantly, television is a mass medium: media representations can shape perceptions of reality, and mass media do so on a mass scale (Esquenazi 2017). Television series participate in the constitution of our political, social, and geopolitical imaginary (Heller 2005). Esquenazi (2017) concurs: “television programs have been oriented almost everywhere in the world toward a kind of almost obsessive follow-up of current events, a scripted version of reality or more exactly of a spectacular and immediate vision of social and political reality” (p. 212; our translation). Television allows us to experience, and critique, society and political life, with the undeniable advantage of showing “fictional realities” that present tensions and of making the audience feel emotions by confronting them with staged words, images, and thoughts. In addition, television studies have documented and exposed “the profound influence [of television] on social life and the rapidly changing technology” (Casey et al. 2008, xi). Our analysis does not seek a realist rendition in representation; it focuses instead on the social relations and technologies the show presents as imagined futures and possibilities.

The prolific cinematic and televisual productions resonating with the US national security state from World War II onward make for a special relationship between American society and how it is imagined on screens, big and small (O’Meara et al. 2016). As a result, national security entertainment can serve as a form of advertisement for the national security state or as a critique of it (Spigel 2004; Takacs 2012; Tasker 2012). Since 9/11, some of the most popular TV series have featured the national security state (Melley 2012, 5), including 24 (on Fox, 2001–2010), The Unit (on CBS, 2006–2009), POI (on CBS, 2011–2016), Homeland (on Showtime, 2011–2020), and Westworld (on HBO, 2016–2022). Popular culture has become an ideal platform to study the depiction of national security scenarios and the politics of the national security state (Jackson and Daniel 2003).

The blurring of the lines between reality and fiction is certainly not unprecedented, as collaboration between the Pentagon and the entertainment industry goes back as far as the Second World War. The novelty, however, is that the American public has become somewhat accustomed to such fetishism of national security. As Kumar and Kundnani (2013) aptly point out, “film and television become arteries through which the national security state circulates its latest obsessions.” These popular representations of surveillance technologies and the national security state make such practices seem right, all the more so because television is a highly accessible medium (Letort 2017). Lenoir and Caldwell (2018) echo this in their conclusion on the influence of popular cultural representations from the military-entertainment complex after 9/11: “The popularity of TV programs, games, and films dealing with the War on Terror and imagined future warfare ensures that whether or not you support the weaponization of drones and the automation of surveillance for identifying potential terrorist, you are predisposed to accept them as simply the way we conduct war today” (p. 47).

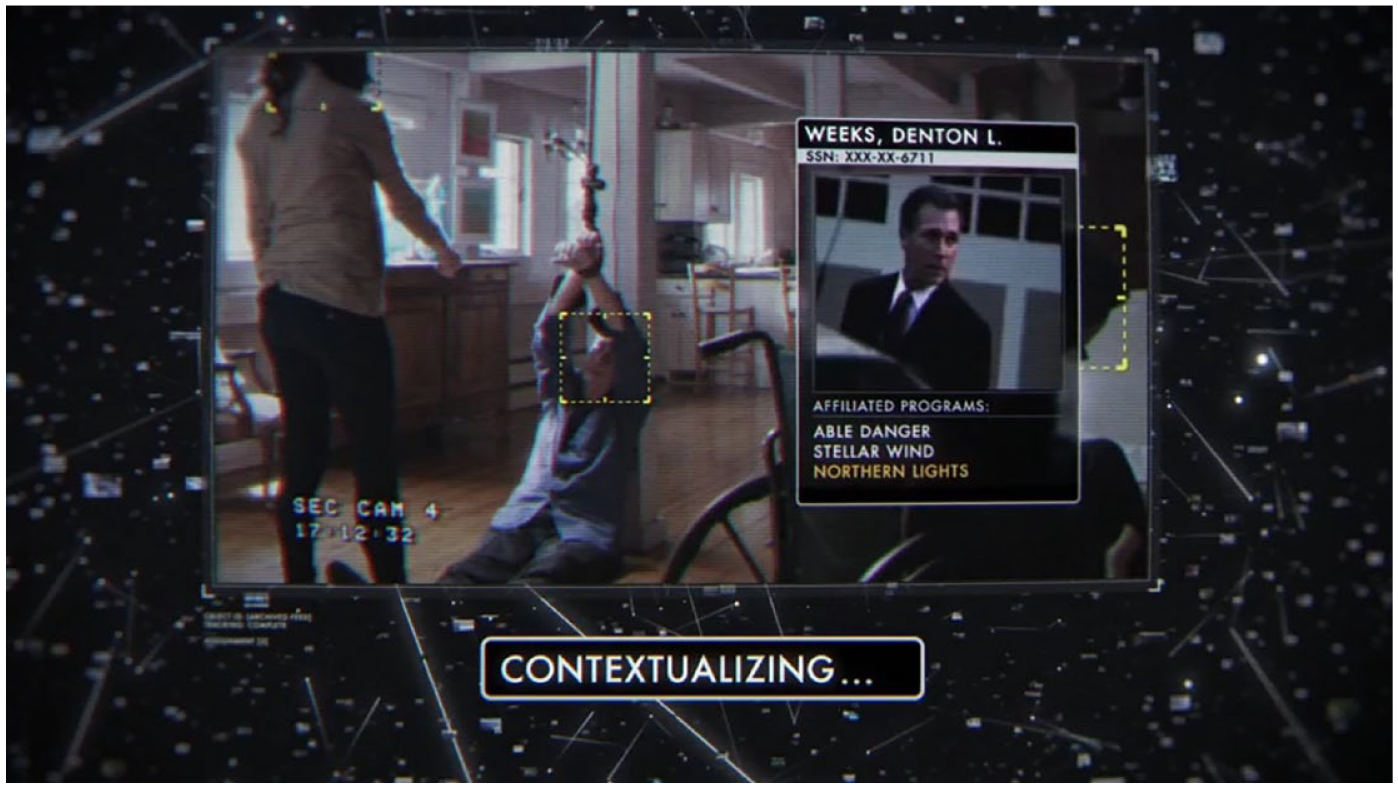

Diegetic Technologies on TV as Imagined Experiences

POI is a science fiction drama that vacillates between being a cop show and an intelligence show. The series follows a team of six main human protagonists 6 helped by the Machine. The emergent artificial superintelligence, coded and created by the character Harold Finch, has a life of its own (and is sometimes referred to as “Her”) and becomes a key protagonist. Each episode follows the same format: we see the Machine in action, in a flashback using CCTV and facial recognition to show people being targeted and digitally traced through a number produced by the Machine’s algorithmic system. The show then skips to the present and hints at the future, where viewers see glimpses of what may come, all from the multiple points of view of the Machine’s eyes (see Figure 1). This opening sequence reinforces the feeling that viewers can see algorithmic surveillance from the inside, through the Machine’s eye. Conceived as an antiterrorism tool, the Machine came to detect all kinds of future violent acts, which is why its creator “de-commissioned” it from the security state, fearing it would be misused. The Machine monitors and analyzes data (from surveillance cameras, electronic communications, and audio input), calculates risks of violence, and makes predictions. Using the information produced by the Machine, the Team works in secret to stop crimes and terrorist acts from happening, preventing futures from materializing (Hansen 2015, 212–213). Hence, in each episode, viewers follow the Team as it works to rescue the person linked to the number from corrupt state or police forces, solving the case by the episode’s end. Larger story arcs run throughout the show, all revolving around the relationship to the Machine, technology’s role in shaping society and the fabric of life itself, and our inability to evade the ever-watchful eye of the surveillance society.
In this sense, a television series like POI functions as a media technology that provides an ideal platform for studying both surveillance technologies and media as surveillance, understood as “narrative processes of surveillance” and “visual rhetorical devices” of fictional representations of surveillance.

Figure 1. The Machine identifying target subjects.

Going beyond popular culture as a reflection or mirror of society allows us to engage with this televisual experience as an esthetic experience and critique of society and political life by playing out imagined scenarios with the technologies embedded in the fiction’s narrative structure. Beyond the criticism of the technological drift that characterizes most science fiction works, science fiction remains rich in imag(in)ing and reflecting on many elements of social life, representing social universes, technological innovations, and organizational and human contexts based on current circumstances or our everyday life. This is what Kirby (2010) describes as “diegetic prototypes,” that is, “cinematic depictions of future technologies.” He asserts that these diegetic prototypes can “demonstrate to large public audiences a technology’s utility, harmlessness, and viability” (p. 195). It is as if popular culture, with such diegetic technologies, offers a glimpse of a future to come so that we can see the future now and evaluate current surveillance technologies and practices. And, as Kirby aptly points out, “diegetic prototypes have a major rhetorical advantage even over true prototypes: in the fictional world—what film scholars refer to as the diegesis—these technologies exist as ‘real’ objects that function properly and that people actually use” (p. 195).

The imagined experiences of these diegetic technologies should not be underestimated, as they may allow a questioning that society has not yet been able to undertake. For instance, Steven Spielberg’s film Minority Report (2002), based on Philip K. Dick’s novella, features biometric identification as a diegetic prototype and interrogates biometrics as everyday technologies. In one scene, John Anderton (played by Tom Cruise) enters a store in the shopping mall and is greeted as Mr. Yakamoto: he has been given new eyes, Mr. Yakamoto’s, to hide from the police state and its use of iris identification technologies. The everyday use of biometric surveillance was not possible in 2002 when the movie came out; it could be possible today, yet it is not deployed in this way in shopping malls. Minority Report shows the importance of diegetic prototypes in popular culture. In the case of a popular television program like POI, popular culture can take on an educational dimension to help us imagine and reflect on phenomena. This is what the media may offer within the rules of a fictional world: social representations of working technologies that may influence the real world. What Kirby concludes here about cinema may certainly be said about television: Narratives in cinema require certainty to move their stories forward. Cinematic texts require technologies to work and provide utility to their users. Technological objects in cinema are at once both completely artificial—all aspects of their depiction are controlled—and normalized as practical objects. Characters treat these technologies as a “natural” part of their landscape and interact with these prototypes as if they are everyday parts of their world. . . Thus, the need for a potential technology is established as given within the framework of a popular film (p. 196).

How We Study Algorithmic Surveillance in Popular Culture

Person of Interest as a Surveillant Narration Device

Through its visual scripting of the artificially superintelligent Machine, a television series such as POI puts the logic of preemption into operation, imagining possible futures as part of a surveillance architecture used to manage the living environment of the US national territory. From the beginning, POI looks eerily like our present, but it turns into a dystopia, with the frightening scenario of omniscient surveillance enabled by the ever-increasing proliferation of surveillance cameras and the growing intelligent capabilities of mobile technologies.

Melley (2012) developed the concept of the “covert sphere” to understand the interplay between fictional and real worlds in relation to the national security state. A covert sphere consists of “a cultural imaginary both forged by institutional secrecy and public fascination with the secret work of the state” (p. 5). A TV show like POI allows us to see the national security state “in action.” It makes viewers more aware of, and familiar with, “algorithmic security and surveillance,” the geopolitical assemblage of security that deals with big data: data encoded to an algorithmic subject and drawn from the geoweb, remote sensing technologies, private security companies’ intelligence, and surveillance knowledge, as well as knowledge produced by intelligence and national security institutions (Crampton 2015, 3). This algorithmic surveillance depends on the “sensor society” described by Andrejevic and Burdon (2015), “a world in which the interactive devices and applications that populate the digital information environment come to double as sensors” (p. 20). The Machine embodies the link between the sensor society and the security assemblage.

Although popular representations such as POI do not constitute accurate portrayals of algorithmic surveillance and the national security state, they are informed by actual events and give meaning to practices and concepts that lie beyond most people’s reach. As surveillance studies scholar Marx (1996) often reminded us, popular culture was integral to “developments in technology”: “art, science fiction, comic books, and films have anticipated and even inspired surveillance devices and applications to new areas” (p. 194). The images of surveillance technology depicted in popular culture are therefore highly informative for understanding contemporary everyday applications. The Orwellian Big Brother from the 1949 novel 1984 and Philip K. Dick’s “precrime” from his 1956 novella Minority Report stand as predictive or behavior control technologies of surveillance that are long-standing in popular culture and through which we grasp their meaning in our digital surveillance context; the prevailing CCTV technologies readily show how some “[c]ontrol technologies have become available that previously existed only in the dystopic imaginations of science fiction writers” (Marx 2002, 9).

In line with Marx, Kammerer (2004) points out how, in the 1990s, “mainstream commercial cinema has seen an obvious trend to integrate the imagery and the esthetics of video surveillance into the film itself, and/or to make the consequences, blessings or terrors (as the case may be) of a dooming ‘surveillance society’ the subject matter of an entire movie” (p. 468). The same may be said of the 2000s and 2010s television series that made CCTV and data surveillance a typical feature. As Muir (2012) explains, cinema lends itself as: an ideal medium for considering the ways in which the invisible can be made visible, particularly in relation to a surveillance society which is increasingly characterised by “invisible” technologies which are embedded into the fabric of urban architecture. [. . .] In so doing, however, it is possible that cinema itself becomes complicit in the very surveillant assemblage that it seeks to portray and critique (p. 264).

For this analysis, we follow Levin’s (2002) rendering of “surveillance as a form of cinematic narration” (p. 583). More specifically, he terms this evolution of 1990s mainstream cinema “surveillant narration,” which is characterized by an ambiguity “between surveillance as narrative subject, that is, as thematic concern, and surveillance as the very condition or structure of narration itself” (p. 583). This leads him to contend that “surveillance has become the condition of the narration itself. In other words, the locus of surveillance has thus shifted, imperceptibly but decidedly, away from the space of the story, to the very condition of possibility of that story. Surveillance here has become the formal signature of the film’s narration” (p. 583; emphasis added).

We speak of cinematic narration devices to designate the diegetic representations of algorithmic surveillance offered as the rendition of the surveillance society in Jonathan Nolan’s POI. While TV shows like Black Mirror have devoted several episodes to criticizing the surveillance society’s 7 invasion of privacy spawned by digitalization, the fact remains that its first two seasons aired on the UK’s Channel 4 starting in 2011, and the series was only picked up by Netflix for streaming with season 3 in 2016. POI, by contrast, was broadcast in prime time on CBS, the most-watched mainstream network in the US, reaching a wider audience at a time when streaming was not as predominant as it is now. Examining how POI normalizes algorithmic surveillance as a diegetic technology will allow us to understand the national security state in surveillance television as more than entertainment, by foregrounding in POI a critique of both diegetic and real-life algorithmic surveillance.

Visualizing Ubiquitous Algorithmic Surveillance: Privacy and Beyond

POI makes visible the surveillance state that Nolan sees as threatening civil liberties. As Rothman (2014) observes: “in ‘P.O.I.,’ private data is a naive fantasy: data streams that seem secure can be easily stolen, decrypted, and combined, if not by the government then by someone else.” The privacy lens, however, is of limited use for appraising the politics of surveillance and capturing the social transformations set in motion by mass algorithmic surveillance, because it reduces the issue to the individual, their rights, and respect for privacy rules. It remains blind to how the socio-technical structure of surveillance enacts power or weakens the integrity of individual identity (Cheney-Lippold 2017). More specifically, demanding privacy in the security context misunderstands how security works (Coll 2014). Security institutions look for a “‘nexus’ of suspicions” (De Goede 2014, 102) triggered by connecting data and anomaly detection systems (Aradau and Blanke 2018). Only once these nexuses are identified do they seek to know more about the identity of the person qualified as a risk. As part of the national security state, algorithmic surveillance brings to the fore the politics of algorithmic knowledge, risk assessments, and the dangers of the securitization of everyday life.

POI is neither the first nor the only television show or film to struggle to raise a comprehensive critique of surveillance. From that perspective, an anthology show like Black Mirror, built as multiple stories, can cover a wider range of current or foreseeable social issues linked to surveillance and digital technologies than a serial show. Yet this is precisely the interest, and the weakness, of POI. Its serial format represents the functioning of algorithmic surveillance in detail. While POI offers, through the Machine, this intimate and seemingly comprehensive view of algorithmic surveillance, it fails to raise the related political and social issues, remaining focused on the disappearance of privacy.

Analyzing the Show: Opening the Black Box of Algorithmic Surveillance in Person of Interest

The Efficient Machine: Normalizing Algorithmic Surveillance as an Instrument of National Security

The algorithmic security assemblage is not simply the background of POI. The Machine embodies algorithmic security and the national security state’s process of mathematically sorting data. As the Machine progressively acquires a dominant position in the story arc, 8 algorithmic security moves to the forefront of the narrative. In the process, POI comes to illustrate the importance of what Pasquinelli (2015) names the “algorithmic vision”: “a new epistemic space inaugurated by algorithms and [a] new form of augmented perception and cognition” (p. 3), which is characterized by “a general perception of reality via statistics, metadata, modeling, mathematics” (p. 8). However, despite the show’s attempt to make the inner workings of algorithms visible, to un-box surveillance, POI never explains how the numbers are produced, nor how the Machine’s algorithms succeed in sorting vast amounts of data and accurately raising some numbers over others. In other words, the show fails to question the technology’s efficiency and the consequences of claiming security through algorithms.

Running a multitude of data analysis programs concurrently, the Machine accomplishes two main computational tasks: (1) finding anomalies that trigger alerts about possible threats and victims through clustering and network analysis and (2) running predictive scenarios to foresee the future. For these two tasks, the Machine obtains a perfect score. Of course, POI is a TV show: protagonists win, the ending is written. There is no doubt that a malicious act would occur if it were not for the intervention of Team Machine. While this narrative suits the series, it reproduces the promise that with the help of algorithms, security institutions can know with absolute certainty what will unfold in the future. This recalls the heuristic use of precognitive visions by “the precogs” in Minority Report, which leads to people being found guilty by anticipation on the basis of “premonitory proofs,” or the simulations in The Matrix; both remove the very possibility of choice from human agents. This teleological reading of the future confirms the need for preemptive action. Yet, for the national security state, no such certainty exists. Risks first exist as imagined possibilities: events more or less likely to happen, with more or less severe consequences, from terrorism to cyberattacks. Thus, the difficulty for security actors is coping with this radical uncertainty in the context of foreseen catastrophes. To solve this problem, they claim agency through technologies (calculations, statistics, and scenarios) that frame risk, making it actionable and legitimate “to act [in the present] upon uncertain futures” (Amoore and Raley 2017, 2). These security practices are profoundly political and potentially detrimental to suspected individuals and to society more generally.
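
The distance between the Machine’s perfect score and real-world uncertainty can be made concrete with a base-rate calculation. The Python below is a minimal sketch under purely hypothetical assumptions: the figures and the function name flag_precision are ours, not drawn from the show or from any real security system. It applies Bayes’ rule to estimate what share of flagged people are genuine threats when the events being predicted are rare.

```python
def flag_precision(base_rate, sensitivity, false_positive_rate):
    """Share of flagged people who are true cases (positive predictive value),
    computed with Bayes' rule. All inputs are probabilities between 0 and 1."""
    true_pos = base_rate * sensitivity                 # real threats correctly flagged
    false_pos = (1 - base_rate) * false_positive_rate  # innocent people wrongly flagged
    return true_pos / (true_pos + false_pos)


# Hypothetical figures: 1 in 100,000 people is a genuine threat; the system
# catches 99% of them and wrongly flags only 1% of everyone else.
p = flag_precision(base_rate=1e-5, sensitivity=0.99, false_positive_rate=0.01)
print(f"{p:.4%}")
```

Even with these generous hypothetical accuracy figures, fewer than 1 in 1,000 flagged individuals would be a genuine threat; all the others would be erroneously targeted. A system facing rare events cannot deliver the certainty the Machine displays on screen.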

In this context, algorithms and big data have become vital instruments for security institutions, acting as “suspecting machines” (Amicelle and Grondin 2021). As Amoore and De Goede (2008) observe, the national security state has come to believe that “knowledge about future risk is always already present in the data, if only information on transactions patterns can be effectively integrated and mined” (p. 174). Algorithms offer an appealing solution to this problem: a liaison between “collect it all” and “connect the dots” (Heath-Kelly 2017), and, as one defense contractor suggests, the promise of “limitless intelligence” (Crampton 2015, 520). They are used to aggregate collected data and insert them into security scenarios to identify potential risks. Anomaly detection 9 is seen as an avenue to identify, from a mass of data, the “unknown unknowns,” a phrase popularized by US Secretary of Defense Donald Rumsfeld (Aradau and Blanke 2018, 2). Anomaly detection filters and sorts data by establishing similarities, then singles out anomalies, that is, “dissimilar, dissonant, or discrepant” data, within the dataset. Algorithms automatically identify which data seem out of place without the need for an analyst to imagine possible risks in advance. In other words, anomaly detection promises an efficient way of producing actionable intelligence to discover the unexpected before it happens (Aradau and Blanke 2018, 6–7).
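
The logic of anomaly detection described above can be illustrated with a deliberately simple sketch. The Python below assumes a single numeric feature and flags records whose z-score (distance from the dataset’s mean, measured in standard deviations) exceeds a threshold. Real security systems use far more elaborate techniques; the data, the function name flag_anomalies, and the threshold value are hypothetical.

```python
from statistics import mean, pstdev


def flag_anomalies(values, threshold=2.0):
    """Return the indices of values lying more than `threshold` standard
    deviations from the dataset's mean (a simple z-score rule)."""
    mu = mean(values)
    sigma = pstdev(values)  # population standard deviation
    if sigma == 0:
        return []  # every record is identical, so nothing stands out
    return [i for i, v in enumerate(values) if abs(v - mu) / sigma > threshold]


# Hypothetical records: daily outgoing calls for ten accounts.
daily_calls = [12, 9, 11, 10, 13, 8, 11, 10, 12, 64]
print(flag_anomalies(daily_calls))  # -> [9]
```

No analyst specifies in advance what a threat looks like: the tenth account is flagged only because it is dissimilar to the rest of the data, which is precisely the promise of discovering the unexpected.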

However, numerous authors have taken issue with the idea that knowledge unproblematically emerges from the algorithmic analysis of large datasets (Gitelman 2013; Kitchin 2014). At every stage, from the production and collection of data to its sorting and analysis, and from the practices that data empower to the interventions that rewrite the algorithms, humans and machines select information and promote some knowledge over others, which weakens the “promise of algorithmic objectivity” (Gillespie 2014). Pasquinelli (2015) reports, for example, that the algorithm behind the predictive policing software PredPol is copied from the mathematical equations established to predict earthquakes (p. 7). Although the similarity between the two causal relations is unclear, authorities still use the algorithm, raising the risk of “seeing patterns or connections in random or meaningless data” (p. 9). The faith and investment in big data analytics for security and counterterrorism run counter to the evidence-based policymaking mantra that Western governments pursue. In that sense, the national security state subscribes to boyd and Crawford’s (2012) big data mythology. Big data generate statistical inferences, not objective knowledge or irrefutable evidence.

Suspicion is also not equally distributed. Algorithms target individuals because of group statistics (Gandy 2012), intervene in the present because of past police records (Benbouzid 2018), feed on stereotypes present in national security discourses (Amoore 2009), and materialize discrimination. The automation of these relations of power does not make them any less real (Eubanks 2018). Similarly, resorting to anomaly detection does not depoliticize algorithmic surveillance. The automatic identification of anomalies among similar data may, as Aradau and Blanke (2018) suggest, avoid the need to define norms beforehand. Yet the whole process of creating the databases from which algorithms work involves selecting, filtering, indexing, and excluding data to keep only the types deemed relevant to identifying risks (Hogue 2021). In other words, even if anomaly detection does not require programmers to set queries beforehand, developers still need to select specific types of data thought to provide insight (communication metadata to reconstruct social graphs, or geolocation data to build mobility patterns) and then leave analysts to judge the security value of the flagged data afterward. As Pasquinelli (2015) observes, “the construction of norms and the normalization of abnormalities is a just-in-time and continuous process of calibration” (p. 8). Analysts and developers are left tinkering with algorithms to obtain the most useful results as they develop and analyze norms of risk.
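
A toy z-score detector shows why anomaly detection does not escape human choices. In the Python below, an illustrative assumption rather than any real system’s method, the records, the feature names calls and km, the helper names zscores and flagged, and the thresholds are all hypothetical. Which person is flagged depends entirely on which feature developers select and where analysts set the threshold.

```python
from statistics import mean, pstdev


def zscores(values):
    """Standardize values: distance from the mean in standard deviations."""
    mu, sigma = mean(values), pstdev(values)
    return [0.0 if sigma == 0 else (v - mu) / sigma for v in values]


def flagged(records, feature, threshold):
    """Indices of records whose selected feature deviates beyond `threshold`."""
    scores = zscores([r[feature] for r in records])
    return [i for i, z in enumerate(scores) if abs(z) > threshold]


# Hypothetical records: daily calls and kilometers traveled per person.
records = [
    {"calls": 10, "km": 5},
    {"calls": 11, "km": 6},
    {"calls": 9, "km": 40},
    {"calls": 30, "km": 5},
    {"calls": 10, "km": 4},
]

print(flagged(records, "calls", 1.5))  # -> [3]: selecting call volume flags person 3
print(flagged(records, "km", 1.5))     # -> [2]: selecting mobility flags person 2
print(flagged(records, "calls", 2.0))  # -> []: a stricter threshold flags no one
```

Neither the feature nor the threshold comes from the algorithm itself: both are calibrated by people, and person 3’s score (about 1.99) falls on one side or the other of suspicion depending on that calibration.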

Far from offering easy access to social truth, algorithmic surveillance is a technology of power that filters and sorts data (and people) to automate the exercise of governance (Lyon 2003). Like previous technologies of government, algorithmic surveillance normalizes, excludes, and is limited by its biases. It contributes to the securitization of society as it inserts data from all spheres of social life into risk calculations (Heath-Kelly 2017). Biases and violence remain part of anomaly detection, leading Aradau and Blanke (2018) to wonder “how [to] reconnect techniques of producing dots, spikes, and nodes with vocabularies of inequality and discrimination” (p. 20). POI cannot answer this question because it cannot see the relations of power embedded in algorithmic surveillance. Invariably, the “persons of interest” the Machine identifies are victims of malicious acts needing protection, never erroneously targeted victims of racial discrimination. The structural violence produced by suspicion is absent from the surveillant narration in POI. The Machine’s accuracy and usefulness in preempting crime and protecting the innocent mask this inescapable violent aspect of algorithmic surveillance.

The Subaltern Machine: On Autonomous Decision-Making and Responsibility

Stretched between observing the disappearance of privacy under surveillance and the optimism of algorithmic security, POI shares the unease and ambiguity that many observers feel toward big data and algorithms (boyd and Crawford 2012, 664). Yet, as the show develops, POI tries to move out of this uncomfortable position by sounding the alarm against the danger of autonomous algorithmic decision-making. As Nolan observes: “The moment we’re in is one in which data goes from being passive—something that we avail ourselves of—to being active. It’s a moment in which the data starts to direct us. [. . .] Computer systems will soon begin dictating, in ever less subtle ways, the paths of our lives” (Rothman 2014). The fear of AI taking control over humans is a common theme of the science fiction genre, and POI is no exception. It raises awareness about AI’s growing agency and autonomy and suggests that “good” algorithms must remain under human control.

The “Deus Ex Machina” episode 10 (POI, episode 22, season 1) marks a turning point: the Machine is no longer alone. It introduces Samaritan, a competing superintelligent surveillance system that threatens to bring down the Machine and her team. Unlike the Machine, which can only identify persons of interest when assessing risks from surveillance data, Samaritan, a fully automated algorithmic system, can also designate victims and perpetrators. In the Machine’s case, the human members of the Team contextualize her assessments and determine each person’s “role” as a victim or an aggressor. Once these roles are assigned, the Team starts working to preempt the crime. Samaritan introduces a different type of algorithmic surveillance: it assesses risks, but it also decides the course of action and manipulates its human “assets” to achieve its ends. While the Machine ultimately depends on humans to make the final decision that distinguishes the aggressor from the victim (the ethical judgment over good and evil), Samaritan relegates humans to the role of minions.

Samaritan’s access to extensive databases from the US national security state and its autonomy in determining threats depict a dystopian future in which algorithms may oversee decisions with prejudicial effects on people. The contrast between Samaritan and the Machine reinforces this dystopia. With the Machine, POI limits algorithmic autonomy and reaffirms the human team members’ responsibility in determining anomalies and risks, so that humans serve as a fail-safe against algorithmic malfunction (Amoore and Raley 2017, 5). The show also emphasizes the need for ethics in (security) decisions when it presents the long process through which Finch teaches morality to the Machine. Through games of chess, 11 Finch explains the indeterminacy of the future and the doubt that this radical uncertainty must generate when forecasting possible futures. He also teaches her that, unlike in chess, where a pawn is less important than the queen, it is impossible to attribute value to people.

The Machine’s opposition to Samaritan reinforces her morality. Samaritan’s lack of morality leads it to sacrifice human beings to protect the nation and itself. Despite the good intentions behind the Samaritan program (i.e., to protect Americans against terrorism), unleashing this all-powerful machine proves disastrous both for its initiators, who are killed by their creation, and for the nation, when it targets the New York Stock Exchange and causes a power outage in the city. As Trendacosta (2016) observes, “Samaritan’s goal isn’t something so rigidly defined as preventing bodily harm. It’s control and order, so it’s willing to rig elections, hire assassins, and eliminate anything it deems ‘deviant.’ There’s no higher morality value in Samaritan’s goal.”

The issues of algorithmic autonomy, decision-making, and responsibility raised in POI are thus of great importance. However, the clear-cut distinction the show draws between the roles of humans and algorithms is misleading. Determining whether humans or algorithms are responsible for decisions is complicated. Current models of algorithms imply continuous interactions with their human counterparts, who propose research hypotheses, evaluate their effectiveness, and act as permanent arbiters (Amoore and Raley 2017, 5–6). From the operators’ point of view, algorithms select and partition information and influence decisions. For Gillespie (2014), this close relation between algorithms and humans is a “recursive loop between the calculations of the algorithm and the ‘calculations’ of people” (p. 183). The Machine’s objective selection and transmission of data to her operators are as improbable as Samaritan’s complete autonomy. POI remains trapped in a humanist understanding of the ethics of responsibility, one that echoes the national security state’s justification for indiscriminate surveillance (if no human sees it, it is not surveillance; Aradau and Blanke 2015, 4), and it poorly reflects the world and consequences of security decision-making.

Conclusion: A Mythology of Possible and Imagined Futures of the Algorithmic Surveillance Society

POI projects an informed and politically significant fantasy of the secretive world of algorithmic security surveillance: politically significant because, despite its science fictional nature, it normalizes the efficiency of surveillance technology for security purposes. And yet, Monahan (2018) reminds us to be wary of the “algorithmic fetishism” associated with a search for algorithmic transparency, where one cannot escape using “surveillance as a corrective to surveillance” (p. 2). POI offers an attempt at imagined algorithmic transparency over the possible futures of the surveillance society. It seeks to provide a critical reading of the disappearance of privacy and of the dangers that surveillance may pose to civil liberties. Ultimately, it argues that surveillance is, or at least can be, for the greater good: it can efficiently preempt violence if put into “the right human hands.” But the social consequences of algorithmic surveillance go beyond privacy or usefulness. Algorithmic surveillance participates in the government of life and in the making of acceptable subjects and marginalized undesirables. In that sense, it enacts new forms of violence that POI is unable to see. In the process, the show reproduces the mythology that portrays algorithmic surveillance as unproblematically objective and efficient. By normalizing the use of surveillance by the national security state, POI falls into the kind of unintentional complicity against which Muir (2012) warned (p. 264).

POI tries to resolve this tension by challenging the autonomy of algorithmic surveillance and raising the issue of decision-making and responsibility. “As the series aged,” Takacs (2016) observes, “the do-gooding procedural elements gave way to greater complexity and seriality, and the show began to question its own initially reassuring premises. It now asked, is a total surveillance society about protecting innocents or disciplining them?” (p. 126). While POI imagines protective surveillance through the Machine, Samaritan demonstrates that surveillance can lead to harm when an algorithm alone is left to decide between good and evil. In so doing, the show asks an increasingly relevant question that, given the spread of algorithms across all spheres of society, we will all be forced to answer. Unfortunately, POI’s clear division of labor between the Machine and her team, and its dichotomous representation of algorithms either as dangerous autonomous agents (Samaritan) or as efficient surveillance tools (the Machine), leave viewers with a flawed understanding of the complex, entangled relations that bind humans to their computers.

Footnotes

Acknowledgements

We would like to thank the anonymous reviewers for their insightful critiques and suggestions, which improved the article by making our arguments clearer and stronger, as well as Rudy Leon for help with copyediting. David Grondin would especially like to acknowledge Simon Glezos, Kyle Grayson, and Marc Doucet, who provided constructive feedback as discussants on the two panels where the first iteration of this article was presented: at the International Studies Association annual meeting in New Orleans in 2015 and at the Canadian Political Science Association annual meeting in Ottawa in 2015.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: Funding for the research done for this article comes from the Social Sciences and Humanities Research Council (SSHRC) of Canada (Ref. 435-2018-1022).