Abstract

360-degree photography is an emerging form of visual media that is increasingly used in research. However, little methodological evidence exists on the use of this technology for field-based data collection and analysis. Drawing on an “urban objects” research project, we describe procedures to capture, process, store, code, and analyze 360-degree digital photos, highlighting considerations particular to this imaging format. 360-degree photography is a powerful field method, particularly for research that requires “whole scene capture” and/or strives to analyze the relational connections between objects and their wider surrounding environments. The procedures outlined are applicable to field-based research projects across the social sciences.

Introduction

360-degree photography (also called full-panoramic, omnidirectional, or spherical photography) is a growing form of visual media, driven by the availability of consumer-grade 360-degree cameras and software over the past decade. (See Roche et al. [2025] for a discussion of the various terms used for 360-degree imaging and the need for shared definitions.) The technology offers the potential for fully panoptic data capture at a time of growing interest in visual methods in the social and behavioural sciences, for both “produced” and “found” images (Rose 2023). 360-degree photographs are widely available through street-level imagery platforms such as Google Street View, and research using such found images has grown rapidly, even though many of these uses contravene Google’s terms of service (Cinnamon and Jahiu 2021; Helbich et al. 2024).

There is a small but growing empirical literature on the application of 360-degree photography in social science fieldwork (e.g., Cinnamon 2024; Gómez Cruz 2017). However, documented procedures for producing 360-degree images in the field are limited (exceptions include Cinnamon and Gaffney 2022; Raudaskoski 2023), and, to our knowledge, no previous work has documented procedures for coding and analyzing 360-degree photos captured in the field.

In this short contribution, we offer a step-by-step procedure for capturing, coding, and analyzing 360-degree photographs, drawing on evidence from our project on the aesthetics of digital platforms as they show up in cities (Leszczynski et al. 2025). Research on urban digital platforms typically coalesces around the impacts of the “platformization” of goods, services, and amenities on economies, labor, transportation, and everyday life in the city (e.g., Leszczynski 2020; Stehlin et al. 2020). In our study, we took a different approach, examining the visual impact of platform operations (including on-demand delivery, ridehailing, and micromobility sharing) to answer the research question: What do cities look like under conditions of digital platformization? For our empirical fieldwork in six cities in three countries, we developed a method for the visual capture and analysis of urban scenes using 360-degree photography. The method examines the visual impact of the urban objects (Lieto 2017) of platformization within the wider built environment of the city, such as insulated cubes used in on-demand delivery, shared e-scooters, and signs designating ridehailing pick-up/drop-off points. It provides a systematic way to examine such platformized urban objects as material expressions of political and economic change in cities (Leszczynski and Kong 2022; Pilo’ and Jaffe 2020).

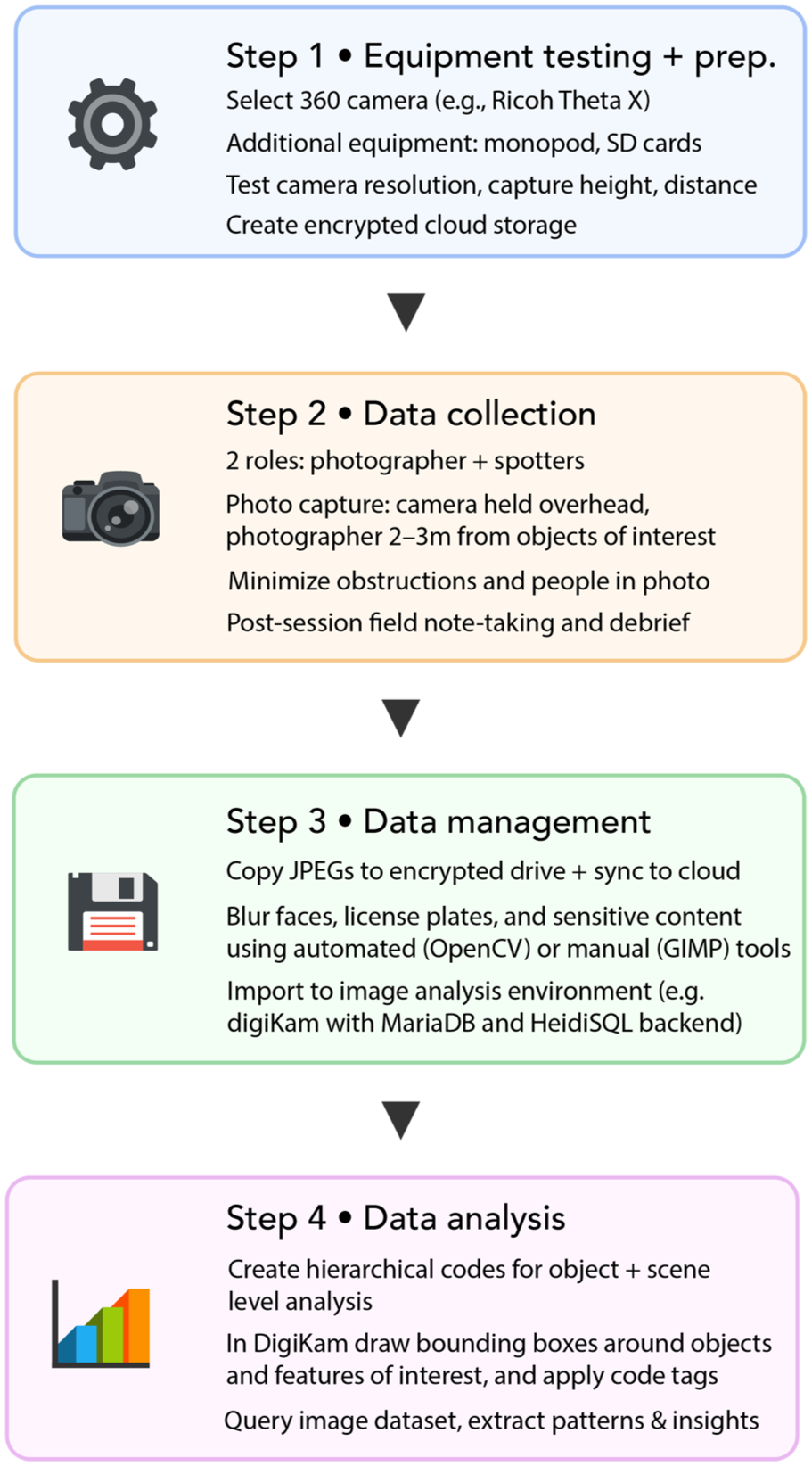

In the following sections, we detail how to capture, process, store, code, and analyze 360-degree digital photos, highlighting considerations particular to this imaging format (summarized in Figure 1). While the focus of this project is on urban objects, the procedures described below are applicable to a diverse range of social science research in urban and rural contexts.

Figure 1. 360-degree photo method workflow.

Procedure for 360-degree photo capture and analysis

Step 1: Equipment testing and project preparation

Before beginning data collection, we addressed three preparatory questions: what to capture photographs with, how to capture them, and where to store the image data. We chose a mid-range 360-degree camera suitable for our project (RICOH Theta X) after weighing cost, capabilities, resolution, memory, battery life, and charging time. This camera simultaneously captures two 180-degree images, stitches them in-camera into a single fully spherical image, and uses a GPS sensor to write location coordinates to each photo’s metadata. We tested the camera’s capabilities in a spacious park to identify the optimal resolution, camera height, and distance between the photographer and objects. Finally, we created an encrypted cloud-based drive for storing image data and transferring it securely and remotely.
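During equipment testing, it may help to check that the GPS coordinates the camera writes to each photo can be used for mapping. The EXIF standard stores latitude and longitude as three rational values (degrees, minutes, seconds) plus a hemisphere reference (N/S or E/W). The following is a minimal Python sketch that converts such values to signed decimal degrees. It assumes the values have already been read from the JPEG with an EXIF library of the researcher's choice. The function name `dms_to_decimal` and the example coordinates are hypothetical.

```python
from fractions import Fraction


def dms_to_decimal(dms, ref):
    """Convert EXIF-style (degrees, minutes, seconds) to signed decimal degrees.

    dms: three numbers; EXIF stores them as rationals, modelled here with Fraction.
    ref: hemisphere reference, one of "N", "S", "E", "W".
    """
    degrees, minutes, seconds = (Fraction(v) for v in dms)
    value = degrees + minutes / 60 + seconds / 3600
    # By convention, southern latitudes and western longitudes are negative.
    if ref.upper() in ("S", "W"):
        value = -value
    return float(value)


# Hypothetical coordinates, as they might be read from a photo's GPS tags.
lat = dms_to_decimal((49, 16, Fraction(4824, 100)), "N")
lon = dms_to_decimal((123, 6, Fraction(5100, 100)), "W")
print(round(lat, 5), round(lon, 5))
```

Exact `Fraction` arithmetic avoids accumulating rounding error before the final conversion to `float`, which matters little at this scale but keeps the conversion faithful to the stored rationals.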

Step 2: Data collection

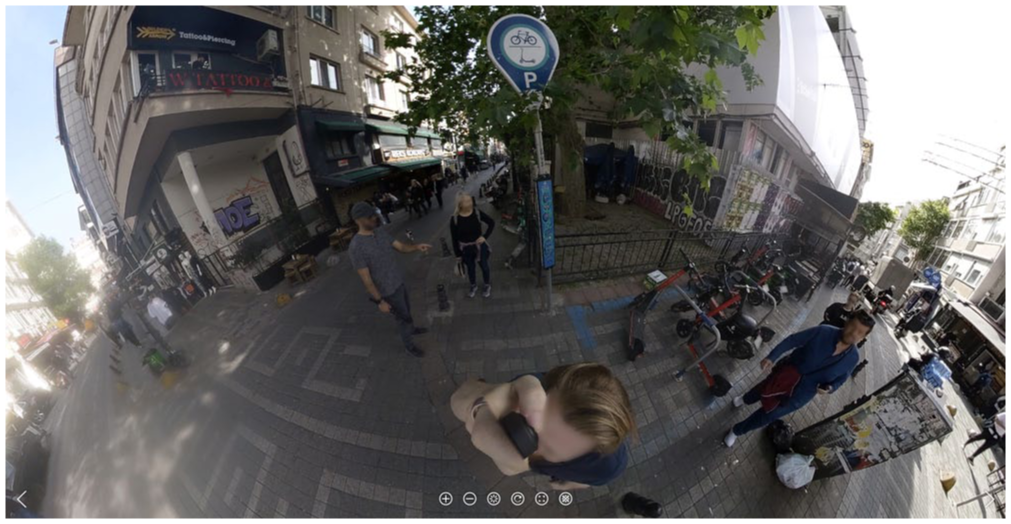

Data collection was informed by our embodied immersion in the urban environment: we walked through the city, capturing 360-degree photographs of urban platform objects and their surrounding environments (Figure 2). At least two team members were required for data collection, each acting in one of two roles. The photographer operated the 360-degree camera and equipment, while the “spotters” walked ahead of the photographer to identify instances of urban platform objects. Building on recommendations for DIY production of street-level imagery (Cinnamon and Gaffney 2022), we identified three best practices for the photographer.

Figure 2. The 360-degree photo data collection process.

First, photos should be captured from a fixed position, with the camera held directly over the photographer’s head (20–50 cm above it) on a monopod or selfie stick to minimize the photographer’s presence in the photograph. Second, the photographer should stand close to the object, but far enough from it (about 2–3 m) that the surrounding environment is equally visible within the image. Third, the photographer should wait for the optimal moment to capture, which is constrained in particular ways by the 360-degree format: because the camera records in every direction, anything near the capture location will be visible in the resulting photo. A key consideration for this study was therefore minimizing obstructions, including passing vehicles, pedestrians, and the spotters themselves.
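The 2–3 m guideline trades detail on the object against visible context. One rough way to reason about this trade-off during testing uses the equirectangular projection. In that projection, 360 degrees of yaw map linearly onto the full image width. An object of width w, facing the camera at horizontal distance d, therefore spans about 2·atan(w / 2d) degrees, or that angle times (image width / 360) pixels. Camera height does not change this horizontal span, because yaw depends only on horizontal position. The sketch below assumes the object's width is perpendicular to the line of sight. The function name, the 0.5 m object width, and the 6,000-pixel image width are hypothetical example values, not the settings used in this study.

```python
import math


def equirect_pixel_width(object_width_m, distance_m, image_width_px):
    """Approximate horizontal pixel span of an object in an equirectangular photo.

    Assumes the object's width is perpendicular to the line of sight and that
    distance_m is the horizontal distance from the camera to the object's centre.
    Yaw maps linearly to image columns, so only the subtended yaw angle matters.
    """
    yaw_span_deg = math.degrees(2 * math.atan(object_width_m / (2 * distance_m)))
    return yaw_span_deg * image_width_px / 360


# Hypothetical values: a 0.5 m wide object in an image 6,000 px wide.
for d in (1.0, 2.0, 3.0, 5.0):
    print(f"{d:.0f} m: ~{equirect_pixel_width(0.5, d, 6000):.0f} px wide")
```

Running such a calculation alongside the park tests could help confirm whether an object at 2–3 m stays large enough to code reliably at the chosen resolution.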

On busy streets, it may be impossible to capture photos without unwanted objects or people in them. In this situation, one option is for the photographer to take the image with their back turned to people near the object of interest, so that those people do not feel they are in view or the intended subject of the image. This may be important given passersby’s varied attitudes toward being photographed, even though photography in public space is legal in most settings. After each data collection session, post-fieldwork note-taking provided a forum to document the day’s observations and discuss strategies for the next day’s fieldwork.

Step 3: Data management

After data collection, images were copied as JPEG files from the camera memory card to an encrypted computer hard drive and synced to the secure cloud storage location. Before analysis, license plates and faces were blurred to obscure them. Two options were tested: (1) a manual protocol using the blurring tools in GIMP (free and open-source graphics software; https://www.gimp.org/); and (2) an automated protocol written in Python using OpenCV (a free and open-source computer vision library; https://opencv.org/). The manual approach was preferred because the automated protocol, which had to process images in equirectangular format, could not accurately identify faces and license plates that were distorted or split at the image stitch line.
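In an equirectangular image, one place where features get split is the wrap-around seam at the left and right edges, where the sphere is cut open. A face or plate straddling this seam appears as two partial fragments. A common workaround for automated detection, not part of the protocol described here, is to run the detector a second time on a copy shifted horizontally by half the image width. NumPy's `np.roll(image, shift, axis=1)` produces such a copy, and any detections are then mapped back to the original. The minimal sketch below shows only that coordinate mapping, in plain Python. The detector and the `(x, y, w, h)` box format are assumptions, and the function name `unroll_box` is hypothetical. The workaround does nothing for projection distortion, the other problem noted above.

```python
def unroll_box(box, image_width, shift):
    """Map a box detected in a horizontally shifted equirectangular image back
    to the original image.

    box: (x, y, w, h) in the shifted image, where the shifted image satisfies
         shifted[:, i] == original[:, (i - shift) % image_width]
         (the result of np.roll(original, shift, axis=1)).
    Returns one box, or two boxes if the region wraps across the left/right seam.
    """
    x, y, w, h = box
    # Original column of the box's left edge.
    x0 = (x - shift) % image_width
    if x0 + w <= image_width:
        return [(x0, y, w, h)]
    # The box crosses the seam: split it into a right-edge piece and a left-edge piece.
    right_w = image_width - x0
    return [(x0, y, right_w, h), (0, y, w - right_w, h)]


# Hypothetical example: a 100-px-wide image shifted by half its width.
print(unroll_box((45, 20, 10, 8), image_width=100, shift=50))
# → [(95, 20, 5, 8), (0, 20, 5, 8)]
```

Each returned box could then be blurred in the original image, so a feature detected whole in the shifted copy is obscured on both sides of the seam.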

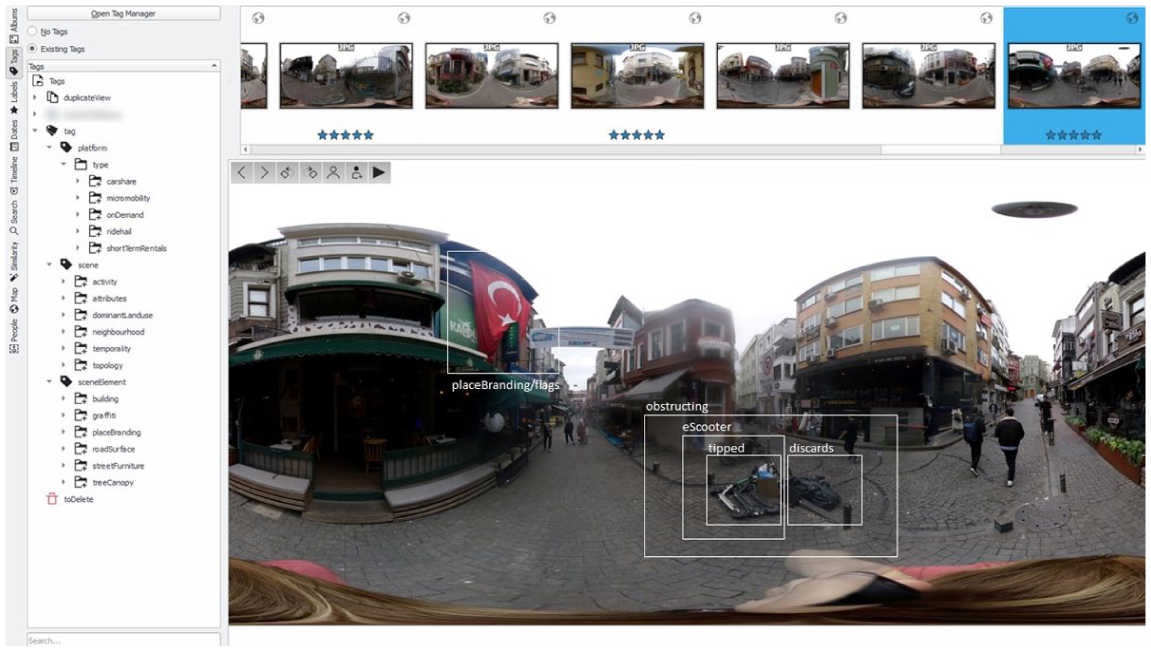

We used digiKam (https://www.digikam.org/), a free and open-source (FOSS) image organizer and tag editor, to code and analyze the photos. To store image metadata and codes in databases, we set up a backend for digiKam using MariaDB (https://mariadb.org/), a FOSS relational database management system, and HeidiSQL (https://www.heidisql.com/), a FOSS database administration tool. In digiKam, the file root was set to the drive created in Step 1, with the image dataset organized by city and date.
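Organizing the dataset by city and date can be scripted at the point where files are copied off the camera. digiKam presents the folders inside a collection as albums, so this layout then appears as city and date albums. The sketch below assumes the capture timestamp has been read from each photo's EXIF `DateTimeOriginal` field, which the EXIF standard stores as `YYYY:MM:DD HH:MM:SS`. It also assumes the researcher supplies the city name for each session. The folder layout, function names, and example filename are hypothetical, not a digiKam requirement.

```python
import shutil
from datetime import datetime
from pathlib import Path


def destination_for(root, city, exif_datetime, filename):
    """Build root/<city>/<YYYY-MM-DD>/<filename> from an EXIF DateTimeOriginal string."""
    taken = datetime.strptime(exif_datetime, "%Y:%m:%d %H:%M:%S")
    return Path(root) / city / taken.strftime("%Y-%m-%d") / filename


def file_photo(src, root, city, exif_datetime):
    """Copy one JPEG into the city/date folder structure, preserving file timestamps."""
    dest = destination_for(root, city, exif_datetime, Path(src).name)
    dest.parent.mkdir(parents=True, exist_ok=True)
    if dest.exists():
        # Refuse to silently overwrite a photo that has the same name.
        raise FileExistsError(dest)
    shutil.copy2(src, dest)
    return dest


# Hypothetical example: where a photo taken on 14 June 2023 in "CityA" would go.
print(destination_for("/data/photos", "CityA", "2023:06:14 10:32:05", "R0010042.JPG"))
```

Copying with `shutil.copy2` rather than moving leaves the originals on the memory card until the synced copy has been checked.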

Step 4: Data analysis

Prior to conducting a qualitative visual content analysis (Rose 2023) of the 360-degree photographs (n = 1,293), the research team sketched a preliminary coding schema. Through discussion and reference to our post-fieldwork notes, we identified three hierarchical groups of codes, ranging from “micro” to “macro” geographical sites. The first group, “platform,” contained codes describing the platform object in the image (e.g., an improperly parked e-scooter). Codes in the second group, “sceneElement,” categorized the environment immediately surrounding the platform object (e.g., the façade of a building). Codes in the third group, “scene,” described the broader environment encompassing the first two groups (e.g., a neighborhood’s name). We then created a digitized coding dictionary that listed code paths and definitions for selected first-, second-, and third-level codes. Codes from the coding dictionary were entered into digiKam using the “Tag Manager” tool. To code the photographs (in equirectangular format), we drew bounding boxes around features and assigned each a code (Figure 3). Once coding was complete, the full image dataset could be queried by date, location, and code by entering keyword strings into digiKam’s “Advanced Search” tool.
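The three-level schema can be represented as a coding dictionary keyed by slash-separated code paths, mirroring the tag hierarchy built in the Tag Manager. The minimal Python sketch below validates such a dictionary against the three groups. It then filters coded photo records by code subtree, date range, and a latitude/longitude bounding box. It assumes the records have been exported from the image database into memory. Every code path, definition, field name, and record is hypothetical. The `query` function only illustrates the filtering logic and is not digiKam's Advanced Search.

```python
from dataclasses import dataclass, field
from datetime import date

GROUPS = ("platform", "sceneElement", "scene")  # micro -> macro, as in the schema

# Hypothetical coding dictionary: code path -> definition.
CODEBOOK = {
    "platform/eScooter/improperlyParked": "Shared e-scooter blocking a sidewalk or entrance.",
    "platform/deliveryCube": "Insulated cube used for on-demand delivery.",
    "sceneElement/facade": "Building facade immediately behind the platform object.",
    "scene/neighborhood/Downtown": "Neighborhood in which the photo was taken.",
}


def validate_codebook(codebook):
    """Return code paths whose first level is not one of the three groups."""
    return [path for path in codebook if path.split("/")[0] not in GROUPS]


@dataclass
class Photo:
    filename: str
    city: str
    taken: date
    lat: float
    lon: float
    codes: set = field(default_factory=set)


def has_code(photo, prefix):
    """True if any code equals prefix or sits beneath it in the hierarchy."""
    return any(c == prefix or c.startswith(prefix + "/") for c in photo.codes)


def query(photos, code_prefix=None, start=None, end=None, bbox=None):
    """Filter by code subtree, inclusive date range, and (min_lat, min_lon, max_lat, max_lon)."""
    results = []
    for p in photos:
        if code_prefix and not has_code(p, code_prefix):
            continue
        if start and p.taken < start:
            continue
        if end and p.taken > end:
            continue
        if bbox:
            min_lat, min_lon, max_lat, max_lon = bbox
            if not (min_lat <= p.lat <= max_lat and min_lon <= p.lon <= max_lon):
                continue
        results.append(p)
    return results


# Hypothetical records.
photos = [
    Photo("a.jpg", "CityA", date(2023, 6, 14), 49.28, -123.11,
          {"platform/eScooter/improperlyParked", "sceneElement/facade"}),
    Photo("b.jpg", "CityB", date(2023, 7, 2), 43.65, -79.38, {"platform/deliveryCube"}),
]
print([p.filename for p in query(photos, code_prefix="platform/eScooter")])
# → ['a.jpg']
```

Matching on `prefix + "/"` rather than a bare string prefix keeps a query for one branch (e.g., `platform/eScooter`) from accidentally matching a sibling code whose name merely starts with the same letters.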

Figure 3. Screenshot of the digiKam software showing a 360-degree image in equirectangular format. Bounding boxes indicate the locations of coded urban objects (e.g., an improperly parked e-scooter; an instance of place branding). The full image dataset is accessible in the top ribbon, and the tags/codes are listed in the left panel.

Conclusion

This visual method provides a basis for systematic and comprehensive analysis of objects in their wider contexts, through what we call whole scene capture (Cinnamon et al. 2025). By capturing 360-degree photos (Step 2) and querying the image database (Step 4), researchers can identify spatial patterns of objects between and within cities, as well as analytical themes, which in our study included the aesthetic alignment of objects in relation to the built environment. The key advantage of this method is the ability to record an entire scene, including both the object of interest and its complete surrounding context. Compared with the conventional framed cameras used in photo-based field methods, whole scene capture also provides a stronger basis for a “capture-now-consider-later” methodology, potentially allowing theories and ideas to emerge more inductively. This advantage, however, produces complex image data that can require extensive effort to code and analyze, potentially yielding a dataset that is “too rich” (Raudaskoski 2023:134). We estimate that the time needed for Steps 3 and 4 (data management and coding, respectively) is at least equal to that needed for field data collection (Step 2), so researchers should consider whether whole scene capture is needed for their project. Beyond the urban objects research described here, we expect this method to translate well to a wide range of research contexts. The procedures could also be readily integrated into many existing “walk-along methods” (Martini 2020) focused on data collection and analysis.
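As a simple illustration of comparing cities once the dataset has been coded, the coded bounding boxes can be tallied by city and by code branch. The minimal sketch below assumes the records were exported from the image database as `(city, code_path)` pairs, one per coded bounding box. The records, city names, and function name are hypothetical. Raw counts also reflect how much ground was covered in each city, so they would likely need normalizing before cities are compared.

```python
from collections import Counter, defaultdict


def tally_by_city(records, depth=2):
    """Count coded instances per city, truncating code paths to `depth` levels.

    records: iterable of (city, code_path) pairs, one per coded bounding box.
    """
    counts = defaultdict(Counter)
    for city, code_path in records:
        key = "/".join(code_path.split("/")[:depth])
        counts[city][key] += 1
    return counts


# Hypothetical records.
records = [
    ("CityA", "platform/eScooter/improperlyParked"),
    ("CityA", "platform/eScooter/parked"),
    ("CityA", "platform/deliveryCube"),
    ("CityB", "platform/deliveryCube"),
]
for city, counter in sorted(tally_by_city(records).items()):
    print(city, dict(counter))
```

Truncating paths with `depth` lets the same records be summarized at the first, second, or third level of the coding hierarchy.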

Footnotes

Funding

This research was funded by a Canadian Social Sciences and Humanities Research Council (SSHRC) Insight Grant, Award #435-2022-1542.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.