Abstract

We compared trust game behavior in three environments: (1) the standard laboratory with physically present participants (Laboratory); (2) an online environment with an online meeting before playing the trust game (Online plus a meeting); and (3) an online environment without a meeting (Online without a meeting). In Laboratory, participants convened in a classroom and interacted via computers. In Online plus a meeting, participants could see each other in an online meeting before sessions started. In Online without a meeting, participants did not see each other during the experiment. The experimental design enables examination of the influence of the environment on choice behavior. Participants in all conditions were recruited through the same pool of subjects. We did not observe a statistically significant difference between the treatment conditions regarding trust or trustworthiness. Our results suggest that online experiments yield results similar to those of experiments implemented in a laboratory.

Introduction

Experiments in human interaction are increasingly conducted online. Online experiments offer possibilities for large and diverse samples and for cross-cultural comparisons at reasonable cost (Hergueux and Jacquemet 2015). Many social interactions also take place online, which calls for comparisons of online and face-to-face behavior. Yet, existing evidence does not give a univocal picture of behavioral differences between online and laboratory environments. Growing experience of online interaction may also undermine the generalizability of research conducted some years ago. We study whether reliable data on trust game behavior can be acquired through online experiments, and whether behavioral trust and trustworthiness differ in face-to-face versus online interactions.

Trust toward another person is a belief in the other’s reciprocity (Berg et al. 1995), whereas trustworthiness means acting in accordance with this expectation. We compare behavior in the trust (investment) game (Berg et al. 1995) in three environments: in a laboratory where participants are physically present; in an online environment with an online meeting before playing the trust game; and in an online environment without the meeting. In the trust game, two participants are randomly assigned to sender and responder roles. Both participants are endowed with a sum of money. The sender first decides how much, if any, to send to the responder. Any money sent to the responder is multiplied by the experimenter and given to the responder, who then decides how much to send back to the sender. While both participants benefit if money is sent and returned, the individually payoff-maximizing strategy is to send nothing.

We focus on the trust game because there is a large literature on trust game behavior in the laboratory (Johnson and Mislin 2011; van den Akker et al. 2020) and some evidence on online trust game behavior (Fiedler and Haruvy 2009; Hergueux and Jacquemet 2015). Moreover, trust and trustworthiness in online interactions are of special interest. While online interaction types vary, many of them take place in a global community among strangers without physical contact and are typically characterized by a delay in product delivery, making trust and trustworthiness crucial.

Literature Review and Hypotheses

Why would behavior be different in laboratory and online interactions? First, the experimenter has less control over participants in the online setting compared to the laboratory. The laboratory reduces the number of unobservable factors that might influence behavior, such as disruptions or noise (Morton and Williams 2010:ch. 8). In an online environment, reading instructions and decision-making may be careless and quick, and the experimenter cannot monitor participants. It is unlikely, though, that the lack of monitoring would generate systematic behavioral differences.

The degree of anonymity also varies between the laboratory and online environments, which may cause systematic differences. Anonymity is usually greater in online interactions and potentially leads to increased dishonesty or riskier decision-making compared to closely monitored laboratory settings (Mazar and Ariely 2006). Anonymity likewise influences possibilities for reputation building, which plays a crucial role in the trust game (Charness et al. 2010). However, we studied a one-shot trust game that did not provide possibilities for reputation building.

Anonymity can influence social desirability (Thielmann et al. 2016; Vorlaufer 2019). If participants’ choices are monitored, they may behave in ways they assume to be socially desirable (Hoffman et al. 1994). The association between anonymity and social desirability has given rise to a vast number of studies, especially concerning survey answers (Dodou and Winter 2014; Lelkes et al. 2012; Ried et al. 2022; Vorlaufer 2019). If the laboratory gives a better opportunity to monitor participants, the influence of social desirability can be greater. The physical presence of the experimenter can also increase socially desirable behavior.

Anonymity can also influence experiences of social distance (Charness and Gneezy 2008). When participants see one another, behavior can be influenced by perceived social distance to others. Social distance theory (Akerlof 1997) suggests that online interactions can impair social preferences because participants cannot recognize a common background (Hergueux and Jacquemet 2015). In construal level theory, social distance refers to a psychological construction of other people as socially close or distant to oneself (Liberman and Trope 2008). The theory does not directly predict behavior but rather delineates how distance influences the ways in which objects are construed: close objects are construed in concrete terms and distant objects in abstract ones. Empirical observations on the effects of construal levels on behaviors are somewhat mixed and emphasize the effects of moderating factors (Chen 2020; Nan 2007; Park and Morton 2015; Park et al. 2020; Schuldt et al. 2018).

The physical presence of others in the laboratory versus a more abstract presence of online others could influence how others are mentally construed, but it is not clear what the behavioral consequences of the mental constructions are. Concrete others may give rise to more prosocial behavior compared to abstract others because of a more detailed knowledge of others’ needs and interests. However, a high-level construal may also emphasize joint outcomes over outcomes to oneself (Stillman et al. 2018). Yet another approach to differences in online and laboratory environments is the ingroup–outgroup division, which has clear behavioral consequences in the form of ingroup favoritism (Cikara et al. 2011). The laboratory allows participants to realize that they belong to the same ingroup or, for example, are students of the same university, whereas the online environment may give more indirect signs about a shared background.

Finally, anonymity can pertain to participants’ beliefs about playing with a real counterpart. If participants do not see one another and do not know anything about other participants, they may suspect they are playing against a computer instead of a real person (Eckel and Wilson 2006; Frohlich et al. 2001).

Empirical evidence on the influence of anonymity is mixed. Some studies suggest that the degree of anonymity does not have behavioral consequences (Barmettler et al. 2012; Umer 2020). Others have observed less prosocial or cooperative behavior under anonymity (Franzen and Pointner 2012; Wang et al. 2017), whereas others suggest that greater anonymity in fact boosts pro-sociality (Dufwenberg and Muren 2006). The influence of anonymity may also depend on the interaction situation, or the methods used to vary the degree of anonymity (Charness and Gneezy 2008; Vorlaufer 2019). Thielmann et al. (2016) observed low levels of trust when anonymity was maximized and suggest that anonymity may be particularly relevant for trust game behavior. Finally, a meta-study shows that playing against a real person increases trust game sending compared to simulated counterparts even though participants are not told about being paired with a computer (Johnson and Mislin 2011).

What does empirical evidence say about behavioral differences in online and laboratory environments? Despite broad literature on online experiments (Eckel and Wilson 2006; Grech and Nax 2020; Hedegaard et al. 2011), controlled comparisons of online and laboratory environments are less common and existing evidence is mixed. Evidence on public good games suggests that the online and laboratory environments lead to similar behavior (Arechar et al. 2018). A study on a lost-wallet game demonstrated that trust and reciprocity were less frequent in the online mode compared to the laboratory, but differences were rather small (Charness et al. 2007). A comparison of trust game behavior in a laboratory and a virtual world revealed that laboratory participants both sent and returned larger proportions of their endowment compared to the virtual world participants (Fiedler and Haruvy 2009). A similar study observed that behavioral trust was lower in a virtual world, but trustworthiness was higher (Füllbrunn et al. 2011).

A problem with these studies is that laboratory and virtual world participants were recruited from different pools: students and virtual world residents. Virtual world residents were people with heterogeneous backgrounds, represented by avatars in existing virtual worlds. It is possible that the samples rather than the experimental setting account for the observed difference. Closest to our design is a study comparing laboratory and online environments with a variety of games (Public Good, Trust, Dictator, Ultimatum) (Hergueux and Jacquemet 2015). The authors observed more other-regarding behavior in online interactions compared to the laboratory. A shortcoming is that not everything apart from the experimental environment was held constant. The online participants could complete the experiment at any time they wanted to, and they were matched with participants from previous sessions. The payment method was also different for laboratory and online participants. Prissé and Jorrat (2022) addressed these issues and designed a study in which they varied the environment, online or laboratory, while holding everything else constant. They studied a variety of choice tasks excluding, however, the trust game. Prissé and Jorrat observed that online and laboratory environments gave rise to similar behavior across the choice tasks, except for a larger proportion of online participants donating something in the dictator game.

Our contribution lies in a controlled comparison between online and offline environments as well as testing a “middle” type, in which participants can see one another before the session starts although the experiment is conducted online.

Hypotheses

We compare trust game behavior in three conditions: online and laboratory environments, and an online environment with an online meeting before the trust game. In the laboratory, participants were seated in cubicles and could have reasonable confidence that they were interacting with other humans. The online meeting condition mimics the laboratory because participants could see other participants in the meeting (by Zoom) before the trust game was played. The pure online condition took place without the meeting. In all conditions, the trust game was played anonymously via computers using the same software (O-tree [Chen et al. 2016]), and participants were present at the same time in their experimental session. In the Zoom sessions, the experimenter was visible in the meeting before the sessions started. We call the treatment conditions: Laboratory, Online plus a meeting and Online without a meeting. Our aim was to create conditions where the degree of anonymity would increase when moving from Laboratory to Online without a meeting.

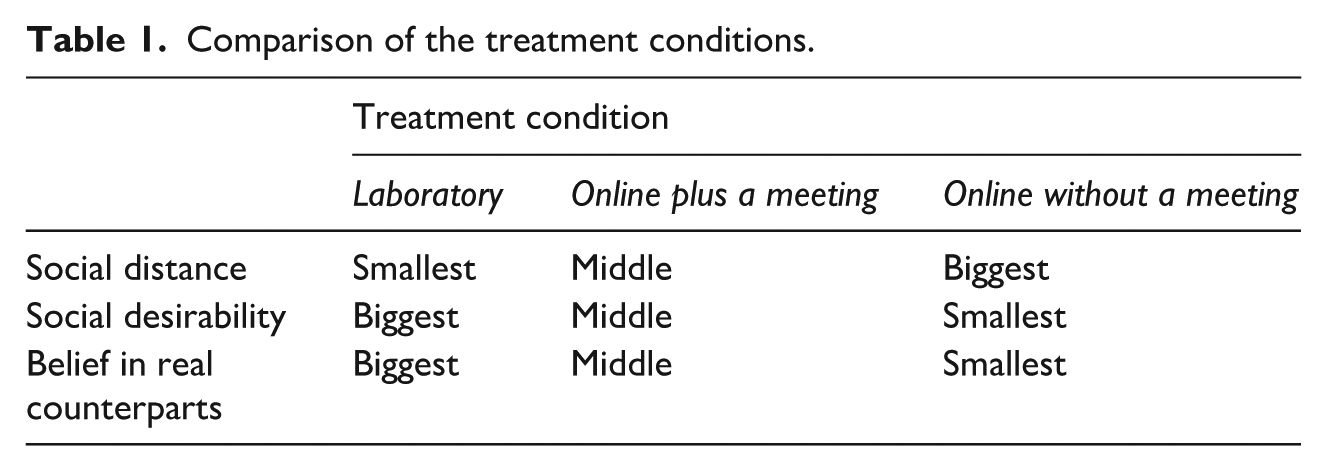

We assume that social distance is smallest in Laboratory, where participants can see that they are all university students of about the same age. Online without a meeting is expected to give rise to the biggest social distance because participants cannot see one another and thus cannot become aware of social similarity. Online plus a meeting falls between these two because participants can see each other, but it is possible that seeing others via the computer does not correspond to the experience of physical presence. In an online meeting, participants cannot observe others’ bodies and movements, whereas their own face is visible to them, unlike what usually happens when meeting others physically (Peper et al. 2021). However, it is possible that in the online meeting participants realize their common background as young students. We assume that social desirability is greatest in Laboratory, where the experimenter and other participants are physically present, and smallest in Online without a meeting, with Online plus a meeting falling in between. Regarding belief in real counterparts, Laboratory and Online plus a meeting are rather similar, whereas Online without a meeting can give rise to doubts about playing against a computerized program rather than a real person. Table 1 outlines the expected differences between the conditions.

Table 1. Comparison of the treatment conditions.

Small social distance, a big influence of social desirability, and a strong belief in real counterparts should increase trust game sending, whereas big social distance, a small influence of social desirability, and suspicion about real counterparts should reduce trust game sending. We therefore hypothesize that the largest amounts are sent in Laboratory and the smallest in Online without a meeting, with Online plus a meeting in the middle. Regarding responder behavior, we expect it to follow sender behavior because reciprocity is likely to motivate responders (Herne et al. 2022). It is also noteworthy that the amount sent sets an upper limit on how much responders can send back. Based on these expectations, we formulated two hypotheses:

H1: Trust game senders send most in Laboratory, least in Online without a meeting and in between in Online plus a meeting.

H2: Trust game responders return most in Laboratory, least in Online without a meeting and in between in Online plus a meeting.

Certain reservations pertain to our hypotheses. The difference between Laboratory and Online plus a meeting relies on the assumption that physical presence is different from an online presence, but we are not aware of evidence about this difference pertaining to trust game behavior. Nor can we rule out the possibility that the extensive increase in online interactions, to which the Covid-19 pandemic contributed, may have influenced online trust in ways not seen in studies conducted before the pandemic. It is also notable that while the experimenter and participants are not physically present in the online condition, other people may be present in a participant’s location. The design of the experiment was pre-registered in the Open Science Framework (https://doi.org/10.17605/OSF.IO/3BVE9).

Procedures

Participants

Participants were recruited from the participant pools of DMLab, Tampere University, and PCRClab, University of Turku, Finland. Both pools consist mainly of university students, and participants self-selected into the pools. An ethical approval statement was given by the Ethics Committee of Tampere Region in October 2022. Data collection procedures followed the European Union GDPR rules. An invitation by e-mail was sent to all participants in the pools (run by ORSEE [Greiner 2015]). One hundred forty-three participants came via DMLab and 107 participants via PCRClab. Enrolled participants were told that they would receive more information regarding the experiment (whether the environment was laboratory or online) a day before their session. Seventeen enrolled participants did not show up for their sessions, and seven participants were allowed to change from a laboratory session to a later online session because of unforeseen obstacles (e.g., becoming ill).

We aimed at 100 participants per condition but fell slightly short of this goal. Despite not reaching the target number of participants, our study is relatively well powered. Our target of 100 participants would have yielded 50 observations for sender behavior and 50 observations for responder behavior in each treatment condition. To detect a smallish effect size, Cohen’s d = 0.3, with ANOVA, 37 observations per condition would be needed to have a power of 0.80 at a significance level of 0.05. We organized 30 sessions and got 250 observations in total: 84 in Laboratory, 90 in Online plus a meeting, and 76 in Online without a meeting. The average number of participants per session was seven in Laboratory (12 sessions), 15 in Online plus a meeting (6 sessions), and six in Online without a meeting (12 sessions). The difference in the average number of participants between the two types of online sessions is due to an incidentally uneven number of last-minute dropouts.

Experiment Design

The experiment was conducted between November 2022 and February 2023. An identical trust game was played with the same software (O-tree), anonymously and with stranger matching, in each condition. The sender and the responder were each endowed with 100 experimental currency units (1 currency unit = 0.05€). The sender first decided on an amount x (0 ≤ x ≤ 100) to be sent to the responder. The sender kept 100 − x, and the amount sent was doubled, so that the responder received 2x. The responder then had 100 + 2x and decided how much of it, y (0 ≤ y ≤ 100 + 2x), to return to the sender. The game was played for one round plus an un-incentivized practice round. Participants were paid what they earned from the trust game plus a participation fee of 5€. Participants were randomly allocated to the player roles.
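The payoff structure described above can be sketched in a few lines of Python. This is an illustration only, not the O-tree implementation used in the experiment; the names `play_trust_game` and `Outcome` are hypothetical, and the sketch assumes that final points are converted to euros at the stated rate and added to the participation fee.

```python
from dataclasses import dataclass

ENDOWMENT = 100        # experimental currency units given to each player
MULTIPLIER = 2         # the amount sent is doubled
EURO_PER_UNIT = 0.05   # 1 currency unit = 0.05 EUR
PARTICIPATION_FEE = 5  # EUR, paid on top of trust game earnings


@dataclass
class Outcome:
    sender_points: int
    responder_points: int
    sender_euros: float
    responder_euros: float


def play_trust_game(x: int, y: int) -> Outcome:
    """Payoffs when the sender sends x and the responder returns y."""
    if not 0 <= x <= ENDOWMENT:
        raise ValueError("x must satisfy 0 <= x <= 100")
    available = ENDOWMENT + MULTIPLIER * x  # responder holds 100 + 2x
    if not 0 <= y <= available:
        raise ValueError("y must satisfy 0 <= y <= 100 + 2x")
    sender_points = ENDOWMENT - x + y       # kept amount plus amount returned
    responder_points = available - y
    return Outcome(
        sender_points,
        responder_points,
        round(PARTICIPATION_FEE + sender_points * EURO_PER_UNIT, 2),
        round(PARTICIPATION_FEE + responder_points * EURO_PER_UNIT, 2),
    )


# Hypothetical decisions: the sender sends 50 and the responder returns 60.
example = play_trust_game(50, 60)
```

With these hypothetical decisions both players end up with more than the 100 points they would hold if nothing were sent, which is the sense in which both benefit when money is sent and returned.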

In the trust game, the sender can show trust by sending points, and the responder can show trustworthiness by returning points. It is noteworthy, though, that sender behavior can reveal risk attitudes, altruism, or pro-sociality, rather than beliefs about the responder’s reciprocity. The responder can be motivated by altruism or pro-sociality in addition to reciprocating the sender’s behavior.

In all conditions, participants were first instructed and then played a practice round and one round of the trust game. Participants were told that the experiment would begin once their randomly chosen partner had arrived (paired experiment), and that they might have to wait a while for their partner’s decisions during the experiment. The experiment lasted on average 10 minutes per participant, and it took roughly the same time in the three environments. Participants also filled in a large pre-survey about a week before the experiment and a short post-survey immediately after the experiment (see Supplementary materials).

Results

Participants represented a large variety of majors, and the number of economics and business students was low (n=13). Participants’ background characteristics did not differ across the three conditions (see Table S1, Supplementary materials).

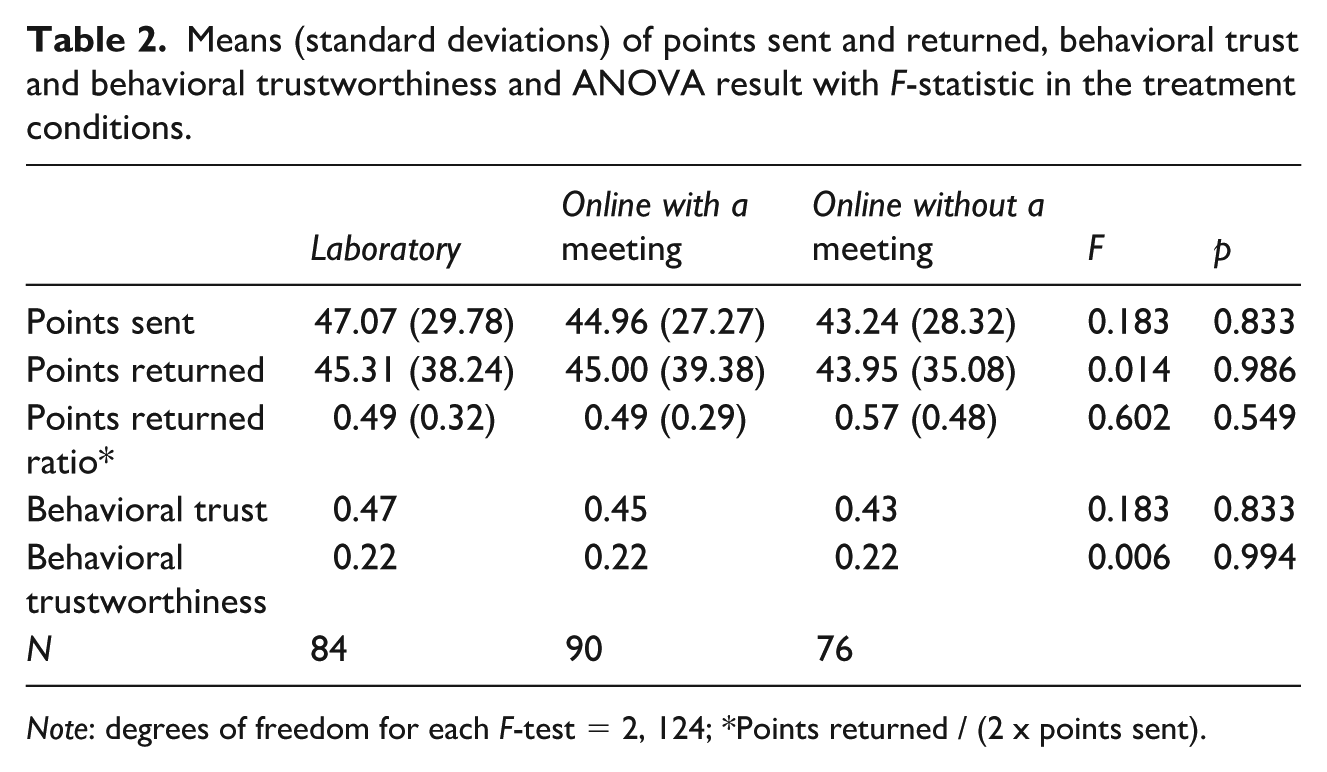

The overall mean of points sent was 45.14 (sd=28.27, min=0, max=100), and of points returned 44.78 (sd=37.44, min=0, max=160). Behavioral trust can be defined as the amount sent divided by endowment, and behavioral trustworthiness as the amount returned divided by the amount available to return (2 × points sent + responder’s endowment) (Johnson and Mislin 2011). Following this definition, mean trust was 0.45 and mean trustworthiness 0.22. In comparison to a meta-analysis (Johnson and Mislin 2011:871), both trust (0.45 vs. 0.50) and trustworthiness (0.22 vs. 0.37) were somewhat lower. However, the meta-analysis shows that variations in the experimental procedures tend to produce different results.
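As an illustration of the two definitions above, the following Python sketch computes behavioral trust and trustworthiness for a few sender–responder pairs. The pairs are hypothetical and are not data from the experiment. The sketch also assumes that the reported means are averages of the per-pair ratios, which the text does not state explicitly.

```python
from statistics import mean

ENDOWMENT = 100  # endowment of both sender and responder


def behavioral_trust(sent: float) -> float:
    """Amount sent divided by the sender's endowment."""
    return sent / ENDOWMENT


def behavioral_trustworthiness(sent: float, returned: float) -> float:
    """Amount returned divided by the amount available to return."""
    return returned / (2 * sent + ENDOWMENT)


# Hypothetical (points sent, points returned) pairs, for illustration only.
pairs = [(50, 60), (0, 0), (100, 150)]

mean_trust = mean(behavioral_trust(s) for s, _ in pairs)
mean_trustworthiness = mean(behavioral_trustworthiness(s, r) for s, r in pairs)
```

Because the denominator of trustworthiness includes the responder’s own endowment, it is defined even when nothing is sent, unlike a ratio that divides only by the doubled amount received.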

To test H1, we compare mean amounts of points sent in the three conditions with ANOVA. Table 2 shows that the means were not statistically significantly different in the three conditions, yielding no support for H1. Table 2 also shows that points returned and the points returned ratio (points returned / (2 × points sent)) do not differ across the three conditions, implying that H2 is not supported. We checked the result with a non-parametric independent-samples Kruskal-Wallis test, which did not show statistically significant differences between the treatment conditions in amounts sent (p=0.927) or in points sent back (p=0.868). A further robustness check was made using Bayesian ANOVA, which yielded similar results (Table S2, Supplementary materials).
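For readers less familiar with the test, a one-way ANOVA F-statistic for three conditions can be sketched with the Python standard library alone. This is a generic textbook computation, not the authors’ analysis script, and the data below are hypothetical. The function name `one_way_anova_f` is an assumption made for illustration. The sketch returns only the F-statistic and its degrees of freedom; obtaining a p-value requires the F distribution, for which a statistics package such as SciPy (`scipy.stats.f_oneway`, or `scipy.stats.kruskal` for the non-parametric check) could be used.

```python
from statistics import mean


def one_way_anova_f(groups):
    """Return (F, df_between, df_within) for a list of samples."""
    k = len(groups)
    n_total = sum(len(g) for g in groups)
    grand_mean = mean(v for g in groups for v in g)
    group_means = [mean(g) for g in groups]

    # Variation of the group means around the grand mean.
    ss_between = sum(
        len(g) * (m - grand_mean) ** 2 for g, m in zip(groups, group_means)
    )
    # Variation of the observations around their own group mean.
    ss_within = sum(
        (v - m) ** 2 for g, m in zip(groups, group_means) for v in g
    )

    df_between = k - 1
    df_within = n_total - k
    f_stat = (ss_between / df_between) / (ss_within / df_within)
    return f_stat, df_between, df_within


# Hypothetical points sent in three conditions, for illustration only.
laboratory = [40, 50, 60]
online_meeting = [45, 50, 55]
online_no_meeting = [30, 50, 70]

f_stat, df1, df2 = one_way_anova_f(
    [laboratory, online_meeting, online_no_meeting]
)
```

In this toy example all three condition means equal 50, so the between-group sum of squares and hence F are zero, the extreme case of conditions that do not differ.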

Table 2. Means (standard deviations) of points sent and returned, behavioral trust and behavioral trustworthiness, and ANOVA results with F-statistics in the treatment conditions.

Note: degrees of freedom for each F-test = 2, 124; *Points returned / (2 × points sent).

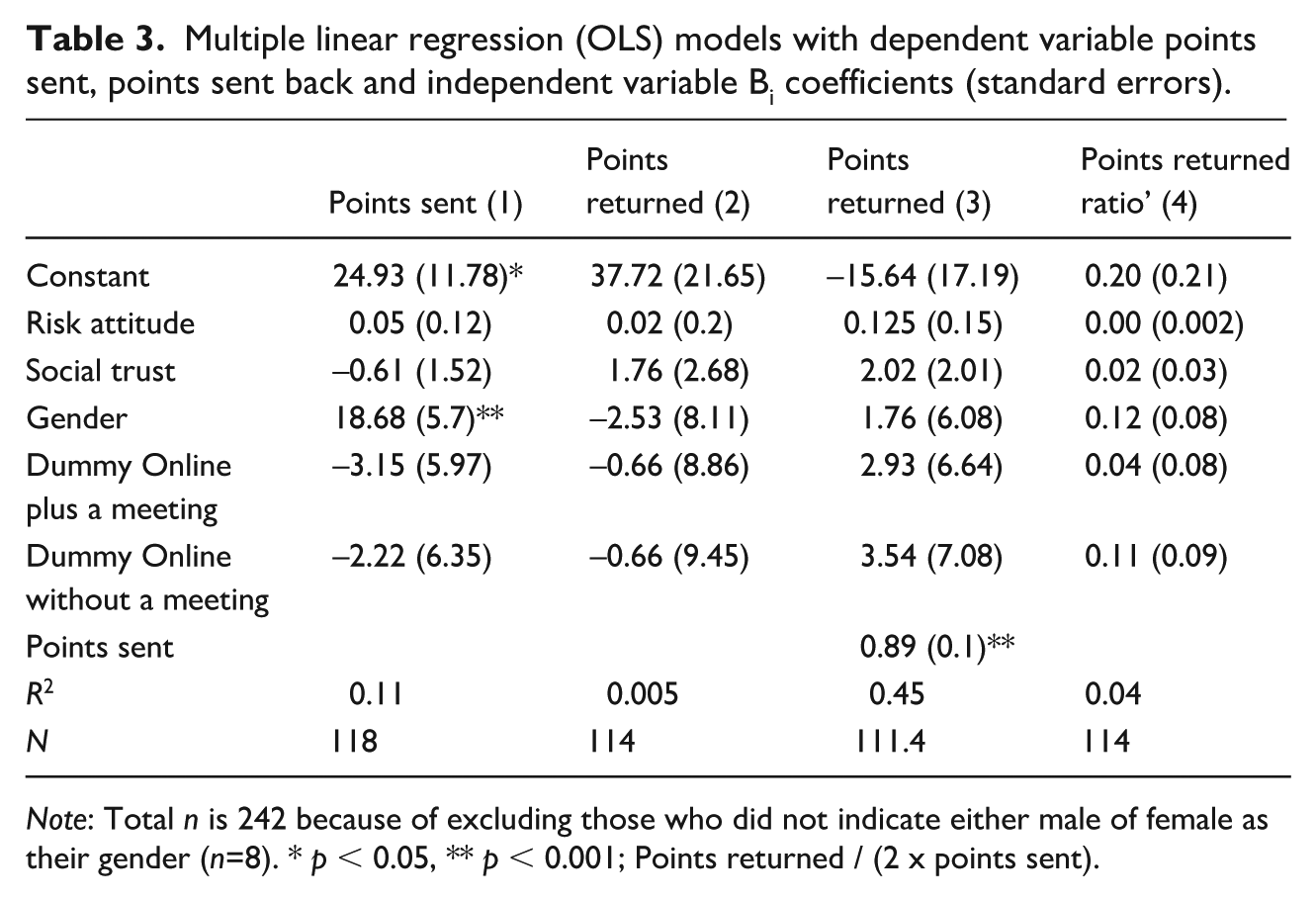

To further analyze trust and trustworthiness, we ran regressions with points sent, points returned, and points returned ratio as dependent variables. We included risk attitude, social trust, gender, and treatment condition (Laboratory is the reference category) as predictors. Table 3 presents regression results for points sent and returned. Apart from gender in the first regression and points sent in the third, none of the predictors are statistically significant. In line with previous studies, we see that men tend to send more points than women (van den Akker et al. 2020). When the sender’s decision is included in model (3), a clearly larger share of the variation in returning behavior is accounted for (R² = 0.45).

Table 3. Multiple linear regression (OLS) models with points sent and points sent back as dependent variables; coefficients Bi of the independent variables (standard errors).

Note: Total n is 242 because those who did not indicate either male or female as their gender (n=8) were excluded. * p < 0.05, ** p < 0.001; Points returned / (2 × points sent).

Participants’ answers to the post-experiment questions suggest that the three environments did not generate different participation experiences, which is in line with not observing behavioral differences between the conditions (Table S4, Supplementary materials).

Discussion and Conclusion

We compared trust game behavior in three environments and did not observe differences in sending or responding. Our results suggest either that social distance, social desirability, or belief in a real counterpart were not different enough in the three conditions, or they were not relevant for trust game behavior. There could also be other processes that worked counter to these tendencies. Regarding social distance, it is possible that it was not substantially bigger in the online environment because participants received the invitation through a pool of student subjects implying that other participants are also students. Social desirability could also have been about the same in all conditions if the physical presence of the experimenter and other participants were not crucial, or if social desirability did not influence participants’ choices in any of the conditions.

It is also possible that the mechanisms pertaining to the degree of anonymity are not relevant for trust game behavior, whereas other features influence trust and trustworthiness. In particular, the now-familiar online setting can enforce social norms emphasizing trust and trustworthiness unlike the more unfamiliar laboratory environment. People engage regularly in economic interactions on the Internet, and they are likely to have numerous experiences of others’ trustworthiness in online transactions (Hergueux and Jacquemet 2015). Trust and trustworthiness are crucial for online transactions because enforcing contracts is difficult. The participants may have learned to trust in online interactions, rendering the processes related to anonymity insignificant.

Overall, our experiment speaks in favor of using online experiments to collect data because they are cheaper and offer possibilities for larger samples and comparisons across cultures. Our results suggest that the online environment yields results similar to those of experiments conducted in a laboratory.

Certain limitations pertain to our study. A widely discussed question is how well the trust game captures societal trust measured in surveys. Initial research raised concerns about the ability of the two methods to measure the same thing (Glaeser et al. 2000). More recent research suggests that survey and trust game measures coincide (Banerjee et al. 2021; Yamagishi et al. 2015). This result supports possibilities to generalize from trust game experiments.

Another issue is that while participants were randomly allocated to the conditions, we cannot completely rule out the possibility of a self-selection bias because last-minute drop-outs were more common in the laboratory sessions. Moreover, we did not vary social distance in the laboratory. Such a design would have required a condition with varying familiarity with other participants, for example. Future research could also analyze which of the processes related to anonymity are, in fact, at work in online and face-to-face interactions. Post-experiment surveys could be used to get data on participants’ experiences of social distance and social desirability to specify differences between face-to-face and online interactions. One could also ask how well we can generalize based on Finnish university students, especially because social trust is relatively high in Finland (Bäck 2019).

Online interactions have become an essential part of a wide range of social life, ranging from transactions in private markets to political campaigns and voting. It is important to know whether the online environment influences the trust and trustworthiness needed in many types of social interactions. Our study suggests that trust and trustworthiness are not influenced by the environment of interaction, and that complete anonymity in the online environment does not compromise trust and trustworthiness.

Supplemental Material

Supplemental material, sj-docx-1-fmx-10.1177_1525822X251377889 for In Online and Laboratory We Trust: Comparing Trust Game Behavior in Three Environments by Hanna Björkstedt and Kaisa Herne in Field Methods

Footnotes

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: The research was funded by the Research Council of Finland (decision numbers 345715/365619).

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data Availability Statement

The data will be made available after publication in the Finnish Social Science Data Archive.

Supplemental Material

Supplemental material for this article is available online.
