Abstract

In-situ measurements, using the experience sampling method (ESM), can provide insight into behaviors and contextual factors by allowing individuals to self-report them via text or push messages on a smartphone close to the behavior of interest. However, little is known about the data quality of these measures, particularly the impact of sampling frequency. This study examines the effects of different sampling frequencies on compliance, sample biases, and reactivity of measures in the context of digital media use. In July 2021, a group of Dutch citizens (n = 250) was randomly assigned to either a standard daily-intensive burst measure (DI-BM; seven surveys across the day) or an hourly-intensive burst measure (HI-BM; 12 surveys over two hours per day) condition and surveyed across seven consecutive days, resulting in a total of 16,135 surveys sent. Results indicate higher compliance in the DI-BM condition than in the HI-BM condition.

The experience sampling method (ESM) is widely considered a middle ground when assessing information from individuals prone to self-report error (see Schnauber-Stockmann and Karnowski 2020). Here, respondents repeatedly answer questions in situ (i.e., when they receive the prompt to respond to short questions) (Otto et al. 2019). ESM enables researchers to collect data on subjective variables such as attitudes, cognitions, and affective emotions (e.g., Stone et al. 2023), but also objective variables such as behavior or circumstances like locations without the need for additional measurement methods (e.g., observation, GPS tracking) (Bayer et al. 2018). The scheduling of ESM prompts is a crucial determinant of data quality, as it determines the frequency of assessments, with consequences for the potential coverage of behavior and states, the recall effort of respondents, and the potential for longitudinal analysis. As a rule of thumb, more frequent assessment relates to lower recall effort and biases, better detection of (especially infrequent) behaviors, and the possibility of higher-order statistical analysis (e.g., the analysis of within-person changes using multi-level modeling). However, a higher scheduling frequency comes with risks for data quality and participation rates, as the burden for respondents may increase (Anderson et al. 2016).

Burst designs have been proposed to assess infrequent behavior prone to recall biases with high-frequency sampling. A burst design is the “longitudinal measurements planned around closely spaced successive ‘bursts’ of measurements” (Sliwinski 2008), usually several times a day. The burst periods are repeated over longer periods, for example, weeks, months, or years (Stawski et al. 2015). Longitudinal, high-frequency assessments make “measurement burst design [. . .] ideal for the study of short-term variability, long-term change, and the individual differences therein” (Myin-Germeys and Kuppens 2021). Burst, hence, is a relative term, marking periods of higher relative to lower measurement frequency. However, no definition or standard exists that narrows down a burst measurement to a certain level of frequency or reference periods.

This study conceptually distinguishes hourly intensive burst measures, HI-BM (12 beeps in two hours per day), and daily intensive burst measures, DI-BM (seven beeps across 14 hours per day). We employ an innovative mobile Experience Sampling Measurement (mESM), utilizing a general population sample (n = 250). Respondents were surveyed up to 12 times in two hours, pushing the boundary of assessment frequencies and providing a first feasibility test. We compare the response patterns between hourly- and daily-intensive burst measures for compliance, attrition, and sample biases. The study tests how well such a method can work and provides guidance to scholars when deciding on a scheduling frequency in intensive measurement designs. It asks: What is the impact of the intensive ESM designs on procedural non-compliance and cross-temporal variability in self-reported media exposure data?

Hourly Intensive Burst Measures (HI-BM) versus Daily Intensive Burst Measures (DI-BM)

In communication research, accurately gauging media exposure is increasingly difficult due to challenges like recall error and social desirability biases, which complicate the reliability of traditional surveys (Araujo et al. 2017; Prior 2009; Slater 2004). Research has shown that, especially in the case of mobile media, retrospective self-reports of use frequency and duration are only moderately associated with more objective measurement methods, such as event logging (Parry et al. 2021). This is due to the high pervasiveness of mobile media use in modern life, with uses that happen frequently but for short periods, making them hard to distinguish and aggregate retrospectively (e.g., Oulasvirta et al. 2012; Schwarz and Oyserman 2001; Vorderer and Kohring 2013). Reasons for this are the increasingly short usage periods (e.g., watching videos for a few seconds on TikTok) or multitasking different media (see, e.g., Siebers et al. 2022). As self-reports on digital behavior provide substantial value nonetheless (Verbeij et al. 2021), researchers now focus more on perceived media experiences, such as news avoidance and incidental exposure (Ohme et al. 2022; Thorbjørnsrud and Figenschou 2022). While digital trace data offer insights into what articles users select or videos they watch (e.g., Wedel et al. 2024), they fail to capture the subjective experiences or motivations behind these choices, making the contextual understanding of media interactions essential (Shiffman et al. 2008).

The experience sampling method (ESM) addresses some of these challenges by collecting self-reported data close to the actual experience, enhancing the accuracy of reports on information exposure. However, challenges exist regarding data quality, such as high missing data, lower compliance compared to traditional surveys, and increased participant burden, which primarily affect less tech-savvy populations like adolescents, the elderly, or those in certain psychological states (e.g., Mayen et al. 2024). Another challenge is the send-out frequency. Daily intensive burst measures (DI-BM), which involve random sampling throughout the day, are useful for frequently occurring behaviors like checking news on social media. However, the effectiveness of DI-BM may be limited by issues of recall and the inherent variability of media consumption behaviors (Verhagen et al. 2016).

Hourly intensive burst measures (HI-BM) could potentially provide more precise data by asking participants about their media experiences more frequently. This method reduces the cognitive load of recall and may better capture transient states and ad-hoc decisions, which are often influenced by heuristics or situational factors (Lerner et al. 2015). For instance, HI-BM might more accurately reflect behaviors like news avoidance or selective exposure that are not consistently distributed across days and where intentions of usage are hard to recall (Ohme et al. 2022). By comparison, DI-BM is useful for assessing changes in state measures, such as the fear of missing out or other psychological conditions (e.g., Dekker et al. 2024). Here, the goal is to get a good coverage of changes in the measured states over time. However, some states or experiences are more volatile. HI-BM is helpful in measuring those, such as surprise at seeing a news article (e.g., Nanz and Matthes 2020) or the intention to avoid a news article (e.g., Ohme et al. 2022). Other examples would be measuring changes in feelings in certain situations, such as short-lived experiences (e.g., at events, during clinical trials, etc.). HI-BM is helpful in cases where changes happen at a quicker pace or memories are likely to fade quickly.

However, increasing the frequency of surveys also introduces risks such as respondent fatigue, which can adversely affect data quality (Anderson et al. 2016). Karnowski et al. (2017) demonstrated the potential of using frequent prompts to gather data on social media experiences, which can mitigate some recall biases but may increase the burden on participants.

Scheduling Frequency in mESM Studies

The frequency of scheduling mESM (mobile Experience Sampling Method) surveys is determined by how (in)frequently the behavior of interest occurs. Unknown time trajectories call for high sampling frequency but come with greater respondent burden (Eisele et al. 2022). Field periods can vary from hours to weeks, and the reference period for self-reports can be the very moment up to a whole day (Schnauber-Stockmann and Karnowski 2020). Most mESM studies in media research rely on approximately five to seven repeated measurements per day, while some studies have sent out up to 50 “beeps” (i.e., survey prompts) daily (Schnauber-Stockmann and Karnowski 2020). Sampling frequencies in shorter time intervals than days are rarely reported. To our knowledge, no mESM study has used burst sampling with multiple beeps and intensive repetition within a few hours. To test for the methodological viability of such a design, we investigate the sampling frequency intensity between two mESM approaches.

Unknowns of Hourly Intensive Burst Measures

For hourly intensive burst measures (HI-BM) to be of value for media and communication research, several conditions regarding compliance and data quality must be met. Indicators frequently used to evaluate mESM studies are the overall compliance with the protocol, response rate, and response latency (Schnauber-Stockmann and Karnowski 2020; van Berkel et al. 2017). In addition, we deem onboarding compliance, attrition, and sample biases as important indicators that need to be investigated, as they have frequently been shown to impact the explanatory power of media exposure measures (e.g., Ohme et al. 2021).

Sample Biases

A longitudinal mESM study consists of several steps for respondents: giving informed consent, downloading an app, logging in to the app, and responding to multiple daily questions throughout several days. In each step, attrition of the initial sample drawn can occur. While a random loss of participants compromises the power of a study, a systematic loss can introduce sample biases: in other words, the data analysis is based on a sample with different characteristics than the one drawn initially. Most mESM studies do not report a systematic loss of participants during the recruitment and onboarding procedure (see Schnauber-Stockmann and Karnowski 2020). Previous research has shown mESM studies are subject to sample biases for sociodemographic aspects such as age (Eisele et al. 2021). Other mobile and digital trace data collection methods that rely on similar protocols have been shown to introduce systematic biases for participants' perceived privacy risks, privacy literacy, and tech savviness (Boeschoten et al. 2021; Ohme et al. 2021). We, therefore, assess whether sample biases for these variables are introduced in each step of the mESM protocol.

Remote Onboarding Success

Remote onboarding success is one of the major determinants of successful mESM studies that rely on onboarding procedures that transition users intuitively and without interruption through additional training (e.g., videos, lab attendance) from a recruitment step to the mESM application. It is a sub-dimension of compliance. While many studies use student and convenience samples, for general population samples, it is less realistic to invite all respondents to a laboratory where they can install the necessary software or app on their devices. However, when a sizable number of recruited respondents drop out through a remote onboarding process before the experience sampling protocol even starts, the data basis for further analysis is severely compromised, as we deal with a compliance error.

Respondents may associate a higher burden with the reception of 12 beeps within two-hour periods, compared to a more standardized design entailing seven beeps across 14 hours. Compliance error is a common problem in media exposure research that relies on multiple-step onboarding procedures (e.g., Boeschoten et al. 2020; Jürgens et al. 2019; Ohme et al. 2021). We therefore investigate whether compliance, defined as participants transitioning from the recruitment survey to the mESM platform and successfully logging in, differs between HI-BM and DI-BM.

Protocol Compliance

Once respondents start participating, the burden of the protocol can result in nonresponses (i.e., not answering questions when a beep is sent out). The reporting of daily compliance is often missing in mESM studies. For a DI-BM design with six automated daily calls, Courvoisier et al. (2012) report an overall compliance of about 75%, although compliance decreased to as low as 50% over the week. Calls in the morning had the lowest compliance, while evening and late afternoon calls were taken most frequently. Analyzing 10 pooled mESM studies with 10 randomized signals per day, Rintala et al. (2020) found similar compliance (78%), a decrease over time, a decrease over calendar days, and differences within a day, with the lowest compliance for the first assessment in the morning.

Research comparing different sampling frequency protocols is sparse, but first studies show that receiving three, six, or nine beeps per day did not affect the response rate of a student sample (Eisele et al. 2021). While compliance decreased over time, there was no significant difference between sampling frequencies. However, while disturbance by ESM protocols with 10 momentary assessments across the day was perceived as relatively low by respondents, there is an indication that perceived disturbance increases across study days and within a day (Rintala et al. 2021). Moreover, respondents who felt more disturbed were less compliant in these studies (Rintala et al. 2020). This can be seen as a first indication that in an HI-BM condition where 12 beeps occur every 10 minutes over two hours, perceived disturbance can be higher and result in lower protocol compliance. However, none of these studies has used a truly HI-BM design. We, therefore, ask what the protocol compliance is for hourly vs. daily intensive burst measures.

Method

Sample and Recruitment

This study aimed to apply mobile experience sampling to a general population sample. Therefore, we recruited a diverse mobile-only sample of Dutch Internet users via an international polling company. This study is part of a larger data collection effort with different mESM components. An initial sample of 1,411 participants was recruited for the component analyzed in this study. The study received ethical approval from the ERB of the University of Amsterdam.

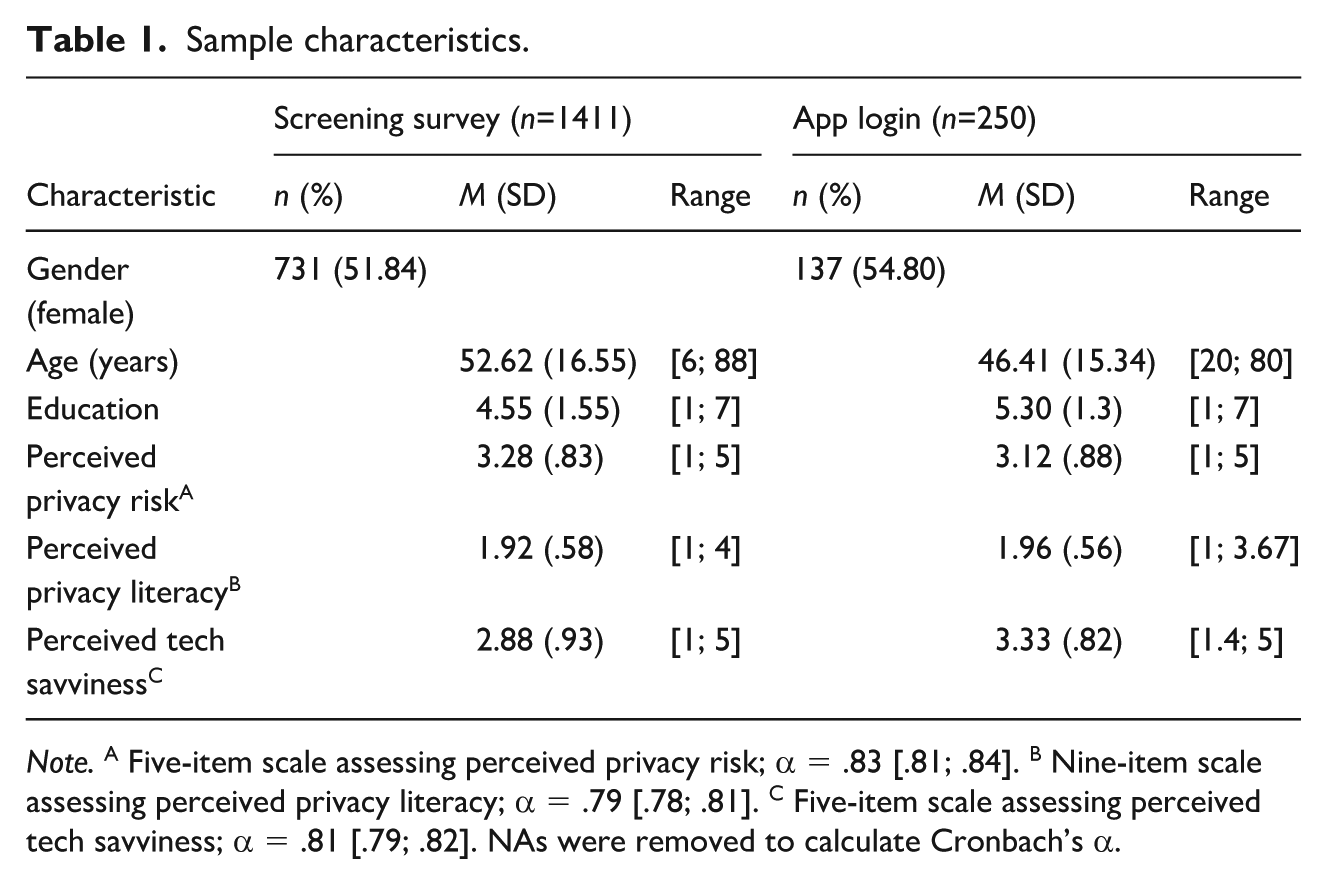

Participants first provided informed consent and filled in a recruitment survey online. At the end of the online survey, participants who provided informed consent and passed the attention check were randomly assigned to the HI-BM or DI-BM condition in the mESM part of the study. After this assignment, they received information on how frequently they would receive surveys during the mESM period. They were automatically redirected to the app store on their phones to download the ExpiWell app. The app automatically created a session ID for each user, without requesting additional input, to waive entering personally identifiable information and to avoid study dropout by manually transitioning from the online survey to the mESM survey (e.g., nonmatching participant codes). This procedure allowed for the automated identification and matching of both sets of responses from the recruitment and the mESM survey. The app was available for Android and iOS, which helped recruit a more diverse sample, as differences between users of different operating systems (OS) exist (e.g., Götz et al. 2017; Reinfelder et al. 2014). A total of 250 participants entered the app successfully, showing diverse backgrounds in gender, age, and education (Table 1).

Table 1. Sample characteristics.

Note. A Five-item scale assessing perceived privacy risk; α = .83 [.81; .84]. B Nine-item scale assessing perceived privacy literacy; α = .79 [.78; .81]. C Five-item scale assessing perceived tech savviness; α = .81 [.79; .82]. NAs were removed to calculate Cronbach’s α.

mESM Protocol

Daily Intensive Burst Method (DI-BM)

Once successfully logged in to the ESM app, respondents received short surveys in the form of notifications (also called beeps) sent via push messages. We used a combination of a signal and interval contingent protocol (see van Berkel et al. 2019). Respondents received seven triggers daily in two-hour intervals from 8 am to 10 pm. Within the interval, the send-out time was randomized to avoid capturing repeatedly occurring, time-dependent behavior (e.g., leaving the house on the hour). The number of triggers was independent of the number of answered notifications. We used this procedure for seven consecutive days, resulting in a maximum of 49 beeps sent under the same protocol, with an average survey duration of 30.04 seconds.

Hourly Intensive Burst Method (HI-BM)

Again, we used a combination of a signal and interval contingent protocol (see van Berkel et al. 2019). Each day, a random two-hour interval was selected, spanning the 8 am to 10 pm interval, resulting in seven two-hour time slots covered in a week. Respondents received randomized survey prompts every 10 minutes within the same two-hour periods. The number of triggers was independent of the number of answered notifications. The 10-minute interval was chosen based on research suggesting that people forget 40% of the information they have encountered after 20 minutes (see Stahl et al. 2010). We used this method for seven consecutive days, resulting in 84 beeps sent under the same protocol, with an average survey duration of 10.19 seconds.
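The two prompting schedules described above can be sketched in a few lines of Python. This is an illustration under stated assumptions, not the scheduler used in the ExpiWell app: all function and variable names are hypothetical, each HI-BM prompt is assumed to fall at a random minute inside its 10-minute interval, and each of the seven two-hour windows is assumed to be used on exactly one study day.

```python
import random
from datetime import time

DAY_START_MIN = 8 * 60    # 8 am, in minutes after midnight
DAY_END_MIN = 22 * 60     # 10 pm
SLOT_MIN = 120            # length of a two-hour window
STUDY_DAYS = 7


def two_hour_slots():
    """Start minutes of the seven two-hour windows between 8 am and 10 pm."""
    return list(range(DAY_START_MIN, DAY_END_MIN, SLOT_MIN))


def di_bm_day(rng):
    """One DI-BM day: one prompt at a random minute inside each two-hour window."""
    return [start + rng.randrange(SLOT_MIN) for start in two_hour_slots()]


def hi_bm_day(rng, slot_start, step=10):
    """One HI-BM day: one prompt at a random minute inside each 10-minute
    interval of a single two-hour window (12 prompts)."""
    return [slot_start + offset + rng.randrange(step)
            for offset in range(0, SLOT_MIN, step)]


def week_schedule(condition, seed=0):
    """Prompt times (minutes after midnight) for each of the seven study days."""
    rng = random.Random(seed)
    if condition == "DI-BM":
        return [di_bm_day(rng) for _ in range(STUDY_DAYS)]
    # Assumption: every two-hour window is used on exactly one day of the week.
    slots = two_hour_slots()
    rng.shuffle(slots)
    return [hi_bm_day(rng, slot) for slot in slots]


def as_clock(minute):
    """Format minutes after midnight as HH:MM."""
    return time(minute // 60, minute % 60).strftime("%H:%M")


di_week = week_schedule("DI-BM", seed=1)
hi_week = week_schedule("HI-BM", seed=1)
print(sum(len(day) for day in di_week))   # 49 prompts per participant
print(sum(len(day) for day in hi_week))   # 84 prompts per participant
print([as_clock(m) for m in hi_week[0]])  # first HI-BM day as clock times
```

The per-participant totals of 49 and 84 prompts follow directly from the design and do not depend on the random seed.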

Measures

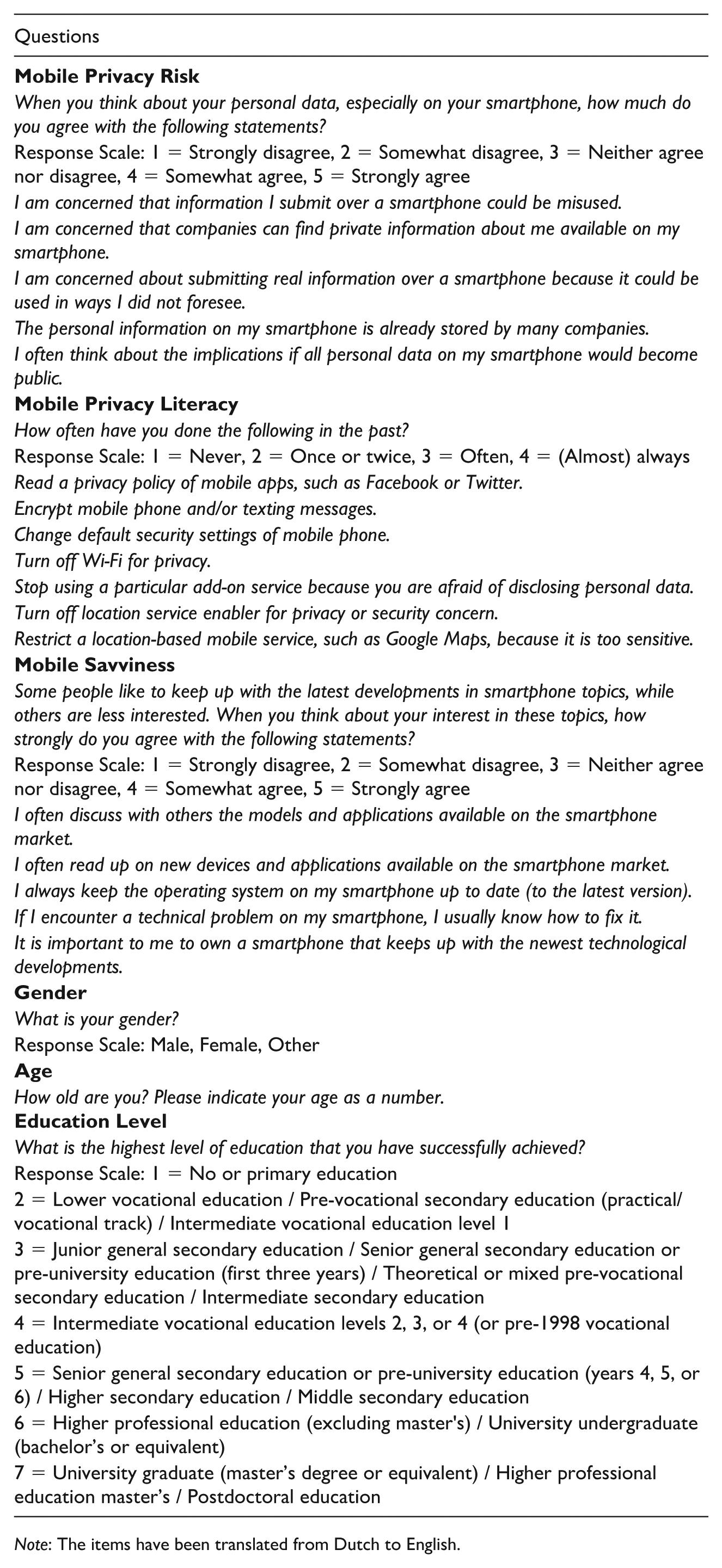

Given that the mESM survey was conducted on smartphones, we assessed mobile privacy concerns with five items adapted from Keith et al. (2013) and mobile privacy literacy with nine items based on Park and Mo Jang (2014). Mobile phone savviness was measured with five items, adapted from Ohme et al. (2021). We included an attention check in the form of a control question. The item was: “To ensure data quality, we want to find out if you are paying attention. Please select ‘strongly disagree’ for this item.” The resulting value is binary in nature. Descriptive statistics of all measures can be found in Table 1 and a full overview of the items is provided in Appendix A.

Analysis

The dataset's repeated measure format required transformations for logistic regression analysis. Initially, two dummy variables were created: (1) progression to the ESM app, indicating completion of the screening survey, app download, and login; and (2) ESM protocol compliance, reflecting responses to ESM prompts and micro-survey completions. Logistic regression models analyzed the impact of sampling frequency on sample bias. A multilevel logistic regression model assessed predictors of ESM protocol compliance, including normalized variables for intra-day (wave) and inter-day (day) progressions and the type of ESM sampling strategy (DI-BM vs. HI-BM).
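A minimal sketch of the kind of transformation described above is given below. It expands one participant into one row per prompt sent, with a binary compliance indicator and rescaled day and wave variables. The normalization method is not specified in the text; min-max scaling to [0, 1] is assumed here, and all names (`to_long_format`, `day_norm`, `wave_norm`, and so on) are hypothetical. The replication script on the OSF page remains the authoritative source.

```python
STUDY_DAYS = 7
WAVES_PER_DAY = {"DI-BM": 7, "HI-BM": 12}


def min_max(value, lowest, highest):
    """Rescale value from [lowest, highest] to [0, 1] (assumed normalization)."""
    return (value - lowest) / (highest - lowest)


def to_long_format(participant_id, condition, answered):
    """Expand one participant into one row per prompt sent.

    `answered` is a set of 1-based (day, wave) pairs for which the
    micro-survey was completed; every other prompt counts as non-compliance.
    """
    waves = WAVES_PER_DAY[condition]
    rows = []
    for day in range(1, STUDY_DAYS + 1):
        for wave in range(1, waves + 1):
            rows.append({
                "participant": participant_id,
                "hi_bm": int(condition == "HI-BM"),   # DI-BM is the baseline
                "day_norm": min_max(day, 1, STUDY_DAYS),
                "wave_norm": min_max(wave, 1, waves),
                "completed": int((day, wave) in answered),
            })
    return rows


rows = to_long_format("p001", "HI-BM", answered={(1, 1), (1, 2), (7, 12)})
print(len(rows))   # 84
print(rows[-1])
```

Rescaling the wave index puts the 7 DI-BM waves and the 12 HI-BM waves on a common 0 to 1 range, which is one plausible reason for normalizing before comparing intra-day progression across conditions.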

See the OSM (https://osf.io/7r8dx/?view_only=a4ae3a3182734f0da8f4640f9a428bd4) for the data and the analysis script for replication of the results.

Results

Sample Biases

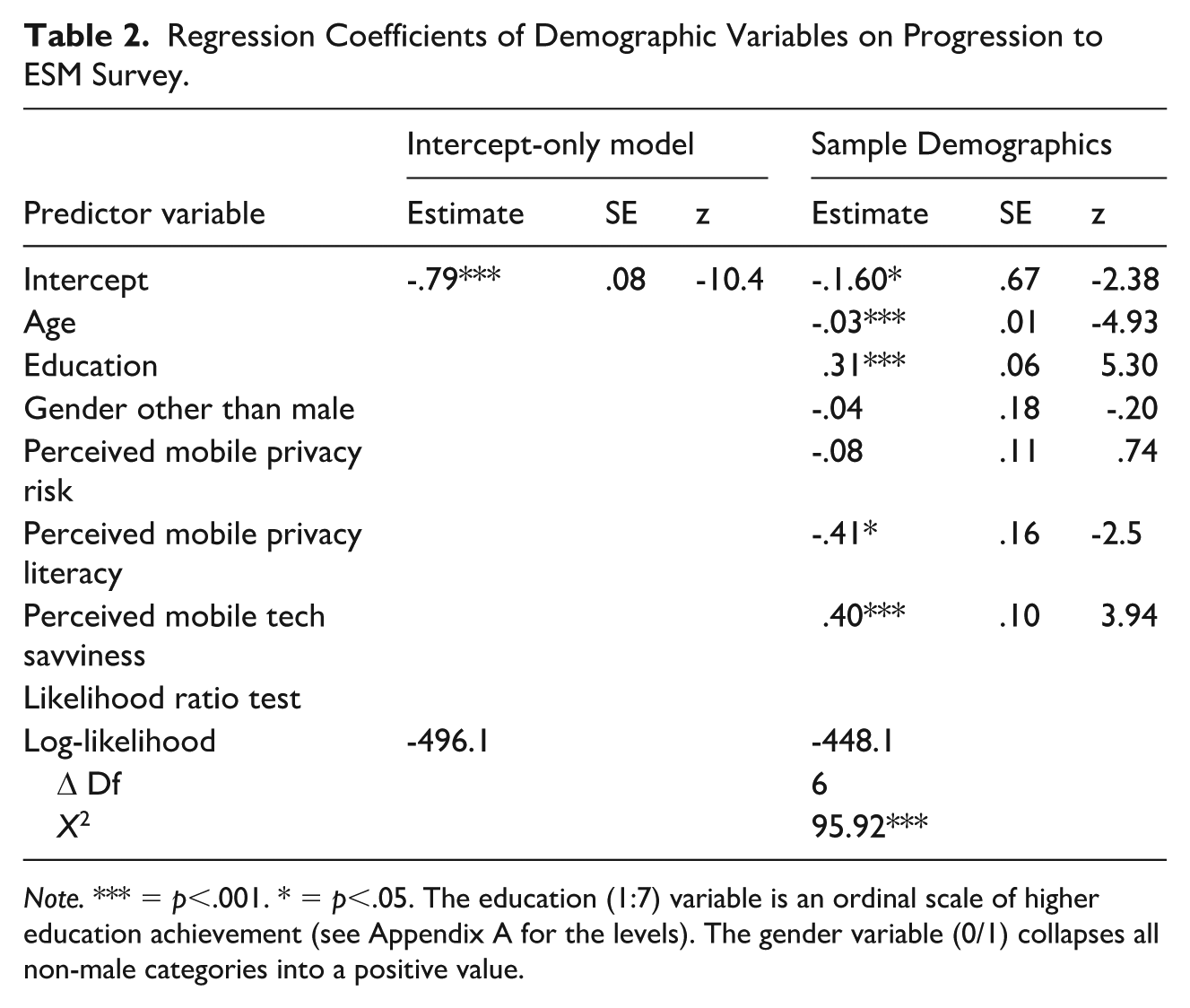

The results of the sample demographics model showed that participants’ age, their self-reported education, and their self-reported tech savviness and perceived privacy literacy had statistically significant effects on progression to the ESM app. No statistically significant effects of non-male gender and perceived privacy risk were detectable through the logistic regression analysis (Table 2).

Table 2. Regression Coefficients of Demographic Variables on Progression to ESM Survey.

Note. *** = p<.001. * = p<.05. The education (1:7) variable is an ordinal scale of higher education achievement (see Appendix A for the levels). The gender variable (0/1) collapses all non-male categories into a positive value.

Remote Onboarding Success

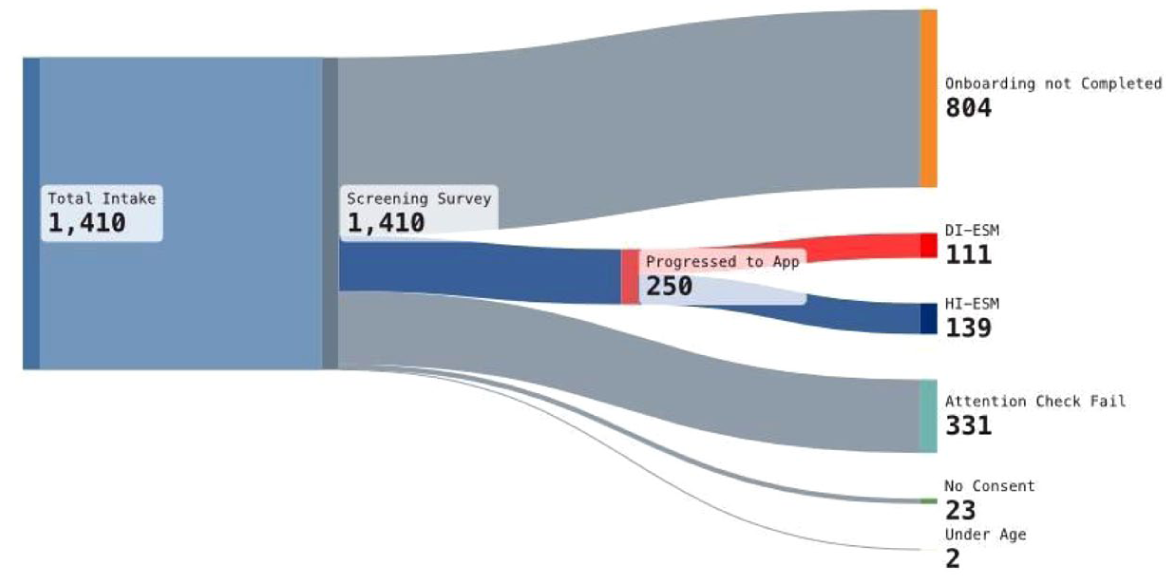

Of the original intake (N=1,411), 111 individuals (7.87%) were successfully onboarded onto the HI-BM survey and 139 individuals (9.85%) into the DI-BM survey (see Figure 1 for an overview of the onboarding process).
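The onboarding shares above, and the total number of surveys sent that is reported in the abstract, can be reproduced with simple arithmetic. The short check below assumes that every onboarded participant was sent the full set of prompts for their condition (7 × 7 for DI-BM, 12 × 7 for HI-BM); the variable names are illustrative.

```python
INTAKE = 1411
ONBOARDED = {"HI-BM": 111, "DI-BM": 139}
PROMPTS_PER_PARTICIPANT = {"HI-BM": 12 * 7, "DI-BM": 7 * 7}

# Share of the original intake that was onboarded into each condition.
for condition, n in ONBOARDED.items():
    print(condition, f"{100 * n / INTAKE:.2f}%")   # HI-BM 7.87%, DI-BM 9.85%

total_onboarded = sum(ONBOARDED.values())
total_prompts = sum(ONBOARDED[c] * PROMPTS_PER_PARTICIPANT[c] for c in ONBOARDED)
print(total_onboarded)   # 250
print(total_prompts)     # 16135
```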

Figure 1. Sample development during mESM onboarding procedure.

Protocol Compliance

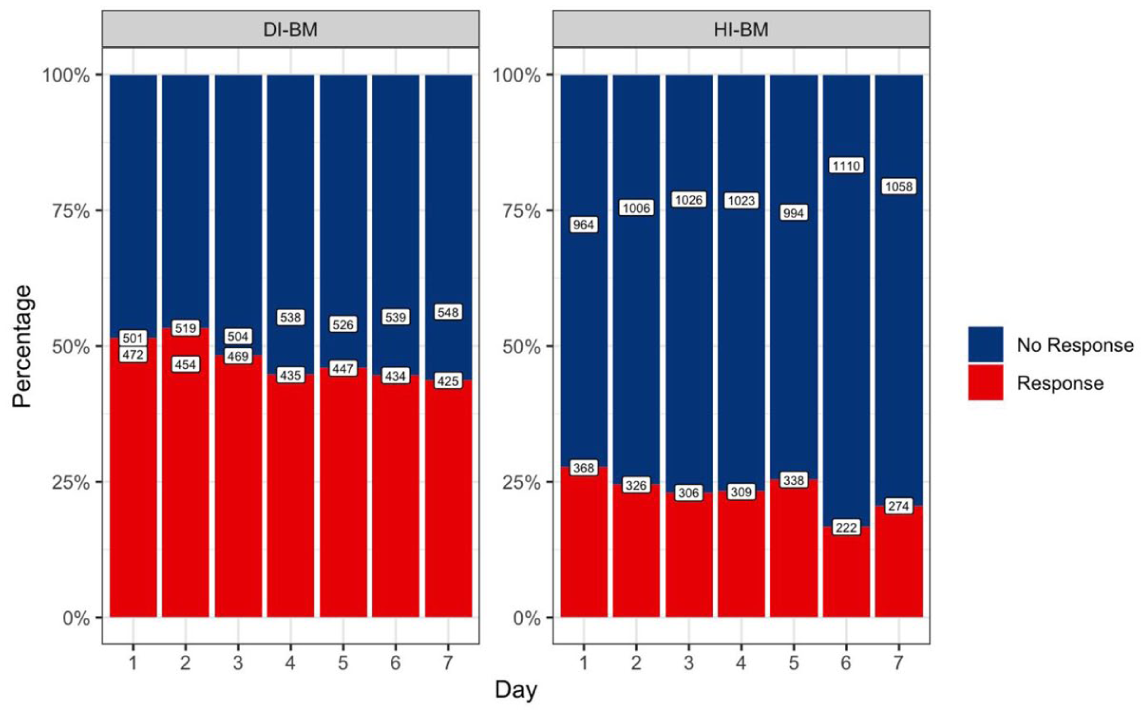

To investigate protocol compliance, we first visualized the response rate to prompts received by participants through the ExpiWell app for each study day (Figure 2). The visualization indicates a lower response rate for the HI-BM group than the DI-BM group. Response attrition is observable for both survey conditions, though it does not differ between conditions.

Figure 2. Count and percentage of completed mESM surveys per day and group.

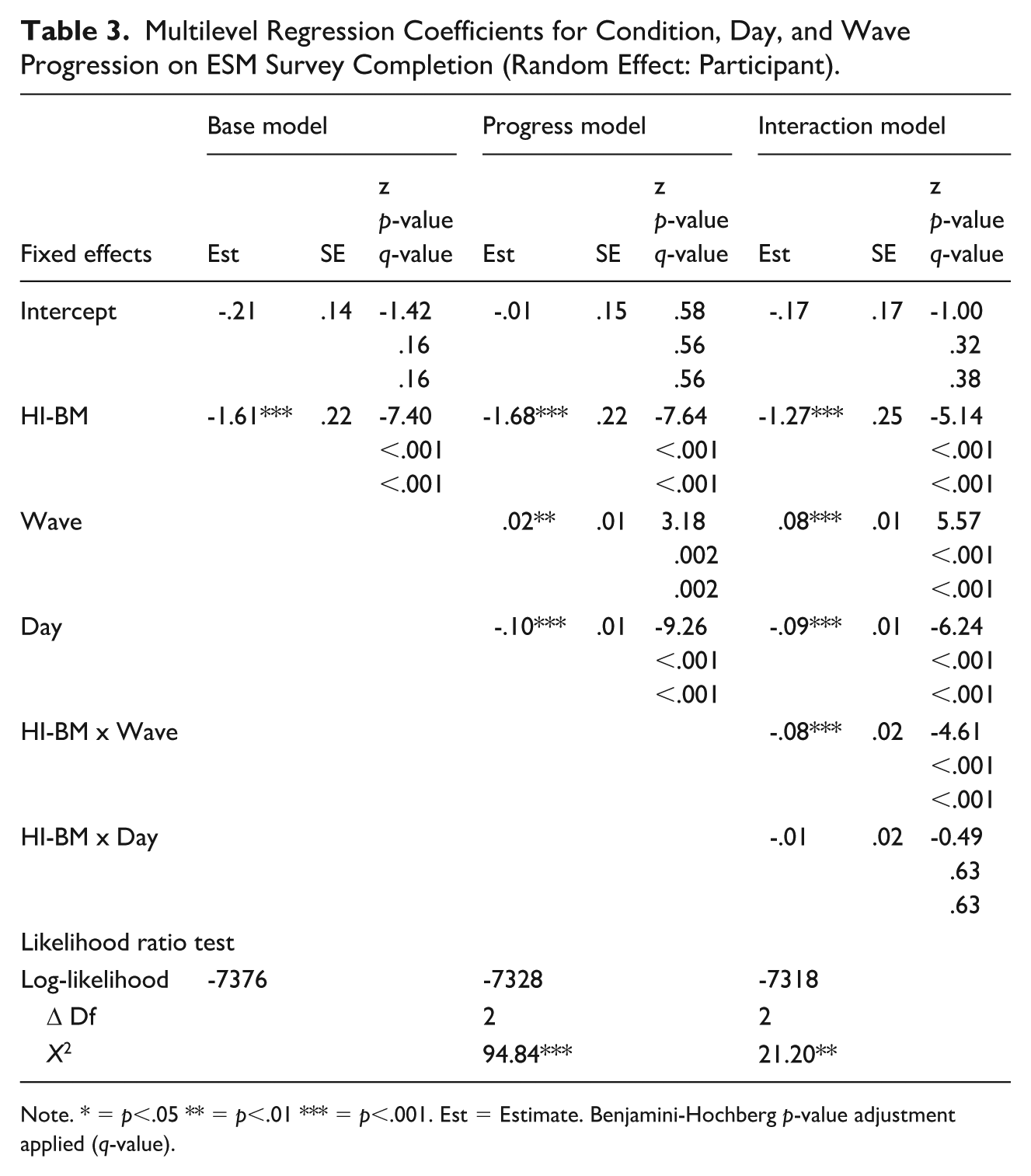

To verify the effect of the type of ESM survey and the progression along the days of the study, as well as to identify potential intra-day effects (i.e., progression along waves within a day), we fit a multilevel logistic regression model using survey completion (i.e., the number of completed surveys per day) as the outcome variable, the condition (HI-BM vs. the baseline DI-BM), daily progression (days 1–7) and intra-daily progression (waves 1–7 and 1–12, respectively) as the fixed effects and the individual participants as the random effect (Table 3).

Table 3. Multilevel Regression Coefficients for Condition, Day, and Wave Progression on ESM Survey Completion (Random Effect: Participant).

Note. * = p<.05 ** = p<.01 *** = p<.001. Est = Estimate. Benjamini-Hochberg p-value adjustment applied (q-value).
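For orientation, the fullest version of the multilevel logistic regression can be written out as follows. This is a sketch under assumptions that the text does not confirm: a random intercept per participant only, a binary indicator $y_{it}$ that equals 1 when participant $i$ completed prompt $t$, and normalized day and wave predictors. The symbols are introduced here for illustration.

$$
\operatorname{logit}\,\Pr(y_{it} = 1) = \beta_0 + \beta_1\,\mathrm{HIBM}_i + \beta_2\,\mathrm{day}_{it} + \beta_3\,\mathrm{wave}_{it} + \beta_4\,(\mathrm{HIBM}_i \times \mathrm{day}_{it}) + \beta_5\,(\mathrm{HIBM}_i \times \mathrm{wave}_{it}) + u_i, \qquad u_i \sim \mathcal{N}(0, \sigma_u^2)
$$

Under this reading, $\mathrm{HIBM}_i$ is 1 for the HI-BM condition and 0 for the DI-BM baseline, and the models without interaction terms correspond to setting $\beta_4 = \beta_5 = 0$.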

In the first model, we detected significantly lower compliance in the HI-BM condition than in the DI-BM condition. In the second model, we observed significantly higher compliance as participants progressed through the intra-day survey protocol (along waves) and significantly lower compliance with each additional study day. This means that surveys sent later in the day (i.e., later waves) were taken more frequently, while surveys sent later in the week (i.e., later days) were taken less frequently on average. This might be explained by the time of day participants in the DI-BM group received their prompts, since they might be more likely to respond in the afternoon rather than the early morning. Adding interaction terms between condition and day and between condition and wave in the third model indicated that the effect of intra-day progression on protocol compliance was significantly lower in the HI-BM condition than in the DI-BM condition. This suggests that respondents who received 12 push notifications within two hours responded less frequently with every beep than those who received seven push notifications within a day.

Discussion

The study took a starting point in the increasing popularity of ESM burst designs in media exposure research specifically and momentary assessment research in general. Asking the question “How often is too often?” we conceptualized two sampling frequency designs that are relevant for a wide range of research questions, which build on the frequent assessment of behavior, such as media exposure: hourly intensive burst measures, HI-BM, versus daily intensive burst measures, DI-BM. While HI-BM utilizes bursts of questions within a few hours across a day in very short succession (e.g., every 10 minutes), the DI-BM design asks questions across a whole day, with larger timed intervals between questions (e.g., every two hours). So far, research that compares ESM protocol compliance, attrition, and sample biases for ESM designs with different sampling frequencies is sparse. Previously analog methods must be reevaluated as “advances in smartphone, Internet, and computer-assisted applications have further increased the appeal of within-day assessments” (Stone et al. 2023). To fill this gap, we conducted a study assessing digital media use with a dedicated ESM smartphone app and comparing these two conditions’ sample and response characteristics.

The study has three important findings: First, it makes the difficulty of recruiting respondents for ESM surveys transparent, as few studies have reported sample attrition. Besides a usual but still high recruitment error due to failed attention checks and lack of informed consent, only 23.53% of respondents installed the app successfully, and only 17.82% responded to ESM surveys. This sheds light on a less-known issue of ESM studies: high attrition during the onboarding phase. While remote onboarding has been shown to be successful for special populations (e.g., students; see Verbeij et al. 2021), our study shows the great difficulties that remain in establishing larger general population samples in an mESM study using online access panels.

Second, while small samples can lack statistical power for subsequent analysis, an even greater problem is potential sampling biases if users systematically drop out of a study. We find that younger and more highly educated people with higher perceived mobile tech savviness and lower perceived mobile privacy literacy had a higher chance of progressing to the ESM app. In other words, the onboarding process of this study led to multiple systematic sampling biases. This problem is not new for studies that involve multi-step onboarding procedures, especially on mobile devices (e.g., Ohme et al. [2021] and Eisele et al. [2020] already described sample biases for age in ESM studies). Our study confirms the sample bias for age, education, privacy literacy, and mobile tech savviness. This finding is important, as it means that many ESM studies aiming at general and diverse population samples may deal with a more special population than they have aimed for.

Third, and focusing on the differences between conditions, we find that the hourly-intensive burst measurement sampling frequency of sending a short survey every 10 minutes over two hours for seven days leads to lower ESM protocol compliance compared to a more spread-out sampling schedule. An hourly-intensive burst method may be perceived as more strenuous, annoying, or repetitive by respondents, which can be one explanation for our findings. A second interpretation is that the chances of missing a survey increase due to high frequency in short succession. The intended higher density of measurement points, hence, might diminish due to lower protocol compliance. Although response rates are overall lower in high-frequency sampling, the average response rate across seven study days does not strongly decrease. This indicates that while the number of measurements may be lower in an HI-BM condition, the day-to-day decrease is a minor issue. Focusing on the development of protocol compliance across waves (i.e., the number of beeps sent), people in the HI-BM condition have a lower likelihood of responding to waves later in the sampling period. Through the visualization, we see that this result is less systematic, and the statistical difference we find may be explained by a daytime effect (i.e., respondents in the DI-BM condition responded more frequently later in the day). Hence, the study cannot establish a clear indication that protocol compliance decreases in later waves.

The results have major implications for future ESM studies with high-frequency sampling. Asking questions via ESM surveys in very short succession can be meaningful and important in many research fields. Our study shows that this is a feasible data collection method, as we find it to produce a meaningful data set that can help answer questions about media exposure experiences, such as news avoidance or inadvertent news exposure. However, studies using this approach need to consider lower response rates.

This study furthermore suggests that ESM studies should pay closer attention to attrition during the recruitment phase and the potential sample biases this can lead to. Many digital research methods (be it data donations, data tracking, or ESM studies) rely on multi-step recruitment strategies. Our results show that a significant and systematic loss can occur, and we suggest that future research be transparent and mindful about this renewed obstacle in participant recruitment. Years ago, recruitment for simple surveys was the subject of many studies, but the digital turn in surveys and experience sampling methods has received less attention in research methods. Therefore, we suggest always including the details on systematic drop-out, for example, with a Sankey diagram like the one used in this study, to make the study data more transparent and accessible for readers.

Limitations

This study uses a cutting-edge and unique research design, which comes with several limitations. First, the comparability of the two conditions does not strictly follow experimental methods testing but is adjusted to the different research purposes that were part of this study’s larger data collection effort. We randomly assigned respondents across conditions, but, given that we were interested in differences in sampling frequency, the specificities of reference periods were inherent to the research design. Another observation is that responses took a certain daytime trajectory in the low sampling condition, with response rates being highest in the afternoon. Given the randomized intra-day sampling in the high-frequency condition, this difference could not be adjusted for in the design itself, so the lower odds of survey completion in the HI-BM condition may be due to this systematic uptake of survey completion toward the end of the day in the DI-BM condition.

Second, the purpose of investigating sample biases for these two designs means that our results are also subject to this sample bias. They are based on a sample that is younger, more educated, has more technical ability in handling mobile devices, and reports lower privacy literacy on their smartphone. We cannot rule out that results for the initial general population sample with representative characteristics of the Dutch population would differ. Although this is speculative, it is possible that a high-frequency sampling design is more strenuous for an older population and would lead to lower protocol compliance for less smartphone-savvy respondents, for example, by missing survey notifications. Moreover, this is a single-country study, and more research is needed to see whether high-frequency ESM sampling can be equally applied in countries with, for example, higher work pressure or different daytime rhythms.

Although this study is one of the first to systematically report participant dropout across the onboarding phase for a general population sample with an ESM app, the dropout was significant. While this does not directly challenge the goal of the study, modes of using text messages to recruit a specific user sample have shown higher levels of conversion rates (e.g., Mayen et al. 2024). Finally, ESM has general limitations, as it often yields high rates of missing data, which can also be missing not at random, posing challenges for data preparation and analysis despite its feasibility and potential for high-quality insights into media exposure experiences or news avoidance.

Despite its limitations, the study’s unique design showed that high-frequency sampling in mESM studies comes with certain challenges but can result in a rich body of data without high attrition of respondents over time or a decrease in response rates. Respondents seem willing to answer questions about their media-usage behavior, even when they come frequently and are repetitive. While we need to consider systematic biases in mESM studies, the study overall provides more evidence that high-frequency sampling through burst measurement does not burst self-reports.

Appendix

| Questions |
|---|
| When you think about your personal data, especially on your smartphone, how much do you agree with the following statements? |
| Response Scale: 1 = Strongly disagree, 2 = Somewhat disagree, 3 = Neither agree nor disagree, 4 = Somewhat agree, 5 = Strongly agree |
| I am concerned that information I submit over a smartphone could be misused. |
| I am concerned that companies can find private information about me available on my smartphone. |
| I am concerned about submitting real information over a smartphone because it could be used in ways I did not foresee. |
| The personal information on my smartphone is already stored by many companies. |
| I often think about the implications if all personal data on my smartphone would become public. |
| |
| How often have you done the following in the past? |
| Response Scale: 1 = Never, 2 = Once or twice, 3 = Often, 4 = (Almost) always |
| Read a privacy policy of mobile apps, such as Facebook or Twitter. |
| Encrypt mobile phone and/or texting messages. |
| Change default security settings of mobile phone. |
| Turn off Wi-Fi for privacy. |
| Stop using a particular add-on service because you are afraid of disclosing personal data. |
| Turn off location service enabler for privacy or security concern. |
| Restrict a location-based mobile service, such as Google Maps, because it is too sensitive. |
| |
| Some people like to keep up with the latest developments in smartphone topics, while others are less interested. When you think about your interest in these topics, how strongly do you agree with the following statements? |
| Response Scale: 1 = Strongly disagree, 2 = Somewhat disagree, 3 = Neither agree nor disagree, 4 = Somewhat agree, 5 = Strongly agree |
| I often discuss with others the models and applications available on the smartphone market. |
| I often read up on new devices and applications available on the smartphone market. |
| I always keep the operating system on my smartphone up to date (to the latest version). |
| If I encounter a technical problem on my smartphone, I usually know how to fix it. |
| It is important to me to own a smartphone that keeps up with the newest technological developments. |
| |
| What is your gender? |
| Response Scale: Male, Female, Other |
| |
| How old are you? Please indicate your age as a number. |
| |
| What is the highest level of education that you have successfully achieved? |
| Response Scale: 1 = No or primary education |
| 2 = Lower vocational education / Pre-vocational secondary education (practical/vocational track) / Intermediate vocational education level 1 |
| 3 = Junior general secondary education / Senior general secondary education or pre-university education (first three years) / Theoretical or mixed pre-vocational secondary education / Intermediate secondary education |
| 4 = Intermediate vocational education levels 2, 3, or 4 (or pre-1998 vocational education) |
| 5 = Senior general secondary education or pre-university education (years 4, 5, or 6) / Higher secondary education / Middle secondary education |
| 6 = Higher professional education (excluding master's) / University undergraduate (bachelor’s or equivalent) |
| 7 = University graduate (master’s degree or equivalent) / Higher professional education master’s / Postdoctoral education |

Note: The items have been translated from Dutch to English.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.