Abstract

Using masculine forms in surveys is still common practice, with researchers presumably assuming they operate in a generic way. However, the generic masculine has been found to lead to male-biased representations in various contexts. This article studies the effects of alternative gendered linguistic forms in surveys. The language forms are evaluated on three dimensions: comparability, response behavior, and response effort. The results suggest that, compared to masculine-only forms, the use of gender-fair forms does not impair comparability and does not result in detrimental response behavior for most items.

Introduction

Surveys are the most commonly used instrument in the social sciences to collect data and information on attitudes, values, and behaviors (Groves et al. 2004). To collect high-quality data, it is essential that respondents correctly understand the language and meaning of the questions. Comprehensibility—how easy it is to understand a question and the effort required to do so—may affect survey responses and response behavior. Vagueness and ambiguity can lead to inaccurate answers and measurement error (Lenzner 2012).

In German, a grammatically gendered language, using masculine-only forms in surveys is still common. The masculine-only form tends to be used either specifically to refer exclusively to men or in a generic way to describe gender-mixed groups or groups of individuals where gender is unknown or irrelevant (referred to as the generisches Maskulinum; Stahlberg et al. 2007). However, using the generic masculine might lead to ambiguities in interpretation since non-male persons might not know whether they are being referred to or not (Gygax et al. 2008). Furthermore, it has been shown in various contexts that masculine-only forms make male persons more cognitively accessible, making women and members of other genders less visible. It is assumed that this is due to a stronger association between the masculine-only form and male persons, as male persons are always included (Friedrich et al. 2022). Official guidelines in many countries recommend using gender-fair language and caution against generic masculine forms in publications (e.g., APA 2022; European Parliament 2018).

Germany introduced a third sex designation for intersex people in 2019. This law has increased awareness of the use of inclusive language in surveys and raised questions about the effects of such linguistic forms. Because the effects of gendered language in surveys are largely unknown, this article explores how alternative gendered language forms affect question comparability, response behavior, and response effort.

Background

Languages represent gender in various ways. Five types of languages can be distinguished with respect to gender: grammatical gender languages (e.g., French), natural gender languages (e.g., English), genderless languages (e.g., Turkish; Stahlberg et al. 2007), languages that combine grammatical and natural gender (e.g., Norwegian), and genderless languages with “a few traces of grammatical gender” (e.g., Basque; Gygax et al. 2019:4). German is a grammatical gender language in which the gender of personal nouns can be aligned with the gender of the referent (e.g., German der Lehrer [masc.], die Lehrerin [fem.], “teacher”).

Gender-fair language is language constructed so that it does not emphasize gender or so that it presents genders equally (Sczesny et al. 2016). In German, there are several alternative gender-fair wordings, such as the feminine–masculine pair form (e.g., female and male students, Studentinnen und Studenten) or the male/female splitting form (e.g., ein/e Student/in; Student/innen, pl.). However, explicitly expressing both female and male forms has also been criticized for activating gender associations and emphasizing a gender dichotomy (e.g., Gabriel et al. 2018). Moreover, in the pair form one form has to be mentioned before the other, and the first is usually perceived as more important (Hegarty et al. 2016).

Alternatively, language can be phrased in gender-unmarked terms by using specific endings (e.g., “chairperson” instead of “chairman”) or by referring to functions (“medical advice”) instead of persons (e.g., “doctor” and/or “female doctor”). Further alternatives are the participial form, a gender-neutral plural (e.g., Studierende, literally “studiers”), and graphic transpositions such as the capital I in the middle of words (e.g., StudentInnen), the gender gap (e.g., Student_innen), or the gender asterisk (e.g., Student*innen; Adler and Hansen 2020), all of which separate the masculine form or the word stem from its feminine suffix. The gender asterisk is intended to symbolize not only women and men but also people who do not identify with binary gender, and it is currently the most widely used gender-fair form in German (Duden 2021). Using gender-fair language in surveys may therefore contribute to a more balanced mental representation of all genders, since it is intended to eliminate gender discrimination and to decrease stereotypical thinking (Kollmayer et al. 2018; Sczesny et al. 2016).

Question Response Process

When responding to survey questions, respondents must pass through four cognitive steps to provide high-quality responses: they must comprehend the question, retrieve relevant information, use that information to form a judgment, and report an answer by selecting a response (Tourangeau et al. 2000). Passing through all stages conscientiously is termed optimizing. However, respondents may not always be willing or able to perform all the cognitive processes needed to answer a question meaningfully. Instead, particularly when confronted with less comprehensible questions, they may take shortcuts by applying so-called satisficing response strategies.

Satisficing behavior (Krosnick 1991; Krosnick and Alwin 1988) is manifested, for example, by giving no answer at all (item nonresponse [e.g., Olson et al. 2019]), nondifferentiation (Roßmann et al. 2018)—i.e., selecting a response option for the first item and selecting the same or similar response options for the following items—or by survey dropout (Galesic 2006). Research on cognitive aspects of survey methodology has uncovered several sources of potential measurement error arising from the response task, including question wording (Tourangeau et al. 2000). The higher the difficulty of answering a survey question and the lower the respondents’ ability and motivation, the higher the chance of respondents engaging in satisficing response behavior (Krosnick 1991).

To facilitate the response task and ensure comprehensibility, it is generally recommended to ask short, simple questions and to avoid long or syntactically complex ones (Belson 1981). To ensure comparability, all respondents should understand a question in the same way. Masculine-only forms may lead to biased mental representations and to ambiguous interpretations, because respondents may read them either generically or as referring specifically to men; they can thus impair data quality when respondents do not know whom to include when answering the questions.

Critics of gender-fair language often argue that it is confusing and unfamiliar and that it makes written texts longer, less readable, and less comprehensible (Payr 2021; see Braun et al. 2007; Gabriel et al. 2018 for summaries). Gender-fair forms such as pair forms may overload respondents’ cognitive resources, reducing the likelihood that they will perform the comprehension step thoroughly (Tourangeau et al. 2000). Conversely, it can be argued that masculine-only forms make texts less comprehensible because they lead to biased mental representations or require conscious effort to counteract these biases (Friedrich et al. 2022).

More inclusive forms may also offend some respondents, increasing the risk that they will abandon the survey (Sischka et al. 2022). Research on the comprehensibility of gender-fair texts in general has shown, though, that compared to masculine-only forms, gender-fair language did not impair comprehensibility, whether paired forms (e.g., Friedrich and Heise 2019), neutral forms (e.g., Steiger-Loerbroks and von Stockhausen 2014), capital-I forms (e.g., Pöschko and Prieler 2018), or the gender asterisk (Pabst and Kollmayer 2023) were used. Further, respondents quickly get used to gender-fair forms (Steiger-Loerbroks and von Stockhausen 2014). Gender-fair language can even facilitate the response process, since it evokes more appropriate mental representations of the genders and may be more precise (for reviews, see Gabriel et al. 2018; Stahlberg et al. 2007). Several studies have shown that when asked to recall representatives of certain groups (e.g., musicians, politicians) or to estimate the proportion of men and women in them, respondents name more men after reading masculine-only forms. In contrast, inclusive forms lead to more balanced representations (Horvath et al. 2016; Stahlberg et al. 2001) but can also lead to stronger representations of women than of men (e.g., with the capital-I form [Heise 2000] or the gender asterisk [Körner et al. 2022]).

Research on the effects of gendered forms in surveys is scarce. One study found that presenting a questionnaire on academic motivation in either the generic masculine or the pair form affected respondents’ self-reports (Vainapel et al. 2015): female respondents reported lower scores on two subscales in the generic masculine version than in the gender-fair version, while no differences were found for male respondents.

Research Questions

The study examines the effects of alternative gendered language forms in surveys on three dimensions: comparability, response behavior, and response effort. The research questions are as follows:

Are gender-fair language forms comparable to the masculine-only form, or do they elicit other mental associations?

Using only masculine forms to refer to people generically can lead to uncertainty when respondents do not know whether the question also refers to non-male people. Previous research has shown that masculine-only forms lead to a male bias, while gender-fair forms lead to the inclusion of women. Differences in mental representations that affect responses to questions are examined by comparing means and response distributions between the masculine-only and other language forms.

Do gender-fair language forms lead to more undesirable response behavior than the masculine-only form?

If respondents perceive wordings as more complex or are less willing to provide meaningful responses, this may have a negative impact on response behavior and lead to more survey satisficing. According to opponents of gender-fair language, the pair and gender asterisk forms may reduce comprehensibility by increasing length and complexity. There is also the risk that some respondents, especially those who espouse heteronormative, binary gender views and object to more inclusive gender language, might become annoyed and abandon the survey when confronted with gender-fair language. To analyze response behavior, this study examines three indicators of detrimental response strategies that affect data quality: dropout, item nonresponse, and (lack of) response differentiation.

Do gender-fair language forms increase the cognitive effort required to answer questions?

In addition to the perceived complexity, the cognitive effort required to comprehend survey questions can affect data quality. As an indicator of response effort, I compare response times. If gender-fair forms are more complex, answering and processing the gender-fair forms should take longer than answering the masculine-only form (Lenzner et al. 2010).

Because younger respondents may be more familiar with gender-fair language than older respondents, particularly with the gender asterisk, and may view its use more favorably (Friedrich et al. 2021), potential age differences will also be investigated.

Method

Experimental Design

To answer the research questions, two experiments were conducted in German. Experiment 1 used a set of four items on respondents’ attitudes toward politicians, presented in six linguistic forms. Question texts and item wordings in condition “Masculine” used the masculine-only form (e.g., politicians; Politiker, which is identical to the specifically masculine form). Condition “Footnote” used the masculine-only form, as in condition “Masculine”, but with a footnote below the question stem stating that women are included as well (“In the question, only the masculine form is used for the sake of simplicity. The female form is invariably included by implication.”). Condition “Feminine” used a feminine-only form (e.g., Politikerinnen). Condition “Pair” used both the masculine and the feminine form (e.g., female and male politicians; Politikerinnen und Politiker). Condition “Neutral” used a neutral wording (e.g., people working in politics; Menschen, die in der Politik tätig sind); the neutral terms were taken from an online database of gender-unmarked wordings (https://geschicktgendern.de). Condition “Inclusive” used the gender asterisk (Politiker*innen). Items were presented in a grid format with five response options.

Experiment 2 consisted of two questions, one closed and one open-ended, each presented in four experimental versions, to examine the effect of gender representativity. The closed question asked about politicians’ desire to reduce income disparities. The open-ended question asked respondents to estimate how much certain professions earn. For both questions, condition “Masculine” used the masculine-only form, condition “Pair” used both the masculine and the feminine form, condition “Neutral” used a neutral wording, and condition “Inclusive” used the gender asterisk. The professions were a chairperson of a large national corporation, a person in middle-level management, and a caregiving person in a hospital. They were selected to vary in the representation of men and women and in socioeconomic status: while board chairpersons tend to be thought of as a male-dominated profession, caring professions are mainly perceived as female-dominated. Male-dominated professions are associated with higher prestige and are assumed to attract higher salaries (Fiske et al. 2002). Table A1 in the Online Supplement shows the German question forms used and their English translations.

Data

Both experiments were included in a web survey conducted with respondents from the German opt-in online panel of Respondi AG. The survey was fielded from November 11–22, 2021, and used quotas for gender (female, male), age (18–39, 40–59, 60 and older), and education (with/without university entrance qualification). Overall, 2,220 panelists started answering the experiments’ questions; 27 dropped out, resulting in 2,193 completes. Of these 2,193 respondents, 50% were female and 49.7% male; seven participants did not disclose their gender or did not identify with binary gender. The respondents were equally distributed among the three age categories, and half of the sample (51%) had a university entrance qualification. According to a power analysis carried out with G∗Power 3.1 for a one-way ANOVA with six groups, a sample size of 1,986 respondents was necessary to achieve a statistical power of 1 − β = .95 at α = .05 for an effect size of f = 0.10 (Faul et al. 2009).
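The required sample size can be sanity-checked. In a one-way fixed-effects ANOVA with k groups, the noncentrality parameter is λ = f²·N, and power is the probability that the noncentral test statistic exceeds the critical value. The minimal Python sketch below treats the 0.10 effect size as Cohen's f (the metric G∗Power uses for ANOVA) and, as a loudly stated assumption, replaces the noncentral F with a noncentral chi-square on k − 1 df, a large-sample approximation that is reasonable when the error df is near 2,000. It is not G∗Power's algorithm, and all function names are hypothetical, so its answer may differ from 1,986 by a few respondents.

```python
import math


def _reg_lower_gamma(a: float, x: float) -> float:
    """Regularized lower incomplete gamma P(a, x), via its power series."""
    if x <= 0.0:
        return 0.0
    term = 1.0 / a
    total = term
    n = 0
    while term > total * 1e-16:
        n += 1
        term *= x / (a + n)
        total += term
    return total * math.exp(a * math.log(x) - x - math.lgamma(a))


def chi2_sf(x: float, df: float) -> float:
    """Upper-tail probability of a central chi-square with df degrees of freedom."""
    return 1.0 - _reg_lower_gamma(df / 2.0, x / 2.0)


def ncx2_sf(x: float, df: float, lam: float) -> float:
    """Upper tail of a noncentral chi-square, as a Poisson(lam / 2) mixture."""
    if lam <= 0.0:
        return chi2_sf(x, df)
    half = lam / 2.0
    j_max = int(half + 12.0 * math.sqrt(half) + 30)  # covers the Poisson mass
    total = 0.0
    for j in range(j_max + 1):
        weight = math.exp(j * math.log(half) - half - math.lgamma(j + 1))
        total += weight * chi2_sf(x, df + 2 * j)
    return total


def chi2_critical(alpha: float, df: float) -> float:
    """Critical value c with P(chi2_df > c) = alpha, found by bisection."""
    lo, hi = 0.0, 200.0
    for _ in range(200):
        mid = (lo + hi) / 2.0
        if chi2_sf(mid, df) > alpha:
            lo = mid
        else:
            hi = mid
    return hi


def required_total_n(f_effect: float, groups: int,
                     alpha: float = 0.05, power: float = 0.95) -> int:
    """Smallest total N whose approximate power reaches the target."""
    df_between = groups - 1
    crit = chi2_critical(alpha, df_between)

    def achieved_power(n: int) -> float:
        # Noncentrality of a one-way fixed-effects ANOVA: lambda = f^2 * N
        return ncx2_sf(crit, df_between, f_effect ** 2 * n)

    lo, hi = groups + 1, 100_000
    while lo < hi:  # power increases with N, so binary search is valid
        mid = (lo + hi) // 2
        if achieved_power(mid) >= power:
            hi = mid
        else:
            lo = mid + 1
    return lo


if __name__ == "__main__":
    print(required_total_n(0.10, groups=6))
```

Because power rises monotonically with N, a binary search over N is enough; swapping in an exact noncentral F routine (e.g., from a statistics library) would bring the result closer to G∗Power's.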

Respondents were randomly assigned to one of six conditions in experiment 1 and to one of four conditions in experiment 2. The two experiments followed directly after each other. χ2-tests showed no statistically significant differences in sample composition between the conditions of either experiment (see Tables A2 and A3 in the Online Supplement).
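A minimal Python sketch of such an assignment, assuming simple (unblocked) randomization in which each respondent draws one of the six experiment 1 conditions and, independently, one of the four experiment 2 conditions. The panel's actual randomization mechanism is not described, so this is only an illustration; the function name is hypothetical, while the condition labels are those used in the article.

```python
import random
from typing import Dict, Iterable, Optional, Tuple

# Condition labels as named in the article
EXPERIMENT_1 = ("Masculine", "Footnote", "Feminine", "Pair", "Neutral", "Inclusive")
EXPERIMENT_2 = ("Masculine", "Pair", "Neutral", "Inclusive")


def assign_conditions(respondent_ids: Iterable[str],
                      seed: Optional[int] = None) -> Dict[str, Tuple[str, str]]:
    """Draw one experiment 1 and one experiment 2 condition per respondent.

    Simple, independent randomization is an assumption made for illustration.
    """
    rng = random.Random(seed)  # seeded generator makes the draw reproducible
    return {rid: (rng.choice(EXPERIMENT_1), rng.choice(EXPERIMENT_2))
            for rid in respondent_ids}


if __name__ == "__main__":
    print(assign_conditions(["r1", "r2", "r3"], seed=42))
```

Simple randomization only balances group sizes in expectation, which is why checking sample composition across conditions afterwards (as with the χ2-tests above) is worthwhile.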

Measures and Analysis

To compare the effects of question wording on respondents’ substantive answers, I examined whether the observed means and response distributions differed significantly between experimental conditions. Survey dropout, item nonresponse, and response differentiation were compared as indicators of satisficing, and response times as an indicator of response effort.

Dropout is the share of respondents who abandoned the survey before completing the respective experiment, among all respondents who started the experiments. All other analyses are based on respondents who completed the survey.

Item nonresponse is the proportion of respondents giving no response to an item.

Response differentiation reflects the extent to which respondents differentiate in their answers to items in an item battery or give (nearly) identical responses to all items (nondifferentiation). It is calculated as
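One widely used index of this kind, assumed here for illustration rather than taken from the study, is the probability of differentiation, P_d = 1 − Σ p_i², where p_i is the share of a respondent's answered items that fall on scale point i. It is 0 when every item receives the same answer and grows as answers spread across more scale points. The Python sketch below assumes that index, skips unanswered items, and uses a hypothetical function name.

```python
from collections import Counter
from typing import Optional, Sequence


def probability_of_differentiation(responses: Sequence[Optional[int]]) -> float:
    """P_d = 1 - sum_i p_i**2 over the scale points a respondent used.

    p_i is the share of answered items that received scale point i.
    Unanswered items (None) are skipped, which is an assumption.
    """
    answered = [r for r in responses if r is not None]
    if not answered:
        raise ValueError("no answered items")
    n = len(answered)
    counts = Counter(answered)
    return 1.0 - sum((c / n) ** 2 for c in counts.values())


if __name__ == "__main__":
    # Four grid items on a 1-5 scale (hypothetical answers)
    print(probability_of_differentiation([3, 3, 3, 3]))  # → 0.0 (straightlining)
    print(probability_of_differentiation([1, 2, 3, 4]))  # → 0.75
```

With four items, P_d ranges from 0 to 0.75, so comparing condition means of this score is one way to test whether a wording changes how much respondents differentiate.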

Response times were collected page-wise in milliseconds using a client-side paradata script (Kaczmirek 2005). Respondents with response times shorter than the 1st or longer than the 99th percentile were excluded from the analyses as outliers (Ratcliff 1993); this applied to 42 respondents (1.9%).
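A minimal Python sketch of this trimming rule, assuming response times are held in a plain list of milliseconds and that percentiles follow the standard library's "inclusive" interpolation (SPSS may define percentiles slightly differently, so boundary cases can differ). The helper name is hypothetical.

```python
from statistics import quantiles
from typing import List, Tuple


def trim_response_times(times_ms: List[float],
                        lower_pct: int = 1,
                        upper_pct: int = 99) -> Tuple[List[float], int]:
    """Drop times below the lower or above the upper percentile.

    Returns the kept times and the number of excluded cases.
    """
    cuts = quantiles(times_ms, n=100, method="inclusive")  # 99 cut points
    low, high = cuts[lower_pct - 1], cuts[upper_pct - 1]
    kept = [t for t in times_ms if low <= t <= high]
    return kept, len(times_ms) - len(kept)


if __name__ == "__main__":
    times = [float(t) for t in range(1000, 102_000, 1000)]  # 101 toy values
    kept, removed = trim_response_times(times)
    print(removed)  # → 2
```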

Outlier exclusion in experiment 2. In experiment 2, respondents were not given any restrictions when estimating monthly gross incomes, except that only numerical values could be entered in the open text field. The entries ranged from zero and unrealistically small incomes to extremely high values. To determine the lower threshold of plausible incomes, the legal minimum wage in Germany at that time, 10.45 EUR per hour, was used. For the chairperson and middle-level management occupations, a 35-hour week was used as a basis, resulting in a minimum plausible value of 1,463 EUR per month (10.45 EUR × 35 hours × 4 weeks). For caregiving staff, which received the lowest estimates, the earnings limit of a low-income, tax-free “mini job” (450 EUR) was used. For the upper threshold, respondents whose estimates exceeded the median plus three times the upper quartile range were excluded (Hoaglin et al. 2000).
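The plausibility window can be written out as a small Python sketch. The lower bound follows the arithmetic in the text (10.45 EUR × 35 hours × 4 weeks = 1,463 EUR, with four weeks per month implied by that figure) and the 450 EUR mini-job floor for caregiving staff. For the upper bound, the sketch assumes that "upper quartile range" means the interquartile range (Q3 − Q1) and that the cutoff is computed on all estimates for a profession; both readings, the quartile interpolation method, and all names are assumptions.

```python
from statistics import median, quantiles
from typing import List

MIN_WAGE_EUR_PER_HOUR = 10.45  # statutory minimum wage cited in the text
HOURS_PER_WEEK = 35
WEEKS_PER_MONTH = 4            # implied by the 1,463 EUR figure
MINI_JOB_EUR = 450             # floor used for caregiving staff


def lower_bound(profession: str) -> float:
    """Minimum plausible monthly gross income for a profession."""
    if profession == "caregiving":
        return MINI_JOB_EUR
    return round(MIN_WAGE_EUR_PER_HOUR * HOURS_PER_WEEK * WEEKS_PER_MONTH, 2)


def upper_bound(estimates: List[float], k: float = 3.0) -> float:
    """Median plus k times the interquartile range (IQR reading is assumed)."""
    q1, _, q3 = quantiles(estimates, n=4, method="inclusive")
    return median(estimates) + k * (q3 - q1)


def plausible(estimates: List[float], profession: str) -> List[float]:
    """Keep estimates inside [lower_bound, upper_bound]."""
    lo, hi = lower_bound(profession), upper_bound(estimates)
    return [e for e in estimates if lo <= e <= hi]


if __name__ == "__main__":
    toy = [0, 1500, 2000, 2500, 3000, 1_000_000]  # hypothetical entries
    print(plausible(toy, "chairperson"))
```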

Response distributions and item nonresponse were compared using χ2-tests. Means and response times were compared with one-way analyses of variance (F-tests), followed by Dunnett post hoc tests with the masculine-only form as the reference category. To analyze the joint effects of language form and age, two-way analyses of variance were conducted. Analyses were carried out using SPSS 29.
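For reference, the one-way F statistic and its effect size η² can be computed directly from group data, as in the standard-library Python sketch below (function names are hypothetical). Dunnett's post hoc comparisons require the multivariate t distribution and are not reproduced here. The helper eta_squared_from_f shows how a reported F converts to η² = F·df1 / (F·df1 + df2).

```python
from statistics import fmean
from typing import Sequence, Tuple


def one_way_anova(groups: Sequence[Sequence[float]]) -> Tuple[float, int, int, float]:
    """Return (F, df_between, df_within, eta_squared) for k independent groups."""
    values = [x for g in groups for x in g]
    grand_mean = fmean(values)
    group_means = [fmean(g) for g in groups]
    # Between-group and within-group sums of squares
    ss_between = sum(len(g) * (m - grand_mean) ** 2 for g, m in zip(groups, group_means))
    ss_within = sum((x - m) ** 2 for g, m in zip(groups, group_means) for x in g)
    df_between = len(groups) - 1
    df_within = len(values) - len(groups)
    f_stat = (ss_between / df_between) / (ss_within / df_within)
    eta_squared = ss_between / (ss_between + ss_within)
    return f_stat, df_between, df_within, eta_squared


def eta_squared_from_f(f_stat: float, df_between: int, df_within: int) -> float:
    """Recover eta^2 from a reported F statistic and its degrees of freedom."""
    return f_stat * df_between / (f_stat * df_between + df_within)


if __name__ == "__main__":
    print(one_way_anova([[1, 2, 3], [4, 5, 6]]))
    print(round(eta_squared_from_f(2.25, 5, 2145), 4))
```

As a consistency check, eta_squared_from_f(2.25, 5, 2145) gives about .005, in line with the η² reported for response times in experiment 1, assuming df2 = 2,193 − 42 − 6 = 2,145 (i.e., that the 42 trimmed respondents are the only exclusions).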

Results

Dropout Rate

Dropout rates were analyzed as the first indicator of detrimental response strategies that impair data quality. In total, 27 respondents abandoned the survey, one of them during experiment 1. Of the 26 respondents who dropped out during experiment 2, four each were assigned to conditions “Masculine” (0.7%) and “Inclusive” (0.8%), eight to condition “Pair” (1.4%), and 10 to condition “Neutral” (1.7%). Dropout rates did not differ significantly between conditions, χ2 = 3.57; df = 3; p = .31; Cramér’s V = .04. Nineteen were female, and eight were male; four were aged 18 to 39, nine were 40–59, and 13 were aged 60 or older.
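Cramér's V follows from χ² as V = sqrt(χ² / (N · (min(r, c) − 1))). The standard-library Python sketch below (hypothetical helper names) computes Pearson's χ² for an r × c table of counts and converts it to V.

```python
import math
from typing import List, Sequence, Tuple


def pearson_chi_square(table: Sequence[Sequence[float]]) -> Tuple[float, int, float]:
    """Return (chi2, df, N) for an r x c contingency table of counts."""
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    n = sum(row_totals)
    chi2 = 0.0
    for i, row in enumerate(table):
        for j, observed in enumerate(row):
            expected = row_totals[i] * col_totals[j] / n  # under independence
            chi2 += (observed - expected) ** 2 / expected
    df = (len(table) - 1) * (len(col_totals) - 1)
    return chi2, df, n


def cramers_v(chi2: float, n: float, n_rows: int, n_cols: int) -> float:
    """Effect size for a chi-square test of independence."""
    return math.sqrt(chi2 / (n * (min(n_rows, n_cols) - 1)))


if __name__ == "__main__":
    toy: List[List[int]] = [[10, 20], [20, 10]]  # hypothetical counts
    chi2, df, n = pearson_chi_square(toy)
    print(round(chi2, 3), df, round(cramers_v(chi2, n, 2, 2), 3))
    print(round(cramers_v(3.57, 2220, 2, 4), 3))
```

For the dropout test reported here (a 2 × 4 table of dropped vs. completed by condition), cramers_v(3.57, 2220, 2, 4) returns about 0.040, matching the reported V = .04; the N of 2,220 starters is an assumed denominator, and 2,219 gives the same rounded value.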

Experiment 1

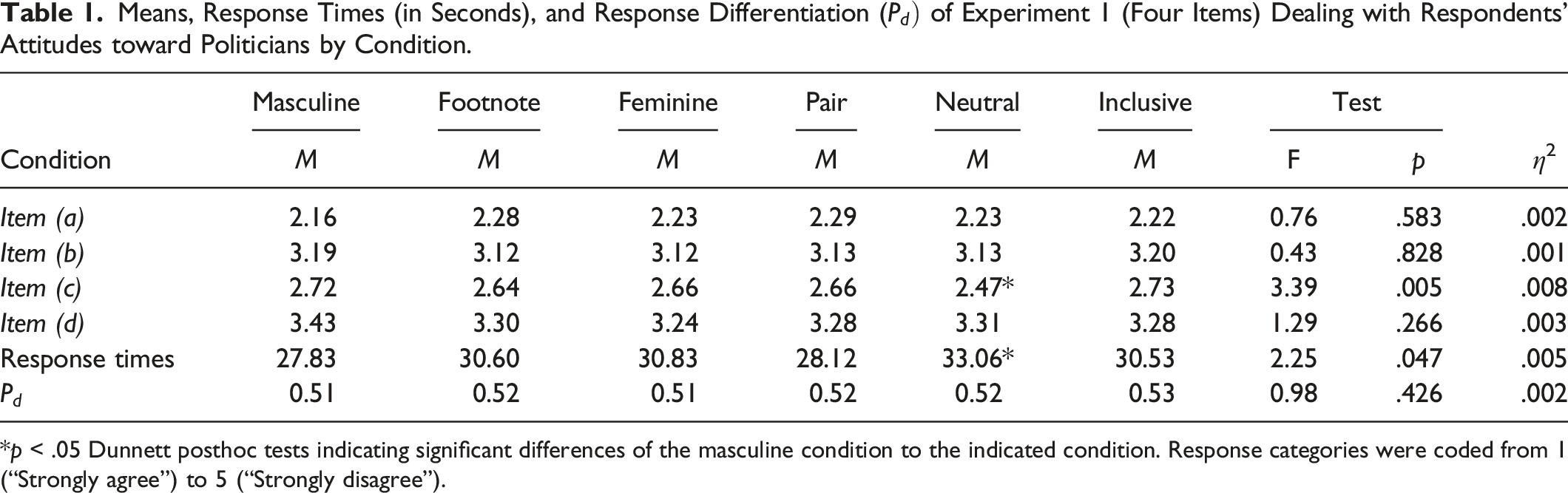

Means, Response Times (in Seconds), and Response Differentiation (Experiment 1) by Condition.

*p < .05 in Dunnett post hoc tests, indicating a significant difference between the masculine condition and the indicated condition. Response categories were coded from 1 (“Strongly agree”) to 5 (“Strongly disagree”).

Considering age differences, two-way ANOVAs with age as the second factor showed a statistically significant main effect of age for items (c) and (d) [(c): F = 4.78, p = .008, η2 = .004; (d): F = 15.65, p < .001, η2 = .014]. For item (c), respondents aged 18–39 had lower mean scores than the older age groups (40–59; 60 and older). For item (d), means were statistically significantly lower for respondents aged 60 years and older than for those aged 18–39 and 40–59. There were, however, no significant interaction effects of language form and age [(c): F = 0.87, p = .56, η2 = .004; (d): F = 0.71, p = .72, η2 = .003; see Table A5 in the Online Supplement].

Comparing response distributions across experimental conditions revealed no statistically significant differences (see Table A4 in the Online Supplement for the answer distributions).

Item nonresponse was generally very low, with one to three respondents per item [(a): n = 2; (b): n = 3; (c): n = 1; (d): n = 3], and did not occur systematically in any of the experimental conditions.

Regarding response differentiation as an additional quality indicator, no significant differences were found (F = 0.98, p = .43, η2 = .002). Respondents in all conditions differentiated their answers to a similar degree. There were also no differences by age group (F = 0.19, p = .83, η2 = .00).

Response times for experiment 1 differed statistically significantly between conditions (F = 2.25, p = .05, η2 = .005). Respondents needed the least time to answer the items in the masculine-only and pair forms and the most time for the neutrally worded items. Dunnett post hoc tests indicated a significant difference between the masculine and the neutral condition (p = .02). Overall, respondents aged 60 or older took longer to answer than the younger age groups (F = 19.95, p < .001, η2 = .02), but there was no statistically significant interaction with language form (F = 0.72, p = .71, η2 = .003).

Experiment 2

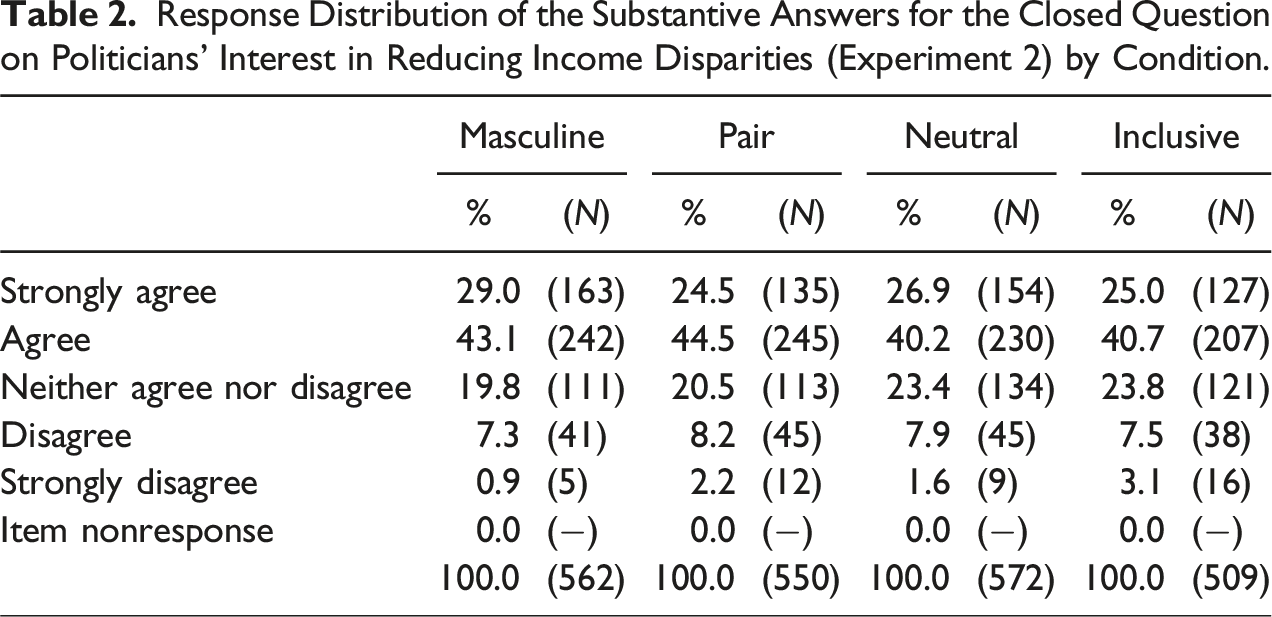

Response Distribution of the Substantive Answers for the Closed Question on Politicians’ Interest in Reducing Income Disparities (Experiment 2) by Condition.

For the closed question, a two-way ANOVA revealed no statistically significant main effects of language form (F = 2.26, p = .08, η2 = .003) or age (F = 0.73, p = .48, η2 = .001), and no significant interaction between the two factors (F = 1.23, p = .28, η2 = .003; see Table A6 in the Online Supplement).

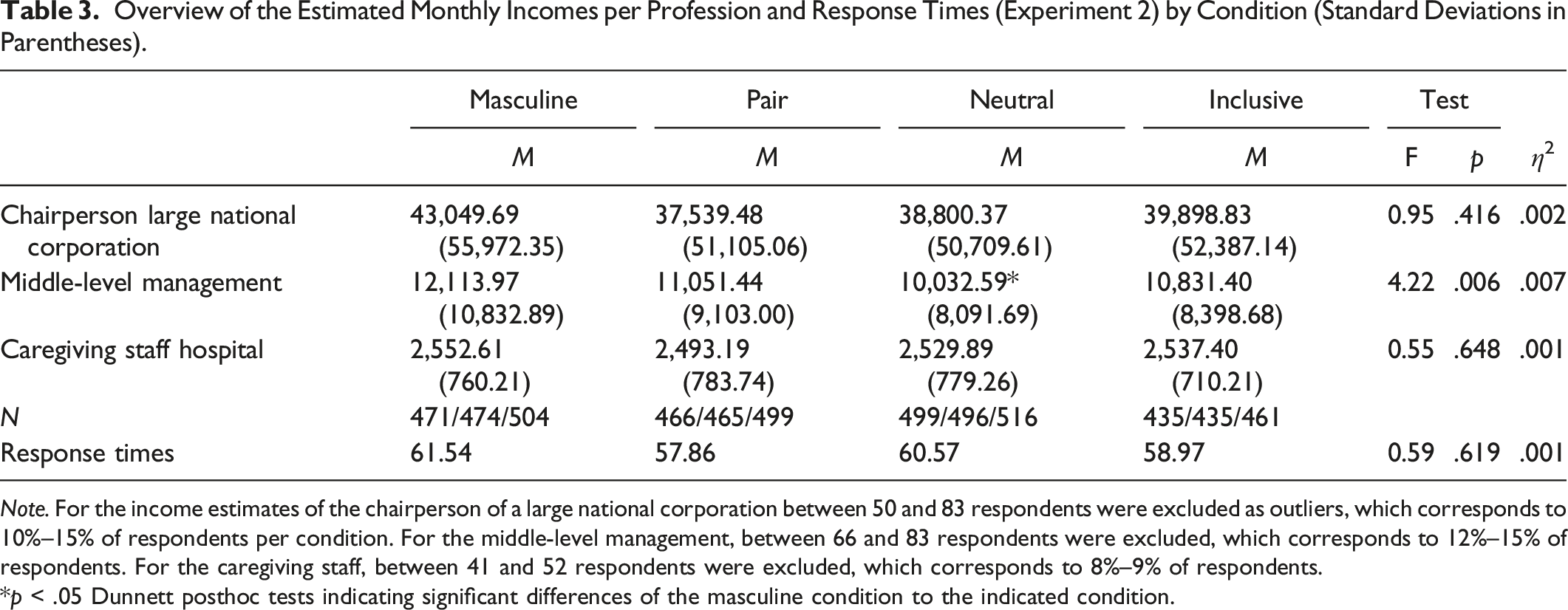

Overview of the Estimated Monthly Incomes per Profession and Response Times (Experiment 2) by Condition (Standard Deviations in Parentheses).

Note. For the income estimates of the chairperson of a large national corporation between 50 and 83 respondents were excluded as outliers, which corresponds to 10%–15% of respondents per condition. For the middle-level management, between 66 and 83 respondents were excluded, which corresponds to 12%–15% of respondents. For the caregiving staff, between 41 and 52 respondents were excluded, which corresponds to 8%–9% of respondents.

*p < .05 in Dunnett post hoc tests, indicating a significant difference between the masculine condition and the indicated condition.

Estimates of income by age group showed mixed results. For chairperson and caregiving staff, the analyses revealed main effects for age (F = 40.09, p < .001, η2 = .041; F = 11.49, p < .001, η2 = .012). Respondents aged 18–39 gave the lowest estimates of all incomes, while the oldest respondents gave the highest income estimates. Only in the condition “Pair” did the oldest group estimate incomes for these two occupations that were lower than those of respondents aged 40–59. For middle-level management, there were statistically significant main effects for language form (F = 4.85, p = .002, η2 = .008) and age (F = 59.04, p < .001, η2 = .06), but no significant interaction effect (F = 0.74, p = .62, η2 = .002). Again, income estimates increased with age (see Table A7, Online Supplement).

Item nonresponse was between 1.4% (chairperson and middle-level management) and 1.6% (caregiving staff) and did not differ by conditions. With regard to response effort, no differences in the time needed to answer the open question were found (F = 0.59, p = .62, η2 = .001; see Table 3).

Discussion

In this study, the wording of items used in surveys was varied to investigate whether alternative gendered forms affected comparability, response behavior, and response effort. For most items, the results indicate that gender-fair forms do not affect comparability, response behavior, or response effort compared to masculine-only forms.

No differences in means were observed between the language forms, except for differences between the masculine-only and the neutral form. This was the case for item (c) in experiment 1 and for the income of the middle-level management in experiment 2. The income of the middle-level management position was estimated as significantly higher when the profession was worded in a masculine-only than in a neutral way, indicating differing mental associations. This is in line with previous research (e.g., Horvath et al. 2016).

With regard to undesirable response behavior, no significant differences were found. The low dropout and nonresponse rates indicate that most respondents were willing to answer the survey independent of language forms.

Response times differed only between the neutral and the masculine-only condition, with longer response times for the neutrally worded items. This could be due to the slightly longer item texts or to the more heavily paraphrased terms, which may have hindered understanding.

In summary, apart from isolated differences for the neutral form, no evidence was found that gender-fair language affects comparability, response behavior, or response effort; it thus had no detectable negative effect on data quality in this survey. Given the vagueness of masculine-only forms, using gender-fair forms seems reasonable, since one important strategy for reducing measurement error is to minimize interpretation problems (Lenzner 2012).

Researchers who want to formulate their questionnaires in a gender-fair way should nevertheless consider the following practical recommendations. Before adapting existing items, it is essential to check whether the new wording may affect respondents’ comprehension of the question and its equivalence in meaning. When items are adapted in a neutral way, the terms cannot always be used one-to-one in every context, and the replacement words may carry slightly different connotations or meanings. For the term “politicians” (Politiker und Politikerinnen in German), there is no equivalent neutral wording, so it was replaced with “people who work in politics.” Although this wording produced only isolated differences in this study, survey designers should establish in advance whether neutral alternatives actually mean what they are intended to mean. Cognitive interviewing, a pretesting method used to reveal whether respondents understand a question as intended, can help in this regard (Lenzner et al. 2023). Additionally, word order can convey a hierarchy. In the “Pair” condition, the masculine form was stated first, followed by the feminine form, which may have favored men and biased representations toward them (Hegarty et al. 2016). Generally, the term “politician” is more strongly associated with men (Misersky et al. 2014), which reflects the composition of the German national parliament, in which 36% of members are women. Whether terms that evoke stronger male associations have a stronger effect on response behavior requires further exploration.

Also worth examining are the effects of gender forms for the oral presentation of questions, with particular emphasis on the interaction between interviewer and respondents. In this study, questions were presented visually to respondents, and answered in a self-administered way. Gender-fair forms may not be equally suitable for spoken and written language. In the case of interviewer-administered questionnaires—either by telephone or face-to-face—orthographic transpositions might not be articulated as intended (e.g., when interviewers do not comply with the usage or become careless over the course of the interview). This initial study does not indicate that using gender-fair language in surveys compromises data quality. Nevertheless, further research with more questions and in a wider range of contexts is essential.

Supplemental Material

Supplemental Material for How Do Alternative Gendered Linguistic Forms Affect Response Behavior in Surveys? by Cornelia E. Neuert in Field Methods

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Supplemental Material

Supplemental material for this article is available online.

References
