Abstract

Although cost-effective and user-friendly, mobile phone-assisted methods have remained underutilized in qualitative social science research. Scarce methodological guidance, together with recruitment and ethical challenges, has arguably stifled advancements in this area. COVID-19 exposed the need to better equip researchers with the expertise and tools to conduct remote research effectively. In 2020, we designed and launched a smartphone survey application to collect real-time data from children’s sector professionals across the globe regarding best practices in, and challenges to, responding to the pandemic. In this short article, we reflect on the efficiency, quality, and acceptability afforded by the smartphone app survey, and outline recommendations for enhancing rigor and feasibility. We also present data snippets illustrating the positive impact of participation on respondents, a seldom-documented aspect of app-based research. Altogether, we advocate a flexible, pragmatic, and user-centered study and app design that aligns with respondents’ specific, situational needs and preferences.

Introduction

The COVID-19 pandemic has amplified interest in remote data collection methods, including mobile phone-assisted research (Hensen et al. 2021; Rahman et al. 2021; Tiersma et al. 2022). Blurring geographical boundaries, such methodologies offer efficiency, convenience, privacy, and customizability (Braun et al. 2021). Those affordances are particularly suitable for research in low- and middle-income countries (LMICs) and remote settings, and research with hard-to-reach groups (Davidson et al. 2021; Rahman et al. 2021; Tiersma et al. 2022). Collecting rapid qualitative data during COVID-19 has been important for guiding policy and practice (Vindrola-Padros et al. 2020). There has, however, been markedly less focus in the methodological literature on remote surveys with predominantly open-ended questions (OEQ; Tiersma et al. 2022), even though the inclusion of OEQ presents distinct design, respondent management, and analytic challenges (Fielding et al. 2013).

This article discusses a smartphone app-based methodology that we used in the last quarter of 2020 to gather in-the-moment quantitative and qualitative data across countries.

Project Background and Study Design Overview

The multinational “COVID 4P Log for Children’s Wellbeing” project was a response to the destabilization of children’s sectors and support systems brought about by COVID-19, and the urgent informational gaps as to how professionals were responding to this crisis. In the last quarter of 2020, a smartphone app hosting an eight-week survey was designed and launched across 29 countries, in collaboration with international partner organizations (Advisory Group). The survey gathered 3,339 responses from 247 respondents (frontline providers, managers, policymakers, and other children’s sector professionals) across 22 countries and five continents. The survey was structured into eight broad topics relevant to children’s well-being, including protection from violence, access to basic necessities, access to justice, alternative care, socio-emotional well-being, and participation. Each week, the app was updated with daily questions for that week’s topic, and it sent daily (but customizable) reminders. An average of three questions were sent per day. Respondents could skip any question and withdraw at any time, with their existing responses being kept. Questions from previous weeks remained in a “calendar” function and could be revisited at a later time. The eight-week survey contained 177 items (61% OEQ; 39% closed-ended questions). The survey was available in English only. No financial or other material incentives were provided, although respondents did receive a Certificate of Completion via the app. Altogether, the study had a flexible, pragmatic, and user-centered design that aligned with the specific, situational needs of our target population (see Davidson et al. 2021; Davidson et al. 2023; Karadzhov et al. 2023).

Methodological Reflections on Process and Outcomes

Choosing a Mobile Research Platform: A Cost–Benefit Analysis

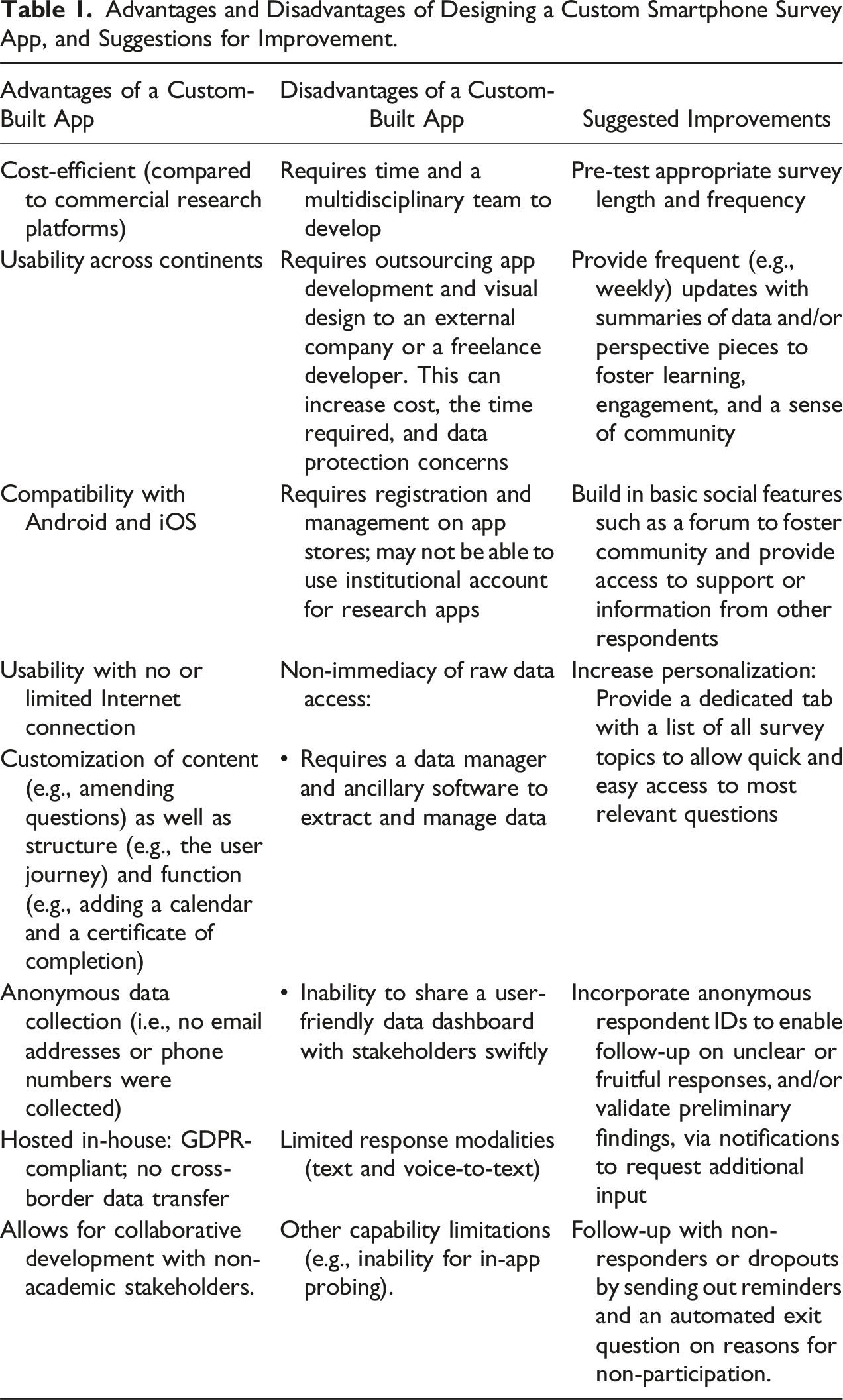

Table 1. Advantages and Disadvantages of Designing a Custom Smartphone Survey App, and Suggestions for Improvement.

Optimizing the Mobile Phone Survey Design

Overall, the app and survey design proved efficient (taking only four months to develop) and acceptable (gathering 3,339 responses from 22 countries). Nevertheless, we identified several areas for optimizing survey design, particularly with regard to data quality and respondent engagement and retention (see Table 1).

Anticipate, Manage, and Minimize Attrition

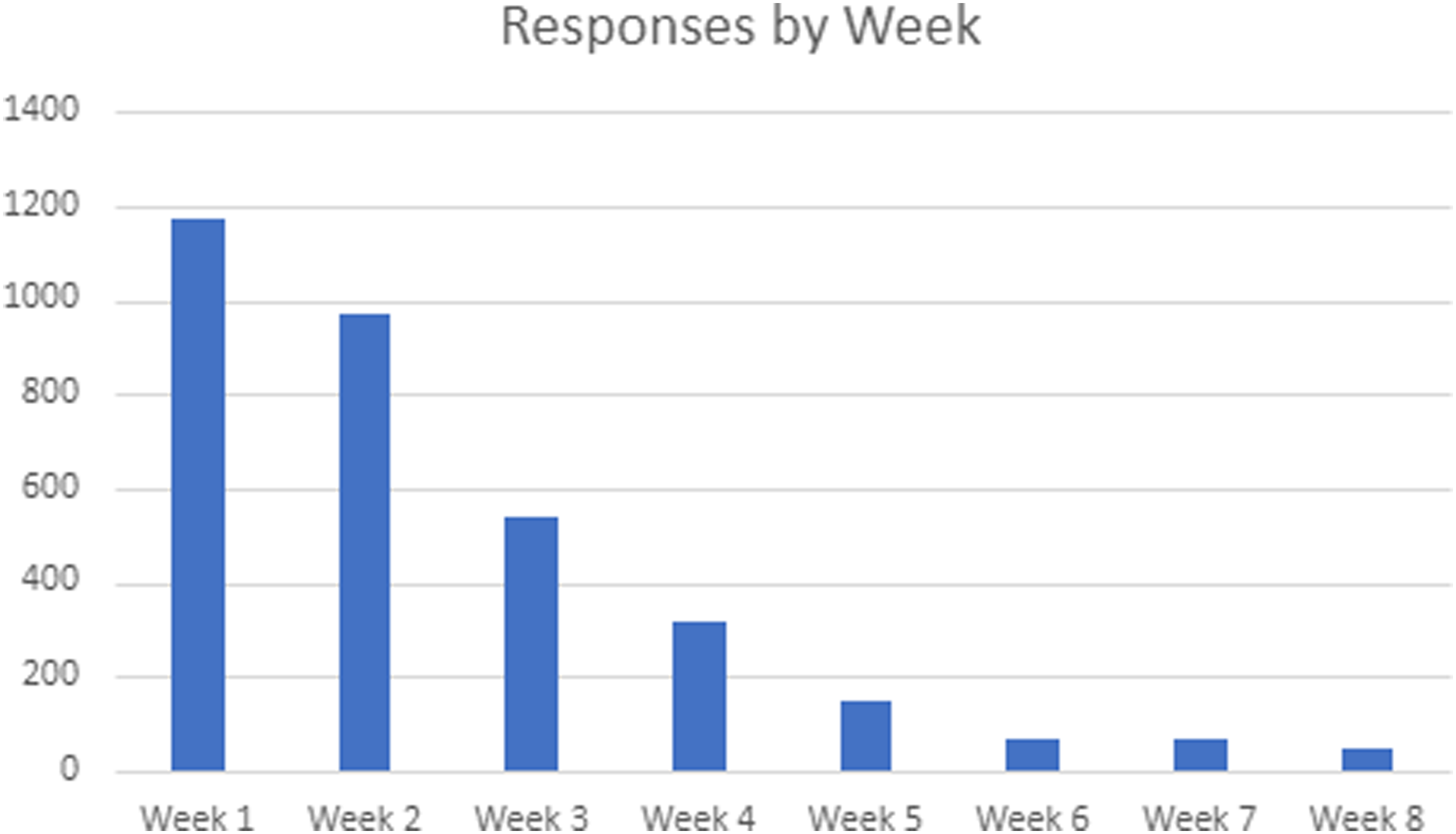

Figure 1. Number of responses per week.

The response rate declined dramatically after the second week (see Figure 1). We next offer potential explanations and countermeasures.

The high proportion of OEQ (61%) increased the burden of participation. It is also likely that respondents, who represented various countries, sectors, and roles, selectively responded to the questions and topics most aligned with their remit. This may account for the high non-response rates in the latter weeks (see Figure 1). A countermeasure would be to offer respondents a “menu” of all survey topics at the start, and allow them to select the most relevant ones (increased personalization; see Table 1).
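As a minimal sketch of the topic “menu” idea, the following Python snippet filters a question bank down to the topics a respondent opted into at the start. It is illustrative only and does not describe how the study app was built: the `Question` class, the `personalized_schedule` function, the week-to-topic assignments, and the placeholder item texts are all hypothetical. Only the topic names are taken from the survey description.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Question:
    week: int    # hypothetical week in which the item is released
    topic: str   # one of the survey's broad topics
    text: str    # placeholder wording, not an actual survey item


def personalized_schedule(questions, selected_topics):
    """Keep only questions on topics the respondent selected, ordered by week."""
    chosen = set(selected_topics)
    return sorted((q for q in questions if q.topic in chosen), key=lambda q: q.week)


# Hypothetical question bank; the week numbers are assumptions for illustration.
QUESTIONS = [
    Question(1, "protection from violence", "Hypothetical item A"),
    Question(3, "access to justice", "Hypothetical item B"),
    Question(2, "alternative care", "Hypothetical item C"),
    Question(3, "access to justice", "Hypothetical item D"),
]

# A respondent who selects two topics from the menu sees three of the four items.
schedule = personalized_schedule(QUESTIONS, ["alternative care", "access to justice"])
for q in schedule:
    print(q.week, q.topic, q.text)
```

A design of this kind trades breadth for relevance: each respondent sees fewer items, which may reduce burden, but topic-level sample sizes then depend on what respondents choose.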

It remains unclear whether the daily reminders were effective, as respondents could turn them off. This lack of information as to how respondents interacted with app functionalities remains a limitation. Attrition and non-engagement should be analyzed methodically (Houtgraaf et al. 2022). A feasible tactic would be to send an exit question, automatically triggered by a period of inactivity, that explores reasons for non-participation (Tiersma et al. 2022). Alternatively, our project partners could have disseminated informal surveys exploring what prevented eligible professionals from participating.
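The inactivity-triggered exit question could be sketched as follows. This is an assumption-laden illustration rather than a feature of the study app: the function name `due_for_exit_question`, the seven-day threshold, the respondent IDs, and the timestamps are all hypothetical. The logic simply flags anonymous IDs whose last recorded activity is older than the threshold and who have not already been prompted.

```python
from datetime import datetime, timedelta


def due_for_exit_question(last_activity, now,
                          inactivity=timedelta(days=7),
                          already_prompted=frozenset()):
    """Return anonymous IDs inactive for at least `inactivity` and not yet prompted.

    last_activity maps an anonymous respondent ID to the time of the
    respondent's most recent submitted response.
    """
    return sorted(
        rid
        for rid, last_seen in last_activity.items()
        if now - last_seen >= inactivity and rid not in already_prompted
    )


# Hypothetical data: three anonymous respondents and their last activity.
now = datetime(2020, 11, 15, 9, 0)
last_activity = {
    "r001": datetime(2020, 11, 14, 18, 30),  # active yesterday
    "r002": datetime(2020, 11, 2, 8, 0),     # inactive for almost two weeks
    "r003": datetime(2020, 11, 8, 9, 0),     # inactive for exactly seven days
}

print(due_for_exit_question(last_activity, now))
print(due_for_exit_question(last_activity, now, already_prompted={"r002"}))
```

Because the check relies only on anonymous IDs and timestamps, it would in principle be compatible with anonymous data collection, although the threshold would need piloting to avoid prompting respondents who are merely skipping a less relevant week.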

Crucially, the anonymous data collection precluded many evidence-based response maximization strategies (e.g., pre-contacting potential respondents, and/or sending out email invitations or reminders; Tiersma et al. 2022; Wu et al. 2022).

Leverage the App as a Knowledge-sharing and Community-building Platform

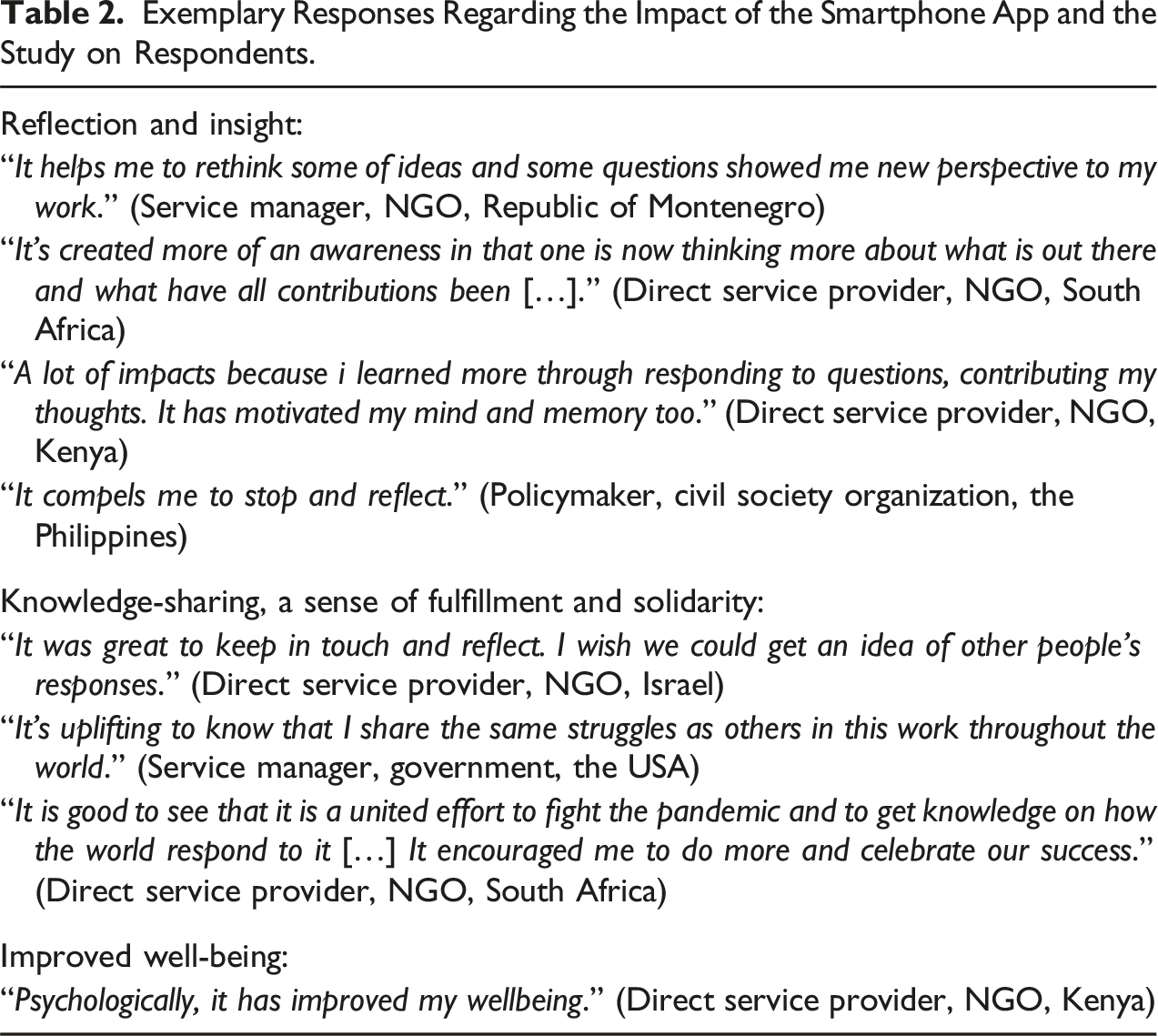

Table 2. Exemplary Responses Regarding the Impact of the Smartphone App and the Study on Respondents.

Relatedly, increasing researcher presence and building a supportive community via the app would have allowed us to survey children directly, provided reasonable safeguards were in place, and feed back their views to sector professionals to foster inclusion, empathy, and knowledge-sharing.

Enable Real-time Probing and Follow-up

The feasibility of real-time probing should be explored in future smartphone app surveys. For example, anonymous respondent IDs attached to each response could be used to send targeted follow-up in-app prompts requesting more detail. To test the feasibility and utility of this function, it could be piloted with a small cohort of volunteer respondents during the first week, and the approach iterated (question wording, frequency, which responses are followed up, etc.) as needed to balance respondent workload, availability, and data richness. Follow-up could be implemented randomly or purposively, the latter entailing the selection of a few “critical” or “extreme” cases for in-depth in-app interviewing (Kauffman and Peil 2020). This would exemplify a nested sampling design, whereby a sub-sample of “key informants” is selected to provide additional detail (Onwuegbuzie and Leech 2007).
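The random and purposive options for selecting a nested follow-up sub-sample can be illustrated with a short Python sketch. Everything here is hypothetical: the function `select_for_follow_up`, the respondent IDs, and the example answers are invented for illustration. In particular, the purposive branch uses total word count as a crude stand-in for an “information-rich” case, which is an assumption of this sketch and not a criterion proposed in the article. In practice, researchers would define “critical” or “extreme” cases substantively.

```python
import random


def select_for_follow_up(responses, k, mode="random", seed=None):
    """Pick up to k anonymous respondent IDs for targeted follow-up prompts.

    responses maps an anonymous respondent ID to a list of open-ended answers.
    mode="random":    simple random sample of IDs (reproducible via seed).
    mode="purposive": the k IDs with the most words written in total
                      (an assumed, crude proxy for information-rich cases).
    """
    ids = sorted(responses)
    k = min(k, len(ids))
    if mode == "random":
        return sorted(random.Random(seed).sample(ids, k))
    if mode == "purposive":
        def richness(rid):
            return sum(len(answer.split()) for answer in responses[rid])
        # Sort by descending word count, breaking ties by ID for determinism.
        return sorted(ids, key=lambda rid: (-richness(rid), rid))[:k]
    raise ValueError(f"unknown mode: {mode!r}")


# Hypothetical open-ended answers keyed by anonymous ID.
responses = {
    "r001": ["Services moved online."],
    "r002": ["We lost contact with many families during lockdown.",
             "Home visits were replaced by weekly phone calls."],
    "r003": ["No change."],
    "r004": ["Court hearings were postponed, which delayed placements for months."],
}

print(select_for_follow_up(responses, k=2, mode="purposive"))
print(select_for_follow_up(responses, k=2, mode="random", seed=1))
```

Fixing the seed makes the random draw reproducible for audit purposes, while the purposive rule can be swapped for any researcher-defined scoring function without changing the surrounding logic.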

Evaluating Acceptability and Impact on Respondents

While attrition rates provide a quantitative (proxy) indicator of acceptability, qualitative data regarding the user experience should also be collected (Twis et al. 2020). This can help understand reasons for retention. Respondents were asked a set of questions about their app experience in Weeks 2, 4, 6, and 8 (see Appendix). Although the number of responses was modest (primarily due to attrition observed post-Week 1), the responses indicated positive experiences and effects (see Table 2).

Thirty (79%) of the 38 respondents who completed this question rated their overall experience of taking part in the study as “positive” or “very positive,” compared to eight (21%) who replied “neutral.” When asked how easy it was to use the app, 29 (81%) responded “easy” or “very easy,” five (14%) “neutral,” and two (6%) “hard.” Finally, 21 (57%) reported not having experienced difficulties while using the app, 15 (41%) reported that they had, and one (3%) replied “don’t know.” Commonly reported technical issues included the lack of confirmation for successfully submitted responses; repetitive questions; difficulties using the voice-to-text functionality; and difficulties with the calendar navigation.
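The percentages above can be reproduced from the reported counts with a few lines of Python. The helper name `percentages` and the shortened category labels are ours. The counts are those given in the text. The calculation also makes explicit that the three questions had slightly different numbers of completers (38, 36, and 37), and that rounding to whole numbers means the percentages for a question need not sum to exactly 100.

```python
def percentages(counts):
    """Convert response counts to whole-number percentages of the question total."""
    total = sum(counts.values())
    return {label: round(100 * n / total) for label, n in counts.items()}


# Counts as reported in the text; labels shortened for readability.
overall = {"positive or very positive": 30, "neutral": 8}
ease = {"easy or very easy": 29, "neutral": 5, "hard": 2}
difficulties = {"no": 21, "yes": 15, "don't know": 1}

for name, counts in [("overall", overall), ("ease", ease), ("difficulties", difficulties)]:
    print(name, sum(counts.values()), percentages(counts))
```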

It must be noted, however, that those responses are non-representative of the sample due to the aforementioned attrition observed post-Week 1. Selection bias cannot be ruled out—respondents who enjoyed the app more were more likely to remain engaged in the latter weeks and provide positive feedback. This reinforces the need to survey dropouts and non-responders (Table 1).

Concluding Reflections and Recommendations

We urge researchers engaging in remote, app-based research to practice an ethic of care, and balance research risks and potential benefits to respondents (Crivello and Favara 2021). Our app signposted respondents to in-country well-being support. We also recommend that surveys include questions on the impact of study participation on respondents, particularly when conducting research on sensitive topics or during emergencies. The positive respondent feedback reinforces the importance of maximizing the beneficial psychological, socio-emotional, and educational impact of remote research on practitioner populations, particularly those operating in high-stress environments (see Table 2).

Despite the aforementioned methodological limitations and practical constraints, the project generated rich and actionable insights into a rapidly evolving emergency, demonstrating the utility of rapid collaborative qualitative research (Chan et al. 2022; Davidson et al. 2023). Our experience resonates with Firchow and Mac Ginty’s (2020) call for a pragmatic approach to implementing “good enough” methodologies, which allow for agility while meeting the minimum criteria for scientific rigor “when operating in suboptimal contexts for research” (p. 135).

Footnotes

Acknowledgments

While their mention does not imply their endorsement, the authors are deeply grateful to our international key partners, who actively shaped the overall project and without whom this project could not have been undertaken: the African Child Policy Forum; African Partnership to End Violence Against Children; Barnafrid National Centre on Violence Against Children; Child Rights Coalition Asia; Child Rights Connect; Defence for Children International; European Social Network; Fédération Internationale des Communautés Éducatives; Global Social Services Workforce Alliance; International Child and Youth Care Network; National Child Welfare Workforce Institute; Organization for Economic Cooperation and Development; the Observatory of Children’s Human Rights Scotland; Pathfinders for Peaceful, Just and Inclusive Societies; REPSSI Pan African Regional Psychosocial Support Initiatives; the UN Special Representative of the Secretary-General on Violence Against Children; and Terre des hommes. These key partners were not involved in the development of this manuscript. Finally, we would like to extend our thanks to the app designer, Krzysztof Sobota; to Erin Lux, for the database expertise and research assistance; to Helen Schwittay and Sophie Shields, for the research and knowledge exchange assistance; and to Mark Hutton, for the app visual design.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Scottish Funding Council Global Challenges Research Fund (Reference: SFC/AN/18/2020).