Abstract

There is abundant literature about interviewer effects on the survey process, but studies of interviewer training are quite limited. Previous research has produced mixed findings on how training affects interviewer performance. Trainings are often conducted in person despite the mixed findings. There has been no research that examines the use of videoconferencing as a medium for training field survey interviewers. We conducted an interviewer training experiment with the Medical Expenditure Panel Survey (MEPS). We randomly assigned 242 field interviewers to three training modes: in person, videoconference (i.e., WebEx), and self-administered training. Each interviewer’s performance was observed before and after the training. In a post-hoc analysis, we observed improvement for higher-performing interviewers trained by videoconference. Interviewers trained by videoconference rated their experiences similarly to their counterparts trained in person.

Background

It is well documented that interviewers affect the data collection process both positively and negatively. Under the Total Survey Error (TSE) framework (Groves et al. 2011), interviewers contribute to errors arising from the survey process. There is a rich and diverse literature showing that interviewer characteristics, such as experience, demographic characteristics, attitudes, and personality, can affect sample frame coverage, cooperation rates, measurement errors, and key survey estimates (e.g., West and Blom 2017).

Interviewer training is often conducted with the intent of reducing interviewer-related error in standardized interviews. The goal is to achieve consistent application of the interviewing protocols across interviewers, such as providing all respondents with the exact same question wording, probing in a nonleading way, and providing neutral feedback as needed. Previous research on interviewer training has often focused on improving interviewers’ skills in either gaining cooperation from sampled members or collecting high-quality data from respondents. Presumably, training would result in improved interviewer performance and therefore improved cooperation and data quality; however, the findings from previous research are mixed.

Some of the previous research has found positive training effects on gaining cooperation. Groves and McGonagle (2001) developed an interviewer training protocol that aims to increase cooperation rates by improving the interviewer’s skills at tailoring and maintaining interaction. By tailoring, the interviewer quickly classifies a respondent’s comment into an appropriate category and delivers an appropriately phrased response. They tested the training protocol with two telephone establishment surveys and found improved cooperation rates for interviewers who received the training. Mayer and O’Brien (2001) tested the feasibility of using the Groves and McGonagle (2001) training protocol in a telephone household survey. They found that interviewers who attended the refusal aversion training had significantly higher cooperation rates as compared to their counterparts who did not attend the training.

However, training has sometimes not improved interviewer performance on gaining cooperation as expected. For example, O’Brien et al. (2002) examined the effects of a refusal aversion training experiment in a face-to-face national household health survey. An equal number of interviewers selected from two census regions were assigned to either receive or not receive the training. They found that trained interviewers had higher but not statistically significant cooperation rates between pre- and post-training phases as compared to untrained interviewers. Schnell and Trappman (2006) examined the effects of an interviewer training protocol aimed at reducing refusal rates in the German European Social Survey (ESS) Wave 2. ESS collects data via face-to-face Computer-Assisted Personal Interviewing (CAPI). The authors found that interviewers who received the training had a significantly lower refusal rate but a significantly higher noncontact rate as compared to their counterparts who did not receive the training.

The findings on how interviewer training affects data quality are also mixed. Some of the previous research has found positive training effects. For example, Cannell et al. (1981) conducted a series of experiments to examine how interviewer techniques affect respondents’ responses in highly structured interviews. They focused on specific interviewing techniques: giving interviewers thorough instructions on their role and the interviewing task, providing feedback to respondents given their response behaviors, and asking respondents to sign an agreement promising to do their best to give accurate and complete answers. They found that interviewers who received training in these specific techniques collected data of higher quality compared to their counterparts who did not receive the training (e.g., more complete answers to open-ended questions, increased precision when reporting the dates of doctor visits and medical events, increased reporting of socially undesirable behaviors, and reduced reporting of socially desirable behaviors). Billiet and Loosveldt (1988) conducted a field experiment to examine the effects of interviewer training on respondents’ responses to factual questions. The training focused on collecting accurate information from the respondent. Compared to untrained interviewers, trained interviewers had significantly lower item nonresponse for six out of nine questions for which nonresponse and underreporting were expected, as well as more complete information for questions that required more interviewer activities, such as clarification, feedback, and probing.

However, other studies have found no effects of interviewer training on measures of data quality. Fowler and Mangione (1990) reported an interviewer training experiment that varied the length of the training and the closeness of supervision (i.e., whether an interviewer’s cases were tape recorded and reviewed). They did not find significant differences across the groups in response rates. There were no significant main effects of training or supervision on the within-interviewer correlation (

Despite the mixed findings, most of the interviewer trainings are conducted in person as a workshop. For large-scale nationally representative in-person surveys, interviewers often travel to attend the workshop in person for a short period of time. The workshop covers project specific topics. It usually includes lectures on each topic with practice exercises such as role playing in pairs and small groups. Recently, there has been increasing interest in the use of videoconferencing applications or platforms for qualitative research, such as cognitive interviews and focus groups, in the health research field (e.g., Davies et al. 2020; Namey et al. 2020). In the survey research field, a few studies have explored the use of videoconferencing for interviews in either a laboratory setting (e.g., Sun et al. 2021

In this study, we conducted a field interviewer training experiment with interviewers who have been working on a large-scale nationally representative survey to examine the effects of training modes, including in-person, videoconferencing, and self-administered training by Learning Management System (LMS), on interviewer performance. In particular, we explored the feasibility and effectiveness of using videoconferencing as a medium to train field interviewers. We also collected interviewer feedback about the training.

Data and Methods

MEPS-HC

The Household Component of the Medical Expenditure Panel Survey (MEPS-HC) is a nationally representative survey of the U.S. civilian noninstitutionalized population. MEPS-HC collects data from a sample of families and individuals in selected communities across the United States, drawn from a nationally representative subsample of households that participated in the prior year’s National Health Interview Survey (NHIS). MEPS-HC collects data in all 50 states and Washington, D.C. There are over 100 primary sampling units (PSUs). We conducted up to 20,000 interviews in a field period with a household reporter. The MEPS-HC collects data through an overlapping panel design. A new panel of sample households is selected each year and is followed up for two calendar years. This includes five rounds of interviews that take place over a two-and-a-half-year period. MEPS-HC is an in-person survey. It provides continuous and current estimates of health care expenditures at both the person and household level.

Interviewer Grouping

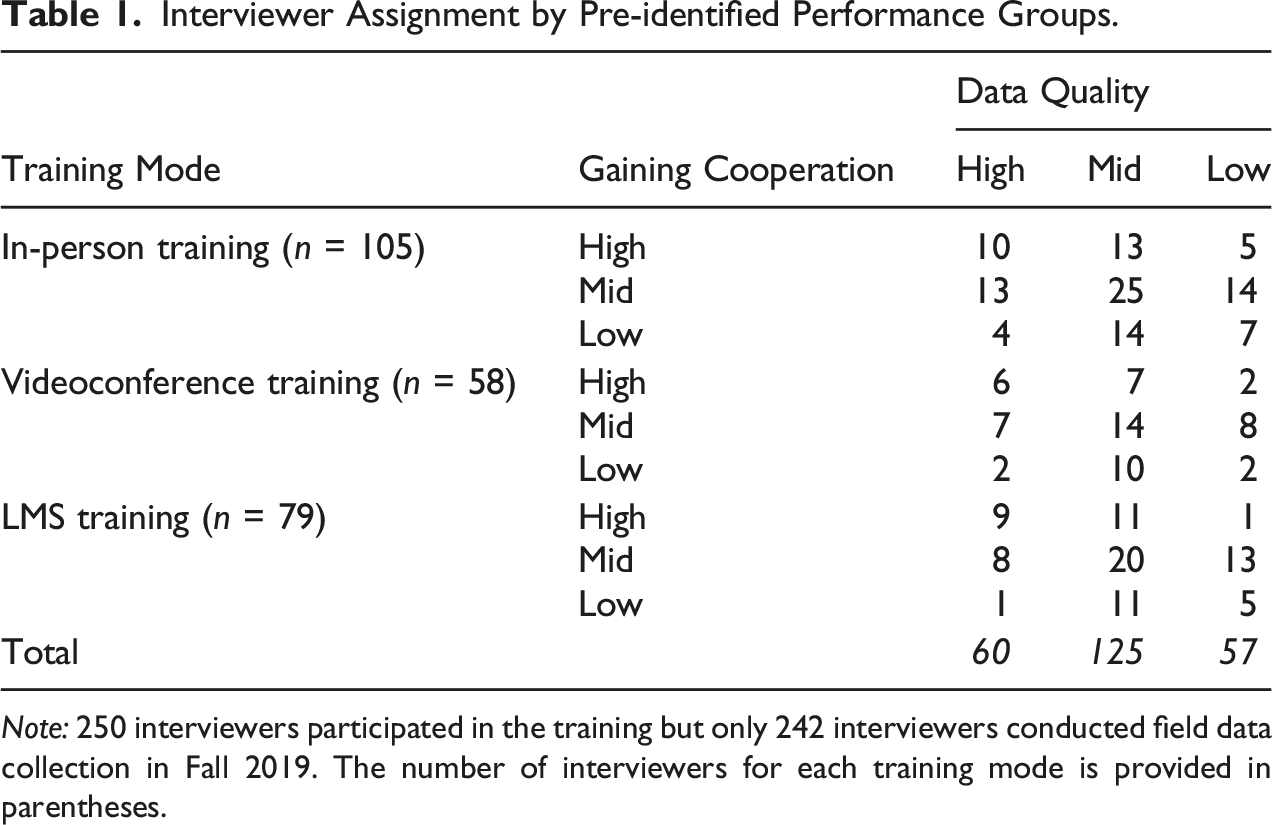

In the Fall of 2019, we conducted an experiment to examine the effects of training on interviewer performance. The purpose of the training was to refresh experienced interviewers on two skill sets: how to gain cooperation from sampled households and how to collect high-quality survey data. Two hundred and fifty field interviewers participated in the training. All interviewers had been working on the MEPS-HC for three or more rounds and were considered experienced interviewers. After the training, 242 interviewers conducted field data collection in Fall 2019. To achieve a balanced sample of interviewers in each training mode, we computed scores for each interviewer on two skill sets using data from the preceding round of data collection (see Appendix Table 1). The first skill set is the interviewer’s performance on gaining cooperation, which was operationalized by the number of cases the interviewer worked and his or her cooperation rate. The second skill set is the interviewer’s performance on collecting high-quality data, which was operationalized by a set of measures, including key survey estimates (e.g., provider match rate) and paradata (e.g., interview length, interviewer comments, and the use of special keyboard shortcuts). We selected these measures because they could be obtained in a timely fashion during data collection and have been used for monitoring interviewer performance previously in MEPS-HC.

We computed two composite scores (a cooperation score and a data quality score) to reflect the interviewer’s performance on the two skill sets. To develop the composite scores, we first computed the standardized z-score for each measure and then calculated the weighted sum of the individual standardized z-scores to produce the composite scores. We assigned weights to the individual measures based on their importance to the MEPS-HC data collection. Next, we examined the distribution of each of the composite scores to divide interviewers into performance groups. Interviewers with a score in the lower quartile of the distribution were defined as low performers, interviewers with a score in the upper quartile were defined as high performers, and the rest of the interviewers were defined as mid-performers. Thus, we divided the interviewers into nine pre-identified performance groups across the two skill domains (i.e., three groups for gaining cooperation by three groups for collecting high-quality data).
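The scoring steps described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the interviewer values are made up, the population standard deviation is assumed for the z-scores, every measure is treated as "higher is better" (the handling of measures where a high count signals a problem is not described here), and the function names are hypothetical. The 0.6 and 0.4 weights are the cooperation weights listed in the Appendix.

```python
from statistics import mean, pstdev, quantiles


def z_scores(values):
    """Standardize raw values to mean 0 and (population) standard deviation 1."""
    mu = mean(values)
    sd = pstdev(values)
    return [(v - mu) / sd if sd > 0 else 0.0 for v in values]


def composite_scores(measures, weights):
    """Weighted sum of standardized measures, one composite score per interviewer.

    measures: dict of measure name -> list of raw values (one per interviewer)
    weights:  dict of measure name -> assigned weight
    """
    n = len(next(iter(measures.values())))
    totals = [0.0] * n
    for name, values in measures.items():
        for i, z in enumerate(z_scores(values)):
            totals[i] += weights[name] * z
    return totals


def performance_groups(scores):
    """Label scores: bottom quartile 'low', top quartile 'high', otherwise 'mid'."""
    q1, _, q3 = quantiles(scores, n=4)
    return ["low" if s <= q1 else "high" if s >= q3 else "mid" for s in scores]


# Hypothetical data for eight interviewers (not taken from the study).
cooperation_measures = {
    "cases_worked": [40, 55, 60, 35, 70, 50, 45, 65],
    "cooperation_rate": [0.80, 0.85, 0.90, 0.70, 0.95, 0.75, 0.82, 0.88],
}
cooperation_weights = {"cases_worked": 0.6, "cooperation_rate": 0.4}

scores = composite_scores(cooperation_measures, cooperation_weights)
groups = performance_groups(scores)
print(list(zip([round(s, 2) for s in scores], groups)))
```

Repeating the same steps with the data quality measures and crossing the two sets of labels would yield the nine performance groups.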

Interviewer Assignment by Pre-identified Performance Groups.

Note: 250 interviewers participated in the training but only 242 interviewers conducted field data collection in Fall 2019. The number of interviewers for each training mode is provided in parentheses.
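One common way to balance performance groups across training modes is stratified (blocked) randomization, sketched below. The article does not spell out the allocation procedure, so this is an assumption for illustration only: the function name, interviewer IDs, and seed are hypothetical, and the sketch deals interviewers evenly across modes, whereas the study's actual mode sizes were unequal.

```python
import random
from collections import defaultdict

MODES = ["in-person", "videoconference", "LMS"]


def stratified_assignment(interviewers, seed=0):
    """Randomly assign interviewers to modes within each performance stratum.

    interviewers: dict of interviewer id -> (cooperation_group, quality_group)
    Returns a dict of interviewer id -> training mode.
    """
    rng = random.Random(seed)
    strata = defaultdict(list)
    for iid, stratum in sorted(interviewers.items()):
        strata[stratum].append(iid)

    assignment = {}
    for stratum in sorted(strata):
        ids = strata[stratum]
        rng.shuffle(ids)                      # random order within the stratum
        offset = rng.randrange(len(MODES))    # random starting mode
        for k, iid in enumerate(ids):
            assignment[iid] = MODES[(k + offset) % len(MODES)]
    return assignment


# Hypothetical roster: six interviewers in each of the nine performance groups.
levels = ["low", "mid", "high"]
interviewers = {
    f"int-{c}-{q}-{k}": (c, q) for c in levels for q in levels for k in range(6)
}
assignment = stratified_assignment(interviewers, seed=2019)

# Count interviewers per (stratum, mode) cell to check the balance.
counts = defaultdict(int)
for iid, stratum in interviewers.items():
    counts[(stratum, assignment[iid])] += 1
print(dict(counts))
```

Because the shuffle happens inside each stratum, every mode receives the same mix of low, mid, and high performers regardless of the random seed.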

Training Mode

In-person Training

One hundred and five experienced interviewers were assigned to the in-person training condition. The in-person training was carried out in mid-August 2019. It was a two-and-a-half day training period that included 24 modules covering various topics, such as gaining cooperation in the MEPS-HC Round 1, keeping respondents engaged during the interview, conducting refusal conversion, reviewing key MEPS-HC components, collecting hard-copy materials, and managing interview time. The in-person training was a combination of long and short lectures, large group discussions, small group exercises, and CAPI hands-on practices.

During the training, interviewers were split into small groups to complete exercises. We formed the groups so that each was a mixture of pre-identified high, mid, and low performers to promote peer learning. The goal was to have low and mid performers interact with their high-performing peers during the exercises and thereby learn from them on the targeted skill sets. For example, one training module focused on approaches and tools for gaining the respondent’s cooperation in keeping and using records. During the CAPI hands-on practice, low and mid performers would observe the high performers and discuss with them how to ask for records and probe for additional records without it seeming like a burden to the respondent.

After attending the in-person training, interviewers were asked to complete a web survey to provide feedback about the training. They were asked a set of debriefing questions about their experience, such as “In general, how would you rate your overall experience with the training?” “In terms of collecting high quality survey data, how much new information did you learn at the training?” and “After the training, how confident are you now in collecting high quality data in more challenging situations?”

Videoconference Training

Another 58 interviewers were assigned to the videoconference training condition. The videoconference training was carried out in mid-September 2019. We used WebEx (https://www.webex.com) as the platform to train interviewers in the videoconference condition. Interviewers were assigned to six WebEx sessions based on their pre-identified performance and availability. Each WebEx session had approximately 10 interviewers with varied performance on gaining cooperation and collecting high-quality data. The training was a single two-hour WebEx session that covered two modules targeting data quality: Provider Search and Hard Copy Collection.

The Provider Search section of the CAPI instrument produces the names, addresses, and telephone numbers of the health care providers identified during the interview. The provider name helps the respondent answer questions in the health care utilization section. It also informs the contact information displayed on authorization forms used for the MEPS Medical Provider Component (MPC) survey. The MPC is a voluntary survey designed to supplement, replace, and validate health care expenditure and source of payment data collected in the MEPS-HC. The information collected in the Provider Search section has a substantial impact on the processes and costs associated with the MPC survey. The Provider Search training emphasized how to use more effective search strategies, how to select the most appropriate provider, and how to enter complete information for providers that are not in the CAPI look-up list. Hard copy collection is another important component of the MEPS-HC interview. The training focused on reviewing the purpose of hard copy collection and the challenges that the interviewer may face with this part of the MEPS-HC interview process. It also focused on how interviewers can respond to respondent objections to this part of the interview.

In preparation for the videoconference training, we mailed the interviewers a hard copy home study guide, exercise packets, and exercise worksheet. Interviewers were asked to read all the contents of the home study packets and complete all the required tasks indicated in the packet. We emailed the interviewers their assigned WebEx session date and time. In addition, we scheduled test WebEx sessions with the interviewers to tackle any login or technical issues before their assigned training date and time. In the WebEx training, the trainer delivered the lecture, reviewed the exercises with the interviewers, and conducted hands-on practice as a group. To keep the interviewers engaged during the training, the trainer called on individual interviewers to answer practice questions or share their experiences.

After attending the videoconference training, interviewers were asked to complete a web survey to provide feedback about the training. The same set of debriefing questions used in the in-person training was used here. In addition, interviewers were asked about their prior experience with WebEx training and whether they experienced any technical issues during the training.

Self-administered Training by LMS

Another 79 interviewers were assigned to the self-administered training using LMS. This is the type of training we often use to refresh interviewers on their skill sets between any two rounds of data collection. The LMS training was carried out in mid-October 2019. It covered the same two modules as the WebEx training: Provider Search and Hard Copy Collection. We mailed the interviewers the hard copy home study guide, exercise packets, and exercise worksheets. Interviewers were asked to read all the contents of the home study packet and complete all of the required tasks indicated in the packet. In addition, they were asked to complete the practice exercises and view the entire LMS presentations on provider search concepts, the provider search exercise, reasons for hard copy collection, and understanding challenges associated with this task. The LMS manages and delivers assigned electronic training and documentation in a browser environment. The training was self-administered and self-paced. The LMS tracks and reports on completion of assigned training modules for individual interviewers.

After completing the online training, interviewers were asked to complete a web survey to provide feedback about the training. The same set of debriefing questions used in the in-person training was used here.

Outcome Measure

The outcome measure in the study is the provider match rate, computed as the number of matched providers over the number of eligible providers. Eligible providers are those with a valid ID in the MEPS Medical Provider Component National Provider Inventory (NPI) database that meet additional project-specified criteria. Interviewers use a directory developed from the NPI database to select eligible medical providers based on the respondent’s response. Matched providers are eligible providers that interviewers found and selected through the provider directory. If a match is not located, the provider is added without an NPI ID. A higher match rate indicates a higher level of data quality.
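As a small worked example of this definition, the sketch below computes the rate for one interviewer. The record layout (boolean `eligible` and `matched` fields) and the function name are hypothetical and chosen only for illustration; the actual MEPS data structures are not described here.

```python
def provider_match_rate(providers):
    """Matched providers divided by eligible providers.

    providers: iterable of dicts with boolean keys 'eligible' and 'matched'
               (a hypothetical layout, not the MEPS file format).
    Returns None when the interviewer has no eligible providers.
    """
    eligible = [p for p in providers if p["eligible"]]
    if not eligible:
        return None
    matched = sum(1 for p in eligible if p["matched"])
    return matched / len(eligible)


# Hypothetical provider records collected by one interviewer.
records = [
    {"eligible": True, "matched": True},
    {"eligible": True, "matched": True},
    {"eligible": True, "matched": False},  # added without an NPI ID
    {"eligible": True, "matched": True},
    {"eligible": False, "matched": False},  # ineligible, excluded from the rate
]
rate = provider_match_rate(records)
print(rate)
```

Here four providers are eligible and three of them were matched, so the ineligible record does not enter either the numerator or the denominator.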

Statistical Approach

The MEPS-HC collects data through an overlapping panel design. Respondents were either at their Round 2 or Round 4 interviews in Fall 2019. These respondents were considered cooperative, given that they had already participated in at least one round of data collection previously. In Rounds 2 and 4, there were not many opportunities for interviewers to improve their skill set on gaining cooperation. Thus, we focused on the provider match rate in the analysis, as it is the direct measure of how well the interviewer mastered the concepts and content delivered in the Provider Search module. The Provider Search module was provided to interviewers in all training modes. The level of interaction between the trainer and the interviewer varies due to the nature of each mode. However, the same content and exercises were used across the training modes. Like other large-scale nationally representative in-person surveys, MEPS-HC sampled households are not randomly assigned to interviewers due to cost constraints and interviewer availability. Instead, interviewers are usually assigned to work in a single geographic area, which confounds interviewer and area effects. Given that interviewers were nested within geographic areas, we first fitted a two-level random intercept unconditional model to explore how much variation in the outcome is associated with geographic areas. Only about 2% of the total variation in the outcome measure (the provider match rate) was accounted for by geographic areas, and it was not significant (

For the same interviewer, the provider match rate was computed both before the training and after the training (i.e., at the end of the field period). To account for the correlated errors, we used marginal linear models to examine how interviewers’ performance changes over time (West et al. 2015). In this study, we are not attempting to isolate interviewer effects. The focus is to examine the overall, marginal relationship between training modes and the provider match rate. The general specification of the marginal linear model is:
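In generic textbook notation for marginal linear models with repeated measures, a model of this kind can be written as follows. The symbols are assumptions introduced here for illustration and are not necessarily the ones used by the authors.

```latex
\mathbf{Y}_i = \mathbf{X}_i \boldsymbol{\beta} + \boldsymbol{\varepsilon}_i,
\qquad
\boldsymbol{\varepsilon}_i \sim N(\mathbf{0}, \mathbf{R}_i)
```

Under this reading, $\mathbf{Y}_i$ holds the two provider match rates for interviewer $i$ (before and after training), $\mathbf{X}_i$ is the design matrix for the fixed effects (for example training mode, time, and their interaction), $\boldsymbol{\beta}$ is the vector of fixed-effect coefficients, and $\mathbf{R}_i$ is the covariance matrix of the two residuals for the same interviewer. No random effects appear in the model; the within-interviewer correlation is captured entirely through $\mathbf{R}_i$.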

We first fitted a marginal linear model to examine the effects of training modes on provider match rates before and after training for all interviewers. Then we fitted separate marginal linear models to see if the training effects vary for interviewers in the three pre-identified performance groups. The models were estimated using the restricted (or residual or reduced) maximum likelihood (REML) estimation in SAS 9.4 PROC MIXED with unstructured covariance structure.

Results

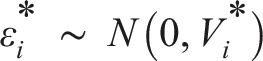

Parameter Estimates in the Marginal Linear Model That Predicts the Provider Match Rate for All Interviewers (n = 242).

Note: ***p < .0001.

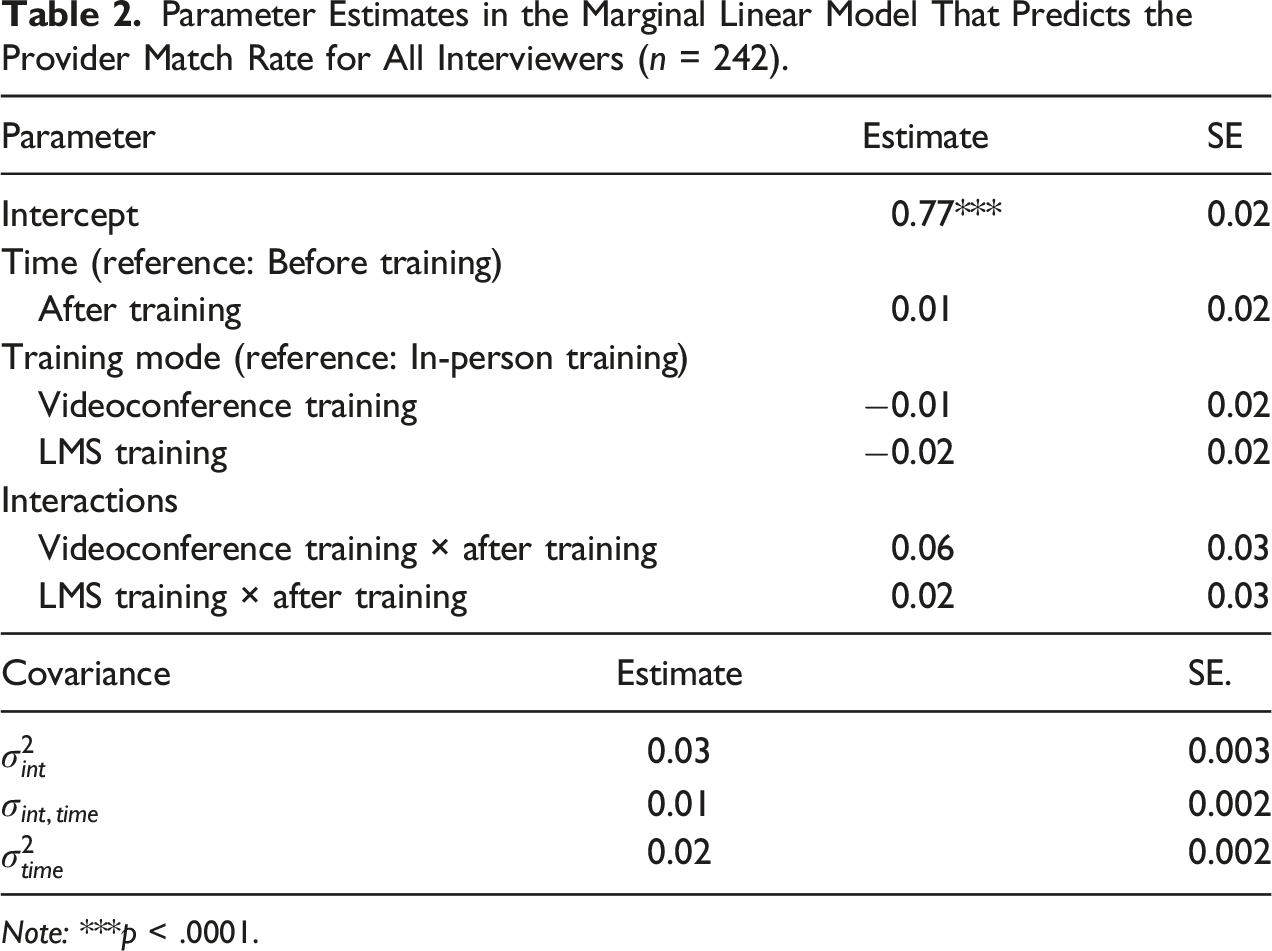

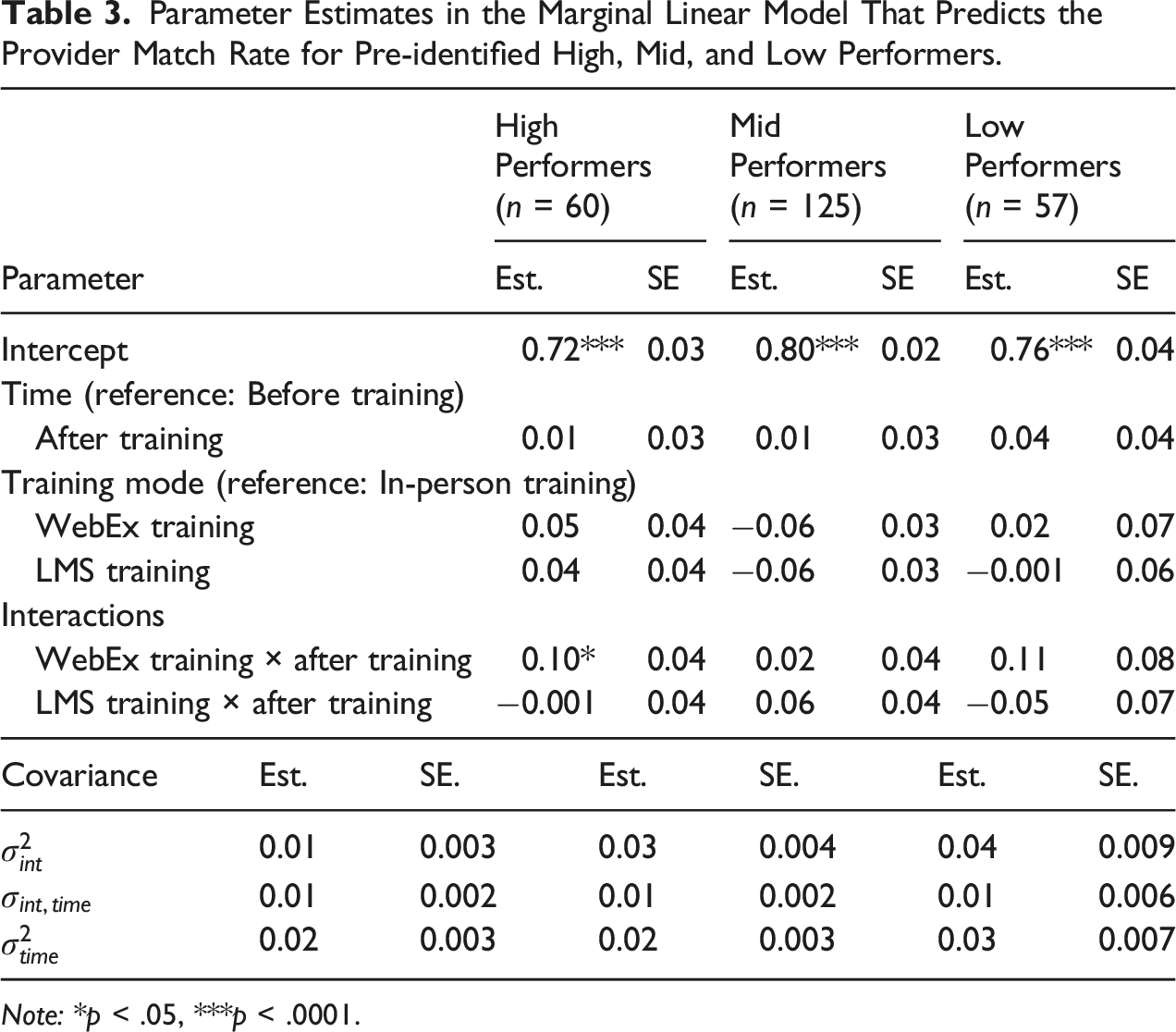

Parameter Estimates in the Marginal Linear Model That Predicts the Provider Match Rate for Pre-identified High, Mid, and Low Performers.

Note: *p < .05, ***p < .0001.

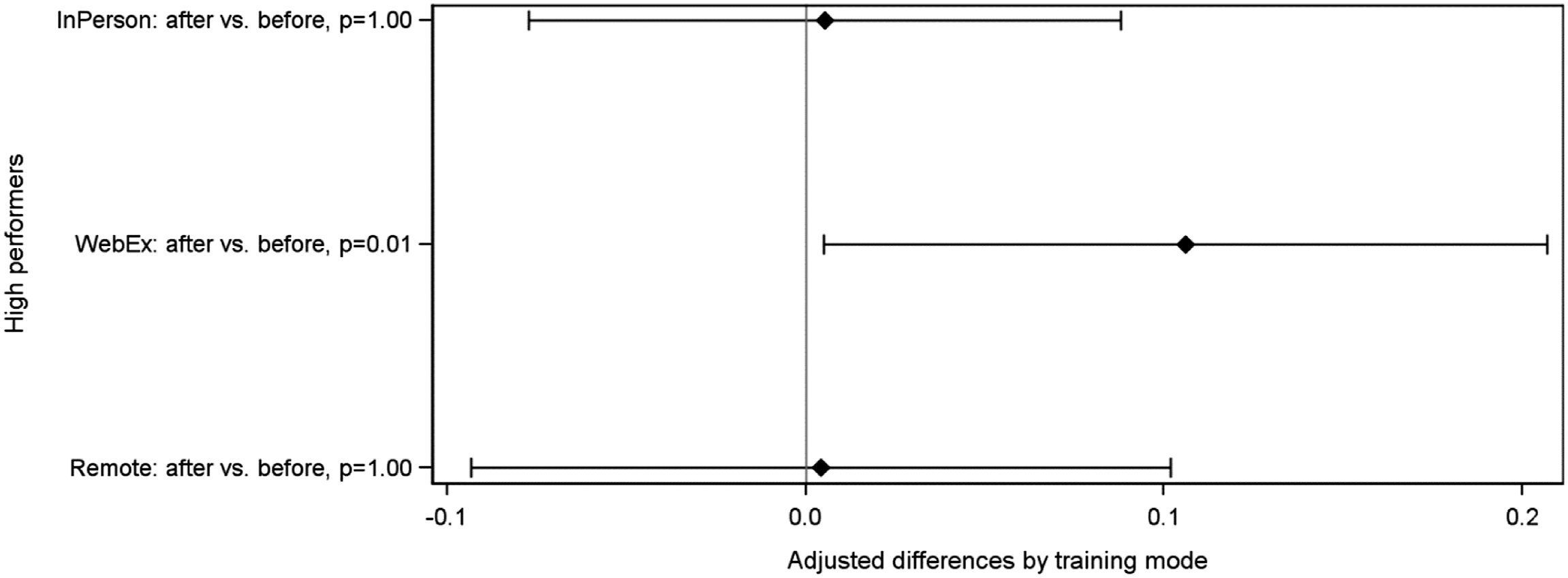

Figure 1 presents the adjusted differences in least squares means of the fixed effects for the interaction between training mode and time (after vs. before training) for the pre-identified high-performance group. The p-values and confidence intervals for the differences were adjusted for multiple comparisons using the step-down Bonferroni method (Holm 1979). As shown in Figure 1, for interviewers pre-identified as high performers, there was significant improvement in the provider match rate from before to after the training if they were trained by videoconference (

The LS Means and 95% confidence intervals for the adjusted differences before and after training by training mode for pre-identified high performers.

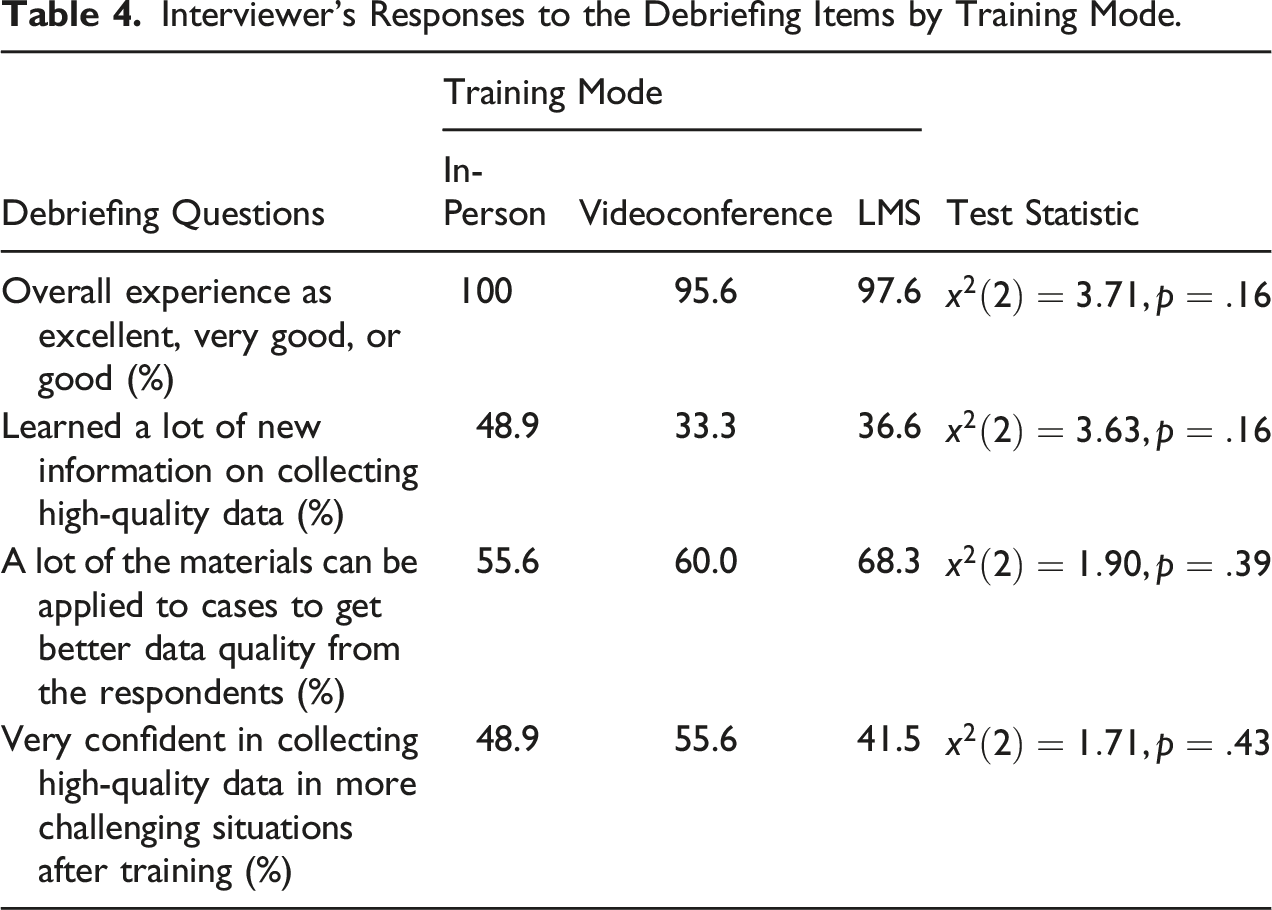
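For readers unfamiliar with the step-down Bonferroni (Holm) adjustment mentioned above, the sketch below shows how adjusted p-values are obtained. It is a plain-Python illustration and not the SAS procedure used in the analysis; the function name and the three raw p-values are hypothetical.

```python
def holm_adjust(p_values):
    """Return Holm step-down adjusted p-values, in the original order.

    The smallest p-value is multiplied by m, the next by m - 1, and so on;
    a running maximum keeps the adjusted values monotone, and each is capped at 1.
    """
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])
    adjusted = [0.0] * m
    running_max = 0.0
    for rank, idx in enumerate(order):
        candidate = min(1.0, (m - rank) * p_values[idx])
        running_max = max(running_max, candidate)
        adjusted[idx] = running_max
    return adjusted


# Hypothetical raw p-values for three before/after comparisons (one per mode).
raw = [0.010, 0.040, 0.030]
adjusted = holm_adjust(raw)
print([round(p, 3) for p in adjusted])
```

With these made-up inputs, only the first comparison remains below .05 after adjustment, which illustrates how a difference that looks significant in isolation can lose significance once multiple comparisons are taken into account.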

Interviewer Debriefing

Interviewer’s Responses to the Debriefing Items by Training Mode.

We asked two additional debriefing items of interviewers trained in WebEx. Among the 45 interviewers who were trained in WebEx and completed the debriefing questionnaire, 28 had never attended a WebEx session before the training. However, only four of these 28 interviewers had technical issues while attending the training. If the WebEx training is well planned, the lack of prior experience with the training mode does not seem to adversely affect the overall experience.

Summary and Discussion

We conducted a field experiment with a large-scale nationally representative survey to examine the effects of training modes on interviewer performance before and after training. We assigned interviewers to one of three training modes: in-person, videoconference, and LMS. The outcome measure used in the study was the provider match rate, which is closely related to the interviewer’s performance on collecting high-quality data. We measured the provider match rate before the training and after the training (i.e., at the end of the field period). We then examined the overall, marginal relationship between training modes and the provider match rate. We did not find significant improvement in the provider match rate from before to after training by mode at the p = .05 level. In a post-hoc analysis, we saw improvement in the provider match rate for high performers trained by videoconference. During the interviewer debriefing, interviewers trained in the three modes did not provide significantly different responses to questions about their training experiences.

The amount of interaction varies across the three training modes. If the amount of interaction an interviewer experienced during training is viewed as a continuum, interviewers in the in-person training had the greatest amount of interaction with the trainers and fellow interviewers, followed by those in the videoconference training and then the LMS training. In the in-person training, all interviewers shared the same physical location and had face-to-face interactions with one another during the training. In the videoconference training, however, interviewers interacted with the trainers and a smaller group of fellow interviewers virtually for a few hours. As compared to the in-person training, the amount of interaction was reduced in the videoconference training. In the LMS training, interviewers completed the training as self-administered modules with no direct interactions with others.

The in-person training covered 24 modules in two and a half days. One might expect that the in-person training would be most effective, as it was intensive and had the greatest amount of interaction between trainers and interviewers. However, we did not find significant improvement in the provider match rate from before to after training for interviewers trained in person. This is not completely unexpected, as the in-person training did not focus on any particular interviewer performance measure but rather on overall tactics for gaining cooperation and collecting high-quality data. It could be that the training improved interviewers’ overall understanding of the study and increased their motivation to work harder in general. Unlike the in-person training, both the videoconference and LMS trainings emphasized only two modules. One of the modules specifically targeted the provider match rate. We saw improvement for pre-identified high performers trained by videoconference. It appears that focused training that targets a few skills and includes some trainer–interviewer interaction is more effective than comprehensive training targeting all aspects of interviewer performance.

However, training interviewers in WebEx or via any videoconferencing platform requires extensive preparation. In this study, we offered six sessions, each with about 10 interviewers. This was based on our prior experience using WebEx as a tool to debrief interviewers. Keeping the session size small allows effective interaction between the trainer and interviewers. Each session also had a mixture of high, mid, and low performers to encourage peer learning. All this made scheduling a challenging task, as interviewers’ availability varied and last-minute changes were inevitable.

The study has some limitations. First, training modes and field period are confounded. The training for interviewers assigned to the three training modes occurred during different weeks of the field period. Previous research found that reluctant respondents tend to provide survey responses of low quality as compared to cooperative respondents (e.g., Curtin et al. 2000; Olson 2013). Early respondents are more likely to be easier cases. They are often being worked at the beginning of the field period. Late respondents are more likely to be harder cases, for example, interim refusals. They require additional efforts from the interviewers and are often being worked at a later stage of the field period. Although it is unclear if the provider match rate would be lower for harder cases as compared to easier cases, it is not unrealistic to assume that interviewers would have more difficulties collecting information about providers when interviewing reluctant respondents. Due to limited resources and staff availability, we were not able to conduct training in the three modes at the same time. For future research, we recommend replicating the current design by providing trainings to interviewers assigned to the different training modes at the same time to remove the confounding effects. Having all training conducted at the same time also allows one to examine the training effects over time.

Compared to interviewers trained by videoconference and LMS, interviewers trained in person had an additional 22 modules covered in the two-and-a-half-day training. It is well documented in the psychology literature that people have limited attentional capacity and therefore can neither process and respond to all the information relevant to a task nor completely ignore distracting information (e.g., Eriksen and Eriksen 1974; Pashler 1994). The training effects on a particular aspect of interviewer performance may be “diluted,” given all the information provided across all the sessions during the day. The training mode and training content are likely confounded given the additional modules covered by the in-person training.

For future research, we recommend improving the experimental design by providing the same modules in both the in-person and videoconference modes to see how effective the training modes are. Another factor worth exploring is the optimal number of modules to be offered in a videoconference training session. In addition, all interviewers in this study were considered experienced. They had at least three rounds of prior experience working on the survey. Comparing the effects of training modes on inexperienced and experienced interviewers would be an important extension of the current study. Nevertheless, our findings suggest that training interviewers via videoconferencing is a promising method that deserves further consideration.

Footnotes

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Disclaimer

The views expressed in this article are those of the authors, and no official endorsement by the U.S. Department of Health and Human Services or the Agency for Healthcare Research and Quality is intended or should be inferred.

Appendix

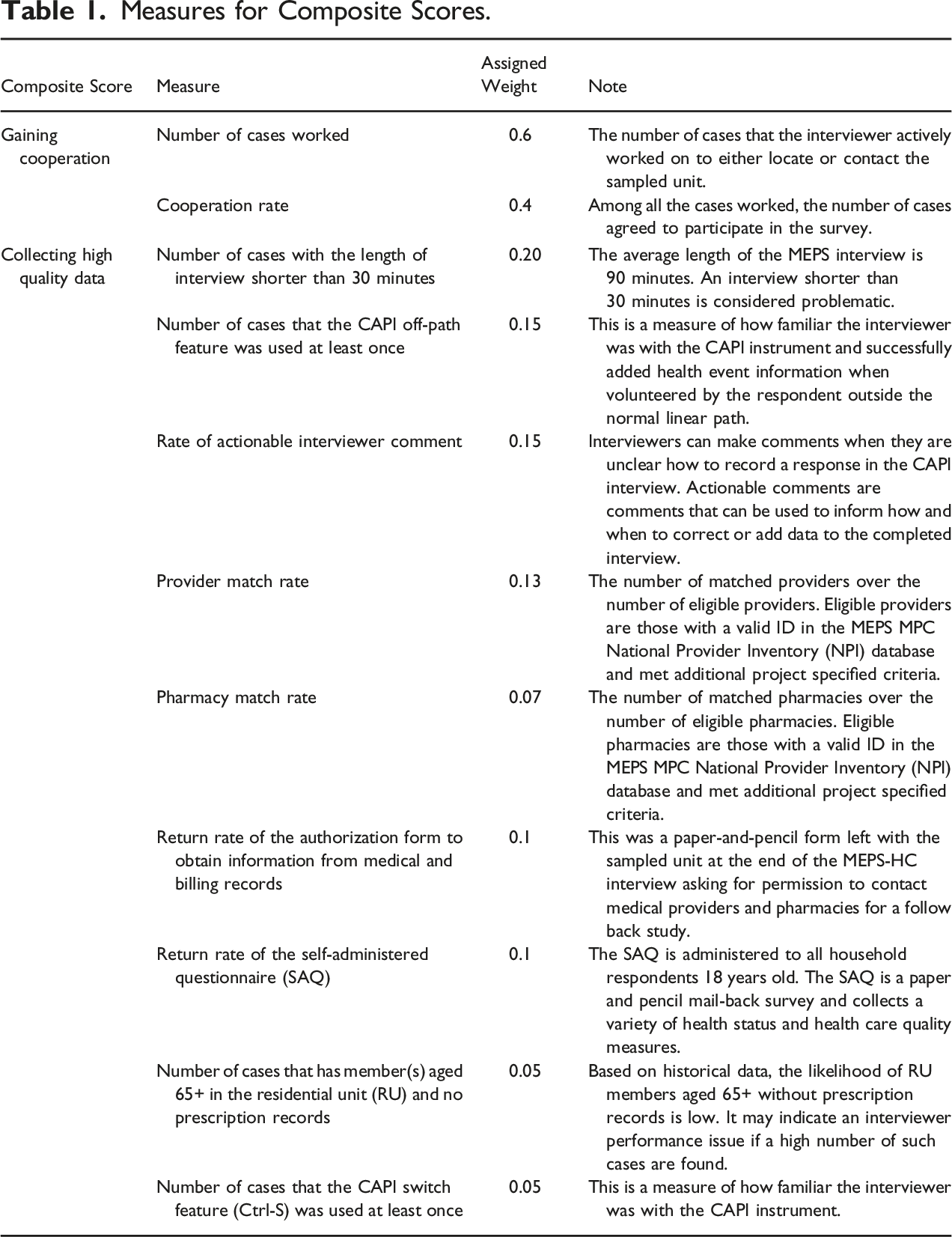

Measures for Composite Scores.

| Composite Score | Measure | Assigned Weight | Note |
|---|---|---|---|
| Gaining cooperation | Number of cases worked | 0.6 | The number of cases that the interviewer actively worked on to either locate or contact the sampled unit. |
| | Cooperation rate | 0.4 | Among all the cases worked, the number of cases that agreed to participate in the survey. |
| Collecting high quality data | Number of cases with the length of interview shorter than 30 minutes | 0.20 | The average length of the MEPS interview is 90 minutes. An interview shorter than 30 minutes is considered problematic. |
| | Number of cases in which the CAPI off-path feature was used at least once | 0.15 | This is a measure of how familiar the interviewer was with the CAPI instrument and whether the interviewer successfully added health event information when volunteered by the respondent outside the normal linear path. |
| | Rate of actionable interviewer comments | 0.15 | Interviewers can make comments when they are unclear how to record a response in the CAPI interview. Actionable comments are comments that can be used to inform how and when to correct or add data to the completed interview. |
| | Provider match rate | 0.13 | The number of matched providers over the number of eligible providers. Eligible providers are those with a valid ID in the MEPS MPC National Provider Inventory (NPI) database that met additional project-specified criteria. |
| | Pharmacy match rate | 0.07 | The number of matched pharmacies over the number of eligible pharmacies. Eligible pharmacies are those with a valid ID in the MEPS MPC National Provider Inventory (NPI) database that met additional project-specified criteria. |
| | Return rate of the authorization form to obtain information from medical and billing records | 0.1 | This was a paper-and-pencil form left with the sampled unit at the end of the MEPS-HC interview asking for permission to contact medical providers and pharmacies for a follow-back study. |
| | Return rate of the self-administered questionnaire (SAQ) | 0.1 | The SAQ is administered to all household respondents 18 years old. The SAQ is a paper-and-pencil mail-back survey and collects a variety of health status and health care quality measures. |
| | Number of cases that have member(s) aged 65+ in the residential unit (RU) and no prescription records | 0.05 | Based on historical data, the likelihood of RU members aged 65+ without prescription records is low. It may indicate an interviewer performance issue if a high number of such cases are found. |
| | Number of cases in which the CAPI switch feature (Ctrl-S) was used at least once | 0.05 | This is a measure of how familiar the interviewer was with the CAPI instrument. |