Abstract

Participatory research engages a transdisciplinary team of stakeholders in all aspects of the research process. Such engagement can lead to shifts in the research design, as well as in who is considered a participant. We detail our experiences of studying an evolving stakeholder network in the context of a 2.5-year transdisciplinary, participatory project. We show how participation leads to shifts in the network boundary over time and how a transdisciplinary effort was needed to retrospectively redefine the network boundary. By tacking back and forth between ethnographic insights, research aims, and modeling assumptions, the team eventually reached agreement on what determined network membership and how to code network members according to their timing and level of participation. Our account advances the literature on boundary and modeling approaches to shifting, evolving networks by demonstrating how participatory transdisciplinarity can be both a driver of, and a solution to, capturing the complexity of evolving networks.

Overview of the Context and Problem

Specifying a network boundary is challenging, yet certain research contexts can be more challenging than others. In this article, we describe our experiences in specifying a shifting network boundary in the context of a participatory, transdisciplinary research project. By network boundary specification, we mean the strategies employed for defining membership in a social network (e.g., Laumann et al. 1989). By participatory transdisciplinary research, we mean research that engages heterogeneous stakeholders, across disciplines and beyond academia, in an ongoing process to discuss, research, and problem-solve issues of common interest, in our case climate change-related concerns (Anggraeni et al. 2019; Daniels and Walker 2001; Reed et al. 2018; Vargas-Nguyen et al. 2019).

The project we describe here is the Integrated Coastal Resiliency Assessment (ICRA) project, which took place from Fall 2015 to Spring 2018 on the Deal Island Peninsula (DIP), located in the Chesapeake Bay in Maryland. Here, a transdisciplinary research team composed of the present authors (ethnographers and geographers) and natural scientists engaged with stakeholders from both government and the local community to identify and assess specific areas on the DIP according to their vulnerabilities, resiliencies, and potential adaptation strategies to climate change (Johnson et al. 2017; Paolisso et al. 2019).

A main aim of this project was building ties of respect and understanding among ICRA participants; thus we conducted a longitudinal network analysis aimed at measuring stakeholders’ evolving networks over time, along with their perceptions of DIP social–ecological changes. Toward that end, three rounds of online survey data were gathered, measuring social ties among ICRA participants and their individual perceptions. However, the participatory nature of the ICRA project presented challenges in specifying the network boundary, as new topics emerging from group dialogs prompted the inclusion of additional stakeholders. This expansion in the network boundary, combined with issues of nonresponse and varying levels of participation among stakeholders, collectively challenged the research team in defining a stable network boundary that could be used for modeling purposes.

To meet these challenges, we harnessed the skills and insights from our transdisciplinary team to rethink the meaning of “participant” in the ICRA network. Modeling assumptions and options were also brought into the discussion, which, in turn, helped further clarify the network boundary, as well as apply appropriate coding schemes for modeling purposes. As such, our efforts translated into a more holistic understanding of the complex, shifting nature of this network, and brought modeling assumptions alongside ethnographic insights to clarify both the nature of this network as a whole and the roles of individual network members. As of this writing, we are unaware of an article that describes how the complex nature of a shifting, participatory network can be captured by the interplay of disciplinary approaches the way we describe here. Before describing our experiences, however, we offer a brief overview of past research pertaining to boundary specification.

Issues of Boundary Specification

Laumann and colleagues (1989) offer an overarching distinction between two main strategies for boundary specification: a realist approach, wherein actors declare their own membership in a network, and a nominalist approach, wherein research objectives guide boundary specifications. Building on this distinction, the authors then outline four definitional foci, each of which can be adopted by either a realist or a nominalist approach. A positional–attribute focus defines a boundary by the presence or absence of some attribute; a reputational–attribute focus relies on informants to identify network members based on predetermined criteria; a social relations focus identifies network members based on the presence or absence of certain social ties; and an event-driven focus involves identifying activities, policies, or events in which actors may or may not participate.

Over the years, however, researchers have highlighted various challenges in applying these approaches. For example, different specification approaches applied a posteriori to the same network data can lead to significantly different results for the same network measure (e.g., Valente et al. 2013), and/or result in asking substantially different research questions (e.g., De Stefano et al. 2011). The tradeoffs of boundary approaches applied a priori to data-gathering efforts have also been noted (Nowell et al. 2018; Prell et al. 2011). For example, positional–attribute approaches are more conducive to capturing isolates (Nowell et al. 2018), or actors with specific characteristics (Pallotti et al. 2015; Sewell 2017) that are especially well aligned with a researcher’s question (McAllister et al. 2008), whereas relational approaches tend to yield larger, broader networks beyond the narrow interests of the researcher (Nowell et al. 2018; Sandström 2011). Other studies highlight the use of in-depth, qualitative interviews with key stakeholders as an alternative to using a positional approach (e.g., Halinen and Törnroos 2005), or as a supplement to the initial criteria established by the researcher (e.g., Halinen and Törnroos 2005; Smith 2014).

Yet some scholars argue that the challenges of defining network boundaries arise less from the shortcomings of one approach or another than from the inherent complexity of networks themselves (Cooper and Shumate 2012; Halinen and Törnroos 2005; Heath et al. 2009; McAllister et al. 2008). The embedded, multilevel context of networks (Hollway and Koskinen 2016) creates a complex arrangement of actors, structures, and events that change dynamically over time (Cooper and Shumate 2012; Halinen and Törnroos 2005; Heath et al. 2009). Such complexity implies that network boundaries are influenced by conditions external to the network (or research study) itself, such as communication infrastructures (Cooper and Shumate 2012), business contracts (Halinen and Törnroos 2005), or international treaties (Hollway and Koskinen 2016; Prell and Feng 2016). On the one hand, advances in network analysis are being developed to handle some of this complexity, for example, imputation and estimation procedures for missing data (Koskinen et al. 2013; Kossinets 2006) and procedures for composition change across waves (Ripley et al. 2018). On the other hand, some scholars argue that the complexity is too vast for any researcher to capture validly or reliably, and suggest that network scholars turn away from traditional network analysis altogether and approach networks from a more qualitative angle (e.g., Cooper and Shumate 2012; Heath et al. 2009).

In our case, the challenges of specifying the ICRA boundary certainly arose from the complex nature of the ICRA participatory project, yet our solution(s) lay neither in adopting an alternative method during the data-gathering process nor in applying a statistical technique to handle some of the messiness of the resulting data. Instead, as will be shown, we drew on the insights of the whole team to develop a deeper understanding of this particular evolving network and of how it spoke to, and was shaped by, participatory research. We also show how modeling assumptions and needs, combined with ethnographic insights about individuals’ motives, brought greater clarity to the notion of a participant and to the appropriate ways of handling the missing data that arose.

Expanding the ICRA Network

Our first attempts to define the ICRA boundary began with key stakeholders who had participated in an earlier DIP project held in 2012–2015 (Paolisso et al. 2019). Here, 19 key stakeholders met in November 2015 and offered the research team advice on how to proceed with the project and who to include in the list of stakeholders. Based on these suggestions, an initial roster of 55 names was created, most of whom had been involved in earlier DIP activities. This initial list of stakeholders arose, then, from a realist approach, in that many of the stakeholders already self-identified as being members of the larger DIP Project, yet the list also included new names based on key informants, thus also reflecting a nominalist, reputational–attribute approach to boundary specification.

In late January 2016, a kick-off meeting was held, where participants identified four geographical areas on the DIP for conducting collaborative assessments. Shortly thereafter, the first round of online survey data was gathered. Here, 54 of the 55 listed stakeholders responded to this online survey. A second kick-off meeting took place in early February 2016, where stakeholders once again nominated who else to include, and encouraged local stakeholders present to reach out to neighbors and other locals who they felt would be interested in/relevant to any or all of the focus areas.

By collectively identifying four areas for collaborative assessment, the criteria for identifying who was relevant to the ICRA acquired more precision, and this, in turn, had impacts on the network boundary. The ICRA team was now actively looking for stakeholders who worked, lived, and/or had expert insights into any of the four geographical areas. These four areas thus built on, and further specified, the nominalist, reputational–attribute approach used at the beginning of the project.

Shortly after these two kick-off meetings, the ICRA team actively advertised the project to the wider DIP community via a monthly newsletter, social media, and word of mouth. As news of the ICRA project spread, more individuals came forward and asked to join the ICRA project. This slow expansion of the ICRA network continued over the course of one year (March 2016–March 2017), and during this time, the team held a number of collaborative field assessments for each of the four DIP areas. Thus, this period, in which new stakeholders asked to join the ICRA network, reflects a realist, attribute approach in that newcomers self-identified as members of the ICRA network.

The expansion of the ICRA network resulted in 23 new names being added to the original roster, making a total of 78 names listed on the roster for the second wave of data gathering. All 78 stakeholders were approached in early March 2017 and were asked to fill in the second online survey. Of these 78 stakeholders, 54 completed this second survey. Here, 12 of these respondents were newcomers to the project, and the remaining 42 respondents were stakeholders who had filled in the first survey.

In the summer and fall of 2017, three additional workshops occurred. The first, in early June, served to update the stakeholders on ICRA activities. In early October 2017, two half-day workshops were held to discuss potential adaptation strategies to address priority needs and concerns. During the second (and final) workshop held in early October 2017, it was announced that the Maryland State Government would be funding a $1 million engineered shoreline reconstruction project to address stakeholder concerns about extensive shoreline erosion on Deal Island.

In early January 2018, we gathered our final round of online survey data. Here, five new names were added to the roster, and all five were newcomers who had heard about the ICRA via a personal contact and/or the newsletter. These actors subsequently self-identified as being part of the ICRA network, and, thus, this final expansion to the network boundary reflected a realist, attribute approach. A total of 42 respondents completed this final survey wave: five were newcomers completing the survey for the first time; six were respondents who had also completed survey 2; and 31 were respondents who had completed all three rounds of online surveys.

Reconsidering the Network Boundary

After completing all three rounds of data gathering, the research team convened to reflect on the fluctuations in the network boundary. For example, the ethnographers on the team shared their observations regarding several listed individuals whose participation decreased significantly over time, where participation referred to attendance at meetings, engagement in the ICRA listserv, and/or response to interview or survey requests. An ordinal score was subsequently developed to reflect this overall level of participation, with each stakeholder assigned a value ranging from 1 (very low) to 3 (very high). The team then noted that some stakeholders who ranked as relatively high participants had nonetheless failed to fill in one or more of the online surveys. Finally, the team noted that, because the network expanded between the first and second rounds of the online survey, no network or perceptions data had been gathered during the first online survey for the 23 newcomers.

These discussions on the network expansion, levels of participation, and nonresponse led to a number of decisions regarding the network boundary. First, the team decided that stakeholders with an overall low participation score should be removed from the list, as this lack of participation meant that these stakeholders had not really engaged with the ICRA project. A total of 18 names were thus removed from the final list based on this criterion. Second, the team decided that those actors who joined the ICRA late but engaged with the project thereafter should be included in the final dataset, even though data were missing for them in the first wave. Finally, the team also decided to keep all names of stakeholders who failed to respond to one or more of the online surveys (even after numerous prompts by the research team), if these stakeholders had been moderately to highly active in other ICRA activities.
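The three decisions above reduce to a single membership rule: what matters is the participation score, not the timing of entry or the survey response record. The following is a minimal sketch of that rule in Python, not the team's actual procedure. The `Stakeholder` record, its field names, the example roster, and the reading of "low" as a score of 1 are all assumptions made for illustration.

```python
from dataclasses import dataclass, field

# Ordinal participation score described in the text: 1 (very low) to 3 (very high).
LOW, MODERATE, HIGH = 1, 2, 3


@dataclass
class Stakeholder:
    name: str            # hypothetical identifier
    participation: int   # ordinal score, 1 to 3
    joined_wave: int     # wave at which the name entered the roster
    responded: set = field(default_factory=set)  # waves with a completed survey


def in_final_boundary(s: Stakeholder) -> bool:
    """Keep moderate and high participants, regardless of when they
    joined or whether they answered every survey (assumed threshold)."""
    return s.participation >= MODERATE


# Hypothetical roster covering the cases discussed in the text.
roster = [
    Stakeholder("A", HIGH, 1, {1, 2, 3}),   # original, full respondent
    Stakeholder("B", LOW, 1, {1}),          # original, stopped participating
    Stakeholder("C", MODERATE, 2, {2, 3}),  # late joiner, engaged thereafter
    Stakeholder("D", HIGH, 1, {1, 2}),      # active, but no Wave 3 survey
]

final_list = [s.name for s in roster if in_final_boundary(s)]
print(final_list)
```

In this sketch, the late joiner and the active nonrespondent both stay on the list, and only the low-participation stakeholder is dropped.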

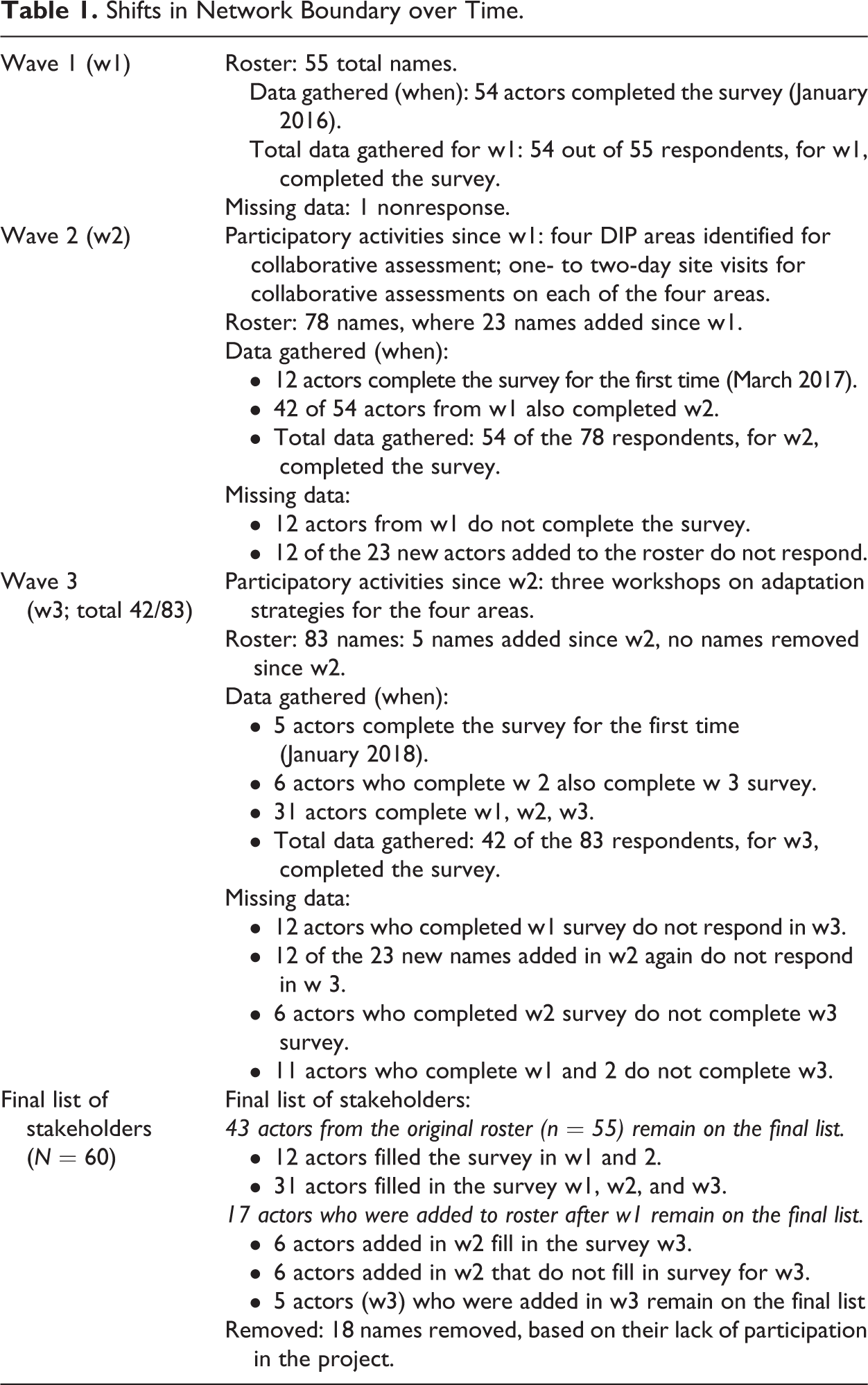

These shifts to the network boundary, and the final list of stakeholders, are summarized below in Table 1.

Table 1. Shifts in Network Boundary over Time.

Table 1 shows that 43 of the original 54 names are found on the final list; of the 28 new names added to the roster over the course of Waves 2 (n = 23) and 3 (n = 5), only 17 of these newcomers qualified as participants in the final list. Further, Table 1 shows a fair amount of missing data across the three waves of data: Wave 1 lacks data for all 17 newcomers, and Wave 3 lacks data for 18 actors.
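The counts in this paragraph can be checked with simple arithmetic. The sketch below uses only figures reported in the text. The variable names are illustrative, and the Wave 3 calculation assumes that all 42 Wave 3 respondents are on the final list, which the text implies but does not state outright.

```python
# Counts reported in the text.
original_retained = 43            # original names still on the final list
newcomers_added = {2: 23, 3: 5}   # names added to the roster at Waves 2 and 3
newcomers_retained = 17           # newcomers who qualified as participants
wave3_respondents = 42            # completed surveys at Wave 3

# Total newcomers added across Waves 2 and 3.
newcomers_total = sum(newcomers_added.values())

# Size of the final list: retained originals plus retained newcomers.
final_size = original_retained + newcomers_retained

# Wave 1 has no data for any retained newcomer.
missing_wave1 = newcomers_retained

# Wave 3 nonrespondents, assuming every respondent is on the final list.
missing_wave3 = final_size - wave3_respondents

print(newcomers_total, final_size, missing_wave1, missing_wave3)
```

Under that assumption, the final list size and the Wave 3 nonrespondent count agree with the figures given in the paragraph.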

Modeling Assumptions and Boundary Considerations

Although clarifying the network boundary according to who was considered a participant was important for further network analyses, the modelers on the team were also concerned about the amount of missing data and whether such missing data would violate model assumptions (Snijders et al. 2010:45). As the original intent in gathering longitudinal network data was to quantitatively assess the coevolution of stakeholders’ ties with perceptions of social–ecological change, the team adopted Stochastic Actor-Oriented Models (SAOMs), a modeling suite specifically designed for testing hypotheses about coevolution in longitudinal network data (Snijders et al. 2010). SAOMs assume that network data have some stability over time (Snijders et al. 2010:45) and struggle to provide good estimates if missing data exceed 20% on any variable (Ripley et al. 2018:32). However, the SAOM estimation process can handle a certain amount of missing data via imputation methods (Ripley et al. 2018:32) and can accommodate shifts in network boundaries, provided that the dataset is coded according to SAOM guidelines (Ripley et al. 2018:30–34).

These assumptions and modeling specifications were shared with the larger team and, combined with the earlier insights from the ethnographers, led the team to specify codes for the data that further clarified the shifting boundary, not only for modeling purposes but also for the team’s overall understanding of the nature of this participatory network. For example, the missing data on 18 actors in Wave 3 were all reported to have come from stakeholders who, although active in ICRA activities, nonetheless failed to respond to team members’ numerous prompts to complete the online survey. As such, the team decided that these missing data should be handled by SAOM imputation methods and coded according to the manual’s specifications (Ripley et al. 2018:32).

With regard to the 17 newcomers, however, it was not readily clear how to handle their (lack of) data from Wave 1. As team members noted, most (if not all) of the newcomers held social ties to others involved in the ICRA prior to joining the roster in Wave 2. Thus, perhaps these actors’ data should also be coded as missing data, in the same way as the 18 nonrespondents from Wave 3. However, after reflecting as a group, we realized that modeling these stakeholders’ ties, only after they were identified as relevant to the ICRA network, was a truer reflection of the participatory process itself, and, hence, a more valid way of capturing this shifting network boundary. That is, coding the data to reflect the ICRA network boundary as shifting over time, rather than simply having more or less missing data, was deemed a more valid reflection of the nature of this participatory network. Given this reasoning, the decision was made to code these 17 actors’ ties as “structural zeros” for Wave 1, a specification in SAOM that treats, as certain, all ties to and from these actors as nonexistent for Wave 1, and thus enables SAOM to begin estimating probabilities for ties forming only for the remaining waves in which survey data were present (Ripley et al. 2018:30–31).

For further details on this coding scheme, please see the supplemental material.
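The two coding decisions described in this section can be sketched as follows. This is an illustration in Python rather than the team's actual script, and the actors and nominations are invented. It assumes the convention, as we understand it from the RSiena software, that a structural zero is entered as the value 10 and that missing ties are entered as NA (represented here by `None`). The function name `code_wave` and its arguments are hypothetical.

```python
STRUCTURAL_ZERO = 10  # assumed software code for a tie fixed as absent
MISSING = None        # stands in for NA (unknown tie, left to imputation)


def code_wave(actors, joined_wave, observed, wave):
    """Build one wave's adjacency matrix as a list of rows.

    actors:      ordered list of actor names on the final list
    joined_wave: dict mapping actor -> wave at which the actor joined
    observed:    dict mapping each survey respondent -> set of nominated actors
    wave:        the wave being coded
    """
    matrix = []
    for sender in actors:
        row = []
        for receiver in actors:
            if sender == receiver:
                row.append(0)  # no self-ties
            elif joined_wave[sender] > wave or joined_wave[receiver] > wave:
                # Either actor was not yet part of the network in this wave.
                row.append(STRUCTURAL_ZERO)
            elif sender not in observed:
                # Active participant who did not return the survey.
                row.append(MISSING)
            else:
                row.append(1 if receiver in observed[sender] else 0)
        matrix.append(row)
    return matrix


# Invented example: C joins at Wave 2; B skips the Wave 2 survey.
actors = ["A", "B", "C"]
joined_wave = {"A": 1, "B": 1, "C": 2}

wave1 = code_wave(actors, joined_wave, {"A": {"B"}, "B": set()}, wave=1)
wave2 = code_wave(actors, joined_wave, {"A": {"B", "C"}, "C": {"A"}}, wave=2)

for row in wave1:
    print(row)
for row in wave2:
    print(row)
```

Keeping the two codes separate preserves the qualitative distinction drawn in the text: a late joiner's Wave 1 ties are treated as known to be absent, whereas an active nonrespondent's outgoing ties are treated as unknown.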

Reflections and Lessons Learned

The ICRA stakeholder network boundary underwent processes of expansion and shrinkage over time. The main driver for expansion was the identification of the four geographical areas, which prompted stakeholders to nominate themselves or others to the ICRA network. Yet this collaborative decision to focus on certain areas (and not others) ultimately led to a lack of data on these newcomers’ ties for Wave 1. In addition, 18 stakeholders stopped participating in the ICRA project and were ultimately removed from the network. Although in some cases the motives for leaving the project were clear (e.g., moving away or getting a new job), the team was unable to gather data on why many of these stakeholders stopped participating, and we could only assume that a general lack of interest led these stakeholders to drop out. Understanding why stakeholders leave a given participatory network is thus one area for future research.

Of the stakeholder participants who remained active for the duration of the project, some failed to respond to the survey, and such nonresponse data were qualitatively different from nonresponse data arising from a lack of ICRA participation, or from late joiners entering the ICRA project after earlier rounds of data collection. The distinctions between these different kinds of missing data, combined with an understanding of the SAOM data requirements and modeling assumptions, triggered a group reflection on how to code these data to capture these qualitative distinctions.

Taken together, our story illustrates not only the complexity that participatory projects offer network analysts, but also an interesting “tacking back and forth” between ethnographic and modeling insights, which ultimately led to a cross-team clarification of the concepts of participant and participatory network. We do not believe our particular way of delineating the shifting network boundary could have arisen without this unique interplay among team members, each with their own insights into the reasons for this shifting network boundary. Given that participatory projects will likely increase in the future (Vargas-Nguyen et al. 2019), we welcome other scholars’ reflections on ways to model such complexity over time, harnessing the participatory spirit of the project team to problem-solve some of the challenges posed by the participatory process. More critical, reflective pieces on the unique challenges and insights of studying evolving participatory networks and shifting network boundaries are needed.

Footnotes

Acknowledgments

We would like to acknowledge and thank the DIP Project stakeholders, and our funders, the Maryland Sea Grant, Award No. SA75281450N. Helpful comments were given on an earlier version of this article, presented at the Mitchell Centre for Social Network Analysis, at Manchester University. We also thank the reviewers for their helpful comments in shaping the final version of this article.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.