Abstract

This article is concerned with the extent to which the propensity to participate in a web face-to-face sequential mixed-mode survey is influenced by the ability to communicate with sample members by e-mail in addition to mail. Researchers may be able to collect e-mail addresses for sample members and to use them subsequently to send survey invitations and reminders. However, there is little evidence regarding the value of doing so. This makes it difficult to decide what efforts should be made to collect such information and how to subsequently use it efficiently. Using evidence from a randomized experiment within a large mixed-mode national survey, we find that using a respondent-supplied e-mail address to send additional survey invites and reminders does not affect survey response rate but is associated with an increased proportion of responses by web rather than face-to-face and, hence, lower survey costs.

Introduction

This article concerns sequential mixed-mode surveys in which the first phase (mode) is web and the second is face-to-face interviewing, and in which the first communication with sample members is by mail and includes an invitation to participate online. This is a common type of design (Lynn 2013; Millar and Dillman 2011) and therefore a context of interest to many researchers. Our focus is the use of e-mail for additional communications (invitation or reminders) during the first (web) phase of fieldwork, not as a substitute for mail communications. We investigate whether these additional communications affect propensity to participate in the survey (participation propensity) and propensity to respond online rather than face-to-face (mode of participation). It is important to establish whether these additional communications have beneficial effects, because they are not cost free.

There is often a cost associated with initial collection of e-mail addresses, as these are typically not available on the sampling frame. In longitudinal surveys, researchers can ask sample members to provide an e-mail address to contact them at subsequent waves. A similar opportunity may also arise in some types of one-time web surveys such as visitor surveys, where visitors may be handed a card or letter asking them to go online and complete a survey. At the same time, they could be asked to supply an e-mail address. However, the request may be seen as intrusive and the information as sensitive and private (though Bandilla et al. [2014] found no effect of asking for an e-mail address on participation in a follow-up survey). Furthermore, resources are required to capture, clean, and manage the collected e-mail addresses. Researchers should therefore be assured of the value of asking for an e-mail address before doing so.

There are at least two potential advantages of additional communications by e-mail. First, they may increase participation propensity. Second, they may reduce data collection costs if a higher proportion of respondents participate online rather than face to face. The mechanisms that could bring about each of these two effects are discussed in the next sections. Aside from response propensity and cost, speed of response can also be an advantage of e-mail communication (Mehta and Sivadas 1995; Schaefer and Dillman 1998), but this consideration only applies to single-mode web surveys in which all sample members can be contacted by e-mail. To our knowledge, no study has investigated the effect of additional e-mail communications on response propensity in either a mixed-mode or longitudinal context. This article therefore addresses important methodological questions that have yet to be tackled. Furthermore, the use of a nationally representative sample and a semiexperimental design provides a strong basis for inference and a context from which a degree of generalizability can be assumed.

Background

E-mail Communications and Participation Propensity

There are at least three mechanisms through which additional e-mail communications could increase participation propensity compared to mail alone. First, e-mails could reduce the risk of failing to make contact with the sample member. Noncontact is a major component of survey nonresponse (Groves and Couper 1998), and the probability of it occurring depends on the number, nature, and timing of contact attempts (Lynn 2008). E-mail communications are different from mail communications in a number of ways that are relevant to contact propensity. They tend to arrive in a personal inbox, checked only by the intended recipient, whereas mail is delivered to a letter box that may be shared by other residents. Consequently, mail can be removed by another person before the intended recipient sees it, whereas e-mail generally cannot (with the exception of spam filters). Also, many people check their e-mail inbox several times a day and may do so from multiple locations, whereas checking a mailbox requires physical presence and may not be done often. For these reasons, additional e-mail communications may result in the communication being seen by some sample members who would not otherwise have seen it.

The second mechanism by which additional e-mail communications could increase response propensity is by reducing the burden of participation. Respondent burden (Bradburn et al. 1978; Sharp and Frankel 1983) encompasses several features of the task of survey participation, including the time it takes to perform survey tasks and the associated disruption to other activities. Greater burden can reduce the probability that a sample member will initiate, or continue with, survey tasks. Sending a survey invitation by e-mail enables the recipient to participate by simply clicking on a link while already online, whereas if the invitation is received by regular mail, the recipient must retain the letter until it is convenient to go online and must then type in a URL and enter a passcode. The latter takes more time and requires more effort. The additional burden is somewhat analogous to that involved in surveys that attempt to switch respondents from telephone interviewing to web, a design feature that has been shown to reduce participation propensity (Kreuter et al. 2008).

The third mechanism by which additional e-mail communications could increase response propensity is not specific to the mode (e-mail) of the communications. The extra communications could simply serve as reminders that prompt some sample members to participate.

Millar and Dillman (2011) found that adding two e-mail communications in a single-mode cross-sectional web survey that otherwise involved three mail communications significantly increased response rate, though their study was of undergraduate students, all of whom had e-mail addresses and were assumed to be web users. Several other studies have examined aspects of the use of e-mail communications in the single-mode web context, but none of these studies assessed the effect of e-mail communications additional to mail communications.

The effect of substituting e-mail communications for mail communications was tested by Porter and Whitcombe (2007), Millar and Dillman (2011), and Kaplowitz et al. (2012), all of whom found no effect. Bandilla et al. (2012) found higher response rates with mail rather than e-mail invitations (in the absence of a mailed prenotification letter). Kaplowitz et al. (2004) compared different combinations of e-mail and mail communications, but all treatments included an e-mail communication, so their study cannot be used to compare designs with and without e-mail communications. Bosnjak et al. (2008) found higher response rates with e-mail invitations rather than short message service invitations. A meta-analysis carried out by Manfreda et al. (2008) found that web surveys achieved a higher response rate when the invitation was delivered by e-mail rather than mail, but they, too, did not assess the marginal effect of e-mail communications additional to mail contacts. Muñoz-Leiva et al. (2009) found that additional e-mail reminders could increase response rates when previous communications had also been by e-mail, but did not compare treatments that involved mail communications. Bosnjak et al. (2008) compared mode of prenotification but not of invitation or reminders.

In single-mode self-completion surveys, additional communications tend to increase response rates regardless of whether the communication is a prenotification or a reminder and for both web and paper surveys (Cook et al. 2000; Dillman 2000; Dillman et al. 1995).

E-mail Communications and Mode of Participation

In a sequential mixed-mode design, where sample members are first invited to complete the survey online and subsequently approached for interview (either face to face or by telephone) only if the web survey has not been completed, additional e-mail communications could increase the propensity to complete the survey online, even if overall participation propensity (as discussed in the previous section) is not affected. In other words, conditional on participation, respondents may be more likely to participate in web mode rather than interviewer mode. The mechanisms through which this shift in the distribution of mode of participation could occur are essentially the same ones outlined above: The e-mail invitation may increase the probability of the sample member being aware of the invitation (contact) or may make online participation easier (burden). Whether the outcome of these mechanisms increases the overall participation propensity or the proportion of responses made online depends on the extent to which sample members who only participate online as a result of the e-mail communications would otherwise not have participated at all (overall participation propensity) or would have participated by interviewer mode in the second phase of the fieldwork (mode of participation).

Moderating Factors

Any effects of e-mail communications may be moderated by other factors. Three types of factors are of interest: sociodemographic characteristics, reactions to earlier requests to provide an e-mail address, and survey characteristics. A wide range of sociodemographic characteristics has been found to moderate the effectiveness of survey design features intended to increase participation propensity. In the longitudinal survey context, reviews of such effects can be found in Watson and Wooden (2009) and Uhrig (2008). Nonresponse theory does not posit that these characteristics have a direct causal effect, but rather that they act as markers for variations in at-home patterns, time availability, psychological dispositions, and relevant attitudes (Groves and Couper 1998; Groves et al. 2000). Knowledge of the moderating effects of sociodemographic characteristics can be useful to researchers implementing longitudinal surveys, as design features can then be targeted at subgroups for whom they are expected to be effective (Lynn 2014).

Two aspects of sample members’ reactions to requests for e-mail addresses can be of operational interest. The first is how recently an e-mail address was provided. Any moderating effect of this on the effect of e-mail communications may have implications for how frequently researchers should ask sample members to provide an (updated) e-mail address and/or for whether e-mail communications should be restricted to sample members who provided/confirmed an e-mail address relatively recently. The second aspect is the reaction of other household members to the request to provide an e-mail address. (This applies only to surveys that collect data from multiple members of a household.) Other household members may influence a sample member’s survey participation decision, and this may be particularly likely in the case of spouses and partners, whose relationship will tend to be the closest.

A survey characteristic pertinent to design decisions for longitudinal surveys is time spent in the sample (Kalton and Citro 1995). Some features are more effective for recently joined sample members, while others work better among long-term sample members (see, e.g., Couper and Ofstedal 2009; Lynn 2016). The effect of additional e-mail communications, too, could be moderated by time in sample.

Research Questions

Based on the above discussion, we hypothesize that additional e-mail communications will increase participation propensity and the proportion of responses submitted online. We do not have specific hypotheses regarding the nature of moderators, but we wish to identify the extent and nature of any moderating effects as these may have implications for survey design.

Our research questions are:

1. Do additional e-mail communications affect overall participation propensity?
2. Are effects on participation moderated by how recently the sample member provided an e-mail address or by whether their partner has provided an e-mail address?
3. Are any of the effects in research question 1 or 2 moderated by characteristics of sample members or by time in sample?
4. Do additional e-mail communications affect mode of participation (propensity to participate in web mode rather than interviewer mode)?
5. Are effects on mode of participation moderated by how recently the sample member provided an e-mail address or by whether their partner provided an e-mail address?
6. Are any effects in research question 4 or 5 moderated by characteristics of sample members or by time in sample?

Study Design

We use data from wave 5 of the Understanding Society Innovation Panel, a household panel designed specifically for methodological development and testing (Uhrig 2011). The Innovation Panel is based on a stratified, clustered, probability sample of residential addresses in Great Britain (Lynn 2009). All current residents at sample addresses in April to June 2008, when interviewers carried out wave 1 of the survey, were designated panel members and were followed up for subsequent waves at one-year intervals. A refreshment sample, selected through the same design, was added at wave 4. At each wave, data are collected from all adult household members, even though not all such people are themselves panel members. At each wave, respondents are asked to provide a range of contact information including e-mail addresses. Waves 1, 3, and 4 involved single-mode computer-assisted personal interview (CAPI) data collection, while wave 2 had an experimental mixed-mode design combining computer-assisted telephone interviewing (CATI) with CAPI (Lynn 2013).

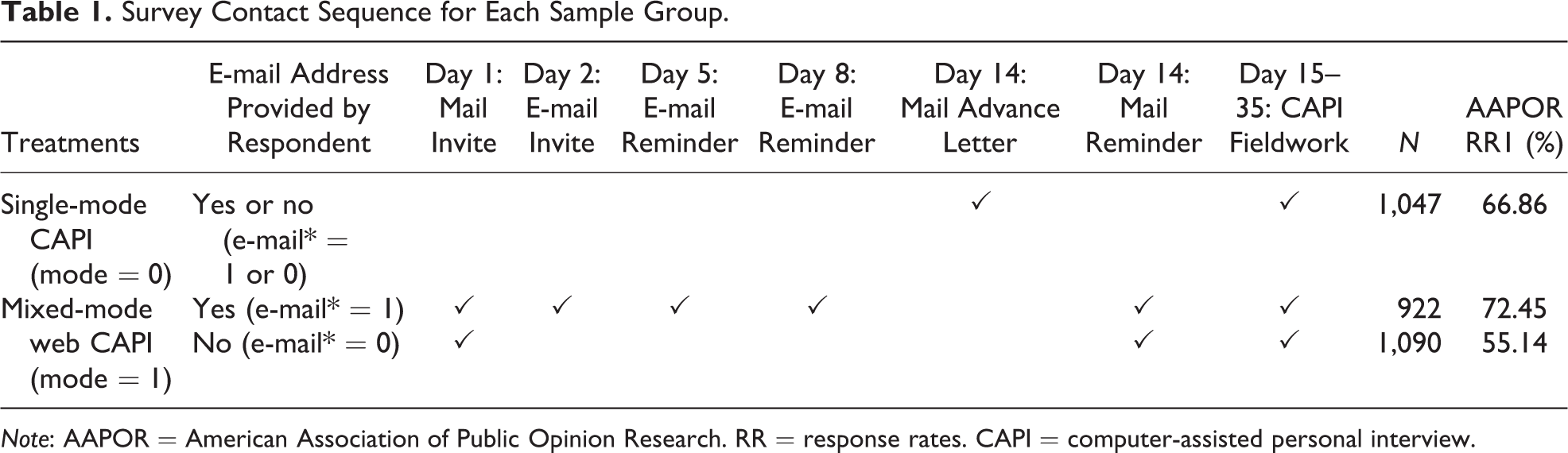

Wave 5 fieldwork took place from May to July 2012. A random two-thirds of sample households were allocated to a web-CAPI sequential mixed-mode design, while the other one-third was administered single-mode CAPI. This randomized allocation to mode treatment allows identification of the effect of additional e-mail communications on participation rates. We discuss how we do this in the next section. In the mixed-mode treatment, each sample member aged 16 years or over was sent a letter inviting him or her to take part by web. The letter included the URL and a unique user ID to be entered on the welcome screen. A version of the letter was additionally sent by e-mail to all sample members who had previously supplied an e-mail address (around half of the sample; e-mail = 1 in Table 1) with a live link to the survey.
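The household-level random allocation described above can be sketched as follows. This is a minimal illustration only: the function name `allocate_households`, the fixed seed, the treatment labels, and the use of simple (unstratified) randomization are assumptions made for the example, not details reported for the survey.

```python
import random


def allocate_households(household_ids, share_mixed=2 / 3, seed=2012):
    """Randomly assign whole households to a mode treatment.

    Hypothetical helper: simple randomization with a fixed seed is an
    assumption; the survey's actual allocation procedure is not given here.
    """
    ids = list(household_ids)
    shuffled = ids[:]
    random.Random(seed).shuffle(shuffled)
    n_mixed = round(len(ids) * share_mixed)
    mixed = set(shuffled[:n_mixed])
    # Every member of a household shares the household's treatment.
    return {h: ("web-CAPI" if h in mixed else "CAPI") for h in ids}


# Nine hypothetical household identifiers: six go to the mixed-mode arm.
allocation = allocate_households(range(1, 10))
n_mixed_mode = sum(1 for mode in allocation.values() if mode == "web-CAPI")
```

Randomizing at the household level, rather than the person level, keeps all members of a household in the same treatment, which matches the description of households being allocated to a design.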

Table 1. Survey Contact Sequence for Each Sample Group.

Note: AAPOR = American Association of Public Opinion Research. RR = response rates. CAPI = computer-assisted personal interview.

For the 20% of respondents who had indicated at previous waves that they do not use the Internet regularly for personal use, the letter informed them that they would be able to do the survey with an interviewer. Up to two e-mail reminders were sent at three-day intervals. Sample members who had not completed the web interview after two weeks were sent a mail reminder and interviewers then started visiting to attempt CAPI interviews. The interviewer visits began in the same week that the reminder letter would have been received. The web survey remained open throughout the fieldwork period. In the single-mode CAPI treatment, each sample member was sent a letter explaining that an interviewer would soon visit their address. The design and content of the letter were identical aside from the paragraph that mentioned an interviewer visit instead of inviting online participation (see Online Appendix). The contact sequence for each sample group is summarized in Table 1.
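The mixed-mode contact sequence for a sample member who never completes the web survey might be laid out as in the sketch below. The function `contact_schedule`, the start date, and two timing choices (the e-mail invitation going out on the same day as the letter, and reminders being counted from the invitation date) are assumptions for illustration; the text states only the three-day reminder interval and the two-week point for the mail reminder.

```python
from datetime import date, timedelta


def contact_schedule(invitation_day, has_email,
                     n_email_reminders=2, email_gap_days=3,
                     mail_reminder_after_days=14):
    """List the maximum set of web-phase contacts for one nonrespondent.

    Hypothetical helper; in practice reminders stop once the web
    survey has been completed.
    """
    events = [(invitation_day, "mail invitation")]
    if has_email:
        # Assumption: the e-mail version is sent on the same day as the letter.
        events.append((invitation_day, "e-mail invitation"))
        for k in range(1, n_email_reminders + 1):
            day = invitation_day + timedelta(days=k * email_gap_days)
            events.append((day, f"e-mail reminder {k}"))
    reminder_day = invitation_day + timedelta(days=mail_reminder_after_days)
    events.append((reminder_day, "mail reminder; CAPI visits begin this week"))
    return sorted(events)


# The start date is invented; fieldwork ran from May to July 2012.
schedule = contact_schedule(date(2012, 5, 1), has_email=True)
```

Under these assumptions a member with an e-mail address receives up to five contacts before interviewer visits begin, against two for a member without one.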

The present study is based on sample members issued to the field for the wave 5 survey (N = 3,059). Because of nonresponse at previous waves, these constitute around 45.7% of all potentially eligible panel members. The survey outcomes are our dependent variables of interest.

Variables and Method

Our dependent variables are indicators of whether the sample member completed the individual interview at wave 5 and, if so (for the mixed-mode sample), whether they completed it in web mode or by CAPI. Key independent variables are dichotomous, taking the value 1 if a characteristic or design feature applies and 0 otherwise. Mode treatment indicates whether the sample member was allocated to the mixed-mode treatment rather than single-mode CAPI; time in sample indicates membership of the original sample rather than the wave 4 refreshment sample. E-mail indicates whether an e-mail address was supplied by the sample member prior to wave 5. Note that this is independent of mode treatment: The request to provide an e-mail address was made of all sample members at waves 1–4 without knowledge of the mode treatment to which they would be assigned at wave 5.

For sample members with e-mail = 1, e-mail wave is a categorical variable that indicates the (most recent) wave at which an e-mail address was supplied. Partner’s e-mail indicates whether an e-mail address was supplied by the sample member’s partner. Fourteen additional variables are included in our models as controls for the selectivity effect in supplying e-mail addresses. These include sociodemographic indicators, such as age, gender, education, and ethnicity, and a set of variables expected to be associated with propensity to respond in web mode, such as having home broadband, regular Internet use, and stated mode preference.

Three logistic regression models are developed:

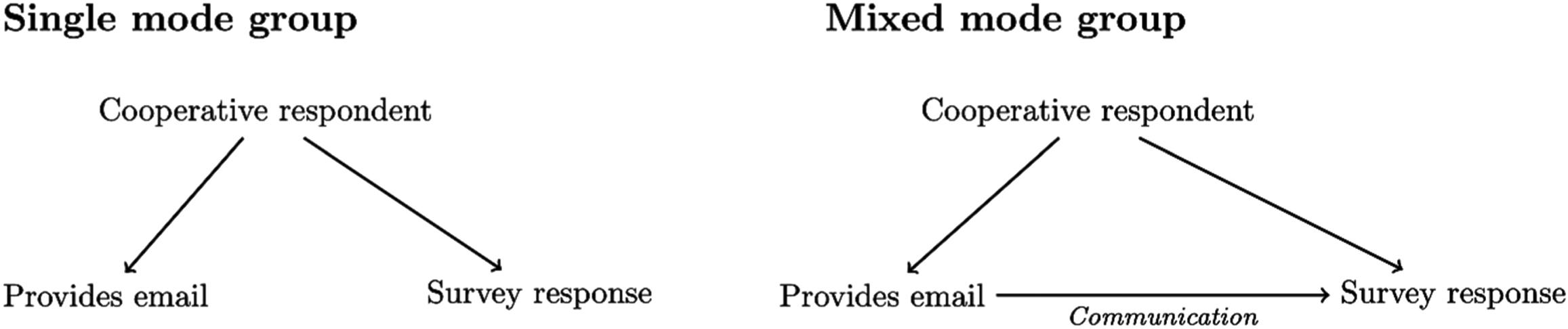

Model 1 predicts participation based on the full sample. Here we exploit the random allocation into mode designs to test the interaction between e-mail and mode treatment. This coefficient indicates whether the extra e-mail contact actually aids the response process. To understand why this is the case, Figure 1 presents the expected relationships in the two randomized groups: single mode and mixed mode. If a relationship between e-mail and survey response is found in the single-mode design, then this is due to a common cause, for example, a general tendency to be cooperative. This is because people in the single mode were not contacted by e-mail, so no direct causal effect of e-mail on survey response is possible. On the other hand, any difference in the effect of e-mail between single mode and mixed mode can only be due to the effectiveness of additional e-mail communications. Thus, if a main effect of e-mail on participation is found, but no interaction with mode treatment, then e-mail simply indicates a tendency to be cooperative, whereas an interaction in which the effect of e-mail on participation is stronger for the mixed-mode group would suggest that e-mail communications enhance response propensity.
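The identification logic can be illustrated with a small worked example. In a logistic model that contains only e-mail, mode treatment, and their product (no controls), the interaction odds ratio equals the e-mail odds ratio in the mixed-mode group divided by the e-mail odds ratio in the single-mode group. The counts below are invented for illustration and are not the survey's data; with control variables in the model the equality holds only approximately.

```python
def odds(n_responded, n_total):
    """Odds of responding: responders divided by nonresponders."""
    return n_responded / (n_total - n_responded)


def email_odds_ratio(cells, mode):
    """Odds ratio for e-mail = 1 versus e-mail = 0 within one mode treatment."""
    return odds(*cells[(mode, 1)]) / odds(*cells[(mode, 0)])


# Hypothetical (responded, issued) counts keyed by (mode treatment, e-mail).
cells = {
    ("single", 1): (160, 200),
    ("single", 0): (140, 200),
    ("mixed", 1): (320, 400),
    ("mixed", 0): (280, 400),
}

or_single = email_odds_ratio(cells, "single")  # common-cause association only
or_mixed = email_odds_ratio(cells, "mixed")    # common cause plus any e-mail effect
interaction_or = or_mixed / or_single          # 1 means no extra effect of e-mails
```

In this constructed case, people who supplied an e-mail address respond more often in both groups, yet the ratio of the two odds ratios is 1, which is the pattern that would point to general cooperativeness rather than to an effect of the e-mails themselves.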

Model 2 predicts survey participation conditional on being in the mixed-mode treatment. This allows us to test the effect of e-mail, and interactions between this and other respondent characteristics, in the mixed-mode context of interest.

Model 3 predicts mode of participation conditional on participation based on the mixed-mode group alone.
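One way to write the three models, under notation introduced here purely for illustration, is given below. The symbols are assumptions: $R_i$ indicates participation, $W_i$ web response, $E_i$ the e-mail indicator, $M_i$ the mode treatment, and $\mathbf{x}_i$ collects partner's e-mail, time in sample, the control variables, and any further interaction terms. This is a sketch of the structure described in the text, not a reproduction of the authors' exact specification.

```latex
\begin{align}
\operatorname{logit}\Pr(R_i = 1)
  &= \beta_0 + \beta_1 E_i + \beta_2 M_i + \beta_3 E_i M_i
     + \boldsymbol{\gamma}^{\top}\mathbf{x}_i
  && \text{(model 1, full sample)} \\
\operatorname{logit}\Pr(R_i = 1 \mid M_i = 1)
  &= \alpha_0 + \alpha_1 E_i + \boldsymbol{\delta}^{\top}\mathbf{x}_i
  && \text{(model 2, mixed-mode sample)} \\
\operatorname{logit}\Pr(W_i = 1 \mid R_i = 1,\, M_i = 1)
  &= \theta_0 + \theta_1 E_i + \boldsymbol{\lambda}^{\top}\mathbf{x}_i
  && \text{(model 3, mixed-mode respondents)}
\end{align}
```

In this notation, $\exp(\beta_3)$ is the interaction odds ratio used to separate the effect of additional e-mail communications from general cooperativeness.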

Figure 1. The link between the experimental data collection design and analysis strategy.

In each model, we test interactions of e-mail and partner’s e-mail with time in sample as a test of whether any effect of e-mail communications depends on time in sample. We also test whether e-mail wave is significant, as a test of whether effects depend on how long ago the e-mail address was supplied. All results below are presented as odds ratios (ORs). An OR of 1 means no relationship between the independent variable and the outcome (e.g., survey participation), while an OR larger (smaller) than 1 indicates the factor by which the odds of the outcome increase (decrease) when the independent variable increases by 1.
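To make the odds ratio scale concrete, the sketch below converts an OR into the probability it implies at a given baseline. The helper `apply_odds_ratio` and the baseline of 0.60 are hypothetical, and 1.72 is used purely as an example value.

```python
def apply_odds_ratio(baseline_probability, odds_ratio):
    """Return the probability implied by multiplying the baseline odds by an OR."""
    baseline_odds = baseline_probability / (1 - baseline_probability)
    new_odds = baseline_odds * odds_ratio
    return new_odds / (1 + new_odds)


# Hypothetical baseline of 0.60, chosen only for illustration.
p_with_or_172 = apply_odds_ratio(0.60, 1.72)  # roughly 0.72
p_with_or_1 = apply_odds_ratio(0.60, 1.0)     # unchanged at 0.60
```

An OR of 1.72 therefore does not mean that participation is 72% more likely; the change in probability depends on the baseline to which the OR is applied.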

Results

Research Question 1: Effect of Additional E-mail Communications on Survey Participation

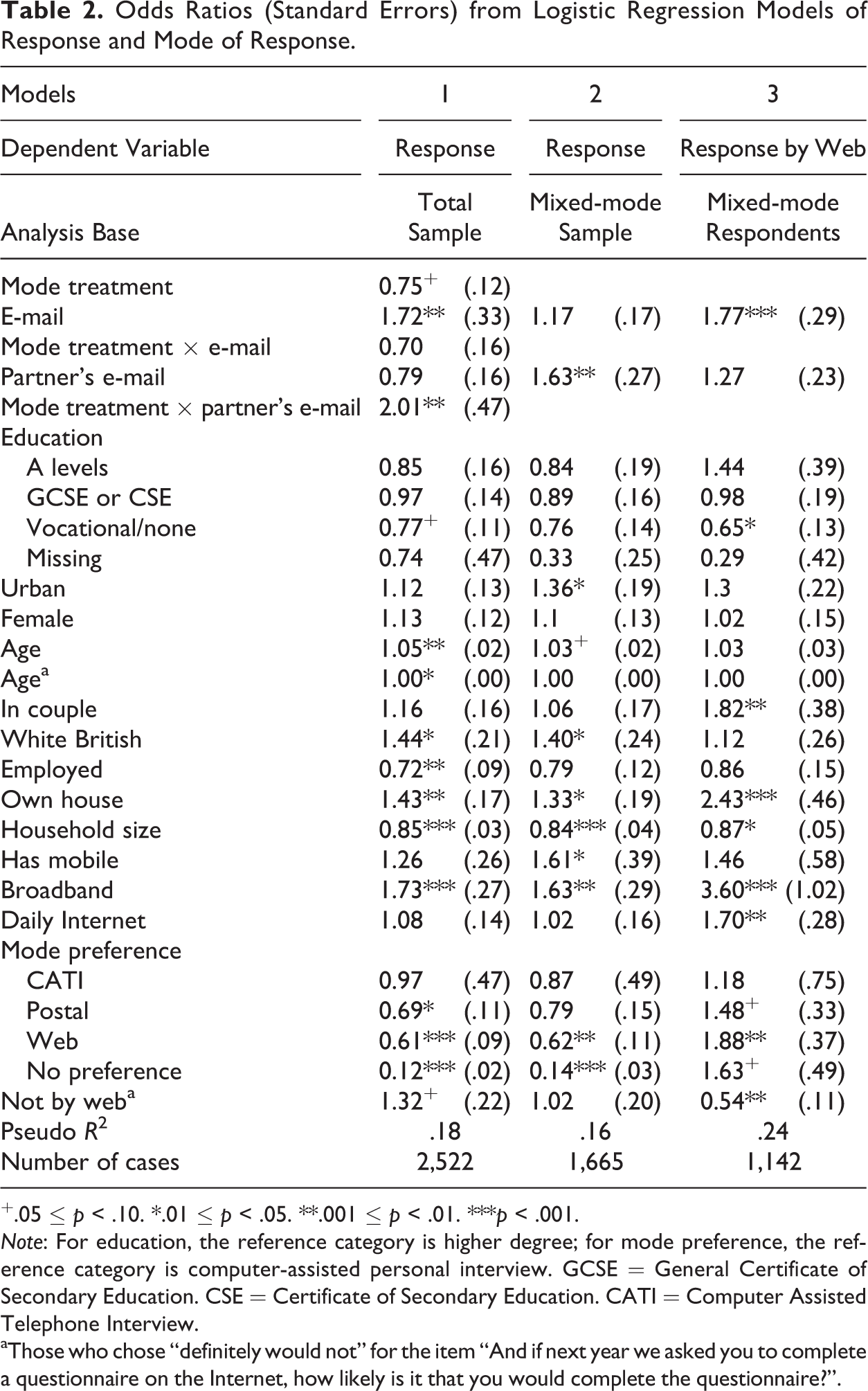

Our main research question concerns the effect of additional communications by e-mail on overall participation propensity in the web-CAPI sequential mixed-mode survey context. Results from model 1 (see Table 2) show that the overall effect on participation, in the entire sample, of the respondent having supplied an e-mail address is positive (OR 1.72, p < .01). As mentioned previously, this confounds unobserved characteristics, such as general cooperativeness, with the direct effect of additional communications by e-mail. To separate the two, we must look at the interaction between e-mail and mode treatment in Model 1. This is not significant (OR 0.7, p > .1), indicating no evidence that the effect on propensity to participate differs between the mixed-mode treatment (where the e-mail address was used for additional communications) and the single-mode CAPI treatment (where it was not used).

Table 2. Odds Ratios (Standard Errors) from Logistic Regression Models of Response and Mode of Response.

+.05 ≤ p < .10. *.01 ≤ p < .05. **.001 ≤ p < .01. ***p < .001.

Note: For education, the reference category is higher degree; for mode preference, the reference category is computer-assisted personal interview. GCSE = General Certificate of Secondary Education. CSE = Certificate of Secondary Education. CATI = Computer Assisted Telephone Interview.

a. Those who chose “definitely would not” for the item “And if next year we asked you to complete a questionnaire on the Internet, how likely is it that you would complete the questionnaire?”.

Research Questions 2 and 3: Moderators of the Effect of Additional E-mail Communications on Survey Participation

Although no average effect of additional e-mail communications was found, it is possible that effects may operate differentially across subgroups. To test for such moderating effects, we test interactions between each potential moderating variable and the randomly allocated mode treatment. We find a significant interaction between mode treatment and partner’s e-mail (Table 2). In the mixed-mode context, those whose partners had supplied an e-mail address were significantly more likely to have participated, whereas in the single-mode CAPI context no such effect was observed. This is confirmed by a significant main effect of partner’s e-mail in model 2 (p < .01), but not in model 1. There is no evidence that any effect of e-mail or partner’s e-mail acts differentially between sample subgroups or is moderated by whether the sample member has broadband Internet access at home or whether they are a regular Internet user. Furthermore, interactions with time in sample or replacing e-mail with e-mail wave are not significant in model 1 or 2.

Research Question 4: Effect of Additional E-mail Communications on Mode of Participation

In model 3, we model the propensity to answer by web as opposed to CAPI conditional on participating in the mixed-mode survey. The significant main effect of e-mail indicates that sample members who had provided e-mail addresses were more likely to respond in web mode rather than face-to-face (OR 1.77, p < .01). It should be noted that in this model, we cannot take advantage of an experimental design, as there was no further randomization to treatment (receiving additional e-mail communications) within the mixed-mode group. Instead, we rely here on the inclusion of the other 15 independent variables to provide a control for nonrandom supply of an e-mail address. The result suggests that additional e-mail communications increase the propensity to respond by web rather than by face-to-face interview, thus reducing survey costs.

Research Questions 5 and 6: Moderators of the Effect of Additional E-mail Communications on Mode of Participation

In extensions of model 3 (results not shown), we investigated interactions between e-mail and time in sample and between e-mail and each of the 14 control variables. Two significant interactions were found: The effect of e-mail is stronger for those in urban areas (e-mail × urban:

Discussion

We find no evidence that additional e-mail communications (invitation and reminders) in the first phase of a web-CAPI sequential mixed-mode survey affect participation propensity. However, such additional e-mail communications appear to be associated with a higher propensity to respond in web mode as opposed to CAPI, an outcome that brings cost savings.

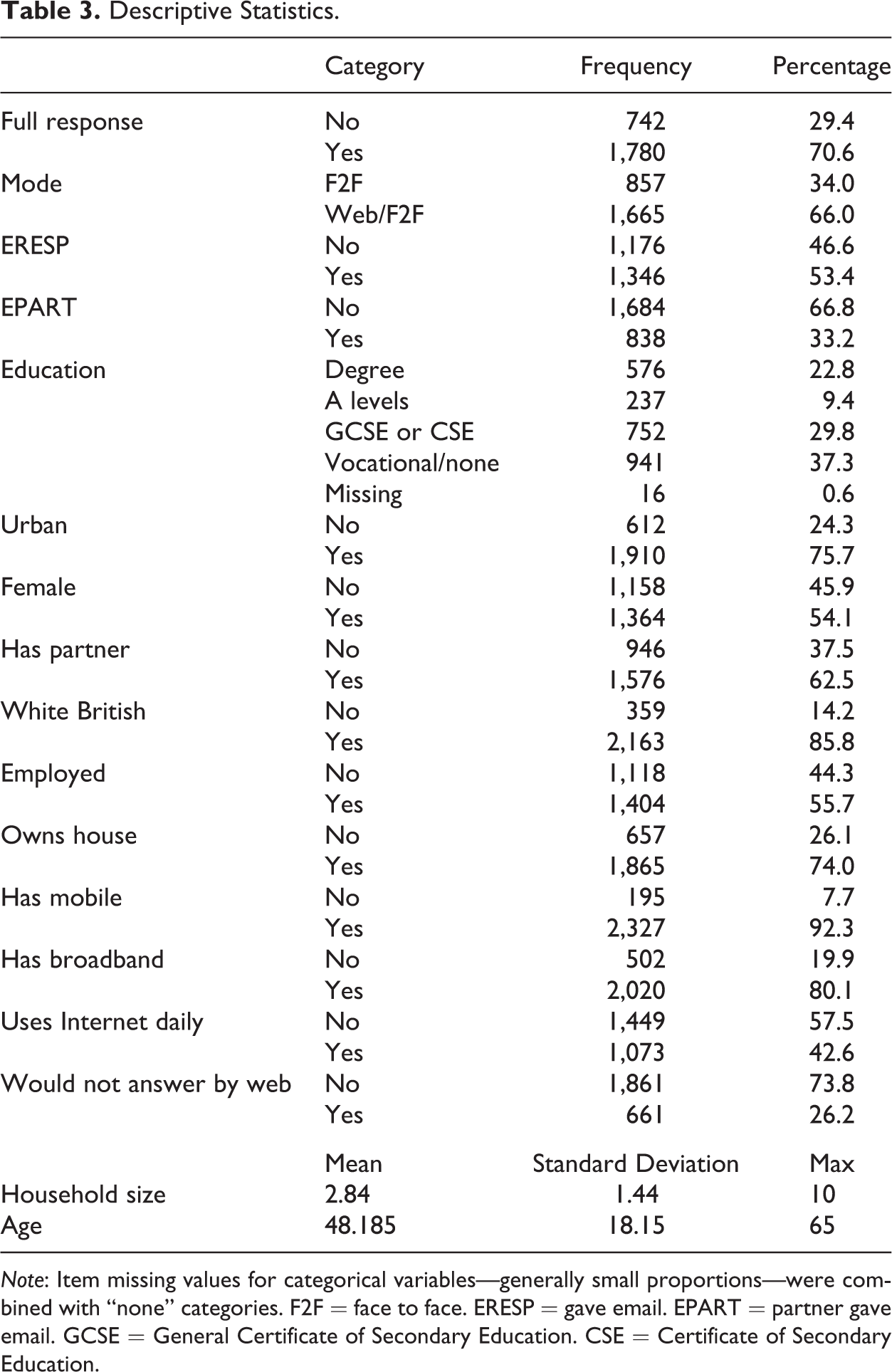

Previous studies have generally found additional communications of any sort to improve response rates (Cook et al. 2000; Dillman et al. 1995). One might question whether our failure to find such an effect is caused by our study being based on a panel, whereas previous studies were cross-sectional. Panel members may be relatively committed respondents and consequently less sensitive to influences on their participation propensity. However, we doubt this explanation for two reasons. First, the proportion of persons issued to field at wave 5 who completed the individual interview was only 70.6% (see Table 3), suggesting some scope for influence. Second, the absence of an interaction between e-mail and time in sample implies that our results hold equally at the second and fifth annual waves of a survey.

Table 3. Descriptive Statistics.

Note: Item missing values for categorical variables—generally small proportions—were combined with “none” categories. F2F = face to face. ERESP = gave email. EPART = partner gave email. GCSE = General Certificate of Secondary Education. CSE = Certificate of Secondary Education.

An alternative explanation may be that encountering URLs while off-line and having to retain them until a suitable occasion when one is online, and entering passwords, may have become common and routine activities that are not a big barrier to participation. The extra convenience of being able to click a link may be rather trivial. Additionally, we do not know how many sample members actually received our e-mails. Some e-mails may have been diverted by spam filters (Fan and Yan 2010) and others may simply have been left unopened. The e-mail addresses provided by respondents may, in some cases, relate to accounts set up primarily for receipt of commercial mailings and the like. At wave 6, only 30% of our invitation e-mails were opened by the recipient (Wood and Kunz 2014). We suspect that a more important difference between our context and that of earlier studies is the sequential mixed-mode design. In our design, the control treatment included not only two mailings but also extensive face-to-face contact attempts. Most of the other studies discussed earlier in this article took place in contexts where the control treatment did not include any in-person contact attempts (telephone or face to face).

Intriguingly, additional e-mail communications with the sample member’s partner appear to increase response propensity in the mixed-mode context. This may indicate that e-mail communications with both members of a couple have a positive effect (from the researcher’s perspective) on both (recall that in most cases, the partner of a sample member will themselves be a sample member too in our design), whereas e-mail communication with just one person has no effect on the response behavior of that person.

With regard to the mode of participation in a sequential web-CAPI design, we find that additional e-mail communications during the web phase increase the propensity of respondents to respond by web rather than CAPI (conditional on participation). This can help reduce survey costs. However, the effect is not observed in rural areas or among homeowners. The identification of heterogeneous effects across sociodemographic groups such as these might be useful for future research and for targeting (Lynn 2014). Our findings regarding mode of participation require replication, preferably with an experimental allocation of e-mail communications. We have tried to counter the possible selectivity in the process that leads to provision of an e-mail address by controlling for relevant respondent characteristics and by interacting e-mail with all the controlling variables, but the possibility remains of unobserved heterogeneity.

Conclusion

To our knowledge, this study provides the first evidence of the effects of additional e-mail communications in a sequential mixed-mode panel survey context. The benefits may be less than in the context of single-mode web surveys. Researchers should evaluate carefully whether the intrusion and effort implied by a request to supply an e-mail address are warranted. In a mixed-mode context, as a means to improve participation rates, collecting e-mail addresses may not be worthwhile. However, as a means to save costs by increasing the proportion of respondents who participate in web mode, the use of e-mails could be effective. Further research is required to replicate our findings in different populations to better identify the determinants of mode of participation in sequential mixed-mode designs and to learn more about the circumstances in which additional e-mail communications are worthwhile.

Footnotes

Authors’ Note

Data management was coordinated by Noah Uhrig and Jon Burton, and data were collected by NatCen Social Research.

Acknowledgment

We are grateful to Annette Jäckle for sharing her derived indicators regarding e-mail addresses.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This study was funded by Economic and Social Research Council Award no. ES/H029745/1, “Understanding Society and the UK Longitudinal Studies Centre” (principal investigator: Nick Buck) and the National Centre for Research Methods (NCRM; grant reference: RES-576-47-5001-01).

Supplemental Material

Supplementary material for this article is available online.
