Abstract

To address complex problems like health disparities, public health interventions have become increasingly “complex,” engaging multisector partners and communities at multiple ecological levels. Complex interventions are intentionally flexible, which makes them challenging to evaluate with predetermined measures. While innovative evaluation methods tailored to complex interventions have been developed, practical guidance is needed to enhance evaluation capacity within public health. This article describes the qualitative FIND (Frame, Identify, Narrow, Do) evaluation process for a complex, community-engaged health equity intervention. Illustrative examples and take-away tools are provided. The FIND process is sensitive to complexity, involving ongoing data collection that helps guide implementation. The evaluator is embedded within the project team, enabling frequent, direct interaction between the evaluator and those whose roles allow them to hear about or observe on-the-ground implementation in real time. The FIND process includes (a) developing a flexible framework for determining relevant processes and outcomes, (b) identifying important project events, (c) narrowing in on the most impactful events, and (d) making decisions informed by data. Using the FIND process, the project team learned about significant project events that would have been missed without a complexity-sensitive approach. Having the evaluator embedded within the project team, rather than operating as an independent entity, was crucial for understanding and using on-the-ground developments in an evolving intervention. More practical guidance is needed to build capacity for evaluating complex public health interventions, including strategies for communicating with stakeholders about approaches that diverge from traditional scientific norms.

Keywords

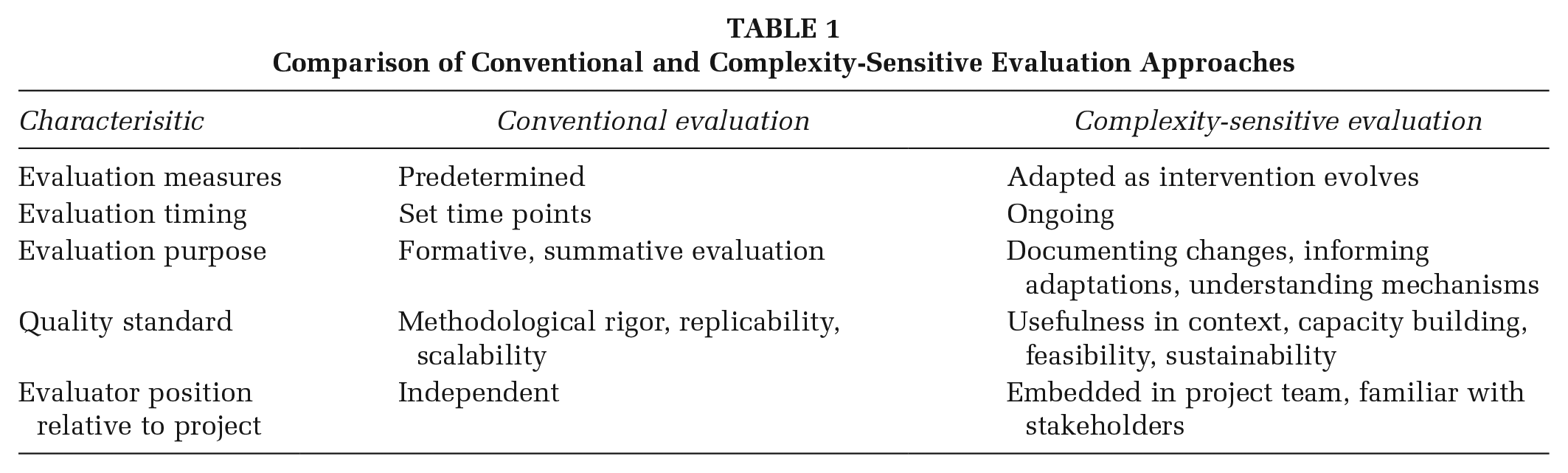

To address complex public health issues like chronic disease disparities, increasing numbers of interventions have adopted complex ecological approaches that engage multisector partners and communities (Giachello et al., 2019; Grumbach, 2017; Hussaini et al., 2018; Oestman et al., 2024). These interventions are “complex” because they have many moving parts and need to adapt to shifting real-world conditions (Patton, 2011). Corresponding evaluations must keep up with change, so they are designed to be ongoing and responsive to evolving contexts (Kania & Kramer, 2013; Patton, 2011). Qualitative methods play a critical role in evaluating complexity, as they can be applied flexibly in concert with intervention changes. Complexity-sensitive approaches differ in key ways from conventional evaluation approaches, which are designed for controlled interventions and rely on predetermined, fixed methods and outcomes (see Table 1).

Table 1. Comparison of Conventional and Complexity-Sensitive Evaluation Approaches

Because complexity-sensitive evaluations may be unfamiliar to public health evaluators, practical resources need to be developed and shared. This paper describes a qualitative evaluation process created and used within a complex community-engaged health equity intervention. We aim to support teams in moving from daunting to doable evaluations of complex interventions—to build capacity that can practically guide choices for action, adaptation, and investment.

Diabetes Impact Project–Indianapolis Neighborhoods

The Diabetes Impact Project–Indianapolis Neighborhoods (DIP-IN) began in 2018 with the goal of improving the health and well-being of areas of Indianapolis with disproportionately high diabetes burden (Staten et al., 2023). DIP-IN brings communities and multisector partners together to address diabetes disparities across the prevention continuum. Partners include local stakeholders from the health industry, county health department, academia, public health care system, community development, city government, community-based organizations, and community residents. Core intervention components include community health workers, resident steering committees, community health improvement projects (CHIPs), and community capacity building.

What makes DIP-IN complex? While the core components and strategies remain consistent over time, DIP-IN is designed to be flexible and responsive to communities, partners, and shifting real-world contexts. DIP-IN has three partner communities, which have varying cultures, contexts, and partnership opportunities. Through resident input, each community prioritized a different focus for CHIPs (e.g., access to healthy food, physical activity and supportive infrastructure, life stressors and stress management, and/or social connections).

Overall Evaluation Approach

This complex multilevel intervention is evaluated using mixed methods (Staten et al., 2023). Quantitative methods are used to track population-level health outcomes, to understand how DIP-IN affects diabetes disparities. Quantitative measures are predetermined, and analyses compare measures between set time periods. Qualitative methods, the focus of this paper, are critical in evaluating some of the more complex aspects of the intervention. DIP-IN evaluators use qualitative tools to document unanticipated outcomes of the intervention, understand indirect ripple effects, and explore how and why strategies succeed, fail, or work differently in each community context. Qualitative methods are ideal for these purposes, as they can be used flexibly to support ongoing evaluation, respond to change, and provide actionable feedback to the project in progress (Patton, 2011).

Process and Tools

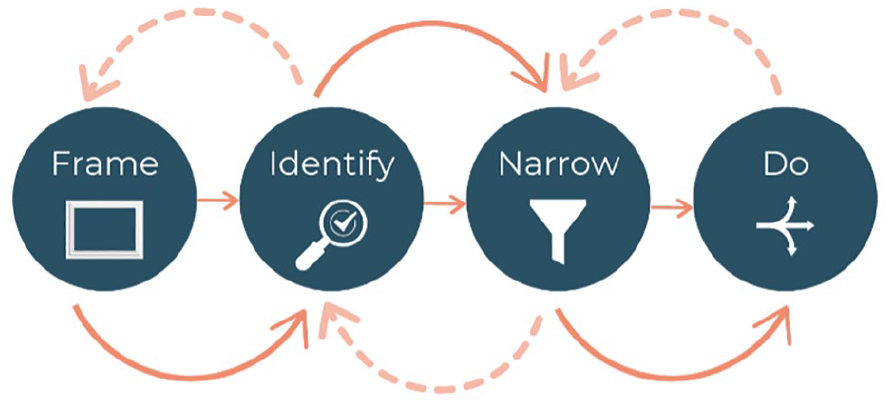

Next, we describe our systematic yet flexible qualitative evaluation process, FIND—Frame, Identify, Narrow, Do (see Figure 1).

Figure 1. Frame, Identify, Narrow, Do (FIND) Evaluation Process for Complex Interventions

Each step is described, followed by an example case of the full process. We draw on approaches for evaluating complex interventions that were first introduced in community development contexts but can be applied broadly (Patton, 2011; Preskill et al., 2014; Tamarack Institute, n.d.). Throughout, we highlight two key differences between this evaluation and more conventional approaches (Table 1). First, although FIND is represented as a sequence, there are many paths and feedback loops linking evaluation and implementation. Solid and dashed arrows in the FIND diagram (Figure 1) convey its ongoing, fluid nature. Second, the program evaluator is embedded in the project team rather than being independent. Their role is to guide this collaborative evaluation process, which prioritizes input from community-facing team members who have the best sense of what is happening in each community.

Frame: What’s Relevant to Our Project?

Complex interventions and their evaluations can quickly become overwhelming. The thought, “What if we miss something?!” has kept at least one co-author up at night. Deciding what is relevant as early as possible can ease, but not eliminate, this concern. In DIP-IN, we monitor processes and outcomes that represent significant successes and challenges to achieving project goals. Because DIP-IN is a multisector partnership, the most important processes relate to partners (e.g., promising partnerships, changes in relationships, parting of ways). Given the multilevel nature of DIP-IN, we carefully attend to emerging outcomes related to the broader socioecological system(s) in which the project operates (e.g., neighborhood leaders, partner organizations, communities, environments). For further guidance on framing complex intervention evaluations, see Preskill et al. (2014).

Identify: How Do We Collect Relevant Data in a Project That’s Always Changing?

We use a short electronic survey, a Google Form called the Identifying Impacts Tool, which allows team members to regularly record memos about significant project events (see Supplemental Appendix A). Memoing provides a steady stream of data about project happenings from multiple perspectives (Tamarack Institute, n.d.). Prompts are designed to elicit information about the processes and outcomes of interest (i.e., those identified in the Frame step) while being open-ended enough to invite a variety of responses. DIP-IN evaluators typically take the lead on memo entry based on what team members share, although the form could easily be used by contributors who are not trained evaluators. The Identifying Impacts Tool in Supplemental Appendix A includes a link to an editable version of the Google Form that readers can customize for their projects.
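For teams that export memo responses (for example, a form's response sheet saved as a spreadsheet) and want to sort them by framing category before the Narrow step, the Python sketch below shows one way to structure and group memo records. It is a hypothetical illustration only: the field names (`recorded_on`, `community`, `frame_category`, `event`, `is_challenge`) and the category labels are assumptions loosely based on the Frame discussion, not the actual fields of the Identifying Impacts Tool in Supplemental Appendix A.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import date

# Hypothetical framing categories, loosely based on the Frame step:
# partner-related processes and broader socioecological outcomes.
FRAME_CATEGORIES = ("partner_process", "ecological_outcome", "other")


@dataclass
class Memo:
    """One memo about a significant project event (hypothetical fields)."""
    recorded_on: date
    community: str
    frame_category: str
    event: str
    is_challenge: bool = False  # False = success, True = challenge

    def __post_init__(self) -> None:
        # Reject labels outside the agreed framing so memos stay comparable.
        if self.frame_category not in FRAME_CATEGORIES:
            raise ValueError(f"unknown frame category: {self.frame_category}")


def group_by_frame(memos: list[Memo]) -> dict[str, list[Memo]]:
    """Group memos by framing category, oldest first, for evaluator review."""
    grouped: dict[str, list[Memo]] = defaultdict(list)
    for memo in sorted(memos, key=lambda m: m.recorded_on):
        grouped[memo.frame_category].append(memo)
    return dict(grouped)


if __name__ == "__main__":
    memos = [
        Memo(date(2023, 6, 4), "Community A", "ecological_outcome",
             "Host organization added volunteer roles"),
        Memo(date(2023, 5, 10), "Community B", "partner_process",
             "New partner offered meeting space"),
        Memo(date(2023, 7, 2), "Community B", "partner_process",
             "CHIP stalled after partner staffing change", is_challenge=True),
    ]
    for category, items in group_by_frame(memos).items():
        print(category, [m.event for m in items])
```

Grouping by framing category is only a reading aid; deciding which memos matter remains a judgment made by the evaluator and team.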

Narrowing and Doing: What Is Important? What Are We Going to Do?

Memoing generates abundant documentation that can be leveraged during implementation. The evaluator reviews the memos and narrows them down to the most significant events, guided by the project framing. Small groups of relevant stakeholders can then be convened for focused sensemaking and for deciding on actions (i.e., Do). Stakeholders may include project staff, partners, and/or resident leaders.

Usually, we decide to continue monitoring the events documented in the memos, such as a promising new partnership or a CHIP that has stalled out in implementation. Other times, we choose to explore further. This might mean a quick email or bringing it up at the next meeting with a partner. For example, if one of our partners receives a grant, we inquire about whether DIP-IN played a role. Sometimes, we decide the event calls for timely action. This was the case when one of our intervention components resulted in multiple unanticipated outcomes, and we conducted brief stakeholder interviews to find out why. This example is profiled below.
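The three responses described above (continue monitoring, explore further, take timely action) can be recorded alongside each memo so the team keeps a running log of what was decided and what still needs follow-up. The Python sketch below is a hypothetical illustration: the decision itself is made by people during sensemaking, and the code only records and tallies it. The names `Decision`, `DecisionEntry`, `tally`, and `open_actions` are illustrative assumptions, not part of the DIP-IN tools.

```python
from collections import Counter
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    """The three responses to a memo described in the text."""
    MONITOR = "continue monitoring"
    EXPLORE = "explore further"
    ACT = "take timely action"


@dataclass
class DecisionEntry:
    """Hypothetical log entry: a memo summary plus the team's decision."""
    memo_summary: str
    decision: Decision
    follow_up: str = ""  # e.g., who will contact the partner, interview plan
    resolved: bool = False


def tally(entries: list[DecisionEntry]) -> Counter:
    """Count how often each decision was reached."""
    return Counter(e.decision for e in entries)


def open_actions(entries: list[DecisionEntry]) -> list[DecisionEntry]:
    """Return unresolved entries that call for exploration or timely action."""
    return [
        e for e in entries
        if e.decision is not Decision.MONITOR and not e.resolved
    ]


if __name__ == "__main__":
    log = [
        DecisionEntry("Promising new partnership", Decision.MONITOR),
        DecisionEntry("Partner received a grant", Decision.EXPLORE,
                      follow_up="Ask at next meeting whether DIP-IN played a role"),
        DecisionEntry("Component had unanticipated outcomes", Decision.ACT,
                      follow_up="Brief stakeholder interviews"),
    ]
    print(tally(log))
    for entry in open_actions(log):
        print(entry.decision.value, "->", entry.follow_up)
```

Keeping the decision as an explicit, human-entered field, rather than inferring it from memo content, mirrors the point that sensemaking happens in conversation with stakeholders.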

An Example of the FIND Process

Next, we illustrate the full FIND evaluation process using one of our evidence-based community health improvement projects (CHIPs). As described previously, CHIPs exemplify complexity in DIP-IN, because implementation is community-specific. Each resident-led steering committee chooses their own CHIPs and what health area(s) to focus on—healthy food access, physical activity infrastructure, stress, and/or social connections.

The Sunday Suppers CHIP is a monthly outdoor community dinner series hosted by a local community-based organization. Residents and community stakeholders are provided a free, healthy meal from a local caterer. Resources and activities related to health and wellness are also available. Sunday Suppers was the first CHIP to make social connections its priority focus area and was one of the project’s first surprise successes. The FIND process for the Sunday Suppers is described below. It reflects three consecutive seasons of Sunday Suppers (Fall 2021–Spring 2024), with the event held monthly from May through October each season.

Frame

We expect all CHIPs, including the Sunday Suppers, to engage residents around their focus area(s). In this case, the Suppers were intended to bring the community together to share a meal and get the word out about diabetes and healthy eating. The primary focus was on increasing social connections among neighbors. However, we remain sensitive to unanticipated and indirect effects of all intervention components, including CHIPs. In DIP-IN, we are most interested in ecological outcomes related to partner organizations, communities, and environments.

Identify

Outcomes demonstrating increased social connections became increasingly evident over the first 2 years of Sunday Suppers implementation. In collaboration with the community-facing project team, the evaluator used memos to describe the Suppers’ rapid expansion in terms of resident/partner participation. The event was increasingly multigenerational and attracted partners from multiple levels. We also documented evidence of community-level impacts stemming from the Suppers, such as residents being more involved in the community, capacity building within the host organization, and environmental improvements in the immediate area.

Narrow

A small group including the evaluator, project lead, and a community-facing team member regularly discussed the Sunday Suppers, supported by memo data. After the second Sunday Suppers season, we decided we wanted to know more about how and why the Suppers were successful, so we could leverage those insights in the ongoing project. We took several time-sensitive actions:

Do

The evaluator conducted and summarized brief interviews with 10 Sunday Suppers stakeholders including event leaders, residents, and organizational partners. Recommendations for interview participants and introductions were made by a community-facing team member and the Sunday Suppers CHIP lead organization.

The small group reconvened to review the data, which helped us understand how the Sunday Suppers were building community. We concluded that CHIPs focusing on social connections may open doors for resident engagement in more direct health improvement goals of DIP-IN (e.g., increased physical activity).

A summary of interviews was shared with the steering committee and the Sunday Suppers lead organization. This supported decision-making about investing in future iterations in this community and elsewhere, including elements of the Suppers critical to successful adaptation.

FIND is ongoing, flexible, and cyclical. The Sunday Suppers process was initially driven by what we learned from memos. However, it prompted a more focused FIND cycle with stakeholder interviews as the data source (i.e., Identify). The sensemaking that followed (i.e., Narrow) generated further insights and action (i.e., Do).

Discussion

This article described the qualitative process for evaluating a complex public health intervention aimed at reducing diabetes disparities. The FIND process supports teams in continuously monitoring and using information relevant to project goals. Below are lessons learned from our experience with FIND:

Many insights generated through the FIND process would have been missed with more conventional evaluation approaches (e.g., external evaluators using predetermined measures at set time points).

FIND relies on qualitative methods, which rise to meet the evaluative demands of fluid, community-responsive interventions. Qualitative methods like memos and brief interviews can be quickly adapted to shifting project conditions. We recommend including robust qualitative methods in broader mixed-methods evaluation plans.

Having an evaluator embedded in the project team is vital to understanding and leveraging what is happening in the community. In our project, community-facing team members were best positioned to make observations regarding time-sensitive, significant events.

The collaborative nature of FIND makes it more accessible. Team members with no special expertise in evaluation are key contributors at all stages. The evaluator's expertise lies in designing and facilitating the collaborative process, rather than in being solely responsible for data collection and analysis.

Memoing can be expanded to include a wider variety of stakeholders who enter memos directly. This would also remove the evaluator as a filter and encourage sharing of challenges alongside successes. Memoing could also be incorporated into existing project structures (e.g., regular meetings) while remaining available ad hoc.

Conclusions and Implications for Practice

To build capacity for evaluating complex public health interventions, there is a need to create and share resources specific to public health settings. Furthermore, we need to develop a language for educating stakeholders, including funders, about evaluation approaches that fall outside traditional scientific norms. Finally, although this paper is titled “from daunting to doable,” we experience FIND as more than doable. It can be exciting, even alarming at times, to discover things that otherwise would not have been detected, as they happen. The process is also meaningful and motivating. We witness firsthand how our work impacts the intervention, supporting vital work that addresses health equity.

Supplemental Material

sj-pdf-1-hpp-10.1177_15248399241298840 – Supplemental material for From Daunting to Doable: Tools for Qualitative Evaluation of a Complex Public Health Intervention

Supplemental material, sj-pdf-1-hpp-10.1177_15248399241298840 for From Daunting to Doable: Tools for Qualitative Evaluation of a Complex Public Health Intervention by Celeste Nicholas, Tess D. Weathers and Lisa K. Staten in Health Promotion Practice

Footnotes

Authors’ Note:

The authors thank the Diabetes Impact Project – Indianapolis Neighborhoods (DIP-IN) team for their contributions in developing the evaluation process described here. Ariez Christmon, Natalie Oslund, and Ron Rice, specifically, are critical links between the project and partner communities—without which this evaluation approach would not be possible. Becca Karstensen provided fabulous graphic design support. The authors also thank organizational partner Aspire House, whose programming is featured in this manuscript. This work was supported by funding from Eli Lilly and Company. Celeste Nicholas is fully funded through DIP-IN funding. Tess Weathers and Lisa Staten are partially supported through DIP-IN funding.

References
