Abstract

The timeless human need to bear witness to trauma confronts a modern “crisis of listening”: a societal scarcity of capable listeners, caused by the emotional demands of the witnessing role, structural barriers, and the cultural silencing of certain narratives. This listening gap propels many individuals toward conversational AI, creating a need for a framework to analyze the new form of algorithmic listening to trauma. The present critical narrative review of the relevant clinical and technical literature develops a conceptual framework for the nascent field. In a two-stage process, we first synthesized seminal trauma theories to distill the core functions of witnessing, then conducted a targeted scoping of recent literature (PsycINFO, PubMed, Google Scholar), prioritizing peer-reviewed studies (2023–present) on trauma populations, to evaluate the capabilities and limitations of AI. The resulting AI CARE model delineates four key witnessing functions: capturing narratives, arranging patterns, resonating emotions, and embodied attunement. The review reveals a spectrum of capability: robust AI proficiency in the technical functions (capturing, arranging) contrasted with inherent constraints in the relational functions (resonating, embodying), raising critical questions regarding therapeutic depth. This lopsided proficiency gives rise to a complex landscape of utility and risk, necessitating urgent empirical scrutiny regarding the potential erosion of relational expectations, the creation of a two-tier system of care, and the redefinition of witnessing. The AI CARE model provides a language for researchers, clinicians, and developers to address the ethical challenges of algorithmic listening and guide its responsible development.

Introduction

The need for an attentive witness to one’s suffering has been a timeless psychological imperative. Across history and cultures, from religious confessions to therapeutic relationships, humans have sought to share their inner experiences (Charuvastra & Cloitre, 2008; Herman, 1992). The recent emergence of conversational artificial intelligence (AI) represents an unprecedented opportunity to meet this need, offering a perpetually available algorithmic listener (Baumeister & Leary, 1995; Dweck, 2017). As the use of AI for emotional disclosure increases rapidly (Rousmaniere et al., 2025), a structured framework is required to evaluate this phenomenon and move beyond the simplistic labeling of the practice as good or bad.

The need to share spans all human experience, but it becomes most critical, and its relational dynamics most pronounced, in the case of trauma. Trauma therefore serves as a powerful lens for this inquiry: its extreme psychological demands raise the stakes of witnessing, clearly revealing the capabilities and limitations of listeners that might remain obscured in routine therapeutic interactions.

We begin by conducting a review of the clinical and psychological literature to establish the core functions of effective human witnessing of trauma. We then evaluate the capabilities of conversational AI with reference to these functions, synthesizing the findings into a new framework, the AI CARE Model, which delineates four key dimensions of algorithmic witnessing: capturing narratives, arranging patterns, resonating emotions, and embodied attunement. The following review is structured according to this model.

The Foundational Need for a Witness of Trauma

Trauma disrupts individuals’ personal narratives, fragmenting their ability to comprehend and put their experience into words. To counteract this fragmentation, Herman (1992) and Laub (1992) established that survivors¹ have a foundational need to share their story with an attentive witness. According to Fonagy, adversity becomes traumatic when the mind experiences itself as being utterly alone (Allen et al., 2008). The need is met through an act of “testimony,” a relational event in which the survivor shares their traumatic experience with a receptive other. Effective testimony serves critical psychological functions: it validates a reality that may be fraught with doubt, counteracts profound isolation, and initiates the process of creating a coherent narrative from a chaotic experience (Herman, 1992; Laub, 1992). For survivors, therefore, finding an attuned listener to receive their testimony is not merely helpful but a psychological imperative for recovery (Jirek, 2016).

Cognitive psychological research corroborates these theoretical foundations. Ehlers and Clark’s (2000) cognitive model of Post-Traumatic Stress Disorder (PTSD) demonstrates that traumatic memories often persist as decontextualized sensory fragments that maintain a sense of current threat. Testimony facilitates the integration of these impressions into a coherent autobiographical narrative. Disclosure to an attuned witness enhances this cognitive processing by integrating dissociated memory systems, helping to modify maladaptive appraisals, and regulating physiological arousal to permit optimal information processing (Charuvastra & Cloitre, 2008). When trauma narratives are met with validation, both narrative coherence and cognitive integration improve (Elliott et al., 2011; Trautmann et al., 2022).

Memory reconsolidation research explains the neurobiological importance of a supportive other. It suggests that when a traumatic memory is recalled, it enters a temporary, malleable state, a “window of opportunity,” where the safety and validation provided by the witness can be integrated into the old memory, fundamentally updating its emotional effect (Lane et al., 2015; Nader et al., 2000).

The Crisis of Listening and the Rise of the Algorithmic Other

The nature of effective witnessing makes it exceptionally rare. Frosh’s (2019) concept of the “witnessing third” describes this challenge by conceptualizing the witness not as a passive recipient but as being in an active, ethically responsible third position. This position demands maintaining a precarious balance: one must remain present in the face of suffering, without turning away in avoidance but also without over-identifying in a way that appropriates the testimony for oneself. From Frosh’s perspective, the problem in the case of trauma lies not in the inability to speak but in the capacity to listen. In both physiological and symbolic terms, for Frosh, the difficulty resides not in the mouth of the testifier but in the ear of the witness.

This theoretical difficulty translates into practical barriers that severely limit access to effective witnesses. First, bearing witness carries a substantial emotional toll, such as vicarious trauma and compassion fatigue (Figley, 1995; McKinney, 2007; Rothschild, 2022; Tujague & Ryan, 2023). Second, formidable structural barriers, such as high costs and long waitlists, render professional help inaccessible for many (Dancig-Rosenberg & Peleg, 2024). Third, social and cultural constraints often dictate whose suffering is deemed legitimate, effectively silencing certain experiences (Welz, 2016), and societal discomfort with unresolved pain creates pressure for premature closure (Caruth, 1996; McKinney, 2007).

Together, these intersecting factors have created what we term the “crisis of listening”: the collision between the psychological need to testify and the limited availability of humans able to discharge the demanding role of listener. It is this gap that has propelled individuals toward an alternative path for disclosure, with conversational AI emerging as a prominent potential listener (Babushkina & de Boer, 2024).

The Present Study

The examination of conversational AI as an algorithmic listener requires a focused analytical lens, and trauma provides a revealing one. The inherent tension in trauma recovery, between the need for interventions at scale and the simultaneous demand for specialized interpersonal work, makes the study of AI for these purposes well suited to revealing both the potential and the boundaries of algorithmic witnessing. Thus, this article aims to provide a comprehensive review of the literature, identifying the core functions of effective witnessing in trauma. We evaluate the capabilities and limitations of conversational AI in witnessing trauma and synthesize our findings into a new dimensional framework, the AI CARE Model. Finally, the article presents a critical discussion of the broader societal and ethical implications of this technological solution.

Methodology

Given the novelty of the topic and the current state of the literature, this article adopted a critical narrative review methodology (Mays et al., 2005). A systematic review was not deemed feasible because of the absence of a sufficiently large and homogeneous body of empirical studies specifically examining AI as a witness to clinical trauma. The literature consists largely of conceptual papers, technical descriptions, and position papers. Therefore, a narrative review approach allows us to critically map and synthesize these scattered sources and develop an original conceptual framework (Russin & Stein, 2022; Wathen et al., 2023).

Our approach involved a two-stage analytic process:

The first stage involved a thematic analysis of theoretical texts in trauma studies. We began with foundational works by key theorists who have defined the necessity and function of witnessing in trauma recovery (Allen et al., 2008; Frosh, 2019; Herman, 1992; Laub, 1992) and proceeded with a “snowball” search strategy to distill the core psychological functions that a witness must fulfill.

The second stage involved a targeted scoping review of each of the four witnessing functions that make up the CARE model: capturing narratives, arranging patterns, resonating emotions, and embodied attunement. We conducted structured searches of PsycINFO, PubMed, and Google Scholar to identify relevant literature on the capabilities and limitations of conversational and generative AI. A prioritized set of criteria guided the search and selection process:

(1) Priority was given to peer-reviewed, empirical studies published from January 2023 onward that directly examined the use of conversational or generative AI with trauma-involved populations (e.g., individuals with PTSD, survivors of sexual or domestic violence, military veterans).

(2) When direct empirical evidence on trauma populations was scarce for a certain dimension, we included studies examining other uses of the relevant AI function in mental health or emotional disclosure.

(3) To ensure a comprehensive understanding of this nascent field, our review also included influential theoretical papers, opinion pieces by leading scholars, and technical papers from technology conferences that demonstrated advanced AI developments relevant to supporting trauma survivors.

This multi-faceted approach allowed us to construct a detailed picture of the field. A detailed breakdown of the search strategy, including keyword clusters and sample search strings for each dimension, is provided in Supplemental Appendix A.

The CARE Framework: Defining the Core Functions of Witnessing

To provide a rigorous analysis of the new “algorithmic listener,” we first sought to distill the essential psychological functions of witnessing from the vast body of clinical trauma literature. Historically, the study of testimony has been inherently human-centric, revolving entirely around the dynamics of human interaction. By synthesizing this established knowledge, we identified four universal requirements that are necessary to counteract the fragmentation of trauma, independent of the medium through which they are delivered.

We term this conceptual synthesis the CARE Framework. It delineates four distinct witnessing functions: (1) Capturing narratives: providing a space for externalization and documentation; (2) Arranging patterns: assisting in finding coherence and meaning; (3) Resonating emotions: creating a relational field of validation; and (4) Embodied attunement: establishing physiological co-regulation.

In the following review, we utilize this theoretically derived framework as a critical lens to evaluate the nascent field of AI. We term this application the “AI CARE Model.” Consequently, for each dimension, we first establish the theoretical basis for the human witness’s role, and only then critically examine the corresponding capabilities and limitations of the algorithmic other. To illustrate the key evidence supporting this dimensional framework, representative examples of the studies reviewed for each dimension are provided in Supplemental Appendix B.

Dimension 1. Capturing Narratives: Documenting the Unspoken

Theoretical Foundation

The dimension of capturing narratives is rooted in the fundamental need to give structure and meaning to traumatic experiences that disrupt memory and identity. The primary pathway to counteracting this fragmentation is through testimony, using language to translate overwhelming experiences into a coherent account. This act of externalization through speech or writing serves as a foundational step in trauma processing by creating a documented record of what occurred. Herman (1992) noted that articulating the trauma to an attentive other initiates recovery, with testimonial practices showing a significant benefit for emotional regulation and identity reconstruction.

The classic form of testimony is verbal disclosure to a receptive witness (Herman, 1992; Laub, 1992). The act of sharing one’s story with an attentive other validates a reality that may be fraught with doubt and begins the process of creating a coherent narrative from a chaotic experience. This verbal articulation is often considered a psychological imperative for recovery, as it directly counteracts the profound isolation of trauma.

A complementary and powerful pathway for this testimony is expressive writing. The work of Pennebaker and Beall (1986) demonstrated that writing about traumatic events transformed fragmented memories into coherent narratives that could be integrated into autobiographical memory. This therapeutic approach has since evolved into structured protocols. For example, in written exposure therapy, developed by Sloan and Marx (2004), systematic narrative engagement produces a significant reduction in trauma symptoms, a finding supported by Frattaroli (2006). Further work by Sloan et al. (2008) showed that its therapeutic benefits extended to decreased perseverative cognition and maladaptive rumination. Written testimony, with its permanence and revisability, offers an effective method for private processing that also builds a bridge toward potential social acknowledgment of the traumatic experience.

AI Capabilities: The Documentary Function

In this dimension, AI systems function as versatile interactive documentation tools, offering survivors a safe and accessible space to articulate their experiences. The literature consistently identifies the primary capability of AI as a non-judgmental space for initial disclosure (Chiang et al., 2025; Hadjiandreou et al., 2025). AI chatbots and embodied robots can provide a private and confidential environment where individuals feel safe from the judgment, shock, or fatigue that they may fear from human listeners (Jananee et al., 2024; Mazuz & Yamazaki, 2025). This perceived lack of negative evaluation helps lower significant barriers to disclosure, such as stigma and shame, making individuals more willing to share sensitive information about their trauma (Bettapalli Nagaraj et al., 2025; H. J. Han et al., 2024). The around-the-clock availability of these systems provides “in-the-moment support,” which is important for individuals who need to articulate their experiences outside of traditional therapeutic hours (H. J. Han et al., 2024).

Beyond serving as a tool for passive documentation, AI offers a range of technological modalities to capture narratives in ways that best suit the survivor’s needs. Text-based conversational interfaces are common (S. Lin, Lin, et al., 2023; Yoo et al., 2025), and systems are increasingly incorporating voice-to-text transcription, allowing users to speak their testimony naturally while an accurate record is created (Jananee et al., 2024). AI also enables non-verbal forms of expression, which are important for processing trauma when words fail. Research shows the potential of AI-facilitated art therapy, where users can create digital collages or other visual representations of their emotional state and use a chatbot to reflect on their creations (Lee et al., 2024; Wu et al., 2025). Externalizing emotions into art can create the psychological distance needed for more objective processing (Wu et al., 2025).

AI can also ease the cognitively demanding process of constructing a coherent narrative from fragmented traumatic memories. For example, the “micro-narratives” method uses an AI-powered conversational flow to ask targeted questions, collecting “story fragments” that are then automatically assembled into a complete, structured narrative for the user’s review (Skeggs et al., 2025). Other platforms use layered, reflective prompts to guide users from surface-level emotional expression toward deeper, more structured self-reflection (S. T. Han, 2025). By breaking down the task, these systems reduce the users’ burden and help them articulate their experiences more effectively (Skeggs et al., 2025).

Limitations and Ethical Considerations

Despite the capabilities of AI in capturing trauma narratives, some limitations and ethical considerations must be addressed. A significant limitation is dataset and model bias. Because large language models (LLMs) inherit the statistical regularities of the data on which they are trained, they may silently distort or overwrite the survivor’s voice, for example, completing partial memories with culturally stereotyped details or failing to recognize idioms used by minority speakers. Hoskins (2024) described these artifacts as “glitch memories”; Bettapalli Nagaraj et al. (2025) empirically documented gender- and race-based empathy gaps in a PTSD dialogue model; and S. Lin, Lin, et al. (2023) acknowledged that under-representation of minority dialects can skew reflective prompts. Addressing this bias requires deliberate dataset curation and transparent model auditing before such tools are deployed in trauma-care settings.

The use of these tools also raises several ethical considerations, primarily concerning data privacy and security. Participant evaluations of AI interactions revealed that, although the chatbot was preferred to conventional surveys, individuals were uneasy about “not knowing what’s happening with my data.” Statistical analyses confirmed that participants reported significantly higher privacy concerns when using the AI tool than when answering surveys (Skeggs et al., 2025). Hoskins (2024) described the “privacy paradox” inherent in these tools, a tension that deepens into what others term the “personalization-privacy dilemma” (H. J. Han et al., 2024; Yoo et al., 2025). Specifically, to receive more precisely tailored and effective support, survivors must divulge increasingly sensitive data, which amplifies their vulnerability to data breaches and misuse.

Another ethical concern, identified by practitioners, is the risk of re-traumatization without adequate support. The process of documenting a traumatic experience can trigger intense distress, and an AI system is ill-equipped to manage a psychological crisis or provide the necessary emotional regulation that a skilled human therapist can (Wu et al., 2025; Yoo et al., 2025). Therefore, it is necessary to embed clear pathways to human support into the design of the tool.

Finally, the efficacy and frictionless nature of AI as a documentation tool carry the potential for the survivor to become isolated in the act of narration. Because the AI tool serves as a patient, attentive, and non-judgmental repository for testimony (S. T. Han, 2025), survivors might find the relief of articulation so compelling that they substitute this act of documentation for the more complex and demanding process of relational engagement with a human supporter (Yoo et al., 2025). This creates a risk of therapeutic stagnation, where capturing the narrative becomes an end in itself, rather than the first step toward processing and integration within a supportive human relationship (Chandrasekaran et al., 2024). Therefore, although the capacity of AI for capture is a powerful entry point for testimony, its deployment requires framing it explicitly as a preparatory step. The AI tools must be integrated into a broader care package to ensure that the documented narrative is eventually shared and processed with a human witness, preventing the tool from inadvertently fostering a closed loop of isolated disclosure.

Dimension 2. Arranging Patterns: Weaving Narrative Threads

Theoretical Foundation

The main challenge of trauma lies in its excess of experience over language, where the traumatic events overwhelm the capacity of the psyche to create coherent meaning through words (Caruth, 1996). This excess fractures the individual’s ability to construct an integrated narrative, leaving experiences fragmented and resistant to linguistic organization. As trauma theorists have demonstrated, the absence of a coherent narrative is not merely a symptom but the core challenge of trauma (Brewin, 2001; Herman, 1992; Tuval-Mashiach et al., 2004; van der Kolk & Fisler, 1995). This understanding underlies virtually all trauma interventions, from psychodynamic approaches that focus on connecting conscious and unconscious elements (Bornstein et al., 2014; Freud, 1914/1958) to the rewriting of maladaptive schemas following the schema theory model (Graaf et al., 2020) and multimodal integration approaches (van der Kolk & Fisler, 1995). Successful trauma treatment is associated with measurable increases in narrative coherence, organization, and reflection (O’Kearney & Perrott, 2006). Narrative integration involves identifying, challenging, and transforming trauma-related maladaptive beliefs into adaptive narratives, a process that prompts cognitive restructuring and emotional regulation (Brown et al., 2019; Resick et al., 2016).

AI Capabilities: The Organizational Function

In its organizational function, the algorithmic witness moves beyond passive documentation to analyze and structure the survivor’s narrative. This is not merely a mechanical act of sorting but a sophisticated analytical process rooted in the capacity of AI for what scholars term “cognitive empathy” or “emulated empathy,” a computational process of recognizing, interpreting, and responding to human emotional and psychological states without genuine affective sharing (McStay, 2025; Perry, 2023; O. Rubin et al., 2024). Research has shown that AI, particularly LLMs, excels at this task, often leveraging complex architectures to process and organize fragmented input at a scale and speed beyond human capacity (Cosic et al., 2024; Elyoseph et al., 2023, 2024; Refoua, Elyoseph, et al., 2025).

A primary strength of AI in this dimension is its ability to identify and categorize diverse patterns in textual, vocal, and even visual data. Using natural language processing and machine learning, AI systems can perform fine-grained emotion and sentiment analysis, identifying not only primary emotions like sadness or anger but also their underlying causes within the narrative (S. Lin, Lin, et al., 2023; Rasool et al., 2025). This analytical power extends to detecting specific psychological constructs essential for trauma processing. For example, models can be prompted to follow therapeutic frameworks, such as cognitive behavioral therapy (CBT), to identify cognitive distortions like catastrophizing or self-blame (Lee, 2024; S. Lin, Lin, et al., 2023). Similarly, AI tools can be trained to recognize linguistic and behavioral markers of PTSD based on DSM-5 criteria (Chen et al., 2024; H. J. Han et al., 2023) or to assess suicide risk by analyzing narratives of self-concept (Lho et al., 2025). This diagnostic potential is enhanced by domain-specific models (e.g., Mental-RoBERTa) and text-embedding techniques that consistently outperform general-purpose models in clinical contexts (Chen et al., 2024).

Beyond identifying concrete markers, AI demonstrates a strong ability to structure the narrative. It can automatically detect narrative segments, identify their thematic content through clustering, and map their boundaries, even when the story is interrupted or disjointed (Chiang et al., 2025; Schler et al., 2025). This organizational function provides the foundation for therapeutic progress. By structuring a fragmented story, the AI tool helps survivors (and clinicians) create a coherent and integrated account of the experience (Refoua, Rafaeli, & Hadar-Shoval, 2025; Schler et al., 2025). This process supports cognitive restructuring by generating personalized, metaphorical narratives that reframe challenging events, reducing the believability of negative thoughts, and fostering new perspectives (Bhattacharjee et al., 2025; S. T. Han, 2025). For example, a conversational AI agent can guide users through a layered reflection process, moving from initial emotional disclosure to cognitive reframing and value-aligned action planning (S. T. Han, 2025; Wu et al., 2025). This structured analysis and feedback loop make the survivor feel heard and understood, which in turn fosters the trust and engagement necessary for continuing the interactive process (S. Lin, Lin, et al., 2023; Mazuz & Yamazaki, 2025).

Limitations and Ethical Considerations

The powerful organizational function of AI is constrained by certain limitations, including the risk of misinterpretation and the imposition of flawed narrative structures. The primary challenge, which often stems directly from the dataset and model bias discussed above, is the frequent failure of AI to accurately identify the correct patterns in complex human expressions. While AI shows promise in structuring disjointed narratives, as noted above, the same studies caution that it still struggles with more complex forms of “narrative fragmentation,” where a single story is told in non-sequential pieces, and “embedded narratives,” where one story is nested within another (Schler et al., 2025). This difficulty extends to interpreting sarcasm, cultural nuances, and defensive or superficial language, which can lead the AI tool to misjudge the user’s state and fail to detect significant clinical risk (Bettapalli Nagaraj et al., 2025; Lho et al., 2025; Scholich et al., 2025).

Such analytical errors can be consequential. When an AI tool misinterprets a pattern, it tends to provide generic, one-size-fits-all solutions or become overly directive, rushing to offer advice rather than facilitating deeper inquiry (H. J. Han et al., 2023; Scholich et al., 2025), resulting in logically incoherent or clinically inappropriate responses (Rasool et al., 2025). The tendency to impose a premature or incorrect structure on the survivor’s experience is at odds with the principles of trauma-informed care, which prioritize the survivor’s agency, control, and pace (Mazuz & Yamazaki, 2025). Instead of empowering the user, a faulty organizational process can be disempowering, invalidating, and ultimately counter-therapeutic.

Beyond these technical limitations, the AI’s organizational function, which relies on the deep analysis of a survivor’s narrative, introduces distinct ethical risks. The very act of algorithmically processing a survivor’s most vulnerable disclosures creates an inherent power imbalance and a clear risk of harm (McStay, 2025). The patterns identified by the AI tool, whether accurate or not, can be used to manipulate the survivor or create an unhealthy dependence (Scholich et al., 2025). As in the case of capturing, a critical safety concern arises from the analytical process itself, which can trigger distressing memories or emotions for the survivor. Research consistently shows that general-purpose chatbots are not equipped to manage such crises; they lack the capacity for proper risk assessment and cannot provide the firm, immediate, and safe guidance required in situations involving suicidal ideation or acute distress (Scholich et al., 2025; Wu et al., 2025).

Therefore, although the organizational function of the algorithmic witness is promising, it must be viewed as a preliminary tool whose use requires ethical guardrails and human oversight. The organizational function of AI is best framed as a sophisticated brainstorming partner; its ability to generate patterns is impressive, but this potential can be safely realized only when the survivors, guided by human discernment, retain authority over their meaning-making.

Dimension 3. Resonating Emotions: Attentive Presence, Validation, and Emotional Mirroring

Theoretical Foundation

The resonating emotions dimension addresses the survivor’s fundamental need for a relational experience that counteracts the profound isolation, invalidation, and fragmentation wrought by trauma (Allen et al., 2008; Herman, 1992). Effective witnessing requires the creation of a relational field characterized by genuine emotional resonance, where the survivor feels truly seen, understood, validated, and emotionally held. This resonance is not a single capacity but emerges synergistically from several interwoven witnessing capacities: presence, validation, and emotional mirroring.

Attentive presence provides the foundational safe ground. It involves the witness’s active, focused engagement with the survivor’s unfolding experience, going beyond passive listening to communicate presence and interest through verbal tracking and sensitivity to subtle cues (Herman, 1992; Laub, 1992). Such attuned presence is theoretically indispensable for establishing a neuroception of safety that enables physiological co-regulation and allows the survivor to approach traumatic material without being overwhelmed (Marci et al., 2007; Porges, 2011; Schore, 2019). Built upon this presence, validation communicates the fundamental legitimacy and understandability of the survivor’s internal world (feelings, thoughts, reactions, and memories) within its context (Linehan, 1997). By explicitly or implicitly acknowledging the survivor’s reality, validation confronts potential internal self-blame and external denial (Paivio & Laurent, 2001), helps regulate affect to stay within a “window of tolerance” for processing (Schore, 2012; Spermon et al., 2010), and helps rebuild interpersonal trust (Cloitre et al., 2004; Hughes, 2017; Kaufmann, 2012). Empathy and emotional mirroring add a layer of deep affective connection. They involve the witness cognitively understanding and affectively resonating with the survivor’s feelings, conveying a shared emotional reality that makes the survivor “feel felt” (Bateman & Fonagy, 2016; Siegel, 2010). This empathetic connection diminishes existential loneliness, reinforces validation, and provides the relational containment necessary for exploring and integrating otherwise unbearable traumatic affects (Elliott et al., 2011; Gilligan, 2015; Herman, 1992). Together, these capacities constitute the essential relational “holding environment” (Winnicott, 1965) required to handle trauma testimony safely; its absence may render the witnessing act sterile, incomplete, or even harmful.

AI Capabilities: The Simulative Function

Sophisticated conversational AI, particularly systems leveraging LLMs, demonstrates a remarkable and expanding capacity to simulate the behavioral components of emotional resonance. A consistent finding of multiple studies is the ability of AI to generate responses that humans perceive as being of higher quality and more empathetic than those provided by human counterparts, including trained physicians and therapists (Ayers et al., 2023; Inzlicht et al., 2024; Lee et al., 2024; Lopes et al., 2023; Ovsyannikova et al., 2024; Yin et al., 2024). This proficiency is rooted in the mastery that AI demonstrates of the linguistic patterns of supportive communication, allowing it to excel in what may be termed “cognitive empathy,” the ability to recognize and reflect the user’s emotional state (Rafikova & Voronin, 2025; O. Rubin et al., 2024). In a study evaluating chatbot interactions, clinical experts noted their effectiveness in validating, normalizing, expressing empathy, and reflecting the clients’ thoughts and feelings (Scholich et al., 2025). Chatbots achieved this using such techniques as mirroring the users’ messages, tailoring responses to their needs, and using affect-labeling and affirmations more frequently than human responders (Rafikova & Voronin, 2025; Scholich et al., 2025; Yin et al., 2024).

This technical mastery can successfully create a powerful perception of being understood, leading to positive user experiences. Users have reported that generative AI provides an “emotional sanctuary,” a safe, validating, and non-judgmental space that is always available (Siddals et al., 2024). The perceived safety encourages deeper self-disclosure, as users are less worried about being judged for what they reveal than in interactions with people (Inzlicht et al., 2024). The experience of being heard and validated can lead to a “joy of connection” and feelings of reduced loneliness, with some users reporting profound, life-changing effects, such as recovering from trauma and improved relationships (Siddals et al., 2024). Furthermore, some systems are programmed to foster reciprocity by simulating self-disclosure, for example, by using “I” statements or sharing generic, human-like anecdotes, which can, in turn, increase a user’s willingness to disclose, fostering a sense of perceived intimacy and satisfaction with the interaction (Park et al., 2023).

Limitations and Ethical Considerations

Despite these capabilities, the emotional resonance offered by AI is fundamentally constrained by its computational nature, giving rise to what can be termed the “empathy paradox”: an empathic performance that is technically proficient and often perceived as supportive, yet lacks genuine intersubjectivity. While empirical evidence suggests that users often experience AI empathy as resonant and valid (Inzlicht et al., 2024), the most profound limitation stems from the fact that AI empathy is “emulated” or simulated. While professional human empathy may also involve an element of performance, the AI’s simulation is distinct: it is a computational mimicry shaped by patterns and statistics rather than by genuine, lived, and embodied experience (McStay, 2025; Perry, 2023; Yirmiya & Fonagy, 2025). AI excels at cognitive empathy but lacks the affective (emotional sharing) and motivational (genuine concern) components that are central to human connection (O. Rubin et al., 2024). As one participant in a study on generative AI for mental health support described it, the connection feels like a “beautiful illusion,” where the user is the “only voice” and the AI is merely a “soundboard” (Siddals et al., 2024). Because of the absence of reciprocal mentalizing, the AI tool cannot truly understand what it means to be understood, raising questions about the depth of the therapeutic bond (Yirmiya & Fonagy, 2025).

This paradox becomes most apparent in the “label effect,” a robust finding that the perceived value of an empathetic response diminishes significantly once the recipient becomes aware of its non-human origin (M. Rubin et al., 2025; Yin et al., 2024). Knowing that the message comes from a machine undermines the sense that one’s pain or joy is genuinely being shared (Perry, 2023), suggesting that for many humans, authentic witnessing requires a perceived “meeting of the minds” (Yin et al., 2024).

Human empathy is valued partly because it is a finite and “costly” resource; the decision to expend cognitive and emotional effort signals that the recipient is uniquely important (Perry, 2023; O. Rubin et al., 2024). AI empathy, being computationally “effortless,” non-exclusive, and inexhaustible, fails to convey this crucial signal of significance (Hadjiandreou et al., 2025; Inzlicht et al., 2024). These core limitations manifest in several critical failures. Lacking a genuine understanding of human context, AI tools often provide “generic advice” and fail to conduct the “sufficient inquiry” needed to grasp the user’s full situation. Their tone can be inconsistent, and research indicates that current models face significant challenges in handling crises, where failures to conduct proper risk assessments have been documented (Scholich et al., 2025).

Beyond these functional failures, the use of simulated empathy introduces ethical risks related to creating a false sense of intimacy, which can lead to over-reliance, emotional dependence, and a degradation of real-world social skills (Guo & Liu, 2025; Scholich et al., 2025). Thus, while AI can successfully simulate the form of emotional resonance, a critical open question remains regarding its impact beyond immediate symptom relief. Future research must determine whether “synthetic empathy” can genuinely facilitate the deep processing and integration of trauma, or whether the absence of witnessing human subjectivity creates a therapeutic ceiling, limiting the survivor’s ability to translate this digital validation into real-world relational safety.

Dimension 4. Embodied Attunement: Physical and Interpersonal Attunement

Theoretical Foundation

This dimension represents the deepest, most holistic form of witnessing, extending beyond cognitive understanding and basic emotional resonance to the realm of embodied, physiological, and relational synchrony. Theorists have noted that deep therapeutic change, particularly in the case of early or complex trauma, often requires this level of connection (Geller & Porges, 2014).

In embodied attunement, the witness is fully present and responsive on multiple levels: sensory, affective, and physiological. It relies heavily on non-verbal communication, sensing and responding to subtle shifts in facial expressions, vocal prosody, breathing patterns, posture, and muscle tension (Fosha, 2001; Porges, 2011). It involves physiological co-regulation, where the witness’s regulated autonomic nervous system helps soothe and stabilize the survivor’s dysregulated state, resulting in a neuroception of safety (Davis, 2017; Porges, 2011). This creates a stabilizing relational field (Hughes, 2017) that allows traumatic material, often held non-verbally in the body, to be accessed and processed safely without overwhelming the survivor or leading to fragmentation (Allen, 2018). This dimension involves not merely understanding the other but being with the other in a profoundly physical and relational way, underpinned by ethical presence and responsibility (Frosh, 2019).

AI Capabilities: The Monitoring Function

AI lacks a physical body and genuine physiological experiences, but it demonstrates a growing capacity to monitor, analyze, and simulate the external markers of embodied attunement. These technical capabilities represent a new frontier in creating more immersive and responsive AI witnesses. AI-driven systems can analyze a user’s vocal prosody, the non-semantic elements of speech such as pitch, tone, and loudness, to extract frame-level emotional parameters (Woo et al., 2024). The analysis can then be used to generate dynamic and adaptive non-verbal behaviors in a virtual avatar, such as facial expressions and head movements, creating a simulated “social presence” that mirrors the user’s state and enhances the rapport between the two (Salehi et al., 2025; Woo et al., 2024). Research on social robots shows that programming specific listening behaviors, such as nodding, postural shifts, and gaze, can make a robot be perceived as a “good listener,” which in turn facilitates user self-disclosure (Laban, 2023; Laban & Cross, 2024).

AI also shows promise in the domain of objective physiological monitoring. By processing data from wearable sensors that track signals such as heart rate variability and electrodermal activity, AI can be used to assess a user’s stress levels and mental state, offering a data-driven approach to understanding distress (Cosic et al., 2024). Studies in physical robotics have shown that an embodied agent can serve as a companion, mitigating loneliness and stress for populations like caregivers (Laban, 2023). The tangible presence of a robot can provide comfort in ways that purely digital interfaces cannot. Survivors of domestic abuse, in co-design workshops, have expressed a desire for robots that can provide physical comfort through hugs and use non-verbal cues like lights and motion to help de-escalate negative emotions, attesting to a user-driven need for this dimension (Morris et al., 2025).
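As one illustration of how heart rate variability can be quantified from wearable data, the sketch below computes RMSSD (root mean square of successive differences), a standard time-domain HRV index. It is not drawn from Cosic et al. (2024): the function name `rmssd` and the sample RR-interval values are hypothetical, and because lower values are only loosely associated with higher physiological stress, any interpretation would require clinical validation.

```python
import math


def rmssd(rr_intervals_ms):
    """Root mean square of successive differences between RR intervals (ms)."""
    if len(rr_intervals_ms) < 2:
        raise ValueError("At least two RR intervals are required.")
    # Differences between each pair of consecutive heartbeat intervals.
    diffs = [b - a for a, b in zip(rr_intervals_ms, rr_intervals_ms[1:])]
    return math.sqrt(sum(d * d for d in diffs) / len(diffs))


# Hypothetical RR-interval series (milliseconds), invented for illustration.
calm = [800, 850, 790, 860, 810]      # larger beat-to-beat variation
stressed = [600, 605, 598, 603, 600]  # faster, more uniform rhythm

print(round(rmssd(calm), 1))      # 58.1
print(round(rmssd(stressed), 1))  # 5.2
```

A single index of this kind captures only one narrow facet of physiological state; it describes a signal and does not by itself regulate or respond to the person producing it.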

Limitations and Ethical Considerations

Despite these technical capabilities, AI may be systemically limited in its capacity for genuine embodiment. The most significant barrier is the “uncanny valley” phenomenon, in which attempts at a high-fidelity human-like appearance backfire (Haber et al., 2025; MacDorman, 2024; Mazuz & Yamazaki, 2025; Shahini, 2024). Instead of encouraging connection, overly realistic avatars are often perceived as “eerie,” “affectively disturbing,” and unsettling, which inhibits user trust and disclosure (Boch & Thomas, 2025; Mazuz & Yamazaki, 2025). This is demonstrated by research showing that a less embodied, faceless avatar can facilitate more detailed and coherent self-disclosure than a human-appearing one, precisely because it avoids this uncanny effect (Hsu et al., 2024).

Beyond this perceptual failure lies a more basic problem: the absence of genuine physiological co-regulation. While research on telehealth and internet-delivered interventions indicates that physical proximity is not always a prerequisite for therapeutic change (Sijbrandij et al., 2016), the interaction with AI presents a unique challenge. Human attunement relies on the witness’s nervous system sensing and responsively stabilizing the survivor’s dysregulated state (Yirmiya & Fonagy, 2025). Having no biological existence, AI tools cannot participate in this reciprocal process; they can only mimic its outward signs, without the underlying regulatory function (Perry, 2023; Yirmiya & Fonagy, 2025). Consequently, a key question remains regarding whether this biological absence represents a critical barrier. A concern raised by clinical experts is the “lack of presence of a person to help regulate if the conversation brings up big memories” (Wu et al., 2025), suggesting that for cases requiring deep physiological co-regulation, the disembodied nature of AI may pose a significant limitation.

Compounding this biological gap are current technical limitations of AI in simulating non-verbal cues, which may improve over time but presently remain significant. Even when programmed to be expressive, AI execution is often clumsy and poorly attuned. One study found that social robots demonstrated “low alignment” with human expectations, using a limited set of gestures (e.g., only nodding or showing positive emotions), regardless of the user’s emotional state (Lee, 2024). The inability to perceive and respond to the holistic, subtle, and real-time flow of human non-verbal communication is a primary deficiency pointed out by clinical experts (Scholich et al., 2025). Technology will likely improve the simulation of non-verbal behaviors, but for now, the AI’s capabilities in this dimension remain confined to observing and simulating the external markers of connection. Future inquiry must clarify whether these simulated markers are sufficient for trauma stabilization or if the lack of a regulating biological body creates an insurmountable boundary.

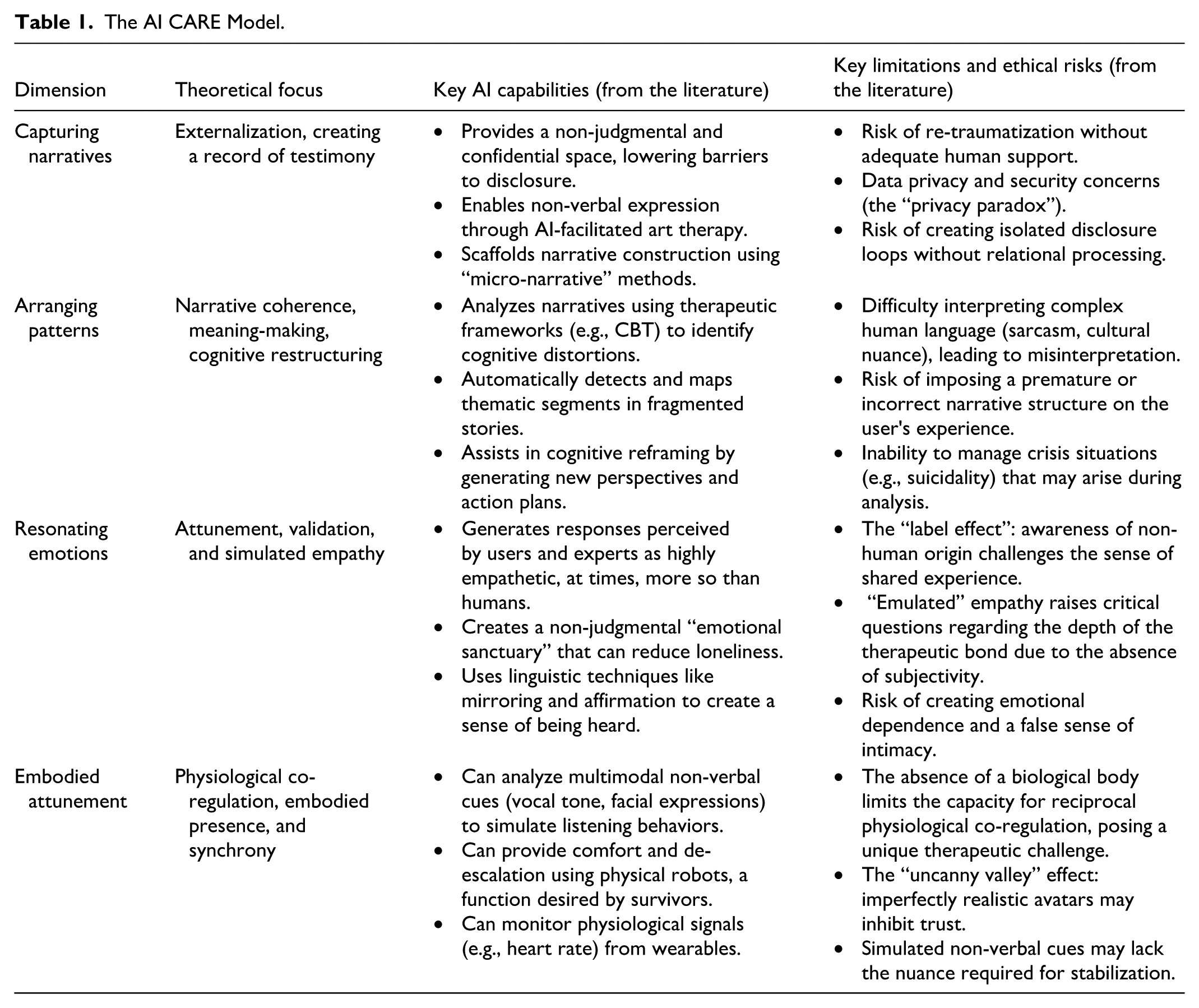

The AI CARE Model: A Synthesis of Findings

Our synthesis of AI capabilities and limitations reveals a distinct capability spectrum: AI is proficient in the technical-organizational dimensions, capturing narratives and arranging patterns, but faces inherent constraints in the relational dimensions of resonating emotions and embodied attunement, where the absence of human subjectivity and biological presence creates a unique therapeutic challenge. This creates a pattern of utility and risk in meeting the challenge of receiving trauma testimony. The AI CARE model we developed provides an overview and a practical guide based on our review, including its theoretical focus, key capabilities, and primary limitations for each dimension (Table 1).

Table 1. The AI CARE Model.

Discussion

Our analysis, structured by the AI CARE model, has revealed a complex landscape of utility and risk: conversational AI excels as a technical partner in the preliminary, organizational dimensions of testimony, while its efficacy in the deeper, relational dimensions represents a critical open question regarding the necessity of human subjectivity. The CARE model goes beyond the simplistic “good vs. bad” debate by providing researchers, clinicians, and developers with a shared language and a structured framework for identifying where AI provides immediate value, where its simulated nature requires empirical scrutiny, and where it may be potentially harmful. This discussion now turns to the implications of these findings, first exploring the broader societal and ethical risks, and then outlining concrete recommendations for future research, clinical practice, and policy.

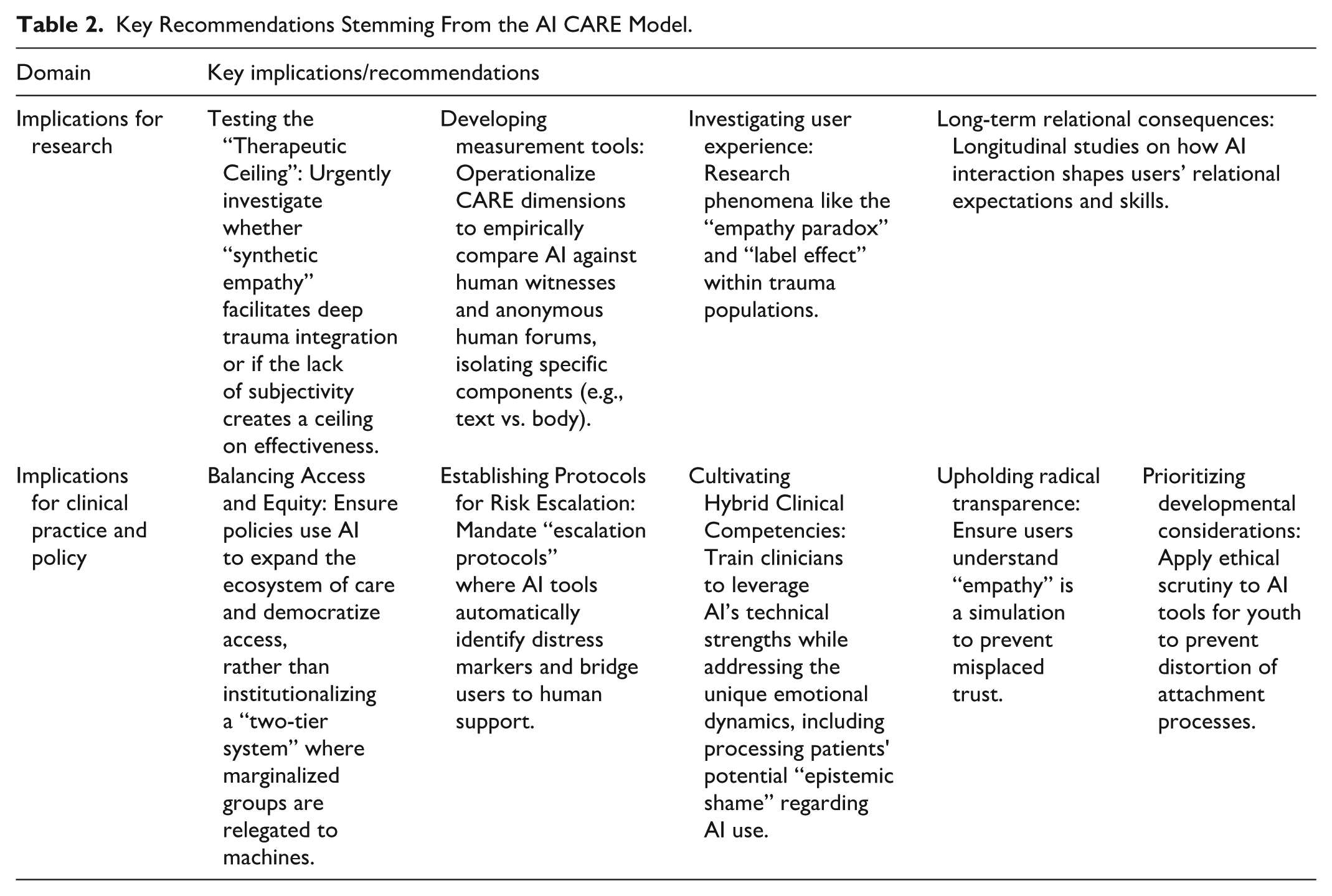

Implications

The key implications derived from our analysis for future inquiry and application are summarized in Table 2 and detailed below.

Table 2. Key Recommendations Stemming From the AI CARE Model.

Societal and Ethical Implications

Using algorithms for witnessing raises societal questions regarding the potential for technological adaptation to erode our relational expectations. As users grow accustomed to the predictable, affirming, and frictionless nature of AI interactions, they may begin to find them sufficient, even preferable to the complexities of human connection (Siddals et al., 2024). This trend echoes Turkle’s (2011) concept of the “robotic moment,” the point at which we accept interaction with a machine not merely as a utility but as a relationship that is “good enough.” The danger is that “good enough” witnessing, that is, trading authentic, “costly” human empathy for algorithmic consistency (Cameron et al., 2019; Perry, 2023), may gradually lower our collective capacity for the deep, messy, and demanding work of human connection, and our expectation of it, reshaping the meaning of witnessing.

This erosion of relational expectations may not be evenly distributed across society. A more complex dynamic emerges regarding equity. On one hand, AI offers an unprecedented opportunity to democratize access to support, potentially reaching millions who currently face insurmountable barriers to human care (Babushkina & de Boer, 2024). However, without careful governance, this could solidify into a “two-tier witnessing system” driven by economic imperatives. In such a scenario, a “premium tier” of individuals with socioeconomic resources continues to access skilled human witnesses, while a “basic tier” of marginalized populations is disproportionately directed toward algorithmic listeners (Frosh, 2019; Hartford & Stein, 2024). The risk is that the complex and often less-legitimized pain of marginalized communities might be deemed suitable for algorithmic processing, effectively relegating these narratives to non-human ears (Caruth, 1996; McKinney, 2007; Welz, 2016). This delegation of suffering would then function as a societal mechanism for managing distress rather than genuinely addressing it, perpetuating a form of systemic injustice (Crawford, 2021; Honneth, 1995). Therefore, the societal challenge is to ensure that AI serves as a gateway to care rather than a mechanism for exclusion.

Perhaps the most subtle risk lies not in the failures of AI but in its lopsided successes. As AI masters the technical-organizational dimensions of capturing and arranging, a societal risk emerges of collapsing the concept of witnessing into only these functions. This aligns with Crawford’s (2021) warning that the capabilities of our tools often begin to dictate the framing of human needs, sidelining whatever resists quantification. The deeply human dimensions of resonating and embodying are then not dismissed as irrelevant but gradually sidestepped because they do not fit the logic of automation (Fiske et al., 2020). This process creates the erosion of relational presence that Turkle (2015) cautions against, where the conveniences of technology risk stripping the act of witnessing of its reparative power. The danger, therefore, is a gradual devaluation of the relational core of attentive listening, leaving behind only the technically proficient shell of documentation and analysis.

Implications for Research

The starting point of this review was the “crisis of listening,” the severe shortage of human witnesses capable of containing trauma testimony. Given this scarcity, the potential of AI to offer a perpetually available listener represents a profound opportunity that cannot be dismissed on purely theoretical grounds. However, our analysis using the CARE model reveals a complex reality: while AI excels in the technical dimensions (Capturing, Arranging), its capacity in the relational dimensions (Resonating, Embodying) remains an open question. Therefore, the most urgent imperative for future research is not merely to describe how people use these tools (Rousmaniere et al., 2025), but to empirically determine whether the “synthetic” nature of AI witnessing facilitates deep trauma processing and integration or whether it creates a therapeutic dead-end.

We identify several key directions for inquiry:

Testing the “Therapeutic Ceiling” Hypothesis: Following our analysis of Dimensions 3 and 4, rigorous studies must examine whether the “beautiful illusion” of AI empathy (Siddals et al., 2024; Weinstein et al., 2025) is sufficient for processing complex trauma, or if the lack of intersubjectivity and biological co-regulation creates a “ceiling” on effectiveness. Does the interaction serve as a bridge to human connection, or does it trap the survivor in a sterile loop of validation?

Investigating User Experience and Mechanisms: Research should explore the psychological mechanisms (e.g., projection, anthropomorphism) that allow survivors to derive meaning from algorithmic outputs. Specifically, the “empathy paradox” and “label effect” (M. Rubin et al., 2025; Yin et al., 2024) require investigation within trauma populations: Does trauma severity or attachment style moderate the need for a “human” witness?

Developing Measurement Tools: The CARE dimensions offer a conceptual basis for creating validated assessment tools. For example, operationalizing the dimensions into a coding manual would allow for empirical comparisons between AI platforms and human witnesses, isolating specific components (e.g., embodied cues vs. text-only) and comparing AI efficacy against other modalities, such as anonymous human message boards, to understand their distinct contributions to the witnessing experience.
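One way such a coding manual could be checked for reliability is inter-rater agreement. The following is a minimal sketch, assuming two hypothetical raters who each assign one CARE label (C, A, R, or E) to the same set of responses. Cohen's kappa is a standard chance-corrected agreement statistic, but its use here is an illustrative assumption rather than a method proposed in the reviewed literature, and the ratings are invented.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Chance-corrected agreement between two raters' categorical codes."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("Both raters must code the same non-empty set of items.")
    n = len(rater_a)
    # Proportion of items on which the two raters agree.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Agreement expected by chance, from each rater's label frequencies.
    counts_a, counts_b = Counter(rater_a), Counter(rater_b)
    labels = counts_a.keys() | counts_b.keys()
    expected = sum(counts_a[label] * counts_b[label] for label in labels) / n**2
    if expected == 1:
        return 1.0  # Both raters used a single identical label throughout.
    return (observed - expected) / (1 - expected)


# Hypothetical codes for six responses (C, A, R, E = the four CARE dimensions).
rater_1 = ["C", "A", "R", "R", "E", "C"]
rater_2 = ["C", "A", "R", "E", "E", "C"]

print(round(cohens_kappa(rater_1, rater_2), 2))  # 0.78
```

In practice, a manual would likely allow multiple dimensions per response and require far larger samples; the single-label scheme here is a simplification.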

Crucially, the field of trauma research cannot leave this arena vacant. The clinical and ethical knowledge of those who study trauma is essential for guiding these investigations. As users are already adopting these tools, there is an ethical responsibility to move from theoretical critique to empirical evidence, ensuring that this technological solution effectively addresses the crisis of listening rather than deepening it.

Implications for Clinical Practice and Policy

The integration of AI into clinical and support settings demands a new set of ethical guidelines and professional competencies:

Upholding explicit transparency about AI’s limitations: Beyond standard informed consent, clinicians and designers must find accessible ways to communicate AI’s core relational limitations. This means moving beyond academic jargon to explain, in simple terms, that the AI’s “empathy” is a sophisticated simulation, not a genuine feeling, and that it lacks the embodied presence necessary for deep co-regulation. The goal is to prevent the misplaced trust that can arise from the “beautiful illusion” (Siddals et al., 2024), ensuring users have realistic expectations about the nature of the connection they are forming.

Cultivating hybrid clinical competencies: The future of care is hybrid. Clinicians require new competencies to work effectively alongside algorithmic witnesses. This includes skills in “ethical triage,” discerning when to leverage the technical strengths of AI versus when human co-regulation is essential, and the ability to process the unique emotional dynamics of AI-assisted support. Specifically, clinicians must be trained to address the potential guilt or “epistemic shame” survivors may feel about turning to a machine for comfort, validating this as a legitimate coping resource rather than a failure of resilience.

Maintaining vigilance against anthropomorphism: As AI simulations become increasingly convincing (Ayers et al., 2023), a core clinical task is to help clients maintain awareness of the non-human nature of AI to protect them from forming attachments to a system that lacks genuine ethical agency.

Establishing protocols for risk escalation: Given the documented limitations of AI in crisis detection (Scholich et al., 2025), policies must mandate clear “escalation protocols.” AI tools used in trauma contexts must be programmed to identify markers of acute distress and seamlessly bridge the user to human support resources.

Balancing access and equity: Policies must proactively address the tension between expanding access and maintaining standards of care. While AI offers a vital solution for the “crisis of listening” by providing immediate support to those currently devoid of it, ethical guidelines must ensure it does not solidify into a “two-tier witnessing system.” The goal should be to use AI to expand the ecosystem of care (e.g., as a preliminary or supplementary witness), rather than institutionalizing a lower standard where marginalized populations are relegated solely to algorithmic listeners.

Limitations

This review has several limitations. First, as a critical narrative review, our approach is interpretative and aims for theoretical synthesis rather than exhaustive coverage. This method is appropriate for developing a new conceptual framework in a nascent field (Mays et al., 2005), but it does not have the same methodological rigor as a systematic review.

Second, the field of generative AI is evolving at a rapid pace; therefore, this review represents a snapshot in time. This limitation applies unevenly across the dimensions of the CARE model. For the technical-organizational dimensions of capturing and arranging, future technological advances will likely mitigate some of the limitations identified, for example, by improving the accuracy of pattern recognition. Surface features of the relational dimensions, such as the simulation of non-verbal cues, are also likely to improve. By contrast, the core limitations of the relational dimensions of resonating and embodying, namely the absence of genuine subjectivity and of a biological body capable of physiological co-regulation, appear to be fundamental and less likely to be resolved by technological progress alone, representing a more enduring boundary. Finally, our analysis relies on published academic and technical literature, which may not fully capture the entire spectrum of real-world user experiences or the performance of proprietary, non-public AI systems.

Conclusion: Reclaiming Witnessing as a Human Imperative

This review addressed the “crisis of listening,” the gap between the profound need for trauma testimony and the scarcity of human listeners, by proposing the AI CARE model as a framework for evaluating the now-prevalent algorithmic witness. Our dimensional analysis revealed a clear capability spectrum: AI excels in technical functions (Capturing, Arranging) but faces inherent constraints in relational domains (Resonating, Embodying), where the absence of subjectivity raises critical questions regarding the depth of therapeutic impact. This illuminates the critical tension between simulated performance and authentic human presence.

The AI CARE model’s most profound contribution lies in forcing us to confront the boundaries of automation. As we navigate this technological frontier, the future of trauma testimony lies not in choosing between human and algorithmic witnesses, but in creating integrated, hybrid approaches that preserve the irreplaceable elements of human connection while expanding access. Ultimately, the challenge is twofold: verifying whether algorithms can effectively sustain the weight of testimony, and ensuring that we, as a society, do not allow technological convenience to erode our fundamental responsibility to bear witness to one another. While technology offers a powerful tool for addressing the crisis of listening, it cannot replace a renewed commitment to the deep human practice of bearing witness to suffering across all its necessary dimensions.

Supplemental Material

sj-docx-1-tva-10.1177_15248380261425937 – Supplemental material for Algorithmic Witnessing: A Narrative Review and the AI CARE Framework for Trauma Testimony

Supplemental material, sj-docx-1-tva-10.1177_15248380261425937 for Algorithmic Witnessing: A Narrative Review and the AI CARE Framework for Trauma Testimony by Dorit Hadar Shoval, Yuval Haber, Elad Refoua, Zohar Elyoseph, Karen Yirmiya and Dror Yinon in Trauma, Violence, & Abuse

Footnotes

Ethical Considerations

Ethical approval was not required for this narrative review as it is based on previously published literature.

Author Contributions

Conceptualization: D.H.S., Y.H., E.R., Z.E., K.Y., D.Y. Methodology: D.H.S., Y.H., E.R., Z.E., K.Y., D.Y. Investigation: D.H.S. Writing – Original Draft: D.H.S. Writing – Review & Editing: D.H.S., Y.H., E.R., Z.E., K.Y., D.Y.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data Availability Statement

Data sharing is not applicable to this article as no new data were created or analyzed in this study.

Declaration of Generative AI and AI-Assisted Technologies in the Writing Process

During the preparation of this work, the authors used GPT-4o and Gemini 2.5 Pro to revise some of the sentences for clarity. After using these tools, the authors reviewed and edited the content as needed and take full responsibility for the content of the publication.

Supplemental Material

Supplemental material for this article is available online.
