Abstract

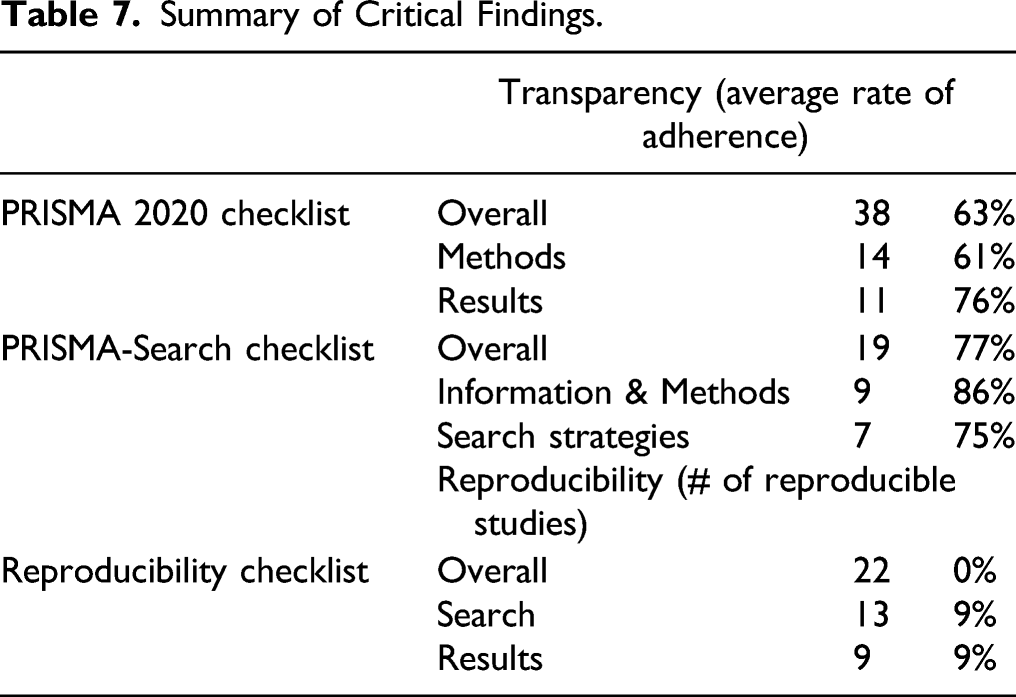

While assessments of transparent reporting practices in meta-analyses are not uncommon in the field of health sciences interventions, they are limited in the social sciences and to our knowledge are non-existent in criminology. Modified PRISMA 2020 checklists were used to assess transparency and reproducibility of reporting for a sample of 33 meta-analyses of intervention/prevention evaluations published in scholarly journals between 2016 and 2021. Results indicate that the average rate of transparent reporting practices was 63%; adherence varied considerably across studies and subscales, with low rates of adherence for some core checklist items. Overwhelmingly, studies were not reproducible in their entirety; article word count was significantly correlated with reproducibility (r = 0.4028, p < .03). These findings suggest that substantial changes to reporting practices are necessary to meet traditional meta-analytic claims of transparency and reproducibility. Study limitations include sample size, coding instruments, and coding subjectivity.

Meta-analysis is an increasingly popular quantitative research synthesis technique (see Williams et al., 2017) that involves explicit and systematic methods to identify a population of relevant studies and statistically synthesize results across studies to produce an average effect. The technique differs from a traditional narrative literature review, in which findings from a non-systematically generated set of studies are discussed and summarized qualitatively. While meta-analysis is not without its criticisms (e.g., Bailar, 1997; Berk, 2007), proponents argue for its strengths with respect to decreasing bias in study identification (e.g., Thompson & Belur, 2016), utility in summarizing large and/or disparate bodies of research (e.g., Siddaway et al., 2019; Wilson, 2001), and proficiency in addressing the oft-cited concern of “proliferation without accumulation” (e.g., Wells, 2009), in which the volume of studies on a given topic increases but definitive knowledge does not increase accordingly.

When compared with traditional forms of research synthesis such as narrative review and vote-counting, meta-analysis has several advantages (see Borenstein et al. (2009) for a more detailed overview). These include, primarily, (a) greater precision in estimating the effect in question (through converting individual study results into a common metric to enable cross-study pooling; Wilson, 2001), (b) a focus on the direction and size of each study’s treatment impact rather than on statistical significance (which recognizes that non-significant results are rarely effects of zero and that statistical significance is often a function of sample size; Wells, 2009), (c) the ability to quantitatively examine potential causes of variations in treatment effect magnitude (such as the impact of study, treatment, measurement, or participant characteristics; Williams et al., 2017), (d) increased objectivity regarding study selection and inclusion in the analysis by way of a pre-defined set of criteria (Thompson & Belur, 2016), and (e) methodological transparency which limits hidden biases and assumptions and enables replication of the research by others (Gough et al., 2017; Lipsey & Wilson, 2001; Siddaway et al., 2019). With respect to (e), methodological transparency suggests that, if provided with the complete list of inclusion/exclusion criteria and sources for study identification (i.e., bibliographic databases, grey literature sources), along with the set of methodological decisions concerning effect size calculation and study pooling, independent researchers could theoretically reproduce the findings of the original study. The purpose of this paper is to examine whether recent journal publications of meta-analyses in the field of criminology meet the expectations of transparency and reproducibility. 
Given that meta-analyses are often highly cited resources which are presumed to present the most comprehensive summative evidence in a field, ensuring that these reports are explicit about subjective research decisions, and that implementation of these decisions is verifiable by third parties, is essential.

Transparency and Reproducibility

The claim that meta-analyses are both transparent and reproducible is a common narrative in the literature when touting the strengths of meta-analysis in comparison to other forms of research synthesis. A quick foray into classic meta-analysis handbooks and renowned authors in the field highlights these claims. For example, Lipsey and Wilson (2001) write in Practical Meta-Analysis: “Good meta-analysis is conducted as a structured research technique in its own right and hence requires that each step be documented and open to scrutiny…. By making the research summarizing process explicit and systematic, the consumer can assess the author’s assumptions, procedures, evidence, and conclusions rather than take on faith that the conclusions are valid” (p. 5–6).

Likewise, as noted by Borenstein et al. (2009) in Introduction to Meta-Analysis, “…because all of the decisions are specified clearly, the mechanisms are transparent…. While the reviewers and readers may still differ on the substantive meaning of the results (as they might for a primary study), the statistical analysis provides a transparent, objective, and replicable framework for this discussion” (p. xxiii).

Transparency and reproducibility are overlapping but not synonymous constructs. “Transparency” refers to the completeness of reporting in a given meta-analysis document, such that all methodological elements, decision points, findings, and conclusions are presented in full. Meta-analysis requires an elaborate sequence of steps; not surprisingly, studies vary in their degree of transparency in reporting of processes and decisions concerning the literature search, inclusion criteria for candidate studies, and methods for computing effect sizes and quantitatively pooling the results. Further, despite the systematized processes in literature searches and cross-study pooling that are inherent to meta-analysis, syntheses range in overall quality.

“Reproducibility” refers to whether the results of a given meta-analysis could be reproduced in their entirety based on the details presented in the original report (see Lakens et al., 2016). While many elements of transparency are also required for reproducibility, some, such as multi-coder involvement in data extraction, implications of the findings, and financial support for the study, are not. On the other hand, reproducibility requires a level of information not traditionally expected when it comes to transparency, such as study-level detail on effect size computation and related decisions on sample size if the primary report is not clear. As per Williams et al. (2017), “With so many potential sources of variance across these decisions, it is easy to imagine investigators coming to conclusions that differ, at least slightly” (p. 269). Reproducibility is possible only if meta-analyses present all decision and data points in full.

Why do Transparency and Reproducibility Matter?

As meta-analyses purportedly represent the summative state of the current literature on a given topic, they are often widely read, influential documents (Lakens et al., 2017; Polanin et al., 2020). While transparency in all forms of research is important, it is even more indispensable for meta-analysis. Critics contend that given the large series of subjective decision-making steps involved in such syntheses, meta-analyses may produce results that are misleading at best and erroneous at worst (Ferguson & Kilburn, 2010; Ioannidis, 2016). Further, obscurity concerning methodological processes has been raised as a validity concern (Gotzsche et al., 2007; Jones et al., 2005; Lakens et al., 2017). For example, a meta-review by Ford et al. (2009) reported a 100% error rate across eight systematic reviews of interventions to treat irritable bowel syndrome; errors included the use of ineligible trials according to the stated review inclusion criteria, the omission of trials that should have been included (according to stated inclusion criteria), errors in data extraction, and errors in pooled treatment impact calculation. Given the critical importance of the consolidation of existing literature to our understanding of current knowledge, as well as the “replication crisis” often lamented in the field (e.g., Losel, 2018; Pridemore et al., 2018), the production of valid and robust meta-analyses is imperative.

Importantly, we note that the issue of transparent and reproducible reporting in meta-analysis is distinct from the issue of meta-analysis methodological quality. While in some cases, the two may be linked—that is, a low-quality study may be characterized by low-quality reporting (e.g., Tunis et al., 2013), it is certainly possible for a high-quality study to display a lack of transparency in its reporting of methods and findings. Similarly, a low-quality study suffering from methodological concerns may adhere to high standards of reporting. Systematic review and meta-analysis involve a series of sequential steps in which subjective decision-making is introduced repeatedly; different analysts often make different choices at each decision point and whether a given study is “high” versus “low” quality with respect to some of these decisions is up for debate. Far less debatable is the fact that high-quality, transparent reporting in meta-analysis allows for third party reviewers and readers to ascertain study quality themselves.

Lakens et al. (2016) list additional benefits to reproducibility, including the potential for independent analysts to subsequently adjust study inclusion criteria or methods of effect size calculation and conduct new analyses. These new analyses may result in different conclusions than the first synthesis, similar to the frequent finding in primary study replication wherein the results of the original study are not supported (see, for example, Derry et al. (2006) on the null impacts of acupuncture once inclusion criteria for the synthesis are limited to randomized, double-blind trials). Another benefit to complete transparency in reporting is the increased ease for future meta-analytic updates as new empirical research on the topic is published, or as new analytic techniques are developed which may be utilized to increase the validity of effect size estimation or study aggregation (Lakens et al., 2016; Polanin et al., 2020).

Reporting Guidelines for Meta-Analyses

Reporting guidelines for systematic reviews and meta-analyses are not new; multiple frameworks exist, such as the MARS (Meta-Analysis Reporting Standards; Cooper, 2010) and the Campbell Collaboration’s MEC2IR reporting standards (The Methods Group of the Campbell Collaboration, 2019). Other frameworks focus on assessing the methodological quality of systematic reviews and meta-analyses, most notably AMSTAR (A MeaSurement Tool to Assess systematic Reviews; Shea et al., 2017). With respect to reporting guidelines, arguably the most widely used checklist is the Preferred Reporting Items for Systematic Reviews and Meta-Analyses (PRISMA) statement (Page et al., 2021a, 2021b). The PRISMA statement was designed to be used by authors of systematic reviews and meta-analyses to improve reporting, and by journal peer reviewers and editors as a tool for critical appraisal of the reporting of systematic reviews.

The PRISMA 2020 statement and associated checklists were released in March 2021 as an update to the previous guidelines published in 2009 (available at http://www.prisma-statement.org/PRISMAStatement/PRISMAStatement). The updated guidelines contain few substantive differences in terms of actual content to be reported; rather, the 2020 guidelines include expanded subsections for coding of key elements noted in the 2009 guidelines. The 2009 statement was “widely endorsed and adopted, as evidenced by its co-publication in multiple journals, citation in over 60,000 reports (Scopus, August 2020), endorsement from almost 200 journals and systematic review organizations, and adoption in various disciplines” (Page et al., 2021a). The 2020 checklist contains seven sections (e.g., Introduction, Methods, Results) and a total set of 42 checklist items (Page et al., 2021a; 2021b). In addition, the PRISMA statement contains a series of “extensions”, meant to facilitate reporting guidelines for different types or aspects of meta-analyses/systematic reviews. Most relevant to the current study is the 16-item “PRISMA for Searching” checklist, which provides an expanded reporting checklist for the information sources and search strategies involved in a systematic review (Rethlefsen et al., 2021).

Prior Research Examining Transparency and Reproducibility

With respect to PRISMA reporting guidelines, research examining systematic reviews and meta-analyses finds low adherence to the guidelines overall. For example, Sun et al. (2019) examined 64 systematic reviews and meta-analyses of nursing interventions for Alzheimer’s patients by scoring studies on the PRISMA 2009 checklist. The mean PRISMA score across all studies was 19.3 out of a possible 27 points (SD = 4.17), with six items reported in less than 50% of the studies. Similarly, Peters et al. (2015) used the PRISMA checklist to rate meta-analyses of otorhinolaryngologic articles published in the top five Ear Nose Throat journals. Peters and colleagues found a median score of 54.4% on the checklist overall, with a lower adherence rate for the PRISMA-Abstracts checklist (41.7%; a separate checklist of recommendations for transparent reporting in abstracts). Other research examining PRISMA-Abstracts has reported similarly low rates of checklist compliance, including Tsou and Treadwell (2016) with 60% of items reported on average across 200 reviews, and Maticic et al. (2019) with a mean adherence rate of 42% across 244 studies.

Research on transparency and/or reproducibility in the social sciences is relatively limited and has predominantly been conducted in the field of psychology (e.g., Aytug et al., 2012; Brugha et al., 2012; Dieckmann et al., 2009). With respect to reproducibility, Lakens and a team of 14 colleagues (2017) attempted to reproduce 20 published meta-analyses in the psychological sciences. The authors noted “there was unanimous agreement across all seven teams involved in extracting data from the literature that reproducing published meta-analyses was much more difficult than we expected” (p. 8). Results suggest that 25% of the studies could not be reproduced at all due to missing data, and, of the remaining studies, there were frequently differences between the effect sizes calculated by Lakens et al. and those reported by the original authors due to lack of reporting of effect size conversion equations used, lack of clarity on sample sizes, and so forth. With respect to transparency, Hohn et al. (2020) examined reporting practices in a random sample of 345 psychological meta-analyses published between 2009 and 2014. Using 45 items from the Quality Assessment for Systematic Reviews—Revised (QUASR-R; Slaney et al., 2017), the authors compared the actual practices of studies (e.g., search methods) versus those that were reported, and identified several areas of concern. Major gaps in reporting included primary study quality (reported by 36% of the 345 reviews), type of meta-analytic model used (e.g., random effects, fixed effects; reported by 80%), and power analyses (reporting almost non-existent).

Most relevant to the current study is a recent article by Polanin et al. (2020), who examined transparency and reproducibility in published meta-analyses in the journal Psychological Bulletin over a 30-year period (1990–2020). Based on the PRISMA checklist, Polanin and colleagues developed a 34-item checklist to represent important components of transparency and reproducibility, then scored 150 studies on the checklist. The authors found relatively weak adherence overall; just over half (55%) of all checklist items were reported in each study. Some examples of Polanin et al.’s specific findings are that only 58% of authors reported their source of funding, 2% reported using a systematic review protocol, 64% defined the criteria for study population eligibility, 95% listed eligible outcome measures, 77% specified the search terms used in databases, 48% reported dates of searches, 77% reported on data transformations, and 57% mentioned publication bias.

The Current Study

While prior research in health sciences and psychology suggests that the transparency and reproducibility of meta-analyses are low, to date no study has examined meta-analyses in criminology. The goal of the current study is to conduct a preliminary inquiry to examine whether criminological meta-analyses published in scholarly journals meet the traditional claims of transparency and reproducibility, by (1) scoring the extent to which best practices in transparent reporting are followed based on the PRISMA 2020 checklist and PRISMA-Search checklist, and (2) using a PRISMA-based Reproducibility checklist to assess overall study reproducibility and identify any common barriers. Importantly, we note that the PRISMA 2020 guidelines were not in existence when any of the studies in the current sample were published. We underscore that the purpose of the current study is not to rate meta-analysts on how well they adhered to reporting guidelines that were not yet established, nor is it to criticize authors for failing to observe existing guidelines. Rather, we examine how closely recent meta-analyses in criminology adhere to one example of state-of-the-art reporting guidelines, and identify where areas for improvement exist. We reiterate that whether a meta-analysis is reported in a manner that is highly transparent, and/or in a manner that would allow reproduction by others, should not be confused with a measure of the quality of the meta-analysis or its methodology (e.g., see the AMSTAR 2 checklist by Shea et al., 2017); it is a reflection of the manner in which it was reported.

Methods

Data

To locate recently published meta-analyses of intervention/prevention evaluations in the field of criminology, we searched Criminal Justice Abstracts (EBSCOhost) with date limiters January 1, 2016 to March 10, 2021. The following terms were combined in an Abstract search: “meta analysis” AND (recidiv* OR arrest* OR charge* OR convict* OR incarcerat* OR offend* OR offense* OR offence* OR crim*).¹ Given the general difference in conceptual orientation, we limited the inclusion of studies to those presenting a meta-analysis of intervention/prevention program evaluations, as opposed to a meta-analysis of risk factors, attitudes, behaviors, actuarial assessments (e.g., the Static-99), drug treatments, and so forth. In addition, as we were interested in the transparency of reporting of meta-analyses published in scholarly journals, we limited studies to those published in peer-reviewed journals (as opposed to technical reports or Campbell Collaboration reports that can range up to 100+ pages). The nature of the planned analyses and presentation of results was at the individual study level; this was to enable a preliminary assessment of transparency and reproducibility, demonstrate study-level similarities and differences, and ascertain potential areas for improvement. We emphasize that the goal of the search was to identify a fairly small set of recent meta-analyses in the field of criminology; the search was not structured to be a systematic literature search across numerous potentially relevant databases and grey literature sources.
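The Boolean logic of the search string can be illustrated with a short sketch. This is not how EBSCOhost evaluates queries internally; it is a rough approximation that assumes the truncation symbol `*` means "any run of letters after the stem" and that the phrase "meta analysis" should also match the hyphenated spelling. The function name `matches_search` and the sample abstracts are hypothetical.

```python
import re

# Truncated stems taken from the search string; "stem*" is read here as
# "the stem followed by any further word characters" (an assumption).
OUTCOME_STEMS = [
    "recidiv", "arrest", "charge", "convict", "incarcerat",
    "offend", "offense", "offence", "crim",
]

# Assumption: the phrase may appear with a space, a hyphen, or closed up.
META_PATTERN = re.compile(r"\bmeta[\s-]?analysis\b", re.IGNORECASE)
OUTCOME_PATTERN = re.compile(
    r"\b(?:" + "|".join(OUTCOME_STEMS) + r")\w*", re.IGNORECASE
)


def matches_search(abstract: str) -> bool:
    """Return True if the abstract satisfies: "meta analysis" AND (any outcome stem)."""
    has_meta = META_PATTERN.search(abstract) is not None
    has_outcome = OUTCOME_PATTERN.search(abstract) is not None
    return has_meta and has_outcome


# Hypothetical abstracts for illustration only.
print(matches_search("A meta-analysis of programs to reduce recidivism."))  # True
print(matches_search("A meta-analysis of reading interventions."))          # False
```

A hit on this filter only marks a record as a candidate; the screening against the inclusion criteria described above is a separate, manual step.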

Instrumentation

(1) Transparency.

The PRISMA 2020 checklist and the PRISMA-Search checklist were used to assess the transparency of reporting across the sample of studies. Each checklist is described below.

PRISMA 2020

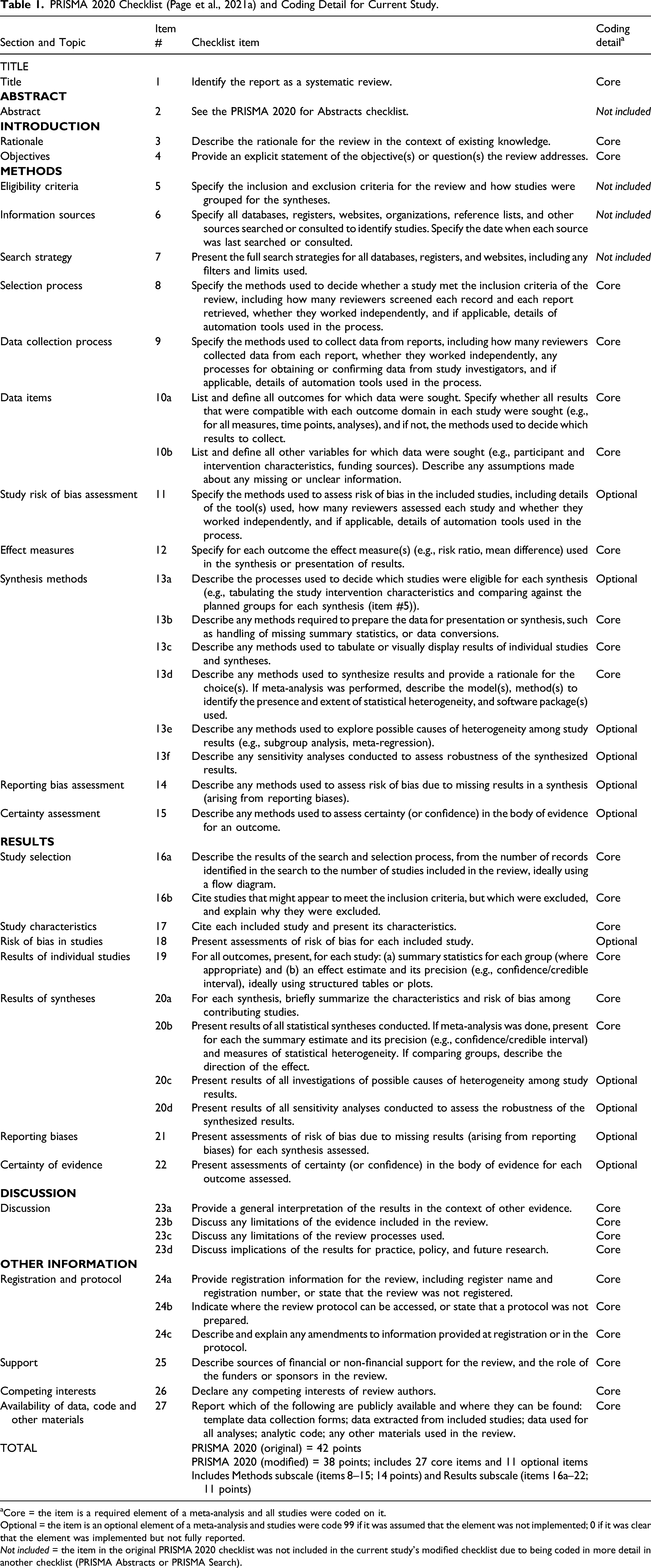

PRISMA 2020 Checklist (Page et al., 2021a) and Coding Detail for Current Study.

aCore = the item is a required element of a meta-analysis and all studies were coded on it.

Optional = the item is an optional element of a meta-analysis and studies were coded 99 if it was assumed that the element was not implemented; 0 if it was clear that the element was implemented but not fully reported.

Not included = the item in the original PRISMA 2020 checklist was not included in the current study’s modified checklist due to being coded in more detail in another checklist (PRISMA Abstracts or PRISMA Search).

Of the 38 PRISMA 2020 items, we consider 27 to be “core” reporting items for a meta-analysis, and 11 items to be “optional.” Core reporting items are those that are central to the conduct of a basic systematic review and meta-analysis (see Polanin et al. (2020) for a discussion of mandatory vs. optional criteria). For example, core items include #12: description of outcome effect measures, #16a: results of the search and selection process, and #22d: a discussion of any limitations of the evidence included in the review.

Optional reporting items are steps that may certainly improve the quality of a meta-analysis if they are implemented, but that are not indispensable to complete a basic synthesis of the literature (and in some cases may not be feasible given a small set of studies). These include item #11: specifies methods used to assess risk of bias in included studies, #13f: describes any sensitivity analyses used, #20d: presents results of heterogeneity investigations, and #22: presents results of certainty in the body of evidence for each outcome assessed. Full details on core versus optional reporting items are presented in Table 1.

PRISMA-Search

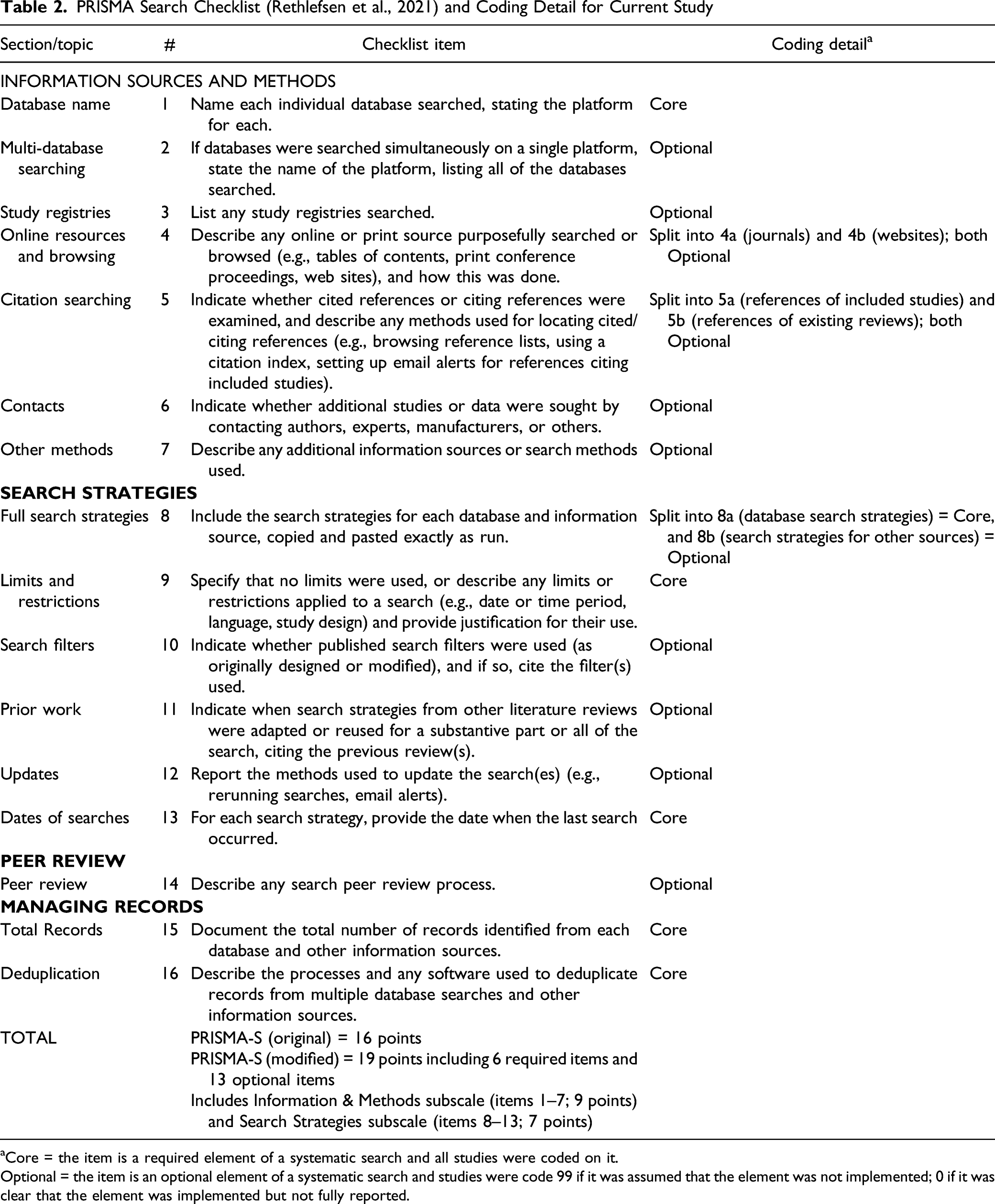

The PRISMA-Search checklist is an extension of the PRISMA 2020 statement. The checklist encompasses 16 items (e.g., #1: database names, #5: citation searching, and #8: full search strategies). To avoid issues associated with coding double-barreled items, we modified this checklist by adding subcategories to three items: we split item #4: Online resources and browsing into (a) hand-searched journals and (b) websites; item #5: Citation searching into (a) reference lists of included studies and (b) reference lists of existing reviews; and item #8: Full search strategies into (a) database search strategies and (b) search strategies for other sources.

For the modified PRISMA-Search checklist, the total possible score was 19 points. In addition, we computed sub-scores for “Information and methods” (subtotal = 9 points), and “Search strategies” (subtotal = 7 points). Both core (n = 6; e.g., #1: name each individual database searched; #9: limits and restrictions) and optional (n = 13; e.g., #3: list any study registries searched; #6: indicate whether studies were sought by contacting authors or experts) reporting items were included. See Table 2 for the complete checklist and modifications made.

PRISMA Search Checklist (Rethlefsen et al., 2021) and Coding Detail for Current Study.

aCore = the item is a required element of a systematic search and all studies were coded on it.

Optional = the item is an optional element of a systematic search and studies were coded 99 if it was assumed that the element was not implemented; 0 if it was clear that the element was implemented but not fully reported.

(2) Reproducibility.

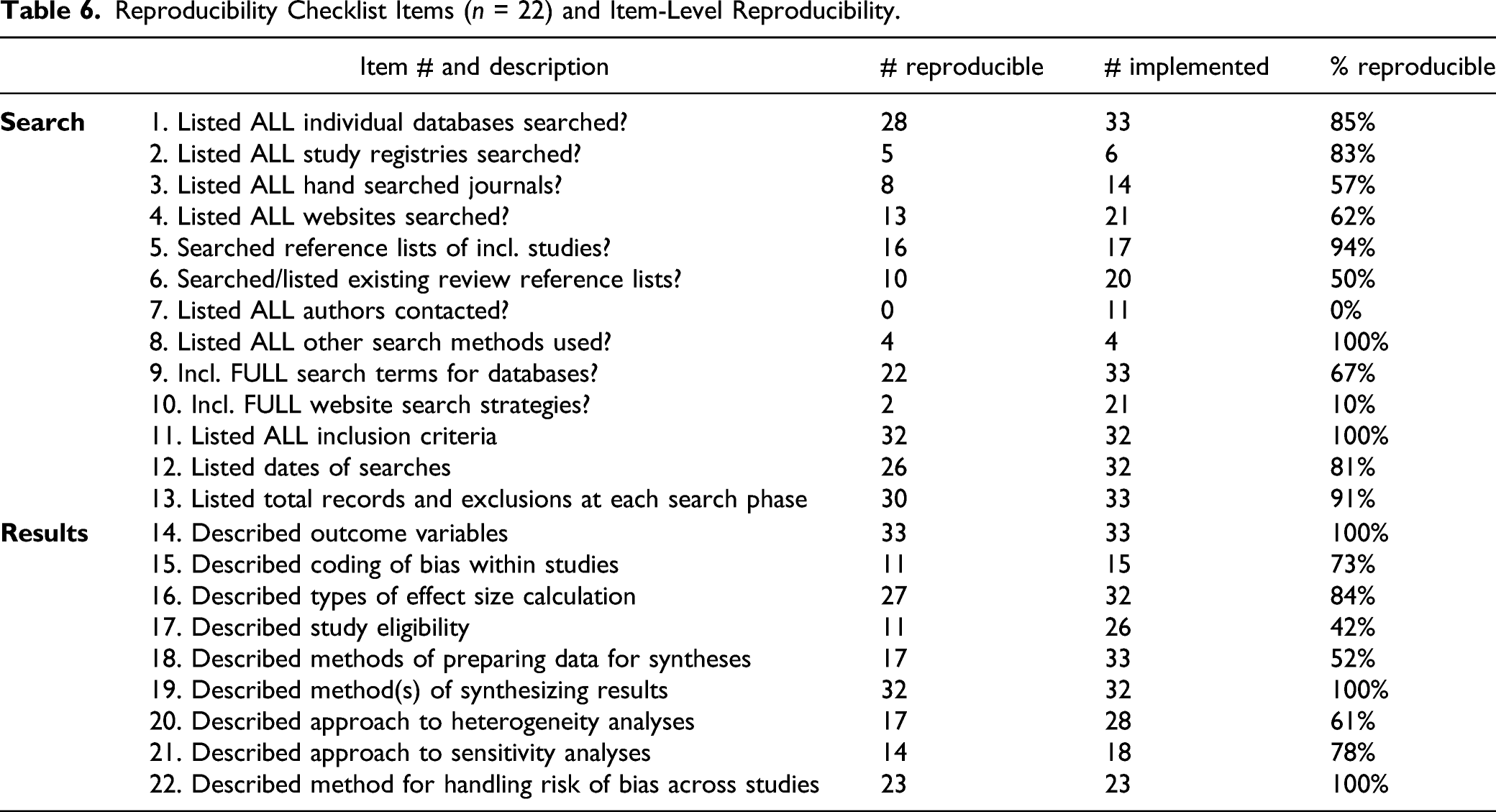

A checklist to assess study reproducibility was developed based on the PRISMA 2020 checklists. The Reproducibility checklist is intended to assess whether the meta-analysis could be reproduced using the information presented in the article; this checklist is notably shorter than the full 38-item PRISMA 2020 checklist because, as previously noted, not all elements of transparency are necessary for study reproduction. Importantly, we did not actually attempt to replicate any of the searches, effect size calculations, or meta-analyses, as this task was beyond the scope of the paper and we were not focused on attempting to validate the procedures or results of the included studies. Rather, we reviewed each study and, based on our prior experience in coding for and conducting multiple meta-analyses and our familiarity with the necessary level of detail for various data points, we determined whether sufficient information was reported to enable us to reproduce the study element. The 22-item checklist contains two sections (Search; n = 13 items and Results; n = 9 items); see Table 6.

Coding

All coding was completed by the two study authors. The first author coded all studies in the sample, and the second author repeated the same process in order to validate the initial codes. Discrepancies and disagreements between the first and second round of coding were discussed and multiple iterations of coding were conducted in order to reach 100% consensus on codes across all studies.

(1) Transparency.

Each study was coded on all PRISMA checklist items using a scoring system in which 0 = implemented but not reported at all, 0.5 = implemented but partially reported, and 1 = implemented and adequately reported. As it is largely uncommon for authors to explicitly report that they did not implement a step in a meta-analysis, for the checklist items that we designated as optional we coded 99 if the item was not reported. In other words, in many cases we assumed that a study did not report on a PRISMA checklist item because the authors did not implement the item.³ This coding is distinct from situations involving a clear reporting omission from an item such as study eligibility criteria, which would have been coded as 0 or 0.5.

(2) Reproducibility.

Scoring options were 0/1 with respect to whether the article reported the checklist item in sufficient detail for it to be reproduced by third party authors.⁴ Again, the code 99 denotes that there was no evidence an optional component was implemented by the study authors.

Analytic Approach

We assessed the transparency of reporting for each study by summing the scores across all items and computing the adherence rate (i.e., sum score/total possible items). Rates were computed for the PRISMA 2020 and PRISMA-Search checklist scores, as well as the two associated subscales in each. With respect to the calculation of adherence rate, the denominator of each study (i.e., total possible items) was reduced by omitting the “99” codes.⁵ As such, the rate of adherence is not impacted by unreported, optional checklist items.
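The adherence calculation can be sketched in a few lines. The function name `adherence_rate` and the example codes are hypothetical and are not taken from the study data; the sketch only assumes the coding scheme described above (0, 0.5, 1, and 99 for optional items presumed not implemented).

```python
NOT_IMPLEMENTED = 99  # optional item assumed not to have been implemented


def adherence_rate(codes):
    """Sum score divided by the number of applicable items.

    Items coded 99 are dropped from both the numerator and the
    denominator, so unreported optional items do not lower the rate.
    """
    applicable = [c for c in codes if c != NOT_IMPLEMENTED]
    if not applicable:
        raise ValueError("no applicable items to score")
    return sum(applicable) / len(applicable)


# Hypothetical study coded on six checklist items (illustration only).
example_codes = [1, 0.5, 0, 1, 99, 99]
rate = adherence_rate(example_codes)  # (1 + 0.5 + 0 + 1) / 4
print(f"{rate:.1%}")                  # 62.5%
```

Under this scheme, a study that implements few optional steps but reports each of them fully can score higher than a study that implements many steps and reports them partially, which is why the rate reflects reporting rather than methodological quality.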

Similarly, we assessed the reproducibility of each study by determining whether sufficient information was presented in the report to enable full reproduction of each reported component. If yes, the item was scored as 1; if no, the item was scored as 0. Any 0 indicates that the study is not reproducible at that particular step. The numbers of 1s and 0s for each study were tallied to give an overall indication of which components were the most and least reproducible.
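A minimal sketch of this tally follows, assuming each study is stored as a list of 0/1/99 codes in a fixed checklist order. The study labels, the four-item checklist, and all function names are hypothetical and chosen only to keep the illustration short.

```python
NOT_IMPLEMENTED = 99  # optional component with no evidence of implementation


def non_reproducible_count(codes):
    """Number of implemented items that were not reported in enough detail."""
    return sum(1 for c in codes if c == 0)


def is_fully_reproducible(codes):
    """A study is reproducible in its entirety only if no item is coded 0."""
    return non_reproducible_count(codes) == 0


def item_reproducibility(studies):
    """For each checklist position, the share of implementing studies coded 1.

    Returns None for an item that no study implemented.
    """
    n_items = len(next(iter(studies.values())))
    rates = []
    for i in range(n_items):
        scored = [codes[i] for codes in studies.values()
                  if codes[i] != NOT_IMPLEMENTED]
        rates.append(sum(scored) / len(scored) if scored else None)
    return rates


# Hypothetical codes for three studies on a four-item checklist.
studies = {
    "study_a": [1, 1, 99, 0],
    "study_b": [1, 0, 1, 0],
    "study_c": [1, 1, 99, 1],
}

for name, codes in studies.items():
    print(name, non_reproducible_count(codes), is_fully_reproducible(codes))
print(item_reproducibility(studies))
```

The per-study count corresponds to the number of steps at which reproduction would fail, while the per-item rate shows which components are most often under-reported.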

Last, to assess the potential impact of journal page limitations on transparency and reproducibility, we examined the correlation between checklist scores and article length. The word count for each article’s .pdf file was calculated using the “counting characters” extension in Google Chrome.⁶
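As an illustration of this step, the sketch below computes a Pearson correlation from paired values and converts a correlation into a t statistic. The paired word counts and scores are invented, and treating the reported r = 0.4028 as based on all 33 studies with a conventional t test on n − 2 degrees of freedom is an assumption, since the text does not state the test used.

```python
import math


def pearson_r(x, y):
    """Pearson product-moment correlation between two equal-length sequences."""
    n = len(x)
    if n != len(y) or n < 3:
        raise ValueError("need two sequences of equal length >= 3")
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    ss_x = sum((a - mean_x) ** 2 for a in x)
    ss_y = sum((b - mean_y) ** 2 for b in y)
    return cov / math.sqrt(ss_x * ss_y)


def t_statistic(r, n):
    """t value for testing r against zero, with n - 2 degrees of freedom."""
    return r * math.sqrt(n - 2) / math.sqrt(1 - r ** 2)


# Invented word counts and checklist scores (illustration only).
word_counts = [6000, 8000, 10000, 12000]
scores = [0.5, 0.6, 0.7, 0.8]
print(round(pearson_r(word_counts, scores), 4))

# Assumption: the reported correlation used all 33 studies.
print(round(t_statistic(0.4028, 33), 2))
```

Under these assumptions the reported correlation corresponds to a t value of roughly 2.45 on 31 degrees of freedom, which is broadly consistent with the stated p < .03.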

Results

Search Results

The search resulted in 145 hits, of which 33 were meta-analyses of intervention/prevention programs published from January 2016 to February 2021 and were selected for inclusion. Almost all of the meta-analyses were published in criminology journals such as Aggression & Violent Behavior, Criminal Justice & Behavior, and Journal of Experimental Criminology (n = 31). One was published in Psychiatry, Psychology, & Law, and one was published in Addiction. The types of interventions examined were diverse; for example, crisis intervention teams, music therapy in correctional settings, protection orders for domestic violence, and electronic monitoring. The outcomes examined were primarily recidivism and crime; some studies measured alternative outcomes such as psychological functioning, educational attainment, and police officer use of force. All included studies are denoted with an asterisk in the References section.

Transparency

PRISMA 2020 Checklist

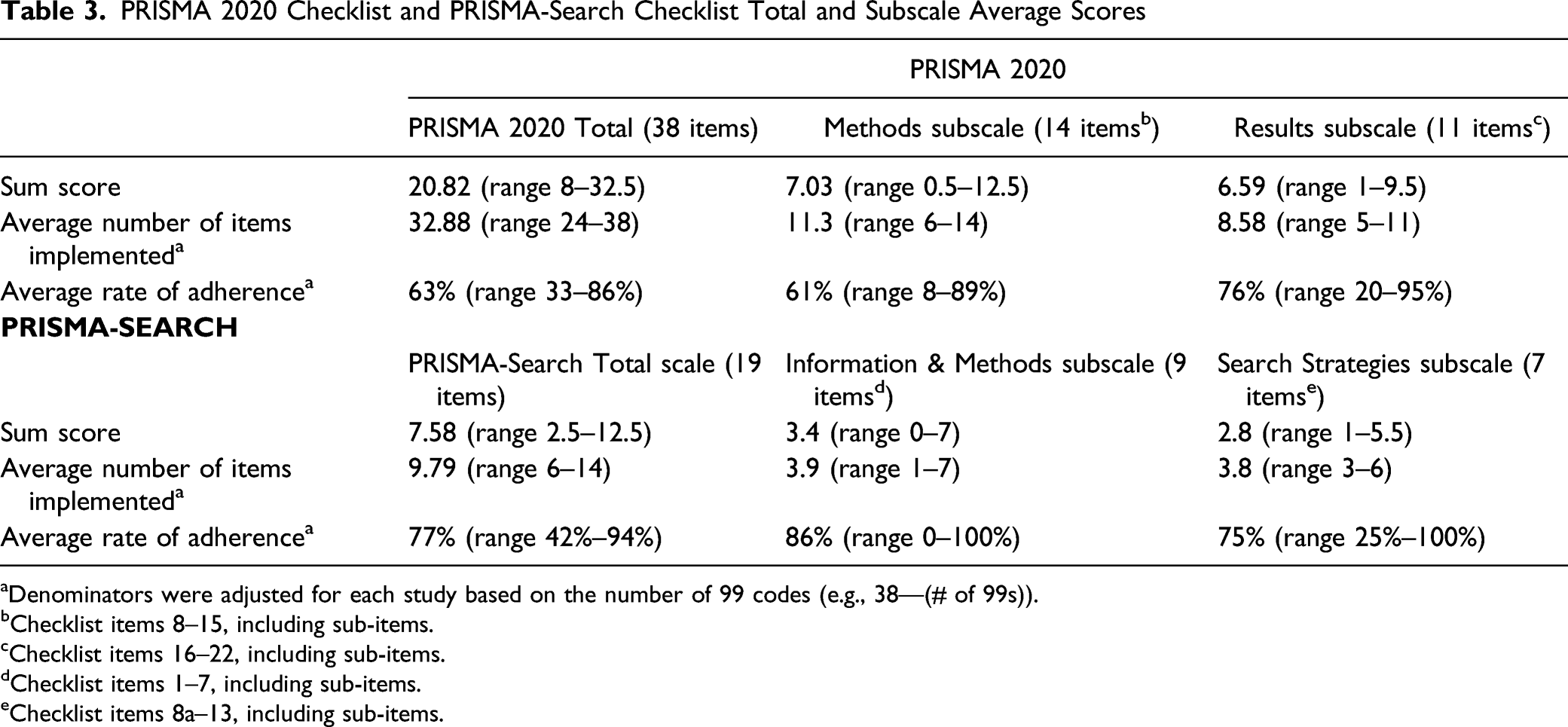

PRISMA 2020 Checklist and PRISMA-Search Checklist Total and Subscale Average Scores

aDenominators were adjusted for each study based on the number of 99 codes (e.g., 38 − (# of 99s)).

bChecklist items 8–15, including sub-items.

cChecklist items 16–22, including sub-items.

dChecklist items 1–7, including sub-items.

eChecklist items 8a–13, including sub-items.

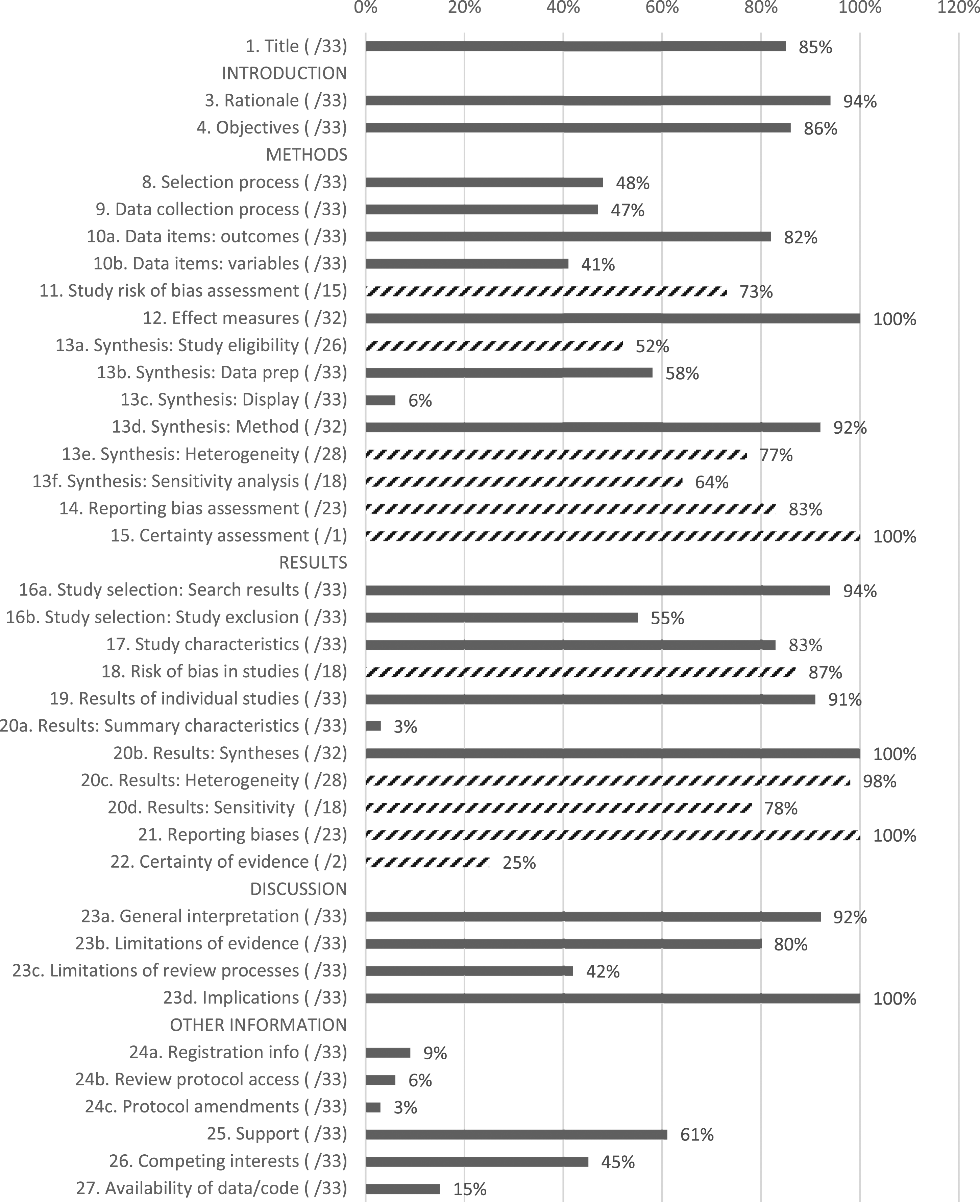

Figure 1 shows the item adherence scores across the 38 PRISMA 2020 items. With respect to the 27 “core” items, while the majority of studies received high scores on adherence to items such as study rationale (94%), objectives (86%), type of effect measures used (100%), and type of results synthesis (92%), other items had substantially lower rates of reporting. In particular, few studies described their approach to displaying results (6%), presented summary characteristics of each pooled analysis (3%), mentioned whether or not the study had a registered protocol (9%), or mentioned the availability of data/code (15%).

PRISMA 2020 Average Item Adherence Scoresa,b,c.

aItem descriptions available in Table 1; as noted in the Methods section, PRISMA 2020 items #2, #5, #6, and #7 were not included.

bNumbers in parentheses represent the number of studies reporting on the item; n = 33 for all core reporting items, and variable counts for the optional items. For example, 15 studies implemented checklist item #11.

cDiagonal bar lines represent optional reporting items.

Figure 1 differentiates the 11 “optional” PRISMA 2020 checklist items with diagonal lines in the bar chart. In general, studies that implemented optional components tended to report adequately on these components. For example, of the 15 studies that implemented a study-level risk of bias assessment, 73% adequately described the methodological approach. Similarly, of the 18 studies that implemented a sensitivity analysis, 64% adequately reported the methodological approach and 78% adequately reported the analytic results.

PRISMA-Search Checklist

Results for the PRISMA-Search checklist indicate that none of the 33 studies implemented all 19 items on the checklist. As shown in Table 3, the average sum score was 7.58 (range 2.5–12.5 items), and the average number of items implemented was 9.79 (range 6–14). Across the 33 studies, the average transparency of reporting was 77% (range 42%–94%). Subscale scores for the PRISMA-Search checklist are also shown in Table 3. For the “Information & Methods” subscale, the average rate of adherence was 86% with a range of 0%–100%, while the average adherence score for “Search Strategies” was 75%; range 25%–100%.

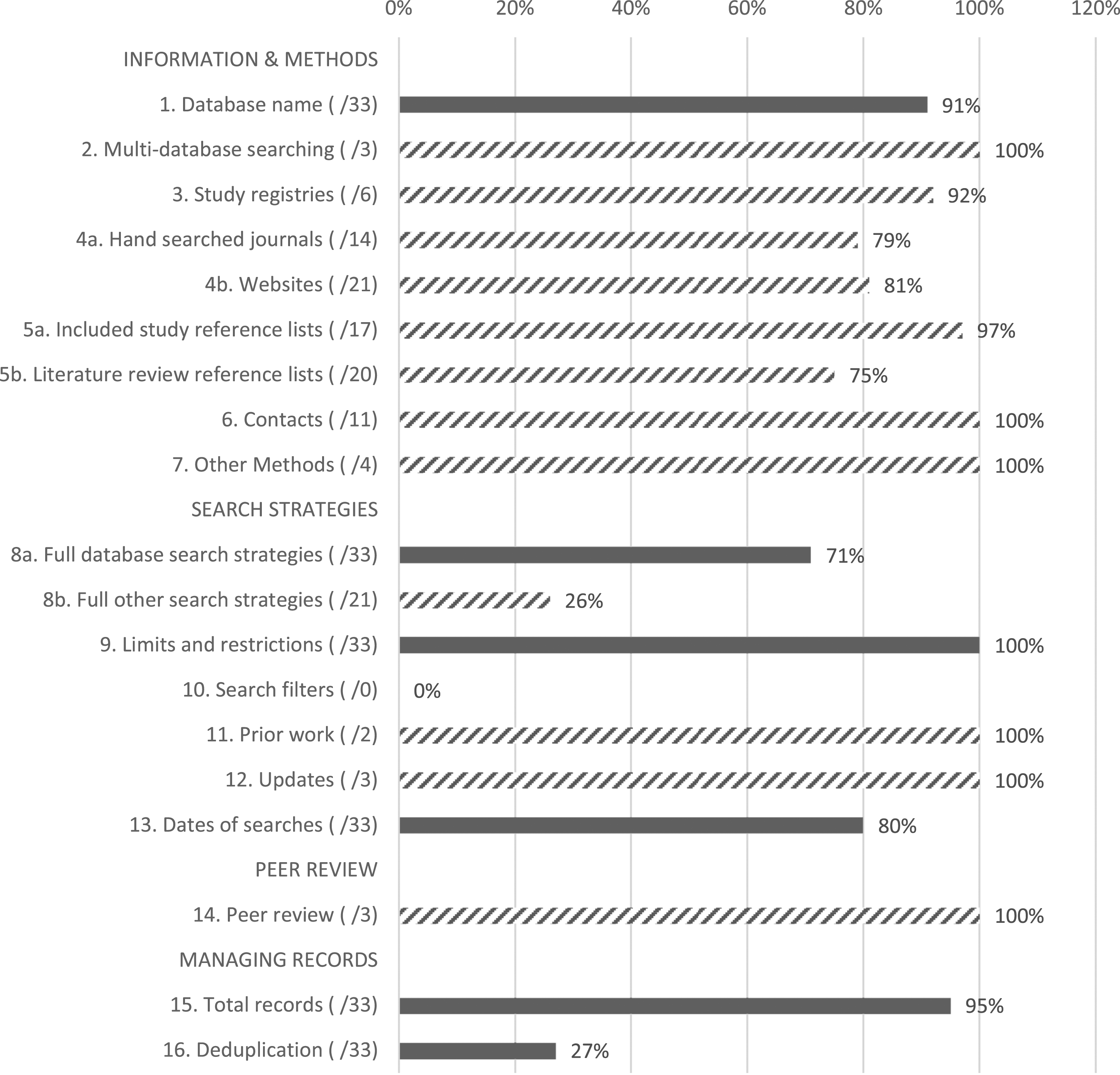

Figure 2 demonstrates the average adherence of reporting for each of the 19 individual items on the PRISMA-Search checklist. The majority of the items were optional, and are indicated by diagonal lines in the bar chart. For the core reporting items, adherence was highest for item #9: limits and restrictions for included studies (100%), item #15: total number of records documented (95%), and item #1: database names listed (91%). Reporting was somewhat lower for item #13: dates of each search (80%), item #8a: inclusion of full database search strategies (71%), and item #16: description of search records deduplication method (27%). For the optional checklist items, reporting adherence rates were lowest for search filters (item #10; 0%), and listing complete search strategies from non-database sources (item #8b; 26%). In general, high rates of adherence were shown for the optional checklist items, although many items were not implemented by the majority of studies in the set. For example, only six studies reported searching study registries (item #3), four studies reported other methods of searching for literature (item #7), and three studies noted using peer review for their search strategy (#14).

PRISMA-Search Average Item Adherence Scoresa,b.

aNumbers in parentheses represent the number of studies reporting on the item; n = 33 for all core reporting items, and variable counts for the optional items.

bDiagonal bar lines represent optional reporting items.

Reproducibility

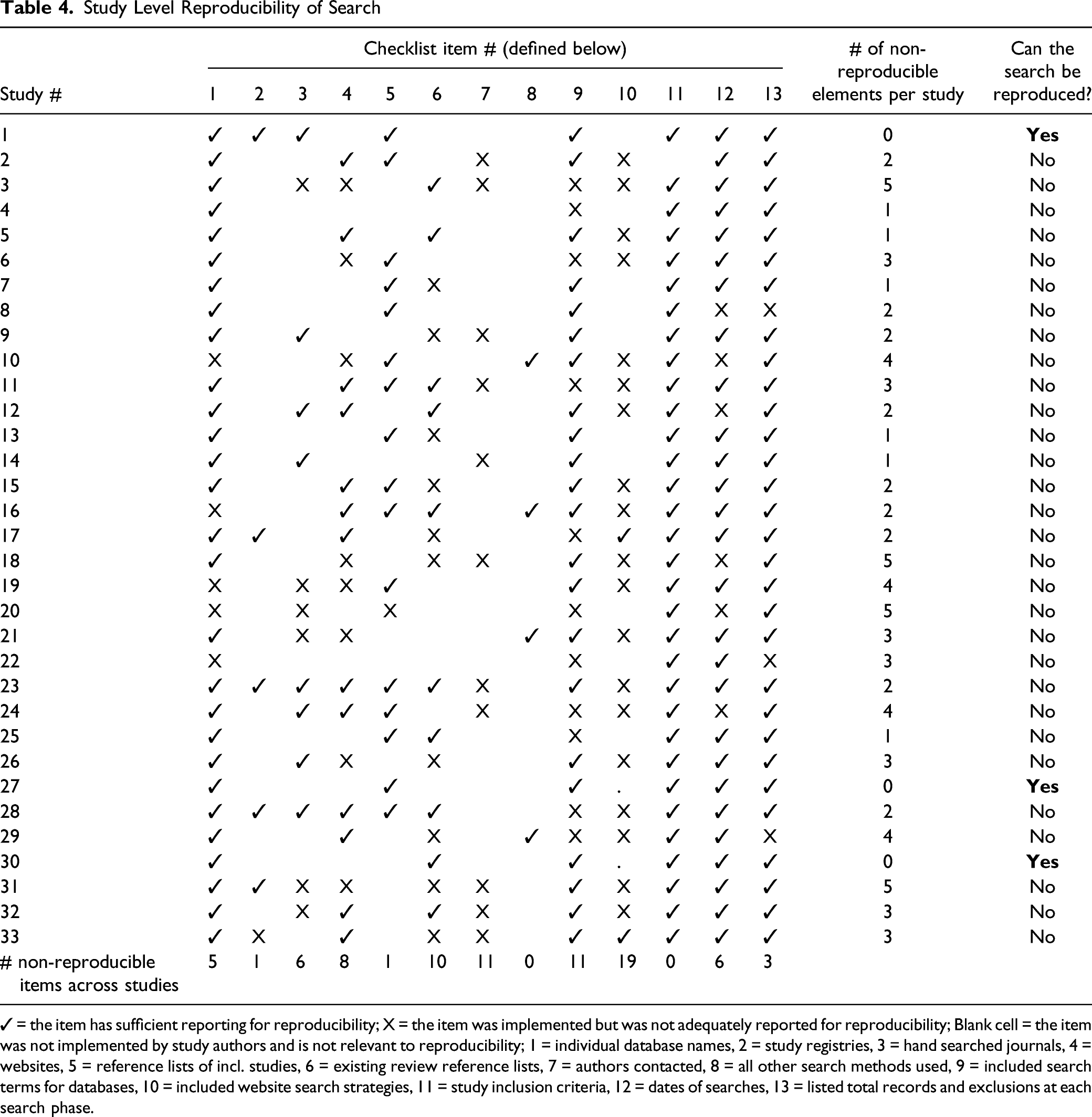

Study Level Reproducibility of Search

✓ = the item has sufficient reporting for reproducibility; X = the item was implemented but was not adequately reported for reproducibility; Blank cell = the item was not implemented by study authors and is not relevant to reproducibility; 1 = individual database names, 2 = study registries, 3 = hand searched journals, 4 = websites, 5 = reference lists of incl. studies, 6 = existing review reference lists, 7 = authors contacted, 8 = all other search methods used, 9 = included search terms for databases, 10 = included website search strategies, 11 = study inclusion criteria, 12 = dates of searches, 13 = listed total records and exclusions at each search phase.

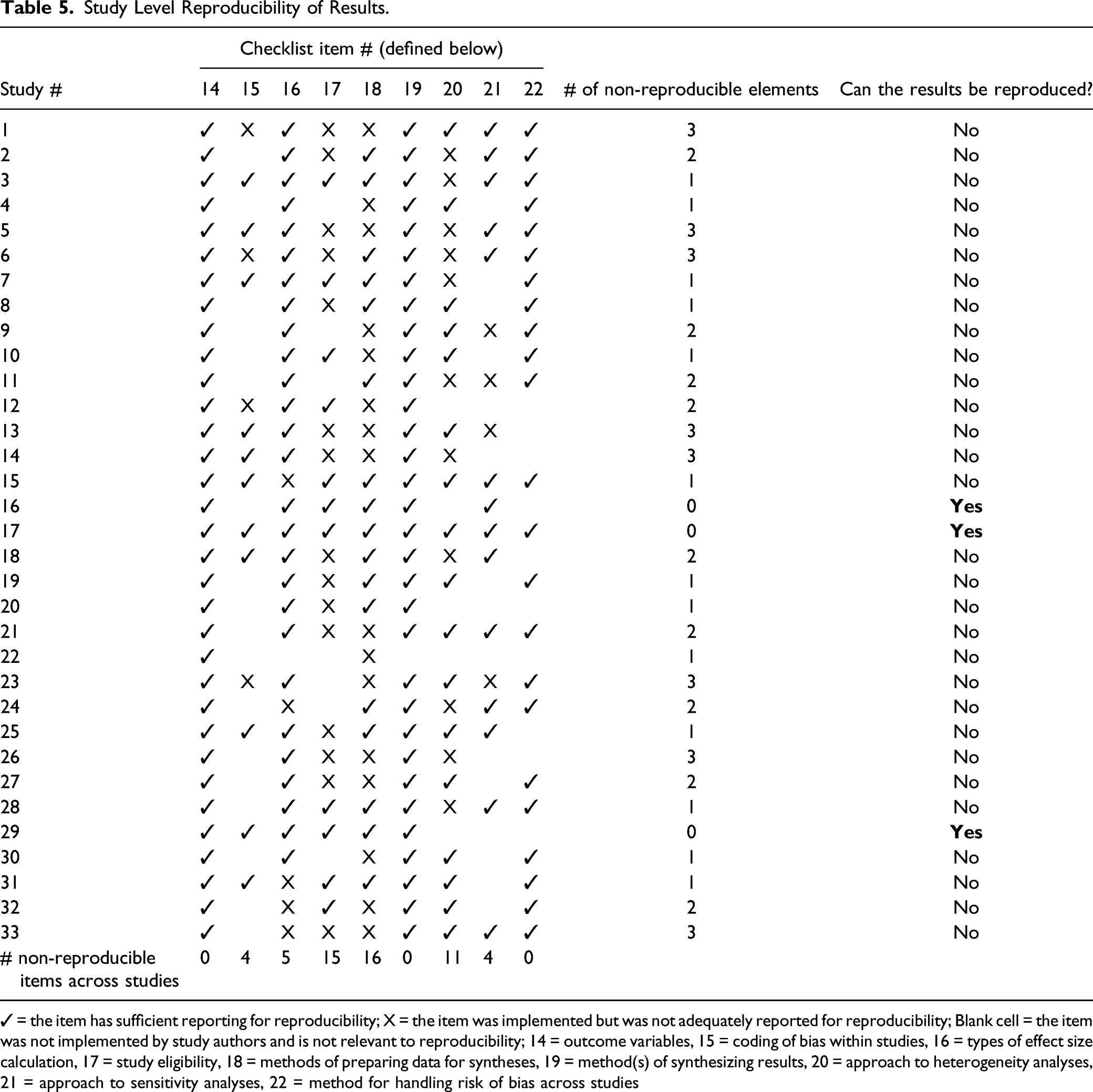

Study Level Reproducibility of Results.

✓ = the item has sufficient reporting for reproducibility; X = the item was implemented but was not adequately reported for reproducibility; Blank cell = the item was not implemented by study authors and is not relevant to reproducibility; 14 = outcome variables, 15 = coding of bias within studies, 16 = types of effect size calculation, 17 = study eligibility, 18 = methods of preparing data for syntheses, 19 = method(s) of synthesizing results, 20 = approach to heterogeneity analyses, 21 = approach to sensitivity analyses, 22 = method for handling risk of bias across studies

Taken together, while three studies presented a reproducible search strategy and three other studies provided reproducible meta-analytic results, none of the 33 meta-analyses in our sample would be reproducible in their entirety. The total number of non-reproducible elements per study (i.e., Search and Results combined) ranged from 1 to 7, with the average study stopping short of full reproducibility by approximately 4 items (M = 4.12, SD = 1.57).

Reproducibility Checklist Items (n = 22) and Item-Level Reproducibility.

For example, study #10 did not report on four of the nine search items implemented. While the authors listed three examples of the 18 databases that they searched (including Criminal Justice Abstracts, National Criminal Justice Reference Service, and Web of Science), this is insufficient for third party reproduction based on the published article alone. Similarly, the authors present examples of websites that they searched but do not provide a complete list; further, they do not specify the search terms used in website searches, and do not report the specific dates of all searches implemented.
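A per-study tally of this kind can be sketched as follows, using the three cell states from the table notes (sufficiently reported, implemented but insufficiently reported, not implemented). The status labels, function names, and the example record are hypothetical; the record is only loosely modeled on a study with nine search items implemented and four flagged, and its individual entries are invented for illustration rather than taken from the tables.

```python
from typing import Dict, List, Optional

# Cell states follow the table notes: "ok" = sufficiently reported,
# "x" = implemented but not adequately reported, None = not implemented.
Status = Optional[str]


def non_reproducible_items(study: Dict[int, Status]) -> List[int]:
    """Item numbers that were implemented but insufficiently reported."""
    return sorted(item for item, status in study.items() if status == "x")


def fully_reproducible(study: Dict[int, Status]) -> bool:
    """True only if no implemented item is flagged as insufficient."""
    return not non_reproducible_items(study)


# Hypothetical search-section record (items 1-13): nine implemented, four flagged.
search_items: Dict[int, Status] = {
    1: "x", 2: None, 3: None, 4: "x", 5: "ok", 6: "ok", 7: None,
    8: None, 9: "ok", 10: "x", 11: "ok", 12: "x", 13: "ok",
}
print(non_reproducible_items(search_items))  # [1, 4, 10, 12]
print(fully_reproducible(search_items))      # False
```

Blank cells are ignored on purpose: an item a study never implemented cannot block reproduction, which matches how the table notes treat those cells.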

The most common roadblocks for reproducibility of the meta-analytic results were item #18 (“describes data preparation”), which only 16 of 33 studies (48%) reported sufficiently; item #17 (“describes study eligibility for different analyses”), which 15 of 26 studies (58%) did not report sufficiently; and item #20 (“describes approach to any heterogeneity analysis”), for which 11 of 28 studies (39%) did not report the methodology in a way that would enable reproduction of results.

Article Length, Transparency, and Reproducibility

Last, given that reporting transparency and reproducibility may be in part a function of article length, we examined correlations between article word count, PRISMA 2020 score, and reproducibility score. With respect to word count, the 33 articles ranged from 6,232 to 19,714 words, with a mean of 11,543 words (SD = 3,395). As noted previously, the mean PRISMA 2020 score was 63% (SD = 11%), and the mean reproducibility score was 73% (SD = 11%).

As anticipated, article word count was significantly correlated with study reproducibility score, with a moderately sized Pearson r = 0.4028 (p < .03). Longer articles were more likely to score highly with respect to reproducibility. Conversely, PRISMA 2020 scores were not correlated with article word count, suggesting that reporting adherence/transparency is not directly related to length of the article or, potentially, any journal page length maximums.
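As a rough check on the reported significance level, the correlation can be converted to a t statistic with n − 2 degrees of freedom. The sketch below assumes the conventional two-tailed t test for a Pearson correlation, which the text does not explicitly state, and uses only the reported r = 0.4028 and n = 33. The helper names are hypothetical, and the p-value is obtained by simple numerical integration rather than a statistics library.

```python
import math


def r_to_t(r: float, n: int) -> float:
    """t statistic for testing a Pearson r against zero (n - 2 degrees of freedom)."""
    return r * math.sqrt((n - 2) / (1.0 - r * r))


def t_pdf(x: float, df: int) -> float:
    """Density of Student's t distribution with df degrees of freedom."""
    log_norm = (math.lgamma((df + 1) / 2) - math.lgamma(df / 2)
                - 0.5 * math.log(df * math.pi))
    return math.exp(log_norm - (df + 1) / 2 * math.log1p(x * x / df))


def two_tailed_p(t: float, df: int, steps: int = 2000) -> float:
    """Two-tailed p-value via Simpson's rule on the area between 0 and |t|."""
    upper = abs(t)
    h = upper / steps  # steps must be even for Simpson's rule
    area = t_pdf(0.0, df) + t_pdf(upper, df)
    for i in range(1, steps):
        area += (4 if i % 2 else 2) * t_pdf(i * h, df)
    area *= h / 3
    return 1.0 - 2.0 * area


t_stat = r_to_t(0.4028, 33)
p_value = two_tailed_p(t_stat, 33 - 2)
print(f"t = {t_stat:.2f}, p < .03: {p_value < 0.03}")
```

Under these assumptions the t statistic is about 2.45 on 31 degrees of freedom, which is consistent with the reported p < .03.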

Discussion

A central premise of meta-analysis is that reporting is transparent and findings are reproducible (Borenstein et al., 2009; Lipsey & Wilson, 2001). Indeed, that studies are objectively and systematically selected and synthesized is arguably one of the method’s marquee features, particularly in comparison to traditional narrative research syntheses. Yet, prior studies indicate that methodological transparency in meta-analyses is often lacking, rendering the reproduction of results near impossible (Hohn et al., 2020; Lakens et al., 2017). As research on transparency and/or reproducibility of meta-analyses in the social sciences is limited and to our knowledge is non-existent within criminology, the current study sought to examine whether meta-analyses in the field of criminology meet these traditional claims.

Our systematic (albeit purposely limited in scope) search of the literature yielded a set of 33 meta-analyses of intervention programs published in scholarly journals between January 2016 and February 2021. Modified versions of the PRISMA 2020 checklist (38 items) and the PRISMA-Search checklist (19 items) were used to assess transparency of reporting and adherence to PRISMA reporting guidelines. Our findings indicate that the average rate of transparency within the sample was moderate (63%); however, adherence varied considerably across studies and across subscales. In particular, information in the Results sections of meta-analyses was reported more adequately than information in the Methods sections (76% vs. 61% adherence). Additionally, findings from the PRISMA-Search checklist indicate a fairly high rate of adherence to the reporting of search methods overall (77%): average adherence was 86% for “Information & Methods” items, such as the full list of databases and websites used, and 75% for “Search Strategies” items, such as search terms and inclusion criteria. Overall, while “core” and “optional” checklist items were both generally reported with sufficient detail, some core items had low rates of adherence. This finding is concerning because the core checklist items are those most central to conducting a basic systematic review and meta-analysis, and they represent information that should be reported with the utmost transparency. Failure to report core items clearly and sufficiently not only creates potential gaps in the transparency of decision-making; such opaque reporting may also considerably undermine the perceived rigor and validity of the findings and diminish the overall credibility of study conclusions.

With respect to the core items in PRISMA 2020, few studies in this sample of meta-analyses provided information concerning review protocol registration and protocol (item #24a, 9%), and few noted the availability of data, code, or other materials (item #27, 15%). While we do not contest the value of a pre-registered review protocol and the utility of having data and code made publicly available, these components are not currently standard in the field of criminology, and lack of reporting on these items may be more a reflection of norms in criminology (versus health sciences research) than of a lack of reporting transparency. A difference in norms is also evident for some infrequently reported items in the PRISMA-S checklist, including item #3: study registries (only six studies reported using registries), item #10: search filters (no studies reported using filters), item #11: prior work (only two studies noted using search strategies from other literature reviews), item #14: peer review (only three studies used a search peer review process), and item #16: deduplication (27% of studies mentioned efforts to handle duplication in search results but only three studies mentioned the use of software). These items do not appear to be standard in the field at the current time, and we contend that their lack of implementation is less a reflection of a lack of rigor and/or transparency than of differences in research norms between meta-analyses of criminological interventions and meta-analyses of health interventions (for which PRISMA 2020 was developed; Page et al., 2021a).

Considering how few official guidelines exist in the field of criminology with respect to commitments for data sharing and transparency, these findings are perhaps not altogether surprising. When compared with disciplinary norms in other fields such as psychology, it appears that criminology is lagging behind with respect to established guidelines for reporting and data sharing. For instance, section 8.14 of the American Psychological Association’s (APA) Ethics Code “instructs researchers to allow other competent professionals access to the data on which their published results are based” (APA, 2020). Further, many APA journals now require authors to provide data availability statements that link to shared data, materials, and/or code (for the purposes of reproducing results or replicating procedures), or to specify their ethical or legal reasons for not sharing (APA, 2020). Without clear reporting standards in criminology, an acceptable level of “adherence” will continue to be difficult to achieve.

Altogether, while it is encouraging that most studies have reasonably transparent reporting based on state-of-the-art PRISMA standards, our findings point to several areas in need of improvement. Specifically, of the eight core items in the Methods section of the PRISMA 2020 checklist, five had adherence rates below 60%: #8: selection process, #9: data collection process, #10b: data items (variables), #13b: preparation of data, and #13c: synthesis display (see Figure 1). As items #8, #9, #10b, and #13b all represent items where at least some level of subjective decision-making is required, transparency is especially vital for third-party appraisal of methodological objectivity and rigor.

Additionally, two of the six core items in the Results section of the PRISMA 2020 checklist were below 60% on adherence: #16b: study exclusions, and #20a: summary characteristics of each analysis. With respect to item #16b, this phase of the study selection process requires authors to make a number of decisions to ensure that the set of studies is commensurate (e.g., with respect to population, intervention type, and outcome measures). In general, listing the number of studies excluded at each phase of the search process as a result of various inclusion criteria reduces potential ambiguity in the decision-making process, which may considerably strengthen the perceived objectivity of study selection (e.g., that the studies were not “cherry picked”) and confidence that there are no hidden biases. Finally, our findings suggest that summarizing the characteristics and risks of bias for each synthesis in a given meta-analysis is uncommon in meta-analyses of crime prevention/criminal-justice-related interventions. Such summaries are relevant, for example, when studies present a series of smaller syntheses that examine homogeneous program types (e.g., multi-session vs. single-session) or participant types (e.g., adults vs. youths). Across the set of 33 studies, only one study adequately reported results on this checklist item.

To assess reproducibility, we used a 22-item checklist that we developed based on the PRISMA 2020. Our findings demonstrate that, overwhelmingly, studies did not adhere to reproducibility guidelines, as none of the 33 studies in our sample could be successfully reproduced in their entirety. While five of the checklist items were entirely reproducible across the set of 33 studies (i.e., 100% of the studies sufficiently reported this information), six items had a rate of reproducibility below 60%, including four in the Search section (see Table 6). The search strategy items (i.e., #3: listed all hand searched journals, #6: listed all existing review reference lists that were searched, #7: listed all authors contacted, #10: included full website search strategy) generally involve potentially long lists that would consume a large amount of manuscript space if given in full. It is possible that authors who do not consider the reproducibility of their study (or deprioritize it) may choose to omit or partially report this information when faced with the challenge of adhering to a journal’s submission guidelines with respect to word count.

The significant relationship between article word count and overall reproducibility scores in the current study supports this explanation. With respect to the items in the Results section (i.e., #17: described study eligibility for each synthesis, and #18: described methods of preparing data for syntheses), it may be that such descriptions are prohibitively long and complex depending on the number (and nature) of the primary studies being synthesized. For example, in our experience, recidivism data that are primarily 0/1 outcomes computed as odds ratios tend to be much simpler than data computed as standardized mean differences (or those involving multiple levels of nesting or treatment groups that require aggregation). To fully describe all data preparation for a study might in some cases require a half page of explanation (or more); if the meta-analysis contains a large pool of studies, this requirement is unrealistic for presentation in a journal manuscript. In these situations, supplementary online material that includes raw data may be the most reasonable means by which to ensure full transparency and reproducibility.

Summary of Critical Findings.

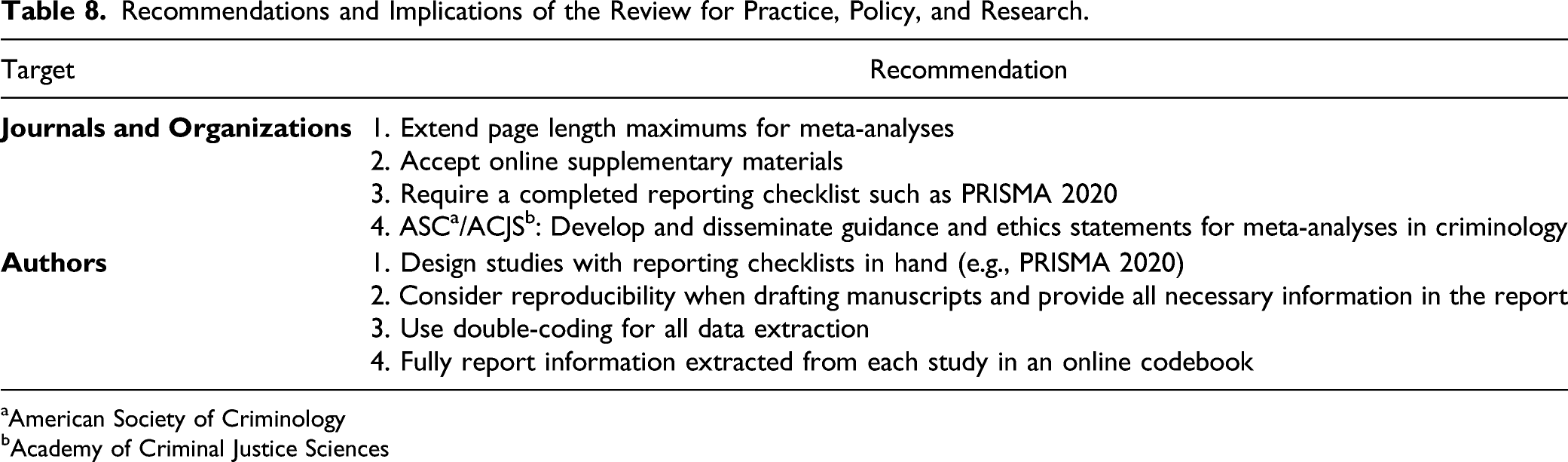

Recommendations

The results of this study lead to several recommendations for the field. With respect to academic journals and organizations, criminology journals that publish research syntheses should clarify in their submission guidelines that page length maximums will be extended for meta-analyses, or that online supplementary materials for meta-analyses are expected. Transparency in reporting is hampered by a requirement for authors to keep a manuscript to 25 or even 35 pages, which are the stated page maximums for some of the journals in which the current set of articles was published. In addition, editors of journals that welcome the submission of meta-analyses could consider requesting a completed reporting checklist, such as PRISMA, along with the manuscript to enhance transparency. Influential journals in the field of criminology should also consider adopting an approach similar to that of APA journals and require data sharing/availability statements for meta-analyses, to enable other researchers to reproduce results and/or replicate methodological procedures. Further, if the quality of reporting is to be improved, guidance and ethics statements for meta-analysts in the field could be developed by organizations such as the American Society of Criminology and the Academy of Criminal Justice Sciences.

With respect to authors, we strongly recommend that meta-analysts bear in mind both the PRISMA 2020 guidelines for reporting transparency and the tenet of reproducibility when designing their research and drafting their manuscripts. As meta-analysts are known for their repeated calls to primary evaluators to “do better” in reporting elements of research design (e.g., Lipsey, 2001), we recognize the irony in making this suggestion. Nonetheless, it is clear that meta-analyses suffer from the same issues of imperfect reporting, but with potentially even more problematic implications. Regarding reproducibility, we recommend that meta-analysts adopt a policy of double-coding all phases of systematic reviews, from study inclusion decisions to effect size calculation. Surprisingly, very few of the studies in the current set reported double-coding of all data; many used inter-rater reliability (IRR) assessments on a portion of overlapped studies. In our experience, no matter the coder’s proficiency, errors in data extraction are inevitable; as such, a high IRR on a subset of studies is likely not sufficient for reproducibility. While the intense labor involved in coding for meta-analyses is well-known, we suggest that coding be conducted by two reviewers, with 100% overlap on all studies and disagreements resolved by a third author (see Buscemi et al., 2006). If double-coding all studies is not possible (e.g., because the data set is prohibitively large), we recommend that a second reviewer code a random sample comprising a substantial portion of the studies in the set, and that IRR subsequently be assessed.
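To make the double-coding recommendation concrete, the sketch below computes Cohen's kappa, one common IRR statistic for categorical codes, and lists the studies on which two coders disagree so that they can be passed to a third reviewer. The text does not say which IRR statistic the reviewed studies used, so the choice of kappa is an assumption, and the function names and coding data are hypothetical.

```python
from collections import Counter
from typing import List, Sequence


def cohen_kappa(coder_a: Sequence[str], coder_b: Sequence[str]) -> float:
    """Chance-corrected agreement between two coders on categorical codes."""
    if len(coder_a) != len(coder_b) or len(coder_a) == 0:
        raise ValueError("both coders must rate the same non-empty set of studies")
    n = len(coder_a)
    observed = sum(a == b for a, b in zip(coder_a, coder_b)) / n
    counts_a, counts_b = Counter(coder_a), Counter(coder_b)
    # Agreement expected by chance, from each coder's marginal label frequencies.
    expected = sum(counts_a[label] * counts_b[label] for label in counts_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both coders used a single identical label throughout
    return (observed - expected) / (1.0 - expected)


def disagreements(coder_a: Sequence[str], coder_b: Sequence[str]) -> List[int]:
    """Indices of studies to refer to a third reviewer."""
    return [i for i, (a, b) in enumerate(zip(coder_a, coder_b)) if a != b]


# Hypothetical inclusion decisions for ten studies, fully double-coded.
reviewer_1 = ["include"] * 6 + ["exclude"] * 4
reviewer_2 = ["include"] * 5 + ["exclude"] * 5
print(round(cohen_kappa(reviewer_1, reviewer_2), 2))  # 0.8
print(disagreements(reviewer_1, reviewer_2))          # [5]
```

Even with 90% raw agreement in this example, one study is coded differently. With 100% overlap that discrepancy is found and resolved, whereas an IRR estimate from a subset would leave similar errors in the uncoded remainder undetected.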

Recommendations and Implications of the Review for Practice, Policy, and Research.

a. American Society of Criminology

b. Academy of Criminal Justice Sciences

Limitations

There are several limitations to our study. First, the sample of included studies was fairly small (n = 33). This was intentional in order to identify a manageable set of recently published studies on which to test the application of PRISMA 2020 guidelines, develop and test our Reproducibility checklist, and present findings at the individual study level. While the search was systematic in that we used a priori inclusion criteria and specific date limiters in the Criminal Justice Abstracts database, we by no means suggest that this set of 33 studies is the universe of meta-analyses of criminological prevention/intervention program evaluations conducted during this time period. In addition, we purposely limited the search to meta-analyses focused on prevention/intervention program evaluations in the field of criminology, and results may not generalize to meta-analyses of other topics. Further, given our focus on journal publications, we specifically excluded Campbell Collaboration meta-analyses and the results from this study do not extend to these publications. Second, this study involved a series of decisions with respect to coding instruments and procedures. While we selected the PRISMA 2020 reporting checklist as our measure of transparency, other checklists such as the MARS (Cooper, 2010) may arguably have been more applicable. The focus of the paper was not on PRISMA; rather, it was to use a state-of-the-art transparency guideline as a benchmark to assess the transparency of recent publications. Third, with respect to coding, some element of subjectivity was apparent when applying the checklists. To ensure accurate coding to the extent possible, both reviewers familiarized themselves with the PRISMA 2020 statement, and both reviewers coded the full dataset with a requirement that disagreements were discussed and concurrence on 100% of the codes was reached. The same process was used for the Reproducibility checklist. 
Relatedly, we acknowledge that there is a degree of subjectivity in ratings on the components of the Reproducibility checklist. Finally, a thoughtful reviewer queried how it was possible to assess reproducibility without actually reproducing anything. We acknowledge this as a potential limitation to the study, and emphasize that the study’s focus is on meta-analysis reporting (as opposed to validating meta-analysis methods or conclusions). While we relied on our own meta-analytic expertise and experience in conducting meta-analyses to determine whether sufficient information was reported to theoretically allow for replication, it is certainly possible that an attempt to formally replicate the 33 studies in our set would have led to different study results.

Conclusion

Meta-analyses are summative research syntheses and have the potential to be influential pieces of literature, provided their methods and conclusions are accepted as systematic, objective, and valid. It is incumbent on meta-analysts to adhere to principles of transparency and reproducibility, as the research they produce may influence decisions with respect to criminal justice policy, with notable implications for public safety and for equity with respect to treatment impacts on diverse populations. The results of the current study suggest that meta-analyses in the field of criminology are moderately transparent, with several noted areas for improvement. Substantial changes to reporting practices are necessary for reproducibility to become a reality rather than a mythical claim. While an adherence rate of 63% for transparency does not mean much in isolation, comparisons between fields (e.g., social science vs. natural science) or comparisons within the same field over time (e.g., crime prevention/intervention meta-analyses published between 2016 and 2020 vs. 2021–2025) could yield useful information about how adherence to transparent reporting in criminology compares to other fields (i.e., perhaps 63% adherence is comparatively high), or whether reporting practices have changed over time. Future research should continue to examine these issues in criminology, using PRISMA-based checklists (or other validated tools for reporting) such as the ones derived for the current study, with potential modifications such as removing items that are not considered norms in the field. Applying these checklists to a larger sample over a lengthier time period would allow for the assessment of a broader array of correlates, such as whether transparency and reproducibility of reporting are related to recency of publication, research topic, size of the study set, number of analyses conducted, journal metrics, methodological quality ratings on AMSTAR 2, and so forth.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.