Abstract

This article discusses whether external evaluations are instruments that ensure increased quality in public school education. It is part of a research project that investigated how evaluation results and the resulting indices were used in two schools in the state of São Paulo (Brazil). The methodology adopted was the case study, using different methodological tools such as interviews (with school coordinators and supervisors), questionnaires (for teachers and school directors) and observation of pedagogical meeting schedules. The collected data were analyzed and contextualized in the light of studies by authors such as Afonso (2009), Robertson and Dale (2006), Le Grand (1991) and Neave (1988), among others, who investigated the commodification of education and external evaluations. We conclude that the mere existence of an external evaluation, accompanied by an index, is not sufficient to ensure improved quality of education, since quality also depends on the participation of parents, sound initial teacher training, investment in teachers’ continued training, and higher social investment in the communities. External evaluations, contrary to what they promise, have on many occasions enabled increased regulation, ranking and competition, but have decreased the autonomy of schools, thereby reinforcing school inequality.

Introduction

The evaluation of education is a global phenomenon in which Brazil tentatively started to become involved during the 1980s. Thereafter, several evaluation programs of elementary and higher education were created with the expectation that these would increase the quality of education. The same happened in other countries that created (or formalized) their external evaluation systems, as they are currently known. These included the United States (1988, NAEP: National Assessment of Educational Progress State), Cuba (1996, Sistema de Evaluación de la Calidad Educación), Canada (1993, SAIP: School Achievement Indicators Program), Colombia (1988, Pruebas Saber), El Salvador (1993, SINEA: Sistema Nacional de Evaluación de los Aprendizajes), and Ecuador (1996, Aprendo), among others (Bauer, 2010).

The proliferation of evaluation systems should be understood as part of a more complex context that involves the whole of society and the way in which it perceives education. According to Afonso (2009): “… the functions of evaluation must be … understood in the context of educational changes and of broader economic and political changes… the evaluation itself is a political activity” (Afonso, 2009: 19). For that reason, evaluations generate political effects, such as the ranking of educational institutions, which, in turn, generates different degrees of prestige and resources for schools.

Afonso (2009) states that external evaluations have increased control over schools and contributed to the loss of autonomy in institutions that feel judged and often punished by the results obtained in external evaluations.

Like Afonso (2009), Reynaud (1988) understands that evaluation can lead to three types of regulation: (1) control; (2) school autonomy; and (3) one that reconciles the previous two. In Brazil, evaluations exert regulation primarily through control, in which “the logic of cost and efficiency responds to external contractors: those of production and market” (Reynaud, 1988: 7). In other words, external evaluation exerts a type of regulation that emanates from a higher sphere of power, interferes with salaries and the organization’s autonomy, and eventually applies external pressure to the educational institution. In Brazil this regulation comes from a legitimate authority: the Brazilian state.

In the 1980s the interest of neo-liberal governments in evaluation intensified, a development described by a term coined by Neave (1988): “evaluative state”. This term expresses the idea that state control is subtle and is associated with an institution’s strategies of autonomy and self-regulation. The state began to implement public policies that valued competition and individualism, lowered public investment in social areas and partnerships, causing the state to become disengaged, and implemented private and market principles in schools. This created more sophisticated mechanisms of control and accountability, such as external evaluation systems, which ended up remotely controlling what is taught in schools.

According to Neave (1988), external evaluations have become an important instrument of the evaluative state since they simultaneously: (1) provide a way of controlling the work done in schools; (2) allow schools to be blamed for poor results; and (3) guide educational policies aimed at decreasing the state’s direct interference. In Neave’s words: “The overall function of ‘strategic evaluation’ is to assess the previous performance of a particular dimension of national policy with a view to carrying out major changes in the light of what is found” (Neave, 1988: 9).

Afonso (2001) states that the evaluative state became present in elementary education by means of a climate of competition, generated by results arising from external evaluations that did not take into account the environment in which the schools are situated. The autonomy of these institutions functions only as a pretext for their accountability.

The state has increased its control over education by means of external evaluations. It selects the curriculum and interferes with the work of teachers by means of market mechanisms within public schools, leading to competition among schools and making it ideologically acceptable that some schools are considered to be “better” than others.

External evaluations, limited to the application of a test, do not allow any modification to what has already been taught, because they are an evaluation of the product rather than the process: “A posteriori evaluation seeks to elicit how far goals have been met, not by setting the prior conditions but by ascertaining the extent to which overall targets have been reached through the evaluation of ‘product’” (Neave, 1988: 9).

The objective of this evaluation is to verify the extent to which the proposed objectives have been achieved. Its results cannot increase the quality of education for the students who provided the data, because those students have already moved on to the next grade or completed their elementary education.

On the surface, the evaluative state granted greater autonomy to institutions at different levels of education. However, this freedom is not real, since external evaluations became a new and very strong, although different, mechanism of control. Along with evaluation, systems of rewards and educational funding are being implemented. All these factors appear to be associated with, and reinforce, the centralization of decisions, and education remains a centralized top-down system.

In order to understand the evaluative state’s role, one has to understand the expression “quasi-market”, proposed by Le Grand (1991), as the two operate concomitantly. For Le Grand (1991), the quasi-market policy arose during a period of historical crisis that threatened the stability of the British government. In order to solve this crisis, some market laws were incorporated to substitute the welfare state and reduce expenses within the social areas: “All these reforms had a fundamental similarity: the introduction of what might be termed ‘quasi-markets’ into the delivery of welfare services. In each case, the intention is for the state to stop being both the funder and provider of services” (Le Grand, 1991: 1257).

The central idea of this policy is to reduce the state’s responsibility toward education quality and funding. According to Le Grand (1991), it is very difficult to escape from this tendency, as well as to ignore external pressures for better results and the dichotomy of quality versus quantity: “If there is a direct relationship between the quality and quantity of inputs and the quantity and quality of outcomes this may not matter, since the latter will improve with the former; but if there is not, and in many welfare areas the link between inputs and outcomes has yet to be established empirically, then we are likely to see upward pressure on costs, with no corresponding improvement in service” (Le Grand, 1991: 1265).

The major question raised by the concept of quasi-markets is how to ensure that the reduction in investment does not impair the quality of the service provided, since the theory is centered on reducing costs and investment in social areas. One way in which this reduction of investment manifests itself is through non-existent or insufficient salary adjustments for the professionals who work in these areas.

Dale (1994), corroborating the ideas of Le Grand (1991), defines the market in education as a combination of funding, provision, and regulation exerted by the state. Writing with Robertson (Robertson and Dale, 2006), Dale states that the elements of the quasi-market introduced in education did not reduce the role of the state but gave it a different role, which could often be considered greater: “The pressure was in the direction of introducing markets or market-aping competitive structures, maximizing competition and choice, and minimizing state influence, even on state funded, provided, regulated and owned education systems. This can be regarded as a classic case of one form of constitutionalizing the neo-liberal; that market-making, state-inhibiting rules are put into place through legislation and largely administered by the state … However, this is still not the ideal situation for neo-liberals; there is still ‘too much state’. Regulated and quasi-markets are a great advance on ‘state control’, but they are not the same as open markets and ‘pure’ competition involving the private sector” (Robertson and Dale, 2006: 6).

The idea of a quasi-market enables the introduction of the concept of private management in public institutions, which can be perceived through the inclusion of financial stimuli or even the privatization of education as a way of achieving quality. Competition increases selectivity, the prevalence of productive principles, and the restriction of the notion of social rights.

For Robertson and Dale (2001), the state favors competitiveness and commodification in all areas on the grounds that they would bring more prosperity to the poor than compensatory policies. Merits, both individual and collective, are highlighted through rewards and punishments, which impair social inclusion and stimulate collective living only to the extent that it can generate some type of gain. In this way, the idea of meritocracy is stimulated rather than the notion of rights and duties, “which has negative consequences for democratic and republican practices” (Freitas, 2007: 178).

In Brazil, external evaluations have expanded rapidly, with many students sitting the tests. Besides national evaluations such as the National System for Evaluation of Elementary Education (SAEB, Sistema de Avaliação da Educação Básica), Prova Brasil (for students in elementary education), Provinha Brasil (for students in the literacy phase), the High School National Exam (ENEM, Exame Nacional do Ensino Médio) for students completing high school, and the National Survey of Student Performance (ENADE, Exame Nacional de Desempenho de Estudantes) for students completing university, many states and municipalities have developed their own evaluation systems. Regardless of their particular features, these systems fundamentally embody the evaluative state’s principles, increasing competition among schools, punishing and rewarding them in different ways, and always blaming them for the results obtained. This, in turn, decreases the state’s responsibility and increases its control over the curriculum and teachers’ work.

One of these systems is run by the government of the state of São Paulo, which uses a large-scale evaluation (SARESP, Sistema de Avaliação do Rendimento Escolar do Estado de São Paulo, or state of São Paulo School Performance Evaluation System) to compile an index, IDESP (Índice do Desenvolvimento da Educação no Estado de São Paulo, or state of São Paulo Education Development Index), with the objective of “measuring” and stimulating the quality of public education. In this article we analyze the effects of this policy, using two schools as subjects: one with good performance and another with poor performance in this evaluation.

SARESP and IDESP: the evaluative state in action

SARESP was created in 1996 by the state of São Paulo Secretary of Education, with the objective of producing periodic and comparable information about state public schools, so that new actions to improve public education could be developed from the resulting information.

SARESP is a large-scale external evaluation that is applied annually, allowing the results obtained to be compared with previous editions in order to determine if there were improvements in students’ scores, which would indicate improvements in their learning.

As previously stated, the objective of this evaluation is to monitor what is being taught in public schools and then evaluate the quality of education. For this quality to be achieved, the curriculum is expected to be taught in all schools and learned effectively by their students. Thus, SARESP intends to evaluate the quality of education and subsequently guide actions to achieve it. In this sense, it is important to consider to what extent this evaluation affects the curriculum, as it is based on competences and abilities originating from the state-proposed curriculum.

The scores obtained by the school in SARESP, together with the rate of school flow, make up the IDESP. This index is the state version of an index created by the federal government, the Elementary Education Development Index (IDEB, Índice do Desenvolvimento da Educação Básica). In the official discourse, the objective of these indices is to detect the schools (or education networks) that show low performance and to monitor the evolution of student learning. With the IDEB, more specifically, it was determined that by the year 2022 (the celebration of 200 years of Brazilian independence) Brazil would reach the same level of educational development in its indices as the countries of the Organisation for Economic Co-operation and Development (OECD) (Fernandes, 2007).

Like IDEB, IDESP imposes goals for schools to reach, on the assumption that this will lead to an improvement in education. IDESP is part of the “School Quality Program” (Programa Qualidade da Escola), whose objective is to promote increased quality and equity of education. This program presents an index for each school (IDESP) and establishes goals for yearly improvement. As an “incentive”, a bonus salary is paid to schoolteachers at the end of each year if they achieve the proposed goals.

The scores achieved by schools in IDESP are disclosed to the media and the educational institutions are ranked: some are promoted while others are undermined. Educational ranking has therefore come to be seen as negative: the simple act of “ranking” already demonstrates the logic of exclusion, since not all schools can be in first place. The ranking produced by SARESP/IDESP is supported by the state, which reinforces its weight as an absolute truth. Schools, and consequently their teachers and students, are labelled as good or bad.

The comparisons produced by ranking already reveal a problem: they often compare what cannot be compared. Comparing schools with different features and realities is not only unfair but also attributes culpability for the results obtained to the teachers. Starting from this hypothesis, two schools from different realities and with opposing features were selected and their results compared. We chose schools with opposing performances: one with a positive variation and the other with a negative variation.

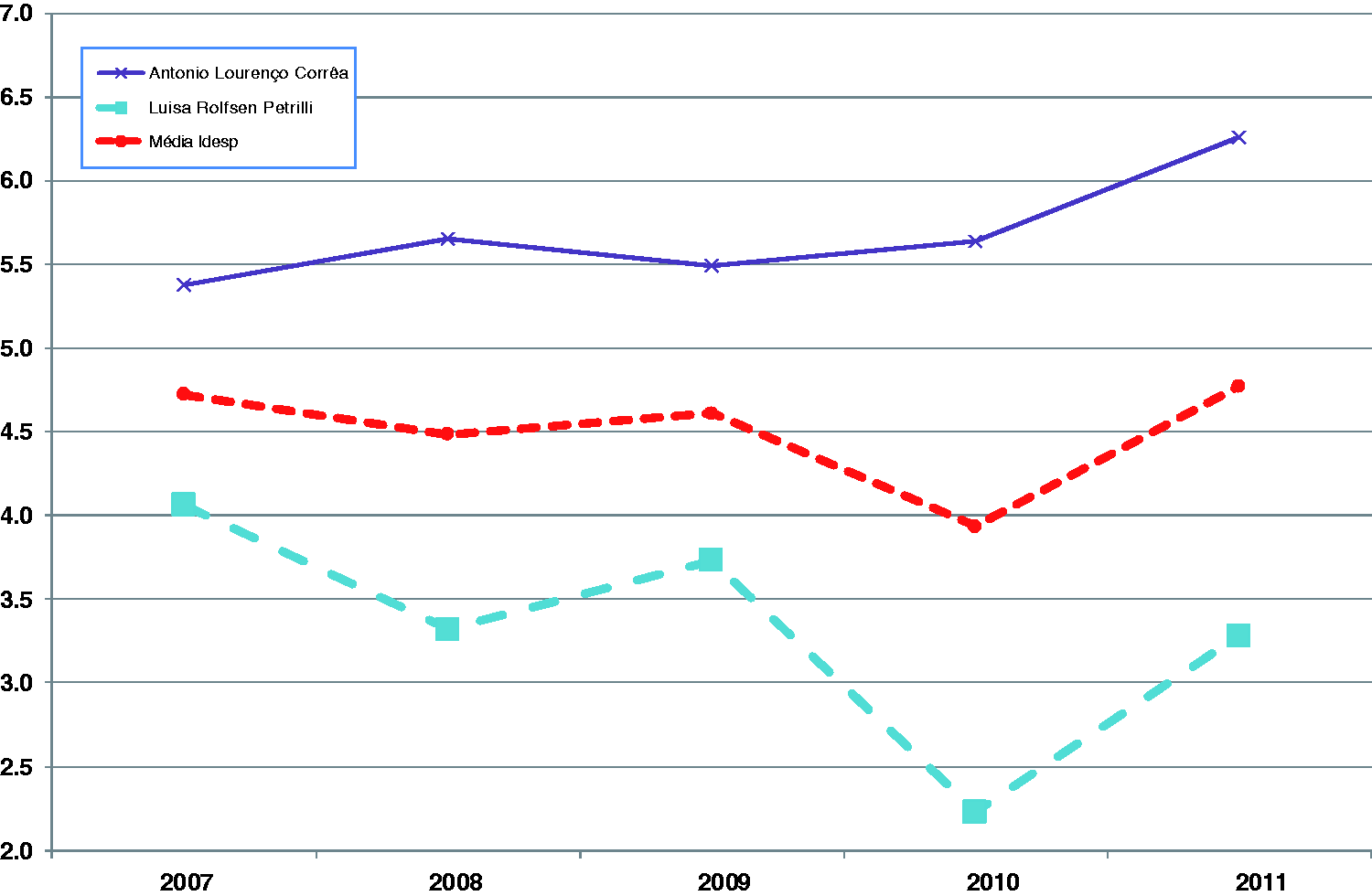

The two schools are represented in the graph in Figure 1: school A (purple) shows a positive variation, since it is in progressive ascension, while school B (blue) shows a negative variation, as it represents a progressive worsening. We will be able to determine from the selection of these two schools the impact of IDESP, and reflect on the fact that the creation of an index is not enough to ensure teaching quality, particularly as the performance of one of these schools continues to deteriorate despite the implementation of this index.

IDESP of selected schools (x-axis represents the years analyzed, y-axis represents the indices).

The difference in performance between the two schools has increased, which leads us to the conclusion that the school which already presented good indices continued to improve while the other faced further difficulties. According to Robertson and Dale (2001), neo-liberal educational reforms, by stimulating competitiveness, caused an increased “polarization” of schools, which eventually created a set of marginalized and constantly poorly evaluated schools.

Market principles have highlighted the success of some schools against the failure of others. The state needs to deal with these “failing” schools, intervening through public policies as a way of ensuring stability. The creation of indices and external evaluations shows the existence of a problem, but the solution cannot be reduced to the notion of ranking.

The evaluative state in the school

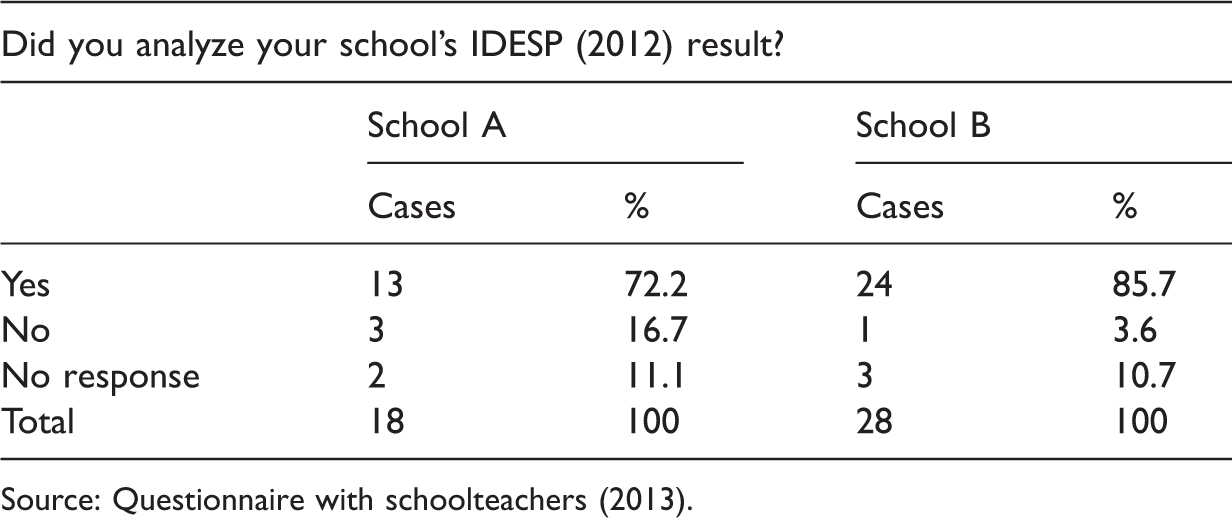

Table 1. Analysis of IDESP results by schools.

Source: Questionnaire with schoolteachers (2013).

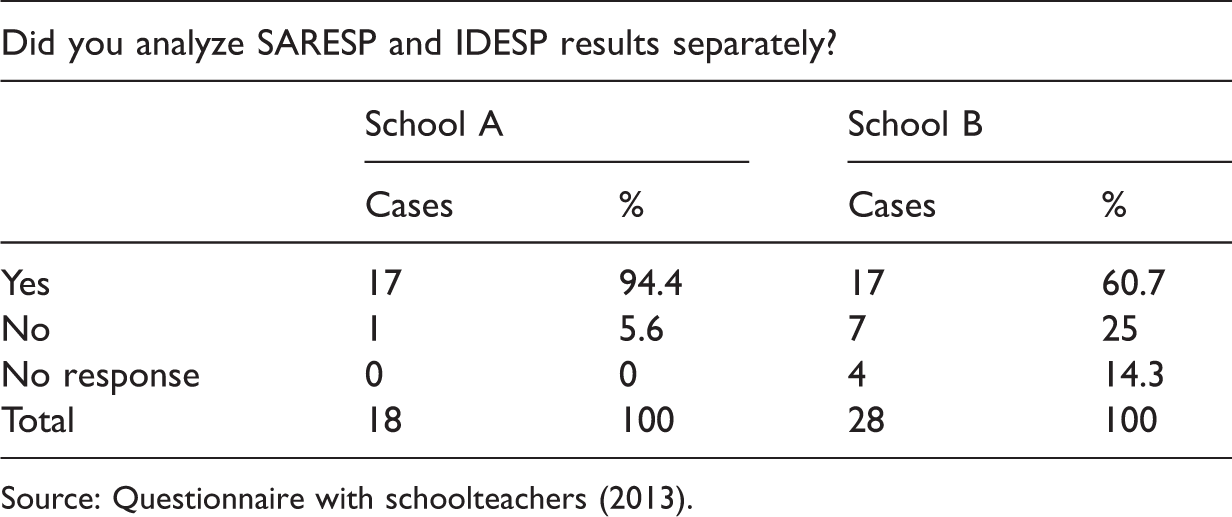

Table 2. Analysis of IDESP versus SARESP by schools.

Source: Questionnaire with schoolteachers (2013).

Two points are important here: first, the way in which these data are employed; and second, the fact that merely analyzing the results is not enough, since it is also necessary to act on them. The presence of the state is fundamental to the school overcoming its difficulties.

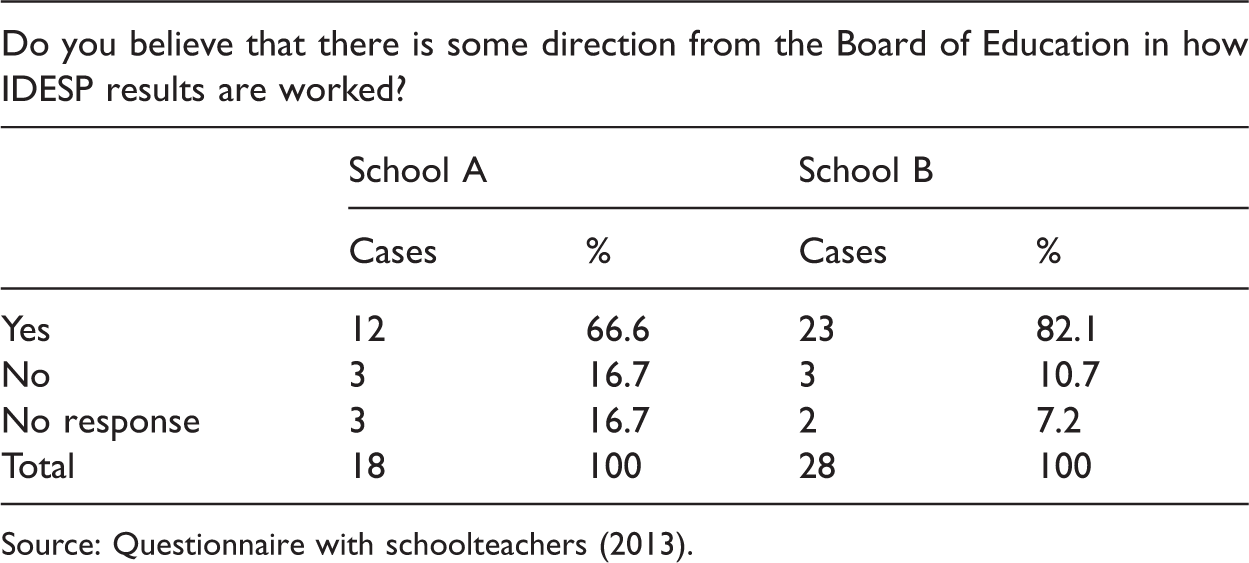

Table 3. School autonomy in IDESP results discussion.

Source: Questionnaire with schoolteachers (2013).

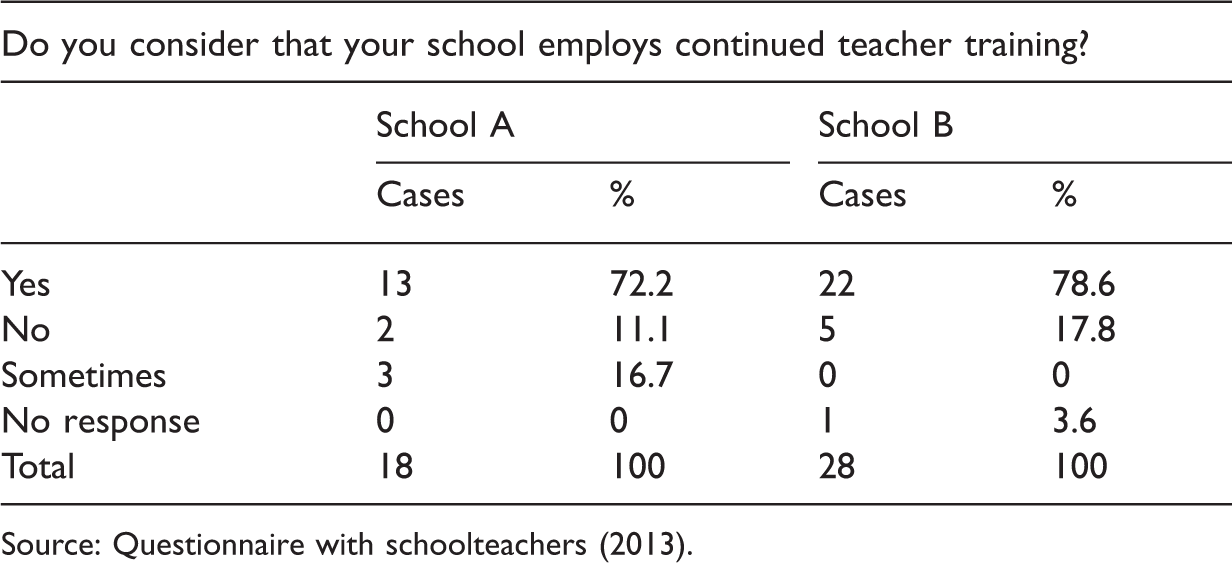

Table 4. Continued training.

Source: Questionnaire with schoolteachers (2013).

This type of training does not help teachers to reflect upon their practices; it only leads to conditioning and acceptance. In this context, working with the results of external evaluations means training teachers so they can train students. This training is very superficial, as many teachers do not master the content they teach. In school A, 17% of teachers said they had not mastered the content developed in the guidelines for teaching mathematics in the early grades, while in school B the figure was 21%.

What can be concluded is that continued training does not make up for initial training with little theoretical foundation, as many teachers continued to have the same doubts and difficulties as before. In school A, 83% of teachers hold a Bachelor’s degree in education, 44% graduated from public universities, whose performance in national evaluations of higher education has been higher than that of private universities, and 11% had completed Master’s or PhD degrees.

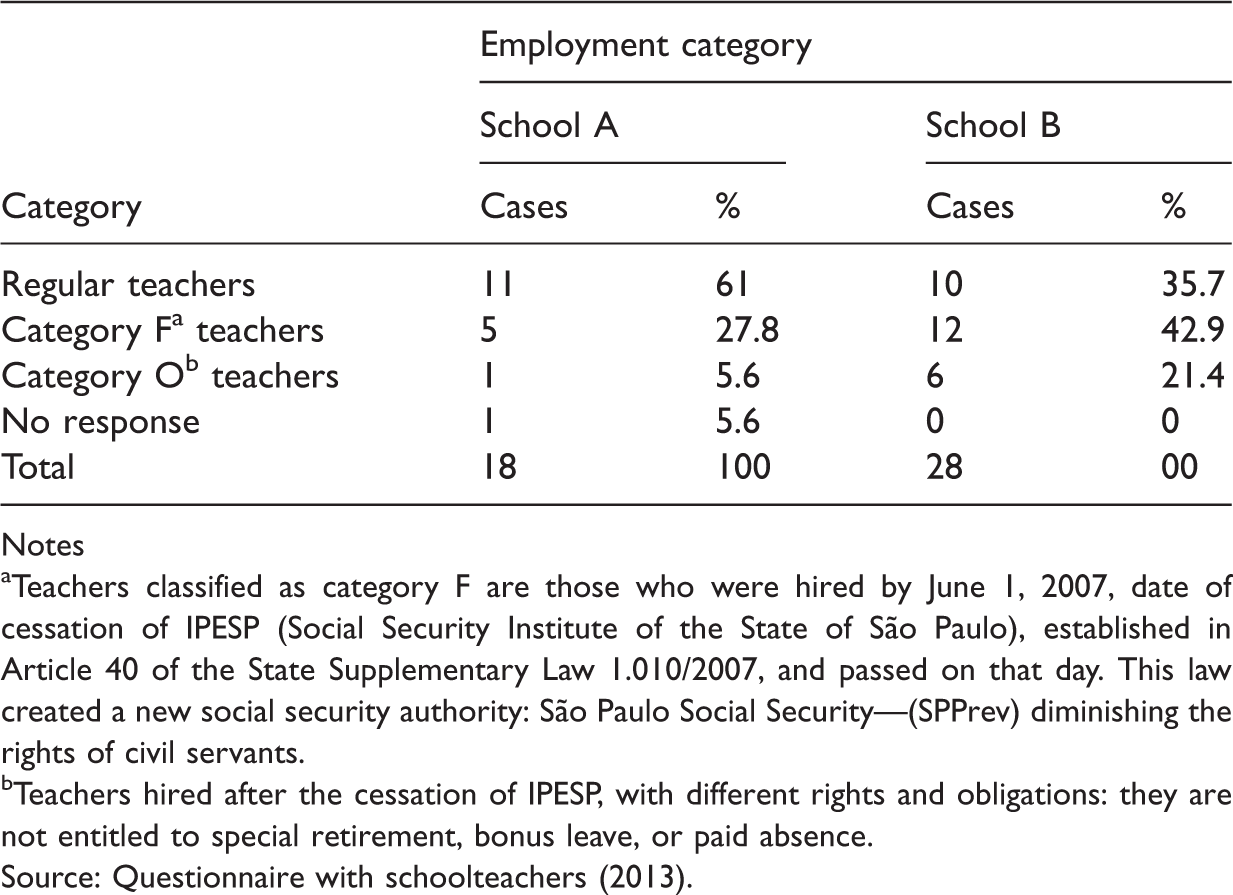

Table 5. Teachers’ employment categories.

Notes

Teachers classified as category F are those who were hired by June 1, 2007, the date of cessation of IPESP (Social Security Institute of the State of São Paulo), as established in Article 40 of State Supplementary Law 1.010/2007, which was passed on that day. This law created a new social security authority, São Paulo Social Security (SPPrev), diminishing the rights of civil servants.

Teachers hired after the cessation of IPESP, with different rights and obligations: they are not entitled to special retirement, bonus leave, or paid absence.

Source: Questionnaire with schoolteachers (2013).

Continued training conducted in schools resembles a training program in which there is little room for teachers to participate. This is supported by the fact that teachers are never able to choose the agenda for their meetings (a point on which teachers in school B were unanimous), and all the training themes are imposed.

We could establish a possible link between initial teacher training and student performance. In school A, where most teachers come from public universities and do not report any difficulties with the content taught, students showed a better performance. However, it is not possible to state that this better performance is due to the teachers’ level of education, since other factors differentiate the two schools and may affect student performance.

Continued training of directors/supervisors is also imposed, but takes place in a different location, at the Board of Education. The difference is limited to the location, not the methodology, because it follows the same logic of repetition and training. At the Board of Education, directors/supervisors are trained to train teachers who, in turn, will train the students.

Results are treated in a superficial manner, since schools do not receive the results broken down by individual question and therefore cannot know which questions their students failed. In school A, 50% of the teachers declared that they have no access to this information, and that is why results do not lead to a change in teaching practices. This indicates that external evaluations are focused on curricular control and are used as a way of controlling education remotely, rather than as an instrument able to provide data to reflect upon the teaching and learning processes.

Although the results are considered only generally, the demands, rewards, and punishments take into account the individual results of the schools. In this sense, there seems to be an inconsistency in the official discourse, which presents SARESP as an evaluation of the system (so that individual performances should not matter) yet grants bonus salaries to teachers and other employees according to IDESP, thereby creating, in practice, an individual demand.

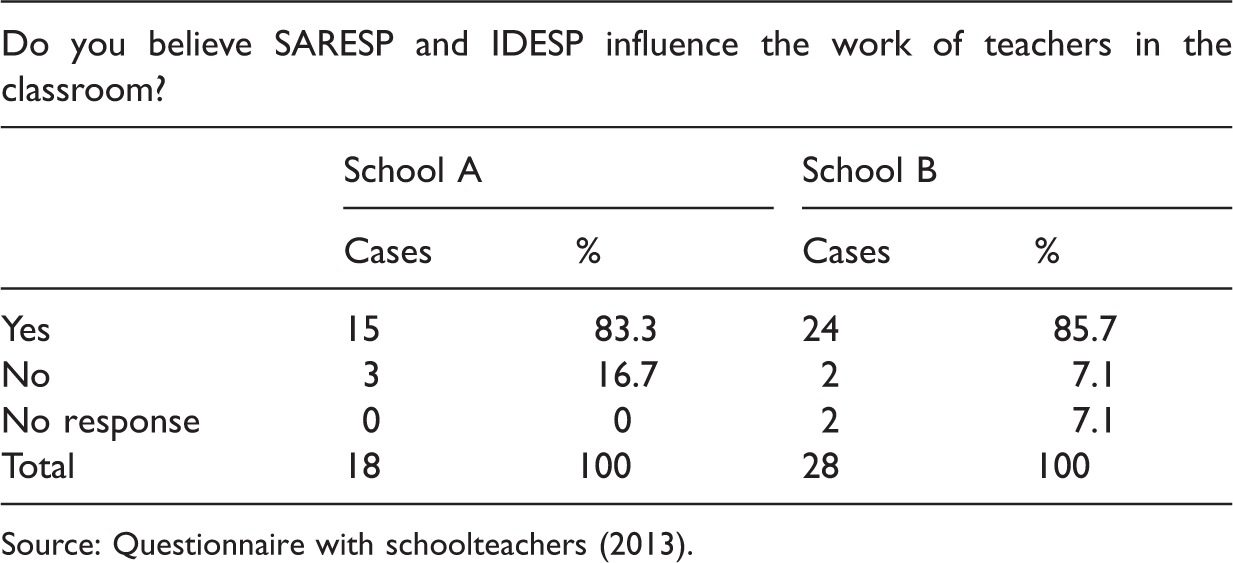

Table 6. Influence of SARESP and IDESP on teaching work.

Source: Questionnaire with schoolteachers (2013).

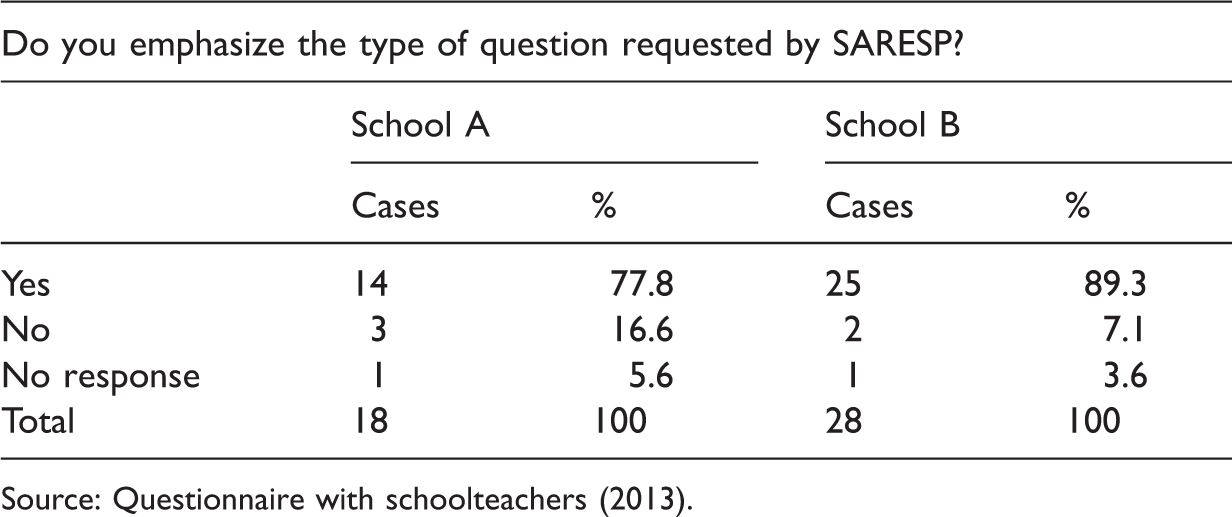

Table 7. SARESP and the teaching work.

Source: Questionnaire with schoolteachers (2013).

SARESP’s evaluation is centered on abilities and competences required in the official curriculum of the state, implemented in 2007. Therefore, it is possible to conclude that it mainly evaluates whether the state curriculum is followed: “it is the responsibility of the Board to clarify the link between SARESP and curriculum” (São Paulo, 2009a: 3). It is thus possible to state that SARESP exerts an element of control over what is taught, because it “asks” for what was previously stipulated by the curriculum.

The evaluative state values knowledge and abilities determined by the market, so certain disciplines and methodologies are appreciated more than others. Some disciplines, such as Portuguese and mathematics, are overvalued: they are covered in evaluations and make up the IDESP, while other disciplines are considered peripheral. The valued methodology entails excessive work with a variety of multiple-choice questions, which does not allow students to develop their reasoning skills.

In the same way that some knowledge is overvalued, a particular type of teacher is also overvalued: the one whose students obtain good scores. This is evidenced by the introduction of the bonus policy in some regions of Brazil, including the state of São Paulo, where this research was conducted. Carette (2008) identifies this tendency and states that the quality of the teacher is currently measured through the results obtained by their students in external evaluations. Through these evaluations, teachers are rewarded or punished. In this perspective, the product is valued rather than the process, so it is possible to state that the valued teacher is the one who trains their students to perform well in the tests. The effective teacher is the one who works with very structured activities and gives more importance (more time) to the most relevant disciplines (those covered in evaluations). This teacher also applies constant evaluations with many objective questions, like those asked in external evaluations. Besides ignoring students’ different learning rhythms, this model of teacher prefers individual work (evaluations) modeled on external evaluations.

Carette (2008) believes that research based on a process-product paradigm offers a limited vision of a good teacher: “In this context, the question of the definition of the features of an effective teacher is an issue whose importance we may not always measure. It leads us to argue that it is interesting to speculate on the discrepancy between the educational discourse usually spread in initial and continuous training and the results on the features of an effective teacher from ‘process-product’ research” (Carette, 2008: 83).

Research and educational policies that evaluate the teacher through an external evaluation applied to their students represent, to say the least, a simplification of school practices and of lessons considered “effective”. These policies also create an education system based on universal features, which ultimately compare what is not comparable and ignore the features of the social contexts in which the schools are located.

The overemphasis on the results of these evaluations has led to a distortion in initial and continued teacher training. Teachers are now “trained” to work with certain contents, using certain methodologies. It is necessary to question this cycle that links teacher practice to the performance of their students, and instead to take into account the difficulties and limitations of these evaluations in measuring students’ knowledge and teachers’ practices. The regulatory effect of SARESP/IDESP is not limited to the work with content or models of questions; teachers also notice more intense monitoring in schools with lower indices.

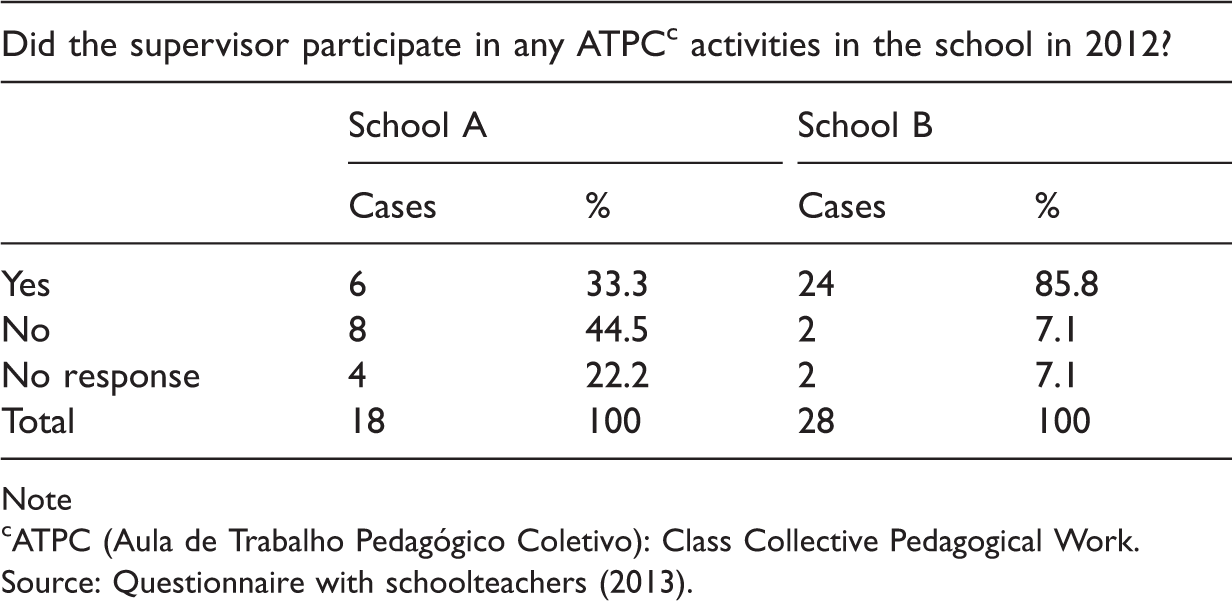

Table 8. Supervisor participation.

Note

ATPC (Aula de Trabalho Pedagógico Coletivo): Collective Pedagogical Work Class.

Source: Questionnaire with schoolteachers (2013).

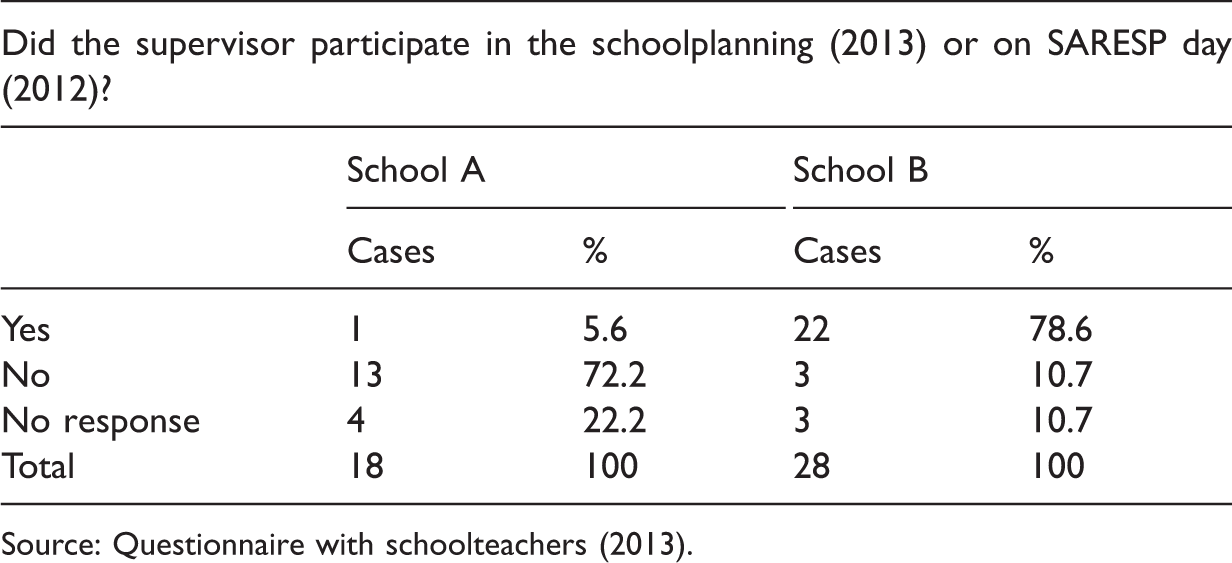

Table 9. Role of the supervisor in planning.

Source: Questionnaire with schoolteachers (2013).

In school B we see the opposite, with 85.8% of teachers declaring that the supervisor participates in weekly teacher meetings (see Table 8) and 78.6% declaring that the supervisor participates in planning (see Table 9). A similar situation occurs regarding the presence of teaching workshop coordinators, with 93% of teachers stating that the coordinators participate in meetings, and 75% affirming that they participate in planning. The control over teachers’ work is much greater in school B as a result of its lower IDESP score.

Increased control did not improve educational quality: the index of school B in 2013 was lower than that of 2012, while in the less “monitored” school the index increased. It can be inferred, therefore, that this model of “monitoring” needs revision, as it appears to be, according to teachers’ reports, a form of pressure and control rather than a cooperative practice.

Possibly, if monitoring were more focused on continued training that promotes reflection on the school’s performance and the creation of internal, collectively decided goals, it would have a positive effect on students’ scores. This is not what has occurred. It is also worth mentioning that both schools lacked internal goals, and only school B had started implementing a self-evaluation process. However, this was an imposed process, which violates the basic principles of self-evaluation.

We found that schools do not take any autonomous action to review and act on the evaluation results; they simply follow the directions of the Board of Education which, in turn, follows the State Secretary of Education. Theoretically, it is possible to develop other projects. However, there is no time available, because schools must first conduct the projects demanded by the Board before they are able to consider others.

The actions devised by the State Secretary of Education failed to produce the desired effects, and in many cases they are not implemented because they are not suited to the particular situation of the school. This is the case in school B, which lacks the space and structure to incorporate a reading room, one of the actions suggested by the State Secretary of Education to improve students’ performance. There is also insufficient space for a library and a video room, both of which were transformed into regular classrooms due to a large rise in the number of students. No investment was made in building expansion, as elementary schools can be municipalized, which leaves them short of official resources.

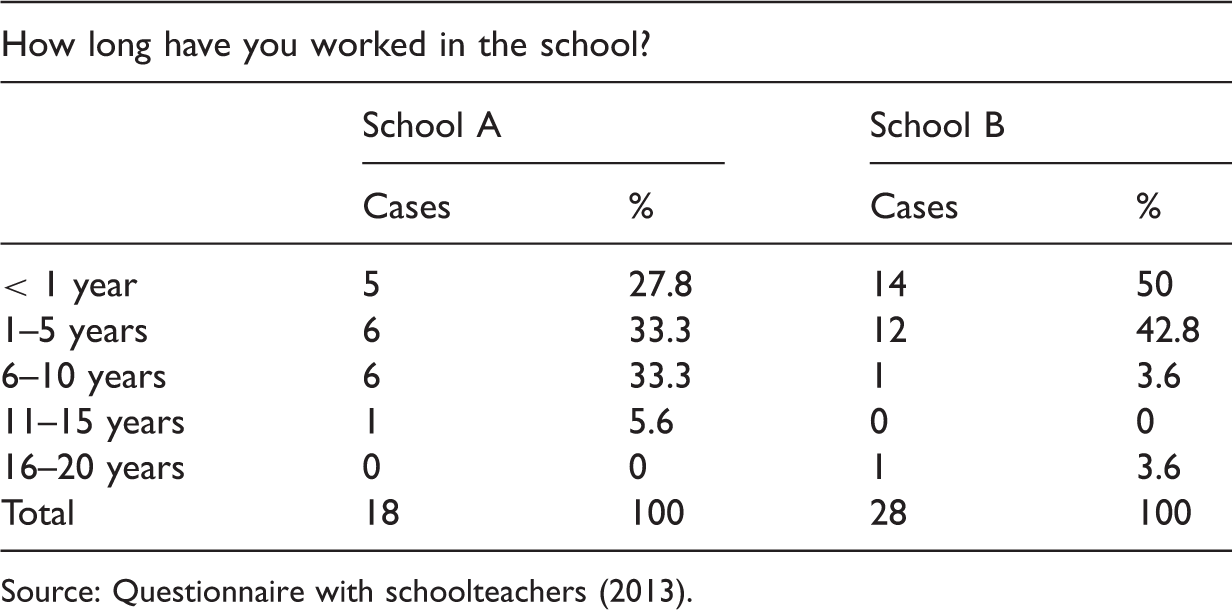

Table 10. Length of time teachers have worked in the school.

Source: Questionnaire with schoolteachers (2013).

The situation is different in school A, where 61% of teachers are regular teachers. As indicated in Table 10, 38.9% have been at the school for more than six years and only 27.8% are in their first year there. The school board also shows lower turnover, with the current director having been there for six years. In contrast, school B suffers from many problems: (1) being located in a remote area; (2) being in a building with little space; (3) having a lack of teaching resources; (4) having a lack of faculty mobility; (5) teachers exhibiting little confidence over the content taught; and (6) no continuity in administration. School B finds no room to reflect on these problems and there are few policies available for the improvement of this situation.

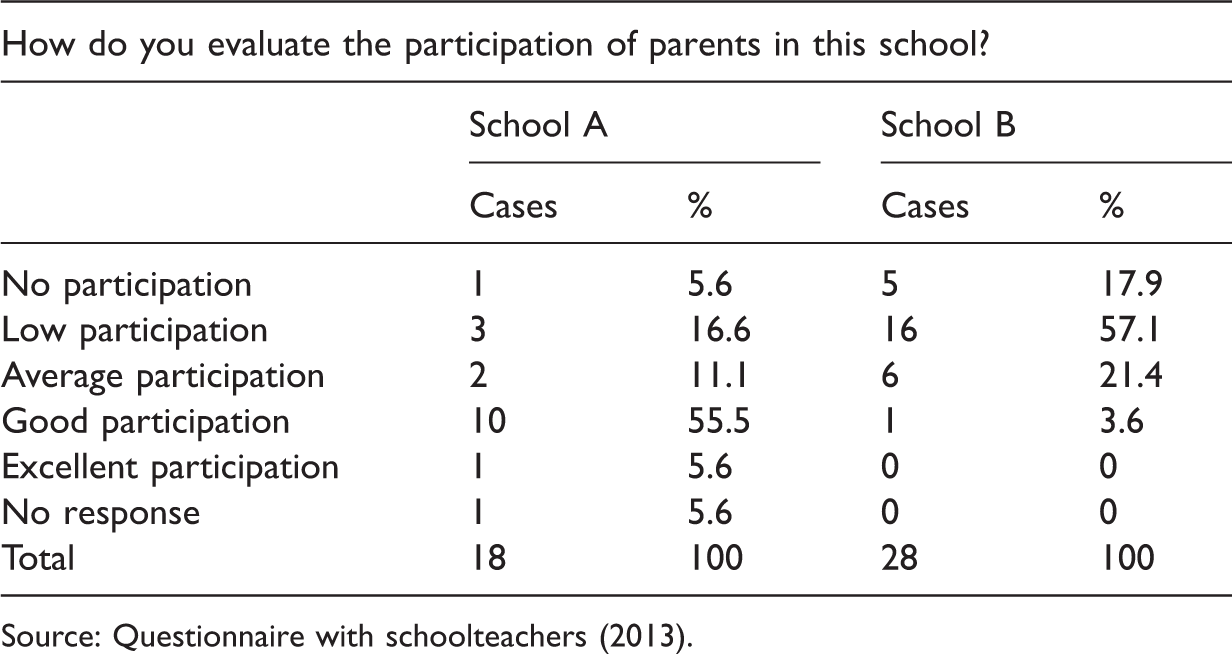

Table 11. Participation of parents.

Source: Questionnaire with schoolteachers (2013).

As indicated in Table 11, in school A 61.1% of the teachers classified the participation of parents as good or excellent. On the other hand, in school B only 3.6% of them, one teacher, did so, and 75% classified the participation as being low or non-existent.

This information is of utmost importance, as the relationship between school and community is very tenuous in school B, which requires more intense participation. As a consequence, parents in school B are less aware of what IDESP and SARESP are, as this information is only provided in parents’ meetings. In school A, only 11% of teachers stated that parents do not know the school’s IDESP score, while in school B the figure was 79%.

School B, whose IDESP is the worst according to the Board of Education, has difficulties convincing parents to participate in meetings with teachers. However, according to the teaching proposal, the participation of parents is good and increasing. This discrepancy of information demonstrates that the teaching proposal does not reflect the reality at the school.

Studies conducted by Dale (1994) indicate that student performance is much more related to their origin and to the social capital that can maximize (or minimize) their learning, than to the structure of the school. This leads to a second observation: the evaluation of the school depends on the cultural capital of those who attend it. For that reason: “… the social origin seems to be the ‘quality’ schools are most interested in…” (Dale, 1994: 127).

The participation of parents is also different and varies according to their cultural capital: the higher the socioeconomic level of parents, the higher their participation in the children’s school environment (school council and parents’ association), as they have more time and are interested in the type of education that is being offered.

Following the principle of the competitive market, many schools tend to refuse the enrolment of students from the poorest areas, because they believe these students could worsen the schools’ performance in external evaluations. In Brazil, enrolments are distributed according to neighborhoods. That means schools located in socioeconomically disadvantaged areas show a worse performance in external evaluations, as is the case with school B. It is thus possible to see that the educational market is not neutral and reproduces inequalities.

The less responsibility the state holds for the type of service provided, the more exclusionary that service becomes. The educational market alters the balance of prestige between different public schools, between public and private schools, and between different private schools. This has led to the migration of the middle class away from the public schools.

Related to this competition we have: “inadequate ways of evaluating and valuing the work of each teacher” (Torres Santomé, 2003: 68). Because the rewards reach only part of the group, instead of a decent wage policy benefiting all, only some professionals gain financially. As a consequence, professionals who master the content they teach and are better trained tend to prefer working in central schools whose performance in external evaluations is better, which gives rise to a bonus salary.

Deep down, we are faced with a strategy in which there is a shift of responsibility from the Administration to each school. This policy has been made invisible, and will have negative repercussions for children from disadvantaged socioeconomic groups, because society itself will struggle to know which institutions are responsible. (Torres Santomé, 2003: 69)

In school A, the participation of parents is greater and, according to the coordinator, very good. She affirmed that the relationship between parents and school is very positive, that the community feels it is part of the school, and that parents even compare the content that different teachers set for the classes.

On the other hand, school B experiences a different situation in which the majority of students show great mobility, often changing neighborhoods and/or cities. According to the teaching proposal, this is due to the poor financial conditions of the families. Children and parents do not stay in the neighborhood for long, which impairs the association between parents and school and even between school and children, since they lack the sense of being part of that community. This turnover damages both the family-school relationship and students’ learning itself.

Changing neighborhoods, cities or states is frequent, undermining the development of contents and the full development of children in the teaching-learning process, which often negatively affects the school in external evaluation indices proposed by the State Secretary of Education. (São Paulo, 2009b: 4)

An evaluation intended to “measure” the quality of the education offered cannot ignore these factors and be reduced to the performance of students in a test. Schools A and B present so many differences that comparing them is unfair. In this sense, we state that the educational policy itself, as well as the interests it promotes and the values it disseminates, needs to be evaluated.

The evaluation process is political in nature and, in order to generate changes that result in an increase in the quality of education, it has to be inclusive and not be seen as an evaluation of a product that appears out of context. Instead, institutional evaluations should be holistic and integrate the educational system. To achieve that, evaluations should be conducted by teachers; otherwise they generate resistance and produce distorted images of reality.

Conclusion

Evaluation in itself is neither good nor bad; its role and the way in which its results are treated are determined by public policies. In this sense, it is possible to state that in the current Brazilian context these policies are being used to increase control over the work done in schools, to deepen inequalities, and to decrease (and direct) investment in the area of education. Furthermore, these policies construct and legitimize the ideology of meritocracy.

Pressure for better results, rankings, competition, and punishment (or reward) cannot improve the quality of the education offered, because that quality results from the association of several factors, such as parental participation, quality initial and continuing teacher training, a school structure able to awaken children’s interests, continuity of teaching work, and policies that stimulate the participation of schools in decision-making processes.

Policies that stimulate competition and ranking are not irreversible, hence the need to consider alternatives. In this sense, Nevo (1998a, 1998b, 2006) presents some paths, such as self-evaluation, which should be continually developed in schools to provide data to be analyzed in conjunction with external evaluation, and a dialog between internal and external evaluations.

Institutional evaluations (or any other type of evaluation) cannot be reduced to a few technical procedures, however sophisticated and guided by an obsession with measurement they may be, and no matter how imperatively they are being presented. Thus, the correlation between information arising from external evaluations and from self-evaluations is important, as they complement each other.

At the risk of stating the obvious, schools should be analyzed as a whole and not simply measured and evaluated by external indices which say little about their reality. For that reason, we believe in self-evaluation and greater investment in the schools that need it the most, which does not mean stopping investment in those that are achieving good results. Through a renewed investment in schools with lower indices, external evaluations would be fulfilling their role of identifying problems, and the state would be able to attempt to solve them.

Investment in infrastructure, acquisition of teaching resources, teacher training, development of school-accredited projects, policies to retain teachers, and cooperation between school and community are essential. Only then will external evaluations be an instrument to promote the quality of education.

Footnotes

Funding

This research received no specific grant from any funding agency in the public, commercial, or not-for-profit sectors.