Abstract

To address the unprecedented challenges facing construction, which is pressurized by the global climate crisis, a housing shortage, and a growing shortage of skilled labor, this research presents a radical shift in the construction lifecycle of buildings, from linear processes that produce static continuous buildings to interrelational processes linking adaptive eco-systems of collaborative robots and reconfigurable building parts. Inspired by natural builders, the interdisciplinary field of collective robotic construction (CRC) offers the potential for scalable, adaptive, and resilient construction with simple robots. We establish a design framework for autonomous collaborative robotic construction (ACRC) through modular robotic material eco-systems (MRMES) trained with deep multi-agent reinforcement learning (DMARL). This involves the integration of three core aspects: (1) modular robotic material eco-systems, (2) cyber-physical simulation and control with bidirectional feedback, and (3) adaptive intelligence through deep multi-agent reinforcement learning. The framework is implemented through three comparable case studies for collaborative modular robotic assembly of reconfigurable building parts.

Keywords

Introduction

This research is rooted in the transdisciplinary field of cybernetics, significantly advanced in the 1960s and 1970s by figures like Gordon Pask, Cedric Price, Nicholas Negroponte, Christopher Alexander, John Frazer, and the Archigram group. Gordon Pask argued that the architect’s role is less about designing static structures and more about catalyzing environments that evolve over time. 1 He challenged the traditional view of architecture as a fixed material artifact, instead proposing it as a dynamic composition of interrelated systems regulated by feedback in a constantly changing environment. 2 Projects such as Cedric Price’s Fun Palace (1961) and Generator (1976) were designed as intelligent, adaptive architectural environments that could reconfigure themselves using mobile cranes controlled by cybernetic systems. Both Pask and Price focused on managing “indeterminacy,” exploring architecture’s capacity to adapt to and influence its inhabitants.3-5

This approach to architecture is especially relevant in today’s rapidly transforming world, with the construction industry increasingly pressurized by the global climate crisis, global housing shortage, increasing nomadic and aging populations, and a growing shortage of skilled labor. The human population is expected to grow to 8.6 billion in 2030, 9.8 billion in 2050, and 11.2 billion in 2100, requiring us to build over two billion homes in the next 80 years. 6 Meanwhile, an estimated 33% of the world’s waste and at least 40% of the world’s carbon dioxide emissions are produced by the construction industry. 7 While technological advances in computation, robotics, and AI have rapidly transformed other industries such as the automotive industry, the construction industry is notoriously slow in its uptake of disruptive technologies and remains one of the least digitized sectors.8–10 Most buildings are organized and constructed as a layered system called shearing layers 11 and designed with linear building life cycles, often resulting in buildings that are over-specified, inflexible to future changes in demand, and eventually demolished.12,13 Industrial research in computation, AI, and robotics for construction tends to be segmented within isolated processes at different stages of building design, fabrication, and construction. Addressing the unprecedented challenges we face requires radically changing the way we conceive, plan, and construct buildings, from linear processes used to produce static continuous objects to interrelational processes of developing reconfigurable buildings with adaptive life cycles.

Living systems in nature exhibit highly adaptive and efficient construction through the flexible assembly of simple parts made of sustainable materials. The extraordinary collective intelligence and robust and adaptive building techniques found in nature have inspired new multi-disciplinary approaches to construction with multi-robot systems categorized as collective robotic construction (CRC). CRC specifically concerns embodied, autonomous, multirobot systems that modify a shared environment according to high-level, user-specified goals integrating architectural design, the construction process, mechanisms, and control. By codesigning robots together with building systems, we can develop more adaptive, scalable, and reusable architecture. 14

The research presented in this paper builds on the notable advantages seen in collective construction of natural builders and growing research in CRC. The scope of this research is to establish and test a design framework for autonomous collaborative robotic construction (ACRC) with modular robotic material eco-systems (MRMES) trained with deep multi-agent reinforcement learning (DMARL). This design framework involves the integration of three core aspects: (1) modular robotic material eco-systems, (2) cyber-physical simulation and control with bidirectional feedback, and (3) adaptive intelligence through DMARL and world models. In this paper, we establish the framework and then implement it through three case studies for collaborative modular robotic assembly of reconfigurable building parts (Figure 1).

Autonomous Collaborative Robotic Construction (ACRC) case study 1, 2, and 3.

Background

Learning from nature: Autonomous ecologies of technology

The true potential for robotics and AI to transform the construction industry is limited by our conception of buildings as layered static structures where the value of robotics is constrained to automating unidirectional processes in isolated stages of construction. We propose a radical shift in paradigm toward a built environment developed as an eco-system of autonomous robots interacting with reversible building parts in continuously adaptive construction processes. Automation is derived from the Greek word “automaton”, meaning “self-movement,” while autonomy is derived from “autonomos”, meaning “self-law.” A shift from automated toward autonomous architecture is enabled by developing an effective interdependency of three main properties: facilitated variation, situated and embodied agency, and intelligence. 15 Facilitated variation in evolutionary biology is the observation that the biases in the intrinsic construction of an organism directly contribute to the effectiveness of its “evolvability”.16-18 At the scale of robotic construction, this translates to how effective the relationships of geometry, materials, connections, and degrees of freedom are between robots and architectural elements. Being autonomous requires agency, or the ability of the system to act by its own rules. This agency must be situated and embodied to act within the constraints of the physical world, through a bidirectional linkage between a precise simulation environment and a physical sensing and control system. Finally, autonomous construction is not simply procedural but must be adaptive, which requires an embedded intelligence that learns to adjust itself effectively to unforeseen circumstances. In this context, a distributed approach to intelligence aligns with deep multi-agent reinforcement learning19,20 and world models 21 where multiple robotic agents learn to collaborate toward shared goals under dynamic conditions in a shared environment.

Despite the rise of artificial intelligence today and a long history of debates and discussions, there is no standard definition of “intelligence.” 22 We still refer to the Turing Test as a conceptual measure of artificial intelligence, qualifying machine intelligence through its ability to exhibit intelligent behavior indistinguishable from that of a human. 23 In Ways of Being: Beyond Human Intelligence, James Bridle challenges our conventionally short-sighted understanding of intelligence as being synonymous with “what humans do” or “human intelligence”, and instead shifts our viewpoint to a posthuman perspective that considers many other forms of collaborative and distributed intelligence. 24

In the natural world, many forms of intelligence are decentralized from a single brain and are instead distributed, collective, and interrelational. For example, nomadic ant colonies forage for food to survive, facing extreme pressure to locate food sources and create foraging routes to effectively move and feed massive numbers of ants each day. They exhibit collective intelligence through stigmergy, spreading out to search for food while communicating successful routes through their environment by leaving pheromones. 25 They navigate complex environments and leverage their bodies as simple parts organized with local rules to construct adaptive “living bridges” (Figure 2), 26 while modulating their behavior in response to locally changing environments to adapt to dynamic traffic conditions, recover from damage, and disassemble when underused. 27

Living ant bridge.

The term “ecology” was first defined by Ernst Haeckel as “the whole science of the relations of the organism to the environment including, in the broad sense, all the conditions of existence.” 28 Bridle sees ecology as fundamentally different to other sciences in that it describes a scope and an attitude of study rather than a field which can be applied to many domains. He describes technology as the last field to discover its ecology calling for our need as a species to discover an ‘ecology of technology’, to form new relationships with technology and non-human intelligences to live with the world, rather than seeking to dominate it. 24 Shifting away from centralized intelligence and control to an ecological view where collective systems interact with each other opens new possibilities for leveraging distributed technologies.

In this research, autonomy is enabled through the bidirectional interdependencies of three core elements: (1) design of effective constraints, degrees of freedom, and compatibility of active and reversible passive parts (facilitated variation), (2) integration of a simulation, sensing, and control system with bidirectional feedback (situated and embodied agency), and (3) AI agents trained to make autonomous and collaborative decisions based on predictive simulation feedback and sensory feedback from the physical environment to directly control the system’s robotic parts (intelligence).

Designing robotic material eco-systems

Modular robotic material eco-systems are developed with dynamic parts and static parts capable of physically interacting with each other (Figure 3). Should a single complex robot be capable of conducting all the tasks in constructing a building, or would it be more effective to have collectives of simpler robots collaborating on distributed tasks? Should a construction robot’s body plan mimic that of a human, or are there more effective modular body plans that can be linked or decoupled on-the-fly? A key property of biological evolvability deals with “weak linkages” or “weak signals” as specific types of nonlinear interconnectability that lend a capacity for high flexibility in how systems, components, and processes are reconfigurable through gates and switches. 18 Tibbitts defines self-assembly as a process by which disordered parts build an ordered structure without humans or machines, suggesting that geometry and materials matter most,29,30 while Gershenfeld develops principles for self-assembly around the concept of “digital materials”, enabling reversibility and reconfigurability through computational models structuring the combinatorics of discrete parts. 31

Robotic material interface, case study 3.

We can define a modular robotic material system as having a complex and interrelational reciprocity between dynamic (robotic) elements and passive (material system) elements, wherein modularity in the robotic elements, the material system elements, and their connections enables effective individual and collective degrees of freedom for collaborative assembly, adaptation, and reconfiguration.

State of the art

Robotic manufacturing (off-site)

While global productivity has surged in many sectors such as manufacturing industries with increased adoption of automation and digitization in the past decade, it has remained virtually stagnant in the construction industry, where significantly less uptake of robotic technologies has rendered the industry one of the least automated of the leading industrial sectors.32–34 One of the main differentiating factors is that in general manufacturing there are many opportunities for high-volume mass production of parts, while in building construction this degree of repetition is rarely possible. 35 Robotic manipulation of materials in construction has focused largely on mass customization through the automation of prefabrication processes and cooperative robotic fabrication (CRF) in controlled environments, 36 including full-scale, collaborative, robotic assembly of timber structures using industrial robotic arms hung in mobile gantry systems 37; multi-robot assembly of geometrically differentiated metal space frame structures; complex prefabrication of components with robotic fiber winding 38; robotic timber sawing and assembly39,40; hot-blade and wire-cutting41,42; brick assembly 43; robotic concrete printing44–46; and metal forming. 47 Machine learning has been implemented in complex fabrication processes such as adaptive robotic carving 48; automation of scaffold lifting machines 49; and robotic wire arc additive manufacturing. 50 Deep reinforcement learning has been used with robotic arms in controlled environments for high-precision assembly tasks, 51 solving insertion tasks, 52 and autonomous block stacking with visual sensing. 52 While these systems enable gains in productivity and efficiency with high precision, they are typically limited by the scale and degrees of freedom of the robotic arms and gantry systems.

Robotic construction (on-site)

Models of multi-robot collaboration have begun to transition from automated prefabrication tasks to applications of robotics for automation and autonomous robotics in on-site construction. 53 Examples of robotic construction on-site tend to focus on semi-automated approaches to individual repetitive tasks such as on-site concrete 3D printing, 54 cable-driven robots for installation of curtain wall facades, 55 semi-automated bricklaying robots, 56 and robotic reinforcement placement, 57 among others. 8 While many of these systems offer improvements in efficiency and safety in repetitive and dangerous tasks, there are few examples of autonomous robotic systems capable of more complex interactions and collaboration. There are a growing number of companies invested in large-scale building 3D printing 58 as well as one example of reinforcement learning for the control of large-scale 3D printing with tower cranes. 59 While these solutions offer rapid and customizable construction, they require large-scale infrastructure and are typically not reversible. Other examples of semi-autonomous robotics used in construction include Spot from Boston Dynamics for accurately scanning and inspecting construction sites and FieldPrinter from Dusty Robotics, an autonomous layout robot that translates BIM to a layout drawn directly on-site.

Collective robotic construction (CRC)

Research in CRC systems is inspired by the ability of collective natural builders to offer efficient, scalable, and adaptive on-site construction. CRC systems leverage a range of centralized or decentralized coordination strategies across various codesigned robotic platforms and material systems. 14 Construction coordination includes centralized control, 60 local communication, 61 templated control, 62 and emergent coordination. 63 Principles have been extracted from biological construction and translated into the field of robotics through various algorithmic strategies such as stigmergy,64–71 swarm flight,72,73 templating,66,67 blind bulldozing,74,75 reactive and interactive construction,65,76–78 task allocation,79–81 and specialization. 82 Building element strategies for CRC include using predefined elements 70; amorphous materials 83; and continuous elements, 84 which then translate into a range of mechanisms and material strategies such as robot/brick codesign83,85–90; strut climber codesign91–93; compliant materials66,67,94; amorphous depositions 94; and fibers.95–99 Embodied swarm intelligence has been demonstrated through robot reconfiguration with robotic builders navigating over the simple blocks they stack. 100 The term “relative robot” relates to a co-dependency between a robotic system and material system for locomotion and assembly through its structured environment, such as BILL-E robots climbing on the modular lattice structure they assemble, 87 autonomous strut-climbing robots that climb over the trusses they construct, 92 and simple distributed robotic joints leveraging passive timber elements for kinematic chaining and locomotion.101,102 Distributed aerial robots have been developed for coordinated construction, brick stacking, and other assembly tasks103,104 as well as assembly with reconfigurable elements. 105 Finally, swarm intelligence through aerial additive manufacturing (Aerial-AM) employs high degrees of freedom through teams of aerial robots with coordinated 3D printing, but the resulting structures are not reversible or adaptable.72,73

Researchers have experimented with colonies of social insects to study how they work collectively to design, communicate, and coordinate the construction of their complex living environments. 106 In natural builders, it has long been debated to what degree there is an “overall plan” of construction, considered as the “mental image hypothesis”, versus an entirely bottom-up process of “stimulus and response”.106,107 Due to the complex requirements of buildings, we separate the global design intent of the building from the localized scope of robotic agents. We develop degrees of autonomy through two separate yet tightly linked processes: (1) generative design processes for “goal assembly states” and (2) autonomous decision-making processes of robotic agents for collaboratively building/reconfiguring the “goal assembly states”. This paper focuses primarily on the latter.

Methods/research

Our design framework integrates the properties of facilitated variation, situated and embodied agency, and intelligence 15 through three core aspects: (1) modular robotic material eco-systems, (2) cyber-physical simulation and control with bidirectional feedback, and (3) adaptive intelligence through deep multi-agent reinforcement learning and world models. We present the implementation of this framework in three case study research projects. Case study 1, 108 Autonomous Collaborative Robotic Reconfiguration with Deep Multi-Agent Reinforcement Learning [ACRR + DMARL], was developed in 2022, while case study 2, titled ReSpace, and case study 3, titled Stigmergic Spaces, were developed in 2023.

All three case studies were developed in parallel and follow an iterative, agile development approach. They are developed as different robotic material systems with different typologies and degrees of freedom, testing their degrees of flexibility and efficiency while considering the range of potential geometric complexity of their architectural outcomes. For example, case study one hypothesized that groups of a single type of very simple modular robot benefit from fewer constraints on movement and connectivity in collaboration, while case study two investigated the potentially large efficiency gains offered by two additional types of robots.

Each system must physically demonstrate robotic self-locomotion, material assembly, and collaborative reconfiguration with limited human intervention in a variety of conditions and demonstrate degrees of autonomy through semi-autonomous cyber-physical control tests, sensor feedback tests, and autonomous decision-making tests. A variety of increasingly complex tasks were set up to evaluate further degrees of success both digitally and physically over several development cycles. Case studies were initially evaluated on their ability to complete simple tasks, such as moving from one location to another. As task complexity increased, efficiency metrics, including the accumulated degrees of motor rotation and energy consumption, were used to compare behaviors within and across the case studies.

Facilitated variation: Co-design of modular robot material eco-systems

Modular robotic material ecosystems consist of active parts (bespoke distributed robots) and passive parts (reversible material units), designed to self-assemble and reconfigure architectural structures significantly larger than the robots themselves. In this work, we are not setting out to create fixed structures through additive deposition like termites or to assemble temporary structures out of found materials like weaver birds, but instead to learn from natural builders’ strategies to develop a reconfigurable construction system of mass-producible parts that can be adapted over time through simple reversible connections. The key to this process is in developing compatible end effectors, joints, and interfaces between active parts and passive parts which enable robot-robot, robot-passive part, and passive part-passive part connections. From this principle, a large variety of geometries, fabrication techniques, and materials could be explored. Robotic material ecosystems form their own structured environments within larger unstructured environments. Active parts can move themselves through “off-grid” self-locomotion in unstructured environments while also using the structures they assemble for locomotion (Figure 4).

CRC Behaviour sampling: On-grid versus off-grid adaptability, case study 1.

Case study 1

Case study one features a simple modular robot and passive reversible timber building blocks of varying geometries in three sizes: 280 × 140 × 140 mm, 420 × 140 × 140 mm, and 560 × 140 × 140 mm (Figure 5). Each robot measures 280 × 100 × 100 mm, weighs 420 g, and consists of three rigid bodies 3D printed in polylactic acid (PLA) with two magnetic end effectors. These elements are connected by four joints actuated by Dynamixel AX-12A motors, powered via a U2D2 Power Hub, and controlled with a Raspberry Pi Zero.

Modular robotic material system, active and passive parts, case study 1.

Case study 2

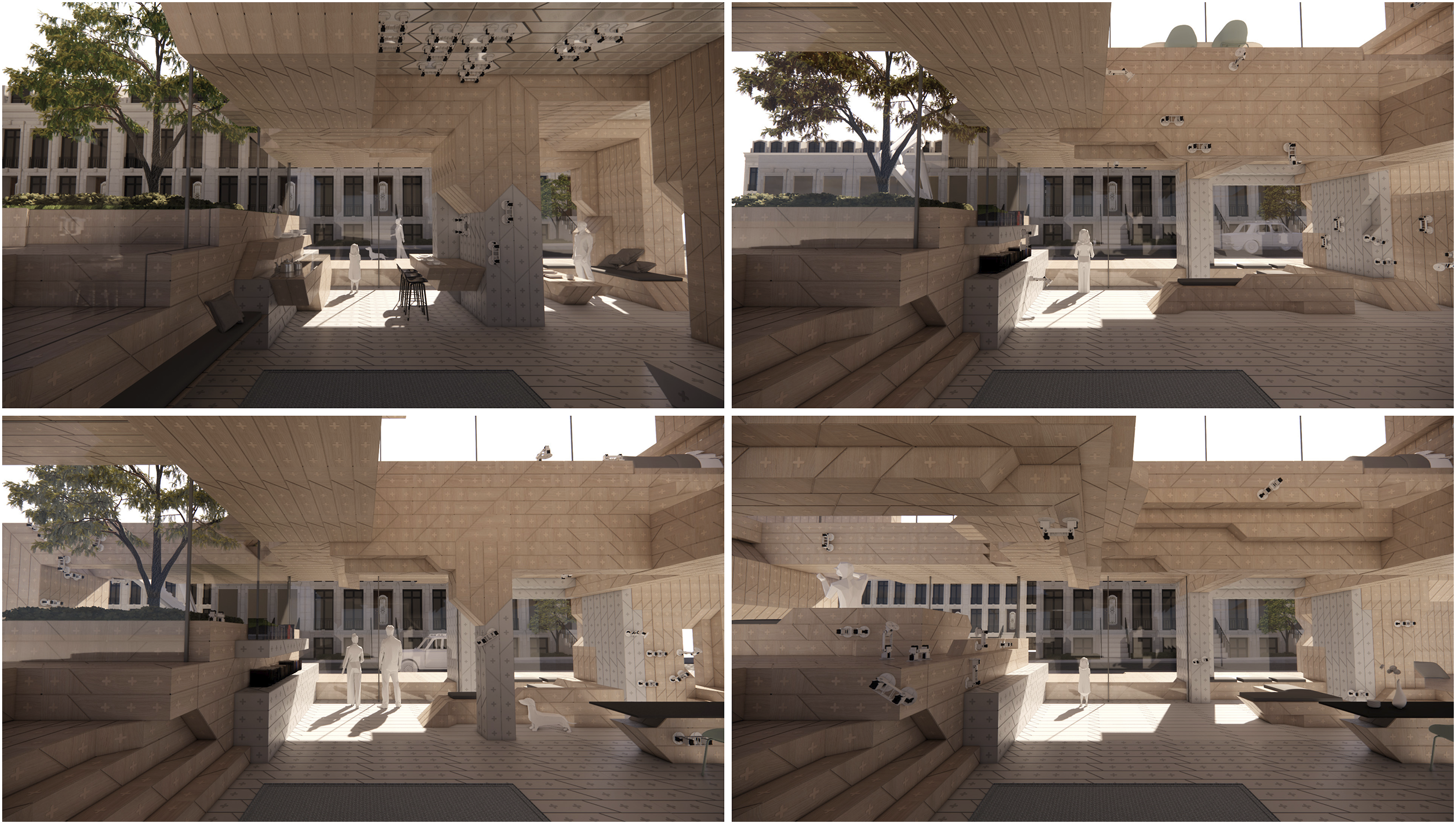

Case study two is developed with three types of modular robots (track, wheel, and arm) and three types of passive reversible building blocks with functions related to navigation, structure, and adaptability (Figures 1, 6, and 7).

Modular robotic material system, active parts, wheel robot, track robot, arm robot, case study 2.

Track robot (Type 1) measures 100 × 100 × 70 mm with upper and lower surfaces 3D printed in PLA. It features two end effectors: a gear system and ratchet locking tool on the bottom, and a simple magnetic interface on the top. The gear system enables track-based sliding navigation, and the ratchet locking tool facilitates locking onto the track.

Wheel robot (Type 2) measures 100 × 100 × 115 mm with 3D printed PLA surfaces. It includes two wheels and two universal ball bearing wheels on the bottom for stable driving navigation and direction changes, and a magnetic interface on the top.

Arm robot (Type 3) measures 100 × 100 × 380 mm with four rigid bodies 3D printed in PLA and two magnetic end effectors. These six elements are articulated by five joints with one degree of freedom, enabling climbing navigation and material manipulation.

The passive blocks, developed with reversible connections and integrated navigation tracks, come in three types (Figure 7).

Modular robotic material system, passive parts, case study 2.

Block type 1 is a cubic geometry (200 × 200 × 200 mm) with tracks and magnetic interfaces on each face.

Block type 2 is a cubic geometry (200 × 200 × 200 mm) with magnetic interfaces on some faces and voids on the bottom, allowing the wheel robots to drive underneath and move vertical stacks of blocks.

Block type 3 is a beam/column-like geometry (200 × 200 × 1000 mm) with tracks and magnetic interfaces on each face.

Case study 3

Case study 3 has a more complex modular robot and a single oblique type of passive timber block in various sizes (Figure 8). Each robot measures 360 × 120 × 120 mm, with a similar body plan and similar mechatronic control components to case study 1, but with eight Dynamixel AX-12A motors and more complex end effectors integrating both wheels and retractable magnetic interfaces. The magnetic interfaces allow connections with the passive blocks, while the wheels enable sliding and free navigation.

Modular robotic material system, active and passive parts, case study 3.

Common features

In all case studies, all passive and active parts share a common magnetic interface with four neodymium magnets, universally enabling all robot-robot, robot-passive part, and passive part-passive part connections. Magnets are placed with alternating polarities equidistantly along a circle’s circumference. Aligned equivalent polarities (position 0) repel, while opposite polarities (position 1) connect. A 90° rotation enables parts to engage and disengage. Robot-robot connections facilitate collaborative alignment for complex navigation and assembly. These interactions enable emergent collaborative behaviors, including robotic translations, material pick-ups, material and robot transportation, temporary support structures, and assemblies of robots and material units into joint body plans (Figure 12).
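The engage and disengage behavior of the shared interface can be illustrated with a minimal sketch. It assumes an idealized model that is not taken from the case studies: four magnets at 0°, 90°, 180°, and 270° with alternating polarity, two identical interfaces facing each other, and only quarter-turn relative rotations. The names `POLARITIES` and `interface_state` are hypothetical.

```python
from typing import List

# Assumed idealized layout: N, S, N, S at 0, 90, 180, 270 degrees.
POLARITIES: List[int] = [+1, -1, +1, -1]


def interface_state(rotation_deg: int) -> str:
    """Return 'repel' or 'connect' for a quarter-turn relative rotation.

    Each magnet i on one interface faces magnet (i + k) mod 4 on the other,
    where k is the number of quarter turns. Equal polarities repel and
    opposite polarities attract.
    """
    if rotation_deg % 90 != 0:
        raise ValueError("this sketch only models quarter-turn rotations")
    k = (rotation_deg // 90) % 4
    products = [POLARITIES[i] * POLARITIES[(i + k) % 4] for i in range(4)]
    if all(p == +1 for p in products):
        return "repel"      # position 0 in the text: equal polarities aligned
    if all(p == -1 for p in products):
        return "connect"    # position 1 in the text: opposite polarities aligned
    return "mixed"          # unreachable for an alternating four-magnet ring


if __name__ == "__main__":
    for angle in (0, 90, 180, 270):
        print(angle, interface_state(angle))
```

Under these assumptions every 90° turn toggles the interface between the repelling and connecting positions, which is consistent with the single rotation used to engage and disengage parts.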

Situated and embodied agency: Cyber-physical simulation, sensing, and control system

Each modular robotic material ecosystem is integrated with a cyber-physical control system with four main elements: a control computer running a Unity-based simulation environment, a Raspberry Pi Zero per robot, a Dynamixel U2D2 per robot, and several Dynamixel AX-12A motors per robot (Figure 9). The custom agent-based simulation environment is developed in Unity to simulate interactions of robotic agents and passive parts within the constraints of the physical world while establishing bidirectional communication between the simulation environment, Raspberry Pi, and the Dynamixel hardware using the Dynamixel SDK. The simulator calculates behavioral changes in robots and building parts, sending sequenced motor speed and position changes via Wi-Fi to each Raspberry Pi. These instructions are dispatched through USB connections to the U2D2s, which convert the data and feed it into the motors via 3-pin TTL and 4-pin RS-485 connectors.

Cyber-physical simulation, sensing, and control with bidirectional communication.
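As an illustration of the simulator-to-robot leg of this pipeline, the following sketch converts simulated joint angles into AX-12A goal-position values (0 to 1023 over a 300° range in joint mode) and packs them into a message. The JSON layout, the field names, and the functions `degrees_to_goal_position` and `build_command` are assumptions made for illustration; the message format actually used in the case studies is not specified here.

```python
import json
from typing import Dict

# AX-12A joint mode: goal positions 0..1023 span a 300 degree range.
AX12A_MAX_POSITION = 1023
AX12A_RANGE_DEG = 300.0


def degrees_to_goal_position(angle_deg: float) -> int:
    """Convert a joint angle in degrees to an AX-12A goal-position value."""
    if not 0.0 <= angle_deg <= AX12A_RANGE_DEG:
        raise ValueError(f"angle {angle_deg} outside 0..{AX12A_RANGE_DEG} degrees")
    return round(angle_deg / AX12A_RANGE_DEG * AX12A_MAX_POSITION)


def build_command(robot_id: str, joint_angles_deg: Dict[int, float], speed: int) -> str:
    """Serialize one motion step as JSON (hypothetical message layout)."""
    goals = {
        str(motor_id): degrees_to_goal_position(angle)
        for motor_id, angle in joint_angles_deg.items()
    }
    return json.dumps({"robot": robot_id, "speed": speed, "goals": goals})


if __name__ == "__main__":
    # One step for a robot with two joints; the string could then be sent
    # over Wi-Fi to the Raspberry Pi that drives the motors.
    print(build_command("robot-1", {1: 150.0, 2: 0.0}, speed=100))
```

A receiving process on each Raspberry Pi would parse such a message and write the values to the motors through the Dynamixel SDK; that step is omitted because it depends on hardware-specific setup.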

Social insects such as termites are found to communicate with each other through touch, vibration, and pheromone deposition, as well as taking visual stimuli from their built environment to activate a building response. 106 Achieving a situated and embodied agency that is adaptive requires feedback not only in the simulator, but also from the physical world. In our system this degree of autonomy is enabled through feedback from both motor sensors and visual sensors. The Dynamixel AX-12A motors have built-in sensors that read their positions, speeds, and loads, feeding data back through the same pipeline to the simulation environment. This feedback verifies goal achievement and detects critical loads or obstacles. If a motor encounters an obstacle, the system updates the simulator to recognize and resolve the issue.
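The motor feedback check described above can be sketched as a small classifier over each motor reading. This is a minimal illustration, not the authors' implementation: the `MotorFeedback` structure, the `classify_feedback` function, the position tolerance, and the load limit are all hypothetical values chosen for the example.

```python
from dataclasses import dataclass


@dataclass
class MotorFeedback:
    """One reading returned by a motor (hypothetical structure)."""
    motor_id: int
    goal_position: int      # raw position units commanded by the simulator
    present_position: int   # raw position units reported by the motor
    present_load: float     # fraction of maximum torque, 0.0 to 1.0


def classify_feedback(fb: MotorFeedback,
                      position_tolerance: int = 5,
                      load_limit: float = 0.8) -> str:
    """Classify a reading as 'overloaded', 'reached', or 'moving'.

    The tolerance and load limit are assumed values for illustration.
    A high load is checked first, because a blocked motor may sit close
    to its goal while still pushing against an obstacle.
    """
    if fb.present_load >= load_limit:
        return "overloaded"
    if abs(fb.goal_position - fb.present_position) <= position_tolerance:
        return "reached"
    return "moving"


if __name__ == "__main__":
    reading = MotorFeedback(motor_id=3, goal_position=512,
                            present_position=430, present_load=0.92)
    print(classify_feedback(reading))
```

In a loop of this kind, an "overloaded" result would be the trigger for updating the simulator with the detected obstacle, while "reached" confirms that the simulated and physical states agree.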

Visual sensory feedback was developed using OptiTrack 109 motion capture to improve precision and adaptiveness (Figure 14). This system includes a Motive 110 motion capture session on the control computer, four OptiTrack cameras, and reflective markers on the parts of the robotic material system. The cameras track part movements, sending 2D images of the markers to the control computer. Motive triangulates the markers’ 3D positions, which are passed to the simulator using the OptiTrack Unity 3D Plugin and NatNet SDK protocol.

The simulation environment measures efficiencies related to navigation and assembly, such as accumulated motor rotations, energy use, speed, and distance traveled. Additionally, we developed a real-time structural analysis tool that calculates the forces and torque running through the assemblies whenever they are changed (Figure 11). These tools provide a feedback mechanism to evaluate behavioural efficiencies and structural performance, which are leveraged in the agents’ machine learning training and predictive decision making.

Intelligence: Collaborative Intelligence with deep multi-agent reinforcement learning

The integration of the robotic material ecosystem with the simulation, sensing, and control system enables both human-controlled and procedurally sequenced automation to robotically assemble and reconfigure physical structures. Moving toward autonomy requires the active agents in the system to make intelligent decisions in relation to their observations. Researchers have found that social insects operate collectively through decisions enabled by social communication and environmental stimuli, while coordinating work in specialized teams. For example, social wasps build nests from paper pulp, which involves three subtasks: (1) actual nest building, (2) foraging wood pulp, and (3) collecting water to adjust the pulp consistency. Marked wasp experiments have shown that these tasks are performed by specialized groups, while also showing that workers actively switch task groups when there are changes in demand. 111 This follows a principle called “series-parallel”, where several individuals can independently, but in parallel, begin and end a task. 106

Similarly, considering each robotic material system as a distributed collective opens the potential for parallel construction processes with complex collaborative behaviors. Considering the complex solution spaces for adaptive and autonomous robotic planning of adaptive structures built from biased reconfigurable parts within dynamically changing construction environments, we targeted deep reinforcement learning (DRL) to train situated and embodied agents through self-play to learn adaptive locomotion and reconfigurable construction behaviors. 20 Considering a larger eco-system with various multiples of robots capable of collaborating through complex sequences of emergent coordinated movement, we extend this to deep multi-agent reinforcement learning (DMARL), a sub-field of DRL focused on the study of the behavior of multiple agents that coexist in a shared environment. Each agent is motivated by its own rewards, taking actions based on its interests, where those interests can be aligned with or opposed to other agents’ interests, resulting in complex dynamics. 19

Agent-based decision making was developed through a series of task-based games set up in the simulator. We trained individual agents and groups of agents with the same reward structure, enabling them to learn emergent strategies for individual behaviors as well as when and how to collaborate. DMARL with self-play is set up through a series of simulated scenarios with single agents and teams of agents for navigation, pathfinding, assembly, and reconfiguration tasks of increasing complexity (Figures 10 and 17). Each robotic agent seeks to complete the task of the scenario by taking situated and embodied actions while receiving observations from the simulation environment. The results are evaluated by speed and energy efficiency (degrees and rate of motor changes) to find high-performing behaviors. Single agents and multi-agent teams are trained using the ML-Agents framework in Unity 3D, 112 leveraging the simulation environment as a training gym where at each step a deep neural network (DNN) takes observations (o), outputs executable actions (a), and receives a reward (r) dependent on its incremental performance towards the goals of the scenario. The simulation tracks the time elapsed (t) and total absolute motor angular change (∑|Δa|), which serve in the reward function to promote speed and energetic efficiency. The positions of passive parts and agents are randomized in each training run to build adaptive and scalable behaviors in relation to different environments and scenarios.

[Figure: Collaborative intelligence with deep multi-agent reinforcement learning, training arena.]
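As a minimal sketch of how elapsed time (t) and total absolute motor angular change (∑|Δa|) could enter a per-step reward, the following Python example combines a progress term with time and energy penalties. The weights, the distance-based progress term, the goal bonus, and all names (`RewardWeights`, `step_reward`, `total_abs_angular_change`) are illustrative assumptions; the reward actually used in the case studies is not specified here.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass
class RewardWeights:
    # Hypothetical weights chosen for illustration only.
    progress: float = 1.0     # reward per unit of distance gained toward the goal
    time: float = 0.001       # penalty per simulation step (promotes speed)
    energy: float = 0.0005    # penalty per degree of motor change (promotes efficiency)
    goal_bonus: float = 10.0  # one-off bonus when the scenario goal is reached


def total_abs_angular_change(prev_angles: Sequence[float],
                             new_angles: Sequence[float]) -> float:
    """Sum of |Δa| over all motors for one step, in degrees."""
    return sum(abs(n - p) for p, n in zip(prev_angles, new_angles))


def step_reward(prev_dist: float, new_dist: float,
                prev_angles: Sequence[float], new_angles: Sequence[float],
                reached_goal: bool,
                weights: Optional[RewardWeights] = None) -> float:
    """Per-step reward: progress toward the goal minus time and energy penalties."""
    w = weights or RewardWeights()
    reward = w.progress * (prev_dist - new_dist)  # incremental progress
    reward -= w.time                              # one step elapsed
    reward -= w.energy * total_abs_angular_change(prev_angles, new_angles)
    if reached_goal:
        reward += w.goal_bonus
    return reward
```

Under these assumed weights, a step that closes distance with little motor movement scores higher than one that covers the same distance with large rotations, which is the trade-off the speed and energy terms are meant to express.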

Degrees of autonomy were added by identifying the highest performing players, strategies, and behaviors in each game and incorporating them as part of the decision-making process in subsequent games. For example, in the navigation game, an agent trained to rotate its motors in sequences that move it to the target discovers a distinctive sequenced pattern of rotations that is found to be most efficient. In the subsequent games, this “patterned sequence” is brought forward as an actionable behavior. Likewise, an A* pathfinding algorithm 113 is found to be most efficient for calculating a path and is therefore brought forward as one part of the AI agent’s decision-making in subsequent games. By comparing trained agents with human players and algorithmic decision making, we identify opportunities to incorporate algorithms and human-robot collaboration in the decision making.
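For readers unfamiliar with A*, the following is a minimal, generic sketch on a 2D occupancy grid. The grid representation, the 4-connected moves, and the Manhattan heuristic are assumptions made for illustration; they are not the simulator's implementation, which operates on the robots' own environment.

```python
import heapq


def astar(grid, start, goal):
    """Shortest 4-connected path on a grid of 0 (free) and 1 (blocked) cells.

    Returns the list of cells from start to goal, or None if unreachable.
    """
    rows, cols = len(grid), len(grid[0])

    def heuristic(cell):
        # Manhattan distance is admissible for unit-cost 4-connected moves.
        return abs(cell[0] - goal[0]) + abs(cell[1] - goal[1])

    open_heap = [(heuristic(start), 0, start)]  # (f = g + h, g, cell)
    came_from = {}
    best_cost = {start: 0}

    while open_heap:
        _, cost, current = heapq.heappop(open_heap)
        if current == goal:
            # Walk the parent links back to the start.
            path = [current]
            while current in came_from:
                current = came_from[current]
                path.append(current)
            return path[::-1]
        if cost > best_cost.get(current, float("inf")):
            continue  # stale heap entry, a cheaper route was already found
        r, c = current
        for nr, nc in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            if 0 <= nr < rows and 0 <= nc < cols and grid[nr][nc] == 0:
                new_cost = cost + 1
                if new_cost < best_cost.get((nr, nc), float("inf")):
                    best_cost[(nr, nc)] = new_cost
                    came_from[(nr, nc)] = current
                    heapq.heappush(
                        open_heap,
                        (new_cost + heuristic((nr, nc)), new_cost, (nr, nc)),
                    )
    return None
```

A path returned this way could then be handed to a learned locomotion behavior as a sequence of waypoints, which is one plausible reading of how an algorithmic planner and a trained policy can share the decision making.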

Additional degrees of autonomy were explored in case study 3 by incorporating structural analysis as feedback in the decision-making process, so that agents learn to incrementally assemble structures that maintain stability using a real-time force prediction at each step. Each time a part is added or moved, the simulator measures the force and torque values running through each connection node in an assembly of building parts (Figure 11). A dataset of assemblies was procedurally generated in which each assembly contains an embedded graph representation with connection node positions, and force and torque values stored as metadata. The dataset was used to train a graph neural network to predict the optimal next connection at each step that would increase or maintain stability (Figure 11). This prediction contributes to the agent’s autonomous decision-making strategy.

[Figure: Structural simulation for deep multi-agent reinforcement learning with force and torque feedback.]
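The graph representation described above can be sketched as a small data structure. This is an illustrative assumption about the layout, not the dataset's actual schema: the class and field names are hypothetical, and `predict_instability` is a stand-in callable in place of the trained graph neural network.

```python
from dataclasses import dataclass, field


@dataclass
class ConnectionNode:
    position: tuple      # (x, y, z) of the connection node
    force: float = 0.0   # force magnitude through the node (metadata)
    torque: float = 0.0  # torque magnitude through the node (metadata)


@dataclass
class AssemblyGraph:
    nodes: dict = field(default_factory=dict)  # node id -> ConnectionNode
    edges: set = field(default_factory=set)    # one frozenset({a, b}) per connection

    def add_node(self, node_id, node):
        self.nodes[node_id] = node

    def connect(self, a, b):
        # Connections are undirected, so store them order-independently.
        self.edges.add(frozenset((a, b)))


def choose_next_connection(candidates, predict_instability):
    """Return the candidate with the lowest predicted instability score.

    `predict_instability` is a placeholder for a learned model: any callable
    that maps a candidate connection to a scalar, where lower means more stable.
    """
    return min(candidates, key=predict_instability)
```

For example, with a lookup table standing in for the model, `choose_next_connection(["n1", "n2"], {"n1": 0.8, "n2": 0.3}.get)` selects `"n2"`.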

Results and discussion

Case studies: Facilitated variation: modular robot material eco-systems

Case study 1

The simple robot demonstrates a comprehensive range of single-agent and multi-agent behaviors (Figure 12):
• Navigation / Locomotion: Individual robots navigate in unstructured environments through rolling and stepping sequences. They navigate in all orientations using stepping, hanging, and climbing behaviors on structured assemblies. Multi-robot teams demonstrate collaborative navigation by connecting to translate each other’s bodies to traverse inclined planes, larger gaps, and obstacles.
• Block Transport / Assembly: Individual robots can manipulate and place small and medium (∼460 g) passive parts, but cannot transport parts continuously while moving themselves, so they repeat sequences of lifting, placing, and walking around or over each block. Larger passive parts (∼670 g) must be lifted and manipulated by multiple robots through coordinated picking up and counterweighted sequencing. In collaboration, robots pass and transport passive parts in sequences about 300% faster than individual robots.

[Figure: Collaborative robot behaviours, case study 1.]

Case study 2

This system’s three types of robots and three types of passive parts have a range of individual abilities and constraints. Collaboratively, the three systems combine driving, sliding, and passing sequences, offering extra degrees of efficiency and flexibility in complex assemblies (Figures 1 and 13):
• Navigation / Locomotion: Wheel robots move freely on surfaces, avoiding obstacles. Track robots slide efficiently in-plane but are constrained by the track arrangements. Arm robots demonstrate similar abilities to case study 1. Collaboration between these systems enables wheel robots to efficiently carry arm or track robots from one location to another, track robots to attach to arm robots and slide them efficiently across planes, and arm robots to lift and pass other arm, wheel, or track robots from one location to another and place them on different planes.
• Block Transport / Assembly: Only arm robots can place and assemble blocks, as in case study 1. Wheel and track robots transport passive parts by driving or sliding, while arm robots carry, pass, and move materials similarly to case study 1. Block type 2 units feature ground-level voids with assembled parts on top. This design allows wheel robots to individually access and transport whole stacks or work together to move larger complex assemblies without disassembly, significantly boosting reconfiguration efficiency.

[Figure: Multi-type collaborative robot behaviours, physical and digital visualization, case study 2.]

Case study 3

This system acts similarly to case study 1 while adding wheel-based navigation to the climbing robot (Figure 1):
• Navigation / Locomotion: Combines wheel-based navigation, which allows efficient free movement in unstructured environments, with individual and collaborative behaviours similar to case study 1 over structured assemblies.
• Block Transport / Assembly: Combines wheel-based navigation for efficient block transport in unstructured environments with individual and collaborative behaviours similar to case study 1 for placing and assembling blocks.

The light weight and simplicity of case study 1’s robotic material eco-system make it flexible for assembly and reconfiguration and simple to coordinate adaptive sequences through local neighborhood rules, but its single arm robot type is less efficient for in-plane locomotion or for transporting materials without collaborative passing sequences. The system leverages the clustering of robots and material units into combined body plans and hybrid material-robotic morphologies, surpassing the capabilities of basic collaboration. Robots operate as each other’s elevators, bridges, and cranes; combine with material units to move in clustered arrangements, such as bipedal or hexapedal clusters; or place material units or themselves as temporary scaffolding during reconfiguration processes (Figure 12). While case study 3 gains a degree of freedom of movement through its wheels, its added weight makes it less efficient in performing collaborative behaviours. Case study 2’s three types of modular robots and passive parts have a variety of combinatoric abilities, adding higher degrees of complexity through the location planning of specific block types for material transport. With this added complexity come many opportunities for significant gains in efficiency through sliding and driving for material transport, as well as using wheel robots to move whole stacks or complex assemblies along flat ground surfaces for larger scale reconfiguration (Figures 1 and 13).

Case studies: Situated and embodied agency: Cyber-physical simulation and control system

In case studies 1 and 3, the simulator was developed as a multi-agent system with a single robot type and calibrated with the physical robots. In case study 2, a variety of actions were developed for the three robotic agent types, and a method was developed for evaluating the current state of an assembly and calculating the shortest paths by extending the A* pathfinding algorithm 113 along available surfaces in the assembly. Case study 3 incorporated the simulation of forces and torques running through assemblies as real-time structural feedback. Case study 1 was developed with feedback from an OptiTrack camera system for motion capture, which was applied in a series of experiments that systematically evaluated different tracking setups while activating specific sequences based on the positioning data of four robots and 18 material units (Figure 14). The value of the additional sensing lies in greater precision and adaptability to unpredictable physical environments, which was validated in the experiments with an average increase of ∼350% in sequence activation.

[Figure: OptiTrack camera sensing with Motive, integration into simulation environment, case study 1.]

Case study 1 developed a series of cyber-physical reconfiguration tasks at 1:1 scale with five robotic prototypes collaborating to assemble and reconfigure 50 material units into a variety of assemblies up to 3 m in height (Figure 15). In case study 2, a series of reconfiguration tasks were demonstrated through the collaboration of three robots (one wheel robot, one track robot, and one arm robot) and a variety of 25 blocks of types 1 and 2 (Figure 16). In case study 3, a series of assembly demonstrations were set up with two robots and groups of 5-10 passive parts.

[Figure: Multi-robot collaborative assembly and reconfiguration demonstrator, case study 1, five robots and 50 passive parts.]

[Figure: Multi-robot collaborative assembly and reconfiguration demonstrator, case study 2, three robots (one wheel robot, one track robot, and one arm robot) and a variety of 25 blocks of types 1 and 2.]

Case studies: Intelligence: Collaborative intelligence with deep multi-agent reinforcement learning

Each of the three case studies developed degrees of autonomy through trained AI agent decision making. In case study 1, DMARL was set up for individual and collaborative behaviors for a variety of scenarios in the simulator and the models were trained with approximately five million runs on average, enabling the DNN to learn a series of policies that maximize the rewards to achieve a series of goals.

Case study 1 initially implemented four training “games” of increasing complexity related to navigation (game 1), pathfinding (game 2), single agent assembly (game 3), and multi-agent collaborative reconfiguration (game 4), described in detail elsewhere 108 (Figures 10 and 17). In game 1 (Open Navigation), two efficient locomotion behaviors emerged: (1) the “straight flip,” which uses 360° of total absolute angular change (∑|Δa|) over 260 frames (t) for each 140 mm of movement, and (2) the “tilted turn,” discovered by the trained neural network player, which reduced the total absolute angular change (∑|Δa|) to 190° over the same distance in 290 frames (t). In game 2 (Pathfinding), the A* algorithm 113 was more successful than the trained agent and was brought forward in subsequent games. In game 3 (Single Agent Assembly), several robot-part behaviors emerged from the trained agent, including the “side-roll” as a material transportation strategy. In game 4 (Multi-Agent Collaborative Reconfiguration), the AI agents consistently had the highest success rates, revealing many emergent and high-performing collaborative behaviors, from a variety of material passing strategies to collaborative robot-unit translations such as the “carry-on” pass. The model was then implemented with six fully trained robotic agents deployed to work together to create a skylight opening within a large, closed aggregation “slab”. The group autonomously coordinated the reconfiguration of 23 material units of diverse sizes to transform a flat roof into a 1.5 m skylight in 1 min 54 s (Figure 18).

[Figure: Deep multi-agent reinforcement learning training for navigation, pathfinding, simple assembly, and collaborative assembly, case study 1.]

[Figure: Six trained robotic agents coordinated reconfiguration of a slab into a skylight, case study 1.]
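The trade-off between the two game 1 locomotion behaviors can be made explicit with a short calculation. The sketch below uses only the figures reported above (angular change, frames, and 140 mm per sequence); the variable and function names are illustrative.

```python
STEP_MM = 140  # distance covered per locomotion sequence, as reported above

# Reported totals per 140 mm of movement.
BEHAVIORS = {
    "straight flip": {"angle_deg": 360, "frames": 260},
    "tilted turn": {"angle_deg": 190, "frames": 290},
}


def cost_per_mm(name):
    """Return (degrees of motor change per mm, frames per mm) for a behavior."""
    b = BEHAVIORS[name]
    return b["angle_deg"] / STEP_MM, b["frames"] / STEP_MM


def relative_change(new, old):
    """Fractional change from old to new (negative means a reduction)."""
    return (new - old) / old


angle_change = relative_change(
    BEHAVIORS["tilted turn"]["angle_deg"], BEHAVIORS["straight flip"]["angle_deg"]
)
frame_change = relative_change(
    BEHAVIORS["tilted turn"]["frames"], BEHAVIORS["straight flip"]["frames"]
)

print(f"angular change: {angle_change:+.1%}")  # about -47.2%
print(f"frames:         {frame_change:+.1%}")  # about +11.5%
```

On these numbers, the “tilted turn” uses roughly 47% less total motor rotation than the “straight flip” while taking about 12% more frames to cover the same distance.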

Game 5 (Complex Collaborative Reassembly) has since been developed, in which two agents must collaborate to reconfigure a wall from one location to another with a diverse range of parts (Figure 19). The model was trained with DMARL to approach the problem with three different strategies: (1) a reinforced sequence selection model (RSSM) with specific objective positions (SOP), (2) RSSM + SOP + a block ordering algorithm (BOA), and (3) RSSM + flexible objective positions (FOP). Strategy 1 developed an emergent “mid-stack pass” behavior but repeatedly failed, with agents struggling to place the block correctly. Strategy 2 was typically successful in placing blocks “exactly” according to a guiding plan with a coordinated “walk-pass-place” pattern, but took a long time to complete. Finally, strategy 3 was successful in placing the blocks within the volume in different orientations in half the time.

[Figure: Deep multi-agent reinforcement test sample for complex collaborative reassembly, testing three strategies on parapet reconfiguration of six blocks by two robotic agents.]

Case study 2 developed single and multi-agent reinforcement learning strategies for locomotion, pathfinding, and assembly, beginning with training individual robot types. The simulation environment was used as a training gym with varied arrangements of obstacles, starting points, and goals constrained by the three robot types. Training scenarios were extended from single to multi-agent collaboration, including rearranging whole “stacked” assemblies using multiple wheel-based robots operating on the ground plane. Next, the model was trained with all three types of robots collaborating to move a single part from a randomized starting point at ground level to various goal positions within a wall (Figure 20). Finally, a team of two wheel robots, two track robots, and two arm robots was trained to fully reconfigure 36 blocks (27 of block type 1 and 9 of block type 2) between two different locations and configurations using a combination of individual and collaborative behaviors. The trained agents successfully reconfigured a large block into a wall with openings through coordinated behaviors, initially breaking the assembly into large stacks moved by wheel robots from one location to another, followed by a series of collaborative sequences that used track robots to slide parts and arm robots vertically and arm robots for precise assembly (Figure 20).

[Figure: Six trained robotic agents reconfigure 36 blocks between two different locations and configurations, case study 2.]

Finally, case study 3 added a different degree of autonomy by leveraging a graph neural network trained with a dataset of assemblies created by generating many arrangements of its passive building parts and their structural metadata (Figure 11). Here the agent is given a prediction of the potentially most “stabilizing nodes” while trying to assemble a structure toward a goal position. This resulted in a range of emergent outcomes of different sizes which closely approach, but do not exactly match, the goal while maintaining stability at each step (Figure 21). This force feedback allows a multi-agent team to learn how to coordinate the construction sequence while maintaining stability.

[Figure: Deep multi-agent reinforcement with force feedback integration, generative outcomes, case study 3.]

Conclusion

The transition from designing architectural robotics for isolated automation to developing ecologies of autonomous robotic agents interacting with simple, reconfigurable building parts offers new opportunities for adaptive and scalable construction. This was demonstrated in three case studies using our framework for Autonomous Collaborative Robotic Construction (ACRC) with Modular Robotic Material Ecosystems (MRMES), where modular robotic material systems were integrated with cyber-physical simulation, sensing, and control, and trained with DMARL to develop degrees of autonomy leveraging adaptive and collaborative policies.

One key finding in these studies is the interoperability between active (robotic) and passive (building) parts through a universal interface. In case study 1, simple active and passive modular parts formed hybrid body plans on-the-fly, exhibiting emergent collaborative behaviors that enabled highly adaptive navigation and construction of complex structures. Case study 2 introduced more efficient “sliding” and “driving” behaviors and specialized parts for coordinated movement of entire structures. Separating the three robotic types while allowing them to connect significantly increased system mobility and efficiency. This comparison highlights the potential value of expanded ecosystems of compatible modular robots with differentiated roles.

Future potential for robotic construction lies in designing interoperability between diverse autonomous modular machines and reconfigurable building parts. Instead of relying on overly specified robots, modularity and interoperability between robotic and passive parts allow for more efficient, flexible, and adaptive buildings. Modular robotic material ecosystems offer several advantages over traditional industrial robotic solutions. Like social insects, modular robots can distribute work in parallel with nonlinear construction sequencing, navigating structures without the need for external gantry systems or scaffolding. They are inexpensive to manufacture and employ principles of redundancy, enabling construction processes to adapt to individual robot failures without delays. Reconfigurable building parts with reversible connections allow structural assemblies to adapt over time, creating new types of sustainable and adaptive buildings developed from new circular economies of compatible building parts.

With the rapid acceleration of robotics and AI there is no doubt that construction sites will evolve from “partially automated” to “fully autonomous,” with robots working around the clock to build with increased speed, safety, and precision. Yet reappraising building construction from an ecological perspective is not simply an incremental improvement of how we automate construction, but a radical shift in the potential for buildings to self-adapt with a continuous lifecycle (Figure 22). In “Architecture as Agent,” Hanna shifts our view of robots as subservient workers toward intelligent machines, speculating on the potential for a built environment as an intelligent agent. 114 In 1969, Gordon Pask challenged static architectures, reconsidering architecture as a composition of interrelated active systems regulated and controlled through feedback with a constantly shifting environment. 2 In the future, autonomous modular robotic material ecosystems could embed the adaptive agency we see in natural systems directly in our built environment, transforming our relationship and our conversation with it (Figure 22).

[Figure: Speculative vision of the transformation of reconfigurable building over time, case study 1.]

Acknowledgments

The authors would like to acknowledge and thank the following student researchers for their contributions in support of this research: (Diffusive Habitats Project Team - 2021-2022): Sergio Mutis, Eric Hughes, Garyfallia Papoutsi, Faizunsha Ibrahim; (ReSpace Project Team - 2022-2023): Yuechuan Jin, Yongye Xie, Weiheng Zhao, Yaosheng Tang; (Stigmergic Spaces Project Team - 2022-2023): Çağla Şamcı, Yuying Xiang, Hansen Ye, Ziheng Zhou, Huize Qiu. Living Architecture Lab, Research Cluster 3 (RC3), B-Pro Architectural Design (AD) MArch, The Bartlett School of Architecture, University College London (UCL).

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.