Abstract

The rapid rise of generative artificial intelligence (GenAI) tools is provoking intense debate about their potential to boost productivity, stimulate creativity, or disrupt employment. While insightful, these discrete, short-term metrics risk obscuring GenAI’s deeper and longer-term implications for the social fabric of organizations—that is, the ways in which pathways of organizational members interweave in the course of everyday work. In this essay, we identify three sociotechnical characteristics of GenAI use in the workplace—its multipurpose usability, unpredictable plausibility, and hyper-personalizability—and outline how they can reroute the ways employees cross paths, interact, and exchange resources in everyday work. We consider two contrasting scenarios. Under a laissez-faire scenario, GenAI use can disrupt the flows of resources, such as expertise, trust, and collegiality, within the organization—in what we refer to as the rise of GenAI “polymaths,” “oracles,” and “sirens” in the workplace. Under a cultivation scenario, by contrast, GenAI use is deliberately woven into the meshwork of everyday work pathways in ways that strengthen, rather than erode, the social fabric that holds the organization together.

Introduction

The widespread use of generative artificial intelligence (GenAI) tools—ChatGPT, Midjourney, Gemini, Harvey, and CoPilot among them—carries profound strategic implications for organizations. This unprecedented wave of innovation is fueling ongoing debate across academia and industry alike—from promises of heightened productivity and unleashed creativity to warnings of widespread job displacement. While the impressive capabilities of GenAI might warrant such hopes and anxieties, we contend that something even more fundamental is at stake for organizations.

Most current discourse treats GenAI as a stand-alone technical artifact that either automates or augments discrete tasks and individual roles (e.g. Brynjolfsson et al., 2023; Dell’Acqua et al., 2023; Felten et al., 2023). Valuable as such analyses might be for charting GenAI’s immediate, task-level effects, they tend to overlook its longer-term, collective, and indirect implications for work practices (Huysman, 2020; Mayer et al., 2025), management (Retkowsky et al., 2024), and organizations (Ritala et al., 2024).

To foreground such deeper implications, we propose a shift in perspective: rather than treating GenAI as merely a stand-alone technical tool, and thus limiting the analysis to its immediate capabilities and task-level effects, we adopt a flow-oriented approach (Baygi et al., 2021, 2024). Instead of asking what GenAI can do to this or that task or job, we ask how different patterns of GenAI use can reshape the ways organizational members cross paths, interact, and exchange resources (with one another and with GenAI) in the course of everyday work. Put differently, we examine how GenAI use can reshape—and be reshaped by—the social fabric of organizations.

In this essay, we first elaborate what we mean by the social fabric of organizations. Then, we explore how three sociotechnical characteristics of GenAI use in the everyday workplace—namely, its multipurpose usability, unpredictable plausibility, and hyper personalizability—can reshape this social fabric. Viewed from a flow perspective, this reshaping takes the form of a rerouting of pathways along which resources flow within organizations. We explore this reshaping under two scenarios. Under a laissez-faire scenario, organizations treat GenAI as a mere technical upgrade with little regard for its implications for their social fabric. This, as we argue, can quietly disrupt the flows of resources, such as expertise, trust, and collegiality, within the organization—a dynamic we refer to as the rise of GenAI “polymaths,” “oracles,” and “sirens” in the workplace. Conversely, under a cultivation scenario, organizations actively domesticate (Pakarinen and Huising, 2023) and host (Ciborra, 1999) GenAI—deliberately weaving its use into the meshwork of their everyday work in ways that preserve, or even strengthen, the future of their social fabric.

As GenAI is increasingly integrated in organizational work practices, these hidden but profound shifts in the organizational fabric will be of strategic importance. Understanding and preserving this social fabric will therefore be essential for organizations seeking to navigate the latent risks and generative possibilities of a GenAI-infused future of work.

The social fabric of organizations—a flow approach

When we speak of an organization’s social fabric, we do not mean to conjure the image of a finished cloth with warp and weft threads already fixed in place—a static metaphor for organizations’ latent social networks (cf. Avital et al., 2023 for an overview of how this metaphor has been utilized in different disciplines). Rather, we use the term to refer to the ways in which the paths of organizational members continually interweave as they go about their everyday work (Baygi et al., 2021; Pentland et al., 2025). That is, if we consider the path each organizational member traverses in their day-to-day “comings and goings” as a thread (Pentland et al., 2025), the social fabric of organizations is the ever-weaving meshwork 1 (Ingold, 2015; Introna, 2019) that forms and re-forms as these threads entwine or unravel over time. Social fabric is therefore always in-the-making—never complete.

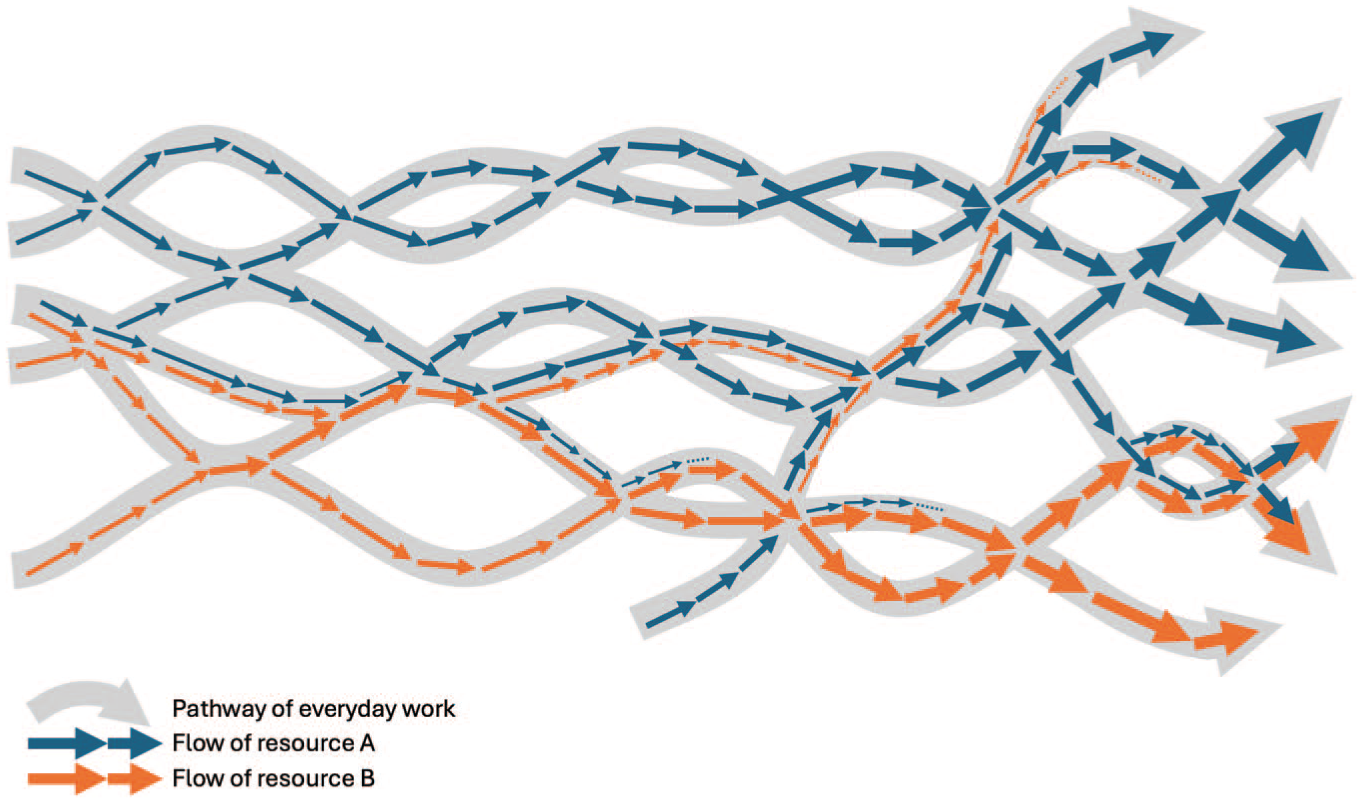

Social fabric matters, we argue, because its interlacing threads (who you message, meet, or bump into in the course of everyday work) are the very channels along which valuable, but often intangible, resources flow within organizations (Baygi et al., 2021; Baygi and Rezazadeh-Mehrizi, 2024; Faraj et al., 2016). Knowledge and expertise, creativity and shared meanings, collegiality and support, trust, favors, influence—even power—all circulate through these routes. In turn, those flows reinforce the pathways that carry them: exchanging help today raises the odds you will seek out that colleague tomorrow. Social fabric therefore both channels resource flows and is continually rewoven by them (see Figure 1).

Figure 1. Social fabric as the meshwork of everyday work pathways and the flows of resources.

Importantly, to talk of the flow of resources is to foreground the temporal qualities of their movement (Baygi et al., 2021, 2024). Just as talk of the flow of traffic concerns not cars per se but how they surge, idle, and maneuver, so our talk of the flow of resources focuses not on the resource-as-a-thing but on the rhythms, intensities, and directions of its movement. From a flow perspective, resources are thus never static assets; they are in motion, continuously circulating, accumulating, and mutating as they move along the shifting rhythms of everyday practice. Without such active circulation—without flowing—resources grow stale, lose vitality, and eventually dissipate (see thickening and thinning arrows in Figure 1).

The strength of an organization’s social fabric thus hinges on how and when the paths of organizational members cross one another. Frequent, timely, and generative intersections among these pathways produce a tightly woven fabric that ensures redundancy and resilience: if one thread falters, others reroute the flow of resources. In such a case, a colleague’s temporary absence is less likely to disrupt the circulation of resources, as existing well-interwoven pathways can absorb the shock and maintain continuity. In contrast, when intersections are sparse or poorly integrated, the social fabric weakens, becoming prone to gaps and silos that reduce redundancy and make the flow of organizational resources unstable. 2

Generative AI and the social fabric of organizations

GenAI represents an inflection point in the evolution of AI technology. Earlier “expert systems” encoded know-how as fixed “if-then” rules; they could only handle situations their designers had already anticipated, which confined them to narrow, highly structured applications: troubleshooting, medical diagnostics, and tax calculation. The subsequent generation of task-specific classificatory AI used machine learning for pattern recognition in large data sets; these tools were, and still are, trained for one specific classification purpose at a time: screening résumés, classifying radiology images, sorting seeds, predicting crime, and much more (Karacic et al., 2023; Kim et al., 2025; Van den Broek et al., 2021; Waardenburg et al., 2022).

GenAI models, by contrast, are task-agnostic, generative engines. Powered by transformer architectures, they do not classify to which bucket an input (a résumé, a magnetic resonance imaging (MRI) scan, or a crime report) belongs; instead, they iteratively predict the next token in an output they are themselves composing. Thanks to their prowess and ease of use, GenAI tools have quickly become widespread, to the point that some commentators describe GenAI as a general-purpose technology—an infrastructure upon which other waves of innovations can be built.

Before moving on to examining how GenAI can reshape organizations’ social fabric, a word against the familiar trap of technological determinism. A deterministic stance portrays GenAI—its capabilities (e.g. “thinking” and “reasoning”), its evolution (e.g. a linear march toward general or super-intelligence), and its consequences (e.g. dramatic productivity gains, widespread job displacement)—as inevitable, unavoidable, “foregone conclusions” (Leonardi and Barley, 2010). Such a view obscures the mutually constitutive relations between GenAI and the wider socio-cultural and organizational context—the very mesh of relations that precondition and shape how GenAI tools are taken up and what they come to mean in different contexts.

In this essay, we instead foreground three sociotechnical characteristics of GenAI use in the everyday workplace. That is, rather than focusing solely on its technical capabilities, we characterize GenAI in terms of emerging patterns in how practitioners integrate these tools into their work practices. Drawing on firsthand experience as well as early field evidence, we identify the following sociotechnical characteristics: multipurpose usability (employees can use the same tool to write marketing copy at 9 am, debug code at noon, and draft a legal clause by 3 pm), unpredictable plausibility (employees can take GenAI’s plausible-sounding, but unstable, output and circulate it uncritically), and hyper personalizability (employees can train AI companions on their personal files, writing style, or project history).

While these three characteristics can have immediate task-level consequences, our interest in this essay lies in their relational implications as they weave into everyday work (Faraj and Leonardi, 2022). GenAI use does more than improve employee productivity; it can rewire who crosses paths with whom, when, and for what purpose. That is, GenAI use can reroute the pathways along which resources flow inside organizations. As these pathways shift over time—opening new conduits for certain flows (e.g. AI‑mediated expertise) while constricting others (e.g. spontaneous peer‑to‑peer help)—so too does the fabric that binds an organization together.

In the sections that follow, we explore this reshaping of organizations’ social fabric under two scenarios. We begin with a laissez-faire scenario, whereby organizations treat GenAI as a mere technical upgrade with little regard to how its use redirects resource flows and ultimately reshapes their social fabric. We exemplify the implications of each of the three sociotechnical characteristics by focusing primarily on one affected resource flow apiece (expertise, trust, and collegiality, respectively). These flows serve as telling examples, not an exhaustive list; they do nonetheless make the broader stakes of GenAI use in the workplace tangible.

Multipurpose usability and the flow of expertise

GenAI models can be used in a wide range of tasks, functions, and domains to process and generate synthesized content across modalities: text, images, music, videos, and code (Peres et al., 2023). Many early adopters have been struck by this multipurpose usability of GenAI tools, which has rapidly accelerated their adoption in the workplace. Employees are discovering that the same GenAI tools can assist in automating customer service conversations (Dell’Acqua et al., 2023), generating marketing copy (Kshetri et al., 2024), drafting legal documents (Martin et al., 2024), or even writing or debugging code snippets (Sauvola et al., 2024). This breadth of functionality positions GenAI not just as a tool for executing specific tasks, but as a versatile collaborator capable of spanning multiple domains with minimal adaptation effort.

However, beyond simply enhancing individual tasks, GenAI’s multipurpose usability—and the ensuing ease of hopping from one domain to another—can fundamentally alter how resources like expertise flow through an organization’s social fabric. Expertise has long been structured around professional authority and jurisdiction over specific tasks that require extensive training, qualifications, and experience, yielding domain experts who act as gatekeepers and custodians of specialized knowledge, skills, and judgment. Expertise therefore flows from these centers to the rest of the organization as members collaborate or consult with experts in the course of their day-to-day work. When we face an unfamiliar challenge, the standard practice has long been to consult an in-house domain expert: an email, a ticket, a meeting, and so on, through which the expert diagnoses the issue, supplies guidance or reference materials, and, if needed, steps in to execute the fix or coaches the requester through it. These micro-consultations keep expertise circulating in the organization, while also strengthening the related intersections in the meshwork.

With the multipurpose usability of GenAI tools, non-specialists can increasingly bypass these traditional pathways, independently handling tasks that once required domain expertise. This “democratization of expertise” can lead workers to confidently dabble in tasks outside their usual roles. For instance, employees in non-technical roles can now tackle projects that previously required specialized technical input, such as creating dynamic dashboards or writing Python scripts, while technical professionals can use GenAI to handle tasks traditionally seen as requiring “soft skills,” such as drafting empathetic communication. Even highly complex and knowledge-intensive practices are increasingly becoming accessible to non-experts.

Under a laissez-faire scenario, these dynamics can affect the frequency and timing with which experts and non-experts cross paths. Over time, fewer and untimely crossings mean a weaker, sparser meshwork of pathways for subsequent flows of expertise. In particular, GenAI’s polymath-like 3 appeal can give rise to workers who “do it all” with AI prompts. This can introduce conflicts and tensions around relations of expertise recognition, control, and jurisdiction within organizations. Professional expertise risks being undermined when such generalists, over-confident in GenAI, attempt to tackle complex tasks without sufficient background to critically assess the quality or implications of the AI output. This phenomenon, echoing the Dunning–Kruger effect (Kruger and Dunning, 1999)—whereby individuals with limited competence overestimate their abilities—can lead to superficial solutions that lack the depth true domain experts provide. As inbound queries dry up, experts will have fewer opportunities to hone their expertise, leading their skills to stagnate and erode. They might also face a slow devaluation of their expertise (Hsu and Bechky, 2024). Some commentators already speculate that soon fluency in GenAI tools—whether via prompting, fine-tuning, or contextualizing outputs—will be prized as highly as specialized mastery of any single domain.

Overall, while GenAI’s multipurpose usability can have clear benefits (e.g. fewer workflow bottlenecks), it also introduces risks, not only threatening the quality of work but also eroding the pathways that keep human expertise alive and the social fabric of organizations resilient.

Unpredictable plausibility and the flow of trust

GenAI models can generate plausible-sounding but fundamentally unpredictable content: from inventive analogies to erroneous or misleading information. These models work based on a stochastic process, trained to sound coherent and plausible, rather than on any deterministic knowledge retrieval or fact checking: ask the same question twice and you may receive a completely different, but equally plausible, even authoritative-sounding answer. This unpredictable plausibility is compounded by GenAI’s emergent capabilities that appear as the models scale in size, training data, and “compute” (Schneider et al., 2024). Once a GenAI model crosses certain scale thresholds, it may acquire capabilities—such as basic arithmetic or chain-of-thought reasoning—that its creators never explicitly trained it for or even expected (Wei et al., 2022). As such abilities emerge, the model can spin longer, more intricate answers. This verbosity further masks its hallucinations behind fabricated citations, made-up statistics, and confident but logically incoherent arguments—making the outputs even more plausible but also harder to predict or control.

GenAI’s unpredictable plausibility can destabilize expectations of reliability, thereby disrupting the flow of trust through an organization’s social fabric. Trust is earned and bestowed inside organizations through repeated, reliable encounters in the course of everyday work: I send you a spreadsheet; you spot‑check it and find no surprises. We co‑author a slide deck; you trust that I have verified every chart. Over time, these micro‑confirmations thicken the pathways along which trust in each other’s competence and reliability flows.

However, as organizational members become impressed by GenAI’s plausible and confident output, they can begin to rely on it excessively in their work, copy-pasting its outputs without question, even when they have the necessary skills to evaluate or challenge them. Pressed for time, workers can easily skip verification altogether, relying on GenAI to produce entire reports, proposals, or code snippets. Once such content is integrated into organizational knowledge flows, it becomes harder to identify or rectify errors. And when the hallucinated content is finally exposed, blame and mistrust can spread through the organization’s social fabric. A single AI‑written paragraph that turns out to be hallucinated does not merely blemish the document; it casts doubt on coworkers’ diligence and, by extension, on the reliability of future encounters.

Under a laissez-faire scenario, employees are left to navigate GenAI’s unpredictable plausibility on their own. As a result, they may come to treat GenAI as an oracle-like 4 infallible source of knowledge—inadvertently disseminating authoritative-sounding but unreliable information throughout the organization. As such questionable content circulates in the organization, it undermines trust not only in its supposed authors, but also in the broader organizational processes that failed to catch or correct the issue. The mere possibility that GenAI was used without disclosure is enough to create an ambient suspicion that colleagues are lazily relying on it—even when they are not. Employees can grow wary of the reliability of each other’s work, judgments, and decisions, interpreting signs like the use of certain styles, words, or formatting as clues of AI involvement. 5 When the appearance of correctness can no longer be taken as evidence of correctness, every hand‑off arrives under a looming question mark: is this generated by GenAI? The result is a systemic “trust deficiency,” where reliable encounters give way to second-guessing and increased verification overhead—slowing down collaboration and, ironically for the GenAI optimist, decreasing collective productivity.

Overall, although GenAI’s unpredictable plausibility can offer advantages (e.g. generation of novel or outside-the-box ideas), it also poses significant risks, eroding not only the reliability of work but also the very pathways that nurture the flow of trust and sustain the social fabric of organizations.

Hyper personalizability and the flow of collegiality

Unlike earlier AI systems that required specialized training and top-down implementation, current GenAI tools allow organizational members to adopt and personalize their use. This hyper personalizability allows employees to easily experiment and adapt these tools to their individual workflows—for example, by actively or passively training these tools on their own email threads, project logs, or writing styles—all without involving IT, data science teams, or management. As a result, use patterns proliferate along highly personalized and idiosyncratic lines, faster than any standardized policy can keep up with (Dell’Acqua et al., 2023), even in organizations that restrict GenAI use (Retkowsky et al., 2024). At the same time, management is now counterbalancing: investing in fine-tuned foundation models and retrieval-augmented generation (RAG) to create GenAI-enabled knowledge management systems (Alavi et al., 2024; Earley, 2023). Such enterprise solutions, however, can feel rigid to workers who are increasingly accustomed to their personalized GenAI tools and workflows, mirroring tensions seen in earlier technologies, such as personal versus enterprise social media (Oostervink et al., 2016).

GenAI’s hyper personalizability, and the idiosyncratic workflows it fosters, can disrupt the flow of collegiality within organizations’ social fabric. Collegiality—a sense of camaraderie and mutual care among colleagues—grows in spontaneous and informal encounters in the course of everyday work: hallway banter, a coffee chat over half-baked ideas, or a quick word of encouragement after a rough customer call (Fayard and Weeks, 2007). Over time, these micro‑interactions thicken the non‑transactional pathways along which favors and moral support flow within organizations. Collegiality flows when coworkers reach out often and reciprocate promptly; it weakens when such encounters are displaced or delayed.

As employees grow more accustomed to their increasingly personalized GenAI tools and workflows, the relative cost of seeking a human colleague can rise. Why ask a coworker about how to handle an escalating email thread with the boss, when you can paste the thread into an ever-present AI chatbot—knowing it has the full context of your writing style, past threads, even the boss’ rhetorical tics? Asking a (busy) colleague can increasingly feel slower, more effortful—even riskier—and, unlike a coworker, the AI never asks anything in return. This convenience gap widens further in hybrid and remote settings, where serendipitous hallway crossings were already scarce (Waizenegger et al., 2020).

Under a laissez-faire scenario, employees may increasingly favor the speed and convenience of consulting their personalized GenAI tools over interactions with colleagues. While this self-sufficiency might appear efficient in the short term, it risks creating a siren

Overall, GenAI’s hyper personalizability offers certain benefits (e.g. allowing workers to shape tools to their own rhythms and to enjoy a sense of autonomy). But it can also isolate workers into self-contained “AI islands,” thereby eroding the pathways that nurture the flow of collegiality and sustain the social fabric of organizations.

Unweaving the fabric: polymaths, oracles, and sirens in the workplace

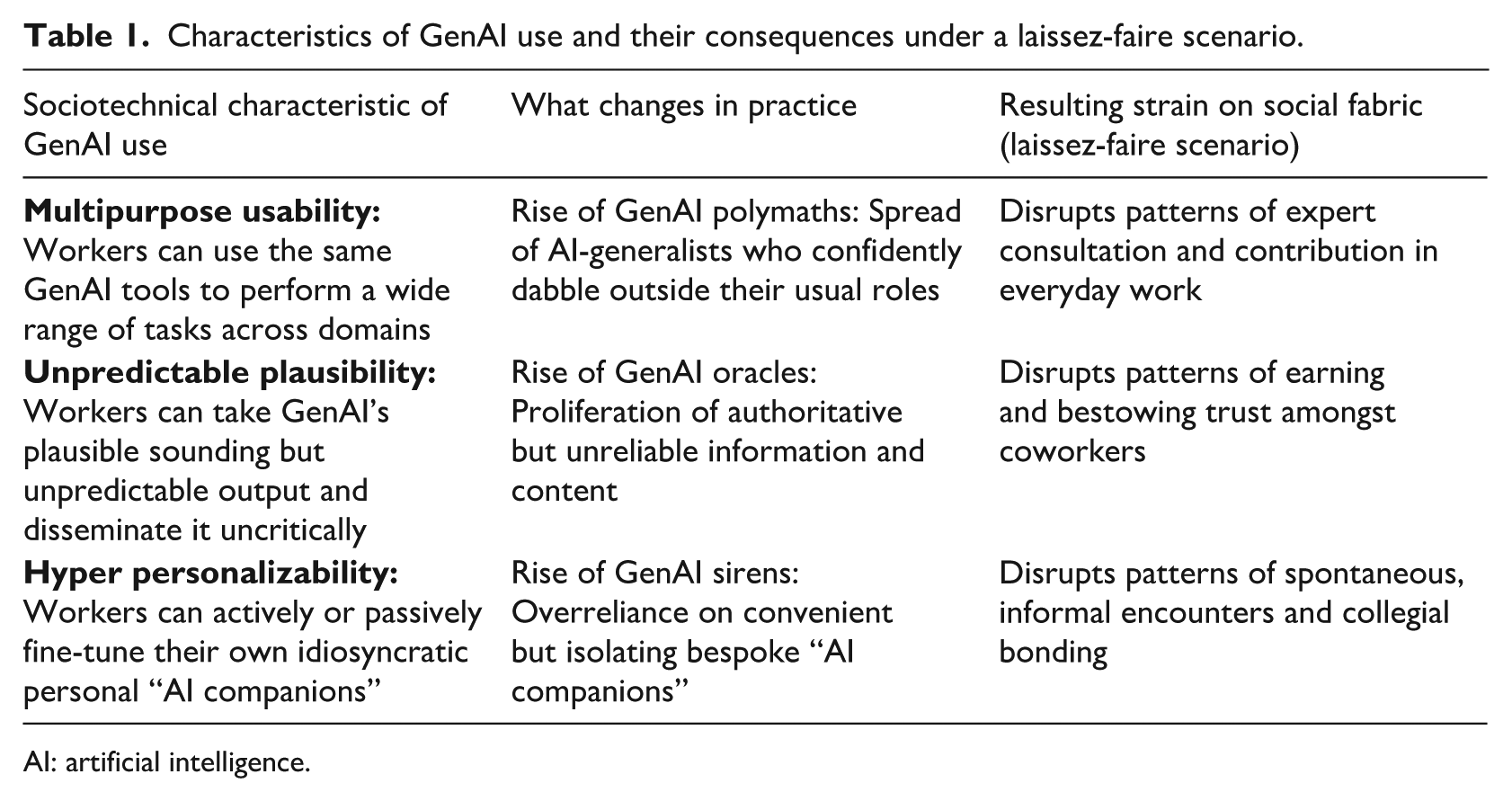

Thus far, we have outlined how, under a laissez-faire scenario, the sociotechnical characteristics of GenAI use can threaten the pathways along which resources, such as expertise, trust, and collegiality, flow in organizations. Left unchecked, GenAI use may spawn workplace “polymaths,” “oracles,” and “sirens” that can considerably disrupt these flows. Table 1 summarizes these patterns and their associated implications for the social fabric of organizations.

Table 1. Characteristics of GenAI use and their consequences under a laissez-faire scenario.

AI: artificial intelligence.

Importantly, the three implications we have unpacked so far are not discrete or separate phenomena. From a flow perspective (Baygi et al., 2021, 2024), and as illustrated in Figure 1, resources such as expertise, trust, and collegiality do not travel through separate threads but are part and parcel of the same meshwork of everyday pathways of work; disruptions in one ripple through the others, amplifying the overall impact on an organization’s social fabric. When employees rely on GenAI to bypass expert input, errors can propagate, eroding the patterns of earning and bestowing trust among colleagues. A weakened flow of trust makes employees hesitant to engage in spontaneous exchanges, further disrupting the flows of collegiality. In turn, as spontaneous encounters dry up, opportunities to build trust and “know who” within the organization diminish, leaving employees less willing—and less able—to seek or share expertise, thereby weakening the flow of expertise.

Toward a cultivation scenario

The laissez-faire scenario presented above is of course not the only way that organizations can or will engage with GenAI. On the opposite end of the spectrum lies an overregulation scenario, where organizations approach GenAI solely in terms of guardrails: rules, regulations, and protocols. For instance, organizations might outright ban the use of GenAI tools or limit it to pre-approved applications under close managerial supervision. While such measures might be inescapable in the short term, we argue that they are fundamentally inadequate for addressing the indirect, long-term consequences of GenAI use. Guardrails tend to target direct consequences of GenAI use, for instance, to ensure accuracy in outputs or to minimize data security risks. However, they often overlook the deeper, systemic transformations in relationships, roles, and workflows—the “undertow” of digital transformation (Scott and Orlikowski, 2022). It is in this undertow that the enduring impacts of GenAI on organizational dynamics—including on the social fabric—take root, often outpacing and circumventing guardrails. Moreover, as mentioned before, over-restrictive regulations can only push GenAI use underground, exacerbating the proliferation of idiosyncratic and isolating practices as well as the constant second-guessing of coworkers’ work (Retkowsky et al., 2024).

To move beyond the dual pitfalls of the laissez-faire and overregulation scenarios, we propose a cultivation scenario, whereby organizations deliberately weave GenAI use into the meshwork of their everyday work in ways that sustain, even strengthen, their social fabric. This middle-ground scenario begins with the recognition that a tightly woven social fabric is key to domesticating (Pakarinen and Huising, 2023) and hosting (Ciborra, 1999) GenAI. Rather than instituting rigid guardrails to keep GenAI at arm’s length (e.g. for the sake of accuracy or security alone), a cultivation approach ensures that, in using GenAI in everyday work, colleagues continue to cross paths frequently, in a timely manner, and meaningfully. Such old and new intersections channel GenAI use along routes that keep key resources, such as expertise, trust, and collegiality, flowing through the organization. Below, we outline such a cultivation scenario by focusing on how each of the three sociotechnical characteristics of GenAI use can be leveraged to cultivate resource flows and ultimately the social fabric of organizations.

Leveraging multipurpose usability to cultivate flows of expertise

Rather than bypassing established pathways of expertise application and transfer, organizations can leverage GenAI’s multipurpose usability to cultivate those channels. Doing so requires resisting the myth of the lone “prompt wizard” who can “do it all,” and instead rerouting GenAI dabbling where it can add value. For example, organizations may distinguish between low-, medium-, and high-stakes workflows: any employee may use GenAI for routine, low-stakes work—to test ideas, prototype analyses, or mock up communications. However, more complex or higher-stakes workflows are routed through “expertise checkpoints,” where qualified experts review, amend, and sign off on AI-assisted drafts. In “mission-critical” workflows, GenAI can have but an advisory role: experts may consult it, but the final output must be traceable to a qualified human.

This multi-tier routing keeps expert contributions flowing where they matter most, while also preserving the visibility of expertise in the organization—clarifying when, and from whom, specialized insight is required. Moreover, off-loading low-stakes work frees experts to tackle novel problems and upskill continuously, including through purposeful experimentation with GenAI. Meanwhile, non-experts can legitimately dabble and experiment outside their traditional roles. This low-cost exposure to adjacent domains enables workers to build boundary-spanning literacy—helping them to ask better questions of specialists, when needed. It also allows them to explore whether their talents or passions lie elsewhere. Once such exploration reveals genuine aptitude, short-term secondment and mentor-pairing programs can link the aspiring generalists with domain experts, turning individual dabbling into organizational learning and/or transferring the know-how needed to assess GenAI outputs critically.

Organizations can thus leverage GenAI’s multipurpose usability for low-cost upskilling for experts and reskilling for generalists. Low-stakes dabbling lowers the barrier to exploration, while expertise checkpoints and mentorship pairings keep authoritative expertise flowing where it matters most. In this way, GenAI use becomes less a shortcut that frays the social fabric and more a spin that weaves new threads of expertise application and transfer.

Recasting unpredictable plausibility to cultivate flows of trust

Rather than treating GenAI use as a “guilty secret,” organizations taking a cultivation approach assume GenAI is always involved and embed its use in shared transparency and verification practices. Organizations can state upfront that every draft is presumed to have drawn on GenAI (unless stated otherwise), and ask employees to attach provenance tags and prompt histories for their output. Teams can then pair up as “audit buddies”: one partner drafts with GenAI while the other probes assumptions, requests sources, and flags hallucinations—before signing off on the work. Weekly “devil’s advocate” sessions can subject high-impact deliverables to pointed skepticism: “What evidence backs this? Could the opposite be true?” A shared log can keep track of hallucination patterns: prompt snippet, error, fix, and downstream impact. Over time, this log becomes an organizational asset, used both for training purposes and for in-house prompt libraries and style guides.

Such a stance shifts attention from who produced the text to how it was produced. This way, the patterns of earning and bestowing trust shift from the presumed authorship of the text to the visibility and credibility of the process that produced it. Once a document has passed through the audit-buddy loop, the devil’s advocate challenge, and so on, the pathway itself attracts trust, even if minor errors slip through. In addition, such rituals allow organizations to build literacy around GenAI’s appropriate and inappropriate use cases. It is in the course of these rituals, rather than in dry training sessions, that employees will have the opportunity to organically learn to navigate the stochastic nature of GenAI outputs: to develop an eye for hallucinated content as well as to learn strategies for cross-referencing, verifying, and contextualizing outputs.

Organizations can thus recast GenAI’s unpredictable plausibility as a catalyst for new trust-building encounters: by regularly interrogating, refining, and ultimately co-owning knowledge claims, workers can restore trust in each other’s reliability. Instead of quietly second-guessing each other’s work, they witness each other’s continued participation in a shared verification process. In this way, GenAI use can tighten existing strands of trust, while weaving new ones, into the organization’s social fabric.

Harnessing hyper-personalizability to cultivate flows of collegiality

A cultivation approach would not ban personal AI companions; it would socialize them to serve as occasions for collegial encounters. As with the above, this approach begins with openness about GenAI use; when AI assistants operate in the open, coworkers can ask follow-up questions—“How did you get it to do that?”—that could spark helpful asides or a bit of humor. Organizations can then implement synchronous “refinement checkpoints”: employees may use GenAI tools for initial ideation but refine and contextualize their outputs in direct discussions with peers. These real-time interactions not only improve the final outputs but could also spark a moment of serendipitous laughter or empathy that a private AI chat would have short-circuited.

Organizations can also experiment with socializing personal AI sidekicks. “Introduce Me to Your AI” events can encourage pairs (or small teams) to interact with each other’s AI assistants, giving the bots a feel for the broader work relations. Once acquainted, an AI assistant can nudge its user toward the right colleague whenever appropriate: “John usually sanity-checks figures like this; want me to loop him in?” Moreover, hyper-personalization need not stop at individuals; teams can train an AI team member stocked with a collective memory and prompt library. As members co-craft prompts and refine outputs together, GenAI use shifts from an isolating individual effort to a communal problem-solving activity, affording teams opportunities for informal interaction.

Finally, organizations can carve out “AI-free zones,” designated times or spaces for exclusively person-to-person interaction. Inside these zones, employees exchange small talk, share half-formed ideas, and so on, letting unstructured chatter spill over and fostering collegiality among colleagues who might never have crossed paths. Organizations can thus harness GenAI’s hyper-personalizability to cultivate a mesh of spontaneous, informal crossings, thereby thickening the pathways through which collegiality flows in their social fabric.

***

It bears emphasizing that our analytical distinction between cultivating flows of expertise, trust, and collegiality is just that: analytical. In practice, each cultivation move serves to create frequent crossings that bundle flows of resources together. Refining a hallucination in a buddy audit not only transfers expertise but also reaffirms trust in one another’s vigilance and might even spark an inside joke. Likewise, admitting that a prompt backfired is a bid for trust, while showing colleagues the fix is a micro-gift of expertise that incurs a debt of camaraderie. The result is a meshwork of pathways whose redundancy and resilience come from the way the flows of resources knot together in every encounter.

Moreover, the extent to which organizations can effectively adopt this cultivation scenario depends on how carefully they have nurtured their social fabric to date. Organizations with a history of fostering their social fabric may find themselves better equipped to ensure continued flows of resources such as expertise, trust, and collegiality in the face of GenAI use. Conversely, organizations with weaker or fragmented social fabrics may face greater difficulty in mitigating risks and realizing the potential benefits of GenAI integration.

Conclusion

As GenAI spreads to every corner of organizational life, it is tempting, yet ultimately misleading, to frame its impact in terms of discrete, short-term metrics: higher productivity, enhanced creativity, and job displacement. Our central claim in this essay is that such an individualist, task-level lens obscures GenAI’s deeper, indirect, and longer-term implications. GenAI is not just a better tool; it is also a sociotechnical phenomenon whose rise in the workplace can slowly reroute how employees cross paths, interact, and exchange resources (both with one another and with AI). Left unchecked, this quiet rerouting can strain the pathways through which resources such as expertise, trust, and collegiality flow inside organizations. Alternatively, as we have argued, by deliberately weaving GenAI use into the meshwork of everyday work pathways, organizations can ensure that employees continue to cross paths frequently, in a timely manner, and meaningfully, thereby cultivating rather than eroding the social fabric that holds the organization together.

On a theoretical plane, we invite scholars to draw upon the concept of the social fabric, understood as the meshwork of everyday work pathways, to explore the more indirect, longer-term, and systemic implications of GenAI use in organizations. Specifically, to study such meshworks (Ingold, 2015; Introna, 2019), we invite researchers to shift from static, structural views of workplace relations and instead adopt a longitudinal flow perspective (Baygi et al., 2021, 2024). Similar to the relational perspective (Faraj and Leonardi, 2022), but with a temporality-first commitment, the flow perspective allows researchers to explore the ways in which GenAI use can affect the everyday “comings and goings” (Pentland et al., 2025) of organizational members; to trace how these altered pathways reroute the flow of key resources throughout the organization; and ultimately to determine the conditions, mechanisms, and contextual factors under which these rerouted flows strengthen or weaken the continued weaving of organizations’ social fabric.

Furthermore, we advocate for adopting a sociotechnical approach to GenAI. That is, instead of being treated as a mere technical tool or an external force imposed on an organization, GenAI is better approached in terms of intertwined social and technical concerns. Such a perspective enables a more nuanced and layered understanding of how sociotechnical characteristics of GenAI use (e.g. its multipurpose usability, unpredictable plausibility, and/or hyper-personalizability) can weave into and reshape the social fabric of organizations: splintering existing pathways and/or knotting new intersections.

Finally, we invite researchers to identify and analyze “best practice” cases where organizations successfully preserve or even strengthen their social fabric amid the integration of GenAI. Such studies can offer actionable insights, best practices, and boundary conditions to guide organizations in mitigating the disruptive effects of GenAI while safeguarding continual flows of key resources.

Footnotes

Acknowledgements

We are extremely grateful to the Special Issue editors for their insightful and constructive guidance throughout the review process. We would also like to thank the participants of the Theorizing Data and AI Workshop 2025 for their feedback and helpful reflections. Special thanks go to Lauren Waardenburg for her deep engagement and friendly reviews of our manuscripts. We also thank our colleagues at the KIN Center for Digital Innovation, Vrije Universiteit Amsterdam, for their feedback on earlier versions of this work.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.