Abstract

Prior literature has emphasized that inconsistency of market signals leads to evaluation penalty. However, limited attention has been paid to the heterogeneity of audiences who deal with inconsistency. I argue that audiences differ in the extent to which they process different market signals, which may largely shape the effect of signal inconsistency. When audiences fail to process all signals, they may not perceive signal inconsistency, thereby weakening its effect on product evaluation. It is hence important to investigate audience heterogeneity in theorizing signal inconsistency. In this study, I focus on the distinction between two important audience groups: professional critics and end consumers. Specifically, I argue that signal inconsistency exerts a stronger effect on critics’ evaluations than on consumers’ evaluations, because critics are more likely than consumers to process various market signals. I argue further that critics can act as an important intermediary to bridge the effect of signal inconsistency on consumers, even though consumers may not process all signals themselves. I test these ideas in a sample of video games released between 2001 and 2016 and find general support.

Introduction

Market signals play an important role in shaping evaluation as they help reduce audiences’ uncertainty about quality (Spence, 1974). Research on the signaling process has proliferated over the last few decades, identifying various positive and negative signals in a wide array of contexts (Connelly et al., 2011; Steigenberger and Wilhelm, 2018). While the literature has well established how and when one particular market signal is effective (Kim and Jensen, 2014; Negro et al., 2015; Podolny, 1993; Pollock and Gulati, 2007), recent studies emphasize that most firms release multiple signals that convey quality information, and draw attention to an important issue of inconsistency when the signals are incongruent (Wang and Jensen, 2019). Signal inconsistency can be harmful to firms who send those signals, because it creates ambiguity about their quality, raising audiences’ concerns about what quality to expect from the firms (Zhao and Zhou, 2011).

However, for signal inconsistency to influence evaluation, a key scope condition must hold: audiences must process all market signals of a firm (Connelly et al., 2011; Madsen and Rodgers, 2015). Only when audience members pay attention to the various signals can they perceive any inconsistency among them. Failing to process all signals may lead audiences to misperceive the level of inconsistency, thereby weakening the adverse effect of signal inconsistency on their evaluation. 1 However, this critical issue has been underexplored in research on signal inconsistency. Indeed, prior literature on signal inconsistency has mostly examined its impact on one specific type of audience evaluation (Jensen and Wang, 2018; Vergne et al., 2018), with limited discussion of the heterogeneity of audiences who deal with the signals. This neglect is somewhat surprising, given the emphasis on audience heterogeneity in recent literature on social evaluation (Durand and Haans, 2022; Falchetti et al., 2022; Sharkey et al., 2022). Kim and Jensen (2011), for instance, show that opera critics and fans react differently to market identity; Pontikes (2012) finds that investors and consumers have different preferences in evaluating startups. Bringing audience heterogeneity into research on signal inconsistency, I emphasize that audiences differ in their processing scope of various signals, so that they may not react to signal inconsistency in the same manner. By doing so, I shift attention from the general signal inconsistency discount (Vergne et al., 2018) to how the evaluative concern of signal inconsistency differs across audience groups.

I focus specifically on two types of market audiences—professional critics and end consumers. Critics and consumers are important audiences in the product market, as their evaluations are consequential for firms’ economic exchanges (Kim and Jensen, 2011). Whereas consumers determine more directly firms’ market performance (Zhu and Zhang, 2010), critics influence the demand for goods in an indirect way (Reinstein and Snyder, 2005; Zuckerman, 1999). It seems intuitive that both critics and consumers are susceptible to signal inconsistency, as they rely on market signals to form evaluative judgments. I emphasize, however, that the two types of audiences differ in terms of their attention to various signals (Kovács and Hannan, 2010), a key scope condition for signal inconsistency. While critics often analyze a firm exhaustively in their evaluation process (Hsu, 2006a; Sharkey et al., 2022), consumers rarely process all the signals of the firm. Since signaling can only be effective to the extent that audiences receive and interpret signals (Madsen and Rodgers, 2015), consumers would be less likely than critics to observe the inconsistency of the signals. As such, I argue that the impact of signal inconsistency is weaker on consumers than critics.

Beyond these direct effects, I stress that critics can act as an important market intermediary that carries the effect of signal inconsistency over to consumers. While prior literature has emphasized that different audience groups can react differently to market practices and signals (Pontikes, 2012), the mutual influence between audience groups is much less discussed in studies of audience heterogeneity. Different groups of audiences are not completely isolated, but commonly affect each other’s evaluations (Kovács and Sharkey, 2014). For instance, while peers in movie production have a different evaluative focus than professional critics (Cattani et al., 2014), winning Oscars from peer voting might also influence how a film is evaluated by critics after the ceremony. As such, accounting for the link between different groups is both necessary to estimate each audience group’s direct (instead of total) reaction to signal inconsistency, and important to understand the pathways through which signal inconsistency affects the evaluations of different audiences (Kovács and Sharkey, 2014). The link is particularly pronounced between critics and consumers, since critics have long been known for shaping the attention and evaluation of consumers (Basuroy et al., 2020; Blank, 2007), although critics may sometimes conform to the taste of consumers as well (Pang et al., 2022). By emphasizing the interaction between critics and consumers, I argue that even though consumers may not have full coverage of a firm’s signals and hence may be less reactive to its signal inconsistency, critics can mediate the effect of signal inconsistency on consumers.

By specifically examining the temporal incongruence of reputation signals, this article also emphasizes a new form of inconsistency. Prior studies have focused on two types of inconsistency. Some scholars highlight dimensional inconsistency, the extent to which signals are different across dimensions (Vergne et al., 2018; Zhao and Zhou, 2011); others pay attention to categorical inconsistency, the extent to which signals are incongruent spanning market categories (Jensen and Wang, 2018). In this article, I shift attention to temporal inconsistency of a firm’s quality signals over time, which remains underexplored. Whereas the literature on reputation has mostly examined the average level of past quality (Shapiro, 1983), this study emphasizes that the variation in quality levels in the past also imposes substantial constraints on new product evaluation. That is, audiences are sensitive to the consistency of reputation signals, above and beyond the average level of the signals.

I test these hypotheses using a sample of video games. This empirical setting is appropriate as it allows me to observe critic and consumer evaluations of games separately on Metacritic (Chen et al., 2012). I first develop a framework to examine and compare the effects of signal inconsistency on critic and consumer evaluations. Empirically, signal inconsistency of a game developer is defined as the extent to which the quality levels of its past games are incongruent. I then employ structural equation modeling to formally disentangle the direct effect of signal inconsistency on consumers from its indirect effect due to the mediating role of critics. The results show that while the direct effects of signal inconsistency on critic and consumer evaluations are both negative, the former is more than twice as large as the latter. I also find support for a mediating effect of critic evaluations on the relationship between signal inconsistency and consumer evaluations. This explains why the total effects of signal inconsistency on critic and consumer evaluations are comparable, while the two direct effects are substantially different.

Theory and hypotheses

Market signals and signal inconsistency

Uncertainty about quality is a widespread and important feature of markets, as quality information is commonly asymmetric between firms and their audiences (Shapiro, 1983; Spence, 1974). Firms who are insiders often obtain information that is not available to outside audiences. This is particularly so when products involve more experience attributes (e.g. movies, wines, and video games), such that one can only evaluate products through consumption (Nelson, 1970). To deal with the uncertainty, audiences tend to rely on various market signals of a firm and its products during quality evaluation (Ebbers and Wijnberg, 2010; Ni and Tang, 2013). Market signals are strategic measures that convey quality information about a firm or a product, influencing how audiences evaluate them in uncertain situations (Vergne et al., 2018). A positive signal enhances audiences’ beliefs about a product’s quality, whereas negative signals cause more concerns.

To evaluate a product, audiences may refer to both the signals that are specific to the product and the general signals of its producer. Audiences are sensitive to a variety of product-level signals (Connelly et al., 2011). A high price and an unconditional warranty, for instance, lead potential buyers to perceive good quality (Milgrom and Roberts, 1986); product recalls, on the contrary, contain negative information about the products and their producers (Rhee and Haunschild, 2006). At the same time, producer-level signals such as status and reputation constitute producers’ vertical market identity that entails normative expectations, signaling the quality of their offerings (Podolny, 1993; Sorenson, 2014). Audiences usually believe products to be of high quality if they are offered by firms that are of high status or high reputation (Obloj and Capron, 2011; Shapiro, 1983; Stern et al., 2014).

While earlier literature focuses on whether and when one particular signal is effective, recent studies shift attention to the inconsistency of multiple signals from the same source (Vergne et al., 2018). A firm often releases different signals that convey quality information (Ni and Tang, 2013), which can be either consistent or inconsistent. When multiple signals are congruent, they can cross-validate each other and enhance the effectiveness of the signaling process (Stern et al., 2014). Consistency among multiple signals is hence crucial in constructing a clear and robust market identity of firms. In reality, however, signals are often incongruent, leading to an issue of signal inconsistency. Signal inconsistency refers to the extent to which multiple signals convey incongruent quality information about the same firm (Connelly et al., 2011). Signal inconsistency can be detrimental to firms, because it creates ambiguity about their quality claims. That is, a firm’s incongruent signals will create an interpretation challenge for external audiences, who cannot make sense of what quality to expect from the firm.

Signal inconsistency may exist in several forms. First, signal inconsistency occurs when signals about a product or a firm are inconsistent across different dimensions (Vergne et al., 2018). A product that is priced high but provides no warranty and a firm that engages in philanthropy but overcompensates its CEO are examples. When signals are inconsistent across dimensions, their positive claims are undermined, leading to devaluation in the market. Zhao and Zhou (2011), for instance, examine signal inconsistency of Californian wines along three dimensions (i.e. professional critics, wine appellations, and extra designations), and find evidence that wine prices are constrained by such inconsistency. Second, signal inconsistency also exists when a firm releases incongruent signals in different categories (Jensen and Wang, 2018). When a firm spans multiple market categories and sets very different prices for its products (e.g. luxury handbags and budget watches), for instance, audiences receive incongruent quality signals. Such signal inconsistency poses problems to a multiproduct firm, as audiences will likely find it challenging to make sense of the firm’s overall quality. Third, signal inconsistency also occurs when a firm sends inconsistent signals over different time periods. A signal is essentially a snapshot pointing to unobservable quality at a particular point in time (Davila et al., 2003). Audiences will hence receive multiple product signals about one firm over time, which may be inconsistent with each other. For instance, rankings that signal the quality of universities may fluctuate across years (Askin and Bothner, 2016), and online ratings that are essential signals of sellers’ quality may swing over time (Parker et al., 2017), both leading to the third form of signal inconsistency. In this article, I focus specifically on the third form of signal inconsistency (i.e. the temporal incongruence of reputation signals), though I expect that my theory can be applied to the other forms as well.

Past quality as market signals

While scholars have identified a variety of signals, one of the most visible signals is product quality in the past (Shapiro, 1983). That is because audiences tend to form an expectation of future quality that is directly based on past demonstrations (Jensen and Roy, 2008). When a firm’s past offerings are of good quality, for instance, it gains a high reputation that will signal its ability to offer high-quality products or services in the future. Reputation will lead audiences to form more favorable expectations and evaluations (Jensen et al., 2012). Sports teams, for instance, pay more for players who have shown better performance in the past (Ertug and Castellucci, 2013); public firms choose auditors who have already demonstrated good technical skills (Jensen and Roy, 2008); buyers offer greater price premium in online transactions to sellers who have received more positive ratings (Obloj and Capron, 2011).

While past quality or reputation has been well established as a quality signal, it is not necessarily consistent (Parker et al., 2017). Players’ performance, for instance, may fluctuate over seasons; firms’ performance can go up and down; sellers might receive divergent online reviews for their products. The inconsistency of past quality is consequential because it challenges the effectiveness of past quality as a market signal. When a firm’s past quality is stable and consistent, it can serve as a valid and reliable signal based on which audiences form quality expectations and judgments (Shapiro, 1983). However, when a firm has delivered outputs of divergent quality, the function of its past quality as a signal becomes obscured (Connelly et al., 2011). Incongruent past quality, in other words, makes a firm’s quality claims ambiguous (Parker et al., 2017). Being concerned with ambiguity, audiences may ignore such a firm, avoid transactions with it, and lower their evaluations of the firm (Vergne et al., 2018).

Signal inconsistency in the video games context

In the research context of video games, audiences face uncertainty about evaluating the quality of new games. To deal with the uncertainty, audiences usually consider the quality of a developer’s previous games as an important market signal. The quality level of past games largely reflects a developer’s reputation, as it indicates how its games perform relative to others in terms of perceived quality (Rietveld and Eggers, 2018). A game developer gains a good reputation from its past provision of high-quality games (Choi et al., 2018), which is commonly shown in various online platforms that constantly evaluate, rate, and rank games (Parker et al., 2017). Because of the signaling role of reputation (Obloj and Capron, 2011; Shapiro, 1983), a developer’s new games will be more appealing if its past games have accumulated a higher level of average quality reputation.

While all developers hope for their games to gain a high reputation, even reputable developers (e.g. Ubisoft) cannot guarantee that their games are always of high quality. When a developer’s past games have displayed inconsistent quality, it runs into the problem of reputation signal inconsistency. 2 Audiences will find its quality claims ambiguous and cannot make sense of what quality to expect from such a developer (Wang and Jensen, 2019). Being concerned about the quality ambiguity induced by signal inconsistency, market audiences may devalue the developer and its new offerings (Hsu, 2006b; Zuckerman, 1999). That is, I expect that audience evaluation of a new game is contingent on the extent to which a developer’s previous games emit congruent quality reputation signals, above and beyond the average level of the signals. Audiences will likely make negative evaluations of games from developers whose past game reputations are more inconsistent. 3 I therefore formulate the baseline hypothesis as follows:

Hypothesis 1 (H1). The more inconsistent a developer’s past reputation signals are, the lower its new game will be evaluated by audiences (both critics and consumers).

Signal inconsistency and audiences

Signaling is effective to the extent that audiences process the signals released by firms (Madsen and Rodgers, 2015). An emitted signal will have no effect if audiences do not look for the signal, or do not know what to look for and how to interpret (Connelly et al., 2011). As such, the impact of signal inconsistency will depend on the extent to which audiences observe and interpret all signals about an entity. For example, for the inconsistency between wine appellations and extra designations to take effect (Zhao and Zhou, 2011), it is necessary for consumers to understand wines’ positions in both dimensions; for the inconsistency between corporate philanthropy and CEO overcompensation to influence evaluation (Vergne et al., 2018), it is vital for media to cover a firm’s practices in both areas.

If audiences fail to process all of the relevant signals, they may end up with a biased perception about the level of signal inconsistency. For instance, if media focus on a firm’s philanthropy practice but overlook its CEO overcompensation, they may not perceive any signal inconsistency; if consumers have little knowledge about wine appellations and extra designations, they may not sense a wine’s signal inconsistency. While existing studies on cognition heuristics (Goldstein and Gigerenzer, 2002) would suggest that audiences do not have to possess perfect knowledge about market signals for signal inconsistency to remain important, it is still compelling that the effect of signal inconsistency is contingent on the extent to which audiences process various signals (Connelly et al., 2011).

However, in analyzing the role of signal inconsistency, prior studies often assume (implicitly) that various market signals are well processed by audiences (Jensen and Wang, 2018; Zhao and Zhou, 2011). Relaxing this assumption, I emphasize that there exists substantial heterogeneity between audience groups. Compared with end consumers, professional critics (or other market intermediaries) are more motivated to attend to the details of signals and process them systematically, in striving for accuracy in evaluations. A fundamental goal of critics is to offer principles for the ranking of products relative to one another in terms of perceived quality, which can establish themselves as an expert and guide market evaluation (Becker, 2008; Blank, 2007; Hsu, 2006a). Critics are hence motivated to help reveal hidden product quality and specify particular criteria for product assessment (Sharkey et al., 2022).

As a result, critics usually pay much closer attention to all the offerings and signals of an entity, to provide more comprehensive, detailed, and convincing evaluations. Sell-side analysts, for example, will carefully investigate all segments of a listed firm when publishing their forecasts (Zuckerman, 1999); movie critics commonly analyze the historical records of a film’s directors and actors when posting their reviews; media journalists try to cover much of a firm’s relevant conduct when writing their articles (Vergne et al., 2018); video game critics acquire in-depth knowledge about a developer’s past offerings before providing their evaluations of its newly released game (Zhao et al., 2018). As critics vigilantly scan the environment to process all relevant signals, they are more likely to sense the degree of signal inconsistency. As such, critics will be susceptible to signal inconsistency as expected.

While it is possible that a few end consumers have coverage equivalent to that of critics, the majority of consumers are less likely to process a complete set of signals (Kovács and Hannan, 2010). Compared with critics, whose career reputation hinges on their evaluations, consumers are less motivated to search for all relevant quality signals in their own evaluation process. Missing some signals will then lead consumers to overlook signal inconsistency, thereby weakening their reactiveness to it. Put differently, in product evaluations, critics and consumers may consider different sets of information cues (Falchetti et al., 2022). While consumers are more likely to focus only on the product-level attributes, critics tend to make a more holistic evaluation of the product, its producer, and even the social and competitive environment (Blank, 2007). More specifically, in evaluating a new game, critics are more likely to process the developer’s reputation signals by considering the past demonstrations of its offerings in the market, so that they are better able to discern its degree of temporal signal inconsistency. Consumers, however, may not conduct a comprehensive analysis of the developer’s past games, and hence fail to spot the inconsistency of reputation signals at the developer level. Taken together, I conjecture that end consumers are less attentive than professional critics to the inconsistency of reputation signals. Formally,

Hypothesis 2 (H2). The negative effect of signal inconsistency on evaluations is weaker for consumers than for critics.

However, critics may pass on their concerns about signal inconsistency to consumers, through their substantial influence in the market. Because the evaluation of cultural or experiential products is complicated and difficult (Nelson, 1970), consumers often refer to critics in making their own assessments in various settings such as video games and movies (Zhao et al., 2018; Zuckerman and Kim, 2003). Indeed, aided by communication technologies (e.g. the Internet), critic evaluations have become widely available to consumers (Chen et al., 2012; Zhu and Zhang, 2010). Their evaluations play important roles in facilitating consumers’ judgment, shaping consumers’ theory of values, 4 and drawing consumers’ attention toward some criteria and away from others (Durand and Boulongne, 2017). By doing so, critics directly or indirectly establish a particular schema for value assessment, which aims to guide the evaluation and taste of others in the market (Hsu, 2006a; Hsu et al., 2012b).

When consumers rely on critics’ evaluations as an anchor to form assessments (d’Astous and Touil, 1999), they implicitly become susceptible to signal inconsistency. 5 As a result, even though game consumers may not be able to clearly observe developer-level signal inconsistency themselves, they are influenced by the inconsistency indirectly when they accept the evaluation schema and standard of game critics. By contrast, if consumers do not refer to critics in their evaluation process, they would be less likely to sense the incongruence of reputation signals, reducing the impact of signal inconsistency. 6 I therefore argue that critic evaluations work as a mediator between signal inconsistency and consumer evaluations.

Hypothesis 3 (H3). Critic evaluations mediate the effect of signal inconsistency on consumer evaluations.

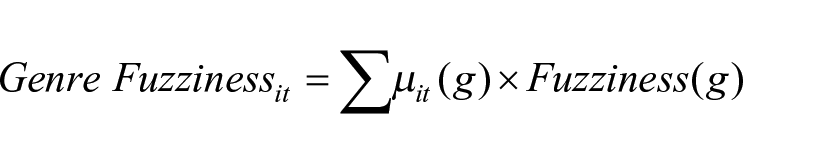

Figure 1 depicts my conceptual framework. Signal inconsistency of past game quality is expected to influence both critic and consumer evaluations. However, its effect on critics is stronger than that on consumers. And finally, the effect of signal inconsistency on consumer evaluations is mediated by critic evaluations.

Figure 1. Conceptual framework.
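
The paths in this framework can be written out with the standard linear mediation decomposition. The block below is only a sketch under assumed notation: the symbols $a$, $b$, $c$, and $c'$, the abbreviation SI for signal inconsistency, and the linear form without controls are illustrative assumptions, not the article's estimated model.

$$
\begin{aligned}
\text{Critic}_{j} &= \alpha_1 + a\,\text{SI}_{j} + \varepsilon_{1j} \\
\text{Consumer}_{j} &= \alpha_2 + c'\,\text{SI}_{j} + b\,\text{Critic}_{j} + \varepsilon_{2j} \\
c &= c' + a\,b
\end{aligned}
$$

Here $a$ is the direct effect of signal inconsistency on critic evaluations, $c'$ is its direct effect on consumer evaluations, $a\,b$ is the indirect effect transmitted through critics, and $c$ is the total effect on consumers. In this notation, H2 corresponds to $|c'| < |a|$ and H3 to a nonzero (negative) $a\,b$, which is how a total effect $c$ of similar size to $a$ can coexist with a much smaller direct effect $c'$.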

Methods

I test my hypotheses using data on video games released in the global market between 2001 and 2016. Video games are a type of experiential products that involve uncertainty, as consumers cannot know games’ quality before their purchase and consumption. Even after consuming a game, many consumers may still be uncertain about what ratings to give. As such, market signals play an important role in shaping audience evaluations in this context. Indeed, prior research has shown that the market acceptance of video games is largely influenced by various quality signals such as price, online word of mouth (Zhu and Zhang, 2010), and firm reputation (Choi et al., 2018).

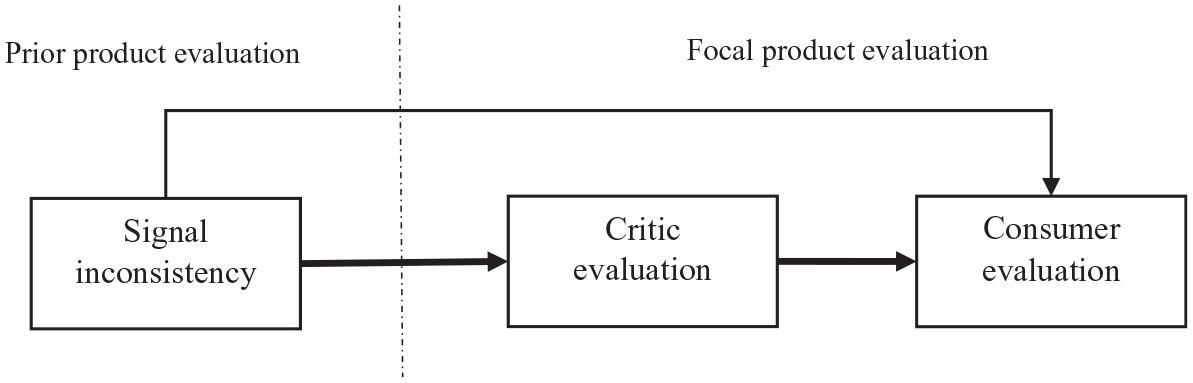

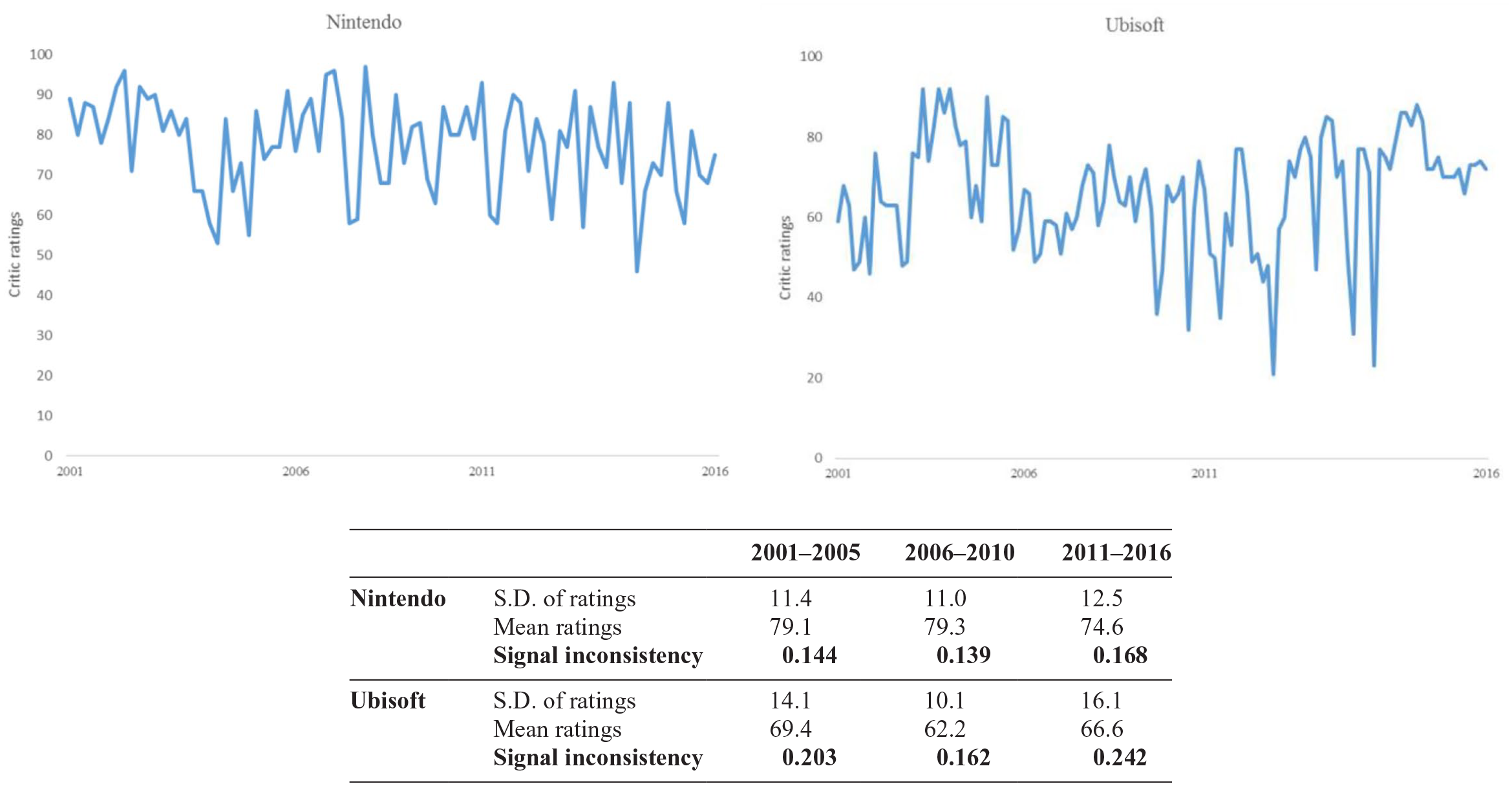

The video game market is appropriate to test signal inconsistency, because there is often quality variation of different games released from the same developers. I define signal inconsistency of a game developer as the extent to which its past games display incongruent quality levels (Parker et al., 2017). Figure 2 depicts the quality levels of games in my sample developed by Nintendo and Ubisoft from 2001 to 2016. While both developers’ games show inconsistent quality, it seems that Nintendo games are less inconsistent than Ubisoft games. Indeed, as reported in the table, Ubisoft shows a larger signal inconsistency than Nintendo in the three periods, according to my measurement (explained below). See more examples in Appendix 1.

Figure 2. Critic evaluations of games by Nintendo and Ubisoft, 2001–2016.

Second, this setting is also suitable to compare evaluations from different types of audiences. The game ratings of critics and consumers are separately recorded in widely used datasets, which allows me to explore how they may react differently to signal inconsistency. Specifically, I collect the key variables, critic and user evaluative ratings, from the review aggregator Metacritic.com. Metacritic keeps track of more than 300 game review publications, from which it creates Metascore that indicates the overall evaluations by critics (Rietveld, 2018). Game users also constantly post their ratings of games on Metacritic, which can be used as a proxy of consumer evaluations. Since games may be released either exclusively for one platform or for multiple platforms, the data are organized at the game-platform level, with a single observation per game for each platform on which it is released (Rietveld and Eggers, 2018). I collect other information (e.g. game sales, year of release, platform, and genre) from VGChartz.com. The final sample includes 4645 observations from 478 developers. 7

Third, and more importantly, I choose to analyze video games because temporal sequence is relatively clear in this context, such that consumer evaluations usually come after critic evaluations. This is an important scope condition for the mediation hypothesis. While temporal sequence can be context-dependent, critics do provide evaluations prior to the majority of consumers in the video game ratings. Many critic ratings are published before official game releases, or very shortly after the games are released (Zhu and Zhang, 2010). It is common in the video game industry that many critics have the opportunity to play a game several days or weeks before its release date (Stuart, 2015). This is intuitive since critics aim to be the pioneers guiding the evaluations of consumers (Blank, 2007). Users, by contrast, mostly post their ratings in a window after critics (please see Appendix 2 for more discussions). 8

Dependent variables

I use critic and user ratings of a new product to capture how it is evaluated by professional critics and end consumers, respectively (Hsu, 2006b). Specifically, I measure critic evaluations using the Metascores of games. Metascore is aggregated by Metacritic from game review publications (Rietveld and Eggers, 2018). Higher scores indicate more favorable evaluations by game critics, and vice versa. Because of its prevalence, Metacritic is described as the video game industry’s “premier” review aggregator (Leack, 2015). Indeed, Metascore has been used by many companies (e.g. Microsoft) when making important decisions about game development (Remo, 2008). It ranges between 0 and 100, but I divide it by 10 to make it comparable with the range of consumer evaluations. Similarly, I measure consumer evaluations with average user ratings from Metacritic. When a game is well accepted by end consumers, one should expect higher user ratings. User ratings range from 0 to 10.

Independent variable

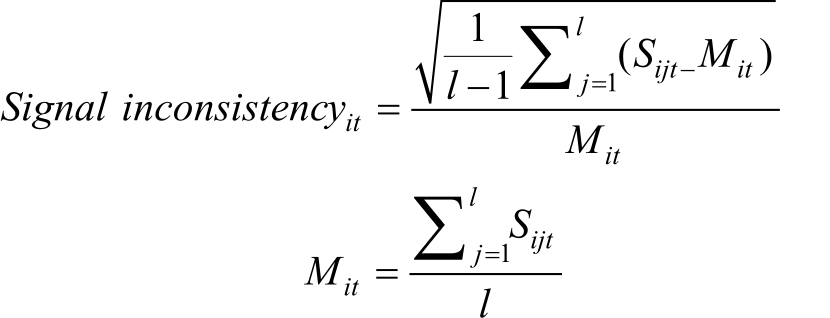

Signal inconsistency

I measure signal inconsistency as the extent to which the quality reputation of a developer’s past games is incongruent. In line with previous studies (Ebbers and Wijnberg, 2010; Parker et al., 2017), I use mean critic ratings to indicate a game’s quality reputation, which is the most salient quality indicator of the game relative to others in the marketplace (in a robustness check below, I also use mean user ratings as an alternative measure of game quality reputation). Specifically, I calculate the signal inconsistency of a developer as the standard deviation of mean critic ratings of its games in the past 5 years before the focal game (e.g. a 5-year time window with 1-year time lag), scaled by the mean of past ratings:

$$\text{Signal inconsistency}_{i,t} = \frac{\sigma_{i,t}}{\mu_{i,t}}$$

where $\sigma_{i,t}$ and $\mu_{i,t}$ are, respectively, the standard deviation and the mean of the mean critic ratings of developer $i$’s games released in the 5 years before the release year $t$ of the focal game.
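
As an illustration of this measure, the following is a minimal Python sketch of a standard deviation scaled by the mean over a lagged 5-year window. The function name `signal_inconsistency`, the `(release_year, mean_critic_rating)` input format, the use of the sample (n − 1) standard deviation, and the handling of developers with fewer than two past games are all assumptions made for the example; the article does not specify them.

```python
from statistics import mean, stdev


def signal_inconsistency(past_games, focal_year, window=5):
    """Standard deviation of past mean critic ratings, scaled by their mean.

    past_games: list of (release_year, mean_critic_rating) tuples for one
                developer (hypothetical input format).
    focal_year: release year of the focal game.
    window:     number of years to look back, ending one year before the
                focal year (a 5-year window with a 1-year lag by default).
    """
    # Keep only games released in [focal_year - window, focal_year - 1].
    ratings = [
        rating
        for year, rating in past_games
        if focal_year - window <= year <= focal_year - 1
    ]
    # Assumption: the measure is undefined with fewer than two past games.
    if len(ratings) < 2:
        return None
    avg = mean(ratings)
    if avg == 0:
        return None
    # Assumption: sample standard deviation (n - 1 in the denominator).
    return stdev(ratings) / avg


# Usage: only the 2009 and 2010 games fall in the window for a 2011 release.
games = [(2005, 9.0), (2009, 8.0), (2010, 6.0), (2011, 3.0)]
print(signal_inconsistency(games, focal_year=2011))
```

Scaling by the mean makes the measure comparable across developers whose ratings sit at different average levels, which is also why the average reputation needs separate treatment as a control.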

Control variables

Developer reputation

While focusing on the inconsistency of quality reputational signals, I control for their average level (i.e. developer reputation), which can also affect market acceptance (Choi et al., 2018). However, simply adding the average as a control variable poses an empirical challenge: because signal inconsistency is already scaled by the mean of past ratings, the average would enter the right-hand side twice, which might lead to estimation bias. Inspired by related literature (Han and Pollock, 2021), I create a dummy variable that indicates whether a developer has a high reputation, to mitigate the potential concerns about collinearity. I rank all game developers based on the average reputation, and assign a value of 1 to a developer (i.e. high reputation) if it is within the top 20th percentile, and 0 otherwise. 9

Developer status

The status of game developers can influence audience evaluation. Following previous studies, I treat an actor as having high status if it wins top awards (Ertug and Castellucci, 2013). Specifically, I include a dummy variable indicating whether any games of a developer have won Game Developers Choice Awards. The awards are presented annually at the Game Developers Conference to the most innovative and creative game designers (De Vaan et al., 2015). They were introduced in 2001 and include Game of the Year, Best Audio, Best Debut, Best Design, Innovation Award, Best Narrative, Best Technology, and Best Visual Art. A game developer is considered to be of high status if any of its games has won any of the awards in the past 5 years.

Number of genres

While focusing on reputational signals, I control for category spanning of game developers (i.e. grades of membership) (Hsu et al., 2009). Games are categorized into genres such as Strategy, Fighting, Role-playing, and Sports. I count the number of game genres that a developer has spanned in the past 5 years.

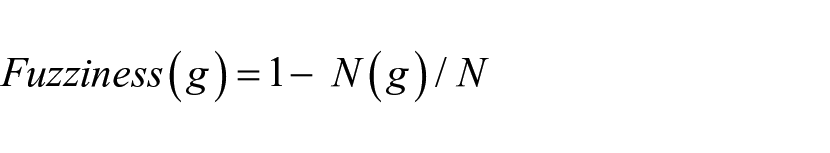

Genre fuzziness

Following Hsu et al. (2012a), I also control for the extent to which a developer’s genre participation is ambiguous. I first calculate developer i’s grade of membership in genre g, µi(g), as the number of games it develops in the genre divided by the total number of games it develops in the past 5 years. I then compute the fuzzy density of a genre, N(g), as the sum of the grades of membership of the developers affiliated with the genre. Genre contrast, N(g)/N, is the fuzzy density divided by the number of developers, N, with at least one game in that genre over the past 5 years. Fuzziness is calculated as the opposite of contrast:

$$\text{Fuzziness}(g) = 1 - \frac{N(g)}{N}$$

To measure the genre fuzziness of developer i, I sum the products of the developer’s grade of membership in each genre and that genre’s fuzziness:

$$\text{Genre fuzziness}_i = \sum_{g} \mu_i(g)\,\text{Fuzziness}(g)$$
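The steps in this measure can be sketched in a few lines of Python. This is a minimal illustration rather than the author's code: it assumes that fuzziness equals one minus contrast (one reading of "the opposite of contrast"), and the function name `genre_fuzziness` and the toy developers `A` and `B` are hypothetical.

```python
from collections import Counter, defaultdict


def genre_fuzziness(games):
    """Return each developer's genre fuzziness.

    games: iterable of (developer, genre) pairs, one per game released
    in the 5-year window. Assumes fuzziness(g) = 1 - contrast(g).
    """
    # Count games per developer and genre.
    counts = defaultdict(Counter)
    for developer, genre in games:
        counts[developer][genre] += 1

    # Grade of membership: share of a developer's games in each genre.
    mu = {
        dev: {g: n / sum(c.values()) for g, n in c.items()}
        for dev, c in counts.items()
    }

    # Fuzzy density N(g) and number of developers N active in genre g.
    density = defaultdict(float)
    members = Counter()
    for grades in mu.values():
        for g, grade in grades.items():
            density[g] += grade
            members[g] += 1

    # Contrast is N(g) / N; fuzziness is assumed to be 1 - contrast.
    fuzziness = {g: 1 - density[g] / members[g] for g in density}

    # Developer-level fuzziness: membership-weighted sum over genres.
    return {
        dev: sum(grade * fuzziness[g] for g, grade in grades.items())
        for dev, grades in mu.items()
    }


# Hypothetical toy data: A is a pure Sports developer, B spans two genres.
toy = [("A", "Sports"), ("A", "Sports"), ("B", "Sports"), ("B", "Strategy")]
scores = genre_fuzziness(toy)
print(scores)
```

In this toy case the genre-spanning developer B receives a higher fuzziness score than the single-genre developer A, which is the pattern the control variable is meant to capture.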

I control for game distribution patterns by including a dummy variable, Multi-homing, indicating whether a game is launched on multiple platforms. A game’s evaluation may also depend on the number of competing games that are available in the same year, genre, and platform. I therefore include a variable, Niche Density, to rule out that effect. I control for Sales of publisher, the global sales of game publishers in the past 5 years. I introduce four platform dummy variables, Platform-PS, Platform-Xbox, Platform-Nintendo, and Platform-PC, to indicate whether a game is developed for any of those major platforms. The four platforms cover over 92% of all the games in my final sample. 10 To account for variance across time and categories, I include year fixed-effects, game genre fixed-effects, and Entertainment Software Rating Board (ESRB) rating fixed-effects. 11

Analysis and results

Following previous studies (Hsu et al., 2009), I employ Tobit regressions to test my hypotheses. 12 To address the concern that games are not completely independent, I cluster standard errors by game developers and report robust standard errors (Singh and Fleming, 2010). To test the mediation effect of critic evaluations, I employ structural equation models (SEM) using the sem command in Stata (Zhao et al., 2018). SEM accounts for the fact that signal inconsistency may affect consumer evaluations both directly and indirectly through critic evaluations. It mitigates measurement errors that may bias the estimation of mediating effects (Shaver, 2005) and corrects error terms across systems of equations simultaneously to address potential endogeneity. To better identify the system, I have to specify one explanatory variable that directly affects critic evaluations but does not have a direct effect on consumer evaluations. Specifically, I include a dummy variable, main genre, indicating whether a game falls into the main genre of the developer. I identify a genre as the main genre if it accounts for the largest proportion of the games that a developer has released in the past 5 years. The analysis suggests that this variable meets the empirical requirement: it has a significant effect on critic evaluations, but its direct effect on consumer evaluations is non-significant.

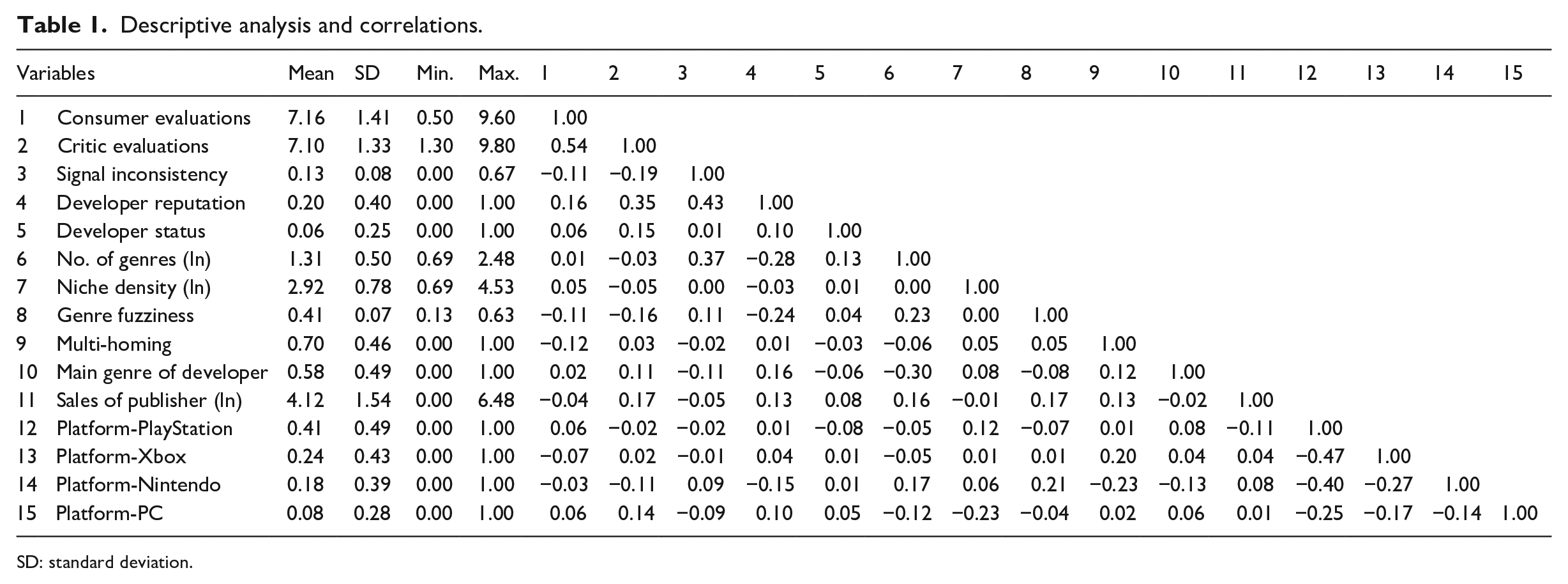

Table 1 shows descriptive statistics and correlations. It is worth noting that while the pairwise correlation between critic evaluations and consumer evaluations is positive (r = 0.54), they are not completely coupled, suggesting potential evaluative disparity between the two types of market audiences (Santos et al., 2019). Table 2 presents the results of Tobit regressions. Model 1 and Model 3 show the baseline results including only control variables. As expected, developer reputation and developer status are positively associated with the evaluations of both critics and consumers. Genre fuzziness is negatively related to critic evaluations, suggesting that developing games in fuzzy categories leads to less favorable evaluations from critics. The effect of genre fuzziness on consumer evaluations is, however, non-significant, which is broadly in line with the prior proposition that categorical ambiguity may have different impacts on different types of audiences (Pontikes, 2012).

Table 1. Descriptive analysis and correlations.

SD: standard deviation.

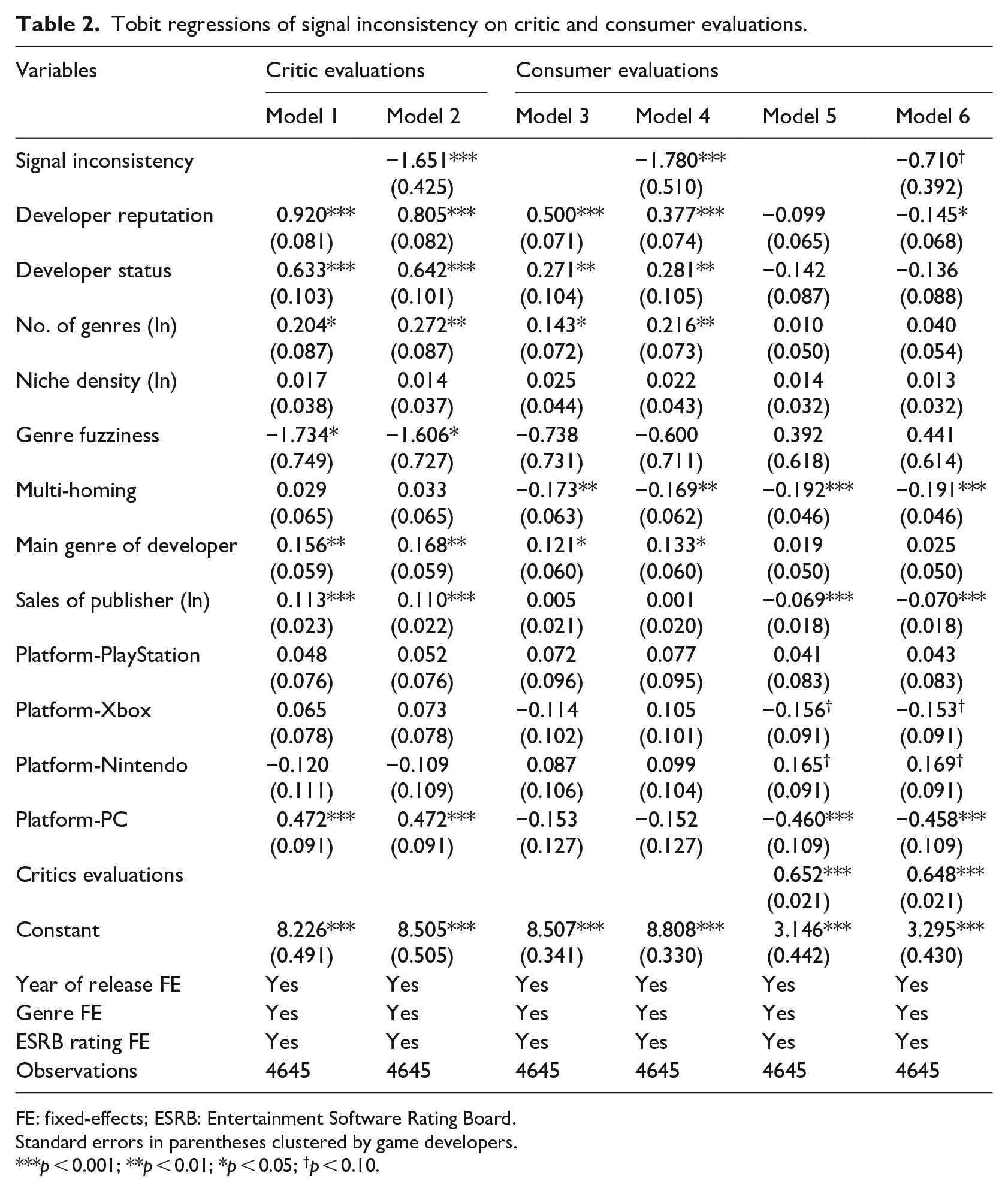

Table 2. Tobit regressions of signal inconsistency on critic and consumer evaluations.

FE: fixed-effects; ESRB: Entertainment Software Rating Board.

Standard errors in parentheses clustered by game developers.

***p < 0.001; **p < 0.01; *p < 0.05; †p < 0.10.

Models 2 and 4 include signal inconsistency of a developer’s past game reputation. The effect of signal inconsistency is significantly negative on both critic evaluations (β = −1.651, s.e. = 0.425) and consumer evaluations (β = −1.780, s.e. = 0.510). This supports the baseline Hypothesis 1 that signal inconsistency leads to negative evaluations among both critic and consumer audiences. To formally compare the two coefficients across the two models, I use seemingly unrelated estimation (suest in Stata), which simultaneously estimates the (co)variance (Ertug et al., 2016). I then employ nlcom (Haans et al., 2016) to calculate the ratio between the effect size of signal inconsistency in Model 4 and that in Model 2. A ratio larger than 1 would mean that the former effect is stronger than the latter, and vice versa. 13 However, I find that the ratio is not significantly different from 1 (p = 0.750), suggesting that signal inconsistency has comparable total effects on the evaluations of critics and consumers.
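As a rough sketch of the arithmetic behind this comparison, the point estimate of the ratio follows directly from the two reported coefficients, and a delta-method approximation (the approach on which Stata's nlcom is based) gives its variance. The symbols $\hat\beta_4$ and $\hat\beta_2$, denoting the signal inconsistency coefficients in Models 4 and 2, and $\hat R$ are notation introduced here for illustration. The cross-model covariance comes from the joint estimation and is not reported in the text, so the variance is left in symbolic form.

```latex
\begin{align}
\hat{R} &= \frac{\hat\beta_4}{\hat\beta_2} = \frac{-1.780}{-1.651} \approx 1.078 \\
\operatorname{Var}(\hat{R}) &\approx
  \frac{\operatorname{Var}(\hat\beta_4)}{\hat\beta_2^{2}}
  + \frac{\hat\beta_4^{2}\,\operatorname{Var}(\hat\beta_2)}{\hat\beta_2^{4}}
  - \frac{2\,\hat\beta_4\,\operatorname{Cov}(\hat\beta_4,\hat\beta_2)}{\hat\beta_2^{3}} \\
z &= \frac{\hat{R} - 1}{\sqrt{\operatorname{Var}(\hat{R})}}
\end{align}
```

The test of interest is whether $\hat R$ differs from 1, which is why the statistic is centered on 1 rather than on 0.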

However, since signal inconsistency may influence consumers through the mediating effect of critics, it is necessary to control for critic evaluations in order to test the direct effect of signal inconsistency on consumers. As such, I include critic evaluations in Models 5 and 6. 14 The effect of critic evaluations on consumer evaluations is positive in Model 5 (β = 0.652, s.e. = 0.021), suggesting that consumers are influenced by critics. The effect of signal inconsistency on consumer evaluations decreases substantially in Model 6 (β = −0.710) from Model 4 (β = −1.780). The ratio between the effect sizes of signal inconsistency in Model 6 and in Model 2 is 0.43 (= −0.710/−1.651). This means that the direct effect of signal inconsistency on consumer evaluations is less than half the size of its direct effect on critic evaluations. The formal nlcom test also suggests that the ratio is significantly smaller than 1 (p = 0.021). This lends support to Hypothesis 2 that the negative effect of signal inconsistency on audience evaluations is weaker for consumers than for critics.

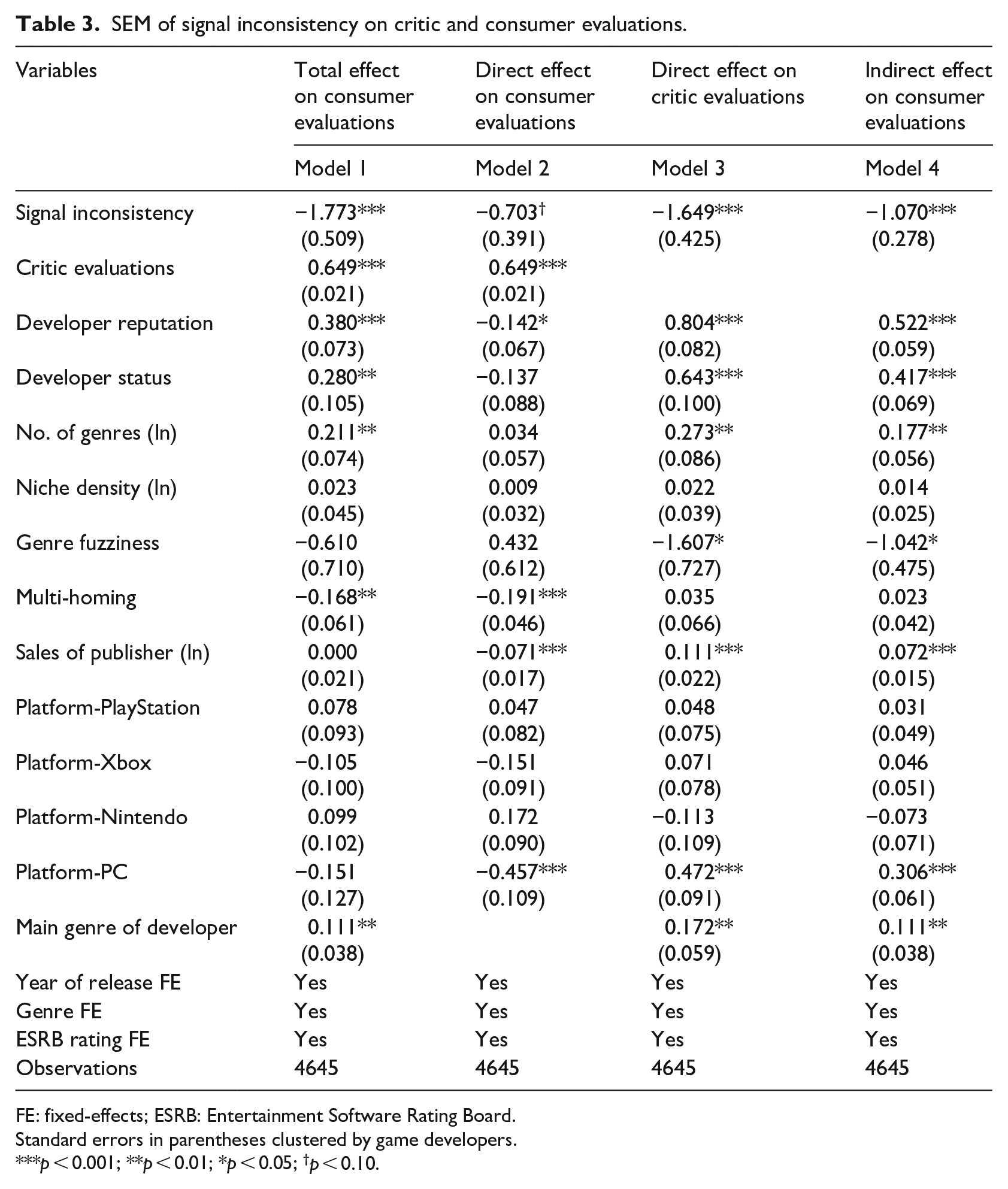

To test Hypothesis 3 on the mediation effect of critic evaluations, I employ SEM estimation. Table 3 presents the SEM results. The overall findings are consistent with my Tobit regressions in Table 2. Model 1 shows the total effects on consumer evaluations. Model 2 and Model 3 show the direct effect of signal inconsistency on consumer evaluations and critic evaluations, respectively, and Model 4 reports the indirect effect on consumer evaluations. The direct effect of signal inconsistency on consumer evaluations (β = −0.703, s.e. = 0.391) accounts for only about 39.6% of its total effect (β = −1.773, s.e. = 0.509), while the indirect effect through critic evaluations (β = −1.070, s.e. = 0.378) accounts for a much larger proportion (60.4%). To test the mediation effect more formally, I use Preacher and Hayes’ (2004) calculation tool for various forms of Sobel tests. The classic Sobel test is highly significant (t = −3.85; p < 0.001), and the Aroian and Goodman specifications provide almost identical results. Together, these results suggest that the effect of signal inconsistency on consumer evaluations is largely mediated by critic evaluations. Hypothesis 3, that critic evaluations mediate the effect of signal inconsistency on consumer evaluations, is hence supported. 15
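The three test statistics mentioned above can be sketched as follows. This is an illustrative calculation, not the author's exact procedure: the inputs are the Tobit estimates reported in the text (the effect of signal inconsistency on critic evaluations from Model 2 and the effect of critic evaluations on consumer evaluations from Model 5), used here as stand-ins for the SEM path coefficients, and the function name `mediation_z` is hypothetical.

```python
import math


def mediation_z(a, se_a, b, se_b, variant="sobel"):
    """z-statistic for the indirect effect a * b.

    a, se_a: effect of the predictor on the mediator and its standard error
    b, se_b: effect of the mediator on the outcome and its standard error
    variant: "sobel", "aroian" (adds se_a^2 * se_b^2 to the variance)
             or "goodman" (subtracts se_a^2 * se_b^2 from the variance)
    """
    variance = b**2 * se_a**2 + a**2 * se_b**2
    cross = se_a**2 * se_b**2
    if variant == "aroian":
        variance += cross
    elif variant == "goodman":
        variance -= cross
    elif variant != "sobel":
        raise ValueError(f"unknown variant: {variant}")
    return a * b / math.sqrt(variance)


# Assumed inputs: Tobit coefficients reported in the text, not SEM paths.
a, se_a = -1.651, 0.425  # signal inconsistency -> critic evaluations
b, se_b = 0.652, 0.021   # critic evaluations -> consumer evaluations

for variant in ("sobel", "aroian", "goodman"):
    print(variant, round(mediation_z(a, se_a, b, se_b, variant), 2))
```

With these inputs the Sobel statistic comes out at about −3.85, and the other two variants differ from it only in the second decimal place, which is consistent with the "almost identical" results described above.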

Table 3. SEM of signal inconsistency on critic and consumer evaluations.

FE: fixed-effects; ESRB: Entertainment Software Rating Board.

Standard errors in parentheses clustered by game developers.

***p < 0.001; **p < 0.01; *p < 0.05; †p < 0.10.

Supplementary analyses

In addition to the main estimation, I conduct a wide range of supplementary analyses that examine alternative measurements, use alternative model specifications, and further explore the data.

Standardization

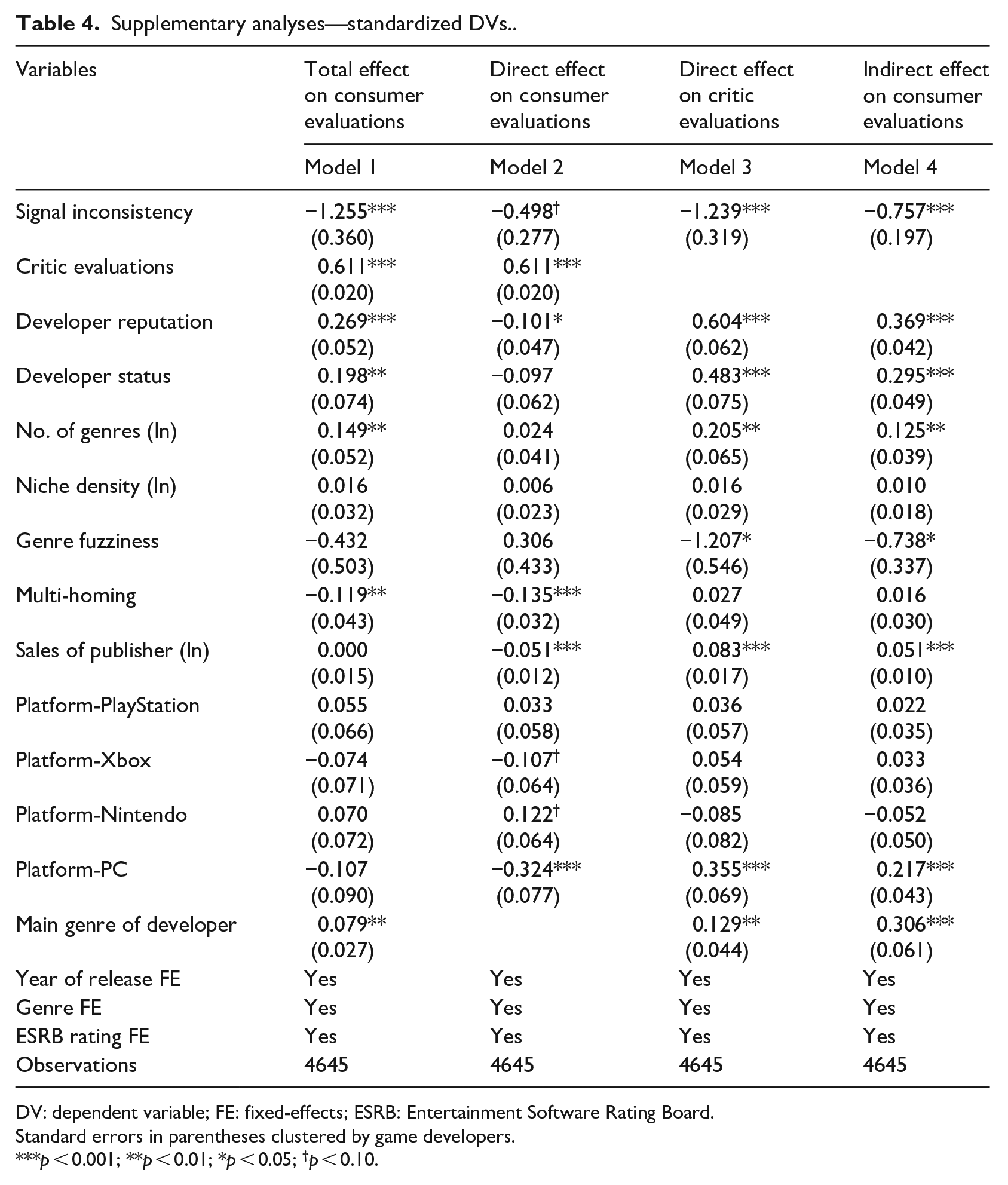

Instead of using raw measures of dependent variables, I standardize them before regressions. Much of my analytical attention is focused on comparing estimated coefficients. While the independent variable (i.e. signal inconsistency) stays the same across models, the dependent variables differ. One may be concerned that my results are driven by the fact that the dependent variables have different distributions and scales. To address this concern, I standardize both critic and consumer evaluations before estimation, and report the SEM results in Table 4. The regression results and Sobel tests (t = −3.85) are both largely consistent with Table 3, in terms of statistical significance and effect size.

Table 4. Supplementary analyses: standardized DVs.

DV: dependent variable; FE: fixed-effects; ESRB: Entertainment Software Rating Board.

Standard errors in parentheses clustered by game developers.

***p < 0.001; **p < 0.01; *p < 0.05; †p < 0.10.

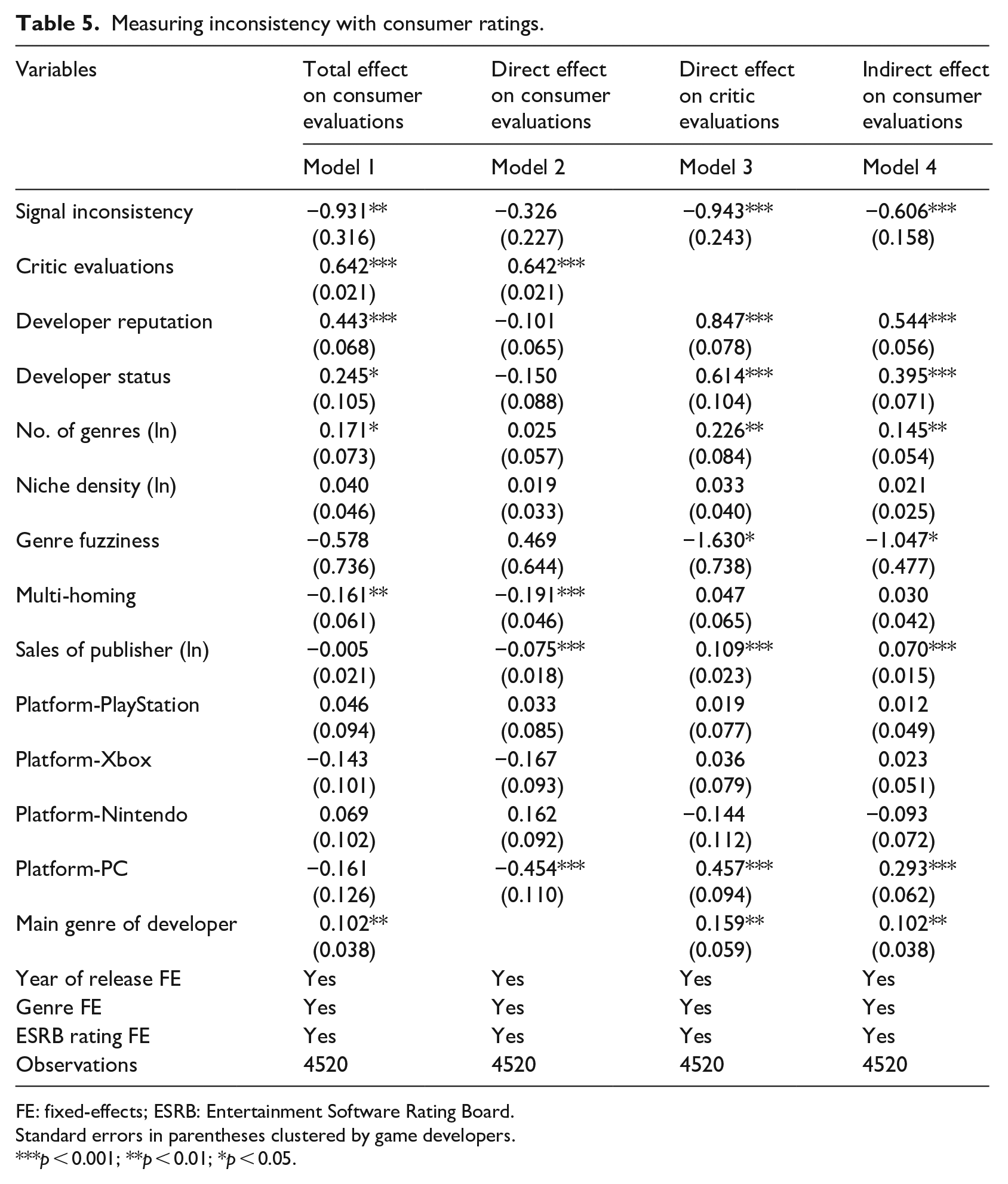

Signal inconsistency

I use an alternative measure of signal inconsistency. In my main estimation, the inconsistency of reputation signals is measured based on critic ratings. This measure is valid as prior research commonly refers to critic ratings as an important quality reputation indicator of video games (Rietveld and Eggers, 2018). However, one may be concerned that the critic-based inconsistency measure mechanically leads to a stronger impact on critic evaluations than on consumer evaluations. To address this concern, I replace the measure of signal inconsistency with the standard deviation of user ratings of a developer’s games in the past 5 years, scaled by their mean. This new measure has a moderate correlation with the critic-based measure (r = 0.373). 16 The regression results, reported in Table 5, are largely similar to my main findings above.

Table 5. Measuring inconsistency with consumer ratings.

FE: fixed-effects; ESRB: Entertainment Software Rating Board.

Standard errors in parentheses clustered by game developers.

***p < 0.001; **p < 0.01; *p < 0.05.

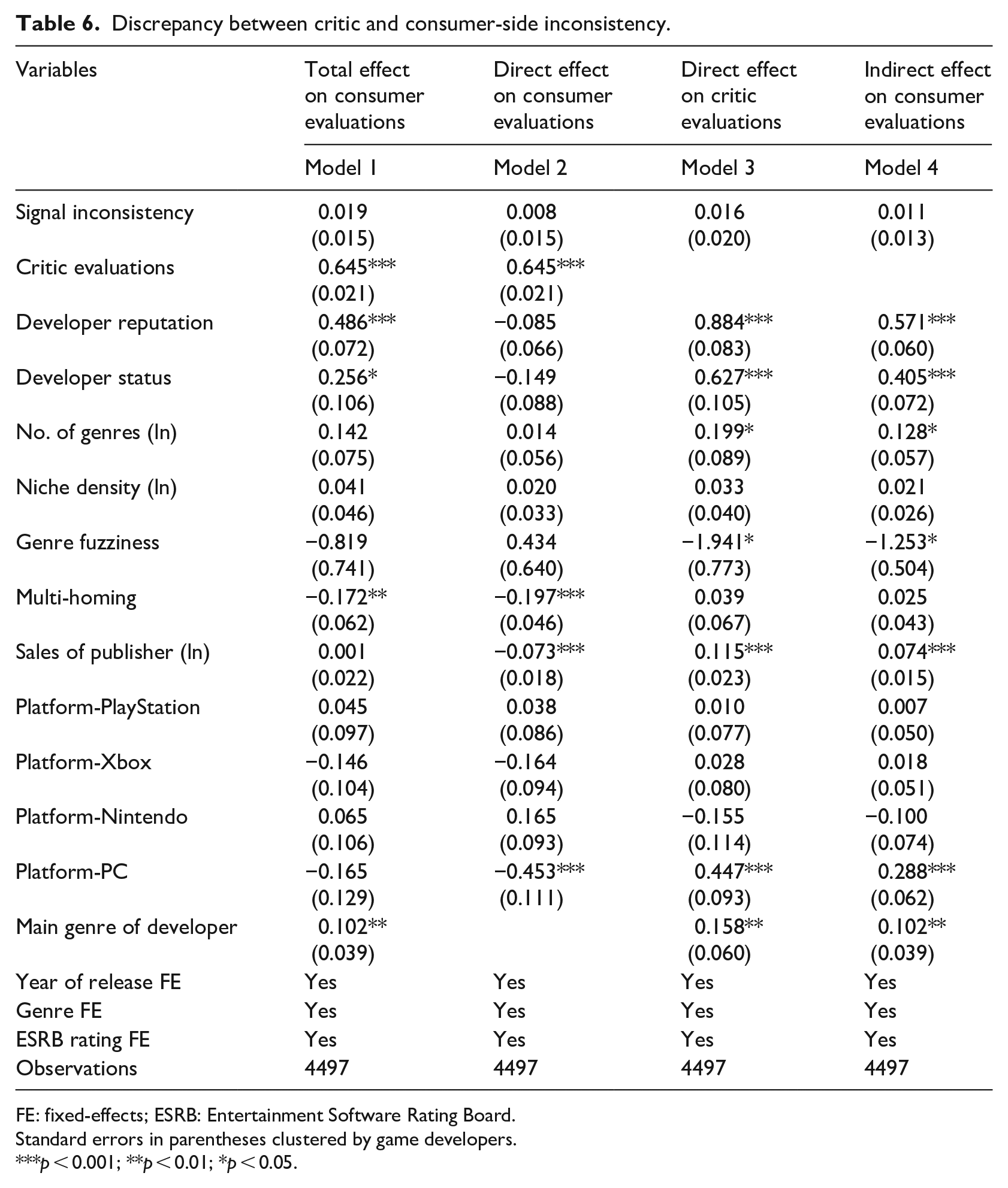

Moreover, I build a variable that captures the “second-order” discrepancy between the critic- and consumer-based inconsistency measures (formally, |critic-based inconsistency / consumer-based inconsistency − 1|). A larger value indicates that the two inconsistency measures diverge more between the critic and consumer sides. The results in Table 6 show that the effect of the discrepancy is non-significant in all models. This suggests that market audiences are not very attentive to this “second-order” factor.

Table 6. Discrepancy between critic and consumer-side inconsistency.

FE: fixed-effects; ESRB: Entertainment Software Rating Board.

Standard errors in parentheses clustered by game developers.

***p < 0.001; **p < 0.01; *p < 0.05.

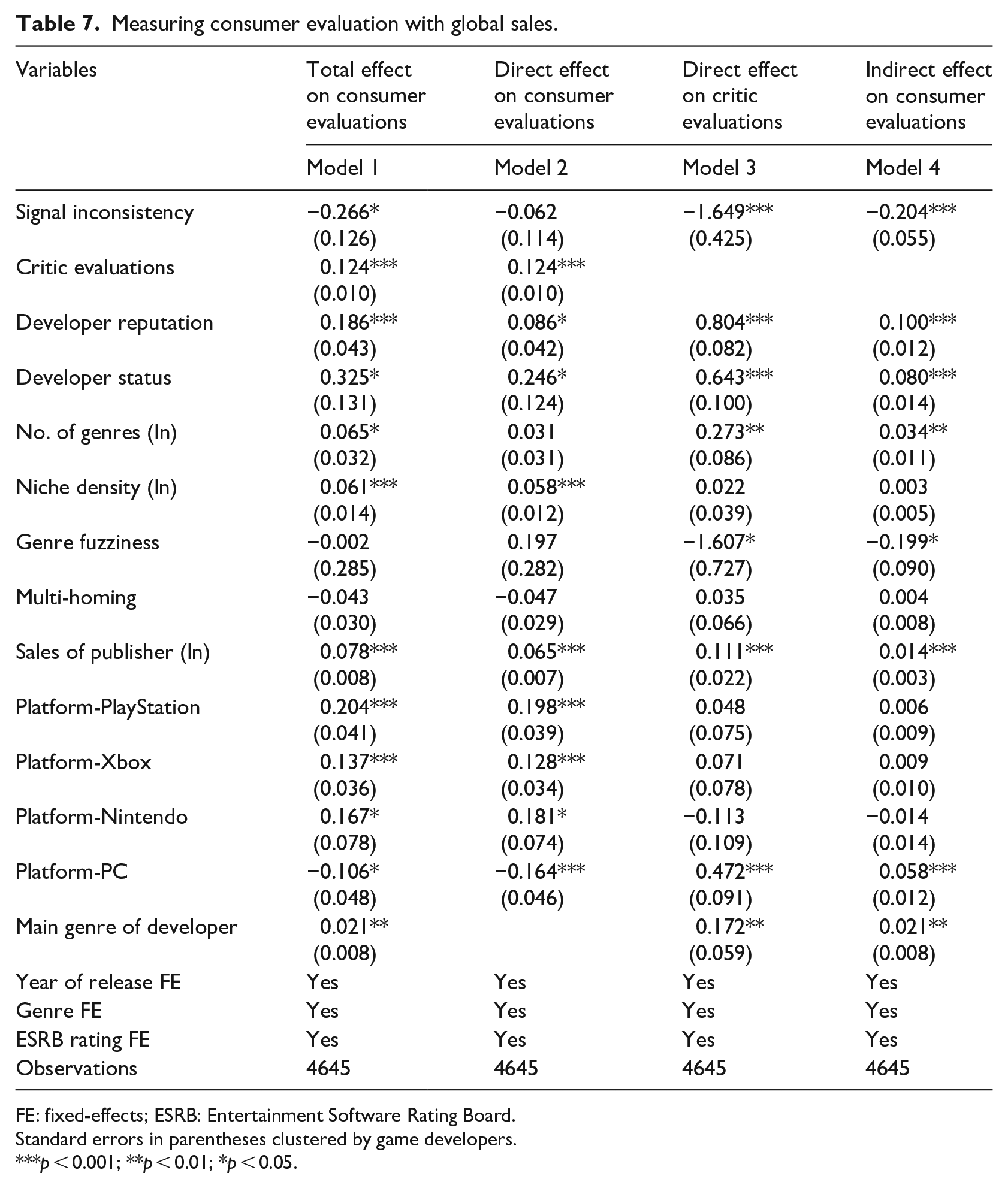

Market sales

I use global sales of video games as an alternative measure of consumer evaluations. Sales reflect how video games are received by end consumers in the marketplace (Zhao et al., 2018). Using game sales helps differentiate the measurement methods of the two dependent variables, critic and consumer evaluations. This new measure also provides insights into the selection process. In other words, while online ratings reflect consumers’ post-consumption evaluations, sales indicate the patterns in pre-consumption evaluations. I report the results in Table 7, which are largely consistent with my findings in Table 3. Specifically, the total effect of signal inconsistency on game sales is largely driven by the indirect effect through critic evaluations (with a significant Sobel test of t = −3.70).

Table 7. Measuring consumer evaluation with global sales.

FE: fixed-effects; ESRB: Entertainment Software Rating Board.

Standard errors in parentheses clustered by game developers.

***p < 0.001; **p < 0.01; *p < 0.05.

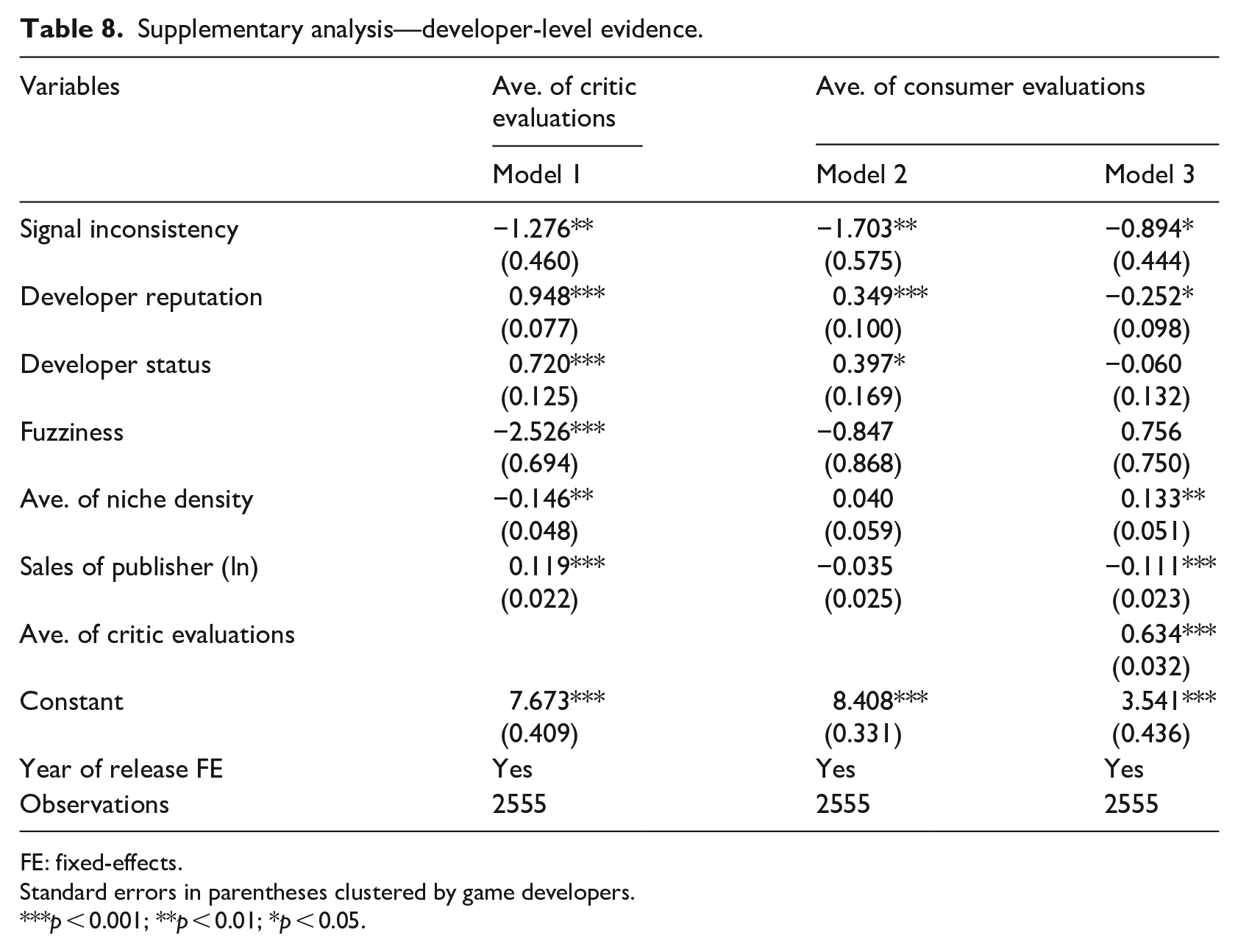

Developer-level evidence

I also extend my analysis to the developer level. As my primary research design is at the game-platform level, there are multiple games from the same developer in the same year, which share the same signal inconsistency. This may lead to concerns about the interdependence of observations. In this extension, I aggregate game ratings to the developer level, which helps reduce the interdependence of observations. Specifically, I calculate the average rating of all of a developer’s games in the same year and analyze how a developer’s signal inconsistency affects its overall evaluations in the market. The results are reported in Table 8, which show patterns consistent with my core claims.

Table 8. Supplementary analysis: developer-level evidence.

FE: fixed-effects.

Standard errors in parentheses clustered by game developers.

***p < 0.001; **p < 0.01; *p < 0.05.

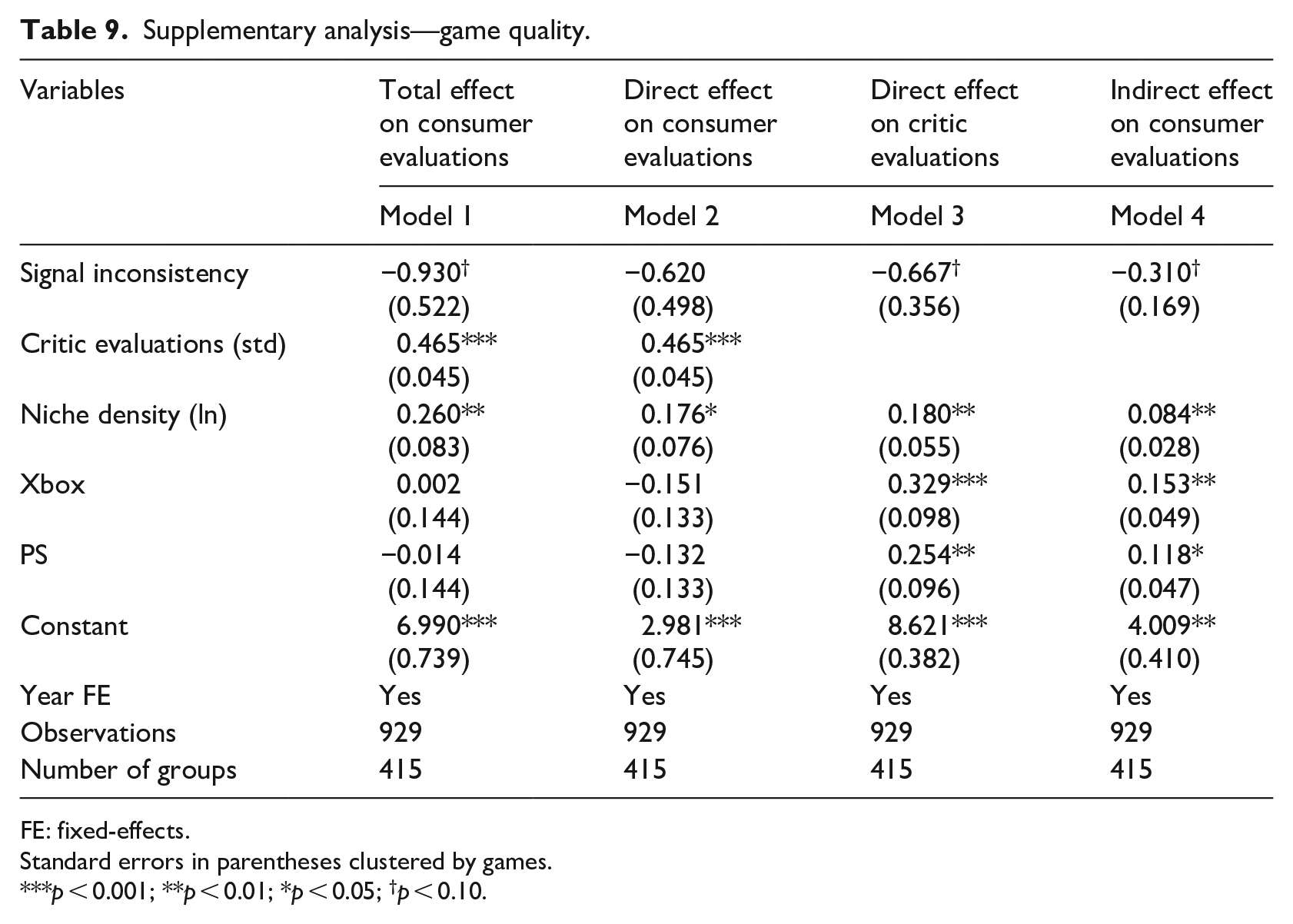

Game quality

I try to rule out the impact of unobserved quality. It is possible that the key variables are all driven by game quality that is not captured in my estimation. In this extension, I follow prior studies (Rietveld and Eggers, 2018) and focus on a subsample of multi-homing games. The same game released on multiple platforms has almost identical quality across them. However, its developer could have different signal inconsistency on different platforms, because game ratings differ across platforms even for the same game titles (Zhu and Zhang, 2010). To exploit this, I first create a developer-platform-level measure of signal inconsistency. That is, while my primary inconsistency measure is at the developer level, this new measure calculates the standard deviation of game ratings for each developer on each platform in the past 5 years. Despite its advantage of controlling for game quality, it is worth noting that this design rests on a strong assumption that there is no information spillover across platforms, that is, that reputation signals on one platform are not perceived by audiences on other platforms.

After adopting this approach, I end up with a small subsample of multi-homing games with sufficient data (929 observations of 415 games). I then apply generalized SEM with game-level fixed-effects to estimate critic and consumer evaluations, which rules out game-level and developer-level factors. The results are reported in Table 9. The overall picture is consistent with my hypotheses, with a t-statistic of −1.84 from the Sobel test. However, it is worth noting that the effects are weaker in this estimation, as the key variables are mostly significant only at the p < 0.1 level. These preliminary results may imply that the effect of signal inconsistency on audience evaluations is also largely explained by game quality. New games from a signal-inconsistent developer tend to receive negative evaluations, not only because signal inconsistency creates ambiguity in the market (a perception discount), but also because those games are likely to have lower inherent quality (a production discount). In other words, in bridging the effect of signal inconsistency on consumers, critics may have both integrated their evaluative concerns about the inconsistency and helped reveal the hidden product quality associated with signal inconsistency. Nonetheless, to understand the actual relationship between quality and signal inconsistency, more direct theorizing and testing are needed.

Table 9. Supplementary analysis: game quality.

FE: fixed-effects.

Standard errors in parentheses clustered by games.

***p < 0.001; **p < 0.01; *p < 0.05; †p < 0.10.

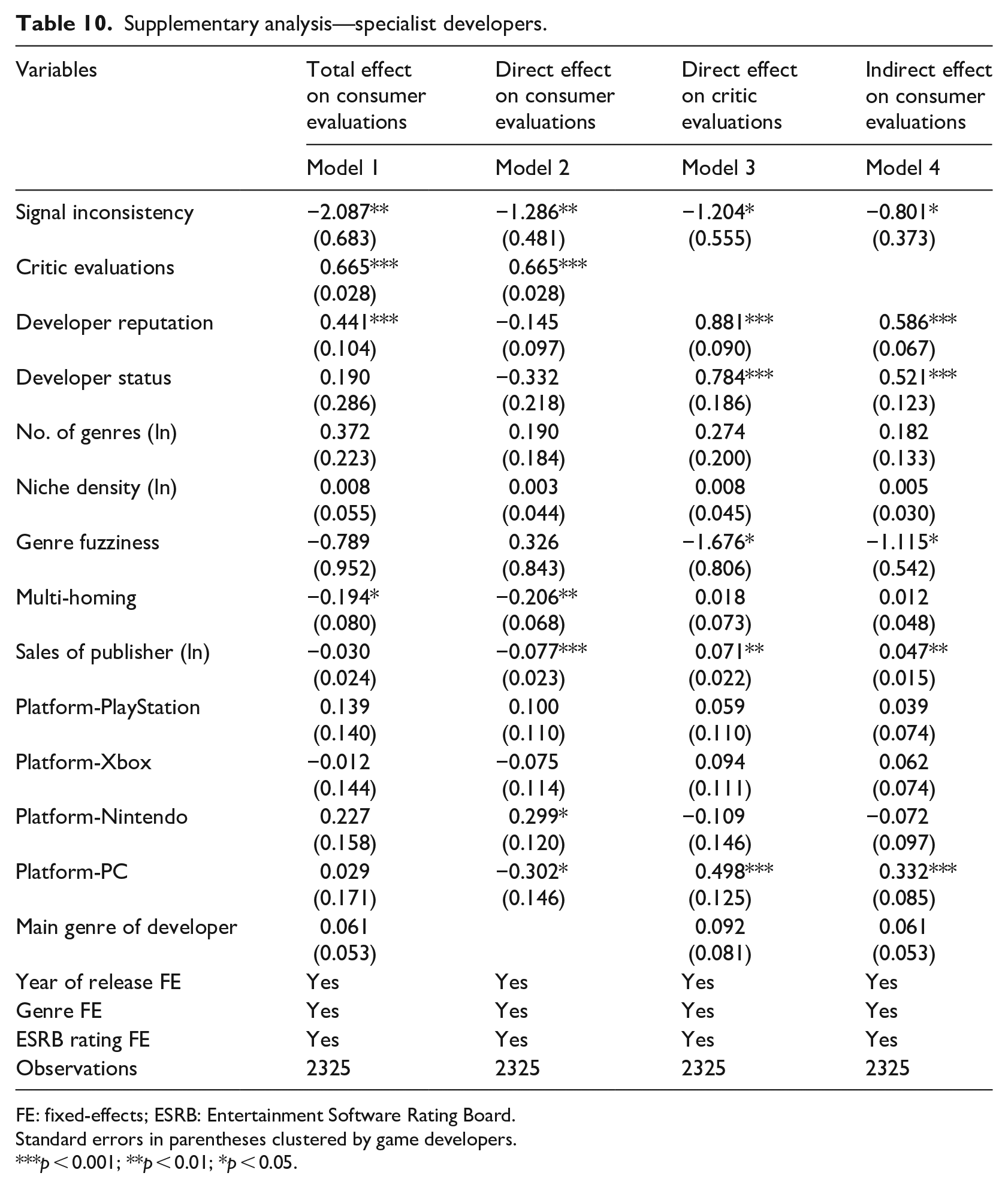

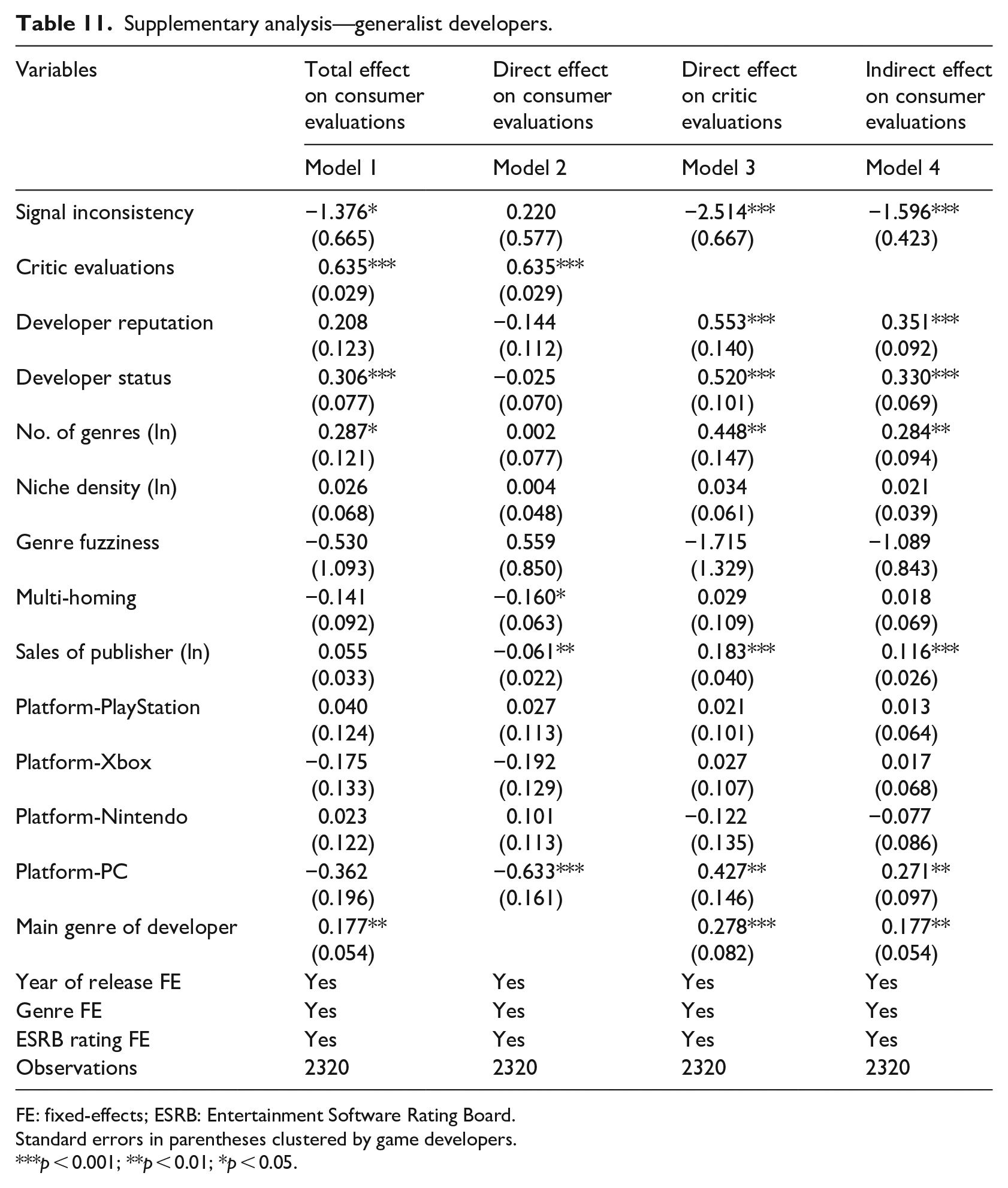

Specialists versus generalists

To further understand the role of signal inconsistency, I conduct a set of subsample analyses that distinguish between specialist and generalist developers. My theory suggests that consumer audiences are less likely to attend to all signals of a developer. This should be particularly so if the developer is a generalist that diversifies into many game genres, assuming that end consumers tend to focus on a narrow set of game genres. In other words, consumers are even less likely to sense a generalist developer’s various signals across many different genres than a specialist’s signals. To test this corollary, I split the full sample at the median of the number of genres that a developer spans in the past 5 years, and then repeat the SEM estimations in each of the two subsamples. In Table 10, analyzing specialist developers, the direct effect of signal inconsistency on consumer evaluations is negative and significant (β = −1.286, s.e. = 0.481), with an effect size comparable to that on critic evaluations. In Table 11, analyzing generalist developers, however, the direct effect on consumer evaluations is non-significant (β = 0.220, s.e. = 0.577), whereas there is a significant direct effect on critic evaluations (β = −2.514, s.e. = 0.667). While inconclusive, comparing the two results suggests that the effect of signal inconsistency on consumer audiences is weaker when game developers are generalists that diversify into many genres.

Table 10. Supplementary analysis: specialist developers.

FE: fixed-effects; ESRB: Entertainment Software Rating Board.

Standard errors in parentheses clustered by game developers.

***p < 0.001; **p < 0.01; *p < 0.05.

Table 11. Supplementary analysis: generalist developers.

FE: fixed-effects; ESRB: Entertainment Software Rating Board.

Standard errors in parentheses clustered by game developers.

***p < 0.001; **p < 0.01; *p < 0.05.

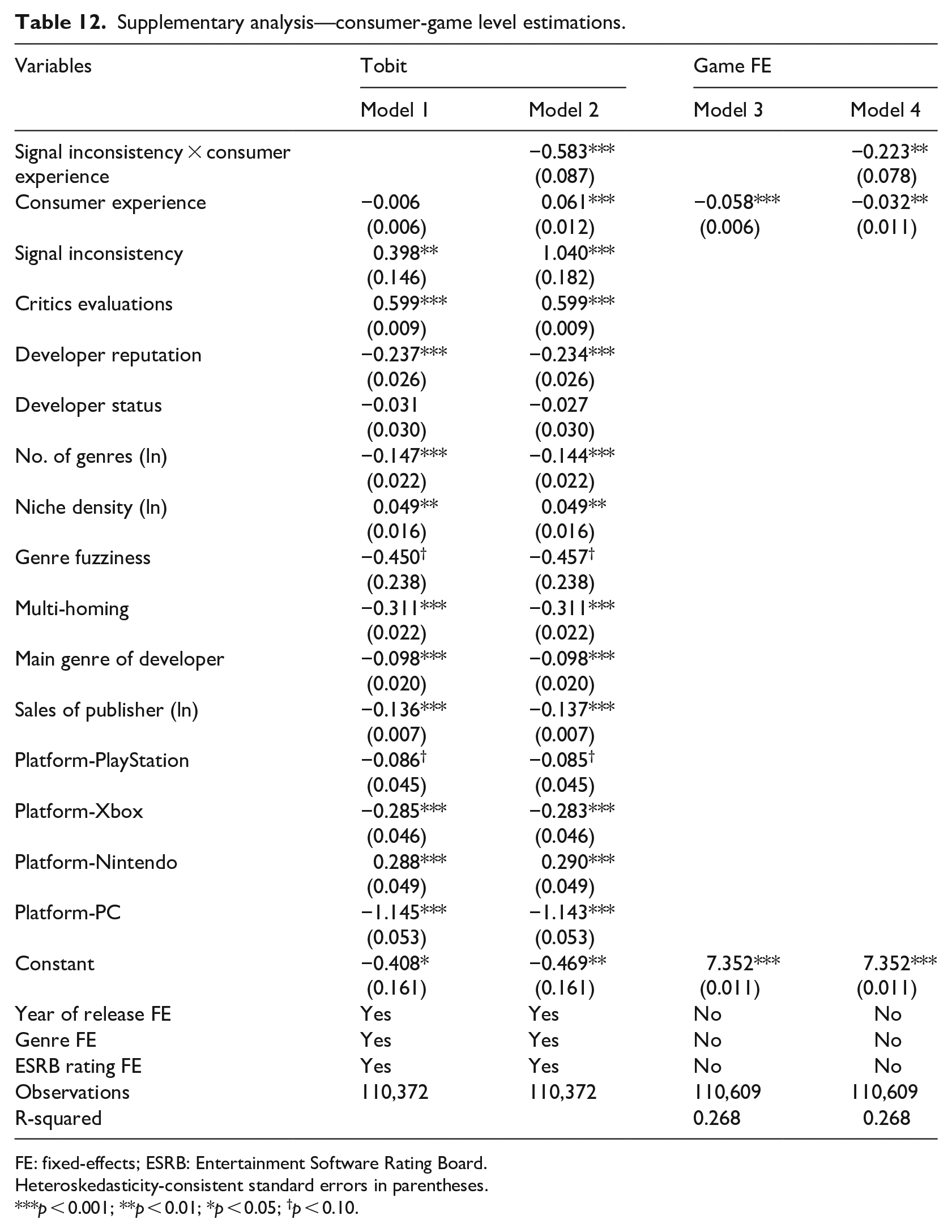

Consumer experience

The final extension focuses on intra-audience heterogeneity. My main framework emphasizes inter-audience distinction. Specifically, I posit that critics are more sensitive to signal inconsistency because they hold a more holistic view of diverse signals than consumers do. If so, one may expect differences among consumers across levels of experience: more experienced consumers may react more strongly to signal inconsistency than less experienced consumers. To test this argument, I collect consumer-game level ratings. Consumer experience is measured as the number of game ratings posted by a consumer before the focal game. I then run consumer-game level estimations to examine the role of consumer experience. Results are presented in Table 12. Models 1 and 2 employ Tobit regressions, where I find a significant and negative interaction between consumer experience and signal inconsistency on individual ratings (β = −0.583, s.e. = 0.087). This suggests that consumers with more rating experience are indeed more reactive to the inconsistency of reputation signals. Models 3 and 4 use game-level fixed-effects that rule out game- and developer-level factors. 17 The interaction effect in Model 4 is significant and negative on individual ratings. Altogether, the primary results show patterns that are in line with my conjecture.

Table 12. Supplementary analysis: consumer-game level estimations.

FE: fixed-effects; ESRB: Entertainment Software Rating Board.

Heteroskedasticity-consistent standard errors in parentheses.

***p < 0.001; **p < 0.01; *p < 0.05; †p < 0.10.

Discussion

Moving beyond the baseline proposition that signal inconsistency leads to an evaluation penalty (Vergne et al., 2018; Zhao and Zhou, 2011), I draw attention to a key scope condition for signal inconsistency to take effect. Specifically, I argue that the effect of signal inconsistency is highly contingent on the extent to which audiences attend to and interpret various market signals. Signal inconsistency is hence proposed to exert a stronger direct effect on critics than on consumers, because consumer audiences are much less likely than critics to keep full track of various signals. I test these hypotheses in a sample of video games. The results show that while the direct effects of signal inconsistency on critic evaluations and consumer evaluations are both negative, the former is more than twice as large as the latter. I also find a substantial mediating effect of critic evaluations on the relationship between signal inconsistency and consumer evaluations.

This study contributes mainly to the signaling literature. While the role of market signals has been well established, recent literature shifts attention to the important issue of signal inconsistency, with a holistic perspective on multiple signals from the same entity (Stern et al., 2014). Signal inconsistency leads to a penalty because audiences find it challenging to make sense of incongruent quality signals. While scholars have underscored the negative consequences of signal inconsistency (Parker et al., 2017; Wang and Jensen, 2019), most studies in this stream focus on producers and the diverse signals they send, at the cost of treating audiences as homogeneous. This study adds to the literature on signal inconsistency by emphasizing the important role of the audiences who process different signals. Specifically, I stress that signals are not equally observed by all audiences. Audiences not only diverge in their tastes and preferences (Kim and Jensen, 2011; Shrum, 1991), but also differ in the extent to which they attend to and interpret market signals (Kovács and Hannan, 2010). When audiences fail to consider different signals from the same entity, they may not sense its signal inconsistency.

Supporting my arguments, I find that signal inconsistency has a stronger effect on critic evaluations than on consumer evaluations. This implies the importance of considering heterogeneity on the audience side (i.e. signal receivers) when theorizing about signal inconsistency. Such findings are also in line with the recent proposition that critics attend to a different set of information cues than consumers (Falchetti et al., 2022). However, this does not mean that critic evaluations are more accurate than consumer evaluations, since new products with inconsistent past signals may not necessarily have worse inherent quality. To understand evaluation accuracy, one would have to measure inherent quality, which can be challenging in the cultural market.

I also highlight the market mediation role of professional critics in processing signal inconsistency. Interestingly, while the direct effect of signal inconsistency is much smaller on consumer evaluations than on critic evaluations, its total effects are comparable on both. It suggests that although consumers may not clearly observe signal inconsistency themselves, they are influenced by signal inconsistency indirectly when they accept the evaluation schema of critics (Hsu et al., 2012b). This provides additional evidence on the important role of critics as intermediaries that reduce information asymmetry in the market. Critics search for, select, and process various vital signals in their evaluations, which facilitates the quality judgments of consumers who otherwise would overlook such important cues as signal inconsistency.

By doing so, this study underscores the importance of controlling for mutual influence between different audiences. While research has proliferated on how different types of audiences react differently to organization practices and market signals (Cattani et al., 2014), many studies in this stream do not highlight the interactions between different audiences. Such neglect is a bit surprising, since it has also been well established that different groups of audiences often affect each other’s evaluations (Kovács and Sharkey, 2014; Zhao et al., 2018). In this study, I find that after controlling for the influence of critics on consumers, the effect size of signal inconsistency on consumer evaluation is reduced by about 60%. That is, the influence of signal inconsistency on consumer evaluations is largely explained by the mediating effect of critic evaluations. This suggests the importance of accounting for the mutual influence between different groups of audiences, when analyzing how they respond to organizational practices and market signals. For instance, even though investors and customers prefer different types of startups (Pontikes, 2012), investors’ decisions may also be influenced by whether startups will be well accepted by customers. When investors foresee that an atypical startup will be less favorably received by customers, will they still invest in the startup? Interdependence between audiences is simple in this study, as it is more likely for consumers to be influenced by critics, than the other way around. It can become, however, complicated for other types of audiences where the influence is largely bi-directional.

Limitations and future studies

This research opens more space for future studies. First, although I motivate this project with market signal inconsistency in general, I develop and test hypotheses on a very specific form of signal inconsistency, that is, the inconsistency of quality signals over time. Future studies may extend it in two different ways. On the one hand, scholars may examine other forms of quality signal inconsistency, such as inconsistency across different dimensions (Zhao and Zhou, 2011), inconsistency across different product categories (Wang and Jensen, 2019), and even inconsistency between the evaluations of different audience groups. On the other hand, while most current attention is paid to the inconsistency of vertical orderings, it is also important to explore the inconsistency of horizontal/categorical signals (Negro et al., 2015). For instance, when a firm makes distant jumps in its categorical focus over time, it may face the problem of categorical identity inconsistency (Leung, 2014). Although those are different forms of inconsistency, I suspect the underlying theory may still hold that the effect of signal inconsistency depends on the extent to which audiences attend to various market signals. Nonetheless, more empirical investigation is needed.

Second, while this study focuses on the distinction between critics and consumers (i.e. inter-audience heterogeneity), I have downplayed the heterogeneity within each type of audience (i.e. intra-audience heterogeneity). Critics (or consumers) in fact also differ in terms of their attention, experience, taste, or purpose (Goldberg et al., 2016), which may lead to divergent evaluations among themselves. In other words, whereas my research analyzes the aggregate evaluations of critics (or consumers), it would be interesting to examine the variance of their individual evaluations (Sun, 2012). Although I touch upon this in additional analyses, more work is needed. Zooming into individual evaluations also allows scholars to explore their dynamics and inconsistency at the product (game) level. Some games encounter a high consensus among audiences, while others encounter a high dissensus (d’Astous and Touil, 1999). It would therefore be interesting to investigate the implications of this type of inconsistency at the product level, which is overlooked in this firm-level inconsistency research. Relatedly, while focusing on the aggregate evaluations of audiences, I have also missed their dynamics. Earlier reviews may shape how later audiences evaluate focal products (Hoskins et al., 2021; Pang et al., 2022). I leave this question to future studies.

Third, whereas I emphasize that audiences differ in their attention to market signals, I do not look into their heterogeneity in taste and preference (Holbrook, 1999; Kim and Jensen, 2011). Future studies could try to explore whether certain types of audiences are in favor of signal inconsistency. Such research will help us understand the potential positive side of signal inconsistency, extending the current focus on its negative consequences. Relatedly, while I compare critics and consumers, two important audiences in the market, it would be interesting to extend my framework to other types of audiences, such as investors, peers, and strategic partners (Cattani et al., 2014; Pontikes, 2012). For instance, how would investors or competitors react to the inconsistency of a firm’s reputation signals?

Finally, it is important to note that my findings can be context-dependent. In evaluating new games, for instance, consumers usually follow critics (Zhu and Zhang, 2010), which is an important scope condition for my mediation hypothesis. In settings where consumer and critic evaluations are simultaneous, however, the argument for the mediation role of critics might not hold. Moreover, in the setting of video games, consumers pay close attention to critic evaluations, so that their behavior is influenced by the latter (Zhao et al., 2018). If consumers in other settings rely more on their own assessments or on social media, rather than referring to critics as their opinion leaders (Eliashberg and Shugan, 1997), the mediating role of critics will be dampened. It is also useful to consider the distinction between connoisseurial and procedural reviews (Blank, 2007). My empirical evidence is based on connoisseurial reviews of video games, which are subject to audiences’ experiences, tastes, and training. In procedural reviews (e.g. of digital cameras or software), which depend on objective and mechanical testing, we may see a different pattern.

Conclusion

In conclusion, this study emphasizes a key scope condition for signal inconsistency to take effect: audiences must process the various signals emitted by a firm. Based on that, I argue and find evidence that professional critics are more reactive to signal inconsistency than end consumers in their evaluations of video games. I also observe that critics act as an important market intermediary that bridges the effect of signal inconsistency on consumers. Moving beyond the general signal inconsistency discount, this article highlights the importance of investigating audience heterogeneity in research on signal inconsistency.

Footnotes

Appendix 1

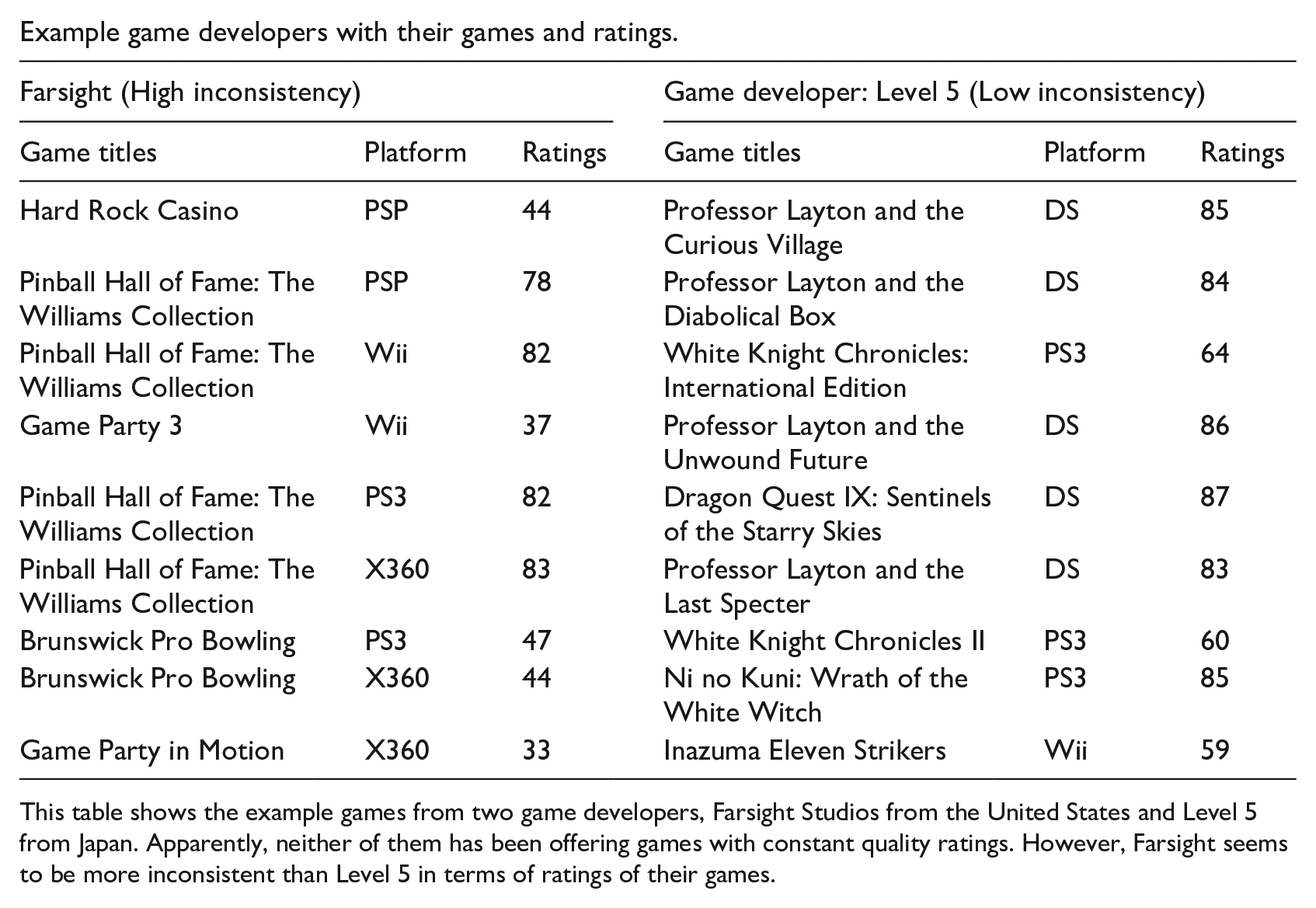

Example game developers with their games and ratings.

| Game titles (Farsight, high inconsistency) | Platform | Ratings | Game titles (Level 5, low inconsistency) | Platform | Ratings |
|---|---|---|---|---|---|
| Hard Rock Casino | PSP | 44 | Professor Layton and the Curious Village | DS | 85 |
| Pinball Hall of Fame: The Williams Collection | PSP | 78 | Professor Layton and the Diabolical Box | DS | 84 |
| Pinball Hall of Fame: The Williams Collection | Wii | 82 | White Knight Chronicles: International Edition | PS3 | 64 |
| Game Party 3 | Wii | 37 | Professor Layton and the Unwound Future | DS | 86 |
| Pinball Hall of Fame: The Williams Collection | PS3 | 82 | Dragon Quest IX: Sentinels of the Starry Skies | DS | 87 |
| Pinball Hall of Fame: The Williams Collection | X360 | 83 | Professor Layton and the Last Specter | DS | 83 |
| Brunswick Pro Bowling | PS3 | 47 | White Knight Chronicles II | PS3 | 60 |
| Brunswick Pro Bowling | X360 | 44 | Ni no Kuni: Wrath of the White Witch | PS3 | 85 |
| Game Party in Motion | X360 | 33 | Inazuma Eleven Strikers | Wii | 59 |

This table shows the example games from two game developers, Farsight Studios from the United States and Level 5 from Japan. Apparently, neither of them has been offering games with constant quality ratings. However, Farsight seems to be more inconsistent than Level 5 in terms of ratings of their games.
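The contrast between the two developers can be made concrete by applying the inconsistency measure (standard deviation scaled by the mean) to the ratings listed in the table. This is an illustrative sketch only: it treats all nine listed ratings of each developer as one window, uses the sample standard deviation (the text does not say whether the sample or population version is used), and the function name `signal_inconsistency` is hypothetical.

```python
from statistics import mean, stdev


def signal_inconsistency(ratings):
    """Standard deviation of past ratings scaled by their mean.

    Uses the sample standard deviation; this choice is an assumption.
    """
    return stdev(ratings) / mean(ratings)


# Ratings copied from the table above, in row order.
farsight = [44, 78, 82, 37, 82, 83, 47, 44, 33]
level5 = [85, 84, 64, 86, 87, 83, 60, 85, 59]

print("Farsight:", round(signal_inconsistency(farsight), 3))
print("Level 5:", round(signal_inconsistency(level5), 3))
```

Under these assumptions Farsight scores roughly 0.37 and Level 5 roughly 0.16, so the measure ranks the two developers in the same order as the table labels them.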

Appendix 2

Acknowledgements

The author would like to thank the Coeditor Oliver Alexy and three anonymous reviewers for their constructive feedback and guidance in the review process, which substantially improved the manuscript.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.