Abstract

Educational videos hold great potential to support learning in psychology education. However, viewers typically process the content passively, as opportunities to interact with videos are usually restricted to simple control features. H5P, a technology to enhance videos with feedback features, may foster learners’ engagement in generative activities and in more constructive ways of learning. Yet, research on H5P remains limited. A field study with

Keywords

Introduction

Fueled by the COVID-19 pandemic, the usage of educational videos has strongly increased across disciplines (Schorn, 2022; Zander et al., 2020), including psychology (e.g., Jacob & Centofanti, 2023; Shen et al., 2017). Research on multimedia learning ascribes high potential to the use of educational videos (Rolfe & Gray, 2011), as watching videos can help students integrate textual and pictorial information with their prior knowledge and make efficient use of their limited working memory capacity (Mayer, 2014; Noetel et al., 2021). However, the rapidly changing, fleetingly presented content may cause cognitive overload (Merkt & Schwan, 2016). Moreover, students might process the presented content rather passively instead of being prompted to engage in generative learning activities. Passive processing is assumed to be less effective for knowledge acquisition than more active forms of learning (Chi & Wylie, 2014), whereas generative learning activities have been shown to be conducive to learning (e.g., Fiorella, 2023; Mende et al., 2021; Roelle & Nückles, 2019).

Against this background, it seems promising to augment educational videos with interactive features, typically grouped into two types: learner control features and feedback features.

Theoretical Framework

Learning with Educational Videos

Educational videos were identified as a mega-trend a few years ago (Simschek & Kia, 2017). Reactions to the COVID-19 pandemic have further increased their use in education (Rhody, 2022; Schorn, 2022), especially in formal academic settings (Brame, 2016; Noetel et al., 2021; Persike, 2020; Zander et al., 2020). Meta-analytical evidence suggests that the use of digital technology, and instructional video in particular, is not only popular but also an effective way to stimulate learning (e.g., Lin & Yu, 2024; Schmid et al., 2014).

Studies examining the impact of educational videos frequently explain their effects using the Cognitive Theory of Multimedia Learning (CTML; Mayer, 2014). According to CTML, videos offer added didactic value compared to monomedia educational material because they address both the auditory and the visual channel for processing information in working memory (Mayer, 2014; Noetel et al., 2021). Given that each channel has a limited processing capacity, a dual-channel approach to content creation is supposed to minimize information-processing overload in any one channel. In addition, selecting relevant words and images, organizing them into verbal and pictorial models, and integrating these models with prior knowledge are regarded as crucial processes for learning. As meta-analyses focusing on the multimedia effect show, learners learn better when both channels are addressed than when only one is targeted (e.g., Rolfe & Gray, 2011). Combining auditory and visual information, as videos do, therefore offers great potential to support learning.

While the dynamic nature of videos can aid understanding of processes and mechanisms (Mayer et al., 2007), inhibitory effects may also occur: Because the presented information is transient, learners are forced to hold multiple elements in working memory. If the element interactivity is too high, the capacity is exceeded and earlier elements are discarded (Costley et al., 2021; Merkt & Schwan, 2016), a phenomenon known as the transient information effect, which may cause extraneous cognitive load (e.g., Leahy & Sweller, 2011; see the section “Extraneous Cognitive Load When Learning with Interactive Educational Videos”).

Fostering Learning with Interactive Features in Educational Videos

Despite their overall effectiveness (Hoogerheide & Sepp, 2024), educational videos may also pose challenges for learners because they place learners in a passive role. According to the ICAP framework (Chi & Wylie, 2014), learners who remain behaviorally passive (such as those merely watching a video) tend to store information only in an isolated way, likely leading to minimal understanding of the to-be-learnt concepts. In contrast, active, constructive, and interactive learning activities are expected to promote deeper processing, more complete schemas, and better transfer (Chi, 2009; Chi & Wylie, 2014; for a critique, see Thurn et al., 2023).

Therefore, designers of educational videos should explore ways to move learners beyond a passive mode of engagement, for example, by adding interactive features to the video.


In this article, we follow the definition of

For this study, we focus on the relationship between features of the learning environment (i.e., digital videos) and learners’ behavioral and (meta-)cognitive activities. Accordingly, we examine the effects of two types of interactive features: learner control features and feedback features.

Interactive Features to Support Learner Control

Learner control refers to granting learners the opportunity to determine how instructional information is delivered. In the context of digital videos, this refers to options to play/pause the video, change its speed, re-watch specific parts, and adjust the screen size (e.g., Merkt & Schwan, 2016). Ploetzner (2024) labels these “navigational features.” Their use is closely tied to learners’ (meta-)cognitive processes, with decisions to use these features depending on ongoing monitoring of understanding. By using them, learners can create more favorable conditions for subsequent cognitive processing.

Prior research indicates that videos and animations affording learner control lead to better learning outcomes than those lacking such control (e.g., Hasler et al., 2007; Mayer & Chandler, 2001; Moreno, 2007; Schwan & Riempp, 2004; for a review, see Moreno & Mayer, 2007). For example, Schwan and Riempp (2004) investigated how students learned to tie nautical knots using either an interactive video with features to play/pause, change its speed, and re-watch segments, or a noninteractive video without these features. The results showed that students with access to learner control features learned to tie the knots shown in the video significantly faster than students in the noninteractive condition.

Interactive Features to Provide Feedback

For some learners, the affordances of learner control features in educational videos may not be sufficient. According to multimedia research, some learners may lack the (meta-)cognitive skills needed to use these features effectively (Scheiter, 2014). Within the ICAP framework, learner control features are limited in that they support only

One approach is to embed features that offer learners feedback on their understanding. Ploetzner (2024) calls them “enhanced interactive features.” For example, clickable quiz tasks (e.g., Mutawa et al., 2023; Weinert et al., 2021) prompt learners to answer questions that assess their understanding. After responding to a question, learners receive feedback indicating whether they have understood the to-be-learned content correctly. Providing answers to such quiz tasks can be regarded as a generative learning activity (e.g., Fiorella, 2023; Mende et al., 2021; Roelle & Nückles, 2019), especially when they involve the generation of shorter or longer answers (as opposed to clicking on correct options of multiple-choice questions). Engagement in such generative learning activities has repeatedly been shown to have beneficial effects on learning and understanding, as they require learners to review what they have learned, make connections, draw inferences, and draw conclusions, thereby leading them to deeply process the learning material and expand upon their schemas (e.g., Chi & Wylie, 2014; Craik & Lockhart, 1972; Fiorella, 2023).

A meta-analysis of 17 studies by Ploetzner (2024) showed a small to medium effect (Hedges’

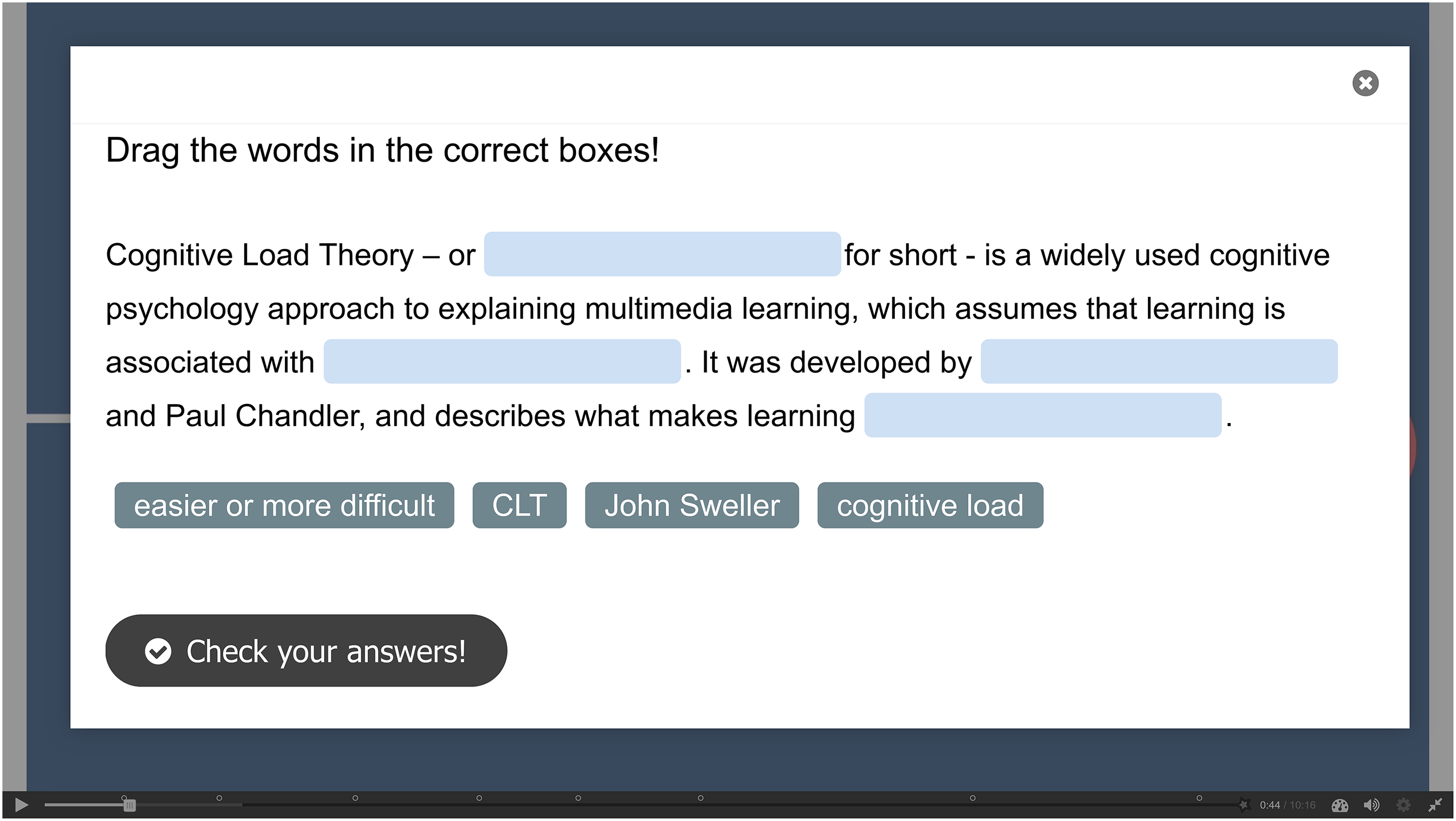

One technology that is increasingly used in higher education to implement feedback features in videos is the H5P software (Jacob & Centofanti, 2023; Mutawa et al., 2023; Weinert et al., 2021). To foster active and constructive learning behaviors (Chi & Wylie, 2014), the H5P content type “interactive video” allows videos to be enriched with clickable features, such as embedded quiz tasks. One example is the “fill in the blanks” quiz task, in which students are prompted to enter terms or short answers at the right places in a given text. Another H5P feature, called “drag the words,” involves students in categorization and conceptual organization processes, which may also activate deeper processing.

These features show learners whether their answers are correct, allow them to answer incorrectly answered questions again, and thus provide immediate, yet simple feedback. A recent meta-analysis by Brummer et al. (2024) found a medium to large effect (

So far, research about the effects of H5P-based feedback features in educational videos in tertiary education is scarce (Mutawa et al., 2023; Ploetzner, 2024; Weinert et al., 2021). A study by Jacob and Centofanti (2023) yielded no significant differences in summative quiz scores between students using H5P-enriched and noninteractive videos covering the topics “classical conditioning” and “sleep regulation.” The results also showed that overall engagement in the interactive content was low, with only about one-third of the students watching the assigned video and completing all feedback features. Thus, further research that investigates the effects of H5P-based feedback features on learning is very much needed, especially given the inconclusive findings from the meta-analyses by Brummer et al. (2024) and Mertens et al. (2022).

Extraneous Cognitive Load When Learning with Interactive Educational Videos

One of the most influential theories guiding research on educational videos is the

Extraneous Load and Educational Videos Without Interactive Features

Without interactive features in videos, learners have no control over how the information is presented. On the one hand, this may result in low extraneous cognitive load, as learners are not distracted by features that draw attention away from the content. On the other hand, in line with the transient information effect, extraneous cognitive load might also be high (see Skulmowski & Xu, 2022): If learners do not have the opportunity to pause or re-watch complex content (i.e., when element interactivity is high), they may have to retain too many elements in working memory at once, which hinders the processing of additional information. Likewise, when content is easy (i.e., when element interactivity is low), learners may lose attention and engage in unrelated thinking, which can raise extraneous cognitive load.

Extraneous Load and Learner Control Features

In educational videos that grant learner control features (e.g., start/pause/re-watch), extraneous cognitive load may also either increase or decrease. On the one hand, such learner control features might distract learners from the content or prompt suboptimal decisions, such as skipping or accelerating segments they believe they have already mastered, which can hinder learning. On the other hand, these features allow learners to adapt the presentation to their needs, for example, by rewatching difficult sections, which can help reduce extraneous cognitive load (e.g., Hasler et al., 2007; Merkt & Schwan, 2016).

Extraneous Load and Feedback Features

Feedback features can also increase extraneous cognitive load, as quiz tasks may disrupt the learning process and create a split-attention situation (see Ayres & Sweller, 2021), which may hinder schema construction (Cummins et al., 2016; Ploetzner, 2024). However, this can be prevented by embedding quiz tasks directly into the video immediately after each section (see temporal contiguity principle, Mayer & Fiorella, 2014) and automatically pausing it during the response. This allows learners to focus on one task at a time and, in doing so, may reduce unnecessary extraneous cognitive load (Ayres & Sweller, 2021; Hasler et al., 2007; Ploetzner, 2024). Furthermore, empirical evidence (e.g., Fyfe et al., 2015; Moreno, 2004; Wang et al., 2019) based on CLT (Sweller et al., 2019) suggests that immediate feedback on quiz tasks helps learners understand their mistakes, thereby reducing extraneous cognitive load by preventing the need for additional cognitive effort to process what was missed. To our knowledge, only one study by Kok et al. (2024) assessed educational videos with feedback features while measuring extraneous cognitive load. Yet, its findings remain inconclusive because the study lacked a control group and did not assess learning outcomes.

Research Questions and Hypotheses

Building on the theoretical considerations and empirical findings, we conducted an experimental field study to examine how integrating learner control and feedback features in educational videos that covered psychological theories and evidence affects students’ extraneous cognitive load and learning. Students watched one of three versions of an animated educational video on psychological learning theories: one without any features, one with only learner control features, and one with a combination of learner control and feedback features implemented via H5P.

RQ1: To What Extent do Educational Videos with Learner Control Features and the Combination of Learner Control and Feedback Features Affect Extraneous Cognitive Load Compared to Educational Videos without Interactive Features?

As outlined above, there are theoretical arguments suggesting that higher levels of interactivity could increase extraneous cognitive load. Yet, when interactive features are well-designed, they should decrease extraneous cognitive load (e.g., Hasler et al., 2007; Moreno, 2007; Moreno & Mayer, 2007; Spanjers et al., 2011). Therefore, we hypothesized that interactive functionality in educational videos would reduce extraneous cognitive load relative to videos without interactive functionality (H1). Furthermore, we expected extraneous cognitive load to be even lower when students learn with an educational video offering both learner control and feedback features, compared to a video with learner control features alone (H2).

RQ2: What are the Effects of Educational Videos with No, Only Learner Control Features, and the Combination of Learner Control and Feedback Features on Students’ Knowledge Acquisition?

In line with previous studies (e.g., Menekse et al., 2013; Merkt & Schwan, 2016; Schwan & Riempp, 2004), we expected that the integration of interactive features in educational videos (whether providing learner control or feedback) would support knowledge acquisition better than the omission of such features. We assumed this to be the case both for self-assessed and externally assessed (declarative and transfer) knowledge (H3). Based on the ICAP framework (Chi & Wylie, 2014) and empirical findings (Ploetzner, 2024), we further assumed that learners watching the video with combined learner control and feedback features would outperform those who learned with a video with learner control features only. Again, we expected that this would apply to both self-assessed and externally assessed knowledge (i.e., declarative and transfer knowledge; H4).

Method

Participants and Design

Although 231 students initially clicked on the survey, only 120 finished it. Only complete data sets were included in the subsequent analyses. Following recommendations by Hillygus and LaChapelle (2022) and informed by test runs with experienced testers indicating that reliable responses were possible when investing at least 16 min in the learning experience, we excluded nine cases with unusually fast completion times (under ∼16 min). This threshold was slightly below half the median completion time (∼18 min), which Hillygus and LaChapelle (2022) recommend as a cutoff for excluding unusually fast cases. Such cases likely indicate inattentive or superficial responding or that the educational video was skipped at some point. Additionally, two cases were omitted from further analyses because they reported sound problems while watching the educational video.
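The speed-based exclusion rule described above can be sketched as follows. This is a minimal illustration, assuming that completion times are available in minutes; the function names and the example values are hypothetical and are not taken from the study's data.

```python
from statistics import median


def half_median_cutoff(times_min):
    """Return half the median completion time, a heuristic cutoff for speeders."""
    return median(times_min) / 2


def exclude_fast_cases(times_min, cutoff_min):
    """Keep only cases whose completion time reaches the cutoff (in minutes)."""
    return [t for t in times_min if t >= cutoff_min]


# Hypothetical completion times in minutes (illustrative only).
times = [12.0, 15.5, 30.0, 36.0, 40.0, 44.0]
cutoff = half_median_cutoff(times)       # median is 33.0, so the cutoff is 16.5
kept = exclude_fast_cases(times, cutoff)  # the two fastest cases are dropped
```

In practice, the cutoff may also be set by hand (for example, from pilot runs), in which case only `exclude_fast_cases` is needed.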

In a 1 × 3 between-subjects factorial design, participants were randomly assigned to one of three experimental conditions: (1) Educational video without interactive features (

Educational Video

The intervention consisted of an animated educational video of approximately 8 min, created in a contemporary style using motion graphics. It covered key principles of how to design instructional multimedia content. The content focused on the explanation and practical application of CLT, CTML, and Mayer's 12 principles of Multimedia Learning. Viewers were also given implications on how to put theory into practice.

Procedure

After agreeing to participate, students received a link to an online platform that they could access on a personal computer without a time limit. Participants first answered a prequestionnaire on sociodemographic data. In addition, self-assessed and externally assessed prior knowledge regarding the content of the video was measured. Following this pretest, each participant watched the educational video. After the educational video, students were directed to the posttest, which assessed the extraneous cognitive load they experienced while watching, as well as their self-assessed and externally assessed knowledge about the video content. Finally, participants were thanked and debriefed.

Experimental Conditions

The educational video included either (1) no, (2) only learner control or (3) learner control and feedback features. For (2) and (3), we drew on previous research that utilized several control and feedback features at once to enrich the respective video (e.g., Jacob & Centofanti, 2023; Mutawa et al., 2023; Yang & Shen, 2018). Figure 1 shows a still image of the educational video without interactive features. In this condition, once playback began, users could not interact with the medium.

Still image of educational video without interactive features.

Students in the second condition viewed an educational video that was enriched with learner control features (see Figure 2). The selection of the particular learner control features was based on previous research (e.g., Findeisen et al., 2019; Merkt et al., 2011; Schwan & Riempp, 2004). Users could right-click on the window or left-click on the timeline, play/pause, skip forward/backward, and control speed, window size, and volume. These features represent standard functionalities across video platforms, supporting a naturalistic user experience by reflecting learners’ typical media use.

Still image of educational video with learner control features.

In the third condition, the educational video was enriched with the same learner control features as shown in Figure 2, but additionally included feedback features (see Figure 3). Along the lines of prior research by Jacob and Centofanti (2023) and Mutawa et al. (2023), these feedback features included “multiple-choice questions,” “fill in the blanks” tasks, and “drag the words” exercises.

Still image of educational video with learner control and feedback features.

All features gave instant verification feedback (e.g., Brummer et al., 2024; Rüth et al., 2021) and were carefully designed to enhance engagement while avoiding excessive interactivity and its potential negative effects (Yang & Shen, 2018). All quiz tasks were created directly from the video script, with key concepts targeted intentionally after each concept was explained. This approach was informed by Fiorella and Mayer (2018), who recommend system-paced segmentation to allow novice learners to cognitively process information in meaningful units. All feedback features, when opened, functioned as brief pauses for reflection following each segment, reinforcing key declarative information and prompting transfer.

Variables

Extraneous Cognitive Load

Extraneous cognitive load (e.g., “during this video, it was difficult to recognize and link the crucial information,” Cronbach's α = .79) was measured with three items adapted from Klepsch et al. (2017). Items were to be answered on a 7-point Likert scale from (1)

Self-Assessed Knowledge

Five self-developed items to assess how participants rated their level of knowledge of the content of the video (multimedia learning, e.g., “I know what to take into account when designing multimedia learning content,” Cronbach's α = .86 at pretest and α = .91 at posttest) were used before and after the video. These items were to be answered on a 7-point Likert scale from (1)

Externally Assessed Knowledge

To determine possible changes in students’ knowledge from pre to posttest, a total of eight self-developed multiple-choice questions with four possible answers each were used in both the pre and the posttest. The questions addressed declarative knowledge related to the video content (e.g., “Which assumption(s) is/are compatible with the theory of cognitive load (CLT)?”) and transfer knowledge (e.g., “Jasmin watches an animation that explains the formation of a thunderstorm. Unfortunately, she is more confused afterward than before. Which of the following possibilities to explain her confusion are compatible with the Cognitive Theory of Multimedia Learning (CTML)?”). Each answer option was scored separately: Participants received one partial point for each correct option they marked as well as for each incorrect option they left unmarked. As a result, a maximum of four points could be scored for each question, leading to a maximum of 16 points for each of the two kinds of knowledge. Following the recommendations of Stadler et al. (2021), we formulated all knowledge test items to be closely related to the content of the learning material and piloted them for clarity before data collection. We also conducted an item difficulty analysis to identify multiple-choice options that were answered correctly or incorrectly by 90% or more of respondents in the pretest, as these do not sufficiently differentiate between low and high scorers. In total, three multiple-choice options were excluded (one for declarative knowledge and two for transfer knowledge) because they lacked discriminative power and therefore would not validly represent learning progress. Consequently, participants could achieve 15 points in the declarative knowledge test and 14 points in the transfer knowledge test. Although the knowledge test in the questionnaire did not contain the exact same questions as those presented through the feedback features in the educational video, it was designed to assess the same underlying content and concepts. This approach allowed us to evaluate learning outcomes without introducing a test-retest bias.
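The option-wise partial-credit rule described above can be illustrated with a short sketch. It assumes that each answer option earns one point when its marked state matches the answer key and that options removed after the item difficulty analysis are simply skipped; the function name, option labels, and answer key are hypothetical.

```python
def score_question(options, correct, marked, excluded=frozenset()):
    """Award one point per option whose marked state matches the answer key."""
    points = 0
    for option in options:
        if option in excluded:
            continue  # option dropped after the item difficulty analysis
        if (option in marked) == (option in correct):
            points += 1  # correct and marked, or incorrect and unmarked
    return points


# Hypothetical question with four options, two of which are correct.
options = ["a", "b", "c", "d"]
key = {"a", "c"}

full = score_question(options, key, marked={"a", "c"})     # all four options match
partial = score_question(options, key, marked={"a", "b"})  # only "a" and "d" match
reduced = score_question(options, key, marked={"a", "c"}, excluded={"d"})
```

Under these assumptions, a fully correct response yields four points, and excluding one option lowers the attainable maximum for that question to three.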

Statistical Analyses

To answer RQ1, a one-way ANOVA was used to test the effect of type of educational video on extraneous cognitive load. Regarding RQ2, we ran mixed ANOVAs with measurement point as within-subjects factor and type of video as between-subjects factor for knowledge acquisition, separately for self-assessed knowledge and the two kinds of externally assessed knowledge (declarative knowledge and transfer knowledge). In addition to the separate mixed ANOVAs for declarative and transfer knowledge, a supplementary mixed MANOVA with time (pre- and posttest) and domain (declarative and transfer) as within-subjects factors as well as type of video as between-subjects factor was conducted. This multivariate analysis tested whether type of video influenced overall patterns across both kinds of externally assessed knowledge. For all analyses, we set the alpha level to 5% and estimated effect sizes using partial eta squared.
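For the between-subjects analysis of RQ1, the F statistic and the effect size can be sketched in a few lines. This is an illustrative computation only: it covers a design with a single between-subjects factor, for which partial eta squared coincides with eta squared, SS_between / (SS_between + SS_within). It does not cover the mixed ANOVAs or the MANOVA, and the ratings below are hypothetical, not the study's data.

```python
def one_way_anova(groups):
    """Return (F, partial eta squared) for a one-way between-subjects ANOVA."""
    all_values = [x for g in groups for x in g]
    grand_mean = sum(all_values) / len(all_values)
    group_means = [sum(g) / len(g) for g in groups]

    # Variability of group means around the grand mean, weighted by group size.
    ss_between = sum(
        len(g) * (m - grand_mean) ** 2 for g, m in zip(groups, group_means)
    )
    # Variability of individual scores around their own group mean.
    ss_within = sum(
        (x - m) ** 2 for g, m in zip(groups, group_means) for x in g
    )

    df_between = len(groups) - 1
    df_within = len(all_values) - len(groups)
    f_value = (ss_between / df_between) / (ss_within / df_within)
    eta_sq = ss_between / (ss_between + ss_within)
    return f_value, eta_sq


# Hypothetical 7-point ratings for three video conditions.
ratings = [[4, 5, 6], [3, 4, 5], [2, 3, 4]]
f_value, eta_sq = one_way_anova(ratings)  # F = 3.0, partial eta squared = 0.5
```

A statistics package would additionally report the p value; the sketch only makes the relationship between the sums of squares, F, and the effect size explicit.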

Results

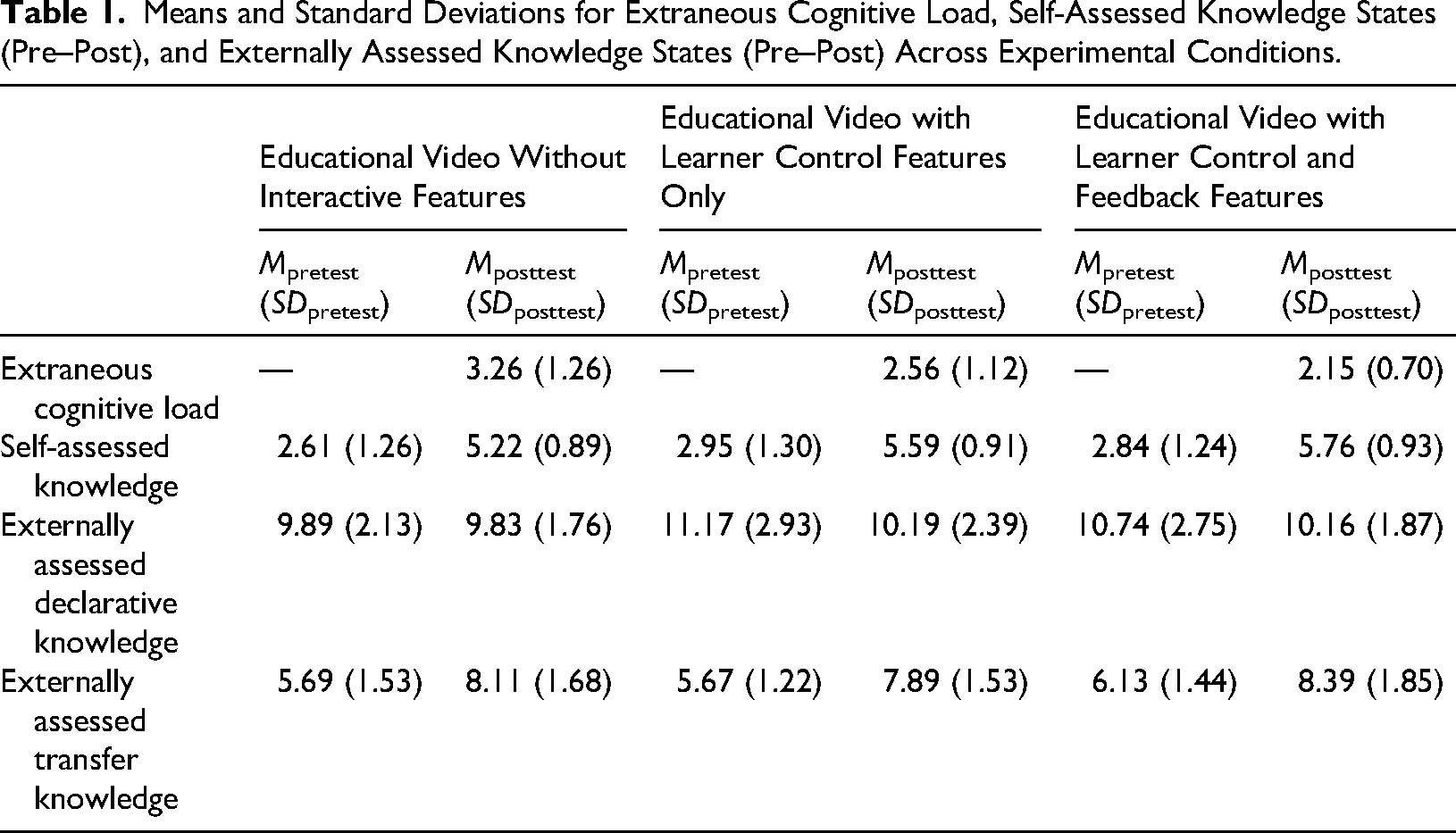

Table 1 shows the means and standard deviations for extraneous cognitive load, self-assessed knowledge states (pre–post), and the two kinds of externally assessed knowledge states (pre–post).

Means and Standard Deviations for Extraneous Cognitive Load, Self-Assessed Knowledge States (Pre–Post), and Externally Assessed Knowledge States (Pre–Post) Across Experimental Conditions.

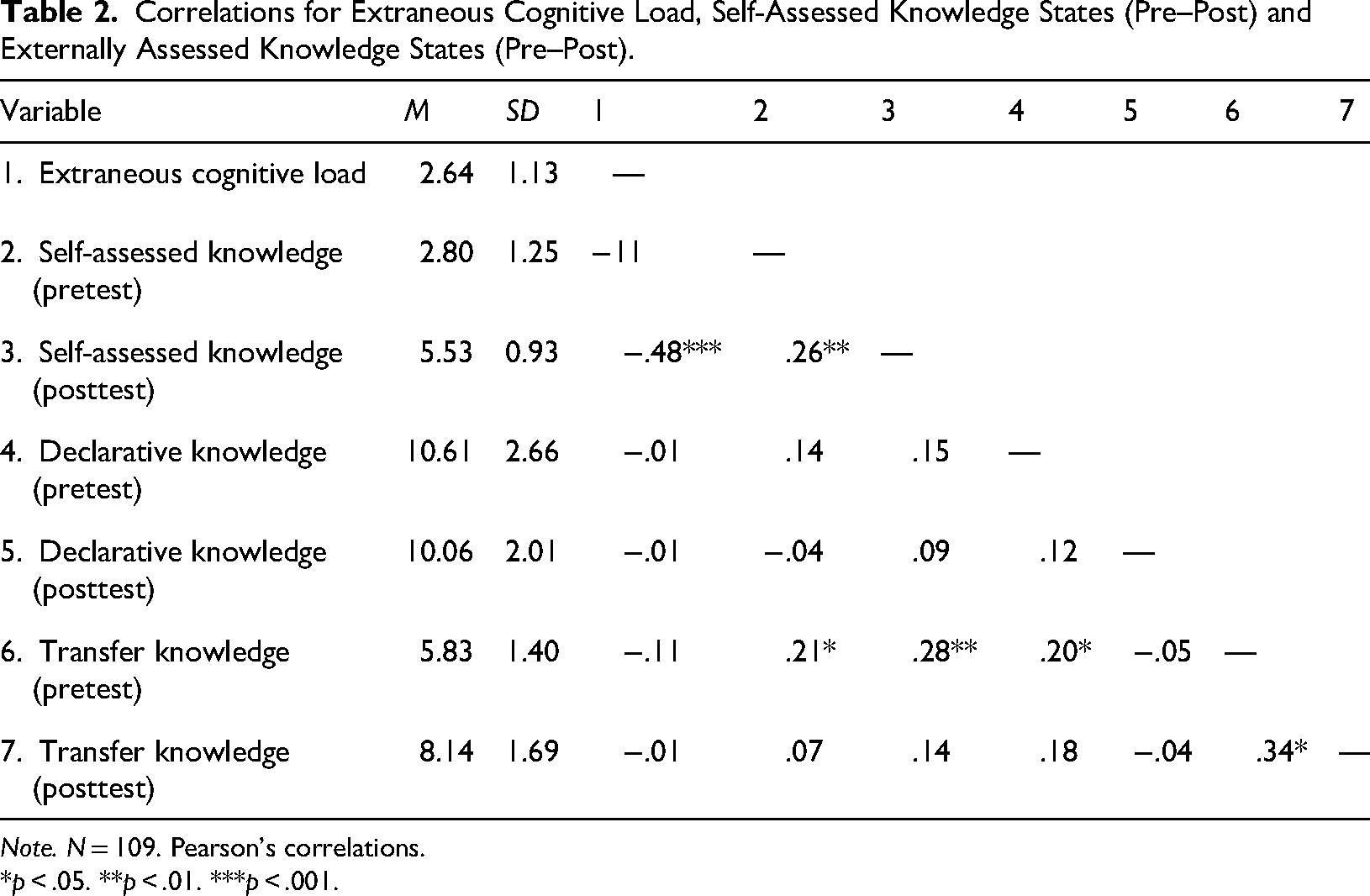

We also computed Pearson correlations between extraneous cognitive load and the different knowledge variables (see Table 2).

Correlations for Extraneous Cognitive Load, Self-Assessed Knowledge States (Pre–Post) and Externally Assessed Knowledge States (Pre–Post).


As expected, extraneous cognitive load correlated negatively with posttest self-assessed knowledge (

RQ1: Effects of Interactive Features in Educational Videos on Extraneous Cognitive Load

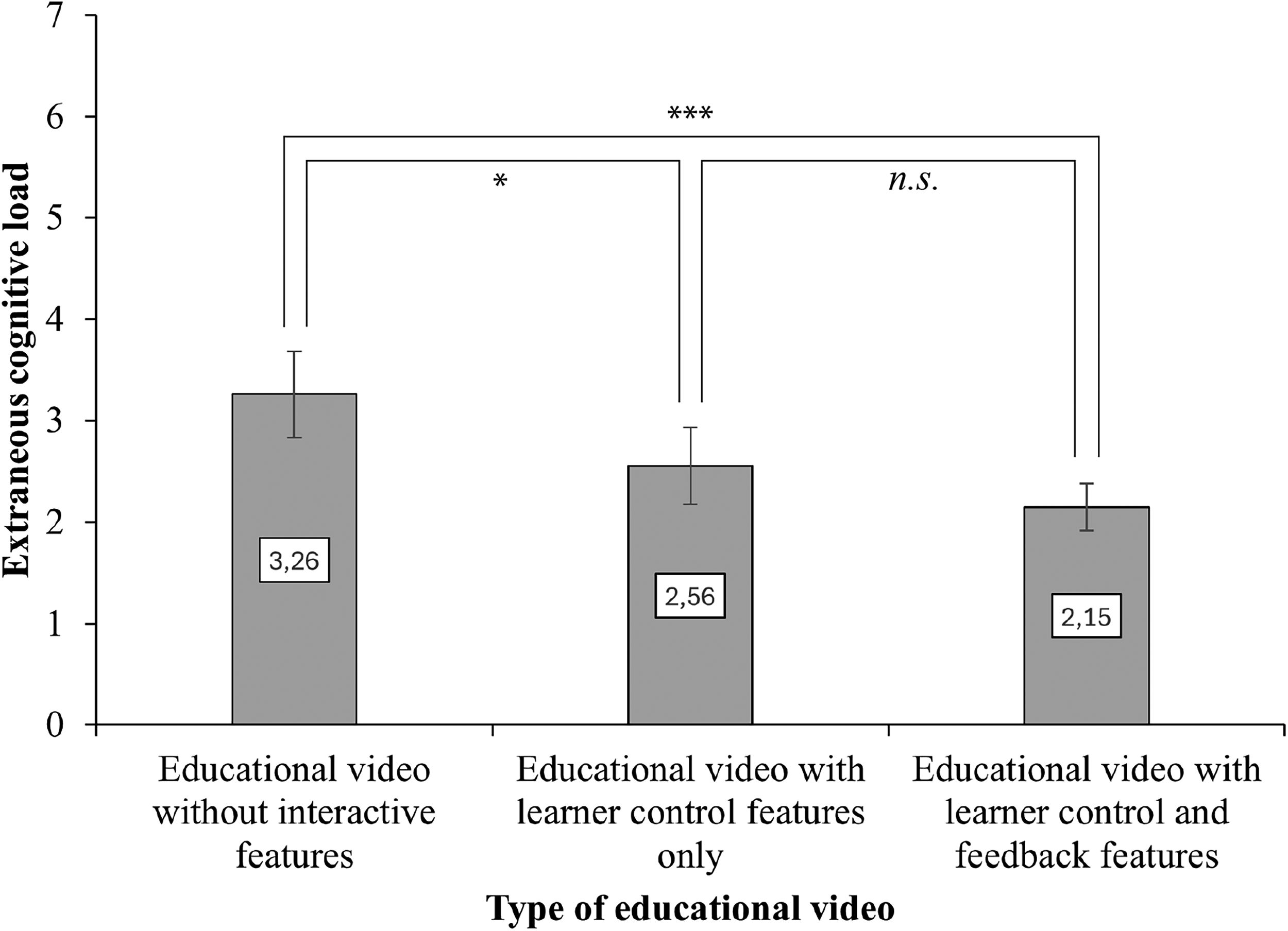

For RQ1, we hypothesized that extraneous cognitive load would decrease with an increasing level of interactivity. Figure 4 shows that the video format had a significant effect on extraneous load,

Extraneous cognitive load by type of educational video. *

RQ2: Effects of Interactive Features in Educational Videos on Knowledge Gain

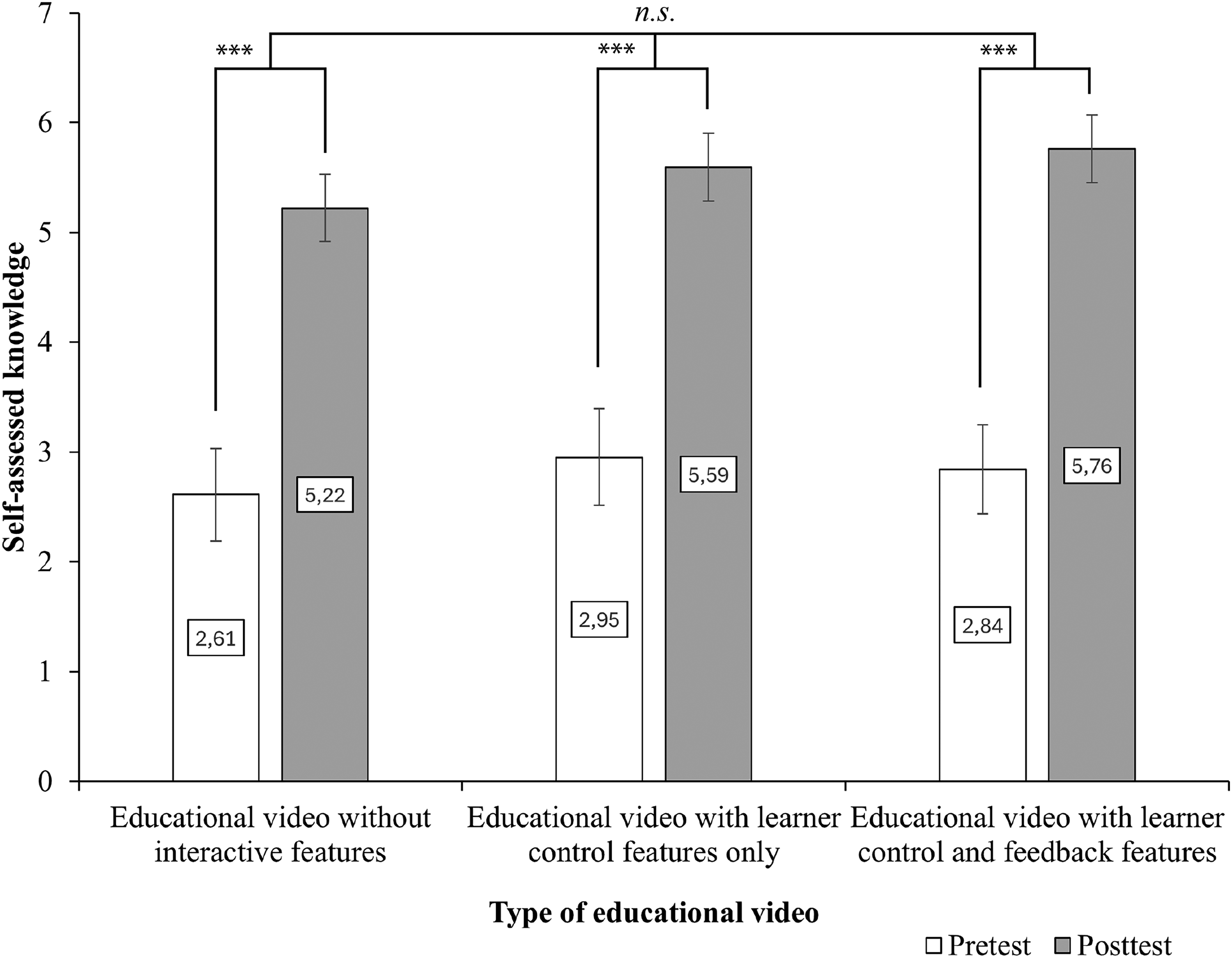

As Figure 5 illustrates, students improved considerably in terms of self-assessed knowledge in all conditions from pre to posttest. However, the differences between the three conditions seemed to be small. To test H3, we ran a mixed ANOVA with measurement time as a within-subjects factor, type of video as between-subjects factor, and self-assessed knowledge as the dependent variable. The analysis revealed a significant main effect for time, indicating an increase in self-assessed knowledge across all three groups with a large effect size,

Self-assessed knowledge states by type of educational video at pre- and posttest. ***

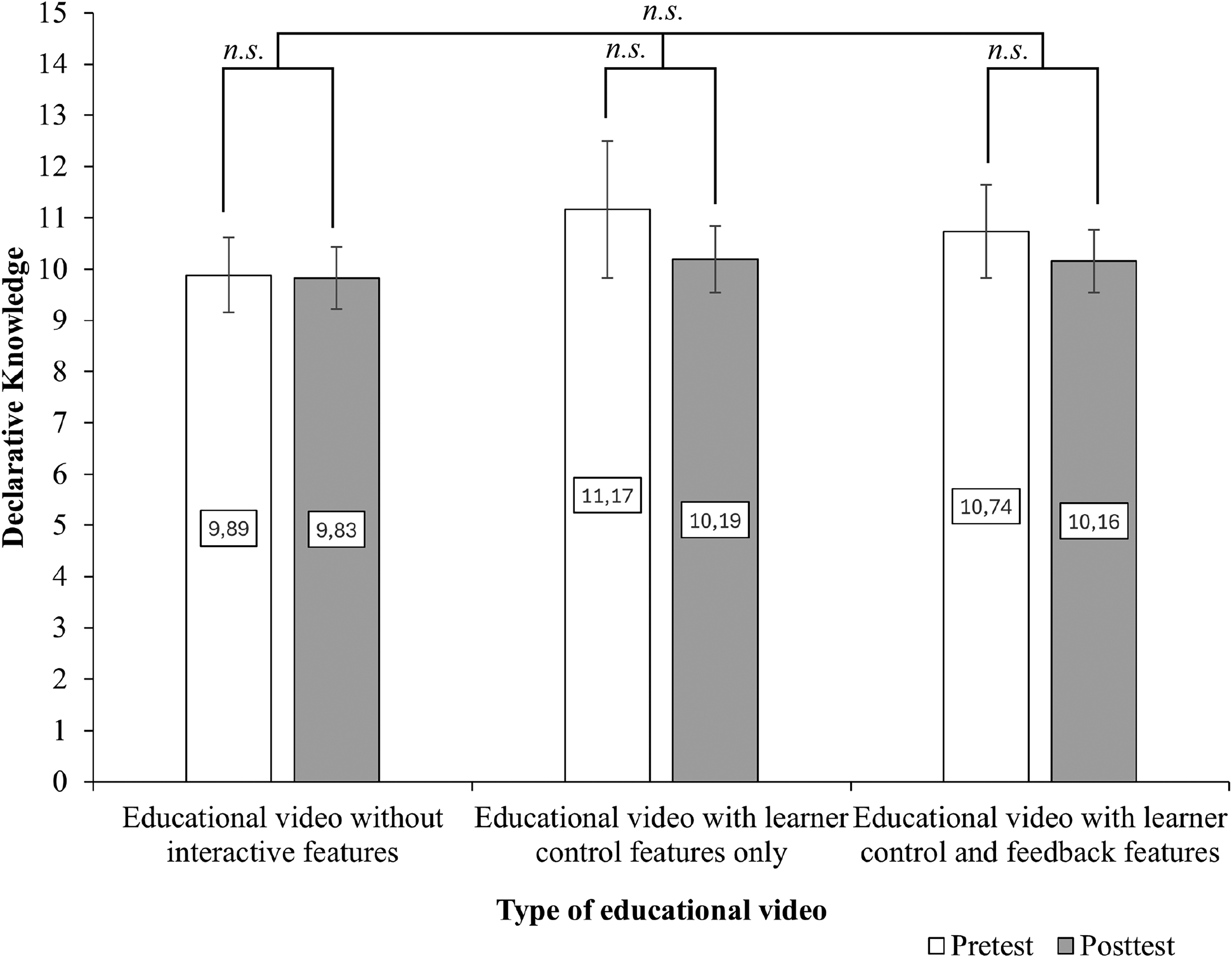

For externally assessed declarative knowledge, Figure 6 indicates a slight decrease in declarative knowledge across all three conditions. At the same time, the differences between the three conditions appeared to be marginal. A mixed ANOVA with measurement time as a within-subjects factor, type of video as between-subjects factor, and declarative knowledge as the dependent variable revealed a marginally nonsignificant main effect of time,

Externally assessed knowledge: declarative knowledge states by type of educational video at pre- and posttest.

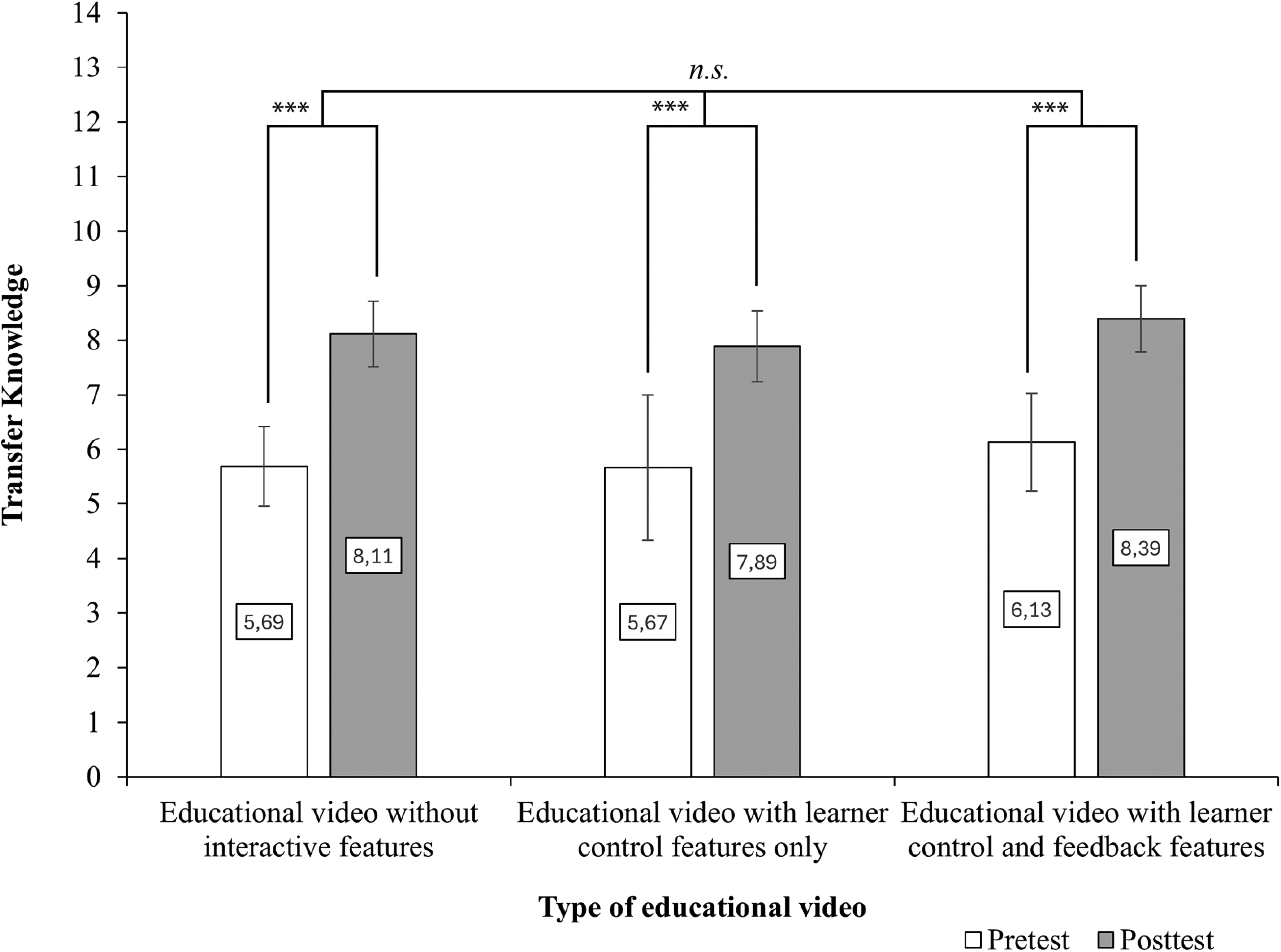

As shown in Figure 7, students’ externally assessed transfer knowledge increased substantially from pre to posttest across all conditions. We found a significant positive effect of measurement point, with a medium effect size,

Externally assessed knowledge: transfer knowledge states by type of educational video at pre- and posttest. ***

A mixed MANOVA confirmed significant multivariate effects for the combined externally assessed knowledge (comprising declarative and transfer knowledge), Wilks’ Λ = .26,

We also conducted one-sample

Discussion

Based on theoretical considerations and empirical evidence, we conducted an empirical study to test the effects of an educational psychology video that offered either no opportunities to interact with it, only learner control features, or a combination of learner control and feedback features on students’ extraneous cognitive load and learning.

Concerning RQ1, the results confirmed H1, as students from the noninteractive video condition reported significantly higher levels of extraneous load than students from the two interactive conditions. Apparently, granting learners control over the way the to-be-learnt information is delivered helps them process less information simultaneously, thereby reducing the transient information effect (Leahy & Sweller, 2011) and decreasing extraneous cognitive load (Sweller et al., 2019). This result is in line with evidence from related studies (e.g., Hasler et al., 2007; Moreno, 2007; Spanjers et al., 2011). Although H2 assumed that well-designed feedback features would lead to a further decrease in extraneous cognitive load (Fyfe et al., 2015; Kok et al., 2024; Moreno, 2004), we found no significant difference between the two interactive conditions. Thus, adding feedback features to learner control features does not necessarily decrease extraneous cognitive load. Possibly, the design of the feedback features we used was not powerful enough to completely avoid split-attention effects (Ayres & Sweller, 2021), as reading and answering the quiz questions interrupted the learning process. Further research is needed to investigate how such effects can be more effectively avoided.

Regarding RQ2, which examined the impact of the different videos on knowledge acquisition, our findings did not support our hypotheses: Even though self-assessed knowledge state and externally assessed transfer knowledge increased significantly across all conditions from pre to posttest, adding interactive features to the video did not further increase its effectiveness. This is surprising, as Ploetzner (2024) overall found a positive effect, albeit small to medium, of integrating feedback features in educational videos compared to videos with learner control or without interactive features. Based on the ICAP framework, interactive features should shift the learners’ engagement mode from passive to active and even constructive (Chi & Wylie, 2014), thereby increasing their cognitive processing. However, high-level cognitive processing may have occurred in all conditions (Chi, 2009; Thurn et al., 2023).

Yet, the opposite might also be true, i.e., that learners in the two interactive conditions did not engage in more higher-level cognitive processes than learners in the control condition. Especially with respect to the feedback features, their particular design might not have triggered enough engagement in generative activities (Fiorella, 2023; Mende et al., 2021; Roelle & Nückles, 2019) to realize their potential, since the quiz questions mainly asked for rather short answers. Considering the rather strong pre- to posttest differences, we would, however, regard shallow learning processes as rather unlikely. In a way, our results seem to imply that the relation between overt learning activities and the quality of cognitive processes might be weaker than the ICAP framework assumes (Thurn et al., 2023). Yet, taking the assumptions of the ICAP framework one step further (i.e., to the interactive mode of engagement), learning might be supported even more when learners jointly watch educational videos with learner control and feedback features and subsequently discuss the content, the quiz tasks, and the feedback (Chi & Wylie, 2014). Further research is necessary to test this assumption.

Alternatively, although we found no evidence for ceiling effects, the difficulty of the embedded H5P items, the educational video, or the test items may have been too low, which could explain why the interactive items had little impact (Chi, 2009; Chi & Wylie, 2014; Menekse et al., 2013). Even though we increased the psychometric quality of our knowledge test by excluding items that were too simple already at pretest, some of the remaining items still showed low discrimination indices. While we kept these items so as not to compromise content validity, this may have limited the sensitivity of the test to detect subtle differences between groups. Additionally, the relatively high level of prior knowledge participants showed in the pretest may have reduced the likelihood of finding positive effects of adding feedback features to learner control features; as research on the expertise-reversal effect (Kalyuga, 2007) indicates, instructional support that works for novice learners can become less effective for more advanced learners. A possible indication of an expertise-reversal effect is that participants showed high externally assessed declarative (but not transfer) prior knowledge, which did not increase from pre to posttest. In other words, our participants might not have been able to benefit much from the support they received from the feedback features we used. This also aligns with the meta-analytic finding by Mertens et al. (2022) that feedback in digital learning environments tends to be more effective for learners with lower rather than higher prior knowledge. More difficult quiz questions might have enhanced the effectiveness of the feedback features.

Regarding the widely used open-source version of H5P, our results highlight the need for further development to enable more elaborate forms of feedback. This is important because the meta-analysis by Mertens et al. (2022) showed that elaborated feedback provided in digital learning environments leads to better learning outcomes than “simple” feedback. However, because Brummer et al. (2024) reported opposite results, future research should examine the conditions under which simple or elaborated feedback is more beneficial.

Finally, current rapid developments in the field of AI appear to bring a truly adaptive design of feedback features within reach of educators who use educational videos. Such technologies may dynamically offer learner-tailored instructional systems and levels of difficulty based on prior knowledge, which might help achieve greater learning gains (Chernikova et al., 2025).

Limitations and Conclusions

Of course, the present study is not without limitations. First, the H5P platform did not allow for the collection of metadata (e.g., viewing time, scores) or the tracking of video use. It is therefore not clear whether and to what extent students in the respective conditions actually used the interactive features. From the observations made by Jacob and Centofanti (2023), one might expect that overall use of the feedback features was low, thereby obscuring their positive effects on knowledge acquisition. Although the platform initially recorded overall completion times for the questionnaire, it did not track the duration for which participants watched the educational video. Future studies should collect metadata to gain additional insights into participant engagement.

Another methodological consideration concerns the role of segmentation. Although the third condition included visual timeline indicators (dots) marking embedded quiz tasks, the video did not pause automatically. Segmentation remained self-paced, as the video only paused when participants voluntarily clicked on a prompt to engage with an interactive element. Therefore, the study did not involve a strict contrast between system-controlled and learner-controlled segmentation. However, the presence of such visual cues might still have affected learners’ pacing or attention, and future studies could systematically vary segmentation mode to examine its independent effect.

A third limitation is that both interactive videos contained not just one but multiple interactive features; the design of our study therefore does not allow us to determine exactly which specific features were responsible for the observed effects. Our experimental design followed an additive logic, in which interactive features were successively layered across conditions: from a video without interactive features, to a version enriched with learner control features, and finally to a version combining these learner control features with feedback features. While this additive structure limits the possibility of attributing effects to single interactive features, it mirrors common practice (e.g., Jacob & Centofanti, 2023; Mutawa et al., 2023). Accordingly, the present study provides insights into the cumulative impact of increasing levels of interactivity on learning processes in an authentic context. Future research could employ a fully factorial design to disentangle the specific contributions of individual interactive features while maintaining ecological validity. Given the complexity of measuring the impact of bundled interactive features on learning processes and outcomes, it could also use experimental designs that systematically vary only a limited number of features at once. Identifying the unique contribution of each feature would offer more precise guidance for designing effective interactive educational videos.

Lastly, the fact that participants watched the video at home may have introduced considerable noise into our data. For example, participants may have watched the educational videos with varying degrees of attentiveness, they might have used further resources to answer the knowledge test items, or, in the absence of external control, they might have activated work avoidance goals and not put much effort into the task (see Kalyuga & Plass, 2025, for a recent extension of Cognitive Load Theory that takes the role of learners’ goals into account). While the setting we realized can be deemed externally valid, given that educational videos are often watched outside of the university context (e.g., at home), the internal validity of the study might be limited. It might therefore be advisable to systematically combine laboratory and field studies in future research to understand both the processes by which interactive features in videos influence learning in principle and the extent to which such effects occur in authentic learning environments.

Despite these limitations, our study contributes to a deeper understanding of how different forms of interactivity in educational videos affect extraneous cognitive load and knowledge acquisition among university students learning psychological theories. Although our hypotheses regarding the impact of interactive features on knowledge gains were falsified, the in part substantial pre- to posttest effects on transfer knowledge align with meta-analytical findings (Lin & Yu, 2024), suggesting that educational videos can be powerful tools to support student learning. However, because the number of studies on the effects of H5P is still limited, further research is needed to explore the potential of H5P (and possible further developments of this technology) for creating interactive video content and to identify the most effective ways to integrate these features to enhance learning in educational psychology.

Footnotes

Ethical Approval and Informed Consent Statements

The study complied with European rules regarding privacy protection and the ethical guidelines of the German Psychology Association. Approval for the study was obtained from the Data Protection Officer of the university.

Consent to Participate

All participants gave informed consent before taking part.

Author Contributions

Vincent Dusanek: conceptualization, data collection, data analysis, and write-up. Ingo Kollar: conceptualization, write-up, and supervision.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Stiftung Innovation in der Hochschullehre [grant number FBM2020-128].

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data Availability Statement

Data are available from the corresponding author upon reasonable request.