Abstract

The present study explored the accuracy of participants’ (N = 317) metacognitive awareness (self-reported difficulty and confidence) of psychology concepts and the moderating effects of their psychology background (academic major, number of psychology courses completed, and overall psychology course grades). Participants first rated the difficulty of and their confidence in their knowledge of concepts from seven different subareas of psychology. They then completed a multiple-choice assessment testing their knowledge of the concepts they had previously rated. The results showed that both participants’ metacognitive awareness and experience in psychology predicted their accuracy on the assessment. A more extensive background in psychology was associated with a stronger relationship between metacognitive awareness and accuracy. A hierarchical multiple regression analysis revealed that metacognitive awareness contributed unique variance in predicting accuracy after accounting for students' psychology background. A parallel mediation analysis investigated the relationship between psychology background and metacognitive awareness on accuracy. This analysis revealed that confidence mediated the relationship between participants’ psychology background and their performance on the assessment. The results of the present study demonstrate that participants’ metacognitive awareness of psychology concepts increases with increasing psychological knowledge and experience. These findings suggest that psychology instructors should adjust their instructional and assessment strategies according to their students’ experience and knowledge levels and that more experienced psychology students should rely more on their metacognitive judgments to self-regulate their learning.

Psychology ranks among the most popular majors at many institutions (Clay, 2017). Indeed, it is estimated that about 1.5 million students take introductory psychology every year (Gurung et al., 2016). Given the prevalence of the discipline, it is important to understand the variables that predict students’ understanding of the concepts in the field. There are, of course, many variables in addition to the inherent difficulty of the material that can affect students’ learning and mastery of psychology concepts. These variables can include self-regulated learning strategies (e.g., self-testing: Dunlosky et al., 2013; Neely & Cho, 2014) and noncognitive or motivational factors (e.g., Cho & Serrano, 2020; Schneider & Preckel, 2017).

Nearly all variables that affect learning interact with students’ metacognitive awareness, which refers to one's ability to self-regulate or gauge the comprehension of their own learning (Metcalfe, 2009). Indeed, it is logical for a student who believes that they have mastered the material to cease studying the material and allocate their time to other endeavors. However, the cognitive and educational psychology literature is rife with examples demonstrating that students’ metacognition does not always reflect their actual mastery of the material.

For example, a common finding in the self-testing (or retrieval practice) literature is that students who engage in repeated restudy are more confident than their peers who engage in self-testing in the long-term retention of recently learned material. However, actual performance reveals that the opposite is true (Cho & Powers, 2019). One reason why students’ metacognition can be erroneous is that they rely on cues that are nondiagnostic of actual learning when making predictions of their own learning (e.g., relying on the fluency of the information; Rhodes & Castel, 2008). As reported in a large-scale meta-analysis (Schneider & Preckel, 2017), students’ metacognitive ability is a positive correlate of their academic achievement and learning.

Given the inextricable link between metacognitive awareness and learning, it is important for researchers to delineate the variables that affect and improve students’ metacognitive awareness, which are the main goals of the present study. Knowledge of these mechanisms would also benefit instructors who could tailor their pedagogy to better cater to students’ needs. Furthermore, the results of the present study can also be used to encourage students to base their self-regulated learning strategies on their metacognitive judgments of the to-be-learned materials. When metacognitive judgments are accurate, they can be powerful tools to bolster student learning and student success (Schneider & Preckel, 2017). Previous research (Hartwig & Dunlosky, 2012; Kornell & Bjork, 2007; Morehead et al., 2016) based on more than 1,200 psychology undergraduates shows that more than 50% of students indicated that they prioritized whatever was due soonest when determining what to study next. In comparison, only about 20% of students indicated that their decision was guided by what they believed they were “doing the worst in,” which is a question that gauges students’ metacognitive awareness. This behavior is consequential to student learning and student success. Specifically, Hartwig and Dunlosky (2012) reported that students with lower grade point averages (GPAs) indicated they were more likely to study next whatever was due soonest. This trend was not observed for students who indicated that they prioritized their study time based on what they felt they were doing the worst in.

The Present Study

The primary purpose of the present study is to assess whether students’ self-reported difficulty of and confidence in psychology concepts, both of which are used as measures of their metacognitive awareness, are aligned with their actual knowledge of those concepts. As noted earlier, metacognitive ability is a strong correlate of student learning (DiFrancesca et al., 2016; Schneider & Preckel, 2017). The present study also extends and improves upon previous research that sought to identify difficult concepts in psychology (Gurung & Landrum, 2013; Stoa et al., 2022). Both Gurung and Landrum's (2013) and Stoa et al.’s (2022) studies serve as valuable resources for instructors to help anticipate possible difficulties their prospective students will have understanding certain concepts. As such, instructors could structure their lessons by strategically allocating more class time to review concepts that are likely to be more difficult for students to comprehend. In both studies, participants were presented with concepts in psychology and were asked to rate the difficulty of each concept. Gurung and Landrum (2013) also asked participants to rate their confidence in their understanding of the concept. However, in neither study was participants’ actual knowledge of the concepts assessed. Thus, it is unclear whether students’ metacognitive awareness of the material (e.g., self-reported difficulty) reflected their actual knowledge of the material.

The present study addressed this critical limitation by assessing participants’ actual knowledge of psychology concepts in addition to collecting their subjective judgments of these concepts. Relying solely on students’ subjective ratings as indicators of learning can sometimes be misleading because they can be imprecise gauges of their actual knowledge (e.g., Koriat & Bjork, 2005). Furthermore, students’ subjective judgments of learning could affect their self-regulated learning strategies, which are directly related to their performance in courses (e.g., DiFrancesca et al., 2016). Consequently, students who are overconfident in their knowledge of the material may spend less time studying, to the detriment of their learning. However, it should be noted that when students’ metacognitive judgments are accurate, they can and should be used to guide students’ self-regulated learning strategies, as they are correlates of actual learning (Hartwig & Dunlosky, 2012). As such, the present study included a psychology assessment that measured participants’ actual knowledge of the psychology concepts they had rated to delineate the conditions under which students’ metacognitive awareness is more accurate.

The present study further extended the findings of Gurung and Landrum (2013) and Stoa et al. (2022) in two important ways. First, those authors solicited participants’ ratings for concepts in only two subareas of psychology: research methods (Gurung & Landrum, 2013; Stoa et al., 2022) and learning (Gurung & Landrum, 2013, only). Second, Gurung and Landrum's data are based on only 25 students. These factors severely limit the generality of their findings and conclusions. The present study used concepts from seven major subareas of psychology (biological, cognitive, clinical, developmental, learning, research methods, and social) and included a larger sample.

The present study focused on students’ background in psychology, specifically their prior experience (i.e., number of psychology courses completed and major) and achievement (or aptitude) in psychology (i.e., overall psychology GPA) as potential moderators of metacognitive accuracy. Previous research shows that prior knowledge of psychology is a positive correlate of performance in a psychology course (Thompson & Zamboanga, 2004) and psychology assessments (Solomon et al., 2021). Prior knowledge of psychology can also reduce students’ susceptibility to affirming myths in psychology (e.g., we only use 10% of our brain), which can impede learning (Cho, 2022). Accordingly, the present study also examined whether students’ background knowledge of psychology improves their metacognitive awareness. These findings are important to instructors because if students’ metacognitive awareness of psychology concepts differs as a function of their background in psychology, it would require an instructor to adapt their pedagogical techniques accordingly when assessing and facilitating student learning.

Method

Participants

Three hundred and fifty-seven students enrolled at an ethnically diverse urban public postsecondary institution in the southern United States participated in the study. The sample size was determined via a power analysis using G*Power 3.1.9.7 (Faul et al., 2009). The analysis showed that the minimum sample size to detect a small-to-medium two-tailed correlation (|ρ| = .2; Cohen [1988]) with .90 power required 255 participants.
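The logic of this sample-size calculation can be illustrated with the Fisher z approximation. The sketch below is not the G*Power procedure itself (which may use an exact computation), so it yields a figure in the high 250s, close to but not identical to the reported minimum; the function name and defaults are illustrative assumptions.

```python
import math
from statistics import NormalDist

def n_for_correlation(rho, alpha=0.05, power=0.90):
    """Approximate N needed to detect a correlation rho (two-tailed test).

    Uses the Fisher z approximation, an assumption of this sketch,
    not the computation reported by the authors.
    """
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-tailed critical value
    z_power = NormalDist().inv_cdf(power)          # quantile for the target power
    effect = math.atanh(abs(rho))                  # Fisher z-transform of rho
    return math.ceil(((z_alpha + z_power) / effect) ** 2 + 3)

required_n = n_for_correlation(0.2)
print(required_n)
```

Under this approximation, smaller correlations or higher target power raise the required sample size, which is why the final sample comfortably exceeds the computed minimum.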

Design and Materials

Psychology Concepts

Thirty-five psychology concepts, five each from seven different subareas of psychology, were selected. The subareas were: biological, cognitive, clinical, developmental, learning, research methods, and social. Concepts were identified and adapted from three sources (Gurung & Landrum, 2013; Solomon et al., 2021; Spielman et al., 2020) and are covered in nearly all introductory psychology textbooks (Zechmeister & Zechmeister, 2000). The list of concepts, organized by subarea, is presented in the second column of Table 1.

Accuracy, Difficulty, and Confidence (Mean and Standard Deviation) of Psychology Concepts.

Note. Confidence and difficulty are based on a 5-point scale.

Psychology Assessment

Each psychology concept was paired with a multiple-choice assessment question with five response options. The questions were a mixture of factual and conceptual questions. An example of a factual question is: According to the information processing model of memory, information moves from long-term memory into short-term [working] memory during ______. An example of a conceptual question is: You overestimate the number of teenagers who engage in mass shootings because the vivid examples from the news of teenagers who engage in mass shootings are easy for you to retrieve from memory. In other words, you are using the ______ to form the basis of your estimate. The questions were taken from Solomon et al. (2021) and Spielman et al. (2020). The list of questions is presented in column three in Table 1.

Academic Information (Psychology Background)

Participants’ psychology GPA (measured on a scale of 0–4), number of psychology courses completed, and academic major were retrieved via the University's Institutional Research Office.

Procedure

Participants completed the entirety of the study online via Qualtrics and worked on the study at a place and time of their choosing and at their own pace. The consent form solicited their permission to obtain their academic information from the University's Institutional Research Office. This study was approved by the institution's Committee for the Protection of Human Subjects.

After completing the consent form, participants were presented with the 35 psychology concepts individually and in a new, randomized order for each participant. For each concept, participants first rated its difficulty using a five-point scale (1 = extremely easy to 5 = extremely difficult), followed by their confidence in their degree of understanding of that concept, also on a five-point scale (1 = not at all to 5 = a great deal).

After rating all 35 concepts, participants were told they would be answering questions assessing their knowledge of various psychological concepts. They were explicitly told to “… not use outside resources (e.g., internet searches, your textbook, your notes) when answering each question.” As before, each question was presented in a new, randomized order for each participant. After answering the last question, participants were debriefed and dismissed.

Data Analysis

Participants’ data were excluded if they failed to respond to more than 5% of the questions (n = 24; 7%) or if their performance on the psychology assessment was below chance (n = 16; 4%). These outcomes were used as indicators of data quality control. Fourteen participants had no psychology GPA because they had not successfully completed any psychology course by the end of the semester in which they participated in this study (i.e., they withdrew from or received an incomplete in the psychology course[s] they were taking that semester). Their data were included to increase the power of all analyses that did not include participants’ psychology GPA and because excluding these participants would create an artificial restriction of range (i.e., remove all 0s from the number of psychology courses completed variable). Excluding these participants from all analyses did not change the statistical significance of the results reported here.
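The two exclusion rules can be sketched as follows. This is a minimal illustration rather than the analysis script used in the study: it assumes the 5% non-response rule is computed over the 35 assessment questions, that unanswered questions count as incorrect, and that chance is 20% given five response options. All names are hypothetical.

```python
N_OPTIONS = 5             # response options per question (from the Method section)
CHANCE = 1 / N_OPTIONS    # expected accuracy from guessing alone
MAX_MISSING = 0.05        # exclusion threshold for non-response

def keep_participant(responses, answer_key):
    """Return True if the participant passes both data-quality checks.

    responses: chosen option per question, None where no answer was given.
    """
    missing = sum(r is None for r in responses) / len(responses)
    if missing > MAX_MISSING:
        return False
    correct = sum(r == k for r, k in zip(responses, answer_key))
    return correct / len(answer_key) >= CHANCE

# Hypothetical 35-item answer key and three illustrative response patterns.
answer_key = [i % N_OPTIONS for i in range(35)]
all_correct = list(answer_key)
two_skipped = [None, None] + answer_key[2:]
six_correct = answer_key[:6] + [(k + 1) % N_OPTIONS for k in answer_key[6:]]

print(keep_participant(all_correct, answer_key))  # passes both checks
print(keep_participant(two_skipped, answer_key))  # 2 of 35 missing exceeds 5%
print(keep_participant(six_correct, answer_key))  # 6 of 35 correct is below 20%
```

Applying the reported exclusion counts to the recruited sample gives 357 − 24 − 16 = 317, the analyzed sample size.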

The effect size for the t-tests is reported as Cohen's d, with the denominator for the paired t being the SD of the difference. When there were violations of the equal variance assumption, as indicated by a Levene's test, Welch's t-test was reported instead. For the repeated-measures ANOVAs, the Greenhouse-Geisser correction was reported when the sphericity assumption was violated. The effect size for ANOVAs is the generalized eta squared (η²G). Per the recommendation by Hayes (2022) and Pek and Flora (2018), unstandardized coefficients are reported for all linear and generalized linear models.
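For the paired case, the effect size described above is the mean of the paired differences divided by the standard deviation of those differences. A minimal sketch follows; the function name and the ratings are made up for illustration and are not data from the study.

```python
from statistics import mean, stdev

def cohens_d_paired(first, second):
    """Cohen's d for paired scores: mean difference / SD of the differences."""
    diffs = [a - b for a, b in zip(first, second)]
    return mean(diffs) / stdev(diffs)  # stdev uses the n - 1 denominator

# Made-up ratings from four hypothetical participants.
confidence = [3, 4, 5, 4]
difficulty = [2, 2, 4, 4]
d = cohens_d_paired(confidence, difficulty)
print(round(d, 3))
```

Because the denominator is the SD of the differences rather than a pooled SD, this d grows as the paired scores become more strongly correlated.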

Results

The right-hand side of Table 1 presents the accuracy, difficulty, and confidence of each item, sorted in descending order based on accuracy within each subarea, which is sorted alphabetically. Overall accuracy, difficulty, and confidence differed among the subareas, Fs > 39, ps < .001, η²Gs > .06. Note that because the concepts and assessment questions were not equated on difficulty across subareas (as that is not the purpose of this study), caution should be taken when trying to interpret the absolute differences in accuracy and perceived difficulty among the subareas. The descriptive statistics for these main effects and all pairwise comparisons are presented in Table 1S of the Supplemental Material.

Participants’ academic information is presented in the top half, left-hand side of Table 2. Overall, about 39% of participants majored in psychology. Participants, on average, completed about four psychology courses, and their psychology GPA was around three, which is comparable to the overall GPA of students across all U.S. postsecondary institutions (College Board, 2023). The bottom half, left-hand side of Table 2 shows the overall accuracy of participants’ performance on the psychology assessment, and the confidence and difficulty ratings of the psychology concepts. Overall accuracy was about 49%, with participants’ confidence about half a point above the midpoint of the scale (2.5) and difficulty at about the midpoint of the scale.

Psychology Background, Average Accuracy, Perceived Confidence, and Difficulty of Psychology Concepts: Means, Standard Deviations, and Zero-Order Correlations with 95% Confidence Intervals.

Note. *p < .05; **p < .01.

PSY GPA is on a 4-point scale.

Confidence and difficulty were rated using a 5-point scale.

Table 2 (right-hand side) also presents the zero-order correlations between participants’ academic variables (rows one to three) and their subjective ratings (i.e., confidence and difficulty; rows five and six) of the psychology concepts and accuracy (row four) on the assessment. Students who majored in psychology completed more psychology courses, were more accurate on the assessment, were more confident in their knowledge of the psychology concepts, and found them less difficult (see column one). Similarly, students with more experience in psychology (i.e., who completed more psychology classes) and a higher aptitude in psychology (i.e., psychology GPA) were likewise more accurate, had higher confidence, and found the concepts less difficult, although the correlation between difficulty and psychology GPA was not significant. Overall, these correlations show that participants with a more extensive background in psychology performed better on the assessment, were more confident in their knowledge of the psychology concepts, and found them less difficult.

Metacognitive Awareness and Its Relation to Psychology Knowledge

Accuracy was positively correlated with confidence and negatively correlated with difficulty. These correlations demonstrate that overall, participants have an accurate metacognitive awareness of psychology concepts. Participants’ confidence was negatively correlated with difficulty. To assess whether participants’ psychology experience and knowledge moderated the relationship between accuracy, difficulty, and confidence, the correlations between these variables were computed separately for each participant. These correlations were then correlated with participants’ academic characteristics. The results are displayed in Table 3.
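The two-stage procedure described above (a correlation across items within each participant, followed by a correlation of those within-participant values with a background variable) can be sketched as follows. The data are simulated and the variable names are hypothetical; the simulation deliberately builds in a stronger accuracy–confidence link for participants with more courses, so it illustrates the computation rather than reproducing the study's results.

```python
import numpy as np

rng = np.random.default_rng(0)
n_participants, n_items = 200, 35

# Simulated data (assumption): courses completed, item-level confidence (1-5),
# and item-level accuracy whose link to confidence grows with courses.
courses = rng.integers(0, 10, size=n_participants)
confidence = rng.integers(1, 6, size=(n_participants, n_items))
slope = 0.2 * courses[:, None] / 9
p_correct = 0.5 + slope * (confidence - 3)
accuracy = (rng.random((n_participants, n_items)) < p_correct).astype(float)

def within_person_r(a, b):
    """Pearson r across one participant's items; NaN if a variable is constant."""
    if np.std(a) == 0 or np.std(b) == 0:
        return np.nan
    return np.corrcoef(a, b)[0, 1]

# Stage 1: one accuracy-confidence correlation per participant.
r_acc_conf = np.array(
    [within_person_r(accuracy[i], confidence[i]) for i in range(n_participants)]
)

# Stage 2: correlate those values with the background variable.
valid = ~np.isnan(r_acc_conf)
moderation_r = np.corrcoef(r_acc_conf[valid], courses[valid])[0, 1]
print(round(float(moderation_r), 2))
```

A positive second-stage correlation indicates that the accuracy–confidence relationship is stronger for participants with more courses, which is the sense in which background moderates metacognitive accuracy here.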

Correlations Between Accuracy and Metacognitive Awareness as a Function of Psychology Background: Means, Standard Deviations, and Correlations with 95% Confidence Intervals.

Note. *p < .05; **p < .01.

Major: Psychology coded as 1.

As seen in the first row in Table 3, the positive correlation between accuracy and confidence was stronger for students who majored in psychology, had taken more psychology classes, or had a higher psychology GPA. The negative correlations between accuracy and difficulty and between difficulty and confidence were likewise stronger among students who majored in psychology or had completed more psychology classes. There was suggestive evidence that the correlation between accuracy and difficulty was also moderated by (i.e., was more robust depending on) students’ psychology GPA, although the effect was significant only at the one-tailed level. Overall, these findings suggest that participants’ psychology experience and knowledge moderated the relationship between accuracy, difficulty, and confidence.

Predicting Accuracy Using Both Psychology Background and Metacognitive Awareness

To assess the relative contributions, independently and jointly, of participants’ psychology background (i.e., major, psychology classes completed, and psychology GPA) and metacognitive awareness in predicting their performance on the psychological assessment, a hierarchical multiple regression was computed. In the first step, the psychology background variables were entered as predictors. In the second step, the metacognitive awareness variables (i.e., confidence and difficulty) were entered as predictors.

The results are displayed in Table 4. In the top half of the table, which focuses on participants’ psychology background, only psychology GPA emerged as a significant predictor, although the other predictors (i.e., psychology classes completed and major) were in the predicted direction (i.e., positive). Overall, the model was statistically significant, F(3, 296) = 11.2, p < .001, and accounted for 10% of the variance. The second model (bottom half of the table) included the metacognitive awareness variables. Both confidence and difficulty were positive predictors, but only confidence was statistically significant. As in the previous model, psychology GPA was the only significant predictor when considering participants’ psychology background. Overall, the model was statistically significant, accounting for 15% of the variance, F(5, 294) = 10.63, p < .001. The 5% ΔR2 was statistically significant, F(2, 294) = 8.88, p < .001, demonstrating that participants’ metacognitive awareness contributed uniquely to assessment accuracy after accounting for their psychology background. Overall, these results suggest that participants’ psychology background and metacognitive awareness jointly and independently predicted their accuracy on the psychology assessment.
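The test of the change in R2 between the two steps follows the standard F-change formula, sketched below with the rounded values reported above. Because the inputs are rounded to two decimals, the result (roughly 8.6) approximates rather than reproduces the reported F of 8.88; the function name is illustrative.

```python
def f_change(r2_reduced, r2_full, n_added, df_resid_full):
    """F statistic for the increase in R2 when n_added predictors are entered.

    df_resid_full is the residual degrees of freedom of the larger model.
    """
    gained = (r2_full - r2_reduced) / n_added      # variance gained per added predictor
    unexplained = (1 - r2_full) / df_resid_full    # residual variance per df
    return gained / unexplained

# Rounded values from the text: R2 = .10 (step 1), R2 = .15 (step 2),
# two predictors added, 294 residual degrees of freedom.
f_step2 = f_change(0.10, 0.15, n_added=2, df_resid_full=294)
print(round(f_step2, 2))
```

The same function with a reduced-model R2 of zero gives the overall model F, so the step 1 and step 2 omnibus tests are special cases of this comparison.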

Predicting Accuracy Using Psychology Background and Metacognitive Awareness.

Note. b and beta represent the unstandardized and standardized regression weights, respectively.

A significant b-weight indicates the beta-weight is also significant.

**p < .01.

Psychology Experience and Achievement Mediates Metacognitive Awareness

Parallel mediation analyses were computed to examine possible indirect effects and provide causal evidence that students’ experience (i.e., number of psychology courses taken) and achievement in psychology (i.e., psychology GPA) improve their metacognitive awareness of psychology concepts, which in turn affects their performance on the psychology assessment.

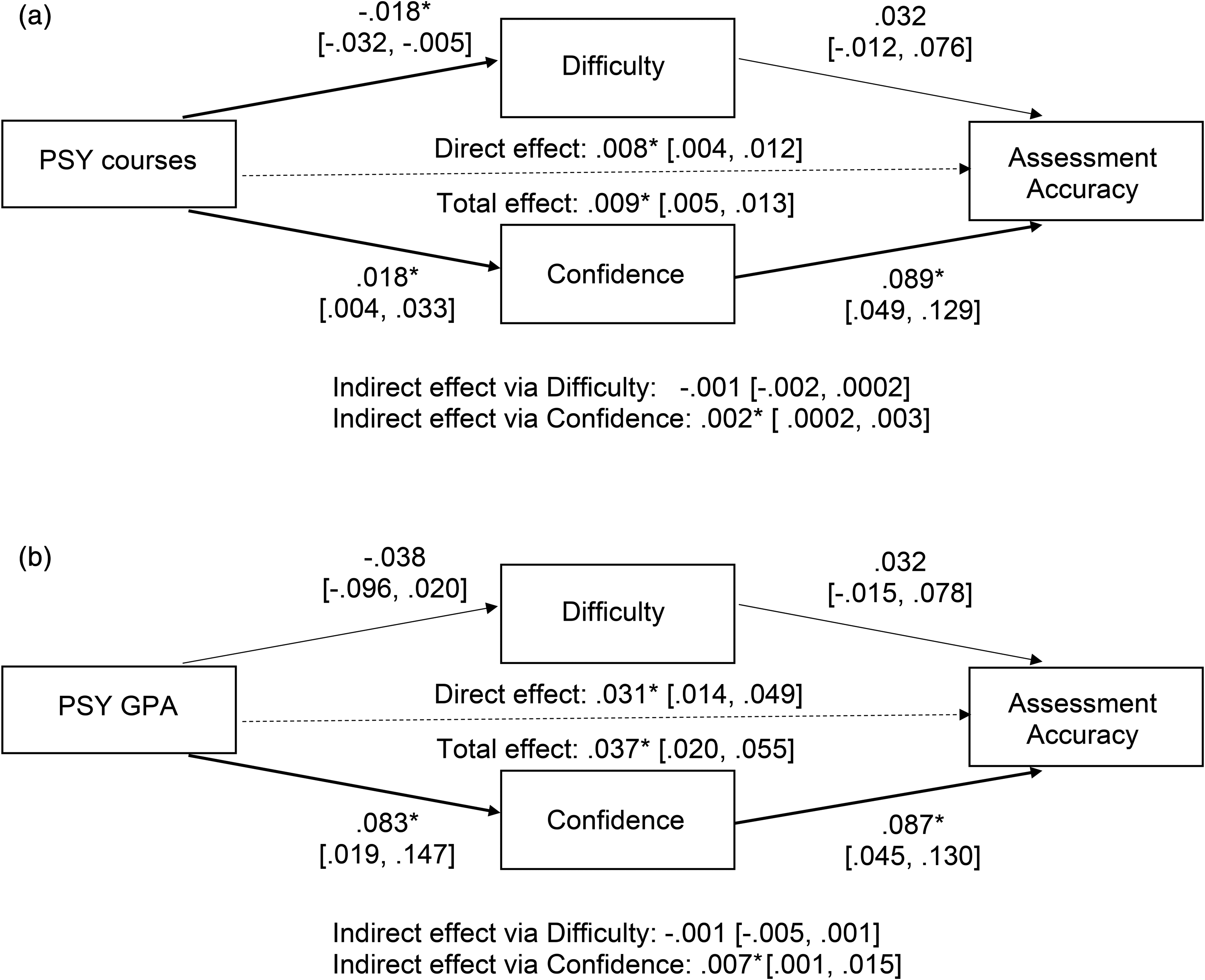

The parallel mediation approach is superior to the more commonly used approach of fitting multiple single-mediator models for two reasons. First, parallel mediation allows one to assess the effect of a mediator while controlling for the effects of other mediators, similar to why a multiple regression analysis would be preferred over multiple single regression analyses. Second, parallel mediation allows one to test whether the indirect effects of the mediators differ significantly in strength. Following the recommendation of Coutts and Hayes (2022), statistical differences in indirect paths were assessed using the absolute difference method (e.g., |a1b1| − |a2b2|). The analyses were computed using the PROCESS (v. 4.2) macro for R (Hayes, 2022). Confidence intervals for coefficients were computed using 10,000 bootstrap resamples. Bootstrapped 95% confidence intervals that do not include 0 indicate that the coefficient is statistically significant at the .05 level. Evidence for mediation is determined by examining the product of the a (predictor to the mediator) and b (mediator to criterion) paths. Figure 1(a) and (b) report the unstandardized coefficients for all paths along with their 95% confidence intervals; significant paths are also bolded in the figure.
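The bootstrap logic behind these intervals can be sketched in a few lines. This is a simplified percentile-bootstrap illustration on simulated data, not the PROCESS macro or its output: the variable names, the simulated effect sizes, and the use of 2,000 rather than 10,000 resamples are all assumptions made to keep the example short.

```python
import numpy as np

def ols_slopes(predictors, outcome):
    """Least-squares slopes (intercept dropped) of outcome on the predictors."""
    design = np.column_stack([np.ones(len(outcome)), predictors])
    coefs, *_ = np.linalg.lstsq(design, outcome, rcond=None)
    return coefs[1:]

def indirect_effects(x, m1, m2, y):
    """Return a1*b1 and a2*b2 for a two-mediator parallel model."""
    a1 = ols_slopes(x, m1)[0]                     # x -> mediator 1
    a2 = ols_slopes(x, m2)[0]                     # x -> mediator 2
    _, b1, b2 = ols_slopes(np.column_stack([x, m1, m2]), y)  # b paths, controlling x
    return a1 * b1, a2 * b2

def bootstrap_ci(x, m1, m2, y, n_boot=2000, seed=0):
    """Percentile 95% CIs for both indirect effects and their absolute-difference contrast."""
    rng = np.random.default_rng(seed)
    n = len(y)
    draws = np.empty((n_boot, 3))
    for i in range(n_boot):
        idx = rng.integers(0, n, size=n)          # resample participants with replacement
        ab1, ab2 = indirect_effects(x[idx], m1[idx], m2[idx], y[idx])
        draws[i] = ab1, ab2, abs(ab1) - abs(ab2)
    lo, hi = np.percentile(draws, [2.5, 97.5], axis=0)
    return {name: (lo[j], hi[j]) for j, name in enumerate(("ab1", "ab2", "contrast"))}

# Simulated data (assumption): only mediator 1 carries an effect to the outcome.
rng = np.random.default_rng(1)
n = 300
x = rng.normal(size=n)                    # stand-in for the predictor
m1 = 0.5 * x + rng.normal(size=n)         # stand-in for confidence
m2 = -0.3 * x + rng.normal(size=n)        # stand-in for difficulty
y = 0.2 * x + 0.4 * m1 + rng.normal(size=n)

ci = bootstrap_ci(x, m1, m2, y)
for name, (low, high) in ci.items():
    print(name, round(float(low), 3), round(float(high), 3))
```

An interval that excludes 0 for an indirect effect is read as evidence of mediation through that mediator, and an interval that excludes 0 for the contrast is read as a difference in strength between the two indirect paths.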

Parallel mediation model depicting the relationship between (a) psychology courses taken or (b) psychology GPA on assessment accuracy mediated by self-perceived difficulty and confidence.

Psychology Experience (Courses Taken). As can be seen in Figure 1(a), the direct path (psychology courses → accuracy) was significant. The significant indirect effect via confidence shows that confidence mediated the relationship between psychology courses and accuracy. Although difficulty was not a significant mediator, the contrast between the two indirect paths was not statistically significant (.001 [−.0001, .002]). Although not required to establish mediation, psychology courses significantly predicted both confidence and difficulty ratings, but only confidence was predictive of assessment accuracy.

Psychology Achievement (GPA). Figure 1(b) shows the parallel mediation model using psychology GPA as the predictor. Similar to the model with psychology experience, the direct effect of psychology GPA and the indirect effect with confidence as the mediator were significant. Likewise, the indirect effect of difficulty was not significant. The contrast between the two indirect effects was significant (.006 [.001, .012]). Psychology GPA was predictive of confidence, which in turn was predictive of accuracy. However, the paths with difficulty were not significant. Altogether, the parallel mediation models show that confidence is a significant mediator of the relationships between psychology courses and assessment accuracy and between psychology GPA and assessment accuracy.

Discussion

The goals of the present study were to explore whether students’ metacognitive awareness (i.e., perceived difficulty and confidence) of psychology concepts was related to their actual knowledge of the concepts and to identify the factors, namely their experience in and knowledge of psychology, that moderated this relationship. Metacognitive skills, which reflect one's ability to intuit their mastery of learning, are linked to students’ academic success (Dembo & Seli, 2016). Indeed, high achievers, who have strong metacognitive skills, are more likely to employ more effective self-regulated learning strategies compared to low achievers (DiFrancesca et al., 2016). Thus, individuals who can more accurately judge their learning are more effective learners (DiFrancesca et al., 2016). Conversely, inaccurate judgments of one's own learning can lead to an overestimation of learning and an illusion of competence, which can impair learning (Koriat & Bjork, 2005). Assessing students’ metacognitive awareness and the factors that affect it, which are the goals of the present study, are therefore important endeavors that have implications for instructors’ pedagogy.

Overall, the correlations among the academic and metacognitive awareness variables revealed three notable findings. First, metacognitive awareness predicted accuracy on the psychological assessment. Second, a more extensive background in psychology (i.e., majoring, completing more classes, and stronger performance, as assessed by GPA, in psychology) improved performance on the psychology assessment, conceptually replicating some of the findings in Solomon et al. (2021). These results are also congruent with the Dunning–Kruger effect, which is the finding that people with a greater deficiency in their knowledge in an area are less likely to recognize their deficiencies in that domain. There is evidence of this finding in a variety of areas, such as reading comprehension, diagnosing patients, and sports performance (see Dunning, 2011, for a review). Third, having a more extensive background in psychology likewise improved participants’ metacognitive awareness of the psychology assessment (see correlations in Table 3). However, participants’ psychology GPA was not a significant predictor of perceived difficulty, nor did it moderate the association of accuracy and difficulty or confidence and difficulty. It may be that psychology GPA does not demonstrate participants’ depth of knowledge of the field, which is required for an accurate assessment of concept difficulty, especially given that a wide variety of concepts were assessed in the present study. That is, to gauge a concept's difficulty, a student must first be exposed to it. Indeed, support for this explanation comes from the significant relationships between the number of psychology classes taken and whether a student is majoring in psychology, which are both measures of the breadth of knowledge, improving the calibration of accuracy on the assessment (see Table 3).

The multiple regression analysis showed that participants’ psychology background and metacognitive awareness both independently and jointly predicted participants’ performance on the psychological assessment. However, while all these predictors were significantly correlated with accuracy when assessed independently (see the zero-order correlations in Table 2), only psychology GPA and confidence were significant predictors when considered simultaneously. These findings are to be expected given the interrelatedness (i.e., shared variance) among these variables. For example, a student who is majoring in psychology is likely to have completed more psychology classes and have a higher psychology GPA. That said, all the predictors, when considered simultaneously, were in the predicted direction, consistent with theory.

To explore the mechanisms by which participants’ psychology background affects performance on the assessment, two parallel mediation models were computed in which participants’ psychology experience (number of psychology courses completed) and psychology aptitude (psychology GPA) were used as predictors, with the two metacognitive awareness variables (i.e., difficulty and confidence) as mediators. Both models showed that confidence mediated the relationship between psychology background and accuracy. Specifically, completing more psychology courses or having a higher psychology GPA increased participants’ confidence in their knowledge of psychology concepts, which in turn improved their performance on the assessment. Although psychology background was negatively predictive of the perceived difficulty of the concept (with only the predictor using psychology courses being significant), difficulty did not predict accuracy. One possible explanation for this lack of relationship is that the ability to recognize or assess the difficulty of a concept does not necessarily imply an understanding of the concept. For example, a student who realizes that matrix algebra is a difficult concept will not necessarily perform better on an assessment of matrix algebra. That said, one's ability to recognize the difficulty of a concept is undoubtedly related to learning and achievement, as it serves as an opportunity to regulate one's learning (e.g., spend more time mastering a difficult concept and less time on easier concepts [Metcalfe, 2009] or change one's encoding and retrieval strategies [Cho & Neely, 2017]).

Overall, these findings also extend those of previous similar studies (Gurung & Landrum, 2013; Stoa et al., 2022) that collected only metacognitive awareness ratings for a limited subarea of psychology (research methods and learning) concepts, did not assess participants’ actual knowledge of psychology concepts, and did not consider their findings based on participants’ psychology background.

Limitations and Future Directions

There are a few caveats of the present results that could serve as fruitful areas for future research. First, each concept was tested with only one multiple-choice question. This single-item measurement meant that a participant could have received credit for knowing a concept due to chance (i.e., guessing the correct answer on the assessment) or been penalized for not knowing a concept because the phrasing of the assessment question was ambiguous to them. Either scenario would attenuate the correlations observed in the present study due to measurement error. Thus, future research could use a variety of questions to assess a concept.

A second limitation is that only five concepts per subarea were sampled. Clearly, this represents only a fraction of concepts students are exposed to in a class (at least 150 in introductory psychology alone; Zechmeister & Zechmeister, 2000). The limited number of concepts used in the present study was motivated by the desire to increase the generality of the findings of the study and yet ensure that participants do not lose focus or interest in the study. Successful completion of psychology courses, however, demands that students acquire knowledge of many more concepts than were assessed in the present study. It is therefore important to assess students’ knowledge of additional psychology concepts in future research.

The third limitation, which is related to the second limitation, is that the present study did not include concepts from all the subareas of psychology. However, including concepts from all subareas of psychology, while a lofty goal, would be impractical, given that there are more than 50 divisions in APA, although not every division necessarily represents a unique subarea of psychology. Although the present study included concepts from the five core areas covered in introductory psychology (i.e., biological, cognitive, developmental, social and personality, and clinical; APA, 2014), other prominent and burgeoning subareas were regrettably omitted. Perhaps most notably, there were no concepts that were specific to industrial–organizational psychology, a growing subarea that makes profound contributions to the field and society (Diaz, 2019). Of course, given the interdisciplinary nature of psychology, some of the concepts that were assessed in the present study (e.g., prejudice and trait theory) are highly relevant in many areas of psychology, including industrial–organizational psychology. Finally, to increase the predictive and ecological validity of this assessment (or similar assessments), future researchers can compare students’ performance on the assessment to their final grade or other objective performance measures (e.g., final exam) in their psychology course.

Implications for Educators

Instructors can use the data from this study to gauge which concepts their prospective students will find more difficult and adjust their teaching and assessment strategies accordingly. For example, based on these data, an instructor who is covering information pertinent to biological psychology should spend more time on the action potential sequence of a neuron (accuracy: 26%) than on a topic that students are likely more familiar with, such as the basic functions of the cerebral cortex (accuracy: 63%). It would also be useful for someone teaching psychology to know that students who have a comprehensive background in psychology are more accurate in their metacognitive awareness of psychology concepts (see row one in Table 3). Specifically, if an instructor were to solicit their students’ subjective confidence in their knowledge of the material, they should be mindful that these ratings are likely to be less accurate among their lower-level students (e.g., students taking an introductory-level course) than among their upper-level students. As such, an instructor who is teaching a lower-level course may want to supplement students’ subjective confidence ratings with a more objective measure of knowledge (e.g., a low-stakes quiz).

It could be worthwhile for each institution, especially one that serves many psychology majors and minors, to collect normative data on the perceived difficulty of, and students’ confidence in learning or understanding, various psychology concepts among the students it serves. As established in the current study, students have a reasonably accurate intuition about and gauge of their understanding of a concept, and thus such data, collected following the procedures used in the present study, would be relatively simple to obtain. When collecting these data, educators should be aware that the results of this study suggest that students with more experience and knowledge of psychology are more likely to be able to make reliable metacognitive assessments. Consequently, an educator or researcher should ensure that they also sample from a pool of students with a stronger background in psychology. These data can be obtained as part of a short in-class activity, and if they are used solely for pedagogical purposes, an instructor need not obtain approval from their internal (or human subjects) review board, as such an activity would not be defined as human subjects research per the Office for Human Research Protections (Office for Human Research Protections, 2020). The newly created assessment could also serve as an objective evaluation tool for majors at that institution, and the results could be used in several ways to improve pedagogy (e.g., helping instructors gauge whether their approach to teaching students a specific concept is effective).

When students’ metacognitive awareness is accurate, instructors should encourage students to prioritize their perceived knowledge of and confidence in the material when determining what to study next. As noted in the Introduction, psychology students, including those at highly selective institutions (i.e., the University of California, Los Angeles [UCLA]), commonly report basing their study decisions on what is due soonest rather than on metacognitive factors such as what they believe they are doing worse in (e.g., Kornell & Bjork, 2007; Morehead et al., 2016). This is a notion shared by some instructors as well, as only 10% of instructors surveyed by Morehead et al. (2016) believed that students ought to prioritize studying what they are doing worse in. The results of previous studies and the present study suggest that students’ metacognitive awareness should be leveraged to facilitate student learning when it is highly accurate (i.e., among students with greater experience in and knowledge of psychology, as demonstrated in the present study). That is, students should rely on their metacognitive judgments more when determining what to study next, and this notion should be reinforced by their instructors.

Conclusion

In conclusion, the present study provides a framework for instructors to gather data on students’ metacognitive awareness of psychology concepts. Furthermore, the present study delineates the conditions under which students’ metacognitive awareness of psychology concepts is more accurate. Students’ metacognitive awareness, when accurate, should be used to guide their self-regulated learning strategies.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the University of Houston-Downtown (grant number Funded Faculty Leave).

Supplemental Material

Supplemental material for this article is available online.