Abstract

Online quizzes are an economical and objective method for formative assessment in universities. However, closed questions have been criticized for promoting shallow learning and often resulting in poor learning outcomes. These disadvantages can be overcome by embedding closed questions in effective instructional designs involving feedback. In the present field study, a final sample of N = 496 students completed the same online quiz, consisting of 60 true–false statements on the biological bases of psychology, in two sessions. In order to enhance the benefit of formative testing on students’ test achievement in Session 2, students received elaborate feedback (i.e., explanations for the in-/correctness) for half of their answers in Session 1, and corrective feedback (i.e., just an indication of the in-/correctness) for the other half. The results showed that students scored higher in Session 2 if elaborate feedback had been provided in Session 1, compared with when corrective feedback was provided. More specifically, students profited more from elaborate feedback on incorrect answers in Session 1 than from feedback on correct answers. As a practical recommendation, self-administered formative tests with closed-question format should at least provide explanations of why students’ answers are incorrect.

Question Formats in Online Quizzes

In blended- and e-learning scenarios, students’ self-assessment by means of quizzes has recently become a very popular method to present study materials, specifically using a closed-question format (Nguyen & McDaniel, 2015). Closed questions consist of a stem, in which a question, a statement, or a case example is provided. Potential answers are displayed below in the form of multiple sentences (also referred to as items; Haladyna et al., 2002). Each sentence is to be evaluated by students, and correct answers are to be selected, for example, by ticking a box. Typically, closed-question formats vary in the number of items presented (Haladyna et al., 2002), with single choice being the most conventional format: Only one of at least three presented items is correct. Alternate choice represents a special case of single-choice questions, including only two items; in the multiple-choice format, more than one of n items can be correct. In the true–false format, participants need to evaluate the correctness of a statement in relation to its stem by making a binary choice (“correct” vs. “incorrect”).

The closed-question format is a very efficient and objective method. By implementing automated evaluations, university lecturers save correction time and can provide self-assessment even for large student groups (e.g., Lindner et al., 2015). Students can work independently according to their own schedule and receive feedback on their learning gains via formative tests during the term. However, there are various ways to design tests and quizzes (e.g., single-, alternate- vs. multiple-choice format) and to implement and enrich them in computer-based environments (Lindner et al., in press). Thus, university lecturers are faced with the question of how to integrate testing with closed questions into their lectures and seminars so that their students profit the most. Even though the existing feedback literature sheds light on the effectiveness of different feedback conditions, the ecological validity of corresponding instructional designs has yet to be tested empirically in naturalistic online-learning environments. This is addressed in the present article by implementing a field study.

Answering Questions as a Study Strategy

In some parts of the psychological curriculum, acquisition of factual knowledge seems to be an important learning outcome. For instance, knowledge of the structure and function of neurotransmitters, neurons, and larger brain structures is necessary to understand current practical and research questions in many fields of psychology (Simon-Dack, 2014). Under these circumstances, tests, often implemented as answering course questions online, are supposed to assess prior learning gains and academic achievement, but also to foster learning and to serve as study materials (for a seminal study, see Bjork, 1975). Some students tend to use formative assessments for study rather than for diagnostic purposes. That is, they skip attending classes or reading the course materials, and instead they attempt to acquire the relevant knowledge through quizzing (Marsh et al., 2007; Roediger & Marsh, 2005).

In fact, prior research showed that taking tests (i.e., successfully practicing retrieval from memory) during the learning phase can promote long-term retention, as compared to restudying (for meta-analyses, see Adesope et al., 2017; Rowland, 2014; Schwieren et al., 2017). This direct testing effect has been shown to be quite robust across various learning materials (e.g., text passages, Roediger & Karpicke, 2006; visuospatial information, Carpenter & Pashler, 2007; performed actions, Kubik et al., 2018) and also test formats (e.g., multiple-choice tests and short-answer tests; Butler & Roediger, 2007; Kang et al., 2007; Smith & Karpicke, 2014). However, its magnitude depends on the level of initial test performance (i.e., retrieval success, e.g., Greving & Richter, 2018; Smith & Karpicke, 2014) and feedback (Adesope et al., 2017; Phelps, 2012; Rowland, 2014), specifically in real-life educational contexts (McDaniel & Little, 2019). Recent meta-analyses indicate an overall positive effect size of d = .56 of testing also in psychology classrooms (Schwieren et al., 2017) and a small positive effect of frequent testing in class on higher education achievement in general (d = .24; Schneider & Preckel, 2017).

Answering closed questions makes students engage in retrieval practice (cf. Karpicke, 2012), which is supposed to promote long-term retention and transfer of information (e.g., McDaniel et al., 2013; Pan & Rickard, 2018). Hence, online quizzes with closed questions should elicit retrieval-based learning of facts. In line with the processing-levels theory (Craik & Lockhart, 1972), deeper information processing when studying with closed questions should improve learning outcomes in general (Collins et al., 2018). According to the retrieval effort hypothesis (Pyc & Rawson, 2009), successful but more difficult retrieval attempts, requiring more retrieval effort from learners, promote better long-term learning than less difficult retrieval attempts. Thus, instructors are supposed to design assessment tasks that create so-called desirable difficulties (cf. Bjork & Bjork, 2011) to further enhance retrieval-based learning in (formative) tests. This can be achieved by manipulating the time interval between repetitions of questions (with a longer time interval making learning more difficult) or the number of times a test item should be repeated by students (Pyc & Rawson, 2009). Yet, the more naturalistic the learning environment becomes, the more difficult it is to control the circumstances under which learning occurs. For example, in online classes, students study according to their own schedule by navigating through a learning environment and taking quizzes at their own pace. An alternative way to enhance retrieval-based learning is to provide feedback on learners’ memory responses.

The Importance of Feedback for Successful Learning

Feedback is defined as “information about the gap between actual and desired performance” (Butler & Woodward, 2018, p. 2). Based on laboratory studies (Rowland, 2014) and in the context of learning and teaching psychology (Schwieren et al., 2017), meta-analyses reported positive additive effects of feedback beyond the learning benefits engendered by testing on retention, and on achievement in higher education in general (d = .47; Schneider & Preckel, 2017). Furthermore, feedback decreases the risk of students acquiring incorrect knowledge by correcting memory errors (Butler & Roediger, 2008; Ecker et al., 2020; Marsh et al., 2007; Roediger & Marsh, 2005). Especially in the multiple-choice and true–false question format, students are repeatedly exposed to lure test items, which can result in students remembering false answers as they become familiar with the statements (negative suggestion effect, Toppino & Brochin, 1989). This in turn enhances the likelihood of choosing wrong answers in a subsequent test, especially when formative tests are used for study purposes, and lure items represent common misconceptions or personal experiences (Marsh et al., 2007). Furthermore, feedback is also helpful for reassuring low-confidence, correct answers (Butler et al., 2008; Fazio et al., 2010; Pashler et al., 2005). Thus, when formative tests include multiple-choice or true–false questions, feedback is argued to magnify the benefits of retrieval practice on retention while preventing the negative side effects of learning lure items (Butler & Roediger, 2008; Butler & Woodward, 2018).

Note, however, that there are various types of feedback that are differently effective in fostering additional learning (for a review, see Butler et al., 2013; Butler & Woodward, 2018):

(1) right/wrong feedback (also referred to as knowledge of results), in which the learner receives feedback on whether the provided answer(s) is (are) correct;

(2) corrective feedback (also referred to as correct answer or verification feedback), in which the correct answer(s) is (are) presented to the learner;

(3) elaborate feedback (sometimes referred to as explanatory feedback), in which an explanation is given why the learners’ answer(s) is (are) in-/correct.

Prior research has largely shown that right/wrong feedback, relative to no feedback, engenders no reliable benefits on later memory performance (Fazio et al., 2010; Pashler et al., 2005), with the exception of retaining low-confidence correct answers (e.g., Fazio et al., 2010). Importantly, corrective feedback was revealed to be more effective than right/wrong feedback (Fazio et al., 2010; Pashler et al., 2005). In part, this seems to be the case because corrective feedback provides the to-be-corrected information. Furthermore, incorrect retrieval attempts can enhance learning in subsequent restudy opportunities, including corrective feedback (Izawa, 1961). Such test-potentiated learning on subsequent restudy phases has been robustly shown with various materials (Arnold & McDermott, 2013a, b; Kubik et al., 2016; Tempel & Kubik, 2017).

The current literature (e.g., Butler & Woodward, 2018) discusses the efficacy of elaborate feedback to provide learning benefits above and beyond corrective feedback. Until now, rather few studies directly compared both types of feedback (cf. Butler & Woodward, 2018; for a meta-analysis, see Bangert-Drowns et al., 1991), with some studies finding a benefit of elaborate feedback (e.g., Corral & Carpenter, 2020; Gilman, 1969; Petrović et al., 2017), while other studies did not (e.g., Pridemore & Klein, 1995; Thijssen et al., 2019; Whyte et al., 1995). For example, an early study by Gilman (1969) tentatively suggested that elaborate feedback can reduce the necessary number of encounters with the learning material and promote retention. More recent work also shows rather mixed results on the benefits of providing additional information on why a response is correct. For example, Petrović et al. (2017) revealed positive effects of such elaborate feedback when providing three online quizzes in a course on digital signal processing in electrical engineering. More specifically, they manipulated the type of feedback that followed participants’ responses to multiple-choice questions: participants received either (1) no feedback, (2) corrective feedback pointing out the correct answer, or (3) elaborate feedback involving the correct answer plus a short explanation including a misconception that might have led to the incorrect response and a reference to the course material. Knowledge gains were larger with elaborate feedback as compared to corrective feedback, which in turn was still more beneficial than providing no feedback. However, other recent studies, for example, Thijssen et al. (2019), did not show any additional benefit of receiving elaborate feedback above and beyond the benefits produced by formative testing and corrective feedback. They employed multiple app-based formative tests during a 4-week course and also assessed the final exam grades. Note, however, that the general performance level was relatively high (i.e., approximating a potential ceiling effect), and corrective feedback included specific source information for background materials to close the gap for the course exam. This source information also enhanced study behavior before the exam in general and may have encouraged the students to look up this information, which might have blurred the difference to elaborate feedback.

The potential benefit of elaborate (over corrective) feedback has also been investigated in other research domains, such as knowledge revision and text comprehension: different types of refutation texts are typically provided to correct false beliefs and to enhance the comprehension and application of knowledge statements, concepts, or mental models (e.g., Ecker et al., 2020; Prinz et al., 2019; Rich et al., 2017). For example, in the study by Rich et al. (2017), students were instructed to judge statements that included common misconceptions as correct or incorrect, followed by a feedback phase: refutation texts stated and dispelled the misconceptions, and either included an explanation of the refutation (i.e., elaborate feedback) or not (i.e., corrective feedback). Results showed that, in the long run, learners were more likely to correct misconceptions when an explanation was provided why a statement was incorrect, compared to when only a refutation and the correct answer were given, and this even after longer retention intervals (Ecker et al., 2020; Rich et al., 2017). Similarly, recent studies using more educationally relevant materials (e.g., Prinz et al., 2019) also demonstrated beneficial effects of refutation (over standard) texts on statistics in terms of a decreased rate of misconceptions and, associated with it, an enhanced textual comprehension and metacomprehension accuracy (i.e., ability to predict future comprehension performance).

So far, little is known about why and under which circumstances elaborate feedback is beneficial for learning. It has been generally argued that providing an explanation prompts deeper processing of the (to-be-learned) materials and prevents rote learning of false statements (Butler et al., 2013). More specifically, it has been argued that elaborate feedback on Test 1 may enhance students’ metacognitive awareness of memory errors and previously misunderstood concepts (cf. Metcalfe, 2009) as well as of critical versus superfluous aspects of the to-be-learned materials in terms of enhanced schema abstraction (cf. Corral & Carpenter, 2020). Furthermore, elaborate feedback may prompt students to evaluate and integrate the provided feedback, the error or misconception, and prior knowledge more critically (Rich et al., 2017). This, in turn, may elicit processes of conceptual change that are relevant to correct errors or misconceptions (Kendeou & O’Brien, 2014; Prinz et al., 2019).

The above studies leave open relevant questions for research on the effect of feedback on acquiring factual knowledge in educational real-life contexts. For instance, Petrović et al. (2017) mixed in-class teaching with computerized quizzes. Elaborate feedback might potentially draw less attention in large online courses where quizzing is self-administered and self-paced. Given that, at the time of writing, the Covid-19 pandemic restricts education increasingly to online formats, there is a great potential to also use formative assessments for study purposes (and not only as a diagnostic tool) and to reap the benefits of feedback in formative testing. If students attempt to learn by quizzing before more seriously engaging with the course material, elaborate feedback might even strengthen learning on items students answered correctly, which may often be based on guessing or rather weak knowledge. Yet, additional feedback information on why correct answers are correct can be redundant when assessing retention of knowledge (Butler et al., 2013). This research question merits further investigation as the majority of studies have recently focused on the effect of elaborate feedback on revising misconceptions or allocating elaborate feedback to incorrect items (e.g., Ecker et al., 2020; Petrović et al., 2017; Rich et al., 2017). Elaborate feedback may be specifically effective in enhancing metacognitive awareness and inducing conceptual-change processes in simpler materials, such as factual true–false statements, compared to complex and abstract misconceptions. Yet, there is only sparse research on the relative benefits of different feedback types in formative assessment scenarios (cf. Lindner, 2015, 2018).

To close this gap, the main objective of this study was to evaluate the benefits of corrective versus elaborate feedback on knowledge acquisition in a naturalistic setting. More specifically, we conducted this study in a course at a distance university, including a large sample of psychology undergraduate students. We implemented formative testing by means of an online quiz and manipulated feedback type in a within-subjects, mixed-list design, as discussed above. To assess factual knowledge in Session 1, students were tested on 60 verbal statements from course materials in the domain of biological psychology, followed by either corrective or elaborate feedback. After a self-regulated delay, the students’ test achievement was assessed in Session 2. According to the theorizing above in terms of enhanced metacognitive awareness and conceptual-change processes, elaborate feedback should be more potent than corrective feedback in fostering learning. We expected this to be the case for incorrect answers after both shorter and longer delays (see Butler et al., 2013; Ecker et al., 2020; Rich et al., 2017). Furthermore, we explored whether retention of correct answers may profit from elaborate feedback as well. Especially after longer self-regulated inter-session intervals, potential effects of memory consolidation might materialize for guessed or weak responses. Note that in prior studies, the potential benefit of elaborate feedback on knowledge revision may be confounded by a general increase in motivation: with feedback type being manipulated between-subjects, students received elaborate or corrective feedback on all quiz items (e.g., Chase & Houmanfar, 2009; Petrović et al., 2017). This might simultaneously enhance students’ motivation and knowledge revision within a student as a function of feedback type. To better isolate the effects on knowledge revision, a within-subjects, mixed-list design was implemented by providing corrective and elaborate feedback on different subsets of test questions. This way, students were not able to anticipate which items were followed by corrective versus elaborate feedback, and thus the differential benefits of feedback type on knowledge revision can be more selectively studied by holding motivational effects constant.

Method

Materials of this study are available in a public repository (cf. Grahe et al., 2020): https://osf.io/ebaq4/

Participants

As a prerequisite to taking the final course exam, students participated in a course activity, in which this online study was conducted. Before data collection, students were asked for informed consent to use their anonymized study data for research purposes (i.e., evidence-based improvements in teaching formats and materials). Importantly, students were informed that their consent was not required for successful participation in the online-course activity or gaining access to the final course exam. Also, they were specifically instructed on how informed consent could be withdrawn after completed study participation.

In total, 557 students took part in the course activity. Of these, 61 students were not included in the study (i.e., n = 38 did not provide informed consent; n = 23 did not take part in Session 2), resulting in a final sample of 496 students for the study. Data on age and gender were not collected within the course. However, prior studies of psychology students at the FernUniversität in Hagen revealed a rather diverse sample (Stürmer et al., 2018): a mean age of 35 years and 70% women, alleviating the gender bias that typically is observed in other psychology programs in Germany. Furthermore, 80% of the students are working while studying, and around 10% of the sample have acquired the university entrance qualification based on vocational qualifications rather than via a regular A-levels certificate.

Materials

Quiz statements were generated from the course materials on the biological bases of psychology. As a first step, contents were selected that could be transformed into a (1) correct statement (e.g., Action potentials transmit information based on their frequency), (2) paired incorrect statement (e.g., Action potentials transmit information based on their amplitude), and (3) a possible explanation for why the statement is (not) correct (e.g., Because there is a variation in frequency while form and amplitude show little variation among different instances of the action potential). In total, 60 correct and 60 paired incorrect statements were generated. As a second step, 60 statements were selected for the quiz from this material pool, with 30 statements being correct and 30 different statements being incorrect.
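The two-step construction above can be illustrated with a minimal Python sketch. It assumes that the 60 quiz statements were drawn at random from the pool and that each pair contributes only one of its two versions; the text does not state how the selection was actually made. The function name `select_quiz_statements` and the placeholder pool are hypothetical.

```python
import random


def select_quiz_statements(pairs, n_correct=30, n_incorrect=30, seed=0):
    """Pick n_correct true and n_incorrect false statements from distinct pairs.

    `pairs` is a list of dicts with the keys 'correct', 'incorrect', and
    'explanation'. Random selection is an assumption of this sketch.
    """
    if n_correct + n_incorrect > len(pairs):
        raise ValueError("not enough statement pairs in the pool")
    rng = random.Random(seed)
    # Sampling without replacement guarantees that no pair is used twice.
    chosen = rng.sample(range(len(pairs)), n_correct + n_incorrect)
    quiz = []
    for position, pair_id in enumerate(chosen):
        is_true = position < n_correct
        pair = pairs[pair_id]
        quiz.append({
            "pair_id": pair_id,
            "text": pair["correct"] if is_true else pair["incorrect"],
            "is_true": is_true,
            "explanation": pair["explanation"],
        })
    return quiz


# Hypothetical placeholder pool of 60 statement pairs.
pool = [
    {
        "correct": f"correct statement {i}",
        "incorrect": f"incorrect statement {i}",
        "explanation": f"explanation {i}",
    }
    for i in range(60)
]
quiz = select_quiz_statements(pool)
```

With the placeholder pool, `quiz` holds 60 entries, 30 true and 30 false, each from a different pair.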

Design

In the current study, we employed a three-factorial 2 × 2 × 4 mixed design, with the first two factors being varied within-subjects. The first within-subjects factor is the feedback type provided in Session 1; half of the sentences received corrective and half of the sentences elaborate feedback, with both halves being randomly intermixed (mixed-list design). The second within-subjects factor is the response status of the sentences in Session 1, being answered either correctly or incorrectly. The third factor is the students’ self-regulated delay before entering Session 2. To this end, we split the delays into four intervals (1st, 2nd, 3rd–5th, vs. >5th day after Session 1) and treated delay as a between-subjects variable.
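As an illustration of how the three design factors map onto data, the following Python sketch bins the delay and computes one student's Session 2 accuracy in each feedback type × response status cell. The record layout and function names are hypothetical. The sketch also assumes the delay is supplied as the ordinal day after Session 1 on which Session 2 was started; how a same-day start was coded is not stated in the text.

```python
from collections import defaultdict


def delay_category(day_after_session1):
    """Map the ordinal day of the Session 2 start to one of the four delay bins."""
    if day_after_session1 < 1:
        raise ValueError("day must be 1 or larger (assumed coding)")
    if day_after_session1 == 1:
        return "1st"
    if day_after_session1 == 2:
        return "2nd"
    if day_after_session1 <= 5:
        return "3rd-5th"
    return ">5th"


def session2_accuracy_by_cell(responses):
    """Percentage correct in Session 2 per (feedback type, Session 1 status) cell.

    `responses` holds one dict per statement with the keys 'feedback'
    ('corrective' or 'elaborate'), 's1_correct', and 's2_correct'.
    """
    hits = defaultdict(int)
    totals = defaultdict(int)
    for r in responses:
        cell = (r["feedback"], "correct" if r["s1_correct"] else "incorrect")
        totals[cell] += 1
        hits[cell] += int(r["s2_correct"])
    return {cell: 100.0 * hits[cell] / totals[cell] for cell in totals}


# Hypothetical responses of a single student (not study data).
example = [
    {"feedback": "elaborate", "s1_correct": False, "s2_correct": True},
    {"feedback": "elaborate", "s1_correct": False, "s2_correct": True},
    {"feedback": "corrective", "s1_correct": False, "s2_correct": True},
    {"feedback": "corrective", "s1_correct": False, "s2_correct": False},
    {"feedback": "corrective", "s1_correct": True, "s2_correct": True},
]
cells = session2_accuracy_by_cell(example)
```

Because response status depends on each student's own answers, the number of statements per cell differs between students, which is why the sketch works with percentages rather than raw counts.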

Procedure

Data were collected during regular online course activities in a module covering biological bases of psychology (5 credit points) as well as learning, motivation, and emotion (5 credit points) at FernUniversität in Hagen. The module is offered every term and completed with a written exam. The course is delivered using the Moodle learning management system (Costello, 2013). Teaching materials include video lectures that can be watched on demand, texts that resemble textbook chapters, chats for posing and discussing questions, as well as self-tests of the learned materials. To be eligible for an exam, students have to complete at least two out of three exam-related tasks in the first half of the semester. These tasks are offered in Moodle and include self-tests and questionnaires, with the aim to ensure that students refrain from delaying exam preparation to the second half of the semester. One of the three tasks offered in the timeframe of one month (November–December 2019) was to take a quiz on the course contents on the biological bases of psychology at two separate time points. Students were only eligible to participate once in the quiz in Session 1. It was necessary to complete the quiz in Session 1 for gaining access to the quiz in Session 2. To maximize learning gains, we instructed students to start the quiz in Session 2 following at least a 1-day delay after completing Session 1. Due to the applied setting of the study, students were provided with a flexible time frame in between Sessions 1 and 2. This was intended to let them fit their studies to the demands of their own private schedule (e.g., work or family occupation). Therefore, they were free to choose any time interval they liked within four weeks. In consequence, the course design did not permit an experimental manipulation of the delay between sessions.

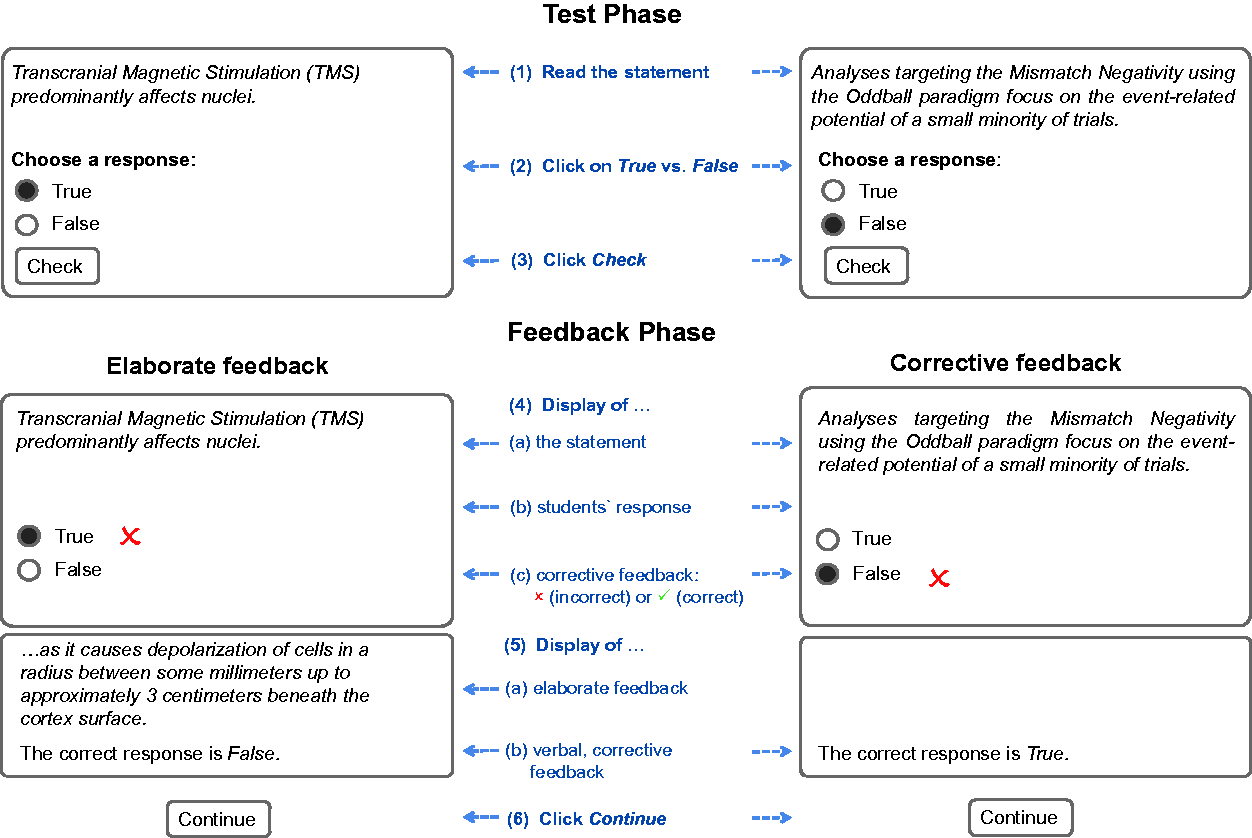

In Session 1, 60 statements were presented one at a time in a uniquely randomized order, and students could process them in a self-regulated manner. Students were asked to indicate whether the statement was true, and received corrective feedback (see Figure 1). Feedback was visible until students, at their own pace, clicked to proceed to the next statement of the quiz. Critically, the feedback type was manipulated in Session 1 between statements. For half of the correct and incorrect statements that each student received, additional elaborate feedback (i.e., why the statement was correct/incorrect) was provided.

Figure 1. Session 1 Test Phase and Feedback Phase. Note: The experimental procedure consisted of a test phase and a feedback phase, which typically involved 6 steps (see Step 5a for the manipulation of whether elaborate feedback was presented for a given statement or not). The example statement on the left includes elaborate feedback, while the example statement on the right includes corrective feedback.

In Session 2, the identical statements were presented for a self-regulated duration. This time, however, elaborate feedback was provided for all of these statements.
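The Session 1 randomization described above (a unique presentation order per student, with elaborate feedback on half of the true and half of the false statements) could be sketched in Python as follows. This is an illustration only, not the Moodle implementation used in the course; the function name and the record layout are hypothetical.

```python
import random


def assign_session1_feedback(statements, seed=None):
    """Return the statements in random order, each tagged with a feedback type.

    Half of the true and half of the false statements get elaborate feedback;
    the remaining statements get corrective feedback only.
    """
    rng = random.Random(seed)
    plan = []
    for truth_value in (True, False):
        group = [s for s in statements if s["is_true"] == truth_value]
        rng.shuffle(group)  # random split within true and within false items
        half = len(group) // 2
        for i, statement in enumerate(group):
            feedback = "elaborate" if i < half else "corrective"
            plan.append({**statement, "feedback": feedback})
    rng.shuffle(plan)  # unique presentation order for this student
    return plan


# Hypothetical quiz with 30 true and 30 false statements.
statements = [{"id": i, "is_true": i < 30} for i in range(60)]
plan = assign_session1_feedback(statements, seed=42)
```

Stratifying by truth value before the split keeps feedback type balanced across true and false statements, and shuffling the combined list intermixes both feedback types so that students cannot anticipate which one follows a given statement.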

Results

Students paused between Sessions 1 and 2 for an average of 2.72 days (SD = 4.97 days, range 0 to 28 days). Test performance on average increased significantly from 63.19% correct in Session 1 (SD = 9.38%, chance baseline = 50% correct) to 78.49% correct (SD = 13.11%) in Session 2, t(495) = 29.18, p < .001, d = 1.31. This suggests that participants profited from testing and subsequent study activities. The low accuracy level in Session 1 suggests that many of the correct answers were based on guessing.
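The reported effect size can be related to the t statistic with a short Python check. It assumes that d was computed as the standardized mean of the paired differences (often written d_z), for which d = t / √N holds in a paired design; the text does not state which variant of d was used, but the reported values are consistent with this assumption.

```python
import math


def cohens_dz_from_t(t_value, n_pairs):
    """Cohen's d for paired data (d_z), recovered from a paired t statistic."""
    return t_value / math.sqrt(n_pairs)


# Values reported in the text: t(495) = 29.18, hence N = 496 students.
d = cohens_dz_from_t(29.18, 496)
print(round(d, 2))  # 1.31
```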

Next, we investigated the specific benefits of elaborate versus corrective feedback. In a two-factorial ANOVA, we tested whether the response status (correct vs. incorrect) and feedback type (corrective vs. elaborate) in Session 1 influenced the percentage of correct responses in Session 2. As expected, sentences answered correctly in Session 1 were more often answered correctly in Session 2, as indicated by a main effect of response status (correct vs. incorrect) in Session 1, F(1, 495) = 744.61, p < .001,

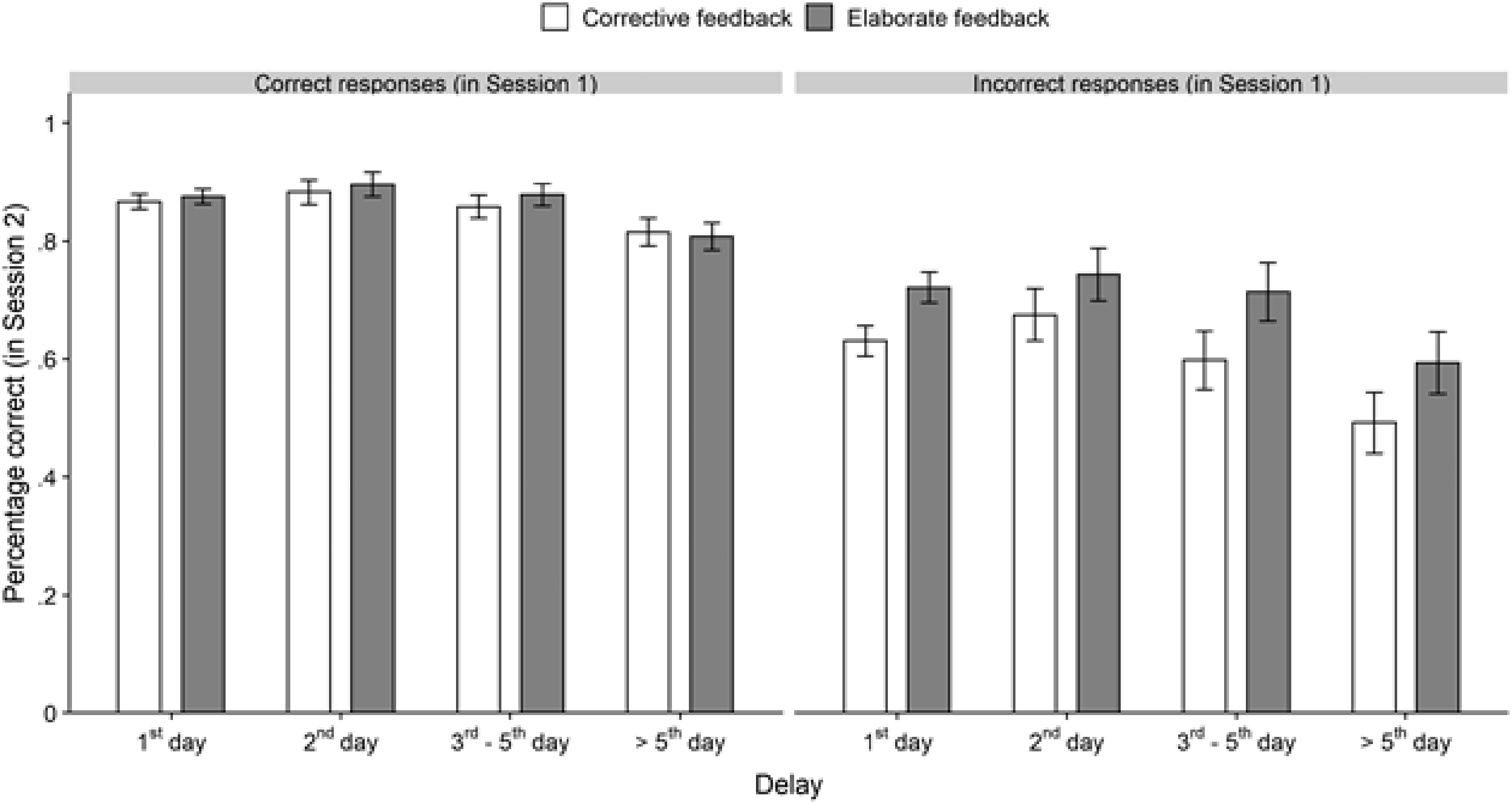

We additionally explored whether the effect of elaborate feedback was present for short versus long delays between sessions. To this end, we split the self-regulated between-session delay into four time intervals (1st day after Session 1, n = 242; 2nd day, n = 82; 3rd–5th day, n = 92; > 5th day, n = 81), aiming for similar sizes of the categories except for the shortest delay group (1st day). As shown in Figure 2, there was no indication that the effect of feedback type changed with increasing time delay. To qualify this impression, we conducted two separate 2 (feedback type: corrective vs. elaborate) × 4 (delay: 1st, 2nd, 3rd–5th, vs. > 5th day) mixed ANOVAs on percentage of correct responses in Session 2. For sentences with incorrect responses in Session 1, there was a significant main effect of feedback type, F(1, 492) = 76.95, p < .001,

For sentences with correct responses in Session 1, there was only a significant main effect of delay, F(3, 492) = 8.24, p < .001,

We checked whether the delay-stability of the effect of elaborate feedback was robust with respect to the delay intervals chosen as categories. To do so, Spearman rank correlations were computed between (1) the delay between the sessions and (2) the recall advantage of items with elaborate feedback over items with corrective feedback for the full sample. This correlation was significant neither for items that had been answered correctly in Session 1 (r = −.033, p = .47) nor for items that had been answered incorrectly (r = .038, p = .401).
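For illustration, a Spearman rank correlation of this kind can be computed in plain Python by ranking both variables (with ties sharing the average rank) and correlating the ranks. This is a generic sketch of the statistic, not the authors' analysis script; the function names and the example values are hypothetical, and the significance test is omitted.

```python
import math


def average_ranks(values):
    """Rank values from 1..n; tied values share the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j over the run of values tied with position i.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1  # ranks are 1-based
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks


def pearson(x, y):
    """Pearson product-moment correlation of two equally long sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def spearman_rho(x, y):
    """Spearman correlation: the Pearson correlation of the rank vectors."""
    return pearson(average_ranks(x), average_ranks(y))


# Hypothetical values: delay in days and the elaborate-minus-corrective
# difference in Session 2 percentage correct for five students.
delays = [0, 1, 1, 3, 7]
advantage = [10.0, 5.0, 0.0, -5.0, 20.0]
rho = spearman_rho(delays, advantage)
```

Average ranks matter here because many students share the same delay, so the delay variable is heavily tied.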

General Discussion

In formative online-assessment, feedback is an important ingredient to foster learning. Hence, we compared the benefit of feedback on true–false questions for students’ knowledge acquisition in a biological psychology class. Although lab experiments have manipulated feedback type, few researchers have examined how the nature of feedback affects learning in a naturalistic learning environment. Furthermore, especially research on corrective versus elaborate feedback is sparse. The present field study helps to fill this gap in the literature.

The results show that students’ performance increased between Sessions 1 and 2, largely reflecting the well-known benefits of testing and feedback as well as the general cumulative learning gains that would be expected as the e-learning course progresses during the term. Most importantly, adding a supplementary explanation to the provided feedback helped students to acquire more fact-based knowledge, and thus students profited from this elaborate feedback even beyond potential benefits of testing and corrective feedback. The results are in line with previous studies showing that adding a supplementary explanation to refutation texts reduced the number of misconceptions in the context of knowledge revision (Ecker et al., 2020; Rich et al., 2017). Similarly, refutation (over standard) texts decreased the rate of statistical misconceptions and thereby enhanced textual comprehension and metacomprehension accuracy (Prinz et al., 2019). The results of this study help to enrich the perspective offered by earlier studies (Chase & Houmanfar, 2009; Gilman, 1969; Petrović et al., 2017) and provide support for the effectiveness of elaborate feedback on learning and knowledge acquisition. It would have been conceivable that elaborate feedback does not further enhance retention (see e.g., Butler et al., 2013; Thijssen et al., 2019) or even harms fact learning by drawing attention away from the statement towards processing the explanation. Such a potential disadvantage was not supported by our data.

The positive effect of elaborate feedback in our study was exclusively shown for statements that were answered incorrectly in Session 1. Given the low accuracy rate in Session 1, many of the correct answers likely were based on guessing. One could have expected that elaborate feedback on correct items, which were in the majority guesses, might have helped students to reassure their low-confidence correct answers and thereby covertly improved their learning. As we did not assess confidence ratings, future studies are encouraged to include such metacognitive judgments by students and also a no-feedback baseline condition to more systematically examine the relative benefits of elaborate feedback in formative testing for both correct and incorrect answers. It is yet to be determined whether practitioners should also provide feedback on correct responses for their students and whether this will enhance long-lasting learning, confidence ratings, and transfer of knowledge. Through automated testing in e-assessments, elaborate feedback can be given cost- and time-effectively.

So far, there is a dearth of evidence-based knowledge on why elaborate (relative to corrective) feedback enhances retrieval-based learning in formal testing settings (Butler et al., 2013; Butler & Woodward, 2018). Several potential mechanisms are discussed in the literature. One mechanism may be that elaborate feedback on Test 1 might have raised students’ metacognitive awareness. That is, students were likely to be more sensitive to errors and previously misunderstood concepts, and they may have more actively monitored their learning activities (cf. Metcalfe, 2009). Relatedly, in example-based learning of more complex concepts, students may become more sensitive to the properties of the to-be-learned materials that are superfluous and can be ignored, or respectively to the more critical features (Corral & Carpenter, 2020). With increased metacognitive awareness and accuracy, students might have engaged in more strategic self-regulation and thereby more effectively (re-)studied the test materials and the provided explanations (Kornell & Finn, 2016; Metcalfe, 2009; Thiede, Anderson, & Therriault, 2003). This possible explanation should be examined more closely in future research on knowledge acquisition. A related mechanism is that elaborate feedback, similar to refutation texts, might have triggered a critical evaluation, elaboration, and integration of the provided feedback, misconception, and prior knowledge (Rich et al., 2017). Such conceptual-change processes (Kendeou & O’Brien, 2014; Prinz et al., 2019) are important to straighten out incorrect answers or misconceptions, and foster comprehension and retention of the studied materials (cf. Prinz et al., 2019). On a speculative note, elaborate feedback (compared to other feedback types) might be particularly effective in correcting high-confidence wrong answers or misconceptions (cf. Butler et al., 2008; Rich et al., 2017). While the present study cannot address this research question, future research is encouraged to assess students’ own confidence judgements about their single-choice answers, and to examine the effectiveness of different feedback types to correct misconceptions held with different confidence levels.

The current findings are of high practical utility for formative assessment, specifically in e-learning contexts. While tests in formative assessment are typically designed to provide diagnostic information for the students, the present study demonstrated that elaborate feedback can further enhance learning outcomes and academic achievement beyond the potential benefits produced by formative testing with true–false questions and corrective feedback. Importantly, the beneficial effects of elaborate feedback were demonstrated with educationally relevant materials in a naturalistic, self-regulated learning environment of formative testing, suggesting a rather high ecological validity of our findings. We speculate that providing elaborate feedback in retrieval-based learning is specifically effective in applied settings such as online courses, as was the case in the present study. Here, elaborate feedback was provided on students’ answers to exam-relevant quiz questions, whereas the materials in many laboratory studies were course-unrelated and rather arbitrary. For this reason, students in the present study might have been additionally motivated to take full advantage of the elaborate feedback, as it was of higher value for achieving better results in the final exam. Furthermore, prior experimental work showed that elaborate feedback is effective in promoting the application of knowledge to new questions and contexts (cf. Butler et al., 2013; Corral & Carpenter, 2020; Moreno, 2004), and likely more so than in enhancing retention (cf. Butler et al., 2013).

The present study also has several limitations. Here we focused on the retention of knowledge by assessing the correct responses to the same true–false questions as in the initial quiz. However, providing an additional explanation may further comprehension (from superficial fact knowledge to a deeper understanding; Butler et al., 2013) even for correct responses, and thereby support the successful application of that knowledge to new contexts. Follow-up studies should examine whether elaborate feedback can further enhance transfer of learning to new questions in real-life educational contexts. Furthermore, the interval between the two test sessions in this study was not controlled. Future research is encouraged to experimentally manipulate other factors such as the type of learning material, the timing of feedback following the test question in Session 1, and the delay of Session 2 (Butler & Woodward, 2018; Fazio et al., 2010).

In sum, elaborate feedback provides an additional and effective learning gain beyond the potential benefits that testing knowledge and corrective feedback produce in true–false questions. Importantly, this pattern of results was obtained in a self-regulated online quiz embedded in a regular course and with a student sample characterized by large diversity in demographic and educational background, supporting the robustness, generalizability, and practical relevance of the presented findings. Future research should identify the specific effects of different question formats and feedback types on knowledge acquisition and transfer of learning, and explore the moderating role of students’ characteristics in educational, cognitive, and motivational domains.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.